What Is Phishing?

Learn what phishing is, how it works, why it succeeds, and which controls - from MFA to clear signing - actually reduce phishing risk.

Introduction

Phishing is the practice of tricking a person into helping an attacker: by revealing a secret, clicking a malicious link, opening a harmful attachment, installing remote access software, or approving a transaction or permission they do not truly intend. The stakes are high because phishing attacks do not need to break cryptography or exploit exotic bugs. They often succeed by exploiting something much more common: the gap between what a system means and what a human being thinks it means.

That gap shows up everywhere. In email, it appears when a message looks like it came from your bank, colleague, or cloud provider. On the web, it appears when a fake page looks like a login screen you already trust. In crypto, it appears when a wallet prompt looks like a routine approval, but actually grants an attacker permission to move assets later. Different surfaces, same underlying mechanism: the attacker wants the victim to perform a valid action for an invalid reason.

This is why phishing is best understood as a security problem at the boundary between people and protocols. Computers are usually very literal. A password typed into the wrong page is still a valid password. A signed off-chain message is still a valid signature even if the signer misunderstood it. A transfer to the wrong address on a blockchain is still final. The system often sees a formally correct action; only the human context is wrong.

How does phishing trick users into authorizing attacks?

Most security mechanisms answer a narrow question: is this credential valid? is this signature valid? did this account approve this action? Phishing attacks aim at a different question: did the human mean to trust this party and authorize this outcome? If the system cannot answer that second question well, phishing remains possible.

That is the compression point for the whole topic. Phishing works by inducing a legitimate user action that a machine will accept as legitimate. The attacker does not always need to bypass authentication; they can borrow the victim’s own authentication. They do not always need to inject malware; they can persuade the victim to run it. They do not always need to steal a private key; they can trick the user into revealing a seed phrase, signing an approval, or sending funds to a lookalike address.

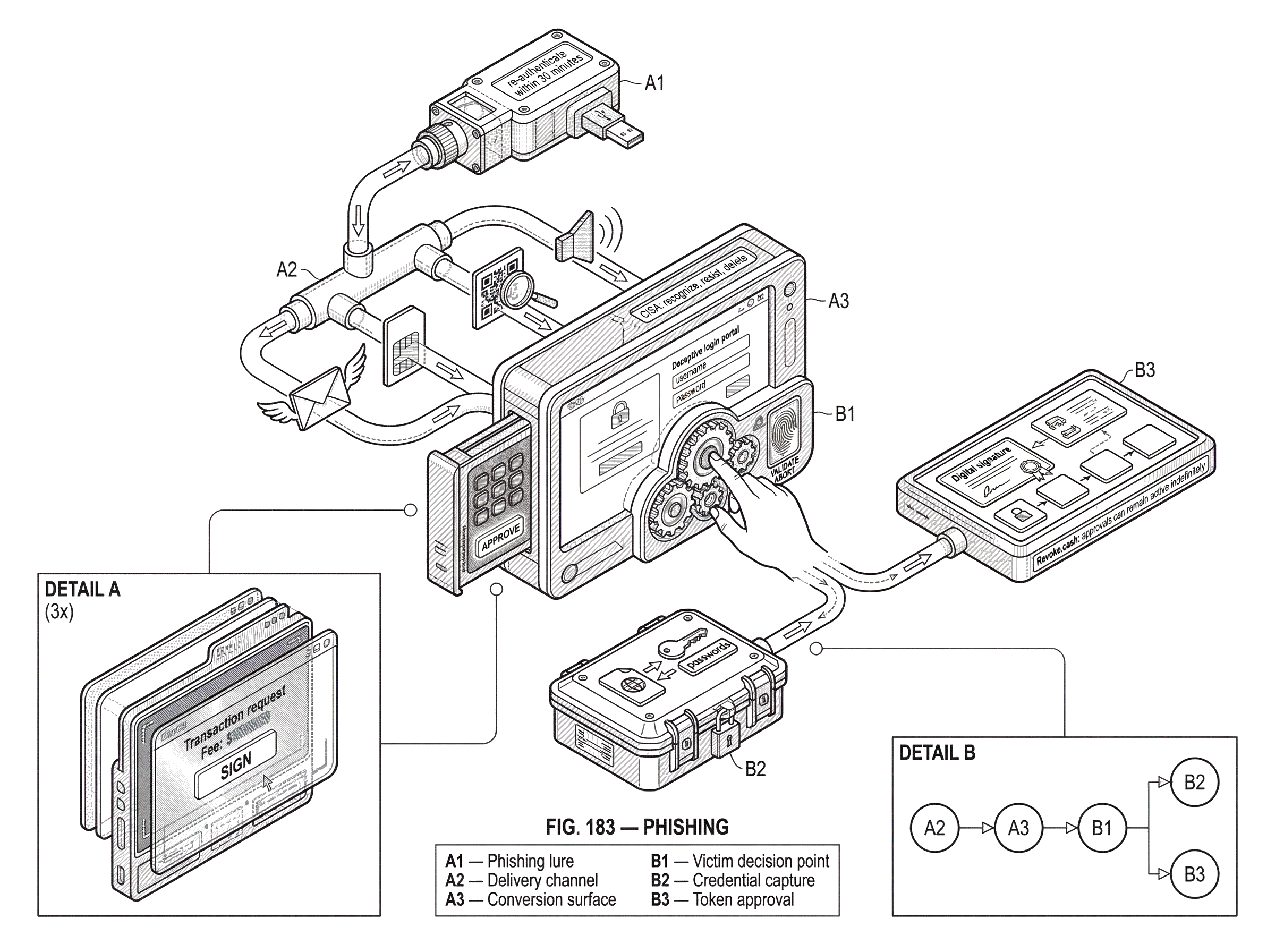

Calling phishing “social engineering” is accurate, but a bit too broad to be satisfying. Here is the mechanism more precisely. The attacker first manufactures a believable context: urgency, authority, familiarity, fear, scarcity, or routine business process. Then they present a surface that seems to match that context: an email, text, phone call, chat message, login page, wallet popup, QR code, or transaction history entry. Finally, they ask for a concrete action that benefits them: type credentials, open a document, call a number, install a tool, sign a message, copy an address, or approve access.

If those three pieces line up, the victim may supply the final missing ingredient the attacker cannot safely create alone: a trusted human decision.

Why do phishing attacks still succeed despite modern defenses?

It is tempting to think phishing succeeds because users are careless. That explanation is too simple. A more accurate one is that phishing succeeds because modern digital systems ask humans to make trust decisions using weak signals under time pressure.

Some of those signals are genuinely weak. A sender's display name can be set to almost anything, so it can look trustworthy to a person even when protocol-level checks run underneath. A web page can visually imitate a trusted service. A wallet may show abbreviated addresses, opaque contract data, or signature prompts that are technically precise but not meaningfully understandable. In older advice, poor grammar was treated as a strong warning sign. That can still help sometimes, but CISA now notes that grammar is a less reliable signal in the era of AI-assisted writing. Attackers have improved.

Some of the problem is attentional, not intellectual. A classic usability study found that many users did not even look at browser-based cues such as the address bar or security indicators, and realistic phishing websites fooled a large share of participants. The lesson is not that users are irrational. It is that security cues often compete with stronger psychological cues: “your account will be locked,” “your invoice is overdue,” “your package failed delivery,” “your boss needs this urgently,” or “sign here to continue.” Humans are good at following narratives; phishing supplies the wrong narrative at exactly the wrong moment.

This also explains why experienced users still get caught. Skill helps, but phishing is not just a knowledge test. Attackers can hijack real email threads, abuse compromised accounts, send messages through legitimate third-party services, or imitate familiar workflows closely enough that the victim’s guard is lowered. MITRE ATT&CK describes phishing as electronically delivered social engineering and notes that it can arrive not only by email but also through services such as social platforms and via voice-based tactics.

What are the typical steps in a phishing attack?

Across platforms, there are two levers attackers repeatedly use: delivery and conversion. Delivery is how the lure reaches the victim. Conversion is how the victim is induced to take the action the attacker needs.

Delivery used to be associated mainly with email, and email is still central. But CISA’s public guidance is explicit that phishing messages can arrive by email, text, direct message on social media, or phone call. QR codes add another wrapper around the same idea: the victim scans instead of clicks, but the goal is the same. APWG has reported that criminals are sending large volumes of QR-code-bearing emails that direct people to phishing sites and malware.

Conversion depends on what the attacker wants. If the goal is credential theft, the victim is directed to a fake login page. If the goal is malware delivery, the message carries an attachment or link that leads to execution. If the goal is business fraud, the victim may be told to change bank details or initiate a wire transfer. If the goal is crypto theft, the victim may be asked to connect a wallet, reveal a seed phrase, sign an off-chain message, approve a token allowance, or copy a poisoned address from recent transaction history.

A worked example makes the pattern concrete. Imagine an employee receives a message that appears to come from Microsoft 365 saying their session has expired and they must re-authenticate within 30 minutes to avoid losing access to shared files. The email uses familiar branding and urgent language. The embedded link opens a page that looks almost identical to the real login portal. The employee enters username, password, and maybe even a one-time code. At that point, nothing “technical” looks broken from the victim’s point of view; they logged in somewhere that looked right. But the attacker now has the credentials and can reuse them immediately, perhaps to access mail, hijack threads, or launch further phishing from a compromised account.

Now translate the same structure into crypto. A user is told they must “verify” a wallet, claim an airdrop, relist an NFT, or approve a routine dApp action. The connected site presents a wallet prompt requesting an off-chain signature or token approval. The interface language suggests a harmless action, but the signed message actually authorizes later asset movement. The user did sign it; the signature is real. The deception lies in the meaning the human attached to the action.

What common phishing techniques should I watch for?

The usual classifications matter because they track what action the attacker is trying to extract.

At the broadest level, there is non-targeted phishing and targeted phishing. Non-targeted phishing is mass distribution: send enough lures and some fraction will work. Targeted phishing, often called spearphishing, is tailored to a specific person, company, or role. MITRE distinguishes these because the attacker’s preparation changes the quality of the lure. A generic payroll message and a thread-hijacked vendor conversation are not equally difficult to spot.

The technical payload also changes the shape of the attack. A spearphishing attachment tries to get the victim to open a file that executes code through macros, scripts, or exploit chains. A spearphishing link pushes the victim toward a credential-harvesting page or malware host. Spearphishing via service uses platforms other than traditional email: social networks, collaboration tools, marketplaces, and messaging systems. Voice phishing, sometimes called vishing, moves the trust manipulation into a phone call or callback workflow.

Business email compromise sits near phishing but deserves special attention because it often aims at money movement rather than malware. The attack may involve impersonating an executive, supplier, lawyer, or payroll contact to persuade staff to send funds or alter payment instructions. The phishing step is the trust-establishment step; the financial fraud comes after.

In crypto, the taxonomy shifts because the valuable action is often not “log in” but “sign” or “send.” Seed phrase phishing asks for the master secret directly. Signature phishing asks the user to sign an off-chain message that can later be used to move assets. MetaMask describes this pattern explicitly: a scammer obtains an off-chain signature and later uses it to steal funds, sometimes after a delay. Approval phishing gets the user to grant a token or NFT allowance to a malicious contract. Address poisoning manipulates transaction history so a victim later copies a lookalike address and sends funds to the attacker.

These are not different in essence from classic phishing. They are phishing adapted to what counts as authorization in a blockchain system.

What weaknesses do attackers exploit in phishing attacks?

| Weakness | Meaning | Why it helps the attacker | Best defense |
|---|---|---|---|
| Identity ambiguity | Brands and addresses look similar | Casual checks accept impersonation | SPF/DKIM/DMARC and independent verification |
| Action opacity | Requests are machine-precise, not human-readable | Users approve opaque payloads | Trusted displays and readable prompts |
| Attention scarcity | Decisions are made under time pressure | Urgency suppresses checking | Pause-and-verify flows |
| Irreversibility | Consequences are permanent or delayed | High payoff, little chance of recovery | Limit approvals and enable revocation |

Phishing attacks often look diverse on the surface, but they usually exploit one or more of four underlying weaknesses.

The first is identity ambiguity. Digital environments let attackers imitate names, brands, roles, and domains well enough to survive casual inspection. DMARC, SPF, and DKIM help reduce exact-domain spoofing in email, but even the DMARC standard is clear about its limit: it helps validate authorized use of a domain and combat certain forms of spoofing, yet it does not solve cousin domains or deceptive display names. In plain language, protocol authentication can prove “this mail is allowed to represent this domain,” but it cannot prove “this message is honest” or “this domain is the one the human intended.”
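DMARC's stated limit is easy to see in code. The sketch below is illustrative rather than any standard's algorithm: it flags a sender domain that is only a few character edits away from a trusted one (a "cousin" domain). The helper names and the `max_distance=2` threshold are assumptions; real lookalike detection also has to handle Unicode homoglyphs and subdomain tricks such as `microsoft.com.example.net`, which this does not.

```python
def levenshtein(a: str, b: str) -> int:
    """Edit distance: minimum single-character inserts, deletes, or substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete from a
                            curr[j - 1] + 1,      # insert into a
                            prev[j - 1] + cost))  # substitute
        prev = curr
    return prev[-1]


def classify_sender(domain: str, trusted: set[str], max_distance: int = 2) -> str:
    """Hypothetical check: exact match, near-miss lookalike, or unknown."""
    domain = domain.lower().rstrip(".")
    if domain in trusted:
        return "exact match"
    for good in sorted(trusted):
        if levenshtein(domain, good) <= max_distance:
            return f"lookalike of {good}"
    return "unknown"


print(classify_sender("rnicrosoft.com", {"microsoft.com"}))  # prints: lookalike of microsoft.com
```

Mail from `rnicrosoft.com` can pass SPF, DKIM, and DMARC for its own domain, which is exactly why a separate "is this the domain the human intended?" check is needed.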

The second is action opacity. Many systems expose the user to requests they cannot easily interpret. A wallet prompt may show contract interactions or signatures in a form that is machine-precise but human-unfriendly. That opacity is why blind signing is dangerous: the user approves bytes, not meaning. Product guidance from Ledger frames clear signing as a mitigation precisely because it tries to translate complex transactions into human-readable details on a trusted device screen.
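To make "bytes, not meaning" concrete, here is a minimal Python sketch of the idea behind clear signing; it is not Ledger's implementation. It decodes raw calldata for two standard ERC-20 functions, `transfer(address,uint256)` (selector `0xa9059cbb`) and `approve(address,uint256)` (selector `0x095ea7b3`), into a sentence, and falls back to an explicit blind-signing warning for anything else. Amounts stay in the token's smallest unit, because calldata alone does not say which token is involved or how many decimals it uses; real clear signing needs that extra metadata.

```python
KNOWN_SELECTORS = {
    "a9059cbb": "transfer",  # transfer(address,uint256)
    "095ea7b3": "approve",   # approve(address,uint256)
}
MAX_UINT256 = 2**256 - 1


def describe_calldata(calldata: str) -> str:
    data = calldata.lower().removeprefix("0x")
    selector, args = data[:8], data[8:]
    name = KNOWN_SELECTORS.get(selector)
    if name is None or len(args) != 128:
        return f"UNRECOGNIZED call 0x{selector}: this would be blind signing"
    # Two 32-byte words: an address (low 20 bytes of word 1) and a uint256 amount.
    target = "0x" + args[24:64]
    amount = int(args[64:128], 16)
    shown = "UNLIMITED" if amount == MAX_UINT256 else f"{amount} base units"
    if name == "transfer":
        return f"Send {shown} of this token to {target}"
    return f"Let {target} spend {shown} of this token at any time"


spender = "11" * 20
print(describe_calldata("0x095ea7b3" + "0" * 24 + spender + "f" * 64))
```

The same hex string that a blind-signing prompt would show as opaque data becomes "Let 0x1111… spend UNLIMITED of this token at any time", which is a decision a person can actually evaluate.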

The third is attention scarcity. Security often depends on careful inspection at moments when people are busy, interrupted, or stressed. A good phishing lure does not need perfect deception if it can create enough urgency to suppress checking.

The fourth is irreversibility or delayed consequence. If a phished password can be reset quickly, the window of harm may be limited. If a seed phrase is revealed, an off-chain signature remains valid, or a blockchain transfer is finalized, recovery is much harder. Solana’s user guidance states this starkly: transactions are permanent and cannot be reversed. Revoke.cash similarly notes that token approvals can remain active indefinitely unless revoked, which means the harmful consequence may arrive well after the deceptive moment.

How can organizations and users reduce phishing risk?

| Control | What it stops | Main benefit | Main limit |
|---|---|---|---|
| User vigilance | Conversion actions (clicks, replies) | Immediate interruption of lures | Attention-dependent and brittle |
| Multifactor authentication | Credential reuse and takeover | Reduces account takeover risk | Can be bypassed by advanced phishing |
| Email authentication | Exact-domain spoofing | Makes impersonation harder | Does not stop lookalike domains |
| Detection correlation | Post-delivery compromise chains | Finds compromise earlier | Requires integrated telemetry |
| Trusted device approvals | Blind signing and UI tampering | Shows untampered transaction meaning | Needs vendor and dApp adoption |

Because phishing exploits both human judgment and technical pathways, good defense is layered. No single control is enough.

At the user level, the simplest advice remains surprisingly durable. CISA recommends three steps: recognize, resist, and delete. Recognize means looking for common signs such as urgent emotional language, requests for personal or financial information, suspicious links, shortened URLs, and mismatched addresses. Resist means not clicking, not opening attachments, and reporting the message instead. Delete means removing it without replying or using the “unsubscribe” link in a suspicious message.
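The "recognize" step can be partly automated as a first-pass filter. The sketch below checks a message body and its links for a few of the signs CISA lists: urgent wording, requests for sensitive information, shortened URLs, and link text that names a different domain than the link actually opens. The phrase lists and shortener hosts are illustrative assumptions, and an empty result proves nothing; the point is only to show how those cues translate into checks.

```python
import re
from urllib.parse import urlparse

# Illustrative lists only; real filters use far richer signals.
URGENT_PHRASES = ("urgent", "immediately", "account will be locked",
                  "within 30 minutes", "overdue", "verify your account")
SENSITIVE = re.compile(r"\b(password|seed phrase|recovery phrase|bank details)\b")
SHORTENER_HOSTS = {"bit.ly", "tinyurl.com", "t.co"}


def warning_signs(body: str, links: list[tuple[str, str]]) -> list[str]:
    """`links` holds (visible link text, actual href) pairs taken from the message."""
    signs = []
    text = body.lower()
    signs += [f"urgent language: {p!r}" for p in URGENT_PHRASES if p in text]
    if SENSITIVE.search(text):
        signs.append("asks for sensitive information")
    for shown, href in links:
        host = (urlparse(href).hostname or "").lower()
        if host in SHORTENER_HOSTS:
            signs.append(f"shortened URL: {href}")
        # If the visible text looks like a domain, it should match where the link goes.
        shown_host = urlparse(shown if "://" in shown else "https://" + shown).hostname
        if shown_host and "." in shown_host and " " not in shown_host and shown_host != host:
            signs.append(f"link text shows {shown_host} but opens {host}")
    return signs


for sign in warning_signs(
        "Your account will be locked. Enter your password within 30 minutes.",
        [("login.microsoft.com", "https://login.micros0ft-support.net/auth"),
         ("Track package", "https://bit.ly/abc")]):
    print(sign)
```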

That advice works because it interrupts the attacker’s conversion step. Phishing needs an action. If the user does not supply the action, the lure fails.

But user vigilance alone is brittle, so systems need technical controls that narrow what phishing can accomplish. For accounts, multifactor authentication matters because a stolen password alone may no longer be sufficient. CISA explicitly recommends MFA, and this is one of the clearest examples of changing the economics of an attack. The attacker must now capture or bypass an additional factor, which does not make phishing impossible but often makes commodity credential theft much less useful.
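One reason MFA "does not make phishing impossible" is visible in how a common second factor works. A time-based one-time password (TOTP, RFC 6238) is an HMAC of a shared secret and the current 30-second time step, so nothing in the code identifies the site it was typed into. A phishing page that relays the code to the real login page within the same time step gets a working code, which is the scenario in the Microsoft 365 example above. The sketch uses the RFC's HMAC-SHA1 variant and its published test secret.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """RFC 6238 TOTP using HMAC-SHA1 over the time-step counter."""
    counter = unix_time // step
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F                     # RFC 4226 dynamic truncation
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


secret = b"12345678901234567890"                # RFC 6238 test secret
# A code captured at t=60 is still the valid code at t=89: same 30-second step.
assert totp(secret, 60) == totp(secret, 89)
print(totp(secret, 59, digits=8))               # RFC 6238 test vector: 94287082
```

The short validity window limits how long a stolen code is useful, but it does not stop a real-time relay.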

For email ecosystems, SPF, DKIM, and DMARC reduce straightforward sender spoofing by letting receivers validate whether a domain authorized the sending infrastructure and whether identifiers align properly. NIST recommends these mechanisms as core anti-phishing controls. Their strength is clear: they make exact-domain impersonation harder and more visible. Their limit is equally important: they do not stop a message sent from a lookalike domain, a compromised legitimate account, or a real service being abused for malicious outreach.
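The phrase "whether identifiers align properly" refers to DMARC alignment (RFC 7489): a message passes DMARC only if SPF or DKIM passes *and* the domain that mechanism authenticated matches the visible `From:` domain, either exactly (strict, `s`) or at the Organizational Domain level (relaxed, `r`), as set by the record's `aspf` and `adkim` tags. The sketch below is simplified: its `org_domain` helper uses a naive "last two labels" rule instead of the Public Suffix List, which real implementations use.

```python
def org_domain(domain: str) -> str:
    # ASSUMPTION: naive "last two labels" rule. Real DMARC derives the
    # Organizational Domain from the Public Suffix List (this breaks on e.g. co.uk).
    return ".".join(domain.lower().rstrip(".").split(".")[-2:])


def aligned(from_domain: str, auth_domain: str, mode: str = "r") -> bool:
    """Strict ("s") needs an exact match; relaxed ("r") needs the same Organizational Domain."""
    if mode == "s":
        return from_domain.lower().rstrip(".") == auth_domain.lower().rstrip(".")
    return org_domain(from_domain) == org_domain(auth_domain)


def dmarc_pass(from_domain: str,
               spf_pass: bool, spf_domain: str,
               dkim_pass: bool, dkim_domain: str,
               aspf: str = "r", adkim: str = "r") -> bool:
    """DMARC passes if SPF or DKIM passes AND its authenticated domain aligns with From:."""
    spf_ok = spf_pass and aligned(from_domain, spf_domain, aspf)
    dkim_ok = dkim_pass and aligned(from_domain, dkim_domain, adkim)
    return spf_ok or dkim_ok


# Spoofing the real domain from attacker infrastructure fails alignment...
print(dmarc_pass("bank.example", True, "attacker.example", True, "attacker.example"))  # False
# ...but an attacker-owned lookalike domain with correct SPF/DKIM passes cleanly.
print(dmarc_pass("bank-example.net", True, "bank-example.net", True, "bank-example.net"))  # True
```

The second call is the limit described above in miniature: DMARC vouches for the domain in the `From:` header, not for whether it is the domain the reader expected.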

For organizations, detection improves when defenders correlate message delivery with downstream behavior. MITRE notes that suspicious inbound emails become much more meaningful when followed by process execution, file creation, network activity, odd logins, or unusual MFA behavior. This is a deeper point than simple spam filtering. The most reliable evidence that a phish mattered is often not the message itself but the sequence that follows it.
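The correlation idea can be sketched as a simple join on user and time. In the example below, the event kinds, the `Event` record, and the one-hour window are hypothetical choices for illustration; production systems do this inside a SIEM over much richer telemetry. A suspicious email on its own raises no alert here; it only matters when the same user shows follow-up activity shortly afterwards.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Event:
    user: str
    time: datetime
    kind: str  # hypothetical labels, e.g. "suspicious_email", "new_login"


# Illustrative follow-up signals, loosely mirroring the ones MITRE mentions.
FOLLOW_UP_KINDS = {"process_start", "file_created", "network_connection",
                   "new_login", "mfa_change"}


def correlate(events: list[Event], window: timedelta = timedelta(hours=1)):
    """Pair each suspicious email with that user's follow-up events inside `window`."""
    alerts = []
    for mail in (e for e in events if e.kind == "suspicious_email"):
        follow_ups = [e for e in events
                      if e.user == mail.user
                      and e.kind in FOLLOW_UP_KINDS
                      and mail.time <= e.time <= mail.time + window]
        if follow_ups:
            alerts.append((mail, follow_ups))
    return alerts


t0 = datetime(2024, 5, 1, 9, 0)
events = [
    Event("alice", t0, "suspicious_email"),
    Event("alice", t0 + timedelta(minutes=10), "new_login"),
    Event("alice", t0 + timedelta(minutes=12), "mfa_change"),
    Event("bob", t0, "suspicious_email"),
    Event("bob", t0 + timedelta(hours=3), "new_login"),  # outside the window
]
for mail, follow_ups in correlate(events):
    print(mail.user, [e.kind for e in follow_ups])
```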

Training also matters, though it should be understood correctly. The goal is not to turn users into protocol analysts. It is to make common attack patterns familiar enough that suspicion activates sooner. NIST’s Phish Scale reflects this by focusing on the human difficulty of detecting a phishing email in awareness programs. That framing is useful because some phish are genuinely harder than others; measuring difficulty is more honest than assuming all failures come from negligence.

How does phishing target crypto users, wallets, and approvals?

| Phish type | Goal | Mechanism | Mitigation |
|---|---|---|---|
| Seed phrase theft | Total wallet compromise | Ask user to reveal recovery phrase | Never enter it online; use a hardware wallet |
| Signature phishing | Replayable off-chain authorization | Collect an off-chain signature for later use | Verify intent and use device approvals |
| Approval phishing | Malicious contract token access | Grant broad token allowance to contract | Limit allowances and revoke approvals |
| Address poisoning | Send funds to lookalike address | Insert lookalike into transaction history | Verify full address and use allowlists |

Crypto systems make phishing unusually consequential because authorization is often direct and final. There may be no central operator who can reverse a transfer, freeze a fraudulent withdrawal, or unwind a malicious approval. That changes both attacker incentives and defense design.

The most obvious crypto phishing attack asks for the seed phrase. This is the wallet’s master recovery secret. Solana’s safety guidance is unambiguous: it should never be entered on websites, stored in email or cloud services, or shared with anyone. A phish that obtains the seed phrase is not really “breaking into” the wallet; it is getting the user to hand over total control.

A subtler attack asks for a signature rather than the seed phrase. MetaMask’s guidance explains why this is dangerous: off-chain signatures are not broadcast on-chain and can be reused later by the dApp that collected them. The user may think they are signing a harmless login, listing, or verification step, while the actual signed message grants broad permission. Because the signature itself is legitimate, blockchain security does not reject it. Again, the machine sees valid authorization; only the human story was false.
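A wallet or extension can at least surface what an off-chain signature request contains before the user signs. This sketch inspects an `eth_signTypedData_v4`-style JSON payload (its `primaryType` and `message` fields) and warns about EIP-2612-style `Permit` fields: a `spender`, an unlimited `value`, and a distant `deadline`. The list of type names, the 30-day threshold, and the helper names are assumptions for illustration, and a payload that produces no warnings is not thereby safe.

```python
import json
import time
from typing import Optional

MAX_UINT256 = 2**256 - 1
# Illustrative, not exhaustive: EIP-2612 "Permit" plus Permit2-style type names.
ASSET_MOVING_TYPES = {"Permit", "PermitSingle", "PermitBatch", "PermitTransferFrom"}


def as_int(value) -> int:
    """Typed-data numbers may arrive as JSON numbers, decimal strings, or 0x-hex strings."""
    return int(value, 0) if isinstance(value, str) else int(value)


def review_typed_data(payload_json: str, now: Optional[int] = None) -> list[str]:
    payload = json.loads(payload_json)
    now = int(time.time()) if now is None else now
    message = payload.get("message", {})
    warnings = []
    if payload.get("primaryType") in ASSET_MOVING_TYPES:
        warnings.append(f"{payload['primaryType']}: can authorize token movement later")
    if "spender" in message:
        warnings.append(f"grants spending rights to {message['spender']}")
    if "value" in message and as_int(message["value"]) == MAX_UINT256:
        warnings.append("unlimited amount")
    if "deadline" in message and as_int(message["deadline"]) - now > 30 * 24 * 3600:
        warnings.append("stays valid for more than 30 days")
    return warnings


request = json.dumps({"primaryType": "Permit",
                      "message": {"owner": "0x01", "spender": "0xabc",
                                  "value": str(MAX_UINT256), "nonce": 0,
                                  "deadline": 1_700_000_000 + 365 * 24 * 3600}})
for w in review_typed_data(request, now=1_700_000_000):
    print(w)
```

A prompt described to the user as "verify your wallet" that triggers all four warnings is the gap between human story and machine meaning that this section describes.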

Another variant asks for a token approval. In many smart contract systems, interacting with a dApp involves granting a contract permission to move tokens on the user’s behalf. This is useful for trading, lending, and marketplace actions. It is also dangerous if the approval is broader than the user realizes or if it is granted to a malicious contract. Revoke.cash emphasizes that approvals can remain active indefinitely, which means a single successful phishing event can create long-lived risk.
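For ERC-20 tokens, an allowance is set with `approve(spender, amount)`, and revoking it amounts to calling `approve(spender, 0)`; NFT operator approvals use a different call (`setApprovalForAll`). The sketch below ABI-encodes that calldata and grades a requested allowance against what the user actually intends to spend. The helper names and wording are hypothetical; the selector `0x095ea7b3` and the 32-byte argument padding follow the standard ERC-20 ABI.

```python
MAX_UINT256 = 2**256 - 1
APPROVE_SELECTOR = "095ea7b3"  # first 4 bytes of keccak256("approve(address,uint256)")


def encode_approve(spender: str, amount: int) -> str:
    """ABI-encode calldata for ERC-20 approve(spender, amount)."""
    addr = spender.lower().removeprefix("0x")
    if len(addr) != 40:
        raise ValueError("spender must be a 20-byte hex address")
    int(addr, 16)  # raises ValueError if addr is not valid hex
    if not 0 <= amount <= MAX_UINT256:
        raise ValueError("amount must fit in uint256")
    # Each argument is left-padded to a 32-byte (64 hex character) word.
    return "0x" + APPROVE_SELECTOR + addr.rjust(64, "0") + format(amount, "064x")


def encode_revoke(spender: str) -> str:
    """Revoking an ERC-20 allowance means approving zero for that spender."""
    return encode_approve(spender, 0)


def assess_allowance(requested: int, intended_spend: int) -> str:
    if requested == MAX_UINT256:
        return "unlimited: every future transferFrom by this spender is pre-approved"
    if requested > intended_spend:
        return f"broader than needed by {requested - intended_spend} base units"
    return "scoped to the intended spend"


print(assess_allowance(MAX_UINT256, 100))
print(encode_revoke("0x" + "ab" * 20))
```

Because an unrevoked allowance persists, the "unlimited" case is the one that turns a single phishing event into long-lived risk.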

Then there is address poisoning, which is especially instructive because it shows phishing without a fake website or explicit credential prompt. Research measuring Ethereum and BNB Smart Chain found large-scale campaigns where attackers generated lookalike addresses and inserted them into victims’ transaction histories using tiny transfers, zero-value transfers, or counterfeit-token transfers. The hope is that the victim later copies the poisoned address from recent history instead of the intended recipient. This is still phishing in the broader sense: the attacker manipulates what the user believes they are selecting.
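Why copying from history works for the attacker is clearest when the abbreviation is written out. The sketch assumes a wallet UI that shows the first 6 and last 4 characters of an address (a hypothetical but common layout) and flags history entries that abbreviate identically to the intended recipient while being a different address.

```python
def short(addr: str, head: int = 6, tail: int = 4) -> str:
    """Abbreviate an address the way many wallet UIs do, e.g. 0x1234...abcd."""
    return f"{addr[:head]}...{addr[-tail:]}"


def poisoning_suspects(intended: str, history: list[str],
                       head: int = 6, tail: int = 4) -> list[str]:
    """History entries that abbreviate identically to `intended` but are different addresses."""
    target = intended.lower()
    return [a for a in history
            if a.lower() != target
            and short(a.lower(), head, tail) == short(target, head, tail)]


intended = "0x1234" + "0" * 32 + "abcd"
poisoned = "0x1234" + "f" * 32 + "abcd"   # attacker-generated lookalike
print(short(intended), short(poisoned))   # both print as 0x1234...abcd
print(poisoning_suspects(intended, [intended, poisoned]))
```

Only the full comparison distinguishes the two addresses; anything a user checks "at a glance" is exactly what the attacker generated a match for.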

Notice what all of these crypto examples share. The attacker is not defeating the blockchain’s signature verification. They are getting the victim to produce a valid signature, valid approval, or valid transfer under false beliefs.

How do trusted displays and clear signing reduce phishing risk?

Once you see phishing as a mismatch between machine-valid action and human-understood intent, a design principle emerges: security improves when the system can present the meaning of an action clearly on a surface the attacker cannot easily tamper with.

That is why hardware wallets help. Their core value is not just key isolation, though that matters. It is also that approval occurs on a separate trusted display, reducing the chance that malware on the host machine can rewrite what the user sees. Ledger's clear-signing guidance pushes this further by arguing that users should see human-readable details rather than opaque payloads. It is like reading a contract before signing it instead of being shown a page of hashes. The comparison has limits, though: even a well-presented transaction summary depends on accurate metadata and careful reading, so it does not eliminate deception entirely.

Wallet and exchange UX can also reduce mistakes by showing more address context, flagging suspicious history entries, supporting allowlists, and warning on unusual approvals. The address-poisoning research specifically suggests wallet-interface improvements because abbreviated addresses and history-driven habits create the opening attackers exploit.
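Two of those UX ideas, allowlists and showing full address context, fit in a few lines. In this sketch the allowlist format (lower-cased address mapped to a user label) and the 4-character grouping are assumptions. Case is ignored for the lookup, which also means this sketch does not validate EIP-55 mixed-case checksums.

```python
def chunked(addr: str, size: int = 4) -> str:
    """Render the full address in small groups so every character can be compared."""
    if addr.lower().startswith("0x"):
        prefix, body = "0x ", addr[2:]
    else:
        prefix, body = "", addr
    return prefix + " ".join(body[i:i + size] for i in range(0, len(body), size))


def check_destination(dest: str, allowlist: dict[str, str]) -> str:
    """`allowlist` maps lower-cased saved addresses to user-chosen labels (hypothetical format)."""
    label = allowlist.get(dest.lower())
    if label is not None:
        return f"OK: saved contact '{label}'"
    return f"WARNING: not on your allowlist. Full address: {chunked(dest)}"


saved = {"0x" + "ab" * 20: "Savings"}
print(check_destination("0x" + "AB" * 20, saved))
print(check_destination("0x" + "cd" * 20, saved))
```

The design choice is to make the safe path (a saved, labeled contact) effortless and to force the unfamiliar path to display every character instead of an abbreviation.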

The deeper lesson is that phishing is partly a user-interface problem. Bad security UX forces users to guess. Good security UX makes the intended meaning legible.

What immediate steps should I take if I suspect a phishing attempt?

The first rule is simple: pause the action. Most phishing campaigns depend on momentum. If a message demands urgency, treat that urgency itself as evidence. Do not click links or open attachments from a suspicious message. Do not call the provided number. Do not enter a seed phrase on a website. Do not sign a wallet prompt you do not understand.

Then switch channels. If the message claims to come from your bank, employer, exchange, or wallet provider, navigate to the official site yourself or use a known-good contact method. CISA recommends reporting suspected phishing through built-in report functions or organizational channels rather than interacting with the message.

If the concern is crypto-specific and you think you may already have approved something malicious, speed matters. Check and revoke token approvals if possible, move assets from an at-risk wallet when appropriate, and stop using the compromised account or connected site. MetaMask points users toward approval-management and scanning tools, and Revoke.cash focuses specifically on revocation because harmful permissions can persist long after the original phish.

The recovery burden can be substantial even when losses are partially mitigated. That is another reason phishing matters: the damage is not only monetary. It includes time, operational disruption, account lockouts, incident handling, and lingering uncertainty about what was exposed.

Conclusion

Phishing is best understood as a way of making a human being authenticate the wrong story. The attacker does not always need to break the system; they need the victim to use the system on the attacker’s terms.

That is why phishing spans email, texts, phone calls, websites, collaboration tools, and crypto wallets. It is also why the right defenses are layered: better identity signals, clearer interfaces, MFA, domain authentication, safer wallet signing, user training, and fast reporting. The enduring fact to remember is this: a digitally valid action is not the same thing as an intentionally informed one. Phishing lives in that gap.

How do you secure your crypto setup before trading?

Secure your crypto setup by combining basic account hardening with careful destination and approval checks before you trade. On Cube Exchange, fund your account and use the platform’s non-custodial MPC workflow to trade while keeping control of signing decisions.

- Enable multifactor authentication (MFA) for your Cube account and confirm your recovery/contact methods.

- Fund your Cube account using a trusted fiat on‑ramp or a direct crypto transfer you initiated; verify the on‑chain deposit by checking the transaction ID on a block explorer.

- If you use an external device or wallet, connect it and verify transaction details on the device before approving (check human‑readable amounts and destination).

- Before sending funds or approving a token transfer, confirm the full destination address character by character against a known-good source, not just the first and last few characters, which address-poisoning attacks deliberately imitate. For approvals, check the allowance scope and revoke any overly broad permissions.

Frequently Asked Questions

Phishing is primarily a decision‑manipulation problem: attackers persuade a real person to perform a valid system action (enter credentials, sign a message, approve a transfer) for a malicious reason, so the system sees a formally correct authorization even though the human’s intent was hijacked.

Domain‑level email controls (SPF/DKIM/DMARC) make exact‑domain spoofing harder but do not stop lookalike domains, forged display names, compromised legitimate accounts, or messages sent through abused third‑party services, so they reduce some attacks but do not eliminate phishing.

Crypto phishing often asks for a seed phrase, an off‑chain signature, or a token approval; these are legitimate authorizations that attackers reuse or abuse later, and because blockchain actions are often irreversible and approvals can remain active indefinitely, the consequences are especially hard to undo.

Address poisoning inserts attacker addresses into a user’s visible transaction history (via tiny or zero‑value transfers or counterfeit tokens) so victims later copy a poisoned address and send funds to the attacker instead of the intended recipient.

Hardware wallets and clear signing improve safety by moving approval displays to a tamper-resistant device and showing human-readable transaction details, which reduces blind signing and host-side manipulation, but they cannot prevent every social-engineering trick and require ecosystem support for accurate metadata.

Pause and verify using a known-good channel, report the message, and if you fear a wallet approval or seed exposure, act quickly: check and revoke token approvals where possible, move assets from a compromised wallet, and stop using the affected account. Revocation and moving assets can limit future misuse but cannot always undo an immediate theft.

Training should not aim to make users protocol experts but to make common phishing patterns and suspicious cues familiar so suspicion activates earlier; measuring phishing difficulty (e.g., NIST’s Phish Scale) and combining training with technical controls improves resilience because user vigilance alone is brittle.

Defenders need layered controls: multifactor authentication to reduce value of stolen credentials, domain authentication for email, detection that correlates suspicious messages with downstream behavior (execution, unusual logins, MFA anomalies), safer UI/UX for approvals, and fast reporting and incident playbooks - no single control fully prevents phishing.
