What is Multi-Party Computation (MPC)?

Learn what multi-party computation (MPC) is, how it protects private inputs during joint computation, and why it powers threshold signing and MPC wallets.

Introduction

Multi-party computation (MPC) is a way for multiple parties to compute something together without revealing their private inputs to one another beyond what the final output necessarily reveals. That sounds almost contradictory at first. Computation usually seems to require gathering data in one place: if you want a result based on everyone's secrets, someone must see the secrets. MPC exists because that intuition is wrong.

The core idea is simple enough to state and surprisingly deep in its consequences. Instead of moving secrets to the computation, MPC distributes the computation across parties so that each party learns only what it is supposed to learn. In the cleanest formulation, the parties reveal nothing but the output. That gives MPC two jobs at once: preserve privacy and still produce a correct joint result.

This matters well beyond academic cryptography. The same underlying idea can let institutions compute statistics across sensitive datasets, let committees control assets without any single person holding the whole key, and let modern custody systems sign transactions without ever assembling a full private key in one machine. In blockchain systems, that last application is especially important: MPC is one of the main techniques behind threshold wallets and threshold signing.

The useful question is not just what MPC is, but what problem it solves that ordinary encryption does not. Encryption protects data while it is stored or transmitted. But eventually, if you want to use the data, someone usually decrypts it and sees it. MPC aims at the harder point in the lifecycle: computation itself. It asks whether parties can get the benefit of a shared computation without creating a moment where all the secrets are exposed.

What problem does MPC solve and why is a single point of trust dangerous?

Suppose three companies want to know the average salary across all of them, but none wants to disclose its internal payroll data to the others. Or suppose several operators jointly control funds and want any sufficiently large subset (say, two out of three) to be able to authorize a transfer, without ever placing the whole signing key in one device. In both cases, there is a common pattern: the group wants a shared outcome, but the inputs are individually sensitive.

Without MPC, the usual solutions are organizational rather than cryptographic. Everyone sends data to a trusted party. Or one party collects encrypted data, decrypts it locally, computes, and promises discretion. Or each party exposes only partial information and hopes the leakage is acceptable. All of these create a familiar bottleneck: there is a place where trust concentrates.

That concentration is the real problem MPC addresses. If there is a single place where the secrets become visible, then that place becomes the main target for compromise, coercion, insider abuse, operational error, and accidental leakage. The security of the whole system collapses to the security of the most trusted box or person.

MPC changes the shape of the system. Instead of saying, “we must trust one party with everything,” it says, “we can structure the protocol so that each party sees only a fragment, and correctness emerges from interaction.” That is the essential shift. MPC is not primarily about hiding data at rest. It is about avoiding full exposure during collaborative computation.

How does the 'trusted black box' model explain MPC security?

The cleanest way to understand MPC is to start with an imaginary machine. Each party privately feeds its input into a perfectly trusted black box. The box computes a function on those inputs and returns the prescribed output to the appropriate parties. No one sees anyone else’s input. No one can tamper with the computation. No one learns more than what the output itself implies.

That black-box thought experiment is not just a teaching tool. It is the standard way security is defined. In modern MPC, a real protocol is considered secure if interacting with actual cryptographic messages is, from the adversary’s perspective, no worse than interacting through this ideal trusted party. This is the ideal/real simulation paradigm: if anything harmful could happen in the real protocol, there should be a corresponding way to explain it as something that could also happen in the ideal world.

Why define security this way? Because it captures the right target. Real protocols are messy. Parties send messages, randomness is used, shares are created, checks are performed, some parties may deviate, and networks may delay or drop messages. Rather than trying to enumerate every possible leakage pattern directly, the simulation approach asks a simpler question: does the protocol behave as if the trusted black box had done the work? If yes, then the protocol has achieved the intended abstraction.

This also clarifies what MPC does not guarantee. If the function’s output itself leaks something sensitive, MPC does not magically fix that. The protocol protects the process of computing, not the semantic consequences of the output. Likewise, in the usual ideal model, parties are allowed to choose any inputs they want. So if an application needs guarantees that inputs are well-formed or honestly sourced, it must add those checks separately.
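The ideal world described above can be written down directly, which makes it clearer what a real protocol is supposed to emulate. The following is a minimal sketch, not a protocol: `ideal_functionality`, `average`, and the salary figures are hypothetical names and made-up data chosen for illustration.

```python
from typing import Callable, Dict, List

def ideal_functionality(inputs: Dict[str, int],
                        f: Callable[[List[int]], float]) -> Dict[str, dict]:
    """Hypothetical trusted box: it sees every input and hands back only f's output."""
    output = f(list(inputs.values()))
    # Each party's view is its own input plus the output, and nothing else.
    return {party: {"own_input": x, "output": output} for party, x in inputs.items()}

def average(values: List[int]) -> float:
    return sum(values) / len(values)

# Made-up example data for three companies.
salaries = {"A": 90_000, "B": 120_000, "C": 150_000}
views = ideal_functionality(salaries, average)
print(views["A"])
```

Note that the box accepts whatever inputs it is given. Nothing in this model checks that `salaries["A"]` is truthful, which is why input validation has to be added separately when an application needs it.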

How does MPC compute on private inputs without revealing them?

The surprising part of MPC is not the security definition but the mechanism. How can people compute on hidden inputs without revealing them?

The most common answer begins with secret sharing. Instead of giving a secret to one place, a party splits it into several pieces called shares and distributes them among participants. Each share by itself is designed to reveal nothing useful, but a sufficiently large authorized subset of shares can be combined to recover the secret or, more importantly for MPC, to help compute on it.

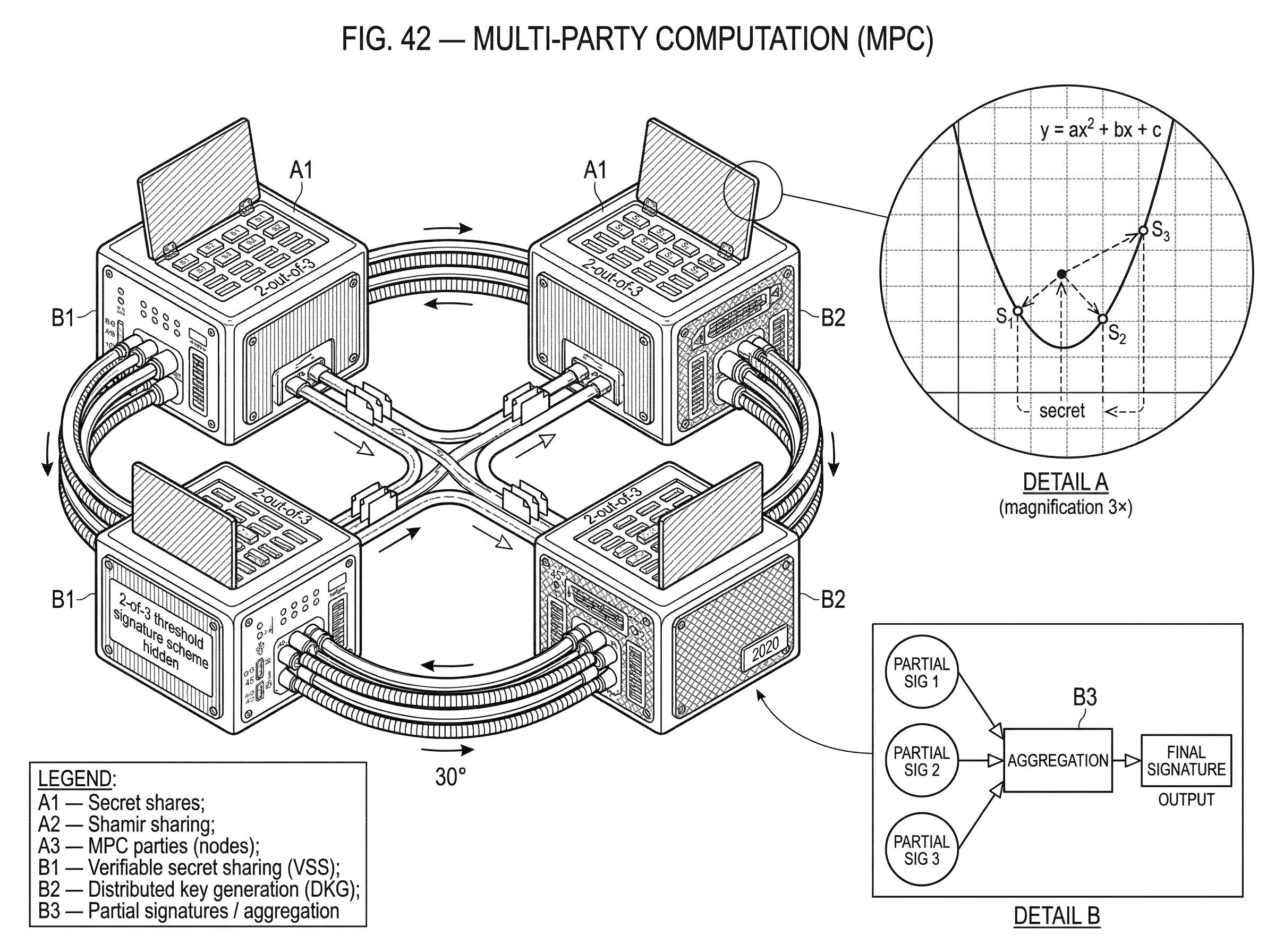

A canonical example is Shamir secret sharing. Here the secret is embedded as the constant term of a random polynomial over a finite field, and each participant receives the polynomial evaluated at a different nonzero point. The important invariant is this: any large enough set of points determines the polynomial and therefore the secret, but any too-small set leaves the secret completely undetermined. That is not just computationally hard to break; in the standard model it is information-theoretically hidden.

The mechanism is easier to see with a concrete story. Imagine a secret number s. To create a 2-out-of-3 sharing, the dealer chooses a random line q(x) = s + a*x, where a is random, and gives party 1 the value q(1), party 2 the value q(2), and party 3 the value q(3). Any two parties can reconstruct the line and read off q(0) = s. But a single share is compatible with every possible secret, because for any candidate s', there is exactly one line through the known point whose value at zero is s'.
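The 2-out-of-3 story can be sketched in a few lines of Python. This is an illustration under stated assumptions: the field modulus `P` and the helper names `share_2_of_3`, `reconstruct`, and `slope_explaining` are choices made here, not part of any particular library.

```python
import secrets

P = 2**127 - 1  # prime field modulus (an illustrative choice)

def share_2_of_3(secret: int) -> dict:
    """Deal q(1), q(2), q(3) for the random line q(x) = secret + a*x mod P."""
    a = secrets.randbelow(P)
    return {x: (secret + a * x) % P for x in (1, 2, 3)}

def reconstruct(point_1: tuple, point_2: tuple) -> int:
    """Recover q(0) from any two points (x, q(x)) on the line."""
    (x1, y1), (x2, y2) = point_1, point_2
    slope = (y2 - y1) * pow((x2 - x1) % P, -1, P) % P
    return (y1 - slope * x1) % P

def slope_explaining(share_point: tuple, candidate_secret: int) -> int:
    """The unique slope that makes a single share consistent with a candidate secret."""
    x, y = share_point
    return (y - candidate_secret) * pow(x, -1, P) % P

shares = share_2_of_3(42)
print(reconstruct((1, shares[1]), (3, shares[3])))  # 42

# A single share fits any candidate secret: here, the candidate 7.
a_alt = slope_explaining((2, shares[2]), 7)
print((7 + a_alt * 2) % P == shares[2])  # True
```

The last two lines are the information-theoretic argument in miniature: whatever secret an observer guesses, there is a slope that explains the one share they hold.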

That explains how to store a secret distributively. MPC goes further: it lets parties compute on shares so that the final result is itself obtained without exposing the underlying inputs.

The simplest operations behave very naturally. If two secrets are shared in compatible ways, then parties can often add their local shares, and the result is a valid share of the sum. That means the global computation “add the secrets” can be performed by local work plus a reconstruction step at the end. Multiplication is harder, because multiplying hidden values tends to increase the algebraic complexity of the sharing. Much of MPC protocol design is about handling this difficulty efficiently and securely.
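A small experiment shows both halves of that claim with Shamir shares. It is a sketch assuming an honest dealer and honest parties; `deal` and `interpolate_at_zero` are illustrative helper names. Adding shares locally keeps the polynomial degree at 1, so any two sums still reconstruct. Multiplying shares locally yields points on a degree-2 polynomial, so two points are no longer enough and all three are needed.

```python
import secrets

P = 2**61 - 1  # prime field modulus (an illustrative choice)

def deal(secret: int, degree: int, n: int) -> dict:
    """Shamir-share `secret` with a random polynomial of the given degree."""
    coeffs = [secret % P] + [secrets.randbelow(P) for _ in range(degree)]
    return {x: sum(c * pow(x, k, P) for k, c in enumerate(coeffs)) % P
            for x in range(1, n + 1)}

def interpolate_at_zero(points: dict) -> int:
    """Lagrange-interpolate the polynomial through `points` and evaluate it at 0."""
    total = 0
    for xi, yi in points.items():
        num, den = 1, 1
        for xj in points:
            if xj != xi:
                num = num * (-xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P
    return total

a_shares = deal(20, degree=1, n=3)
b_shares = deal(22, degree=1, n=3)

# Addition: each party adds its own two shares, with no communication.
sum_shares = {x: (a_shares[x] + b_shares[x]) % P for x in a_shares}
print(interpolate_at_zero({1: sum_shares[1], 3: sum_shares[3]}))  # 42

# Multiplication: local products lie on a degree-2 polynomial.
prod_shares = {x: a_shares[x] * b_shares[x] % P for x in a_shares}
print(interpolate_at_zero(prod_shares))  # 440, but only with all three points
```

The degree growth is the difficulty in concrete form: after one local multiplication a 2-out-of-3 sharing has silently become a 3-out-of-3 sharing, and a real protocol needs an interactive step to bring the degree back down.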

How does MPC scale from arithmetic operations to arbitrary computations?

Once the secret-sharing picture clicks, the rest of MPC becomes easier to organize. A computation can be represented as a circuit made of simple gates such as addition, multiplication, comparisons, or boolean operations. If parties know how to evaluate each gate on hidden values, they can compose those steps into evaluation of the whole function.

This is why the theory says that, in a broad sense, any function can be securely computed. The statement is not that every function is equally efficient, or that every setting allows the same guarantees. It is that secure joint computation is not restricted to a narrow class of toy examples. In principle, arbitrary polynomial-time computation can be broken into primitive operations and evaluated under cryptographic protection.

Two large families of techniques dominate the intuition here. In the secret-sharing style, values are distributed as shares and parties jointly evaluate arithmetic or boolean circuits gate by gate. In the two-party setting, another classic approach is garbled circuits, associated with Yao’s work, where one party transforms a circuit into an encrypted form and the other evaluates it without learning the hidden wire values. These are not the only approaches, but they explain a lot of the landscape.
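To give a feel for the garbled-circuit idea, here is a toy garbling of a single AND gate. It is a sketch under heavy simplifying assumptions, not Yao's full protocol: the hash-based encryption with an all-zero recognition tag is an illustrative construction, the evaluator is simply handed its input labels (a real protocol would deliver them via oblivious transfer), and the names `garble_and_gate` and `evaluate` are hypothetical.

```python
import hashlib
import random
import secrets

LABEL_LEN = 16
TAG = bytes(16)  # all-zero tag that marks a correctly decrypted row

def pad(label_a: bytes, label_b: bytes) -> bytes:
    """Derive a 32-byte one-time pad from the two input wire labels."""
    return hashlib.sha256(label_a + label_b).digest()

def xor(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))

def garble_and_gate():
    """Garbler: pick random labels per wire value and encrypt the truth table."""
    labels = {w: (secrets.token_bytes(LABEL_LEN), secrets.token_bytes(LABEL_LEN))
              for w in ("a", "b", "out")}
    table = []
    for bit_a in (0, 1):
        for bit_b in (0, 1):
            out_label = labels["out"][bit_a & bit_b]
            table.append(xor(pad(labels["a"][bit_a], labels["b"][bit_b]),
                             out_label + TAG))
    random.SystemRandom().shuffle(table)  # hide which row is which
    return labels, table

def evaluate(table, label_a: bytes, label_b: bytes) -> bytes:
    """Evaluator: holds one label per input wire and learns one output label."""
    key = pad(label_a, label_b)
    for row in table:
        plain = xor(key, row)
        if plain[LABEL_LEN:] == TAG:
            return plain[:LABEL_LEN]
    raise ValueError("no row decrypted")

labels, table = garble_and_gate()
out = evaluate(table, labels["a"][1], labels["b"][1])
print(out == labels["out"][1])  # True: 1 AND 1 = 1
```

The evaluator only ever sees random-looking labels. Without the garbler's mapping from labels to bits, the output label says nothing about the wire value it stands for.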

The deeper point is that MPC is really a discipline for preserving an invariant across a computation: no intermediate state should reveal more than allowed. Ordinary programming exposes values to the machine doing the work. MPC replaces that with encoded intermediate states (shares, encryptions, masked values, commitments) and carefully designed transitions that preserve correctness without breaking privacy.

This is why composition matters so much. If a protocol securely computes one step, and its output representation can feed into the next secure step, then large computations become possible. The theory formalizes this with composition theorems and, in stronger settings, with universal composability. The practical meaning is straightforward: a protocol that is secure in isolation is useful, but a protocol that stays secure when embedded inside larger systems is much more useful.
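The gate-by-gate composition described in this section can be sketched for a tiny circuit, (x + y) * z, over additively shared values. The multiplication step uses Beaver triples, a standard preprocessing technique that the text above does not name. In this sketch the triple comes from a hypothetical trusted dealer inside `mul_gate`, all parties are assumed semi-honest, and every function name is illustrative.

```python
import secrets

P = 2**61 - 1  # prime field modulus (an illustrative choice)
N = 3          # number of parties

def additive_share(value: int, n: int = N) -> list:
    """Split `value` into n random summands modulo P, one per party."""
    parts = [secrets.randbelow(P) for _ in range(n - 1)]
    return parts + [(value - sum(parts)) % P]

def open_shares(shares: list) -> int:
    """Everyone publishes a share; the sum is the hidden value."""
    return sum(shares) % P

def add_gate(xs: list, ys: list) -> list:
    """Addition is purely local: party i adds its two shares."""
    return [(x + y) % P for x, y in zip(xs, ys)]

def mul_gate(xs: list, ys: list) -> list:
    """Multiply shared values using one Beaver triple (a, b, c = a*b)."""
    # Assumption: a trusted dealer produces the triple during preprocessing.
    a, b = secrets.randbelow(P), secrets.randbelow(P)
    a_sh, b_sh, c_sh = additive_share(a), additive_share(b), additive_share(a * b % P)
    # Opening x - a and y - b reveals nothing, because a and b are uniform masks.
    d = open_shares([(x - ai) % P for x, ai in zip(xs, a_sh)])
    e = open_shares([(y - bi) % P for y, bi in zip(ys, b_sh)])
    zs = [(ci + d * bi + e * ai) % P for ai, bi, ci in zip(a_sh, b_sh, c_sh)]
    zs[0] = (zs[0] + d * e) % P  # the public term d*e is added by one party only
    return zs

x_sh, y_sh, z_sh = additive_share(3), additive_share(4), additive_share(5)
result = open_shares(mul_gate(add_gate(x_sh, y_sh), z_sh))
print(result)  # 35
```

The output of `add_gate` has the same representation as its inputs, so it can feed straight into `mul_gate`. That representation-preserving property is what lets individual secure gates compose into a larger computation.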

Which adversary models and assumptions determine MPC security?

| Adversary | Behavior | Efficiency cost | Guarantees | Typical use |
|---|---|---|---|---|
| Semi-honest | Follows protocol, observes transcripts | Low | Privacy (if protocol followed) | Research, trusted deployments |
| Malicious | May deviate arbitrarily | High | Privacy and integrity with proofs | Adversarial production systems |
| Covert | May cheat but avoids detection | Medium | Limited deterrence via detection | Balanced efficiency vs deterrence |
| Adaptive corruptions | Adversary corrupts during run | Higher | Stronger refresh/reshare needs | Long‑lived systems, proactivity |

When people first hear “MPC keeps inputs private,” they often imagine a single, absolute guarantee. In reality, MPC security depends heavily on the adversary model.

The first major distinction is between semi-honest and malicious behavior. A semi-honest party follows the protocol exactly but tries to infer extra information from whatever it sees. A malicious party may send malformed messages, use inconsistent shares, abort strategically, or otherwise deviate in order to cheat. Semi-honest security is easier and often more efficient. Malicious security is stronger and more realistic in adversarial environments, but it usually costs more in rounds, proofs, checks, and communication.

Another distinction is how corruptions occur. In a static model, the adversary chooses which parties to corrupt before the protocol starts. In an adaptive model, it can choose during execution after seeing partial information. There are also proactive settings, where shares are periodically refreshed so that compromise must happen within a time window rather than accumulating forever. That matters in long-lived systems, because without refresh, an attacker who slowly compromises enough parties over time may eventually reconstruct what no single snapshot could reveal.
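The refresh idea can be illustrated with Shamir shares: adding a fresh sharing of zero changes every share but leaves the secret untouched. This is a simplified sketch. Here a single call to `deal(0, ...)` plays the role of the zero sharing, whereas a real proactive scheme would have the parties generate it jointly so that no one knows the refresh polynomial. The helper names are illustrative.

```python
import secrets

P = 2**61 - 1  # prime field modulus (an illustrative choice)

def deal(secret: int, degree: int, n: int) -> dict:
    """Shamir-share `secret` with a random polynomial of the given degree."""
    coeffs = [secret % P] + [secrets.randbelow(P) for _ in range(degree)]
    return {x: sum(c * pow(x, k, P) for k, c in enumerate(coeffs)) % P
            for x in range(1, n + 1)}

def interpolate_at_zero(points: dict) -> int:
    """Lagrange-interpolate through `points` and evaluate at 0."""
    total = 0
    for xi, yi in points.items():
        num, den = 1, 1
        for xj in points:
            if xj != xi:
                num = num * (-xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P
    return total

def refresh(shares: dict) -> dict:
    """Add a random sharing of zero: same secret, new shares."""
    zero_shares = deal(0, degree=1, n=len(shares))
    return {x: (shares[x] + zero_shares[x]) % P for x in shares}

epoch_1 = deal(1234, degree=1, n=3)
epoch_2 = refresh(epoch_1)

print(interpolate_at_zero({1: epoch_2[1], 2: epoch_2[2]}))  # 1234
```

A share stolen in epoch 1 and a share stolen in epoch 2 lie on different lines, so combining them does not, except with negligible probability, recover the secret. That is the time-window effect the paragraph describes.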

There is also a threshold question: how many corrupted parties can the protocol tolerate, and what guarantees remain possible? The theory here is subtle. Different thresholds permit different combinations of privacy, correctness, fairness, and guaranteed output delivery. For example, broad feasibility results show that all functions can be securely computed, but stronger guarantees such as fairness or guaranteed delivery depend on how many parties may be corrupted relative to the total number of participants.

The memorable lesson is that MPC is not a magic privacy shield layered on top of arbitrary distrust. It is a protocol discipline with exact security envelopes. If you change the number of tolerated corruptions, the synchrony assumptions, or the kind of adversary, the achievable guarantees change too.

How do MPC-based threshold signatures work and what are the practical tradeoffs?

| Scheme | Algebra friendliness | Rounds | Robustness | Implementation risk |
|---|---|---|---|---|
| Schnorr (FROST) | High | 2 (1 with preprocessing) | Not robust by default | Moderate; standard proofs |
| ECDSA (classic) | Low | Multiple rounds | Often fragile | High; delicate math |
| ECDSA (robust / dealerless) | Low | 4+ pre-sign rounds | Higher robustness | Very high; heavy checks |

A useful concrete example is threshold signing, because it shows MPC as computation rather than just secret storage. Start with the goal: a group wants to use a public-key signature system, but no one wants the full private key to exist in one place. The ordinary way to sign is simple: hold the secret key, run the signing algorithm, output the signature. The whole risk sits in one machine.

In an MPC-based threshold signature scheme, the signing key is shared among parties. The protocol is designed so that the parties can jointly compute a valid signature on a message while never reconstructing the full secret key in memory anywhere. Each party performs local computations on its share, exchanges carefully structured messages, and contributes a partial result. If enough valid contributions are combined, the result is an ordinary signature verifiable under the standard public key.
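The flow just described can be made concrete with a deliberately naive 2-of-3 threshold Schnorr sketch. Several things here are assumptions for illustration only: the group parameters are toy-sized and offer no security, the key is dealt by a hypothetical trusted dealer, and the nonce handling omits the commitments and binding values that real protocols add to resist known attacks. It is not FROST and should not be used as a template for production code.

```python
import hashlib
import secrets

# Toy Schnorr group: G has prime order Q modulo P. Far too small for real use.
P, Q, G = 23, 11, 4

def challenge(R: int, Y: int, msg: bytes) -> int:
    data = f"{R}|{Y}|".encode() + msg
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q

def lagrange_at_zero(i: int, signers: list) -> int:
    """Coefficient that turns signer i's Shamir share into an additive share."""
    num = den = 1
    for j in signers:
        if j != i:
            num = num * (-j) % Q
            den = den * (i - j) % Q
    return num * pow(den, -1, Q) % Q

# Setup (assumption: a trusted dealer; a real system would run a DKG instead).
x = secrets.randbelow(Q - 1) + 1          # full key, exists only during setup
a = secrets.randbelow(Q)
key_shares = {i: (x + a * i) % Q for i in (1, 2, 3)}
Y = pow(G, x, P)                          # ordinary Schnorr public key

def threshold_sign(msg: bytes, signers: list) -> tuple:
    nonces = {i: secrets.randbelow(Q - 1) + 1 for i in signers}
    R = 1
    for k in nonces.values():             # combine the public nonce parts
        R = R * pow(G, k, P) % P
    c = challenge(R, Y, msg)
    # Each signer computes its partial result from its own nonce and key share.
    partials = [(nonces[i] + c * lagrange_at_zero(i, signers) * key_shares[i]) % Q
                for i in signers]
    return R, sum(partials) % Q

def verify(msg: bytes, sig: tuple) -> bool:
    """Standard single-key Schnorr verification: G^z == R * Y^c."""
    R, z = sig
    return pow(G, z, P) == R * pow(Y, challenge(R, Y, msg), P) % P

signature = threshold_sign(b"transfer 1 coin", [1, 3])
print(verify(b"transfer 1 coin", signature))  # True
```

The point of the sketch is the last function: `verify` knows nothing about shares or signers. The combined signature checks out against the single public key `Y` exactly as an ordinary Schnorr signature would.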

This is where the connection between MPC and blockchain infrastructure becomes concrete. Modern threshold signing protocols exist for Schnorr-style signatures and for ECDSA, the signature family widely used in cryptocurrency systems. FROST, for example, is a two-round Schnorr threshold signing protocol specified by the IRTF's Crypto Forum Research Group (CFRG) in RFC 9591. It allows a threshold number of participants to cooperate to produce one Schnorr signature while keeping the signing key distributed. Its efficiency comes with tradeoffs: the specification notes that FROST is not robust against all misbehavior, so a faulty participant can cause denial of service unless an outer mechanism handles exclusion or retry.

Threshold ECDSA is harder because ECDSA’s algebra is less MPC-friendly than Schnorr’s. Practical protocols exist, including dealerless ones secure against malicious adversaries with a dishonest majority, but they require more machinery. A recurring engineering theme is that getting the math right is not enough; implementations must validate parameters, proofs, and commitment structures carefully. Real vulnerabilities have appeared when distributed key-generation code failed to enforce those checks.

A real-world illustration is Cube Exchange, which uses a 2-of-3 threshold signature scheme for decentralized settlement. The user, Cube Exchange, and an independent Guardian Network each hold one key share. No full private key is ever assembled in one place, and any two of the three shares are sufficient to authorize a settlement. This is a practical instance of MPC’s central promise: operational control without single-point key custody.

Why use verifiable secret sharing (VSS) and DKG instead of plain secret sharing?

Plain secret sharing solves only part of the problem. It tells you how to split a secret so that small subsets learn nothing and authorized subsets can reconstruct. But what if the dealer distributes inconsistent shares? What if a participant publishes commitments that do not match what others received? What if the protocol is dealerless and every participant effectively acts as a dealer for part of the final secret?

That is why practical MPC systems often rely on verifiable secret sharing (VSS) and distributed key generation (DKG). VSS adds the ability for recipients to check that their shares are valid relative to public commitments, without revealing the secret itself. DKG goes a step further: the parties jointly generate a shared secret or shared private key so that no single dealer ever knows the complete value.

These additions are not cosmetic. They change the trust model from “assume the party who split the secret behaved honestly” to “participants can detect cheating during setup.” In threshold-signature deployments, that distinction is essential, because a bad setup can quietly poison everything that follows.

But verifiability also illustrates an important truth about MPC systems: they are secure only as implemented. The mathematics may say that a VSS or DKG scheme is sound under certain checks, yet a real implementation that omits those checks can fail in ways the theory did not permit. The denial-of-service issue disclosed in some Pedersen DKG implementations is a good example. The attack was not a refutation of the underlying idea of DKG; it exploited missing validation in implementations. That difference matters because practitioners often hear “provably secure” and imagine “foolproof.” Those are not the same claim.
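To make share verification and the role of validation concrete, here is a sketch in the style of Feldman's VSS, chosen here as a simple example of the VSS idea rather than as the scheme any particular system uses. The group is toy-sized, `deal` and `verify_share` are illustrative names, and the explicit length check stands in for the kind of validation whose omission the paragraph above warns about.

```python
import secrets

# Toy group: G has prime order Q modulo P. Far too small for real use.
P, Q, G = 23, 11, 4
T = 2  # threshold: polynomials have exactly T coefficients

def deal(secret: int, n: int = 3) -> tuple:
    """Dealer: Shamir-share the secret and publish commitments G^coefficient."""
    coeffs = [secret % Q] + [secrets.randbelow(Q) for _ in range(T - 1)]
    shares = {i: sum(c * pow(i, k, Q) for k, c in enumerate(coeffs)) % Q
              for i in range(1, n + 1)}
    commitments = [pow(G, c, P) for c in coeffs]
    return shares, commitments

def verify_share(i: int, share: int, commitments: list) -> bool:
    """Recipient i: check the share against the public commitments."""
    # Validation that must not be skipped: reject vectors of the wrong length.
    if len(commitments) != T:
        return False
    expected = 1
    for k, commitment in enumerate(commitments):
        expected = expected * pow(commitment, pow(i, k), P) % P
    return pow(G, share, P) == expected

shares, commitments = deal(7)
print(all(verify_share(i, s, commitments) for i, s in shares.items()))  # True
print(verify_share(1, (shares[1] + 1) % Q, commitments))                # False
```

The check works because the product of the commitments raised to powers of `i` equals `G` raised to the polynomial evaluated at `i`. A share that does not lie on the committed polynomial fails the comparison, and a commitment vector of unexpected length is rejected before any arithmetic is done.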

What can MPC still leak and what guarantees does it not provide?

The phrase “revealing nothing but the output” is elegant, but it can mislead if read too literally.

First, the output may itself reveal sensitive information. If two hospitals use MPC to compute whether one patient record matches another, the yes-or-no answer is already a disclosure. If several firms compute an average over a tiny population, the result may allow inference about one participant. MPC prevents leakage through the process beyond the intended result, but it does not rescue a badly chosen function.

Second, MPC does not usually guarantee that parties supplied truthful inputs. In the ideal formulation, parties hand whatever inputs they choose to the trusted functionality. So if an application needs authenticated inputs, range-limited values, or compliance with external rules, the protocol must add those properties with signatures, commitments, zero-knowledge proofs, or system-level controls.

Third, many practical protocols can abort. Privacy and correctness are one thing; guaranteed completion is another. In some settings, fairness (meaning no party gets the output while preventing others from getting theirs) is possible only under stricter assumptions about how many parties are corrupted. In threshold signing, this often shows up as a liveness problem rather than a privacy failure: the key remains protected, but a malicious participant can stall signing.

So the right way to think about MPC is disciplined and bounded. It gives a powerful answer to a specific problem: how to compute jointly without centralizing secrets. It does not eliminate the need to reason about outputs, inputs, availability, system integration, or operational abuse.
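The small-population case is easy to check with arithmetic. In this sketch the figures are made up and `infer_remaining_input` is a hypothetical helper. It assumes the protocol itself is perfectly secure and reveals only the average. Even so, anyone who knows all inputs but one can solve for the last.

```python
def average(values: list) -> float:
    return sum(values) / len(values)

def infer_remaining_input(known_inputs: list, revealed_average: float) -> float:
    """Solve n * average = sum(known) + unknown for the one unknown input."""
    n = len(known_inputs) + 1
    return n * revealed_average - sum(known_inputs)

# Two firms compute an average; firm A knows its own figure and the output.
firm_a, firm_b = 100, 140          # made-up example data
output = average([firm_a, firm_b])
print(infer_remaining_input([firm_a], output))  # 140.0
```

Nothing leaked from the protocol here. The function was simply too revealing for a population of two, which is a design problem that has to be addressed at the level of the output, not the computation.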

Why is MPC practical today and when are specialized protocols used?

For a long time, MPC was mostly a theoretical triumph: astonishingly general and often too expensive for everyday deployment. That has changed. Improvements in protocol design, network assumptions, preprocessing, specialized subprotocols, hardware performance, and engineering practice have made MPC practical in more domains.

The practical turning point came from understanding where the real costs were. In many protocols, local computation is cheap compared with network rounds, interaction patterns, and expensive cryptographic subroutines. So protocol designers optimized the number of rounds, shifted work into preprocessing, tailored designs for common functions such as set intersection or signatures, and accepted explicit tradeoffs, for example less robustness in exchange for lower latency.

This is why modern deployments often use not “generic MPC” in the abstract, but specialized MPC protocols for a well-defined task. Threshold signatures are the clearest example. The task is narrow, the algebra is known in advance, and the system can be optimized around that shape. That makes production use far more realistic than a fully general secure virtual machine.

At the same time, broad MPC systems are genuinely in use for privacy-preserving analytics and collaborative data processing. The practical lesson is not that theory gave way to engineering, but that engineering found the right simplifications. A protocol becomes deployable when its security model, computation pattern, and operational environment line up.

How does MPC compare to secret sharing, threshold cryptography, and homomorphic encryption?

| Primitive | Trust model | Computation locus | Best use case | Main strength |
|---|---|---|---|---|
| MPC | Distributed among parties | Collaborative across participants | Joint computation without central decryption | Avoids single‑point key exposure |
| Homomorphic encryption | Central decryptor owns key | One party computes on ciphertexts | Cloud computation on encrypted data | Compute while data stays encrypted |
| Threshold cryptography | Key material shared among parties | Distributed cryptographic operations | Key custody and signing | No single full key holder |

MPC sits next to several other cryptographic ideas, and the boundaries matter.

Secret sharing is a building block, not the whole story. It explains how to distribute a secret among parties, but by itself it does not tell you how to compute arbitrary functions on those hidden values. MPC often begins with secret sharing and then adds protocols for computation, verification, and reconstruction.

Threshold cryptography is best thought of as a major application area of MPC. In threshold encryption or threshold signing, the joint function being computed is a cryptographic primitive itself: decrypting, signing, generating a key, or refreshing shares. Many systems described as threshold systems are really specialized MPC systems.

Homomorphic encryption addresses a related but different question: how to compute on encrypted data, often with one party holding the secret decryption key. It can be used inside MPC, and MPC can be used to distribute decryption or key management for homomorphic schemes, but the trust structure is different. Homomorphic encryption often centralizes decryption authority unless combined with threshold methods; MPC is explicitly about distributing trust among multiple parties.

That is why bridges, wallets, and exchanges often adopt MPC-based threshold signing. The problem is not just “compute on ciphertexts.” It is “make sure no single operator can unilaterally move assets, and no single compromise exposes the signing key.” MPC fits that institutional problem very closely.

Conclusion

Multi-party computation is the cryptographic answer to a simple but difficult question: how can several parties use their secrets together without first surrendering them to one place? Its central trick is to distribute not only the data but the computation itself, so privacy is preserved throughout the process rather than only before and after it.

The durable idea to remember is this: MPC tries to make a real protocol behave like an imaginary trusted black box, even though no such box exists. Secret sharing, verifiable sharing, distributed key generation, garbled circuits, and threshold-signing protocols are all ways of approximating that black box under explicit assumptions.

When those assumptions fit the environment, MPC can remove single points of trust in places where they matter most. And when they do not, the limitations are usually not mysterious: the output leaks what it leaks, malicious parties may still abort, and implementations must still earn the security that the theory promises.

What should you understand about MPC before using it?

Understand the key design choices, failure modes, and recoverability before you deposit or trade. On Cube Exchange, you can confirm how MPC is implemented, review audits or protocol claims, and perform a small, on-chain test settlement to validate expected behavior.

- Check the threshold and share model: confirm the m-of-n threshold (for example, 2-of-3) and whether the scheme uses Schnorr/FROST or a threshold ECDSA variant.

- Review protocol guarantees and evidence: read Cube's documentation and any third-party audits for VSS/DKG, malicious vs. semi-honest security claims, and mitigation for aborts.

- Validate liveness and recovery in practice: run a small withdrawal or settlement to observe timeouts, retry behavior, and how missing participants are handled.

- Fund and execute a full on-chain test using Cube’s normal deposit and settlement flow, then inspect the on-chain signature and confirmation pattern to ensure it matches the stated threshold policy.

Frequently Asked Questions

MPC and homomorphic encryption both let you work with protected data, but they solve different trust problems: homomorphic encryption lets a party compute on ciphertexts (usually centralizing decryption authority later), whereas MPC distributes both data and the computation so no single party needs to hold the decryption key or full secret during the computation.

MPC does not hide what the output itself reveals. It prevents leakage through the computation process, but it cannot stop whatever sensitive information the chosen output discloses, so poorly chosen outputs or small populations can still allow inference about participants.

Security guarantees depend on the adversary model: semi-honest parties follow the protocol and try to learn extra information (cheaper to protect against), while malicious parties can deviate arbitrarily, which requires extra checks and cost. Other distinctions, such as static vs adaptive corruptions and corruption thresholds, determine what privacy, correctness, fairness, and liveness guarantees are achievable.

Secret sharing distributes a secret into shares that individually reveal nothing (Shamir's scheme is a canonical example), but to defend against inconsistent or malicious setup one usually adds verifiable secret sharing (VSS) and distributed key generation (DKG) so participants can detect cheating during setup rather than trusting a single dealer.

Many MPC techniques that are theoretically general became practical by optimizing for common tasks and shifting work into preprocessing; consequently production systems often use specialized MPC protocols (for example, threshold signing) rather than fully generic MPC to get acceptable latency and communication costs.

Threshold signing shares the private key and runs an MPC protocol so parties produce a standard signature without ever assembling the full key; Schnorr-based schemes like FROST are relatively efficient but trade robustness for efficiency, while threshold ECDSA is harder algebraically and needs more complex machinery and careful implementation checks.

Real-world failures usually stem from implementation or integration errors rather than the mathematics: missing validation (for example, commitment vector length checks in Pedersen DKG) or omitted proofs can let a participant sabotage setup or stall protocols, and some deployed schemes explicitly accept denial-of-service risk rather than add costly robustness.

MPC security rests on explicit assumptions: besides the protocol-level model (semi-honest/malicious, static/adaptive, thresholds), many practical constructions rely on standard cryptographic hardness assumptions (e.g., discrete log for Schnorr/FROST, or the factoring-related assumptions behind Paillier encryption in some ECDSA protocols), so the choice of parameters and primitives matters for concrete security.
