What is Execution Sharding?

Learn what execution sharding is, how it scales blockchains by splitting state and computation, and why cross-shard coordination is the real challenge.

Introduction

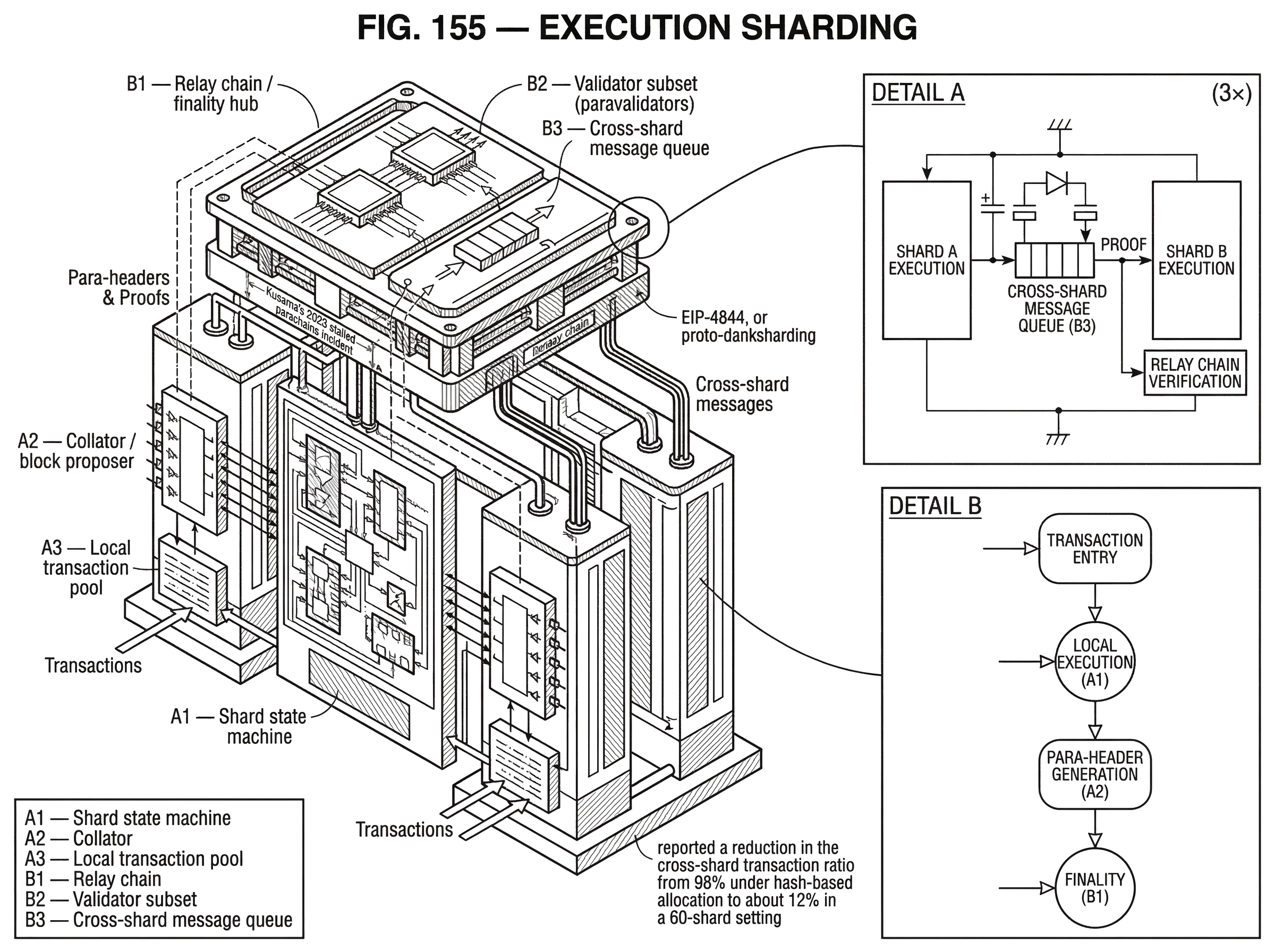

Execution sharding is a way to scale a blockchain by splitting transaction execution across multiple partitions so that different parts of the system can process work in parallel. The appeal is obvious: if every node no longer has to execute every transaction, throughput should rise without requiring a single machine to do all the computation. But the hard part is not the slogan "parallelize execution." The hard part is preserving the properties people want from a blockchain (deterministic state transitions, composability, and shared security) once the state is no longer in one place.

That tension explains why execution sharding is both powerful and difficult. A monolithic chain is simple in one crucial sense: there is one state, one ordering, and one place where a transaction runs. As soon as execution is split across shards, the protocol must answer new questions. Which shard owns which state? What happens when one transaction touches accounts on different shards? Can cross-shard actions be atomic, or must they become asynchronous messages? How much of the network must verify each shard's work? These are not implementation details around the edges. They are the core of the design.

This is also why execution sharding should be distinguished from data sharding. Data sharding increases the amount of data the base layer can make available, often so rollups can publish their transaction data more cheaply. Execution sharding instead distributes the actual computation and state transitions. Ethereum's recent scaling path makes this contrast especially clear: the ecosystem has moved toward a rollup-centric model with blob-based data availability through proto-danksharding and, eventually, danksharding, rather than scaling layer-1 execution by splitting it into shards.

How does execution sharding split state versus blocks, and why does that matter?

A blockchain executes transactions against state: balances, contract storage, account metadata, application-specific records, and so on. In a traditional design, every fully validating node tracks the whole state and re-executes all transactions in canonical order. That gives a strong and simple invariant: every validator can independently check the entire chain.

Execution sharding changes that invariant. Instead of one globally executed state machine, the system is partitioned into multiple state machines, each responsible for part of the overall state. A shard is therefore not just a bucket of data. It is a unit of ownership over state and execution. If an account or contract belongs to shard A, then transactions affecting that state are, in the first instance, executed by shard A rather than by every validator over the whole network.
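As a minimal sketch of what "ownership over state" can mean, the snippet below assigns each account to a shard and checks whether a transaction stays inside one execution domain. The hash-based rule, the `NUM_SHARDS` value, and the function names are illustrative assumptions, not the placement rule of any particular protocol.

```python
import hashlib

NUM_SHARDS = 4  # hypothetical shard count, chosen only for illustration


def owning_shard(account: str, num_shards: int = NUM_SHARDS) -> int:
    """Map an account to the shard that owns its state (assumed hash rule)."""
    digest = hashlib.sha256(account.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def shards_touched(accounts: list[str], num_shards: int = NUM_SHARDS) -> set[int]:
    """Return every shard whose state a transaction would read or write."""
    return {owning_shard(account, num_shards) for account in accounts}


def is_cross_shard(accounts: list[str], num_shards: int = NUM_SHARDS) -> bool:
    """A transaction is cross-shard when its accounts have more than one owner."""
    return len(shards_touched(accounts, num_shards)) > 1
```

A transaction that touches only one account is always local under this rule; one that touches several accounts is local only if they all happen to share an owner.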

The performance gain comes from parallelism. If shard A and shard B each process disjoint work at the same time, aggregate throughput can exceed what one sequential global executor could handle. This is the basic mechanism behind parachain-style systems, where independent chains run in parallel, and it also appears in more local forms of parallel execution, where a runtime schedules non-conflicting transactions concurrently.

But there is an immediate consequence: once state is partitioned, global synchrony becomes expensive. On a monolithic chain, a contract call from application X to application Y is just one more step inside a single transaction. On a sharded chain, that same interaction may cross execution boundaries. The protocol must now move information, prove validity, and often wait at least one more step before the remote side can respond.

That is the first idea that makes execution sharding click: it trades a single globally shared execution context for parallelism, and the price is coordination.

Why use execution sharding? What problem does it solve for blockchains?

The problem execution sharding tries to solve is straightforward. Public blockchains face a resource bottleneck because every fully validating node is often asked to do the same work: download the same data, execute the same transactions, and store the same expanding state. This duplication is useful for verification, but it caps throughput. Raising hardware requirements too far would price out validators and weaken decentralization.

Execution sharding attacks the computation side of that bottleneck. Instead of making one machine bigger, it tries to spread the work across the network. In the ideal picture, adding shards lets the network process more independent activity at once. A payment-heavy application on one shard need not compete directly for execution time with a gaming or identity application on another.

The difficulty is that blockchain workloads are not naturally independent. Users trade across applications. Contracts call other contracts. Shared assets and shared liquidity create coupling. So execution sharding is most effective when the workload can be partitioned with relatively few interactions across shard boundaries. If activity is highly entangled, the protocol spends more effort coordinating shards, and the theoretical throughput gain gets eaten by cross-shard overhead.
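A toy capacity model can make the overhead argument concrete. It assumes that a local transaction consumes one execution slot, that a cross-shard transaction consumes one slot on each shard it touches, and that nothing else (latency, proofs, message queues) costs anything. Those assumptions and the function name are hypothetical simplifications, so the output is only a rough upper bound.

```python
def effective_throughput(
    num_shards: int,
    per_shard_tps: float,
    cross_ratio: float,
    shards_per_cross_tx: int = 2,
) -> float:
    """Estimate user-level transactions per second under a toy slot model."""
    if not 0.0 <= cross_ratio <= 1.0:
        raise ValueError("cross_ratio must be between 0 and 1")
    if shards_per_cross_tx < 2:
        raise ValueError("a cross-shard transaction touches at least 2 shards")
    # Total execution slots available per second across all shards.
    total_capacity = num_shards * per_shard_tps
    # Average slots one transaction consumes: 1 if local, more if cross-shard.
    avg_slots_per_tx = 1.0 + cross_ratio * (shards_per_cross_tx - 1)
    return total_capacity / avg_slots_per_tx
```

Under this model, four shards at 100 transactions per second each deliver 400 when every transaction is local and 200 when every transaction touches two shards. Real systems lose more than this, because the model ignores message delay and verification cost.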

That is why state placement matters so much. A sharded system is not only deciding how many shards exist. It is deciding which activity lives together. Research on transaction allocation makes this explicit: if accounts are assigned naively, cross-shard transactions can dominate. In one evaluated scheme, TxAllo reframed account placement as a graph community-detection problem and reported a reduction in the cross-shard transaction ratio from 98% under hash-based allocation to about 12% in a 60-shard setting. The exact numbers depend on workload and assumptions, but the underlying lesson is robust: execution sharding lives or dies by locality.
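The effect of placement can be illustrated by measuring the cross-shard ratio of the same transaction set under two account-to-shard maps. This is not TxAllo's algorithm: the transactions, the two hand-written placements, and the function name are made up for illustration, and the "grouped" map simply stands in for what a community-aware allocator might produce.

```python
def cross_shard_ratio(
    transactions: list[tuple[str, str]],
    placement: dict[str, int],
) -> float:
    """Fraction of (sender, receiver) pairs whose accounts sit on different shards."""
    if not transactions:
        return 0.0
    cross = sum(1 for sender, receiver in transactions
                if placement[sender] != placement[receiver])
    return cross / len(transactions)


# Hypothetical workload: two tightly knit groups with one link between them.
transactions = [
    ("a1", "a2"), ("a2", "a3"), ("a1", "a3"),
    ("b1", "b2"), ("b2", "b3"), ("b1", "b3"),
    ("a1", "b1"),
]

# Placement that ignores who transacts with whom.
scattered = {"a1": 0, "a2": 1, "a3": 0, "b1": 1, "b2": 0, "b3": 1}
# Placement that keeps each group on a single shard.
grouped = {"a1": 0, "a2": 0, "a3": 0, "b1": 1, "b2": 1, "b3": 1}

scattered_ratio = cross_shard_ratio(transactions, scattered)  # 5 of 7 cross
grouped_ratio = cross_shard_ratio(transactions, grouped)      # 1 of 7 cross
```

The workload is identical in both cases; only the map from accounts to shards changes, which is the sense in which locality is a placement decision.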

How does execution sharding work in practice? (state placement, block production, verification, messaging)

| Model | Who executes | Verifier scope | Cost per validator | Liveness/complexity | Best when |
|---|---|---|---|---|---|
| Full replication | Every validator executes | Full state and transactions | High compute and storage | Simple pipeline, less plumbing | High-composability workloads |
| Subset validation | Assigned validator subset | Shard headers and samples | Moderate per-validator cost | Pipeline plus dispute resolution | Shared-security hubs |
| Proof-based verification | Executors produce proofs | Cryptographic proofs only | Low verification cost | Proof generation heavy | When strong proofs available |

At a high level, any execution-sharded design needs to solve four linked problems. It must decide where state lives, who proposes and executes shard blocks, who verifies them, and how shards communicate.

State placement comes first because it determines the conflict surface. Some systems make the shard boundary explicit and durable, so a chain or contract account belongs to a particular shard. Others expose a more unified developer experience while still partitioning work internally. In either case, the protocol needs a rule for ownership: when a transaction wants to mutate state, there must be a clear answer about which execution domain has authority.

Next comes block production. Someone has to gather transactions for a shard, execute them against that shard's local state, and assemble a candidate block. In Polkadot, that role belongs to collators, which maintain parachain state and package transactions into Proof of Validity blocks, or parablocks. Those candidate blocks are then sent to a subset of relay-chain validators.

Verification is where the shared-security story becomes concrete. If every validator had to fully execute every shard, the scaling benefit would largely disappear. So most execution-sharded systems validate shard work using subsets, proofs, or structured assumptions. In Polkadot's parachain model, small randomly assigned validator groups, often called paravalidators, validate a parachain's candidate using the parachain's Wasm state transition function. Backed candidates are then represented on the relay chain by para-headers rather than by storing full parachain blocks there. The relay chain supplies shared security and finality; the shards handle their own local execution.
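Subset validation can be sketched as a seeded shuffle that splits the validator set into per-shard groups. This is a simplification under stated assumptions: `assign_validator_groups`, the integer `seed`, and the round-robin split are hypothetical, and a production protocol would derive its randomness on-chain and rotate groups over time rather than use this exact procedure.

```python
import random


def assign_validator_groups(
    validators: list[str],
    shard_ids: list[str],
    seed: int,
) -> dict[str, list[str]]:
    """Deterministically shuffle validators and deal them out to shards."""
    if not shard_ids:
        raise ValueError("at least one shard is required")
    rng = random.Random(seed)  # same seed gives the same assignment on every node
    shuffled = list(validators)
    rng.shuffle(shuffled)
    groups: dict[str, list[str]] = {shard: [] for shard in shard_ids}
    for index, validator in enumerate(shuffled):
        # Round-robin keeps group sizes within one of each other.
        groups[shard_ids[index % len(shard_ids)]].append(validator)
    return groups
```

Each validator checks only the shard it was dealt, which is where the per-validator cost saving comes from. The security argument then rests on an attacker being unable to predict or bias the seed.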

Communication is the hardest part because it is where the abstraction of "many chains, one ecosystem" either works or breaks. Once shards are independent execution domains, a cross-shard action is no longer just local function composition. It becomes message passing. That message may be delivered later, handled asynchronously, and acknowledged separately.

What happens to a multi‑step transaction when it crosses shards? (worked example)

Imagine a user action that would be trivial on a monolithic chain: deposit an asset into a lending market, borrow against it, and route the borrowed funds into another application, all inside one transaction. With one shared state machine, the transaction can read balances, update collateral, mint debt, and hand the resulting funds to another contract before finalizing. If any step fails, the entire transaction can revert.

Now imagine the same flow in an execution-sharded system where the asset lives on shard A, the lending protocol on shard B, and the destination application on shard C. The first step may lock or transfer value on shard A. But shard B cannot simply inspect and mutate shard A's state in the same instant unless the protocol provides some special synchronous cross-shard mechanism, and those mechanisms are expensive and complicated. More commonly, shard A emits a message that can be consumed by shard B once shard A's action is accepted. Shard B then processes that message, updates collateral accounting, and perhaps emits another message to shard C. Shard C later receives that message and performs the final action.

What changed is not only latency. The failure model changed. On a monolithic chain, the whole operation can often succeed or fail as a unit. In a sharded design, each step may complete in its own execution context. If the final step fails, the earlier steps may already be committed, and undoing them requires explicit compensating logic rather than implicit rollback.
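The changed failure model can be sketched with a small message-passing simulation. Everything here is hypothetical (the `Shard` and `Message` types, the `accepts_deposits` flag, and the refund rule), and it models no specific protocol. The point is only that the source shard commits its debit before the destination runs, so a failure downstream has to be repaired by an explicit compensating message.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Message:
    dest: str    # name of the shard that should process this message
    kind: str    # "deposit" or "refund"
    user: str
    amount: int
    origin: str  # name of the shard that emitted the message


@dataclass
class Shard:
    name: str
    balances: dict = field(default_factory=dict)
    accepts_deposits: bool = True

    def handle(self, msg: Message) -> list:
        """Process one message locally and return any follow-up messages."""
        if msg.kind == "deposit" and not self.accepts_deposits:
            # The origin shard already committed its debit, so there is no
            # implicit rollback. Emit an explicit compensating message instead.
            return [Message(msg.origin, "refund", msg.user, msg.amount, self.name)]
        if msg.kind in ("deposit", "refund"):
            self.balances[msg.user] = self.balances.get(msg.user, 0) + msg.amount
            return []
        raise ValueError(f"unknown message kind: {msg.kind}")


def send_cross_shard(source: Shard, dest: str, user: str, amount: int) -> Message:
    """Step 1: debit on the source shard (committed now) and emit a message."""
    if source.balances.get(user, 0) < amount:
        raise ValueError("insufficient balance")
    source.balances[user] -= amount
    return Message(dest, "deposit", user, amount, source.name)


def run(shards: dict, first: Message) -> int:
    """Deliver messages one at a time; return how many steps were processed."""
    queue = deque([first])
    steps = 0
    while queue:
        msg = queue.popleft()
        queue.extend(shards[msg.dest].handle(msg))
        steps += 1
    return steps
```

If shard A debits 40 and shard B rejects the deposit, the user's balance on A sits at the debited value until the refund message is delivered in a later step. An application that forgets to emit that refund leaves the funds stranded, which is exactly the hazard that implicit rollback hides on a monolithic chain.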

This is why developers on sharded systems often think in terms closer to distributed systems than to single-process programming. Messages, callbacks, retries, partial failure, and eventual completion become normal concerns.

Why is cross‑shard communication the main performance and complexity cost?

| Model | Delivery | Atomicity | Failure handling | Developer burden |
|---|---|---|---|---|
| NEAR-style async | 1–2 blocks delay | No cross-shard atomicity | Explicit callbacks required | High compensation code |
| Polkadot XCM | Transport-dependent delivery | Fire-and-forget by default | Separate ack / error messages | Protocol integration required |
| Synchronous atomic | Immediate delivery | Strong atomic guarantees | Implicit rollback on failure | Very high engineering cost |

Cross-shard communication is where smart readers most often underestimate the difficulty. It is tempting to think that once execution is parallelized, messaging between shards is a secondary engineering detail. In practice, it is the place where many user-facing guarantees become weaker or at least more conditional.

NEAR's cross-contract model makes this explicit. Its documentation states that because NEAR is sharded, cross-contract calls are asynchronous and independent. The original call and the callback execute in different blocks, typically one to two blocks apart. If the external call fails, the caller's earlier state changes do not automatically roll back; the contract must handle failure explicitly in the callback. That is a clean and honest expression of the underlying mechanism: once execution spans shards, atomic synchronous composition is no longer the default.

Polkadot's XCM ecosystem reflects a similar reality from a different angle. XCM is a messaging format for communication between consensus systems, not itself a transport protocol. Its design is intentionally asynchronous and, by default, closer to "fire and forget" than to a synchronous function call with automatic rollback. That does not make it weak; it makes the model explicit. Separate consensus systems can interoperate trustlessly, but the programmer must design for delayed delivery, acknowledgements, and failure handling.

This is the central tradeoff of execution sharding in practical terms. You gain throughput by letting different execution domains advance semi-independently. You lose the simplicity of pretending the whole system is one instantly shared computer.

Shared security vs sovereign shards: what are the trade‑offs for validators and apps?

| Model | Security source | Validator cost | Composability | Failure modes | Best for |
|---|---|---|---|---|---|
| Shared-security (parachains) | Relay-chain validators | Low per shard | Easier trustless composability | Pipeline / dispute freezes | Specialized apps needing shared security |
| Sovereign chains | Independent validator set | High per chain | Bridges or extra trust needed | Isolated security failures | Maximum autonomy and flexibility |

Not every sharded-looking ecosystem is the same. A useful dividing line is whether the execution shards inherit shared security from a common base layer or operate more like loosely connected sovereign chains.

Polkadot's parachains are a clear shared-security model. The relay chain provides security and transaction finality, while parachains manage their own state transitions and specialized logic. Developers therefore do not need to bootstrap a separate validator set for each application chain. This is one of execution sharding's strongest forms because it combines parallel execution with a common security umbrella.

That design has architectural consequences. Because the base layer secures many execution domains, it must validate enough about shard execution to avoid accepting invalid shard state. The backing and dispute process exists precisely for that reason. And it introduces operational failure modes that monolithic chains do not have in the same way. Kusama's 2023 stalled parachains incident is revealing here: all parachains stopped producing blocks while the relay chain continued finalizing. The post-mortem ties the freeze to dispute handling, pruning behavior, and the fact that a candidate already finalized on the relay chain was later concluded invalid by the dispute system. The lesson is not that execution sharding is broken by definition. The lesson is that once execution and global finality are separated into a pipeline, liveness depends on that pipeline behaving correctly under edge cases.

In contrast, some ecosystems scale more by running many chains in parallel under weaker shared assumptions. That can simplify local execution but pushes more burden onto bridges, messaging protocols, or application-level trust models. Execution sharding is therefore not just a performance trick. It is a choice about where to place security and coordination.

How can blockchains get parallel execution without user‑facing shards?

It is also worth separating execution sharding from a broader family of parallel execution techniques. The common goal is the same (avoid serially executing everything on every node), but the mechanism can differ.

Solana's Sealevel runtime is a good contrast. It is not described as a classic shard-per-state-slice architecture. Instead, transactions declare in advance which accounts they will read or write. Because the runtime knows these access sets ahead of time, it can schedule non-overlapping transactions concurrently. The Programs-and-Accounts model reinforces this by separating code from state and constraining which program may modify which account. The result is a form of parallel execution that extracts concurrency from access patterns rather than from visibly splitting the chain into user-facing shards.
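The idea of scheduling from declared access sets can be sketched with a greedy batcher. This is not Solana's actual scheduler: the `Tx` type, the conflict rule, and `schedule_batches` are illustrative assumptions. Two transactions conflict when either one writes an account the other reads or writes. Each transaction is placed in the earliest batch that comes after every conflicting predecessor, so conflicting transactions keep their original order.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    name: str
    reads: frozenset
    writes: frozenset


def conflicts(a: Tx, b: Tx) -> bool:
    """True when one transaction writes something the other reads or writes."""
    return bool(a.writes & (b.reads | b.writes)) or bool(b.writes & a.reads)


def schedule_batches(txs: list) -> list:
    """Group transactions into batches whose members can run concurrently."""
    batches: list = []
    for tx in txs:
        target = 0
        for index, batch in enumerate(batches):
            if any(conflicts(tx, other) for other in batch):
                target = index + 1  # must run after the last conflicting batch
        if target == len(batches):
            batches.append([])
        batches[target].append(tx)
    return batches


# Hypothetical workload: t3 reads an account that t1 writes.
demo = [
    Tx("t1", frozenset(), frozenset({"alice", "bob"})),
    Tx("t2", frozenset(), frozenset({"carol", "dave"})),
    Tx("t3", frozenset({"alice"}), frozenset({"erin"})),
    Tx("t4", frozenset(), frozenset({"frank"})),
]
demo_batches = [[tx.name for tx in batch] for batch in schedule_batches(demo)]
# demo_batches == [["t1", "t2", "t4"], ["t3"]]
```

Because the access sets are known before execution, the batching decision is a pure function of the transaction list, which matters for a blockchain: every node can derive the same schedule and therefore the same result.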

This comparison helps sharpen the concept. The fundamental scaling problem is conflicting access to shared state. A classic execution-sharded design reduces conflicts by partitioning state into separate execution domains. A parallel runtime like Sealevel reduces conflicts by making dependencies explicit and scheduling around them. Both are trying to answer the same question: when can two pieces of work safely happen at once?

The analogy to a multi-core operating system is useful here, but only up to a point. It explains why independent workloads can run in parallel and why declared read/write sets matter. It fails because a blockchain also needs deterministic replay across many nodes, adversarial robustness, and economic security. A local scheduler in one machine can optimize freely; a blockchain scheduler must produce outcomes that the whole validating network can agree on.

Why did Ethereum shift from execution sharding to a rollup‑centric approach?

Ethereum is the clearest example of a major ecosystem that reconsidered execution sharding at the base layer. Sharding was on the roadmap for a long time, and the general idea was the familiar one: split the system so subsets of validators would be responsible for individual shards rather than every validator tracking all of Ethereum.

But Ethereum's scaling direction changed as rollups matured. The community came to favor a rollup-centric approach in which execution happens offchain in rollups, while layer 1 provides consensus, settlement, and increasingly abundant data availability. Ethereum's official scaling materials say this shift was driven by rapid rollup development and the invention of danksharding, and that it also helps keep Ethereum's consensus logic simpler.

EIP-4844, or proto-danksharding, shows what this means mechanically. It introduces blob-carrying transactions: large data blobs whose contents are not accessible to EVM execution, though commitments to them are. The blobs are propagated as beacon-chain sidecars, with their own fee market via blob gas, and are retained only for a limited period. This is not execution sharding. Validators still do not split Ethereum layer-1 execution across shards in the traditional sense. Instead, the protocol increases cheap data availability so rollups can publish transaction data more efficiently and do their own execution offchain.
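To illustrate the separate blob fee market, the sketch below follows the integer `fake_exponential` helper from the EIP-4844 text, which makes the blob base fee grow roughly exponentially with the chain's excess blob gas. The constants are the values the EIP specified at its initial deployment, as best recalled here; later upgrades may change these parameters, so treat them as assumptions and check the current specification. Passing `excess_blob_gas` as a plain integer rather than reading it from a block header is a simplification for this example.

```python
# Values from the EIP-4844 text at initial deployment (assumed; verify before use).
MIN_BASE_FEE_PER_BLOB_GAS = 1
BLOB_BASE_FEE_UPDATE_FRACTION = 3338477


def fake_exponential(factor: int, numerator: int, denominator: int) -> int:
    """Integer approximation of factor * e ** (numerator / denominator)."""
    i = 1
    output = 0
    numerator_accum = factor * denominator
    while numerator_accum > 0:
        output += numerator_accum
        # Next Taylor-series term, kept in integers to stay deterministic.
        numerator_accum = (numerator_accum * numerator) // (denominator * i)
        i += 1
    return output // denominator


def base_fee_per_blob_gas(excess_blob_gas: int) -> int:
    """Blob base fee as a function of accumulated excess blob gas."""
    return fake_exponential(
        MIN_BASE_FEE_PER_BLOB_GAS,
        excess_blob_gas,
        BLOB_BASE_FEE_UPDATE_FRACTION,
    )
```

With zero excess blob gas the fee sits at its minimum, and it rises as blocks persistently carry more blob data than the target. None of this touches EVM execution gas, which is the sense in which blobs add data capacity without sharding execution.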

That choice reveals something important about execution sharding itself. The hard part was never merely adding more bandwidth or storage. It was preserving synchronous composability and manageable protocol complexity while splitting stateful execution. Ethereum chose to scale execution indirectly (through rollups) while using data sharding ideas to support them.

What use cases benefit most from execution sharding?

In practice, execution sharding is most attractive when applications benefit from specialization and relatively self-contained state. Application-specific chains are an obvious fit. A payments shard, gaming shard, identity shard, or DeFi-focused shard can optimize its runtime and fee model for its own workload while still participating in a broader ecosystem.

This is why parachain-style architectures are compelling to builders. A parachain is a specialized blockchain attached to a shared-security hub. The shard boundary becomes a product boundary as well as a scaling boundary. Teams can tune execution logic, governance, and runtime features without forcing every other application to share exactly the same virtual machine constraints.

But the same specialization that helps local performance can hurt composability if too many workflows constantly cross shard boundaries. Execution sharding works best when there is enough internal cohesion that most interactions stay local, and enough messaging infrastructure that the unavoidable remote interactions remain safe and understandable.

When does execution sharding fail to deliver scalability or safety?

Execution sharding depends on assumptions that can fail.

It depends on locality. If users and contracts interact across shards as often as they interact within shards, the protocol spends increasing effort on coordination, message passing, and waiting. Throughput gains shrink, and the user experience can become more complex.

It depends on predictable state ownership. If moving accounts or contracts between shards is too hard, hotspots can form. If moving them is too easy, the system may incur migration overhead and new complexity. Dynamic allocation schemes can reduce cross-shard traffic, but they introduce their own operational questions around reconfiguration and state movement.

It depends on messaging semantics developers can live with. Asynchronous cross-shard calls are often the practical answer, but they require applications to handle partial completion and explicit compensation logic. Many developers raised on synchronous smart-contract composition underestimate how much this changes program design.

And it depends on the validation pipeline being robust. Shared-security systems reduce the need for per-shard validator sets, but they create elaborate coordination machinery around backing, approval, disputes, and data availability. The Kusama parachain stall is a concrete reminder that these pipelines can fail in ways that stop shard execution even when the coordinating chain itself remains live.

Conclusion

Execution sharding scales blockchains by partitioning state and running different parts of the workload in parallel. The basic promise is simple: not every node should have to execute everything. The basic cost is just as simple: once execution is split, cross-shard coordination becomes a first-class problem.

That is the idea to remember tomorrow. Execution sharding is not mainly about creating more shards; it is about deciding where state lives, who is allowed to change it, and how separate execution domains stay coherent. When those answers line up with the workload, execution sharding can deliver real scalability. When they do not, the system pays for parallelism with complexity, latency, and weaker composability.

How does execution sharding affect how I should fund, trade, or use a network?

Execution sharding changes composability, finality, and operational risk across a network. Before you fund or trade a shard-linked asset, check the network's shard model, messaging semantics, and finality assumptions so you understand latency and failure modes. Use Cube Exchange to fund your account and execute trades once you confirm those infrastructure details fit your risk profile.

- Read the chain docs and find the shard and messaging model (look for phrases like “asynchronous cross‑contract calls,” XCM, or “parachain/relay chain”) to confirm how cross‑shard calls behave.

- Verify the security model: note whether the shard inherits shared security (relay/validator hub) or runs sovereign validators, and record any backing/dispute or data‑availability pipeline the project uses.

- Search recent chain incident reports and explorer activity for stalls, disputes, or migration events to gauge operational liveness under edge cases.

- Fund your Cube account with fiat or a supported crypto transfer so you can trade or hold the network token on Cube.

- Open the token market on Cube, choose limit orders for price control or market orders for immediate fills, enter your amount, review fees and on‑chain withdrawal requirements (finality and cross‑shard settlement), and submit.

Frequently Asked Questions

Execution sharding typically breaks single-transaction atomicity across state owned by different shards: cross-shard interactions are implemented as asynchronous messages that are delivered and processed later, so developers must handle partial failure and compensation rather than relying on implicit rollback.

Ethereum shifted away from base-layer execution sharding because rollups matured as the preferred place for execution while layer‑1 focuses on consensus and data availability; EIP‑4844 (proto‑danksharding) increases cheap blob data availability for rollups instead of splitting layer‑1 execution into shards.

Shared‑security shards inherit protection from a common hub (e.g., Polkadot parachains rely on the relay chain), which simplifies validator economics and bootstrapping but requires backing, approval, and dispute pipelines that can create new liveness and operational failure modes not present in a single monolithic chain.

You get meaningful throughput gains only when most activity stays within shards (high locality); poorly placed state that generates frequent cross‑shard interactions can make coordination overhead dominate the benefits. Studies like TxAllo show that intelligent placement can cut cross‑shard ratios dramatically (e.g., ~98%→~12% in one 60‑shard experiment), but results depend on workload and assumptions.

Most execution‑sharded systems avoid every validator re‑executing every shard by using smaller validator subsets, validity proofs, or structured backing: for example, collators propose parachain candidates and small randomly assigned paravalidator groups validate them, while the relay chain stores para‑headers rather than full blocks.

Cross‑shard calls are usually asynchronous by design because synchronous, atomic cross‑shard execution is expensive and complex; systems like NEAR explicitly expose asynchronous cross‑contract promises and Polkadot’s XCM is a messaging format that defaults to asynchronous delivery rather than synchronous function calls.

Execution sharding differs from parallel runtimes like Solana’s Sealevel in mechanism: execution sharding partitions state into distinct execution domains (shards), while Sealevel extracts concurrency by having transactions declare their read/write account sets so a scheduler can run non‑overlapping transactions concurrently without a user‑facing shard boundary.

The main failure modes are lost locality (too many cross‑shard interactions), confusing or costly state migration policies, the need for developer‑visible asynchronous messaging semantics, and brittle validation pipelines. The Kusama parachain stall illustrates the last of these: dispute/backing mechanics caused a freeze of parachain block production.
