What is Restaking for L2 Security?

Learn what restaking for L2 security is, how it works for rollups, what AVSs and slashing do, and why shared security differs from native L1 security.

Introduction

Restaking for L2 security is the idea of using already-staked assets (most often stake anchored on a base chain such as Ethereum) as collateral to secure additional functions an L2 depends on, such as data availability, validation, or other verifiable services. The attraction is obvious. Bootstrapping a new security set from scratch is expensive, slow, and often weak in the early years, while borrowing some of the economic weight of an existing staking system promises faster scaling. But the important question is not just whether extra stake exists. It is what exactly that stake is securing, who can punish failures, and under what assumptions that punishment is real.

That is the puzzle behind the whole topic. A rollup already inherits some security from its settlement layer, yet many parts of its operation happen elsewhere: sequencers order transactions, data availability layers store batch data, provers or committees attest to state transitions, bridges verify messages, and middleware coordinates operator sets. If those components fail, the rollup can become unavailable, unsafe, or both. Restaking enters here as a way to attach economic penalties to those off-L1 duties.

The core idea sounds simple, but it is easy to misunderstand. Restaking does not magically make every L2 “as secure as Ethereum.” What it does is let an L2 or an adjacent service define additional obligations for operators and make some stake slashable if those obligations are violated. That can create real security, but only if the fault is observable enough, the slashing path is credible enough, and the operational design avoids introducing new trust bottlenecks.

Why do rollups borrow security from L1s?

An L2 needs more than a place to settle final state. It also needs machinery that keeps the system live and auditable between settlements. In an optimistic rollup, for example, users must be able to access the underlying transaction data during the challenge window so fraud proofs can be constructed if needed. In a zk rollup, proof generation may be cryptographic, but the system still depends on data publication, sequencing, and various off-chain services. Even when final correctness ultimately resolves on Ethereum, there are still many ways for users to have a bad experience: data can be withheld, operators can censor, service nodes can fail to perform assigned duties, and committees can collude.

Here is the economic problem. A new rollup can ask people to run its own validator set or committee, but that means building a fresh market for trust. Validators need incentives, users need confidence that penalties are meaningful, and the network needs enough decentralization that collusion is expensive. Early on, most new systems do not have that. Their native token may be illiquid, the validator set may be small, and the total value at risk may exceed the cost of corrupting the security layer.

Restaking tries to reduce that bootstrapping burden by reusing an existing pool of staked capital. Instead of saying, “please acquire and stake this new asset to secure my service,” the protocol says, in effect, “if you already control stake in a trusted base ecosystem, you may opt into securing this additional service, earn extra rewards, and face extra slashing if you misbehave.” That shifts the supply side of security from creating new capital to reallocating slashable exposure.

The idea is not unique to Ethereum-style systems. Cosmos replicated security, for instance, also extends validator security from one chain to others, though with a different structure: consumer chains inherit validation from most Cosmos Hub validators through governance rather than an opt-in market. That comparison is useful because it shows that “shared security” is a broad design space, not one fixed mechanism. Restaking is the opt-in, market-mediated version of that broader goal.

How does restaking work? Stake, operators, and services explained

The cleanest mental model is to separate three roles.

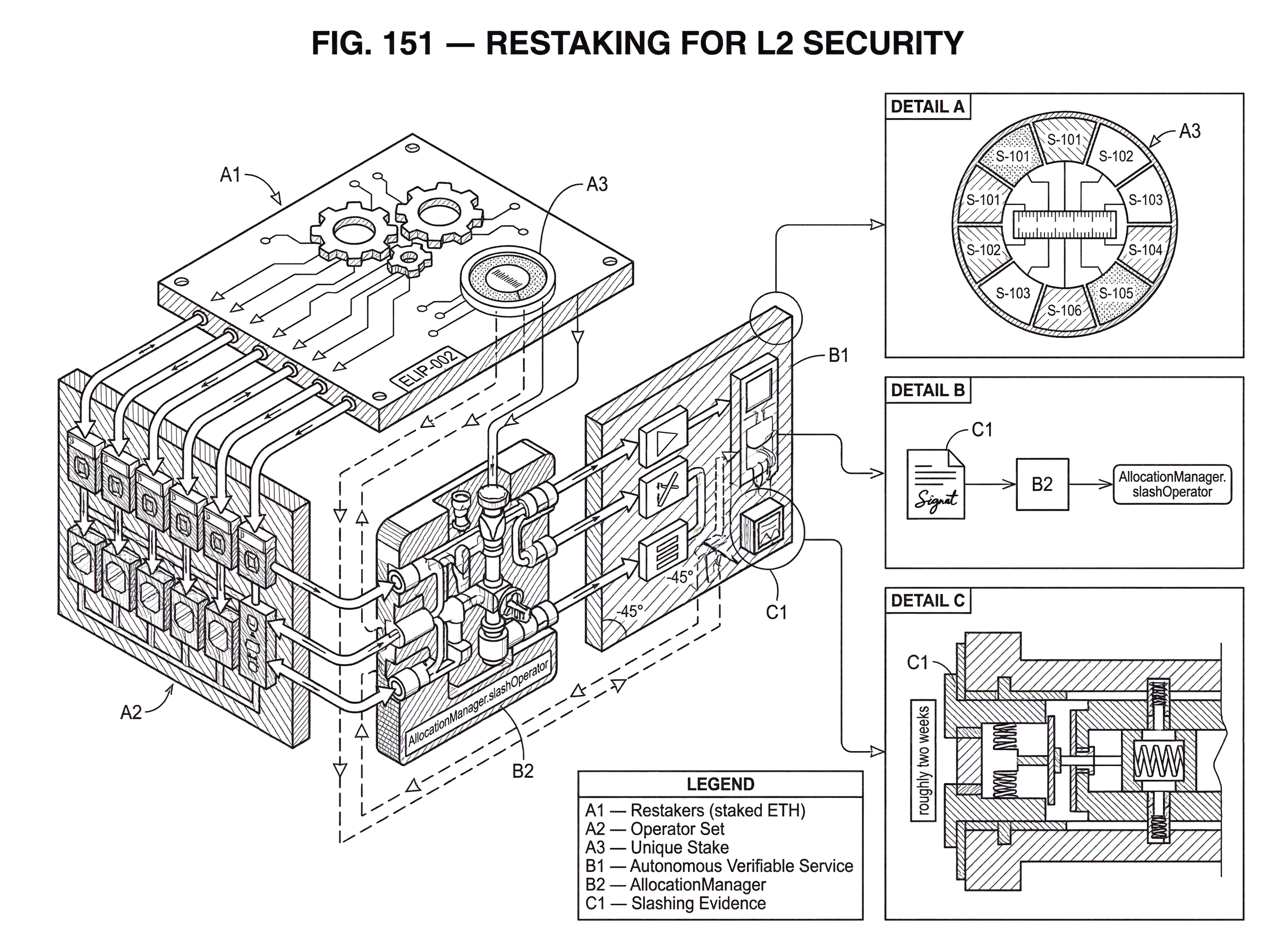

First, there are restakers, the people or entities whose assets are ultimately at risk. In Ethereum-oriented designs, these may be native ETH stakers, liquid staking token holders, or holders of other supported assets. Second, there are operators, the entities that actually perform work: running nodes, signing attestations, storing chunks of data, or participating in some service-specific protocol. Third, there are the services themselves (often called AVSs, for Autonomous Verifiable Services), which are the applications that want security.

According to EigenLayer’s contract repository, the protocol “brings together Restakers, Operators, and Autonomous Verifiable Services (AVSs) to extend Ethereum's cryptoeconomic security with penalty and reward commitments.” That framing matters because it shows what restaking is really doing. It is not merely “locking tokens twice.” It is creating a routing layer between capital, labor, and enforcement.

For an L2 context, the AVS might be a data availability service, a bridge verifier, a decentralized sequencer network, or some specialized committee that checks rollup-related statements. The service defines duties and reward rules. Operators opt in to perform those duties. Restaked assets become slashable against those duties. If the operator behaves correctly, rewards flow. If not, penalties can apply.

The crucial mechanism is that the same base stake can support multiple downstream services only if the accounting of slashable commitments is explicit. Without that accounting, the same dollar of stake could be promised everywhere at once, producing the illusion of abundant security while leaving every service undercollateralized in practice.

What is Unique Stake and why does it prevent double‑counting?

| Aspect | Unique stake | Shared stake |
|---|---|---|
| Slash authority | Exclusive to one AVS | Ambiguous across AVSs |
| Accounting clarity | Explicit per allocation | Only aggregate totals |
| Security budgeting | Predictable per service | Overstates protection |
| Collateral exclusivity | Allocated and reserved | Risk of double-counting |
| Best for | Services needing isolated guarantees | Measuring ecosystem adoption |

This is where protocol details start to matter. In the EigenLayer slashing design, Unique Stake is defined as assets “made slashable exclusively by one Operator Set.” An Operator Set is an on-chain grouping identified by the pair (avs, operatorSetId) and represents a subset of operators securing particular tasks for an AVS.

That design solves a very specific problem: if an operator is serving multiple services, which service has the right to slash which portion of the operator’s delegated stake? Without isolation, slashing authority becomes ambiguous. Two AVSs might both believe they are protected by the same collateral, or one slash could unexpectedly impair another service’s security assumptions.

Unique Stake turns the answer into accounting rather than hope. An operator allocates portions of delegated stake to specific operator sets. That allocation makes those portions slashable for that set’s duties and, by design, not simultaneously countable as exclusive collateral elsewhere. The point is not just bookkeeping neatness. It is what makes security budgeting possible. An L2-related AVS can ask: how much stake is uniquely committed to my service, under my slashing conditions, in this operator set? That is a more meaningful number than “total restaked value in the ecosystem.”
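A minimal sketch of that accounting, assuming a deliberately simplified model: each operator has one delegated stake amount and allocates basis points of it to operator sets keyed by (avs, operatorSetId). The class, method, and AVS names are hypothetical, and the production contracts use more detailed per-strategy accounting with allocation and deallocation delays. The only point here is the invariant that exclusive allocations can never sum past 100% of delegated stake.

```python
from dataclasses import dataclass, field

OperatorSetKey = tuple[str, int]  # (avs, operatorSetId)
FULL_BPS = 10_000  # 100% expressed in basis points


@dataclass
class OperatorAllocations:
    """Hypothetical per-operator ledger of exclusive (unique) allocations."""
    delegated_stake: int
    allocations: dict[OperatorSetKey, int] = field(default_factory=dict)

    def allocated_bps(self) -> int:
        return sum(self.allocations.values())

    def allocate(self, op_set: OperatorSetKey, bps: int) -> None:
        """Set the share of delegated stake slashable only by `op_set`."""
        if bps < 0:
            raise ValueError("allocation must be non-negative")
        current = self.allocations.get(op_set, 0)
        new_total = self.allocated_bps() - current + bps
        # The invariant that makes stake "unique": no unit of stake can be
        # promised as exclusive collateral to two operator sets at once.
        if new_total > FULL_BPS:
            raise ValueError("allocation would double-count stake")
        self.allocations[op_set] = bps

    def unique_stake(self, op_set: OperatorSetKey) -> int:
        """Stake a given operator set can count as exclusively its own."""
        return self.delegated_stake * self.allocations.get(op_set, 0) // FULL_BPS


op = OperatorAllocations(delegated_stake=1_000)
op.allocate(("da-avs", 0), 6_000)
op.allocate(("bridge-avs", 1), 4_000)
da_stake = op.unique_stake(("da-avs", 0))  # 600
```

Once the ledger is full, the only way to back a third service is to shrink an existing allocation, which is exactly what lets a service read "how much stake is mine" directly from the accounting.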

This distinction is easy to miss because public discussions often cite large aggregate restaked totals. Those totals may say something about adoption, but they do not directly tell you the effective security of a particular L2 service. Effective security depends on how much slashable stake is actually allocated to that service, how concentrated it is among operators, what assets compose it, and whether slashing can be executed for the faults that matter.
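To make the distinction concrete, a hypothetical helper can set the headline aggregate beside what one service actually has allocated, and count how few operators it takes to reach a given share of that allocation. The operator names, stake figures, and the one-third and one-half thresholds are illustrative assumptions, not parameters of any real AVS.

```python
def min_operators_for_share(stakes: list[int], num: int, den: int) -> int:
    """Fewest operators whose combined stake reaches num/den of the total."""
    total = sum(stakes)
    if total == 0:
        return 0
    acc = 0
    # Largest operators first: this minimizes how many parties must collude.
    for count, stake in enumerate(sorted(stakes, reverse=True), start=1):
        acc += stake
        if acc * den >= num * total:
            return count
    return len(stakes)


def security_report(aggregate_restaked: int, allocated: dict[str, int]) -> dict[str, int]:
    stakes = list(allocated.values())
    return {
        # Headline number: says something about adoption, little about this service.
        "aggregate_restaked": aggregate_restaked,
        # Stake actually slashable for this service's duties.
        "allocated_to_service": sum(stakes),
        "operators_for_one_third": min_operators_for_share(stakes, 1, 3),
        "operators_for_one_half": min_operators_for_share(stakes, 1, 2),
    }


report = security_report(
    aggregate_restaked=10_000_000,
    allocated={"op-a": 400, "op-b": 300, "op-c": 200, "op-d": 100},
)
print(report)
```

In this made-up case, a ten-million headline figure coexists with only 1,000 units committed to the service, and a single operator already holds more than a third of that.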

How does restaking secure data availability for rollups?

| DA option | Cost | Security level | Throughput | Best for |
|---|---|---|---|---|
| On-chain L1 DA | High cost | Maximal (native ETH) | Low to moderate | Maximum safety, simple trust model |
| Restaked DA (EigenDA) | Lower cost | Lower than L1 | Higher throughput | Scalable rollups seeking cost savings |
| Centralized DA | Lowest cost | Single-point trust | Very high | Fast, trusted deployments |

The most natural worked example is a data availability service for rollups, because this is where the security need is tangible. Suppose a rollup wants to post commitments on Ethereum but publish its bulk transaction data to a cheaper external DA layer. Users accept this only if they believe the data will remain available long enough for the rollup’s safety model to work.

Now imagine that a set of operators agrees to store and serve the rollup’s data. On its own, that promise is weak. If operators disappear or collude to withhold data, users suffer unless there is a credible penalty. Restaking adds that penalty. Operators opt into the DA service as an AVS. They allocate unique slashable stake to its operator set. The service distributes encoded data chunks, operators attest that they hold what they are supposed to hold, and the protocol defines what counts as a provable fault.

If an operator can be shown to have violated an objective rule (for example, by failing a proof-of-custody style check or producing contradictory attestations), then the service can seek slashing against the operator’s allocated stake. In principle, that makes withholding or dishonesty economically costly rather than merely reputationally costly.
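A custody-style check can be sketched with a plain Merkle tree: the service commits to a root, a challenger names a chunk index, and the operator must return that chunk together with an inclusion proof. This is an assumed, simplified stand-in using SHA-256 Merkle proofs over raw chunks. Production DA systems such as EigenDA use their own encoding and commitment schemes, and every function name here is hypothetical.

```python
import hashlib
from typing import Optional


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_levels(chunks: list[bytes]) -> list[list[bytes]]:
    """All tree levels, leaves first; an odd last node is paired with itself."""
    levels = [[h(c) for c in chunks]]
    while len(levels[-1]) > 1:
        cur = levels[-1]
        nxt = []
        for i in range(0, len(cur), 2):
            right = cur[i + 1] if i + 1 < len(cur) else cur[i]
            nxt.append(h(cur[i] + right))
        levels.append(nxt)
    return levels


def prove(levels: list[list[bytes]], index: int) -> list[bytes]:
    """Sibling hashes from leaf to root (what an honest operator returns)."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        proof.append(level[sibling] if sibling < len(level) else level[index])
        index //= 2
    return proof


def verify(root: bytes, chunk: bytes, index: int, proof: list[bytes]) -> bool:
    node = h(chunk)
    for sibling in proof:
        node = h(node + sibling) if index % 2 == 0 else h(sibling + node)
        index //= 2
    return node == root


def custody_challenge(root: bytes, index: int,
                      response: Optional[tuple[bytes, list[bytes]]]) -> bool:
    """True if the response proves the operator holds chunk `index`."""
    if response is None:
        return False  # no answer; whether that is a provable fault depends on timing rules
    chunk, proof = response
    return verify(root, chunk, index, proof)


chunks = [b"chunk-0", b"chunk-1", b"chunk-2", b"chunk-3", b"chunk-4"]
levels = merkle_levels(chunks)
root = levels[-1][0]
honest = custody_challenge(root, 3, (chunks[3], prove(levels, 3)))   # True
forged = custody_challenge(root, 3, (b"garbage", prove(levels, 3)))  # False
```

A forged chunk or broken proof is objective evidence. A missing response is weaker: whether the operator truly failed to answer can hinge on timing and network conditions, which is the intersubjective problem that comes up again for data withholding.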

This is the intuition behind systems like EigenDA, which LlamaRisk describes as a data availability service implemented as an AVS on EigenLayer for L2 rollups. The attraction is that rollups get a DA layer with higher throughput and lower costs than posting all data to Ethereum mainnet. But the same assessment also emphasizes the tradeoff: this security profile is lower than native Ethereum DA. That is exactly the right way to think about restaking for L2 security. It is often a trade of some security margin for lower cost and greater scalability, not a free upgrade.

Who can slash restaked stake and where is the authority executed?

Economic security only exists if someone can enforce the penalty. Here the evidence is unusually clear and unusually important. ELIP-002 describes a slashing entrypoint through AllocationManager.slashOperator, and states that an AVS may slash operators in its operator sets with maximal flexibility. Just as important, the protocol itself provides no built-in veto or proof requirement. If an AVS wants fraud proofs, timelocks, human review, a veto committee, or any other restraint on slashing, the AVS has to build that process itself.
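Because the core protocol leaves restraint to the AVS, one option is for the AVS to route every slash through its own timelock with a veto committee. The sketch below is hypothetical: SlashRequest, VetoableSlasher, the three-day window, and the "council" vetoer are illustrative, and the execute callback merely stands in for whatever protocol-level slashing call the AVS ultimately makes. It does not reproduce the signature of AllocationManager.slashOperator.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SlashRequest:
    operator: str
    operator_set: tuple[str, int]  # (avs, operatorSetId)
    fraction_bps: int
    submitted_at: int  # unix seconds
    vetoed: bool = False
    executed: bool = False


class VetoableSlasher:
    """AVS-side restraint: a slash must survive a veto window before it executes."""

    def __init__(self, veto_window: int, vetoers: set[str],
                 execute: Callable[[SlashRequest], None]) -> None:
        self.veto_window = veto_window
        self.vetoers = vetoers
        self.execute = execute  # hypothetical hook to the protocol-level slash
        self.queue: list[SlashRequest] = []

    def propose(self, req: SlashRequest) -> int:
        self.queue.append(req)
        return len(self.queue) - 1

    def veto(self, req_id: int, caller: str, now: int) -> None:
        if caller not in self.vetoers:
            raise PermissionError("caller is not a vetoer")
        req = self.queue[req_id]
        if req.executed or now >= req.submitted_at + self.veto_window:
            raise ValueError("veto window has closed")
        req.vetoed = True

    def finalize(self, req_id: int, now: int) -> bool:
        req = self.queue[req_id]
        if req.vetoed or req.executed or now < req.submitted_at + self.veto_window:
            return False
        self.execute(req)
        req.executed = True
        return True


DAY = 86_400
slashed: list[SlashRequest] = []
slasher = VetoableSlasher(veto_window=3 * DAY, vetoers={"council"}, execute=slashed.append)
a = slasher.propose(SlashRequest("op-a", ("da-avs", 0), 500, submitted_at=0))
b = slasher.propose(SlashRequest("op-b", ("da-avs", 0), 500, submitted_at=0))
slasher.veto(b, caller="council", now=DAY)
a_done = slasher.finalize(a, now=3 * DAY)  # True: window elapsed, no veto
b_done = slasher.finalize(b, now=3 * DAY)  # False: vetoed
```

The design choice cuts both ways: the veto committee shields honest operators from a faulty or compromised slasher, but it is also a new trust point that could shield dishonest ones.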

That has two consequences.

The first is positive: AVSs can define security rules that fit their service. An objective cryptographic fault and an operational policy violation are not the same kind of event, and maximal flexibility lets each service design around its actual risks.

The second is more sobering: the quality of the security model moves upward into AVS governance and middleware. If the slashing authority is centralized, rushed, or not transparently constrained, then the restaked collateral may be real but the justice process around it may be weak. Users then face a different threat: not only “will bad actors be slashed?” but also “can good actors be slashed incorrectly?”

This is not theoretical. Risk analyses of EigenDA have stressed that centralized components, such as a disperser or whitelisted batch confirmer set, can create unjust-slashing or censorship risks if they hold too much practical control. Even if the collateral base is large, bottlenecks in evidence production or decision-making can narrow the system’s real trust assumptions.

Why do restaking systems enforce multi‑week withdrawal delays?

Restaking systems often impose long delays for allocation changes, deallocation, and withdrawal. In ELIP-002, the stated parameters are roughly two weeks for deallocation and withdrawal, with an even longer configuration delay for certain allocation changes.

At first glance, these delays look like pure friction. But here is the mechanism: if operators could instantly move slashable stake in and out of services, then the promise of security would evaporate exactly when stress arrives. An operator could earn rewards while conditions are calm and pull collateral the moment risk increases. A delayed exit keeps stake exposed long enough that penalties remain credible for recent behavior.
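The mechanism reduces to one rule: stake queued for exit stays slashable until the delay has fully elapsed. In the hypothetical sketch below, the 14-day constant only approximates the "roughly two weeks" figure above, and the Allocation type is illustrative rather than a real contract interface.

```python
from dataclasses import dataclass
from typing import Optional

DAY = 24 * 3600
EXIT_DELAY = 14 * DAY  # assumed value approximating "roughly two weeks"


@dataclass
class Allocation:
    stake: int
    exit_queued_at: Optional[int] = None  # unix seconds, None if not exiting

    def slashable_at(self, now: int, delay: int = EXIT_DELAY) -> int:
        """Stake a slash could still reach at time `now`."""
        if self.exit_queued_at is None:
            return self.stake
        if now < self.exit_queued_at + delay:
            return self.stake  # exit requested, but collateral is still exposed
        return 0


# An operator misbehaves on day 10, queues an exit the same day,
# and the fault is only proven on day 13.
alloc = Allocation(stake=1_000, exit_queued_at=10 * DAY)
with_delay = alloc.slashable_at(13 * DAY)             # 1000: still slashable
instant_exit = alloc.slashable_at(13 * DAY, delay=0)  # 0: collateral already gone
```

Setting the delay to zero shows the failure mode the text describes: the operator keeps the rewards from the calm period and the penalty has nothing left to reach.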

The cost is obvious. Capital becomes less liquid, and operators carry lingering exposure to service-specific risks even after deciding to leave. LlamaRisk noted a similar dynamic in EigenDA’s opt-out path, where responsibilities can continue over a multi-week period. This is not an implementation accident. It is part of what turns “I was participating yesterday” into “my collateral still stands behind what I did yesterday.”

So when evaluating restaking for an L2, withdrawal delays are not a side detail. They are one of the hidden prices paid for making slashability believable.

How does cross‑chain restaking work and where does enforcement happen?

Another important detail is that the enforcement and the protected service need not live on the same chain. The EigenLayer contracts repository describes a multichain model in which AVSs register on a source chain and their stakes can be transported to supported destination chains. It also notes a practical limitation: on Base, for example, task verification can exist while “standard core protocol functionality (restaking, slashing) does not exist on Base.”

This tells you something fundamental about restaking-based L2 security. The economic weight may remain anchored on Ethereum even when the useful work happens on another chain. In that case, the destination chain is consuming a security service whose collateral and punishment machinery live elsewhere.

That can be perfectly reasonable, but it changes system design. If a rollup on a destination chain depends on a restaked committee, then proofs, task certificates, operator tables, and evidence often have to bridge between execution environments. Final enforcement may require going back to the source chain where the slashable stake resides. That usually means more latency, more coordination complexity, and more dependence on clean cross-chain state synchronization.
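In this model, a destination-chain verifier holds no stake of its own; it checks certificates against a snapshot of stake weights carried over from the source chain. The sketch below assumes a simple operator table, a two-thirds stake-weighted quorum, and a staleness bound. OperatorTable, Certificate, and verify_certificate are hypothetical names, and signature checking is deliberately omitted (signers are assumed to be already authenticated).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatorTable:
    """Hypothetical snapshot of stake weights, transported from the source chain."""
    weights: dict[str, int]
    reference_time: int


@dataclass(frozen=True)
class Certificate:
    task_id: str
    signers: frozenset[str]  # assumed already authenticated
    reference_time: int      # table snapshot the signers attested against


def verify_certificate(table: OperatorTable, cert: Certificate, now: int,
                       max_staleness: int, quorum_num: int = 2, quorum_den: int = 3) -> bool:
    if cert.reference_time != table.reference_time:
        return False  # signed against a different snapshot
    if now - table.reference_time > max_staleness:
        return False  # stake may have moved on the source chain since the snapshot
    signed = sum(table.weights.get(op, 0) for op in cert.signers)
    total = sum(table.weights.values())
    return total > 0 and signed * quorum_den >= quorum_num * total


table = OperatorTable(weights={"op-a": 50, "op-b": 30, "op-c": 20}, reference_time=1_000)
cert = Certificate("task-1", frozenset({"op-a", "op-b"}), reference_time=1_000)
accepted = verify_certificate(table, cert, now=1_500, max_staleness=3_600)  # True
```

Note what the verifier cannot do: it can reject a bad certificate, but slashing the operators who signed one still has to happen on the source chain, where the stake lives.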

So “restaking for L2 security” often really means cross-domain security leasing. The operators may act near the L2, but the collateral layer may still be elsewhere.

Which L2 components are effectively protected by restaked collateral?

The main use of restaking in L2 contexts is not replacing the rollup’s settlement layer. It is securing the parts of the stack that settlement alone does not cover continuously or cheaply.

Data availability is the clearest example. If a rollup wants cheaper or higher-throughput DA than Ethereum provides, a restaked DA service gives it a way to impose penalties on storage and availability operators.

Decentralized sequencing is another natural fit. A rollup that does not want a single sequencer can require a committee or operator set to follow ordering rules, liveness obligations, or inclusion policies, with slashable stake behind those promises. The same pattern can apply to bridge verification networks, fast-finality committees, oracle-style rollup inputs, and coprocessor-style services that produce verifiable outputs used by the L2.

The unifying principle is simple: restaking works best where an L2 depends on an external service whose operators can be identified, rewarded, and penalized for well-specified duties. If you cannot define the duty clearly enough, or cannot prove violation credibly enough, restaking gives you much less than the headline suggests.

Which operator faults are objectively slashable and which require social adjudication?

| Fault type | Detectability | Enforceable on-chain? | Resolution path | Security fit |
|---|---|---|---|---|
| Objective | Cryptographically decidable | Yes; automated slashing | On-chain proof or fraud proof | Strong fit for restaking |
| Intersubjective | Depends on off-chain evidence | No or contested enforcement | Governance, appeals, social process | Weaker fit for restaking |

This is the deepest conceptual limit in the whole design. Some faults are objective: double-signing, missing a proof obligation, signing contradictory statements, failing a challenge that is crisply machine-checkable. Those fit restaking well because slashing can be triggered by evidence with little ambiguity.
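Double-signing is the textbook objective fault because the evidence is self-contained: two signed statements from the same operator about the same item that cannot both be true. Here is a minimal sketch, assuming attestations whose signatures have already been verified. The Attestation fields and find_equivocations are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attestation:
    operator: str
    batch_id: int
    commitment: bytes  # what the operator claims the batch commits to
    signature: bytes   # assumed to be verified already against the operator's key


def find_equivocations(attestations: list[Attestation]) -> list[tuple[Attestation, Attestation]]:
    """Return pairs of signed attestations that contradict each other."""
    first_seen: dict[tuple[str, int], Attestation] = {}
    evidence: list[tuple[Attestation, Attestation]] = []
    for att in attestations:
        key = (att.operator, att.batch_id)
        prior = first_seen.get(key)
        if prior is None:
            first_seen[key] = att
        elif prior.commitment != att.commitment:
            # Two different commitments for one batch: self-contained proof of fault.
            evidence.append((prior, att))
    return evidence


log = [
    Attestation("op-a", 7, b"root-x", b"sig1"),
    Attestation("op-b", 7, b"root-x", b"sig2"),
    Attestation("op-a", 7, b"root-y", b"sig3"),  # op-a contradicts itself
    Attestation("op-b", 7, b"root-x", b"sig4"),  # repeating the same claim is fine
]
faults = find_equivocations(log)
```

Nothing in that evidence depends on who observed it or when, which is what makes such faults suitable for automated slashing.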

Other faults are intersubjective. Galaxy’s research highlights this distinction clearly: some AVS failures are not fully cryptographically decidable on-chain and may require social or governance processes. Data withholding is the classic example. If a group of operators claims to be serving data and users claim they are not, the question may depend on timing, network conditions, off-chain evidence, or assumptions not cleanly captured in a smart contract.

Once a fault becomes intersubjective, restaking security becomes more political. Who decides whether the fault occurred? How long is the review? Can the AVS slash immediately? Is there an appeals process? Does resolution depend on a token-holder vote, a committee, or social consensus? Eigen-related designs have discussed using the EIGEN token for intersubjective fault resolution rather than burdening Ethereum consensus directly, but the broader lesson is independent of any one implementation: shared security is strongest for objective duties and weaker for socially adjudicated ones.

That is why “secured by restaked ETH” can mean very different things depending on the service. A cryptographic attestation network and a subjective availability committee do not produce the same kind of assurance, even if both cite the same collateral pool.

What are the economic trade‑offs of using restaked collateral for L2s?

Restaking often gets described as capital efficiency. That is true in one sense: the same staked capital can support more than one service. But there is a reason this extra yield exists. The capital is taking on extra risk.

From the base chain’s perspective, heavy downstream slashing can reduce the stake still protecting the base protocol. Galaxy’s research points out that slashing at the restaking layer can impair base-chain security, especially if restaked stake is large relative to the total or concentrated among a few operators. In other words, an L2 borrowing security from Ethereum is not only receiving support; it may also be participating in a broader redistribution of risk across the staking ecosystem.

From the L2’s perspective, the relevant question is not just total collateral, but cost of corruption versus value extractable by attack. If colluding operators can gain more by censoring, withholding, or misreporting than they expect to lose from slashing, the security budget is weak. And if a large share of stake is concentrated in a handful of operators, the nominal slashable amount overstates the practical difficulty of collusion.
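The comparison in that paragraph reduces to simple arithmetic. This hypothetical sketch assumes colluders must control a quorum share of the allocated stake and that only a fraction of their stake is actually burned for the fault in question. The figures are illustrative, and the result is only a lower bound that ignores concentration: fewer, larger operators make the same quorum easier to coordinate.

```python
from fractions import Fraction


def cost_of_corruption(allocated_stake: int, quorum: Fraction, slash_fraction: Fraction) -> Fraction:
    """Lower bound on what colluders forfeit: they need `quorum` of the
    allocation, and only `slash_fraction` of that stake is burned for this fault."""
    return allocated_stake * quorum * slash_fraction


def is_economically_secure(allocated_stake: int, quorum: Fraction,
                           slash_fraction: Fraction, profit_from_attack: int) -> bool:
    # Secure only if the loss from slashing exceeds what the attack extracts.
    return cost_of_corruption(allocated_stake, quorum, slash_fraction) > profit_from_attack


stake = 30_000_000
quorum = Fraction(2, 3)
slash = Fraction(1, 2)
cost = cost_of_corruption(stake, quorum, slash)                       # 10,000,000
safe_small = is_economically_secure(stake, quorum, slash, 8_000_000)   # True
safe_large = is_economically_secure(stake, quorum, slash, 12_000_000)  # False
```

The aggregate restaked figure never enters this calculation; only stake that is allocated to the service and actually slashable for the relevant fault does.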

Asset composition matters too. If the slashable pool includes volatile or externally risky assets, then the dollar value of security can fall sharply in stressed markets. LlamaRisk notes this concern for EigenDA when many accepted restaked assets may carry depeg or correlated-risk exposure. A service that advertises a large collateral number may be far less protected than that number suggests at exactly the moment it matters most.
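Asset composition can be folded into the same budget by haircutting each collateral type under a single stress scenario. The asset labels and haircut percentages below are hypothetical inputs chosen for illustration, not estimates for any real asset. Applying them together reflects the point that these losses tend to be correlated.

```python
def stressed_value(positions: dict[str, tuple[int, int]]) -> int:
    """Collateral value after a stress scenario.
    positions maps asset label -> (nominal_usd, haircut_bps)."""
    return sum(value * (10_000 - haircut) // 10_000 for value, haircut in positions.values())


positions = {
    "native_eth": (10_000_000, 3_000),  # hypothetical 30% price drop
    "lst_a": (5_000_000, 4_000),        # price drop plus a hypothetical depeg
    "other_asset": (5_000_000, 6_000),  # hypothetical thinly traded collateral
}
nominal = sum(value for value, _ in positions.values())  # 20,000,000
stressed = stressed_value(positions)                     # 12,000,000
```

In this scenario, the advertised 20 million shrinks to 12 million at exactly the moment an attacker would strike.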

What operational controls and keys matter when relying on restaking?

Once slashing authority exists, the keys and middleware around it become part of the security model. ELIP-003’s User Access Management proposal exists for exactly this reason: operators and AVSs need more careful key rotation, revocation, and appointee permissions because the operational surface is sensitive.

This can sound secondary compared with elegant cryptoeconomic models, but it is not. If an AVS admin key is compromised, or if registry and rewards permissions are handled badly, the problem is immediate and concrete. A secure slashing design on paper does not help much if the wrong key can register operators, alter permissions, or submit actions that affect stake exposure.
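The access-control side can be modeled as admins who grant narrowly scoped rights to appointees and can revoke them. This is a hypothetical sketch in the spirit of the appointee permissions described above, not ELIP-003's actual contract interface; the class, target, and function names are illustrative.

```python
class PermissionRegistry:
    """Hypothetical admin/appointee model: admins grant scoped rights and can revoke them."""

    def __init__(self, admins: set[str]) -> None:
        self.admins = set(admins)
        # appointee -> set of (target contract, function) pairs it may call
        self.grants: dict[str, set[tuple[str, str]]] = {}

    def _require_admin(self, caller: str) -> None:
        if caller not in self.admins:
            raise PermissionError(f"{caller} is not an admin")

    def grant(self, caller: str, appointee: str, target: str, function: str) -> None:
        self._require_admin(caller)
        self.grants.setdefault(appointee, set()).add((target, function))

    def revoke(self, caller: str, appointee: str, target: str, function: str) -> None:
        self._require_admin(caller)
        self.grants.get(appointee, set()).discard((target, function))

    def can_call(self, account: str, target: str, function: str) -> bool:
        return account in self.admins or (target, function) in self.grants.get(account, set())


registry = PermissionRegistry(admins={"avs-admin"})
# A hot key used by a rewards bot gets exactly one right and nothing else.
registry.grant("avs-admin", "rewards-bot", "rewards", "submit_rewards")
bot_can_pay = registry.can_call("rewards-bot", "rewards", "submit_rewards")           # True
bot_can_register = registry.can_call("rewards-bot", "registry", "register_operator")  # False
```

Scoping matters because a leaked bot key then exposes one narrow action rather than registration or stake-affecting paths, and revocation closes even that.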

The same lesson appears in reference implementations such as ServiceManagerBase, where registration, deregistration, and rewards forwarding are mediated by specific contracts and access controls. Real systems are not just theory plus collateral. They are contract code, admin paths, upgrade authorities, batch limits, off-chain coordinators, and service-specific operators. Every one of those can narrow or widen the actual trust model.

How should I evaluate restaking claims about L2 security?

Here is a balanced view.

Restaking for L2 security is a serious mechanism for attaching economic consequences to off-L1 services that rollups depend on. It can help L2s bootstrap security faster than building a validator economy from zero. It is especially useful when the protected duty is clear, the operator set is legible, slashing conditions are objective, and the collateral allocation is explicit.

But it is not a magic transfer of Ethereum’s full security guarantees onto every adjacent service. Native Ethereum settlement, Ethereum data availability, a restaked AVS, and a centralized middleware component are different layers with different failure modes. Saying they all “inherit Ethereum security” compresses away the very details that determine whether the system is robust.

The question to ask is always: what exact promise is backed by what exact collateral, under what exact evidence and governance process? Once you ask that, the concept becomes much clearer.

Conclusion

Restaking for L2 security exists because rollups and related services need stronger security before they can afford to build it natively. Its mechanism is to make already-staked assets slashable for additional duties performed by opted-in operators. The promise is faster security bootstrapping and more scalable rollup infrastructure; the limit is that shared collateral only protects what can actually be monitored, proven, and enforced. In practice, restaking is best understood not as “Ethereum security everywhere,” but as conditional, service-specific cryptoeconomic security borrowed from an existing staking base.

How does this part of the crypto stack affect real-world usage?

Restaking affects real-world usage because it changes what collateral actually protects a service and how quickly that protection can be removed. Before you fund, trade, or hold assets tied to a restaked service, check the AVS’s slashing rules, withdrawal delays, and operator concentration. Use Cube Exchange to act on your conclusions: fund your account, then buy, hedge, or exit positions using Cube’s markets.

- Read the AVS documentation and on‑chain contracts to confirm which duties are slashable and whether slashing requires off‑chain governance or committee approval.

- Inspect operator sets and unique stake allocations (via the service UI or a block explorer) to measure concentration and how much collateral is exclusively committed to the service.

- Check deallocation and withdrawal delay lengths so you understand how long collateral remains exposed during stress events.

- Fund your Cube Exchange account with fiat or a supported crypto transfer.

- Use Cube markets to buy, hedge, or reduce exposure: choose a limit order for price control, review estimated fees and settlement instructions, then submit.

Frequently Asked Questions

Unique Stake consists of assets that have been allocated so they are slashable exclusively by one Operator Set; this lets an AVS know how much collateral is actually committed to its duties rather than relying on aggregate restaked totals. That exclusivity converts ambiguous promises into accounting that enables meaningful security budgeting for an L2 service.

Slashing is executed via protocol entrypoints (e.g., ELIP-002's AllocationManager.slashOperator) but the core protocol intentionally provides no built-in veto or required proof workflow - an AVS itself must define fraud‑proofs, timelocks, or governance restraints if it wants them. In short, the protocol enables slashing but leaves evidence collection and adjudication policies to the AVS and its governance.

Restaking is well suited to objective, machine‑decidable faults - double‑signing, missing required proofs, or provable contradictory attestations - because those generate clear on‑chain evidence; faults like data withholding are often intersubjective and may require social or governance processes to resolve, making restaking less clean for those cases.

Allocation, deallocation, and withdrawal delays (on the order of weeks in ELIP‑002) are deliberate: they prevent operators from instantly removing collateral when conditions worsen, preserving the credibility of slashing, but the tradeoff is reduced capital liquidity and extended exposure for operators leaving a service.

The economic collateral for a restaked service may remain anchored on the source chain (typically Ethereum) while the DA or service work happens on a destination chain; enforcement often requires bridging proofs back to the source chain and can therefore add latency and cross‑domain coordination complexity, especially where destination chains lack native restaking/slashing support.

No - restaking does not automatically make an L2 as secure as native Ethereum settlement; it makes specific duties slashable and provides conditional, service‑specific security that can be weaker than native L1 DA or settlement depending on observability, governance, and operator concentration.

Operational surface area such as admin keys, registry and rewards permissions, and upgradeable permission controllers matter: compromises or poor access controls (addressed in ELIP‑003 recommendations) can enable incorrect operator registrations, wrongful slashes, or other immediate failures regardless of the underlying economic model.

Concentration of restaked stake among few operators reduces the practical cost of collusion, and inclusion of volatile or depeg‑prone assets can sharply reduce the dollar value of collateral in stress events, so nominal slashable amounts may overstate real protection at the moment of attack.

Restaking is most effective for external services with clearly specified, provable duties and identifiable operators - common examples are data availability layers, decentralized sequencer committees, bridge‑verification networks, oracle‑style inputs, and verifiable coprocessors - because those duties can be instrumented for rewards and slashes.
