What is a Medianizer?

Learn what a medianizer is, why oracle systems use median aggregation, how it works on-chain, and where its security assumptions can fail.

Introduction

Medianizer is the name commonly given to an oracle component that turns many reported values into one on-chain reference value by taking the median. That sounds almost trivial, but it solves one of the hardest problems in blockchain systems: smart contracts need external data, yet any single data source can be wrong, delayed, manipulated, or offline at exactly the moment the data matters most.

The puzzle is this: if a lending protocol needs “the price of ETH,” what should it trust? There is no single canonical ETH price floating in the blockchain waiting to be read. There are exchange prices, aggregator prices, signed reports from node operators, and delayed or batched updates crossing different systems. A medianizer exists because the problem is not merely getting data on-chain. The real problem is deciding which reported value deserves to become the protocol’s truth.

The key idea is simple enough to remember tomorrow: a medianizer assumes that some inputs may be bad, and it chooses the middle value so extremes cannot dominate unless too many reporters are corrupted or missing. Everything else (whitelists, signatures, quorum rules, timestamps, batching, and update cadence) exists to make that middle value meaningful.

Why use the median for oracle aggregation?

Suppose seven independent reporters submit prices for the same asset: 99, 100, 100, 101, 101, 140, and 0. If a protocol used an average, the 140 and 0 would drag the answer around. If it trusted whichever report arrived first, the result could depend on timing, network conditions, or manipulation. But if it sorts the values (0, 99, 100, 100, 101, 101, 140) and takes the middle one, the answer is 100. The wild outliers are still present, but they stop deciding the output.
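The comparison can be checked in a few lines. This is a minimal Python sketch using only the seven numbers from the example; the second list, with a more extreme outlier, is an illustrative assumption.

```python
from statistics import mean, median

# The seven reports from the example, including two wild outliers.
reports = [99, 100, 100, 101, 101, 140, 0]

sorted_reports = sorted(reports)            # [0, 99, 100, 100, 101, 101, 140]
middle = sorted_reports[len(sorted_reports) // 2]

print("sorted:", sorted_reports)
print("median:", middle)                    # 100
print("mean:  ", round(mean(reports), 2))   # 91.57

# Make one outlier far more extreme (hypothetical value): the median does not move.
extreme = [99, 100, 100, 101, 101, 10_000, 0]
assert median(extreme) == middle
```

The mean is pulled well below every plausible report, while the median stays on a value that a reasonable reporter actually submitted.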

That is the core mechanism. A medianizer does not need every reporter to be perfect. It needs enough reporters to be reasonable that the middle of the set still lands in the reasonable range. In Chainlink’s OCR3 numerical reporting design, this is made explicit: the reporting plugin produces the median of more than 2f observations, where f is the number of faulty or Byzantine oracles the system is designed to tolerate. Under that assumption, the reported median stays within the range of values observed by correct oracles. That property matters more than elegance. It means bad actors cannot push the final number arbitrarily far unless they control too much of the reporting set.

This is why medianizers show up repeatedly in oracle architecture. Maker’s Median contract computes a median from signed price reports and stores it as the protocol’s reference price. Chainlink price feeds use median-style aggregation at the oracle-network layer, and also describe median-taking at the node-operator layer when combining upstream data sources. Even when implementations differ (on-chain median computation in one system, off-chain reporting plus on-chain verification in another) the same logic is doing the work: the system is trying to be robust to outliers rather than perfectly informed about an unknowable “true” price.

There is an important limit here. A medianizer is not magic truth discovery. It can suppress a minority of bad values, but it cannot rescue a feed if most reporters are wrong in the same direction, if all of them use the same broken upstream source, or if the world moves faster than the reporting process. The median is robust to some disagreement. It is not robust to the disappearance of independence.

How does a medianizer validate reports and publish an on‑chain price?

At a high level, a medianizer has four jobs. It must decide who is allowed to report, decide when a report set is valid, compute the middle value, and expose that value to downstream contracts in a way they can safely consume.

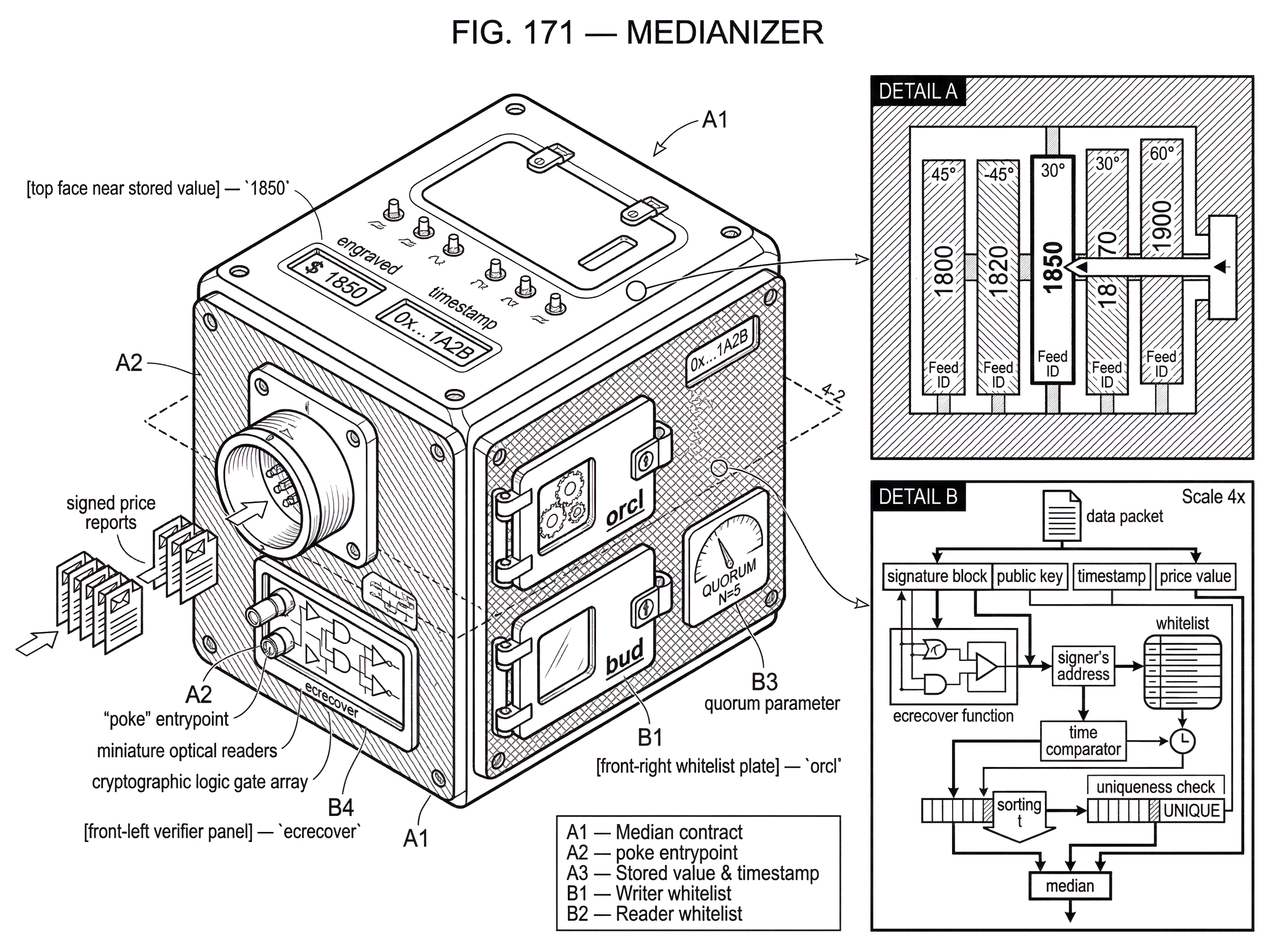

Maker’s median.sol shows this pattern clearly. The contract maintains a whitelist of authorized writers, called orcl, and a separate whitelist of authorized readers, called bud. Writers are the oracle signers whose price messages may be used in an update. Readers are the contracts or addresses permitted to call peek or read. Governance-controlled functions such as lift and drop manage the writer set, while kiss and diss manage the reader set. The contract also stores a quorum parameter called bar, which is the number of valid messages required to update the price.

The interesting part is that the actual update entrypoint, poke, is public. In Maker’s design, poke is not protected by admin authorization. Anyone can call it (often a keeper or relayer) but the call only succeeds if the submitted data passes all of the contract’s checks. That separation matters. The protocol does not want to trust a single caller to decide the price. It wants the caller to be replaceable, because the real authority lies in the signed reports and the validation rules, not in who pays gas for the transaction.

When poke receives a batch of reports, the contract verifies each one. In the reference implementation, the signer is recovered with ecrecover over a message built from the reported value, a timestamp, and a feed identifier wat. Binding the signature to wat matters because it prevents a signature intended for one feed from being replayed against another. Binding it to a timestamp matters because the contract can reject stale messages. In Maker’s code, each report must have an age_ greater than the previously stored age zzz, so old signed values cannot be resubmitted to overwrite fresher state.

The contract also requires the submitted values to be in non-decreasing order. That is partly an optimization and partly a correctness check. If the array is already sorted, the contract can take the middle element directly instead of sorting on-chain, which saves gas. But it also means the off-chain assembler of the transaction has to provide a coherent ordered set. If values are out of order, poke reverts. So the contract is not just aggregating data; it is enforcing a very particular data shape that makes the median well-defined and cheap to compute.

Uniqueness is another subtle requirement. A median only means something if the reports come from distinct authorized sources. Maker’s implementation uses a bitset-style bloom mechanism during poke to ensure the same oracle cannot be counted twice within one submission, and it also uses a slot mapping derived from part of the signer address so governance cannot whitelist two signers that collide in the same uniqueness slot. These implementation details are not the essence of a medianizer, but they reveal something important: robust aggregation depends not just on the aggregation formula, but on proving that the inputs are fresh, distinct, and authorized.
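A bitset check of this kind might look roughly like the following Python sketch. It assumes the uniqueness slot is the first byte of the 20-byte signer address; the function names and the example addresses are hypothetical and are not taken from Maker’s code.

```python
def slot_of(address: str) -> int:
    """First byte of a 20-byte address, i.e. the top 8 of its 160 bits."""
    return (int(address, 16) >> 152) & 0xFF


def all_signers_distinct(signers: list[str]) -> bool:
    """Return False if two signers in one submission share a slot."""
    bloom = 0  # bitset with one bit per possible slot (0..255)
    for signer in signers:
        bit = 1 << slot_of(signer)
        if bloom & bit:
            return False  # this slot was already counted
        bloom |= bit
    return True


# Hypothetical addresses, chosen only to illustrate the slot logic.
A = "0x11" + "00" * 19
B = "0x22" + "00" * 19
C = "0x11" + "ff" * 19  # a different address with the same first byte as A

print(all_signers_distinct([A, B]))     # True
print(all_signers_distinct([A, B, A]))  # False: A appears twice
print(all_signers_distinct([A, C]))     # False: A and C collide in one slot
```

The last case shows why the whitelist itself has to reject colliding signers: a one-byte slot cannot tell A and C apart, so two such signers could never both count in the same submission.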

Once validation passes, the contract selects the median. In Maker’s implementation, that is the array element at index length >> 1 (the length shifted right by one bit, which is integer division by two), meaning the middle position of an odd-length sorted array. For a seven-element array, that is index 3, the fourth value. The result is stored along with the current block timestamp as the contract’s latest value. Downstream readers can call peek, which returns the value and a validity flag, or read, which returns the value and reverts if the stored value is invalid.
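The validation steps described in this section can be gathered into one small model. The Python sketch below is not Maker’s contract. Signature recovery is replaced by a plain signer field, the feed check is done directly on wat, a set stands in for the bitset, and treating a zero price as invalid is an assumption of the sketch. The class name MedianModel and the Report type are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    val: int     # reported price
    age: int     # signer's timestamp
    wat: str     # feed identifier the signature was bound to
    signer: str  # stand-in for the address that ecrecover would return


class MedianModel:
    def __init__(self, wat: str, bar: int, orcl: set[str]):
        if bar <= 0 or bar % 2 == 0:
            raise ValueError("bar must be nonzero and odd")
        self.wat, self.bar, self.orcl = wat, bar, set(orcl)
        self.val = 0  # stored price; 0 is treated as "not valid" here
        self.age = 0  # time of the last successful update

    def poke(self, reports: list[Report], now: int) -> None:
        if len(reports) != self.bar:
            raise ValueError("wrong number of reports")
        zzz = self.age
        seen: set[str] = set()
        for i, r in enumerate(reports):
            if r.wat != self.wat:
                raise ValueError("report bound to another feed")
            if r.signer not in self.orcl:
                raise ValueError("unauthorized signer")
            if r.age <= zzz:
                raise ValueError("stale report")
            if i > 0 and r.val < reports[i - 1].val:
                raise ValueError("values not in order")
            if r.signer in seen:
                raise ValueError("signer counted twice")
            seen.add(r.signer)
        self.val = reports[len(reports) >> 1].val  # middle element
        self.age = now

    def peek(self) -> tuple[int, bool]:
        return self.val, self.val > 0

    def read(self) -> int:
        if self.val <= 0:
            raise ValueError("invalid price feed")
        return self.val


m = MedianModel("ETHUSD", bar=3, orcl={"s0", "s1", "s2"})
batch = [
    Report(99, 10, "ETHUSD", "s0"),
    Report(100, 11, "ETHUSD", "s1"),
    Report(140, 12, "ETHUSD", "s2"),
]
m.poke(batch, now=20)
print(m.peek())  # (100, True)
```

Calling poke a second time with the same batch fails in this model, because every report’s age is no longer newer than the stored age.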

How does quorum size affect medianizer security and liveness?

| Quorum size | Fault tolerance | Manipulation cost | Liveness risk |
|---|---|---|---|
| Small (low) | Tolerates few faults | Low attacker cost | Low (updates are rarely blocked) |
| Medium (2f+1) | Tolerates f faults | Moderate attacker cost | Moderate |
| Large (near total) | High tolerance if honest | High attacker cost | High (updates fail if a few signers are offline) |

If the median is the visible idea, quorum is the deeper one. A medianizer only works because it does not accept arbitrary numbers of reports. It requires a threshold number of valid observations before it will update. That threshold determines the tradeoff between fault tolerance and liveness.

In Maker’s median, bar is the minimum writers quorum. The code enforces that bar is nonzero and odd. The oddness is not cosmetic. If you want the median to be a single existing element of the sorted set, an odd-sized quorum gives you an unambiguous middle report. With an even number, you would have to choose a convention such as averaging the two middle values, choosing the lower one, or choosing the upper one. Maker’s implementation avoids that ambiguity and gas cost by requiring odd bar values.
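The ambiguity with even quorums is easy to see in code. The following Python sketch uses hypothetical helper names and made-up values; it only illustrates why an odd count yields a single existing element while an even count forces a convention.

```python
def check_bar(bar: int) -> int:
    """Accept only nonzero, odd quorum sizes."""
    if bar <= 0 or bar % 2 == 0:
        raise ValueError("quorum must be nonzero and odd")
    return bar


def middle_candidates(sorted_vals: list[int]) -> tuple[int, ...]:
    """Return the one middle element (odd length) or the two middle elements (even)."""
    n = len(sorted_vals)
    if n % 2 == 1:
        return (sorted_vals[n // 2],)
    return (sorted_vals[n // 2 - 1], sorted_vals[n // 2])


odd = [99, 100, 101]
even = [99, 100, 102, 103]

print(middle_candidates(odd))   # (100,)
print(middle_candidates(even))  # (100, 102)

lower, upper = middle_candidates(even)
print(lower, upper, (lower + upper) // 2)  # 100 102 101
```

With the even set, the three conventions give three different answers, and the averaged one (101) is a value that no reporter actually signed.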

Now the mechanism becomes clearer. If bar is 13, then a caller must supply exactly 13 valid, fresh, authorized, sorted, unique reports for poke to succeed. This raises the cost of manipulation because an attacker must influence enough of those 13 reports to move the middle of the set. But it also raises the risk of no update happening at all, because if too many honest reporters are offline or delayed, the threshold cannot be met. The contract will not gracefully degrade to fewer reports. It simply will not update.
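The liveness side of this tradeoff can be made concrete with a simple binomial estimate. The sketch below assumes a hypothetical set of 15 signers, each independently online with the same probability; real outages are often correlated, so treat the numbers as illustrative only.

```python
from math import comb


def update_probability(n_signers: int, bar: int, p_online: float) -> float:
    """P(at least `bar` of `n_signers` are online), assuming independent signers."""
    return sum(
        comb(n_signers, k) * p_online**k * (1 - p_online) ** (n_signers - k)
        for k in range(bar, n_signers + 1)
    )


# Hypothetical setup: 15 signers, each online 95% of the time.
for bar in (7, 13, 15):
    print(bar, round(update_probability(15, bar, 0.95), 4))
```

As bar approaches the total signer count, the chance that an update can be assembled at a given moment falls, even when each signer is individually reliable.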

Chainlink’s OCR3 specification describes the same logic in a more formal distributed-systems frame. The numerical reporting plugin sets the observation quorum to 2f + 1, where f is the number of Byzantine faults tolerated. The reason is structural. With more than 2f observations, even if up to f of them are faulty, there are still enough correct observations to ensure the median lies within the correct range. That is the invariant the protocol is protecting. The exact contract interface differs from Maker’s older on-chain median computation, but the design pressure is the same: you choose a threshold so the middle of the accepted values still reflects correct participants despite some faults.
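The invariant can be checked on a small case. This Python sketch takes f = 2, so the quorum is 5, and lets the faulty observations take extreme values. The specific numbers are assumptions for illustration, and the check covers only these extreme cases rather than proving the property.

```python
from itertools import product


def median_of(values):
    ordered = sorted(values)
    return ordered[len(ordered) // 2]


def stays_in_honest_range(honest, faulty):
    """True if the median of all observations lies within the honest min..max."""
    m = median_of(list(honest) + list(faulty))
    return min(honest) <= m <= max(honest)


f = 2
honest = [100, 101, 102]   # f + 1 correct observations
extremes = [0, 10**9]      # values a faulty oracle might report

# Quorum of 2f + 1 with at most f faulty values: the median stays in range.
assert all(
    stays_in_honest_range(honest, bad)
    for bad in product(extremes, repeat=f)
)

# Same quorum size, but f + 1 faulty values and only f honest: the bound breaks.
assert not stays_in_honest_range(honest[:f], [10**9] * (f + 1))
```

The second assertion shows where the guarantee ends: once faulty values occupy the middle rank, the median can be whatever the faulty reporters choose.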

A smart reader might ask whether “median” and “majority” are the same thing here. Not quite. Majority tells you how many reporters must agree on a proposition. Median tells you which numeric value sits in the middle after ordering. But for manipulation resistance, the two ideas become related. To move the median materially, an attacker typically needs to control enough of the ordered positions around the center. That often means corrupting something like a majority of the effective influence over the central ranks. The exact threshold depends on the setup, but the intuition is stable: outliers at the edges do not matter until they stop being edges.

Step‑by‑step: how signed reports become the protocol’s price

Imagine a lending protocol that uses a medianizer for the collateral price of an asset. Fifteen approved oracle signers monitor markets. Off-chain, each signer produces a signed message containing a price, a timestamp, and the feed identifier for that asset pair. Those reports do not update the blockchain by themselves. They are just signed claims waiting to be assembled.

A relayer (perhaps run by the protocol, perhaps by an independent keeper) gathers a fresh set of signed reports. Suppose the valid signed values are 1848, 1849, 1850, 1850, 1851, 1851, and 2200, with a quorum bar of 7. The relayer sorts the values, bundles the signatures and timestamps, and calls poke.

On-chain, the medianizer does not care whether the relayer is trusted. It only cares whether the included signatures recover to authorized signers, whether each timestamp is newer than the current stored age, whether the values are sorted, and whether no signer appears twice. If one report is stale, or one signer is unauthorized, or the values are not ordered, the entire update fails. If all checks pass, the contract takes the middle element, which is the fourth value in this seven-item set: 1850.

That number becomes the stored reference value. Downstream contracts do not need to inspect every underlying report. They consume the medianizer’s output as the canonical answer. In Maker’s broader architecture, they usually do not even consume the median directly. The Oracle Security Module, or OSM, sits in front of the core protocol and introduces a delay before the price is used by liquidation-sensitive components. That extra step exists because even a correct current price may be dangerous if the protocol wants time to react to sudden moves. So the medianizer answers the question, “What is the current aggregated reference price?” while the OSM answers the separate question, “When should the protocol trust this new price enough to act on it?”
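The split between “current price” and “price the protocol acts on” can be sketched as a tiny delay module. This Python model is hypothetical: the class name, field names, and the one-hour delay are assumptions for illustration, not a description of Maker’s OSM code.

```python
class DelayedPrice:
    """Hypothetical delay module: serves the older value and queues the latest one."""

    def __init__(self, delay_seconds: int, initial: int, now: int):
        self.delay = delay_seconds
        self.cur = initial   # value downstream contracts see
        self.nxt = initial   # value queued to become current
        self.last_poke = now

    def poke(self, median_value: int, now: int) -> bool:
        """Promote the queued value and queue a new one, at most once per delay window."""
        if now < self.last_poke + self.delay:
            return False
        self.cur, self.nxt = self.nxt, median_value
        self.last_poke = now
        return True

    def read(self) -> int:
        return self.cur


osm = DelayedPrice(delay_seconds=3600, initial=1850, now=0)
osm.poke(1500, now=3600)   # the median dropped sharply; the new value is only queued
print(osm.read())          # 1850
osm.poke(1510, now=7200)   # one window later, the queued 1500 becomes current
print(osm.read())          # 1500
```

During the window in which 1500 is queued but not yet current, the protocol or its governance has time to react before liquidation-sensitive logic sees the new price.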

This distinction matters because people often treat “oracle” as one box. In practice, a price path may involve upstream exchanges, data aggregators, node-level aggregation, network-level medianization, signature attestations, on-chain verification, and then a delayed consumer module. The medianizer is the robust aggregation step inside that larger pipeline.

What oracle risks does a medianizer mitigate in DeFi?

The reason medianizers matter is not academic. Many DeFi protocols are built around thresholds: collateral ratios, liquidation points, minting limits, interest adjustments, settlement conditions, redemption rates. A bad price is not just misinformation. It is state transition authority. It decides who gets liquidated, what can be borrowed, and whether positions remain solvent.

If a protocol trusted a single exchange price, then a flash-loan attack against that venue could temporarily become the protocol’s reality. Research on flash-loan-enabled oracle manipulation shows exactly why this is dangerous: large amounts of capital can be borrowed atomically, used to distort on-chain market prices, and repaid within one transaction, leaving the victim protocol with losses if it consumed the manipulated price. This is one reason oracle systems moved away from naive single-source DEX reads toward multi-source, multi-reporter aggregation.

A medianizer raises the attacker’s job from “move one market” or “compromise one source” to “shift enough independent reports that the middle moves.” That is a much harder target, provided the reports are truly independent. Chainlink’s public explanation of price feeds emphasizes layered aggregation for this reason: upstream data-source aggregation reduces noise and venue-specific anomalies; node operators medianize across multiple aggregators; the oracle network then medianizes across node responses once a response threshold is reached. The same philosophy can be implemented differently on various chains, but the purpose stays constant: make manipulation require coordination across multiple independent failures, not exploitation of one thin point.

This pattern is not limited to Ethereum-style contracts. Pyth, for example, distributes price data across many chains with deterministic on-chain delivery for its core product, even though the high-level developer hub does not spell out its exact aggregation formula in the overview. The broader point is that robust aggregation is chain-agnostic. Whether the final verification happens in an EVM contract, a Solana program, or another execution environment, any oracle network that combines multiple reports into one value faces the same design question: what aggregator gives acceptable robustness, latency, and cost?

When and how can a medianizer fail? (collusion, common‑mode, liveness)

| Failure mode | Underlying cause | Effect on reported median | Typical mitigation |
|---|---|---|---|
| Collusion | Many signers compromised | Median reflects bad value | Governance remediation |
| Common-source error | Reporters share broken upstream | Median repeats same error | Increase source diversity |
| High quorum / liveness | Too many required reports | Updates stall | Reduce quorum or add keepers |
| MEV / mempool ordering | Update visible/preempted | Timing-based exploitation | Delay, private relays, or OSM |

The medianizer’s strength is also its boundary. It assumes that bad reports are a minority and that honest reports are not all making the same mistake.

The most obvious failure mode is collusion or correlated failure. Maker’s documentation notes a blunt limitation: there is no contract-level mechanism in median.sol to “turn off” the feed if all authorized signers collude to sign an invalid value such as zero. In that scenario, the aggregation logic has done exactly what it was designed to do (it found the median of the submitted reports) but the security assumption underneath has collapsed. peek may return the value as invalid, and downstream governance or external modules must intervene. The medianizer cannot manufacture correctness from unanimous bad input.

A subtler version is when reporters appear independent but share the same upstream dependency. If ten oracle nodes all read the same broken API, the median of their reports is just the API’s error, repeated ten times. Medianization protects against independent outliers better than against common-mode failures. This is why serious oracle systems care so much about source diversity, operator diversity, and update process diversity. Independence is not a nice-to-have around the medianizer. It is what makes the median meaningful.
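A short Python sketch makes the contrast visible. All prices here are hypothetical, and median_low is used so the result is always one of the submitted values.

```python
from statistics import median_low

TRUE_PRICE = 1850
BROKEN_API_PRICE = 18  # hypothetical bad value from a faulty upstream

# Ten nodes, one shared upstream: every report carries the same error.
shared_source_reports = [BROKEN_API_PRICE] * 10

# Ten nodes spread over five hypothetical upstreams, one of which is broken.
upstream_prices = [BROKEN_API_PRICE, 1849, 1850, 1850, 1851]
diverse_reports = [upstream_prices[i % 5] for i in range(10)]

print(median_low(shared_source_reports))  # 18
print(median_low(diverse_reports))        # 1850
```

In both cases there are ten reporters and the same aggregation rule. Only the independence of the inputs differs, and that alone decides whether the median lands on the error or on the market price.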

Another limitation is liveness. A high quorum makes manipulation harder but updates harder too. Maker’s docs explicitly warn that quorum sizing near the total signer count can leave the feed effectively un-updatable if members go offline. This is not a bug in the code. It is the inevitable consequence of demanding many fresh reports before accepting a new median. In fast markets, stale-but-available and fresh-but-unavailable are both dangerous in different ways.

There is also the problem of lag. Compound’s Open Oracle audit highlights an important operational truth about median-based systems: even if each reporter updates independently and honestly, the published median may move only after enough of the sample has crossed to the new regime. In sudden price moves, that can create delay. The audit also found a more concrete correctness flaw in that implementation: certain submitted prices could be recorded without triggering a recomputation of the official median, leaving the public median stale. That issue is implementation-specific, not an indictment of medians in general, but it shows the broader lesson: aggregation logic is only as good as the state machine that actually recomputes and publishes it.
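The lag effect can be reproduced with a toy simulation. In this Python sketch, seven hypothetical reporters move from an old price to a new one, one at a time, and the median is recomputed after each move.

```python
def median_of(values):
    ordered = sorted(values)
    return ordered[len(ordered) // 2]


OLD, NEW = 100, 120
reporters = [OLD] * 7

history = [median_of(reporters)]
for i in range(len(reporters)):
    reporters[i] = NEW  # reporter i catches up with the market
    history.append(median_of(reporters))

print(history)  # [100, 100, 100, 100, 120, 120, 120, 120]
```

The published value does not move until the fourth of seven reporters has updated, so the median trails a sudden move by however long it takes a majority of the sample to catch up.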

Finally, there is the surrounding market microstructure. Oracle updates are transactions, and transactions live in adversarial ordering environments. Research on miner- or validator-extractable value shows that profitable transactions can be reordered, delayed, copied, or contested through priority bidding. A medianizer does not eliminate these effects. If an update transaction is visible in the mempool, other actors may trade ahead of it, race to exploit the soon-to-change reference price, or try to influence inclusion timing. So medianization solves one class of problem (robust aggregation of reports) but not all the game theory around when updates land on-chain.

Medianizer vs. other oracle components: OSM, feeds, and networks

| Component | Primary role | Latency | When to use |
|---|---|---|---|
| Medianizer | Aggregate multiple reports | Low to medium | Robust numeric output |
| Oracle network | Produce signed observations | Variable | Source diversity and scale |
| OSM (delay module) | Delay and vet updates | Higher (intentional delay) | Protect liquidation logic |
| Data feed / aggregator | Upstream price sourcing | Low (external APIs) | Reduce venue noise |

It helps to separate the medianizer from neighboring ideas that are often conflated with it.

A data feed is the broader pipeline that gets external data to smart contracts. A medianizer is one mechanism inside that pipeline, specifically the part that combines multiple observations into a single output.

An oracle network is the set of reporters, signers, or nodes producing observations. The medianizer is the rule for collapsing their observations into one value. You can have an oracle network without median aggregation if it uses another rule, such as a weighted average or committee approval. And you can have a medianizer inside a larger oracle network that already performed aggregation at earlier layers.

An OSM or delayed-security module is not a medianizer. Maker’s OSM intentionally delays consumption of the price produced by the median. The medianizer answers “what is the current aggregated price?” The OSM answers “when should downstream protocol logic see that price?” Those are different control problems.

An oracle manipulation defense is broader still. Medianization is one defense because it reduces sensitivity to outliers and minority corruption. But it is usually paired with other defenses: time delays, heartbeat requirements, deviation thresholds, source weighting, circuit breakers, governance controls, and monitoring. A protocol that treats a medianizer as a complete oracle security solution is usually missing half the picture.
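Two of these companion defenses, a heartbeat check and a simple circuit breaker, could be layered on the consumer side roughly as follows. This is a hypothetical Python sketch: the function name, the one-hour maximum age, and the 50% jump limit are illustrative assumptions, not parameters of any particular protocol.

```python
def accept_price(prev_price: int, new_price: int, updated_at: int, now: int,
                 max_age: int = 3600, max_jump: float = 0.5) -> bool:
    """Consumer-side sanity checks applied on top of an aggregated price."""
    if new_price <= 0:
        return False  # treat zero or negative values as invalid
    if now - updated_at > max_age:
        return False  # heartbeat: the feed has not updated recently enough
    if prev_price > 0 and abs(new_price - prev_price) / prev_price > max_jump:
        return False  # circuit breaker: the move is too large to accept blindly
    return True


print(accept_price(1850, 1851, updated_at=1_000, now=1_600))  # True
print(accept_price(1850, 1851, updated_at=1_000, now=9_000))  # False: stale
print(accept_price(1850, 18, updated_at=1_000, now=1_600))    # False: jump too large
```

Checks like these do not replace the medianizer. They cover cases it cannot see, such as a feed that has stopped updating or a median that reflects a unanimous bad input.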

Which parts of medianizer design are fundamental and which are implementation choices?

The fundamental part of a medianizer is extremely small: collect multiple observations of the same quantity and choose the middle one so a minority of extreme values cannot dominate.

Almost everything else is convention, engineering choice, or threat-model response. Whether reports are aggregated on-chain or off-chain is a cost and architecture decision. Whether readers are permissioned is a protocol design choice; Maker gates reads with bud, while other systems expose public views. Whether the feed stores the current price directly or passes through a delayed module depends on the downstream protocol’s risk tolerance. Whether the signer set is governance-managed, committee-managed, or economically permissionless depends on how the oracle network is organized.

Even the exact notion of “medianizer” varies somewhat across systems. In Maker, the term is tightly associated with a contract that validates signed reports and stores a median. In Chainlink OCR3, the medianizer is described as a numerical reporting plugin in a broader off-chain reporting protocol. In audits of Maker-adjacent systems such as StETHPrice, “medianizer” can simply mean a component that returns the current aggregated price directly rather than operating like an OSM with propagation delay. The family resemblance is clear even when the implementations are not identical.

Conclusion

A medianizer is an oracle aggregation mechanism that takes many reported values and turns them into one reference value by choosing the middle report. Its value comes from a simple invariant: as long as enough inputs are fresh, authorized, distinct, and independent, a few extreme or malicious reports cannot determine the output.

That is why medianizers are common in blockchain oracle systems. They do not solve the oracle problem completely, but they solve a crucial part of it: they make “one bad source” less likely to become “the protocol’s truth.” The catch is equally important to remember: a medianizer is only as strong as the independence of its reporters, the sanity of its quorum, and the update machinery around it.

What should you understand before relying on a price feed?

Understand the medianizer’s limits (quorum, source diversity, and update lag) before you act on a price. On Cube Exchange, you can check the market’s oracle metadata and then fund and execute trades with protections that reflect what the feed shows.

- Deposit fiat via Cube’s on‑ramp or transfer a supported stablecoin (for example USDC) into your Cube account.

- Open the asset’s market page and inspect the oracle information: check the feed’s last update timestamp, reported source diversity, and the quorum/heartbeat or delay values (watch for large OSM delays or stale timestamps).

- Choose an appropriate order type: use a limit order for large or thin‑book trades to avoid trading into an uncertain feed, or a market order for small, urgent fills; set a conservative price/size if feeds look lagged.

- Set execution protections (slippage tolerance, stop‑loss or take‑profit). After the trade, monitor the feed for fresh updates and wait for chain‑specific confirmation thresholds before relying on the new on‑chain state for further actions.

Frequently Asked Questions

Maker’s median contract requires an odd, nonzero quorum parameter (bar) so the median is an unambiguous single element of the submitted sorted set; choosing an odd bar avoids tie‑breaking rules and extra gas costs that an even quorum would introduce.

If the required quorum is not met, the contract simply will not update the stored price. A higher quorum raises manipulation resistance but also puts liveness at risk, because honest updates can be blocked when too many reporters are offline or delayed.

The contract enforces that submitted report values are non‑decreasing and that each signer is unique in the submission, so an off‑chain assembler (often called a relayer or keeper) must gather, sort and de‑duplicate the signed reports before calling poke.

Medianization mitigates isolated or extreme outliers but cannot protect against collusion or common‑mode failures where most or all reporters read the same broken upstream source or collude to sign an invalid value; in such cases the median simply reflects the unanimous bad input and off‑chain governance/action is needed.

Maker’s implementation uses a slot/bitset uniqueness check derived from signer address bytes to prevent double‑counting a signer in a submission, but governance still must manage signer collisions and the code only enforces first‑byte uniqueness rather than full‑address uniqueness.

A medianizer only addresses aggregation robustness. Protocols typically pair it with other defenses (for example time delays such as an OSM, heartbeat checks, deviation thresholds, source and operator diversity, circuit breakers, and monitoring) because medianization alone does not solve ordering/MEV, common‑mode failures, or governance risks.

An OSM (Oracle Security Module) is a separate component that delays or gates when a published price is allowed to affect protocol state; the medianizer answers ‘what is the aggregated current price?’ while the OSM answers ‘when should downstream logic act on that price?’, so they solve different control problems.

Medianizers do not eliminate mempool‑level adversarial ordering or MEV risks: visible update transactions can be front‑run, copied, or reordered by miners/validators or bots, so aggregation robustness must be complemented by transaction‑level mitigations and monitoring.

Some implementations have operational bugs unrelated to the median concept. For example, the audit of Compound’s Open Oracle found that certain submitted prices could be recorded without triggering a recomputation of the official median, leaving the published median stale. Correctness of the state machine that recomputes and publishes the median is therefore essential.

Chainlink’s OCR3-style numerical reporting formalizes the quorum as 2f+1 so that even with up to f Byzantine reporters the median remains within the range observed by correct oracles; choosing the quorum thus directly determines how many faulty reporters the system tolerates versus how often updates can proceed.
