What Is Social Engineering?

Learn what social engineering is, how it works, why phishing and wallet-signing scams succeed, and which controls actually reduce the risk.

Introduction

Social engineering is the security problem of using deception to make a person reveal information, grant access, or perform an action that helps an attacker. That sounds almost too simple, but it explains why some of the most expensive attacks begin with something that looks ordinary: an email, a text, a phone call, a wallet signature prompt, or a request from someone who seems familiar.

The important contrast is this: many security defenses are built to stop unauthorized actions by machines, yet organizations and users still need humans to approve, interpret, and decide. That gap is where social engineering lives. An attacker does not always need to break encryption, discover a software flaw, or overpower a firewall. If they can persuade the right person to hand over a password, trust a fake identity, or sign a malicious transaction, the technical system may faithfully carry out the attacker’s goal.

NIST’s glossary captures the core idea in several closely related definitions: social engineering is an attempt to trick someone into revealing information, such as a password, that can be used to attack systems or networks, and more broadly the act of deceiving an individual into revealing sensitive information, obtaining unauthorized access, or committing fraud by gaining confidence and trust. CISA uses similar language, emphasizing that the attacker uses human interaction and social skills to obtain or compromise information about an organization or its computer systems. The wording varies slightly, but the mechanism is the same: the human becomes the attack surface.

That is why social engineering belongs in any serious discussion of security risks and controls. It is not a side issue for “less technical” users. It is what happens when the strongest technical boundary still depends on a person to open the door.

How does social engineering turn trust into actionable capability?

To understand social engineering from first principles, start with a simple security fact: systems grant power through capabilities. A password lets someone log in. A reset link lets someone change credentials. A wire approval moves money. A wallet signature authorizes a blockchain action. A support agent can override a process. These capabilities are supposed to be released only under certain conditions.

Social engineering works by creating a false picture of those conditions inside someone’s mind. The victim believes the requester is legitimate, the action is routine, the urgency is real, or the risk is low. Once that belief is established, the victim may voluntarily provide the capability the attacker needs. In that sense, social engineering is not magic and not merely “human error.” It is an attack on the decision process that sits in front of technical controls.

This is the idea that makes the topic click: social engineering does not bypass security controls so much as recruit an authorized person to exercise them on the attacker’s behalf. The employee types the password. The user clicks the approval link. The administrator resets the account. The wallet owner signs the transaction. The system may behave exactly as designed; the attacker changed the inputs by changing what the person believed.

Trust is central here, but trust by itself is too vague. What the attacker really exploits is a bundle of shortcuts people use because they are necessary for normal life. We answer calls that sound official. We respond quickly to urgent requests. We assume familiar logos, domains, or voices are meaningful. We let context fill in missing details. None of these habits is irrational. In fact, organizations could not function without them. Social engineering succeeds because secure behavior is often slower, more suspicious, and less convenient than ordinary cooperative behavior.

This is also why the term covers more than a single channel. The deception can happen by email, phone, text message, website, direct message, video call, or in person. NIST’s glossary definitions do not narrowly limit the concept to digital messages, and CISA explicitly discusses email-based phishing, voice-based vishing, and text-based smishing. The channel matters for tactics, but the underlying structure does not change.

Why do social‑engineering attacks continue to succeed despite defenses?

A common misunderstanding is that social engineering works only on uninformed or careless people. That view is comforting, and mostly wrong. The deeper reason it works is that modern systems create conditions in which people must make fast trust decisions with incomplete information.

Consider what a normal workday or online session looks like. People receive many messages, many approvals, many prompts, and many exceptions. They are rewarded for responsiveness far more often than for skepticism. A request that appears to come from a colleague, vendor, exchange, wallet app, or support team usually is legitimate. If every interaction required deep forensic checking, nothing would get done. So people develop efficient heuristics. The attacker’s job is to shape a message or interaction that fits those heuristics just well enough.

CISA highlights a practical version of this problem: an attacker may gather small pieces of information from one source, then use them to build credibility with another source inside the same organization. That matters because legitimacy is often judged cumulatively. A reference to an internal project name, a real coworker, a recent invoice, or a prior support ticket can make the next request feel authentic. The victim is not being fooled by one isolated detail; they are being guided into a coherent story.

Urgency amplifies this effect. The attacker does not merely ask for an action; they frame delay as dangerous or costly. Maybe payroll must be fixed immediately. Maybe a wallet needs to be reconnected now. Maybe suspicious account activity requires immediate verification. Under time pressure, people shift from careful validation to fast pattern-matching. Social engineering often succeeds at exactly that moment.

New tools make the credibility problem worse. CISA notes that VoIP can easily allow caller ID spoofing, which exploits misplaced trust in phone services. Joint guidance from CISA, the FBI, and NSA adds another layer: synthetic media and deepfakes can impersonate executives, financial officers, and trusted contacts, enabling access to networks, communications, and sensitive information.

In other words, signals that once carried some evidentiary weight have become cheaper to fake:

- a recognizable voice

- a plausible caller ID

- a polished message

The lesson is not that users should trust nothing. That is impossible. The lesson is that signals of legitimacy must be made costly to fake and easy to verify. That principle drives the best defenses.

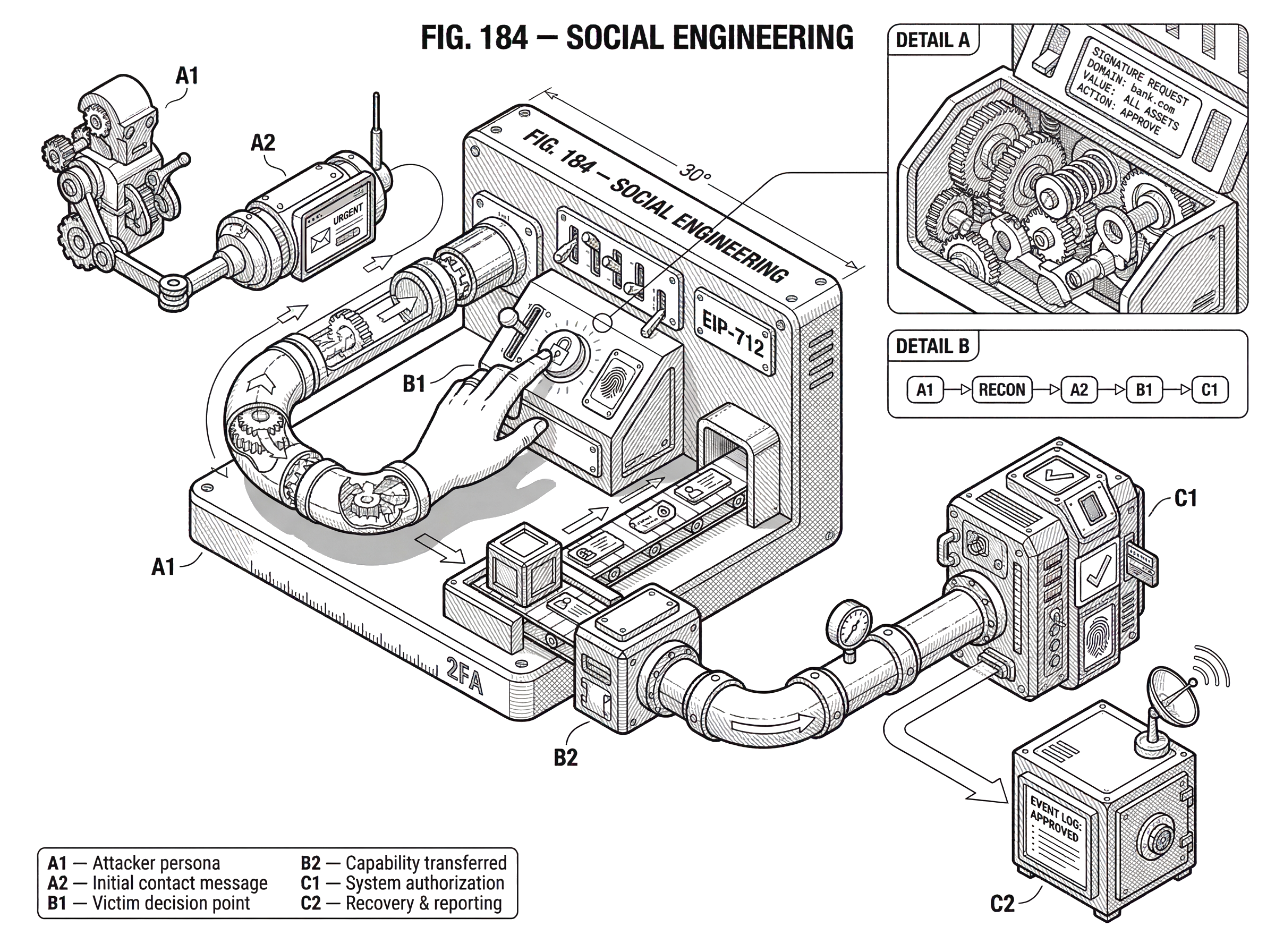

How does a social‑engineering attack chain form step by step?

| Stage | Attacker action | Typical victim response | Defender control |
|---|---|---|---|
| Reconnaissance | Harvest public data and breaches | Profiles and context exposed online | Harden profiles, monitor breaches |
| Initial contact | Send plausible message or request | Clicks, replies, or engagement | Email filtering, anti-spoofing, training |
| Foothold | Ask for a small verification action | Provides a confirming signal | Verify out-of-band, limit info disclosed |
| Escalation | Add context or switch channel | Follow-up instructions accepted | Require step-up auth, independent checks |
| Capability transfer | Request credential, reset, or signature | Grants access or signs transaction | Readable prompts, limit approvals |
| Exploitation & cashout | Consolidate and move assets | Compromise discovered late | Rapid alerts, freeze or report funds |

Imagine an attacker wants access to a company’s internal systems or wants to drain a user’s crypto wallet. They usually do not begin with the final action. They begin by reducing uncertainty.

The attacker first collects context from public sources, old breach data, social media, project documentation, or prior conversations. They learn names, roles, vendors, schedules, wallet habits, or recent events. Then they make contact through the channel most likely to feel ordinary: a familiar-looking email, a text that appears to be from support, or a wallet connection request from a site that resembles a real application.

The first message does not need to ask for everything. In many successful campaigns, it asks for something small: confirm an account, review a file, reconnect a wallet, check a billing issue, or sign a message to “verify ownership.” If the victim responds, the attacker gains a stronger foothold. Perhaps they now know the victim is active, what browser or wallet they use, or that they are willing to follow instructions. If needed, the attacker escalates to another channel. A phone call references the email. A text references the support ticket. Each step makes the story feel more real.

Now the crucial transfer happens. In a traditional enterprise setting, the victim may provide credentials, install remote-access software, reveal internal information, or approve a reset. In a crypto setting, the victim may sign a transaction or token approval. The technical form differs, but the mechanics are identical: the attacker has induced an authorized action that the system itself will respect.

After that, the attacker often moves quickly. Stolen credentials are used before they can be changed. Approved spend permissions are exercised before the victim understands what was signed. Funds are consolidated, forwarded, or cashed out. CISA’s victim guidance reflects this reality: once sensitive information may have been revealed, organizations should report internally, change exposed passwords including reused ones, and contact financial institutions where relevant. Speed matters because the attacker’s advantage often comes from the victim learning the truth too late.

How does phishing relate to broader social engineering?

People often use social engineering and phishing as if they were interchangeable. They are not. Phishing is best understood as a common implementation of social engineering, especially over email, websites, and messaging systems.

CISA defines phishing as a form of social engineering that uses email or malicious websites to solicit personal information by posing as a trustworthy organization. Smishing applies the same logic through SMS, often using links or actions that open a browser or dial a number. Vishing uses voice communication, including fully voice-based scams assisted by caller ID spoofing. These are different delivery systems for the same underlying move: create a believable context, request an action, and capture a capability.

This distinction matters because defenses that target one implementation do not solve the whole problem. Email filtering may reduce phishing volume, but it does nothing against an in-person impostor, a voice-cloned executive call, or a fake dapp prompting a malicious signature. At the same time, the distinction should not be overstated. The shared structure means many defensive ideas carry across channels: independent verification, multi-factor checks, constrained permissions, readable prompts, and escalation paths that do not depend on the attacker-controlled channel.

How does social engineering target crypto and Web3 users?

In crypto, social engineering becomes unusually dangerous because many actions are irreversible and because the user often acts as their own final approver. There may be no bank fraud desk, no central authority to reverse a transaction, and no administrator who can restore control after a malicious signature has been used.

That changes the value of the attacker’s target. In a traditional phishing attack, the attacker often wants credentials. In crypto, they may want something subtler: a transaction approval, a token allowance, a message signature, or a wallet connection to a malicious app. The victim may never directly “send” funds in the familiar sense. Instead, they authorize a capability that later allows the attacker to move assets.

The approval-phishing pattern illustrates this clearly. Chainalysis describes approval phishing as a scam in which victims are tricked into signing a malicious blockchain transaction that grants the scammer’s address approval to spend tokens from the victim’s wallet. Once that approval exists, the scammer can drain those tokens. The key insight is that the socially engineered step is not always the theft itself. Sometimes it is the creation of a standing permission that makes theft easy afterward.
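To make the "standing permission" concrete, here is a minimal sketch of how a wallet or reviewer could decode an ERC-20 `approve(address,uint256)` call before signing. The function name `describe_approval` and the example spender address are hypothetical, and treating the maximum `uint256` value as "unlimited" is a common convention assumed here rather than something the sources above specify.

```python
from typing import Optional

# First 4 bytes of keccak256("approve(address,uint256)")
APPROVE_SELECTOR = "095ea7b3"
MAX_UINT256 = 2**256 - 1


def describe_approval(calldata_hex: str) -> Optional[dict]:
    """Return spender/amount details if calldata is an ERC-20 approve call."""
    data = calldata_hex.lower()
    if data.startswith("0x"):
        data = data[2:]
    # 4-byte selector plus two 32-byte ABI words = 8 + 64 + 64 hex characters
    if len(data) != 8 + 64 + 64 or not data.startswith(APPROVE_SELECTOR):
        return None
    spender_word = data[8:72]
    amount_word = data[72:136]
    amount = int(amount_word, 16)
    return {
        "spender": "0x" + spender_word[24:],  # address is the low 20 bytes
        "amount": amount,
        "unlimited": amount == MAX_UINT256,
    }


# Hypothetical calldata: approve a made-up spender for the maximum allowance
example = "0x095ea7b3" + "0" * 24 + "ab" * 20 + "f" * 64
info = describe_approval(example)
if info and info["unlimited"]:
    print(f"Warning: unlimited token allowance to {info['spender']}")
```

A prompt that surfaces the spender and the allowance size gives the signer a chance to notice that they are granting a permission, not making a one-off payment.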

This is why wallet UX matters so much. If a user sees an opaque hex string or a confusing signature prompt, they cannot meaningfully evaluate what they are approving. EIP-712 was designed in part to improve this by standardizing typed structured data signing so messages can be displayed to users for verification rather than as unreadable blobs. It also introduces domain separation through EIP712Domain, including fields such as name, version, chainId, and verifyingContract, so wallets can distinguish where a signature is meant to apply and can perform checks like refusing to sign when the chainId does not match the active chain.

This is an important pattern in security design: make the security-relevant meaning visible at the point of consent. Users cannot defend against what they cannot see. Typed structured signing does not eliminate risk (EIP-712 explicitly does not provide replay protection, so applications must add their own defenses), but it improves the odds that a signer can tell what is being authorized.
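The domain-separation check described above can be sketched in a few lines. This is an illustrative model, not a wallet implementation: the class `Eip712Domain`, the function `pre_sign_check`, and the example values are hypothetical, and only the four domain fields named in the text are modeled.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Eip712Domain:
    # Mirrors the EIP712Domain fields named in the text
    name: str
    version: str
    chain_id: int
    verifying_contract: str


def pre_sign_check(domain: Eip712Domain, active_chain_id: int) -> List[str]:
    """Return readable prompt lines, or raise if the chain does not match."""
    if domain.chain_id != active_chain_id:
        raise ValueError(
            f"Refusing to sign: request targets chain {domain.chain_id}, "
            f"wallet is on chain {active_chain_id}"
        )
    return [
        f"Application: {domain.name} (version {domain.version})",
        f"Chain ID: {domain.chain_id}",
        f"Verifying contract: {domain.verifying_contract}",
    ]


# Hypothetical request from a dapp on chain 1
domain = Eip712Domain("ExampleDapp", "1", 1, "0x" + "11" * 20)
for line in pre_sign_check(domain, active_chain_id=1):
    print(line)
```

The design choice worth noting is that the mismatch case fails closed: the user never sees a prompt they could be talked into accepting.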

Wallet and wallet-connection systems are increasingly adding similar context cues. WalletConnect’s Verify API lets wallets show whether a connecting domain is a match, unverified, a mismatch, or flagged as a threat. WalletConnect describes this as a phishing mitigation, not a bulletproof guarantee, which is the right framing. The point is not to create perfect trust from one signal. The point is to interrupt the attacker’s attempt to make a malicious request feel routine.
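One way a wallet might act on such a signal is to map each result to a response rather than treating any single result as proof. The sketch below uses the four states named above, but the enum names, the `decide` function, and the specific policy are assumptions for illustration and are not WalletConnect SDK identifiers.

```python
from enum import Enum


class DomainCheck(Enum):
    MATCH = "match"
    UNVERIFIED = "unverified"
    MISMATCH = "mismatch"
    THREAT = "threat"


class WalletAction(Enum):
    PROCEED = "proceed"
    WARN = "warn"
    REQUIRE_CONFIRMATION = "require_confirmation"
    BLOCK = "block"


# Hypothetical policy: one possible mapping, not a prescribed one
POLICY = {
    DomainCheck.MATCH: WalletAction.PROCEED,
    DomainCheck.UNVERIFIED: WalletAction.WARN,
    DomainCheck.MISMATCH: WalletAction.REQUIRE_CONFIRMATION,
    DomainCheck.THREAT: WalletAction.BLOCK,
}


def decide(check: DomainCheck, high_value: bool = False) -> WalletAction:
    """Treat the domain check as one input among several."""
    action = POLICY[check]
    # A matching domain is a signal, not a guarantee: still warn on high-value requests
    if high_value and action is WalletAction.PROCEED:
        return WalletAction.WARN
    return action


print(decide(DomainCheck.MISMATCH).value)
```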

The same logic appears in hardware wallet and enterprise-signing guidance. Ledger warns that raw signing is effectively blind signing: the signing module cannot understand the transaction’s content or intent, so there is no contextual validation. That is exactly the condition social engineers want. If the approver cannot inspect meaning, persuasion becomes much easier.

The recommended countermeasures are ways of rebuilding context and friction around a dangerous approval path:

- thorough validation before submission

- limited operator access

- auditing

- monitoring

- education

Why is social‑engineering detection harder than detecting malware?

Technical attacks often leave clear structural traces: a malformed packet, a known exploit sequence, a suspicious binary. Social engineering is different because its core payload is meaning. A sentence can be malicious not because of its syntax but because of what it falsely implies.

That is why user-facing indicators are probabilistic rather than definitive. CISA lists suspicious sender addresses, generic greetings, spoofed hyperlinks, spelling and layout issues, and unexpected attachments as common signs of phishing. These are useful, but none is a law of nature. Some legitimate emails are ugly. Some malicious ones are polished. Attackers improve by removing the clues defenders teach users to expect.
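The "spoofed hyperlink" indicator is a good example of a probabilistic signal. The sketch below flags links whose visible text names one host while the underlying target points to another. The helper names `host_of` and `link_mismatch` and the example domains are hypothetical, and the exact-host comparison is a deliberately crude heuristic that will also flag some legitimate links (for example, `www.example.com` shown for `example.com`).

```python
from typing import Optional
from urllib.parse import urlparse


def host_of(text: str) -> Optional[str]:
    """Extract a hostname if the text looks like a URL or bare domain."""
    candidate = text.strip()
    if "://" not in candidate:
        candidate = "https://" + candidate
    try:
        host = urlparse(candidate).hostname
    except ValueError:
        return None
    # Only treat it as a host if it resembles a domain name
    if host and "." in host and " " not in host:
        return host
    return None


def link_mismatch(display_text: str, href: str) -> bool:
    """True when the visible text names a different host than the real target."""
    shown = host_of(display_text)
    actual = host_of(href)
    if shown is None or actual is None:
        return False  # nothing to compare, e.g. the text is "Click here"
    return shown != actual


print(link_mismatch(
    "www.example-bank.com",
    "https://login.example-bank.com.attacker.test/reset",
))
```

A check like this can raise a flag, but as the paragraph above says, the absence of a flag proves nothing: a lure with plain "Click here" text passes it untouched.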

This does not make detection hopeless. It means detection has to be layered. Technical systems can authenticate domains, filter likely spam, inspect links, enforce MFA, and flag anomalous behavior. People can verify out of band, distrust urgency, and treat unusual requests as exceptions. Processes can require independent approval or step-up verification for sensitive actions. No single signal is enough because the attack itself is trying to manufacture a convincing interpretation.

This also explains why blaming users is such a weak strategy. The UK NCSC argues for a layered approach precisely because some phishing attacks will always get through. It also notes the limits of phishing simulations: they cannot teach people to spot every attack, and punitive use can erode trust. That is a sober and useful principle. If your defense assumes users will never be deceived, your defense has already failed. Good security design assumes some deception will succeed and then works to limit the resulting damage.

What practical controls reduce social‑engineering damage?

| Control family | Primary effect | Example measures | Residual risk |
|---|---|---|---|
| Verification | Confirms requester identity | Out-of-band checks, known numbers | Can be bypassed by spoofing |
| Authentication & Authorization | Raises attacker cost | MFA, step-up auth, time-bound tokens | Adds user friction |
| Message authenticity | Makes fakes costlier | DMARC/SPF/DKIM, EIP-712, Verify API | Dependent on adoption |
| Permission constraints | Reduces blast radius | Least privilege, limited approvals, delays | Operational friction |
| Recovery speed | Limits damage window | Monitoring, alerts, rapid revoke | Depends on detection speed |

The strongest controls against social engineering all follow the same general rule: they reduce the amount of harm a single mistaken belief can cause.

One family of controls improves verification. CISA recommends independently verifying unknown callers or requesters and avoiding disclosure of personal or organizational information until legitimacy is confirmed. In practice, this means using a known-good phone number, website, or internal directory rather than replying through the original message. In crypto, it means checking the real domain, understanding what a signature does, and preferring interfaces that show human-readable details.

A second family improves authentication and authorization. MFA is especially valuable because it breaks a common attack chain: stealing one secret is no longer enough. CISA explicitly recommends using multifactor authentication, and the broader principle is more general. If a password, code, device, approver, and time-bound session are all required, the attacker must defeat more than a single socially engineered disclosure.

A third family improves message authenticity and rendering. NCSC recommends anti-spoofing controls such as DMARC, SPF, and DKIM to make domain spoofing harder. EIP-712 improves signing clarity by structuring what is shown to the user. WalletConnect Verify adds domain-risk signals to connection flows. These controls do not teach users to be perfect. They make legitimate requests look more distinct from fake ones.
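As a small illustration of the anti-spoofing side, the sketch below parses a DMARC TXT record and reports whether it asks receivers to act on failing mail. The function names and the example records are hypothetical; the record is passed in as a string, so no DNS lookup is performed.

```python
def parse_dmarc(record: str) -> dict:
    """Split a DMARC record such as 'v=DMARC1; p=reject' into tag/value pairs."""
    tags = {}
    for part in record.split(";"):
        part = part.strip()
        if not part or "=" not in part:
            continue
        key, value = part.split("=", 1)
        tags[key.strip().lower()] = value.strip()
    return tags


def dmarc_enforced(record: str) -> bool:
    """True if the policy is quarantine or reject rather than monitor-only."""
    tags = parse_dmarc(record)
    if tags.get("v") != "DMARC1":
        return False  # not a DMARC record at all
    return tags.get("p", "none").lower() in {"quarantine", "reject"}


# Hypothetical records for illustration
print(dmarc_enforced("v=DMARC1; p=reject; rua=mailto:reports@example.com"))
print(dmarc_enforced("v=DMARC1; p=none"))
```

A `p=none` policy only collects reports, so a domain in that state gets visibility into spoofing without yet making it harder.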

A fourth family constrains permissions and blast radius. Limit admin privileges. Reduce standing approvals. Require additional review for high-risk actions. Use withdrawal delays, device whitelisting, or anti-phishing features on exchanges. In wallets and dapps, be cautious with token approvals and broad delegated permissions. The fewer powers a single action grants, the less valuable that action becomes to an attacker.
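A minimal sketch of how such constraints combine is shown below. The `WithdrawalPolicy` class, its thresholds, and the addresses are hypothetical values chosen for illustration, not settings from any exchange.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class WithdrawalPolicy:
    allowlisted: Set[str] = field(default_factory=set)
    instant_limit: int = 1_000   # hypothetical cap, in the account's smallest unit
    delay_hours: int = 24        # hypothetical hold for unknown destinations

    def evaluate(self, destination: str, amount: int) -> str:
        """Decide how much friction a withdrawal request should meet."""
        if destination not in self.allowlisted:
            return f"hold {self.delay_hours}h: new destination needs review"
        if amount > self.instant_limit:
            return "require second approver"
        return "allow"


policy = WithdrawalPolicy(allowlisted={"0xKnownColdWallet"})
print(policy.evaluate("0xKnownColdWallet", 500))
print(policy.evaluate("0xUnfamiliarAddress", 500))
```

Even if an attacker persuades the account holder to start a withdrawal, the hold and the second approver mean that one mistaken belief is not enough to move funds immediately.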

A fifth family improves recovery speed. Monitoring, alerting, and clear reporting channels matter because social engineering often becomes obvious only after the action is taken. CISA advises immediate internal reporting, password changes including reused passwords, and financial notifications when compromise is suspected. In organizations, users need to know where to report without fear of punishment. If reporting is slow or embarrassing, attackers get more time.

Notice what these controls have in common. They do not attempt to eliminate persuasion from the world. They change the environment so that persuasion alone is insufficient.

How do AI and deepfakes change social‑engineering risk?

| Signal | Old trust value | New AI-driven risk | Defensive shift |
|---|---|---|---|
| Voice | Recognizable voice implied authenticity | Easy voice cloning enables impersonation | Provenance, liveness, out-of-band checks |
| Video | Live appearance suggested legitimacy | Deepfake video can impersonate leaders | Signed provenance, verification workflows |
| Text and messages | Personalized text felt credible | Mass-personalized, highly targeted lures | Multi-channel verification, stricter filters |
| UI and interfaces | Familiar UI felt trustworthy | Polished fake interfaces trick users | On-device verification, readable prompts |

The arrival of better generative tools does not create social engineering, but it changes its economics. Guidance from CISA, FBI, and NSA emphasizes that synthetic media can be used to impersonate leaders and enable access to networks, communications, and sensitive information.

The practical effect is that signals once treated as stronger evidence become easier to counterfeit at scale:

- a convincing voice note

- a live video call

- a personalized message

That shifts defense away from pure content judgment and toward stronger provenance and process. Detection tools can help, but the same guidance notes that detection is a cat-and-mouse problem and may produce false positives. Authentication methods that embed provenance or require real-time identity verification can be stronger, but they are not yet universal. So the near-term implication is straightforward: organizations should assume that apparent realism is becoming less trustworthy.

In crypto, the same pattern appears in more polished fake interfaces, realistic support interactions, and better-crafted governance or signing requests. A malicious proposal, website, or support flow does not need obvious mistakes anymore. The burden therefore moves to mechanisms that bind requests to verifiable context: official domains, signed metadata, on-device verification, typed structured signing, and narrow approvals.

Why can’t systems remove all human trust from security?

It is tempting to ask for a final cure. There is none, because any usable system must leave some role for trust, interpretation, and consent. Humans still hire people, approve payments, reset accounts, join meetings, and sign transactions. If every action were fully automated, some social-engineering paths would shrink, but many systems would become unusable or dangerously rigid.

So the real goal is not to remove humans from security. It is to design systems where human judgment is supported by good context, where errors do not immediately become catastrophes, and where attackers cannot cheaply imitate legitimacy. This is why social engineering sits at the boundary between technical design, interface design, operations, and culture. It is not only about awareness training, and not only about software. It is about how trust is represented and checked.

Conclusion

Social engineering is the practice of using deception to get a person to reveal information, grant access, or approve an action the attacker should not control. Its power comes from a simple fact: secure systems still depend on human decisions.

The most useful way to remember it is this: the attacker is trying to turn trust into capability. Defenses work when they make legitimacy easier to verify, dangerous actions easier to understand, permissions narrower, and mistakes less costly. That is as true for passwords and help desks as it is for wallet signatures and token approvals.

How do you secure your crypto setup before trading?

Social engineering belongs in your security checklist before you trade or transfer funds on Cube Exchange. The practical move is to harden account access, verify destinations carefully, and slow down any approval or withdrawal that could expose you to this risk.

- Secure account access first with strong authentication and offline backup of recovery material where relevant.

- Turn your awareness of social engineering into one concrete check you will make before signing, approving, or withdrawing.

- Verify domains, addresses, counterparties, and approval scope before you confirm any sensitive action.

- For higher-risk or higher-value actions, test small first or pause the workflow until the security check is complete.

Frequently Asked Questions

Awareness training reduces risk but cannot teach people to spot every possible deception, and punitive phishing simulations can erode trust or create legal risk; guidance such as the UK NCSC’s therefore recommends layered technical and process controls rather than relying on training alone.

EIP-712 lets dapps present typed, structured data and domain fields so wallets can show human‑readable meaning and where a signature applies, which helps users judge legitimacy; however the standard does not provide replay protection and its effectiveness depends on wallet UX and wide implementation by clients.

Approval‑phishing in crypto typically tricks a user into granting an on‑chain approval or token allowance (a standing permission) that the attacker later uses to drain funds, rather than stealing a password; because many blockchain actions are irreversible, that granted permission can enable rapid, permanent loss.

Controls that change the game are those that reduce what a single mistaken trust decision can do: independent verification of requesters, multifactor authentication, anti-spoofing (DMARC/SPF/DKIM) and clearer message rendering, constrained permissions and reduced standing approvals, plus fast reporting and recovery procedures to limit damage.

Because social engineering attacks convey deceptive meaning rather than a malformed technical artifact, automated signals (sender address, links, layout) are all probabilistic; detection therefore needs layered defenses - authentication, filters, behavioral anomaly detection, out‑of‑band verification, and restricting high‑risk actions - not a single decisive flag.

Improved generative tools and deepfakes make familiar signals (voices, video, polished messages) cheaper to fake, so organizations should assume apparent realism is less trustworthy and move defenses toward provenance and process controls - signed metadata, on-device verification, and binding requests to verifiable contexts - while recognizing detection will remain cat‑and‑mouse.

WalletConnect’s Verify API and similar signals raise the bar by showing domain verification and threat flags, but they are explicitly described as mitigations not guarantees; wallets should treat Verify results as one input (warning, block, or require extra verification) rather than a single‑point authority for trust decisions.
