What is Client Diversity?

Learn what client diversity is, why blockchains need multiple independent clients, how it reduces correlated failure, and why operators use minority clients.

Introduction

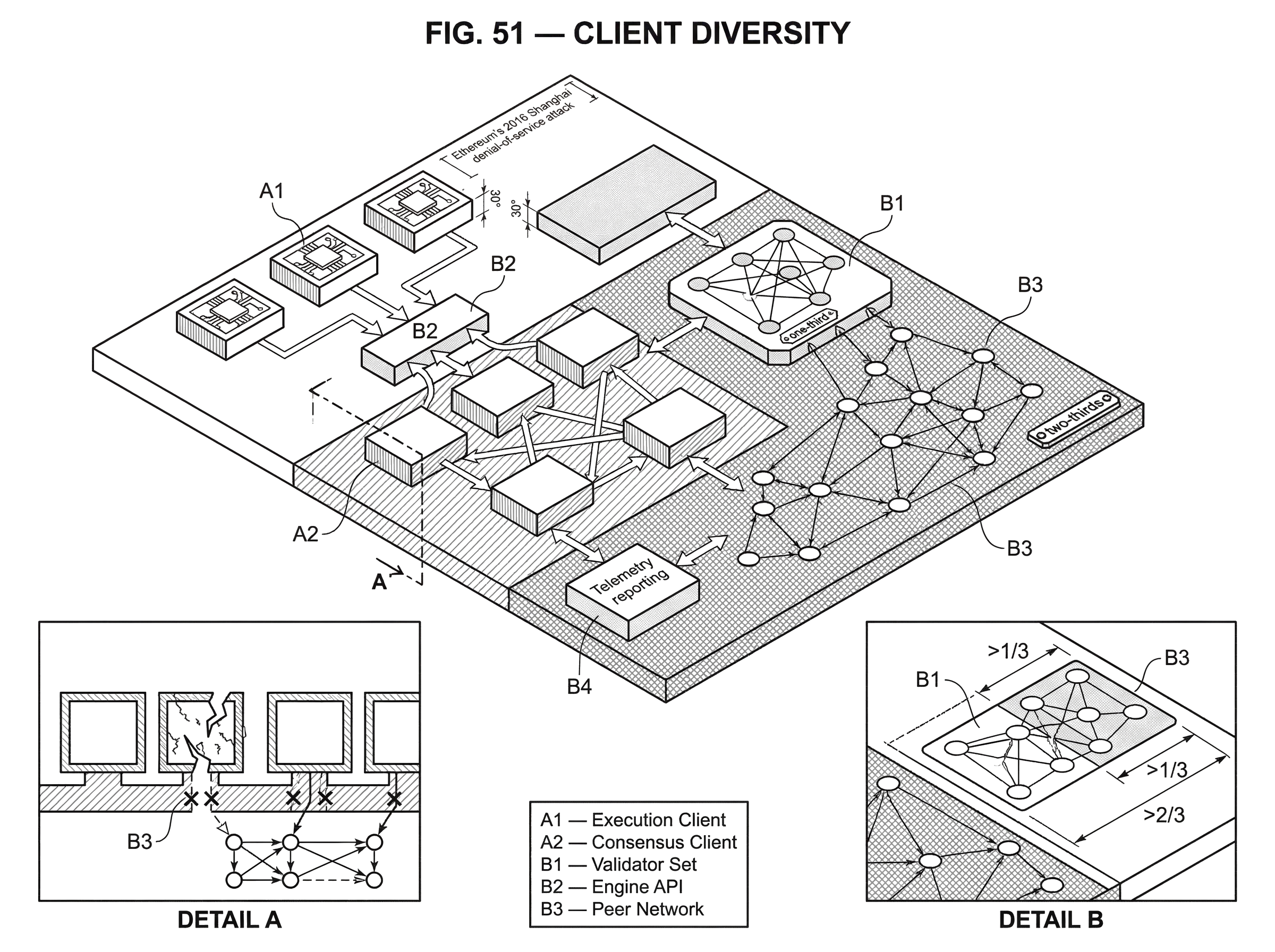

Client diversity is the practice of having a blockchain network run on multiple independently developed node clients rather than one dominant implementation. That may sound like a software distribution preference, but it is really a safety property of the network itself. If too many nodes, validators, or infrastructure providers rely on the same codebase, then one bug can become a network-wide event.

The reason this matters is simple: blockchains are supposed to be decentralized at the level of control, but they can still be dangerously centralized at the level of software. A network can have many operators in many countries and still behave like a monoculture if most of them run the same client. In that case, the independence of the operators does not buy much protection against implementation mistakes, denial-of-service weaknesses, or bad upgrades.

A useful way to think about the problem is this: the protocol is the rulebook, but the client is the machine that interprets and applies the rulebook. If every machine is built differently but all implement the same rules correctly, the network is robust. If nearly everyone uses the same machine, then the protocol may be decentralized in theory while operationally fragile in practice.

That is why ecosystems such as Ethereum put so much emphasis on minority clients, cross-client testing, and measuring client share. The goal is not diversity for its own sake. The goal is to limit correlated failure.

How can multiple independent clients implement the same blockchain protocol?

A client is the software that determines how a node behaves. It validates blocks, checks transactions, communicates with peers, stores state, and follows upgrade rules. In Ethereum after The Merge, an operator typically runs both an execution client and a consensus client, each implementing a different part of the system and communicating through defined APIs.

The key distinction is between the protocol and the implementation. The protocol says what must happen. A client is one team’s attempt to encode those rules in actual software. If the protocol is well specified, different teams can write different clients in different languages and still interoperate on the same network.

This separation is what makes client diversity possible. Ethereum’s proof-of-stake consensus rules, for example, are maintained in public specifications, with reference tests and versioned upgrades. That shared specification gives independent teams a common target. They do not need to copy one codebase; they only need to satisfy the same rules and pass the same tests.
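To make the idea of a shared target concrete, here is a minimal Python sketch of cross-client testing. The three functions stand in for hypothetical independent "clients" that implement the same rule (integer square root). Client A follows the Newton-iteration shape of the `integer_squareroot` helper in Ethereum's consensus specs. The client names and the test vectors are illustrative assumptions, not actual reference tests.

```python
import math


def isqrt_client_a(n: int) -> int:
    """Hypothetical client A: Newton iteration using integers only."""
    x = n
    y = (x + 1) // 2
    while y < x:
        x = y
        y = (x + n // x) // 2
    return x


def isqrt_client_b(n: int) -> int:
    """Hypothetical client B: independent implementation via the standard library."""
    return math.isqrt(n)


def isqrt_client_c(n: int) -> int:
    """Hypothetical client C: a plausible-looking shortcut through floating point."""
    return int(math.sqrt(n))


# Shared test vectors (input, expected output) that every implementation must match.
VECTORS = [(0, 0), (1, 1), (15, 3), (16, 4), (2**64 - 1, 2**32 - 1)]


def failing_vectors(impl):
    """Return (input, expected, actual) for every vector the implementation gets wrong."""
    failures = []
    for n, expected in VECTORS:
        actual = impl(n)
        if actual != expected:
            failures.append((n, expected, actual))
    return failures


for impl in (isqrt_client_a, isqrt_client_b, isqrt_client_c):
    print(impl.__name__, failing_vectors(impl))
```

Client C passes the small cases but fails on `2**64 - 1`. Converting that input to a float rounds it up to `2**64`, so the result comes out as `2**32` instead of `2**32 - 1`. Shared vectors catch this kind of implementation bug before it ever reaches a live network.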

That may seem like duplication of effort. In one sense, it is. But the duplication is deliberate. When several teams independently implement the same rules, they create redundancy against software failure. The network is no longer betting its safety on one parser, one networking stack, one database design, or one release process.

The important phrase here is independently developed. Two binaries derived from the same codebase do not give much protection. Diversity works when implementations differ in meaningful ways: separate teams, separate code, often separate programming languages, and ideally somewhat different operational assumptions. That independence reduces the chance that one mistake, one exploit path, or one misjudged optimization knocks out the same fraction of the network all at once.

Why is running a single dominant client risky for a blockchain?

The core mechanism behind client diversity is not complicated. Software bugs are unavoidable. The question is whether a bug becomes local or systemic.

If a minority client has a bug, the damage is usually bounded by that client’s share of nodes or validators. The affected operators may go offline, fail to import blocks, or behave incorrectly, but the rest of the network can often keep the chain alive and provide a reference point for recovery. If a majority client has the same kind of bug, the bug stops being a client problem and becomes a consensus problem.

This is a classic correlated-failure issue. Operators often think they are independent because they control separate machines or keys. But if they all run the same software version, their failures are correlated. What changes the blast radius is not just how many operators exist, but how many independent failure domains exist.
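The blast-radius point can be made concrete with a small Python sketch. It assumes a simplified model in which a client bug takes every validator running that client offline at once. All client names, operator ids, and stake amounts are hypothetical.

```python
from collections import defaultdict


def worst_single_client_failure(validators):
    """Return (client, share) for the client whose failure would remove the most stake.

    `validators` is a list of (operator_id, client, stake) tuples. The operator
    id is carried along only to show that it does not change the answer.
    """
    stake_by_client = defaultdict(int)
    for _operator_id, client, stake in validators:
        stake_by_client[client] += stake
    total = sum(stake_by_client.values())
    worst = max(stake_by_client, key=stake_by_client.get)
    return worst, stake_by_client[worst] / total


# 1,000 independent operators, all on one client: a single failure domain.
monoculture = [(f"op-{i}", "client-a", 32) for i in range(1000)]

# The same 1,000 operators spread evenly across four clients.
clients = ["client-a", "client-b", "client-c", "client-d"]
diverse = [(f"op-{i}", clients[i % 4], 32) for i in range(1000)]

print(worst_single_client_failure(monoculture))  # ('client-a', 1.0)
print(worst_single_client_failure(diverse))      # ('client-a', 0.25)
```

The operator count is identical in both cases. Only the number of independent software failure domains changes, and that is what shrinks the worst case from the whole network to a quarter of it.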

An analogy helps here. Imagine a city where every building is owned by a different person, but all of them were constructed from the same flawed blueprint. Ownership is decentralized, yet structural risk is concentrated. Client monoculture works the same way. The analogy shows why operator count alone is not enough. Where it breaks down is that software can often be patched faster than buildings can be rebuilt. Still, the underlying point holds: common design creates common failure.

This is why Ethereum guidance argues that usage should be spread across multiple production-grade clients, rather than clustering around a favorite one. The exact "ideal" distribution is partly a judgment call, but the principle is stable: no single client should become so large that its failure can dominate the network.

At what client-share thresholds are finality and safety at risk?

| Validator share | Primary risk | Practical effect | Operator action |
|---|---|---|---|
| Under one-third | Low systemic risk | Finality intact | Monitor client share |
| Above ≈ one-third | Finality risk | Can prevent finalization | Reduce reliance on that client |
| ≈ two-thirds or more | Consensus integrity | May finalize wrong chain | Emergency coordination required |
| Execution dominance | State/transaction risk | Incorrect transaction processing | Diversify execution clients |

Client concentration is not equally dangerous at every level. Some thresholds matter more than others because of how the consensus system works.

On Ethereum’s consensus layer, a client with more than roughly one-third of validating weight represents a serious risk because faults above that level can interfere with finality. Finality is the property that the network has irreversibly agreed on the chain’s history. If too much of the validator set is stuck, partitioned, or behaving incorrectly due to one client bug, the chain may keep producing blocks but fail to finalize them. That is not just a performance issue. It undermines the confidence that recent history is settled.

The next threshold is even more severe. If something like two-thirds of validators share a client bug that causes them to agree on the wrong state transition, the network can finalize an incorrect chain or split into conflicting views. At that point the problem is no longer “some validators are down.” It becomes “the majority may have applied the protocol incorrectly.” Recovery can be operationally painful and economically destructive, especially if validators are exposed to slashing or become trapped on incompatible branches.

These thresholds explain why people often talk about keeping any single client below about one-third share. That number is not magic in the abstract; it comes from the underlying fault-tolerance structure of the protocol.
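Here is a minimal Python sketch of this threshold logic. It assumes the Casper FFG rule that a checkpoint is justified only with votes from at least two-thirds of total active stake, and it ignores inactivity leaks, slashing, and partial failures. It uses exact fractions so that the boundary at exactly one-third is handled precisely. The function name and output labels are illustrative.

```python
from fractions import Fraction

ONE_THIRD = Fraction(1, 3)
TWO_THIRDS = Fraction(2, 3)


def worst_case_if_client_fails(share):
    """Classify the worst case when a client holding `share` of stake has a bug.

    Simplified model: finality needs votes from at least two-thirds of total
    active stake; inactivity leaks, slashing, and partial failures are ignored.
    """
    share = Fraction(share)
    if share >= TWO_THIRDS:
        # The buggy client alone can reach the two-thirds supermajority.
        return "may finalize an incorrect chain"
    if share > ONE_THIRD:
        # The remaining clients hold less than two-thirds and cannot finalize.
        return "finality stalls"
    # The remaining clients still hold at least two-thirds of the stake.
    return "finality continues"


for s in (Fraction(1, 4), Fraction(1, 3), Fraction(2, 5), Fraction(7, 10)):
    print(s, worst_case_if_client_fails(s))
```

In this simplified model, exactly one-third is still survivable, because the other two-thirds can finalize on their own. That is why the guidance is framed as keeping any single client below about one-third rather than at it.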

Execution clients matter too, even though their risk sometimes feels less intuitive. Execution software interprets transactions, maintains state, and produces the payloads that the consensus layer ultimately agrees on. If one execution client dominates and misprocesses transactions or state transitions, that error can propagate upward into block production and chain health. Ethereum guidance has explicitly warned that heavy concentration in a single execution client is problematic for the same reason as consensus concentration: too much of the network depends on one implementation being right.

How did client diversity limit the impact of past network incidents?

The value of client diversity becomes easiest to see during an incident.

Ethereum’s 2016 Shanghai denial-of-service attack is a useful example. Attackers exploited a weakness in the dominant client, Geth, by causing it to perform a slow disk I/O operation many times per block. Because Geth had such a large share, the attack put enormous stress on the network. But the entire chain did not fail in the same way, because alternative clients were online and did not share the same vulnerability.

What mattered was not that the network had a backup chain somewhere. What mattered was that live nodes implementing the same protocol through different code paths continued to function. They provided continuity, alternative views of valid chain progress, and a basis for diagnosis. In other words, diversity turned what could have been a total monoculture failure into a more survivable event.

This is the pattern to remember. A client-specific bug can still be serious. Operators may still suffer downtime, missed rewards, or emergency migration. But with enough diversity, the network retains a healthy fraction of nodes that can keep validating, networking, and coordinating recovery. Diversity does not eliminate incidents; it changes their shape.

The same logic applies beyond denial-of-service attacks. A parsing bug, a fork-choice bug, a database corruption issue, a peer-discovery failure, or a bad release can all remain contained if they are not shared across most of the network. What diversity buys you is not perfection. It buys you room to recover.

How is client diversity built and encouraged in real networks?

| Pillar | What it is | Why it helps | Operator step |
|---|---|---|---|
| Specification | Public, precise protocol spec | Enables independent interoperability | Use reference tests |
| Independent implementations | Separate teams and codebases | Reduces shared bug vectors | Encourage minority clients |
| Operator adoption | Actual nodes running clients | Makes diversity network-effective | Migrate carefully (protect slashing history) |

A network does not get client diversity automatically just because its protocol is open source. It needs three things to line up.

First, there must be a public and reasonably precise specification of the protocol. If the rules are vague, then independent implementations will drift for accidental reasons rather than deliberate independence. Ethereum’s consensus specifications and reference tests exist precisely to reduce that risk. They give client teams a shared target and a way to check whether different implementations behave the same under known conditions.

Second, there must be real implementation independence. In practice this means separate teams, separate repositories, different engineering cultures, and often different programming languages. Language diversity is not a complete solution, but it matters because classes of bugs and performance tradeoffs often differ by ecosystem. When one client is written in Go, another in Rust, another in Java, and another in Nim, the chance of identical implementation failure drops compared with a single-language monoculture.

Third, operators actually have to use those clients. This is the part people often skip. It is possible for an ecosystem to have many excellent clients on paper while still being fragile because one of them dominates real deployment. Diversity only exists at the network level when usage is distributed, not merely when alternatives exist in a GitHub organization.

That is why operator guidance often focuses on switching to minority clients. From the perspective of resilience, the most valuable new node is usually not “another node on the majority client.” It is a node that increases the share of an underrepresented, production-grade implementation.
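The "most valuable new node" rule of thumb can be sketched in a few lines of Python. This sketch assumes the operator already has node counts from some telemetry source and a personal list of clients they consider production-grade. The counts and client names are hypothetical. `client-x` is left off the list to reflect that an obscure client is not automatically a good choice.

```python
def pick_client_for_new_node(node_counts, production_grade):
    """Return the production-grade client with the fewest nodes today.

    `node_counts` maps client name -> observed node count; `production_grade`
    lists the clients the operator considers mature enough to run.
    """
    return min(production_grade, key=lambda client: node_counts.get(client, 0))


def largest_share(node_counts):
    """Fraction of all nodes held by the biggest client."""
    return max(node_counts.values()) / sum(node_counts.values())


counts = {"client-a": 60, "client-b": 25, "client-c": 14, "client-x": 1}
choice = pick_client_for_new_node(counts, ["client-a", "client-b", "client-c"])
print(choice)  # client-c

# Ten new nodes on the chosen client dilute the dominant client's share.
after = dict(counts, **{choice: counts[choice] + 10})
print(round(largest_share(counts), 3), round(largest_share(after), 3))  # 0.6 0.545
```

The same ten nodes added to `client-a` would instead push its share up to 70/110, about 0.64.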

How do teams measure client market share and what are the limitations?

| Method | Data source | Strength | Limitation |
|---|---|---|---|
| Node telemetry | ethernodes.org, peer scans | Direct node counts | Anti-bot and visibility gaps |
| Beacon fingerprinting | Attestation timing, Blockprint | Classifies consensus clients | Degrades with client modes |
| Engine API / graffiti | ClientVersion in graffiti | Improves EL visibility | Hidden by mev-boost or opt-out |
| Self-reported dashboards | Staking pool reports | Stake-weighted insight | Incomplete and unverifiable |

If client diversity is so important, it seems like it should be easy to measure. In practice, measurement is surprisingly messy.

For execution nodes, telemetry services can sometimes infer client type from peer behavior, network fingerprints, or self-reporting, and sites such as ethernodes.org are commonly used to estimate distribution. But these numbers are snapshots, methods vary, and anti-bot restrictions or incomplete visibility can make independent verification difficult.

On the consensus side, the problem is even trickier. Some tools infer client identity from beacon-chain behavior, such as attestation timing and other observable patterns. Blockprint is a well-known example. But this is classification, not direct revelation. The accuracy of these systems depends on the model, the training data, and operational details such as whether clients are running in unusual modes. Research evaluating Blockprint found that classifier performance can degrade when clients operate under different subnet subscription behaviors, which means measurement itself has error bars.
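A small Python sketch shows why classifier error matters. It models a classifier as a confusion matrix, where each row gives the probability that blocks from one client are labeled as each client, and computes the shares a dashboard would then report. The numbers are invented for illustration and are not measured Blockprint error rates.

```python
def observed_shares(true_shares, confusion):
    """Expected client shares reported by an imperfect classifier.

    true_shares: {client: fraction of blocks actually produced by that client}
    confusion:   {actual_client: {predicted_client: probability}}, rows summing to 1
    """
    observed = {}
    for actual, share in true_shares.items():
        for predicted, prob in confusion[actual].items():
            observed[predicted] = observed.get(predicted, 0.0) + share * prob
    return observed


# Hypothetical numbers: client-a truly sits below one-third, but the classifier
# mislabels 10% of client-b's blocks as client-a.
true_shares = {"client-a": 0.30, "client-b": 0.70}
confusion = {
    "client-a": {"client-a": 1.0},
    "client-b": {"client-b": 0.9, "client-a": 0.1},
}
print(observed_shares(true_shares, confusion))
```

Here the dashboard would report `client-a` at about 0.37, above the one-third line, even though its true share is 0.30. Modest misclassification can move a client across the threshold that matters most in either direction.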

This matters because governance by dashboard can be misleading if the dashboard is uncertain. A reported minority client might actually be somewhat larger or smaller than estimated. Ethereum documentation explicitly notes that consensus-client market share can be hard to measure and that classification algorithms may confuse minority clients.

There is also a deeper problem: many validators do not reveal their execution client on-chain. After The Merge, execution and consensus clients communicate through the Engine API, but block proposals often do not carry an obvious fingerprint of the execution client, especially when validators use mev-boost. That weakens visibility into execution-layer diversity right where the network most wants confidence.

In response, the ecosystem has worked on better reporting hooks. A recent Engine API change added a standardized way for clients to expose version information so consensus clients can embed client identification into the block graffiti field by default. This does not create diversity by itself, and the data is still self-reported rather than cryptographically guaranteed, but it improves measurability. That is valuable because you cannot manage a concentration risk you cannot see.
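As we understand the execution-apis specification, the Engine API method added for this is `engine_getClientVersionV1`. The consensus client sends its own identity (a two-letter client code, name, version, and 4-byte commit) and receives the execution client's identity back. The Python sketch below builds that request and then encodes both identities into the 32-byte graffiti field. The 12-character graffiti layout used here is an assumption for illustration. Version strings and commit hashes are placeholders, not real release data.

```python
import json


def client_version_request(cl_version, request_id=1):
    """JSON-RPC body for the Engine API client-identification call.

    The consensus client sends its own ClientVersionV1-style object; the
    execution client is expected to reply with a list describing itself.
    """
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "engine_getClientVersionV1",
        "params": [cl_version],
    })


def build_graffiti(el_version, cl_version, user_text=""):
    """Assumed layout: EL code + 4 hex chars of EL commit + CL code + 4 hex chars of CL commit."""
    def tag(v):
        return v["code"] + v["commit"].removeprefix("0x")[:4]

    # ASCII-only user text keeps the byte length equal to the character length.
    raw = (tag(el_version) + tag(cl_version) + user_text).encode("ascii")
    return raw[:32].ljust(32, b"\x00")  # graffiti is a fixed 32-byte field


def parse_graffiti_prefix(graffiti):
    """Read back the 12-character identification prefix (same assumed layout)."""
    text = graffiti[:12].decode("ascii")
    return {"el_code": text[0:2], "el_commit": text[2:6],
            "cl_code": text[6:8], "cl_commit": text[8:12]}


# Placeholder versions and commits, not real release data.
el = {"code": "GE", "name": "Geth", "version": "x.y.z", "commit": "0xfa4ff922"}
cl = {"code": "LH", "name": "Lighthouse", "version": "x.y.z", "commit": "0x3058b96f"}

print(json.loads(client_version_request(cl))["method"])
graffiti = build_graffiti(el, cl, "hello")
print(parse_graffiti_prefix(graffiti))
```

Anything parsed this way is still self-reported. A validator can overwrite or omit the prefix, so this data improves visibility without proving which software actually built a block.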

Why doesn't software diversity alone eliminate correlated infrastructure risk?

There is an easy misunderstanding here: if several client names appear on a dashboard, the network must be safe. Not necessarily.

The point of client diversity is to reduce correlated failure. Client implementation is one source of correlation, but not the only one. If many validators run different clients while all depending on the same cloud provider, the same staking operator, the same relay setup, or the same key-management path, then significant correlated risk remains.

Ethereum’s security analysis makes this explicit. Homogeneity in client choice and infrastructure setup increases correlated-failure risk, and the effect is amplified when validator stake is concentrated in a few large staking pools, custodians, or operators. In other words, software diversity can be undermined by operational centralization.

Here the mechanism is straightforward. Suppose two clients are genuinely independent, but 40% of stake using them is hosted in the same cloud region or controlled by the same large provider with identical deployment automation. A cloud outage, bad rollout, or operator misconfiguration can still produce synchronized failures. The chain does not care whether those failures came from the same binary or the same hosting dependency; what matters is how much validating weight disappears or misbehaves together.
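The same worst-case reasoning can be extended from clients to every shared dependency. This Python sketch assumes each validator is tagged with its client, hosting location, and operator, and it finds the single dependency whose failure removes the most stake. All names and stake values are hypothetical.

```python
from collections import defaultdict


def worst_failure_domain(validators, dimensions=("client", "cloud", "operator")):
    """Find the single shared dependency whose failure removes the most stake.

    Each validator is a dict with a "stake" key plus one key per dimension.
    Returns (dimension, value, fraction_of_total_stake).
    """
    total = sum(v["stake"] for v in validators)
    worst = (None, None, 0.0)
    for dim in dimensions:
        stake_by_value = defaultdict(int)
        for v in validators:
            stake_by_value[v[dim]] += v["stake"]
        for value, stake in stake_by_value.items():
            if stake / total > worst[2]:
                worst = (dim, value, stake / total)
    return worst


# Hypothetical stake: no client exceeds 30%, but four validators share one cloud region.
clients = ["client-a"] * 3 + ["client-b"] * 3 + ["client-c"] * 2 + ["client-d"] * 2
clouds = ["cloud-x", "cloud-y", "cloud-x", "cloud-y", "cloud-x",
          "cloud-z", "cloud-x", "cloud-z", "self-hosted", "self-hosted"]
validators = [
    {"client": c, "cloud": h, "operator": f"op-{i}", "stake": 32}
    for i, (c, h) in enumerate(zip(clients, clouds))
]
print(worst_failure_domain(validators))  # ('cloud', 'cloud-x', 0.4)
```

Judged by client share alone, this set looks healthy, since the largest client holds 30%. Counting hosting as well, 40% of stake depends on one cloud region, which is above the one-third line.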

So client diversity should be understood as part of a larger resilience strategy. It works best when paired with diversity in operators, geography, hosting, networking, and operational processes. The precise mix depends on the chain, but the principle is the same across architectures: reduce common-mode failure.

How should validators and node operators switch clients safely?

For node operators and validators, client diversity is not mainly a theory question. It shows up as an operational choice: which software stack do I run, and how do I switch safely if the network is too concentrated?

In Ethereum, that choice usually involves selecting both an execution client and a consensus client. Official guidance explicitly recommends choosing combinations that improve diversity, not just defaulting to the most popular stack. The exact recommended client list changes over time, but the direction is clear: if you are on an overrepresented client and a stable minority alternative exists, moving helps the network.

The hard part is that switching clients is not costless. Operators worry, often reasonably, about downtime, unfamiliar tooling, monitoring differences, performance characteristics, and upgrade cadence. Validators have an additional concern: slashing protection. If you move between consensus clients incorrectly and lose signing history, you can expose yourself to double-signing risks. That is why client teams document migration procedures and import/export of slashing-protection databases.

A realistic migration story looks something like this. A validator operator notices that their current client has become too dominant. They decide to move to a minority client, but before touching the live setup they verify backups and prepare the new client with compatible network and authentication settings. Only then do they stop the old validator process and confirm it is no longer signing. Next they export its slashing-protection history, so that the export covers every message the old client signed, and import that history into the new environment. Finally they confirm the connection to the execution layer and bring the validator back online while watching status carefully. Each step exists for a reason: the goal is to change the failure domain without creating a new failure through sloppy operations.
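On Ethereum, the slashing-protection history moved between consensus clients is commonly exchanged in the EIP-3076 interchange format. This is a JSON file that lists previously signed blocks and attestations for each validator key. The Python sketch below shows what an importing client might do with that file. It assumes format version `"5"` and a conservative "low watermark" policy that refuses anything at or below the highest imported slot or target epoch. The function names are hypothetical, and this is not any particular client's code.

```python
import json


def load_slashing_history(text, expected_genesis_root):
    """Summarize an EIP-3076 interchange file (format version "5") per validator key."""
    doc = json.loads(text)
    meta = doc["metadata"]
    if meta["interchange_format_version"] != "5":
        raise ValueError("unsupported interchange format version")
    if meta["genesis_validators_root"].lower() != expected_genesis_root.lower():
        raise ValueError("history belongs to a different network")
    history = {}
    for record in doc["data"]:
        blocks = record.get("signed_blocks", [])
        atts = record.get("signed_attestations", [])
        # EIP-3076 encodes slots and epochs as decimal strings.
        history[record["pubkey"].lower()] = {
            "max_block_slot": max((int(b["slot"]) for b in blocks), default=-1),
            "max_source_epoch": max((int(a["source_epoch"]) for a in atts), default=-1),
            "max_target_epoch": max((int(a["target_epoch"]) for a in atts), default=-1),
        }
    return history


def may_sign_block(history, pubkey, slot):
    """Low-watermark rule: only sign blocks strictly above the highest imported slot."""
    h = history.get(pubkey.lower())
    if h is None:
        return False  # deliberately strict: refuse keys the import did not cover
    return slot > h["max_block_slot"]


def may_sign_attestation(history, pubkey, source_epoch, target_epoch):
    """Low-watermark rule for attestations: source may repeat, target must advance."""
    h = history.get(pubkey.lower())
    if h is None:
        return False
    return source_epoch >= h["max_source_epoch"] and target_epoch > h["max_target_epoch"]


# Placeholder network root and validator key.
root = "0x" + "11" * 32
key = "0x" + "aa" * 48
exported = json.dumps({
    "metadata": {"interchange_format_version": "5", "genesis_validators_root": root},
    "data": [{
        "pubkey": key,
        "signed_blocks": [{"slot": "81952"}],
        "signed_attestations": [{"source_epoch": "2290", "target_epoch": "3007"}],
    }],
})
history = load_slashing_history(exported, root)
print(may_sign_block(history, key, 81952))            # False: already signed at this slot
print(may_sign_attestation(history, key, 2290, 3008))  # True
```

A newly started client only knows what was imported, so this check is only as good as the exported file. Any message the old client signed after the file was written would be missing from it.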

This is a good place to separate principle from convention. The principle is that minority-client adoption improves resilience. The convention is how a given ecosystem organizes that migration: which tools exist, how telemetry works, what import format is used, and how teams recommend sequencing upgrades. Those details vary, but the underlying logic does not.

Is client diversity important for chains other than Ethereum?

Ethereum is the clearest example because it has multiple production clients on both execution and consensus layers, well-developed public discussion about market share, and explicit thresholds tied to proof-of-stake safety. But the underlying concept is broader.

Any blockchain or distributed system that relies on replicated software faces the same basic tradeoff. A single dominant implementation is easier to coordinate around in the short term. Documentation is simpler, tooling converges, and bugs may be fixed quickly because attention is concentrated. But that convenience comes at the cost of systemic fragility. The network becomes dependent on the correctness and operational discipline of one codebase.

Other ecosystems illustrate the opposite side of the tradeoff. In some chains, the canonical client is so dominant that “the protocol” and “the implementation” are almost treated as the same thing. That can work operationally for a while, especially when the codebase is mature and upgrades are tightly coordinated. But from a resilience standpoint, it means the chain has less protection against implementation-specific bugs than a genuinely multi-client network would.

So client diversity is best understood as a general infrastructure principle, not a chain-specific branding choice. Whenever independent nodes are supposed to agree on shared state, diversity of implementation can reduce the chance that one software failure becomes a network failure.

What are the limits of client diversity and how can it fail?

Client diversity is powerful, but it is not a magic shield.

The first limitation is that all clients can still share the same misunderstanding if the specification is wrong, ambiguous, or interpreted the same flawed way by multiple teams. Independent implementations defend best against implementation bugs, not against every possible specification-level mistake.

The second limitation is that minority clients are not automatically safer than dominant ones. A small client can have less battle-tested code, fewer operators, or weaker operational support. The goal is not to maximize the number of obscure clients. The goal is to spread usage across production-grade clients that are independently maintained and actively tested.

The third limitation is that recovery from catastrophic client failure can still be socially messy. Ethereum security analysis notes that in extreme scenarios, the community might need off-chain coordination such as social slashing or exceptional recovery measures, and the norms and tooling for that are underdeveloped. Diversity reduces the probability that such measures are needed; it does not fully solve what happens if they are.

There is also a live research question around incentives. Today, client diversity is driven mostly by operator choice, ecosystem norms, and public dashboards. That may not be enough if convenience keeps pulling people toward the largest client. Researchers have started exploring mechanisms that could provably identify minority-client use and reward it economically, including systems using verifiable computation and on-chain reward schemes. Those proposals are interesting, but they are not yet the standard operating model. For now, diversity remains more of a coordination problem than a protocol-enforced one.

Conclusion

Client diversity is the idea that a blockchain should rely on multiple independent implementations of the same protocol, not one dominant codebase. Its value comes from a simple mechanism: when failures are not correlated, bugs and attacks have a smaller blast radius.

That is why client market share matters, why minority clients matter, why specifications and reference tests matter, and why operators are urged to switch when one client becomes too large. A decentralized network is not truly robust if most of it runs the same software.

The short version worth remembering tomorrow is this: decentralization of operators is not enough if their software is centralized. Client diversity is how a network turns that insight into engineering practice.

How does client and infrastructure diversity affect my ability to use a network?

Client and infrastructure concentration affects real-world usage because it raises the chance of downtime, finality delays, or unexpected chain behavior that can block trades or withdrawals. Before funding or trading an asset, check client and operator diversity; on Cube Exchange you can research network health signals and then fund your account to execute trades or transfers.

- Visit client-share and telemetry pages (for example ethernodes.org for execution clients and Blockprint-style classifiers for consensus clients) and note any client with a share above roughly one-third (about 33%).

- Review recent incident reports and client release notes on official client repos or community posts to see if that client had recent bugs or DoS events.

- Check operator and infra concentration: list the top staking pools or validators for the chain, inspect their hosting/cloud footprint, and verify whether major validators use the same relays or RPC providers.

- Fund your Cube account via the fiat on-ramp or by transferring a supported crypto asset to your Cube deposit address.

- Open the market or transfer flow on Cube. Use a limit order for price control or a market order for immediate execution, submit the trade or withdrawal, and monitor on-chain confirmations before moving funds off the exchange.

Frequently Asked Questions

On Ethereum’s consensus layer, a single client controlling roughly one-third of validating weight is risky because faults above that level can interfere with finality; if around two-thirds of validators share a bug the network can finalize an incorrect chain or split into incompatible views. These thresholds come from the protocol’s underlying fault-tolerance structure and are discussed explicitly in the article’s section on thresholds.

Operators typically verify backups and set up the new client with compatible Engine API and network settings. They then stop the old validator process, export its slashing-protection data, and import that data into the new environment. Only then do they bring the new validator online, closely monitoring status to avoid double-signing or downtime. The article emphasizes that each step exists to change the failure domain without creating a new failure through sloppy operations.

Measuring client share relies on imperfect signals: telemetry and explorer snapshots for execution clients (e.g., ethernodes.org) and classifier techniques for consensus clients (e.g., Blockprint). These methods have error bars, because classifiers can be confused by different client modes and many validators don't expose obvious fingerprints. As the article notes, this makes dashboards useful but uncertain. Research evaluating Blockprint and the Engine API client-version change illustrate both the techniques and their limitations.

No: merely having different client names is not sufficient; diversity only reduces correlated failure when implementations are independently developed (different teams/repos/languages) and when operators’ deployments are also independent, because shared dependencies like the same cloud provider, staking pool, or deployment automation can recreate correlated risk. The article stresses that two binaries derived from the same codebase or clients all hosted the same way do not provide the intended protection.

Client diversity primarily defends against implementation bugs; it does not reliably protect against specification-level mistakes because multiple independent implementations can still interpret the same flawed or ambiguous spec the same way. The article explicitly warns that independent clients defend best against implementation-level failures, not against every possible specification error.

There are no widely adopted protocol-level incentives yet. As the article notes, diversity today is driven by operator choice and norms. Research prototypes have proposed economic rewards for minority-client use based on verifiable computation or zk/TEE-based proofs, but these mechanisms are not standard practice, and that research leaves calibration and generalizability questions unresolved.

Execution clients matter because they interpret transactions, maintain state, and produce block payloads; a dominant execution client that misprocesses transactions can propagate incorrect state up into the consensus layer, so concentration among execution clients creates similar systemic risk as concentration among consensus clients. The article calls out execution-client concentration as explicitly problematic for the same reasons as consensus concentration.

Operators should also diversify hosting, geography, staking operators and relays, and key-management paths, because software diversity can be undermined by infrastructure or operator centralization. For example, many validators on different clients but in the same cloud region or the same staking pool still create correlated failure risk. The article highlights these non-software common-mode failure sources as important caveats to the benefits of client diversity.
