What is SHA-256?

Learn what SHA-256 is, how it works internally, why it matters in cryptography and blockchains, and where its assumptions and limits matter.

Introduction

SHA-256 is a cryptographic hash function: it takes an input of almost any practical length and deterministically turns it into a fixed 256-bit output. That sounds modest, but it solves a deep problem. Computers are good at copying and moving data, yet many security systems need a short value that stands in for a much larger message: a fingerprint you can recompute, compare, commit to, and build protocols around. SHA-256 is one of the standard tools for doing that.

The reason hash functions matter is that security systems rarely protect raw data directly. A blockchain header commits to transactions through hashes. A digital signature scheme often signs a hash of a message rather than the whole message. A key-derivation or message-authentication construction may use hashing internally. In all of those settings, the hash is useful only if it behaves in a very particular way: easy to compute forward, hard to reverse, and hard to manipulate into accidental or malicious collisions.

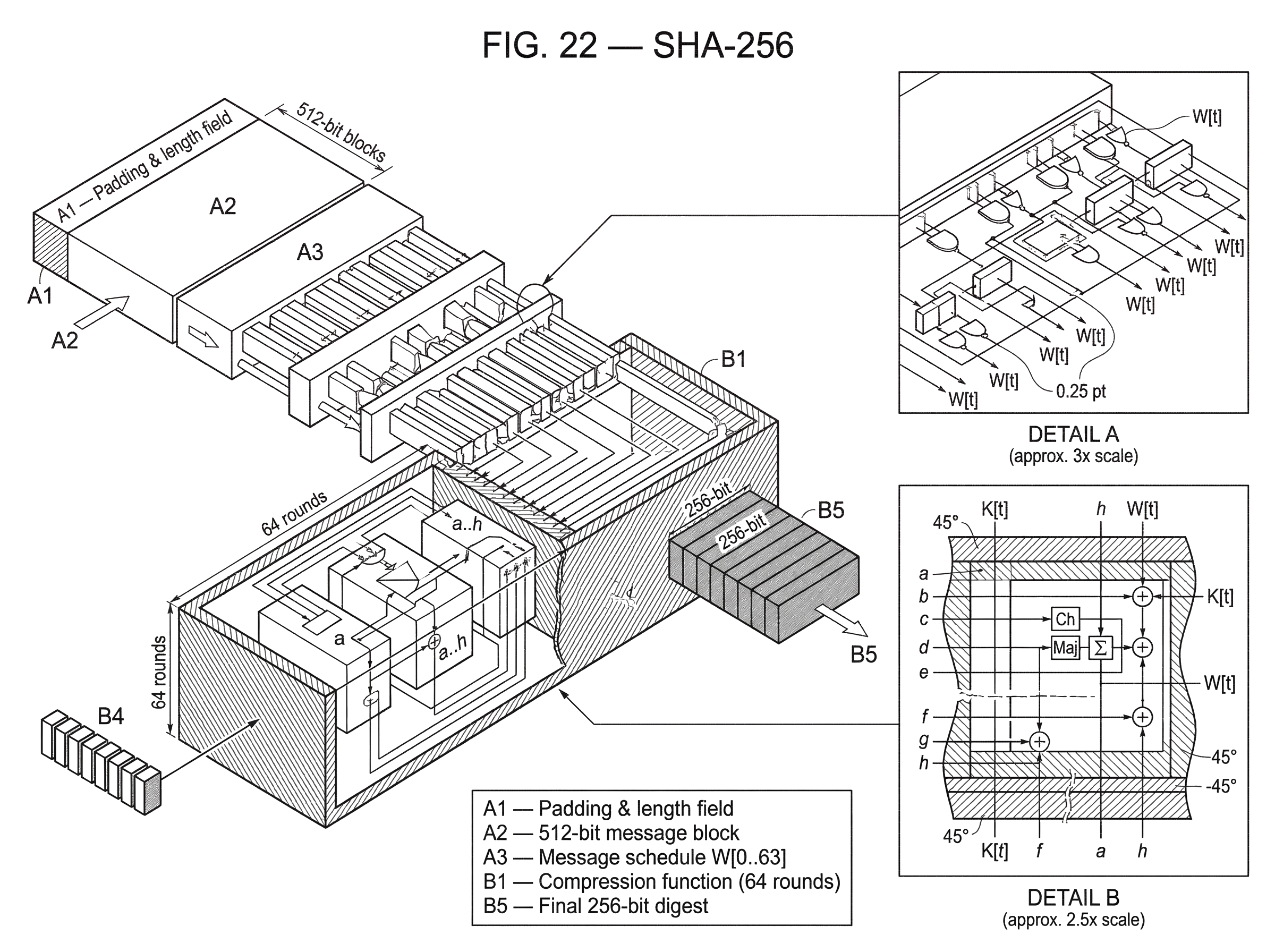

SHA-256 is part of the SHA-2 family standardized by NIST in the Secure Hash Standard. The standard defines SHA-256 as producing a 256-bit message digest, operating on 512-bit blocks, and using 32-bit words internally. Those numbers are not cosmetic. They shape how the algorithm breaks a message apart, mixes it, and accumulates a final digest.

If you only remember one idea, remember this: SHA-256 is a machine for repeatedly compressing structured input into a fixed-size state, in a way designed to destroy visible patterns while preserving determinism. The same input always gives the same digest. But finding a different input with the same digest, or recovering the input from the digest, is intended to be computationally infeasible.
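As a quick illustration of the "same input, same digest" idea, here is a minimal sketch using Python's standard `hashlib` module. The helper name `sha256_hex` is only an illustrative choice, not something the standard defines.

```python
import hashlib

def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as 64 hexadecimal characters."""
    return hashlib.sha256(data).hexdigest()

digest = sha256_hex(b"abc")
print(digest)           # ba7816bf8f01cfea414140de5dae2223b00361a396177a9cb410ff61f20015ad
print(len(digest) * 4)  # 256: the digest size in bits, whatever the input length
```

Hashing the same bytes again always prints the same value, while a one-megabyte input still yields exactly 64 hex characters.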

Why do cryptographic systems use hash functions?

The puzzle a hash function solves is a mismatch of sizes. Real messages vary in length: a password, a software release, a block header, an archive, a stream of transactions. Security checks, by contrast, want compact values with a fixed size. A verifier wants to compare 32 bytes, not an entire gigabyte file. A block header wants to commit to complex data with a single field. A signer wants a bounded input size for the expensive public-key operation.

A normal checksum is not enough. Checksums are designed mostly to catch random errors like transmission noise. An attacker can often manipulate them. A cryptographic hash tries to give you something stronger: a short digest that changes drastically when the input changes, and that resists deliberate attempts to game the mapping.

That is why standards documents describe secure hash algorithms in terms of two hardness goals: for a given digest, it should be computationally infeasible to find a message that hashes to it, and it should be computationally infeasible to find two different messages with the same digest. The first property is usually called preimage resistance. The second is collision resistance. There is also a closely related property, often crucial in practice: given one message, it should be hard to find a different message with the same digest. That is second-preimage resistance.

These properties are not magic absolutes. A hash function maps a huge input space into a fixed 256-bit output space, so collisions must exist in principle. The claim is not “collisions are impossible.” The claim is that finding one should be so expensive that protocols can rely on the hash as a practical commitment.

How is SHA‑256 structured (state, blocks, and iteration)?

At a high level, SHA-256 follows a common design pattern in hash functions: break the message into fixed-size blocks, process each block in sequence, and carry forward an internal state. The final state becomes the digest.

For SHA-256, that internal state consists of eight 32-bit words. You can think of it as an eight-register running summary of everything processed so far. The message is handled in 512-bit blocks. Each block does not directly replace the state; instead, it is mixed into the current state through a compression procedure, and the result becomes the next state.

This “state plus block goes to new state” pattern matters more than any individual constant. It means SHA-256 is iterative. The digest of a long message is built by repeatedly applying the same core machinery. That is what lets it handle messages of any length up to the standard’s bound: fewer than 2^64 bits for SHA-256.

But there is an immediate issue. If messages can have arbitrary length, where do the blocks end? How do you tell apart messages that happen to align differently? The answer is preprocessing.

How does SHA‑256 padding work and why does it matter?

Before hashing starts, SHA-256 pads the message in a very specific way. The standard rule is: append a single 1 bit, then append enough 0 bits so that the message is 64 bits short of a multiple of 512, and then append a 64-bit big-endian encoding of the original message length in bits.

This may seem like a technical detail, but it does important work. First, it makes every padded message length a multiple of 512, so the algorithm can parse the message cleanly into blocks. Second, the final 64-bit length field makes the encoding of messages unambiguous. Without a length encoding, different underlying messages could interact badly with the padding boundary.

A concrete example makes this easier to see. Suppose the input is the three-byte ASCII string abc. That is 24 bits long. SHA-256 first appends a 1 bit, then 423 zero bits to leave room for the final 64-bit length field, and finally appends the 64-bit representation of 24. The result is exactly one 512-bit block (24 + 1 + 423 + 64 = 512). A much longer message might require many blocks, but the rule is the same: every message becomes a sequence of complete 512-bit chunks with its original length encoded at the end.
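The padding rule can be sketched in a few lines of Python. This sketch assumes byte-oriented input (the standard itself is defined over bits), and `sha256_pad` is a hypothetical helper name used only for illustration.

```python
def sha256_pad(message: bytes) -> bytes:
    """Pad a byte string the way SHA-256 does before block processing."""
    bit_length = len(message) * 8
    # 0x80 is the single 1 bit followed by seven 0 bits.
    padded = message + b"\x80"
    # Add zero bytes until the length is 56 mod 64 bytes (448 mod 512 bits).
    padded += b"\x00" * ((56 - len(padded) % 64) % 64)
    # Finish with the original length in bits as a 64-bit big-endian integer.
    padded += bit_length.to_bytes(8, "big")
    return padded

block = sha256_pad(b"abc")
print(len(block) * 8)                     # 512: exactly one block
print(int.from_bytes(block[-8:], "big"))  # 24: the original length in bits
```

A 56-byte message is a useful edge case: the 1 bit no longer leaves room for the length field, so the padded result spills into a second block.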

This is one place where a smart reader can get misled. Padding is not just a formatting trick for software convenience. In Merkle–Damgård-style hash constructions like SHA-256, the exact padding rule is part of the cryptographic definition. Change it, and you have changed the function.

How does SHA‑256 process a 512‑bit block across 64 rounds?

Once a 512-bit block is ready, SHA-256 parses it into sixteen 32-bit words. Those are not enough by themselves for the full mixing process, so the algorithm expands them into a message schedule of sixty-four 32-bit words, usually written as W[0] through W[63].

The first sixteen schedule words come directly from the block. The remaining forty-eight are generated from earlier schedule entries using rotations, shifts, XOR, and addition modulo 2^32. This expansion step matters because it spreads local structure across a wider temporal window. Instead of each original word affecting only an isolated part of the computation, the schedule causes information from the block to keep reappearing later in transformed form.
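The expansion can be sketched as follows. The rotation and shift amounts are those given for σ0 and σ1 in the Secure Hash Standard; the function names are illustrative choices, and the sample block is the padded abc message.

```python
MASK = 0xFFFFFFFF  # keep every value within 32 bits

def rotr(x: int, n: int) -> int:
    """Rotate a 32-bit word right by n bits."""
    return ((x >> n) | (x << (32 - n))) & MASK

def small_sigma0(x: int) -> int:
    return rotr(x, 7) ^ rotr(x, 18) ^ (x >> 3)

def small_sigma1(x: int) -> int:
    return rotr(x, 17) ^ rotr(x, 19) ^ (x >> 10)

def message_schedule(block: bytes) -> list[int]:
    """Expand one 64-byte block into the 64-word schedule W[0..63]."""
    if len(block) != 64:
        raise ValueError("SHA-256 blocks are exactly 64 bytes")
    # W[0..15]: the block read as sixteen big-endian 32-bit words.
    w = [int.from_bytes(block[i:i + 4], "big") for i in range(0, 64, 4)]
    # W[16..63]: each word mixes four earlier schedule entries.
    for t in range(16, 64):
        w.append((small_sigma1(w[t - 2]) + w[t - 7]
                  + small_sigma0(w[t - 15]) + w[t - 16]) & MASK)
    return w

abc_block = b"abc" + b"\x80" + b"\x00" * 52 + (24).to_bytes(8, "big")
schedule = message_schedule(abc_block)
print(len(schedule), hex(schedule[0]))  # 64 0x61626380
```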

The compression step then runs for 64 rounds. Each round updates eight working variables, each 32 bits wide. These working variables begin as the current hash state, then evolve round by round under the influence of the schedule words, a fixed round constant for that round, and several nonlinear bitwise functions.

The standard names these functions Ch, Maj, Σ0, Σ1, σ0, and σ1. The names matter less than what they do. Some functions use rotations and XORs to diffuse bits within a word. Others choose or combine bits from multiple words in a way that introduces nonlinearity. That mix is deliberate: pure linear operations are too structured and therefore too analyzable. The design tries to create fast software operations that still frustrate attacks.

The round constants are also fixed in the standard: a sequence of sixty-four 32-bit words derived from the fractional parts of the cube roots of the first sixty-four prime numbers. The practical point is not that primes are mystical. It is that the constants are fixed, public, and generated by a rule that does not look tailored to hide a trapdoor.
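Because the constants follow a public rule, anyone can regenerate them. The sketch below does so with exact integer arithmetic rather than floating point; the helper names are illustrative and not part of the standard.

```python
def first_primes(count: int) -> list[int]:
    """Return the first `count` primes by trial division."""
    primes, candidate = [], 2
    while len(primes) < count:
        if all(candidate % p for p in primes):
            primes.append(candidate)
        candidate += 1
    return primes

def integer_cube_root(n: int) -> int:
    """Return floor(n ** (1/3)) exactly, using binary search."""
    lo, hi = 0, 1 << (n.bit_length() // 3 + 2)
    while lo < hi:
        mid = (lo + hi + 1) // 2
        if mid ** 3 <= n:
            lo = mid
        else:
            hi = mid - 1
    return lo

def round_constants() -> list[int]:
    """First 32 fractional bits of the cube root of each of the first 64 primes."""
    # floor(cbrt(p) * 2**32) equals floor(cbrt(p * 2**96)); the low 32 bits
    # of that integer are the leading bits of the fractional part.
    return [integer_cube_root(p << 96) & 0xFFFFFFFF for p in first_primes(64)]

K = round_constants()
print(hex(K[0]), hex(K[63]))  # 0x428a2f98 0xc67178f2
```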

What operations occur in a single SHA‑256 round?

It helps to understand the mechanism in prose before seeing formulas. At each round, SHA-256 takes the current eight working words and computes two temporary values. One temporary value depends heavily on the “upper” part of the state, the current schedule word, the round constant, and a choose-style nonlinear function. The other depends on a majority-style combination of part of the state and another rotation-based mix.

Those two temporary values then shift the eight working words forward in a structured way. One word drops out of its old place and reappears, modified, elsewhere. Another gets the sum of one temporary value and an older register. Over 64 rounds, every part of the current state is repeatedly mixed, rotated, added modulo 2^32, and influenced by both the message schedule and the constants.
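A single round can be sketched like this. The formulas for the two temporary values follow the Secure Hash Standard; the function and variable names are illustrative, and the sample inputs (the standard's initial hash value, the first round constant, and the first schedule word of the abc block) are just one convenient choice.

```python
MASK = 0xFFFFFFFF

def rotr(x: int, n: int) -> int:
    return ((x >> n) | (x << (32 - n))) & MASK

def ch(x: int, y: int, z: int) -> int:
    """Choose: where x has a 1 bit take y, otherwise take z."""
    return (x & y) ^ (~x & z)

def maj(x: int, y: int, z: int) -> int:
    """Majority: each output bit is the majority of the three input bits."""
    return (x & y) ^ (x & z) ^ (y & z)

def big_sigma0(x: int) -> int:
    return rotr(x, 2) ^ rotr(x, 13) ^ rotr(x, 22)

def big_sigma1(x: int) -> int:
    return rotr(x, 6) ^ rotr(x, 11) ^ rotr(x, 25)

def sha256_round(state: tuple, k_t: int, w_t: int) -> tuple:
    """Apply one round to the eight working words (a..h)."""
    a, b, c, d, e, f, g, h = state
    t1 = (h + big_sigma1(e) + ch(e, f, g) + k_t + w_t) & MASK
    t2 = (big_sigma0(a) + maj(a, b, c)) & MASK
    # Every word shifts one place; only a and e receive new material.
    return ((t1 + t2) & MASK, a, b, c, (d + t1) & MASK, e, f, g)

initial = (0x6A09E667, 0xBB67AE85, 0x3C6EF372, 0xA54FF53A,
           0x510E527F, 0x9B05688C, 0x1F83D9AB, 0x5BE0CD19)
after_one = sha256_round(initial, 0x428A2F98, 0x61626380)
print([hex(word) for word in after_one])
```

Note how six of the eight output words are unchanged copies of their neighbours: the mixing per round is small, and the strength comes from repeating it 64 times.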

The important invariant is that the state always remains eight 32-bit words, but the meaning of each word becomes harder and harder to interpret as rounds progress. The algorithm is trying to create diffusion: a small difference in the input should spread so widely through the internal state that by the end, the output looks unrelated to the original message in any simple way.

After the 64 rounds, the final working variables are added back into the incoming hash state, word by word modulo 2^32. That updated eight-word value becomes the hash state for the next block. After the last block, those eight words concatenated together form the 256-bit digest.

This “feed-forward” step (adding the round result back into the incoming state rather than simply replacing it) is part of how the compression function accumulates history across blocks.

What is the formal flow of SHA‑256 (initial values, schedule, rounds)?

With the intuition in place, the formal structure is straightforward.

SHA-256 begins with an initial hash value H(0), consisting of eight fixed 32-bit words defined by the standard. For each message block, it constructs the schedule W[0..63]. It copies the current hash value into eight working variables, commonly a through h.

For each round t from 0 to 63, it computes two temporary values from the current working variables, the schedule word W[t], and the round constant K[t]. The functions used include:

- Ch(x, y, z), which selects bits from y or z depending on x

- Maj(x, y, z), which takes the bitwise majority of x, y, and z

- Σ0(x) and Σ1(x), built from rotate-right operations and XOR

- σ0(x) and σ1(x), also built from rotations, shifts, and XOR

The schedule words W[16] through W[63] are derived from earlier schedule entries using σ0 and σ1. The working variables are updated each round using modular addition modulo 2^32.

You do not need the exact formulas memorized to understand SHA-256 conceptually. What matters is the architecture: structured expansion of the block, repeated nonlinear mixing, and cumulative state update.
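For readers who do want to see the whole flow in one place, here is a compact, unoptimized sketch that assembles padding, schedule, rounds, and feed-forward. It is meant for study only, not production use: it assumes byte-oriented input, the names are illustrative, and the result is compared against `hashlib` as a sanity check.

```python
import hashlib

MASK = 0xFFFFFFFF
H0 = [0x6A09E667, 0xBB67AE85, 0x3C6EF372, 0xA54FF53A,
      0x510E527F, 0x9B05688C, 0x1F83D9AB, 0x5BE0CD19]

def _icbrt(n: int) -> int:
    """Exact floor cube root by binary search."""
    lo, hi = 0, 1 << (n.bit_length() // 3 + 2)
    while lo < hi:
        mid = (lo + hi + 1) // 2
        lo, hi = (mid, hi) if mid ** 3 <= n else (lo, mid - 1)
    return lo

def _first_primes(count: int) -> list[int]:
    primes, candidate = [], 2
    while len(primes) < count:
        if all(candidate % p for p in primes):
            primes.append(candidate)
        candidate += 1
    return primes

# Round constants: fractional bits of the cube roots of the first 64 primes.
K = [_icbrt(p << 96) & MASK for p in _first_primes(64)]

def rotr(x: int, n: int) -> int:
    return ((x >> n) | (x << (32 - n))) & MASK

def compress(state: list[int], block: bytes) -> list[int]:
    """Mix one 64-byte block into the eight-word state."""
    w = [int.from_bytes(block[i:i + 4], "big") for i in range(0, 64, 4)]
    for t in range(16, 64):
        s0 = rotr(w[t - 15], 7) ^ rotr(w[t - 15], 18) ^ (w[t - 15] >> 3)
        s1 = rotr(w[t - 2], 17) ^ rotr(w[t - 2], 19) ^ (w[t - 2] >> 10)
        w.append((w[t - 16] + s0 + w[t - 7] + s1) & MASK)
    a, b, c, d, e, f, g, h = state
    for t in range(64):
        big_s1 = rotr(e, 6) ^ rotr(e, 11) ^ rotr(e, 25)
        big_s0 = rotr(a, 2) ^ rotr(a, 13) ^ rotr(a, 22)
        t1 = (h + big_s1 + ((e & f) ^ (~e & g)) + K[t] + w[t]) & MASK
        t2 = (big_s0 + ((a & b) ^ (a & c) ^ (b & c))) & MASK
        a, b, c, d, e, f, g, h = (t1 + t2) & MASK, a, b, c, (d + t1) & MASK, e, f, g
    # Feed-forward: add the working variables back into the incoming state.
    return [(s + v) & MASK for s, v in zip(state, (a, b, c, d, e, f, g, h))]

def sha256(message: bytes) -> bytes:
    padded = message + b"\x80"
    padded += b"\x00" * ((56 - len(padded) % 64) % 64)
    padded += (len(message) * 8).to_bytes(8, "big")
    state = list(H0)
    for i in range(0, len(padded), 64):
        state = compress(state, padded[i:i + 64])
    return b"".join(word.to_bytes(4, "big") for word in state)

print(sha256(b"abc") == hashlib.sha256(b"abc").digest())  # True
```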

Why do SHA‑256 digests appear random despite determinism?

A frequent confusion is this: if SHA-256 is deterministic, why do people talk as if its output is random? The answer is that the digest is not random in the probabilistic sense; it is fully determined by the input. But for a well-designed cryptographic hash, the mapping should look random-like to anyone who does not know an exploitable structure.

That random-like behavior has practical consequences. Flip one bit in the input, and the digest should change dramatically. Two similar files should not produce similar-looking digests. If outputs were visibly patterned, attackers could exploit that structure to search faster for collisions or preimages.

This is often called the avalanche effect. The analogy is useful because it explains the visible consequence: tiny causes, large output differences. But the analogy fails if taken too literally. SHA-256 is not a chaotic natural system; it is a precisely specified sequence of bit operations. Its security comes not from mystique but from the difficulty of finding a shortcut through those operations.
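The effect is easy to observe. The sketch below flips a single input bit and counts how many of the 256 digest bits change; the helper names and the sample message are arbitrary illustrative choices.

```python
import hashlib

def flip_bit(data: bytes, index: int) -> bytes:
    """Return a copy of data with the bit at position index inverted."""
    out = bytearray(data)
    out[index // 8] ^= 0x80 >> (index % 8)
    return bytes(out)

def bit_difference(a: bytes, b: bytes) -> int:
    """Count the bit positions at which two equal-length byte strings differ."""
    return sum(bin(x ^ y).count("1") for x, y in zip(a, b))

message = b"The quick brown fox jumps over the lazy dog"
original = hashlib.sha256(message).digest()
changed = hashlib.sha256(flip_bit(message, 0)).digest()

print(bit_difference(message, flip_bit(message, 0)))  # 1 input bit differs
print(bit_difference(original, changed), "of 256 digest bits differ")
```

For a hash that behaves well, the second number should land near 128, since each output bit has roughly even odds of changing.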

Where is SHA‑256 used in blockchains and other systems?

| Use case | Why used | Best practice | Common pitfall |
|---|---|---|---|
| Blockchain commitments | Compact, fixed fingerprint | Use full digest (or chain convention) | Truncation lowers security |
| Address derivation | Deterministic identifier | Derive then truncate cautiously | Collisions if truncated too short |
| Authentication / MACs | Fast hash primitive | Use HMAC-SHA-256 | Do not ad-hoc key the hash |
| Password storage | Fast computation (bad here) | Use Argon2 / scrypt / PBKDF2 | Raw SHA-256 enables brute force |
| File integrity checks | Quick integrity fingerprint | Use SHA-256 for adversarial checks | Mistaking checksum for security |

SHA-256 appears everywhere in cryptographic systems because fixed-size commitments are useful everywhere.

In blockchain systems, the most obvious use is data commitment. Bitcoin is the canonical example: SHA-256 underpins block and transaction-related hashing, and the network’s proof-of-work uses double application of SHA-256 to the block header. But the idea is not unique to Bitcoin. Tendermint-based systems document SHA-256 in validator address derivation by hashing a public key and truncating the result to 20 bytes. The Cosmos SDK similarly uses SHA-256 in address pipelines: for example, secp256k1 account addresses are derived by hashing the compressed public key with SHA-256 and then applying RIPEMD-160, while Ed25519 consensus addresses use a truncated SHA-256-based form.
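Two of the patterns mentioned above, double hashing and truncation to 20 bytes, can be sketched with `hashlib`. This is a simplified illustration only: the function names are hypothetical, the key bytes are placeholders rather than a real public key, and real address pipelines add further steps (such as RIPEMD-160, prefixes, and encodings) that are omitted here.

```python
import hashlib

def double_sha256(data: bytes) -> bytes:
    """Apply SHA-256 twice, as Bitcoin does for block headers."""
    return hashlib.sha256(hashlib.sha256(data).digest()).digest()

def truncated_sha256_address(public_key: bytes, size: int = 20) -> bytes:
    """Hash a public key and keep only the first `size` bytes of the digest."""
    return hashlib.sha256(public_key).digest()[:size]

# Placeholder bytes standing in for a 32-byte public key (not a real key).
example_public_key = bytes(range(32))

print(double_sha256(b"example header bytes").hex())
print(truncated_sha256_address(example_public_key).hex())
```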

Elsewhere, SHA-256 shows up as an available primitive rather than the chain’s sole canonical hash. Substrate exposes a sha2_256 host function to runtimes alongside Keccak, Blake2, and others. Solana’s whitepaper describes Proof of History as an iterative sequence built from a cryptographic hash such as SHA-256, where each output becomes the next input and selected outputs act as verifiable timestamps.

Outside blockchains, SHA-256 is also widely used for file integrity checks, certificate and signature workflows, HMAC-based message authentication, and HMAC-based key derivation. The RFC material around SHA-256 includes reference code not just for the hash itself, but also for HMAC and HKDF built on top of SHA-family functions. That is an important distinction: many secure applications do not use raw SHA-256 directly. They use a construction built from it.
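To make "a construction built from it" concrete, here is a sketch of HMAC-SHA-256 using Python's standard `hmac` module, plus a small HKDF written to follow the extract-then-expand structure of RFC 5869. The HKDF function is an illustrative implementation, not a library API; in real code, a vetted library implementation is preferable.

```python
import hashlib
import hmac

def hmac_sha256(key: bytes, message: bytes) -> bytes:
    """Compute an HMAC-SHA-256 tag with the standard library."""
    return hmac.new(key, message, hashlib.sha256).digest()

def hkdf_sha256(input_key: bytes, salt: bytes, info: bytes, length: int) -> bytes:
    """Derive `length` bytes of key material (extract, then expand)."""
    if length > 255 * 32:
        raise ValueError("HKDF-SHA-256 can output at most 255 * 32 bytes")
    # Extract: concentrate the input key material into a pseudorandom key.
    pseudorandom_key = hmac_sha256(salt or b"\x00" * 32, input_key)
    # Expand: chain HMAC calls, each covering the previous block, info, and a counter.
    output, block, counter = b"", b"", 1
    while len(output) < length:
        block = hmac_sha256(pseudorandom_key, block + info + bytes([counter]))
        output += block
        counter += 1
    return output[:length]

tag = hmac_sha256(b"shared secret", b"transfer 10 coins")
# Compare tags in constant time rather than with ==.
print(hmac.compare_digest(tag, hmac_sha256(b"shared secret", b"transfer 10 coins")))  # True
print(len(hkdf_sha256(b"input key material", b"salt", b"session key", 48)))           # 48
```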

What are SHA‑256’s strengths and limits (when to use it and when not to)?

| Task type | SHA-256 fit | Recommended alternative | Why |
|---|---|---|---|
| Public commitments | Suitable | Raw SHA-256 (full digest) | Deterministic compact fingerprint |
| Message authentication | Not by itself | HMAC-SHA-256 | Prevents length-extension misuse |
| Password storage | Unsuitable | Argon2 / scrypt / PBKDF2 | Requires slow, salted hashing |
| Key derivation | Partial fit | HKDF (HMAC-SHA-256) | Use HMAC-based KDF construction |

The strongest way to understand SHA-256 is to separate the primitive from the constructions built around it.

By itself, SHA-256 gives you a deterministic, unkeyed hash. That means anyone can compute it, and the same input always yields the same output. This is exactly what you want for public commitments and integrity fingerprints. It is not what you want for everything.

For passwords, raw SHA-256 is the wrong tool. Password hashing needs to be deliberately expensive and usually salted, so that guessing attacks become costly. Fast hash functions are useful for integrity, but their speed works against you in password storage.
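As one illustration of "deliberately expensive and salted", the sketch below uses PBKDF2 with HMAC-SHA-256 from Python's standard library. The function names are hypothetical, and the default iteration count is an assumption to be tuned for your own hardware and guidance; Argon2 or scrypt may be better choices where available.

```python
import hashlib
import hmac
import os

def hash_password(password: str, *, iterations: int = 600_000) -> tuple[bytes, bytes]:
    """Return (salt, derived_key) for storage; the salt is random per password."""
    salt = os.urandom(16)
    derived = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return salt, derived

def verify_password(password: str, salt: bytes, expected: bytes,
                    *, iterations: int = 600_000) -> bool:
    """Recompute the derived key and compare it in constant time."""
    derived = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return hmac.compare_digest(derived, expected)
```

A caller would store the salt, the iteration count, and the derived key together, then pass the same values to `verify_password` at login. Because each guess now costs hundreds of thousands of hash invocations instead of one, offline guessing slows down by the same factor.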

For authentication, raw SHA-256 is also often not enough. If you want to authenticate a message with a secret key, the standard tool is usually HMAC-SHA-256, not “hash the key and message together however seems natural.” That distinction matters because SHA-256’s Merkle–Damgård structure creates a well-known issue: length extension.

Length extension means that an attacker who knows SHA-256(message) and the length of message, but not necessarily its content, can compute SHA-256(message || padding || suffix) for a suffix of the attacker’s choosing. The digest is simply the final internal state, so the attacker can load it and keep hashing as if the original computation had never stopped. The exact exploitability depends on how the hash is being used, but the lesson is simple: using raw Merkle–Damgård hashes as ad hoc MACs is dangerous. Standard constructions like HMAC exist precisely to close that gap.
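The attack can be demonstrated end to end. The sketch below assumes a naive tag of the form SHA-256(secret || message); the secret, message, and suffix values are made up for illustration, and the compression function is a plain study implementation rather than anything production-grade. The forger never touches the secret, only the tag and the total length.

```python
import hashlib

MASK = 0xFFFFFFFF

def _icbrt(n: int) -> int:
    lo, hi = 0, 1 << (n.bit_length() // 3 + 2)
    while lo < hi:
        mid = (lo + hi + 1) // 2
        lo, hi = (mid, hi) if mid ** 3 <= n else (lo, mid - 1)
    return lo

def _first_primes(count: int) -> list[int]:
    primes, candidate = [], 2
    while len(primes) < count:
        if all(candidate % p for p in primes):
            primes.append(candidate)
        candidate += 1
    return primes

K = [_icbrt(p << 96) & MASK for p in _first_primes(64)]

def rotr(x: int, n: int) -> int:
    return ((x >> n) | (x << (32 - n))) & MASK

def compress(state: list[int], block: bytes) -> list[int]:
    w = [int.from_bytes(block[i:i + 4], "big") for i in range(0, 64, 4)]
    for t in range(16, 64):
        s0 = rotr(w[t - 15], 7) ^ rotr(w[t - 15], 18) ^ (w[t - 15] >> 3)
        s1 = rotr(w[t - 2], 17) ^ rotr(w[t - 2], 19) ^ (w[t - 2] >> 10)
        w.append((w[t - 16] + s0 + w[t - 7] + s1) & MASK)
    a, b, c, d, e, f, g, h = state
    for t in range(64):
        t1 = (h + (rotr(e, 6) ^ rotr(e, 11) ^ rotr(e, 25))
              + ((e & f) ^ (~e & g)) + K[t] + w[t]) & MASK
        t2 = ((rotr(a, 2) ^ rotr(a, 13) ^ rotr(a, 22))
              + ((a & b) ^ (a & c) ^ (b & c))) & MASK
        a, b, c, d, e, f, g, h = (t1 + t2) & MASK, a, b, c, (d + t1) & MASK, e, f, g
    return [(s + v) & MASK for s, v in zip(state, (a, b, c, d, e, f, g, h))]

def padding(message_length: int) -> bytes:
    """SHA-256 padding for a message of the given length in bytes."""
    zeros = b"\x00" * ((55 - message_length) % 64)
    return b"\x80" + zeros + (message_length * 8).to_bytes(8, "big")

def extend(known_digest: bytes, original_length: int, suffix: bytes):
    """Return (glue, forged_digest) for original || glue || suffix."""
    glue = padding(original_length)
    # Resume hashing from the published digest, which is the internal state.
    state = [int.from_bytes(known_digest[i:i + 4], "big") for i in range(0, 32, 4)]
    tail = suffix + padding(original_length + len(glue) + len(suffix))
    for i in range(0, len(tail), 64):
        state = compress(state, tail[i:i + 64])
    return glue, b"".join(word.to_bytes(4, "big") for word in state)

secret, message, suffix = b"server-secret", b"amount=10", b"&amount=1000000"
naive_tag = hashlib.sha256(secret + message).digest()            # what the attacker sees
glue, forged = extend(naive_tag, len(secret) + len(message), suffix)
genuine = hashlib.sha256(secret + message + glue + suffix).digest()
print(forged == genuine)  # True: a valid tag was forged without the secret
```

HMAC-SHA-256 is not vulnerable to this trick because the outer keyed hash hides the inner state from the attacker.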

This is a good example of a broader principle in cryptography: a primitive can be sound, and a protocol can still misuse it.

What current security guarantees and limits does SHA‑256 have?

The standard describes SHA-256 as secure in the sense that preimages and collisions should be computationally infeasible to find. That remains the intended security claim. But careful wording matters.

There is a large difference between attacking full SHA-256 and attacking reduced-round variants. Research papers continue to improve collision attacks on reduced-step SHA-2 instances, including practical results on variants with substantially fewer than the full 64 steps. Those papers are important because they probe the security margin and teach us how much slack the design may have. But they do not amount to a break of the standardized full-round SHA-256 function.

That distinction is easy to blur in casual discussion. If a paper reports a collision for 39-step SHA-256, that is not a collision for the full function. It is evidence about structure and attack methods, not evidence that Bitcoin blocks or software release checksums can now be forged at will.

So the right summary is measured: cryptanalysts have made meaningful progress on reduced-round SHA-2 analysis, but there is no cited evidence here of a practical collision or preimage break for full SHA-256.

How does truncating SHA‑256 outputs affect security?

| Option | Output length | Security effect | Best for |
|---|---|---|---|
| Full SHA-256 | 256 bits (32 bytes) | Maximum collision/preimage margin | High-assurance commitments |
| Truncate (e.g., 20 bytes) | 160 bits (20 bytes) | Reduced security margin | Space-limited IDs with analysis |
| SHA-256 → RIPEMD-160 | 160 bits (20 bytes) | Different-algorithm diversity | Bitcoin-style address pipeline |

Sometimes systems do not use the full 256 bits of SHA-256 output. They truncate it to a shorter value, often for address size or storage convenience. This is common enough that it deserves explicit attention.

Truncation does not make the hash useless. A truncated digest can still be a perfectly serviceable identifier if its security level matches the application’s risk. But truncation changes the margin. If you keep only 160 bits, then collision and preimage security are no longer those of the full 256-bit output; they are bounded by the shorter output length: roughly 2^80 work for a generic collision search and 2^160 for a generic preimage search, compared with 2^128 and 2^256 for the full digest.
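The birthday effect behind those numbers is easy to see at toy scale. The sketch below truncates SHA-256 to just 3 bytes (24 bits), a size chosen only so the search finishes quickly, and finds two different inputs with the same truncated digest after a few thousand attempts. The function names are illustrative.

```python
import hashlib

def truncated_sha256(data: bytes, size: int) -> bytes:
    """Keep only the first `size` bytes of the SHA-256 digest."""
    return hashlib.sha256(data).digest()[:size]

def find_truncated_collision(size: int):
    """Hash counter values until two of them share a truncated digest."""
    seen = {}
    counter = 0
    while True:
        message = counter.to_bytes(8, "big")
        tag = truncated_sha256(message, size)
        if tag in seen:
            return seen[tag], message, counter + 1
        seen[tag] = message
        counter += 1

first, second, attempts = find_truncated_collision(3)
print(f"collision on 24 bits after {attempts} hashes")
print(truncated_sha256(first, 3) == truncated_sha256(second, 3))          # True
print(hashlib.sha256(first).digest() == hashlib.sha256(second).digest())  # False
```

The expected cost is on the order of 2^12 hashes for 24 bits. The same reasoning gives roughly 2^80 for a 160-bit truncation: far out of reach for this script, but a much thinner margin than 2^128.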

That is not just theory. An audit of a Cosmos SDK liquidity module flagged the use of 20-byte truncated SHA-256 in deterministic reserve-account address generation as a security concern, precisely because two different inputs that collide on the truncated output could map to the same address. The audit did not claim an imminent practical exploit, but it correctly identified the mechanism: shortening the digest shortens the security cushion.

So when you see SHA-256 in a protocol, ask a more precise question than “does it use SHA-256?” Ask: does it use the full digest, a truncation, or SHA-256 inside another construction? Those are materially different design choices.

Why does a correct SHA‑256 implementation still need careful integration and testing?

Another easy misunderstanding is to assume that if the algorithm is standardized, every implementation is equally safe. The standard itself warns against this simplification. Conformance means an implementation computes the specified outputs correctly. It does not guarantee that the surrounding software is free from side-channel leaks, memory errors, misuse, or protocol design mistakes.

This is why practical libraries wrap SHA-256 in APIs and higher-level constructions. RFC reference code exists to clarify behavior and interoperability. Libraries such as libsodium expose both one-shot and streaming SHA-256 APIs, while also warning that SHA-2 is mainly included there for interoperability and that other primitives may be better choices for generic hashing or password handling.
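The one-shot versus streaming distinction looks like this with Python's `hashlib`, used here as a stand-in since the same pattern appears in most libraries. The helper name `sha256_stream` and the chunk size are illustrative choices.

```python
import hashlib
import io

def sha256_stream(stream, chunk_size: int = 65536) -> str:
    """Hash a file-like object incrementally without loading it all into memory."""
    hasher = hashlib.sha256()
    while chunk := stream.read(chunk_size):
        hasher.update(chunk)
    return hasher.hexdigest()

data = b"release artifact bytes " * 10_000
one_shot = hashlib.sha256(data).hexdigest()
streamed = sha256_stream(io.BytesIO(data), chunk_size=4096)
print(one_shot == streamed)  # True: chunk boundaries do not affect the digest
```

For a file on disk, passing `open(path, "rb")` in place of the `BytesIO` object works the same way.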

In other words, there are two layers of questions. The first is, “What is SHA-256 mathematically?” The second is, “How is this system using it?” Many real-world failures live in the second layer.

Conclusion

SHA-256 is a standardized cryptographic hash function that turns variable-length input into a fixed 256-bit digest by padding the message, processing it in 512-bit blocks, expanding each block into a 64-word schedule, and repeatedly mixing that schedule into an eight-word state over 64 rounds.

Its importance comes from what that mechanism buys you: a compact, deterministic fingerprint that is easy to compute but designed to resist inversion and collisions. That is why it appears across blockchains, signatures, integrity checks, HMACs, and key-derivation systems. And that is also why the details matter: truncation changes the security level, raw SHA-256 is not a password hash, and using it directly where HMAC is needed can go wrong.

The short version worth remembering tomorrow is this: SHA-256 is not “encryption” and not “just a checksum.” It is a fixed-size commitment function whose whole job is to make large data easy to bind to, compare, and build secure protocols around.

What should I understand about SHA-256 before using it?

SHA-256 is a foundational commitment and address‑derivation primitive, but its real‑world use matters: truncation, double‑hashing, or using raw SHA‑256 for authentication change the practical risk. Before you fund, trade, or transfer on Cube Exchange, confirm how the chain or service applies SHA‑256 and follow a concrete checklist to reduce address, collision, and authentication surprises.

- Check address and identifier derivation: read the protocol docs or inspect derivation code to confirm whether the chain or wallet uses full SHA‑256, double SHA‑256 (Bitcoin), or a truncated digest (and to how many bits), and note whether an address checksum is used.

- Confirm authentication and key derivation: verify APIs, wallets, or contracts use HMAC‑SHA‑256, HKDF, or a proper KDF for MACs and keys rather than ad‑hoc raw SHA‑256; if you find raw SHA‑256 used for authentication or password hashing, avoid reusing credentials and escalate to support.

- Prepare the transfer on Cube: deposit the asset or stablecoin into your Cube account via the fiat on‑ramp or a direct transfer, copy the full recipient address and any required memo/tag exactly, and prefer address formats that include checksums when available.

- Execute and verify the activity: open the relevant market or withdrawal on Cube, submit the trade or transfer, then confirm the transaction hash on a reliable block explorer and wait the chain‑recommended confirmations (note if the chain uses double SHA‑256 or another hashing scheme).

Frequently Asked Questions

SHA-256 is a deterministic cryptographic hash that maps variable-length input to a fixed 256-bit digest and is designed to offer preimage resistance, second-preimage resistance, and collision resistance in the sense that finding an input for a given digest or two inputs with the same digest should be computationally infeasible.

Before processing, SHA-256 pads the message by appending a single 1 bit, then enough 0 bits so the padded message is 64 bits short of a multiple of 512, and finally a 64-bit encoding of the original message length; this makes block boundaries unambiguous and is part of the function’s cryptographic definition.

A length‑extension issue of Merkle–Damgård hashes means an attacker who knows SHA-256(m) can sometimes compute the hash of m || suffix without knowing m; for message authentication you should use a standard keyed construction such as HMAC‑SHA‑256 instead of ad-hoc hashing of keys and messages.

Cryptanalysis has produced practical or near‑practical collisions and differential results for reduced‑round variants (substantially fewer than the full 64 rounds), but there is no cited evidence of a practical collision or preimage break for the full 64‑round SHA‑256; reduced‑round work probes the margin but does not equal a full‑function break.

Truncating SHA‑256 shortens its security margin: a shorter digest reduces collision and preimage work factors, so truncated outputs can be acceptable if the reduced security meets the application’s risk but must be evaluated carefully (the audit of a Cosmos SDK liquidity module flagged 20‑byte truncation as a security concern without claiming an imminent practical exploit).

SHA‑256 is the wrong primitive for password storage because it is intentionally fast; password hashing requires deliberately expensive, salted functions to slow attackers. Use dedicated password‑hashing APIs rather than raw SHA‑256 (libraries such as libsodium recommend password‑hashing functions and prefer keyed/generic hashes like HMAC or BLAKE2 for other use cases).

Conformance to the SHA‑256 specification ensures correct outputs, but it does not guarantee a secure deployment: implementations can leak via side channels, be buggy, or be misused in protocols, and standards documents explicitly warn implementers to ensure overall implementation and integration security.

Internally SHA‑256 keeps an eight‑word (eight 32‑bit words) state, parses each 512‑bit block into sixteen 32‑bit words then expands them into a 64‑word message schedule, and runs 64 rounds of nonlinear mixing (with functions like Ch, Maj, Σ, σ and fixed round constants) before feed‑forwarding the result into the state; this repeated expansion and mixing is how small input differences are diffused into a seemingly random output.
