What is Smart Contract Risk?

Learn what smart contract risk is, why blockchain code is uniquely exposed, how exploits happen, and which controls reduce loss, lockup, and compromise.

Introduction

Smart contract risk is the risk that code deployed on a blockchain behaves in a way that causes loss, lockup, manipulation, or loss of control over assets and protocol functions. That sounds like ordinary software risk, but the stakes and mechanics are different. A smart contract is not just an application feature sitting behind a company-controlled server. It is, as NIST defines it, a collection of code and data deployed through cryptographically signed transactions, executed by network nodes that must all derive the same result and record that result on the blockchain. When that code holds money, assigns permissions, or defines liquidation rules, a flaw is not merely a bug report. It can become the system’s actual behavior.

That is the puzzle at the center of smart contract security: blockchains are designed to reduce the need to trust operators, yet users often end up trusting software much more intensely than they would in ordinary systems. If a bank backend has a defect, the bank may be able to pause service, reverse entries internally, or patch the server before much damage spreads. If a deployed contract has a defect, the whole point of the system is often that no operator can quietly alter history or override execution. The property that makes smart contracts credible (deterministic execution without a central administrator) is also what makes mistakes unusually expensive.

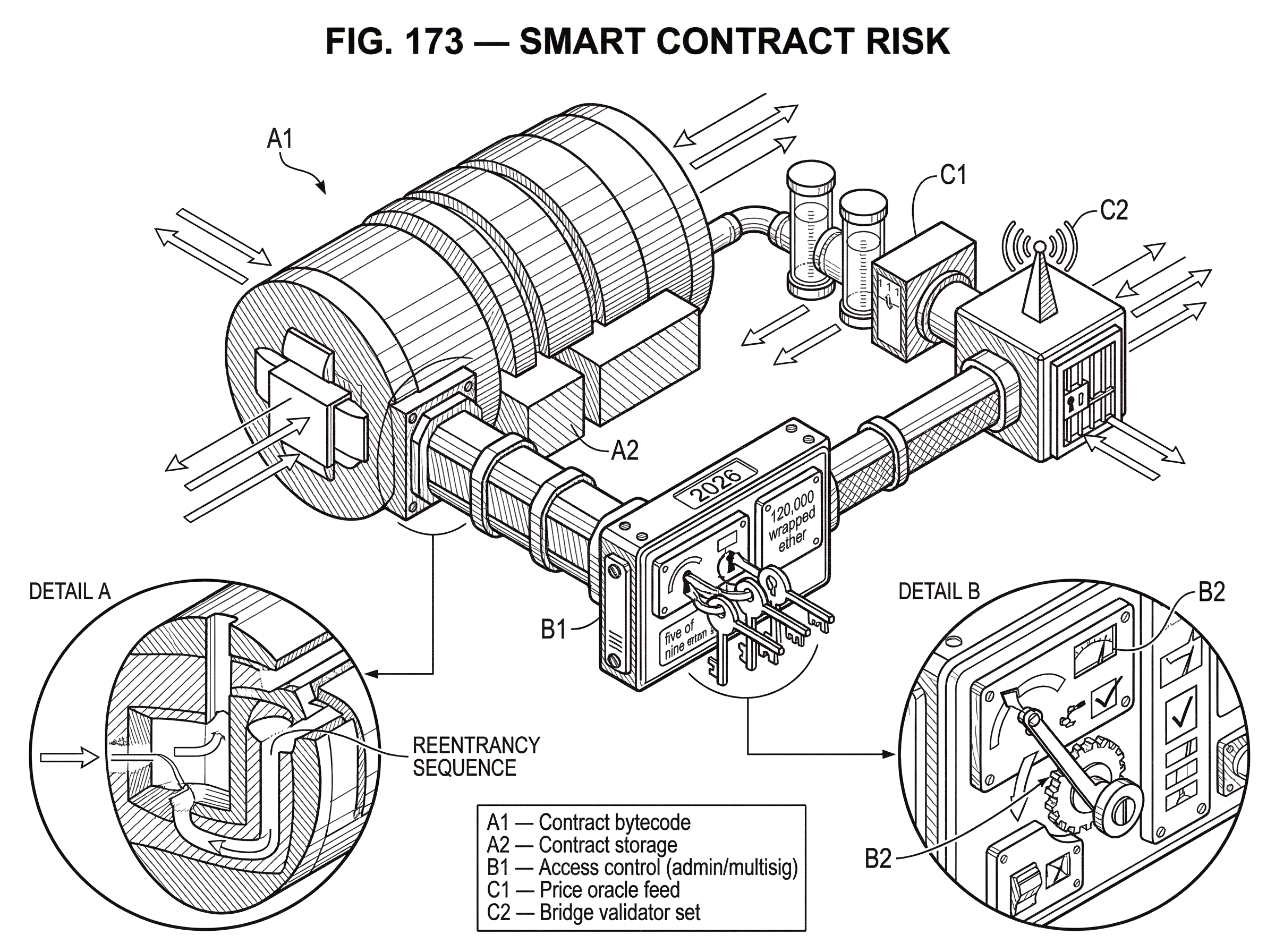

This is why smart contract risk is best understood not as a list of famous exploits, but as a consequence of a particular design choice: we let public, deterministic, often hard-to-change code directly control valuable state. Once you see that, the familiar incidents start to line up. Reentrancy, bad access control, broken upgrade logic, oracle manipulation, validator key compromise in bridges, and unchecked external calls are different surface forms of the same deeper problem: the contract’s assumptions about authority, state transitions, and external interaction were wrong or incomplete.

Why do smart contracts create unique security risks?

A smart contract is executed by many nodes that must reach the same outcome. That requirement creates a very strict environment. Contract code cannot depend on private server state, informal operator discretion, or hidden recovery steps. The chain only knows what is in the transaction, what is already on-chain, and whatever external data the contract has been given through accepted mechanisms. This gives smart contracts a powerful integrity property: everyone can verify that the code ran as written. But it also means the contract has to encode its own defenses, limits, and recovery paths in advance.

Here is the mechanism. In ordinary software, there is often a separation between the code that recommends an action and the institution that authorizes or settles it. In smart contracts, those layers are compressed together. The contract checks balances, verifies permissions, updates state, and may transfer assets immediately. So a mistake in authorization is not just a bad log entry. It may grant a real attacker the power to upgrade the contract, withdraw funds, or mint claims they should not have.

Immutability raises the stakes further. Ethereum’s developer documentation emphasizes that deployed contract code usually cannot be changed to patch security flaws, and stolen assets are extremely difficult to recover. That does not mean every contract is literally unchangeable; many systems use proxy-based upgrade patterns or emergency stops. But those are themselves design choices with their own risks. A system that is fully immutable may be hard to rescue. A system that is upgradeable may be easier to rescue, but it introduces trust in whoever controls upgrades. Smart contract risk is therefore not just the risk of code defects. It is also the risk that the chosen balance between immutability and control is wrong for the value at stake.

A useful way to think about it is this: a smart contract is both software and institution. It is software because it is code with logic, storage, and interfaces. It is an institution because it defines who can do what, under what conditions, with what finality. If the code is wrong, the institution is wrong.

Who can change on-chain state and why that matters

Most smart contract failures look different on the surface, but they often collapse to one question: who was able to cause a state change that should not have been possible? Security becomes clearer when you focus on state transitions rather than vulnerability names.

A contract stores state: balances, debt positions, ownership, admin roles, upgrade pointers, accounting totals, liquidation thresholds, and so on. Every function is a proposal to move that state from one valid configuration to another. Risk appears when the contract allows a transition that violates its intended invariant. Perhaps a user withdraws twice before their balance is reduced. Perhaps an attacker calls an admin-only function because access control is flawed. Perhaps a bridge contract accepts a forged message and mints wrapped assets without valid backing. Perhaps an upgrade proxy points to malicious logic. In every case, the external symptoms differ, but the invariant failure is the same: the contract accepted an invalid state transition.
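The idea of an invariant over state transitions can be sketched in a few lines of Python. This is an illustrative model, not contract code: the `Ledger` class, its `backing` field, and both functions are hypothetical names chosen for the example. It assumes a simple invariant (no negative balances, and recorded balances always sum to the recorded backing) and shows one transition that preserves it and one that silently breaks it.

```python
from dataclasses import dataclass, field


@dataclass
class Ledger:
    """Hypothetical ledger: per-account balances plus the backing they claim."""
    balances: dict = field(default_factory=dict)
    backing: int = 0

    def invariant_holds(self) -> bool:
        # Assumed invariant: no negative balances, and claims equal backing.
        return (all(v >= 0 for v in self.balances.values())
                and sum(self.balances.values()) == self.backing)


def transfer(ledger: Ledger, sender: str, recipient: str, amount: int) -> None:
    """A transition that rejects anything that would break the invariant."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    if ledger.balances.get(sender, 0) < amount:
        raise ValueError("insufficient balance")
    ledger.balances[sender] -= amount
    ledger.balances[recipient] = ledger.balances.get(recipient, 0) + amount


def unbacked_mint(ledger: Ledger, recipient: str, amount: int) -> None:
    """A flawed transition: credits an account without adding any backing."""
    ledger.balances[recipient] = ledger.balances.get(recipient, 0) + amount


ledger = Ledger(balances={"alice": 100, "bob": 0}, backing=100)

transfer(ledger, "alice", "bob", 40)
ok_after_transfer = ledger.invariant_holds()      # valid transition

unbacked_mint(ledger, "mallory", 1_000)
ok_after_unbacked_mint = ledger.invariant_holds()  # invalid transition accepted
```

Nothing crashes in the second case. The ledger simply ends up in a state it should never have reached, which is the pattern the incidents above share.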

This is why the most damaging smart contract bugs are usually not random crashes. They are logic errors about authority, sequencing, or trust boundaries. The contract did exactly what it was told, but what it was told to do was insecure.

A common misunderstanding is to treat “smart contract risk” as equivalent to “Solidity coding mistakes.” Solidity-specific pitfalls matter a lot, and the Solidity security guidance is explicit about several of them. Everything in a contract is publicly visible, including data marked private; external calls can hand control to another contract; tx.origin should never be used for authorization; and loops that grow with storage can become unexecutable because of gas limits. But these language-level issues matter because of the deeper invariant problem. Public visibility matters because secrecy assumptions fail. External call behavior matters because control flow can escape in the middle of an update. Gas limits matter because liveness is part of correctness: a function that can no longer execute may lock users out even if no attacker directly steals funds.

How does reentrancy allow an attacker to drain funds?

Reentrancy is the classic example because it shows the mechanism of smart contract risk with unusual clarity. The basic pattern is simple: a contract sends assets to an external address before it has finished updating its own accounting. If the recipient is not a passive account but another contract, that recipient can run code immediately. It can call back into the vulnerable contract while the vulnerable contract still believes the old balance is present.

Imagine a withdrawal function that first sends 10 units to the caller and only after that subtracts 10 from the caller’s recorded balance. The programmer may think of this as a single withdrawal. But the blockchain sees a sequence of operations. When the outbound call happens, control temporarily leaves the contract. If the receiving contract is malicious, it can call the withdrawal function again before the first call finishes. Because the recorded balance has not yet been reduced, the second call also appears valid. The same thing can happen repeatedly within one transaction until the available funds are drained.
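The sequence described above can be simulated in plain Python. This is a sketch under simplifying assumptions, not EVM code: the class names are hypothetical, a method call stands in for an external call, and the withdrawal pays out the caller's whole recorded balance rather than a chosen amount. Gas, reverts, and arithmetic checks are not modeled.

```python
class VulnerableVault:
    """Hypothetical vault that sends funds before updating its accounting."""

    def __init__(self):
        self.balances = {}  # recorded balance per account name
        self.funds = 0      # units the vault actually holds

    def deposit(self, account, amount):
        self.balances[account.name] = self.balances.get(account.name, 0) + amount
        self.funds += amount

    def withdraw(self, account):
        amount = self.balances.get(account.name, 0)
        # Check: passes as long as the recorded balance is still nonzero.
        if amount == 0 or self.funds < amount:
            return
        # Interaction: value leaves and the recipient's code runs immediately.
        self.funds -= amount
        account.receive(self, amount)
        # Effect: the recorded balance is cleared only after control returns.
        self.balances[account.name] = 0


class HonestAccount:
    def __init__(self, name):
        self.name = name
        self.received = 0

    def receive(self, vault, amount):
        self.received += amount


class ReentrantAccount(HonestAccount):
    def receive(self, vault, amount):
        self.received += amount
        vault.withdraw(self)  # call back in before the first withdrawal finishes


vault = VulnerableVault()
honest = HonestAccount("honest")
attacker = ReentrantAccount("attacker")
vault.deposit(honest, 90)
vault.deposit(attacker, 10)

vault.withdraw(attacker)  # one top-level call, many nested withdrawals
```

In this model the attacker deposited 10 units but ends up with all 100, while the honest depositor's recorded balance of 90 is still on the books with nothing behind it.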

This is the logic behind The DAO exploit, which Ethereum’s incident update described as a recursive calling vulnerability. The attacker invoked a split-related function and reentered before state had been finalized, collecting ether repeatedly in one transaction. The attack became historically important not only because of the funds involved, but because it made the failure mode visible to everyone: the contract’s internal accounting and the actual movement of value had fallen out of sync during execution.

The standard mitigation (checks-effects-interactions) works because it repairs that sequencing error. First check that the withdrawal is allowed. Then update internal state so the balance is reduced. Only then interact with the external contract. The analogy is closing your cash drawer before handing money across the counter. It explains the idea well: finish your own bookkeeping before exposing yourself to external action. Where the analogy fails is that smart contracts are not physically sequential in the everyday sense; the danger comes from handing off control to adversarial code inside the same transaction context. Still, the principle is right. Reentrancy is dangerous because external interaction happened before the contract had made its own state self-consistent.
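A minimal Python sketch of the checks-effects-interactions ordering follows. As before, this is a simulation with hypothetical class names, a method call stands in for the external call, and the withdrawal pays out the caller's whole recorded balance. The only point it makes is about ordering: the recorded balance is cleared before control leaves the vault, so a callback finds nothing left to claim.

```python
class SaferVault:
    """Hypothetical vault that follows checks-effects-interactions."""

    def __init__(self):
        self.balances = {}  # recorded balance per account name
        self.funds = 0      # units the vault actually holds

    def deposit(self, account, amount):
        self.balances[account.name] = self.balances.get(account.name, 0) + amount
        self.funds += amount

    def withdraw(self, account):
        amount = self.balances.get(account.name, 0)
        # 1. Check that the withdrawal is allowed.
        if amount == 0 or self.funds < amount:
            return
        # 2. Effects: finish internal bookkeeping first.
        self.balances[account.name] = 0
        self.funds -= amount
        # 3. Interaction: only now hand control to the recipient.
        account.receive(self, amount)


class ReentrantAccount:
    """Recipient that tries to re-enter the vault whenever it is paid."""

    def __init__(self, name):
        self.name = name
        self.received = 0
        self.reentry_attempts = 0

    def receive(self, vault, amount):
        self.received += amount
        self.reentry_attempts += 1
        vault.withdraw(self)  # the nested call sees a zero balance and returns


vault = SaferVault()
attacker = ReentrantAccount("attacker")
other = ReentrantAccount("other")
vault.deposit(other, 90)
vault.deposit(attacker, 10)

vault.withdraw(attacker)
```

The callback still runs, but it can no longer observe stale accounting. Production contracts often add a reentrancy lock on top of this ordering as a second line of defense.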

Not every chain exposes reentrancy in the same way. Solana, for example, documents different semantics for cross-program invocation: direct self-recursion is allowed, but indirect reentrancy of the form A -> B -> A is rejected with ReentrancyNotAllowed. That changes which callback patterns are possible, but it does not eliminate smart contract risk in general. It shifts the boundary. On Ethereum, callback-driven reentrancy is a central design hazard. On Solana, developers instead have to reason carefully about cross-program invocations, privilege extension, and shared compute budgets. Different runtimes remove some failure modes and create others.

How access-control failures cause catastrophic smart contract losses

| Model | Single point of failure | Speed of change | Operational complexity | Best use |
|---|---|---|---|---|
| Owner | Yes | Immediate | Low | Small teams, prototypes |
| Multisig | Reduced | Moderate | Medium | Treasury and high-value keys |
| Role-based access control (RBAC) | Depends on roles | Flexible | High | Modular protocols with role separation |
| On-chain governance | Low (control is distributed) | Slow | High | Protocol-level policy changes |

If reentrancy teaches the importance of sequencing, access control teaches the importance of authority. Many devastating incidents are not subtle arithmetic errors. They are cases where the wrong party could exercise power that should have been tightly constrained.

This is why access control now sits near the top of maintained vulnerability rankings. The OWASP Smart Contract Top 10 places access control vulnerabilities first in its forward-looking 2026 list, and describes them as flaws that let unauthorized users or roles invoke privileged functions or modify critical state. That description is broad, but it gets at the real issue. In many protocols, the most dangerous functions are not user-facing swaps or deposits. They are admin actions: changing price feeds, upgrading implementations, pausing markets, moving reserves, changing signers, or modifying validator sets.

A single owner address can be operationally convenient, but Ethereum’s guidance notes the obvious risk: it is a single point of failure. If that key is compromised, lost, socially engineered, or used maliciously, the contract may be fully controlled. That is why multisignature control, role separation, and time delays matter. They do not make a contract bug-free. They make it harder for one failure to become a catastrophe.
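To make the combination of multisignature control and time delays concrete, here is a small Python sketch. The `GuardedAdmin` class, its method names, and the numeric parameters are all hypothetical, and time is passed in explicitly as an integer rather than read from a block. It assumes an M-of-N approval threshold followed by a fixed delay before a privileged action becomes executable.

```python
class GuardedAdmin:
    """Hypothetical admin module: M-of-N approvals plus an execution delay."""

    def __init__(self, signers, threshold, delay):
        if not 0 < threshold <= len(set(signers)):
            raise ValueError("threshold must be between 1 and the signer count")
        self.signers = frozenset(signers)
        self.threshold = threshold
        self.delay = delay
        self.proposals = {}  # action -> {"approvals": set, "ready_at": int or None}

    def approve(self, signer, action, now):
        if signer not in self.signers:
            raise PermissionError("not an authorized signer")
        proposal = self.proposals.setdefault(
            action, {"approvals": set(), "ready_at": None})
        proposal["approvals"].add(signer)
        # The timelock starts only once enough distinct signers have approved.
        if len(proposal["approvals"]) >= self.threshold and proposal["ready_at"] is None:
            proposal["ready_at"] = now + self.delay

    def can_execute(self, action, now):
        proposal = self.proposals.get(action)
        return (proposal is not None
                and proposal["ready_at"] is not None
                and now >= proposal["ready_at"])


admin = GuardedAdmin(signers=["a", "b", "c"], threshold=2, delay=100)

admin.approve("a", "upgrade", now=0)                   # one compromised key
one_key_enough = admin.can_execute("upgrade", now=10_000)

admin.approve("b", "upgrade", now=10)                  # threshold reached
ready_during_delay = admin.can_execute("upgrade", now=50)
ready_after_delay = admin.can_execute("upgrade", now=110)

try:
    admin.approve("mallory", "upgrade", now=0)
    outsider_rejected = False
except PermissionError:
    outsider_rejected = True
```

Neither mechanism removes bugs. The threshold means one stolen key is not sufficient, and the delay gives users and monitors a window to react before an approved change takes effect.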

The Ronin bridge breach is an unusually clear example. The postmortem says the attacker gained control of five of nine validator keys and used them to forge withdrawals. The immediate cause was not an arithmetic bug in token accounting. It was a failure of authority design and operational hygiene: concentrated validator control, lingering allowlist permissions, and insufficient monitoring. The “contract risk” here spilled beyond contract source code into key management and governance, but the mechanism is the same. The bridge accepted state transitions (withdrawals) because the signatures presented were sufficient under its rules. The rules were too easy to satisfy after the operational compromise.

This is an important boundary to understand. Smart contract risk is partly code risk and partly control-plane risk. If an attacker compromises the keys that can upgrade a proxy, sign bridge attestations, or administer a treasury, the on-chain contract may execute exactly as intended and still produce a disastrous outcome.

Why oracles, bridges, and composability increase smart contract risk

| Dependency | Trust boundary | Common failure | Mitigation | Impact severity |
|---|---|---|---|---|
| Price oracle | External data provider/aggregator | Price manipulation | Secure oracles, TWAPs, redundancy | Financial losses/liquidations |
| Bridge | Cross-chain validators or proofs | Validator compromise, spoofed proofs | Decentralized validators, fraud proofs | Large-scale minting/theft |
| Shared library | Third-party on-chain code | Bug in shared logic | Use audited libs, pin versions | Systemic freezing or propagation |
| Composed protocol | Other contracts' state | Input manipulation, flash loans | Limit exposures, sanity checks | Cascading protocol failures |

A smart contract rarely lives alone. Modern protocols depend on price oracles, liquidity pools, governance systems, bridge attestations, libraries, and other contracts. Each dependency extends the trust boundary.

Price oracles are a good illustration. A lending market may be perfectly correct about collateral ratios given a price input p, yet still fail if p is manipulable. In that case, the vulnerable logic is not “the math is wrong,” but “the contract trusted an input whose security assumptions were too weak.” This is why oracle manipulation and flash-loan-facilitated exploits appear prominently in current risk taxonomies. Composability is useful because contracts can build on one another. It is dangerous for the same reason: one protocol’s “input” is often another protocol’s temporarily deformable state.
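A worked example helps show why correct math on a weak input still fails. The Python sketch below assumes a fee-free constant-product pool as the price source, a 50% loan-to-value ratio, and a 60-unit averaging window in which the manipulated price is observed for a single unit. All function names and numbers are illustrative assumptions, not taken from any particular protocol.

```python
def spot_price(reserve_token, reserve_stable):
    """Instantaneous pool price of the token, in stable units."""
    return reserve_stable / reserve_token


def swap_stable_for_token(reserve_token, reserve_stable, stable_in):
    """Fee-free constant-product swap; returns the new reserves."""
    k = reserve_token * reserve_stable
    new_stable = reserve_stable + stable_in
    new_token = k / new_stable
    return new_token, new_stable


def max_borrow(collateral_tokens, price, ltv=0.5):
    """The lending math: correct for whatever price it is given."""
    return collateral_tokens * price * ltv


def twap(observations):
    """Time-weighted average over (price, duration) observations."""
    total_time = sum(duration for _, duration in observations)
    return sum(price * duration for price, duration in observations) / total_time


# Pool starts at 1,000 tokens against 1,000,000 stable: price 1,000.
honest_price = spot_price(1_000, 1_000_000)

# A large, possibly flash-loaned, purchase pushes the spot price up.
token_after, stable_after = swap_stable_for_token(1_000, 1_000_000, 1_000_000)
manipulated_price = spot_price(token_after, stable_after)

# The same collateral supports very different borrowing under each input.
borrow_honest = max_borrow(10, honest_price)
borrow_manipulated = max_borrow(10, manipulated_price)

# Averaging over a window dampens a short-lived spike.
averaged_price = twap([(honest_price, 59), (manipulated_price, 1)])
borrow_averaged = max_borrow(10, averaged_price)
```

Under these assumptions the spot price quadruples inside one transaction and the borrow limit moves with it, while the averaged price moves by 5%. Averaging is not free: it lags genuine price moves and can still be manipulated by an attacker who is willing to sustain the distortion across the window.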

Bridges concentrate this problem. A bridge is usually trying to enforce a strong claim across domains: if assets are locked or messages are validated on chain X, then mint or release assets on chain Y. That means the bridge has to trust a verification mechanism, validator set, or message proof system. If that mechanism fails, the bridge may mint unbacked assets or release locked assets incorrectly.
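The mint-on-attestation rule can be sketched as follows. This is a toy model with loud caveats: the `Bridge` class and helper names are hypothetical, and HMAC with per-validator secrets stands in for real public-key signatures purely so the example runs with the standard library. A real bridge would verify signatures against validator public keys and would never hold validator secrets. The sketch assumes a quorum threshold, a message that binds nonce, recipient, and amount, and replay protection by nonce.

```python
import hashlib
import hmac


def encode_message(nonce: int, recipient: str, amount: int) -> bytes:
    """Bind every field that matters into the signed message."""
    return f"{nonce}:{recipient}:{amount}".encode()


def sign(secret: bytes, message: bytes) -> bytes:
    """Stand-in for a validator signature (assumption: HMAC, not real PKI)."""
    return hmac.new(secret, message, hashlib.sha256).digest()


class Bridge:
    """Hypothetical destination-chain bridge that mints on quorum attestations."""

    def __init__(self, validator_secrets: dict, threshold: int):
        self.validators = dict(validator_secrets)
        self.threshold = threshold
        self.processed_nonces = set()
        self.minted = {}

    def mint(self, nonce: int, recipient: str, amount: int, signatures: dict) -> bool:
        if nonce in self.processed_nonces:
            return False  # replay of an already-processed message
        message = encode_message(nonce, recipient, amount)
        valid_signers = {
            name for name, signature in signatures.items()
            if name in self.validators
            and hmac.compare_digest(signature, sign(self.validators[name], message))
        }
        if len(valid_signers) < self.threshold:
            return False  # not enough valid attestations
        self.processed_nonces.add(nonce)
        self.minted[recipient] = self.minted.get(recipient, 0) + amount
        return True


validators = {f"v{i}": f"secret-{i}".encode() for i in range(9)}
bridge = Bridge(validators, threshold=5)


def signatures_from(names, nonce, recipient, amount):
    message = encode_message(nonce, recipient, amount)
    return {name: sign(validators[name], message) for name in names}


four = signatures_from(["v0", "v1", "v2", "v3"], 1, "alice", 100)
five = signatures_from(["v0", "v1", "v2", "v3", "v4"], 1, "alice", 100)

too_few = bridge.mint(1, "alice", 100, four)
accepted = bridge.mint(1, "alice", 100, five)
replayed = bridge.mint(1, "alice", 100, five)
altered = bridge.mint(2, "alice", 1_000_000, five)  # signatures cover another message
```

The checks here are only as strong as the validator set behind them. Anyone who controls a quorum of keys can produce attestations this logic will accept, and a flaw in the verification step itself would let invalid attestations through.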

The Wormhole exploit on Solana shows how this can happen at the verification layer. CertiK’s analysis attributes the attack to a missing validation step around a sysvar account and a deprecated helper, which allowed signature verification to be spoofed and 120,000 wrapped ether to be minted. Again, the deeper pattern is not chain-specific. A contract treated an invalid proof as valid, so the state transition (minting representation of locked assets) occurred without legitimate backing.

Bridges are therefore not just bigger smart contracts. They are smart contracts whose correctness depends on cross-system agreement. That makes them structurally high risk.

What trade-offs do upgradeable proxy patterns introduce?

| Pattern | Patchability | Trust required | Attack surface | Best for |
|---|---|---|---|---|
| Immutable | None after deploy | Minimal operator trust | Bugs irreversible | High-assurance protocols |
| Proxy upgradeable | Logic can be swapped | Admin multisig or key holders | Compromised admin can control logic | Rapid fixes for teams |
| Beacon proxy | Central beacon points to impl | Trust in beacon operator | Beacon compromise affects many | Many linked deployments |
| On-chain governance upgrades | Upgrades via governance votes | Trust in token-holder process | Vote manipulation or timelock abuse | Community-governed protocols |

Because immutability makes post-deployment fixes hard, many teams use proxy patterns. In the common form described by Ethereum documentation, a proxy stores state while delegating logic to an implementation contract. To upgrade the system, an authorized party changes the implementation address. ERC-1967 standardizes well-known storage slots for the implementation, beacon, and admin addresses so tooling can inspect proxies more reliably.
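The split between a state-holding proxy and swappable logic can be modeled in Python. This is a conceptual sketch, not an implementation of ERC-1967 or of EVM `delegatecall`: the class names, the `call` dispatch method, and the in-memory upgrade log are hypothetical. It assumes a single admin address that is allowed to change the implementation.

```python
class LogicV1:
    """First implementation: deposits only."""

    @staticmethod
    def deposit(state, caller, amount):
        state["balances"][caller] = state["balances"].get(caller, 0) + amount


class LogicV2(LogicV1):
    """Upgraded implementation: adds withdrawals, reuses the same state layout."""

    @staticmethod
    def withdraw(state, caller, amount):
        if state["balances"].get(caller, 0) < amount:
            raise ValueError("insufficient balance")
        state["balances"][caller] -= amount


class Proxy:
    """Hypothetical proxy: owns the state, forwards calls to the current logic."""

    def __init__(self, implementation, admin):
        self.state = {"balances": {}}
        self.implementation = implementation
        self.admin = admin
        self.upgrade_log = []  # stands in for emitted upgrade events

    def upgrade_to(self, caller, new_implementation):
        if caller != self.admin:
            raise PermissionError("only the admin may upgrade")
        self.upgrade_log.append(
            (self.implementation.__name__, new_implementation.__name__))
        self.implementation = new_implementation

    def call(self, function_name, caller, *args):
        # The logic runs against the proxy's state, not its own.
        function = getattr(self.implementation, function_name)
        return function(self.state, caller, *args)


proxy = Proxy(LogicV1, admin="admin")
proxy.call("deposit", "alice", 50)

try:
    proxy.upgrade_to("mallory", LogicV2)
    stranger_upgraded = True
except PermissionError:
    stranger_upgraded = False

proxy.upgrade_to("admin", LogicV2)
proxy.call("withdraw", "alice", 20)  # new logic, same stored balances
```

Alice's balance survives the upgrade because it lives in the proxy. The same mechanism means that whoever holds the admin role could install logic that does anything at all with that state.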

The appeal is obvious. If a defect is found, a team can patch logic without migrating all user state. But notice what has changed. A supposedly trust-minimized system now contains a privileged upgrade path. The critical question becomes: who can change the implementation, under what process, and with what visibility?

This is not a side detail. It is a central security tradeoff. An upgradeable protocol can recover from some bugs; a non-upgradeable protocol cannot. But an upgradeable protocol can also be rug-pulled, socially engineered, or compromised through its admin path. That is why good practice is not merely “use upgradeability” or “avoid upgradeability.” It is to make the trust model legible. Multisigs, timelocks, on-chain governance, emitted upgrade events, and careful separation of duties are attempts to control the new risk introduced by the recovery mechanism.

The Parity multisig library failure remains a cautionary example of how brittle shared upgrade-like architectures can be. Parity’s postmortem describes how an uninitialized shared library retained self-destruct functionality. An attacker effectively became owner of that library and destroyed it, freezing funds in hundreds of dependent wallets. The key lesson is deeper than the historical details: if many deployed contracts depend on a shared logic component, then the shared component becomes a systemic point of failure. Reuse reduces duplicated bugs, but it also centralizes blast radius.
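The blast-radius point can be illustrated with a deliberately simplified Python model. It is not a reconstruction of the Parity code: the class and method names are hypothetical, and the model assumes only what the paragraph above states, namely a shared component that was left uninitialized, could be claimed by anyone, and could then be destroyed.

```python
class SharedLibrary:
    """Hypothetical shared logic used by many wallets."""

    def __init__(self):
        self.owner = None       # deployed without ever being initialized
        self.destroyed = False

    def initialize(self, caller):
        # The flaw being illustrated: an unowned library accepts any first caller.
        if self.owner is not None:
            raise PermissionError("already initialized")
        self.owner = caller

    def destroy(self, caller):
        if caller != self.owner:
            raise PermissionError("only the owner may destroy")
        self.destroyed = True

    def withdraw(self, wallet, amount):
        if self.destroyed:
            raise RuntimeError("library code no longer exists")
        if amount > wallet.funds:
            raise ValueError("insufficient funds")
        wallet.funds -= amount


class Wallet:
    """Hypothetical wallet that delegates all logic to the shared library."""

    def __init__(self, library, funds):
        self.library = library
        self.funds = funds

    def withdraw(self, amount):
        self.library.withdraw(self, amount)


library = SharedLibrary()
wallets = [Wallet(library, funds=100) for _ in range(3)]

wallets[0].withdraw(10)          # works while the library exists

library.initialize("stranger")   # anyone can become owner
library.destroy("stranger")      # and then remove the shared logic

frozen = 0
for wallet in wallets:
    try:
        wallet.withdraw(1)
    except RuntimeError:
        frozen += 1
```

No funds move to the stranger in this model. Every dependent wallet simply loses the only code path that could move its funds.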

How visibility, gas limits, and liveness failures break contracts without theft

When people hear “smart contract risk,” they often imagine stolen funds. Theft is only one outcome. Contracts can also become unusable, permanently frozen, or economically distorted.

The Solidity security guidance stresses that all contract state and execution details are publicly visible. That means confidentiality assumptions fail by default. A contract cannot rely on hidden local variables or “private” storage for security. This matters in auctions, commit-reveal schemes, governance strategies, and any design where participants might exploit visible intermediate state.

Gas and resource limits create a different class of risk: liveness failure. A loop that depends on ever-growing storage may work when there are ten users and fail when there are ten thousand. At that point the protocol may not be hacked in the dramatic sense, but key operations can no longer complete within block limits. Users experience the result as a lockup or denial of service. Checked arithmetic in modern Solidity can likewise improve safety against silent overflow while still creating edge cases where reverts make a function unusable if the protocol did not account for them.
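A little arithmetic shows how a loop outgrows its block. The Python sketch below uses assumed, illustrative figures (a 30,000,000 gas block limit and 30,000 gas per payout) rather than measured values, and the function names are hypothetical. It compares paying everyone in one call with processing recipients in bounded batches.

```python
BLOCK_GAS_LIMIT = 30_000_000  # assumed block gas limit, for illustration only
GAS_PER_PAYOUT = 30_000       # assumed cost of one payout, for illustration only


def payout_gas(num_recipients: int) -> int:
    """Gas needed to pay every recipient inside a single call."""
    return num_recipients * GAS_PER_PAYOUT


def fits_in_block(gas: int) -> bool:
    return gas <= BLOCK_GAS_LIMIT


def split_into_batches(recipients: list, batch_size: int) -> list:
    """Bound the work per call by processing a fixed-size slice at a time."""
    return [recipients[i:i + batch_size]
            for i in range(0, len(recipients), batch_size)]


ten_users_ok = fits_in_block(payout_gas(10))
ten_thousand_users_ok = fits_in_block(payout_gas(10_000))

max_per_call = BLOCK_GAS_LIMIT // GAS_PER_PAYOUT
recipients = list(range(10_000))
batches = split_into_batches(recipients, max_per_call)
every_batch_fits = all(fits_in_block(payout_gas(len(batch))) for batch in batches)
```

Under these assumptions the single-call version stops working somewhere past a thousand recipients. Batching, or letting each user pull their own payment, keeps the per-call cost independent of how large the protocol grows.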

This matters because correctness on-chain is not just “bad things never happen.” It is also “good things can continue to happen under realistic conditions.” A vault users cannot withdraw from is broken even if no attacker profits.

How do teams layer defenses to reduce smart contract risk?

There is no single control that makes smart contracts safe. The reason is structural: different failures arise from logic, tooling, governance, runtime behavior, and external dependencies. So effective defenses have to be layered.

The first layer is conservative design. Reduce complexity where possible. Minimize privileged functions. Avoid unnecessary external calls. Bound loops. Assume everything on-chain is public. Treat every external dependency (oracle, bridge verifier, library, proxy admin, signer set) as part of the threat model rather than as background infrastructure.

The second layer is reusable, reviewed components. Widely used libraries such as OpenZeppelin matter because security is cumulative. Reusing a standard implementation of access control, token logic, or proxy support is often safer than inventing a custom version. This is not blind trust; widely reused code can also become systemic risk if flawed. But review effort compounds when many teams and auditors look at the same code.

The third layer is testing and analysis before deployment. Ethereum’s guidance is explicit that unit testing alone is not enough. Static analysis, dynamic or property-based testing, and formal verification each catch different classes of errors. Tools like Slither are useful because they can quickly flag suspicious patterns and integrate into development workflows. Formal verification is narrower but stronger: when the specification is right and the proof is valid, it can rule out entire classes of behavior rather than merely failing to find them.
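To show how testing against a property differs from testing individual cases, here is a small Python sketch. It uses bounded exhaustive search (every operation sequence up to a short length) as a deterministic stand-in for property-based testing, which would normally generate inputs randomly. The `Vault` model, the `check_balance` switch that injects a bug, and the invariant are all hypothetical.

```python
from itertools import product


class Vault:
    """Hypothetical single-user vault model used as the system under test."""

    def __init__(self, check_balance=True):
        self.check_balance = check_balance
        self.balance = 0  # recorded user balance
        self.funds = 0    # assets actually held

    def deposit(self, amount):
        self.balance += amount
        self.funds += amount

    def withdraw(self, amount):
        if self.check_balance and amount > self.balance:
            return  # rejected, state unchanged
        self.balance -= amount
        self.funds -= amount


def invariant(vault) -> bool:
    """Property that must hold after every operation."""
    return vault.balance >= 0 and vault.funds >= 0 and vault.balance == vault.funds


def find_counterexample(make_vault, max_len=3, amounts=(1, 2)):
    """Try every operation sequence up to max_len; return the first that fails."""
    operations = [(name, amount)
                  for name in ("deposit", "withdraw")
                  for amount in amounts]
    for length in range(1, max_len + 1):
        for sequence in product(operations, repeat=length):
            vault = make_vault()
            for name, amount in sequence:
                getattr(vault, name)(amount)
                if not invariant(vault):
                    return sequence
    return None


correct_result = find_counterexample(lambda: Vault(check_balance=True))
buggy_result = find_counterexample(lambda: Vault(check_balance=False))
```

The search reports no counterexample for the checked vault within the explored bound, and for the unchecked one it returns the shortest failing sequence, a withdrawal from an empty account. That is evidence within a bound, not a proof; formal verification is what extends the claim to all inputs.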

The fourth layer is independent review and incentivized disclosure. Audits matter, but Ethereum’s documentation warns against treating them as a silver bullet. Auditors work under time limits and assumptions; they are not proving perfection. Bug bounties extend review to a broader adversarial audience and can be especially valuable after launch, when production context reveals issues that static review missed.

The fifth layer is operational controls after deployment. Monitoring, alerts, timelocks, emergency stops, and clearly defined incident procedures can sharply reduce damage. Ronin’s postmortem explicitly notes that large outflows were not detected quickly because tracking was inadequate. That is a reminder that post-deployment security is not just about code correctness; it is also about noticing when reality diverges from expectation.
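Outflow monitoring of the kind described above can be as simple as a sliding-window sum. The Python sketch below is a hypothetical off-chain monitor: the class name, the one-hour window, and the threshold are illustrative assumptions, and timestamps are plain integers in seconds.

```python
from collections import deque


class OutflowMonitor:
    """Hypothetical monitor: alert when outflows in a time window exceed a limit."""

    def __init__(self, window_seconds: int, threshold: int):
        self.window_seconds = window_seconds
        self.threshold = threshold
        self.events = deque()  # (timestamp, amount), oldest first
        self.total = 0

    def record(self, timestamp: int, amount: int) -> bool:
        """Record a withdrawal; return True if the window total breaches the limit."""
        self.events.append((timestamp, amount))
        self.total += amount
        # Drop withdrawals that have aged out of the window.
        while self.events and self.events[0][0] <= timestamp - self.window_seconds:
            _, expired_amount = self.events.popleft()
            self.total -= expired_amount
        return self.total > self.threshold


monitor = OutflowMonitor(window_seconds=3600, threshold=1000)

alerts = [
    monitor.record(0, 400),     # window total 400
    monitor.record(100, 500),   # window total 900
    monitor.record(200, 200),   # window total 1100, over the limit
    monitor.record(4000, 100),  # earlier withdrawals have aged out
]
```

An alert like this does not prevent a bad transaction. Its value is in shortening the time between an abnormal outflow and a human or automated response such as a pause.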

Finally, teams need a clear classification and checklist discipline. Taxonomies such as the SWC registry helped give names to common weaknesses, though the registry itself warns that it is no longer actively maintained and may be incomplete. More current reviewer-facing standards and checklists now fill that role. The naming matters less than the habit: every contract change should be examined against recurring failure modes, not just against whatever bug is fashionable this month.

What common mistakes teams underestimate about smart contract risk

The easiest mistake is to think smart contract risk is mainly about clever attackers finding obscure low-level bugs. Those bugs exist, but many major failures have been much more ordinary: forgotten initialization, overpowered admin roles, invalid trust in an external input, stale permissions, missing monitoring, unsafe upgrade paths. The environment is cryptographic and adversarial, but the root causes are often familiar engineering failures made harsher by immutability and direct asset control.

The second mistake is to think audits substitute for system design. They do not. If a protocol’s security depends on a small multisig acting honestly, or on an oracle that can be cheaply manipulated, or on a bridge validator set that is too concentrated, an audit may correctly report the code while the system remains fragile.

The third mistake is to think smart contract risk belongs only to Ethereum. Ethereum provides many of the canonical examples and much of the best-known tooling, but the underlying issue is broader. Solana’s CPI model creates its own privilege and compute-budget considerations. CosmWasm changes the runtime and language assumptions and explicitly positions itself as reducing classes of Solidity-style issues such as reentrancy and misuse of tx.origin. Those design differences matter, but they do not erase risk. They move it into new places: account validation, runtime semantics, inter-chain communication, and operational authority.

Conclusion

Smart contract risk exists because blockchains let public code directly govern valuable state with limited room for discretionary repair. Once deployed, a contract’s logic becomes part of the settlement system itself.

The memorable version is this: in smart contracts, the bug is often not beside the system; it is the system. Managing that risk means protecting the validity of state transitions: who can trigger them, what inputs they can rely on, what outside calls they make, and how the system can respond when assumptions fail.

Frequently Asked Questions

Should a smart contract be immutable or upgradeable?

There is no single correct choice; immutability reduces reliance on trusted operators but makes post-deployment fixes extremely hard, while upgradeability enables patching at the cost of introducing a privileged upgrade path that must itself be trusted and governed. Teams should make the trust model explicit and protect upgrade authority with multisigs, timelocks, on-chain governance, emitted upgrade events, and separation of duties so recovery capability does not become a single point of failure.

How does reentrancy allow an attacker to drain funds?

Reentrancy occurs when a contract interacts with an external address before it has finished updating its own state, allowing that external code to reenter and trigger repeated valid-looking transitions; the usual mitigation is checks-effects-interactions (validate the call, update internal state, then perform external calls), so bookkeeping is finalized before control is handed out.

Why do oracles, bridges, and composability increase smart contract risk?

Oracles, bridges, and other contracts expand the protocol’s trust boundary because the contract’s correctness depends on inputs or attestations from external systems; if those inputs are manipulable or their verifier/validator set is compromised, the contract can accept invalid state transitions (e.g., minting unbacked assets or triggering wrongful liquidations).

What trade-offs do upgradeable proxy patterns introduce?

Proxy upgradeability solves the inability to patch deployed logic but introduces a privileged control plane: whoever can change the implementation can alter protocol behavior, so upgrade admins must be governed and observable (e.g., multisigs, timelocks, emitted events, and clear upgrade processes); the ERC-1967 pattern standardizes where proxies store implementation/admin slots to improve discovery, but it doesn’t eliminate the trust tradeoff.

How do access-control failures cause catastrophic losses?

Access control failures arise when the contract’s rules let an unintended actor cause privileged state changes. This often looks like compromised or overpowered keys, an uninitialized owner, or a single owner account able to perform many admin actions; reducing that risk means using multisignatures, role separation, time delays, and least-privilege design so no single failure leads to catastrophic state changes.

Are audits enough to make a smart contract safe?

Audits are valuable but not a guarantee; auditors work under assumptions and time limits, so audits and code review should be combined with extensive testing, static/dynamic analysis, bug bounties, monitoring, and operational controls because many major failures stem from design or operational choices rather than solely from coding bugs.

Can a smart contract fail without any funds being stolen?

Not all failures are theft: public visibility and gas/resource limits can cause confidentiality leaks, unusable functions, or permanently frozen contracts (liveness failures) when operations become too expensive or loops outgrow gas limits; these outcomes break the protocol even without an attacker directly stealing funds.

Is smart contract risk specific to Ethereum?

Different blockchains change which failure modes are most likely: for example, Solana’s CPI model disallows certain indirect reentrancy patterns (it rejects A→B→A with a ReentrancyNotAllowed error) but creates other concerns like privilege extension and shared compute budgets, while Wasm/CosmWasm and other runtimes shift risks into account validation, sandboxing, and resource metering. No platform eliminates the need to reason about authority, sequencing, and external inputs.
