What is Tokenomics?

Learn what tokenomics is, how token supply and demand are designed, and why incentives, governance, utility, and credibility shape token value.

Introduction

Tokenomics is the design of the economic rules that govern a token: what the token is for, how it is created, who receives it, how it moves, and what makes holding or using it rational. The reason this matters is simple: a token is not valuable because it exists on a blockchain. It becomes economically meaningful only when the rules around supply, demand, rights, and incentives produce behavior that the system actually needs.

That is the puzzle at the center of tokenomics. Two projects can both issue a token with the same technical standard, and yet one becomes useful while the other becomes noise. The difference is usually not the token contract alone. It is the economic design layered on top: whether the token coordinates users, secures a protocol, allocates cash flows or rights, absorbs volatility, or merely serves as a vehicle for speculation on attention.

At a technical level, a token is just a ledger object with transfer rules. On Ethereum, an ERC-20 token exposes standard functions like totalSupply, balanceOf, transfer, approve, allowance, and transferFrom, along with events such as Transfer and Approval so wallets, exchanges, and applications can interoperate consistently. ERC-721 does the same for individually identified non-fungible tokens, where each asset is distinguished by a unique tokenId within a contract. ERC-1155 generalizes this further by letting one contract manage many token types at once, including fungible and non-fungible assets, with batch transfers and event-based accounting. These standards make tokens usable across an ecosystem, but they do not tell you what the token should do economically. Tokenomics begins where the interface standard ends.

The key idea is that tokenomics is really about incentive engineering under constraints. The constraints come from code, market structure, user psychology, governance, and the base chain itself. A token on Solana inherits a mint authority model, where supply remains mutable as long as a valid mint_authority exists, and becomes fixed only when that authority is set to None. A native token on Cardano lives inside the ledger’s multi-asset model, where minting and burning are governed by a minting policy and every output containing custom tokens must also carry a minimum amount of ada. Those are not cosmetic implementation details. They shape what kinds of promises about supply, control, and usability are credible.

So when people ask what tokenomics is, the useful answer is not “supply and demand for tokens.” It is this: tokenomics is the mechanism design of a blockchain asset. It asks what behavior the system needs, what levers the token provides, and whether the resulting equilibrium is stable enough to survive contact with real users.

How does a token act as a control surface for a product or network?

A common mistake is to treat the token itself as the product. Usually it is not. The product might be blockspace, a marketplace, a game economy, a lending protocol, a governance system, or a settlement network. The token is a way to influence how participants behave inside that system.

This is the right mental model: a token is a control surface attached to a network. By changing token balances, issuance rules, transfer permissions, or redemption rights, the protocol changes incentives. That in turn changes participation, liquidity, security, and pricing. If the token is well designed, people who pursue their own interests end up reinforcing the system’s goals. If it is badly designed, the token attracts behavior that looks good in the short term but weakens the network underneath.

Consider a lending protocol. It needs lenders to supply assets, borrowers to post collateral, liquidators to act when accounts become unsafe, and governance to set risk parameters. Compound’s design makes this concrete. Deposited assets are pooled into markets, and suppliers receive ERC-20 cTokens, which represent claims on an increasing amount of the underlying asset as interest accrues through a rising exchange rate. Borrowing capacity is determined by collateral factors, and liquidators are economically motivated to close unsafe positions because liquidation includes a discount. None of this works because “there is a token.” It works because each tokenized position carries incentives that produce the needed behavior.
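The mechanics in that paragraph can be made concrete with a toy model. The sketch below is loosely based on the cToken idea and is not Compound's code. Its simplifications are assumptions: there are no reserves and no interest-rate model, interest is applied by hand, the 0.02 initial exchange rate is an illustrative value, and `ToyMarket`, `borrow_capacity`, and `collateral_seized` are hypothetical names. It shows the exchange rate rising as borrowers accrue interest, borrowing capped by a collateral factor, and the liquidation discount expressed as extra collateral for the liquidator.

```python
from dataclasses import dataclass


@dataclass
class ToyMarket:
    """Pooled lending market loosely modeled on cToken-style accounting.

    Simplifications (assumed): no reserves, no interest-rate model, interest
    is applied by hand, and all values are in units of the underlying asset.
    """
    cash: float = 0.0            # underlying sitting idle in the pool
    borrows: float = 0.0         # underlying owed by borrowers, incl. accrued interest
    ctoken_supply: float = 0.0   # outstanding claim tokens held by suppliers
    initial_rate: float = 0.02   # underlying per cToken for the first deposit (assumed)

    def exchange_rate(self) -> float:
        # Each cToken is a claim on a pro-rata share of cash + borrows.
        if self.ctoken_supply == 0:
            return self.initial_rate
        return (self.cash + self.borrows) / self.ctoken_supply

    def supply(self, amount: float) -> float:
        minted = amount / self.exchange_rate()
        self.cash += amount
        self.ctoken_supply += minted
        return minted

    def borrow(self, amount: float) -> None:
        if amount > self.cash:
            raise ValueError("insufficient pool liquidity")
        self.cash -= amount
        self.borrows += amount

    def accrue_interest(self, rate: float) -> None:
        # Interest grows what borrowers owe, which raises the exchange rate.
        self.borrows *= 1 + rate

    def redeem(self, ctokens: float) -> float:
        amount = ctokens * self.exchange_rate()
        if amount > self.cash:
            raise ValueError("insufficient pool liquidity")
        self.cash -= amount
        self.ctoken_supply -= ctokens
        return amount


def borrow_capacity(collateral_value: float, collateral_factor: float) -> float:
    # Only a fraction of collateral value can be borrowed against.
    return collateral_value * collateral_factor


def collateral_seized(repay_value: float, liquidation_incentive: float) -> float:
    # A liquidator repays debt and receives collateral worth slightly more: the discount.
    return repay_value * (1 + liquidation_incentive)


market = ToyMarket()
ctokens = market.supply(1_000.0)      # 50,000 cTokens at the initial 0.02 rate
market.borrow(500.0)
market.accrue_interest(0.10)          # borrowers now owe 550
print(market.exchange_rate())         # has risen above 0.02
print(market.redeem(10_000.0))        # about 210 underlying for 10,000 cTokens
```

The supplier never receives an interest "payment". Their fixed number of cTokens simply redeems for more underlying over time. That is the sense in which the tokenized position itself carries the incentive.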

The same principle appears in very different systems. A governance token tries to turn token holders into decision-makers. A staking token tries to turn idle capital into security. A stablecoin tries to turn reserve structure and redemption expectations into price stability. An in-game asset tries to turn ownership and scarcity into player behavior. In each case, the token is a tool for shaping actions.

That is why tokenomics cannot be reduced to a cap table or emission chart. Those are visible outputs of the design, but the more important question is causal: what does owning or using this token let someone do, and why would that lead to healthy system behavior?

What three core questions should you ask to analyze a token mechanism?

Most tokenomic designs can be understood by asking three linked questions: what creates demand for the token, what governs its supply, and what keeps those two from drifting too far apart. If you understand those three things, much of the rest follows.

Demand is not “people want number go up.” Sustainable demand comes from some form of utility, rights, or expected future benefit. That might mean paying fees, obtaining governance power, posting collateral, accessing services, receiving revenue-linked claims, or using the token as a medium inside an application. The important distinction is between demand that comes from the system’s ongoing operation and demand that comes mainly from speculative expectation. Speculation is always present, but if it is the only source of demand, the design is fragile because the token’s support disappears exactly when confidence weakens.

Supply is the opposite side of the mechanism. A token can have a fixed maximum, a predictable disinflationary curve, discretionary issuance, algorithmic rebalancing, or asset-backed mint-and-burn dynamics. OpenZeppelin’s widely used ERC-20 implementation makes this separation clear: the base token contract is agnostic about how supply is created, and projects must deliberately implement supply mechanisms through minting and burning logic or extensions like ERC20Capped. On Solana, supply mutability is explicit in the mint authority. On Cardano, minting policy determines when creation and destruction are allowed, and that policy association is permanent for the asset. Across chains, the principle is the same: supply credibility depends on who can change it, under what conditions, and whether observers can verify those conditions.

The stabilizing part is where tokenomics becomes more than issuance. If demand and supply interact without any damping mechanism, the system often oscillates: rewards attract mercenary users, emissions create sell pressure, price falls reduce participation, and the network loses the very activity it was subsidizing. Stabilizers can take many forms: vesting schedules, lockups, fee sinks, reserve requirements, collateral rules, redemption arbitrage, dynamic interest rates, or governance limits. The exact form matters less than the function. A token economy needs some reason that short-term opportunism does not immediately overwhelm long-term usefulness.

A recent survey on cryptoeconomics and tokenomics makes this point in more formal language: serious token design requires simultaneous attention to strategic behavior, spamming, Sybil attacks, free-riding, marginal cost, marginal utility, and stabilizers. In plain terms, a token is not designed in a vacuum. People react to incentives, exploit loopholes, and coordinate around whatever the mechanism rewards.

What does a token's supply actually promise (cap vs credibility)?

| Type | On-chain enforcement | Credibility | Typical risk | Best use |
|---|---|---|---|---|
| Hard-coded cap | Enforced in contract | High if immutable | Contract bugs or upgrades | Scarcity / value signaling |
| Authority-disabled | Mint authority revoked | High if irreversible | Key mismanagement risk | Fixed-supply launches |
| Governance promise | Policy via votes | Medium; social trust | Reversal or capture | Flexible protocol upgrades |
| Vesting / lockups | Time-locked releases | Medium–high if visible | Unlocks flood liquidity | Align insiders long-term |

People often talk about token supply as if totalSupply were the main fact. It is not. The economically relevant question is what the supply number means.

A fixed supply sounds simple, but even there the promise structure matters. Is supply hard-capped in code, as in an ERC-20 with a cap enforced on minting? Is it fixed because the mint authority has been permanently disabled, as with a Solana mint whose authority is set to None? Is it fixed only socially, because a governance process promises not to mint more? These are different commitments with different credibility. The same headline number can represent very different trust assumptions.
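The difference between these commitments can be modeled in a few lines. The sketch below is a hypothetical `ToyMint` and does not use any real SDK. It combines a cap checked on every mint, similar in spirit to ERC20Capped, with a mint authority that can be set to `None`, similar in spirit to a Solana mint. The "governance promise" case is represented as an uncapped authority that is still held. Nothing in the code stops that authority from minting.

```python
from dataclasses import dataclass
from typing import Optional


class SupplyError(Exception):
    """Raised when a mint would break the token's supply commitment."""


@dataclass
class ToyMint:
    """Hypothetical supply model combining two commitment styles.

    cap: a ceiling checked on every mint (similar in spirit to ERC20Capped).
    mint_authority: who may mint; None means no one ever can again
    (similar in spirit to a Solana mint whose authority is set to None).
    """
    supply: int = 0
    cap: Optional[int] = None
    mint_authority: Optional[str] = None

    def mint(self, caller: str, amount: int) -> None:
        if self.mint_authority is None:
            raise SupplyError("minting permanently disabled")
        if caller != self.mint_authority:
            raise SupplyError("caller is not the mint authority")
        if self.cap is not None and self.supply + amount > self.cap:
            raise SupplyError("cap exceeded")
        self.supply += amount

    def set_mint_authority(self, caller: str, new_authority: Optional[str]) -> None:
        # Once the authority is None, no caller can pass this check again.
        if self.mint_authority is None or caller != self.mint_authority:
            raise SupplyError("only the current authority can change it")
        self.mint_authority = new_authority


def try_mint(token: ToyMint, caller: str, amount: int) -> str:
    try:
        token.mint(caller, amount)
        return "ok"
    except SupplyError as err:
        return str(err)


capped = ToyMint(cap=1_000, mint_authority="team")
print(try_mint(capped, "team", 1_000))   # ok
print(try_mint(capped, "team", 1))       # cap exceeded

revoked = ToyMint(mint_authority="team")
print(try_mint(revoked, "team", 500))    # ok
revoked.set_mint_authority("team", None)
print(try_mint(revoked, "team", 1))      # minting permanently disabled

promised = ToyMint(mint_authority="governance")  # no cap, authority kept
print(try_mint(promised, "governance", 10**9))   # ok: only a social promise stops this
```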

Circulating supply is even more slippery. Tokens may exist but remain locked by vesting, treasury controls, protocol reserves, collateral contracts, or exchange custody. Burns may be permanent or offset by future minting rights. Event logs may allow off-chain systems to infer minted and burned supply, as in ERC-1155 where TransferSingle and TransferBatch events involving the zero address can be used to derive circulation, but that still depends on implementations emitting the required events correctly. Market cap calculations often hide these distinctions, which is why two tokens with the same quoted supply can behave very differently in the market.
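Here is a minimal sketch of that event-based reconstruction. It assumes the events have already been decoded into plain dicts. The field names and the placeholder addresses `"0xA"` and `"0xB"` are illustrative and do not follow any particular indexer's format. The result is minted minus burned per token id. To get circulating supply you would still have to subtract balances held in vesting, treasury, or other locked contracts.

```python
from collections import defaultdict

ZERO_ADDRESS = "0x" + "0" * 40


def reconstruct_supply(events):
    """Net minted-minus-burned amount per token id from decoded ERC-1155 events.

    Each event is assumed to be a dict already decoded by an indexer; the
    field names here are illustrative, not any particular library's format.
    """
    supply = defaultdict(int)
    for ev in events:
        if ev["event"] == "TransferSingle":
            pairs = [(ev["id"], ev["value"])]
        elif ev["event"] == "TransferBatch":
            pairs = list(zip(ev["ids"], ev["values"]))
        else:
            continue
        for token_id, value in pairs:
            if ev["from"] == ZERO_ADDRESS:   # mint
                supply[token_id] += value
            if ev["to"] == ZERO_ADDRESS:     # burn
                supply[token_id] -= value
    return dict(supply)


events = [
    {"event": "TransferSingle", "from": ZERO_ADDRESS, "to": "0xA", "id": 1, "value": 100},
    {"event": "TransferBatch", "from": ZERO_ADDRESS, "to": "0xB", "ids": [1, 2], "values": [50, 10]},
    {"event": "TransferSingle", "from": "0xA", "to": "0xB", "id": 1, "value": 30},
    {"event": "TransferSingle", "from": "0xB", "to": ZERO_ADDRESS, "id": 2, "value": 4},
]
print(reconstruct_supply(events))  # {1: 150, 2: 6}
```

If a contract mints without emitting the event, this tally silently undercounts. That is the fragility the paragraph describes.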

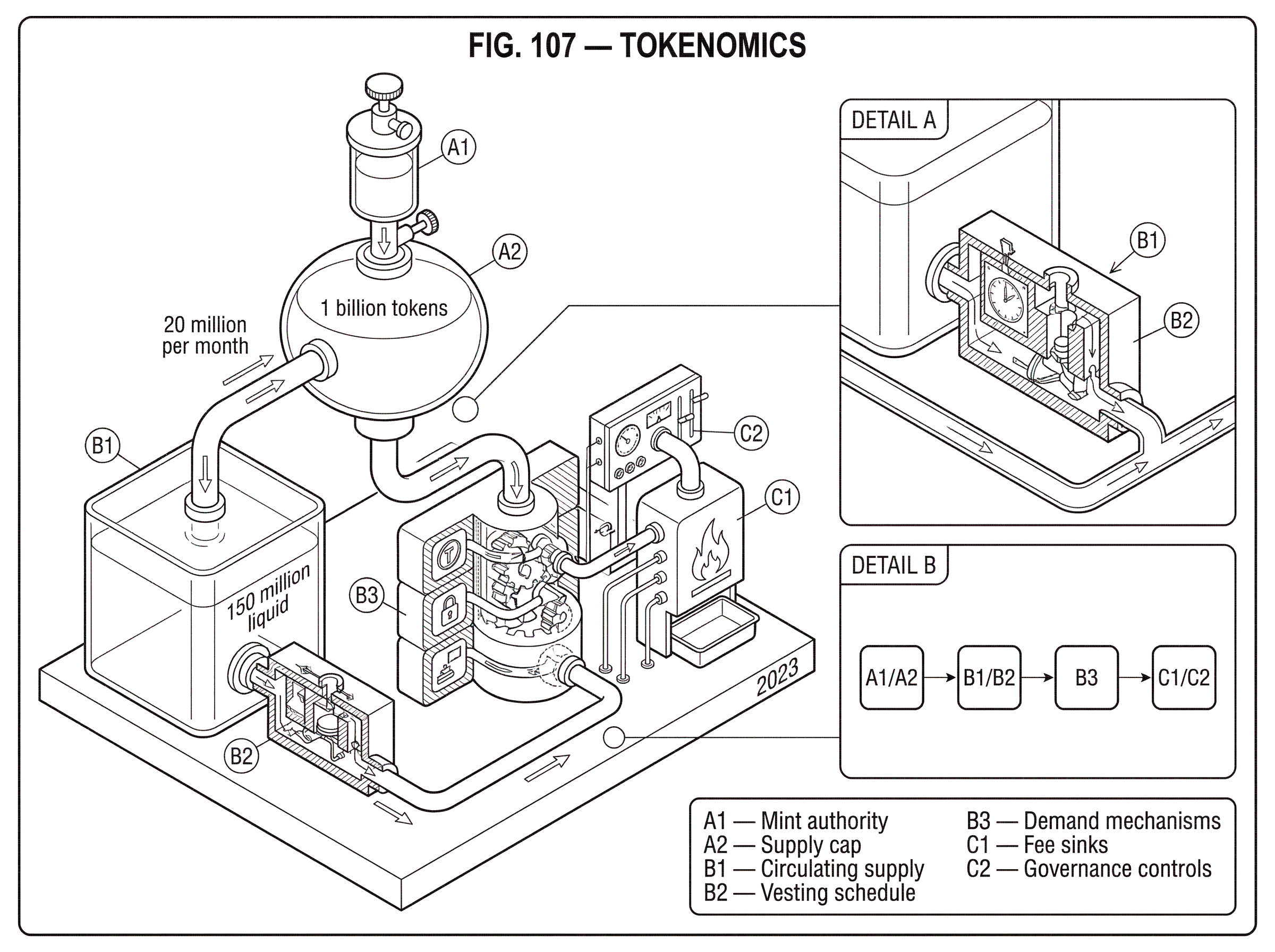

A simple example shows the mechanism. Imagine a project launches with 1 billion tokens. Only 150 million are liquid, 350 million are held by the treasury, and 500 million are locked for team and investors under multi-year vesting. The headline supply is 1 billion, but the sellable float is much smaller. If emissions or unlocks add 20 million liquid tokens per month while organic demand grows slowly, price can fall even though “nothing changed” in the total maximum supply. What changed was the rate at which future promises became present liquidity.
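The arithmetic of that example is worth running. The sketch below uses the paragraph's own numbers (in millions): a 150M liquid float, 20M unlocked per month, and 1,000M total. It assumes a constant unlock, whereas real schedules are usually lumpier. The point is that each unlock is measured against the float, not against the headline supply.

```python
def float_schedule(initial_float, monthly_unlock, months, total_supply):
    """Liquid float month by month and the dilution each month's unlock causes.

    Amounts are in millions of tokens. Assumes a constant unlock that stops
    once everything is liquid; real schedules are lumpier.
    """
    rows = []
    liquid = initial_float
    for month in range(1, months + 1):
        added = min(monthly_unlock, total_supply - liquid)
        rows.append((month, liquid, added / liquid))
        liquid += added
    return rows, liquid


rows, float_after_year = float_schedule(150, 20, 12, total_supply=1_000)
print(f"month 1 dilution of float: {rows[0][2]:.1%}")    # 13.3%
print(f"month 12 dilution of float: {rows[-1][2]:.1%}")  # 5.4%
print(f"float after 12 months: {float_after_year}M")     # 390M
```

The headline supply never changes, yet the sellable float grows 160% in a year. The first month adds about 13% to the float on its own.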

This is why vesting is central to tokenomics, not a side detail. Vesting converts ownership claims into a time-structured release schedule. It tries to align insiders with the long-term system by making immediate exit costly or impossible. But vesting is not automatically good. It works only if the unlock schedule matches the network’s ability to absorb new supply. Otherwise it simply postpones the imbalance.
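A common structure for a time-structured release is a cliff followed by linear vesting. The sketch below is illustrative only. It assumes vesting accrues from month 0 but nothing is released before the cliff, and the 12-month cliff and 48-month duration applied to the example's 500 million locked tokens are assumptions. It shows why a cliff produces a single large unlock, which is the "unlocks flood liquidity" risk in the earlier table.

```python
def vested(total, month, cliff_months, vesting_months):
    """Cumulative tokens released by `month` under a cliff-then-linear schedule.

    Assumes vesting accrues linearly from month 0 but nothing is released
    before the cliff, so the cliff month releases the accrued backlog at once.
    """
    if month < cliff_months:
        return 0
    if month >= vesting_months:
        return total
    return total * month // vesting_months


def monthly_unlocks(total, cliff_months, vesting_months):
    return [vested(total, m, cliff_months, vesting_months)
            - vested(total, m - 1, cliff_months, vesting_months)
            for m in range(1, vesting_months + 1)]


unlocks = monthly_unlocks(500_000_000, cliff_months=12, vesting_months=48)
print(unlocks[10], unlocks[11], unlocks[12])  # 0 125000000 10416666
```

Month 12 releases a quarter of the allocation at once. Every later month releases roughly a twelfth of that. Whether the market can absorb the cliff is the question the paragraph raises.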

Why would someone hold a token: utility, rights, or reflexive demand?

| Reason | Durability | Growth speed | Main risk | Best example |
|---|---|---|---|---|
| Utility | High | Slow | Usage-dependent | Fee or access token |
| Rights | Medium | Medium | Enforcement doubts | Governance token |
| Reflexive / speculation | Low | Fast | Rapid confidence loss | Momentum-driven token |

Why would someone hold a token rather than immediately sell it? There are only a few fundamental answers.

They may need it to do something. This is utility in the narrow sense: paying fees, obtaining service access, posting margin, or transacting inside an application. They may hold it because it gives them rights: governance power, redemption claims, or some share in protocol-controlled value. Or they may hold it because they expect others to value it more in the future. That last case is reflexive: demand comes partly from expectations about future demand.

Most live token economies mix all three. The challenge is that reflexive demand is fastest to grow and fastest to disappear. Utility and rights usually accumulate more slowly because they depend on actual usage and institutional trust. So projects are often tempted to bootstrap adoption with narratives and incentives that create immediate price support, hoping utility catches up later. Sometimes that works. Often it creates a gap between the token’s market story and the system’s genuine economic base.

Stablecoins reveal this clearly because their whole purpose is price stability rather than upside. Their tokenomics is not about scarcity. It is about credible redemption and reserve quality. Research comparing stablecoins with money market funds shows that stablecoins can experience flight-to-safety dynamics during stress: flows move away from coins perceived as riskier toward those seen as safer. That means stablecoin tokenomics depends crucially on collateral composition, transparency, and confidence in the stabilization mechanism. Algorithmic stablecoins are especially revealing here. If the stabilizing mechanism depends on confidence in a related token and that confidence breaks, the system can fail very quickly, as the Terra episode demonstrated.

The broader lesson is that utility and credibility are inseparable. A token can promise governance rights, fee discounts, or redemption mechanics, but if users doubt those rights will function under stress, the token’s economic role weakens exactly when it is most needed.

What do token standards (ERC‑20/721/1155) guarantee and what do they leave unspecified?

| Standard | Transfer semantics | Supply controls | Best for | Notes |
|---|---|---|---|---|
| ERC-20 | Fungible transfers, allowances | External mint/burn logic | Currencies, fungible tokens | Widely supported |
| ERC-721 | Unique token transfers, safeTransfer | Project-defined mint/burn | Individual NFTs | Per-item identity |
| ERC-1155 | Batch safe transfers | Per-ID supply rules | Games, multi-assets | Gas-efficient batching |
| Cardano native | Ledger-level multi-asset transfers | Minting policy governs supply | Native tokens | No per-token contract required |

It helps to separate the token standard from the token economy built on top of it.

ERC-20, ERC-721, and ERC-1155 tell wallets and contracts how to interact with assets. ERC-20 defines methods for balances, transfers, approvals, and allowances. It also includes caveats that matter in practice: callers must handle false return values where present, and the approve/allowance pattern has a known race condition, which is why interfaces are advised to set allowances to zero before changing them. ERC-721 defines unique token identifiers and safe transfer rules so NFTs are not sent into contracts that cannot receive them. ERC-1155 allows many asset types in one contract and relies heavily on transfer events for off-chain accounting and supply reconstruction.
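The approve/allowance race condition is easier to see when it is played out step by step. The sketch below is an in-memory model and not a contract. It passes the caller explicitly, whereas real ERC-20 uses `msg.sender`, and `ToyERC20` and `transfer_from` are hypothetical names. It shows how a spender who can get a transaction ordered ahead of the owner's allowance change can spend both the old and the new allowance.

```python
class ToyERC20:
    """In-memory model of ERC-20 balances and allowances (not a real contract).

    Real ERC-20 uses msg.sender implicitly; here the caller is passed explicitly.
    """

    def __init__(self, balances):
        self.balances = dict(balances)
        self.allowances = {}

    def approve(self, owner, spender, amount):
        # Overwrites the old allowance without regard to what was already spent.
        self.allowances[(owner, spender)] = amount
        return True

    def transfer_from(self, spender, owner, to, amount):
        allowed = self.allowances.get((owner, spender), 0)
        if amount > allowed or amount > self.balances.get(owner, 0):
            return False  # callers must check this, per the standard's caveat
        self.allowances[(owner, spender)] = allowed - amount
        self.balances[owner] -= amount
        self.balances[to] = self.balances.get(to, 0) + amount
        return True


token = ToyERC20({"alice": 1_000})
token.approve("alice", "bob", 100)

# Alice broadcasts approve(bob, 50) intending to lower the limit.
# Bob sees it pending and gets his transfer_from ordered first:
token.transfer_from("bob", "alice", "bob", 100)
token.approve("alice", "bob", 50)              # Alice's tx lands afterwards
token.transfer_from("bob", "alice", "bob", 50)
print(token.balances["bob"])  # 150, more than either allowance Alice intended
```

The mitigation the standard suggests is to approve 0 first, confirm that transaction, check what was already spent, and only then approve the new amount. Bob can then spend at most what he had already been granted.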

These standards are important because interoperability is an economic force. If wallets, exchanges, and protocols can understand a token automatically, the token becomes cheaper to integrate and easier to trade. That lowers friction and can deepen liquidity. But standards do not answer whether the token should have inflation, whether it should convey governance power, whether it should be redeemable for reserves, or whether its emissions are sustainable.

A useful contrast comes from Cardano’s native multi-asset model. There, custom tokens do not require separate ERC-20-style smart contracts for basic ledger accounting. The ledger itself tracks multi-asset balances, and minting and burning are governed by policy rules. This reduces some classes of smart-contract implementation risk because transfer and accounting are handled natively rather than reimplemented by each token contract. But even here, tokenomics remains an overlay of economic design choices: the policy can be permissive or restrictive, supply can be one-time or ongoing, and the asset still needs a coherent reason to exist.
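Under some simplifications, the difference between a one-time policy and an ongoing one can be shown with a small evaluator for Cardano-style native scripts. The script types used below (`sig`, `all`, `any`, `atLeast`, `before`, `after`) follow the native-script vocabulary. The evaluator itself is a hypothetical sketch, not the ledger's rules. It treats a transaction as happening at a single slot, whereas the real ledger checks a validity interval. The key "hashes" are placeholder names rather than real hashes.

```python
def policy_allows(script, signers, slot):
    """Evaluate a simplified Cardano-style native script for a mint at `slot`.

    Simplification (assumed): the real ledger checks a transaction's validity
    interval, not a single slot, and key hashes are real hashes, not names.
    """
    kind = script["type"]
    if kind == "sig":
        return script["keyHash"] in signers
    if kind == "all":
        return all(policy_allows(s, signers, slot) for s in script["scripts"])
    if kind == "any":
        return any(policy_allows(s, signers, slot) for s in script["scripts"])
    if kind == "atLeast":
        hits = sum(policy_allows(s, signers, slot) for s in script["scripts"])
        return hits >= script["required"]
    if kind == "before":
        return slot < script["slot"]
    if kind == "after":
        return slot >= script["slot"]
    raise ValueError(f"unknown script type: {kind}")


# One-time issuance: the issuer can mint only until slot 1,000, then never again.
time_locked = {"type": "all", "scripts": [
    {"type": "sig", "keyHash": "issuer"},
    {"type": "before", "slot": 1_000},
]}
# Ongoing issuance: any 2 of 3 hypothetical keys can mint at any time.
ongoing = {"type": "atLeast", "required": 2, "scripts": [
    {"type": "sig", "keyHash": k} for k in ("k1", "k2", "k3")
]}

print(policy_allows(time_locked, {"issuer"}, slot=500))    # True
print(policy_allows(time_locked, {"issuer"}, slot=1_500))  # False
print(policy_allows(ongoing, {"k1", "k3"}, slot=10**9))    # True
```

The policy is attached to the asset permanently. In the first case the supply commitment therefore becomes fixed automatically once the deadline passes.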

So the standard answers how the token moves. Tokenomics answers why anyone cares that it moves.

How do governance and control choices affect a token's credibility and risk?

Every token design embeds some theory of control. Who can mint? Who can pause? Who can freeze? Who can change parameters? Who can list new assets, update oracles, or redirect reserves? These questions are economic because they determine what promises token holders can trust.

This is explicit on Solana, where a mint may have a freeze_authority that can freeze token accounts for that mint so their balances cannot move, and where the mint authority determines whether supply can expand. It is explicit on Cardano, where the minting policy permanently defines the conditions under which an asset can be minted or burned. It is also visible in DeFi protocols like Compound, where an admin or governance layer can set market parameters, choose supported assets, update price oracles, and withdraw reserves. These are not just operational settings. They change risk, dilution, and the distribution of power.

Governance tokens often present themselves as decentralization instruments, but the important question is what is actually governed and on what timeline. A token that votes on insignificant settings while a multisig controls the critical contracts does not decentralize much. Conversely, a system can be technically decentralized but economically concentrated if voting power is heavily skewed.
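One rough way to check whether voting power is heavily skewed is to count how few holders it takes to form a majority, a Nakamoto-coefficient-style measure. The sketch below ignores delegation, vote-escrow weighting, and tokens held by contracts, all of which a real analysis must handle. The distribution is hypothetical.

```python
def min_holders_for_majority(balances, threshold=0.5):
    """Smallest number of holders whose combined votes exceed `threshold` of the total.

    A Nakamoto-coefficient-style measure; it ignores delegation, vote-escrow
    weighting, and tokens held by contracts, which real analyses must handle.
    """
    total = sum(balances)
    running = 0
    for count, balance in enumerate(sorted(balances, reverse=True), start=1):
        running += balance
        if running > threshold * total:
            return count
    return len(balances)


# Hypothetical distribution: 3 large holders and 997 small ones.
balances = [200_000] * 3 + [400] * 997
print(len(balances), min_holders_for_majority(balances))  # 1000 3
```

A thousand holders sounds decentralized. Yet three of them can pass any simple-majority vote.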

This is also where custody design can matter. In systems that use threshold signing or multi-party computation for settlement, the control structure is distributed by cryptography rather than placed in one key holder. Cube Exchange, for example, uses a 2-of-3 threshold signature scheme for decentralized settlement: the user, Cube Exchange, and an independent Guardian Network each hold one key share, no full private key is ever assembled in one place, and any two shares are required to authorize a settlement. That is not tokenomics by itself, but it is closely related. The more credibly a system limits unilateral control over assets or settlement, the more believable its economic promises become.

How should tokenomics align participants' marginal actions with protocol health?

The most important design test is not whether a token sounds elegant. It is whether the next rational action for each participant helps the system rather than extracts from it.

If a protocol rewards liquidity providers with token emissions, the question is what kind of liquidity appears. Does the reward attract durable depth near useful trading ranges, or does it attract capital that leaves as soon as emissions decline? If a governance token distributes voting power, the question is whether informed participation is rewarded, or whether passive holders delegate without scrutiny while concentrated actors dominate. If an NFT project creates scarcity, the question is whether scarcity supports genuine use or community value, or merely creates thinly traded status games.

This is why purely descriptive tokenomics decks are often unhelpful. They may say 20% for team, 15% for treasury, 35% for community, 10% for ecosystem, and so on. But those numbers matter only through behavior. A treasury allocation is useful if governance can deploy it productively without capture. Community allocation helps if distribution reaches users who contribute to the network rather than short-term farmers. Team allocation aligns incentives if vesting is long enough and if the team’s future impact justifies the claim. The labels do not do the work; the mechanism does.

A practical way to think about this is to ask where the system’s marginal buyer and marginal seller come from. The marginal buyer is the next participant willing to absorb supply; the marginal seller is the next participant motivated to exit. Emissions, unlocks, fee capture, staking yield, governance demand, and application utility all push on those margins. If your tokenomics constantly creates more urgent sellers than credible buyers, price weakness is not a market misunderstanding. It is the mechanism expressing itself.
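The marginal-buyer and marginal-seller framing can be reduced to a crude flow calculation: the price at which one month's buy-side dollars absorb one month's newly sold tokens. This is a deliberately simplified steady-state model and an assumption, not a pricing method. It ignores existing holders, order-book depth, and any reflexive change in demand. All inputs are hypothetical.

```python
def clearing_price(usd_demand, emissions, emission_sell_share, unlocks, unlock_sell_share):
    """Price at which one month's buy-side dollars absorb one month's new sell-side tokens.

    A deliberately crude steady-state flow model (assumed): it ignores existing
    holders, order-book depth, and any reflexive change in demand.
    """
    tokens_sold = emissions * emission_sell_share + unlocks * unlock_sell_share
    if tokens_sold == 0:
        return float("inf")
    return usd_demand / tokens_sold


# Hypothetical month: $2.6M of fee- and usage-driven buying.
base = clearing_price(2.6e6, emissions=10e6, emission_sell_share=0.7,
                      unlocks=20e6, unlock_sell_share=0.3)
bigger_unlock = clearing_price(2.6e6, emissions=10e6, emission_sell_share=0.7,
                               unlocks=40e6, unlock_sell_share=0.3)
print(f"{base:.3f} -> {bigger_unlock:.3f}")  # 0.200 -> 0.137
```

Doubling the unlock, with buyers unchanged, lowers the clearing price by about a third. No one has to "misunderstand" the token for this to happen.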

Why run simulations (e.g., cadCAD) when designing tokenomics?

Tokenomics is hard partly because the effects are delayed and recursive. A reward policy changes user behavior, which changes liquidity, which changes price, which changes treasury runway, which changes governance incentives, which then changes future policy. Static spreadsheets miss much of this.

That is why simulation tools are increasingly important. Frameworks such as cadCAD are built to design, test, and validate complex systems through simulation, with support for Monte Carlo methods, A/B testing, parameter sweeps, and both agent-based and system-dynamics modeling. For tokenomics, that matters because many questions are not about a single expected outcome. They are about distributions and feedback loops. What happens if volatility doubles? What if user retention is lower than expected? What if emissions bring in capital that exits in correlated fashion? What if governance reacts slowly to a shock?

Simulation does not produce certainty. Its output is only as good as the assumptions in the model. But it forces the right discipline: make the mechanism explicit, define the agents, specify how state changes, and test what breaks when assumptions change. In tokenomics, that is often more valuable than a polished narrative about long-term alignment.
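To show the shape of that discipline without guessing at cadCAD's own API, the sketch below uses plain Python with the standard `random` and `statistics` modules. It mirrors the pattern of explicit state, a policy and state update per step, Monte Carlo runs over seeds, and a parameter sweep that asks what happens if volatility doubles. The mechanics are assumptions chosen for illustration. Monthly demand takes a log-normal shock, and a retention factor below 1 shrinks demand further whenever price is below its launch level, which creates a simple feedback loop.

```python
import random
import statistics


def simulate(months, emission, sell_share, base_demand, volatility, retention, seed):
    """One Monte Carlo path of a toy token economy; returns the final-month price.

    Assumed mechanics: monthly USD demand takes a random log-normal shock, and
    whenever price is below its launch level, `retention` < 1 shrinks demand
    further (users leave as price falls). Price clears sold emissions each month.
    """
    rng = random.Random(seed)
    tokens_sold = emission * sell_share
    launch_price = base_demand / tokens_sold
    demand, price = base_demand, launch_price
    for _ in range(months):
        demand *= rng.lognormvariate(0.0, volatility)  # exogenous shock
        if price < launch_price:
            demand *= retention                        # feedback loop
        price = demand / tokens_sold
    return price


def sweep(volatilities, runs=500, **params):
    """Parameter sweep: (5th percentile, median) of final price per volatility."""
    summary = {}
    for vol in volatilities:
        finals = [simulate(volatility=vol, seed=i, **params) for i in range(runs)]
        summary[vol] = (statistics.quantiles(finals, n=20)[0], statistics.median(finals))
    return summary


params = dict(months=24, emission=10e6, sell_share=0.7, base_demand=1.4e6, retention=0.97)
for vol, (p5, median) in sweep([0.1, 0.2], **params).items():
    print(f"volatility {vol}: 5th percentile ${p5:.3f}, median ${median:.3f}")
```

The output of interest is a distribution, especially its tail, rather than a single forecast. Every number in it is only as meaningful as the assumed mechanics.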

What common failure modes break tokenomic designs?

Some token designs fail because they never had real demand. Others fail because they had demand but no supply discipline. Others fail because governance was too centralized, reserves too opaque, incentives too extractive, or stabilization assumptions too fragile.

A particularly common breakdown is confusing price support with economic value creation. Buybacks, staking rewards, emissions, and fee rebates can affect market behavior, but if the underlying system is not generating durable usefulness, these tools often just rearrange claims among participants. Another breakdown is importing a design from one context into another without respecting the base architecture. A transfer model that is safe and familiar in one token standard may imply different integration risks elsewhere. Even the meaning of fixed supply differs across ERC-20 contracts, Solana mints, Cardano native assets, and Substrate asset pallets because the authority and accounting models are different.

There is also a deeper limitation. Tokenomics cannot solve a product problem by itself. If the application does not need coordination through a scarce digital asset, adding a token may only create friction and speculation. The strongest tokenomic systems usually attach the token to something the network already needs: security, liquidity, governance, settlement, or access. The weakest attach a token to the hope that markets will infer necessity later.

Conclusion

Tokenomics is the economic design of a tokenized system. Its job is to turn technical assets into incentive mechanisms that create useful behavior under real-world constraints.

The simplest way to remember it is this: a token standard defines the interface, but tokenomics defines the deal. It tells you who can create the asset, why anyone would hold it, what keeps supply and demand in balance, and whether the system still works when participants act in their own interest. If that deal is coherent, the token can help a network coordinate and endure. If it is not, the token usually reveals the problem faster than the project’s marketing does.

How do you evaluate a token before using or buying it?

Evaluate a token by checking its supply rules, governance controls, vesting schedule, utility, and market liquidity before you buy or approve it. On Cube Exchange you can inspect on‑chain contracts, view market depth, and execute a small test order to validate execution and slippage.

- Open the token contract on a block explorer (Etherscan, Solscan, Cardanoscan). Check for owner/mint functions, a mint_authority or minting policy, and whether transfer events are emitted.

- Review the tokenomics and vesting details in the whitepaper or linked contracts. Note treasury and team allocations, monthly unlock amounts, and calculate the monthly dilution rate.

- Inspect governance and control primitives: find multisigs, timelocks, pause/freeze functions, and any single‑key owners that can mint or change parameters.

- Check market liquidity on Cube: view order book depth and spread for the token/quote pair, then place a small limit order to measure slippage before sizing a full trade.

- If you must approve an ERC‑20 allowance, set allowance to 0 first and then to the exact spend amount; start with a small trade to confirm settlement and expected fees.
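Part of the contract and governance checks above can be scripted against a verified contract's ABI JSON, as shown on a block explorer. The sketch below is a heuristic under stated assumptions. The watch list of name fragments is hypothetical and not exhaustive. For an upgradeable proxy, the relevant ABI is usually the implementation's. An empty result does not prove that no privileged control exists, because access control can sit behind innocuously named functions.

```python
import json

# Heuristic watch list (assumed, not exhaustive): substrings that often mark
# functions only an owner, admin, or role holder can call.
PRIVILEGED_HINTS = ("mint", "pause", "freeze", "blacklist", "upgrade",
                    "owner", "setminter", "grantrole")


def flag_privileged(abi_json: str) -> list[str]:
    """Return state-changing function names in an ABI that match the watch list."""
    flagged = []
    for entry in json.loads(abi_json):
        if entry.get("type") != "function":
            continue
        if entry.get("stateMutability") in ("view", "pure"):
            continue  # read-only functions cannot change supply or permissions
        name = entry.get("name", "")
        if any(hint in name.lower() for hint in PRIVILEGED_HINTS):
            flagged.append(name)
    return sorted(flagged)


# Trimmed example ABI (real entries also carry inputs and outputs).
abi = json.dumps([
    {"type": "function", "name": "transfer", "stateMutability": "nonpayable"},
    {"type": "function", "name": "balanceOf", "stateMutability": "view"},
    {"type": "function", "name": "owner", "stateMutability": "view"},
    {"type": "function", "name": "mint", "stateMutability": "nonpayable"},
    {"type": "function", "name": "pause", "stateMutability": "nonpayable"},
    {"type": "function", "name": "transferOwnership", "stateMutability": "nonpayable"},
    {"type": "event", "name": "Transfer"},
])
print(flag_privileged(abi))  # ['mint', 'pause', 'transferOwnership']
```

Each flagged name is a prompt to find out who holds that power (a single key, a multisig, or a timelocked governor) before trusting the token's supply story.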

Frequently Asked Questions

Token standards (ERC‑20, ERC‑721, ERC‑1155, etc.) define how wallets and contracts read balances and move tokens - they specify transfer functions, events, and receiver hooks so tooling can interoperate - but they do not prescribe why a token should be scarce, who should hold it, or what economic behaviors it should produce; tokenomics is the mechanism design layered on top that answers those economic questions.

“Fixed supply” is a headline claim that can mean very different operational commitments: on EVM chains a cap can be enforced in the token contract (e.g., via an ERC20Capped pattern), on Solana supply remains mutable while a mint_authority exists and becomes immutable only if set to None, and on Cardano an asset’s minting policy permanently encodes when issuance is allowed - these are different trust assumptions about who can change supply and under what conditions.

Circulating supply is not the same as totalSupply because tokens can be locked in vesting, held in treasuries, used as collateral, or otherwise non‑sellable; vesting and timed unlocks change the rate at which promised tokens enter the sellable float, and that flow rate - not just the headline cap - often determines short‑term price pressure.

Control primitives (mint/freeze/upgrade authorities, admin multisigs, or governance tokens) are economic variables: who can pause, mint, freeze accounts, or change risk parameters determines which promises about dilution and recoverability are credible, and a design that centralizes critical controls while issuing governance tokens can leave holders with less real power than they expect.

Stabilizers are intentional mechanisms that dampen destructive feedback loops - examples include vesting/lockups, fee sinks or buybacks, dynamic interest or reward rates, redemption arbitrage, and collateral or reserve rules - they exist because emissions and speculative flows alone tend to create oscillations that destroy long‑term usefulness.

Token mechanisms that boost short‑term price (buybacks, rewards, emission‑driven farming) can create temporary demand, but if the underlying product does not generate ongoing utility or rights, that demand can vanish; tokenomics cannot substitute for a genuine product need for coordination, security, or settlement.

Because token economies are dynamic and recursive, simulation tools like cadCAD are useful to make mechanisms explicit, model agents and feedback loops, and test how outcomes change under shocks or parameter variation; simulation cannot prove how a system will behave in reality, but it helps identify fragile assumptions and failure modes before launch.

Some token standards (notably ERC‑1155 and many ERC‑20 implementations) rely on emitted events to let off‑chain indexers reconstruct balances and circulating supply, so implementations that omit or vary event emission make on‑chain supply accounting unreliable and can break downstream metrics and tooling.
