What is a BLS Signature?

Learn what BLS signatures are, how pairing-based verification works, why aggregation matters, and the key security trade-offs in practice.

Introduction

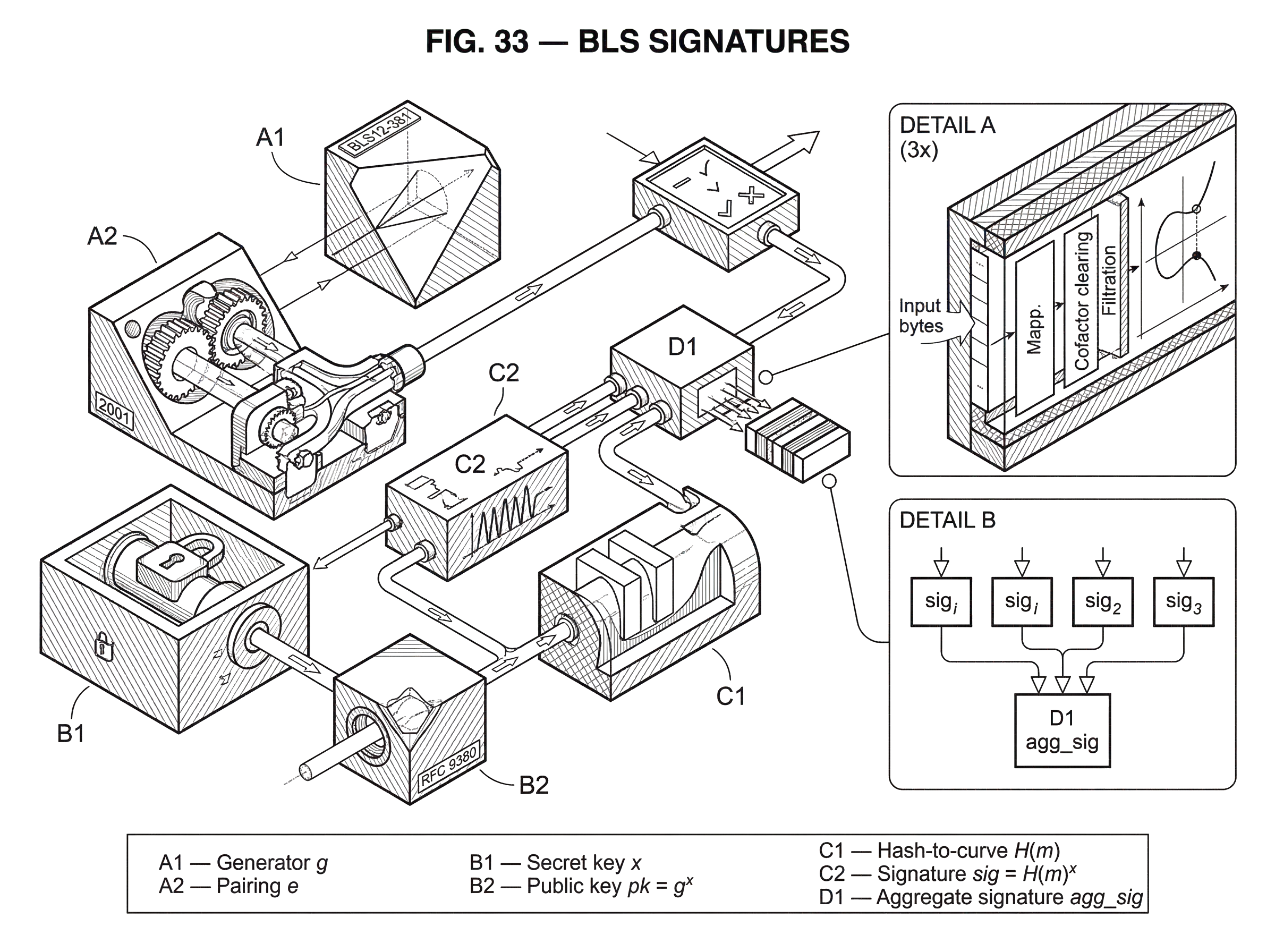

BLS signatures are a digital signature scheme built from bilinear pairings on elliptic curves. They are interesting because they reconcile two goals that often conflict in cryptography: very compact signatures and natural aggregation, where many signatures can be combined into one.

That combination solves a real systems problem. If thousands of participants each need to sign some message, ordinary signature schemes such as ECDSA or EdDSA give you thousands of separate signatures to transmit, store, and verify. BLS changes the shape of that cost. A single signer still gets a short signature, but more importantly, many signatures can often be compressed into one group element while preserving verifiability. That is why BLS appears in large-validator consensus systems, multisignature constructions, and on-chain pairing precompiles.

The idea can feel almost suspiciously simple once you see it: a BLS signature is just the message, mapped into a curve group, raised to the signer’s secret exponent. The reason this works is that a bilinear pairing lets the verifier check an exponent relation without learning the exponent itself. Everything important about BLS flows from that mechanism.

Why do blockchain systems use BLS signatures?

A digital signature scheme has a basic job: let someone with a secret key authenticate a message, and let everyone else verify that authentication using a public key. If that were the whole story, BLS would just be one more signature algorithm among many.

What pushed people toward BLS was a more specific problem: in many distributed systems, the bottleneck is not merely signing a single message. It is handling many signers at once. Consensus protocols, committee votes, threshold systems, and multisignature wallets all create a pattern where dozens, hundreds, or thousands of participants are attesting to related data. If each participant contributes a separately encoded signature, communication and storage grow linearly with the number of signers. Verification work often grows linearly too.

BLS attacks this by making signatures live in a group where multiplication or point addition corresponds to combining signers’ contributions. Because the verification equation is pairing-based and bilinear, the verifier can check a combined result against combined public information. This is the compression point: the algebra of the signature object matches the algebra of verification.

That is also why BLS is not just “a shorter signature.” The original Boneh–Lynn–Shacham paper introduced it as a short-signature construction, with signatures about half the size of comparable DSA-style signatures at the time, but the deeper reason it became important in blockchains is aggregation. Shortness matters; aggregation is what changed system design.

How do BLS signatures work (simple mental model)?

Here is the simplest way to think about BLS.

Start with a cyclic group with generator g, and let the secret key be a random scalar x. The public key is pk = g^x. So far this looks like many discrete-log-based systems.

Now take a message m and hash it, not to ordinary bits, but to a point in a group. Call that point H(m). The signature is then:

sig = H(m)^x

So signing is mechanically simple: hash the message into the group, then do one scalar multiplication by the secret key. The verifier’s problem is to check that sig really is H(m) raised to the same hidden exponent x that links pk to g.

Normally that would be difficult. Given only g, g^x, H(m), and H(m)^x, how do you test that the same exponent x was used in both places? BLS relies on a bilinear pairing e that turns this hidden-exponent consistency check into a public equality:

e(g, sig) = e(pk, H(m))

If sig = H(m)^x and pk = g^x, bilinearity makes both sides equal to e(g, H(m))^x.

This is the whole mechanism. Signing is “apply the secret exponent to the hashed message.” Verification is “use the pairing to confirm that the same exponent relation holds.”

How do bilinear pairings enable BLS verification?

A pairing is a map e : G1 x G2 -> GT between two elliptic-curve groups G1, G2 and a target multiplicative group GT. What matters is not the notation but the invariant: it is bilinear, meaning exponents can move through the pairing in a controlled way. In ordinary language, if you scale one input by a and the other by b, the output scales by ab.

Concretely, if P is in G1 and Q is in G2, then bilinearity gives:

e(aP, bQ) = e(P, Q)^(ab)
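Bilinearity is usually stated one argument at a time, as e(aP, Q) = e(P, Q)^a and e(P, bQ) = e(P, Q)^b. Chaining the two rules gives the combined identity:

```latex
\begin{aligned}
e(aP,\, bQ) &= e(P,\, bQ)^{a} && \text{linearity in the first argument} \\
            &= \left(e(P,\, Q)^{b}\right)^{a} && \text{linearity in the second argument} \\
            &= e(P,\, Q)^{ab}
\end{aligned}
```

Taking P = g and Q = H(m), the choice a = 1, b = x gives e(g, H(m)^x) = e(g, H(m))^x, and a = x, b = 1 gives e(g^x, H(m)) = e(g, H(m))^x. These are the two sides of the BLS verification equation.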

This property is what lets verification compare exponent relationships indirectly. The signer knows x. The verifier does not. But the verifier can still test whether two objects were both transformed by x, because the pairing exposes exactly enough algebraic structure to make that test possible.

This comes with a price. The original BLS paper already emphasized the trade-off: signing is cheap, verification is relatively expensive. Signing is just one scalar multiplication on the curve. Verification requires pairings, and pairings are much heavier than ordinary curve operations. So BLS is not a universal replacement for Schnorr, EdDSA, or ECDSA. It is attractive when its structural benefits (especially aggregation) outweigh that verification cost.

Example: signing and verifying a single BLS signature

Suppose Alice generates a secret scalar x. She publishes pk = g^x as her public key.

Later she wants to sign the message “approve block 125.” The first step is easy to miss but absolutely central: the message must be mapped into the correct prime-order subgroup on the curve. This is not “take a hash and reinterpret bytes as coordinates.” Modern standards use a carefully specified hash_to_curve procedure, including domain separation and cofactor clearing, because the security proof assumes the message lands in the right group in the right way.

So Alice computes H(m), where m is the message and H is a hash-to-curve function. Then she computes sig = H(m)^x. That point is the signature.

A verifier receives m, sig, and Alice’s public key pk. The verifier recomputes H(m) and checks whether e(g, sig) = e(pk, H(m)). If Alice signed correctly, then sig is H(m)^x, and the left side becomes e(g, H(m)^x) = e(g, H(m))^x. The right side becomes e(g^x, H(m)) = e(g, H(m))^x. The equality holds.
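Alice's sign-and-verify flow can be sketched in Python under a deliberately insecure toy model. Every group element g^k is stored as its exponent k modulo a prime `ORDER`, so the pairing e(g^a, g^b) = e(g, g)^(ab) reduces to multiplying exponents. The names `ORDER`, `hash_to_group`, `pairing`, `keygen`, `sign`, and `verify` are hypothetical. `hash_to_group` is a SHA-256 stand-in, not RFC 9380 hash_to_curve. In this model a public key literally reveals its secret key, so the sketch only illustrates the algebra of the verification equation.

```python
import hashlib
import secrets

# TOY MODEL, NOT SECURE: each group element g^k is stored as its exponent
# k mod ORDER. Multiplying elements adds exponents, and the pairing
# e(g^a, g^b) = e(g, g)^(a*b) is stored as the exponent a*b mod ORDER.
ORDER = 2**127 - 1  # a Mersenne prime standing in for the prime group order
G = 1               # the generator g (exponent 1)


def hash_to_group(msg: bytes, dst: bytes = b"TOY-BLS-V1") -> int:
    # Stand-in for RFC 9380 hash_to_curve; NOT the real construction.
    return int.from_bytes(hashlib.sha256(dst + msg).digest(), "big") % ORDER


def pairing(a: int, b: int) -> int:
    # Bilinear by construction: exponents multiply.
    return a * b % ORDER


def keygen():
    x = secrets.randbelow(ORDER - 1) + 1  # secret scalar in [1, ORDER - 1]
    return x, x * G % ORDER               # (sk, pk = g^x)


def sign(x: int, msg: bytes) -> int:
    return x * hash_to_group(msg) % ORDER  # sig = H(m)^x


def verify(pk: int, msg: bytes, sig: int) -> bool:
    # e(g, sig) == e(pk, H(m))
    return pairing(G, sig) == pairing(pk, hash_to_group(msg))


x, pk = keygen()
msg = b"approve block 125"
sig = sign(x, msg)
print(verify(pk, msg, sig))                    # True
print(verify(pk, b"approve block 126", sig))   # False
print(verify(pk, msg, (sig + 1) % ORDER))      # False
```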

If an attacker tries to forge a signature without knowing x, they would need to manufacture a point that satisfies that pairing equation anyway. The original security proof shows, in the random-oracle model, that any efficient forger could be turned into an algorithm that solves the Computational Diffie–Hellman problem in the relevant pairing-friendly group.

Why can BLS signatures be aggregated so easily?

| Mode | Compression | Verification cost | Security needs | Best for |
|---|---|---|---|---|
| Same-message aggregation | One aggregate signature | 2 pairings to verify | Anti-rogue-key defenses required | Large validator attestations |
| Different-message aggregation | One signature object overall | n + 1 pairings (aggregated) | Distinct messages (basic mode), or augmentation/PoP | Compress mixed independent signatures |

The reason BLS became so important in blockchain systems is that its signatures combine cleanly.

If several users sign the same message m, each signer i has public key pk_i = g^(x_i) and signature sig_i = H(m)^(x_i).

Multiply the signatures together (or, in elliptic-curve additive notation, add the points) and you get:

agg_sig = product(sig_i) = H(m)^(sum x_i)

If you also combine the public keys into:

agg_pk = product(pk_i) = g^(sum x_i)

then the same verification equation still works:

e(g, agg_sig) = e(agg_pk, H(m))

That is not an extra trick layered on top of BLS. It is a direct consequence of the same exponent-linearity that made single-signature verification work in the first place.
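Here is same-message aggregation and its two-pairing check under the same insecure toy model as before. Group elements are stored as exponents modulo a prime, so the group product of several elements is the sum of their exponents. All names are hypothetical illustrations, not a library API.

```python
import hashlib
import secrets

# TOY MODEL, NOT SECURE: group elements are stored as exponents mod ORDER,
# so the group product of several elements is the sum of their exponents.
ORDER = 2**127 - 1
G = 1


def hash_to_group(msg: bytes) -> int:
    # SHA-256 stand-in for hash_to_curve, not RFC 9380.
    return int.from_bytes(hashlib.sha256(b"TOY-BLS-V1" + msg).digest(), "big") % ORDER


def pairing(a: int, b: int) -> int:
    return a * b % ORDER


def group_product(elements) -> int:
    # prod(g^k_i) = g^(sum k_i), i.e. add exponents.
    return sum(elements) % ORDER


msg = b"approve block 125"
secret_keys = [secrets.randbelow(ORDER - 1) + 1 for _ in range(5)]
public_keys = [x * G % ORDER for x in secret_keys]
signatures = [x * hash_to_group(msg) % ORDER for x in secret_keys]

agg_sig = group_product(signatures)  # H(m)^(sum x_i)
agg_pk = group_product(public_keys)  # g^(sum x_i)

# One check with 2 pairings, however many signers contributed.
print(pairing(G, agg_sig) == pairing(agg_pk, hash_to_group(msg)))  # True
```

The aggregator never needs any secret key. It only combines already-published signatures, which is why aggregation can happen after the fact.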

This matters enormously in systems with large committees. Ethereum consensus uses BLS signatures on BLS12-381 so validators can produce attestations that are then aggregated. Instead of carrying thousands of separate signatures, the protocol can often carry one aggregated signature plus metadata indicating which validators participated. That is why BLS is described as making large validator sets practical.

The same pattern appears outside Ethereum. Cardano has specified BLS12-381 pairing support in Plutus builtins, and Ethereum specified dedicated precompiles for BLS12-381 operations (EIP-2537) so on-chain contracts can use pairing-friendly cryptography more efficiently. The attraction is not chain-specific: any system that needs many signers to authenticate related statements faces the same scaling pressure.

Same-message vs different-message BLS aggregation: what changes?

There is an important distinction here.

When many signers sign the same message, aggregation is especially simple. The verifier can combine public keys and check one aggregate signature against one hashed message. This is the cleanest case, and it is the one most often highlighted in validator-attestation settings.

BLS also supports aggregation across different messages. In that setting, the verifier checks a product of pairings involving each public key and each hashed message. Standards note that verifying n signatures separately would take 2n pairings, while aggregate verification can reduce this to n + 1 pairings. That is still linear in the number of distinct messages, but it saves work and, more importantly, compresses transmitted signature data down to one signature object.
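The sketch below shows different-message aggregate verification in the same toy exponent model, with a counter that confirms the n + 1 pairing count. The distinct-message check reflects the basic-mode rule. `aggregate_verify` is a hypothetical function, not the CFRG draft's API. In the toy, the product of pairing values in GT becomes a sum of exponents.

```python
import hashlib
import secrets

# TOY MODEL, NOT SECURE: group elements (and GT values) are stored as exponents
# mod ORDER, so a product of pairing values in GT becomes a sum of exponents.
ORDER = 2**127 - 1
G = 1
pairing_calls = 0


def hash_to_group(msg: bytes) -> int:
    return int.from_bytes(hashlib.sha256(b"TOY-BLS-V1" + msg).digest(), "big") % ORDER


def pairing(a: int, b: int) -> int:
    global pairing_calls
    pairing_calls += 1  # count pairings to compare verification costs
    return a * b % ORDER


def aggregate_verify(pks, msgs, agg_sig) -> bool:
    # Basic-mode rule: every message in the aggregate must be distinct.
    if len(pks) != len(msgs) or len(set(msgs)) != len(msgs):
        return False
    # e(g, agg_sig) == prod_i e(pk_i, H(m_i)): n pairings on the right, 1 on the left.
    rhs = sum(pairing(pk, hash_to_group(m)) for pk, m in zip(pks, msgs)) % ORDER
    return pairing(G, agg_sig) == rhs


msgs = [f"transaction {i}".encode() for i in range(4)]
sks = [secrets.randbelow(ORDER - 1) + 1 for _ in msgs]
pks = [x * G % ORDER for x in sks]
agg_sig = sum(x * hash_to_group(m) for x, m in zip(sks, msgs)) % ORDER  # prod H(m_i)^(x_i)

ok = aggregate_verify(pks, msgs, agg_sig)
print(ok)             # True
print(pairing_calls)  # 5, i.e. n + 1 for n = 4
```

Real implementations typically batch these pairings into one multi-pairing with a shared final exponentiation. This toy does not model that.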

So the deepest efficiency gain is not always “constant verification regardless of signer count.” It depends on whether messages are identical, whether proofs of possession are available, and which aggregation mode is being used. A common misunderstanding is to treat “BLS aggregates” as one single optimization with one single verification cost. In practice there are several modes, each with different security conditions.

What is a rogue-key attack against BLS aggregation?

| Defense | How it works | Interaction | Security guarantee | When to use |
|---|---|---|---|---|
| Basic | No extra binding | None | Unsafe for same-message | Distinct-message settings only |
| Message augmentation | Include PK in signed message | None | Prevents simple key substitution | Simple deployments, low friction |
| Proof of possession (PoP) | One-time proof signer controls key | One-time at registration | Strong practical security | Open validator sets, deposits |
| Key-weighting (Hpk) | Hash PK set into weights | None | Provably resistant (papers) | Non-interactive multisig variants |

Aggregation creates a new attack surface: rogue-key attacks.

The problem is subtle. If public keys can be chosen adversarially and then combined naively, an attacker may publish a crafted public key that algebraically cancels honest participants’ keys. Then the attacker can create what looks like a valid aggregate signature implicating others who never signed.

This is not a break of the pairing math. It is a consequence of letting key registration and aggregation interact without enough structure.
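The cancellation can be reproduced in the same insecure toy exponent model. The attacker picks its own secret a and publishes pk_rogue = g^a / pk_honest, computed only from the honest public key. The aggregate key is then g^a, so the attacker's lone signature H(m)^a passes a naive same-message check. `naive_fast_aggregate_verify` is a hypothetical name for an aggregate check that has no proof-of-possession requirement.

```python
import hashlib
import secrets

# TOY MODEL, NOT SECURE: group elements stored as exponents mod ORDER.
# (Here pk_honest even equals x_honest; the attack below never uses that fact,
# it only treats pk_honest as a public group element.)
ORDER = 2**127 - 1
G = 1


def hash_to_group(msg: bytes) -> int:
    return int.from_bytes(hashlib.sha256(b"TOY-BLS-V1" + msg).digest(), "big") % ORDER


def pairing(a: int, b: int) -> int:
    return a * b % ORDER


def naive_fast_aggregate_verify(pks, msg, agg_sig) -> bool:
    # Same-message check with NO proof-of-possession requirement: unsafe.
    agg_pk = sum(pks) % ORDER  # prod pk_i
    return pairing(G, agg_sig) == pairing(agg_pk, hash_to_group(msg))


# Honest signer publishes a key and never signs anything.
x_honest = secrets.randbelow(ORDER - 1) + 1
pk_honest = x_honest * G % ORDER

# Attacker chooses its own secret a and publishes pk_rogue = g^a / pk_honest
# (group division = exponent subtraction in this model).
a = secrets.randbelow(ORDER - 1) + 1
pk_rogue = (a * G - pk_honest) % ORDER

msg = b"transfer everything to the attacker"
forged = a * hash_to_group(msg) % ORDER  # H(m)^a, computed from a alone

print(naive_fast_aggregate_verify([pk_honest, pk_rogue], msg, forged))  # True
```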

Modern BLS standards defend against this in three main ways. The simplest, “basic” mode sidesteps the attack by allowing aggregation only when all signed messages are distinct. A second option is message augmentation, where each signer prepends its public key to the message before signing, so no two signers ever sign the same byte string. A third and very common option is proof of possession, where each participant first proves they control the secret key corresponding to their published public key. The CFRG BLS draft standardizes these modes and their APIs.

Ethereum’s validator onboarding uses the deposit signature as a proof of possession. That is not incidental plumbing. It is the mechanism that prevents rogue-key attacks from undermining aggregate verification in the validator set.
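Here is a minimal sketch of proof-of-possession registration in the same toy model. It follows the idea in the CFRG draft: the proof is a signature on the signer's own serialized public key under a separate domain tag. `POP_DST`, `pop_prove`, `pop_verify`, and `register` are hypothetical names. The toy exposes every exponent, so it cannot show that forging a proof is hard. It only shows that the rogue key's natural attempt, signing with a, fails the check.

```python
import hashlib
import secrets

# TOY MODEL, NOT SECURE: group elements stored as exponents mod ORDER.
ORDER = 2**127 - 1
G = 1
POP_DST = b"TOY-BLS-POP"  # hypothetical tag, distinct from any message-signing tag


def hash_to_group(data: bytes, dst: bytes) -> int:
    return int.from_bytes(hashlib.sha256(dst + data).digest(), "big") % ORDER


def pairing(a: int, b: int) -> int:
    return a * b % ORDER


def encode(pk: int) -> bytes:
    return pk.to_bytes(16, "big")  # ORDER < 2**128, so 16 bytes suffice


def pop_prove(x: int) -> int:
    pk = x * G % ORDER
    return x * hash_to_group(encode(pk), POP_DST) % ORDER  # sign own public key


def pop_verify(pk: int, proof: int) -> bool:
    return pairing(G, proof) == pairing(pk, hash_to_group(encode(pk), POP_DST))


registry = []


def register(pk: int, proof: int) -> bool:
    # Reject the identity and any key without a valid proof of possession.
    if pk % ORDER == 0 or not pop_verify(pk, proof):
        return False
    registry.append(pk)
    return True


x_honest = secrets.randbelow(ORDER - 1) + 1
pk_honest = x_honest * G % ORDER
print(register(pk_honest, pop_prove(x_honest)))  # True

# The rogue key's discrete log is a - x_honest, which a real attacker cannot
# compute; its natural attempt, signing with a, fails the check.
a = secrets.randbelow(ORDER - 1) + 1
pk_rogue = (a * G - pk_honest) % ORDER
fake_proof = a * hash_to_group(encode(pk_rogue), POP_DST) % ORDER
print(register(pk_rogue, fake_proof))  # False
```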

There is also deeper research here. Some papers show how to build modified BLS multisignatures that resist rogue-key attacks in the plain public-key model, without separate proofs of possession, by hashing the set of public keys into aggregation weights. But the baseline lesson is simpler: aggregation is only secure if key registration is handled correctly.
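The key-weighting idea can be sketched in the toy model. Each key is raised to a weight derived by hashing that key together with the whole key set, so a rogue key can no longer be chosen to cancel the others exactly. The `weights` function below is a hypothetical illustration of that idea, not the exact construction from any paper. The toy also carries none of the papers' security proofs.

```python
import hashlib
import secrets

# TOY MODEL, NOT SECURE: group elements stored as exponents mod ORDER.
ORDER = 2**127 - 1
G = 1


def hash_int(dst: bytes, data: bytes) -> int:
    return int.from_bytes(hashlib.sha256(dst + data).digest(), "big") % ORDER


def hash_to_group(msg: bytes) -> int:
    return hash_int(b"TOY-BLS-SIG", msg)


def pairing(a: int, b: int) -> int:
    return a * b % ORDER


def weights(pks):
    # Hypothetical weighting: t_i = H(pk_i || pk_1 || ... || pk_n).
    key_set = b"".join(pk.to_bytes(16, "big") for pk in pks)
    return [hash_int(b"TOY-BLS-WEIGHT", pk.to_bytes(16, "big") + key_set) for pk in pks]


def aggregate_pk(pks) -> int:
    return sum(t * pk for t, pk in zip(weights(pks), pks)) % ORDER  # prod pk_i^(t_i)


def aggregate_sig(pks, sigs) -> int:
    return sum(t * s for t, s in zip(weights(pks), sigs)) % ORDER  # prod sig_i^(t_i)


def verify(agg_pk: int, msg: bytes, agg_sig: int) -> bool:
    return pairing(G, agg_sig) == pairing(agg_pk, hash_to_group(msg))


msg = b"approve block 125"
sks = [secrets.randbelow(ORDER - 1) + 1 for _ in range(3)]
pks = [x * G % ORDER for x in sks]
sigs = [x * hash_to_group(msg) % ORDER for x in sks]
print(verify(aggregate_pk(pks), msg, aggregate_sig(pks, sigs)))  # True

# The rogue key from the cancellation trick is weighted too, so g^a no longer results.
a = secrets.randbelow(ORDER - 1) + 1
pk_rogue = (a * G - pks[0]) % ORDER
forged = a * hash_to_group(msg) % ORDER
print(verify(aggregate_pk([pks[0], pk_rogue]), msg, forged))  # False
```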

Why must BLS use standardized hash-to-curve and subgroup checks?

In textbook summaries, BLS is often written as if H(m) were an obvious object. In real implementations, this is where many mistakes happen.

The hash function in BLS must output a valid point in the correct subgroup. That requires a standardized process: hash arbitrary bytes to field elements, deterministically map those field elements to curve points, and clear the cofactor so the result lands in the prime-order subgroup. RFC 9380 defines standard hash_to_curve constructions for curves including BLS12-381 G1 and G2, together with domain separation requirements.

This part is fundamental, not cosmetic. If different implementations hash messages differently, they will produce different signatures for the same logical message and fail interoperability. Worse, incorrect subgroup handling can break security assumptions and produce invalid or dangerous inputs for pairings.
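To make the interoperability point concrete, the toy sketch below signs under one domain separation tag and verifies under another. Real ciphersuites pin the tag; Ethereum consensus, for example, uses `BLS_SIG_BLS12381G2_XMD:SHA-256_SSWU_RO_POP_`. The tags here are hypothetical. Simple prefixing is also a simplification of how RFC 9380 actually mixes the tag into the hash.

```python
import hashlib
import secrets

# TOY MODEL, NOT SECURE: group elements stored as exponents mod ORDER.
ORDER = 2**127 - 1
G = 1


def hash_to_group(msg: bytes, dst: bytes) -> int:
    # Prefixing the tag is a simplification; RFC 9380 mixes the DST in more carefully.
    return int.from_bytes(hashlib.sha256(dst + msg).digest(), "big") % ORDER


def pairing(a: int, b: int) -> int:
    return a * b % ORDER


def sign(x: int, msg: bytes, dst: bytes) -> int:
    return x * hash_to_group(msg, dst) % ORDER


def verify(pk: int, msg: bytes, sig: int, dst: bytes) -> bool:
    return pairing(G, sig) == pairing(pk, hash_to_group(msg, dst))


DST_A = b"TOY-IMPL-A-V1"  # hypothetical tag used by implementation A
DST_B = b"TOY-IMPL-B-V1"  # hypothetical tag used by implementation B

x = secrets.randbelow(ORDER - 1) + 1
pk = x * G % ORDER
msg = b"approve block 125"
sig_a = sign(x, msg, DST_A)

print(verify(pk, msg, sig_a, DST_A))  # True
print(verify(pk, msg, sig_a, DST_B))  # False: same key and message, different H(m)
```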

That is why standards insist on subgroup checks and key validation. The BLS draft explicitly requires public-key validation, and implementations that skip subgroup checks step outside the intended security model. EIP-2537 likewise requires subgroup checks for relevant operations. In practice, much of secure BLS engineering is not about the elegant equation; it is about faithfully preserving the algebraic assumptions that equation depends on.
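Subgroup membership and cofactor clearing can be illustrated without curve arithmetic by analogy with a tiny multiplicative group. The nonzero integers mod 23 form a group of order 22 = 2 × 11. It plays the role of a curve group with cofactor 2 and a prime-order subgroup of order 11. This is only an analogy: on BLS12-381 the membership check multiplies a point by the subgroup order. `validate_pubkey` is a hypothetical function that mirrors the spirit of the draft's key validation (reject the identity, require subgroup membership).

```python
# TOY ANALOGY, NOT BLS12-381: the nonzero integers mod 23 form a multiplicative
# group of order 22 = 2 * 11, standing in for a curve group with cofactor 2
# and a prime-order subgroup of order 11.
MODULUS = 23
SUBGROUP_ORDER = 11
COFACTOR = 2
IDENTITY = 1


def in_subgroup(y: int) -> bool:
    # Analogue of checking [r]P == identity for a curve point P.
    return pow(y, SUBGROUP_ORDER, MODULUS) == IDENTITY


def clear_cofactor(y: int) -> int:
    # Raising to the cofactor lands any group element in the prime-order subgroup.
    return pow(y, COFACTOR, MODULUS)


def validate_pubkey(y: int) -> bool:
    # Hypothetical check in the spirit of key validation:
    # well-formed, not the identity, and inside the prime-order subgroup.
    return 0 < y < MODULUS and y != IDENTITY and in_subgroup(y)


print(in_subgroup(5))                  # False: 5 generates the whole group of order 22
print(clear_cofactor(5))               # 2
print(in_subgroup(clear_cofactor(5)))  # True
print(validate_pubkey(1))              # False: the identity is rejected
print(validate_pubkey(5))              # False: outside the subgroup
```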

BLS12-381 variants: minimal-signature-size vs minimal-pubkey-size

| Variant | Signature group | Public-key group | Wire-size trade-off | Best when |
|---|---|---|---|---|
| Minimal-signature-size | Signatures in G1 | Pubkeys in G2 | Smaller signatures, larger keys | Many signatures transmitted |
| Minimal-pubkey-size | Signatures in G2 | Pubkeys in G1 | Smaller keys, larger signatures | Many public keys transmitted |

Today, BLS deployments usually mean BLS signatures instantiated over the BLS12-381 pairing-friendly curve. This is not the supersingular-curve instantiation used in the 2001 paper, but it follows the same pairing-based signature idea and is standardized for modern use.

Implementations often choose between two layout variants. In the minimal-signature-size variant, signatures live in G1 and public keys in G2. In the minimal-pubkey-size variant, public keys live in G1 and signatures in G2. The trade-off is straightforward: you decide which object you expect to transmit more often and place that object in the smaller-encoding group.
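The bandwidth trade-off is simple arithmetic. On BLS12-381, the standard compressed encodings are 48 bytes for a G1 point and 96 bytes for a G2 point. The traffic mix in the example (10,000 signatures, 1,000 public keys) is a hypothetical assumption.

```python
G1_BYTES = 48  # compressed BLS12-381 G1 point
G2_BYTES = 96  # compressed BLS12-381 G2 point

# variant -> (signature size, public-key size)
VARIANTS = {
    "minimal-signature-size": (G1_BYTES, G2_BYTES),
    "minimal-pubkey-size": (G2_BYTES, G1_BYTES),
}


def wire_bytes(variant: str, n_signatures: int, n_pubkeys: int) -> int:
    sig_size, pk_size = VARIANTS[variant]
    return n_signatures * sig_size + n_pubkeys * pk_size


# Hypothetical traffic mix: 10,000 signatures, 1,000 public keys.
print(wire_bytes("minimal-signature-size", 10_000, 1_000))  # 576000
print(wire_bytes("minimal-pubkey-size", 10_000, 1_000))     # 1008000
```

Flip the mix to 1,000 signatures and 10,000 keys and the ranking flips: 1,008,000 bytes versus 576,000 bytes.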

This is a convention of instantiation, not the heart of BLS. The heart is the exponent relation checked by the pairing. But the group assignment matters for bandwidth, interoperability, and implementation APIs, so standardized ciphersuites fix the choice explicitly.

What are the practical trade-offs and common failure modes of BLS?

BLS has a clean algebraic story, but deployments live or die on engineering details.

The first trade-off is computational. Verification needs pairings, and pairings are expensive. That can be acceptable when aggregation dramatically cuts bandwidth or total verification work, but it is a bad fit if you only need many cheap independent signatures with no aggregation advantage.

The second trade-off is dependence on pairing-friendly curves and their assumptions. The original BLS proof is in the random-oracle model and relies on hardness assumptions such as CDH or co-CDH in the relevant bilinear-group setting. That is a real security foundation, but it is not the same kind of proof one would get in the standard model. It also means parameter choice matters more than in casual summaries.

The third trade-off is implementation fragility. BLS systems must get serialization, subgroup membership, hashing, and domain separation right. A good illustration came from Filecoin Lotus, where acceptance of multiple valid encodings for the same logical BLS signature created a malleability-style problem at the byte level. The mathematics of BLS had not failed; the surrounding system had assumed a unique byte representation where the library did not enforce one. The result was that the same logical block could have multiple valid CIDs because the signature bytes differed. That is a reminder that in cryptographic systems, the object being signed and the bytes being serialized are both part of the security boundary.
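The general failure mode can be shown with a toy encoding. This illustrates the bug class and does not reproduce the Lotus issue. A lenient decoder that reduces its input modulo the group order accepts two different byte strings for the same logical value, so any identifier computed from the raw bytes diverges. A strict decoder that rejects non-canonical inputs closes the gap. The modulus, width, and function names are hypothetical.

```python
import hashlib

ORDER = 2**127 - 1  # toy modulus; real BLS signatures are encoded curve points
WIDTH = 16          # bytes per encoded value (ORDER < 2**128)


def decode_lenient(data: bytes) -> int:
    # BUG: reducing mod ORDER lets several byte strings decode to one value.
    return int.from_bytes(data, "big") % ORDER


def decode_strict(data: bytes) -> int:
    if len(data) != WIDTH:
        raise ValueError("wrong length")
    value = int.from_bytes(data, "big")
    if value >= ORDER:
        raise ValueError("non-canonical encoding")
    return value


sig = 123456789
canonical = sig.to_bytes(WIDTH, "big")
alias = (sig + ORDER).to_bytes(WIDTH, "big")  # same logical value, different bytes

print(decode_lenient(canonical) == decode_lenient(alias))  # True
# An identifier hashed from the raw bytes (like a content ID) now diverges.
print(hashlib.sha256(canonical).digest() == hashlib.sha256(alias).digest())  # False
try:
    decode_strict(alias)
except ValueError as err:
    print("rejected:", err)  # rejected: non-canonical encoding
```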

How does BLS support threshold and multi-party signing?

BLS also fits naturally with threshold and multi-party settings because signatures combine algebraically. If secret material is split among participants, they can often produce partial results that combine into a valid signature without reconstructing one monolithic secret key in one place.

A useful contrast is Cube Exchange’s decentralized settlement design, which uses a 2-of-3 threshold signature scheme: the user, Cube Exchange, and an independent Guardian Network each hold one key share; no full private key is ever assembled in one place, and any two shares are required to authorize a settlement. That is an example of the broader threshold-signing idea. BLS is not the only way to build threshold signatures, but its linear structure is one reason pairing-based signatures often appear in discussions of threshold and aggregated authentication.

The key conceptual connection is this: when a signature equation is linear in the secret exponent, distributed parties can often contribute pieces of that exponent relation separately and then combine them. The details depend on the protocol, but the algebra is what makes such constructions plausible.
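Here is a minimal 2-of-3 threshold sketch in the same insecure toy exponent model. The secret exponent is Shamir-shared, and each party signs with its share. Any two partial signatures are then combined with Lagrange coefficients “in the exponent” to reconstruct H(m)^x. This is generic threshold BLS, not a description of Cube Exchange's scheme. A dealer creates the shares only to keep the sketch short; a real system would use distributed key generation so the full key never exists in one place.

```python
import hashlib
import secrets

# TOY MODEL, NOT SECURE: group elements stored as exponents mod ORDER.
ORDER = 2**127 - 1
G = 1


def hash_to_group(msg: bytes) -> int:
    return int.from_bytes(hashlib.sha256(b"TOY-BLS-V1" + msg).digest(), "big") % ORDER


def pairing(a: int, b: int) -> int:
    return a * b % ORDER


def lagrange_at_zero(i: int, indices) -> int:
    # lambda_i = prod_{j != i} j / (j - i) mod ORDER, so that sum lambda_i f(i) = f(0).
    num, den = 1, 1
    for j in indices:
        if j != i:
            num = num * j % ORDER
            den = den * (j - i) % ORDER
    return num * pow(den, -1, ORDER) % ORDER


# Dealer-based 2-of-3 Shamir sharing of x via f(t) = x + c*t (sketch only).
x = secrets.randbelow(ORDER - 1) + 1
c = secrets.randbelow(ORDER)
shares = {i: (x + c * i) % ORDER for i in (1, 2, 3)}
pk = x * G % ORDER

msg = b"approve settlement 42"
# Each party signs with its own share: H(m)^(f(i)).
partials = {i: s * hash_to_group(msg) % ORDER for i, s in shares.items()}


def combine(subset) -> int:
    # prod partial_i^(lambda_i) = H(m)^(f(0)) = H(m)^x, computed without x.
    return sum(lagrange_at_zero(i, subset) * partials[i] for i in subset) % ORDER


for subset in [(1, 2), (1, 3), (2, 3)]:
    sig = combine(subset)
    print(subset, pairing(G, sig) == pairing(pk, hash_to_group(msg)))  # True
```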

BLS vs Schnorr/ECDSA: when should you choose each?

Compared with ECDSA, BLS offers native aggregation, which ECDSA lacks, and in the minimal-signature-size variant a shorter signature. The cost is slower verification and more specialized curve machinery. Compared with Schnorr-based schemes, the contrast is subtler. Schnorr has elegant multisignature constructions, but many of them require interaction among signers at signing time. BLS signatures can often be aggregated publicly and after the fact, because the signature objects themselves compose.

That is a powerful distinction in open networks. A collector can gather independently produced signatures and combine them later, without having coordinated the signers in an interactive signing round. In blockchain consensus, where validators independently broadcast attestations and aggregators later compress them, that property is exactly the right shape.

Conclusion

A BLS signature is, at bottom, a very simple object: the signer applies their secret exponent to a curve point derived from the message. What makes that simple idea useful is the pairing, which lets everyone else verify the exponent relation publicly.

From that mechanism, two consequences follow. First, signatures can be compact. Second, and more important for modern systems, signatures compose naturally, so many signers can often be represented by one aggregate signature. That is why BLS matters.

The catch is that the surrounding details are not optional. Secure BLS depends on correct hash-to-curve, subgroup checks, key validation, anti-rogue-key defenses, and canonical serialization. If you remember one thing tomorrow, remember this: BLS is powerful because its algebra lines up perfectly with aggregation, and fragile when implementations fail to preserve that algebra at the edges.

What should you understand before using this part of crypto infrastructure?

BLS Signatures should change what you verify before you fund, transfer, or trade related assets on Cube Exchange. Treat it as an operational check on network behavior, compatibility, or execution timing rather than a purely academic detail.

- Identify which chain, asset, or protocol on Cube is actually affected by this concept.

- Write down the one network rule BLS Signatures changes for you, such as compatibility, confirmation timing, or trust assumptions.

- Verify the asset, network, and transfer or execution conditions before you fund the account or move funds.

- Once those checks are clear, place the trade or continue the transfer with that constraint in mind.

Frequently Asked Questions

A bilinear pairing e lets exponents move multiplicatively across its inputs, so the verifier checks e(g, sig) = e(pk, H(m)); if sig = H(m)^x and pk = g^x both sides equal e(g, H(m))^x, proving the same hidden exponent was used without revealing x.

Because signing is one scalar multiplication on the curve but verification requires one or more expensive pairing computations, BLS makes signing cheap but verification heavier; pairings are significantly costlier than ordinary curve ops, so BLS is worth it mainly when aggregation or bandwidth savings offset that verification cost.

A rogue-key attack lets a maliciously chosen public key algebraically cancel honest keys in an aggregate, allowing forged-looking aggregates; defenses standardized in practice include requiring a proof of possession (PoP), message augmentation, or hashing public keys into aggregation weights, and Ethereum uses the deposit signature as PoP for validator keys.

Hash-to-curve and subgroup checks are essential because the security proof and pairing math assume messages map deterministically into the correct prime-order subgroup; incorrect or inconsistent mapping, missing cofactor clearing, or skipped subgroup checks break interoperability and can enable forgeries or malleability problems.

BLS can aggregate signatures on different messages, but the verification savings are smaller. Verifying n distinct-message signatures naively takes 2n pairings, while aggregate verification can reduce that to about n + 1 pairings. Aggregation still compresses the transmitted data to one signature, but verification cost remains roughly linear in the number of distinct messages.

Deployments typically pick one of two instantiations: minimal-signature-size (signatures in G1, smaller) or minimal-pubkey-size (public keys in G1, smaller); the choice is an instantiation convention that trades bandwidth and encoding size depending on which object will be transmitted more often.

No. Pairing-based BLS as deployed today is not considered quantum-safe, because its security relies on discrete-log/CDH-type assumptions that a large-scale quantum computer running Shor's algorithm would break. Standards and EIPs explicitly note that BLS is not quantum-safe and that post-quantum alternatives would require different constructions.

Real incidents typically arise from engineering edges: for example, Filecoin Lotus accepted multiple valid byte encodings for the same logical BLS signature (a deserialization/serialization mismatch), which produced malleability-like problems even though the math was correct - showing canonical serialization and normalization are security-critical.

BLS’s linear exponent structure makes it natural for threshold signing. Parties holding shares can produce partial signature contributions that combine into a valid signature without reconstructing the full private key, so many threshold or multi-share schemes leverage BLS-like algebra to avoid a single point of key compromise.

BLS is a poor fit when you need many independent, non-aggregatable signatures because its verification cost (pairings) is high; compared with Schnorr, BLS supports public, post‑hoc aggregation without interactive signing rounds, while Schnorr multisignatures typically require interaction at sign time, so choose BLS when non-interactive, late aggregation or extreme bandwidth compression matters.

Related reading