What is a Cryptographic Hash Function?

Learn what cryptographic hash functions are, how they work, and why collision and preimage resistance make them essential in modern cryptography.

Introduction

Cryptographic hash functions are algorithms that turn data of arbitrary length into a fixed-size output called a hash or message digest. That sounds simple enough that it can seem almost trivial. The interesting part is not that they summarize data, but that they do so in a way that creates a very particular asymmetry: hashing should be fast, while reversing the hash or finding two different inputs with the same output should be computationally infeasible.

That asymmetry solves a real problem. Computers constantly need short handles for large objects: a file, a transaction, a public key, a block of data, a password candidate, a software package. But if the short handle is easy to fake, it is useless for security. A cryptographic hash function is the tool that makes a short fingerprint meaningful.

This is why hashes appear everywhere in cryptography and distributed systems. A blockchain links blocks by hash so that changing old data breaks every later reference. A Merkle tree proves that one item belongs to a large set by hashing along a path instead of revealing the whole set. Digital signatures usually sign a hash of the message rather than the entire message. Password systems often store a derived hash rather than the password itself. Key-derivation and message-authentication constructions also build on hashes, though usually not by using a bare hash directly.

The central idea to keep in mind is this: a cryptographic hash function is not valuable because it makes data shorter; it is valuable because it makes tampering detectable without preserving an easy path back to the original input.

How does a hash map arbitrary inputs to a fixed-size digest?

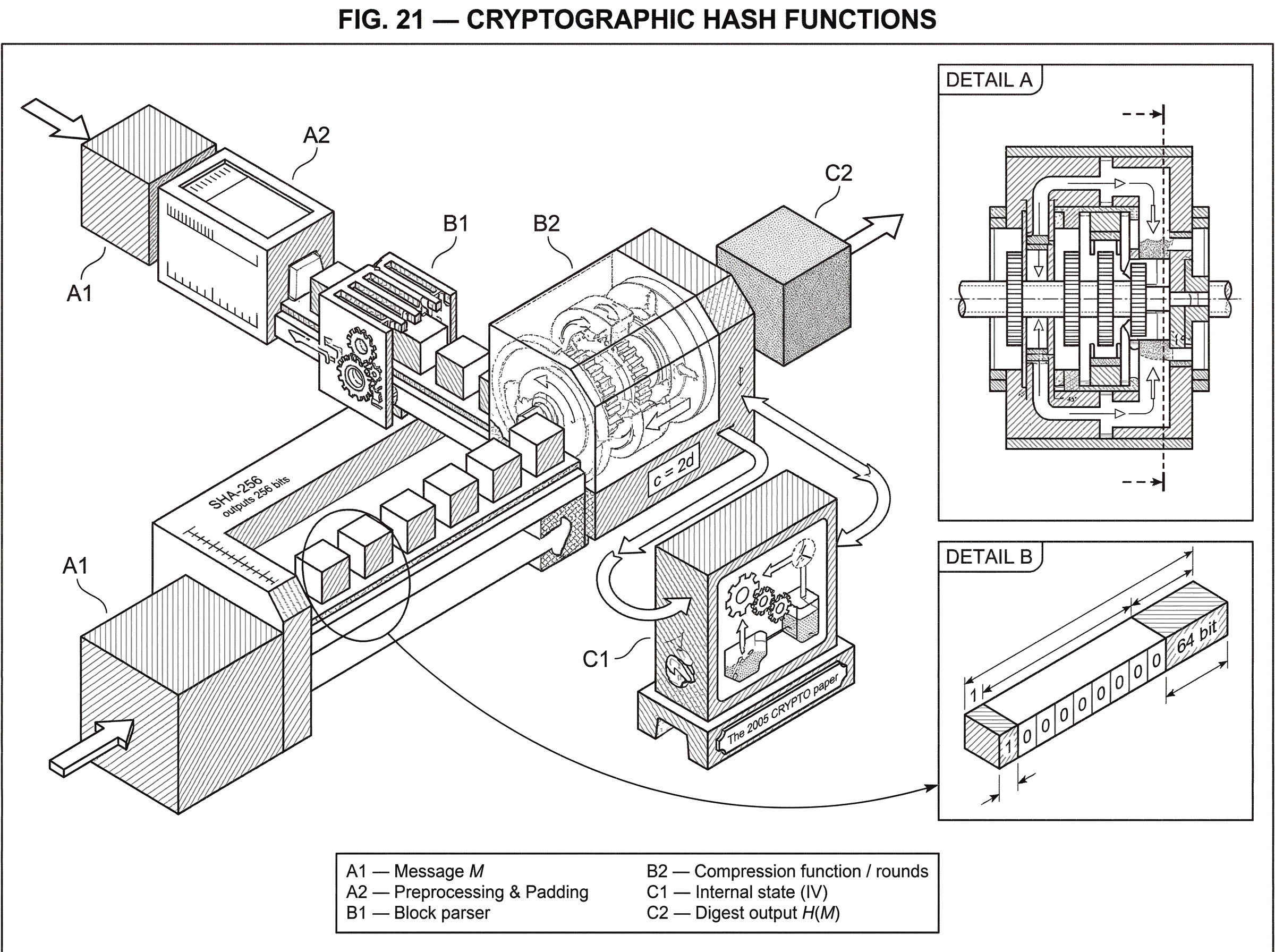

A hash function takes an input M (often called the message) and computes a fixed-length output H(M). For a standard hash, the output length is predetermined by the algorithm. NIST describes hash functions as producing a condensed representation of an arbitrary-length input with a predetermined output length, and calls the result a message digest. In the SHA-2 family, for example, SHA-256 outputs 256 bits and SHA-512 outputs 512 bits. In the SHA-3 family, SHA3-256 outputs 256 bits and SHA3-512 outputs 512 bits.
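You can see the fixed-size property with Python's standard `hashlib` module. This sketch hashes inputs of very different lengths and collects the digest size in bits for each algorithm. The sample inputs are arbitrary.

```python
import hashlib

# Inputs of very different lengths: empty, short, and 1 MiB of zero bytes.
inputs = [b"", b"hello", bytes(1024 * 1024)]

for name in ("sha256", "sha512", "sha3_256", "sha3_512"):
    # The output length depends only on the algorithm, never on the input length.
    sizes = {len(hashlib.new(name, data).digest()) * 8 for data in inputs}
    print(f"{name}: digest sizes in bits = {sorted(sizes)}")
```

Each algorithm reports a single size: 256 bits for `sha256` and `sha3_256`, and 512 bits for `sha512` and `sha3_512`.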

The first thing to notice is a mathematical inevitability: because infinitely many possible inputs are mapped into a finite set of outputs, collisions must exist (the pigeonhole principle). Two different messages somewhere in the universe will hash to the same digest. So collision resistance can never mean “collisions do not exist.” It means something weaker and more realistic: given the algorithm and realistic computational resources, it should be infeasible to find such a pair.

That distinction is where many first-time explanations go wrong. A hash is not like a checksum in the ordinary engineering sense, where the main goal is to detect random noise. Checksums are designed against accidents. Cryptographic hashes are designed against intelligent adversaries who get to choose inputs strategically.
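To make the contrast concrete, this sketch uses CRC-32 (Python's `zlib.crc32`) as a stand-in for an ordinary checksum. CRC-32 is affine over XOR. For equal-length inputs a, b, c, crc(a ⊕ b ⊕ c) = crc(a) ⊕ crc(b) ⊕ crc(c). An adversary can use that algebra to predict or steer checksums without any search. SHA-256 shows no such relationship. The three messages are arbitrary examples.

```python
import hashlib
import zlib

def xor_bytes(*blocks: bytes) -> bytes:
    """XOR equal-length byte strings together."""
    out = bytearray(len(blocks[0]))
    for block in blocks:
        for i, byte in enumerate(block):
            out[i] ^= byte
    return bytes(out)

# Three arbitrary messages of the same length (the identity needs equal lengths).
a = b"pay alice 100 units"
b = b"pay bobby 900 units"
c = b"memo: rent, march!!"
assert len(a) == len(b) == len(c)

# CRC-32 is affine over XOR, so one checksum is predictable from the others.
crc_lhs = zlib.crc32(xor_bytes(a, b, c))
crc_rhs = zlib.crc32(a) ^ zlib.crc32(b) ^ zlib.crc32(c)
print("CRC-32 relation holds:", crc_lhs == crc_rhs)  # True

def sha256_int(data: bytes) -> int:
    return int.from_bytes(hashlib.sha256(data).digest(), "big")

# The same relation fails for SHA-256: there is no exploitable linear structure.
sha_lhs = sha256_int(xor_bytes(a, b, c))
sha_rhs = sha256_int(a) ^ sha256_int(b) ^ sha256_int(c)
print("SHA-256 relation holds:", sha_lhs == sha_rhs)  # False
```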

A useful mental model is a machine that takes any document, file, or byte string and stamps it with a compact fingerprint. If you change even a small part of the input, the fingerprint should change in a way that looks unpredictable. This is often described as an avalanche effect: a tiny input change should propagate widely through the internal state so the output bears no simple local relationship to the original digest. The analogy helps explain sensitivity to input changes, but it fails if taken too literally: a real fingerprint is meant to identify a single person uniquely, while a hash digest is not unique in principle and relies on computational hardness rather than perfect uniqueness.
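Here is a minimal sketch of the avalanche effect with SHA-256. It flips a single bit of the input and counts how many of the 256 output bits change. For a well-designed hash, roughly half should flip, though the exact count depends on the input. The message is an arbitrary example.

```python
import hashlib

def bit_difference(x: bytes, y: bytes) -> int:
    """Count the bit positions in which two equal-length byte strings differ."""
    return sum(bin(p ^ q).count("1") for p, q in zip(x, y))

message = bytearray(b"The quick brown fox jumps over the lazy dog")
original = hashlib.sha256(message).digest()

message[0] ^= 0x01  # flip one input bit ('T' becomes 'U')
flipped = hashlib.sha256(message).digest()

changed = bit_difference(original, flipped)
print(f"{changed} of 256 output bits changed")  # typically close to 128
```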

What are preimage, second-preimage, and collision resistance?

| Property | Attacker goal | Attacker is given | Generic cost (n-bit digest) | Consequence if broken |
|---|---|---|---|---|
| Preimage resistance | Find any message with digest y | Digest y | ~2^n | May disclose the hashed message |
| Second-preimage resistance | Find a different M2 with H(M2) = H(M1) | Specific message M1 | ~2^n | Breaks integrity of that message |
| Collision resistance | Find any colliding pair | Nothing (attacker chooses both) | ~2^(n/2) | High; undermines signatures |

For a cryptographic hash function to be useful, three related resistance properties matter.

Preimage resistance means that given a digest y, it should be computationally infeasible to find a message M such that H(M) = y. NIST’s Secure Hash Standard states this as the infeasibility of finding a message corresponding to a given message digest. This is the “one-way” aspect people usually have in mind.

Second-preimage resistance means that given a specific message M1, it should be infeasible to find a different message M2 such that H(M1) = H(M2). The attacker is forced to collide with a particular existing message.

Collision resistance means it should be infeasible to find any two distinct messages M1 and M2 with the same digest. NIST highlights this as a core security goal for secure hash algorithms.

These properties are related, but they are not interchangeable. Collision resistance is often the most delicate in practice because of the birthday phenomenon. If an n-bit hash behaves like a random function, then a brute-force collision search is expected around 2^(n/2) work, not 2^n. That is why digest length matters so much. A 256-bit hash gives roughly 128-bit collision security under that heuristic, while preimage resistance remains closer to 256-bit brute-force cost.
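You can watch the birthday gap on a deliberately tiny hash. This sketch uses a toy 16-bit hash, taken as the top 16 bits of SHA-256. The truncation is only a way to get something small enough to attack on a laptop; it is not a standard construction. A collision search that remembers every digest usually succeeds after a few hundred hashes, on the order of 2^(n/2). A preimage search for one fixed target typically needs tens of thousands, on the order of 2^n. Exact counts vary with the message-naming scheme.

```python
import hashlib
from itertools import count

def toy_hash(data: bytes, bits: int) -> int:
    """A toy n-bit hash: the top `bits` bits of SHA-256 (illustration only)."""
    full = int.from_bytes(hashlib.sha256(data).digest(), "big")
    return full >> (256 - bits)

def find_collision(bits: int):
    """Birthday search: store every digest until one repeats."""
    seen = {}
    for i in count(1):
        msg = f"msg-{i}".encode()
        digest = toy_hash(msg, bits)
        if digest in seen:
            return seen[digest], msg, i  # two distinct messages, hashes tried
        seen[digest] = msg

def find_preimage(target: int, bits: int):
    """Brute-force preimage search against one fixed digest."""
    for i in count(1):
        msg = f"guess-{i}".encode()
        if toy_hash(msg, bits) == target:
            return msg, i

BITS = 16
m1, m2, collision_work = find_collision(BITS)
target = toy_hash(b"a fixed target message", BITS)
preimage, preimage_work = find_preimage(target, BITS)

print(f"collision after {collision_work} hashes (2^{BITS // 2} = {2 ** (BITS // 2)})")
print(f"preimage after {preimage_work} hashes (2^{BITS} = {2 ** BITS})")
```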

This also explains why older hashes became unsafe for some uses before others. A function can remain hard to invert in the preimage sense and yet become unsafe for digital signatures because attackers learn how to manufacture collisions. That difference explains why collision attacks on MD5 and SHA-1 were so important: signatures and certificates rely on the inability to create two different messages with the same digest.

How do modern hash functions process input internally (blocks, IVs, compression)?

Although different hash families use different internal designs, most practical cryptographic hashes follow a common pattern: they process the input in blocks and update an internal state repeatedly until a final digest is produced.

NIST describes SHA-1 and SHA-2 as having two broad stages: preprocessing and hash computation. Preprocessing includes padding the message, parsing it into fixed-size blocks, and setting initial values. Hash computation then iterates over those blocks, using a compression procedure and internal mixing operations to update the state until the final digest is output.

That high-level pattern is easier to understand with a concrete narrative. Imagine hashing a long document with SHA-256. The algorithm does not look at the whole document at once. It first appends a 1 bit, then enough 0 bits, and finally a fixed-size encoding of the original message length so that the padded message fits neatly into 512-bit blocks. This padding rule is specified in FIPS 180-4 because it is part of what makes implementations interoperable: two correct implementations must split the same message into the same blocks.
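The SHA-256 padding rule is short enough to write out. This sketch assumes the message is a whole number of bytes; FIPS 180-4 also covers arbitrary bit lengths. It shows why a 56-byte message spills into a second block: after the 0x80 marker byte, the 8-byte length field no longer fits in the first 64-byte block.

```python
def sha256_pad(message: bytes) -> bytes:
    """Pad a byte-aligned message as FIPS 180-4 specifies for SHA-256."""
    bit_length = 8 * len(message)
    padded = message + b"\x80"                     # a single 1 bit, then seven 0 bits
    padded += b"\x00" * ((56 - len(padded)) % 64)  # zeros until length is 56 mod 64 bytes
    padded += bit_length.to_bytes(8, "big")        # 64-bit big-endian message length
    return padded

for size in (0, 3, 55, 56, 64):
    padded = sha256_pad(b"x" * size)
    print(f"{size:>2}-byte message -> {len(padded)} bytes = {len(padded) // 64} block(s)")
```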

Now the algorithm starts with a fixed initial internal state, sometimes called the IV, for initial value. It reads the first 512-bit block, expands that block into a larger schedule of working words, and runs a sequence of rounds that combine rotations, shifts, Boolean functions, constants, and modular additions. Those rounds are designed so that each bit of the block and of the current state influences many later bits. The updated state then becomes the input state for the next block. By the end, after the last block has been absorbed, the final state is serialized as the digest.
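For readers who want to see the whole loop, here is a compact, unoptimized SHA-256 written from the FIPS 180-4 description and checked against `hashlib`. FIPS 180-4 defines the round constants and the initial hash value from fractional parts of cube and square roots of small primes. This sketch computes them that way instead of listing them. It is a teaching sketch only: it is slow, not constant-time, and not meant for deployment.

```python
import hashlib
import math

MASK = 0xFFFFFFFF

def first_primes(count):
    primes, candidate = [], 2
    while len(primes) < count:
        if all(candidate % p for p in primes if p * p <= candidate):
            primes.append(candidate)
        candidate += 1
    return primes

def icbrt(n: int) -> int:
    """Floor of the integer cube root, by binary search."""
    lo, hi = 0, 1 << ((n.bit_length() + 2) // 3)
    while lo < hi:
        mid = (lo + hi + 1) // 2
        if mid ** 3 <= n:
            lo = mid
        else:
            hi = mid - 1
    return lo

PRIMES = first_primes(64)
K = [icbrt(p << 96) & MASK for p in PRIMES]             # 32 fractional bits of cbrt(p)
H0 = [math.isqrt(p << 64) & MASK for p in PRIMES[:8]]   # 32 fractional bits of sqrt(p): the IV

def rotr(x: int, n: int) -> int:
    return ((x >> n) | (x << (32 - n))) & MASK

def pad(message: bytes) -> bytes:
    bit_length = 8 * len(message)
    padded = message + b"\x80"
    padded += b"\x00" * ((56 - len(padded)) % 64)
    return padded + bit_length.to_bytes(8, "big")

def sha256(message: bytes) -> bytes:
    state = list(H0)
    padded = pad(message)
    for offset in range(0, len(padded), 64):
        block = padded[offset:offset + 64]
        # Message schedule: expand 16 words into 64 working words.
        w = [int.from_bytes(block[i:i + 4], "big") for i in range(0, 64, 4)]
        for t in range(16, 64):
            s0 = rotr(w[t - 15], 7) ^ rotr(w[t - 15], 18) ^ (w[t - 15] >> 3)
            s1 = rotr(w[t - 2], 17) ^ rotr(w[t - 2], 19) ^ (w[t - 2] >> 10)
            w.append((w[t - 16] + s0 + w[t - 7] + s1) & MASK)
        a, b, c, d, e, f, g, h = state
        for t in range(64):  # 64 rounds of rotations, Boolean functions, and additions
            big_s1 = rotr(e, 6) ^ rotr(e, 11) ^ rotr(e, 25)
            ch = (e & f) ^ (~e & g)
            t1 = (h + big_s1 + ch + K[t] + w[t]) & MASK
            big_s0 = rotr(a, 2) ^ rotr(a, 13) ^ rotr(a, 22)
            maj = (a & b) ^ (a & c) ^ (b & c)
            t2 = (big_s0 + maj) & MASK
            h, g, f, e, d, c, b, a = g, f, e, (d + t1) & MASK, c, b, a, (t1 + t2) & MASK
        # The updated state becomes the input state for the next block.
        state = [(x + y) & MASK for x, y in zip(state, (a, b, c, d, e, f, g, h))]
    return b"".join(x.to_bytes(4, "big") for x in state)

for msg in (b"", b"abc", b"x" * 1000):
    assert sha256(msg) == hashlib.sha256(msg).digest()
print("matches hashlib.sha256")
```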

That is the mechanism. Here are the consequences. Because each block changes the running state, changing an early part of the message typically changes everything that follows. Because the message length is encoded into the padding, appending bytes to a message is not invisible to the hash. And because the state update is designed to destroy simple algebraic structure, there should be no practical shortcut from digest back to message.

In SHA-2, the internal machinery uses word-oriented operations such as rotate-right, shifts, XOR, AND, and modular addition. FIPS 180-4 defines operations like ROTR and the padding procedure exactly. The SHA-2 family includes SHA-224, SHA-256, SHA-384, SHA-512, SHA-512/224, and SHA-512/256. The 224/256 variants based on SHA-512 are not just “take SHA-512 and cut it off” in an ad hoc way; the standard specifies distinct initialization values, and for the general SHA-512/t construction it specifies an IV generation procedure.
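The effect of distinct initial values is easy to observe. SHA-224 and SHA-384 follow the same pattern as the SHA-512/t variants: each starts from its own IV and then truncates. This sketch therefore compares them with naive truncation of SHA-256 and SHA-512. `sha512_256` is checked only if the local OpenSSL build exposes it, which is an assumption about the environment.

```python
import hashlib

message = b"same input"

# Standardized truncated variants use their own IVs, so they differ from naive truncation.
sha224 = hashlib.sha224(message).digest()
sha256_cut = hashlib.sha256(message).digest()[:28]
print("SHA-224 == truncated SHA-256:", sha224 == sha256_cut)  # False

sha384 = hashlib.sha384(message).digest()
sha512_cut = hashlib.sha512(message).digest()[:48]
print("SHA-384 == truncated SHA-512:", sha384 == sha512_cut)  # False

# SHA-512/256 is only available when the underlying OpenSSL build provides it.
if "sha512_256" in hashlib.algorithms_available:
    t256 = hashlib.new("sha512_256", message).digest()
    print("SHA-512/256 == truncated SHA-512:", t256 == hashlib.sha512(message).digest()[:32])
```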

How does SHA-3's sponge construction differ from SHA-2?

| Primitive | State model | Security knob | XOF support | Domain separation |
|---|---|---|---|---|
| Sponge (SHA-3) | Single wide state | Rate vs capacity | Yes (SHAKE) | Explicit suffix bits |
| Compression (SHA-2) | Chaining value per block | Digest length | No (fixed output) | Padding and length field |

SHA-3 is still a hash family, but its internal construction is different enough that it is worth understanding on its own terms.

FIPS 202 defines the SHA-3 family as four fixed-output hash functions (SHA3-224, SHA3-256, SHA3-384, and SHA3-512) and two extendable-output functions, SHAKE128 and SHAKE256. The fixed-output SHA-3 hashes are standardized hash functions. The SHAKE functions are related but belong to the broader class of extendable-output functions, or XOFs, where the caller can request as many output bits as needed.
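In Python's `hashlib`, a SHAKE object takes the output length as an argument to `digest()`, while a fixed-output SHA-3 function always returns its standardized length. The sketch also shows that a shorter SHAKE output is a prefix of a longer one from the same input. That is one reason an application using an XOF should fix the output length it expects.

```python
import hashlib

message = b"extendable output"

# SHAKE lets the caller choose the output length when squeezing.
short_out = hashlib.shake_128(message).digest(16)
long_out = hashlib.shake_128(message).digest(64)
print(len(short_out), len(long_out))                       # 16 64
print("short is a prefix of long:", long_out.startswith(short_out))  # True

# A fixed-output SHA-3 hash always returns its standardized length.
print(len(hashlib.sha3_256(message).digest()))             # 32
```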

The key structural idea is the sponge construction. A sponge has an internal state of fixed width. Part of that state, called the rate, is used to absorb input and squeeze output; the rest, called the capacity, is reserved as hidden internal space that underwrites security. FIPS 202 defines SHA-3 as instances of this sponge construction built from the KECCAK-p[1600,24] permutation.

The intuition is simple. Instead of compressing one message block plus a chaining value into a new chaining value, the sponge repeatedly mixes blocks into a large internal state using a permutation. During the absorbing phase, blocks of the padded message are XORed into the rate portion and the permutation is applied. During the squeezing phase, output bits are read from the rate portion, applying the permutation again if more output is needed.

Why split the state into rate and capacity? Because there is a tradeoff between speed and security. A larger rate means more input is processed per permutation call, which is faster. A larger capacity means more hidden internal entropy and stronger generic security bounds. FIPS 202 sets the capacity c for SHA-3 hash functions to twice the digest length d, so c = 2d. That is the design rule tying the construction to the intended collision and preimage strengths.
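The c = 2d rule fixes each variant's rate: r = 1600 − 2d bits. This sketch does that arithmetic for the four SHA-3 hash functions. It compares the result with `hashlib`'s `block_size`, which in CPython reports the sponge rate in bytes. That correspondence is an implementation detail of CPython, not part of FIPS 202.

```python
import hashlib

STATE_BITS = 1600  # width b of the KECCAK-p[1600, 24] permutation

rates = {}
for name, d in (("sha3_224", 224), ("sha3_256", 256), ("sha3_384", 384), ("sha3_512", 512)):
    capacity = 2 * d                           # FIPS 202: c = 2d for SHA-3 hash functions
    rate_bytes = (STATE_BITS - capacity) // 8  # r = b - c, converted to bytes
    rates[name] = rate_bytes
    print(f"{name}: c = {capacity} bits, r = {rate_bytes} bytes, "
          f"hashlib block_size = {hashlib.new(name).block_size}")
```

SHA3-224 absorbs 144 bytes per permutation call, while SHA3-512 absorbs only 72. This is the speed-versus-security tradeoff the paragraph above describes.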

SHA-3 also uses domain separation bits appended to the message so different modes do not accidentally overlap. FIPS 202 specifies that SHA-3 hash functions use suffix 01, while SHAKE uses suffix 1111. This detail may seem small, but it solves a real problem: if two modes shared the exact same encoding space, the same internal primitive could create ambiguous outputs across functions. Domain separation marks which function family the input belongs to.
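SHA3-256 and SHAKE256 make a neat test case. Both use the same permutation with a 512-bit capacity, so they also share the same 136-byte rate. Per FIPS 202, what distinguishes them is the suffix bits. Asking SHAKE256 for exactly 32 bytes still does not reproduce SHA3-256. The input string is arbitrary.

```python
import hashlib

message = b"same bytes, two functions"

# Same sponge configuration (capacity 512 bits, rate 136 bytes) for both functions.
print(hashlib.sha3_256().block_size, hashlib.shake_256().block_size)  # 136 136

sha3_out = hashlib.sha3_256(message).digest()
shake_out = hashlib.shake_256(message).digest(32)
print("outputs equal:", sha3_out == shake_out)  # False: the suffix bits differ
```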

There is a subtle but important distinction here. NIST transition guidance treats the fixed-output SHA-3 functions as hash functions acceptable for general hash applications, while noting that XOFs are not considered hash functions in that specific guidance document. That does not mean XOFs are weak; it means they are a different kind of primitive with different usage assumptions.

How can a short hash securely represent a large file?

At first glance, it can seem impossible that a short digest can say anything reliable about a large file or message. If a terabyte and a one-byte string can both map to 256 bits, why trust the digest at all?

The answer is that a hash does not preserve enough information to recover the input, but it can still preserve enough structure to make forgery infeasible. If H(M) is collision resistant, then publishing the digest commits you to one message in a practically binding way: later, you should not be able to reveal a different message with the same digest. If H(M) is preimage resistant, then seeing the digest does not let others reconstruct the message except by brute force over plausible candidates.
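The "brute force over plausible candidates" caveat matters in practice. This sketch first recovers a low-entropy value from its bare SHA-256 digest by guessing. It then builds a simple commitment that prepends a random 32-byte nonce, so the value stays hidden until the nonce is revealed. The `commit` and `open_commitment` helpers and the nonce length are illustrative assumptions, not a standardized commitment scheme.

```python
import hashlib
import secrets

def digest(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()

# A bare hash of a guessable value is "inverted" by trying every candidate.
candidates = [b"yes", b"no", b"abstain"]
published = digest(b"abstain")
recovered = next(c for c in candidates if digest(c) == published)
print("recovered from bare hash:", recovered)

def commit(value: bytes):
    nonce = secrets.token_bytes(32)  # random, kept secret until the reveal
    return digest(nonce + value), nonce

def open_commitment(commitment: str, nonce: bytes, value: bytes) -> bool:
    return digest(nonce + value) == commitment

commitment, nonce = commit(b"abstain")
print("guessable without nonce:", any(digest(c) == commitment for c in candidates))  # False
print("opens correctly:", open_commitment(commitment, nonce, b"abstain"))           # True
print("opens to another value:", open_commitment(commitment, nonce, b"yes"))        # False
```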

This is exactly why hashes are so useful in commitments, signatures, and blockchains. A block header does not need to contain the entire previous block; it contains the previous block’s hash. That short reference works because changing the earlier block would change its digest, which would in turn invalidate the next block’s reference, and so on. The compactness is convenient, but the security comes from the infeasibility of fabricating data that preserves all those digest links.
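Here is a toy hash chain that shows the tamper-propagation argument. Each block stores the SHA-256 of the previous block. The JSON encoding and the all-zero genesis value are assumptions of this sketch. Real blockchains such as Bitcoin hash a fixed binary header format instead.

```python
import hashlib
import json

GENESIS_PREV = "00" * 32  # hypothetical "previous hash" for the first block

def block_hash(block: dict) -> str:
    # A canonical encoding matters: the same block must always serialize to the same bytes.
    encoded = json.dumps(block, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()

def build_chain(payloads):
    chain, prev = [], GENESIS_PREV
    for payload in payloads:
        block = {"prev": prev, "data": payload}
        chain.append(block)
        prev = block_hash(block)
    return chain

def first_broken_link(chain):
    """Index of the first block whose stored 'prev' no longer matches, else None."""
    for i in range(1, len(chain)):
        if chain[i]["prev"] != block_hash(chain[i - 1]):
            return i
    return None

chain = build_chain(["alice->bob 5", "bob->carol 2", "carol->dave 1"])
print(first_broken_link(chain))      # None: every link checks out

chain[0]["data"] = "alice->bob 500"  # tamper with old data
print(first_broken_link(chain))      # 1: the next block's reference no longer matches
```

This check only detects tampering relative to a trusted later hash. Someone who rewrites every subsequent block can rebuild a self-consistent chain. Real systems therefore also anchor the latest hash, through consensus or signatures, which this sketch omits.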

Merkle trees apply the same idea recursively. Each parent node is the hash of its children, so the root commits to the entire dataset. A Merkle proof does not reveal every leaf; it reveals a path of sibling hashes that lets a verifier recompute the root. Again, what makes this convincing is not compression alone but the assumption that an attacker cannot swap in a different leaf without changing some hash on the path.
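Here is a minimal Merkle tree with inclusion proofs, using SHA-256. Leaves and interior nodes are hashed with different one-byte prefixes (0x00 and 0x01), a domain-separation convention borrowed from RFC 6962, so that a leaf can never pass for an interior node. When a level has an odd node, this sketch promotes it unchanged. That is a simplifying choice; other systems, such as Bitcoin, duplicate the last node instead. The sketch also assumes a non-empty list of leaves.

```python
import hashlib

def leaf_hash(data: bytes) -> bytes:
    return hashlib.sha256(b"\x00" + data).digest()

def node_hash(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(b"\x01" + left + right).digest()

def build_levels(leaves):
    """All tree levels, from leaf hashes up to the single root."""
    level = [leaf_hash(x) for x in leaves]
    levels = [level]
    while len(level) > 1:
        nxt = [node_hash(level[i], level[i + 1]) for i in range(0, len(level) - 1, 2)]
        if len(level) % 2 == 1:
            nxt.append(level[-1])  # odd node promoted unchanged
        level = nxt
        levels.append(level)
    return levels

def merkle_root(leaves) -> bytes:
    return build_levels(leaves)[-1][0]

def merkle_proof(leaves, index):
    """Sibling hashes on the path from leaf `index` to the root."""
    proof = []
    for level in build_levels(leaves)[:-1]:
        sibling = index ^ 1
        if sibling < len(level):
            proof.append((level[sibling], sibling < index))  # (hash, sibling_is_left)
        index //= 2
    return proof

def verify(leaf: bytes, proof, root: bytes) -> bool:
    h = leaf_hash(leaf)
    for sibling, sibling_is_left in proof:
        h = node_hash(sibling, h) if sibling_is_left else node_hash(h, sibling)
    return h == root

leaves = [b"tx-a", b"tx-b", b"tx-c", b"tx-d", b"tx-e"]
root = merkle_root(leaves)
print(verify(b"tx-c", merkle_proof(leaves, 2), root))  # True
print(verify(b"tx-x", merkle_proof(leaves, 2), root))  # False: swapped leaf
```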

When should you use a bare hash versus HMAC or a KDF?

A common misunderstanding is to treat a bare hash as a general-purpose security tool. It is not.

In digital signatures, the hash usually acts as a preprocessing step. Instead of signing an arbitrarily large message directly, the signer hashes the message and signs the digest or a structured encoding containing it. This is efficient, but it also means collision resistance matters: if an attacker can find two messages with the same hash, they may trick someone into signing one and later present the signature as authorizing the other.

For message authentication, a bare hash is usually the wrong tool because it has no secret. What you want is a keyed construction such as HMAC, which RFC 6234 includes alongside SHA reference code, or a built-in keyed hash design such as BLAKE2’s keyed mode. The key changes the problem from “can anyone compute this digest?” to “can anyone compute the correct tag without the secret?” That is a different security goal.
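Here is a sketch of keyed authentication with Python's standard `hmac` module and BLAKE2b's built-in keyed mode. The key and the message are placeholders. Verification uses `hmac.compare_digest`, which avoids leaking timing information during the comparison.

```python
import hashlib
import hmac
import secrets

key = secrets.token_bytes(32)  # shared secret known only to sender and verifier
message = b"transfer 10 units to account 42"

# HMAC-SHA256 tag: computing it requires the key, unlike hashlib.sha256(message).
tag = hmac.new(key, message, hashlib.sha256).digest()

def verify_hmac(key: bytes, message: bytes, tag: bytes) -> bool:
    expected = hmac.new(key, message, hashlib.sha256).digest()
    return hmac.compare_digest(expected, tag)  # constant-time comparison

print(verify_hmac(key, message, tag))                              # True
print(verify_hmac(key, b"transfer 99 units to account 42", tag))   # False: altered message
print(verify_hmac(secrets.token_bytes(32), message, tag))          # False: wrong key

# BLAKE2b's keyed mode gives a MAC without the HMAC wrapper.
b2_tag = hashlib.blake2b(message, key=key, digest_size=32).digest()
print(b2_tag == hashlib.blake2b(message, digest_size=32).digest())  # False: key changes output
```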

For key derivation, the same lesson applies. Hashes often appear inside KDFs, but the full construction matters. HKDF, for example, uses HMAC in an extract-and-expand design because just hashing raw material once is usually not the right way to turn imperfect input into well-separated cryptographic keys.
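Python's standard library has no HKDF function, so this sketch builds the RFC 5869 extract-and-expand steps directly from `hmac` with SHA-256. The input secret, salt, and `info` labels are placeholders. A vetted library implementation is preferable in production.

```python
import hashlib
import hmac

HASH = hashlib.sha256
HASH_LEN = HASH().digest_size  # 32 bytes for SHA-256

def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    # Extract: concentrate the input keying material into a pseudorandom key (PRK).
    if not salt:
        salt = bytes(HASH_LEN)  # RFC 5869: a missing salt means HashLen zero bytes
    return hmac.new(salt, ikm, HASH).digest()

def hkdf_expand(prk: bytes, info: bytes, length: int) -> bytes:
    # Expand: T(i) = HMAC(PRK, T(i-1) || info || i), concatenated to `length` bytes.
    if length > 255 * HASH_LEN:
        raise ValueError("requested output too long for HKDF")
    output, block = b"", b""
    for counter in range(1, -(-length // HASH_LEN) + 1):
        block = hmac.new(prk, block + info + bytes([counter]), HASH).digest()
        output += block
    return output[:length]

def hkdf(ikm: bytes, salt: bytes, info: bytes, length: int) -> bytes:
    return hkdf_expand(hkdf_extract(salt, ikm), info, length)

# Distinct `info` labels give separate keys from the same secret.
shared_secret = bytes.fromhex("0b" * 22)  # placeholder input keying material
enc_key = hkdf(shared_secret, b"example salt", b"encryption", 32)
mac_key = hkdf(shared_secret, b"example salt", b"authentication", 32)
print(enc_key != mac_key)  # True
```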

This distinction matters in real systems. In threshold signing and multi-party computation, the hash often appears inside larger protocols rather than standing alone. A concrete example is decentralized settlement systems using threshold signatures. Cube Exchange uses a 2-of-3 threshold signature scheme for decentralized settlement: the user, Cube Exchange, and an independent Guardian Network each hold one key share; no full private key is ever assembled in one place, and any two shares are required to authorize a settlement. In a system like that, hashes help define the exact message being signed and committed to, but the security of authorization comes from the threshold-signature protocol, not from hashing by itself.

What practical attacks and failures follow when a hash function is broken?

The cleanest way to understand why these properties matter is to look at what happens when they fail.

MD5 is the standard cautionary tale. Researchers developed practical collision methods and then refined them into chosen-prefix collisions, where two attacker-chosen message prefixes can be extended so the full messages collide. Marc Stevens and coauthors used such a construction to create a rogue CA certificate based on an MD5 collision with a legitimately issued certificate. That attack was not just a laboratory curiosity. It showed how a collision failure can undermine trust infrastructure when signatures authenticate only the digest.

SHA-1 followed a similar path, though later. NIST now treats SHA-1 as disallowed for digital-signature generation except where specific protocol guidance says otherwise, while permitting legacy verification and some uses that do not require collision resistance. The reason is not that SHA-1 suddenly became easy to invert. The deeper issue is that its collision margin eroded. The 2005 CRYPTO paper by Wang, Yin, and Yu estimated full SHA-1 collisions below the ideal 2^80 brute-force bound. In 2017, researchers published an actual SHA-1 collision (the SHAttered attack), which moved the threat from estimate to practice. Once collision resistance is no longer trusted, signature-related uses become unsafe.

This gives a useful rule of thumb: when collision resistance fails, anything that relies on one digest standing uniquely for one document becomes suspect. Certificates, signed software manifests, and commitment schemes are especially exposed.

Why do we need multiple hash families (SHA-2, SHA-3, BLAKE2, Poseidon)?

| Family | Best for | Design focus | Keyed mode | Typical use |
|---|---|---|---|---|
| SHA-2 | Broad interoperability | CPU efficiency | No (use HMAC) | Federal and legacy systems |
| SHA-3 | Design diversity and XOFs | Permutation / sponge | KMAC available | Alternative standard / XOF needs |
| BLAKE2 | High practical speed | Software performance | Built-in keyed mode | Fast general hashing |
| ZK-friendly (Poseidon/MiMC) | Zero-knowledge proofs | Low arithmetic cost | No (circuit primitives) | SNARK/STARK circuits |

If the goal is just “one-way fingerprinting,” why do we have SHA-2, SHA-3, BLAKE2, and specialized designs like Poseidon or MiMC?

Because the job of a hash function is partly universal and partly context-dependent. The universal part is the security target: resistance to preimages, second preimages, and collisions. The context-dependent part is the cost model.

SHA-2 is widely deployed and acceptable for all hash-function applications under NIST guidance. It is efficient on conventional CPUs and deeply integrated into software and hardware. SHA-3 provides a structurally different family based on sponge constructions and Keccak permutations, which is valuable both for diversity of design and for applications where extendable output or permutation-based constructions are useful.

BLAKE2, specified in RFC 7693, aims at high practical performance and includes a built-in keyed mode, so message authentication does not require wrapping it in HMAC. It has two main variants: BLAKE2b for 64-bit platforms and BLAKE2s for smaller machines. That design choice reflects a performance reality: the best hash on one architecture may not be the best on another.
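Python exposes both BLAKE2 variants through `hashlib`. This sketch shows their maximum output sizes. It also shows a consequence of BLAKE2's design: the requested digest length is an input parameter, so a 32-byte BLAKE2b output is not simply the first 32 bytes of the 64-byte output. The message is arbitrary.

```python
import hashlib

message = b"blake2 example"

# BLAKE2b (64-bit oriented) outputs up to 64 bytes; BLAKE2s up to 32.
print(hashlib.blake2b.MAX_DIGEST_SIZE, hashlib.blake2s.MAX_DIGEST_SIZE)  # 64 32

# The output length is part of BLAKE2's parameter block, so shorter outputs
# are not truncations of longer ones.
full = hashlib.blake2b(message).digest()
short = hashlib.blake2b(message, digest_size=32).digest()
print(short == full[:32])  # False
```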

Then there are domain-specific hashes. In zero-knowledge proof systems, the main cost is often not CPU cycles but arithmetic circuit constraints. A hash that is excellent in ordinary software can be painfully expensive inside a SNARK or STARK circuit. That is why designs like Poseidon and MiMC exist. Poseidon is a family of hash functions over finite fields designed to use far fewer constraints per message bit than conventional hashes in proof systems, while MiMC minimizes multiplicative complexity for contexts like MPC, FHE, and ZK. These are still hash functions in the broad sense, but their internal choices are driven by a different bottleneck.

So the fundamental idea is stable, but the engineering optimum moves with the environment.

What does 'secure' mean for a hash function in real deployments?

A standard will often say a secure hash makes it computationally infeasible to find preimages or collisions. The phrase computationally infeasible is doing a lot of work.

It does not mean impossible in principle. It means that, under current knowledge and resource assumptions, the cost of the best known attack is beyond what is realistically achievable for the intended security level. NIST’s transition guidance frames this in terms of security strength measured in bits and requires at least 112 bits of classical security strength for federal cryptographic protection.

This is also why output length alone is not the whole story. A 256-bit digest from a badly designed function is not automatically secure, while a 256-bit digest from a well-analyzed function can be. The construction, internal mixing, padding rules, and resistance to structural cryptanalysis all matter.

And implementation still matters. FIPS 180-4 explicitly notes that conformance to the standard does not by itself guarantee an overall secure system. A correct implementation can still be embedded in a bad protocol. A secure hash can be used in the wrong mode. A reference implementation can omit memory-cleanup or hardening measures needed in hostile environments. Security lives in the full design, not in the primitive alone.

Conclusion

A cryptographic hash function is a fixed-output, efficiently computable map from arbitrary-length input to a short digest, designed so that reversing it or engineering collisions is computationally infeasible. That one-way, tamper-evident structure is why hashes are foundational in signatures, commitments, Merkle trees, blockchains, password systems, and many higher-level cryptographic constructions.

The short version worth remembering tomorrow is this: a cryptographic hash is a compact fingerprint whose security comes from hardness, not uniqueness. It works because anyone can compute it, but no one should be able to fake another message that fits the same fingerprint on demand.

What should you understand before using this part of crypto infrastructure?

Before moving funds or trusting signed artifacts, understand how hash functions affect integrity and finality and then use Cube Exchange to execute transfers or trades. Verify the digest algorithm and the on-chain transaction hash where relevant, and follow chain-specific confirmation rules before acting on a deposit or settlement.

- Fund your Cube account with fiat on-ramp or a supported crypto transfer.

- When depositing crypto, copy the deposit address and network, then submit the transfer from your wallet and verify the returned transaction ID (txid) on a block explorer by matching the transaction hash.

- Check the chain-specific confirmation requirement (for example, wait ~6 confirmations for Bitcoin or the protocol's finality threshold for finality chains) before considering the deposit settled.

- If relying on signed artifacts (signed manifests, Merkle proofs, or receipts), confirm the declared hash algorithm (e.g., SHA-256 vs SHA3-256) and recompute the digest locally to verify the artifact before trading or withdrawing.
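For the last step, recomputing a SHA-256 digest locally needs only the standard library. This sketch hashes the file in chunks so large artifacts never have to fit in memory. A throwaway temporary file stands in for a downloaded artifact, and the "published" digest is computed locally as a placeholder for the value a publisher would provide. If an artifact declares a different algorithm, such as SHA3-256, swap in `hashlib.sha3_256` the same way.

```python
import hashlib
import hmac
import os
import tempfile

def file_sha256(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash a file incrementally so large files never need to fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()

def matches_published(path: str, published_hex: str) -> bool:
    # Normalize case and whitespace in the published value before comparing.
    return hmac.compare_digest(file_sha256(path), published_hex.strip().lower())

# Demo with a throwaway file standing in for a downloaded artifact.
contents = b"example artifact contents"
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "artifact.bin")
    with open(path, "wb") as f:
        f.write(contents)
    published = hashlib.sha256(contents).hexdigest().upper()  # placeholder published value
    ok_result = matches_published(path, published)
    bad_result = matches_published(path, "00" * 32)
print(ok_result, bad_result)  # True False
```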

Frequently Asked Questions

Preimage resistance means it should be infeasible to find any message M that hashes to a given digest y; second‑preimage resistance means it should be infeasible, given a particular M1, to find a different M2 with the same hash; collision resistance means it should be infeasible to find any distinct pair M1,M2 that collide. Collision resistance is often the weakest in practice because of the birthday phenomenon: an n‑bit digest gives generic collision work about 2^(n/2) while preimage attacks cost roughly 2^n under the usual random‑function model.

Because collisions must exist mathematically, hashes are useful only when finding collisions or preimages is computationally infeasible; publishing a digest therefore practically binds you to one message and makes tampering detectable even though uniqueness is not guaranteed. The article explains this tradeoff repeatedly: security comes from hardness of forging, not from a theoretical one‑to‑one mapping.

The sponge construction (used by SHA‑3) keeps a fixed internal state split into a rate and a capacity: the rate is where input is XORed in and output is read for speed, while the capacity is hidden and underwrites security; FIPS 202 sets the capacity c = 2·d for a d‑bit digest to tie that hidden space to the target security level. This rate/capacity split is the explicit tradeoff between throughput and generic security bounds discussed in FIPS 202 and the article.

A bare, unkeyed hash has no secret and so is the wrong primitive for message authentication; use a keyed construction such as HMAC or a hash with a built‑in keyed mode (for example, BLAKE2’s keyed mode) so that an attacker cannot compute valid tags without the secret. The article cites HMAC and BLAKE2 as the correct kinds of keyed constructions for authentication rather than a plain hash.

There are many hash families because different contexts have different cost models and constraints: SHA‑2 is broadly efficient on conventional CPUs, SHA‑3 provides a different internal design and XOFs, BLAKE2 targets high practical performance and a built‑in keyed mode, and specialized hashes like Poseidon or MiMC are designed to be cheap inside arithmetic proof circuits. The article explains that the universal security goals are the same but implementation tradeoffs (performance, hardware, or circuit cost) drive multiple designs.

When collision resistance is effectively broken, anything that relies on a digest uniquely representing a document becomes suspect: signed documents, certificates, and software manifests can be forged by producing colliding alternatives, as demonstrated by practical MD5 collision attacks that enabled a rogue CA certificate. The article recounts those historical attacks (MD5 and later SHA‑1) to show the concrete impact of weakened collision resistance.

XOFs like SHAKE are extendable‑output functions that can produce arbitrarily long outputs, and NIST guidance treats them differently from hash functions. FIPS 202 specifies domain‑separation suffixes (SHA‑3 uses suffix '01', SHAKE uses '1111'). NIST transition guidance does not count XOFs as hash functions for general hash applications, so they should not be assumed interchangeable with fixed‑output hash functions. The article highlights this distinction and the usage differences that follow from it.

Output length helps but does not guarantee security: a long digest from a poorly designed function can still be vulnerable, while a well‑analyzed 256‑bit design can be secure; construction, mixing, padding rules, and resistance to cryptanalysis all matter, and implementers must also consider deployment and protocol-level issues. The article stresses that 'security comes from hardness, not uniqueness' and NIST notes that conformance to a standard does not automatically make a system secure.

For password storage and key derivation, you should not rely on a single raw hash. For key material that already has enough entropy, use a proper KDF design such as HKDF, which uses HMAC in an extract‑and‑expand pattern. For passwords and other low‑entropy inputs, use a dedicated, deliberately slow, salted password‑hashing function instead. The article cautions that just hashing raw material once is usually not the right way to derive cryptographic keys.
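As a sketch of the dedicated password-hashing option, the standard library's `hashlib.pbkdf2_hmac` applies HMAC-SHA256 many times with a unique random salt per password. The iteration count below is an illustrative assumption; tune it to current guidance and your hardware. `hashlib.scrypt`, where the underlying OpenSSL provides it, is a memory-hard alternative.

```python
import hashlib
import hmac
import secrets

ITERATIONS = 600_000  # illustrative work factor, not a recommendation

def hash_password(password: str):
    salt = secrets.token_bytes(16)  # unique random salt per stored password
    derived = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return salt, derived  # store both; the salt is not secret

def check_password(password: str, salt: bytes, stored: bytes) -> bool:
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return hmac.compare_digest(candidate, stored)  # constant-time comparison

salt, stored = hash_password("correct horse battery staple")
print(check_password("correct horse battery staple", salt, stored))  # True
print(check_password("Tr0ub4dor&3", salt, stored))                   # False
```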
