What is Kaspa?

Learn what Kaspa is, how its blockDAG and GHOSTDAG consensus work, why it uses proof-of-work, and how it achieves fast settlement.

Introduction

For the token explainer, see KAS.

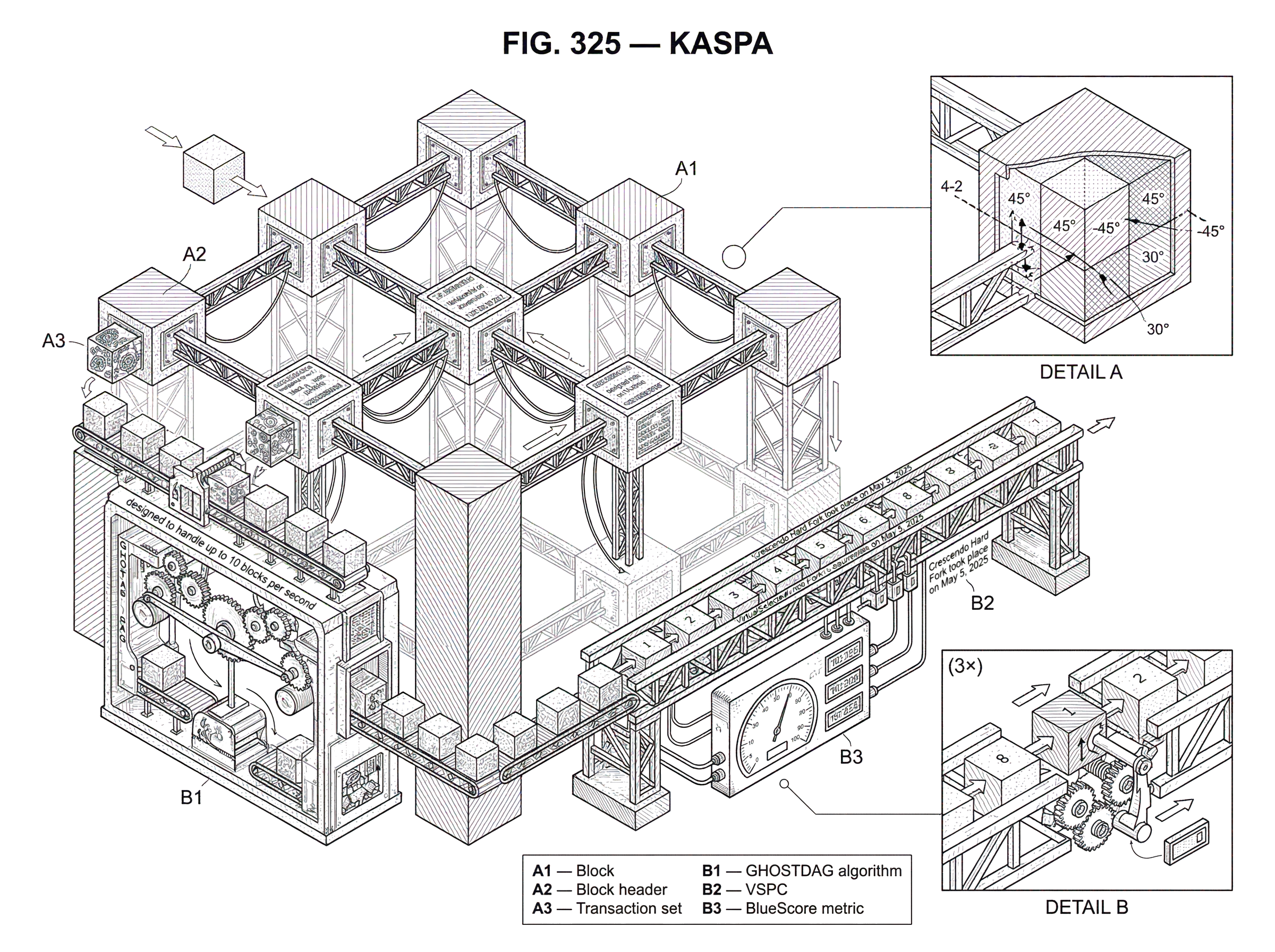

Kaspa is a proof-of-work Layer 1 that tries to solve a problem Bitcoin exposed early and clearly: the faster you produce blocks, the more often honest miners collide with each other, and the more work gets wasted. Traditional blockchains respond by keeping block production relatively slow, because a single-chain design turns many near-simultaneous blocks into losers. Kaspa changes the structure underneath the system. Instead of insisting that only one block at a time can cleanly extend the ledger, it uses a blockDAG so parallel blocks can coexist and still be ordered by consensus.

That is the main idea that makes Kaspa worth understanding. It is not simply “a fast chain” in the ordinary sense. It is a network designed around the claim that you can push proof-of-work to much higher block rates if you stop treating honest concurrency as an error condition. Kaspa’s protocol, GHOSTDAG, is the mechanism that makes that claim concrete: it preserves and orders parallel blocks rather than orphaning them by default.

If you already know Bitcoin, the easiest way to think about Kaspa is this: keep the open participation and probabilistic security model of Nakamoto-style proof-of-work, but replace the single-lane road with a road system that can tolerate many honest cars arriving at nearly the same time. The hard part is not allowing concurrency. The hard part is agreeing on a consistent ordering afterward without opening easy attack paths. That is where most of Kaspa’s design lives.

Why single-chain block structures limit block rate and increase orphaned blocks

| Design | Wasted work | Block-rate tolerance | Confirmation model | Centralization pressure | Best for |
|---|---|---|---|---|---|
| One canonical chain | High when blocks are fast | Low | Chain-depth probabilistic | Higher (propagation races) | Simple, low-BPS PoW |
| Parallel blocks recorded | Low (fewer orphaned blocks) | High (designed for 10 BPS) | GHOSTDAG ordering + VSPC | Lower (tolerates concurrency) | High-throughput PoW (Kaspa) |

A normal blockchain asks the network to maintain one canonical sequence of blocks. That sounds natural, but it creates a hidden tension between speed and agreement. Suppose blocks are found very frequently. Because network messages do not travel instantly, two honest miners will often find valid blocks before learning about each other’s work. In a single-chain protocol, only one of those blocks can remain on the main chain. The other becomes stale, or orphaned, even if it was produced honestly.

This wasted work is not just aesthetically unpleasant. It changes incentives and weakens security margins. If many honest blocks are routinely discarded, then a larger fraction of total mining effort stops contributing to the chain’s final history. The network becomes noisier, confirmations become less intuitive, and pressure builds toward centralization because better-connected miners are more likely to win propagation races. This is why traditional proof-of-work systems usually keep a conservative block interval: they are not merely being slow for no reason; they are buying time for the network to hear about the last block before the next one arrives.
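The tension between block rate and propagation delay can be made concrete with a back-of-the-envelope model. The sketch below assumes blocks arrive as a Poisson process and that every block needs a fixed time to reach the rest of the network; the 2-second delay is an illustrative assumption, not a measurement of any real network.

```python
import math


def collision_probability(blocks_per_second: float, delay_seconds: float) -> float:
    """Chance that at least one other block is found while a block is still propagating.

    Assumes Poisson block arrivals, so the count of competing blocks in the
    delay window has mean blocks_per_second * delay_seconds.
    """
    return 1.0 - math.exp(-blocks_per_second * delay_seconds)


# Hypothetical 2-second propagation delay in both cases.
slow = collision_probability(1 / 600, 2.0)  # one block every ten minutes
fast = collision_probability(10.0, 2.0)     # ten blocks per second
print(f"slow chain: {slow:.4f}, fast chain: {fast:.4f}")
```

Under these assumptions a slow chain almost never sees two honest blocks collide, while a fast single chain would see collisions on nearly every block. That is the regime in which discarding the "losing" block stops being tolerable.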

Kaspa’s starting observation is that the waste comes from the data structure as much as from the block rate. If the ledger only allows one clean successor at a time, then simultaneous honest production must look like conflict. But if the ledger can record many blocks created in parallel, then the protocol can try to extract useful ordering information from all of them instead of throwing most of them away.

That is the reason Kaspa uses a blockDAG, short for directed acyclic graph of blocks. In ordinary language, miners can create blocks in parallel, and blocks can reference multiple predecessors. The ledger no longer has to pretend the network is perfectly synchronized when it is not.

How does a blockDAG let parallel blocks coexist and still be ordered?

A blockDAG is best understood as separating two jobs that a blockchain usually bundles together. The first job is recording that work happened. The second is deciding the final order that matters for transactions. A traditional chain forces these jobs into one narrow structure: a block either sits on the main chain or it effectively loses. Kaspa loosens the first job so the second job can make a more informed decision.

On Kaspa, blocks can be created in parallel, and each new block tries to merge known tips that do not yet have children. This means the ledger grows as a graph rather than as a single linked list. The graph stays acyclic because blocks only point backward, never forward. So the system accepts that many honest views of recent history can exist briefly at the same time.
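As a rough illustration of that structure, here is a toy in-memory blockDAG. The class and method names are hypothetical and exist only for this sketch; real nodes store far more per block (headers, proof-of-work, transactions).

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class BlockDAG:
    """Toy blockDAG: each block id maps to the tuple of parent ids it references."""

    parents: dict[str, tuple[str, ...]] = field(
        default_factory=lambda: {"genesis": ()}
    )

    def tips(self) -> set[str]:
        """Blocks that no other block references yet."""
        referenced = {p for ps in self.parents.values() for p in ps}
        return set(self.parents) - referenced

    def add_block(self, block_id: str, known_tips: set[str] | None = None) -> None:
        """Add a block pointing backward at the tips its miner knew about.

        Parents must already exist and the id must be new, so a block can
        never point forward and the graph stays acyclic.
        """
        chosen = self.tips() if known_tips is None else known_tips
        if block_id in self.parents:
            raise ValueError(f"duplicate block {block_id}")
        if not chosen or not chosen <= set(self.parents):
            raise ValueError("parents must be existing blocks")
        self.parents[block_id] = tuple(sorted(chosen))


dag = BlockDAG()
dag.add_block("A", {"genesis"})  # two miners extend genesis at about the same time
dag.add_block("B", {"genesis"})
dag.add_block("C")               # a later block merges both tips
print(dag.parents["C"], dag.tips())
```

Neither A nor B is discarded: C references both, and the question of how to order them is left to consensus.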

That does not mean Kaspa gives up on having a coherent ledger. Payments still need an ordering. If Alice spends a coin and then tries to double-spend it elsewhere, the network eventually needs a single answer about which transaction counts. The key claim is that you can allow many recent blocks to coexist and still derive a total ordering over blocks and transactions. In fact, the PHANTOM research line and Kaspa’s practical implementation, GHOSTDAG, are built around exactly that problem.

The whitepaper’s language is useful here. PHANTOM generalizes Nakamoto consensus by letting blocks reference multiple predecessors, forming a blockDAG, and then producing a total ordering over blocks and transactions. The technical heart of the approach is identifying which blocks belong to the well-connected “honest-looking” region of the graph and which are more suspiciously disconnected. The exact PHANTOM optimization is computationally hard, so Kaspa uses GHOSTDAG, a greedy algorithm that approximates the same basic idea efficiently enough to run in practice.

So the design is not “accept everything and hope for the best.” The design is: accept parallel block production, then use graph structure to decide which blocks are well integrated into honest network activity and should be prioritized in consensus ordering.

How does GHOSTDAG order blocks in Kaspa's blockDAG?

| Approach | Goal | Computation cost | Handles parallelism | Practicality |
|---|---|---|---|---|
| PHANTOM | Total ordering in a blockDAG | NP-hard (max k-cluster) | Explicit via k-clusters | Theoretical, impractical at scale |
| GHOSTDAG | Approximate PHANTOM ordering | Efficient greedy computation | Classifies blocks as blue or red by anticone size | Practical, implemented in Kaspa |

The intuition behind GHOSTDAG is that honest blocks produced under normal network delay should form a densely connected region of the DAG. Attackers, or blocks that are poorly integrated with the honest network, tend to stand out because they sit in larger “anticones” relative to many other blocks. The anticone of a block is, roughly, the set of blocks that are neither its ancestors nor its descendants. In plain English, these are blocks that exist beside it rather than clearly before or after it.
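The anticone definition translates directly into code. This is a minimal sketch over a toy DAG stored as a dictionary of parent lists; the function names and block ids are made up for illustration.

```python
def ancestors(parents: dict[str, list[str]], block: str) -> set[str]:
    """All blocks reachable by following parent links from `block` (its past)."""
    seen: set[str] = set()
    stack = list(parents[block])
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(parents[current])
    return seen


def anticone(parents: dict[str, list[str]], block: str) -> set[str]:
    """Blocks that are neither ancestors nor descendants of `block`."""
    past = ancestors(parents, block)
    future = {b for b in parents if block in ancestors(parents, b)}
    return set(parents) - past - future - {block}


# A and B extend genesis in parallel, C merges them, D never saw A, B or C.
dag = {
    "genesis": [],
    "A": ["genesis"],
    "B": ["genesis"],
    "C": ["A", "B"],
    "D": ["genesis"],
}
print(sorted(anticone(dag, "A")), sorted(anticone(dag, "D")))
```

Here A has two blocks beside it (B and D), while the poorly connected D has three (A, B and C). That larger anticone is the kind of signal the ordering rule uses.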

PHANTOM formalizes this with the idea of a k-cluster: a set of blocks where each block has at most k other blocks from the same set in its anticone. The parameter k matters because it encodes how much honest parallelism the network expects under real propagation delay and block production rate. If k is chosen appropriately, then honest concurrency should mostly fit inside that bound, while more adversarial or anomalous structure becomes easier to identify.

The original PHANTOM protocol tries to find the maximum k-cluster, but that optimization is NP-hard. That is not a minor inconvenience; it means the direct version is not practical for a live high-throughput network. GHOSTDAG is the practical answer. It uses a greedy ordering procedure that captures the same broad principle without requiring impossible computation at every step.
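To show the shape of such a greedy procedure, here is a simplified and unoptimized sketch. It is not Kaspa's production code: the tie-break rule, the topological order used for merged blocks, and all names are assumptions made for this example. It follows the idea described above, which is to inherit the blue set of the best-connected tip and then add each remaining block only if the set stays a k-cluster.

```python
from __future__ import annotations

from functools import lru_cache


def ghostdag_sketch(parents: dict[str, tuple[str, ...]], k: int):
    """Return (blue set, total order) for a toy DAG given as block id -> parent ids."""

    @lru_cache(maxsize=None)
    def past(block: str) -> frozenset[str]:
        result: set[str] = set()
        for parent in parents[block]:
            result.add(parent)
            result |= past(parent)
        return frozenset(result)

    def anticone(block: str, universe) -> set[str]:
        return {
            other for other in universe
            if other != block and other not in past(block) and block not in past(other)
        }

    def is_k_cluster(blocks: set[str]) -> bool:
        # Every member has at most k other members in its anticone.
        return all(len(anticone(b, blocks)) <= k for b in blocks)

    @lru_cache(maxsize=None)
    def colour(sub: frozenset[str]):
        if not sub:
            return frozenset(), ()
        tips = [b for b in sub if not any(b in parents[c] for c in sub)]
        # Pick the tip whose past has the largest blue set; ties go to the lowest id.
        best = min(tips, key=lambda t: (-len(colour(past(t))[0]), t))
        inherited_blue, inherited_order = colour(past(best))
        blue = set(inherited_blue) | {best}
        order = list(inherited_order) + [best]
        # Remaining blocks sit beside `best`; visit them in a topological order.
        for block in sorted(anticone(best, sub), key=lambda b: (len(past(b)), b)):
            if is_k_cluster(blue | {block}):
                blue.add(block)
            order.append(block)  # ordered either way, blue or not
        return frozenset(blue), tuple(order)

    return colour(frozenset(parents))


dag = {
    "genesis": (),
    "A": ("genesis",),
    "B": ("genesis",),
    "C": ("A", "B"),
    "D": ("genesis",),   # poorly connected: ignores A, B and C
    "E": ("C", "D"),
}
blue, order = ghostdag_sketch(dag, k=1)
print(sorted(blue), order)
```

With k = 1 the parallel blocks A and B both end up blue, while D, which has three blocks in its anticone, is left out of the blue set but still receives a position in the total order. Raising k to 3 in this toy DAG would make D blue as well.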

The result is a protocol that can classify and order blocks efficiently while retaining a probabilistic security story familiar from proof-of-work systems. The whitepaper’s informal theorem says that, assuming an honest majority of computational power, the probability that GHOSTDAG’s ordering between two published transactions later reverses falls exponentially over time. That is the kind of statement users actually need from a settlement system. Not absolute finality in one instant, but rapidly strengthening confidence that the ordering will stay put.

This is the most important point to keep straight: Kaspa is fast because it reorganizes how proof-of-work uses network concurrency, not because it abandons the basic logic of probabilistic PoW settlement. You still wait for confidence to accumulate. Kaspa just tries to make that accumulation happen with much lower latency and much less wasted honest work.

How do confirmations work on Kaspa (VSPC and BlueScore explained)?

Imagine two miners on opposite sides of the network both find a valid block almost simultaneously. On a single-chain system, one block would usually end up outside the main chain once the network converged. In Kaspa, both blocks can remain in the blockDAG. A later block can point to multiple tips, effectively acknowledging both. Over the next few seconds, more blocks arrive and the graph becomes richer.

At that point, consensus is not asking, “Which one surviving chain tip won the race?” It is asking, “Given this graph of honest and possibly adversarial activity, what ordering does GHOSTDAG assign?” Blocks that are well connected to the main flow of honest network production are favored in the ordering. Transactions become more reliable as additional blocks reinforce that ordering.

From an integration perspective, Kaspa exposes a simplified chain view called the VirtualSelectedParentChain, or VSPC. This matters because applications do not want to reason directly about the full graph every time they check whether a transaction is accepted. The VSPC selects exactly one chain_block per daa_score, and that chain view dictates which transactions from merged blocks are considered accepted.

This separation is subtle but important. A transaction may appear in a block, yet acceptance is a logical property determined by the chain view, not merely by physical inclusion in one graph node. The integration guide emphasizes that the acceptingBlockHash can change during a reorg of the VSPC. So “accepted” on Kaspa is not a magical yes-or-no bit attached forever the moment a transaction first appears. It is a consensus interpretation that becomes stronger as the virtual chain advances and reorg risk decays.

For confirmations, developers commonly look at BlueScore, which the guide describes as the total count of blue blocks in the blockDAG. The confirmation count for an accepted transaction is computed as the current VSPC BlueScore minus the BlueScore of the accepting block. That gives applications a way to express the familiar question, “How deep is this settlement signal now?” in Kaspa’s graph-based world.
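The subtraction is simple enough to express as a helper. The function and parameter names below are hypothetical, and clamping negative values to zero and the threshold check are choices made for this sketch rather than something the guide prescribes.

```python
def confirmations(current_vspc_blue_score: int, accepting_block_blue_score: int) -> int:
    """Confirmation count: current VSPC BlueScore minus the accepting block's BlueScore."""
    depth = current_vspc_blue_score - accepting_block_blue_score
    # A negative depth would mean stale input data; report zero instead (assumption).
    return max(depth, 0)


def is_settled(current: int, accepting: int, required: int) -> bool:
    """True once the transaction is at least `required` confirmations deep."""
    return confirmations(current, accepting) >= required


print(confirmations(1_000_250, 1_000_200))
```

The right value of `required` depends on the application's risk tolerance. Note that the accepting block itself can change in a VSPC reorg, so the count should be recomputed from fresh data rather than cached.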

What throughput and confirmation latency does Kaspa aim to achieve?

Kaspa’s official site describes it as the first blockDAG and says it is designed to handle up to 10 blocks per second without compromising security or decentralization. That figure matters because it captures the protocol’s ambition: not just slightly faster blocks, but a materially different operating regime from classical PoW chains.

The project’s materials also emphasize low confirmation latency and “instant transaction confirmation,” but this phrase needs careful interpretation. In a proof-of-work system, there is always a difference between being seen quickly and being economically final enough for a given risk tolerance. Kaspa’s research results and integration docs support the more precise version of the claim: users often get an initial confirmation quickly, and confidence in the ordering strengthens rapidly as the DAG grows. The whitepaper reports empirical confirmation statistics on hundreds of thousands of transactions, with a large majority first-confirmed within seconds.

That does not mean confirmations become risk-free in a literal instant. It means the network is engineered so the first useful settlement signal arrives much sooner than on slower single-chain proof-of-work systems, and the path from “seen” to “trustworthy enough for this use case” is shorter.

There is also an important update in Kaspa’s recent evolution. The Rust-based node implementation notes that the Crescendo Hard Fork took place on May 5, 2025 and transitioned the network from 1 BPS to 10 BPS. The roadmap before that change made clear that the higher block rate was meant to preserve user balances and the per-second emission schedule by reducing the reward per block proportionally. In other words, the network was not trying to mint faster overall; it was trying to settle with finer-grained block production.

This distinction matters because block frequency and monetary issuance are separate knobs. Kaspa increased the first while aiming to keep the second constant on a per-second basis.
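The arithmetic behind that statement is a plain proportional scaling. In the sketch below the emission figure of 100 units per second is a made-up number used only to show the relationship; it is not Kaspa's actual emission schedule.

```python
from fractions import Fraction


def reward_per_block(emission_per_second: int, blocks_per_second: int) -> Fraction:
    """Per-block reward that keeps per-second emission fixed at any block rate."""
    return Fraction(emission_per_second) / blocks_per_second


EMISSION = 100  # hypothetical units per second

before = reward_per_block(EMISSION, 1)   # 1 BPS
after = reward_per_block(EMISSION, 10)   # 10 BPS
print(before, after, before * 1 == after * 10)
```

Ten times as many blocks, each paying one tenth as much, leaves the amount issued per second unchanged.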

How is Kaspa mined and what is kHeavyHash?

Kaspa remains a proof-of-work network, so its performance story is not separate from mining. The official site states that Kaspa uses the kHeavyHash algorithm for consensus and security. The same source describes the combination of kHeavyHash, a high-throughput DAG, and “no-wasted-blocks” as less energy intensive than other PoW networks.

That energy claim should be read carefully. The source clearly makes the claim, but the materials provided here do not include a quantified comparative study across networks. So the safe explanation is narrower: Kaspa argues that when honest work is less frequently discarded, the network makes better use of the work miners perform. That mechanism is plausible in concept. The exact degree of energy advantage relative to other PoW systems would require more detailed measurement than these sources supply.

On the algorithm itself, an unofficial but reputable explainer describes kHeavyHash as a matrix multiplication sandwiched between two Keccak hashes, and notes that its compute-heavy character can enable dual-mining alongside memory-intensive algorithms. Because that specific structural description comes from a secondary source rather than Kaspa’s canonical homepage, it is best treated as a useful implementation-oriented explanation, not the protocol’s own full formal specification.

What is fundamental is simpler: Kaspa is not “proof-of-stake with DAG branding.” It is a high-throughput PoW system, and its consensus properties depend on the same broad assumption as other Nakamoto-style systems: an honest majority of total hashpower.

What node software, RPCs, and wallet APIs do developers use with Kaspa?

| API | Encoding | Best use | Where defined | Notes |
|---|---|---|---|---|
| gRPC (kaspad) | Protocol Buffers | Production clients and SDKs | rpc/grpc/core/proto .proto files | Authoritative, high performance |
| JSON RPC | JSON | Simple HTTP clients | kaspad docs / JSON port | Easy but less performant |
| Borsh RPC | Borsh binary | Compact binary clients | kaspad docs | Lower payloads, niche use |
| REST API | JSON over HTTP | Dashboards and quick integrations | api.kaspa.org (Swagger) | Public endpoint; self-hostable |

For users, Kaspa may look like a wallet balance and a fast transfer. For developers, the real experience is more concrete: node software, RPC APIs, indexing logic, acceptance tracking, and operational tradeoffs.

Historically, the Go implementation kaspad served as the reference node, but that repository is now deprecated because the reference implementation was rewritten in Rust. Today, Rusty Kaspa is the recommended node software. The repository describes it as a drop-in replacement for the older Go node and the recommended implementation for the network.

Kaspa nodes expose multiple interfaces. The integration guide identifies kaspad as the node and describes a gRPC + Protocol Buffers API, while the public developer docs also mention JSON RPC, Borsh RPC, and the relevant ports used by a node. The Rust repository includes the .proto files under rpc/grpc/core/proto, including messages.proto and rpc.proto, which is exactly the sort of detail integrators need when generating clients or validating message formats.

Wallet behavior on Kaspa also reflects the network’s UTXO structure. The integration guide explains that wallet applications read UTXOs from the node and submit signed transactions back through RPC methods such as GetUtxosByAddresses and SubmitTransaction. The default signing algorithm is Schnorr, with ECDSA also supported.

If you are used to account-based chains, the main adjustment is that indexing and confirmation logic are more stateful than “wait for block number N.” Because the VSPC can reorg, an indexer has to track accepted transactions and update their acceptingBlockHash when the chain view changes. The guide explicitly warns that requesting the VSPC may return blocks not yet indexed, so services may need to backfill missing pieces before they can produce a complete answer.
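A minimal sketch of that bookkeeping might look like the following. It assumes the indexer receives each chain update as a list of removed chain block hashes plus a mapping from added chain block hashes to the transaction ids they accept. That input shape, and every name in the code, is an assumption for illustration and not the node's actual message format. Backfilling blocks that are not yet indexed is left out.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AcceptanceIndex:
    """Tracks which chain block currently accepts each transaction."""

    accepting_block: dict[str, str] = field(default_factory=dict)    # tx id -> block hash
    accepted_by: dict[str, list[str]] = field(default_factory=dict)  # block hash -> tx ids

    def apply_chain_update(self, removed: list[str], added: dict[str, list[str]]) -> None:
        # Undo removed chain blocks first: their transactions lose acceptance.
        for block_hash in removed:
            for tx_id in self.accepted_by.pop(block_hash, []):
                if self.accepting_block.get(tx_id) == block_hash:
                    del self.accepting_block[tx_id]
        # Then record acceptance by the blocks that joined the chain view.
        for block_hash, tx_ids in added.items():
            self.accepted_by[block_hash] = list(tx_ids)
            for tx_id in tx_ids:
                self.accepting_block[tx_id] = block_hash


index = AcceptanceIndex()
index.apply_chain_update([], {"c1": ["tx1", "tx2"]})
# Reorg: c1 leaves the chain view, c1b replaces it and accepts only tx1.
index.apply_chain_update(["c1"], {"c1b": ["tx1"]})
print(index.accepting_block)
```

After the reorg, tx1 has a new accepting block and tx2 is no longer accepted at all, which is exactly the case a "wait for block number N" model cannot represent.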

This is not a flaw unique to Kaspa. It is the practical price of exposing a richer consensus structure. The protocol can give you lower latency and parallelism, but the software sitting on top has to be honest about graph-aware finality instead of pretending the world is a simple linear block number.

What assumptions (network delay, k parameter) underlie Kaspa's security and performance?

Kaspa’s core promise depends on a few assumptions that are easy to miss if you only read marketing-level summaries. The first is network delay. The whitepaper parameterizes security around an upper bound Dmax on propagation delay, the block creation rate λ, and a tail-probability parameter δ. Those determine the choice of k, which controls how much parallelism the protocol treats as expected honest behavior.

The causal logic is straightforward. If block production becomes faster, or if propagation delay is worse, then honest miners will naturally produce more parallel blocks that sit in each other’s anticone. The protocol must tolerate that without confusing normal network conditions for adversarial behavior. But raising k to tolerate more concurrency can also increase confirmation latency. So there is no free lunch. Kaspa’s design expands the useful region of the tradeoff curve; it does not erase the tradeoff entirely.
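One way to see how these parameters interact is to model block arrivals as a Poisson process and pick the smallest k such that the chance of more than k blocks appearing within a window of 2·Dmax stays below δ. The sketch below implements that reading. The Poisson window model is an assumption of this example, and the 5-second delay bound and δ = 0.01 are illustrative inputs, not values taken from the sources above.

```python
import math


def choose_k(blocks_per_second: float, max_delay_seconds: float, delta: float) -> int:
    """Smallest k with P(X > k) < delta, where X ~ Poisson(2 * Dmax * lambda)."""
    if not 1e-12 <= delta < 1.0:
        raise ValueError("delta must be in [1e-12, 1) for this float-based sketch")
    mean = 2.0 * max_delay_seconds * blocks_per_second
    term = math.exp(-mean)  # P(X = 0)
    cdf = term              # P(X <= k), starting at k = 0
    k = 0
    while 1.0 - cdf >= delta:
        k += 1
        term *= mean / k    # P(X = k) from P(X = k - 1)
        cdf += term
    return k


k_slow = choose_k(blocks_per_second=1.0, max_delay_seconds=5.0, delta=0.01)
k_fast = choose_k(blocks_per_second=10.0, max_delay_seconds=5.0, delta=0.01)
print(k_slow, k_fast)
```

Under this model, a tenfold increase in block rate with the same delay bound forces a much larger k, which is the tradeoff the paragraph above describes: more tolerated concurrency, paid for in confirmation latency.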

That is one of the most common misunderstandings about high-throughput DAG systems. People hear “parallel blocks” and imagine a protocol that somehow beats network physics. It does not. It uses structure more efficiently so the network can run at higher block rates before the usual problems become intolerable.

There are also ordinary operational risks. Running a Kaspa node is not a trivial, consumer-grade task. The developer docs list meaningful hardware requirements, and initial sync can take many hours or more. The project has also seen real-world node issues around IBD, data corruption, and incomplete UTXO indexing in public issue reports. More recent Rusty Kaspa release notes highlight improvements to IBD, pruning-point movement handling, storage efficiency, and pruning-proof logic, which suggests active engineering in exactly the areas that matter for a high-throughput graph-based node.

This is the normal shape of a serious protocol, not a contradiction. Ambitious consensus designs do not live or die only by whitepaper elegance. They also live or die by whether nodes can sync, prune, index, and recover under real hardware and network conditions.

Was Kaspa fair-launched and how does its governance compare to Bitcoin?

Kaspa’s official site emphasizes that the network was fair-launched on November 7, 2021, with no premine and no pre-allocation of coins. It also describes the project as open source and community-driven, with no central governance. Those claims matter because Kaspa deliberately presents itself in the lineage of Bitcoin-like networks: open participation, proof-of-work issuance, and a reluctance to center control in a foundation or privileged validator set.

That resemblance is real, but it should not obscure the architectural departure. Bitcoin’s consensus discipline comes from a single chain and slow blocks. Kaspa keeps the proof-of-work ethos while changing the ledger geometry underneath it. So the family resemblance is mostly about who can participate and how security is grounded, not about using the same data structure or the same performance profile.

Conclusion

Kaspa is easiest to remember this way: it is a proof-of-work network that treats parallel block creation as something to organize, not something to discard. That is the design move behind its blockDAG, its GHOSTDAG consensus, and its push toward fast settlement at high block rates.

Everything else follows from that choice. If you allow many honest blocks to coexist, you can reduce wasted work and shorten confirmation latency. But then you need a consensus rule sophisticated enough to order the graph, an integration model that tracks acceptance through the VSPC, and node software capable of handling the operational complexity. Kaspa’s significance is that it tries to do all of that without leaving the probabilistic, open-membership world of proof-of-work.

How do you buy Kaspa?

You can buy Kaspa (KAS) on Cube by funding your account and trading on the spot market for the KAS pair. Cube’s workflow keeps the trade on-exchange and gives you immediate execution options (market) or price control (limit).

- Fund your Cube account with fiat via the on-ramp or transfer a supported stablecoin (for example USDC) into your Cube wallet.

- Open the KAS/USDC (or KAS/BTC) spot market on Cube and view the order book for price and depth.

- Choose an order type: use a market order for immediate execution or a limit order to set the exact price (use post-only or IOC if you want maker/taker control).

- Enter the KAS amount or spend amount, review the estimated fill, fees, and slippage, then submit the order.

Frequently Asked Questions

Kaspa keeps probabilistic Nakamoto-style PoW security but allows many honest blocks to coexist by recording them in a blockDAG and then ordering them with GHOSTDAG. This reduces wasted honest work and lets settlement confidence accumulate much faster than on a single slow chain, while still relying on confidence that strengthens exponentially over time rather than on instantaneous finality.

PHANTOM is the theoretical generalization that finds a maximum k-cluster in a blockDAG, but that optimization is NP-hard; GHOSTDAG is the practical, greedy approximation Kaspa implements to efficiently classify well-connected (honest-looking) blocks and produce a total order in live operation.

The parameter k encodes how much honest parallelism the protocol tolerates. A larger k accepts more concurrent honest blocks, but because the protocol must then tolerate larger anticones, raising k also tends to increase confirmation latency. k is chosen based on assumptions about the maximum propagation delay (Dmax), the block rate (λ), and a tail probability (δ).

The acceptingBlockHash for a transaction can change when the VirtualSelectedParentChain (VSPC) reorganizes, so acceptance is not permanent the moment it is first reported. Indexers and applications must therefore track acceptingBlockHash updates and backfill missing blocks when the VSPC returns blocks not yet indexed.

Kaspa is a proof-of-work network that currently uses the kHeavyHash algorithm for mining. The project argues that fewer wasted blocks make better use of miner work and thus lower the energy spent per useful settlement, but the provided sources do not supply a quantified cross-network energy comparison, so any concrete energy advantage remains unproven in these materials.

From a developer/operator perspective you must treat acceptance as a graph-aware property: instead of relying only on block height, you query the VSPC and BlueScore to compute confirmations, handle VSPC reorgs that change acceptingBlockHash, and implement backfill logic because the VSPC can reference blocks your indexer hasn’t yet stored.

Running a node has nontrivial operational demands: the docs recommend a dedicated 24/7 machine and about 256 GB or more of free space for chain data, and warn that initial sync and IBD can take many hours. There have also been real-world reports of IBD, indexing, and storage issues. The Rust implementation (Rusty Kaspa) is now the recommended reference node.

Kaspa was fair-launched on November 7, 2021 with no premine and positions itself as open-source and community-driven with no central governance; architecturally it still departs from Bitcoin by replacing the single-chain data structure with a blockDAG and GHOSTDAG ordering while keeping open participation and PoW issuance.
