What is a Honeypot Token?

Learn what a honeypot token is, how it blocks selling through smart-contract logic, how detection works, and why balances can hide trapped value.

Introduction

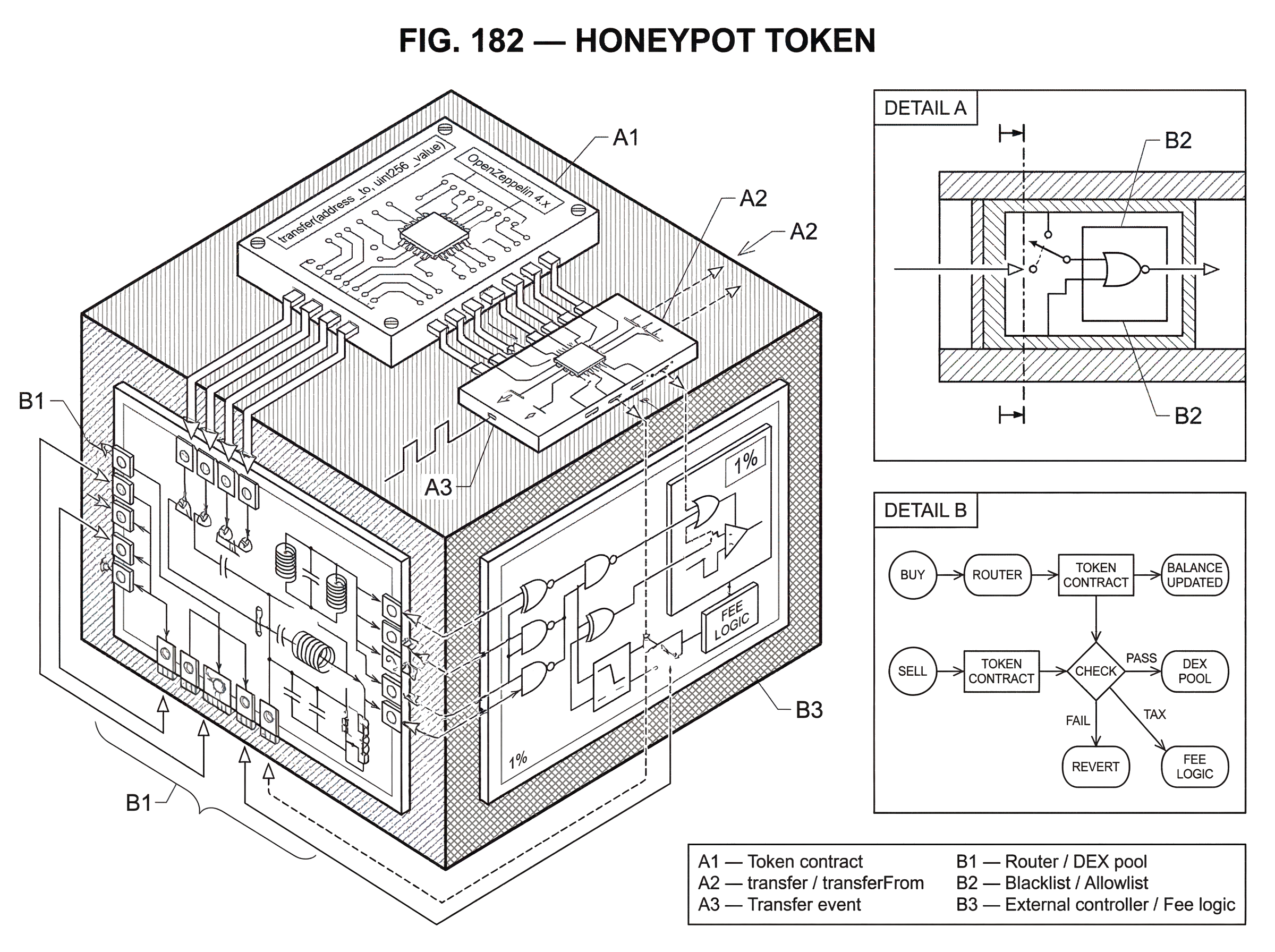

A honeypot token is a token scam in which buying is easy but selling is blocked, selectively restricted, or made economically pointless. That asymmetry is what makes it dangerous: on a decentralized exchange, many users assume that if a token can be bought through a familiar router and appears in a standard interface, it can also be sold the same way. A honeypot token exploits exactly that assumption.

The important point is not merely that the token is “bad” or “risky.” Many tokens are risky. A honeypot is more specific: it creates the appearance of an open market while hiding a one-way door. Victims see price appreciation, liquidity, and successful buy transactions, but when they try to exit, the token’s rules treat selling differently from buying.

That difference can be implemented in several ways. A contract might blacklist buyers after purchase, impose an extreme sell tax, require an inaccessible condition before transfer, or route transfer checks through another contract that can change behavior after launch. The unifying mechanism is simple: the token contract or closely related control logic decides who may transfer out, under what conditions, and at what cost.

This is why honeypot tokens sit at the intersection of token standards, decentralized exchange mechanics, and smart-contract trust. The token may present an ERC-20-style surface, with familiar functions like transfer, approve, and transferFrom, while embedding additional logic that turns nominal ownership into unusable ownership. Understanding honeypots means understanding where “I hold the token” parts ways with “I can freely dispose of the token.”

How can token ownership differ from the ability to sell it?

The easiest way to understand a honeypot token is to start from a puzzle. On-chain, a wallet can show a positive token balance. A chart can show rising price. A liquidity pool can exist. Yet a holder may still be unable to realize any of that value. How can all three be true at once?

Because a token balance is only a record inside contract-controlled state. The balance matters, but it is not the whole story. To sell on a decentralized exchange, the token must successfully move from the holder to the pool, usually through a router that calls token transfer logic directly or indirectly. If that transfer path fails, returns false, applies an overwhelming fee, or is conditionally denied, the practical result is the same: the holder cannot exit.

This is the compression point: a honeypot token is not mainly about misleading price; it is about controlling transfer permission at the moment of exit. Price manipulation often helps attract victims, but the trap itself is enforced in transfer logic.

On EVM chains, the baseline expectation comes from ERC-20. The standard defines functions such as transfer(address _to, uint256 _value) and transferFrom(address _from, address _to, uint256 _value), requires them to emit Transfer events, and tells callers to handle a false return value rather than assume success. But ERC-20 is an interface standard, not a guarantee of economic fairness. A token can expose the expected methods and still add extra checks around who is allowed to transfer, when, and how much value survives the transfer.

That flexibility is useful for legitimate designs too, which is part of the problem. Smart contracts often need permissions, fees, anti-bot rules, pausing controls, or upgrade hooks. A honeypot abuses that flexibility by making the transfer path appear open during discovery and closed during exit. What breaks the victim is not that the token lacks a transfer function, but that the function contains logic they did not anticipate.
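The gap between a recorded balance and a usable one can be illustrated with a toy ledger. This is a minimal Python sketch, not a real ERC-20 implementation: the class ToyToken, the blocked_sellers set, and the account names are all hypothetical and exist only to show a transfer function that treats sells differently from buys.

```python
class ToyToken:
    """Hypothetical in-memory ledger; it only mimics the shape of transfer()."""

    def __init__(self, pool: str):
        self.pool = pool
        self.balances: dict[str, int] = {}
        self.blocked_sellers: set[str] = set()

    def mint(self, to: str, amount: int) -> None:
        self.balances[to] = self.balances.get(to, 0) + amount

    def transfer(self, sender: str, recipient: str, amount: int) -> bool:
        # The trap: transfers into the pool (sells) are refused for blocked holders.
        if recipient == self.pool and sender in self.blocked_sellers:
            return False
        if self.balances.get(sender, 0) < amount:
            return False
        self.balances[sender] -= amount
        self.balances[recipient] = self.balances.get(recipient, 0) + amount
        return True


token = ToyToken(pool="pool")
token.mint("pool", 1_000)

# A buy moves tokens from the pool to the holder, and it succeeds.
bought = token.transfer("pool", "alice", 100)

# The holder is then quietly added to the block list.
token.blocked_sellers.add("alice")

# A sell moves tokens from the holder to the pool, and it is refused.
sold = token.transfer("alice", "pool", 100)

print(bought, sold, token.balances["alice"])
```

The balance stays on the ledger after the failed sell, which is exactly why a wallet or explorer view alone says nothing about exit rights.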

How does a token sale execute on a decentralized exchange?

A worked example makes this concrete. Imagine a user buying a newly launched token through a Uniswap-style pool on Ethereum, BNB Smart Chain, or Base. The front end shows a pair, a price, and a swap button. The user spends ETH, BNB, or another paired asset, and the router coordinates the trade so the new token arrives in the user’s wallet.

So far, nothing unusual has to happen. The scammer wants buying to work. In fact, smooth buying is essential because it creates social proof, chart activity, and rising on-paper valuations. Other users see successful transactions and infer market legitimacy.

Now the user decides to sell. The interface asks for approval if needed, then routes the sell transaction. Mechanically, the sell requires the token to leave the user’s wallet and move into the pool or router-controlled path. That is the crucial checkpoint. If the token contract checks whether the sender is blacklisted, whether the recipient is a pool, whether selling is currently enabled, whether a hidden threshold is met, or whether an external controller approves the transfer, it can make this transaction fail even though the earlier buy succeeded.

Sometimes the transaction simply reverts. Sometimes the token returns false, which tooling must explicitly handle because the ERC-20 specification warns that callers must not assume false is never returned. Sometimes the transfer “succeeds” but a confiscatory fee leaves the seller with effectively nothing. From the user’s perspective these feel like different problems. From the mechanism’s perspective they are the same family: the token makes exit non-viable.
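One way to see that these three failures belong to the same family is to classify them with a single function. The sketch below is illustrative only: the Revert exception stands in for an EVM revert, the four sell functions are hypothetical stand-ins for token behavior, and the 50% min_keep_ratio threshold is an arbitrary assumption rather than an established cutoff.

```python
from enum import Enum


class SellOutcome(Enum):
    REVERTED = "reverted"
    RETURNED_FALSE = "returned_false"
    CONFISCATORY_FEE = "confiscatory_fee"
    OK = "ok"


class Revert(Exception):
    """Stands in for an EVM revert in this toy model."""


def classify_sell(sell_fn, amount: int, min_keep_ratio: float = 0.5) -> SellOutcome:
    """sell_fn(amount) returns (success, amount_received_by_pool) or raises Revert."""
    try:
        success, received = sell_fn(amount)
    except Revert:
        return SellOutcome.REVERTED
    if not success:
        return SellOutcome.RETURNED_FALSE
    if received < amount * min_keep_ratio:
        return SellOutcome.CONFISCATORY_FEE
    return SellOutcome.OK


def reverting_sell(amount):
    raise Revert("selling disabled")


def silent_sell(amount):
    return (False, 0)  # no revert, just a false return value


def taxed_sell(amount):
    return (True, amount // 100)  # "succeeds", but 99% is withheld


def honest_sell(amount):
    return (True, amount)


results = {
    fn.__name__: classify_sell(fn, 1_000)
    for fn in (reverting_sell, silent_sell, taxed_sell, honest_sell)
}
for name, outcome in results.items():
    print(name, outcome.value)
```

Only the last case is a viable exit. A checker that looks for reverts alone would pass both silent_sell and taxed_sell.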

This is why a honeypot often fools people who think in terms of balances rather than transfer paths. The wallet can still display tokens. Explorers can still show holdings. Even event logs can look superficially normal. But the market value is not realizable if the transfer outflow is under adversarial control.

What techniques do honeypot tokens use to block or cripple sales?

| Method | How it blocks | Seller outcome | Detectability | Typical sign |
|---|---|---|---|---|
| Address blacklist/whitelist | Checks sender or recipient | Sell tx reverts or denied | Visible if code explicit | Repeated failed sells |
| Confiscatory sell tax | High fee on transfers | Net proceeds nearly zero | Detected by simulation | Huge fee shown on sell |
| Stateful control | Owner flips rules later | Sellability changes over time | Missed by point‑in‑time code scans | Sellable then blocked later |
| External indirection | Calls helper contract for checks | Behavior hidden off main code | Requires call‑graph review | Opaque external dependencies |
| Edge‑case logic | Min thresholds or balance edits | Apparent balance not transferable | Subtle in static review | Absurd min‑sell amount |

The implementations vary, but they cluster around a small number of control levers. The first is address-based permissioning. A contract may maintain a blacklist or whitelist and check it inside transfer logic. CertiK describes a basic version in which buyers are added to a blacklist after purchase, and later sell attempts are rejected if the seller’s address is on that list. The same idea can be inverted into a whitelist: only privileged addresses are allowed to sell.

The second lever is economic obstruction rather than absolute denial. Instead of reverting, the token may impose a sell tax so high that selling becomes meaningless. Academic work on scam-token detection highlights this pattern explicitly: restrictive selling conditions can include high selling taxes or limits that make tokens effectively unsellable or illiquid. This matters because many users think “honeypot” means only “hard revert.” In practice, a token that always lets you sell 1% of your holdings while confiscating the rest can be just as trapping.
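A rough arithmetic sketch shows why a high sell tax works as a trap without any revert. It assumes a constant-product pool with a 0.3% pool fee (the Uniswap V2-style quote formula) and a token that withholds a share of every sell before the remainder reaches the pool. The reserve sizes, the 99% tax, and the function names are hypothetical, and real fee-on-transfer tokens interact with routers in more complicated ways.

```python
def amount_out(amount_in: int, reserve_in: int, reserve_out: int) -> int:
    """Constant-product quote with a 0.3% pool fee (Uniswap V2-style formula)."""
    amount_in_with_fee = amount_in * 997
    return (amount_in_with_fee * reserve_out) // (reserve_in * 1000 + amount_in_with_fee)


def sell_proceeds(tokens_sold: int, sell_tax_bps: int,
                  token_reserve: int, quote_reserve: int) -> int:
    """Quote-asset proceeds after the token withholds sell_tax_bps of the transfer."""
    tokens_reaching_pool = tokens_sold * (10_000 - sell_tax_bps) // 10_000
    return amount_out(tokens_reaching_pool, token_reserve, quote_reserve)


# Hypothetical pool: 1,000,000 tokens against 100,000 units of the quote asset.
TOKEN_RESERVE, QUOTE_RESERVE = 1_000_000, 100_000

untaxed = sell_proceeds(10_000, 0, TOKEN_RESERVE, QUOTE_RESERVE)
taxed = sell_proceeds(10_000, 9_900, TOKEN_RESERVE, QUOTE_RESERVE)  # 99% sell tax

print(untaxed, taxed)
```

Both sells complete, but the taxed one returns roughly a hundredth of the untaxed proceeds, so the holder's displayed position is almost entirely unrealizable.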

The third lever is stateful or dynamic control. The token’s behavior can change after deployment. A scanner may test the token while it is temporarily sellable, only for the owner or related controller contract to later update a tax, enable a blacklist, or change an external dependency. Honeypot.is explicitly warns that a token not classified as a honeypot at scan time may still change later. That warning is not a disclaimer by habit; it follows from the mechanism. If the sell restriction depends on mutable state, point-in-time inspection has a built-in limit.

The fourth lever is indirection through external contracts. Instead of placing the obvious malicious rule directly in the visible token code, a token can call another contract to determine whether a transfer is allowed or what fee applies. This makes manual inspection harder because the dangerous behavior may live outside the main token contract or may be activated through ownership and access-control pathways. Research on honeypot tokens notes that implementations often involve external contract interactions, blacklists or whitelists, and liquidity-pool manipulations.
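The effect of indirection can be sketched with a token whose visible code only says "ask the controller." Everything here is hypothetical: IndirectToken, AllowAll, and BlockSellsToPool are toy Python classes, and the owner check that would normally guard set_controller is omitted for brevity.

```python
class AllowAll:
    """Hypothetical helper that approves every transfer."""

    def is_allowed(self, sender: str, recipient: str) -> bool:
        return True


class BlockSellsToPool:
    """Hypothetical replacement helper: only insiders may transfer into the pool."""

    def __init__(self, pool: str, insiders: set[str]):
        self.pool = pool
        self.insiders = insiders

    def is_allowed(self, sender: str, recipient: str) -> bool:
        return recipient != self.pool or sender in self.insiders


class IndirectToken:
    """Toy token whose own code contains no visible sell restriction."""

    def __init__(self, controller):
        self.controller = controller
        self.balances: dict[str, int] = {}

    def set_controller(self, controller) -> None:
        # In a real contract this would be owner-gated; omitted in this sketch.
        self.controller = controller

    def transfer(self, sender: str, recipient: str, amount: int) -> bool:
        if not self.controller.is_allowed(sender, recipient):
            return False
        if self.balances.get(sender, 0) < amount:
            return False
        self.balances[sender] -= amount
        self.balances[recipient] = self.balances.get(recipient, 0) + amount
        return True


token = IndirectToken(AllowAll())
token.balances = {"pool": 1_000, "alice": 100, "deployer": 100}

sell_before = token.transfer("alice", "pool", 10)       # allowed at launch
token.set_controller(BlockSellsToPool("pool", insiders={"deployer"}))
sell_after = token.transfer("alice", "pool", 10)        # now refused
insider_sell = token.transfer("deployer", "pool", 10)   # insiders still exit

print(sell_before, sell_after, insider_sell)
```

Reading IndirectToken.transfer alone reveals nothing malicious. The rule that matters lives in whichever controller is currently installed.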

A further variation is fake compliance through edge-case logic. For example, a token may technically allow sales above a minimum amount, but set that minimum absurdly high, even above realistic circulating supply. Or it may alter a user’s effective balance in contract state so the visible position and the transferable position diverge. CertiK documents both “minimum-sell-amount” and “balance-change” patterns as concrete examples of how scammers preserve the appearance of normal token ownership while removing actual exit rights.
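The minimum-sell pattern reduces to a simple comparison once the relevant numbers are known. The helper below is a hypothetical sketch: it assumes the threshold, the holder's balance, and the total supply have already been read from the contract, and the example figures are invented.

```python
def min_sell_reachability(min_sell: int, holder_balance: int, total_supply: int) -> str:
    """Classify whether a minimum-sell threshold can ever be met."""
    if min_sell > total_supply:
        return "unreachable for everyone"
    if min_sell > holder_balance:
        return "unreachable for this holder"
    return "reachable"


# Hypothetical token: 1,000,000 supply, but sells must be at least 1,000,000,000.
verdict = min_sell_reachability(
    min_sell=1_000_000_000, holder_balance=5_000, total_supply=1_000_000
)
print(verdict)
```

A threshold above total supply means the sell path exists on paper but can never execute for any holder.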

Why can't static code review reliably detect honeypot tokens?

A smart reader might ask: if the mechanism lives in contract logic, why not just inspect the code and be done with it?

Sometimes that works. If the contract is verified and the blacklist or sell gate is obvious, static review can reveal the trap quickly. But many honeypots are difficult precisely because the dangerous condition is subtle, obfuscated, or not always active. A transfer function may look ordinary until it reaches a helper call with an innocuous name. A tax variable may be owner-controlled and changed after launch. A sell restriction may trigger only when the recipient is a known liquidity pool. And some scams rely on combinations of conditions that are individually common in legitimate tokens.

This is why research has argued that contract-only analysis is often insufficient for detecting many token scams, including honeypots. The problem is not merely syntax; it is behavior over time. A token can be benign-looking at deployment, suspicious only after a state change, or malicious only in interaction with specific counterparties such as a DEX pair.

That temporal aspect matters. A honeypot is often a staged scam. The attacker sets up the token, seeds liquidity, allows purchases, promotes price action, and only then turns the transfer asymmetry into a victim-facing reality. Some controls may be active from the start; others may be introduced after volume appears. If your detection method sees only the contract text and not the evolving transaction behavior, it may miss the point at which the trap actually closes.

When and how does transaction simulation detect honeypots?

Because the decisive question is “Can this token be sold right now under realistic conditions?”, simulation is a natural defense. Honeypot.is describes its detector in exactly these terms: it simulates both a buy and a sell transaction to determine whether the token behaves like a honeypot. That approach aligns with the underlying mechanism. If the scam is enforced at execution time, then execution testing is often more informative than reading declarations.

Simulation has an important advantage over pure static inspection. It asks the practical question users care about: not whether a function exists, but whether a trade path succeeds. If a sell reverts, returns failure, or yields pathological output under the same routing conditions a real user would face, the detector can flag the token even if the code is obfuscated.

But simulation is not magic. It inherits assumptions from the state it observes. If the owner can later change the sell tax, flip a blacklist bit, or update an external controller, today’s successful simulation does not guarantee tomorrow’s exit. Honeypot.is says this directly: a token that is not a honeypot now may still become one later. That is not a minor caveat. It is one of the central truths of adversarial smart-contract systems.
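The point-in-time limit can be shown with a toy round-trip simulation. This sketch is not how any particular detector works: TaxedToken, simulate_round_trip, and the probe account are hypothetical, and the simulation simply runs a buy and a sell on a throwaway copy of current state.

```python
import copy


class TaxedToken:
    """Toy token with an owner-adjustable sell tax in basis points (hypothetical)."""

    def __init__(self, sell_tax_bps: int = 0):
        self.sell_tax_bps = sell_tax_bps
        self.balances = {"pool": 1_000_000}

    def buy(self, buyer: str, amount: int) -> None:
        self.balances["pool"] -= amount
        self.balances[buyer] = self.balances.get(buyer, 0) + amount

    def sell(self, seller: str, amount: int) -> int:
        """Move tokens to the pool and return how many arrive after tax."""
        if self.balances.get(seller, 0) < amount:
            raise ValueError("insufficient balance")
        arriving = amount * (10_000 - self.sell_tax_bps) // 10_000
        self.balances[seller] -= amount
        self.balances["pool"] += arriving
        return arriving


def simulate_round_trip(token: TaxedToken, amount: int = 1_000) -> float:
    """Buy then sell on a copy of current state; return the fraction recovered."""
    sandbox = copy.deepcopy(token)  # the live state is never mutated
    sandbox.buy("probe", amount)
    return sandbox.sell("probe", amount) / amount


live = TaxedToken(sell_tax_bps=300)   # 3% tax at scan time
before = simulate_round_trip(live)

live.sell_tax_bps = 10_000            # owner raises the tax to 100% after the scan
after = simulate_round_trip(live)

print(before, after)
```

The first result is an accurate answer to "can this be sold right now?" and says nothing about the second, because the tax is mutable state that the owner controls.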

There is also a simpler technical limitation. EVM tokens do not all fail in the same way. Some revert. Some return false. Some produce strange side effects. The ERC-20 standard itself warns integrators to handle false return values. Good detectors therefore need to inspect both reverts and returned results, and ideally estimate whether the realized proceeds after fees make the sale economically meaningful.

This is also where transaction simulation connects to neighboring security ideas. For many user-facing checks, transaction simulation is the most direct mitigation for honeypots because it tests the exact path the scam tries to break: the exit transaction.

Honeypot vs. rug pull: how do the attacks differ?

| Scam | What is blocked | Primary mechanism | Best defenses | Typical signal |
|---|---|---|---|---|
| Honeypot | Selling by ordinary holders | Transfer gates, taxes, whitelists | Simulate sells; check transfer logic | All‑green chart; few sells |
| Rug pull | Market liquidity for everyone | Drain or lock liquidity | Verify liquidity locks; ownership analysis | Sudden liquidity drain; rug alerts |

Honeypot tokens are often mentioned alongside rug pulls because both target speculative buyers in thin, fast-moving markets. But the mechanics differ in an important way.

A honeypot traps the holder by restricting the holder’s ability to sell. A rug pull typically destroys the market by draining or removing liquidity, collapsing the conditions under which anyone could trade. In a honeypot, the market may appear alive while victims are selectively unable to exit. In a rug pull, the market itself is often dismantled or rendered functionally empty.

The distinction matters because the defenses differ. For a rug pull, liquidity controls, ownership analysis, and lock verification are central. For a honeypot, the key question is whether token transfer and sell conditions remain open to ordinary holders. The two can also coexist. A scammer may first trap buyers with sell restrictions and later remove liquidity or abandon the token. CoinMarketCap’s consumer glossary even notes this overlap when describing manipulated coins that lure investors, prevent withdrawal, and can later be rug-pulled.

What on-chain signals suggest a token might be a honeypot?

Honeypots tend to produce a recognizable mismatch between visible market activity and realizable exit. CertiK points to a simple symptom: a chart that is “all green,” with zero or very few sells, is a strong warning sign. The reason is mechanical. If buyers can enter but ordinary holders cannot exit, buy counts accumulate while sell counts remain unusually sparse or confined to insider wallets.

That said, this signal is only a heuristic. In the earliest life of a genuine token, sells may also be low for innocent reasons. Low sell count becomes meaningful when combined with other evidence: failed sell simulations, owner-controlled transfer logic, suspicious access control, unverifiable contract code, or marketing patterns that pressure immediate entry.
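The buy/sell asymmetry can be computed from a trade list in a few lines. The sketch below uses invented trade records and a hypothetical insider set. The min_buys threshold is arbitrary, and, as noted above, a one-sided result is a prompt for further checks rather than a verdict.

```python
def trade_asymmetry(trades, insiders=frozenset()):
    """trades: iterable of (wallet, side, succeeded) tuples."""
    trades = list(trades)
    buys = sum(1 for _, side, ok in trades if side == "buy" and ok)
    outsider_sells = sum(
        1 for wallet, side, ok in trades
        if side == "sell" and ok and wallet not in insiders
    )
    failed_sells = sum(1 for _, side, ok in trades if side == "sell" and not ok)
    return {"buys": buys, "outsider_sells": outsider_sells, "failed_sells": failed_sells}


def looks_one_sided(stats, min_buys: int = 5) -> bool:
    """Heuristic only: enough buys, yet no ordinary holder has sold successfully."""
    return stats["buys"] >= min_buys and stats["outsider_sells"] == 0


# Hypothetical history: five buys, two failed sells, one sell by the deployer.
trades = [
    ("w1", "buy", True), ("w2", "buy", True), ("w3", "buy", True),
    ("w4", "buy", True), ("w5", "buy", True),
    ("w1", "sell", False), ("w2", "sell", False),
    ("deployer", "sell", True),
]
stats = trade_asymmetry(trades, insiders={"deployer"})
print(stats, looks_one_sided(stats))
```

Excluding insider wallets matters: a chart can show some sells while every one of them belongs to the deployer.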

Research on new-token markets gives a stronger version of the same point. A recent empirical study of newly created Uniswap V2 tokens found that a very large share of apparent market value was trapped in honeypot-classified tokens. The deeper lesson is not the exact percentage, which depends on detection methodology and sampling window. It is that headline price and nominal market value can be largely illusory when exit rights are impaired. A wallet balance times a quoted price is not the same thing as cashable value.

This also explains the emotional pull of honeypots. Victims may watch their holdings appear to appreciate dramatically. On paper, they are making money. Mechanically, they are not. The scam exploits the gap between valuation as displayed and valuation as executable.

Are honeypot tokens limited to Ethereum, or do they appear on other chains?

The best-known honeypot patterns come from EVM ecosystems because Ethereum-compatible chains share similar token and DEX mechanics. Avalanche’s C-Chain is explicitly EVM-compatible, which means Ethereum-style token contracts and tooling can generally be ported there. The same broad logic applies on BNB Smart Chain and Base, both of which are also common environments for simulation-based honeypot detectors.

The chain-specific details can differ, but the core structure does not: if a token or related program controls outbound transfer conditions, it can create a one-way market. On Solana, the standard SPL Token program documents basic transfer, approval, authority, and account-freeze operations. Those baseline instructions are not themselves a guide to honeypot scams, but they show the broader point that token systems always live inside a permission and execution model. Whether a “honeypot token” label is commonly used on a given chain depends partly on ecosystem conventions, but the underlying risk (ownership that cannot be freely exited) is not unique to Ethereum.

On Polkadot smart-contract chains, the docs emphasize that uploaded contracts are permissionless and untrusted. That framing is useful here. Runtime safety controls such as gas metering protect the chain from malicious code exhausting resources, but they do not by themselves guarantee fair token transfer semantics for users. The security problem of honeypots is not chain liveness; it is adversarial application logic.

So the right generalization is modest: honeypot tokens are most discussed in EVM token markets, but the underlying security lesson applies anywhere users infer economic rights from surface-level balances without testing actual exit conditions.

How should users and developers reduce the risk of honeypot tokens?

| Actor | Primary step | Concrete action | Why it helps |
|---|---|---|---|
| User | Verify sellability | Simulate buy+sell; inspect charts | Tests the real exit path |
| Developer | Limit bespoke logic | Use OpenZeppelin; restrict owner powers | Reduces hidden transfer traps |
| Wallets / DEXs / Monitors | Combine methods | Static analysis + simulation + history | Catches code and runtime traps |

For users, the most important habit is to treat sellability as something to verify, not assume. A token matching a familiar interface or appearing in a popular DEX UI says little by itself. Simulation tools are useful because they test the practical sell path. But they should be read as current-state checks, not permanent guarantees.

Behavioral signals help too. An unusually one-sided chart, a token that surges while sell reports accumulate, opaque or unverified contracts, privileged owner controls, and elaborate claims about “anti-dump” or special sell permissions should all raise suspicion. The SQUID case became famous for exactly this kind of mismatch: extraordinary price gains paired with repeated reports that holders could not sell.

For developers building legitimate tokens, the lesson runs in the opposite direction. Avoid bespoke transfer logic unless it is truly necessary. Use community-vetted implementations such as standard ERC-20 libraries from OpenZeppelin rather than modifying token code casually. OpenZeppelin explicitly recommends using installed library code as-is and not copy-pasting or hand-editing it. That advice is partly about reducing bugs, but it also reduces the space in which hidden transfer restrictions can creep in.

If a project really does need privileged controls (pausing, fees, anti-bot windows, or allowlists), those controls should be narrow, auditable, and clearly disclosed. The danger zone is not “any custom logic,” but custom logic that lets insiders selectively decide whether holders can exit. Access control is the hinge. A token whose owner can arbitrarily alter transfer rights is asking users to trust governance over code, even if the interface looks decentralized.

For wallets, DEX interfaces, and monitoring systems, the practical implication is to combine methods. Static inspection can catch obvious blacklists or mutable tax hooks. Simulation can test the live sell path. Transaction-history analysis can identify asymmetries such as abundant buys with vanishingly few successful sells. Research systems like TokenScout push this further by modeling token activity as temporal transaction graphs, precisely because behavior over time often reveals scams more reliably than code shape alone.
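A monitoring system that combines the three methods might start from something like the sketch below. It is an assumption-laden illustration, not a description of TokenScout or any production tool: the Evidence fields, the thresholds, and the flag wording are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    owner_can_change_tax: bool         # from static inspection
    has_transfer_blacklist: bool       # from static inspection
    sell_simulation_recovered: float   # fraction recovered in a simulated sell (0.0 if it failed)
    successful_buys: int               # from transaction history
    successful_outsider_sells: int     # from transaction history


def red_flags(e: Evidence, min_recovered: float = 0.5, min_buys: int = 20) -> list[str]:
    """Collect warnings from each method; thresholds are illustrative only."""
    flags = []
    if e.owner_can_change_tax or e.has_transfer_blacklist:
        flags.append("static: owner-controlled transfer logic")
    if e.sell_simulation_recovered < min_recovered:
        flags.append("simulation: sell fails or returns too little")
    if e.successful_buys >= min_buys and e.successful_outsider_sells == 0:
        flags.append("history: many buys, no outsider sells")
    return flags


# Hypothetical token that passes today's simulation but fails the other two checks.
evidence = Evidence(
    owner_can_change_tax=True,
    has_transfer_blacklist=False,
    sell_simulation_recovered=0.97,
    successful_buys=150,
    successful_outsider_sells=0,
)
flags = red_flags(evidence)
print(flags)
```

In this example the simulation alone would have cleared the token, while static review and history both raise warnings, which is the case for combining methods.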

Conclusion

A honeypot token is a token that lets you enter the trade but obstructs your exit. Everything else (the marketing, the chart, the hype, even the apparent price) is secondary to that core asymmetry.

The durable idea to remember is simple: holding a token is only valuable if the transfer rules let you realize that value. In decentralized markets, ownership is a contract-defined permission set, not a guarantee. Honeypots exploit the difference.

How do you secure your crypto setup before trading?

Secure your setup by verifying sellability, checking contract permissions, and testing small real trades before committing significant funds. On Cube Exchange (non‑custodial, MPC signing), follow a short verification and test-trade workflow to confirm a token’s exit path before you scale a position.

- Fund your Cube account with a small test deposit (for example, $10–$50 in USDC or the chain native asset) using fiat on‑ramp or a supported crypto transfer.

- Inspect the token contract on the chain explorer: look for owner-controlled sell taxes, blacklist/whitelist logic, external controller addresses, or unverified code that could alter transfer behavior.

- Run a concrete buy+sell test using the same DEX/router, slippage, and gas you would use in production. Attempt a tiny sell immediately after the buy to confirm the transfer path does not revert and net proceeds remain meaningful after fees/taxes.

- If the test trade succeeds, place your real order on Cube with a controlled size (use a limit order if you need price control). If the test fails or shows confiscatory fees, do not trade and escalate to further review or avoid the token.

Frequently Asked Questions

How does a honeypot token block selling?

By putting extra checks or costs into the token’s transfer path so that buys succeed but sell transfers are denied, taxed, or rerouted. Common techniques include blacklists/whitelists, very high sell taxes, external controller contracts that flip behavior after launch, and minimum-sell thresholds that are effectively unreachable.

Can reading the contract code reliably reveal a honeypot?

Sometimes. If the malicious rule is visible and immutable, static code review will reveal it. Often it will not, because honeypot behavior can be obfuscated, delegated to external contracts, or enabled later by mutable state, so code-only inspection frequently misses staged or indirect traps.

Is transaction simulation a reliable way to detect honeypots?

Simulating a realistic buy+sell path is the most direct test of current sellability because honeypots enforce the trap at execution time. However, simulation is a point‑in‑time check and can be invalidated later if the owner changes taxes, blacklists, or external controllers. Detectors must also inspect both reverts and false returns.

How does a honeypot differ from a rug pull?

A honeypot blocks holders from realizing value by restricting transfers, whereas a rug pull removes or drains liquidity to collapse the market. The two are different attack mechanics but can be combined in a single scam (e.g., sell-gate first, liquidity withdrawal later).

What on-chain signals suggest a token might be a honeypot?

Heuristics include a chart that’s 'all green' or has almost no sells, many buy transactions but few successful sells, unverified or owner‑modifiable code, privileged owner controls or external callouts in transfer logic, and failed sell simulations. None alone is definitive, but the combination raises strong suspicion.

Does ERC-20 compliance guarantee that a token can be sold?

No. ERC-20 defines an interface, not a guarantee of fair transfer semantics. Tokens may still add owner-controlled checks, taxes, or external calls, and the standard even warns integrators to handle returned false values and not assume success.

How can users and developers reduce the risk of honeypot tokens?

Treat sellability as something to verify: run a sell simulation, inspect for owner‑controlled tax/blacklist hooks or external controller calls, prefer well‑audited standard libraries (e.g., community-vetted OpenZeppelin implementations), and combine static review, simulation, and transaction‑history analysis before trusting a new token.

Are honeypot tokens limited to Ethereum?

No. The underlying risk is cross‑chain: while honeypots are most discussed in EVM ecosystems, the same class of attack (surface‑level balances that are not freely transferable) can occur on Solana, Avalanche C‑Chain, Polkadot smart‑contract parachains, and any platform where transfer semantics are defined by program logic.

Related reading