What Is a Rug Pull?

Learn what a rug pull is in crypto, how it works mechanically, the main attack paths, and which controls actually reduce rug-pull risk.

Introduction

“Rug pull” is the name for a family of crypto scams in which insiders or privileged actors extract value from users after attracting their money into a token, protocol, or liquidity pool. What makes the topic important is that rug pulls often do not look like classic software hacks. In many cases, the system behaves exactly as its creators designed it to behave; the real problem is that users misunderstood who still controlled the critical levers.

That is the central idea to keep in view. A rug pull is usually not about code “breaking.” It is about trust being placed where code, governance, or market structure did not actually remove it. If a team can mint more tokens, upgrade the contract, withdraw liquidity, freeze selling, or spend assets via previously granted approvals, then the project may appear decentralized while still containing an exit door for insiders.

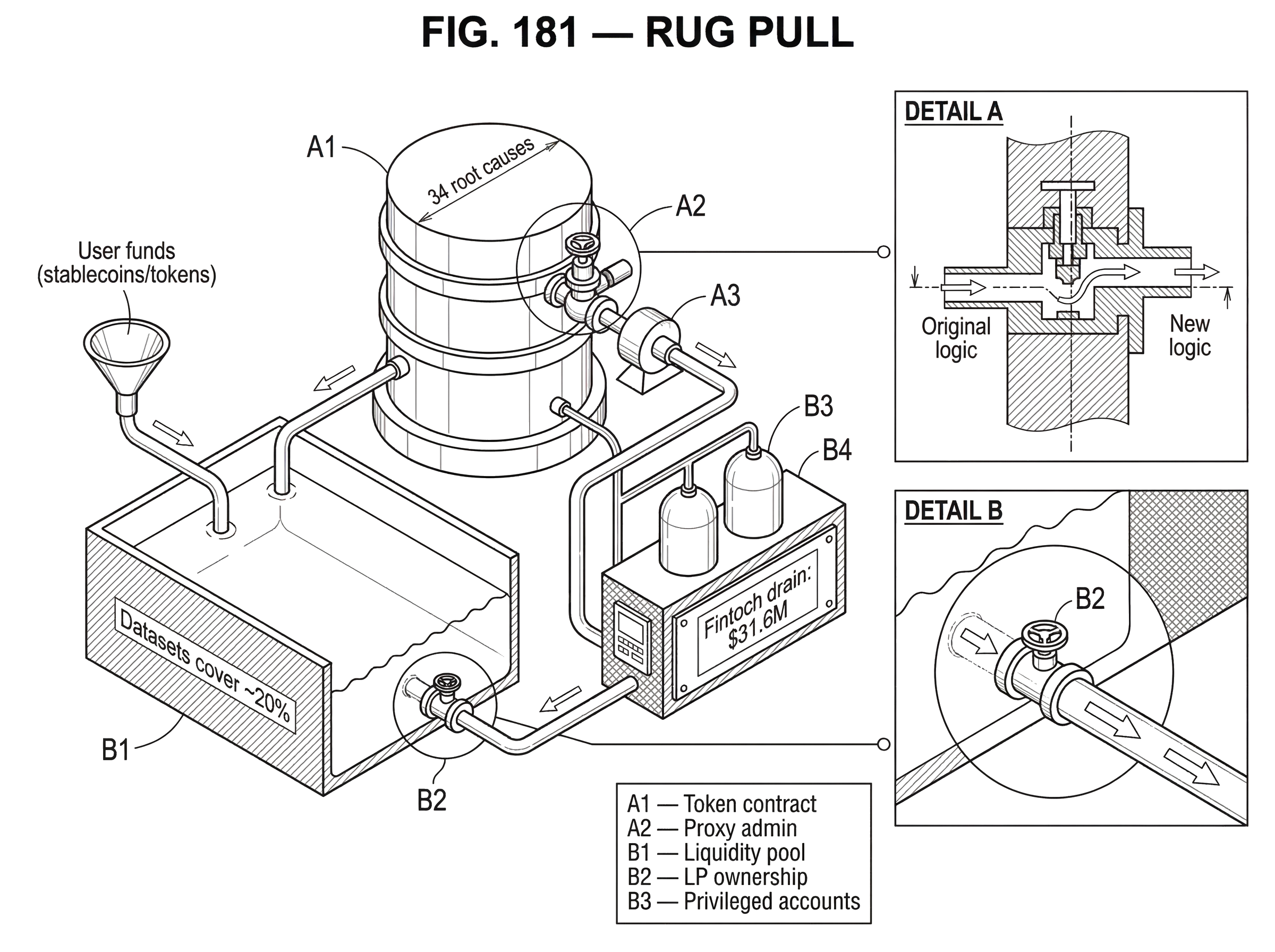

Research and incident data show both the scale of the problem and the difficulty of pinning it down cleanly. A recent systematization paper describes rug pulls as a grave threat to the crypto ecosystem and builds a taxonomy of 34 root causes, noting that public datasets cover only a small share of them. Another paper separates rug pulls into two broad mechanisms: those driven by malicious smart-contract functions and those driven by trading behavior without necessarily malicious code. That split is useful because it explains why some rug pulls can be found by reading contracts, while others only become visible when money starts moving.

How does retained control enable a rug pull?

The simplest way to understand a rug pull is to ask a blunt question: after users buy in, who can still change the game? If the answer is “the deployer, the treasury signers, the proxy admin, the liquidity controller, or a privileged contract,” then you are looking at the core risk.

In ordinary finance, users often rely on legal contracts, audited reporting, and institutional enforcement to limit what insiders can do with pooled money. Public blockchains replace some of that trust with transparent code and on-chain rules. But that replacement is incomplete unless the important powers are actually constrained. A token can be on-chain, the liquidity pool can be public, and the project can still be structured so that a small group can remove liquidity, push a malicious upgrade, mint themselves inventory, or drain approved wallets.

So the invariant is not “the project uses smart contracts.” The invariant that matters is whether the path from user deposits to insider extraction is still open. Rug pulls differ in style, but they share that structure. Users commit capital because they believe the rules are stable enough for the investment thesis to make sense. Insiders then exploit a retained privilege, hidden condition, or market asymmetry to capture that capital.

This is why the phrase “exit scam” is often used, but it can be slightly misleading. Some rug pulls are abrupt disappearances after fundraising. Others are highly technical and happen through code paths that were present from day one. Still others look like normal market activity until you notice that the team controlled both supply and liquidity and was effectively trading against its own users.

What types of rug pulls exist and how do they differ?

| Type | Trigger | Detection method | Primary mitigation |
|---|---|---|---|
| Contract-related | Malicious contract functions | Static code analysis | Limit privileges and audit |
| Transaction-related | Insider supply dump | Trading behavior monitoring | Limit holder concentration |

It is tempting to define a rug pull as “developers take the money and leave.” That is directionally right, but too vague to be useful. The more precise view is that rug pulls sit at the intersection of privileged control, user expectation, and extractive execution.

The two-category split from the CRPWarner paper helps here. A contract-related rug pull happens when malicious or dangerous functionality is embedded in the smart contract itself. The code may include hidden minting rights, transfer restrictions, owner-only withdrawals, upgrade hooks, blacklist functions, fee manipulation, or external calls that let the privileged actor redirect value. In these cases, the contract is part of the scam mechanism.

A transaction-related rug pull is different. Here, the extraction may happen through trading behavior rather than an obviously malicious contract function. For example, a team may create hype, seed liquidity, attract buyers, and then dump a large pre-allocated supply into that liquidity. The contract may be technically simple, but the market structure is rigged because insiders own the inventory and control the timing.

That distinction matters because people often ask the wrong question. They ask, “Was the code hacked?” when the better question is, “What rights did insiders retain, and how could those rights be used against users?” A contract can be bug-free and still be unsafe as an investment if its control model makes insider extraction easy.
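For the transaction-related case, holder concentration is one signal that can be computed before any dump happens. The following is a minimal sketch, assuming a hypothetical balance snapshot; the wallet labels, the numbers, and the `top_holder_share` helper are illustrative and not taken from any real token or tool.

```python
def top_holder_share(balances: dict[str, float], top_n: int = 1) -> float:
    """Return the fraction of total supply held by the top_n largest holders."""
    total = sum(balances.values())
    if total <= 0:
        raise ValueError("total supply must be positive")
    largest = sorted(balances.values(), reverse=True)[:top_n]
    return sum(largest) / total


# Hypothetical snapshot: the team kept most of the supply at launch.
holders = {
    "team_wallet": 9_000_000,
    "dex_pool": 100_000,
    "alice": 500_000,
    "bob": 400_000,
}

team_share = top_holder_share(holders, top_n=1)
print(f"Largest holder controls {team_share:.0%} of supply")
```

A high share does not prove bad intent, but it shows that a single seller holds enough inventory to overwhelm the pool whenever they choose.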

How does a liquidity‑and‑supply rug pull unfold in practice?

Imagine a team launches a token and pairs it against a stablecoin in a decentralized exchange pool. Early marketing tells a familiar story: fair launch, community growth, future utility. Buyers see a live market, rising price, and visible on-chain liquidity, which creates the impression that the token has become a real asset with a discoverable market price.

What many buyers may not notice is that the team kept most of the token supply at launch and also controls either the liquidity-provider position or a privileged token function. As attention grows, outside users buy the token with stablecoins. This does two things at once. It pushes the price up, and it loads the liquidity pool with more valuable assets on the other side of the pair.

Now the insiders use the control they never really gave up. If they hold a large inventory of tokens, they can sell that inventory into the pool, extracting the stablecoins users provided. If they control minting, they may create fresh tokens and dump those instead. If they control the liquidity position, they may withdraw the pool assets directly. The exact path varies, but the mechanism is the same: users supplied the valuable side of the market, insiders retained the lever that lets them take it.
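The arithmetic behind that sequence can be sketched with a toy constant-product pool. This is an illustration under stated assumptions: a fee-free `token * stable = k` pricing rule, hypothetical reserve sizes, and a hypothetical insider inventory. Real pools charge fees and may use other curves.

```python
from dataclasses import dataclass


@dataclass
class Pool:
    """Toy constant-product pool (token * stable = k), with no fees."""
    token: float   # token reserve
    stable: float  # stablecoin reserve

    def price(self) -> float:
        """Spot price of one token, in stablecoins."""
        return self.stable / self.token

    def buy_tokens(self, stable_in: float) -> float:
        """Swap stablecoins in, tokens out, keeping token * stable constant."""
        k = self.token * self.stable
        new_stable = self.stable + stable_in
        new_token = k / new_stable
        tokens_out = self.token - new_token
        self.token, self.stable = new_token, new_stable
        return tokens_out

    def sell_tokens(self, token_in: float) -> float:
        """Swap tokens in, stablecoins out, keeping token * stable constant."""
        k = self.token * self.stable
        new_token = self.token + token_in
        new_stable = k / new_token
        stable_out = self.stable - new_stable
        self.token, self.stable = new_token, new_stable
        return stable_out


# Hypothetical launch: the team seeds a small pool and keeps most of the supply.
pool = Pool(token=1_000_000.0, stable=10_000.0)
insider_inventory = 9_000_000.0

start_price = pool.price()
tokens_bought = pool.buy_tokens(90_000.0)         # outside buyers arrive
peak_price = pool.price()
extracted = pool.sell_tokens(insider_inventory)   # insiders dump retained supply
final_price = pool.price()

print(f"price: {start_price:.4f} -> {peak_price:.4f} -> {final_price:.6f}")
print(f"stablecoins extracted by insiders: {extracted:,.0f}")
```

In this toy run, buyers add 90,000 stablecoins and lift the price from 0.01 to 1.00. The insiders' sale then removes roughly 98,900 of the 100,000 stablecoins in the pool, which is their own 10,000 seed plus nearly everything the buyers paid.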

This is close to what CertiK described in its report on Fintoch, where users were induced to buy the FTH token with BSC-USD and the operators then dumped tokens minted during deployment to drain roughly $31.6M from the pool. The detail that matters is not just that tokens were sold. It is that the project’s structure let insiders create or hold the inventory needed to unload against user capital.

Notice what makes this different from an ordinary market crash. In a normal crash, many participants lose money because beliefs about value change. In a rug pull, the insiders often have a structural advantage they built in advance. They are not just bearish sellers. They are the people who defined the supply, permissions, or liquidity terms under which everyone else entered.

Which on‑chain places hide the power that enables rug pulls?

| Power location | What it enables | Detection signal | Best mitigation |
|---|---|---|---|
| Token supply | Minting and dilution | Sudden supply increase | Renounce or multisig control |
| Liquidity control | Withdraw or fake LP lock | Liquidity balance drops | On-chain LP lock and timelock |
| Admin authority | Upgrade or change rules | Owner or proxy admin present | Multisig plus timelock |
| User approvals | Contract spends user tokens | Active unlimited allowances | Revoke allowances regularly |

The broad taxonomy in recent research is useful because it shows that “rug pull” is not one exploit signature. It is a family of extraction paths. The specific categories are numerous, but the important unifying principle is simpler: the power usually hides in one of a few places where users assume constraints exist when they do not.

A common place is token supply control. If insiders can mint, burn selectively, or alter balances in ways users do not expect, then the economic meaning of ownership changes. A buyer thinks they own a scarce asset, but the issuer can dilute or manipulate that scarcity after the fact.
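A retained mint right can be illustrated with a toy ledger. This is a sketch only: `MintableToken` and its methods are hypothetical names for this example and do not model any specific token standard or contract.

```python
class MintableToken:
    """Toy token whose owner kept an uncapped mint function."""

    def __init__(self, owner: str, initial_supply: int) -> None:
        self.owner = owner
        self.balances = {owner: initial_supply}

    def total_supply(self) -> int:
        return sum(self.balances.values())

    def transfer(self, sender: str, recipient: str, amount: int) -> None:
        if self.balances.get(sender, 0) < amount:
            raise ValueError("insufficient balance")
        self.balances[sender] -= amount
        self.balances[recipient] = self.balances.get(recipient, 0) + amount

    def mint(self, caller: str, recipient: str, amount: int) -> None:
        # Only the owner may mint, and nothing limits how much.
        if caller != self.owner:
            raise PermissionError("only owner can mint")
        self.balances[recipient] = self.balances.get(recipient, 0) + amount


token = MintableToken(owner="deployer", initial_supply=1_000_000)
token.transfer("deployer", "buyer", 100_000)
share_before = token.balances["buyer"] / token.total_supply()

token.mint("deployer", "deployer", 9_000_000)   # owner inflates supply
share_after = token.balances["buyer"] / token.total_supply()

print(f"buyer share of supply: {share_before:.1%} -> {share_after:.1%}")
```

The buyer's balance never changes, yet their share of supply falls from 10% to 1%, and the newly minted tokens are inventory the owner can sell.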

Another common place is liquidity control. If the team or associated wallets can remove liquidity, fake a liquidity lock, or otherwise regain access to the assets backing the market, then the price users see is partly an illusion. The market is deep only until the controller takes the depth away.

A third place is administrative contract authority. Ownership roles, privileged functions, proxy-admin rights, and upgrade authority all matter because they let someone change the rules after deposits arrive. On Ethereum-like systems, that authority may live in an owner variable, a proxy admin, a multisig, or a governor contract. On Solana, similar power appears as program authority or upgrade authority, which can update or close a program unless revoked. Different architecture, same underlying issue: someone may still control the executable logic users rely on.

A fourth place is user-granted approvals. Many users think the danger ends once they buy a token, but approvals create another attack surface entirely. If a user granted a contract permission to spend tokens on their behalf, then a malicious or later-compromised contract may be able to drain those assets without taking the user’s private key. That is why tools like Etherscan’s Token Approvals checker and Revoke.cash matter: they let users inspect and revoke allowances that otherwise remain active.
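The approval mechanism can be sketched as simple bookkeeping. The model below loosely follows the ERC-20 `approve` / `transferFrom` idea, but it is a simplified toy: `AllowanceToken`, the account labels, and the amounts are assumptions made for illustration.

```python
MAX_UINT256 = 2**256 - 1  # the "unlimited" allowance many dapps request


class AllowanceToken:
    """Toy model of approve / transferFrom style allowance bookkeeping."""

    def __init__(self, balances: dict[str, int]) -> None:
        self.balances = dict(balances)
        self.allowances: dict[tuple[str, str], int] = {}

    def approve(self, owner: str, spender: str, amount: int) -> None:
        # On a real chain, this is the step the owner signs.
        self.allowances[(owner, spender)] = amount

    def transfer_from(self, spender: str, owner: str, recipient: str, amount: int) -> None:
        allowed = self.allowances.get((owner, spender), 0)
        if amount > allowed:
            raise PermissionError("allowance exceeded")
        if amount > self.balances.get(owner, 0):
            raise ValueError("insufficient balance")
        self.allowances[(owner, spender)] = allowed - amount
        self.balances[owner] -= amount
        self.balances[recipient] = self.balances.get(recipient, 0) + amount


token = AllowanceToken({"user": 1_000})
token.approve("user", "dapp_contract", MAX_UINT256)

# Later, the approved spender moves funds without any new signature from the user.
token.transfer_from("dapp_contract", "user", "attacker", 600)

# Revoking means setting the allowance back to zero.
token.approve("user", "dapp_contract", 0)
try:
    token.transfer_from("dapp_contract", "user", "attacker", 400)
    blocked = False
except PermissionError:
    blocked = True

print(token.balances, "second transfer blocked:", blocked)
```

The first transfer succeeds because the allowance was still active; the second fails only because the user explicitly set it to zero.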

The SoK paper’s finding that existing datasets cover only about 20% of identified root causes is a warning against oversimplification. The visible patterns people talk about on social media are only part of the landscape.

How does upgradeability create rug‑pull vulnerabilities?

| Pattern | Who controls | Risk level | Best guard |
|---|---|---|---|
| Immutable | No upgrade authority | Low | Make code immutable |
| Proxy single-admin | Single admin key | High | Replace with multisig |
| Proxy multisig+timelock | Multisig and timelock | Moderate | Timelock holds admin |
| Solana program authority | Program authority keypair | High if single key | Revoke or multisig authority |

If there is one concept that makes rug-pull risk click for technically minded readers, it is upgradeability. Upgradeable systems are useful because they let developers fix bugs and add features after deployment. But they also preserve a form of centralized power: whoever controls the upgrade path can potentially change the contract into something hostile.

This is not a fringe concern. OpenZeppelin’s upgrade tooling exists precisely because upgradeable contracts are common and need careful security checks. CertiK’s guidance on proxy security states the risk plainly: if a proxy admin’s private key is compromised, an attacker can upgrade the logic contract to malicious code and steal or destroy user funds. The same thing can happen if the admin is not compromised but is malicious from the start.

Here the mechanism is straightforward. In a proxy pattern, users interact with one contract address, but the logic executed may come from a separate implementation contract. Change the implementation address, and you may change the behavior while keeping the same public-facing address and stored funds. That is operationally convenient, but it means the true question is not “is the deployed contract safe today?” It is “who can decide what this contract becomes tomorrow?”
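A toy model makes the proxy idea concrete. This sketch is not EVM code: the `Proxy`, `VaultV1`, and `VaultV2` classes are hypothetical, and passing the proxy's storage into the logic class only imitates how delegated execution runs against the proxy's state.

```python
class VaultV1:
    """Honest logic: callers can withdraw only their own balance."""

    @staticmethod
    def withdraw(storage: dict, caller: str) -> int:
        return storage["balances"].pop(caller, 0)


class VaultV2:
    """Hostile logic: the admin's withdrawal sweeps every balance."""

    @staticmethod
    def withdraw(storage: dict, caller: str) -> int:
        if caller == storage["admin"]:
            total = sum(storage["balances"].values())
            storage["balances"].clear()
            return total
        return 0


class Proxy:
    """Holds the state and forwards calls to whatever logic is currently set."""

    def __init__(self, admin: str, implementation: type) -> None:
        self.storage = {"admin": admin, "balances": {}}
        self.implementation = implementation

    def deposit(self, caller: str, amount: int) -> None:
        balances = self.storage["balances"]
        balances[caller] = balances.get(caller, 0) + amount

    def withdraw(self, caller: str) -> int:
        return self.implementation.withdraw(self.storage, caller)

    def upgrade_to(self, caller: str, new_implementation: type) -> None:
        if caller != self.storage["admin"]:
            raise PermissionError("only admin can upgrade")
        self.implementation = new_implementation


vault = Proxy(admin="deployer", implementation=VaultV1)
vault.deposit("alice", 700)
vault.deposit("bob", 300)

# Same proxy object, same stored balances, new behavior.
vault.upgrade_to("deployer", VaultV2)
stolen = vault.withdraw("deployer")

print("swept by admin after upgrade:", stolen)
```

Users keep calling the same `vault` object throughout. Only the implementation reference changed, which is why reviewing today's logic says little about tomorrow's unless the upgrade path is also constrained.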

This is where many projects mix decentralization theater with real centralization. They advertise immutable code while using a proxy. Or they publish an audit for one version, while a retained admin can later replace the implementation. Or they technically disclose upgradeability, but the governance around it is a single key or a small insider multisig that users never meaningfully assessed.

Solana has a parallel problem in different form. A deployed program can have a program authority that can update or close it. The Solana docs are explicit: removing that authority with --final makes the program immutable, while keeping it requires securing the key appropriately. Again, different chain, same principle. Immutability is not a vibe; it is a specific condition about whether future code changes are still possible.

How can governance and timelocks reduce rug‑pull risk?

The natural response to the danger of admin control is governance. Instead of one deployer key, let a community or multisig decide. That helps, but only if governance changes the timing and distribution of power in a meaningful way.

A crucial control is the timelock. OpenZeppelin’s governance docs explain why: adding a timelock gives users time to exit the system before a decision is executed. That may sound modest, but it addresses the core asymmetry in many rug pulls, which is that insiders can act faster than users can react. If a malicious treasury transfer, upgrade, or role change can happen instantly, users discover the threat only after the damage. If it must sit queued on-chain for a delay period, users and monitors get a window to withdraw, sell, revoke permissions, or raise alarms.

The mechanism matters more than the label. A governor contract by itself is not enough if a small group can still propose and pass arbitrary actions immediately, or if the treasury remains outside the timelock. OpenZeppelin is explicit that when a timelock is used, it should hold the funds, ownership, and critical roles, because it is the entity that executes proposals. Otherwise the timelock becomes cosmetic while the actual power remains elsewhere.
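The queue-then-wait behavior can be sketched in a few lines. This is a simplified illustration, not OpenZeppelin's contract: the `Timelock` class, its method names, and the two-day delay are assumptions for the example, and time is passed in explicitly so the run is deterministic.

```python
from typing import Callable


class Timelock:
    """Toy timelock: actions must be scheduled, then wait out a delay."""

    def __init__(self, delay_seconds: int) -> None:
        self.delay_seconds = delay_seconds
        self.pending: dict[str, tuple[int, Callable[[], str]]] = {}

    def schedule(self, action_id: str, action: Callable[[], str], now: int) -> int:
        """Queue an action and return the earliest time it may execute."""
        eta = now + self.delay_seconds
        self.pending[action_id] = (eta, action)
        return eta

    def execute(self, action_id: str, now: int) -> str:
        eta, action = self.pending[action_id]
        if now < eta:
            raise RuntimeError(f"too early: executable at t={eta}")
        del self.pending[action_id]
        return action()


TWO_DAYS = 2 * 24 * 60 * 60
timelock = Timelock(delay_seconds=TWO_DAYS)

# The queued action is visible from t=0, but cannot run until the delay passes.
eta = timelock.schedule("upgrade-vault", lambda: "implementation changed", now=0)

try:
    timelock.execute("upgrade-vault", now=3_600)
    executed_early = True
except RuntimeError:
    executed_early = False

result = timelock.execute("upgrade-vault", now=eta)
print("executed early:", executed_early, "| result after delay:", result)
```

The delay protects users only if the privileged action has no other route. If the upgrade or treasury role sits with a key outside the timelock, that key can act without ever touching the queue.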

Safe modules can implement a similar idea operationally. The Zodiac Delay Modifier, for example, enforces a cooldown between when a module initiates a transaction and when it can be executed. That does not eliminate trust, but it changes the system from “privileged actors can move now” to “privileged actors can move later, and the move is observable first.” For rug-pull prevention, that change in timing is often the difference between recoverable risk and instant loss.

Of course, governance can also fail. If voting power is concentrated, borrowed, manipulated, or captured, governance may simply become the attacker’s tool. So the fundamental question remains the same: who can move assets or change logic, under what delay, and with what visibility?

What common misconceptions do users have about rug‑pull risk?

The most common misunderstanding is to treat audits as proof that a project cannot rug. Audits can be valuable, but they answer a narrower question: whether the reviewed code has certain security or correctness properties. They do not remove privileges that the code intentionally grants, and they do not guarantee that all relevant components were in scope.

The Fintoch incident is an unusually clean illustration. CertiK noted that it had audited the pool and lending product, but the exploited token contract was a different product and outside the audit scope. So users could easily hear “audited” and infer “safe from a rug pull,” even though the crucial component was never covered.

Another misunderstanding is to confuse wallet disconnection with permission removal. Revoke.cash states this clearly: disconnecting a wallet from a website does not revoke approvals. The site simply stops seeing your address; the approval on-chain remains active. If a malicious contract already has spending permission, that permission survives your browser hygiene.

A third misunderstanding is to assume a hardware wallet solves approval-based risk. Hardware wallets protect keys, but approvals let a contract spend tokens without stealing the key. So a user can sign a bad approval safely on a hardware device and still lose funds later.

Finally, many users hear “liquidity locked” or “ownership renounced” and stop asking questions. But these are only meaningful if they are verifiable and complete. A fake LP lock, hidden owner path, ownership transfer trick, or unverified contract can preserve control while imitating decentralization. The research literature explicitly names several such patterns as under-covered by existing tools.

What can automated detection find about rug pulls, and what can't it detect?

Automated detection helps because some rug-pull patterns leave technical fingerprints. The CRPWarner paper shows this for contract-related rug pulls by identifying malicious functions in token contracts and warning on risky behavior. In a large Ethereum scan, the authors flagged thousands of contracts with suspicious functionality. That is strong evidence that code analysis can surface real risk.

But detection does not solve the full problem. The same paper separates contract-related from transaction-related rug pulls for a reason: some scams are visible in code, while others are visible mainly in market behavior, holder concentration, liquidity management, or off-chain deception. The broader SoK paper strengthens that point by showing that even current datasets and tools leave several root causes uncovered.

So readers should avoid two equal and opposite errors. The first is thinking “if scanners found nothing, the project is safe.” The second is thinking “if the contract contains admin functions, it must be a scam.” Reality is harder. Many legitimate systems retain privileges for upgrades, pausing, compliance features, treasury management, or emergency response. The question is not whether privilege exists at all, but whether it is bounded, visible, and governed well enough that users can price the trust they are taking.
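To make both errors concrete, here is a deliberately naive name-based flagger. It is not the CRPWarner method or any real scanner: the `RISKY_PATTERNS` list and the function names are hypothetical, and matching on names alone is far weaker than analyzing what the code does.

```python
# Hypothetical patterns: substrings of function names that suggest retained privilege.
RISKY_PATTERNS = {
    "mint": "can inflate supply",
    "blacklist": "can block selected holders from transferring",
    "setfee": "can change transfer fees after launch",
    "pause": "can halt transfers",
    "upgradeto": "can replace contract logic",
}


def flag_privileged_functions(function_names: list[str]) -> dict[str, str]:
    """Map each suspicious-looking function name to the reason it was flagged."""
    flags = {}
    for name in function_names:
        lowered = name.lower()
        for pattern, reason in RISKY_PATTERNS.items():
            if pattern in lowered:
                flags[name] = reason
                break
    return flags


token_functions = ["transfer", "approve", "mint", "setFeePercent", "balanceOf"]
flags = flag_privileged_functions(token_functions)

for name, reason in flags.items():
    print(f"{name}: {reason}")
```

A flag here means "look closer at who can call this and under what limits," not "this is a scam." An empty result means only that nothing matched the list, which says nothing about renamed functions, trading behavior, or off-chain deception.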

What practical steps reduce rug‑pull risk for projects and users?

The strongest controls are the ones that remove or slow down extraction paths rather than merely describing them.

For project teams, that usually starts with minimizing privileged authority. If upgradeability is not necessary, remove it and make the code immutable. If it is necessary, move authority away from a single operator and into a well-designed multisig or governance system. Use standard, audited proxy patterns rather than custom upgrade logic. Initialize implementations correctly, avoid unsafe delegatecall patterns, and protect admin keys like treasury keys, because in economic terms they are treasury keys.

Then comes execution delay. Timelocks are powerful because they turn hidden power into observable queued action. If upgrades, treasury transfers, or role changes must wait, users gain time to respond. The timelock should hold the critical roles and, where appropriate, the funds themselves; otherwise the real power sits outside the delay.

For users, the practical controls are different because users do not govern the protocol. The first is to inspect who controls what: owner roles, upgradeability, mint authority, liquidity ownership, and contract verification status. On Solana, that includes checking whether a program still has upgrade authority. On EVM chains, it includes checking proxy admin arrangements and whether the implementation can change.

The second is to manage approvals aggressively. Etherscan’s approval checker and Revoke.cash both support reviewing and revoking permissions that dapps retain. This does not protect against every rug pull, but it closes one direct path by which malicious contracts drain wallets after trust has already been granted.

The third is to treat marketing claims as hypotheses that require on-chain confirmation. “Locked liquidity,” “renounced ownership,” “community governed,” and “audited” are not end states. They are claims about control structure. The question is always whether the chain state and contract design actually enforce those claims.
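The user-side checks above can be organized as a small checklist. This is a sketch under assumptions: the keys in `observed` are hypothetical facts a reader would fill in by hand from a block explorer, and treating any missing fact as an open path is a design choice of this example.

```python
# Hypothetical checklist: (fact key, description of the extraction path it leaves open).
CHECKS = [
    ("owner_is_single_key", "owner role held by a single key"),
    ("is_upgradeable", "implementation can still be changed"),
    ("mint_authority_active", "supply can still be minted"),
    ("team_holds_lp_tokens", "team can withdraw liquidity"),
    ("source_unverified", "contract source is not verified"),
]


def open_extraction_paths(observed: dict[str, bool]) -> list[str]:
    """List paths that are open, counting any unchecked fact as open."""
    return [label for key, label in CHECKS if observed.get(key, True)]


# Facts gathered for an imaginary token; "source_unverified" was never checked.
observed = {
    "owner_is_single_key": False,
    "is_upgradeable": True,
    "mint_authority_active": False,
    "team_holds_lp_tokens": True,
}

paths = open_extraction_paths(observed)
for path in paths:
    print("open:", path)
```

Defaulting unknowns to "open" mirrors the point of the paragraph above: a claim counts only once the chain state confirms it.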

Conclusion

A rug pull is best understood as retained power turned against trusting users.

The details vary, whether the path is minting, dumping, draining liquidity, abusing approvals, upgrading logic, or using governance badly, but the structure is stable.

Users put value into a system because they believe the rules are constrained. Insiders profit because those constraints were weaker, narrower, or more reversible than users thought.

If you remember one thing tomorrow, remember this: the real risk is not whether a project looks decentralized, but whether it has truly made the dangerous powers hard to use, easy to see, or impossible to keep.

How do you secure your crypto setup before trading?

Secure your crypto setup by verifying on‑chain controls, limiting token approvals, and using careful order execution before you trade. With Cube Exchange’s non‑custodial MPC accounts you can fund, inspect, and execute trades while keeping key material protected by threshold signing.

- Fund your Cube account via the fiat on‑ramp or a supported crypto transfer.

- Inspect the token and pool on‑chain: check contract verification, owner/admin addresses, proxy upgradeability and proxy admin, mint/treasury authorities, and who holds LP tokens (use Etherscan, Solana Explorer, or equivalent).

- Revoke or limit token approvals using Etherscan’s Token Approvals checker or Revoke.cash before trading.

- Place a conservative trade on Cube: use a limit order to control execution price or a market order for immediacy, set low slippage tolerance, and size the order to avoid large price impact.

- After trading, confirm balances, revoke any temporary approvals you created, and (if you plan to hold long term) move funds to your chosen cold storage following your own custody procedures.

Frequently Asked Questions

How does upgradeability enable a rug pull?

Upgradeability lets a privileged admin swap the implementation behind a public proxy address, so changing the implementation can replace benign logic with malicious code while keeping the same contract address and stored funds. If the proxy admin key is malicious or compromised, an attacker can upgrade to code that steals or drains assets, which is why OpenZeppelin and CertiK highlight proxy-admin risk and the need to secure admin keys.

Can an audited project still rug pull?

Yes; audits examine specific code and scope and do not eliminate privileges or components outside the review. The article cites the Fintoch post‑mortem where CertiK had audited the pool and lending product but the exploited token contract was outside audit scope, illustrating that “audited” does not guarantee immunity to a rug pull.

What should I check on-chain before buying a token?

Before buying, check who controls owner/admin roles, whether the contract is upgradeable and who the proxy admin is, mint/treasury authorities, who owns the liquidity-provider position or LP tokens, and whether the contract source is verified on‑chain; these are the on‑chain levers the article flags as common hiding places for power. Doing those checks does not remove all risk but reveals the main structural paths insiders could use.

Can automated tools reliably detect rug pulls?

Automated detectors are useful for surfacing contract-related red flags - for example, CRPWarner flagged thousands of contracts with suspicious functions - but they miss many transaction-related schemes and root causes. The SoK paper and the article warn that existing datasets and tools cover only a subset of root causes, so detection lowers risk but does not eliminate it.

How do timelocks reduce rug-pull risk?

Timelocks slow execution of privileged actions by forcing proposals to queue for a delay, which gives users and monitors time to react or exit; OpenZeppelin guidance and the article emphasize that delay visibility is the key benefit. However, a timelock is effective only if it actually holds the critical roles and funds - otherwise the real power can remain outside the delayed path.

Why doesn't a hardware wallet protect against approval-based drains?

Token approvals allow a contract to spend your tokens on‑chain without taking your private key, so a compromised or malicious contract can drain approved balances even if you use a hardware wallet. The article and tools like Revoke.cash and Etherscan’s approval checker make the same point: hardware wallets protect keys but do not prevent misuse of existing on‑chain allowances.

What is the difference between contract-related and transaction-related rug pulls?

Contract-related rug pulls arise from malicious or privileged functions encoded in the contract (e.g., hidden minting, owner‑only drains), whereas transaction-related rug pulls exploit market structure and trading behavior (e.g., insiders dumping pre‑allocated supply). Code analysis can detect many contract-related patterns (the CRPWarner work focuses here) but will miss schemes that only appear once trading and on‑chain flows unfold.

Are claims like “ownership renounced” or “liquidity locked” enough to trust a project?

Claims like “ownership renounced” or “liquidity locked” are meaningful only if verifiable on‑chain and complete; attackers and immature tooling have produced fake LP locks, hidden owner paths, and other illusions that mimic decentralization. The article and the SoK evidence note several root causes (e.g., Fake LP Lock) that are under‑covered by current tools, so these marketing claims should be independently confirmed.

How does rug-pull risk arise on Solana?

On Solana a program’s upgrade/close authority (the program authority) can update or remove a program unless the deployer permanently sets the program to immutable (the article references the Solana docs’ --final flag). That default authority is typically the deployer’s key, so if it remains single‑sig or compromised the program can be maliciously changed later; making a program immutable is irreversible and thus a deliberate tradeoff.

What can project teams do to reduce rug-pull risk?

Teams should minimize single‑party privileges (avoid unnecessary upgradeability), place admin roles and funds under multisig or governance, use standard audited proxy patterns if upgrades are required, and put critical actions under timelocks so they become observable before execution; the article and industry guidance (OpenZeppelin/CertiK) recommend these controls. These steps reduce but do not eliminate risk, so protecting admin keys and clear, verifiable on‑chain governance remain essential.
