What is a Pedersen Commitment?

Learn what Pedersen commitments are, how `C = vH + rG` works, why they are perfectly hiding and computationally binding, and why they are crucial in zero-knowledge proofs.

Introduction

Pedersen commitments are a cryptographic way to lock in a value without revealing it, while still preserving enough algebraic structure that other proofs can be built on top. That combination is unusual. Many tools can hide data, and many tools can support efficient verification, but Pedersen commitments do both in a form that is simple enough to compose into larger systems.

The puzzle they solve is easy to state. Suppose you want to convince a verifier that you have chosen a number now, but you do not want to reveal the number until later. A basic commitment scheme gives you that: first you commit, later you open. But in modern cryptography, and especially in blockchain systems, that is often not enough. You also want commitments to add together in meaningful ways, so that a network can check conservation of value, a prover can show a secret lies in a range, or a protocol can compress many hidden values into a small object. Pedersen commitments were designed for exactly this setting.

Their importance comes from a very specific tradeoff. A Pedersen commitment is perfectly hiding: from the commitment alone, the hidden value is information-theoretically concealed. At the same time, it is only computationally binding: under standard assumptions, a committer cannot open the same commitment to two different values, but that guarantee depends on the hardness of discrete logarithms. That asymmetry is not an accident. It is the reason the scheme is so useful.

What problem does a commitment scheme (and Pedersen commitments) solve?

A commitment scheme is often described as the digital analogue of putting a message in a sealed envelope. That analogy explains the goal, but not the mechanism. The envelope should have two properties. First, it should hide the message before opening. Second, it should bind the sender to that message so they cannot later claim the envelope contained something else.

In cryptography, those two goals pull in opposite directions. If the object you publish leaks too much structure about the message, hiding gets weaker. If it leaves too much freedom, binding gets weaker. Pedersen commitments sit at a carefully chosen point in that design space: they maximize hiding, preserve a useful algebraic structure, and accept a computational notion of binding.

That last phrase matters. Some commitment schemes aim for very strong binding, sometimes even unconditional binding. Pedersen commitments do not. Instead, they give up unconditional binding in exchange for stronger hiding and a clean homomorphic structure. In practice, that trade is often exactly what higher-level protocols need.

How do Pedersen commitments hide a value while preserving algebraic structure?

The central mechanism is to mix the message with fresh randomness inside a group where discrete logarithms are hard. Intuitively, the message contributes one “direction” in the group and the randomness contributes another. Because the randomness is chosen uniformly, it washes out the information about the message. But because the commitment still has a rigid algebraic form, someone who knows both the message and the randomness can later demonstrate that the commitment was formed correctly.

The critical requirement is that these two directions must be independent in a very strong sense: no one should know the discrete logarithm relationship between the two generators. If one generator can be expressed as a known scalar multiple of the other, the scheme becomes malleable in exactly the wrong way, and binding can collapse.

This is the point where many explanations become too compressed. A Pedersen commitment is not secure merely because it uses an elliptic curve or a cyclic group. It is secure because the message and the blinding randomness are embedded into a prime-order group using generators whose relative discrete log is unknown, and because the resulting commitment inherits both the group’s hardness assumptions and its linear structure.

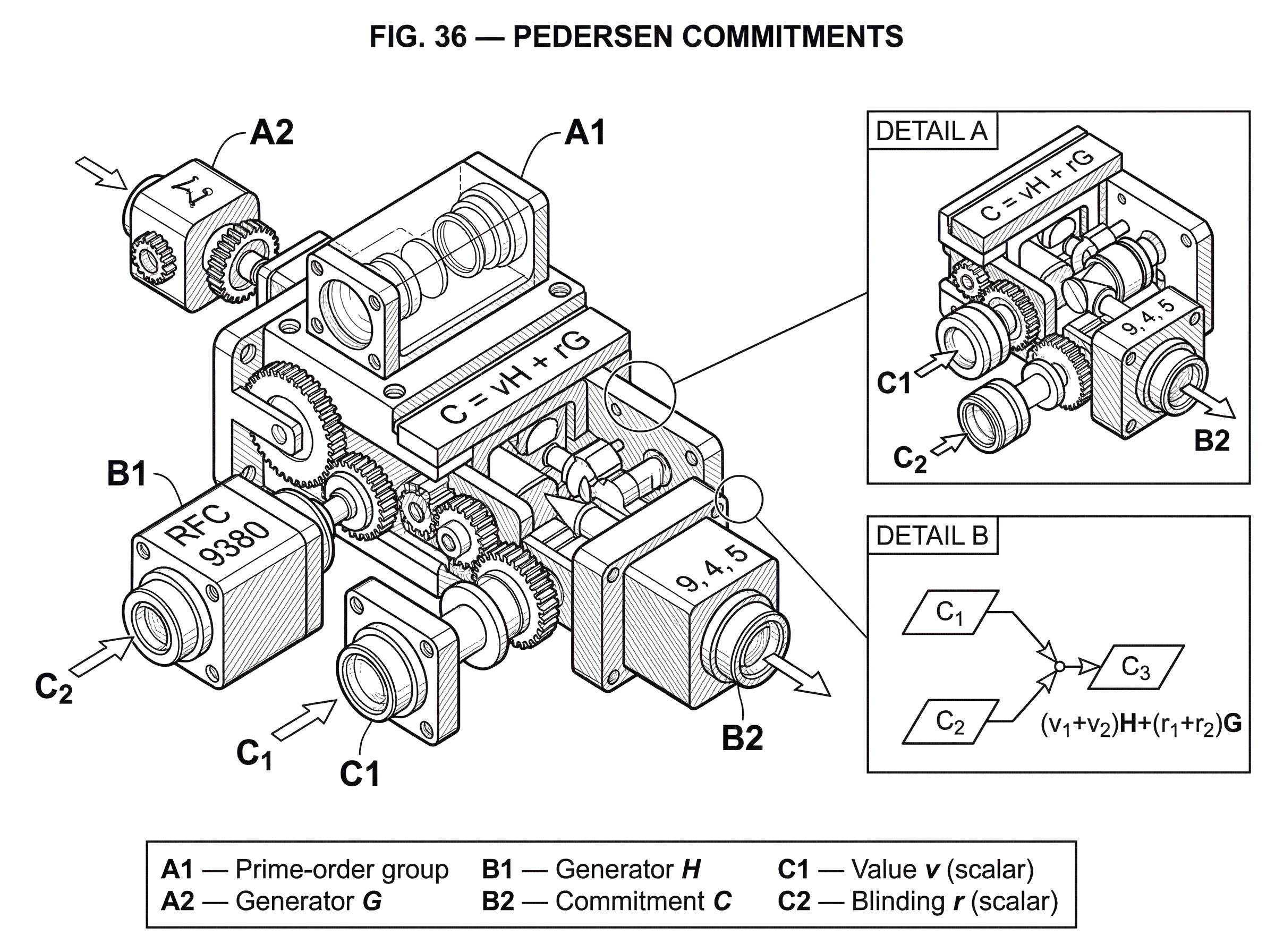

What is the formal definition of a Pedersen commitment (C = vH + rG)?

| Form | Expression | Group notation | Common use | Notes |
|---|---|---|---|---|
| Additive | C = vH + rG | Additive group | Elliptic curves | Natural for EC implementations |
| Multiplicative | C = g^v h^r | Multiplicative group | Finite field groups | Older literature notation |

Work in a cyclic group of prime order q, and write the group operation additively, which is common in elliptic-curve settings. Let G and H denote two generator points in that group, with the crucial condition that nobody knows a scalar x such that H = xG.

To commit to a value v in Z_q, choose random blinding factor r uniformly from Z_q, and compute:

C = vH + rG

This C is the commitment.

To open it later, the committer reveals v and r. The verifier recomputes vH + rG and checks that it equals C.
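The commit and open steps above can be sketched in a few lines of Python. This is a minimal illustration under loud assumptions: it uses a tiny multiplicative group modulo the prime 2039 instead of an elliptic curve, so `H^v * G^r mod P` stands in for `vH + rG`, and the constants `P`, `Q`, `G`, `H` and the function names are hypothetical choices made for this example. In a group this small the discrete log of H with respect to G can be brute-forced, so nothing here is secure.

```python
import secrets

# Toy Schnorr group, for illustration only: P = 2*Q + 1 with P and Q prime.
P = 2039   # modulus
Q = 1019   # prime order of the subgroup of squares mod P
G = 4      # blinding generator (2^2 mod P)
H = 9      # value generator (3^2 mod P); its log base G is trivial to brute-force here

def commit(v, r=None):
    """Return (C, r) with C = H^v * G^r mod P, the analogue of C = vH + rG."""
    if r is None:
        r = secrets.randbelow(Q)  # uniform blinding factor in Z_q
    c = (pow(H, v % Q, P) * pow(G, r % Q, P)) % P
    return c, r

def verify_opening(c, v, r):
    """Recompute the commitment from the revealed (v, r) and compare with c."""
    return c == (pow(H, v % Q, P) * pow(G, r % Q, P)) % P

c, r = commit(42)
print(verify_opening(c, 42, r))  # True
print(verify_opening(c, 43, r))  # False
```

A real deployment would replace the toy group with a vetted prime-order elliptic-curve group and a reviewed library; the shape of the two functions stays the same.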

The multiplicative version, common in older literature, writes the same idea as C = g^v h^r, where g plays the role of H (the value generator) and h plays the role of G (the blinding generator). These are the same construction expressed in different group notation. In blockchain systems built on elliptic curves, the additive form is usually the more natural one.

Why are Pedersen commitments perfectly hiding?

Here is the mechanism behind hiding. Fix any commitment C. If the blinding factor r is uniform in Z_q, then for every possible message v, there is exactly one corresponding r that makes C = vH + rG true. Because r was chosen uniformly, the distribution of C does not depend on v.

This is stronger than saying an attacker cannot feasibly recover v. It says the commitment reveals no information at all about v, even to an infinitely powerful observer. That is why the property is called perfect hiding.

A useful way to think about this is that the randomness does not merely “mask” the value in an informal sense. It statistically absorbs it. The published point C could have come from any message, provided the randomness were adjusted accordingly. Since the randomness is genuinely random and large enough, the observer gets no distinguishing advantage.
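The counting argument behind perfect hiding can be checked exhaustively in a toy setting. The sketch below assumes the same illustrative group as a stand-in for an elliptic curve (modulus 2039, subgroup order 1019, hypothetical generators 4 and 9) and Python 3.8 or later for modular inverses via `pow`. It builds a lookup table of discrete logs, which is only feasible because the group is tiny, and then finds the blinding factor that explains a fixed commitment for every possible message.

```python
# Toy group, for illustration only: subgroup of order Q inside Z_P^*.
P, Q, G, H = 2039, 1019, 4, 9

def commit(v, r):
    """C = H^v * G^r mod P, the multiplicative analogue of vH + rG."""
    return (pow(H, v % Q, P) * pow(G, r % Q, P)) % P

# Brute-force table G^r -> r. Possible only because the group is tiny.
log_table = {pow(G, r, P): r for r in range(Q)}

c = commit(42, 777)  # one fixed commitment

# For each candidate message v, solve G^r = C * H^(-v) for r.
explanations = {}
for v in range(Q):
    target = (c * pow(H, -v, P)) % P
    explanations[v] = log_table[target]

print(len(explanations) == Q)  # True: every v is explained by some r
print(explanations[42])        # 777, the blinder actually used
```

Because each message has exactly one matching blinder and the blinder is uniform, an observer who sees only `c` has no basis for preferring any message over another.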

This perfect hiding property is one reason Pedersen commitments are so attractive in privacy systems. If the setup is sound and the implementation is correct, the privacy of the committed value is not resting on a delicate reduction or a tight security margin. It is built into the distribution itself.

Why are Pedersen commitments only computationally binding (not unconditionally)?

| Generator relation | Binding | Equivocation | Security basis |
|---|---|---|---|
| No known relation | Computational binding | Hard to equivocate | Discrete log hardness |
| Known relation | Not binding | Can equivocate freely | Trapdoor knowledge |

Binding is where the unknown relationship between G and H becomes essential. Suppose a malicious committer could open the same commitment C as both (v, r) and (v', r') with v ≠ v'. Then:

vH + rG = v'H + r'G

Rearranging gives:

(v - v')H = (r' - r)G

If v - v' is nonzero, this equation reveals a discrete logarithm relationship between H and G:

H = ((r' - r) / (v - v')) G

So, if someone can find two different valid openings, they can compute the discrete log of H with respect to G. Therefore, under the assumption that this discrete log is hard to find, producing two openings should also be hard.

That is computational binding. The scheme is binding not because double openings are mathematically impossible, but because finding one would solve a problem believed to be computationally infeasible.

This also explains a subtle but important fact: if the party choosing the generators secretly knows the relation H = xG, then they have a trapdoor. They can take any commitment C = vH + rG = (vx + r)G and re-open it to a different value v' by adjusting the randomness to r' = r + x(v - v'). The commitment still checks out. In other words, the party who knows the generator relation can equivocate at will.

So the scheme’s security is not just about the group assumption. It also depends on generator generation being trustworthy.
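Both directions of this argument can be exercised in code: a party who knows x can re-open a commitment, and anyone holding two openings can recover x. The sketch below is illustrative only. It assumes a toy multiplicative group (modulus 2039, subgroup order 1019), a deliberately planted hypothetical trapdoor `x = 123`, and Python 3.8 or later for `pow(..., -1, Q)`.

```python
# Toy group, for illustration only.
P, Q, G = 2039, 1019, 4
x = 123            # hypothetical trapdoor known to a malicious setup party
H = pow(G, x, P)   # H = xG in additive notation

def commit(v, r):
    """C = H^v * G^r mod P, the multiplicative analogue of vH + rG."""
    return (pow(H, v % Q, P) * pow(G, r % Q, P)) % P

def equivocate(v, r, v_new):
    """Blinder that re-opens the same commitment to v_new: r' = r + x(v - v')."""
    return (r + x * (v - v_new)) % Q

def extract_trapdoor(v, r, v2, r2):
    """Recover x from two openings of one commitment: x = (r' - r) / (v - v')."""
    return ((r2 - r) * pow((v - v2) % Q, -1, Q)) % Q

v, r = 9, 555
c = commit(v, r)
v_new = 1000
r_new = equivocate(v, r, v_new)

print(commit(v_new, r_new) == c)                  # True: same C, different value
print(extract_trapdoor(v, r, v_new, r_new) == x)  # True: double opening leaks x
```

The two functions are inverses of each other in spirit, which is the content of the reduction: equivocating and knowing the generator relation are the same capability.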

How does additive homomorphism make Pedersen commitments useful?

Pedersen commitments are additively homomorphic. This means the group operation on commitments corresponds to addition of the hidden values and addition of the blinders.

If C1 = v1H + r1G and C2 = v2H + r2G, then:

C1 + C2 = (v1 + v2)H + (r1 + r2)G

So C1 + C2 is itself a valid commitment to v1 + v2 with blinding factor r1 + r2.
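The identity is easy to check numerically. The following sketch assumes a toy multiplicative group as a stand-in for an elliptic curve (hypothetical constants, not secure), so the group operation written as `C1 + C2` in the text becomes multiplication modulo `P` in the code.

```python
import secrets

# Toy group, for illustration only.
P, Q, G, H = 2039, 1019, 4, 9

def commit(v, r):
    """C = H^v * G^r mod P, the multiplicative analogue of vH + rG."""
    return (pow(H, v % Q, P) * pow(G, r % Q, P)) % P

v1, r1 = 30, secrets.randbelow(Q)
v2, r2 = 12, secrets.randbelow(Q)
c1, c2 = commit(v1, r1), commit(v2, r2)

# Combine the commitments with the group operation only; no values are revealed.
combined = (c1 * c2) % P

# The result opens as a commitment to v1 + v2 with blinder r1 + r2.
print(combined == commit(v1 + v2, r1 + r2))  # True
```

Note that the sums are implicitly reduced modulo the group order, which is exactly the wraparound that range proofs later have to rule out.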

This is the real engine behind many applications. The commitment is not just a locked box. It is a locked box that still participates in arithmetic. That sounds almost contradictory at first, but it is exactly the feature advanced protocols need.

In confidential transaction systems, for example, each amount is hidden in a Pedersen commitment. The verifier cannot see the amounts, but can still add input commitments and output commitments. If the transaction is valid, the difference should be a commitment to zero, up to public fee adjustments and excess terms depending on the protocol design. That lets the network check conservation of value without seeing the values themselves.

The same algebraic structure is what makes Pedersen commitments useful in range proofs such as Bulletproofs. A commitment is a group element in the same algebraic setting where efficient inner-product arguments and other zero-knowledge subprotocols operate. That compatibility is not incidental; it is what lets proof systems remain compact.

How do Pedersen commitments enable value conservation in confidential transactions?

Imagine a transaction with one hidden input amount and two hidden output amounts. The input amount is 9, and the outputs are 4 and 5. The network should be able to verify that no new value was created, but it should not learn any of the three numbers.

The sender forms three commitments: C_in = 9H + r_in G, C_out1 = 4H + r1 G, and C_out2 = 5H + r2 G, using fresh random blinders r_in, r1, and r2. To an outside observer, each commitment looks like an unrelated random group element. The amounts are not visible.

Now consider the difference C_in - C_out1 - C_out2. Substituting the formulas gives:

(9H + r_in G) - (4H + r1 G) - (5H + r2 G)

The H terms cancel because 9 - 4 - 5 = 0, leaving only:

(r_in - r1 - r2)G

So the result is a commitment to zero. The hidden values disappear in the group arithmetic because they balance exactly. What remains is a pure blinding term, which can be handled by the surrounding protocol as a commitment excess or a proof of knowledge of the corresponding scalar, depending on the system.

This is why Pedersen commitments are so powerful in cryptocurrencies. The verifier can check a transaction-level invariant (inputs equal outputs plus fees) without seeing the amounts. The arithmetic survives the hiding.

But this example also shows what Pedersen commitments do not solve by themselves. A commitment to -3 is just as algebraically valid as a commitment to 3. So without an extra proof that each output amount lies in a nonnegative range, a malicious user could exploit wraparound or negative values to fake balance. That is why range proofs are a necessary companion in confidential transaction systems.
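The balance check and its blind spot can both be reproduced in a short sketch. It assumes a toy multiplicative group in place of an elliptic curve (hypothetical constants, not secure) and Python 3.8 or later; the helper name `excess` is made up for this example and is not taken from any particular protocol. The first check uses the amounts 9, 4 and 5 from the walkthrough. The second uses outputs of 12 and -3, which balance just as well.

```python
import secrets

# Toy group, for illustration only.
P, Q, G, H = 2039, 1019, 4, 9

def commit(v, r):
    """C = H^v * G^r mod P, the multiplicative analogue of vH + rG."""
    return (pow(H, v % Q, P) * pow(G, r % Q, P)) % P

def excess(inputs, outputs):
    """Group element for sum(inputs) - sum(outputs) in additive notation."""
    acc = 1
    for c in inputs:
        acc = (acc * c) % P
    for c in outputs:
        acc = (acc * pow(c, -1, P)) % P  # subtracting = multiplying by the inverse
    return acc

r_in, r1, r2 = (secrets.randbelow(Q) for _ in range(3))
c_in, c_out1, c_out2 = commit(9, r_in), commit(4, r1), commit(5, r2)

# Balanced amounts: only the blinding term (r_in - r1 - r2)G survives.
blinding_only = pow(G, (r_in - r1 - r2) % Q, P)
print(excess([c_in], [c_out1, c_out2]) == blinding_only)  # True

# Outputs of 12 and -3 pass the same check, since 12 + (-3) = 9 mod Q.
c_bad1, c_bad2 = commit(12, r1), commit(-3, r2)
print(excess([c_in], [c_bad1, c_bad2]) == blinding_only)  # True
```

The second result is the inflation risk in miniature: the algebra cannot tell 4 and 5 apart from 12 and -3, so a separate range proof on each output is what actually rules out the second case.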

Which real-world protocols and systems use Pedersen commitments?

Pedersen commitments are foundational in several families of protocols because they combine three properties that rarely coexist: perfect hiding, computational binding, and homomorphism.

In confidential transactions, they hide amounts while preserving public balance checks. This appears in designs associated with Bitcoin-derived confidential transaction systems, in Monero’s RingCT construction, and in Mimblewimble-style systems such as Grin. The surrounding mechanisms differ (ring signatures in one case, cut-through and transaction excesses in another) but the role of the commitment is the same: hide the numeric value while keeping arithmetic verifiable.

In Bulletproofs, Pedersen commitments serve as the commitment layer over which compact range proofs are built. The fact that a commitment is itself a group element is exploited directly in the proof system’s inner-product argument. That is part of why Bulletproofs can give short proofs without a trusted setup for the range-proof system itself.

Pedersen-style ideas also show up in verifiable secret sharing and vector commitments. The common thread is that one wants to commit to data in a way that preserves enough linear structure to support efficient proofs, consistency checks, or aggregation.

How does generator selection affect the security of Pedersen commitments?

| Method | Transparency | Trapdoor risk | Implementation note |
|---|---|---|---|
| Hash-to-curve | High transparency | Low if DST used | Use RFC 9380 hash-to-curve |
| Nothing-up-my-sleeve | Moderate transparency | Low if public | Pick well-known constants |
| Secret selection | Opaque | High risk | Avoid unless auditable |

If there is one implementation issue that deserves to be remembered, it is this: the generators matter as much as the formula.

A Pedersen commitment assumes two independent generators G and H in the same prime-order group, with unknown discrete log relation. If a protocol designer chooses H maliciously as xG for known x, they retain a trapdoor that breaks binding. So practical systems try to derive generators in a way that is auditable and resistant to manipulation.

A common approach is to take a standard base point as G and derive H deterministically by hashing a domain-separated label to a curve point, then clearing the cofactor if necessary so the point lies in the prime-order subgroup. RFC 9380 standardizes constant-time hash-to-curve methods and requires domain separation tags for exactly this kind of use. The purpose is not magic; it is to make generator derivation reproducible and to reduce the risk that someone secretly engineered a known relation.

Even then, one should be precise about what this does and does not guarantee. Hashing to a curve point gives a transparent, standardized way to derive generators. It does not produce a formal proof that nobody knows the discrete log relation. Instead, it removes obvious opportunities for manipulation and makes the choice publicly auditable. In practice, that is often the right engineering answer.

The subgroup issue matters too. If points are not forced into the correct prime-order subgroup, small-subgroup structure can create attacks or invalid assumptions. Standards such as RFC 9380 emphasize cofactor clearing for this reason.
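The idea of deriving H from a public label and then forcing it into the prime-order subgroup can be sketched in a toy setting. This is not RFC 9380 and is not constant-time: it is a simplified try-and-increment analogue over a small multiplicative group, where squaring plays the role of cofactor clearing (the cofactor is 2). The function name, the label string, and the group constants are all hypothetical.

```python
import hashlib

# Toy group, for illustration only: subgroup of order Q inside Z_P^*, cofactor 2.
P, Q, G = 2039, 1019, 4

def derive_generator(label: bytes) -> int:
    """Hash a domain-separated label into the order-Q subgroup (toy analogue)."""
    counter = 0
    while True:
        digest = hashlib.sha256(label + counter.to_bytes(4, "big")).digest()
        candidate = int.from_bytes(digest, "big") % P
        point = pow(candidate, 2, P)  # squaring maps into the subgroup of squares
        if point not in (0, 1, G):    # reject zero, the identity, and G itself
            return point
        counter += 1

H = derive_generator(b"example-protocol/pedersen/H/v1")
print(pow(H, Q, P) == 1 and H != 1)  # True: H has order Q
```

Anyone can rerun the derivation from the label and get the same H, which is the auditability property the text describes. As noted above, this makes a hidden relation implausible rather than proving that none exists, and in a group this small the relation can be brute-forced anyway.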

What common misunderstandings should you avoid about Pedersen commitments?

The first common misunderstanding is to think “perfect hiding” means “perfectly secure overall.” It does not. Hiding is unconditional, but binding is not. The scheme still depends on the hardness of discrete log and on sound generator selection.

The second is to treat Pedersen commitments as standalone privacy. They hide values, but they do not prove anything interesting about those values unless paired with additional proofs. In cryptocurrency settings, range proofs are indispensable because arithmetic in Z_q wraps around. Without a proof that committed amounts lie in a valid interval such as [0, 2^n), conservation checks alone do not prevent inflation.

The third is to assume any group-element compression based on “Pedersen-like” formulas is automatically safe. It is not. Related work on Pedersen hashes shows that security can fail if inputs are variable-length without proper encoding, if message encodings are not injective, if outputs are reduced carelessly to a single coordinate, or if generators have hidden relations. The commitment formula is simple; safe instantiation is not automatic.

What tradeoff motivates the Pedersen commitment design (hiding vs. binding vs. homomorphism)?

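There is a deeper principle underneath the scheme. No commitment scheme can be both perfectly hiding and perfectly binding: if every commitment can be explained by every message, then a second opening always exists, and an unbounded committer could find it. A scheme that also wants to stay compact and algebraically homomorphic in the way Pedersen commitments are has to choose where to compromise. Something has to give.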

Pedersen commitments choose to make hiding absolute and binding assumption-based. That choice turns out to be especially valuable in systems where privacy is non-negotiable and where higher-level proofs can tolerate computational assumptions. Bulletproofs makes this trade explicit: compact vector commitments and short proofs rely on a Pedersen-style layer, and that layer is computationally binding under discrete log.

This is also why Pedersen commitments are different from commitment schemes that rely on a trusted setup, such as certain polynomial-commitment families. Those schemes may optimize for different goals (constant-size openings, batched evaluation proofs, and so on), but they bring different assumptions, often including setup trapdoors.

Plain Pedersen commitments do not need that kind of setup. Their trust surface is narrower: choose a good group, choose generators transparently, and rely on discrete-log hardness.

What are the limits and long‑term assumptions of Pedersen commitments?

Pedersen commitments inherit the strengths and weaknesses of discrete-log cryptography. Today, in appropriate groups, that assumption is standard and widely used. But the binding guarantee is not quantum-resistant. A sufficiently capable quantum computer would threaten discrete-log hardness and therefore threaten binding. Several papers on privacy-focused cryptocurrency protocols note this explicitly.

That does not mean the commitments become useless overnight under weaker conditions. It means the exact security story changes. The hiding property is still information-theoretic for the commitment distribution, but if binding fails, an adversary may be able to equivocate and undermine soundness in protocols that depend on unique openings. In money systems, that can become an inflation risk.

There are also ordinary engineering limits. Performance can be excellent because commitments are just group elements and operations are linear, but once range proofs and other zero-knowledge machinery are added, the total system cost depends far more on the proof layer than on the commitment formula itself. This is why improvements in proof systems (for example, moving from older range proofs to Bulletproofs) can dramatically affect practical deployment even when the underlying commitment remains Pedersen.

Conclusion

A Pedersen commitment is a commitment of the form C = vH + rG in a prime-order group, where v is the hidden value, r is random blinding, and the relation between G and H is unknown. Its design matters because it achieves three things at once: perfect hiding, computational binding, and additive homomorphism.

That combination is why Pedersen commitments appear so often in modern cryptography. They let you hide numbers completely, yet still prove arithmetic facts about them. If you remember one idea tomorrow, it should be this: Pedersen commitments work because randomness erases the value for privacy, while group linearity preserves the value for proof.

What should I understand before relying on Pedersen commitments?

Before relying on Pedersen commitments in production, understand three practical facts: generator provenance matters (an H chosen as xG with a known x is a trapdoor), binding depends on discrete-log hardness, and confidential transactions require range proofs (e.g., Bulletproofs) to prevent wraparound. On Cube Exchange, use these checks to assess an asset or protocol before depositing, trading, or interpreting balance proofs.

Frequently Asked Questions

Because the blinding randomness r is uniform, any fixed commitment C can be explained by every possible message v with a suitable r, so the commitment distribution leaks no information about v (perfect hiding). However, if an adversary could produce two different openings (v,r) and (v',r') for the same C they would compute a discrete-log relationship between the two generators, so under the discrete-log hardness assumption finding such a double opening is infeasible (computational binding).

The scheme assumes two generators G and H whose discrete-log relation is unknown; if a party chooses H = xG they keep a trapdoor and can equivocate by adjusting r to open any value. Practical guidance is to derive H transparently (for example by hashing a domain-separated label to the curve and clearing cofactors per RFC 9380) so generator selection is auditable and reduces the risk of a hidden relation.

Pedersen commitments alone are not enough to prevent inflation: they only hide values and preserve algebraic checks. Because arithmetic is over Z_q (with wraparound), a commitment to a negative or wrapped value is indistinguishable from a commitment to a positive one. Protocols therefore must add range proofs (or other checks) to prove amounts lie in a valid nonnegative interval to prevent inflation or wraparound exploits.

Pedersen commitments are not quantum-resistant in their binding: the guarantee depends on discrete-log hardness and would be broken by an adversary with a quantum computer that can solve discrete logs. Hiding remains information-theoretic, but an attacker who can break binding could equivocate and undermine protocols that assume unique openings.

Their additive homomorphism means commitments add like their hidden values: C1+C2 is a valid commitment to v1+v2 with blinder r1+r2. That lets verifiers check conservation (for example, inputs minus outputs collapses the H-terms to zero when amounts balance, leaving only a blinding term) without revealing individual amounts.

Common implementation pitfalls include: deriving H or other generators incorrectly (allowing trapdoors), failing to clear cofactors or use the correct prime-order subgroup, non-injective or variable-length encodings when hashing inputs (which can break collision resistance), and improperly extracting a single coordinate as the commitment output. These mistakes are known to break security in practical Pedersen-style instantiations.

Compared with schemes that need a trusted setup (e.g., many polynomial or pairing-based commitments), Pedersen commitments require no global trapdoor ceremony but instead trade off unconditional binding for perfect hiding and simple homomorphism; their trust surface is narrower (group choice and generator derivation) and they avoid the broader setup assumptions pairing-based constructions use.

If someone finds two distinct openings (v,r) and (v',r') for the same C, algebra gives (v-v')H=(r'-r)G and hence reveals the scalar relating H to G, so anyone who can produce such a collision effectively breaks the discrete-log relation. Conversely, whoever knows that scalar x (so H=xG) can recompute r' = r + x(v-v') to open C to any v'; thus knowledge of the discrete-log relation is exactly the equivocation trapdoor.
