What is Bittensor?

Learn what Bittensor is, how subnets, validators, Yuma Consensus, stake, and TAO rewards work, and where the protocol’s assumptions matter.

Introduction

For the token explainer, see TAO.

Bittensor is a network built to reward useful digital work (especially machine intelligence) with an on-chain incentive system. The problem it addresses is simple to state and hard to solve well: if many independent participants can provide AI inference, compute, storage, predictions, or other digital services, how do you decide who actually contributed value, and how do you pay them without relying on a single platform owner?

Most blockchains reach agreement about transactions. Bittensor is aiming at something different. It uses a blockchain, but the economically important question is not merely which transfer happened. The harder question is which off-chain participant produced work that other participants found valuable. That shifts the center of gravity from transaction validation to performance evaluation.

At a high level, Bittensor is an open-source platform where participants produce digital commodities and are rewarded in TAO, the network’s token. In current documentation, those commodities are broader than just AI models: they can include compute, storage, AI inference and training, protein folding, financial prediction, and more. The network is organized into subnets, and each subnet is an independent community with its own miners, validators, and incentive rules.

The idea sounds straightforward: let producers compete, let evaluators score them, and let the network pay accordingly. But that immediately raises a deeper problem. If rewards depend on peer scoring, what stops groups from rating each other highly, excluding outsiders, or simply converging on noisy signals that do not measure real usefulness? Much of Bittensor’s design is an attempt to answer that problem with stake-weighted incentives, validator permissions, and reward formulas intended to favor signals that align with the broader network rather than small collusive clubs.

The shortest accurate description is this: Bittensor is a blockchain-coordinated market for digital commodities, organized through subnets, where validators evaluate miners and on-chain reward logic distributes TAO based on those evaluations. To understand why it exists, you have to understand why pricing intelligence is harder than pricing block space.

How does Bittensor make AI usefulness measurable to a network?

If you pay for machine intelligence in the ordinary web model, someone runs a platform, sets the rules, measures usage, and decides who gets access and revenue. That central operator solves a coordination problem: it defines what counts as good output. Bittensor tries to replace that central judgment with a protocol.

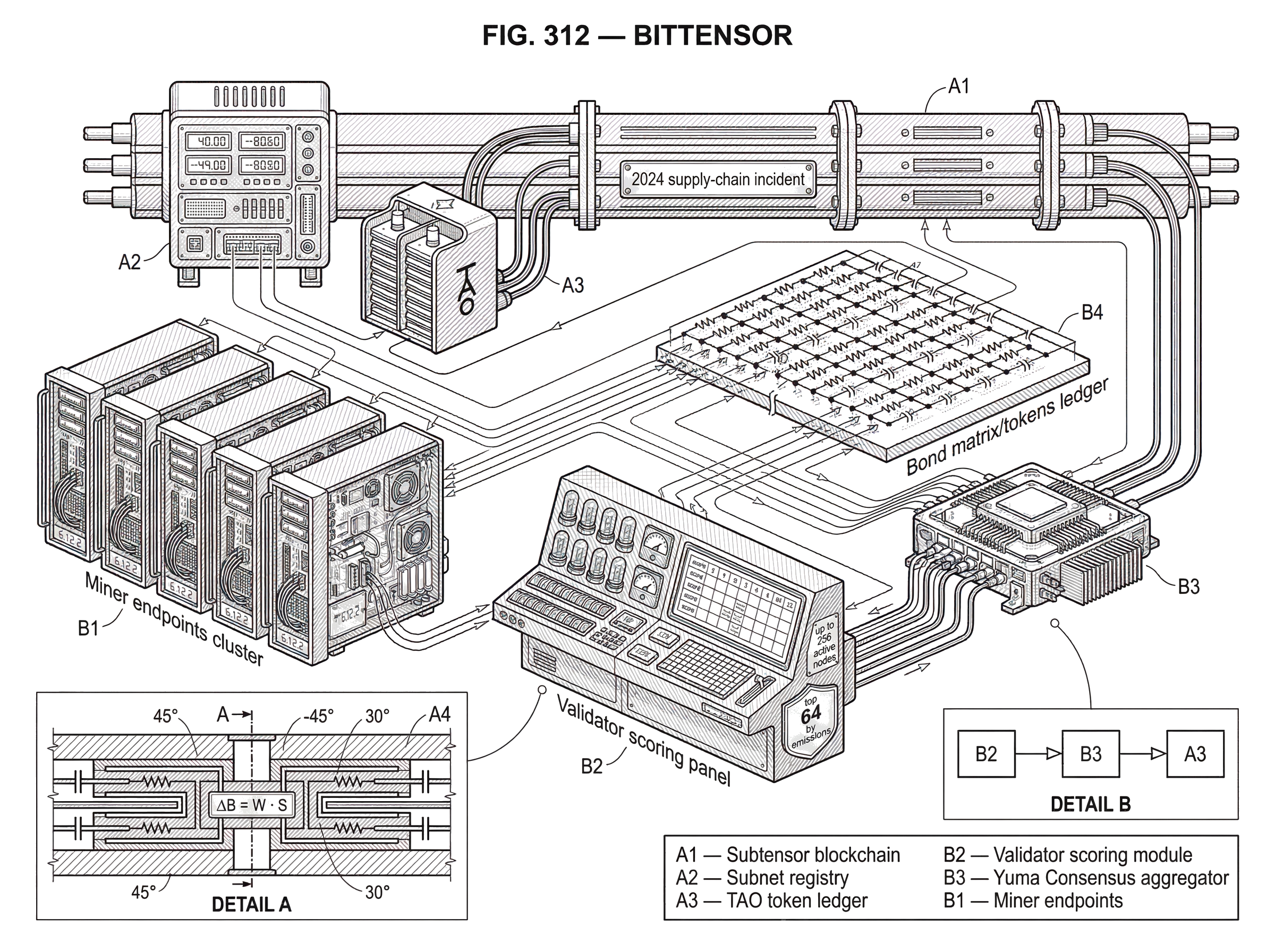

Here is the key mechanism in plain language. A subnet defines a digital commodity and an incentive scheme for measuring it. Miners produce the commodity. Validators test, compare, and score miner outputs. Those scores are submitted on-chain, and Yuma Consensus determines how rewards are allocated. The blockchain is not doing the AI task itself; it is recording state, stake, and reward-relevant weights while the actual competition happens off-chain.

This split matters. Many network designs fail if they try to force heavy computation on-chain. Bittensor instead uses a single blockchain (often described as the subtensor) as the coordination layer, while subnets run the application-specific work off-chain. That lets the system support tasks that would be far too expensive or too domain-specific to verify directly inside a general-purpose chain.

You can think of a subnet as a standing tournament around a particular kind of output. A miner enters by producing answers, predictions, model responses, or other work. A validator observes the outputs and applies the subnet’s incentive logic to judge quality. If the validator’s judgments are accepted by the broader reward system, the miner gains emissions, and strong validators can also be rewarded for providing good evaluation.

That is the attractive part of the design: it creates a market for services that are hard to commoditize. But it also exposes the central weakness. For many digital goods (especially model outputs) quality is not directly observable in a simple, objective way. The protocol therefore does not escape judgment; it redistributes judgment across validators and then tries to discipline that judgment economically.

Why does Bittensor use one coordination chain with many subnets?

Bittensor’s architecture only makes sense once you separate global coordination from local competition. There is one blockchain that records shared state, token balances, staking relationships, and subnet-relevant updates. Connected to that chain are many subnets, each of which acts like its own marketplace with its own evaluation logic.

This design solves a practical problem. Different digital commodities need different tests. A subnet for AI inference may care about response quality, latency, and robustness. A subnet for storage may care about availability and retrieval performance. A subnet for financial prediction may care about calibration and realized outcomes over time. Trying to force all of those into a single universal scoring function would either make the system vague or make it unusably rigid. Subnets let Bittensor specialize.

That is why the current docs describe each subnet as an independent community of miners and validators. The subnet creator or governor manages the incentive mechanism, and stakers can support validators by staking TAO to them. So although people sometimes describe Bittensor as one giant AI network, the more precise picture is a federation of competitive markets sharing a base coordination layer.

This also explains why Bittensor should not be understood as a single monolithic model. It is closer to an economic substrate for many service competitions. The protocol gives you a shared token, shared staking and identity machinery, and a common reward settlement layer. What each subnet considers valuable is, within limits, subnet-specific.

What is Yuma Consensus and how does it determine rewards?

| Approach | Weighting | Anti-collusion | Reward scaling | Best for |
|---|---|---|---|---|
| Naive peer ranking | Equal votes | Low resistance | Linear scaling | Simple benchmarks |
| Yuma Consensus | Stake-weighted votes | Higher resistance | Super-linear near majority | Majority-aligned signals |

The phrase Yuma Consensus can be misleading if you import assumptions from Bitcoin or Ethereum. In those systems, consensus is mainly about agreeing on the ordering and validity of state transitions. In Bittensor, the important on-chain aggregation also includes something more economic: whose evaluation of subnet work should influence rewards.

According to the SDK and docs, validators score miners and submit weights on-chain, and those scores determine emissions allocation through Yuma Consensus. In other words, validators are not just attesting that a transaction is valid. They are expressing judgments about which producers created the most valuable output in a subnet’s competition.

The whitepaper gives the underlying intuition more sharply. A naïve peer-ranking scheme is vulnerable to collusion because participants can simply vote for themselves or their allies. So the protocol introduces a stake-weighted trust and consensus term intended to favor peers that receive support from more than half the stake. In the whitepaper’s formulation, the consensus term C is built from a trust signal and sharply changes around the point where a peer is connected to a majority of stake. The reason for that shape is economic, not aesthetic: the protocol wants rewards to scale much more strongly once support broadens beyond a narrow clique.

That is the central anti-collusion bet. Instead of treating all votes equally, Bittensor gives more importance to evaluation patterns that are backed by stake and that cross a majority threshold. Under the explicit assumption that no colluding group controls a majority of stake, honest-majority-aligned evaluations should receive more inflation over time than a smaller cabal, causing the honest side to compound faster.
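The majority-threshold idea can be made concrete with a small sketch. It follows one reading of the whitepaper's description: a stake-weighted rank, a trust value equal to the fraction of stake that sets any non-zero weight on a peer, and a sigmoid consensus term centered on 0.5. The function name, the steepness value `rho=10.0`, and the toy network are assumptions chosen for illustration, and deployed versions of Yuma Consensus may differ in detail.

```python
import math

def yuma_style_incentive(weights, stake, kappa=0.5, rho=10.0):
    """Toy stake-weighted incentive with a majority-sensitive consensus term.

    weights[i][j] is the weight peer i sets on peer j; stake[i] is peer i's stake.
    kappa is the majority threshold; rho (sigmoid steepness) is a made-up value.
    """
    n = len(stake)
    total = sum(stake)
    s = [x / total for x in stake]  # normalized stake
    # Rank: stake-weighted sum of the weights each peer receives.
    rank = [sum(s[i] * weights[i][j] for i in range(n)) for j in range(n)]
    # Trust: fraction of total stake that sets any non-zero weight on the peer.
    trust = [sum(s[i] for i in range(n) if weights[i][j] > 0) for j in range(n)]
    # Consensus: sigmoid that changes sharply around the majority threshold.
    consensus = [1.0 / (1.0 + math.exp(-rho * (t - kappa))) for t in trust]
    raw = [r * c for r, c in zip(rank, consensus)]
    norm = sum(raw)
    incentive = [x / norm for x in raw] if norm > 0 else raw
    return rank, incentive

# Peers 0-2 hold 80% of stake and rate each other; peers 3-4 are a small
# cabal holding 20% of stake that only rates itself.
W = [
    [0.0, 0.5, 0.5, 0.0, 0.0],
    [0.5, 0.0, 0.5, 0.0, 0.0],
    [0.5, 0.5, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0, 1.0],
    [0.0, 0.0, 0.0, 1.0, 0.0],
]
S = [30, 30, 20, 10, 10]

rank, incentive = yuma_style_incentive(W, S)
naive_cabal_share = rank[3] + rank[4]
consensus_cabal_share = incentive[3] + incentive[4]
print(f"naive share: {naive_cabal_share:.3f}, with consensus term: {consensus_cabal_share:.3f}")
```

In this toy setup the cabal's share under plain stake-weighted ranking equals its stake share (about 0.2), while the consensus term pushes it below 0.01. The effect reverses if the cabal holds a majority of stake, which is exactly the assumption the argument depends on.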

Notice what is fundamental here and what is conventional. The fundamental part is that rewarding digital work requires rewarding evaluators whose judgments are themselves trustworthy. The conventional part is the exact functional form chosen to express that on-chain. The whitepaper uses a specific differentiable activation around a majority threshold. Future protocol versions can change details, but the deeper design problem remains the same.

Why does Bittensor weight validator influence with stake?

Stake in Bittensor is not only a passive asset. It is part of the system’s claim about who should matter when quality is disputed. This has an obvious circularity: if stake influences rewards, and rewards create more stake, how do you avoid simply entrenching incumbents? Bittensor’s answer is that stake should amplify evaluators whose judgments are already aligned with broad network consensus, not replace performance altogether.

In practice, validator participation is constrained. The validator docs describe a permit system within each subnet. Each subnet can support up to 256 active nodes, and by default only the top 64 by emissions are eligible to serve as validators, though that cap can be changed by subnet governance. To receive a validator permit, a node must have a registered hotkey, sufficient stake weight, and a high enough ranking in the subnet's selection process.

The docs define validator stake weight as α + 0.18 × τ, where α is alpha stake and τ is TAO stake. A minimum stake weight of 1000 is required for permit consideration. Permits are recalculated every epoch through stake filtering and top-K selection. If a validator loses its permit, it loses critical powers, including the ability to set non-self weights, participate in Yuma Consensus, and retain associated bonds.
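The permit rules above reduce to a filter followed by a top-K cut, which a few lines of Python can illustrate. This is a sketch under stated assumptions, not the chain's implementation: the node fields (`hotkey`, `alpha`, `tao`, `emission`), the function names, and the choice to rank eligible nodes by emission are all hypothetical and follow the wording of the text rather than the on-chain code.

```python
def stake_weight(alpha, tao, tao_factor=0.18):
    """Stake weight as described in the docs: alpha + 0.18 * tao."""
    return alpha + tao_factor * tao

def select_validators(nodes, min_weight=1000.0, max_validators=64, rank_key="emission"):
    """Filter nodes by minimum stake weight, then keep the top K.

    nodes: list of dicts with hypothetical keys hotkey, alpha, tao, emission.
    rank_key: field used for the top-K cut (an assumption in this sketch).
    """
    eligible = [n for n in nodes if stake_weight(n["alpha"], n["tao"]) >= min_weight]
    ranked = sorted(eligible, key=lambda n: n[rank_key], reverse=True)
    return [n["hotkey"] for n in ranked[:max_validators]]

nodes = [
    {"hotkey": "A", "alpha": 900.0, "tao": 1000.0, "emission": 0.02},  # weight about 1080
    {"hotkey": "B", "alpha": 500.0, "tao": 2000.0, "emission": 0.09},  # weight about 860
    {"hotkey": "C", "alpha": 0.0, "tao": 6000.0, "emission": 0.05},    # weight about 1080
]

permitted = select_validators(nodes)
permitted_top1 = select_validators(nodes, max_validators=1)
print(permitted, permitted_top1)
```

Node B has the highest emission but falls below the minimum stake weight, so it is filtered out before ranking. Re-running the selection each epoch with updated stake and emissions is what makes permits revocable.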

Mechanically, this does two things. First, it limits who can influence rewards, which reduces spam and trivial Sybil participation. Second, it ties validation authority to economic exposure. A validator with meaningful stake has something to lose if it behaves in a way that causes the market to discount its influence or if subnet rules penalize it indirectly.

That said, stake is not magic. It improves resistance to cheap attacks, but it does not solve the hard epistemic problem of evaluating AI outputs. A wealthy validator can still be wrong, lazy, or strategically biased. So stake should be understood as a credibility filter and a cost-imposition mechanism, not as proof that an evaluation is correct.

What are bonds in Bittensor and how do they incentivize early discovery?

| Design | Time horizon | Incentive effect | Discovery incentive | Main risk |
|---|---|---|---|---|
| Weight-only ranking | Short term | Immediate payments | Low discovery incentive | Herding |
| Bonds plus weights | Longer term | Gains from future success | Rewards early discovery | Reinforces mistaken consensus |

One of the more unusual parts of the original Bittensor design is the bond system. If simple ranking says “I think this peer is good,” bonds add a stronger claim: “I am willing to accumulate an economic position based on that judgment.”

In the whitepaper, bonds are represented by a matrix B, where b[i,j] is the proportion of bonds owned by peer i in peer j. Bonds accumulate from the weights a participant sets, scaled by stake, via ΔB = W · S, where W is the weight matrix and S is stake. Inflation is then redistributed partly through these bond holdings. The stated form in the whitepaper splits stake emission between direct incentive and bond-based redistribution, summarized as ΔS = 0.5 B^T I + 0.5 I, where I is the incentive score vector.
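A small numeric sketch may help with the bond notation. It makes several assumptions that the text does not settle: W · S is read as each row of W scaled by the row owner's stake, bond holdings are normalized per backed peer so that b[i][j] is a proportion, and holder i is paid the sum over j of b[i][j] · I[j], which matches the prose definition of b[i,j] (the whitepaper's transpose depends on its own index convention). The function name and the example values are hypothetical.

```python
def bond_step(bonds, weights, stake, incentive, emission=1.0):
    """One toy epoch of bond accumulation and emission split.

    bonds[i][j]: raw bonds peer i holds in peer j before this epoch.
    Returns updated raw bonds and each peer's stake increment.
    """
    n = len(stake)
    # Bond accumulation: row i of W scaled by stake[i] (assumed reading of W * S).
    new_bonds = [
        [bonds[i][j] + weights[i][j] * stake[i] for j in range(n)]
        for i in range(n)
    ]
    # Convert raw bonds to proportions of each backed peer j.
    col_totals = [sum(new_bonds[i][j] for i in range(n)) for j in range(n)]
    share = [
        [new_bonds[i][j] / col_totals[j] if col_totals[j] > 0 else 0.0 for j in range(n)]
        for i in range(n)
    ]
    # Bond dividends: holder i receives its proportion of each peer's incentive.
    dividends = [sum(share[i][j] * incentive[j] for j in range(n)) for i in range(n)]
    # Half of emission follows bonds, half follows incentive directly.
    delta_stake = [emission * (0.5 * dividends[i] + 0.5 * incentive[i]) for i in range(n)]
    return new_bonds, delta_stake

# Peers 0 and 1 are validators, peer 2 is a miner. Validator 0 backed the
# miner in earlier epochs and already holds more bonds in it.
B = [[0.0, 0.0, 3.0], [0.0, 0.0, 1.0], [0.0, 0.0, 0.0]]
W = [[0.0, 0.0, 1.0], [0.0, 0.0, 1.0], [0.0, 0.0, 0.0]]
S = [1.0, 1.0, 0.0]
I = [0.0, 0.0, 1.0]

new_B, delta_S = bond_step(B, W, S, I)
print(delta_S)
```

Both validators set identical weights this epoch, yet validator 0 receives a larger increment because of its earlier bond position. Peers that nobody holds bonds in leave their dividend half undistributed in this simplified version.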

The intuition is more important than the notation. A validator is not only paid for being right now. It can build an ongoing speculative position in the peers it chooses to back. If those backed peers later attract incentive, the validator benefits through its bond exposure. That creates a reason to discover undervalued contributors early, rather than merely copying whoever is already famous.

This is a clever move because it gives the network memory. Without something like bonds, evaluations could become short-term and shallow: everyone waits to see who looks strong this block, then copies that ranking. Bonds reward earlier, informative selection. The protocol is trying to turn “finding good peers” into a capital allocation problem.

But the mechanism depends on a strong assumption: that evaluators can actually identify future value better than noise or social coordination. If validators mostly herd, then bonds may reinforce consensus without adding much discovery. If subnet metrics are easy to game, bonds may reward manipulation dressed up as foresight. So the bond mechanism is best understood as a tool for sharpening incentives, not a guarantee of accurate price discovery.

How does Bittensor define and measure a miner’s value or contribution?

This is where Bittensor becomes more conceptually ambitious than many token networks. The whitepaper does not define value as simple uptime or raw throughput. It frames peer ranking as a form of information contribution: roughly, how costly it would be to remove a peer from the network’s representational capacity.

In formal terms, the paper describes a ranking objective related to pruning significance, using Fisher-information-style intuition. The basic idea is that a useful peer is one whose absence would materially worsen the network’s ability to represent or predict. That is a deeper claim than “this peer answered quickly” or “this peer got a good benchmark score once.” It tries to get at marginal contribution.

Why is that attractive? Because many AI systems are complements, not just substitutes. A model that covers a niche capability, a rare modality, or a difficult corner case may be very valuable even if it is not globally best on average. A contribution-based ranking framework can, in principle, reward what is uniquely informative rather than merely what is most popular.

Why is it difficult? Because the exact contribution is hard to compute at scale. The whitepaper acknowledges practical heuristics are needed. In real subnet deployments, validators typically operationalize value through task-specific scoring procedures rather than computing full information-theoretic significance directly. This is an important boundary between theory and implementation: the ideal objective is elegant, but the deployed network depends on practical approximations.
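The marginal-contribution idea can be illustrated with a brute-force leave-one-out score: remove a peer, re-measure the ensemble's loss, and record how much worse it gets. This is not the whitepaper's Fisher-information approach, only a simple stand-in that needs one extra evaluation per peer, which is part of why it does not scale. The averaging ensemble, the function names, and the data are assumptions made for the example.

```python
def ensemble_loss(predictions, targets):
    """Mean squared error of the simple average of all peers' predictions."""
    n_peers = len(predictions)
    total = 0.0
    for k, y in enumerate(targets):
        avg = sum(p[k] for p in predictions) / n_peers
        total += (avg - y) ** 2
    return total / len(targets)

def leave_one_out_scores(predictions, targets):
    """Loss increase caused by removing each peer from the ensemble."""
    base = ensemble_loss(predictions, targets)
    scores = []
    for i in range(len(predictions)):
        without_i = predictions[:i] + predictions[i + 1:]
        scores.append(ensemble_loss(without_i, targets) - base)
    return scores

targets = [1.0, 1.0, 1.0, 5.0]
peers = [
    [1.0, 1.0, 1.0, 1.0],   # generalist A
    [1.0, 1.0, 1.0, 1.0],   # generalist B (redundant with A)
    [1.0, 1.0, 1.0, 13.0],  # specialist C: overshoots alone, corrects the ensemble
]

solo_losses = [ensemble_loss([p], targets) for p in peers]
contribution = leave_one_out_scores(peers, targets)
print(solo_losses, contribution)
```

Here the specialist has the worst loss when judged alone but the largest leave-one-out score, because it is the only peer that moves the ensemble on the fourth example. The two generalists are partly redundant, so removing either one costs less.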

A smart reader should be cautious here. Bittensor’s deepest promise is not fully “solved” by the existence of a formula in a whitepaper. The hard part is always the measurement pipeline: what validators query, what they compare, how they prevent overfitting to the test, and how they keep evaluation costs manageable.

How does a Bittensor subnet operate in practice (miners, validators, scoring)?

Imagine a subnet built around language-model inference. Miners expose endpoints that answer prompts. Validators send prompts to many miners, compare outputs, and maintain a scoring process that rewards responses that are useful by that subnet's standards, such as accuracy, formatting reliability, latency, or consistency on hidden evaluation tasks.

As those judgments are submitted on-chain, Yuma Consensus aggregates the validator side of the market rather than directly recomputing the model outputs itself. Miners that consistently perform well attract emissions. Validators that provide evaluations the subnet and wider stake structure treat as credible retain influence and may benefit through bonds or other subnet-specific incentive structures.

Now change the commodity. A storage subnet could have miners providing storage capacity and retrieval service. Validators would not be scoring prose quality anymore; they would be probing data availability, persistence, and retrieval correctness. The same network skeleton still works because the chain is not hardcoding a single task. The subnet defines what “good” means, and the shared Bittensor machinery handles identity, stake, permissions, and reward settlement.
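The "same skeleton, different commodity" point can be sketched in code. The round function below is shared, and only the scoring function changes between an inference-style subnet and a storage-style subnet. Everything here is hypothetical: real subnets communicate over the network, use the Bittensor SDK, and apply far richer scoring, and none of these function names come from that SDK.

```python
import hashlib

def scores_to_weights(scores):
    """Normalize raw scores into weights that sum to 1 (all zeros if no one scored)."""
    total = sum(scores.values())
    if total <= 0:
        return {uid: 0.0 for uid in scores}
    return {uid: s / total for uid, s in scores.items()}

def run_round(miners, request, expected, scorer):
    """Shared skeleton: query every miner, score each response, normalize.

    The miner only sees `request`; `expected` stays with the validator.
    """
    responses = {uid: miner(request) for uid, miner in miners.items()}
    scores = {uid: scorer(expected, resp) for uid, resp in responses.items()}
    return scores_to_weights(scores)

def qa_scorer(expected_answer, response):
    """Inference-style scoring: exact match against a hidden answer."""
    return 1.0 if response == expected_answer else 0.0

def storage_scorer(expected_digest, response):
    """Storage-style scoring: returned bytes must hash to the stored digest."""
    return 1.0 if hashlib.sha256(response).hexdigest() == expected_digest else 0.0

# Inference-style subnet with two toy miners.
qa_miners = {"m1": lambda prompt: "4", "m2": lambda prompt: "5"}
qa_weights = run_round(qa_miners, "What is 2 + 2?", "4", qa_scorer)

# Storage-style subnet: same skeleton, different definition of "good".
blob = b"subnet payload"
digest = hashlib.sha256(blob).hexdigest()
storage_miners = {"s1": lambda key: blob, "s2": lambda key: b"corrupted"}
storage_weights = run_round(storage_miners, "blob-key", digest, storage_scorer)

print(qa_weights, storage_weights)
```

In a live subnet, the resulting weights are what a validator would submit on-chain. The off-chain querying and scoring never touch the chain directly.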

This is why Bittensor’s architecture generalizes beyond AI in the narrow sense. What it really provides is a way to create competitive, validator-scored digital commodity markets under a shared token and coordination layer.

How does Bittensor scale querying to limit bandwidth and support offline use?

| Approach | Bandwidth cost | Freshness | Implementation complexity | Best use case |
|---|---|---|---|---|
| All-to-all querying | Very high bandwidth | Highest freshness | Low complexity | Small networks only |
| Sparse routing | Low bandwidth | High freshness | Moderate complexity | Large dynamic networks |
| Distillation proxies | Minimal bandwidth | Lower freshness | High complexity | Offline or low-cost access |

A peer-to-peer intelligence market quickly runs into a physical constraint: querying everyone all the time is expensive. If every participant had to ask every other participant for every input, bandwidth costs would dominate.

The whitepaper addresses this with conditional computation. Instead of querying every peer, a participant learns a sparse selection rule for which peers to query for a given example. The paper describes this as a sparsely gated layer, effectively a trainable routing mechanism. That reduces outward bandwidth while still letting the system compose specialist peers when needed.
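A rough sketch of sparse gating and its bandwidth effect follows. In the whitepaper's description the gate is learned during training; here the gate scores are fixed numbers so that only the selection step is shown. The function names, the choice of a softmax over the selected peers, and the values of `k` are assumptions for illustration.

```python
import math

def top_k_gate(gate_scores, k):
    """Select the k highest-scoring peers and softmax their scores into mixing weights."""
    order = sorted(range(len(gate_scores)), key=lambda i: gate_scores[i], reverse=True)
    chosen = order[:k]
    peak = max(gate_scores[i] for i in chosen)  # subtract the max for numerical stability
    exps = {i: math.exp(gate_scores[i] - peak) for i in chosen}
    norm = sum(exps.values())
    return {i: e / norm for i, e in exps.items()}

def queries_per_round(n_peers, k=None):
    """Outbound queries if every peer queries all others (k=None) or only k peers."""
    return n_peers * (n_peers - 1) if k is None else n_peers * k

# Gate scores for one input; in a real system these would come from a trained layer.
gate_scores = [0.1, 2.0, -1.0, 1.5, 0.0]
selected = top_k_gate(gate_scores, k=2)

dense_cost = queries_per_round(1000)
sparse_cost = queries_per_round(1000, k=8)
print(selected, dense_cost, sparse_cost)
```

With 1,000 peers, all-to-all querying costs 999,000 queries per round, while querying 8 peers each costs 8,000. The price is that the gate must be good at picking useful peers, which is why the routing decision is itself incentive-sensitive.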

This matters because decentralized AI systems often fail on economics before they fail on theory. A routing layer is the difference between “everyone can, in principle, talk to everyone” and “the network can operate at usable cost.” The analogy is a trainable directory or router: it helps direct requests toward likely-useful peers. What the analogy explains is selective communication. Where it fails is that the routing decision is itself learned and incentive-sensitive, not just static addressing.

The whitepaper also discusses distillation as a way to create offline proxy models. A distilled model can approximate the behavior of the broader network closely enough for some validation or inference uses without requiring constant live access. That is useful because it lets participants take some of the network’s learned capability offline. But it also introduces tension: if too much value can be extracted into static proxies, the live network must continue to offer freshness, adaptability, or specialization that a distilled snapshot cannot fully capture.

What are Bittensor’s core assumptions and main failure modes?

Bittensor is strongest where three conditions hold at once. First, the commodity is valuable and can be tested meaningfully by validators. Second, validator incentives are aligned well enough that gaming the metric is harder than genuinely improving performance. Third, stake remains sufficiently decentralized that majority-backed consensus has real anti-collusion force.

The protocol is much weaker when any of those conditions fail. The whitepaper is explicit that naïve peer ranking is collusion-prone and that its security argument relies on the assumption that no group controls a majority of stake. That assumption is not a minor footnote. It is the core condition under which the claimed anti-collusion dynamics work.

There are also practical uncertainties the primary materials do not fully resolve. The whitepaper does not specify in operational detail how initial stake and early trust graphs are bootstrapped to avoid capture. It also does not fully specify how the idealized information-contribution ranking is computed efficiently at network scale. Modern docs clarify participation mechanics much more than they resolve every theoretical measurement issue.

Some centralization questions also matter. Regulatory disclosures associated with TAO investment products describe the Subtensor blockchain as using a Proof-of-Authority model in which node admission is controlled by the Opentensor Foundation and a majority of nodes are owned or controlled by it. If accurate for the described period, that means the network’s transaction-ordering and infrastructure decentralization differ from the most decentralized public-chain ideals. That does not negate the subnet incentive model, but it does change the trust picture.

A further real-world reminder came from the 2024 supply-chain incident affecting the Python package distribution for Bittensor. PyPI yanked version 6.12.2 with the reason “Malicious release,” and secondary reporting described a wallet-drain attack that led the network to enter a restricted safe mode while the incident was investigated. This was not evidence that Yuma Consensus itself had failed. It was evidence that infrastructure around a protocol (SDK distribution, wallet operations, release security) can be just as critical as the protocol’s internal economics.

What can developers, validators, and token holders do on Bittensor?

The official docs frame Bittensor as a platform for producing digital commodities, and that wording is important because it broadens the use beyond a single AI niche. In practice, people use the ecosystem to build and operate subnets, run miners and validators, stake TAO behind validators, and track network activity through tools such as block explorers and analytics dashboards.

For developers, the practical use is straightforward: define a commodity, define how miners produce it, define how validators test it, then launch a subnet locally, on test environments, or on mainnet using the SDK and CLI tooling. For operators, the use is to compete within an existing subnet by producing work or by evaluating others’ work credibly enough to earn and retain validator status.

For token holders, the network offers a different role. They may not produce the commodity directly, but they can support validators through staking. That creates a layered market structure: producers compete on performance, validators compete on evaluation quality and influence, and stakers allocate backing toward validators they expect to perform well.

This is why Bittensor occupies an unusual place among crypto networks. It is neither just a smart-contract platform nor just a data marketplace nor just a decentralized AI label. It is trying to be a protocol for ongoing competitive evaluation of off-chain services.

Conclusion

Bittensor is easiest to understand if you stop thinking of it as “a blockchain for AI” and start thinking of it as a market that tries to price useful digital work through decentralized evaluation. Subnets define the commodity, miners produce it, validators score it, and the chain settles stake, permissions, and TAO rewards.

The big idea is powerful: if intelligence and other digital services can be evaluated competitively, they can be rewarded without a single platform owner. The hard part is equally clear: everything depends on whether the evaluation process remains informative, costly to manipulate, and backed by a stake structure that does not collapse into collusion.

What is worth remembering tomorrow is this: Bittensor’s real innovation is not putting AI on-chain. It is trying to make the judgment of AI and other digital work into a protocol-level economic process.

How do you buy Bittensor (TAO)?

You can buy Bittensor (TAO) on Cube Exchange by funding your account and trading the TAO spot market. Fund via the fiat on‑ramp or transfer a stablecoin, then place a spot order (TAO/USDC or TAO/USDT) using a market order for immediate fills or a limit order for price control.

- Deposit USDC or fiat into your Cube account via the on‑ramp or by transferring USDC from an external wallet.

- Open the TAO/USDC (or TAO/USDT) spot market on Cube. Select Market for immediate execution or Limit to set your target price.

- Enter the TAO amount or the USDC you want to spend, review estimated fees and slippage, and submit the order.

- After the trade fills, optionally move TAO to cold storage or set a limit sell or stop‑loss to manage risk.

Frequently Asked Questions

Bittensor is a blockchain-coordinated market that rewards off-chain digital work (especially machine intelligence) in TAO: subnets define a commodity, miners produce work, validators score outputs, and on-chain reward logic (via Yuma Consensus) allocates emissions based on those evaluations.

Yuma Consensus extends ordinary transaction consensus by aggregating validators' evaluations about which off‑chain producers are valuable. It records validator-submitted weights on-chain and uses a stake-weighted aggregation (including a majority-sensitive consensus term) to determine emissions, rather than only ordering transactions.

Subnets are independent, task-specific marketplaces connected to a single coordination chain: each subnet defines what ‘good’ means for its commodity, runs validators and miners off‑chain to test and produce that commodity, and uses the shared chain for identity, staking, permits, and reward settlement.

Bittensor defends against collusion by making votes stake-weighted, using a consensus term that sharply favors peers backed by a majority of stake, limiting validator slots and requiring minimum stake/permit rules, but these protections explicitly depend on the assumption that no group controls a majority of stake.

A bond is an economic exposure validators acquire to peers they back: validators’ weight and stake produce bond holdings that let validators earn if their chosen peers later attract emissions, incentivizing early discovery of valuable contributors rather than short-term copying.

Validator permits are limited (subnets support up to 256 active nodes, with a default of 64 eligible validators), and permit consideration requires a registered hotkey and a minimum stake weight (the docs define stake weight as α + 0.18 × τ, with a minimum of 1000). Permits are recalculated each epoch and govern who can participate in Yuma Consensus.

To avoid prohibitive bandwidth, Bittensor uses conditional computation (a learned sparse routing layer that selects a small set of peers to query for a given input) and supports distillation so offline proxies can approximate the network when live queries are too costly.

The protocol’s security and utility rest on three conditions: meaningful, hard‑to‑game tests for the commodity; validator incentives that make gaming harder than honest evaluation; and sufficiently decentralized stake. If these fail, the model is vulnerable to capture, herding, or reinforcement of noise, and the whitepaper and docs explicitly acknowledge these limits.

In July 2024 a malicious PyPI release (package v6.12.2) and a subsequent wallet‑drain prompted the chain to enter a restricted ‘safe mode’ while the incident was investigated; this was an infrastructure/supply‑chain security incident rather than a demonstrated failure of Yuma Consensus, and public reporting left some root‑cause details unresolved.

The whitepaper frames peer value as an information‑contribution or marginal‑contribution objective (e.g., Fisher‑information style pruning significance), but it acknowledges that computing that precisely at scale is impractical, so deployed subnets rely on task‑specific heuristics and validator scoring pipelines rather than full information‑theoretic ranks.
