What is Poseidon Hash?

Learn what Poseidon hash is, why it was built for zero-knowledge proofs, how its sponge and HADES rounds work, and where its tradeoffs matter.

Introduction

Poseidon hash is a cryptographic hash designed for a setting where ordinary hashes become unexpectedly expensive: zero-knowledge proofs over arithmetic circuits. If you hash data with SHA-256 on a CPU, the cost is modest. If you ask a SNARK or similar proof system to prove that the same SHA-256 computation happened correctly, the cost can become enormous, because bitwise logic is awkward to represent as field arithmetic. Poseidon exists to solve that mismatch.

The key idea is simple once you see the constraint that drives everything else. Modern proof systems such as SNARKs, STARKs, and Bulletproof-style circuits do not naturally think in bits; they think in operations over a finite field. A hash that is built from field additions, multiplications, and low-degree power maps can be much cheaper to prove than a hash built from XORs, bit rotations, and word-level Boolean structure. Poseidon is one of the best-known hashes built around that fact.

That does not make Poseidon a universal replacement for SHA-256 or Keccak. On ordinary hardware, traditional hashes are highly optimized, deeply studied, and usually the right choice. Poseidon is valuable because it changes the economics inside proofs. That is why it shows up in Merkle trees for zk applications, private transaction systems, rollups, and proof-heavy storage systems such as Filecoin.

Why are SHA-256 and Keccak inefficient inside zero-knowledge proofs?

| Hash | CPU cost | Proof constraints | Best for |

|---|---|---|---|

| SHA-256 / Keccak | Low (very fast) | High (bitwise emulation) | General software and hardware |

| Pedersen | Moderate | Medium (EC ops in field) | R1CS-friendly commitments |

| Poseidon | Higher on CPU | Low (field-native, few constraints) | Merkle trees inside SNARKs |

A cryptographic hash function has a familiar job: take an input of arbitrary length and produce a fixed-size digest that is hard to invert and hard to collide. The subtle part is that there are many ways to realize that job, and the best design depends on the machine that will execute it.

Traditional hashes were designed for ordinary processors. Their internal machinery uses operations like XOR, bit shifts, bitwise Boolean functions, and fixed-width modular arithmetic. Those are excellent for software and hardware implementations. But in a proof circuit, each such operation has to be encoded as constraints over field elements. The representation works, but it is costly.

That cost matters because proving systems charge for complexity in a way users can feel. More constraints usually mean more prover time, more memory, and often larger proofs or more expensive verification. So if a protocol repeatedly hashes values inside a circuit (for example while verifying a Merkle path, checking a commitment, or updating private state) the hash function can dominate the proof cost.

Poseidon starts from the opposite direction. Instead of asking, “What hash is fastest on a CPU?” it asks, “What permutation can we build from field-native operations so that a proof system can represent it cheaply, without giving up the security properties we need from a hash?” That design target explains nearly every feature of Poseidon.

The original Poseidon paper states this goal explicitly: optimize primarily for the R1CS metric, the common cost model for rank-1 constraint systems, while also performing well in arithmetic execution settings relevant to other proof systems. In that setting, the authors report that Poseidon can use up to 8x fewer constraints per message bit than Pedersen Hash, which is why the design attracted immediate attention in zk systems.

How does Poseidon use a sponge construction over a finite field permutation?

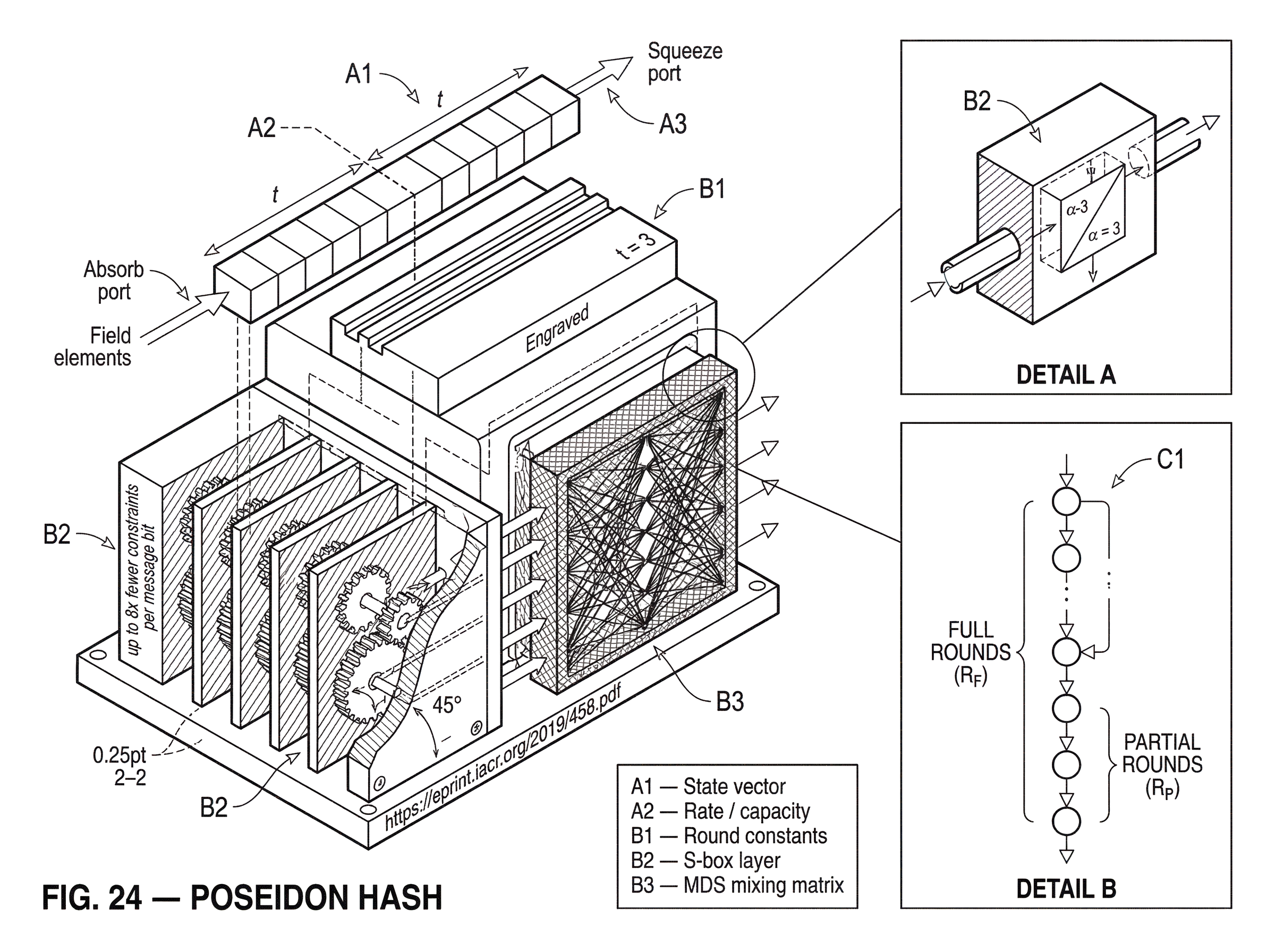

At a high level, Poseidon is usually used as a sponge hash. A sponge is a general recipe for turning a fixed-size internal permutation into a hash that can absorb arbitrarily long inputs and produce outputs of the desired length.

The state is a vector of t field elements. You can think of it as a small register of values living in a prime field F_p, where p is a large prime chosen by the surrounding cryptographic system. This state is split conceptually into two parts: the rate and the capacity. Input blocks are absorbed into the rate portion; the capacity portion is left untouched by direct input and acts as the security reserve.

Here is the mechanism. Start from an initial state, usually all zeros or a domain-separated variant. Break the message into field elements. Add a block of message elements into the rate part of the state. Then apply the internal Poseidon permutation. Repeat until the whole message is absorbed. After that, read output elements from the rate; if more output is needed, apply the permutation again and continue squeezing.

Why does this work? Because if the internal permutation behaves like a strong pseudorandom permutation, the sponge construction inherits good hash-like behavior. The standard sponge intuition is that the capacity controls the security margin: a sponge with c bits of capacity is indifferentiable from a random oracle up to about 2^(c/2) calls, giving roughly 2^(c/2) collision and preimage-style security in the usual regime. Poseidon therefore is not just “a fancy permutation”; it is a permutation chosen so that the sponge around it is efficient in arithmetic circuits.

This also explains an important parameter choice you will see in real deployments. A Poseidon instance is tied to a field F_p, a target security level M, and a state width t. In Filecoin’s specification, for example, the triple (p, M, t) determines the instance. The field fixes the arithmetic universe, the security target helps determine capacity and digest sizing, and the width controls how many field elements can be absorbed at once.

What are the internal steps of a single Poseidon permutation round?

The internal permutation is where the design becomes distinctive. Each round has three ingredients:

- add round constants,

- apply an S-box layer,

- apply a linear mixing layer.

In the Poseidon papers, these are often described as ARC, SB, and M. The mechanism matters more than the names.

The round constants break symmetry. Without them, structured states might evolve in overly predictable ways, and cryptanalysis often becomes easier. So in each round, constants are added to state elements.

The S-box layer supplies nonlinearity. This is the part that prevents the whole permutation from collapsing into something linear and easy to solve. Poseidon uses a power map of the form S(x) = x^α, typically with α = 3 or α = 5 in the recommended families. For many zk-relevant fields, x^5 is a good fit; the original paper specifically notes suitability for popular scalar fields such as those of BLS12-381 and BN254.

The mixing layer spreads information across the state. It multiplies the state vector by a carefully chosen matrix, traditionally an MDS matrix. The purpose is diffusion: after some rounds, each output coordinate should depend in a complicated way on every input coordinate. If nonlinearity is the source of confusion for an attacker, diffusion is what ensures that confusion does not stay localized.

A useful analogy is kneading colored dough. The S-box changes the texture of some pieces in a nonlinear way; the matrix layer folds and spreads those changes through the whole mass. The analogy explains why you need both local nonlinear disruption and global mixing. It fails, of course, as a security argument: dough does not have algebraic structure, while finite-field permutations do, and that algebraic structure is exactly what cryptanalysts study.

How do Poseidon's full and partial (HADES) rounds trade off security and efficiency?

| Round type | S-box coverage | Circuit nonlinear cost | Diffusion speed | Role |

|---|---|---|---|---|

| Full rounds | All state elements | High per round | Immediate wide diffusion | Establish security margin |

| Partial rounds | Single state element | Low per round | Gradual via linear layer | Reduce prover cost |

| HADES mix | Full→many partial→full | Balanced total S-boxes | Fast then incremental | Cost-security compromise |

If Poseidon used a nonlinear S-box on every state word in every round, it would be secure-looking but more expensive than necessary for proof systems. If it used too little nonlinearity, algebraic attacks could exploit the structure. The core Poseidon design choice is the compromise between those two extremes.

This compromise is called the HADES strategy. A Poseidon permutation begins with some number of full rounds, where the S-box is applied to every state element. It ends with more full rounds. In the middle, it uses many partial rounds, where the S-box is applied to only a single state element while the others pass unchanged through that nonlinear step before the mixing layer redistributes the effect.

This is the compression point for understanding Poseidon: full rounds buy wide, immediate disruption; partial rounds buy cheaper incremental disruption. Because S-boxes are the expensive nonlinear component in arithmetic circuits, reducing the number of S-box applications reduces circuit cost significantly. But because the linear layer keeps spreading the effect of the active S-box across the whole state, the design can still accumulate enough complexity to resist known attacks; if the number of rounds and the mixing matrices are chosen carefully.

So Poseidon is not “cheap because it does less cryptography.” It is cheap because it spends nonlinearity where it matters most. Early and late full rounds help block structural and statistical weaknesses. The long middle section of partial rounds lowers cost while continuing to grow algebraic complexity through repeated mixing.

The original paper also describes implementation tricks that exploit this structure. In partial rounds, by moving constants and using sparse-equivalent linear forms, implementers can reduce multiplication work in the linear layer substantially; from a dense t^2 style cost toward roughly 2t constant multiplications in optimized forms for those rounds. That matters in native implementations and often in circuit representations as well.

How do you compute a Merkle-tree parent hash with Poseidon inside a SNARK?

Suppose you want to prove in a SNARK that a leaf belongs to a Merkle tree. At each level of the path, the circuit must recompute a parent hash from two child values. If the tree uses SHA-256, the circuit has to encode a lot of bit-level logic for every step. If the tree uses Poseidon over the proof system’s base or scalar field, the circuit can stay much closer to its native arithmetic.

Imagine the two child nodes are already represented as field elements. With a width t = 3 instance, the state can hold those two inputs plus one more element reserved for capacity or output structure. The circuit absorbs the two child values into the state, applies the Poseidon permutation rounds, and outputs one field element as the parent hash. The proof does not need to emulate a machine that shuffles bits around in 32-bit or 64-bit words; it only needs to constrain field additions, multiplications, constant additions, and exponentiation by a small fixed power such as 5.

That difference compounds over a path. A Merkle proof of depth 32 means 32 hash computations inside the circuit. If each hash is much cheaper in constraints, the entire proof becomes materially cheaper. This is why Poseidon appears so often in zk systems that maintain Merkle trees of balances, notes, storage slots, identities, or commitments.

The same mechanism is not specific to one chain. Filecoin uses Poseidon in Merkle trees and commitments. Ethereum-oriented work has gone far enough that an EIP proposed a Poseidon precompile for EVM interoperability with zk rollups, precisely because many rollups use Poseidon internally and need efficient on-chain checking. Other libraries in the zk ecosystem expose both native Poseidon hashing and circuit gadgets for the same reason: the value is not abstract elegance, but that the exact same arithmetic structure can be used both in ordinary code and in provable circuits.

Which Poseidon parameters must be fixed and why do they matter?

| Parameter | Controls | Security impact | Cost impact |

|---|---|---|---|

| Field p | Arithmetic universe | Compatibility with exponents | Element size affects cost |

| Width t | Rate vs capacity | Diffusion per permutation | Inputs absorbed per call |

| S-box exponent α | Nonlinearity degree | Algebraic resistance | Exponentiation cost |

| Rounds (RF,RP) | Nonlinearity budget | Resists algebraic attacks | Total S-box count |

| Mixing matrix | Diffusion pattern | Prevents invariant trails | Matrix multiplication cost |

With Poseidon, the parameterization is part of the cryptographic substance. You do not just say “use Poseidon” and stop there. You must specify the field, the state width, the S-box exponent, the number of full rounds R_F, the number of partial rounds R_P, the round constants, and the mixing matrix.

The field matters because Poseidon is built over F_p. The same algorithm over BN254’s scalar field and over BLS12-381’s scalar field is not literally the same instance. The S-box exponent must be compatible with the field so that the power map is a permutation on field elements when needed. That is why recommendations often focus on exponents like 3 or 5, depending on the arithmetic properties of p - 1.

The width t matters because it determines how much input you can absorb per permutation call and how diffusion behaves. In Filecoin, for example, different widths are used for different Merkle arities and proof structures. A width-3 instance can hash two field elements to one output; larger widths support broader arities more efficiently.

The number of rounds matters because the HADES strategy only works if you choose enough rounds to defeat known statistical and algebraic attacks. The designers explicitly updated their guidance over time and recommend using the updated round-selection formulas. They also advise against certain variants, notably the broader STARKAD family and the x^-1 instantiation of Poseidon, which they consider dismissed. That is a practical warning: implementers should not improvise with “close enough” variants.

The mixing matrix matters for a deeper reason. Poseidon’s security analysis relies on the matrix diffusing activity in a way that prevents weak invariant or iterative subspaces and related structural trails. The EIP discussion and later research both emphasize that matrix selection is not a secondary implementation detail. A poorly chosen matrix can preserve too much structure and make attacks easier than the round counts alone would suggest.

What is the security evidence for Poseidon and what are its limitations?

Poseidon’s designers analyze resistance to interpolation attacks, Gröbner-basis methods, higher-order differential techniques, and other algebraic or statistical approaches. Their design goal is roughly that no attack should find a relevant non-random property of the permutation faster than 2^M queries for an M-bit target. That gives the intended margin for the sponge-based hash.

But with Poseidon, you should hold two ideas at once. First, this is a serious, research-grounded design with extensive cryptanalysis and substantial real-world adoption. Second, its security story is more conditional and more actively debated than that of older mainstream hashes.

The reason is structural. Poseidon intentionally accepts a high degree of algebraic regularity in order to be efficient in arithmetic circuits. That is exactly what makes it attractive for zk systems, and exactly what invites algebraic cryptanalysis. Several later papers sharpened this picture.

Some results showed practical algebraic attacks on reduced-round Poseidon challenge instances by modeling constrained-input constrained-output problems as polynomial systems and solving them with standard algebra software. These do not amount to breaks of full recommended parameters, but they are a reminder that the algebraic structure is real and exploitable when margins are too small.

Other work argues that partial rounds in HADES-based designs may not provide as much algebraic resistance as earlier arguments assumed, especially at unusually high claimed security levels. In particular, some asymptotic analyses found examples where claimed 512-bit or 1024-bit security levels were overstated. The practical takeaway is narrower than the headline: common deployed security targets like 128 bits are not thereby shown broken. But the papers do show that round-count justification is delicate, and that simplistic counting arguments can overestimate security.

This is why careful implementations follow concrete, updated parameter recommendations rather than inventing custom round counts. It is also why standards conversations around Poseidon often include the caveat that it is younger and less battle-tested than SHA-256 or Keccak.

How do real projects implement Poseidon safely and ensure interoperability?

In deployed systems, Poseidon is rarely used as a generic “hash bytes of anything” primitive in the same way as SHA-256. More often, it hashes field elements or values that are naturally embedded into the proof system’s field. That keeps the design aligned with its strengths.

A common pattern is domain-separated hashing of structured data: Merkle-tree nodes, nullifiers, commitments, note openings, identity data, or public inputs. Some implementations expose explicit domains so that “hash these four field elements as a Merkle node” is distinguished from “hash these same field elements for another protocol purpose.” That is not unique to Poseidon, but it matters because many zk protocols reuse the same permutation in multiple contexts.

Another practical pattern is optimization through sparse or preprocessed linear layers. Filecoin’s implementation work and audit material highlight that optimized sparse-equivalent matrices can preserve the intended permutation behavior while reducing cost. The audit also notes something more mundane but important: even when the high-level design is sound, implementation details such as constant generation, bit ordering, or message-length handling in higher-level hash constructions can go wrong. In Filecoin’s case, some Poseidon-based constructions were initially incorrect and later fixed, and the audit verified that the circuit and native implementations matched after correction.

That lesson generalizes. Poseidon is a family of parameterized constructions, not a single byte-for-byte universal standard. Different systems have chosen different parameter sets, which is one reason EVM precompile proposals discuss supporting arbitrary parameters instead of a single hardcoded instance. Interoperability therefore requires more than saying “we both use Poseidon”; it requires agreeing on the full instance.

What is the difference between Poseidon and Poseidon2?

If you encounter Poseidon2, it is best understood not as a different idea, but as a refinement of the same design goal. Poseidon2 changes the linear layers, adds an initial linear layer, and modifies how constants are applied in internal rounds. The aim is to reduce arithmetic cost further, especially in settings like Plonkish circuits where linear operations themselves carry more visible cost than in R1CS.

According to the Poseidon2 paper, these changes can reduce linear-layer multiplications by up to 90% and Plonk constraints by up to 70%, with concrete implementation speedups as well. The paper also presents the added initial linear layer as a mitigation against a practical algebraic shortcut that can effectively skip the first two nonlinear layers in the original Poseidon analysis.

This matters because it clarifies what is fundamental in Poseidon and what is conventional. The fundamental idea is the arithmetization-oriented sponge permutation with low-degree S-boxes and HADES-style round scheduling. The exact linear layer design is more of an engineering and security-tuning choice, which newer work continues to revise. So when someone says “Poseidon” in a current engineering context, it is worth checking whether they mean the original permutation or Poseidon2.

When should you use Poseidon versus a traditional hash like SHA-256?

Poseidon is good at one particular tradeoff: minimizing proof cost for hashing over finite fields. That makes it a natural fit when the hash is inside the statement being proved. Merkle-tree membership proofs, commitment schemes, private balances, note systems, rollups, and proof-of-storage circuits all benefit from this property.

What Poseidon is not trying to be is the default hash for every application. If you need a conservative, battle-tested general-purpose hash for software, protocols, or hardware outside proof circuits, traditional functions still have strong advantages: mature standardization, huge implementation experience, and decades of cryptanalysis.

There is also a subtle mismatch in interfaces. Poseidon naturally works on field elements, while many applications naturally start from byte strings. Mapping bytes into field elements is possible, but it adds conventions, edge cases, and interoperability choices. That does not make Poseidon unsuitable; it just means its most natural home is a protocol already living in field arithmetic.

Conclusion

Poseidon hash is best understood as a hash designed for the machine inside a zero-knowledge proof. Instead of optimizing for bitwise computation on ordinary processors, it optimizes for arithmetic constraints over a finite field. That is why it uses a sponge over a field permutation, low-degree power S-boxes, and the HADES mix of full and partial rounds.

The result is a hash that can make zk circuits dramatically cheaper, which is why it appears in Merkle trees, commitments, rollups, and proof-heavy systems like Filecoin. But its efficiency comes with conditions: parameters matter, matrix choice matters, and the security analysis is more active and assumption-sensitive than for older mainstream hashes. The short version to remember is this: Poseidon exists because proving a hash is a different problem from computing a hash, and Poseidon was built for the proving side of that tradeoff.

What should you understand before using this part of crypto infrastructure?

Poseidon Hash should change what you verify before you fund, transfer, or trade related assets on Cube Exchange. Treat it as an operational check on network behavior, compatibility, or execution timing rather than a purely academic detail.

- Identify which chain, asset, or protocol on Cube is actually affected by this concept.

- Write down the one network rule Poseidon Hash changes for you, such as compatibility, confirmation timing, or trust assumptions.

- Verify the asset, network, and transfer or execution conditions before you fund the account or move funds.

- Once those checks are clear, place the trade or continue the transfer with that constraint in mind.

Frequently Asked Questions

Because SNARKs/STARKs express computation as arithmetic over a finite field, bitwise operations used by SHA-256 are expensive to encode as field constraints; Poseidon is built from field additions, multiplications, and low-degree power maps so the same hashing work requires far fewer circuit constraints and thus much cheaper proving costs.

Poseidon uses the HADES schedule: a few full rounds (S-box on every state element) at the start and end to give wide nonlinear disruption, and many cheaper partial rounds (S-box on a single element) in the middle so mixing spreads that single nonlinear effect across the state; this concentrates nonlinearity where it most helps security while reducing expensive S-box applications in proofs.

A complete Poseidon instance is defined by its prime field F_p, state width t, S-box exponent α, target security M, the number of full and partial rounds (R_F and R_P), the per-round constants, and the mixing matrix; these choices determine absorption capacity, how many inputs fit per permutation, and the algebraic resistance of the permutation, so they must be fixed and agreed for interoperability.

Researchers have demonstrated algebraic and Gröbner-basis style attacks on round‑reduced or specially constructed Poseidon instances and have raised concerns about overstated asymptotic claims at very high security targets, but these works do not claim breaks of full recommended parameters - rather they show that round counts, matrix choice, and parameter justification are delicate and must follow updated recommendations.

Poseidon2 is a refinement that changes the linear layers, adds an initial linear layer, and alters constant application to substantially reduce linear-layer multiplications and Plonk-style constraints, and it also mitigates certain algebraic shortcuts identified against the original Poseidon, so it is an optimization/security-tuning evolution rather than a different conceptual hash.

Not directly - Poseidon is designed to hash field elements, so hashing arbitrary byte strings requires a chosen embedding into the field (and related conventions); many deployments therefore hash already-field-native data or use explicit domain separation and agreed parameter sets to avoid interoperability and edge-case problems.

Real-world pitfalls include incorrect higher-level constructions and implementation mismatches (Filecoin fixed earlier incorrect constructions and bit‑order differences in round constants), and library bugs have affected related tooling (e.g., a gnark deserialization issue); these examples show parameter generation, bit ordering, and careful matching of circuit and native implementations are common sources of errors.

Inside a SNARK you absorb child nodes as field elements into a width-t Poseidon state (e.g., t=3 for two children plus capacity), apply the permutation, and read a field-element parent - this avoids bit-level emulation, keeps the circuit in native arithmetic, and yields much smaller constraint cost per Merkle level than SHA-style hashes.

Related reading