What is Distributed Key Generation (DKG)?

Learn what Distributed Key Generation (DKG) is, how dealerless key setup works, why it matters for threshold signatures, and where it can fail.

Introduction

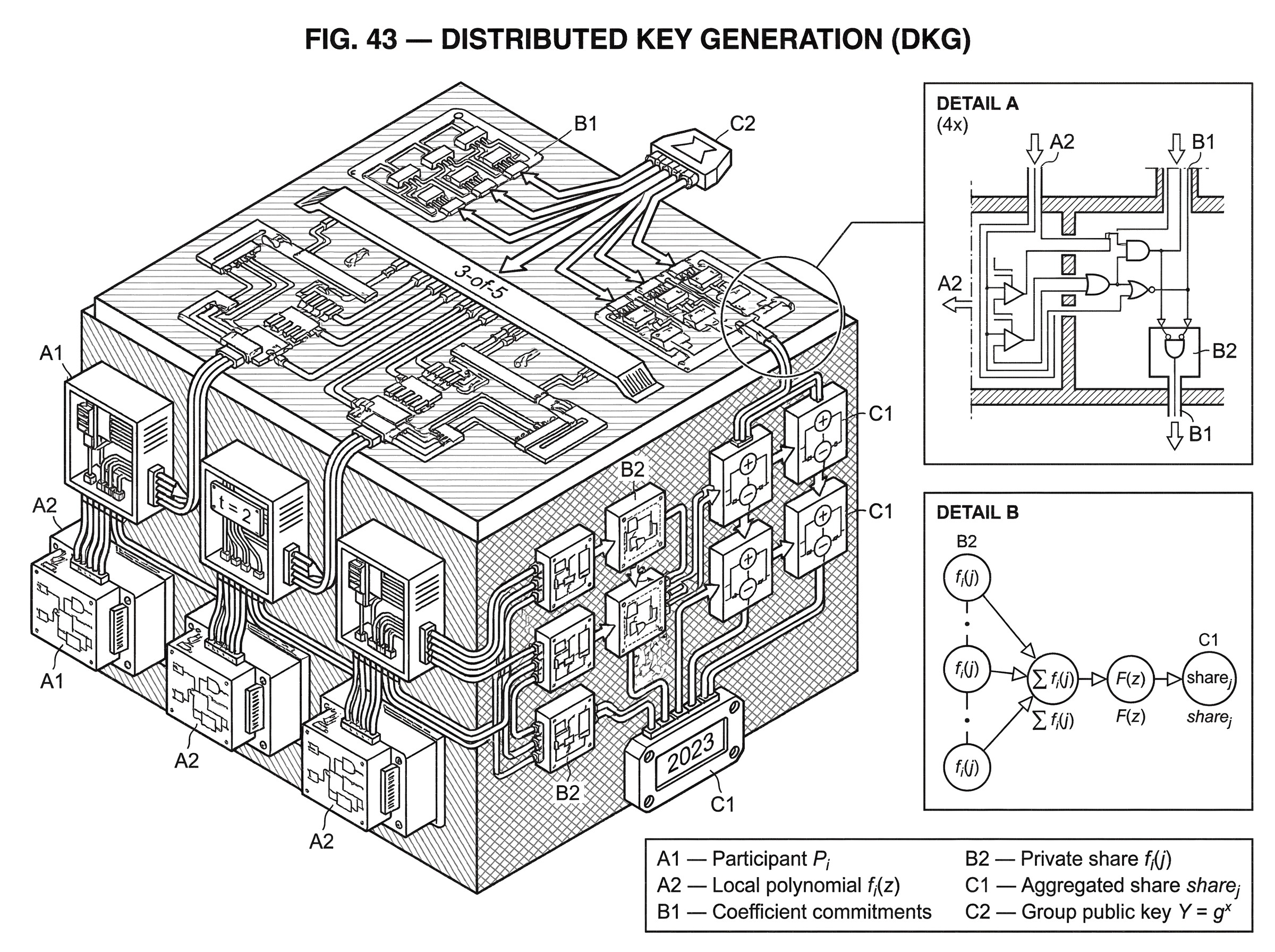

Distributed Key Generation (DKG) is a cryptographic protocol for creating a public key and a set of private key shares without ever assembling the full private key in one place. That sounds like a small variation on secret sharing, but it solves a different and more important problem. In ordinary secret sharing, someone already has the secret and then splits it up. In DKG, there is no trusted dealer to begin with. The secret key comes into existence only as a distributed object.

That distinction matters because the dealer is often the weakest point in a threshold system. If one machine generates the key first and then shards it, that machine could leak the key, be coerced into revealing it, or bias the key before distributing shares. DKG removes that single point of trust. The result is the setup ceremony that makes threshold signatures, distributed custody, and randomness beacons meaningfully decentralized rather than merely replicated.

The core idea is surprisingly simple: each participant acts like a temporary dealer for their own random secret, everyone proves their shares are well formed, and the final private key is the sum of all those contributions. No one knows the sum, because no one knows everyone else's secret. But everyone can compute the corresponding public key, because public keys combine algebraically in the same way the hidden secrets do.

That is the whole protocol in compressed form. Once you see DKG as "many verified local secrets whose sum becomes one shared global secret", most of the machinery falls into place.

What trust problem does Distributed Key Generation (DKG) solve?

Suppose n participants want a (t+1)-of-n threshold signature key: any t+1 of them should be able to sign together, while t or fewer learn nothing useful about the private key. There is an easy but unsatisfying way to do this: let one trusted coordinator generate a private key x, split x using Shamir secret sharing, and hand out the shares.

Mechanically, that works. Trust-wise, it fails. The coordinator saw x. Even if it deletes the key afterward, everyone else must trust that deletion, trust that the coordinator's machine was clean, and trust that the coordinator did not bias the key or hand out inconsistent shares. In a threshold system, this is backward: the whole point was to avoid having any single party be all-powerful.

DKG replaces that trusted coordinator with a protocol. Instead of starting from a known secret and distributing it, the participants jointly sample a random secret that never appears in full. In discrete-log based systems, the goal is typically to end with a public key Y = g^x while each participant holds only a share of x. The secret x itself is never reconstructed during setup.

This is why DKG is best understood not as "secret sharing" but as dealerless secret sharing plus public-key generation. It creates both the hidden structure needed for threshold use and the public object outsiders will later verify against.

How does DKG extend Shamir secret sharing to remove a dealer?

| Scheme | Secret source | Share flow | Trust model | Public key | Typical risk |
|---|---|---|---|---|---|
| Shamir (dealer) | single dealer | dealer distributes shares | trust dealer | not jointly generated | dealer compromise |
| DKG (dealerless) | each participant samples | participants exchange shares | no single trusted party | computed from commitments | malicious shares need VSS |

The natural starting point is Shamir secret sharing. In Shamir's scheme, a dealer chooses a random polynomial f(z) of degree t, sets the secret to f(0), and gives participant i the value f(i). Any t+1 points determine the polynomial and recover f(0), while t or fewer reveal nothing.

The invariant that matters is the polynomial's degree. A degree-t polynomial is exactly what makes t+1 shares sufficient and t shares insufficient. Change the degree, and you change the threshold.
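The dealer-based scheme just described can be sketched in a few lines. This is a minimal illustration, assuming a toy prime modulus `Q = 1019` that is far too small for real use; the names `make_shares` and `reconstruct` are hypothetical helpers, not part of any library.

```python
import secrets

Q = 1019  # toy prime field modulus (assumption; insecure size)

def make_shares(secret, t, n):
    """Dealer side: pick a random polynomial of degree at most t with f(0) = secret."""
    coeffs = [secret % Q] + [secrets.randbelow(Q) for _ in range(t)]

    def f(z):
        return sum(c * pow(z, k, Q) for k, c in enumerate(coeffs)) % Q

    # Participant i receives the point (i, f(i)).
    return {i: f(i) for i in range(1, n + 1)}

def reconstruct(points):
    """Lagrange-interpolate f(0) from a dict {index: share}."""
    secret = 0
    for i, y_i in points.items():
        num, den = 1, 1
        for j in points:
            if j != i:
                num = num * (-j) % Q
                den = den * (i - j) % Q
        secret = (secret + y_i * num * pow(den, -1, Q)) % Q
    return secret

shares = make_shares(secret=123, t=2, n=5)
subset = {i: shares[i] for i in (1, 3, 5)}  # any t + 1 = 3 shares suffice
print(reconstruct(subset))  # prints 123
```

Changing `t` changes how many points `reconstruct` needs, which is the degree invariant in action.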

DKG keeps that invariant but removes the dealer. Each participant P_i chooses their own random degree-t polynomial f_i(z). You can think of f_i(0) as that participant's private contribution to the eventual master secret. Participant P_i sends share f_i(j) to participant P_j. Then each participant locally adds up all shares they received:

share_j = sum over i of f_i(j)

This works because sums of degree-t polynomials are still degree t. If we define the global polynomial as

F(z) = sum over i of f_i(z)

then participant P_j now holds share_j = F(j). The shared private key is the hidden constant term F(0), which equals the sum of the participants' secret contributions:

x = F(0) = sum over i of f_i(0)

No participant knows all the f_i(0) values, so no one knows x. But the threshold structure is valid because everyone holds a point on the same degree-t polynomial F.
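The summation argument above can be checked numerically. The sketch below assumes the same kind of toy prime modulus (`Q = 1019`) and hypothetical helper names. It computes x directly only to confirm the algebra, which no party in a real ceremony would ever do.

```python
import secrets

Q = 1019      # toy prime modulus (assumption; insecure size)
T, N = 2, 5   # degree t = 2, five participants

def random_poly(t):
    """Coefficients a_0..a_t of one participant's private polynomial f_i."""
    return [secrets.randbelow(Q) for _ in range(t + 1)]

def evaluate(coeffs, z):
    return sum(c * pow(z, k, Q) for k, c in enumerate(coeffs)) % Q

def interpolate_at_zero(points):
    """Lagrange interpolation of the value at z = 0."""
    total = 0
    for i, y_i in points.items():
        lam = 1
        for j in points:
            if j != i:
                lam = lam * (-j) * pow((i - j) % Q, -1, Q) % Q
        total = (total + y_i * lam) % Q
    return total

polys = {i: random_poly(T) for i in range(1, N + 1)}

# share_j = sum over i of f_i(j), computed locally by participant j
final_shares = {
    j: sum(evaluate(polys[i], j) for i in polys) % Q for j in range(1, N + 1)
}

# Verification only: x = sum over i of f_i(0). Nobody computes this in practice.
x = sum(p[0] for p in polys.values()) % Q
recovered = interpolate_at_zero({j: final_shares[j] for j in (1, 2, 4)})
print(recovered == x)  # prints True
```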

This is the basic DKG mechanism. If everyone is honest, it is elegant and enough.

If anyone is malicious, it is not enough at all.

Why does DKG require verifiable secret sharing (VSS)?

A malicious participant has several obvious ways to sabotage this process. They could send different shares to different recipients that do not lie on a single polynomial. They could send malformed data to some parties but not others. They could try to bias the resulting key by selectively causing aborts after learning partial information. They could even change the effective threshold by using a polynomial of the wrong degree.

So DKG needs more than secret sharing. It needs verifiable secret sharing, usually abbreviated VSS. VSS adds a way for recipients to check that their private shares are consistent with a public commitment to the dealer's polynomial, without revealing the polynomial itself.

This is where classic constructions like Feldman VSS and Pedersen-style techniques enter. Feldman's practical non-interactive VSS showed how a dealer can publish commitments to polynomial coefficients so that each recipient can verify their share locally. Pedersen's work on verifiable secret sharing is a foundational source in this lineage, and later Pedersen-style DKG constructions became some of the standard dealerless designs.

Here is the mechanism in ordinary language. Suppose participant P_i has polynomial

f_i(z) = a_i,0 + a_i,1 z + ... + a_i,t z^t

They publish commitments to each coefficient, such as A_i,0, A_i,1, ..., A_i,t, where each commitment hides the coefficient but still supports algebraic checking. Then recipient P_j, who privately received f_i(j), can verify that this number matches the published commitments. The point is not that P_j learns the coefficients. The point is that P_j can detect inconsistency.
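A Feldman-style version of this check can be sketched as follows. The group parameters `P = 2039`, `Q = 1019`, `G = 4` are toy assumptions (G has prime order Q modulo P) chosen only so the example runs instantly, and the function names are hypothetical. The recipient accepts a share s for index j when G^s equals the product of A_k^(j^k).

```python
import secrets

P, Q, G = 2039, 1019, 4  # toy group: G has prime order Q modulo P (assumption)

def commit(coeffs):
    """Feldman-style commitments A_k = G^(a_k) mod P, one per coefficient."""
    return [pow(G, a, P) for a in coeffs]

def evaluate(coeffs, z):
    return sum(a * pow(z, k, Q) for k, a in enumerate(coeffs)) % Q

def verify_share(j, share, commitments):
    """Check G^share == product over k of A_k^(j^k) mod P."""
    expected = 1
    for k, A_k in enumerate(commitments):
        expected = expected * pow(A_k, pow(j, k, Q), P) % P
    return pow(G, share, P) == expected

coeffs = [secrets.randbelow(Q) for _ in range(3)]  # degree t = 2
commitments = commit(coeffs)
good = evaluate(coeffs, 4)                          # the share meant for P_4

print(verify_share(4, good, commitments))            # prints True
print(verify_share(4, (good + 1) % Q, commitments))  # prints False
```

The recipient never sees `coeffs`; it only needs its own share and the public commitment vector.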

In discrete-log settings, the public key corresponding to participant P_i's secret contribution is derived from the commitment to the constant term. When all participants' commitments are multiplied or otherwise combined in the group, the result is the public key for the sum of their private contributions. That is how DKG gets a public key for a private value no one ever assembled.

So the protocol has a split personality:

- privately, parties exchange shares;

- publicly, they post commitments that let everyone check those shares and derive the final public key.

That dual view is the heart of DKG.
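The public half of that dual view fits in a few lines: multiplying the constant-term commitments yields the public key of the summed secret. This sketch assumes a toy group (`P = 2039`, `Q = 1019`, `G = 4`) and computes x directly only to confirm the identity.

```python
import secrets

P, Q, G = 2039, 1019, 4  # toy group: G has prime order Q modulo P (assumption)

# Private: each participant's hidden constant term a_{i,0}.
contributions = [secrets.randbelow(Q) for _ in range(5)]

# Public: the constant-term commitments A_{i,0} = G^(a_{i,0}).
public_contributions = [pow(G, a, P) for a in contributions]

# Anyone can combine the public values into the group key Y.
group_key = 1
for A in public_contributions:
    group_key = group_key * A % P

# Verification only: Y equals G^x for x = sum of contributions.
x = sum(contributions) % Q
print(group_key == pow(G, x, P))  # prints True
```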

How does a DKG ceremony run in practice? (worked 3‑of‑5 example)

Imagine five participants want a 3-of-5 threshold key, so t = 2. Each participant samples a random quadratic polynomial. Alice picks f_A(z), Bob picks f_B(z), and so on. The constant term of each polynomial is that participant's hidden contribution to the final secret.

Alice now sends Bob the value of f_A at Bob's index, written f_A(Bob), sends Carol f_A(Carol), and similarly for the rest. But she does not just ask them to trust her. She also publishes commitments to the coefficients of f_A. Bob can then check that the private share he received really matches those commitments. If it does, Bob knows Alice's share to him is part of some quadratic polynomial consistent with her public transcript. Everyone does the same.

After all valid contributions are collected, Bob adds together all the shares sent to him: Alice's share to Bob, Carol's share to Bob, Dave's share to Bob, Eve's share to Bob, and his own local one. That sum is Bob's final private share of the group key. Carol does the same for her position, Dave for his, and so on. Nobody ever adds up the constant terms directly, so nobody learns the full secret key.

Yet the public side also combines cleanly. The commitment to Alice's constant term corresponds to Alice's public contribution, the same for everyone else, and multiplying these public contributions gives the group's public key. Later, when any three of the five sign together, they use their shares of the hidden global polynomial, and the result verifies under that public key.
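The whole 3-of-5 ceremony can be simulated end to end. The sketch below assumes a toy group (`P = 2039`, `Q = 1019`, `G = 4`), Feldman-style commitments, and no network or misbehaviour. The final loop reconstructs the secret from every trio purely as a check, something a real deployment would never do.

```python
import secrets
from itertools import combinations

P, Q, G = 2039, 1019, 4  # toy group parameters (assumption; insecure size)
T = 2                    # quadratic polynomials, so 3-of-5
NAMES = {1: "Alice", 2: "Bob", 3: "Carol", 4: "Dave", 5: "Eve"}

def evaluate(coeffs, z):
    return sum(a * pow(z, k, Q) for k, a in enumerate(coeffs)) % Q

def verify_share(j, share, commitments):
    expected = 1
    for k, A_k in enumerate(commitments):
        expected = expected * pow(A_k, pow(j, k, Q), P) % P
    return pow(G, share, P) == expected

def interpolate_at_zero(points):
    total = 0
    for i, y_i in points.items():
        lam = 1
        for j in points:
            if j != i:
                lam = lam * (-j) * pow((i - j) % Q, -1, Q) % Q
        total = (total + y_i * lam) % Q
    return total

# Round 1: everyone samples a polynomial and publishes coefficient commitments.
polys = {i: [secrets.randbelow(Q) for _ in range(T + 1)] for i in NAMES}
commitments = {i: [pow(G, a, P) for a in polys[i]] for i in NAMES}

# Round 2: sent[i][j] is the private share f_i(j) from participant i to j.
sent = {i: {j: evaluate(polys[i], j) for j in NAMES} for i in NAMES}
all_valid = all(
    verify_share(j, sent[i][j], commitments[i]) for i in NAMES for j in NAMES
)

# Output: each participant sums what it received; the group key is public.
final_share = {j: sum(sent[i][j] for i in NAMES) % Q for j in NAMES}
group_key = 1
for i in NAMES:
    group_key = group_key * commitments[i][0] % P

# Check only: every trio's shares interpolate to the key behind group_key.
trios_agree = all(
    pow(G, interpolate_at_zero({j: final_share[j] for j in trio}), P) == group_key
    for trio in combinations(NAMES, 3)
)
print(all_valid, trios_agree)  # prints True True
```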

This example shows why DKG is not a separate curiosity. It is the setup ceremony that makes threshold signing possible.

What are Joint Feldman and Pedersen‑style DKGs, and what does “dealerless” mean?

Many practical DKGs for discrete-log systems follow the shape often called Joint Feldman or Pedersen DKG. The terminology varies across papers and implementations, and not every source uses the same names for the same refinements, so it is better to focus on the mechanism than the label.

The common structure is that each participant runs a VSS as if they were a dealer of their own random secret. The system then aggregates all these VSS instances into one shared key. The security comes from two facts working together: first, a malicious participant cannot quietly hand out inconsistent shares if verifiability checks are done correctly; second, as long as at least one honest participant contributes unpredictable randomness, the final secret remains unknown and, under the protocol's assumptions, suitably random.

This "at least one honest contribution" point is fundamental. DKG does not require every participant to be honest. It requires enough honest participants to preserve the threshold structure and enough protocol checks to exclude or expose invalid contributions. That is why complaint phases, dispute procedures, and qualification sets appear in many protocols. They are not bureaucratic extras. They are how the system decides whose contribution counts.
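A qualification set can be illustrated with a deliberately simplified complaint rule: any participant whose share fails the commitment check for some recipient is dropped, and only the remaining contributions are summed. Real protocols add authenticated complaints and a response step. The toy group parameters and all names below are assumptions for illustration.

```python
import secrets

P, Q, G = 2039, 1019, 4  # toy group parameters (assumption; insecure size)
T = 1
PARTICIPANTS = [1, 2, 3, 4]

def evaluate(coeffs, z):
    return sum(a * pow(z, k, Q) for k, a in enumerate(coeffs)) % Q

def verify_share(j, share, commitments):
    expected = 1
    for k, A_k in enumerate(commitments):
        expected = expected * pow(A_k, pow(j, k, Q), P) % P
    return pow(G, share, P) == expected

polys = {i: [secrets.randbelow(Q) for _ in range(T + 1)] for i in PARTICIPANTS}
commitments = {i: [pow(G, a, P) for a in polys[i]] for i in PARTICIPANTS}
sent = {i: {j: evaluate(polys[i], j) for j in PARTICIPANTS} for i in PARTICIPANTS}

# Participant 3 misbehaves: the share sent to participant 1 is off by one.
sent[3][1] = (sent[3][1] + 1) % Q

# Complaint phase (simplified): recipient j complains about dealer i
# whenever the received share fails the public commitment check.
complaints = [
    (j, i)
    for i in PARTICIPANTS
    for j in PARTICIPANTS
    if not verify_share(j, sent[i][j], commitments[i])
]
disqualified = {accused for _, accused in complaints}
qual = [i for i in PARTICIPANTS if i not in disqualified]

# Only qualified contributions count toward shares and the group key.
final_share = {j: sum(sent[i][j] for i in qual) % Q for j in qual}
group_key = 1
for i in qual:
    group_key = group_key * commitments[i][0] % P

print(complaints, qual)  # prints [(1, 3)] [1, 2, 4]
```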

In Ethereum-oriented work like EthDKG, this logic is made explicit. Participants publish their commitments and encrypted shares on-chain, disputes can be raised non-interactively, and only parties in the resulting qualified set contribute to the final key. The blockchain acts as the authenticated broadcast channel and dispute arbiter, but the underlying DKG logic is the same: verified contributions are summed into one distributed key.

What security properties does DKG provide (secrecy, correctness, robustness, unpredictability)?

A useful way to think about DKG security is that it is trying to guarantee four things at once.

First, secrecy: no coalition below the threshold should learn the private key. This comes from the threshold secret-sharing structure itself.

Second, correctness: honest parties should end with shares of the same underlying secret and a matching public key. This comes from verification and consistency checks.

Third, robustness: malicious parties should not be able to derail the ceremony too easily or cause honest parties to finish with inconsistent outputs. Some modern formal treatments distinguish strong robustness, where the protocol always completes when enough honest parties exist, from weak robustness, where aborts are allowed but honest parties never end in inconsistent states.

Fourth, uniformity or unpredictability of the resulting key: the final secret should not be adversarially biased in some hidden way. This is subtle. In naïve dealerless protocols, an attacker may try to observe enough state to decide whether to continue or abort depending on whether they like the resulting key. Countermeasures often add extra reveal steps or use carefully designed qualification rules.
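The abort-based bias can be seen in a small simulation. It is a sketch under strong simplifications: a toy group (`P = 2039`, `Q = 1019`, `G = 4`), a hypothetical attacker preference (`likes`, here "the public key is an even integer"), and an attacker who sees the honest commitments and then chooses between contributing and being excluded. If the preferred property holds about half the time, two chances at it should succeed roughly three quarters of the time.

```python
import random

P, Q, G = 2039, 1019, 4  # toy group parameters (assumption; insecure size)
rng = random.Random(0)   # fixed seed so the simulation is repeatable

def likes(public_key):
    """Hypothetical attacker preference: the key is an even integer."""
    return public_key % 2 == 0

def run_trial(attacker_cheats):
    honest = [pow(G, rng.randrange(Q), P) for _ in range(4)]
    attacker = pow(G, rng.randrange(Q), P)

    key_without = 1
    for A in honest:
        key_without = key_without * A % P
    key_with = key_without * attacker % P

    # A cheating attacker inspects the would-be key first, then drops out
    # (or gets itself disqualified) when it dislikes the result.
    if attacker_cheats and not likes(key_with):
        return key_without
    return key_with

TRIALS = 2000
baseline = sum(likes(run_trial(False)) for _ in range(TRIALS)) / TRIALS
biased = sum(likes(run_trial(True)) for _ in range(TRIALS)) / TRIALS
print(f"honest run: {baseline:.2f}, with selective abort: {biased:.2f}")
```

The gap between the two rates is the bias that extra reveal steps and careful qualification rules aim to remove.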

These properties depend on the model. Some constructions assume a synchronous network where rounds complete in order. Others, like asynchronous DKGs, are designed for networks where message delays are unpredictable and there is no global clock. Asynchrony is much harder because the protocol can no longer rely on everyone seeing the same messages at the same time, yet it still needs to end with one consistent distributed key.

Recent formal work has tried to clean up the security story by defining DKG in a more modular way. One 2023 treatment builds a generic DKG from three building blocks: an aggregatable verifiable secret sharing scheme, a non-interactive key exchange primitive, and a hash function. The practical message is not that every deployment uses that exact blueprint. It is that DKG is being understood less as a bag of ad hoc tricks and more as a composable cryptographic object with clear security games.

How do asynchronous networks and large committees change DKG design and costs?

| Setting | Network model | Communication | Complexity | Best for |
|---|---|---|---|---|
| Synchronous | rounds with timeouts | all‑to‑all broadcast | O(n^2) comm | small committees |
| Asynchronous | no global clock | gossip + agreement | higher, e.g. O(n^3) | geo‑distributed nodes |
| Large-scale (aggregatable) | gossip + aggregation | aggregate transcripts | ≈O(n log n) verify | thousands of participants |

The simplest DKG picture assumes synchronous rounds and broad broadcast. That becomes expensive or brittle in real networks.

In asynchronous settings, the problem is not just slowness. It is ambiguity. Some honest nodes may receive enough evidence to move forward while others are still waiting. Practical asynchronous DKG protocols therefore introduce stronger agreement machinery, often built from asynchronous secret sharing and Byzantine agreement components. A recent high-threshold asynchronous DKG for n = 3t + 1 shows that this can be done with only discrete-log assumptions, avoiding some heavier assumptions from earlier work. The price is more protocol complexity and more communication than in the synchronous case.

At large committee sizes, public verifiability itself becomes a bottleneck. If every party publishes a full transcript and everyone must verify everything, costs can grow quadratically. Aggregatable DKG research tackles this by making transcripts compressible: separate public proofs can be combined into a smaller object that is still efficiently verifiable. One such construction shrinks the final transcript and brings verification work from O(n^2) toward O(n log n), using gossip-style dissemination instead of pure all-to-all broadcast. The implementation details are specialized, but the general lesson is simple: once DKG leaves the whiteboard and enters thousand-node systems, communication structure matters as much as the core algebra.
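A back-of-envelope count shows where the quadratic growth comes from in the naive all-to-all ceremony described earlier. The function below is a hypothetical cost model rather than a measurement of any real system: it assumes each of n participants sends one private share to every other participant and broadcasts t + 1 commitments. The choice t = (n - 1) // 3 in the loop is only an example threshold.

```python
def naive_dkg_costs(n, t):
    """Rough message counts for a naive all-to-all DKG round (cost model only)."""
    return {
        "private_shares": n * (n - 1),         # each party sends to every other
        "broadcast_commitments": n * (t + 1),  # t + 1 coefficients per party
        "share_checks": n * (n - 1),           # each received share is verified
    }

for n in (5, 100, 1000):
    t = (n - 1) // 3  # example threshold choice (assumption)
    print(n, naive_dkg_costs(n, t))
```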

How is DKG used with threshold signatures and multiparty computation (MPC)?

DKG is usually not the end product. It is the setup phase for some later threshold operation.

In threshold BLS systems, DKG creates the shared signing key and the public verification key. drand is a concrete example. During setup, drand nodes run a Joint Feldman-style DKG to create one collective public key and one private key share per server. The nodes never use the full private key explicitly. Later, in each randomness round, they produce threshold BLS signature shares, and any sufficient subset can combine those into a full signature whose hash becomes the public randomness output. Here DKG is what makes the beacon trustless: no single operator ever held the beacon's signing key.
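The way signature shares combine can be sketched without pairings by using Lagrange interpolation in the exponent. This is only the combining algebra, not BLS itself. It assumes a toy group (`P = 2039`, `Q = 1019`, `G = 4`), an insecure stand-in `hash_to_group`, and key shares drawn from a single polynomial as a shortcut for DKG output.

```python
import hashlib
import secrets

P, Q, G = 2039, 1019, 4  # toy group parameters (assumption; insecure size)
T, N = 2, 5

def evaluate(coeffs, z):
    return sum(a * pow(z, k, Q) for k, a in enumerate(coeffs)) % Q

def lagrange_at_zero(i, indices):
    """Lagrange coefficient for index i, evaluated at z = 0."""
    lam = 1
    for j in indices:
        if j != i:
            lam = lam * (-j) * pow((i - j) % Q, -1, Q) % Q
    return lam

def hash_to_group(message):
    """Toy stand-in for hashing to the group; not secure."""
    digest = int.from_bytes(hashlib.sha256(message).digest(), "big")
    return pow(G, digest % Q, P)

# Shortcut standing in for DKG output: shares of one degree-T polynomial.
poly = [secrets.randbelow(Q) for _ in range(T + 1)]
key_shares = {i: evaluate(poly, i) for i in range(1, N + 1)}

H = hash_to_group(b"round 42")

# Each signer raises H to its own key share; here signers 2, 3 and 5 take part.
partials = {i: pow(H, key_shares[i], P) for i in (2, 3, 5)}

# Anyone can combine T + 1 partials without learning any key share.
combined = 1
for i, sigma_i in partials.items():
    combined = combined * pow(sigma_i, lagrange_at_zero(i, partials), P) % P

# Check only: the result equals H raised to the never-assembled key.
print(combined == pow(H, poly[0], P))  # prints True
```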

In threshold ECDSA, DKG is even more operationally important because ECDSA is awkward to thresholdize. Practical dealerless ECDSA systems use DKG to establish the shared long-term signing key and then rely on additional multiparty computation during signing. The setup matters because if the key ceremony were centralized, much of the benefit of threshold ECDSA custody would disappear.

This is also the right place to notice the distinction between threshold signatures and MPC more broadly. DKG is narrower than general MPC. It does one very specific job: it creates shared secret key material with a corresponding public key. But that narrow job is foundational. Many multiparty signing systems begin with DKG because you cannot sign with shared secrets until you have securely created those shared secrets.

A concrete real-world example is Cube Exchange's decentralized settlement design. It uses a 2-of-3 threshold signature scheme in which the user, Cube Exchange, and an independent Guardian Network each hold one key share. No full private key is ever assembled in one place, and any two shares are required to authorize settlement. That architecture depends on the same threshold cryptography logic DKG was designed to support: control is distributed at key creation time so that operational trust does not collapse back into one machine or one institution.

What practical implementation failures and attacks should you watch for in DKG?

| Failure | Cause | Impact | Mitigation |
|---|---|---|---|
| Degree mismatch | malicious higher-degree polynomial | raises reconstruction threshold (DoS) | check coefficient-commitment length |
| Inconsistent shares | participant sends different shares | honest parties disagree | use VSS commitments and complaints |
| Biasing via aborts | selective reveal or aborts | adversary biases final key | qualification sets + reveal steps |
| Implementation gaps | missing validation checks | silent DoS or unusable key | strict input validation and tests |

The clean algebra of DKG can hide a practical truth: implementations fail on small checks, not just on big proofs.

A sharp recent example came from Pedersen DKG implementations used around FROST and threshold ECDSA libraries. Trail of Bits reported that some implementations failed to validate the degree, or equivalently the coefficient-commitment vector length, of a participant's polynomial. That sounds minor. It is not. If one malicious party uses degree T instead of the agreed degree t, the global polynomial becomes degree T, which silently raises the number of shares required for reconstruction or signing from t+1 to T+1.

This attack does not reveal the secret key. Instead, it turns the shared key into a denial-of-service weapon. Honest participants think they created, say, a 2-of-3 key, but in reality the resulting shares may require all three participants, or more shares than exist, to sign. If T is large enough, the key can become unusable. This corrects a natural misconception: in DKG, not every failure mode is about key theft. Some of the worst ones are about quietly breaking the structure while preserving the appearance of success.
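The threshold-raising effect is easy to reproduce numerically. In this sketch (toy modulus `Q = 1019`, hypothetical names), two participants follow the agreed degree 1 while the third silently uses degree 2 with a nonzero leading coefficient. No pair of final shares then interpolates to the real key, although all three together still do.

```python
import secrets
from itertools import combinations

Q = 1019      # toy prime modulus (assumption; insecure size)
T, N = 1, 3   # agreed degree 1, i.e. a 2-of-3 key

def evaluate(coeffs, z):
    return sum(a * pow(z, k, Q) for k, a in enumerate(coeffs)) % Q

def interpolate_at_zero(points):
    total = 0
    for i, y_i in points.items():
        lam = 1
        for j in points:
            if j != i:
                lam = lam * (-j) * pow((i - j) % Q, -1, Q) % Q
        total = (total + y_i * lam) % Q
    return total

honest = {i: [secrets.randbelow(Q) for _ in range(T + 1)] for i in (1, 2)}
# Participant 3 quietly uses degree 2 with a nonzero leading coefficient.
rogue = [secrets.randbelow(Q), secrets.randbelow(Q), 1 + secrets.randbelow(Q - 1)]
polys = {**honest, 3: rogue}

final_share = {
    j: sum(evaluate(polys[i], j) for i in polys) % Q for j in range(1, N + 1)
}
x = sum(p[0] for p in polys.values()) % Q  # computed here for checking only

pairs_ok = [
    interpolate_at_zero({j: final_share[j] for j in pair}) == x
    for pair in combinations(final_share, 2)
]
all_three_ok = interpolate_at_zero(final_share) == x
print(pairs_ok, all_three_ok)  # prints [False, False, False] True
```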

The mitigation in that case was straightforward: check that each participant published exactly the expected number of coefficient commitments. But that simplicity is the lesson. DKG security depends on both the deep mathematics and the boring invariants. If the protocol says "degree t," the implementation must enforce degree t.
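The length check amounts to very little code. The helper names and the placeholder commitment values below are hypothetical; the point is only that a contribution is rejected unless it carries exactly t + 1 coefficient commitments.

```python
def check_commitment_vector(commitments, t):
    """Return True only if exactly t + 1 coefficient commitments were published."""
    return len(commitments) == t + 1

def filter_contributions(published, t):
    """Keep only participants whose commitment vector has the agreed length."""
    return {
        pid: vector
        for pid, vector in published.items()
        if check_commitment_vector(vector, t)
    }

# Placeholder commitment values; participant 3 committed to a degree-2 polynomial.
published = {1: [17, 290], 2: [1033, 64], 3: [5, 81, 700]}
accepted = filter_contributions(published, t=1)
print(sorted(accepted))  # prints [1, 2]
```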

Other practical assumptions can also matter more than they first appear. drand's documented setup still uses a trusted coordinator under a trust-on-first-use model to assemble the initial group configuration before starting DKG. That does not mean the coordinator knows the final private key; it does not. But it does mean the bootstrapping process still has an operational trust assumption separate from the cryptographic core. Similarly, on-chain DKG designs may be cryptographically sound yet economically costly or sensitive to timing assumptions about block finality and synchrony.

Which parts of DKG are fundamental versus optional design choices?

The fundamental part of DKG is short.

A group of parties jointly create a random secret x by summing independently generated contributions. They hold only shares of x, not x itself. They publish enough public information to verify consistency and derive the public key corresponding to x. That is the essence.

Many other features are design choices layered on top of that essence. Whether the protocol is synchronous or asynchronous is a design choice driven by the network model. Whether verification is publicly visible or only participant-visible is a design choice. Whether disputes are interactive, non-interactive, on-chain, or handled off-chain is a design choice. Whether transcripts can be aggregated, whether gossip replaces all-to-all broadcast, and whether the protocol prefers guaranteed completion or simpler abort semantics are all design choices.

Those choices matter because they determine where the protocol is usable. A small validator committee on a blockchain can tolerate a different ceremony than a globally distributed randomness beacon. A threshold BLS system has different algebraic affordances from threshold ECDSA. Some modern generic DKG frameworks assume a one-to-one relation between secret and public keys and note that extending the same ideas cleanly to lattice-based, post-quantum settings remains open.

So DKG is not one protocol. It is a family of protocols solving the same trust problem under different constraints.

Conclusion

Distributed Key Generation is the protocol that lets a group create one key without one key owner. It exists because threshold cryptography is not really decentralized if some dealer generated the secret first.

The enduring idea is simple enough to remember: each participant secretly contributes a piece, everyone verifies the pieces fit one polynomial structure, and the sum becomes a private key no one ever sees in full.

Everything else in DKG (commitments, complaints, synchrony assumptions, aggregation tricks, on-chain disputes) is there to protect that structure against the ways real networks and real adversaries try to break it.

What should you understand before using this part of crypto infrastructure?

Distributed Key Generation (DKG) should change what you verify before you fund, transfer, or trade related assets on Cube Exchange. Treat it as an operational check on network behavior, compatibility, or execution timing rather than a purely academic detail.

- Identify which chain, asset, or protocol on Cube is actually affected by this concept.

- Write down the one network rule Distributed Key Generation (DKG) changes for you, such as compatibility, confirmation timing, or trust assumptions.

- Verify the asset, network, and transfer or execution conditions before you fund the account or move funds.

- Once those checks are clear, place the trade or continue the transfer with that constraint in mind.

Frequently Asked Questions

DKG has every participant sample their own secret polynomial and distribute shares so the group's secret is the sum of those contributions; because no one participant learns the other parties' constant terms, nobody ever reconstructs the full private key even though everyone can compute the corresponding public key from aggregated commitments.

Verifiability prevents malicious parties from sending inconsistent or malformed shares that would break the shared polynomial; practical DKGs therefore pair dealerless sharing with verifiable secret sharing (e.g., Feldman or Pedersen-style commitments) so recipients can check their private shares against public commitments without revealing secrets.

If a participant uses a polynomial of higher degree than agreed, the global polynomial's degree increases and the effective threshold for reconstruction/signing silently rises (turning a 2-of-3 key into a 3-or-more-of-3 key), so implementations must validate the published coefficient-commitment vector length to prevent this denial-of-service attack.

Asynchronous DKG is possible but substantially harder: it requires extra agreement machinery (asynchronous secret sharing and Byzantine agreement), higher communication and protocol complexity, and often stricter thresholds like n=3t+1 in known constructions compared with synchronous protocols.

DKG is typically necessary but not sufficient for threshold ECDSA: DKG establishes the long‑term shared key material, but ECDSA signing usually requires additional interactive multiparty computation during each signature, so a complete threshold ECDSA system combines DKG plus signing MPC.

On-chain DKG designs publish commitments and encrypted shares to the blockchain and use on‑chain dispute or complaint procedures to form a qualified set of contributors; the chain acts as the authenticated broadcast and arbiter so only qualified (verified) contributions are aggregated into the final key.

Extending generic DKG constructions to post‑quantum/lattice-based primitives is an open problem in recent literature because some generic reductions require a one‑to‑one mapping between secret and public keys and NIKE primitives that are nontrivial to instantiate with lattice assumptions.

Common practical pitfalls lie not only in the cryptographic proofs but also in small implementation invariants: for example, failing to check coefficient-commitment vector length (a Pedersen DKG pitfall) can raise the effective threshold, and other operational assumptions (bootstrapping coordinators, phase durations, or missing range proofs) have caused real-world vulnerabilities and deployment risks.
