What is Key Resharing?

Learn what key resharing is in threshold cryptography, how it preserves a public key while rotating shares, and why it matters for MPC and threshold signing.

Introduction

Key resharing is the process of taking a secret that is already split across multiple parties and redistributing it into a new set of shares, usually for a different group of participants, without changing the underlying private key. That sounds like a small administrative convenience, but it solves a deep operational problem in threshold cryptography: real systems change over time, while cryptographic keys are expected to remain stable.

A threshold system is built on an attractive promise. No one machine, employee, validator, or service ever holds the whole private key. Instead, the key exists only implicitly, as shares distributed across a group. Any sufficiently large subset can cooperate to sign, decrypt, or authorize an action, while smaller subsets learn nothing useful. That design reduces single points of failure. But it creates a new question: what happens when the group itself needs to change?

People leave teams. Nodes are rotated out of a subnet. A randomness beacon wants to add and remove operators. A signer set for a threshold wallet changes. A participant may be suspected of compromise, or a system may simply want to refresh shares periodically so that old leaked fragments become useless. If the only way to handle those changes were to generate an entirely new private key, every dependent address, public key, or external trust anchor would also need to change. In many systems, that is expensive or disruptive.

Key resharing exists to avoid that disruption. It preserves the public identity of the key while changing the hidden internal distribution of authority. The result is a threshold system that can adapt operationally without giving up the cryptographic benefit of never assembling the key in one place.

How does resharing produce fresh shares while keeping the same private key?

| Option | Public key impact | Best when | Main risk | Relative cost |
|---|---|---|---|---|
| Resharing | Unchanged | Replace or rotate participants | Colluding old holders | Moderate |
| Share refresh | Unchanged | Limit long-term leakage | Accumulating past compromises | Low per cycle |
| New key generation | Changes public key | Catastrophic compromise or reset | Breaks external continuity | High |

The central fact behind key resharing is simple: in secret sharing, the shares are not the secret itself. They are just one encoding of the secret. If the same secret can be encoded many different ways, then the holders can move from one encoding to another while keeping the underlying secret fixed.

This is easiest to see in Shamir secret sharing, which underlies many threshold systems. There, a secret is embedded as the constant term of a random polynomial. Each participant receives the value of that polynomial at a different point. What matters is not any one share, but the polynomial relation among them. If you choose a different random polynomial with the same constant term, you get a completely fresh set of shares that reconstructs to the same secret.
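A minimal sketch of that idea, assuming a toy prime field (the modulus `P` and the helper names `share` and `reconstruct` are illustrative choices, not taken from any particular library). Two independent sharings of the same secret produce different shares, yet any three shares from either sharing interpolate back to the same constant term.

```python
import secrets

P = 2**127 - 1  # prime modulus of a toy field (assumption for this sketch)

def share(secret, threshold, ids):
    """Shamir-share `secret` with a random polynomial of degree threshold-1."""
    # The constant term is the secret; all other coefficients are random.
    coeffs = [secret % P] + [secrets.randbelow(P) for _ in range(threshold - 1)]
    return {i: sum(c * pow(i, k, P) for k, c in enumerate(coeffs)) % P for i in ids}

def reconstruct(shares):
    """Lagrange-interpolate the sharing polynomial at 0 from {id: share}."""
    secret = 0
    for i, y in shares.items():
        num, den = 1, 1
        for j in shares:
            if j != i:
                num = num * (-j) % P
                den = den * (i - j) % P
        secret = (secret + y * num * pow(den, -1, P)) % P
    return secret

secret = 123456789
first = share(secret, 3, [1, 2, 3, 4, 5])   # one encoding of the secret
second = share(secret, 3, [1, 2, 3, 4, 5])  # a fresh, unrelated encoding

print(reconstruct({i: first[i] for i in (1, 2, 3)}) == secret)
print(reconstruct({i: second[i] for i in (2, 4, 5)}) == secret)
```

Here a single process plays the dealer and all holders, which a real threshold system would never do; the point is only that the shares vary while the constant term does not.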

That is the core insight of the whole topic: resharing is re-randomization of the secret-sharing structure, not replacement of the secret. Once that clicks, many consequences follow naturally. Old shares can be invalidated while the public key remains unchanged. New participants can receive valid shares even if they were not present at the original key generation. A threshold can sometimes change along with membership, because the new sharing polynomial can have a different degree. And periodic refresh becomes meaningful, because leaked old shares stop helping once the system has moved to a fresh sharing.

This is why resharing is closely related to distributed key generation, or DKG. DKG creates the first distributed encoding of a secret key without a trusted dealer. Resharing performs a similar algebraic redistribution later, but starts from a key that already exists in distributed form. In practice, a resharing protocol often looks like a DKG-like ceremony whose job is not to invent a new secret, but to produce fresh shares of the existing one.

What operational and security problems does key resharing solve?

Without key resharing, threshold cryptography would work best only in static environments. But threshold systems are usually deployed precisely in settings that are not static: validator committees, custody operations, signing clusters, or multi-organization control systems.

There are two pressures that make resharing necessary.

The first is membership change. Suppose a 3-of-5 threshold wallet is run by five servers. One server is retired and another is added. If the system cannot reshare, it faces an awkward choice. Either it keeps the retired server as part of the cryptographic trust base, which is operationally bad, or it generates a new key entirely, which changes the wallet’s public identity. Resharing gives a third option: redistribute the existing secret into a fresh 3-of-5 sharing over the new five servers.

The second is long-term leakage. This is the motivation behind proactive secret sharing, introduced in foundational work from CRYPTO '95 under the framing of coping with “perpetual leakage.” The idea is not that one dramatic compromise happens once, but that small compromises may accumulate over time. A mobile adversary might corrupt different machines in different months and slowly collect enough historical shares to reconstruct a long-lived secret. Periodic resharing breaks that accumulation. If shares are refreshed and corrupted parties are reset between periods, then learning some shares in one period and some different shares later does not necessarily add up to the secret.

So key resharing is not just a convenience for rotating operators. It is also a security mechanism for maintaining a long-lived distributed secret under changing operational conditions and ongoing attack surface.

How does a resharing protocol redistribute shares without reconstructing the secret?

At a high level, a resharing protocol must preserve one invariant: the reconstructed secret, and therefore the public key derived from it, stays the same. Everything else may change: who holds shares, how many shares exist, what the threshold is, what commitments are published, and which internal verification data the system uses.

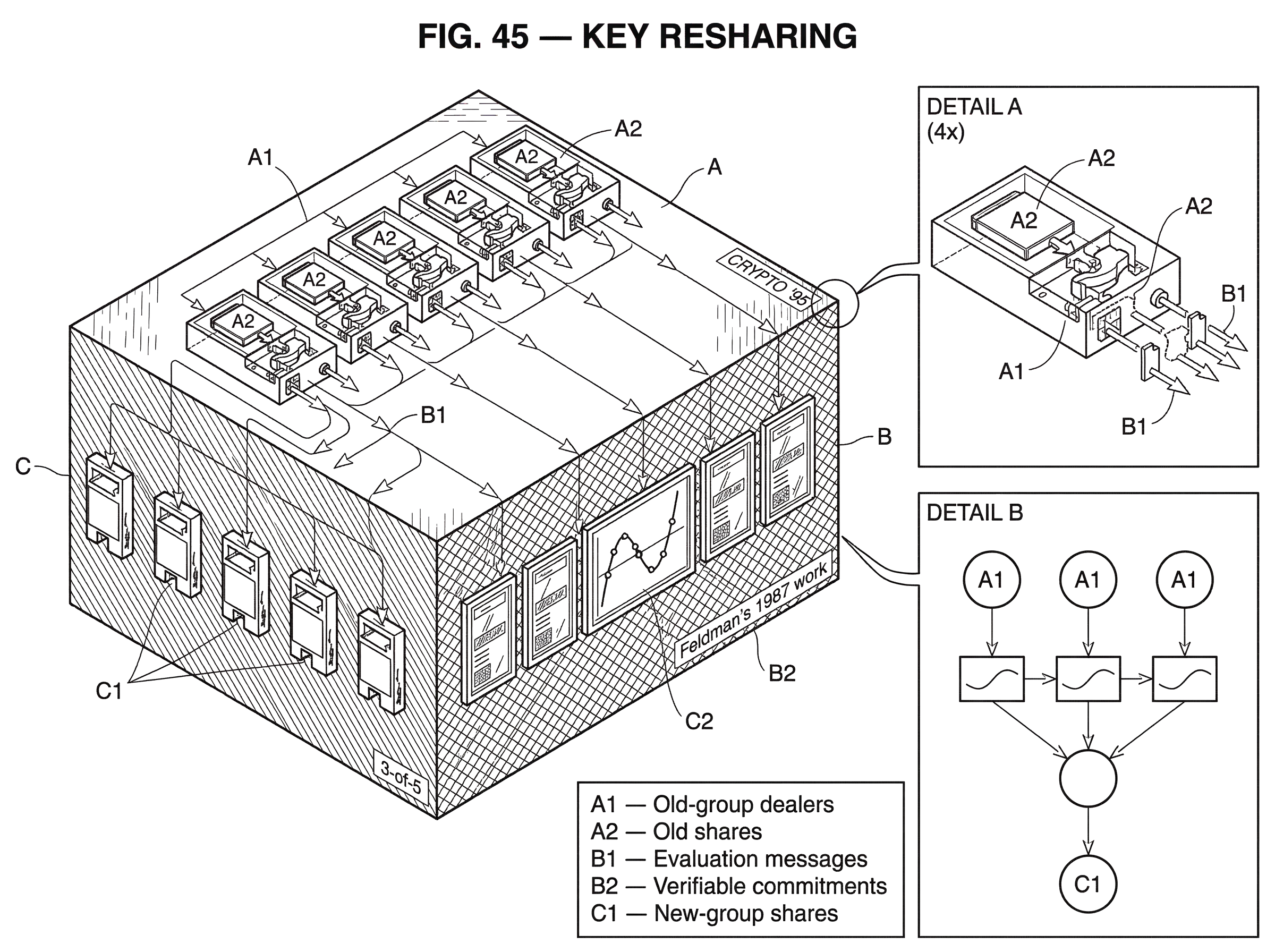

A useful way to think about the mechanism is as a weighted sum of local contributions. Each current share holder acts, in effect, like a dealer for a temporary sharing of the share they already hold. They create a random polynomial whose constant term is their current share, then distribute evaluations of that polynomial to the parties in the new committee. Each new party combines the pieces it receives from the valid old holders, weighting each piece by the Lagrange coefficient of the old holder that sent it. Because the old shares themselves combine, via that same interpolation, to the original secret, the new combined shares also end up encoding that same secret.

The drand specification makes this concrete. In its resharing procedure, each dealer from the old group constructs a private polynomial where the free coefficient is that dealer’s current share. The polynomial degree is chosen to match the threshold of the new group. New-group nodes receive evaluations, validate them, and in the finish phase use Lagrange interpolation over valid contributions to derive their final new shares and the new distributed public polynomial. The commitments change, but the free coefficient of the public polynomial (the public key clients rely on) remains the same. That captures the essence of resharing in one system, but the underlying pattern is much broader.

Here is a worked example in prose. Imagine a threshold signing service currently run by five parties with threshold three. Over time, the operator wants to replace two servers and raise the committee to seven parties while keeping the same public key that external users already trust. During resharing, the existing qualified parties do not reconstruct the private key anywhere. Instead, each old party takes its own share and embeds it as the constant term of a fresh random polynomial of degree matching the new threshold policy. Each new party receives one evaluation from each old party, along with commitments or proofs that the evaluations are consistent. After filtering invalid or missing contributions, each new party combines the valid evaluations it got. The result is a fresh share in the new 4-of-7 or 3-of-7 structure, depending on the chosen target threshold. No full secret ever appeared in one place, but the public key seen by the outside world is still the same because all the local redistributions were anchored to the same underlying secret.
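The worked example can be sketched in a few lines, under loud assumptions: a toy prime field, no commitments or proofs, and a single process simulating every party. The names `share`, `lagrange_at_zero`, `reconstruct`, and `reshare` are hypothetical helpers for illustration, and the 4-of-7 target is just one of the two options mentioned above.

```python
import secrets

P = 2**127 - 1  # prime modulus of a toy field (assumption for this sketch)

def share(secret, threshold, ids):
    # Random polynomial of degree threshold-1 whose constant term is `secret`.
    coeffs = [secret % P] + [secrets.randbelow(P) for _ in range(threshold - 1)]
    return {i: sum(c * pow(i, k, P) for k, c in enumerate(coeffs)) % P for i in ids}

def lagrange_at_zero(ids):
    # Coefficients lam[i] with f(0) == sum(lam[i] * f(i)) for low-degree f.
    lam = {}
    for i in ids:
        num, den = 1, 1
        for j in ids:
            if j != i:
                num = num * (-j) % P
                den = den * (i - j) % P
        lam[i] = num * pow(den, -1, P) % P
    return lam

def reconstruct(shares):
    # Used here only to check the demo; a real deployment never runs this.
    lam = lagrange_at_zero(list(shares))
    return sum(lam[i] * s for i, s in shares.items()) % P

def reshare(old_shares, new_threshold, new_ids):
    """Redistribute a qualified set of old shares to a new committee."""
    lam = lagrange_at_zero(list(old_shares))
    # Each old holder i deals a fresh sharing of its OWN share to the new ids.
    sub_shares = {i: share(s, new_threshold, new_ids) for i, s in old_shares.items()}
    # Each new party j combines what it received, weighted by lam[i].
    return {j: sum(lam[i] * sub_shares[i][j] for i in old_shares) % P for j in new_ids}

secret = 424242                              # only used to set up and check
old = share(secret, 3, [1, 2, 3, 4, 5])      # existing 3-of-5 sharing
qualified = {i: old[i] for i in (1, 3, 5)}   # three cooperating old holders
new = reshare(qualified, 4, [1, 2, 3, 4, 5, 6, 7])  # target: 4-of-7

print(reconstruct({j: new[j] for j in (2, 3, 6, 7)}) == secret)
```

The combined polynomial is the Lagrange-weighted sum of the dealers' polynomials, so its constant term is the weighted sum of the old shares, which is the original secret. Its degree is set by `new_threshold`, which is why the threshold can change in the same ceremony.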

The mathematics is elegant, but the practical burden is verification. If parties simply sent each other fresh shares with no proof of consistency, a malicious participant could bias or poison the result. That is why resharing leans on verifiable secret sharing, or VSS. Feldman’s 1987 work is foundational here: it showed how to augment Shamir sharing with commitments so recipients can check that the share they received is consistent with a committed polynomial. In modern systems, resharing usually includes some commitment or proof layer so the recipients of new shares can verify they are getting a piece of the same intended secret-sharing relation.
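As a rough illustration of the Feldman-style check, the sketch below uses a deliberately tiny group (p = 2039, q = 1019, generator 4 of the order-q subgroup). These parameters and the function names `deal` and `verify` are assumptions chosen for readability; they are far too small for real use and do not mirror any specific library.

```python
import secrets

# Toy group: P = 2*Q + 1 with both prime, G generates the subgroup of order Q.
P, Q, G = 2039, 1019, 4

def deal(secret, threshold, ids):
    """Return Shamir shares over Z_Q plus Feldman commitments to the coefficients."""
    coeffs = [secret % Q] + [secrets.randbelow(Q) for _ in range(threshold - 1)]
    shares = {i: sum(c * pow(i, k, Q) for k, c in enumerate(coeffs)) % Q for i in ids}
    commitments = [pow(G, c, P) for c in coeffs]  # C_k = G^{a_k}
    return shares, commitments

def verify(i, share, commitments):
    """Check G^share == prod_k C_k^(i^k), i.e. share == f(i) for the committed f."""
    expected = 1
    for k, c in enumerate(commitments):
        expected = expected * pow(c, pow(i, k, Q), P) % P
    return pow(G, share, P) == expected

shares, commitments = deal(321, 3, [1, 2, 3, 4, 5])
print(all(verify(i, s, commitments) for i, s in shares.items()))
print(verify(1, (shares[1] + 1) % Q, commitments))  # a tampered share is rejected
```

In a resharing ceremony, a recipient would typically also check that a dealer's first commitment matches the public image of that dealer's existing share, so that the dealer cannot substitute a different value for the constant term. That extra check is described here as a common pattern, not as the behavior of a particular implementation.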

Why doesn’t resharing change the public key?

This is often the point that feels slightly magical to newcomers. If the shares are changing, why doesn’t the public key change too?

The answer is that the public key depends on the secret, not on the particular way that secret is shared. In threshold Schnorr or threshold ECDSA systems, participants hold shares of a scalar private key x. The public key is derived from x alone, typically as the group element X = x·G for a fixed generator G. If resharing preserves x while only changing how it is split into shares, then the public key remains unchanged.

What does change are the internal commitments and verification structures associated with the current sharing. A reshared polynomial is a different polynomial. Its coefficient commitments are different. Its participant indices may be different. The quorum policy may be different. But as long as the constant secret underlying the distributed system is still the same x, the external public key is stable.
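The following sketch illustrates the distinction in a toy multiplicative group (p = 2039, q = 1019, generator 4, all assumptions for illustration only). The hypothetical helper `public_key_from_shares` combines the public images of the shares "in the exponent", so the group public key can be derived without anyone learning x. Two unrelated sharings of the same x, with different thresholds and committee sizes, yield the same public key.

```python
import secrets

# Toy group: G generates the order-Q subgroup of Z_P^* (insecure sizes).
P, Q, G = 2039, 1019, 4

def share(secret, threshold, ids):
    coeffs = [secret % Q] + [secrets.randbelow(Q) for _ in range(threshold - 1)]
    return {i: sum(c * pow(i, k, Q) for k, c in enumerate(coeffs)) % Q for i in ids}

def lagrange_at_zero(ids):
    lam = {}
    for i in ids:
        num, den = 1, 1
        for j in ids:
            if j != i:
                num = num * (-j) % Q
                den = den * (i - j) % Q
        lam[i] = num * pow(den, -1, Q) % Q
    return lam

def public_key_from_shares(shares):
    """Compute G^x from the public images G^{s_i} of a qualified set of shares."""
    lam = lagrange_at_zero(list(shares))
    pk = 1
    for i, s in shares.items():
        public_share = pow(G, s, P)               # what party i could publish
        pk = pk * pow(public_share, lam[i], P) % P
    return pk

x = 777
X = pow(G, x, P)                                  # the externally visible key

old = share(x, 3, [1, 2, 3, 4, 5])                # a 3-of-5 encoding of x
new = share(x, 4, [1, 2, 3, 4, 5, 6, 7])          # a 4-of-7 encoding of x

print(public_key_from_shares({i: old[i] for i in (1, 2, 4)}) == X)
print(public_key_from_shares({i: new[i] for i in (2, 3, 5, 7)}) == X)
```

The shares and per-party public images differ between the two sharings, while the value every qualified set combines to is the same X.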

This distinction between the external identity and the internal encoding is what makes resharing so valuable in production systems. You can change who participates internally without forcing every external integrator, wallet, client, or verifier to update the key they trust.

How do resharing and proactive refresh limit cumulative compromise?

| Type | Membership change? | Primary goal | Cadence | Main trade-off |
|---|---|---|---|---|
| Resharing | Yes | Rotate or change committee | On membership events | Verification & comms cost |
| Share refresh | No | Mitigate accumulated leakage | Periodic | Only rate-limits compromise |
| Dynamic proactive | Yes or no | Scale frequent proactive renewals | Frequent / amortized | Complexity vs efficiency |

Not all resharing is driven by membership change. Sometimes the participant set stays the same, but the shares are refreshed anyway. That is usually called a share refresh or proactive refresh, and it is best understood as a special case of resharing where the old and new committees are identical.

The goal here is to defend against attackers who move over time. In an ordinary threshold scheme, if an attacker compromises enough parties over a long enough period and records their shares, eventually those historical shares may be enough to reconstruct the secret. Proactive secret sharing changes the security model. Instead of asking whether the adversary ever corrupts more than t parties in total, the scheme asks whether the adversary can corrupt too many parties within a single refresh period. After each refresh, the old shares become obsolete and should be erased. That converts cumulative compromise into a rate-limited problem.
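One common way to realize a same-committee refresh is for every party to deal a sharing of zero and for every party to add what it receives to its current share. The sketch below shows that construction under simplifying assumptions: a toy prime field, no verification layer, and one process simulating all parties. The helper names are illustrative.

```python
import secrets

P = 2**127 - 1  # prime modulus of a toy field (assumption for this sketch)

def share(secret, threshold, ids):
    coeffs = [secret % P] + [secrets.randbelow(P) for _ in range(threshold - 1)]
    return {i: sum(c * pow(i, k, P) for k, c in enumerate(coeffs)) % P for i in ids}

def reconstruct(shares):
    # Used only to check the demo.
    secret = 0
    for i, y in shares.items():
        num, den = 1, 1
        for j in shares:
            if j != i:
                num = num * (-j) % P
                den = den * (i - j) % P
        secret = (secret + y * num * pow(den, -1, P)) % P
    return secret

def refresh(shares, threshold):
    """Each party deals a sharing of 0; everyone adds the pieces they receive."""
    ids = list(shares)
    refreshed = dict(shares)
    for _dealer in ids:
        zero_sharing = share(0, threshold, ids)  # polynomial with constant term 0
        for j in ids:
            refreshed[j] = (refreshed[j] + zero_sharing[j]) % P
    return refreshed

secret = 987654321
epoch1 = share(secret, 3, [1, 2, 3, 4, 5])
epoch2 = refresh(epoch1, 3)

# Shares from one epoch still reconstruct the same secret.
print(reconstruct({i: epoch2[i] for i in (1, 3, 5)}) == secret)
# Mixing shares across epochs does not (except with negligible probability).
mixed = {1: epoch1[1], 2: epoch1[2], 3: epoch2[3]}
print(reconstruct(mixed) == secret)
```

Adding polynomials with zero constant term changes every share but leaves the constant term untouched. The security benefit depends on parties actually erasing their epoch-1 shares after the refresh, as the paragraph above notes.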

This matters for long-lived infrastructure. The Internet Computer’s chain-key ECDSA documentation explicitly describes the system as comprising distributed key generation, threshold signing, and periodic key resharing. The point is not merely that a subnet can sign; it is that the key can remain distributed and usable while its share distribution is renewed inside the subnet over time. In the proactive model, that renewal is not a side feature. It is part of the security story.

The same idea appears in more advanced research on dynamic proactive secret sharing, where even the number of parties can change during execution. That line of work aims at systems where secrets are long-lived, membership is fluid, and resharing must happen efficiently enough to be practical at scale.

How does resharing integrate with threshold signing protocols like FROST or ECDSA?

Threshold signing protocols often assume some preexisting sharing of a secret key, but they do not always specify how that sharing is created or changed.

FROST is a good example. It specifies a two-round threshold Schnorr signing protocol and assumes the group signing key is Shamir-shared among participants. But the specification explicitly puts key generation out of scope, except for a trusted-dealer appendix. That means if you want to rotate participants or refresh shares while keeping the same FROST public key, you need an external DKG or resharing protocol compatible with FROST’s share structure.

Threshold ECDSA systems face the same issue, often with more machinery. Dealerless key generation is a major achievement in protocols like GG18/GG20, and those protocols provide important building blocks for keeping the private key distributed. But ECDSA’s arithmetic is more intricate than Schnorr’s, and implementations depend on subprotocols such as multiplicative-to-additive conversions and Paillier-based proofs. In practice, resharing in threshold ECDSA is therefore not just “run Shamir again.” It sits inside a larger protocol stack that must preserve the invariant that no private key is reconstructed while also preserving correctness for future signing.

That is one reason implementation quality matters so much. The high-level algebra of resharing may be sound, but the proof systems, modular arithmetic checks, and message encodings around it can still fail.

What can go wrong in real implementations

| Failure mode | Symptom | Risk | Short mitigation |
|---|---|---|---|
| Invalid-share acceptance | Recipients accept inconsistent shares | Bad final shares; wrong secret | Use VSS and strong proofs |
| Field-arithmetic bugs | IDs collide modulo group order | Secret leakage or node crashes | Validate IDs modulo group order |
| Proof-system shortcuts | Weak or omitted proofs | Leakage and key recovery | Follow spec; enforce proofs |
| Incomplete transitions | State overwritten mid-reshare | Availability loss | Do not persist until final |
| Transport issues | Dropped or unauthenticated messages | Stalls or active attacks | Use authenticated reliable transport |

The conceptual idea of resharing is clean. The engineering reality is less forgiving.

The first major failure mode is invalid-share acceptance. If a malicious party can send inconsistent evaluations during resharing and honest recipients lack a way to verify them, the new committee may end up with shares that do not correspond to a single secret. VSS and commitment checks exist to prevent this, but only if they are implemented correctly.

The second is field-arithmetic edge cases. Trail of Bits disclosed practical bugs in Feldman-VSS-based threshold implementations where participant identifiers were not validated modulo the curve order. In one case, choosing an identifier equivalent to zero modulo the group order caused the “share” to equal the secret itself. In another, modularly colliding identifiers caused a denominator in a Lagrange coefficient to become zero, which could crash nodes. These are not deep breaks of secret sharing theory. They are implementation mistakes at the exact places where resharing and reconstruction depend on correct finite-field arithmetic.
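The two identifier problems are easy to reproduce in a toy setting. The sketch below assumes a small prime order `Q` and two hypothetical helpers, `evaluate` and `validate_ids`; it is meant to show the shape of the check, not the code of any audited library.

```python
Q = 1019  # toy prime group order (assumption for this sketch)

def evaluate(coeffs, x):
    """Evaluate the sharing polynomial at x, reducing modulo the group order."""
    return sum(c * pow(x, k, Q) for k, c in enumerate(coeffs)) % Q

def validate_ids(ids, order):
    """Reject identifiers that are zero or that collide modulo the group order."""
    reduced = [i % order for i in ids]
    if any(r == 0 for r in reduced):
        raise ValueError("identifier is 0 modulo the group order")
    if len(set(reduced)) != len(reduced):
        raise ValueError("identifiers collide modulo the group order")
    return reduced

coeffs = [555, 17, 902]  # constant term 555 plays the role of the secret

# An identifier equal to Q looks nonzero, but the "share" is the secret itself.
print(evaluate(coeffs, Q) == coeffs[0])

# Identifiers 1 and 1 + Q look distinct, but (i - j) % Q == 0, so the
# Lagrange denominator has no modular inverse.
print((1 - (1 + Q)) % Q)

for bad in ([1, 2, Q], [1, 2, 1 + Q]):
    try:
        validate_ids(bad, Q)
    except ValueError as err:
        print("rejected:", err)
```

Running the validation on the reduced identifiers, before any share is dealt or any Lagrange coefficient is computed, closes both gaps in this model.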

The third is proof-system shortcuts in threshold ECDSA stacks. The TSSHOCK report describes how ambiguous hashing, reduced Fiat–Shamir rounds, or omitted range proofs in dlnproof and MtA-related code can leak internal values and lead to key recovery. The important lesson is not that resharing is uniquely broken, but that resharing ceremonies often reuse the same proof machinery and validation assumptions as key generation and signing. If those components are weak, moving shares around can become an attack surface.

A fourth failure mode is operational rather than algebraic: incomplete transitions. The tss-lib documentation warns that during resharing, local key data may be modified across rounds and should not overwrite persisted key material until the final result is received. That is an example of a subtle but serious invariant. A resharing protocol is a state transition. If a node commits halfway, crashes, and discards the wrong version of its state, the group can lose availability even if the cryptography itself was correct.
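A hedged sketch of that invariant follows. The class `ResharingSession`, its method names, and the JSON file layout are all hypothetical and are not tss-lib's API; the only point is that intermediate round state stays in memory, and the persisted key is replaced in a single atomic step after the final result arrives.

```python
import json
import os
import tempfile

def save_key_atomically(path, key_data):
    """Write to a temp file in the same directory, then atomically rename it."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    try:
        with os.fdopen(fd, "w") as f:
            json.dump(key_data, f)
            f.flush()
            os.fsync(f.fileno())      # make sure bytes hit disk before the rename
        os.replace(tmp_path, path)    # readers see the old file or the new one
    except BaseException:
        if os.path.exists(tmp_path):
            os.unlink(tmp_path)
        raise

class ResharingSession:
    """Keeps in-progress resharing state away from the persisted key."""

    def __init__(self, path, current_key):
        self.path = path
        self.current = current_key
        self.pending = None

    def on_round_output(self, partial_state):
        # Intermediate rounds only touch memory; disk still holds the old key.
        self.pending = partial_state

    def on_final(self, new_key):
        # Only the final, complete result replaces the persisted key material.
        save_key_atomically(self.path, new_key)
        self.current, self.pending = new_key, None

with tempfile.TemporaryDirectory() as workdir:
    key_path = os.path.join(workdir, "share.json")
    save_key_atomically(key_path, {"epoch": 1, "share": 11})
    session = ResharingSession(key_path, {"epoch": 1, "share": 11})
    session.on_round_output({"epoch": 2, "partial": 5})
    with open(key_path) as f:
        print(json.load(f))   # still the epoch-1 key
    session.on_final({"epoch": 2, "share": 99})
    with open(key_path) as f:
        print(json.load(f))   # now the epoch-2 key
```

A production system would also encrypt the stored share and would decide when the old share may be erased, for example only after the new committee has confirmed it can operate. Those policies are outside this sketch.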

Finally, transport matters. A library may implement the cryptographic core but leave messaging to the application. If broadcasts are unreliable, sessions are confused, or point-to-point channels are not authenticated and encrypted, the surrounding protocol can fail in ways the paper model does not capture.

When should you use key resharing in production systems?

The use cases are easiest to understand as consequences of the mechanism.

If the problem is changing operators without changing the public identity of a system, resharing is the natural tool. Randomness beacons use it to rotate committees while preserving the public key that clients already use for verification. The drand specification is explicit about this: resharing can remove and add nodes while keeping the same distributed public-facing key.

If the problem is long-lived threshold signing in changing infrastructure, resharing becomes part of lifecycle management. The Internet Computer treats periodic key resharing as one of the core components of chain-key ECDSA, alongside DKG and signing. That reflects a general truth: a production threshold system is not just a signing algorithm, but a way of operating a distributed secret over time.

If the problem is decentralized custody or settlement, resharing supports continuity when one participant or service must be replaced. This is where threshold signatures move from theory into service design. **Cube Exchange uses a 2-of-3 threshold signature scheme for decentralized settlement: the user, Cube Exchange, and an independent Guardian Network each hold one key share. No full private key is ever assembled in one place, and any two shares are required to authorize a settlement.** In a system like that, resharing is the mechanism that would let the operator rotate the guardian set, replace infrastructure, or recover from compromise without forcing users onto a new on-chain identity each time.

And if the problem is reducing the impact of ongoing compromise, then periodic resharing is a security control. That is the proactive-security use case: same participants or slightly changed participants, same secret, fresh shares.

What limitations and trade-offs does key resharing have?

It is helpful to be precise about the boundaries.

Key resharing does not magically eliminate trust assumptions. A threshold scheme still depends on some bound on how many parties are corrupted in a period, or on some honest-majority or qualified-set assumption, depending on the protocol. If too many old share holders collude during resharing, they may already know enough to recover the secret. Resharing cannot undo a compromise that has already crossed the threshold.

It also does not automatically guarantee liveness or robustness. For example, FROST itself does not provide robustness; a misbehaving participant can cause signing to abort. Many resharing protocols have similar issues: they may guarantee safety if they complete, but still require enough cooperative parties and valid contributions to finish. A system that needs strong availability under faults often needs an additional wrapper or committee-management layer beyond the bare resharing mathematics.

And resharing is not always free. Communication can be significant, especially in dynamic or malicious-adversary models. Research on dynamic proactive secret sharing spends much of its effort on reducing that communication cost because naïve protocols become expensive as the committee grows.

Conclusion

Key resharing is the mechanism that lets a threshold system evolve without changing the key the outside world sees. It works because secret shares are only one randomized encoding of a secret, not the secret itself. By redistributing fresh shares of the same underlying secret, usually with verifiable proofs of consistency, a system can rotate participants, refresh against leakage, and keep long-lived threshold keys usable over time.

That is why resharing sits so close to the heart of practical threshold cryptography. DKG gets a distributed key into existence. Threshold signing uses it. Key resharing is what keeps it alive.

What should you understand about key resharing before using a service?

Understand that resharing preserves the same public key while changing who holds shares and what threshold applies, and that this matters for funds you move or custody you rely on. On Cube Exchange, map that concept into concrete readiness checks: verify the public key stability, request resharing/verifiability artifacts, confirm the active threshold and signer set, then proceed with funding and transfer.

- Verify the public key: check the on-chain public key for your Cube deposit/withdrawal and confirm it matches Cube Exchange’s published key and the latest resharing timestamp (ask support or consult Cube docs).

- Inspect resharing proofs: request or view the VSS commitments or resharing transcript Cube publishes and confirm verification records or auditor notes showing consistent commitments.

- Confirm threshold and signers: verify the current threshold policy (e.g., 2-of-3) and the identities of active signers or Guardian participants before sending large funds.

- Fund and initiate the transfer on Cube: deposit funds, start the withdrawal or settlement, and before confirming, review the transaction’s on-chain key reference and attached signer approvals.

Frequently Asked Questions

Why doesn’t resharing change the public key?

Because resharing preserves the underlying secret (the private scalar x) while only changing its randomized encoding into shares. The public key is derived from x, not from any particular sharing, so if all new shares reconstruct to the same x, the external public key remains unchanged.

Can resharing change the threshold or the committee size?

Yes. Many resharing protocols choose the new polynomial degree to match a different target threshold, so you can change committee size and the threshold by having old holders distribute contributions for a polynomial of the new degree. In practice this requires enough valid contributions from the old set and careful verification to ensure correctness.

Does resharing require reconstructing the private key?

No. A correct resharing protocol avoids reconstructing the private key in one place by having each old holder locally make a fresh sharing of their own share. The new parties combine those contributions to obtain fresh shares, so the secret is never reconstructed centrally.

How do resharing and proactive refresh limit cumulative compromise?

Resharing and proactive refresh mitigate cumulative leakage by making old shares obsolete after a refresh period, so an attacker must corrupt enough parties within a single period to recover the key. However, resharing cannot undo or recover from a prior compromise that already exceeded the corruption threshold.

What are the common pitfalls in resharing implementations?

Common pitfalls include accepting invalid or inconsistent evaluations when VSS checks are missing, finite-field or identifier mistakes that turn a share into the secret or cause zero denominators, shortcuts or missing range proofs in proof systems (which can leak values), and operational mistakes like overwriting persisted key material mid-transition or using an insecure transport for messages.

How do recipients verify resharing contributions?

Recipients verify resharing contributions with verifiable secret sharing (VSS) tools such as Feldman-style commitments or stronger proof systems. These commitments let recipients check that an evaluation is consistent with a committed polynomial so that the combined new shares will encode a single secret.

Do threshold signing specifications include resharing?

Not usually. Most threshold signing specifications (for example, FROST) leave key generation and resharing out of scope, so practical systems either run a DKG or resharing protocol separately or rely on a trusted dealer. For ECDSA stacks, resharing is even more involved because of extra conversion and proof subprotocols.

Is resharing free?

No. Resharing consumes communication and coordination (which can grow with committee size), requires enough honest and available contributors to finish, and may need extra robustness wrappers to provide liveness under faults. Research on dynamic and proactive schemes focuses on reducing these costs.
