What Is an Algorithmic Stablecoin?

Learn what an algorithmic stablecoin is, how it maintains a peg, why designs like UST failed, and where algorithmic stability breaks down.

Introduction

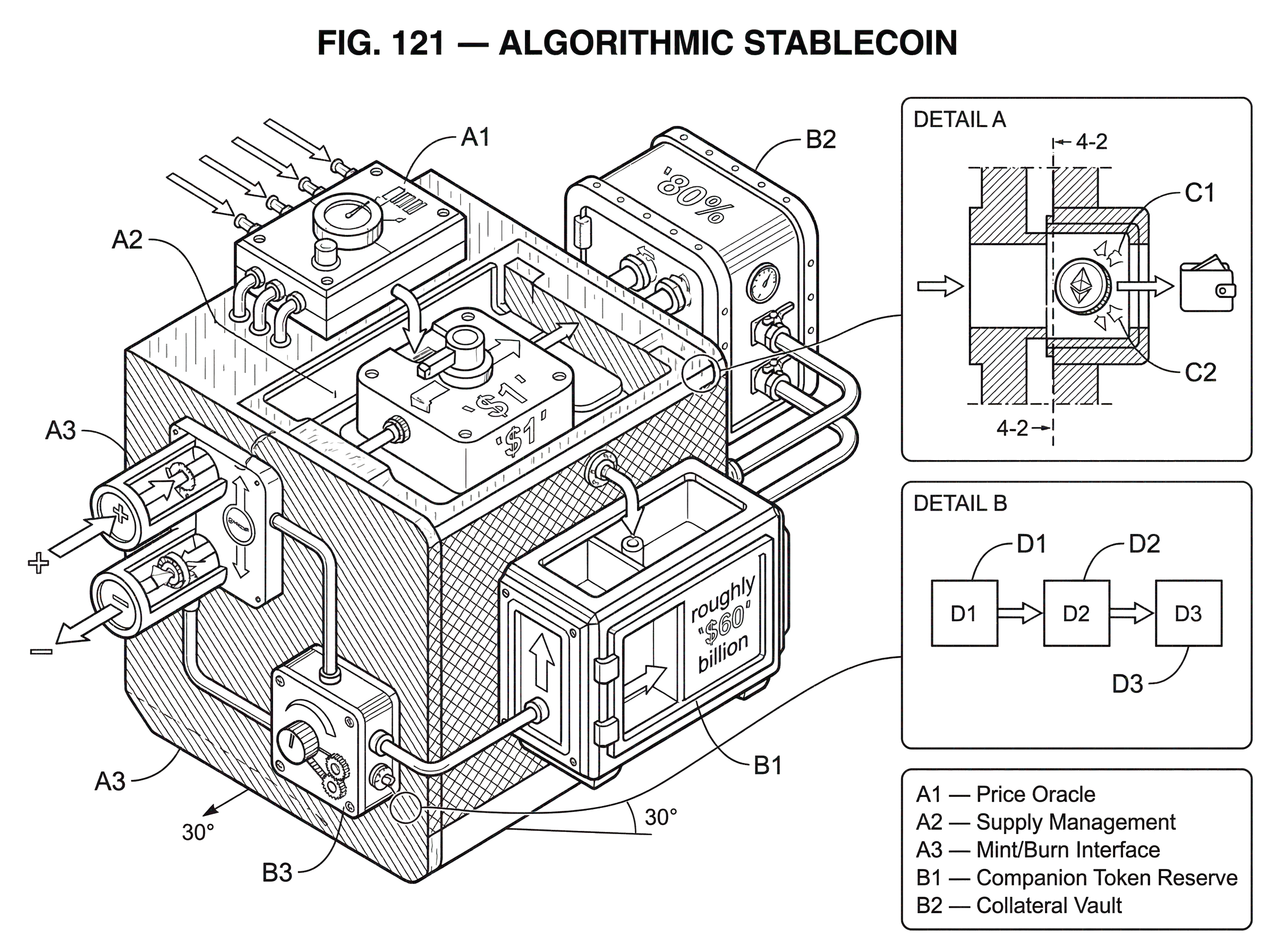

An algorithmic stablecoin is a stablecoin design that tries to hold a target price (usually 1 unit of a fiat currency such as the U.S. dollar) primarily through protocol rules, smart contracts, and market incentives rather than straightforward 1:1 fiat reserves. That sounds like a narrow design choice, but it changes almost everything. A reserve-backed stablecoin asks a simple question: can I redeem this token for assets sitting somewhere? An algorithmic stablecoin asks a harder one: can rules and incentives make the market keep treating this token as if it were worth a dollar?

That distinction is why the topic matters. Stablecoins sit at the center of crypto trading, lending, liquidity pools, and settlement flows. Official policy reports have noted that algorithmic or “synthetic” stablecoins are a distinct subset of stablecoin arrangements because they attempt stabilization by means other than direct fiat convertibility. They have also noted that stablecoins are central to DeFi, where they are often used for trading and as collateral. So if the stabilization mechanism is weak, the problem is not just that one token drifts off target. The deeper problem is that an asset many other protocols assumed was “stable” can suddenly stop being reliable.

The key idea to understand is this: an algorithmic stablecoin does not create stability out of nothing. It moves volatility somewhere else. Sometimes it moves it into a second token. Sometimes it moves it into future dilution. Sometimes it moves it into users’ balances through rebasing. Sometimes it leans partly on collateral and partly on algorithmic adjustment. If you remember that one sentence, the rest of the design space becomes much easier to reason about.

What problem do algorithmic stablecoins try to solve?

| Approach | Stabilization method | Credibility source | Capital efficiency | Main risk |
|---|---|---|---|---|
| Reserve-backed | 1:1 fiat or asset reserves | External assets / custodians | Low | Custody and reserve opacity |
| Algorithmic | Supply rules and arbitrage | Market expectations and arbitrage | High | Confidence-dependent depeg |
| Fractional-hybrid | Partial collateral + algorithmic | Collateral plus market belief | Medium | Collateral correlation risk |

A cryptocurrency with a fixed or mostly predetermined supply, like Bitcoin, does not stabilize its own price. If demand changes unexpectedly, price absorbs the shock. That can be useful for a speculative asset, but it is awkward for something people want to use as a unit of account, trading pair, or medium of exchange. The original motivation behind algorithmic stabilization proposals was to create a crypto-native asset that behaved more like money and less like a volatile commodity.

There are two broad ways to get that stability. The first is to hold backing assets and promise redemption: dollars in a bank, Treasury bills, or onchain crypto collateral. The second is to make supply react to price. If the token trades above target, the protocol expands supply. If it trades below target, the protocol contracts supply or tries to create an incentive for someone else to absorb the excess supply. This second route is what people usually mean by algorithmic.

That promise is attractive for three reasons. It can be more capital efficient than full overcollateralization. It can be more onchain and automated, with fewer offchain custodians. And it can look more crypto-native, because the protocol itself performs something like monetary policy. But these advantages are not free. A reserve-backed design gets credibility from assets that exist outside the token’s own belief system. An algorithmic design often gets credibility from the expectation that other traders, arbitrageurs, or holders will keep playing their roles. That is a more reflexive foundation.

What makes a stablecoin 'algorithmic' instead of reserve‑backed?

At the definition level, the cleanest distinction is mechanical. Reputable policy and research sources describe algorithmic stablecoins as stablecoins that attempt to maintain a peg without relying on direct fiat convertibility or traditional reserve assets, instead using algorithms, smart contracts, and supply-management rules. Some designs hold no dedicated reserves at all. Others are hybrids that combine collateral with algorithmic adjustment. So “algorithmic stablecoin” is best understood as a family of mechanisms, not a single template.

The common structure is straightforward. The protocol needs some observable signal of whether the token is above or below target. It then needs a rule that changes supply, redemption terms, or incentives in response. And it needs market participants to find it profitable to trade against the deviation. Without that last step, the algorithm is just code making announcements that nobody follows.

This dependence on market behavior is easy to underestimate. A smart contract can mint, burn, reweight balances, or adjust collateral ratios. What it cannot do is force the market to value a token at $1. It can only make certain trades available and hope arbitrage closes the gap. That is why oracle quality, liquidity depth, and confidence matter so much more here than the word algorithmic might suggest.

How does supply elasticity work to maintain a peg?

Most algorithmic stablecoin designs are variations on a single principle: if price is too high, increase effective supply; if price is too low, decrease effective supply or make someone else want to absorb the overhang. Chainlink’s explainer puts this in the familiar form of expansion and contraction, and older design notes like Robert Sams’s seigniorage shares proposal treat the same idea as an elastic-supply monetary rule.

Why should this help? Suppose a token meant to be worth $1 trades at $1.05. That price says the market wants more of it than currently exists at that price. If the protocol can create additional units and sell or distribute them, the added supply should push price downward. Now reverse it. If the token trades at $0.95, the market is saying there is more supply than people want at $1. The protocol must somehow reduce circulating supply, or persuade traders to take the excess off the market in exchange for some future benefit.
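The expand/contract logic can be sketched as a simple proportional rule. This is a minimal illustration, assuming the protocol requests a supply change equal to the percentage deviation from target times current supply; the function name `supply_adjustment` and the rule itself are hypothetical, not any particular protocol's formula.

```python
def supply_adjustment(supply: float, price: float, target: float = 1.0) -> float:
    """Supply change requested by a naive proportional peg rule (hypothetical).

    Positive result: expand (mint new units).
    Negative result: contract (units must leave circulation somehow).
    """
    if supply < 0 or price <= 0 or target <= 0:
        raise ValueError("supply must be non-negative; price and target positive")
    deviation = (price - target) / target  # e.g. +0.05 at $1.05, -0.05 at $0.95
    return supply * deviation


supply = 1_000_000.0
print(supply_adjustment(supply, 1.05))  # about +50,000: expand at $1.05
print(supply_adjustment(supply, 0.95))  # about -50,000: contract at $0.95
```

The negative branch only says how many units should leave circulation. It says nothing about who gives them up, which is the harder question.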

The hard part is the downward case. Expanding supply when demand is strong is easy; many systems can mint new tokens. Contracting supply when demand weakens is much harder because someone must bear the pain. That pain can take several forms. Holders’ balances can shrink. A secondary token can be diluted. Future claims can be sold at a discount. Collateralization can rise. But there is no magic version where contraction is costless.

That is the invariant to keep in view: the peg can be defended only if losses are absorbable somewhere in the system.

What are the main types of algorithmic stablecoin designs?

| Design | Peg method | Loss absorber | Efficiency | Best fit |
|---|---|---|---|---|
| Rebasing | Pro-rata balance rebases | All token holders | High | Unit-price stabilization experiments |
| Seigniorage | Arbitrage with companion token | Companion token holders | Very high | When an absorber token exists |
| Fractional | Collateral + algorithmic rules | Collateral and risk token | Medium | Balancing safety and efficiency |

The design space is easiest to understand by the mechanism that absorbs deviations from the peg. In practice, three families keep appearing.

Rebasing designs change balances directly

A rebasing stablecoin adjusts token balances in users’ wallets pro rata. Ampleforth is the canonical example of elastic supply implemented this way. Its whitepaper describes a protocol that reacts to price information by expanding or contracting balances when price moves outside a target band. Technically, the system changes supply through a coefficient applied across balances rather than by sending separate transfers to every address.

The intuition is simple. If the token trades above target, everyone gets more units. If it trades below target, everyone gets fewer. Your share of the network stays roughly the same, but the number of tokens you hold changes.
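The coefficient idea can be sketched as a toy ledger. This is a hypothetical illustration, not Ampleforth's contract: the class `RebasingLedger`, its methods, and the use of floats are assumptions (an onchain contract would use fixed-point integer arithmetic). Each account holds fixed internal shares, and visible balances are shares times one global coefficient, so a rebase touches a single number.

```python
class RebasingLedger:
    """Toy rebasing token (hypothetical, not Ampleforth's contract).

    Visible balance = internal shares * coefficient, so a rebase
    updates one number for every holder at once.
    """

    def __init__(self, balances: dict[str, float]) -> None:
        self.coefficient = 1.0  # internal shares start equal to balances
        self.shares = dict(balances)

    def balance_of(self, account: str) -> float:
        return self.shares[account] * self.coefficient

    def total_supply(self) -> float:
        return sum(self.shares.values()) * self.coefficient

    def share_of_network(self, account: str) -> float:
        # Unaffected by rebases: only the coefficient changes.
        return self.shares[account] / sum(self.shares.values())

    def rebase(self, supply_delta: float) -> None:
        old_supply = self.total_supply()
        new_supply = old_supply + supply_delta
        if old_supply <= 0 or new_supply <= 0:
            raise ValueError("supply must stay positive")
        # One multiplication scales every wallet pro rata.
        self.coefficient *= new_supply / old_supply


ledger = RebasingLedger({"alice": 100.0, "bob": 900.0})
ledger.rebase(-100.0)                    # a 10% negative rebase
print(ledger.balance_of("alice"))        # about 90 tokens
print(ledger.share_of_network("alice"))  # still 10% of supply
```

Alice keeps 10% of the network, but her wallet now shows 90 tokens instead of 100.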

This mechanism explains both what rebasing is good at and where it fails as “stable money.” It can help stabilize the unit price of the token, because supply responds automatically. But it does not preserve the purchasing power of any one wallet balance in the ordinary sense. If your wallet had 100 tokens yesterday and a negative rebase cuts it to 90, you did not experience stability in the way most users mean the word. Robert Sams made this point directly in criticizing simple rebase-style approaches: stabilizing coin price is not the same as stabilizing the purchasing power of wallet balances.

So rebasing explains one important lesson. A token can target a stable price per unit while still feeling economically unstable to holders. The mechanism solves one problem and exposes another.

Seigniorage or multi-token designs move volatility into a companion token

The second family uses at least two tokens: the stablecoin itself and a volatile token that absorbs gains and losses. Robert Sams’s seigniorage shares note is the clean conceptual version. In that model, the stablecoin is the monetary asset users hold for transactions, while a separate “share” token is the residual claimant on future expansion. When the system needs to expand supply, new stablecoins benefit share holders. When it needs to contract, the system sells claims on future upside to pull stablecoins out of circulation.
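A simplified two-token sketch in the spirit of the seigniorage-shares note follows. It assumes expansion mints coins and sells them for shares that are burned, and contraction mints new shares and sells them for coins that are burned. The class `SeigniorageShares`, the fixed auction price, and the absence of fees or price impact are all hypothetical simplifications, not a reproduction of the note's exact specification.

```python
from dataclasses import dataclass


@dataclass
class SeigniorageShares:
    """Simplified two-token model (hypothetical assumptions: auctions clear
    at a given share price quoted in stablecoins, no fees or price impact)."""
    coin_supply: float
    share_supply: float

    def expand(self, new_coins: float, share_price: float) -> float:
        """Mint coins and sell them for shares, which are burned.
        Each remaining share becomes a larger claim: holders capture seigniorage."""
        shares_burned = new_coins / share_price
        if shares_burned >= self.share_supply:
            raise ValueError("cannot burn the entire share supply")
        self.coin_supply += new_coins
        self.share_supply -= shares_burned
        return shares_burned

    def contract(self, coins_to_remove: float, share_price: float) -> float:
        """Mint new shares and sell them for coins, which are burned.
        Existing shareholders are diluted: they absorb the loss."""
        if coins_to_remove > self.coin_supply:
            raise ValueError("cannot remove more coins than exist")
        shares_minted = coins_to_remove / share_price
        self.coin_supply -= coins_to_remove
        self.share_supply += shares_minted
        return shares_minted


system = SeigniorageShares(coin_supply=1_000_000.0, share_supply=100_000.0)
print(system.contract(50_000.0, share_price=10.0))  # 5000.0 new shares sold
print(system.expand(50_000.0, share_price=10.0))    # 5000.0 shares bought back and burned
```

If the share price falls while contraction is still needed, each coin removed costs more newly minted shares. That dilution channel is where the reflexivity of paired-token designs comes from.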

This is the basic insight behind later paired-token systems. Instead of forcing all holders to eat balance changes directly, the protocol creates a junior layer that absorbs volatility. The stablecoin tries to be stable; the companion token acts like leveraged equity in the system.

TerraUSD (UST) and LUNA made this logic famous, and then infamous. In the paired-token form described by the Congressional Research Service and the New York Fed staff report, UST was meant to stay near $1 through an arbitrage rule: if UST traded below $1, traders could buy discounted UST and redeem it for $1 worth of LUNA; if UST traded above $1, traders could effectively mint UST via LUNA conversion and sell it. As long as markets believed LUNA retained substantial value, the arbitrage channel looked credible.

Here is the mechanism in plain language. Imagine UST slips to $0.98. A trader buys 100,000 UST for $98,000 and redeems it into $100,000 worth of LUNA, pocketing the spread. That trade destroys UST, reducing its supply, which should help move UST back toward the peg. But the protocol has not eliminated the loss; it has transferred it into LUNA by issuing more of it. If the system is small relative to LUNA’s market value, that dilution may be tolerable. If redemptions become enormous, LUNA supply explodes, its price falls, and the redemption promise becomes less credible exactly when it is needed most.
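The arithmetic in this example can be checked directly. This sketch assumes a frictionless "redeem 1 stablecoin for $1 of the companion token" rule with no fees, slippage, or spread; the function `redemption_arbitrage` is hypothetical, and the companion prices ($80 and $0.10) are illustrative inputs contrasting a calm market with a stressed one, not historical quotes.

```python
def redemption_arbitrage(stable_amount: float, stable_price: float,
                         companion_price: float, peg: float = 1.0) -> dict[str, float]:
    """Profit and supply effects of a frictionless redemption into the
    companion token (hypothetical rule; ignores fees and slippage)."""
    cost = stable_amount * stable_price  # buy discounted stablecoins
    value_out = stable_amount * peg      # receive $peg of companion per coin
    return {
        "profit": value_out - cost,
        "stable_burned": stable_amount,                   # stablecoin supply shrinks
        "companion_minted": value_out / companion_price,  # the loss moves here
    }


calm = redemption_arbitrage(100_000, 0.98, companion_price=80.0)
stressed = redemption_arbitrage(100_000, 0.98, companion_price=0.10)
print(calm["profit"], calm["companion_minted"])          # about 2,000 and 1,250
print(stressed["profit"], stressed["companion_minted"])  # same profit, about 1,000,000 tokens
```

The arbitrageur's profit is identical in both cases; what changes is how many companion tokens the protocol must create to honor the same redemption.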

That is the death spiral. The New York Fed describes it succinctly: the algorithm fails if both the stablecoin and the paired crypto asset fall in price at the same time. Once confidence breaks, more stablecoin holders rush to exit, causing more issuance of the companion token, causing more price collapse there, which weakens confidence further. The design works only while the market believes the absorber can absorb.
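A toy simulation makes the "both fall at the same time" condition concrete. Every dynamic here is a hypothetical assumption: a fixed fraction of outstanding stablecoins is redeemed each round at $1 of companion token each, the companion's total market value shrinks by a fixed fraction per round, and price is market value divided by supply. The starting figures are round illustrative numbers, not a reconstruction of Terra's data.

```python
def simulate_run(ust_supply: float, luna_supply: float, luna_mcap: float,
                 run_fraction: float, mcap_decay: float, rounds: int):
    """Toy paired-token run (all dynamics are hypothetical assumptions).

    Returns a list of (round, companion minted, companion supply, companion price).
    mcap_decay = 0 means the companion keeps its total market value;
    mcap_decay = 0.5 means that value halves every round.
    """
    history = []
    for n in range(1, rounds + 1):
        price = luna_mcap / luna_supply
        redeemed = run_fraction * ust_supply
        minted = redeemed / price        # the loss is paid in new companion tokens
        ust_supply -= redeemed
        luna_supply += minted
        luna_mcap *= 1.0 - mcap_decay
        history.append((n, minted, luna_supply, luna_mcap / luna_supply))
    return history


for n, minted, supply, price in simulate_run(
        18e9, 350e6, 28e9, run_fraction=0.2, mcap_decay=0.5, rounds=6):
    print(f"round {n}: minted {minted:.3g}, supply {supply:.3g}, price ${price:.3g}")
```

With `mcap_decay=0`, issuance per round shrinks as the run drains the stablecoin. When both prices fall, issuance grows every round. The model omits the feedback from a falling companion price to a faster run, so a real spiral could be steeper.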

Fractional-algorithmic designs split the burden between collateral and a risk token

A third family tries to soften that reflexivity by keeping some collateral while still using algorithmic adjustment. FRAX is the clearest example. Its whitepaper describes a two-token system where FRAX is always mintable or redeemable for $1 of value, but that dollar consists of a mix of collateral and FXS, the risk-bearing governance and value-accrual token. The protocol changes a global collateral ratio over time: more collateral when needed, less when confidence and market conditions allow.

This is best understood as a compromise. A pure algorithmic system is capital efficient but fragile because belief alone carries more of the peg. A fully collateralized system is safer but ties up more assets. A fractional model says: let collateral carry part of the credibility, and let the algorithmic token carry the residual risk.

Mechanically, that means when a user mints or redeems the stablecoin, they interact with both collateral and the companion token in proportions set by the protocol’s collateral ratio. If the ratio is 80%, then each $1 of stablecoin is supported by $0.80 of collateral and $0.20 of algorithmic exposure. If conditions worsen, the system can try to raise the collateral ratio, shifting more of the burden onto explicit backing.
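The 80% example translates into a small calculation. This sketch follows the whitepaper's description only at a high level: the function `fractional_split`, the hypothetical $4 risk-token price, and the frictionless split are assumptions, and a deployed contract would also need oracle pricing and other logic not shown here.

```python
def fractional_split(usd_amount: float, collateral_ratio: float,
                     risk_token_price: float) -> tuple[float, float]:
    """Split a mint (or, symmetrically, a redemption) of usd_amount stablecoins
    into collateral dollars and risk-token units under a global collateral
    ratio. Hypothetical helper: ignores fees, oracle error and slippage."""
    if not 0.0 <= collateral_ratio <= 1.0:
        raise ValueError("collateral_ratio must be between 0 and 1")
    if risk_token_price <= 0:
        raise ValueError("risk_token_price must be positive")
    collateral_usd = usd_amount * collateral_ratio
    risk_tokens = (usd_amount - collateral_usd) / risk_token_price
    return collateral_usd, risk_tokens


# $1,000 of stablecoin at an 80% ratio, risk token at a hypothetical $4:
print(fractional_split(1_000.0, 0.80, 4.0))  # about $800 collateral + 50 risk tokens
# Raising the ratio to 95% shifts more of the backing onto collateral:
print(fractional_split(1_000.0, 0.95, 4.0))  # about $950 collateral + 12.5 risk tokens
```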

This does not remove risk; it rearranges it. The system becomes less dependent on pure reflexive confidence, but it still depends on oracle inputs, collateral management, redemption mechanics, and the market value of the risk token. It is usually more robust than a zero-collateral design, but also less “purely algorithmic” than the label might imply.

Why do algorithmic stablecoins need reliable oracles?

An algorithmic stablecoin cannot react to market price unless it has some way to learn market price. Smart contracts do not observe the outside world natively. They require an oracle or some other data-ingestion mechanism. Several of the sources discussed here emphasize this. The seigniorage-shares note treats price representation inside the network as one of the two hard design problems. Ampleforth relies on an onchain oracle system with data providers. Chainlink’s explainer stresses that algorithmic stablecoins depend on external price feeds to know when to expand or contract supply.

This is not a side detail. It is part of the core machinery. If the oracle is delayed, manipulated, too thinly sourced, or simply wrong, the monetary rule triggers at the wrong time and in the wrong direction. A stablecoin that expands supply because a bad feed says price is high when it is actually low can amplify the depeg instead of fixing it.
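One common mitigation is to aggregate several independent feeds and discard stale ones. The sketch below assumes a median of fresh reports and a minimum source count; the function `aggregate_price`, the `(price, timestamp)` report format, and the thresholds are hypothetical choices for illustration, not any specific oracle network's API.

```python
import statistics
from typing import Optional


def aggregate_price(reports: list[tuple[float, float]], now: float,
                    max_age_s: float, min_sources: int) -> Optional[float]:
    """Median of fresh (price, timestamp) reports from independent providers.

    Hypothetical helper: returns None when too few reports are fresh, so the
    caller can skip a supply adjustment instead of acting on stale data.
    """
    fresh = [price for price, ts in reports if now - ts <= max_age_s]
    if len(fresh) < min_sources:
        return None
    return statistics.median(fresh)


# Market is below peg, but one provider reports a manipulated $1.40
# and another report is stale.
reports = [(0.97, 1_000.0), (0.96, 1_005.0), (1.40, 1_010.0), (1.02, 200.0)]
print(aggregate_price(reports, now=1_020.0, max_age_s=60.0, min_sources=3))  # 0.97
```

Acting on the manipulated $1.40 report alone would have told the protocol to expand supply during a depeg; the median still reports a below-peg price.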

So when people say an algorithmic stablecoin is “decentralized,” it is worth asking: decentralized where? In token issuance? In governance? In collateral custody? Price input is often the least glamorous but most indispensable dependency.

How did TerraUSD (UST/LUNA) collapse so quickly?

TerraUSD is the example that made the design risk legible to a much wider audience because it showed the mechanism failing in public, at scale, and quickly. Multiple sources converge on the same broad picture. UST used an arbitrage relationship with LUNA rather than conventional reserves. Demand for UST had been strongly supported by Anchor, a lending protocol that offered unusually high yields on UST deposits. When confidence and liquidity weakened, the peg came under pressure. Chainalysis reconstructs a sequence in which liquidity conditions became more fragile, large trades pushed UST off peg, supporters attempted to defend it by buying UST and deploying reserves, and then the system unraveled as redemptions caused LUNA issuance to explode.

The important point is not the minute-by-minute forensic detail. The important point is the causal chain. UST below $1 created a redemption incentive into LUNA. Heavy redemption created heavy LUNA issuance. Heavy LUNA issuance crushed LUNA’s price. That made the implicit backing of UST less credible, which increased the desire to flee UST. The mechanism intended to restore the peg became the mechanism that destroyed the absorber.

The New York Fed staff report describes the broader result starkly: the Terra ecosystem collapsed in less than a week, wiping out roughly $60 billion in value. The CRS note and Chainalysis analysis both frame the event as a run-like dynamic, which is the right analogy as long as we remember where it breaks. It is like a bank run in that everyone rushes for the exit at once. But unlike a traditional bank run, there is often no lender of last resort, no deposit insurance, and no credible external balance sheet standing behind the asset.

That is why “death spiral” is not just dramatic language. It names a genuine feedback loop: falling confidence lowers the value of the mechanism that was supposed to restore confidence.

What are algorithmic stablecoins used for in DeFi?

Even with these risks, algorithmic stablecoins have been used because they solve real onchain problems. Stablecoins are central to DeFi trading, collateralized borrowing, liquidity pools, and settlement between crypto positions. An algorithmic stablecoin can, in principle, provide a dollar-like asset without relying fully on bank-held reserves or centralized issuers. That makes it attractive in ecosystems that value censorship resistance, onchain composability, and automated issuance.

Different chains have hosted different expressions of the idea. On Ethereum, rebasing and fractional models such as Ampleforth and FRAX fit into the ERC-20 tooling stack, which matters because ERC-20 compatibility makes tokens usable by wallets, exchanges, and smart contracts. On CosmWasm-based chains, the relevant fungible-token interface is often CW20 rather than ERC-20, with different execution and allowance semantics. On Cardano, Djed shows the same concept can be implemented in yet another architecture, though the Djed audit cited here is mainly about implementation correctness rather than economic design. Terra itself illustrated that an entire application ecosystem could be built around an algorithmic stablecoin on a Cosmos-family chain.

The chain-specific token standard is not the essence of the stablecoin, but it shapes how the design composes with the rest of the system. ERC-20, CW20, and other token interfaces define transfers, allowances, minting hooks, and integration behavior. The monetary policy may be “algorithmic,” yet the token still has to live inside a concrete contract environment.

When and why do algorithmic stablecoins fail?

| Failure channel | Trigger | Consequence | Mitigation |
|---|---|---|---|
| Contraction hardness | Price below peg | No willing loss absorber | Increase collateral or guarantees |
| Endogenous backing collapse | Companion token crash | Death spiral and companion-token hyperinflation | Limit reliance on a single absorber |
| Oracle failure | Delayed or manipulated feeds | Wrong supply adjustments | Robust multi-source oracles |
| Artificial demand | High subsidized yields | Rapid mass withdrawals | Avoid unsustainable yields |

The central weakness of algorithmic stablecoins is not that the code is too simple or too complex. It is that stability is partly a confidence game, and confidence is procyclical. When a peg is trusted, arbitrage capital appears, companion tokens retain value, and deviations can be corrected. When a peg is doubted, the very actors and prices the system relies on may disappear or move against it.

Several failure channels follow from that.

First, contraction is structurally harder than expansion. Printing or distributing more units into strong demand is easy. Convincing markets to absorb losses during weak demand is the real test.

Second, the system often depends on a risk-bearing layer whose market value is endogenous to belief in the stablecoin itself. In paired-token systems, the “backing” is not independent wealth in the ordinary sense. It is a claim whose value can evaporate when redemptions surge.

Third, oracle and liquidity assumptions are fragile. If price feeds are delayed or manipulable, or if the market is too thin for arbitrage to operate cleanly, the control loop becomes noisy and may overcorrect or fail to correct.

Fourth, user demand may be artificial. High yields can attract deposits and create the appearance of strong stablecoin adoption, but if that demand rests on subsidies rather than organic utility, it can vanish suddenly. The CRS note’s discussion of Anchor and UST is the clearest example of this dynamic.

Fifth, implementation risk still matters. Economic elegance does not protect against smart-contract bugs. The Djed audit is a useful reminder that even if a design is mathematically appealing, the deployed code can have minting-policy flaws, specification mismatches, or validator bugs that undermine the whole system.

Why do developers keep building algorithmic stablecoins despite past failures?

If the risks are this visible, why do algorithmic stablecoins keep reappearing? Because the goal remains compelling. A stable, digital, crypto-native asset that is highly composable, minimally custodial, and capital efficient would be extremely useful. The failures do not erase the demand for the thing being attempted.

What has changed is the direction of design. Purely uncollateralized systems have lost credibility after repeated blowups, including Terra and earlier failures such as Iron/TITAN. More recent discussion increasingly favors hybrids: partial collateral, more explicit reserves, stronger oracle design, tighter redemption mechanics, and less reliance on a single reflexive token soaking up all downside.

That shift reflects a basic lesson from first principles. The less external backing a stablecoin has, the more its stability must come from expectations about future behavior. Expectations can work in good times. They are least reliable exactly when stability matters most.

Conclusion

An algorithmic stablecoin is a stablecoin that tries to keep a peg through rules and incentives rather than simple 1:1 reserve redemption. The idea is elegant because it turns monetary policy into code. The difficulty is that code cannot manufacture credibility by itself; it can only allocate gains and losses according to predefined rules.

The shortest way to remember the topic is this: algorithmic stablecoins do not eliminate volatility; they decide where volatility goes. If that destination cannot absorb stress, the peg holds only as long as confidence does.

How do you evaluate a token before using or buying it?

Evaluate a token’s mechanics before you buy or approve it by checking the protocol’s stabilization rules, on‑chain supply mechanics, and market support. Use Cube Exchange to execute a trade after you confirm the design and liquidity meet your risk tolerance.

Frequently Asked Questions

Algorithmic stablecoins try to keep a peg via protocol rules, supply adjustments, and market incentives rather than by promising 1:1 fiat redemption from off‑chain reserves; reserve‑backed coins rely on external assets and redemption promises for credibility, while algorithmic designs depend more on arbitrage, companion tokens, or supply rules to make markets treat the token as worth $1.

Because expansion merely mints or issues new units, while contraction requires some actor or mechanism to absorb losses - shrinking balances, diluting a secondary token, or selling future claims - and those actors may refuse or lack capacity when confidence falls.

A “death spiral” is a feedback loop where redemptions or de‑pegging force issuance of a volatile absorber (like a companion token), which depresses its price and further weakens the peg; TerraUSD’s collapse showed this at scale when UST redemptions caused massive LUNA issuance, LUNA’s price crashed, and the peg unraveled - destroying roughly $60 billion of ecosystem value in under a week.

Oracles feed price signals into the protocol so it can know whether to expand or contract supply; if feeds are delayed, manipulable, or too thin, the protocol can trigger the wrong monetary action and amplify a de‑peg rather than fix it.

Rebasing directly adjusts every holder’s token balance pro rata (preserving share but not the nominal purchasing power of a wallet), seigniorage or paired‑token designs shift gains and losses into a volatile companion token, and fractional‑algorithmic systems split backing between collateral and a risk token so both share adjustments.

No - a rebasing coin can stabilize the unit price but still change the nominal token count in your wallet, so it does not guarantee stable purchasing power for any given balance and can feel economically unstable to holders.

They are only ‘decentralized’ in some dimensions; many algorithmic designs still depend on centralized or permissioned elements like oracle providers, collateral custodians, or governance choices, so one should ask “decentralized where?” rather than assume full decentralization.

Despite the risks, they are used for on‑chain trading, lending, liquidity pools, and settlement because they can be more capital‑efficient and composable than fully collateralized options; different chains and token standards (ERC‑20, CW20, etc.) affect how they integrate with wallets and DeFi protocols.

Check the protocol’s reliance points: oracle design and redundancy, liquidity depth and arbitrage channels, whether there is explicit collateral and how large it is, the existence and role of any companion risk token, and independent audits or on‑chain reserve transparency; none of these guarantees safety, but weaknesses among them are common failure modes noted in the literature.
