What Is Statistical Arbitrage?

Learn what statistical arbitrage is, how market-neutral mean-reversion strategies work, why execution matters, and where stat arb breaks down.

Introduction

Statistical arbitrage is a trading approach that tries to profit from temporary mispricings between related securities using statistical models rather than discretionary judgment. The attraction is obvious: if you can identify prices that have moved too far apart relative to their usual relationship, you can buy the relatively cheap side, sell the relatively expensive side, and aim to profit when the gap narrows. The difficulty is that markets are noisy, trading is costly, and the very patterns that look reliable in backtests often become fragile in live trading.

What makes statistical arbitrage worth understanding is the contrast at its core. It is often described as market-neutral, which sounds safe, yet the strategy can suffer sharp losses exactly when many similar portfolios try to exit at once. It is often called arbitrage, yet there is usually no law of nature forcing prices to converge on your schedule. The edge, when it exists, comes from a statistical tendency, not a guaranteed payoff.

The central idea is simple: many assets are linked by shared economics, sector exposures, index membership, capital structure, or persistent trading patterns. Those links create a notion of a “normal” relative price. Statistical arbitrage tries to estimate that normal relationship, measure deviations from it, and trade the expectation that the deviation will mean-revert. In practice, that turns into systematic long/short portfolios, frequent rebalancing, careful execution, and constant skepticism about whether the pattern is real or merely historical noise.

How does statistical arbitrage trade relative moves instead of betting on market direction?

The easiest way to see the mechanism is to start with a pair of related stocks. Imagine two utility companies whose prices have historically moved closely together because they face similar interest-rate sensitivity, regulation, and demand patterns. If one suddenly outperforms the other by much more than usual, a statistical-arbitrage model may interpret that as a temporary relative dislocation rather than a lasting change in fundamentals. The trade is to short the recent winner and buy the recent loser.

What matters here is relative value. If the whole equity market falls tomorrow, both stocks may fall. The strategy does not need the market to rise. It needs the spread between the two positions to narrow in the expected direction. That is why pairs trading is a canonical implementation of statistical arbitrage: it isolates a relative-price relationship and tries to hedge away broad market direction.
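
The arithmetic behind that claim can be sketched in a few lines. This is an illustration only: the prices, the notional, and the `pair_pnl` helper are hypothetical, and the sketch assumes a dollar-neutral pair with no costs or financing.

```python
def pair_pnl(long_entry, long_exit, short_entry, short_exit, notional=10_000.0):
    """Dollar P&L of a dollar-neutral pair: `notional` long one leg, `notional` short the other."""
    long_ret = long_exit / long_entry - 1.0
    short_ret = short_exit / short_entry - 1.0
    # The short leg gains when its price falls, so its return enters with a minus sign.
    return notional * (long_ret - short_ret)


# Hypothetical prices: the whole market falls, but the recent winner falls more.
pnl = pair_pnl(long_entry=50.0, long_exit=48.0,     # recent loser: about -4%
               short_entry=60.0, short_exit=55.2)   # recent winner: about -8%
print(round(pnl, 2))
```

Both legs lose value, yet the position makes money because the short leg fell further than the long leg. The profit depends only on the difference between the two returns.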

This idea generalizes well beyond literal pairs. Instead of comparing one stock to one other stock, a model can compare a stock to a sector ETF, to a basket of factor exposures, or to a residual price implied by a multi-asset model. Avellaneda and Lee describe statistical arbitrage strategies in U.S. equities as sharing three features: they are systematic, market-neutral in construction, and their return mechanism is statistical. That definition matters because it separates stat arb from discretionary long/short investing. The trade is not “I think this company is good.” It is “given the historical structure of this relationship, this deviation is large enough to justify a contrarian bet.”

An analogy helps, up to a point. You can think of statistical arbitrage as trading around a stretched spring. The model estimates the spring’s resting length, and when prices stretch too far from that equilibrium, the strategy bets on a pullback. What the analogy explains is mean reversion: deviations tend to shrink. Where it fails is that financial “springs” can permanently change length. A merger rumor, balance-sheet shock, index change, or earnings surprise can redefine the equilibrium itself.

Why do related assets move together, and how do you tell if that link is durable?

The strategy only makes sense if there is some structure underneath the observed co-movement. Two prices moving together in the past is not enough. The key question is whether the relationship reflects a durable common driver or just an accidental correlation.

One source of structure is shared exposure to broad factors. Two bank stocks often load on similar interest-rate and credit-cycle risks. A mining stock and a metals ETF may move together because both are linked to commodity prices. A stock may also have a stable relationship to a basket of sector and style factors. In that case, the tradable object is not the raw price but the residual: the part left over after accounting for common exposures. If that residual is stationary enough to oscillate around a typical level, a mean-reversion trade becomes plausible.

This is why simple correlation is weaker than a more structural relationship such as cointegration or a factor model with stable residual behavior. Correlation only says two series moved together over a sample. It does not tell you whether their spread is bounded, whether one can drift permanently away from the other, or whether a common latent driver explains both. In practice, many statistical-arbitrage shops search for relationships that are economically interpretable enough to survive beyond the sample in which they were discovered.
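
A small constructed example shows how correlation can be perfect while the spread is unbounded. The return series below are hypothetical and deliberately artificial: asset B earns exactly asset A's return plus a constant daily drift, and returns are treated as log returns so that they can be summed.

```python
import math


def pearson(x, y):
    """Sample Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(vx * vy)


def cumulative(returns):
    """Running sum of log returns, i.e. a log-price path starting near zero."""
    level, path = 0.0, []
    for r in returns:
        level += r
        path.append(level)
    return path


# Hypothetical daily log returns for asset A: a repeating up/down pattern (240 days).
ret_a = [0.01, -0.01, 0.005, -0.005] * 60
# Asset B: identical returns plus a small constant drift of 10 bps per day.
ret_b = [r + 0.001 for r in ret_a]

corr = pearson(ret_a, ret_b)
spread = [b - a for a, b in zip(cumulative(ret_a), cumulative(ret_b))]
print(corr, spread[0], spread[-1])
```

The return correlation is essentially 1, yet the log-price spread grows every single day and never reverts. A pairs trade that relied on correlation alone would be short a spread that drifts away indefinitely.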

There is also a practical reason practitioners often stay within sectors or similar instruments. Gatev, Goetzmann, and Rouwenhorst’s classic pairs-trading study found strong historical profitability in top-ranked pairs, with notable concentration in utilities. That is not an incidental detail. Utilities tend to be more homogeneous than the broad stock universe, so “normal” relative relationships are easier to estimate and less likely to be contaminated by radically different business models.

How do you build a statistical‑arbitrage signal (estimate equilibrium, measure deviation, set thresholds)?

Once the strategy has identified a relationship worth monitoring, it needs a way to convert price movements into a trading signal. The broad logic has three steps: estimate equilibrium, measure deviation, then decide when deviation is large enough to trade.

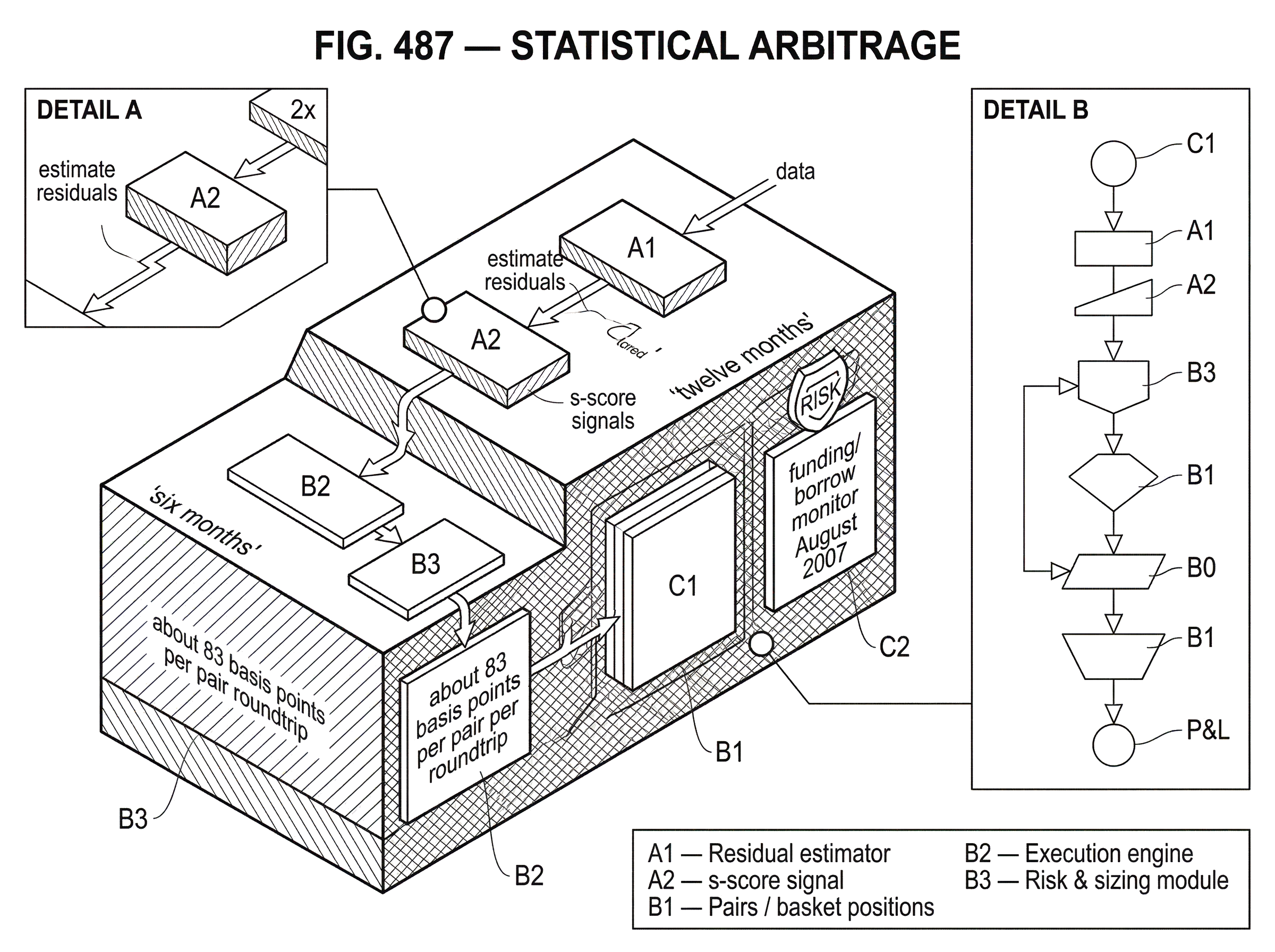

In a simple pairs setup, you begin with a historical formation window. Gatev, Goetzmann, and Rouwenhorst describe a rule that forms pairs over twelve months, then trades them over the next six months. Positions are opened when normalized prices diverge by more than two historical standard deviations and are closed when the spread crosses back. That rule is simple, but it already contains the essential ingredients of stat arb: historical calibration, standardized distance from normal, threshold-based entry, and rule-based exit.
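
A minimal sketch of that kind of rule follows. It is a simplification rather than the study's exact procedure: the function names and prices are hypothetical, the windows are far shorter than twelve and six months, and the trading-window spread is assumed to use the same base prices as the formation window.

```python
import statistics


def normalize(prices):
    """Rescale a price series so that it starts at 1.0."""
    return [p / prices[0] for p in prices]


def distance_signals(form_a, form_b, trade_a, trade_b, k=2.0):
    """Positions per trading day: +1 long A / short B, -1 short A / long B, 0 flat."""
    form_spread = [a - b for a, b in zip(normalize(form_a), normalize(form_b))]
    sigma = statistics.pstdev(form_spread)  # historical spread volatility
    # Assumption: keep the formation-window base prices for the trading window.
    trade_spread = [a / form_a[0] - b / form_b[0] for a, b in zip(trade_a, trade_b)]
    position, positions = 0, []
    for s in trade_spread:
        if position == 0:
            if s > k * sigma:
                position = -1   # A is rich relative to B
            elif s < -k * sigma:
                position = 1    # A is cheap relative to B
        elif (position == -1 and s <= 0) or (position == 1 and s >= 0):
            position = 0        # spread has crossed back: close
        positions.append(position)
    return positions


# Hypothetical prices for a tiny formation window and trading window.
positions = distance_signals(
    form_a=[100, 101, 100, 99, 100], form_b=[50, 50, 50, 50, 50],
    trade_a=[101, 103, 102, 100, 99], trade_b=[50, 50, 50, 50, 50],
)
print(positions)  # [0, -1, -1, 0, 0]
```

The position opens only once the spread exceeds two formation-period standard deviations and closes when the spread returns to zero, so the parameters are fixed before the trading window begins.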

More model-driven versions replace raw price spread with a residual from a factor model. Avellaneda and Lee discuss a dimensionless signal they call the s-score, which measures how far the residual is from equilibrium in units of its equilibrium standard deviation. The point of standardizing this way is straightforward. A one-dollar deviation means something very different for a low-volatility utility than for a volatile technology stock. Scaling by the residual’s own dispersion makes signals comparable across names.
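
One common way to write such a signal down is to model the residual as an Ornstein-Uhlenbeck process. The notation below is an assumption made for illustration: $X_i$ is the cumulative residual of stock $i$, $m_i$ its equilibrium level, $\kappa_i$ the speed of mean reversion, and $\sigma_i$ the residual volatility.

```latex
dX_i(t) = \kappa_i \bigl(m_i - X_i(t)\bigr)\,dt + \sigma_i \, dW_i(t),
\qquad
\sigma_{\mathrm{eq},i} = \frac{\sigma_i}{\sqrt{2\kappa_i}},
\qquad
s_i(t) = \frac{X_i(t) - m_i}{\sigma_{\mathrm{eq},i}}
```

Under this sketch, a strongly negative $s_i$ means the residual sits well below equilibrium (a candidate long), and a strongly positive $s_i$ means it sits well above (a candidate short). Because $s_i$ is dimensionless, the same entry and exit thresholds can be applied across names.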

A worked example makes this clearer. Suppose a stock usually moves with a sector index, and your regression model says today’s stock price is unusually weak relative to what the sector move would imply. The residual is negative and large compared with its own normal range. The model interprets that as the stock being cheap relative to its factor basket. If the signal passes the entry threshold, the strategy buys the stock and shorts an offsetting amount of the sector proxy or factor portfolio. If the residual later drifts back toward zero, the long gains relative to the short, and the position is closed. Each step follows from the same mechanism: define normal, detect abnormal, trade the expected snap-back.
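
The worked example above can be sketched with a one-factor regression. All of the data here is hypothetical and constructed so that the true beta is 1.2. The entry threshold of 2.0, the helper name `fit_alpha_beta`, and the choice to standardize by the historical residual standard deviation are illustrative assumptions.

```python
import statistics


def fit_alpha_beta(stock_rets, sector_rets):
    """Ordinary least squares of stock returns on sector returns."""
    mx, my = statistics.fmean(sector_rets), statistics.fmean(stock_rets)
    cov = sum((x - mx) * (y - my) for x, y in zip(sector_rets, stock_rets))
    var = sum((x - mx) ** 2 for x in sector_rets)
    beta = cov / var
    return my - beta * mx, beta


# Hypothetical history: stock = 1.2 x sector + small idiosyncratic noise.
sector_hist = [0.01, -0.01, 0.02, -0.02, 0.01, -0.01, 0.02, -0.02]
noise = [0.002, 0.002, -0.002, -0.002, -0.002, -0.002, 0.002, 0.002]
stock_hist = [1.2 * m + e for m, e in zip(sector_hist, noise)]

# Step 1: define normal (estimate the relationship on history).
alpha, beta = fit_alpha_beta(stock_hist, sector_hist)
hist_resid = [y - (alpha + beta * x) for x, y in zip(sector_hist, stock_hist)]
resid_sd = statistics.pstdev(hist_resid)

# Step 2: detect abnormal (today's residual in units of its usual dispersion).
sector_today, stock_today = -0.01, -0.02
resid_today = stock_today - (alpha + beta * sector_today)
z = resid_today / resid_sd

# Step 3: trade the expected snap-back if the deviation is large enough.
ENTRY = 2.0  # illustrative threshold
if z < -ENTRY:
    action = "long stock, short beta x sector"
elif z > ENTRY:
    action = "short stock, long beta x sector"
else:
    action = "no trade"
print(round(beta, 3), round(z, 2), action)
```

Here the sector fell 1% and the stock fell 2%, which is weaker than the fitted relationship implies. The standardized residual is strongly negative, so the sketch goes long the stock against a beta-weighted short in the sector proxy.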

What often gets misunderstood is that the signal is not a prediction of absolute return. A stock can be “cheap” relative to its model and still fall in price tomorrow. The trade can still work if the hedge falls more, or if the stock falls less than implied by the original dislocation. That distinction is why stat arb belongs closer to relative-value trading than to directional forecasting.

Why can statistical‑arbitrage returns persist despite apparent arbitrage?

If these patterns are systematic, why are they not instantly arbitraged away? The answer is that the strategy is competing against frictions, risk, and limited capital.

First, convergence is uncertain in timing. A spread can widen before it narrows, forcing losses, margin usage, or stop-outs. A trader with infinite patience and funding might survive where a leveraged fund cannot. So even a pattern with positive expected value can remain hard to exploit.

Second, the strategy demands balance-sheet capacity and operational sophistication. To short one asset and buy another at scale, you need borrow availability, financing, execution systems, and risk controls. Those are scarce resources. This is one reason historical profit opportunities can persist longer than an idealized efficient-market story would suggest.

Third, many apparent dislocations are compensation for hidden risks rather than free money. Gatev, Goetzmann, and Rouwenhorst found that pairs portfolios were largely uncorrelated with the S&P 500, but they still had meaningful exposure to other factors such as size, value-growth spreads, and bond or yield-curve conditions. That is a useful reminder: neutral to the market is not neutral to everything.

There is also a subtler point. The strategy may harvest liquidity provision and behavioral overreaction rather than correcting pure pricing errors. If some investors demand immediacy and push prices away from a local equilibrium, stat arb traders who fade that move can earn a premium for taking the other side. But that premium is payment for bearing inventory, funding, and crash risk. Calling it “arbitrage” can obscure that economic reality.

How do execution costs and market impact shape stat‑arb profitability?

| Cost type | Measure | Mitigation | Horizon |
|---|---|---|---|
| Spread & fees | Bid-ask plus commissions | Limit orders, netting | Very short |
| Market impact | Order-size square-root | Slicing, slow execution | Short–medium |
| Adverse selection | Trades vs informed flow | Volume-weighting signals | Intraday |
| Latency & slippage | Fill timing delay | Smart routing, colocate | High-frequency |

A spread that mean-reverts by 30 basis points is useless if it costs 40 basis points to capture. This is why execution is central, not peripheral, to statistical arbitrage.

The empirical evidence makes the point sharply. In the pairs-trading study, waiting one day to trade reduced returns substantially, implying that bid-ask bounce and transaction costs explained a meaningful part of the raw backtest profit. The authors estimate that this drop corresponds to a conservative lower-bound transaction cost of about 83 basis points per pair per roundtrip, or an effective spread around 42 basis points. Even if the exact number is sample-specific, the lesson is general: short-horizon edges are often of the same order of magnitude as trading frictions.

Execution has a second layer beyond spreads and commissions: market impact. If your own orders move prices against you, expected profit shrinks further. The optimal-execution literature frames this as a tradeoff between trading slowly, which reduces impact, and trading quickly, which reduces exposure to adverse price moves while you are still filling. Almgren and Chriss formalize this as minimizing a combination of volatility risk and transaction costs from temporary and permanent market impact. That framework is highly relevant to stat arb because mean-reversion signals often decay quickly, so waiting can destroy alpha even while it lowers impact.
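
In stylized form, that tradeoff is a mean-variance problem over the execution schedule. The symbols are notation chosen for illustration: $x$ is the trading trajectory, $C(x)$ is the cost of executing along it relative to the arrival price, and $\lambda \ge 0$ is a risk-aversion parameter.

```latex
\min_{x} \; \mathbb{E}\bigl[C(x)\bigr] + \lambda \, \mathrm{Var}\bigl[C(x)\bigr]
```

With $\lambda = 0$ only expected impact cost matters, which favors slow, even trading. As $\lambda$ grows, the schedule front-loads to cut exposure to price moves during the fill. A fast-decaying stat-arb signal acts like an additional reason to trade sooner.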

The square-root impact evidence from bitcoin trading is also conceptually useful here. Donier and Bonart find that market impact scales roughly with the square root of order size across a wide range, and that this pattern appears even in a market with relatively weak statistical-arbitrage and market-making activity. The practical takeaway is not that equity stat arb and bitcoin are the same. It is that impact is a deep microstructure phenomenon, not something you can assume away just because your model found a signal.
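
A rough sketch of what a square-root law implies for sizing is given below. The functional form, impact proportional to daily volatility times the square root of participation, is a common stylization. The prefactor `y`, the volume, and the volatility figures are hypothetical assumptions rather than estimates from the study.

```python
import math


def sqrt_impact_bps(order_size, daily_volume, daily_vol_bps, y=1.0):
    """Stylized square-root impact: y * sigma * sqrt(order_size / daily_volume)."""
    return y * daily_vol_bps * math.sqrt(order_size / daily_volume)


# Hypothetical stock: 1,000,000 shares traded per day, 200 bps daily volatility.
small = sqrt_impact_bps(10_000, 1_000_000, 200.0)   # order is 1% of daily volume
large = sqrt_impact_bps(40_000, 1_000_000, 200.0)   # order is 4% of daily volume

impact_ratio = large / small                             # price impact per share
cost_ratio = (large * 40_000) / (small * 10_000)         # impact x shares traded
print(small, large, impact_ratio, cost_ratio)
```

Quadrupling the order roughly doubles the price impact per share, so the total impact cost rises by about a factor of eight. Impact is concave in size, but the total cost of trading still grows faster than the position does.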

This is why serious stat-arb implementation often includes execution-aware sizing. A signal that looks attractive in isolation may be too small once turnover, borrow cost, and impact are included. In live trading, the strategy is not “find deviations.” It is “find deviations large enough to survive the act of trading them.”
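
A minimal cost filter makes the point concrete. The split of costs into spread and fees, impact, and borrow is an illustrative assumption, as are the function names and numbers. The first case reuses the 30-versus-40 basis point example from earlier in this section.

```python
def net_edge_bps(gross_reversion_bps, spread_and_fees_bps, impact_bps, borrow_bps=0.0):
    """Expected reversion minus the round-trip cost of capturing it, in basis points."""
    return gross_reversion_bps - (spread_and_fees_bps + impact_bps + borrow_bps)


def survives_trading(gross_reversion_bps, spread_and_fees_bps, impact_bps,
                     borrow_bps=0.0, min_net_bps=0.0):
    """True only if the edge is still above `min_net_bps` after all costs."""
    net = net_edge_bps(gross_reversion_bps, spread_and_fees_bps, impact_bps, borrow_bps)
    return net > min_net_bps


# A 30 bp expected reversion against 40 bp of total cost (25 + 12 + 3).
weak = net_edge_bps(30.0, spread_and_fees_bps=25.0, impact_bps=12.0, borrow_bps=3.0)
# The same cost stack against a larger 70 bp dislocation.
strong = net_edge_bps(70.0, spread_and_fees_bps=25.0, impact_bps=12.0, borrow_bps=3.0)
print(weak, strong)
```

Raising the entry threshold is one way to move from the first case to the second: fewer trades, but each one starts with a dislocation large enough to pay for its own execution.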

How do data quality and backtesting discipline prevent false discoveries in stat‑arb?

Statistical arbitrage is unusually exposed to false discovery because it searches for weak, noisy regularities in high-dimensional data. If you test enough spreads, factor definitions, thresholds, holding periods, and universe filters, some will look excellent by chance alone.
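
The scale of the problem is easy to quantify under a simplifying assumption. If each candidate is tested independently at significance level `alpha` and none has any real edge, the chance that at least one looks significant is `1 - (1 - alpha) ** n`. Real candidate spreads are correlated, so treat this as a rough guide rather than an exact figure.

```python
def prob_any_false_positive(n_tests, alpha=0.05):
    """Chance that at least one of n independent null tests passes at level alpha."""
    return 1.0 - (1.0 - alpha) ** n_tests


for n in (1, 10, 100, 1000):
    print(n, round(prob_any_false_positive(n), 4))
```

With a hundred candidate spreads and a 5% threshold, finding at least one "significant" pair is close to certain even when no pair has any edge at all.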

This is the part many smart readers underestimate. The strategy can fail even if the code is correct and the math is sophisticated, simply because the chosen relationship was an artifact of the sample. That is why robust validation matters as much as signal design. Walk-forward testing, strict separation of formation and trading periods, and skeptical treatment of parameter tuning are not optional hygiene. They are part of the economic test of whether the edge is real.
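
One way to enforce that separation is to generate the windows mechanically, so that no trading day can ever appear in the data used to calibrate it. The helper name and the window lengths (252 trading days of formation, 126 of trading) are illustrative assumptions.

```python
def walk_forward_windows(n_obs, formation, trading):
    """Yield (formation_range, trading_range) index pairs with no overlap inside a pair."""
    start = 0
    while start + formation + trading <= n_obs:
        form = range(start, start + formation)
        trade = range(start + formation, start + formation + trading)
        yield form, trade
        start += trading  # roll forward by one trading window


# Three years of daily data, twelve-month formation, six-month trading.
windows = list(walk_forward_windows(n_obs=756, formation=252, trading=126))
for form, trade in windows:
    print(f"fit on [{form.start}, {form.stop})  trade on [{trade.start}, {trade.stop})")
```

Every parameter used in a trading window must be estimated only from its matching formation range. Any tuning that peeks across that boundary turns the out-of-sample test back into an in-sample fit.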

Gatev, Goetzmann, and Rouwenhorst explicitly discuss data-snooping risk in studying a well-known practitioner strategy. More generally, the model-selection problem is severe in quant research. Procedures like the Model Confidence Set were developed precisely because picking a single “best” model from many candidates can overstate confidence. The point is not that one must use a specific econometric device in every stat-arb workflow. The deeper point is that model uncertainty is real and should be treated as part of the object being estimated, not swept aside after optimization.

Data quality matters just as much as statistical testing. Survivorship bias is a classic example. Brown, Goetzmann, Ibbotson, and Ross show that truncating a sample by excluding failed funds can create the appearance of persistence even when no true persistence exists. In stat arb, the analogous danger appears whenever the universe excludes delisted names, unavailable borrow situations, stale quotes, or dead strategies. A backtest on surviving, liquid, easy-to-short names can silently import an unrealistic world.

Machine learning raises both the ceiling and the risk. Better feature engineering and more flexible models may detect subtler relative-value patterns, but they also make it easier to fit noise. Practitioner-oriented ML work in finance therefore emphasizes robust backtesting and avoiding false positives. That is exactly the right emphasis for stat arb, where small errors in estimated edge are magnified by leverage and turnover.

What risks remain if a mean‑reversion model is correct (crowding, funding, path risk)?

The most important failure mode is not that mean reversion disappears forever. It is that the path to convergence becomes unbearable before convergence arrives.

Because stat-arb portfolios are often leveraged and diversified across many small positions, they can look low-volatility in ordinary times. That apparent stability invites larger sizing. But when correlations rise, liquidity vanishes, or funding tightens, many spreads can widen together. Losses then arrive not as a single thesis failure but as a portfolio-wide break from historical behavior.
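
A standard formula shows how quickly diversification erodes when spreads start moving together. The sketch assumes equal weights, identical volatility for every position, and a single common pairwise correlation. All three are simplifications, and the numbers are hypothetical.

```python
import math


def equal_weight_portfolio_vol(n_positions, position_vol, pairwise_corr):
    """Volatility of an equal-weighted book: sigma * sqrt((1 + (n - 1) * rho) / n)."""
    return position_vol * math.sqrt(
        (1.0 + (n_positions - 1) * pairwise_corr) / n_positions
    )


# 100 spread positions, each with 2% volatility (hypothetical).
calm = equal_weight_portfolio_vol(100, 2.0, pairwise_corr=0.0)
stressed = equal_weight_portfolio_vol(100, 2.0, pairwise_corr=0.5)
print(calm, stressed, stressed / calm)
```

A book sized and leveraged for the calm number is carrying several times the intended risk once correlations rise, before any individual spread has done anything unusual.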

The August 2007 quant meltdown is the canonical case. Khandani and Lo argue that the episode reflected a combination of deleveraging in factor-based long/short equity portfolios and a temporary withdrawal of market-making risk capital. That mechanism matters. The losses did not require every model to become nonsense overnight. It was enough that many funds held similar positions and needed liquidity at the same time. In that environment, forced unwinds can push prices farther away from equilibrium, harming exactly the traders who expected reversion.

This reveals the deepest risk in statistical arbitrage: crowding. A relationship can be statistically valid in isolation and still become dangerous when too many balance sheets rely on it. The spread then stops being just an economic relationship between two assets. It becomes a map of who else is trapped in the same trade.

Shorting introduces additional fragility. Borrow can become expensive or unavailable. A short leg can gap violently on corporate news. A spread can also “mean-revert” for the wrong reason if the long leg collapses less than the short leg, creating profits that look clean in summary statistics but are much messier in path and financing terms.

Beyond pairs trading: how factor residuals, PCA, and portfolio stat‑arb differ in capacity and risk

| Strategy | Visibility | Diversification | Capacity | Main risk |
|---|---|---|---|---|
| Pairs | High visibility | Low diversification | Low–medium capacity | Idiosyncratic break risk |
| ETF residuals | Medium visibility | Medium diversification | Medium capacity | Proxy mismatch risk |
| PCA / factor residuals | Low visibility | High diversification | Higher capacity | Model / regime risk |
| Portfolio residuals | Low visibility | High diversification | Highest capacity | Interpretation ambiguity |

Pairs trading is the easiest entry point, but the underlying idea extends to a family of relative-value strategies. Some models trade stock residuals against sector ETFs. Others estimate latent factors using principal component analysis and trade deviations from those factors. Avellaneda and Lee show that PCA-derived factors can often be interpreted economically as long/short industry portfolios, which makes them useful for constructing residual mean-reversion signals.
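
To give a feel for the PCA step, the sketch below extracts the first principal component of a tiny, hypothetical correlation matrix and strips it out of one day's returns. It uses plain power iteration to stay dependency-free, where a production system would use a library eigen-solver. Projecting returns onto the unit-norm eigenvector is a simplification of how factor residuals are built in practice.

```python
import math


def first_principal_component(matrix, iterations=200):
    """Largest eigenvalue and unit eigenvector of a symmetric matrix via power iteration."""
    n = len(matrix)
    v = [1.0 / math.sqrt(n)] * n
    for _ in range(iterations):
        w = [sum(matrix[i][j] * v[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    eigenvalue = sum(
        v[i] * sum(matrix[i][j] * v[j] for j in range(n)) for i in range(n)
    )
    return eigenvalue, v


def residual_returns(returns, component):
    """Remove the part of `returns` explained by one unit-norm component."""
    factor_return = sum(c * r for c, r in zip(component, returns))
    return [r - c * factor_return for c, r in zip(component, returns)]


# Hypothetical correlations: stocks 0 and 1 share a sector, stock 2 does not.
corr = [[1.0, 0.8, 0.1],
        [0.8, 1.0, 0.1],
        [0.1, 0.1, 1.0]]
eigenvalue, v = first_principal_component(corr)
resid = residual_returns([0.02, 0.01, -0.005], v)
print(round(eigenvalue, 4), [round(x, 4) for x in v], [round(x, 4) for x in resid])
```

The leading component loads equally on the two same-sector stocks and only lightly on the third, which echoes the observation that such factors often resemble industry portfolios. The residuals are what a mean-reversion signal would then be built on.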

That shift from pairs to portfolios changes the problem in an important way. With a pair, the relation is visible. With factor residuals, the relation is inferred. That can improve diversification and capacity because the strategy is no longer limited to obvious one-to-one matches. But it also increases model risk. The number of factors matters, the interpretation of residuals matters, and a model that fit one regime may degrade in another.

Their evidence also shows performance varying over time. PCA-based strategies performed better before 2003 than afterward, and all tested mean-reversion strategies suffered a sharp drawdown in August 2007. This is a useful antidote to the idea that statistical arbitrage is a timeless formula. It is better understood as a moving competition over who can estimate transient relative-value structure more accurately, more cheaply, and with more robust execution.

How to judge whether a statistical‑arbitrage edge is tradable (signal, costs, sizing, crowding)

| Condition | Test | Practical threshold | If failing |
|---|---|---|---|
| Durable relationship | Cointegration / out-of-sample test | Statistically significant OOS | Re-estimate or drop |
| Signal > costs | Net P&L after costs | Positive net per trade | Increase threshold |
| Size survivability | Stress path simulations | Loss < survival capital | Reduce size / leverage |
| Crowding | Peer position overlap / flows | Low peer exposure | Avoid crowded trades |

A statistical-arbitrage edge exists only if four things are simultaneously true. The relationship must be real enough to reappear out of sample. The signal must be large enough to dominate costs. The position must be sized small enough that you can survive adverse path dependence. And the trade must not be so crowded that your expected convergence is overwhelmed by other people’s liquidation needs.

If any one of these fails, the strategy can look elegant and still lose money. A beautiful model with weak execution fails. A strong signal with excessive leverage fails. A profitable backtest built on unstable relationships fails. This is why experienced practitioners often sound less excited about prediction and more focused on portfolio construction, execution, and risk plumbing. The mathematics finds candidates; the business of trading decides whether they are tradable.

Conclusion

Statistical arbitrage is best understood as systematic relative-value trading under uncertainty. It seeks temporary deviations from a modeled equilibrium, hedges broad market direction, and profits if prices revert before costs, impact, and funding pressure erase the edge.

Its promise comes from structure in relative prices. Its danger comes from the fact that this structure is only probabilistic, never guaranteed. The idea to remember tomorrow is simple: stat arb does not buy cheap things and wait; it buys temporarily cheap things against temporarily rich things, and everything depends on whether that “temporary” really is temporary on the timescale your capital can survive.

Frequently Asked Questions

Stat arb is not risk-free. It is a disciplined, market‑neutral bet that relative prices will mean-revert faster than trading costs, funding pressures, and path-dependent losses can destroy the edge, so sharp losses can occur, especially under crowding or liquidity stress.

You need an economically plausible common driver or a stationary residual (cointegration or stable factor residuals), because simple correlation over a sample does not guarantee a bounded spread or that the relationship will survive out of sample.

Execution costs matter materially: Gatev et al. report that a one-day trading delay cut reported excess returns sharply and estimate a conservative lower‑bound roundtrip cost of about 83 basis points per pair (≈42 bps effective one‑way spread); small raw mean-reversion edges can be fully wiped out by such frictions.

Crowding means many funds hold similar relative‑value positions so that when liquidity tightens or deleveraging occurs, simultaneous exits push spreads farther from equilibrium and magnify losses - the August 2007 quant drawdown is a canonical example of this mechanism.

Practitioners guard against false discoveries with robust validation: strict formation/trading period separation, walk‑forward tests, limited parameter tuning, data‑quality checks (no survivorship or stale‑quote bias), and model‑selection procedures such as the Model Confidence Set to avoid overfitting.

Even a correct mean‑reversion model can lose money, because spreads can widen further or for longer than your funding, margin limits, or stop rules allow; path dependence, leverage, and liquidity risk can turn expected gains into realized losses.

Pairs trading is the simplest stat‑arb form but not the only one: strategies also trade residuals from factor models, PCA‑derived latent factors, sector ETFs, or long/short portfolios that generalize the pair concept and change capacity and model‑risk characteristics.

Empirical microstructure work finds market impact roughly follows a square‑root law (impact ∝ sqrt(order size)) across many settings. Price impact therefore grows with order size, though less than proportionally, while the total impact cost of an order grows faster than its size, so impact must be accounted for when sizing stat‑arb trades.

Implementing stat arb at scale typically requires reliable borrow, financing and margin capacity, low‑latency execution and algorithmic execution tools, robust risk controls, and substantial data/computational resources to validate signals and avoid false positives.
