What Are Mean Reversion Trading Strategies?

Learn what mean reversion trading strategies are, how pairs and residual trades work, why stationarity matters, and where the approach breaks down.

Introduction

Mean reversion trading strategies are trading methods built on a specific claim: some price moves are temporary, so unusually stretched prices, spreads, or residuals tend to move back toward a more normal level. That idea is attractive because markets often overshoot in the short run, especially when liquidity is thin, order flow is one-sided, or related assets momentarily get out of line. But the same idea is also dangerous, because many prices that look “too far” are not mispriced at all; they are reacting to new information, shifting into a new regime, or simply trending longer than the trader can stay solvent.

That tension is the heart of mean reversion trading. The strategy is not really about predicting the future in a broad sense. It is about identifying a variable that should fluctuate around an equilibrium, measuring how far it has wandered, and betting on the path back. If that equilibrium is real, stable, and economically meaningful, the trade can make sense. If it is imagined, unstable, or overwhelmed by costs and crowding, the same trade can fail quickly.

The central question, then, is not “do prices revert?” in the abstract. It is more precise: which quantity is expected to revert, by what mechanism, on what timescale, and under what market frictions? Once that question is asked clearly, mean reversion becomes much less mystical and much more mechanical.

Which price deviations are suitable for mean-reversion trades?

A common first misunderstanding is to think mean reversion means “every price eventually comes back.” That is not the useful version. Many asset prices are better described as drifting, trending, or regime-shifting over long horizons. A stock can double and never revisit its old level because the business changed. A bond yield can sit in a new range for years. A commodity can reprice because supply truly changed.

What mean-reversion traders usually target is not the raw price level itself, but a deviation from a reference level that has a reason to be stable. That reference might be a stock’s move relative to its sector, one company relative to a close peer, a spread between two cointegrated assets, or an intraday move that overshot because impatient traders demanded immediacy. In each case, the trade is a wager that the deviation is larger than fundamentals justify and that the temporary imbalance or microstructure effect that caused it will unwind.

This is why so much mean reversion trading is naturally relative-value trading. Instead of saying “stock A is cheap,” the trader says “stock A is cheap relative to stock B,” or “this stock moved too far relative to the factors that usually explain it.” That framing matters because it removes some broad market noise. A dollar-neutral or beta-neutral portfolio can be wrong about the overall market and still profit if the relative mispricing closes.

In the statistical-arbitrage literature, this idea appears very explicitly. One influential formulation defines the common features of the strategy class as rules-based signals, a market-neutral book, and a statistical mechanism for excess returns. In practice, the signal often comes from the part of returns left over after removing common factors. If those residuals are mean-reverting, contrarian trades follow naturally.

What economic mechanisms create mean-reverting relationships?

For mean reversion to be more than a slogan, the “mean” must come from somewhere. There are a few broad mechanisms, and the important point is not the labels but the structure they share: each creates a force that makes large deviations less likely to persist than small ones.

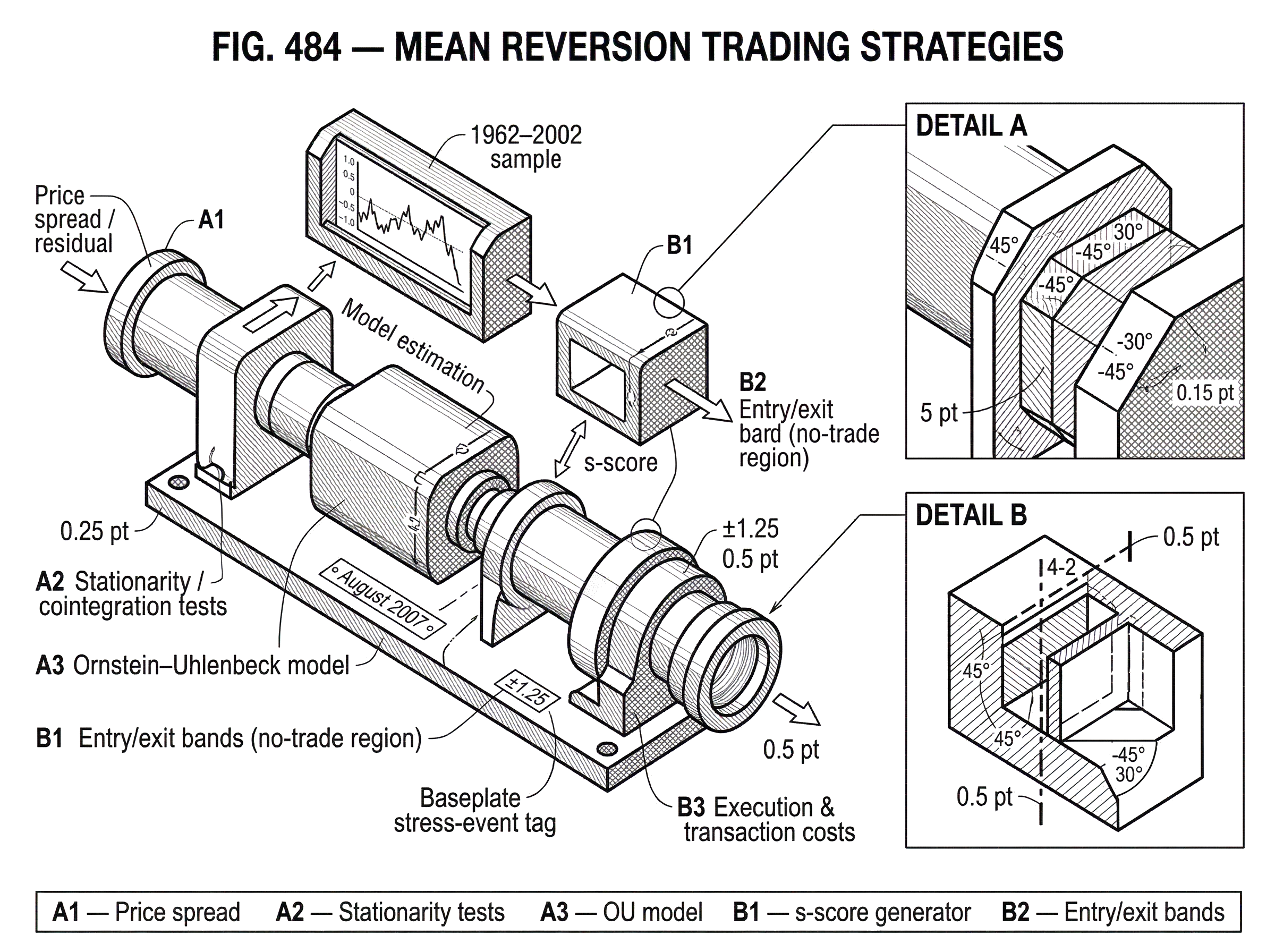

One mechanism is shared fundamentals. Two regional banks, two oil majors, or a stock and a sector ETF often respond to similar drivers. If one moves sharply without the other, a trader may interpret the gap as temporary rather than fundamental. This is the economic intuition behind classic pairs trading. Gatev, Goetzmann, and Rouwenhorst studied a canonical version in which stocks were matched into pairs by the closeness of their historical normalized price paths. When the pair spread widened beyond a threshold, the strategy bought the underperformer and shorted the outperformer, then closed the trade on convergence. Historically, over their long 1962–2002 sample, that simple rule produced economically large excess returns before frictions and remained meaningful under conservative cost adjustments.
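The distance-based pairing idea can be sketched in a few lines. This is a minimal illustration, not the study's exact procedure. It assumes plain prices rescaled to start at 1.0 (dividends are ignored here). It ranks pairs by the sum of squared differences (SSD) between normalized paths. It also uses a hypothetical threshold multiplier `k` on the formation-period standard deviation of the gap. The function names are illustrative.

```python
import itertools

import numpy as np


def normalize_prices(prices):
    """Rescale each column (one asset per column) so it starts at 1.0."""
    prices = np.asarray(prices, dtype=float)
    return prices / prices[0]


def rank_pairs_by_distance(prices, tickers):
    """Rank all pairs by the sum of squared differences (SSD) of normalized prices.

    Smaller SSD means the two normalized price paths tracked each other more
    closely over the formation window.
    """
    norm = normalize_prices(prices)
    scores = []
    for i, j in itertools.combinations(range(norm.shape[1]), 2):
        ssd = float(np.sum((norm[:, i] - norm[:, j]) ** 2))
        scores.append((ssd, tickers[i], tickers[j]))
    return sorted(scores)  # closest pair first


def divergence_signal(norm_a, norm_b, formation_std, k=2.0):
    """Return +1 (long a, short b), -1 (short a, long b) or 0 (no trade).

    formation_std is the standard deviation of (norm_a - norm_b) over the
    formation window; k is an illustrative threshold multiplier.
    """
    gap = norm_a - norm_b
    if gap < -k * formation_std:
        return 1   # a underperformed b: buy a, short b
    if gap > k * formation_std:
        return -1  # a outperformed b: short a, buy b
    return 0
```

In use, pairs are formed on one window and traded on the next. The SSD ranking decides which pairs to watch. `divergence_signal` decides when a watched pair has drifted far enough apart to open a trade.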

A second mechanism is inventory and liquidity pressure. Short-horizon contrarian strategies often make money not because fundamentals changed, but because someone else urgently needed to trade. If a flow-driven sell order pushes a stock down too far today, liquidity providers who buy it are betting that tomorrow, when the urgency fades, price impact partially reverses. This is why a simple contrarian strategy (buy recent losers and sell recent winners) can be understood as a form of market-making. It provides immediacy now and hopes to earn that liquidity premium later.
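One common textbook way to write "buy recent losers, sell recent winners" is sketched below. It is in the spirit of the Lo and MacKinlay contrarian portfolio, and that choice of formulation is an assumption here. Each stock is weighted by minus its last-period return in excess of the cross-sectional average. The function names are hypothetical.

```python
import numpy as np


def contrarian_weights(prev_returns):
    """Weight each stock by minus its return in excess of the cross-sectional mean.

    Losers get positive (long) weights and winners negative (short) weights.
    The weights sum to zero, so the book is dollar-neutral by construction.
    """
    r = np.asarray(prev_returns, dtype=float)
    return -(r - r.mean()) / r.size


def contrarian_pnl(prev_returns, next_returns):
    """Next-period P&L of the contrarian book (per unit of weight scale)."""
    w = contrarian_weights(prev_returns)
    return float(np.dot(w, np.asarray(next_returns, dtype=float)))
```

The book earns money when yesterday's relative moves partially reverse. If the same relative moves continue, it loses.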

A third mechanism is factor misalignment. A stock’s return is partly explained by the market, sector, style factors, and other common components. If it moves much more than those factors imply, the unexplained component may revert. Avellaneda and Lee built statistical-arbitrage strategies on exactly this logic, generating signals either from principal component analysis (PCA) or from regressions of stock returns on sector ETFs. After removing factor effects, they modeled the idiosyncratic residual as a mean-reverting process and traded against large deviations.
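A minimal sketch of the ETF-regression step, assuming daily returns and ordinary least squares on a full window. A live system would re-estimate on rolling windows. The function regresses a stock's returns on one or more ETF return series with an intercept and returns the residuals. Their running sum is a level series that one could then test for mean reversion. Names are illustrative.

```python
import numpy as np


def etf_residuals(stock_returns, etf_returns):
    """Regress stock returns on ETF returns (with intercept).

    Returns (alpha, betas, residuals). etf_returns may be 1-D (one ETF)
    or T x K (several ETFs, e.g. market and sector).
    """
    y = np.asarray(stock_returns, dtype=float)
    X = np.asarray(etf_returns, dtype=float)
    if X.ndim == 1:
        X = X[:, None]  # single ETF -> column vector
    design = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    residuals = y - design @ coef
    return float(coef[0]), coef[1:], residuals


def cumulative_residual(residuals):
    """Running sum of residuals: a level series a mean-reversion model can be fitted to."""
    return np.cumsum(np.asarray(residuals, dtype=float))
```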

These mechanisms are similar in one crucial respect: the strategy needs some anchor that does not move as fast as the observed price. Without that anchor, “reversion” has no content. There is only price motion.

How should I think about mean reversion? A practical mental model and its limits

A useful analogy is a stretched rubber band. If two related assets usually move together, and one suddenly leaps away, the spread between them looks like tension in the band. Mean-reversion trading bets that the tension will pull the spread back inward.

This analogy explains why the trade is often strongest when the relationship is tight and the deviation is unusual. It also explains why traders care about the speed of reversion: a weak rubber band may pull back eventually, but too slowly to overcome financing costs, slippage, or risk.

But the analogy fails in an important way. Real markets do not have literal elastic bands enforcing fair value. Relationships can weaken, break, or invert. The “band” may have been inferred from history rather than guaranteed by structure. That is why good mean-reversion research spends so much effort asking whether the spread is actually stationary, whether the residual process is stable, and whether the historical link survives regime change.

How do stationarity and cointegration justify mean-reversion trades?

| Concept | Tests used | Practical meaning | Key caveat |
|---|---|---|---|
| Stationarity | ADF / PP on spread | Spread has fixed mean and variance | Highly persistent series can mislead |
| Cointegration | Engle-Granger / Johansen | Linear combination of prices is stationary | Breaks after structural regime change |
| Unit-root tests | ADF, Phillips-Perron | Null: series is non-stationary | Different tests can disagree in finite samples |

When traders say a spread “reverts,” they usually mean the spread behaves like a stationary series: it fluctuates around a relatively stable mean and variance rather than wandering indefinitely. This is the statistical version of saying there is an equilibrium level.

That matters because many raw prices are not stationary. A stock price can behave more like a random walk with drift than like a spring around a fixed center. But a combination of two non-stationary prices can still be stationary. This is the idea of cointegration. Suppose there exist constants a and b, not both zero, such that ax_t + by_t is stationary even though x_t and y_t individually are not. Then the pair has a stable long-run relationship. That stationary combination is the object a mean-reversion trader wants.
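In practice a and b are unknown and must be estimated. A minimal sketch of the usual first step (the Engle-Granger approach): regress one price on the other with an intercept, then treat the residual as the candidate spread. The function name is hypothetical. The second step, a unit-root test on that spread, is not shown. Because the spread is itself estimated, it uses different critical values from a plain ADF test. `statsmodels.tsa.stattools.coint` packages both steps if statsmodels is available.

```python
import numpy as np


def hedge_ratio_and_spread(y, x):
    """First Engle-Granger step: OLS of y on a constant and x.

    Returns (beta, spread) with spread = y - alpha - beta * x. In the text's
    notation this corresponds to a = 1 and b = -beta (up to the constant).
    """
    y = np.asarray(y, dtype=float)
    x = np.asarray(x, dtype=float)
    design = np.column_stack([np.ones_like(x), x])
    (alpha, beta), *_ = np.linalg.lstsq(design, y, rcond=None)
    spread = y - alpha - beta * x
    return float(beta), spread
```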

The logic is simple. If the spread itself has a fixed center and finite variability, then an unusually wide spread is, at least statistically, an unusual event. Betting on contraction is no longer a vague intuition; it is a wager on a variable with a tendency to return toward equilibrium.

This is where unit-root testing enters. The Augmented Dickey-Fuller, or ADF, test is widely used to test whether a univariate series has a unit root. Its null hypothesis is that there is a unit root, meaning the series is non-stationary; the alternative is that there is no unit root. In practice, traders often apply such tests not to raw prices but to candidate spreads or residuals. If the spread fails stationarity tests, the statistical basis for a mean-reversion trade weakens sharply.
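To show what the ADF test actually computes, here is a bare-bones NumPy version. It is a sketch for intuition, assuming a fixed lag count and a constant but no trend. It regresses the first difference on the lagged level and lagged differences, then reports the t-statistic on the lagged level. The -2.86 cutoff is the approximate asymptotic 5% critical value for that specification. For real work, `statsmodels.tsa.stattools.adfuller` adds proper p-values and automatic lag selection.

```python
import numpy as np

# Approximate asymptotic 5% critical value (constant, no trend).
ADF_CRIT_5PCT = -2.86


def adf_stat(series, lags=1):
    """t-statistic on gamma in the regression

        dy_t = c + gamma * y_{t-1} + sum_{i=1..lags} phi_i * dy_{t-i} + e_t

    A strongly negative value is evidence against a unit root.
    """
    y = np.asarray(series, dtype=float)
    dy = np.diff(y)
    target = dy[lags:]                               # dy_t
    columns = [np.ones_like(target), y[lags:-1]]     # constant, y_{t-1}
    for i in range(1, lags + 1):
        columns.append(dy[lags - i:len(dy) - i])     # dy_{t-i}
    X = np.column_stack(columns)
    coef, *_ = np.linalg.lstsq(X, target, rcond=None)
    resid = target - X @ coef
    sigma2 = resid @ resid / (len(target) - X.shape[1])
    cov = sigma2 * np.linalg.inv(X.T @ X)
    return float(coef[1] / np.sqrt(cov[1, 1]))


def rejects_unit_root(series, lags=1, crit=ADF_CRIT_5PCT):
    """True if the statistic is below the critical value (stationarity is plausible)."""
    return adf_stat(series, lags) < crit
```

Applied to a candidate spread rather than to raw prices, this serves as the filter the text describes: a rejection supports, but does not prove, the mean-reversion premise.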

There are limits here. Finite samples can mislead, different tests can disagree, and a highly persistent stationary series can look a lot like a non-stationary one. Structural breaks are especially troublesome. A spread may look stationary in the backtest and stop behaving that way in live trading because the underlying relationship changed. So stationarity tests are helpful filters, not guarantees.

How do traders model mean reversion with the Ornstein–Uhlenbeck process?

Once a trader believes a spread or residual is mean-reverting, the next question is how fast and how noisily it reverts. A standard continuous-time model is the Ornstein–Uhlenbeck, or OU, process. The attraction of the OU model is not that markets literally follow its equations. It is that it captures the key mechanism in the simplest possible form: the farther the process is from equilibrium, the stronger the pull back.

In words, the OU model says the change in the spread has two parts. One part is deterministic and points toward the long-run mean. The other is random noise. If the spread is above equilibrium, the deterministic part nudges it downward. If the spread is below equilibrium, it nudges it upward. The parameter usually called kappa measures the speed of mean reversion. Larger kappa means faster pullback. The long-run level, often written mu, is the equilibrium mean. The volatility parameter controls how much random motion shakes the spread around that mean.
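In symbols, one standard way to write the model just described is the following. The notation is assumed here: X_t is the spread, W_t a standard Brownian motion, and σ the volatility parameter.

$$
dX_t = \kappa\,(\mu - X_t)\,dt + \sigma\,dW_t, \qquad \kappa > 0
$$

Two consequences make κ and σ concrete. First, the expected gap to equilibrium decays exponentially, which defines a half-life. Second, the process settles into a stationary distribution with a finite standard deviation:

$$
\mathbb{E}[X_{t+\tau} \mid X_t] = \mu + (X_t - \mu)\,e^{-\kappa\tau}, \qquad
t_{1/2} = \frac{\ln 2}{\kappa}, \qquad
\sigma_{\mathrm{eq}} = \frac{\sigma}{\sqrt{2\kappa}}
$$

For example, with κ measured per year, κ = 12.6 gives a half-life of about 0.055 years, or roughly 14 trading days at 252 trading days per year.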

This model appears directly in practical statistical-arbitrage work. Avellaneda and Lee modeled idiosyncratic residuals as OU processes and estimated parameters on rolling windows. They then standardized the residual into an s-score, essentially a z-score measuring distance from equilibrium in units of equilibrium standard deviation. That transformation matters because a deviation of 2 cents means very different things for a calm spread and a volatile one. Standardizing turns “far” into a comparable quantity.
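A minimal sketch of how such parameters can be estimated from a discretely sampled residual series. It fits the AR(1) regression x[n+1] = a + b·x[n] + noise and maps the coefficients to OU parameters through the exact discretization b = exp(-κ·dt). It assumes daily data with dt = 1/252, so κ is annualized, and a single fitting window rather than a rolling one. Function names are hypothetical.

```python
import numpy as np


def fit_ou_ar1(x, dt=1.0 / 252):
    """Fit x[n+1] = a + b * x[n] + zeta by OLS and map it to OU parameters.

    Uses b = exp(-kappa * dt). Returns (kappa, mu, sigma_eq), with kappa in
    units of 1/year when dt is measured in years.
    """
    x = np.asarray(x, dtype=float)
    design = np.column_stack([np.ones(len(x) - 1), x[:-1]])
    coef, *_ = np.linalg.lstsq(design, x[1:], rcond=None)
    a, b = coef
    if not 0.0 < b < 1.0:
        raise ValueError("AR(1) slope outside (0, 1): no usable mean reversion")
    zeta = x[1:] - design @ coef
    kappa = -np.log(b) / dt
    mu = a / (1.0 - b)
    # Stationary std of the AR(1); equals sigma / sqrt(2 * kappa) for an OU process.
    sigma_eq = np.sqrt(np.var(zeta, ddof=2) / (1.0 - b ** 2))
    return float(kappa), float(mu), float(sigma_eq)


def s_score(x_now, mu, sigma_eq):
    """Distance from equilibrium in units of the equilibrium standard deviation."""
    return (x_now - mu) / sigma_eq
```

Standardizing by `sigma_eq` is what makes a score of, say, 2 mean the same thing for a calm residual and a volatile one.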

Once the signal is in z-score form, trading rules become straightforward. In their backtests, positions were typically opened when the residual moved far enough from equilibrium (such as around ±1.25 standard deviations) and closed when it moved back inside a tighter band. The exact numbers are a design choice, but the structure is general: enter when the deviation is large enough to justify costs and risk, exit when enough of the expected convergence has been realized.
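The enter-wide, exit-narrow structure amounts to a small state machine. The sketch below uses the ±1.25 entry level mentioned above. The 0.5 exit band is a hypothetical choice, not a figure taken from the study. Entry and exit are deliberately asymmetric, so the position does not flip on every small wobble around the threshold.

```python
def band_positions(s_scores, entry=1.25, exit_band=0.5):
    """Turn a sequence of s-scores into positions: +1 long spread, -1 short, 0 flat."""
    position = 0
    positions = []
    for s in s_scores:
        if position == 0:
            if s <= -entry:
                position = 1          # spread unusually cheap: buy it
            elif s >= entry:
                position = -1         # spread unusually rich: short it
        elif position == 1 and s >= -exit_band:
            position = 0              # enough convergence realized: close long
        elif position == -1 and s <= exit_band:
            position = 0              # enough convergence realized: close short
        positions.append(position)
    return positions
```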

How does a pairs trade work in practice?

Imagine two large utility stocks that have historically moved very closely together because they face similar interest-rate sensitivity, regulation, and demand conditions. Over a formation window, their normalized prices track each other tightly. Then one stock falls sharply after a temporary flow imbalance while the other barely moves.

A mean-reversion trader does not need to claim the fallen stock is “cheap” in an absolute sense. The narrower claim is that the difference between the two is unusually wide relative to its own history. So the trader buys the fallen stock and shorts the stronger one in matched size. This is the key mechanism: if the broad market drops tomorrow, both legs may fall, but the trade can still make money if the underperformer falls less or rebounds more than the outperformer.
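A quick arithmetic check of the "both legs fall, trade still wins" claim. The notional and returns below are hypothetical. With equal dollar amounts on each side, P&L depends only on the difference between the two legs' returns.

```python
def pair_pnl(notional, long_return, short_return):
    """P&L of a dollar-neutral pair: `notional` long one stock, `notional` short the other."""
    long_leg = notional * long_return
    short_leg = -notional * short_return  # a short position gains when its price falls
    return long_leg + short_leg


# Hypothetical day: the market sells off and both utilities fall, but the
# long (previously fallen) stock drops 1% while the short drops 3%.
pnl = pair_pnl(100_000, long_return=-0.01, short_return=-0.03)
print(pnl)  # a gain of about 2,000, i.e. 2% of the notional
```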

Now suppose over the next several days the panic selling fades. Perhaps no new information arrives, and perhaps other relative-value traders notice the dislocation and lean the other way. The spread narrows. As it moves back toward its historical relationship, the long leg outperforms the short leg, and the trade is closed.

Notice what made the trade sensible. It was not merely that one line on a chart looked low. The trade depended on a specific invariant: the relative relationship between two economically similar assets was assumed to be more stable than either raw price. That is the real object being traded.

How does factor-based mean reversion differ from pairs trading?

| Method | Factor extraction | Pros | Cons |
|---|---|---|---|
| PCA | Latent eigenvectors | Captures broad latent factors | Can overfit if too many factors |
| ETF regression | Traded sector and market ETFs | Interpretable; easy hedging | Misses hidden latent factors |
| Pairs trading | Two-asset cointegrated spread | Simple; low-dimension | Limited coverage; idiosyncratic risk |

Pairs trading is intuitive because there are only two assets. Factor-based mean reversion generalizes the same logic to a larger universe. Instead of asking whether stock A diverged from stock B, the strategy asks whether stock A diverged from the combination of broad forces that usually explains it.

One way to estimate those broad forces is with sector ETFs. A stock in a particular industry is regressed on market and sector returns, and the leftover residual becomes the candidate mean-reverting signal. Another way is with PCA, which extracts latent factors from the covariance or correlation structure of returns. The top eigenvectors define factor portfolios, and the residual after projecting returns onto those factors is interpreted as idiosyncratic.
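A minimal sketch of the PCA route, loosely following the eigenportfolio idea. The leading eigenvectors of the return correlation matrix are divided by each stock's volatility so they can be applied to raw returns. Each stock is then regressed on the resulting factor returns. A fixed `n_factors` and a single full-sample window are simplifying assumptions. The function name is hypothetical.

```python
import numpy as np


def pca_residuals(returns, n_factors):
    """Strip the top `n_factors` PCA factors from a T x N matrix of returns.

    Returns (factor_returns, residuals) with shapes (T, n_factors) and (T, N).
    """
    R = np.asarray(returns, dtype=float)
    vol = R.std(axis=0, ddof=1)
    corr = np.corrcoef(R, rowvar=False)      # N x N correlation matrix
    _, eigvecs = np.linalg.eigh(corr)        # eigenvalues in ascending order
    top = eigvecs[:, ::-1][:, :n_factors]    # keep the leading eigenvectors
    weights = top / vol[:, None]             # eigenportfolio weights on raw returns
    factor_returns = R @ weights             # T x n_factors
    design = np.column_stack([np.ones(len(R)), factor_returns])
    coef, *_ = np.linalg.lstsq(design, R, rcond=None)
    residuals = R - design @ coef            # idiosyncratic part
    return factor_returns, residuals
```

By construction the residuals are uncorrelated in-sample with the extracted factors. What is left is the candidate mean-reverting signal, provided the factor count is neither too small (systematic noise remains) nor too large (noise gets fitted away).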

Avellaneda and Lee compared both approaches in U.S. equities. Historically, PCA-based strategies achieved higher average Sharpe ratios over the full sample than ETF-based strategies, though both showed deterioration after 2002. That result is useful not because it proves PCA is always better, but because it highlights a deeper point: the quality of mean reversion depends on how well you separate common movement from idiosyncratic movement. If factor extraction is too crude, the “residual” still contains systematic noise. If it is too aggressive, the model may start fitting noise itself.

Their work also showed that the number of meaningful PCA factors varies through time. In stressed regimes, fewer factors may explain more of the market’s variance because assets move more together. In calmer or more differentiated regimes, more factors may matter. That is a reminder that the equilibrium being estimated is not static simply because the model looks formal.
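One way to let the factor count vary is to pick the smallest number of eigenvalues that together explain a target share of variance. The sketch assumes that rule and a hypothetical 50% target. It also uses equicorrelated matrices as stand-ins for a stressed regime (high common correlation) and a calm one (low correlation).

```python
import numpy as np


def factors_for_variance(corr, target=0.5):
    """Smallest k such that the top-k eigenvalues explain at least `target` of the variance."""
    eigvals = np.sort(np.linalg.eigvalsh(corr))[::-1]   # largest first
    explained = np.cumsum(eigvals) / eigvals.sum()
    k = int(np.searchsorted(explained, target) + 1)
    return min(k, len(eigvals))


def equicorrelation(n, rho):
    """Hypothetical n x n correlation matrix with every off-diagonal entry equal to rho."""
    c = np.full((n, n), rho)
    np.fill_diagonal(c, 1.0)
    return c


print(factors_for_variance(equicorrelation(10, 0.8)))  # stressed: one factor suffices
print(factors_for_variance(equicorrelation(10, 0.1)))  # calm: several factors needed
```

With ten assets, a common correlation of 0.8 gives a top eigenvalue of 8.2, which alone explains 82% of the variance. At 0.1 the top eigenvalue is only 1.9, so five eigenvalues are needed to pass 50%.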

How should volume and trading time affect mean-reversion signals?

A subtle but important insight from the same literature is that trading volume changes how much trust to place in a move. A price move on heavy volume may reflect stronger information or stronger conviction than the same move on thin trading. Conversely, some apparent dislocations are less meaningful if they occurred in sparse conditions.

Avellaneda and Lee proposed signals that account for trading volume by moving from clock-time returns to a kind of trading-time perspective. In their tests, incorporating volume materially improved the ETF-based mean-reversion strategy, especially in the 2003–2007 period. The intuition is straightforward: the strategy should react differently to a one-percent move that happened with broad participation than to a one-percent move produced by a few impatient trades.
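A minimal sketch of one volume adjustment in this trading-time spirit. Each return is scaled by the ratio of trailing average volume to the current period's volume, so heavy-volume moves are damped and thin-volume moves are amplified. The 10-period lookback and leaving the first return unadjusted are illustrative assumptions. This is not presented as the study's exact specification.

```python
import numpy as np


def volume_adjusted_returns(returns, volumes, lookback=10):
    """Scale each return by (trailing average volume) / (current volume)."""
    r = np.asarray(returns, dtype=float)
    v = np.asarray(volumes, dtype=float)
    adjusted = r.copy()                  # t = 0 has no history: left unadjusted
    for t in range(1, len(r)):
        trailing = v[max(0, t - lookback):t].mean()
        adjusted[t] = r[t] * trailing / v[t]
    return adjusted
```

Feeding these adjusted returns into the residual and s-score pipeline makes the signal react less to high-participation moves, which are more likely to carry information.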

This is a good example of the difference between a toy strategy and a robust one. In a toy strategy, every deviation beyond a threshold is treated alike. In a robust strategy, the trader asks what process generated the deviation. The more the signal reflects temporary imbalance rather than genuine repricing, the more plausible the mean-reversion bet becomes.

How should transaction costs shape entry and exit bands for mean reversion?

| Policy | Trading frequency | Cost impact | Best when |
|---|---|---|---|
| Continuous rebalancing | High frequency | Large round-trip erosion | Very fast, high-kappa reversion |
| Buffered (no-trade) region | Moderate | Cuts small round trips | Small costs; noisy signals |
| Infrequent trades | Low frequency | Low cumulative costs | Long horizon, large deviations |

Mean reversion strategies often target small, repeated dislocations. That immediately creates a problem: if the expected edge per trade is modest, costs can eat the entire strategy.

This is not incidental. It is structural. Trend-following can, at least in principle, catch a large move and hold it for a long time. Mean reversion often needs frequent rebalancing because the edge exists only when the deviation is fresh and the equilibrium still matters. The more eagerly the strategy trades every wobble, the more it pays in spreads, commissions, borrowing costs on shorts, market impact, and slippage.

The theory of optimal mean-reversion trading with costs therefore does not say “trade as soon as the process departs from the mean.” It says almost the opposite: introduce a no-trade region. Martin and Schöneborn show that with linear transaction costs, the optimal policy has a central no-trade zone, with trading only when the signal moves far enough away from the target. For small costs, the half-width of this buffer scales with the cube root of transaction cost. That result is technical, but the intuition is plain. If costs are nonzero, small corrections are not worth chasing. You wait for deviations large enough to pay for the round trip.
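The effect of a no-trade region on turnover can be seen in a short simulation. The sketch assumes a sequence of target positions is already given. It uses a hypothetical `scale` constant in the cube-root width rule, and it trades only to the nearest edge of the band rather than back to the center. It illustrates the buffering idea and does not reproduce the paper's optimal policy.

```python
import numpy as np


def buffer_half_width(cost, scale=1.0):
    """Illustrative no-trade half-width proportional to cost ** (1/3); `scale` is hypothetical."""
    return scale * cost ** (1.0 / 3.0)


def rebalance_path(targets, half_width=0.0):
    """Follow target positions with a no-trade band of +/- half_width.

    Inside the band nothing is traded; outside it, the position is moved only
    to the nearest edge of the band. Returns (positions, total turnover).
    """
    position = 0.0
    positions, turnover = [], 0.0
    for target in targets:
        lower, upper = target - half_width, target + half_width
        new = min(max(position, lower), upper)   # clip into the band
        turnover += abs(new - position)
        position = new
        positions.append(position)
    return np.array(positions), turnover
```

Under the cube-root rule, an eightfold increase in cost only doubles the band width. Even so, a wider band cuts turnover sharply on a noisy target series.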

This same logic appears in simpler rule-based systems through entry and exit bands. The bands are not arbitrary ornaments. They are friction-management devices.

How do I set exits and stop-losses for mean-reversion strategies?

A second common mistake is to think mean reversion is mostly about finding the right entry signal. In practice, the exit rule often determines whether a plausible edge survives.

If a trader exits too quickly, the strategy may harvest only noise and pay too many costs. If the trader exits too slowly, the strategy may let small, normal losses turn into large losses from regime breaks. This is why the literature includes work on optimal stop-loss and take-profit rules for finite-horizon mean-reverting strategies. Under an OU assumption, there are analytical ways to choose profit-taking and stop-loss thresholds that maximize a target such as the Sharpe ratio over a finite horizon.

The larger idea is more important than any single formula. A mean-reversion position is a wager that a deviation is temporary. If enough time passes without convergence, the market is telling you something: either the reversion is too slow to monetize, or the equilibrium has moved. A disciplined exit rule is therefore not just risk control layered on top of the strategy. It is part of the strategy’s definition of what counts as a valid trade.
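The analytical optimal-threshold solutions mentioned above are more involved. Instead, here is a simple rule-of-thumb sketch that combines the three exit types discussed: take-profit, stop-loss and a time stop. The time stop is measured in OU half-lives, ln 2 / κ. Every threshold is a hypothetical default, and κ is assumed to be annualized with 252 trading days per year.

```python
import math


def exit_reason(entry_s, current_s, days_held, kappa,
                take_profit=0.5, stop_loss=3.0, max_half_lives=2.0,
                periods_per_year=252):
    """Return 'take_profit', 'stop_loss', 'time_stop', or None (keep holding).

    entry_s / current_s are s-scores. A negative entry score means the spread
    was bought (cheap); a positive one means it was shorted (rich).
    """
    side = 1 if entry_s < 0 else -1          # +1 long spread, -1 short spread
    deviation = -side * current_s            # > 0 while still on the entry side
    if deviation <= take_profit:
        return "take_profit"                 # enough of the gap has closed
    if deviation >= stop_loss:
        return "stop_loss"                   # deviation kept widening
    half_life_days = math.log(2) / kappa * periods_per_year
    if days_held >= max_half_lives * half_life_days:
        return "time_stop"                   # too slow to monetize; premise in doubt
    return None
```

The time stop encodes the idea above: if convergence has not arrived after a few expected half-lives, the position is closed instead of being given unlimited patience.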

What are the main failure modes of mean-reversion strategies?

Mean reversion fails in more than one way, and the failure modes follow directly from the mechanism.

The first failure mode is false equilibrium. A relationship that looked stable was never truly stable, or it was stable only under one regime. This is the classic danger in pairs and cointegration work. Two stocks may have moved together because of an old business similarity that no longer exists. A spread may appear stationary in sample and break out of sample.

The second is slow reversion. Even if equilibrium is real, the pull back may be too weak. In the Avellaneda-Lee framework, residuals with too little estimated mean-reversion speed were rejected because they were not practically tradable. This is a useful discipline. A signal can be statistically stationary and still be economically useless if it takes too long to converge.
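A filter of this kind can be a single comparison. The sketch assumes the cutoff is expressed as "the characteristic reversion time 1/κ must be shorter than some fraction of the estimation window". The 60-day window and one-half fraction are illustrative assumptions, not a statement of the study's exact thresholds.

```python
def is_tradable(kappa, window_days=60, max_fraction=0.5, periods_per_year=252):
    """Keep a candidate only if its reversion time 1/kappa (in days) is short vs. the fit window.

    kappa is annualized; window_days and max_fraction are illustrative choices.
    """
    if kappa <= 0:
        return False                      # no estimated pull toward equilibrium
    reversion_days = periods_per_year / kappa
    return reversion_days < max_fraction * window_days
```

With these defaults, κ = 20 per year (about 12.6 days) passes, while κ = 5 (about 50 days) is rejected as too slow to monetize.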

The third is cost domination. If the expected profit per trade is close to the spread and slippage paid to enter and exit, the strategy may look strong gross and weak net. This is why historical claims about profitability always need to be read alongside assumptions about round-trip cost, borrowing availability, and implementation timing.

The fourth is crowding and liquidity shock. This is perhaps the most important real-world failure mode because it turns a strategy built to harvest liquidity premia into one that is crushed by disappearing liquidity.

What did the August 2007 quant unwind reveal about crowding risk in mean-reversion?

The August 2007 quant crisis is the clearest warning sign in the modern literature. During that period, many quantitative long-short equity funds experienced unusually large losses over a very short interval. Khandani and Lo argued that the pattern was consistent with an unwind hypothesis: forced liquidation of one or more large market-neutral portfolios moved prices, those price moves hurt similar portfolios, and the resulting losses triggered further deleveraging and more price impact.

This matters for mean-reversion strategies because they often hold similar books. If many funds are long recent losers and short recent winners, or long the same “cheap” residuals and short the same “rich” ones, then the strategy’s apparent diversification is less real than it looks. When one large player is forced to exit, the very spreads others expect to revert can gap wider first.

The evidence from that episode is revealing. Simulated mean-reversion and market-making strategies were sharply negative during the stress window but positive before and after, consistent with a temporary withdrawal of market-making capital. Measures of price impact such as Kyle’s lambda also spiked, indicating lower liquidity and greater price movement per unit of order flow. Avellaneda and Lee likewise found large drawdowns across mean-reversion strategies in early August 2007, with PCA-based portfolios somewhat more resilient than ETF-based or simpler contrarian approaches.
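Kyle's lambda is typically estimated as the slope of price changes on signed order flow. The sketch below is simplified and assumes the inputs are already aligned series of price changes (or returns) and signed volume. Published studies use intraday data and their own exact specifications. The synthetic "calm" and "stressed" examples only show that a steeper slope means more price movement per unit of flow.

```python
import numpy as np


def kyle_lambda(price_changes, signed_flow):
    """Slope of an OLS regression of price changes on signed order flow (with intercept)."""
    y = np.asarray(price_changes, dtype=float)
    x = np.asarray(signed_flow, dtype=float)
    design = np.column_stack([np.ones_like(x), x])
    (_, lam), *_ = np.linalg.lstsq(design, y, rcond=None)
    return float(lam)


flow = np.linspace(-1000, 1000, 201)        # hypothetical signed order flow
calm = 0.5 + 0.002 * flow                   # small impact per unit of flow
stressed = 0.5 + 0.01 * flow                # larger impact: liquidity has thinned
print(kyle_lambda(calm, flow), kyle_lambda(stressed, flow))
```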

The lesson is not that mean reversion stopped working. It is that the strategy depends on liquidity, and in crisis periods it can both consume and provide liquidity in unstable ways. What looks like a hedge in normal times can become shared exposure when many participants are trying to reduce the same positions at once.

What traders actually use mean reversion for

In live trading, mean reversion is less a single strategy than a recurring design pattern. It appears in short-horizon equity contrarian books, classic pairs trading, sector-relative trades, ETF-versus-constituent dislocations, futures spreads, statistical-arbitrage residual models, and some market-making systems. Across these variants, the practical use is the same: capture temporary relative mispricing while reducing broad directional exposure.

That is why mean reversion sits so close to statistical arbitrage. Statistical arbitrage is the broader category; mean reversion is one of its most common engines. It is also naturally connected to tools like the autocorrelation function, which helps reveal reversal or persistence in returns, and to the Dickey-Fuller family of tests, which help check whether the thing being traded is plausibly stationary.
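A minimal sample autocorrelation function, assuming the usual estimator: demean the series, then divide the lagged cross-product by the total sum of squares. Applied to returns, a clearly negative lag-1 value hints at short-horizon reversal, and a positive one hints at persistence.

```python
import numpy as np


def autocorr(x, lag=1):
    """Sample autocorrelation at `lag` (lag >= 1), using the demeaned series."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    return float(np.dot(x[:-lag], x[lag:]) / np.dot(x, x))
```

A single sample autocorrelation is noisy. It is a quick diagnostic, not a trading signal by itself.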

The choice between a two-asset spread, a sector-relative residual, or a larger factor-neutral portfolio is mostly a question of what equilibrium the trader believes is strongest and most stable. The underlying logic does not change.

The practical standard: a good mean-reversion strategy is selective

A durable mean-reversion strategy does not trade every move against the tape. It is selective along several dimensions at once. It chooses a variable with a credible anchor. It measures deviation in standardized units rather than raw price terms. It demands enough distance from equilibrium to pay for costs. It filters out signals whose estimated reversion is too slow. It sizes the position so that one broken relationship does not dominate the book. And it accepts that some “mispricings” are in fact real repricings.

This selectivity is why the strategy can be harder than its basic slogan suggests. “Buy low, sell high” is not a strategy. “Buy a deviation that has a reason to close, at a scale where the expected closure exceeds the friction of trading, and exit when the premise is no longer true” is closer.

Conclusion

Mean reversion trading strategies exist because markets do not adjust smoothly and uniformly. Related assets can drift apart, factor residuals can overshoot, and liquidity shocks can push prices away from plausible equilibrium. A mean-reversion trader tries to monetize those temporary distortions.

The idea works only when the traded quantity truly has an anchor and the path back is strong enough to survive costs, noise, and crowding. That is the part worth remembering tomorrow: mean reversion is not a belief that prices go back; it is a disciplined bet that a specific deviation is temporary.

Frequently Asked Questions

Pick a deviation that has an economic anchor - a spread, a residual after removing common factors, or a cointegrating combination - because raw prices often drift; pairs (two similar stocks), factor-residuals (stock vs. sector/market), or cointegrated spreads are the typical objects traders target.

Use stationarity/cointegration checks and estimate the speed of reversion. If a candidate spread is statistically stationary or cointegrated and shows a meaningful mean-reversion speed, it is plausibly tradable. These tests can mislead in short samples or across regime changes, so they are filters, not guarantees.

Transaction costs force a no-trade zone: optimal policies with linear costs wait until the signal crosses an entry band, and Martin & Schöneborn show the half-width of that buffer scales roughly with the cube root of transaction cost, so costs materially widen entry thresholds and reduce turnover.

The Ornstein–Uhlenbeck model is used because it parsimoniously captures a pull toward an equilibrium (kappa = speed, mu = mean, sigma = noise), but it is a simplification: parameter-estimation error, finite-horizon effects, and model misspecification can make its prescriptions fragile in practice.

PCA-based factor extraction can produce stronger historical Sharpe ratios than simple ETF regressions in some samples because it can capture latent common movements. It risks overfitting if too many factors are used, and both approaches deteriorated after 2002 in the referenced studies.

Trading-time or volume-aware signals can perform better because a price move on high volume is more likely to reflect information than the same move on thin trading. Avellaneda and Lee found that volume adjustments materially improved their ETF-based strategy in their tests.

August 2007 showed that crowded market-neutral books can unwind together. An initial forced liquidation reduced liquidity and amplified price impact, causing correlated losses across similar mean-reversion strategies and large short-term drawdowns.

Limit position size so no single broken relationship dominates, use disciplined stop-loss and finite-horizon exit rules (analytical solutions exist under OU assumptions), and avoid signals with very slow estimated reversion or weak economic anchors because those are most likely to convert small errors into large losses.
