What Is the Almgren–Chriss Model?

Learn what the Almgren–Chriss model is, how it balances market impact against price risk, and why it remains central to optimal execution.

Introduction

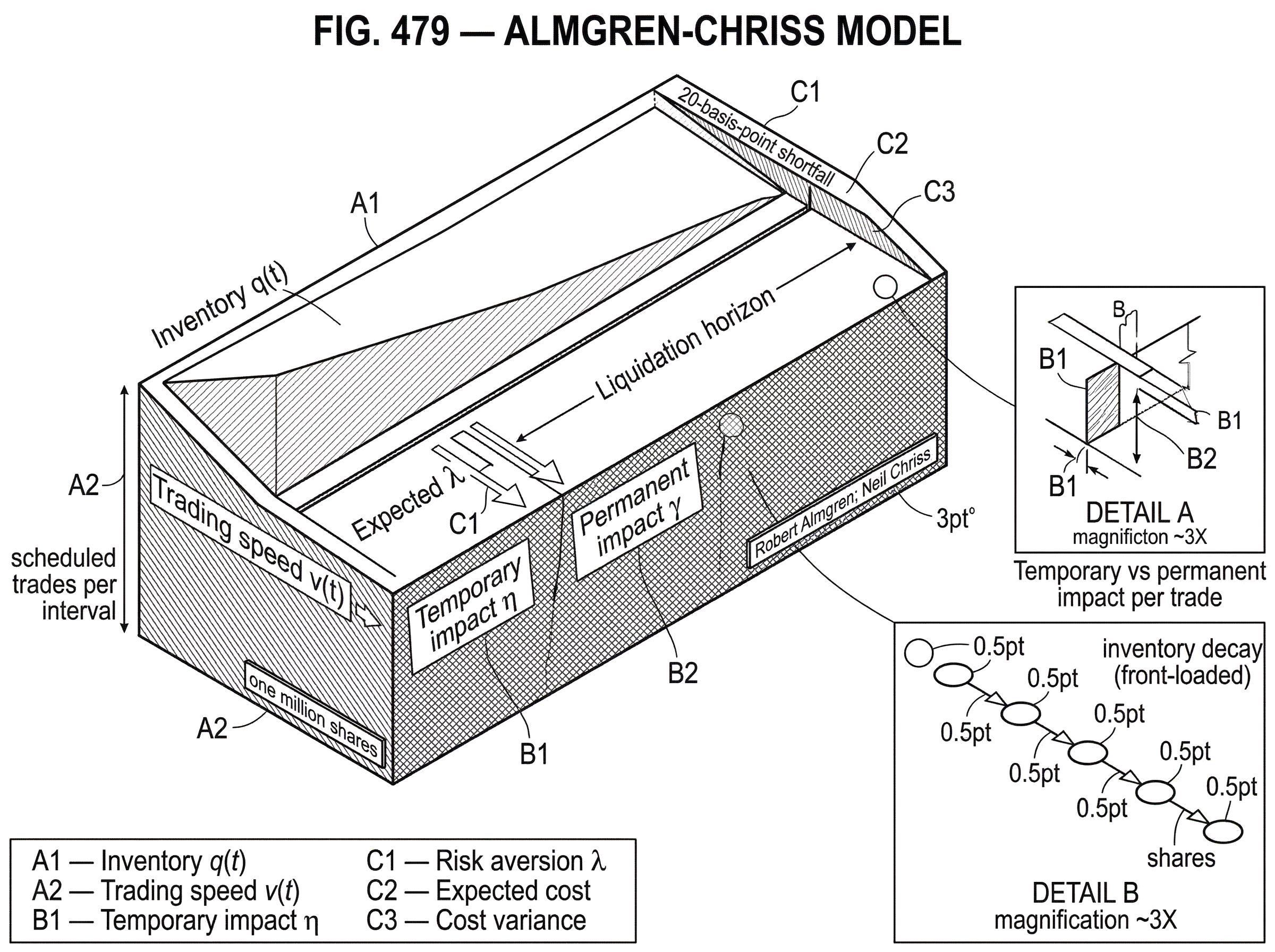

The Almgren–Chriss model is a framework for deciding how to execute a large trade over time when trading itself moves the market. That sounds narrow, but it addresses a central problem in modern trading: if you sell too quickly, you push the price against yourself; if you sell too slowly, you give the market time to move against you anyway. The model matters because it makes that tradeoff explicit and solvable.

At first glance, this can seem like a scheduling problem. Split an order into pieces, spread them out, and you are done. But the hard part is that execution creates its own feedback loop. Your own orders change the prices you can get now, and the inventory you still hold remains exposed to market risk. The right trading path is therefore not “as slow as possible” or “as fast as possible.” It is the path that balances market impact against price risk.

That is the core idea that makes Almgren–Chriss click: execution cost is not just about the average price you pay or receive; it is about how a time path of trades converts a fixed position into a combination of impact costs and risk exposure. Once you see execution as a path problem rather than a single-price problem, the model’s structure follows naturally.

The original framework, introduced by Robert Almgren and Neil Chriss, became one of the foundational models in optimal execution. It is still widely used as a benchmark for pre-trade cost estimation, execution scheduling, and transaction cost analysis. Just as importantly, many later models are best understood as reactions to its assumptions (linear impact and simple price dynamics) and to the surprising result that, under those assumptions, a precomputed static schedule can already be optimal.

What trading problem does the Almgren–Chriss model solve?

Suppose a portfolio manager needs to liquidate a large position. If the order is small relative to market depth, the trader may not worry much about execution mechanics. But once the order is large, the market is no longer a passive backdrop. Trading becomes a source of cost.

The first source of cost is immediate. If you sell aggressively, you consume liquidity and accept worse prices as you move down the book or force market makers to widen their quotes. The second source is delayed. If you still hold a large position while you are working the order, any adverse price move hurts the unsold shares. Fast trading reduces the second cost by removing risk quickly, but increases the first by raising impact. Slow trading does the reverse.

This is why naive benchmarks such as TWAP or simple equal-sized slicing are incomplete. They specify a schedule without explaining why that schedule is right for this order, this asset, this horizon, and this trader’s risk tolerance. Almgren–Chriss instead asks a more fundamental question: among all possible liquidation paths, which one gives the best tradeoff between expected cost and uncertainty?

That “best tradeoff” language is important. The model is not trying to discover a magical strategy that is cheapest in every state of the world. It assumes there is a frontier: if you want less risk, you usually must accept more expected impact cost; if you want less expected cost, you must usually tolerate more exposure while you wait. The output is therefore not just a single strategy, but a family of optimal strategies indexed by risk tolerance.

Why is inventory the key state variable in execution?

A useful way to think about the model is to track only one state variable: inventory, meaning how many shares you still need to buy or sell. If you begin with q_0 shares to sell, the execution problem is the problem of moving inventory from q_0 down to 0 over a fixed horizon T.

Why does inventory matter so much? Because impact depends on how quickly you reduce it, while market risk depends on how much of it remains. Trading speed and inventory path are therefore two sides of the same object. If the inventory falls steeply at the start, you are front-loading impact and reducing later risk. If it declines slowly, you are saving on immediate impact but carrying more exposure for longer.

This is the principle underneath the whole framework: the schedule matters only because it shapes the time profile of remaining inventory, and therefore the balance between impact and volatility risk. Once that is clear, the optimization problem becomes conceptually simple: choose the inventory path that best balances those two penalties.

In the standard liquidation setting, the trader begins with a fixed number of shares and must finish by time T. A strategy specifies how many shares to trade in each interval. If the intervals are indexed by k and n_k is the number of shares sold in interval k, then remaining inventory evolves as q_k = q_{k-1} - n_k, reaching zero at the horizon. Static strategies choose all n_k in advance; dynamic strategies allow n_k to depend on information that arrives along the way.
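As a minimal sketch of this bookkeeping, assuming a sell order and a static schedule given as a plain list of slice sizes n_k (the function names `inventory_path` and `finishes_flat` are hypothetical, chosen for illustration), the remaining inventory follows q_k = q_{k-1} - n_k:

```python
from typing import List


def inventory_path(q0: float, trades: List[float]) -> List[float]:
    """Remaining inventory q_0, q_1, ..., q_N for a sell schedule.

    trades[k - 1] holds n_k, the shares sold in interval k, so that
    q_k = q_{k-1} - n_k.
    """
    path = [q0]
    for n_k in trades:
        path.append(path[-1] - n_k)
    return path


def finishes_flat(path: List[float], tol: float = 1e-9) -> bool:
    """A liquidation schedule is feasible only if inventory ends at zero."""
    return abs(path[-1]) <= tol


# Two static schedules for 1,000,000 shares over four intervals.
even = [250_000.0] * 4
front_loaded = [400_000.0, 300_000.0, 200_000.0, 100_000.0]

print(inventory_path(1_000_000.0, even))          # [1000000.0, 750000.0, 500000.0, 250000.0, 0.0]
print(inventory_path(1_000_000.0, front_loaded))  # [1000000.0, 600000.0, 300000.0, 100000.0, 0.0]
```

Both paths start and end at the same place; they differ only in how much inventory is still exposed in the middle of the horizon, which is exactly what the impact and risk penalties act on.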

That distinction between static and dynamic will matter later. One of the most famous features of Almgren–Chriss is that under its base assumptions, the ability to react dynamically does not buy you much and may buy you nothing at all. That sounds counterintuitive until you see what the assumptions are actually doing.

How does Almgren–Chriss model temporary vs. permanent market impact?

| Impact type | Persistence | Main driver | Scheduling effect | Coefficient |
|---|---|---|---|---|
| Temporary | Transient (current trades only) | Trading rate | Penalizes fast trading | η |
| Permanent | Persistent (all later trades) | Total quantity | Shifts the reference price | γ |

The model separates market impact into temporary impact and permanent impact. This split is not the only way to describe market microstructure, but it is the organizing approximation of the framework.

Temporary impact is the extra concession you pay because you demand immediacy. If you are selling, this means receiving a slightly worse price than the prevailing “unaffected” level because you are walking into available demand. In the model, this impact affects the price of the shares traded now, but does not persist in the same way for later trades.

Permanent impact is different. It represents the idea that trading reveals information or changes the market’s equilibrium price, so the effect remains for the rest of the liquidation horizon. If you sell aggressively now, the model assumes the underlying reference price shifts downward for the shares you still have left to sell.

This distinction matters because the two effects influence scheduling differently. Permanent impact depends mostly on total quantity traded, so under linear assumptions it is less sensitive to the exact slicing pattern. Temporary impact, by contrast, depends directly on trading rate. That means it is the main force discouraging very rapid execution. Risk pushes toward faster execution; temporary impact pushes toward slower execution.

In the original paper’s tractable specification, permanent impact is linear in trading rate, written conceptually as g(v) = γv, where v is the trading speed and γ is the permanent impact coefficient. Temporary impact is also taken in linear or affine form, with a coefficient often denoted by η. These linear assumptions are what make the cost functional quadratic and the optimization analytically solvable.
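To see how these linear forms turn a schedule into a cost, here is a minimal Python sketch. It assumes a sell order, the affine temporary impact h(v) = ε·sgn(v) + η·v (ε being a fixed per-share cost such as half the spread), and the discrete-time convention in which slice n_k fills at the price left by earlier slices while its permanent impact γ·n_k hits every share still held. The function names and parameter values are illustrative assumptions, not calibrated numbers.

```python
import math
from typing import List


def expected_cost(trades: List[float], dt: float, gamma: float,
                  eta: float, eps: float = 0.0) -> float:
    """Expected shortfall of a sell schedule under linear Almgren-Chriss impact.

    trades[k - 1] = n_k, shares sold in interval k of length dt.
    Permanent impact g(v) = gamma * v lowers the price by gamma * n_k, which
    then applies to every share still held (q_k). Temporary impact
    h(v) = eps * sign(v) + eta * v only touches the n_k shares traded now.
    """
    q = sum(trades)                      # initial position q_0
    cost = 0.0
    for n in trades:
        q -= n                           # q_k: inventory after interval k
        v = n / dt                       # trading rate in interval k
        cost += dt * q * (gamma * v)     # permanent impact on remaining shares
        cost += n * (eps * math.copysign(1.0, v) + eta * v)  # temporary impact
    return cost


def expected_cost_closed_form(trades: List[float], dt: float, gamma: float,
                              eta: float, eps: float = 0.0) -> float:
    """Same quantity as 0.5*gamma*X^2 + eps*sum|n_k| + (eta_tilde/dt)*sum n_k^2."""
    X = sum(trades)
    eta_tilde = eta - 0.5 * gamma * dt
    return (0.5 * gamma * X**2 + eps * sum(abs(n) for n in trades)
            + eta_tilde / dt * sum(n * n for n in trades))


even = [250_000.0] * 4
front_loaded = [400_000.0, 300_000.0, 200_000.0, 100_000.0]
for name, schedule in (("even", even), ("front-loaded", front_loaded)):
    cost = expected_cost(schedule, dt=1.0, gamma=2.5e-7, eta=2.5e-6)
    print(f"{name}: {cost:,.0f}")
# even: 718,750
# front-loaded: 837,500
```

With these illustrative inputs the even schedule is cheaper in expectation, which is the sense in which impact alone favors spreading the order out. In the closed form, ½γX² is the same for every schedule that sells X shares; slicing enters only through the Σn_k² term, whose coefficient η̃ = η - ½γΔt is dominated by the temporary-impact coefficient.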

That solvability is a major reason the model became so influential. The linear forms are not a claim that markets are exactly linear. They are a modeling choice that makes the tradeoff transparent and gives explicit trajectories. The price of that clarity is approximation error when real impact is nonlinear, decaying, state-dependent, or shaped by the limit order book in richer ways.

How does Almgren–Chriss use a mean–variance objective to trade off cost and risk?

| Risk aversion | Typical λ | Trading speed | Expected cost | Variance exposure |
|---|---|---|---|---|
| Low risk aversion | Small λ | Slower | Lower expected cost | Higher variance |
| Medium risk aversion | Moderate λ | Balanced speed | Moderate expected cost | Moderate variance |
| High risk aversion | Large λ | Faster | Higher expected cost | Lower variance |

Impact alone would tell you to trade slowly. If there were no risk from holding inventory, the cheapest schedule would often be to spread the order out as much as possible. The model therefore adds a penalty for uncertainty in execution outcomes.

The usual assumption is that the unaffected asset price follows an arithmetic random walk with independent increments. In plain language, the price wanders unpredictably over time, and short-horizon price changes do not systematically predict the next ones. Under that assumption, the risk of waiting comes from variance: the longer you hold inventory, the more random price movement can hurt the value of the remaining position.

The execution objective then becomes a mean–variance tradeoff. The trader minimizes

expected cost + λ × variance of cost

where λ is the trader’s risk-aversion parameter. If λ is close to zero, the trader cares mostly about expected cost and is willing to wait. If λ is large, the trader cares much more about reducing uncertainty and therefore trades faster.
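A minimal Python sketch of this objective, assuming the discrete-time linear-impact setting with no fixed spread cost, sell-only schedules, and an arithmetic random walk with volatility σ per square-root unit of time. Under those assumptions the variance of the shortfall is σ²Δt·Σq_k², summed over the inventory still held after each slice. All names and numbers are illustrative.

```python
from typing import List, Tuple


def cost_mean_and_variance(trades: List[float], dt: float, sigma: float,
                           gamma: float, eta: float) -> Tuple[float, float]:
    """Expected shortfall and its variance for a sell schedule (linear impact)."""
    X = sum(trades)
    eta_tilde = eta - 0.5 * gamma * dt   # eta adjusted for the discrete permanent-impact term
    mean = 0.5 * gamma * X**2 + eta_tilde / dt * sum(n * n for n in trades)
    q, var = X, 0.0
    for n in trades:
        q -= n                           # shares still held when the next price move hits
        var += sigma**2 * dt * q**2
    return mean, var


def objective(trades: List[float], dt: float, sigma: float, gamma: float,
              eta: float, lam: float) -> float:
    """Mean-variance criterion: expected cost + lam * variance of cost."""
    mean, var = cost_mean_and_variance(trades, dt, sigma, gamma, eta)
    return mean + lam * var


even = [250_000.0] * 4
front_loaded = [400_000.0, 300_000.0, 200_000.0, 100_000.0]
params = dict(dt=1.0, sigma=0.95, gamma=2.5e-7, eta=2.5e-6)
for lam in (1e-8, 1e-6):
    best = min((even, front_loaded), key=lambda s: objective(s, lam=lam, **params))
    print(lam, "even" if best is even else "front-loaded")
# 1e-08 even
# 1e-06 front-loaded
```

A small λ lets the cheaper even schedule win; a larger λ makes the faster, front-loaded schedule worth its extra impact cost.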

This is the heart of the Almgren–Chriss framework. It turns optimal execution into the same kind of frontier problem familiar from portfolio theory, except the object being optimized is not an asset mix but a time path of liquidation. The resulting family of solutions forms an efficient frontier of strategies with minimum expected cost for a given variance, or equivalently minimum variance for a given expected cost.

The paper shows that this frontier is smooth and convex. That is more than a geometric detail. Convexity means the tradeoff is well behaved: reducing variance further becomes progressively more expensive in expectation. Near the minimum-cost point, small increases in expected cost can buy meaningful reductions in risk. That is exactly the kind of practical decision support the model is designed to provide.
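The frontier can be traced numerically. A minimal sketch using NumPy, assuming the same discrete linear-impact setup: because the objective is quadratic in the inventory path, its minimizer solves the linear first-order conditions q_{k-1} - 2q_k + q_{k+1} = c·q_k with c = λσ²Δt²/η̃ and η̃ = η - ½γΔt, so each λ needs one small linear solve. Function names and parameter values are illustrative assumptions.

```python
import numpy as np


def optimal_inventory(X, N, dt, sigma, gamma, eta, lam):
    """Mean-variance optimal inventory q_0..q_N for a sell order (linear impact).

    Solves (2 + c) q_k - q_{k-1} - q_{k+1} = 0 for k = 1..N-1, with
    q_0 = X, q_N = 0 and c = lam * sigma**2 * dt**2 / eta_tilde.
    """
    eta_tilde = eta - 0.5 * gamma * dt
    if eta_tilde <= 0 or N < 2:
        raise ValueError("need eta_tilde > 0 (convexity) and at least 2 intervals")
    c = lam * sigma**2 * dt**2 / eta_tilde
    m = N - 1                                    # interior inventories q_1..q_{N-1}
    A = (2.0 + c) * np.eye(m) - np.eye(m, k=1) - np.eye(m, k=-1)
    b = np.zeros(m)
    b[0] = X                                     # boundary condition q_0 = X
    return np.concatenate(([X], np.linalg.solve(A, b), [0.0]))


def mean_and_variance(q, dt, sigma, gamma, eta):
    """E = 0.5*gamma*X^2 + (eta_tilde/dt)*sum n_k^2,  V = sigma^2*dt*sum q_k^2."""
    n = -np.diff(q)                              # n_k = q_{k-1} - q_k
    eta_tilde = eta - 0.5 * gamma * dt
    mean = 0.5 * gamma * q[0]**2 + eta_tilde / dt * np.sum(n**2)
    var = sigma**2 * dt * np.sum(q[1:]**2)
    return mean, var


X, N, dt = 1e6, 20, 0.25
sigma, gamma, eta = 0.95, 2.5e-7, 2.5e-6
frontier = []
for lam in (0.0, 1e-7, 1e-6, 1e-5):
    q = optimal_inventory(X, N, dt, sigma, gamma, eta, lam)
    E, V = mean_and_variance(q, dt, sigma, gamma, eta)
    frontier.append((lam, E, V))
    print(f"lambda={lam:.0e}  E={E:,.0f}  std={np.sqrt(V):,.0f}")
```

Moving down the printed rows, expected cost rises while the standard deviation falls. At λ = 0 the solver returns a straight line (the even schedule), which is the minimum-cost end of the frontier.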

Why is the optimal execution path typically front‑loaded rather than constant?

Imagine you need to sell one million shares by the end of the day. Suppose the stock is reasonably liquid, but your order is large enough that aggressive selling clearly moves the market. If you dump the whole order immediately, you eliminate market risk at once, but your realized price is poor because temporary impact is severe. If you split the order evenly across the day, you reduce the instantaneous pressure on the book, but you spend hours carrying a large residual position that can lose value if the stock drifts down.

Now suppose you increase your aversion to risk. What should change mechanically? Not the total quantity. Not the final deadline. What changes is the shape of the inventory path. The optimal strategy sells more early, when inventory is largest and therefore risk exposure is highest. Later, as inventory shrinks, each additional minute of waiting is less dangerous because there are fewer shares left to be affected by random price moves.

This is why the optimal curve is typically not a straight line once risk matters. The pressure to reduce inventory is strongest at the beginning, because that is when exposure is largest; the strategy then tapers as remaining inventory falls. Under the standard linear-impact formulation, the optimal inventory path is a hyperbolic-sine curve, which looks close to exponential decay when the horizon is long relative to the trade's characteristic timescale, rather than a constant-rate schedule.
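A minimal sketch of that shape, assuming the continuous-time limit of the linear model, where the optimal inventory is q(t) = X·sinh(κ(T - t))/sinh(κT) with κ = √(λσ²/η); κ = 0 (no risk aversion) reduces to the straight-line schedule. The function names are illustrative.

```python
import math


def ac_inventory(X: float, T: float, kappa: float, t: float) -> float:
    """Remaining inventory q(t) on the continuous-time optimal path."""
    if kappa == 0.0:
        return X * (1.0 - t / T)             # risk-neutral limit: constant rate
    return X * math.sinh(kappa * (T - t)) / math.sinh(kappa * T)


def ac_rate(X: float, T: float, kappa: float, t: float) -> float:
    """Selling speed v(t) = -dq/dt; it declines over time when kappa > 0."""
    if kappa == 0.0:
        return X / T
    return X * kappa * math.cosh(kappa * (T - t)) / math.sinh(kappa * T)


X, T = 1_000_000.0, 1.0
for kappa in (0.0, 2.0, 6.0):
    left = ac_inventory(X, T, kappa, T / 2)
    start, end = ac_rate(X, T, kappa, 0.0), ac_rate(X, T, kappa, T)
    print(f"kappa={kappa}: {left:,.0f} left at T/2, rate {start:,.0f} -> {end:,.0f}")
```

At the midpoint the inventory is X/(2·cosh(κT/2)), which is below X/2 for any κ > 0, and the selling rate falls from start to finish: the path is front-loaded, and more so as κ grows.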

The model summarizes this shape through an intrinsic execution timescale, called the half-life of the trade, θ = 1/κ, where κ = √(λσ²/η) and σ is the price volatility. Despite the name, θ is an e-folding time rather than a literal halving time: ignoring the deadline, the optimal position would shrink by a factor of e (about 2.7) over each interval of length θ. Lower volatility, weaker risk aversion, or a less liquid market (a larger temporary-impact coefficient η) imply a longer half-life; higher volatility, stronger risk aversion, or deeper liquidity imply a shorter one, because cheap impact makes it worth removing risk quickly. So the schedule is not chosen arbitrarily. It comes from a characteristic timescale set by the balance between impact and risk.
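In the continuous-time limit of the linear model this timescale has a simple closed form, θ = 1/κ = √(η/(λσ²)). A short sketch (the function name is illustrative and the parameter values are assumptions) makes the comparative statics concrete:

```python
import math


def half_life(lam: float, sigma: float, eta: float) -> float:
    """Trade half-life theta = 1 / kappa, with kappa = sqrt(lam * sigma^2 / eta).

    theta comes out in the same time units used for sigma and eta.
    """
    return math.sqrt(eta / (lam * sigma**2))


base = half_life(lam=1e-6, sigma=0.95, eta=2.5e-6)
print(f"base:                {base:.3f}")
print(f"4x risk aversion:    {half_life(4e-6, 0.95, 2.5e-6) / base:.2f} x base")  # 0.50
print(f"2x volatility:       {half_life(1e-6, 1.90, 2.5e-6) / base:.2f} x base")  # 0.50
print(f"4x temporary impact: {half_life(1e-6, 0.95, 1e-5) / base:.2f} x base")    # 2.00
```

Stronger risk aversion or higher volatility shortens θ, so the order is worked faster; a larger temporary-impact coefficient, meaning a less liquid market, lengthens it.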

When is a precomputed (static) execution schedule optimal?

One of the most surprising results in the original framework is that, under its assumptions, the optimal strategy can be static: you can compute the whole trading path in advance, and there is no gain from adapting it to intermediate price moves.

That can sound wrong. In actual trading, reacting to incoming information seems obviously useful. But here is the mechanism. If the unaffected price process has independent increments, then yesterday’s price move does not help predict the next one. And if the risk penalty is symmetric and quadratic, then what matters is exposure itself, not the sign of recent noise. Under those conditions, realized price changes along the way do not contain useful directional information for improving the remaining schedule.

So the model says: if price moves are just noise, and your objective only trades off expected impact against variance, then replanning after each noise realization does not improve the optimum. A precomputed deterministic path is already efficient. This gives a theoretical justification for scheduled execution curves used in practice.

The result is powerful, but conditional. The moment you relax those assumptions, dynamic adaptation can matter. If returns show serial correlation, if you receive informative signals during execution, if liquidity changes around scheduled events, or if market impact itself has transient dynamics, then reacting to new information can improve results. Almgren and Chriss themselves analyze cases with serial correlation and find the additional gain from dynamic adaptation is often small under linear assumptions, but not zero.

So the static-optimality result should be read carefully. It is not saying execution should never adapt. It is saying that in a world with no useful interim information and a particular symmetric risk criterion, the execution path is fundamentally a planning problem rather than a feedback-control problem.

Discrete vs. continuous Almgren–Chriss formulations and when to use each

The original formulation is often introduced in discrete time. Divide the trading horizon into intervals of length Δt. Let q_k be inventory at time step k and v_k the trading rate in that interval, so that q_k = q_{k-1} - v_k Δt. Inventory falls step by step until it reaches zero at the horizon.

In that setting, the execution price paid in each interval is the unaffected price adjusted by temporary impact, while the unaffected price itself evolves with noise plus permanent impact from trading. Because the objective is quadratic in the trading schedule under linear impact assumptions, the optimization reduces to a tractable control problem with closed-form solutions.
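These dynamics can be simulated directly. This sketch assumes the discrete conventions of the original paper: slice n_k fills at S_{k-1} - h(n_k/Δt), after which the unaffected price moves to S_k = S_{k-1} + σ√Δt·ξ_k - Δt·g(n_k/Δt) with ξ_k standard normal. Names and parameters are illustrative. With σ = 0 it reproduces the deterministic expected cost; with noise, the Monte Carlo mean and variance should approach the mean–variance formulas.

```python
import math
import random
from statistics import fmean, pvariance
from typing import List


def simulate_shortfall(trades: List[float], S0: float, dt: float, sigma: float,
                       gamma: float, eta: float, rng: random.Random,
                       eps: float = 0.0) -> float:
    """One sample of implementation shortfall X*S0 - revenue for a sell schedule."""
    S = S0                                   # unaffected price S_{k-1}
    revenue = 0.0
    for n in trades:
        v = n / dt
        # Fill price: unaffected price minus temporary impact h(v).
        revenue += n * (S - (eps * math.copysign(1.0, v) + eta * v))
        # Next unaffected price: random-walk step plus permanent impact g(v) = gamma*v.
        S += sigma * math.sqrt(dt) * rng.gauss(0.0, 1.0) - dt * gamma * v
    return sum(trades) * S0 - revenue


params = dict(S0=50.0, dt=1.0, gamma=2.5e-7, eta=2.5e-6)
even = [250_000.0] * 4

# With sigma = 0 the shortfall is deterministic and equals the expected cost.
print(f"{simulate_shortfall(even, sigma=0.0, rng=random.Random(0), **params):,.0f}")  # 718,750

rng = random.Random(42)                      # one generator shared by all paths
samples = [simulate_shortfall(even, sigma=0.95, rng=rng, **params)
           for _ in range(20_000)]
print(f"mean {fmean(samples):,.0f}, std {math.sqrt(pvariance(samples)):,.0f}")
```

For these inputs the closed-form values are an expected cost of 718,750 and a standard deviation of about 888,600 (from σ²Δt·Σq_k² = 7.896875 × 10¹¹), so the simulated figures should land near those within Monte Carlo error. Sharing one generator across paths matters: re-seeding inside each call would replay the same noise every time.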

A continuous-time version expresses the same idea more compactly. Inventory becomes a smooth function of time, and trading speed is its rate of decline. In generalized forms, temporary impact can be written through a convex execution cost function L, often applied to participation rate relative to market volume. This allows extensions such as deterministic intraday volume curves, nonlinear impact, and multi-asset execution, while preserving the central tradeoff.
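For the linear special case, the continuous-time problem fits in a few lines. This is a sketch assuming no fixed spread cost (ε = 0), writing q(t) for inventory and v(t) = -q̇(t) for the selling speed; the generalized cost function L is not shown.

```latex
\begin{aligned}
E[q] &= \tfrac{1}{2}\gamma X^2 + \eta \int_0^T \dot q(t)^2\,dt,
\qquad
V[q] = \sigma^2 \int_0^T q(t)^2\,dt, \\[4pt]
\min_{q}\; E[q] + \lambda V[q]
&\;\Longrightarrow\;
\ddot q(t) = \kappa^2 q(t),
\qquad \kappa^2 = \frac{\lambda \sigma^2}{\eta}, \\[4pt]
q(0) = X,\; q(T) = 0
&\;\Longrightarrow\;
q(t) = X\,\frac{\sinh\big(\kappa (T - t)\big)}{\sinh(\kappa T)}.
\end{aligned}
```

The permanent term is path-independent because γ∫q·v dt = -γ∫q·q̇ dt = ½γX² whenever q falls from X to 0. That is why, in this limit, only temporary impact and risk shape the schedule; the Euler–Lagrange equation of ηq̇² + λσ²q² gives the second line directly.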

The important point is not whether one uses discrete or continuous notation. It is that both views describe the same mechanism: execution cost is accumulated by trading, risk is accumulated by holding inventory, and the optimal schedule is the path that best balances the two.

How do traders use Almgren–Chriss for pre‑trade analytics and benchmarking?

In practice, Almgren–Chriss is often less a literal trading robot than a benchmarking and planning framework. Before an order is sent, the model can estimate how execution cost should scale with order size, volatility, spread, liquidity, and urgency. That makes it useful for pre-trade analytics and for conditioning transaction cost analysis.

This matters because raw implementation shortfall numbers are hard to compare across trades. A 20-basis-point shortfall may be excellent for a large urgent order in a volatile name and poor for a small routine order in a deep market. A model-based estimate gives context: how difficult was the trade expected to be in the first place? Industry guidance has explicitly treated the Almgren–Chriss methodology and its later refinements as an appropriate basis for this kind of pre-trade market-impact modeling.

The framework also connects naturally to implementation shortfall, which is the slippage from the arrival price. Execution cost in Almgren–Chriss is essentially a decomposition of why that shortfall occurs: some from permanent impact, some from temporary concessions, some from randomness while inventory is still open. That is why the model is not just about strategy design. It is also about measurement.
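A sketch of that decomposition for one simulated path, under illustrative linear-impact dynamics (all names and parameters are assumptions, and ε = 0): the shortfall splits exactly into a permanent-impact term γ·Σn_k·q_k, a temporary-impact term Σn_k·h(n_k/Δt), and a price-noise term -Σσ√Δt·ξ_k·q_k charged on inventory still held.

```python
import math
import random
from typing import Dict, List


def shortfall_breakdown(trades: List[float], S0: float, dt: float, sigma: float,
                        gamma: float, eta: float, seed: int = 0) -> Dict[str, float]:
    """Simulate one sell path and split its implementation shortfall into parts."""
    rng = random.Random(seed)
    S, q = S0, sum(trades)
    revenue = 0.0
    parts = {"permanent": 0.0, "temporary": 0.0, "noise": 0.0}
    for n in trades:
        v = n / dt
        revenue += n * (S - eta * v)             # fill at S_{k-1} - h(v), eps = 0
        q -= n                                   # q_k: inventory left after slice k
        shock = sigma * math.sqrt(dt) * rng.gauss(0.0, 1.0)
        parts["temporary"] += n * eta * v        # concession on the shares traded now
        parts["permanent"] += gamma * n * q      # price drop gamma*n_k hits q_k shares
        parts["noise"] -= shock * q              # random move hits q_k shares
        S += shock - dt * gamma * v
    parts["total"] = sum(trades) * S0 - revenue
    return parts


b = shortfall_breakdown([250_000.0] * 4, S0=50.0, dt=1.0, sigma=0.95,
                        gamma=2.5e-7, eta=2.5e-6, seed=7)
print({name: round(value) for name, value in b.items()})
```

The three components sum to the total up to floating-point rounding. Across many seeds the noise term averages to zero, leaving the permanent and temporary terms as the expected cost.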

The original paper goes further by defining L‑VaR, or liquidity-adjusted Value-at-Risk. The idea is to choose the liquidation strategy that minimizes Value-at-Risk once market impact and liquidation time are taken seriously. Standard VaR treats liquidation as if positions could be unwound frictionlessly. L‑VaR asks the more realistic question: what is the risk of exiting through an actual market? This is a natural extension of the same insight that motivated the model in the first place.
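A minimal sketch of the L-VaR idea, assuming normally distributed shortfall so that the loss at confidence p is E + z_p·√V, with z_p the standard normal p-quantile. For simplicity it searches only over the hyperbolic-sine family of discrete schedules q_k = X·sinh(κ(T - t_k))/sinh(κT) on a coarse grid of κ; the grid search, names, and parameters are illustrative assumptions rather than the paper's procedure.

```python
import math
from statistics import NormalDist
from typing import List


def sinh_schedule(X: float, N: int, T: float, kappa: float) -> List[float]:
    """Inventory q_0..q_N on a hyperbolic-sine path (kappa = 0 gives a straight line)."""
    ts = [T * k / N for k in range(N + 1)]
    if kappa == 0.0:
        return [X * (1.0 - t / T) for t in ts]
    return [X * math.sinh(kappa * (T - t)) / math.sinh(kappa * T) for t in ts]


def liquidity_var(q: List[float], dt: float, sigma: float, gamma: float,
                  eta: float, p: float = 0.95) -> float:
    """E + z_p * sqrt(V) for a sell path under linear impact (eps = 0)."""
    n = [a - b for a, b in zip(q, q[1:])]        # shares sold per interval
    eta_tilde = eta - 0.5 * gamma * dt
    mean = 0.5 * gamma * q[0] ** 2 + eta_tilde / dt * sum(x * x for x in n)
    var = sigma ** 2 * dt * sum(x * x for x in q[1:])
    return mean + NormalDist().inv_cdf(p) * math.sqrt(var)


X, N, T = 1e6, 20, 5.0
dt = T / N
kw = dict(dt=dt, sigma=0.95, gamma=2.5e-7, eta=2.5e-6)
grid = [0.1 * i for i in range(31)]              # candidate kappa values, 0.0 to 3.0
best_kappa = min(grid, key=lambda k: liquidity_var(sinh_schedule(X, N, T, k), **kw))
best = liquidity_var(sinh_schedule(X, N, T, best_kappa), **kw)
print(f"best kappa {best_kappa:.1f}, L-VaR {best:,.0f}")
```

Because the grid includes κ = 0, the chosen schedule can never score worse than straight-line liquidation; a finer search, or optimizing over the full efficient frontier, would refine the answer.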

The framework has also been extended to multi-asset execution, where covariance between assets matters. If you are liquidating correlated names, it is not generally optimal to solve each asset independently. The risk term depends on the joint inventory vector and the covariance matrix, so the optimal schedules interact. Practical implementations and teaching materials often illustrate this with pairs of correlated stocks, where the joint execution path differs from simply running two single-name schedules side by side.
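A minimal NumPy sketch of why the risk term couples the assets, assuming two names liquidated on the same even schedule and a covariance matrix C of price increments per unit time (all numbers illustrative): the variance becomes Δt·Σq_kᵀCq_k, which differs from the sum of the two single-name variances whenever the correlation is non-zero.

```python
import numpy as np


def joint_variance(Q: np.ndarray, C: np.ndarray, dt: float) -> float:
    """Shortfall variance dt * sum_k q_k^T C q_k for inventory rows q_1..q_N."""
    return float(dt * np.einsum("ki,ij,kj->", Q, C, Q))


def covariance(sig1: float, sig2: float, rho: float) -> np.ndarray:
    """2x2 covariance matrix of price increments per unit time."""
    return np.array([[sig1**2, rho * sig1 * sig2],
                     [rho * sig1 * sig2, sig2**2]])


N, dt = 4, 1.0
# Inventory held after each interval, one column per asset (even schedules).
Q = np.outer(np.linspace(1.0, 0.0, N + 1)[1:], [1e6, 5e5])

for rho in (-0.5, 0.0, 0.8):
    C = covariance(0.95, 1.2, rho)
    separate = (joint_variance(Q[:, :1], C[:1, :1], dt)
                + joint_variance(Q[:, 1:], C[1:, 1:], dt))
    print(f"rho={rho:+.1f}: joint std {np.sqrt(joint_variance(Q, C, dt)):,.0f}, "
          f"single-name sum gives std {np.sqrt(separate):,.0f}")
```

Positive correlation makes the joint position riskier than two independent schedules suggest, which tends to push a mean–variance optimizer toward faster trading; negative correlation partly hedges the inventory. Solving the joint problem is what captures that interaction.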

What are the key limitations and failure modes of Almgren–Chriss?

| Assumption | Model claim | Real‑world violation | Practical consequence | Mitigation |
|---|---|---|---|---|
| Linear impact | Closed‑form schedules | Impact often nonlinear | Wrong cost scaling; mistimed trades | Nonlinear empirical impact models |
| Independent returns | Static schedule is optimal | Serial correlation or intraday signals | Value lost by not adapting | Add signals and re‑plan |
| Permanent/temporary split | Impact is either instantaneous or permanent | Impact decays over time (transient) | Suboptimal timing relative to order-book dynamics | Model resilience and decay |
| Deterministic liquidity | Stable intraday volumes | Liquidity is stochastic and can vanish under stress | Schedules brittle under stress | Overlay tactical throttles |

The most important limitation of Almgren–Chriss is not that it is “wrong.” It is that it is intentionally simple in places where real markets are not. Those simplifications are what make the model transparent and solvable, but they also mark the boundary of where its outputs should be trusted literally.

The first tension is the linear impact assumption. Empirical market impact is often not exactly linear, especially for large trades. Temporary impact can depend nonlinearly on participation rate, market state, and venue conditions. If impact is nonlinear, the neat closed-form exponential schedules change, and scaling laws such as the trade half-life no longer behave the same way.
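To illustrate the scaling point, this sketch swaps in a power-law temporary impact h(v) = η·v^α, a common empirical-style assumption rather than the original model's linear form (α and η here are illustrative). For an even schedule the temporary cost is Σn_k·h(n_k/Δt), so doubling the order multiplies it by 2^(1+α) instead of the factor 4 implied by linear impact:

```python
def temporary_cost_even(X: float, N: int, dt: float, eta: float, alpha: float) -> float:
    """Temporary cost of selling X in N equal slices with h(v) = eta * v**alpha."""
    n = X / N
    return N * n * eta * (n / dt) ** alpha


for alpha in (1.0, 0.6, 0.5):
    ratio = (temporary_cost_even(2e6, 10, 0.1, 1e-6, alpha)
             / temporary_cost_even(1e6, 10, 0.1, 1e-6, alpha))
    print(f"alpha={alpha}: doubling the order scales temporary cost by {ratio:.2f}")
# alpha=1.0: ... 4.00
# alpha=0.6: ... 3.03
# alpha=0.5: ... 2.83
```

With α < 1, large orders are penalized less than the linear model predicts, so both the pre-trade cost estimate and the optimal balance between speed and risk shift.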

The second tension is the permanent-versus-temporary decomposition itself. In real limit order books, impact often decays over time rather than fitting cleanly into “instant and gone” versus “permanent forever.” Models built around explicit order-book resilience make this point sharply. In some such models, the key dynamic variable is how fast liquidity replenishes after being consumed. That changes the structure of the optimal strategy and can lead to mixed policies with both block trades and continuous trading, rather than the purely smooth paths emphasized in simpler formulations.

The third tension is the assumption that interim price moves are uninformative. In modern markets, traders may observe short-term alpha signals, order-book imbalance, queue dynamics, news events, or regime shifts in liquidity. When those signals matter, static schedules become less compelling. The original framework can be adapted, but the clean “static is optimal” result no longer applies.

The fourth tension is that market volume and liquidity are often time-varying. Intraday liquidity has strong patterns, and liquidity can deteriorate abruptly in stress. A schedule chosen under deterministic average conditions can become too aggressive or too passive once the state of the market changes. This is one reason many practical systems use an Almgren–Chriss-type schedule as a baseline, then overlay tactical logic for venue selection, spread sensitivity, throttles, and intraday re-estimation.

There is also a deeper structural issue. Some impact models can unintentionally permit unrealistic or manipulative strategies if they omit features such as spread or book dynamics. Later execution literature paid close attention to these pathologies. That does not erase the usefulness of Almgren–Chriss, but it reminds us that a reduced-form impact model is a tool, not a full market theory.

Why does Almgren–Chriss remain a useful benchmark despite its simplifications?

Despite those limitations, Almgren–Chriss remains important because it identifies the right primitive quantities. The model says: to reason about execution, you need at least three ingredients: inventory over time, the cost of trading quickly, and the risk of continuing to hold. That triad remains the backbone of optimal execution even when later models replace linear impact with more realistic functions or replace static liquidity with explicit order-book dynamics.

It also remains valuable because it gives a common language for practical decisions. If a trader says an order should be more aggressive because volatility is high, or less aggressive because the stock is illiquid, or faster because risk limits are tight, that reasoning is essentially Almgren–Chriss reasoning whether or not the exact equations are used. The framework converts those intuitions into a quantitative schedule and an explicit cost-risk frontier.

Finally, it is a benchmark. Many execution strategies are easiest to evaluate by first asking how they differ from the Almgren–Chriss baseline. Are they using richer signals? Modeling transient impact? Respecting a volume curve? Coordinating across assets or venues? Without the baseline, those extensions are harder to interpret.

Conclusion

The Almgren–Chriss model is the classic framework for optimal execution because it makes one central truth precise: trading faster increases impact, trading slower increases risk, and the right execution schedule is the path that balances those forces. Its main contribution is not a single formula, but a way of seeing execution as an inventory-control problem with an efficient frontier of solutions.

If you remember one thing, remember this: the model works by turning a large order into a time path, then pricing that path through two penalties: the cost of trading now and the risk of waiting. Everything else in the framework is a refinement of that idea.

Frequently Asked Questions

The model splits impact into temporary impact, an immediate price concession that affects only the trades executed now and scales with trading rate, and permanent impact, a lasting shift in the reference price that depends on total quantity traded. Temporary impact is the main force discouraging very rapid execution, while permanent impact (under linear assumptions such as g(v) = γv) is largely insensitive to the exact slicing pattern. The distinction shapes the schedule because temporary-cost terms penalize high trading rates and risk terms penalize slow decay of inventory.

A precomputed (static) schedule is optimal only under the model’s key assumptions: an arithmetic random walk with independent increments for the unaffected price and a symmetric quadratic (mean–variance) objective; under those conditions, interim price moves contain no directional information and replanning buys nothing. When returns exhibit serial correlation, when real-time alpha or liquidity signals arrive, or when impact is transient or state-dependent, dynamic adaptation can improve outcomes.

The closed-form exponential/hyperbolic-sine solutions rely on linear permanent and temporary impact (e.g., g(v)=γv and linear h(v)); if impact is nonlinear, decaying, or state-dependent, those explicit formulas and scaling properties (like the model’s intrinsic half-life) no longer hold and the optimal path changes. In practice this means you should treat the analytic curves as transparent benchmarks rather than literal prescriptions when empirical impact departs from linearity.

There is no single canonical choice of λ. Traders typically map λ to their operational risk preferences and often calibrate it empirically, for example by inverting it from observed participation rates or through desk-level studies. In practice, choosing λ is a tuning problem rather than something the model settles in closed form.

The framework extends naturally to multi-asset execution by replacing single-asset inventory with an inventory vector and the risk term with a quadratic form in that vector built from the assets' covariance matrix. Correlated assets' schedules therefore interact, so you generally cannot run independent single-name schedules when holdings are materially correlated; multi-asset formulations jointly optimize the liquidation paths to account for cross-asset risk.

Practitioners most often use Almgren–Chriss as a planning and benchmarking tool: to generate pre-trade cost estimates, compare trades on a like-for-like basis, and form a baseline execution curve. They then overlay tactical rules (venue choice, throttles, spread sensitivity), because real markets exhibit time-varying liquidity and signals the basic model omits. Its role is that of a transparent baseline rather than a turnkey trading robot.

Calibration is challenging: reliable estimates of the temporary (η) and permanent (γ) impact coefficients, and of order-book resilience in models that include it, require large, high-quality order-book and trade datasets and can be unstable across regimes. Empirical estimation and in-house desk studies are the common practical route, and measuring resilience or time-varying liquidity remains an open empirical question. Poor calibration materially reduces the model's predictive value, so practitioners treat parameter estimation as a core operational task.

The standard formulations assume a deterministic intraday market volume curve (V_t) or otherwise time-deterministic liquidity. When liquidity is stochastic or can evaporate (regime shifts, news, microstructure stress), the model's static schedules can be misleading, and traders either adapt the schedule piecewise or add tactical logic to handle stochastic liquidity. Deterministic volume is best treated as an explicit assumption, with separate plans for scheduled events and regime shifts.
