What Is the Dickey-Fuller Test?

Learn what the Dickey-Fuller test is, how it detects unit roots, why traders use it for mean reversion, and where its assumptions can fail.

Introduction

The Dickey-Fuller test is a statistical test for deciding whether a time series has a unit root: roughly, whether it behaves like a random walk rather than a mean-reverting process. In trading, that distinction is not academic. If you mistake a wandering price series for a stable, mean-reverting one, you can build strategies that look sensible in backtests and then fail because the “mean” you expected the series to return to was never stable in the first place.

The puzzle the test solves is simple to state. Many market series look noisy and persistent. A price can drift for long stretches, a spread can widen and stay wide, and even a seemingly stable indicator can wander enough to make visual judgment unreliable. The key question is not whether the series moves around a lot. The question is whether shocks are temporary or permanent. The Dickey-Fuller framework was built to separate those two cases.

That is why the test shows up so often around mean-reversion work, spread modeling, and preprocessing decisions like differencing. Before fitting a model that assumes a stable level, you want some evidence that such a level exists in the data-generating process. The Dickey-Fuller test does not prove tradability, profitability, or good risk-adjusted returns. What it does is narrower and more fundamental: it tests whether the persistence in a series is consistent with a unit root.

Why do unit roots matter for trading and mean-reversion strategies?

The core issue is easiest to see by contrasting two worlds. In the first world, the series has a stable center. It can be pushed away by noise, but the push fades, so the series tends to drift back. In the second world, the series has no such anchor. A shock moves the level, and that new level can persist indefinitely. The second world is what a unit root captures.

A very simple model makes this concrete. Suppose a series Y_t evolves as Y_t = p Y_(t-1) + e_t, where e_t is a new shock at time t and p measures persistence. If |p| < 1, shocks decay over time and the series is stationary around its mean structure. If p = 1, the process becomes a random walk: Y_t = Y_(t-1) + e_t. In that case, shocks do not die out. They accumulate.
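A minimal sketch of that contrast in plain Python. It traces how much of a single unit shock at time 0 is still present in Y after k steps when no further shocks arrive. The function name `shock_remaining` and the values of p are illustrative assumptions, not part of any library.

```python
def shock_remaining(p, steps):
    """Fraction of a one-time unit shock still present in Y after each step.

    Under Y_t = p * Y_(t-1) + e_t with no further shocks, the effect
    after k steps is p**k.
    """
    return [p ** k for k in range(steps + 1)]


stationary = shock_remaining(0.9, 20)   # |p| < 1: the effect decays toward zero
random_walk = shock_remaining(1.0, 20)  # p = 1: the effect never fades

print(f"p = 0.9, share of shock left after 20 steps: {stationary[-1]:.3f}")
print(f"p = 1.0, share of shock left after 20 steps: {random_walk[-1]:.3f}")
```

With p = 0.9 roughly an eighth of the shock survives 20 steps; with p = 1 all of it does, which is what "shocks accumulate" means in practice.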

That difference changes almost everything downstream. If you regress or trade on levels that are actually random walks, relationships can look stable in-sample purely because all highly persistent series drift slowly. Signals based on “distance from average” become suspect because the average itself is unstable. Risk estimates based on historical dispersion can also mislead, since the variance of a unit-root process grows with time rather than settling around a fixed long-run level.
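The claim about dispersion can be checked with a small simulation. The sketch below, assuming NumPy is available, simulates many independent paths and compares the variance across paths at two horizons; the path count, horizons, and p = 0.9 are arbitrary illustrative choices.

```python
import numpy as np

rng = np.random.default_rng(0)
n_paths, n_steps = 4000, 400
shocks = rng.standard_normal((n_paths, n_steps))


def simulate(p, shocks):
    """Simulate Y_t = p * Y_(t-1) + e_t for every row of shocks, starting near zero."""
    y = np.zeros_like(shocks)
    y[:, 0] = shocks[:, 0]
    for t in range(1, shocks.shape[1]):
        y[:, t] = p * y[:, t - 1] + shocks[:, t]
    return y


walk = simulate(1.0, shocks)  # unit root
ar = simulate(0.9, shocks)    # stationary AR(1)

# Variance across paths after 400 steps relative to after 100 steps.
walk_ratio = walk[:, 399].var() / walk[:, 99].var()
ar_ratio = ar[:, 399].var() / ar[:, 99].var()

print(f"random walk variance ratio (t=400 vs t=100): {walk_ratio:.2f}")
print(f"AR(1) p=0.9 variance ratio (t=400 vs t=100): {ar_ratio:.2f}")
```

For the random walk the ratio should sit near 4, because variance scales with elapsed time. For the stationary series it should sit near 1, because the variance has already settled at its long-run level.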

This is why traders often test not raw prices but things like spreads, residuals, or transformed series. A single asset price is often close to unit-root behavior, while a carefully constructed spread may be stationary if an economic linkage holds. The test is useful precisely because visual intuition is weak in persistent data. Two series can both look choppy, yet one may be mean-reverting and the other may simply be diffusing.

How does the Dickey–Fuller test detect a unit root?

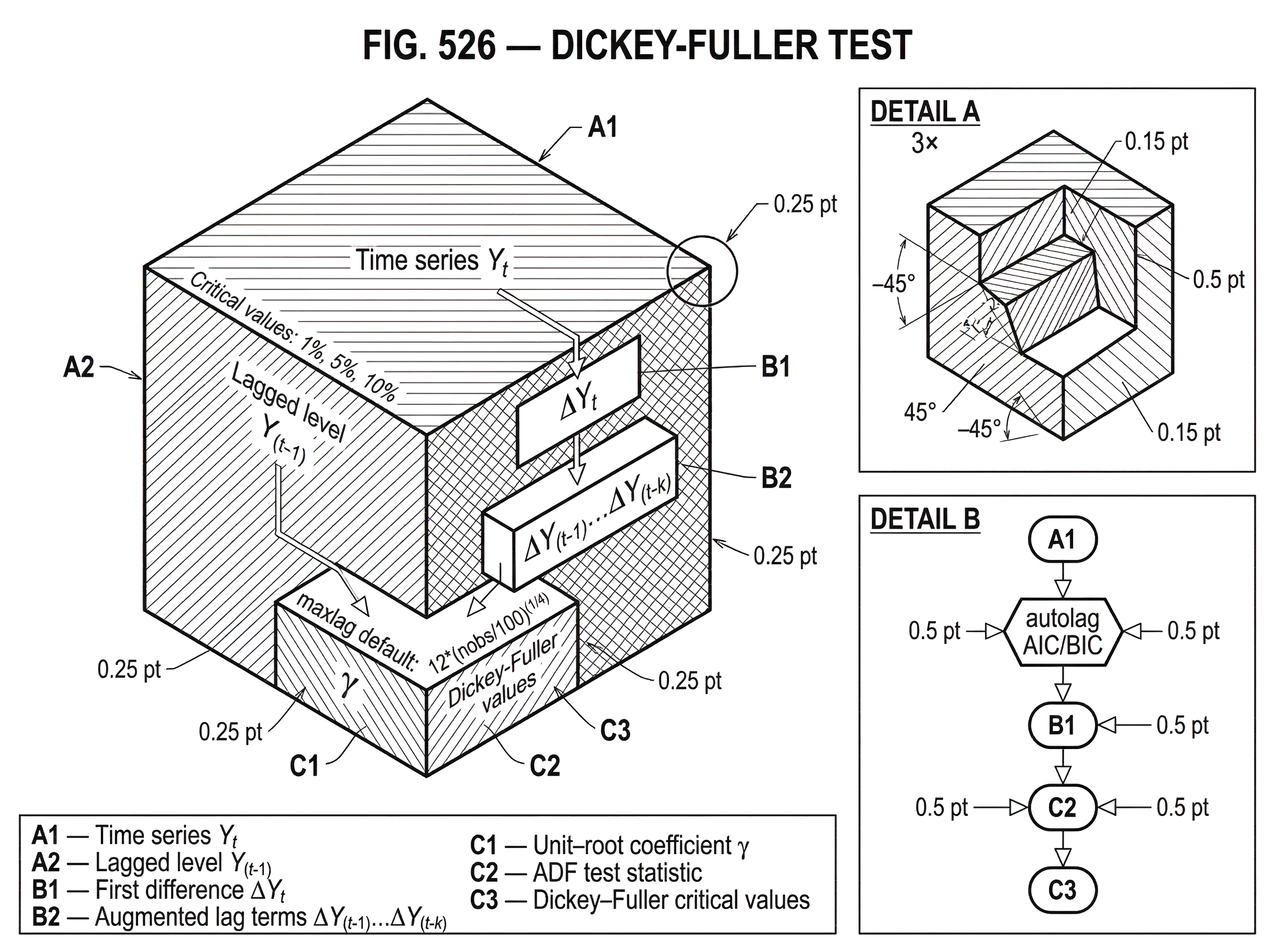

The Dickey-Fuller test asks whether the persistence parameter is exactly one. But it is usually written in a form that makes the mechanism easier to interpret. Starting from Y_t = p Y_(t-1) + e_t, subtract Y_(t-1) from both sides. Then the change in the series, ΔY_t = Y_t - Y_(t-1), satisfies ΔY_t = (p - 1) Y_(t-1) + e_t.

Now define γ = p - 1. The regression becomes ΔY_t = γ Y_(t-1) + e_t. This is the practical form of the test. If p = 1, then γ = 0, which corresponds to a unit root. If p < 1, then γ < 0, which means the change tends to push against the current level. A positive level today implies a negative expected change tomorrow, which is exactly the algebra of mean reversion.

So the test becomes: estimate γ and ask whether it is zero. If it is zero, the series behaves like a unit-root process. If it is sufficiently negative, the data support stationarity instead.
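As a rough sketch of that regression, the snippet below fits ΔY_t = γ Y_(t-1) + e_t by ordinary least squares with NumPy, with no constant and no trend. The helper `df_regression` is a hypothetical name, and the two simulated series are only there to show the sign and size of the estimate.

```python
import numpy as np


def df_regression(y):
    """OLS fit of dY_t = gamma * Y_(t-1) + e_t (no constant, no trend).

    Returns (gamma_hat, t_stat). The t_stat has to be compared with
    Dickey-Fuller critical values, not ordinary t-tables.
    """
    y = np.asarray(y, dtype=float)
    dy = np.diff(y)   # dY_t = Y_t - Y_(t-1)
    lag = y[:-1]      # Y_(t-1)
    gamma_hat = (lag @ dy) / (lag @ lag)
    resid = dy - gamma_hat * lag
    sigma2 = (resid @ resid) / (len(dy) - 1)  # one estimated parameter
    se = np.sqrt(sigma2 / (lag @ lag))
    return gamma_hat, gamma_hat / se


rng = np.random.default_rng(1)
e = rng.standard_normal(500)

walk = np.cumsum(e)                 # p = 1, so the true gamma is 0
ar = np.zeros(500)
for t in range(1, 500):
    ar[t] = 0.8 * ar[t - 1] + e[t]  # p = 0.8, so the true gamma is -0.2

gamma_walk, t_walk = df_regression(walk)
gamma_ar, t_ar = df_regression(ar)
print(f"random walk: gamma = {gamma_walk:+.4f}, t = {t_walk:+.2f}")
print(f"AR(1) p=0.8: gamma = {gamma_ar:+.4f}, t = {t_ar:+.2f}")
```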

That sounds like an ordinary regression test, but here is the catch that makes Dickey-Fuller special: under the null hypothesis γ = 0, the usual t-statistic does not follow the ordinary Student-t distribution. Dickey and Fuller showed that under a unit root the estimator and related t-statistics have nonstandard limiting distributions, representable using functionals of a Wiener process. In plain language, the null changes the asymptotic geometry of the regression enough that standard t-tables are wrong. That is why Dickey-Fuller critical values are specialized.

This point is easy to miss and it is the intellectual center of the whole procedure. The test is not “just run a regression and check whether the coefficient is significant.” It is “run a very specific regression, under a very specific null, and compare the statistic to a nonstandard reference distribution designed for unit-root behavior.”
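One way to see the nonstandard distribution is to simulate it. The sketch below, assuming NumPy, generates many random walks (so the null is true by construction), computes the no-constant Dickey-Fuller t-statistic for each, and compares the simulated 5% quantile with the usual normal cutoff. Replication count and sample length are arbitrary choices.

```python
import numpy as np

rng = np.random.default_rng(42)
n_reps, n_obs = 5000, 250

# Each row is an independent random walk, so gamma = 0 holds in every replication.
walks = np.cumsum(rng.standard_normal((n_reps, n_obs)), axis=1)
dy = np.diff(walks, axis=1)
lag = walks[:, :-1]

# Row-wise OLS of dY_t on Y_(t-1), no constant.
sxx = np.sum(lag * lag, axis=1)
gamma_hat = np.sum(lag * dy, axis=1) / sxx
resid = dy - gamma_hat[:, None] * lag
sigma2 = np.sum(resid * resid, axis=1) / (dy.shape[1] - 1)
t_stats = gamma_hat / np.sqrt(sigma2 / sxx)

simulated_5pct = np.quantile(t_stats, 0.05)
normal_5pct = -1.645  # one-sided 5% point of the standard normal
share_below_normal_cutoff = np.mean(t_stats < normal_5pct)

print(f"simulated 5% quantile under the null: {simulated_5pct:.2f}")
print(f"share of null t-stats below {normal_5pct}: {share_below_normal_cutoff:.3f}")
```

The simulated quantile should land close to the tabulated value of about -1.95 for the no-constant case, noticeably more negative than -1.645. Using the normal cutoff would therefore reject a true unit root more often than the nominal 5%.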

When should I test a spread instead of a raw price?

Imagine two series a trader might examine. The first is the price of a stock index. The second is the spread between two related securities after some hedge ratio adjustment. Both move around daily. Both can show long runs away from recent averages.

For the index level, a shock such as a macro surprise may permanently shift expectations about future cash flows or discount rates. Tomorrow’s price starts from today’s changed level. In the simplest approximation, that is close to a random walk. If you run a Dickey-Fuller regression on the level, the estimated γ may be near zero because the series has no tendency to undo level shocks.

For the spread, the mechanism can be different. Suppose the spread widens because one security temporarily overreacts relative to the other. If arbitrage, substitution, or common fundamentals pull the pair back together, then a positive spread today should imply a negative expected change in the spread tomorrow. In the regression ΔY_t = γ Y_(t-1) + e_t, that shows up as a negative γ. The larger the spread, the stronger the pull back.

The test is trying to detect exactly that tendency. It is not reading charts. It is checking whether the series’ own lagged level helps predict a corrective move in the next increment. If that corrective force is strong enough relative to noise, the test rejects the unit-root null.
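The two cases can be mimicked with synthetic data. In this sketch, assuming NumPy, two hypothetical prices share one random-walk component, and one of them carries a temporary mispricing that decays. The hedge ratio is assumed known and equal to 1, which real pairs rarely grant you, and `df_tstat_with_constant` is an illustrative helper, not a library function.

```python
import numpy as np


def df_tstat_with_constant(y):
    """t-statistic on gamma in dY_t = c + gamma * Y_(t-1) + e_t, fitted by OLS."""
    y = np.asarray(y, dtype=float)
    dy = np.diff(y)
    X = np.column_stack([np.ones(len(dy)), y[:-1]])
    beta, *_ = np.linalg.lstsq(X, dy, rcond=None)
    resid = dy - X @ beta
    sigma2 = (resid @ resid) / (len(dy) - X.shape[1])
    cov = sigma2 * np.linalg.inv(X.T @ X)
    return beta[1] / np.sqrt(cov[1, 1])


rng = np.random.default_rng(7)
n = 1000

# Shared random-walk component: shocks to it are permanent for both prices.
common = 100.0 + np.cumsum(rng.standard_normal(n))

# Temporary mispricing that decays: AR(1) with p = 0.8.
gap = np.zeros(n)
noise = 0.5 * rng.standard_normal(n)
for t in range(1, n):
    gap[t] = 0.8 * gap[t - 1] + noise[t]

price_a = common + gap
price_b = common
spread = price_a - price_b  # hedge ratio of 1 assumed known

t_price = df_tstat_with_constant(price_a)
t_spread = df_tstat_with_constant(spread)
print(f"price level: t = {t_price:+.2f}")
print(f"spread:      t = {t_spread:+.2f}")
```

The spread's statistic should be far more negative than the price level's, because only the spread has a lagged level that predicts a corrective move.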

Even here, caution is needed. Rejecting a unit root in a spread does not tell you whether the spread mean is stable enough after costs, whether convergence is fast enough to monetize, or whether the relationship survives structural changes. It says something narrower: the data are inconsistent with the spread being a pure unit-root process.

Which Dickey–Fuller specification should I use: none, constant, or trend?

| Variant | Deterministic terms | Alternative hypothesis | When to use |
|---|---|---|---|
| No constant | none | Stationary around zero | Zero-mean residuals or de-meaned series |
| Constant (drift) | intercept only | Stationary around nonzero mean | Spreads or series with stable mean |
| Trend | intercept + trend | Stationary around deterministic trend | Series with clear deterministic trend |

The simplest Dickey-Fuller setup assumes no intercept and no time trend. That is appropriate only when the maintained model truly has neither. But many series drift or fluctuate around a nonzero mean, and some move around a deterministic trend. Dickey and Fuller generalized the statistics to models with an intercept and with time terms, and the critical values depend on which deterministic components are included.

This matters because deterministic terms change what “stationary” means. If you include no constant, the alternative is stationarity around zero. If you include a constant, the alternative is stationarity around a nonzero mean. If you include a trend, the alternative is stationarity around a deterministic time trend. These are different null/alternative environments, and using the wrong one can distort inference.

In software, this choice appears as options like no constant, constant only, or constant plus trend. In Python’s statsmodels.tsa.stattools.adfuller, the regression argument uses values such as 'n', 'c', 'ct', and 'ctt'. In R’s ur.df, the corresponding choices are none, drift, and trend. These options are not cosmetic. They define the regression you are fitting and the critical values you should use.

A common trading mistake is to throw every series into the default specification without thinking about the deterministic part. If a spread is plausibly centered around a stable nonzero mean, excluding an intercept can misstate the test. If a series has a deterministic trend and you ignore it, the test may confuse trend-stationary behavior with unit-root behavior. The right specification depends on the economic or statistical structure of the series, not on software defaults.

Why use the Augmented Dickey–Fuller (ADF) instead of the basic test?

The original Dickey-Fuller derivation starts from a very simple autoregressive setting. Real series often have more complicated short-run dynamics: serial correlation in the errors, richer autoregressive behavior, and leftover dependence that the basic regression does not absorb. If you ignore that dependence, the test’s size and power can deteriorate.

The Augmented Dickey-Fuller test, usually abbreviated ADF, fixes this by adding lagged differences to the regression. Instead of ΔY_t = γ Y_(t-1) + e_t, the model becomes something like ΔY_t = γ Y_(t-1) + a_1 ΔY_(t-1) + ... + a_k ΔY_(t-k) + e_t, plus any chosen constant or trend terms. The extra lagged differences soak up serial correlation in short-run movements so that the remaining disturbance is closer to the conditions under which the test statistic behaves as intended.

Mechanically, this is a separation of long-run and short-run persistence. The coefficient γ still carries the unit-root question. The lagged difference terms handle transitory autocorrelation. That is why ADF is the version most practitioners actually run.
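The augmented regression is easy to build by hand, which helps make the role of each term concrete. The sketch below, assuming NumPy, constructs the design matrix with a constant, the lagged level, and k lagged differences. `adf_tstat` is an illustrative helper; it returns only the t-statistic on γ and does no lag selection or p-value lookup.

```python
import numpy as np


def adf_tstat(y, k):
    """t-statistic on gamma in
    dY_t = c + gamma * Y_(t-1) + a_1 dY_(t-1) + ... + a_k dY_(t-k) + e_t.
    """
    y = np.asarray(y, dtype=float)
    dy = np.diff(y)                  # dy[i] = Y_(i+1) - Y_i
    target = dy[k:]                  # dY_t, dropping the first k usable rows
    level = y[k:-1]                  # Y_(t-1), aligned with target
    lagged = [dy[k - j:len(dy) - j] for j in range(1, k + 1)]  # dY_(t-j)
    X = np.column_stack([np.ones(len(target)), level] + lagged)
    beta, *_ = np.linalg.lstsq(X, target, rcond=None)
    resid = target - X @ beta
    sigma2 = (resid @ resid) / (len(target) - X.shape[1])
    cov = sigma2 * np.linalg.inv(X.T @ X)
    return beta[1] / np.sqrt(cov[1, 1])


rng = np.random.default_rng(11)
n = 600
e = rng.standard_normal(n)

# Unit-root series whose increments are serially correlated.
d = np.zeros(n)
for t in range(1, n):
    d[t] = 0.6 * d[t - 1] + e[t]
y = np.cumsum(d)

t_plain = adf_tstat(y, 0)  # basic Dickey-Fuller with a constant
t_aug = adf_tstat(y, 4)    # four lagged differences absorb short-run dynamics
print(f"k = 0: t = {t_plain:+.2f}")
print(f"k = 4: t = {t_aug:+.2f}")
```

Setting k = 0 reduces the helper to the basic constant-only Dickey-Fuller regression, so the difference between the two printed statistics is entirely due to the augmentation.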

The statsmodels documentation states the purpose directly: the ADF test is used to test for a unit root in a univariate process in the presence of serial correlation. Its null is that the series has a unit root, and its alternative is that there is no unit root. The reported p-values are approximate, based on MacKinnon regression-surface approximations using updated critical-value tables. When the result is borderline, the critical values deserve more attention than the p-value alone.

How do I interpret ADF output, p-values, and critical values?

In practice, the output usually contains a test statistic, a p-value, the number of lags used, the effective sample size, and critical values at levels like 1%, 5%, and 10%. The sign convention matters. More negative test statistics provide stronger evidence against the unit-root null.

So the logic is:

- null hypothesis: the series has a unit root

- alternative: the series is stationary under the chosen deterministic specification

- decision: reject the null if the statistic is more negative than the relevant critical value, or if the p-value is below your threshold

The critical phrase is “under the chosen deterministic specification.” Rejecting with a constant-only model is not the same claim as rejecting with a constant-plus-trend model. The alternative is conditional on that modeling choice.
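The decision rule can be written down in a few lines. This is a sketch with a hypothetical helper name, `adf_decision`; the critical values in the example are approximate large-sample values for the constant-only case and are used purely for illustration.

```python
def adf_decision(stat, critical_values, level="5%"):
    """Reject the unit-root null when the statistic is more negative than the critical value."""
    threshold = critical_values[level]
    return "reject unit root" if stat < threshold else "fail to reject unit root"


# Illustrative numbers only: approximate large-sample critical values, constant-only case.
crit_constant_only = {"1%": -3.43, "5%": -2.86, "10%": -2.57}

print(adf_decision(-3.10, crit_constant_only))        # → reject unit root
print(adf_decision(-3.10, crit_constant_only, "1%"))  # → fail to reject unit root
```

The same statistic leads to different conclusions at different levels, and the dictionary passed in has to come from the same deterministic specification that produced the statistic.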

Lag selection is the next practical lever. In statsmodels, autolag can use 'AIC', 'BIC', 't-stat', or None. If maxlag is left unspecified, the default rule is 12*(nobs/100)^(1/4). In ur.df, lag length can be fixed or selected via AIC or BIC up to a user-specified maximum. This is not a mere implementation detail. Too few lags can leave residual autocorrelation and distort test size. Too many lags can reduce power by consuming degrees of freedom and adding noise.
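To get a feel for how the default cap scales with sample size, the rule can be evaluated directly. In this sketch the helper name `default_maxlag` is hypothetical, and rounding up to a whole lag count is an assumption; a given library may round differently or apply an additional cap based on sample size.

```python
import math


def default_maxlag(nobs):
    """Rule of thumb 12 * (nobs / 100) ** (1/4), rounded up (rounding is an assumption)."""
    return math.ceil(12 * (nobs / 100) ** 0.25)


for nobs in (100, 250, 1000, 2500):
    print(f"nobs = {nobs:5d} -> maximum lag considered = {default_maxlag(nobs)}")
```

The cap grows slowly: a 25-fold increase in observations only slightly more than doubles it, so on short samples the automatic search can still consume a meaningful share of the data.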

A sensible workflow in trading research is to report the specification used, the lag selection method, the chosen lag count, the statistic, and the critical values. Otherwise a reader cannot tell whether your stationarity conclusion is robust or just a product of a convenient default.

What does rejecting or failing to reject the unit-root null imply for traders?

The Dickey-Fuller family does not answer “is this a good trading signal?” It answers a narrower statistical question about persistence. That narrower question is still valuable because it filters out an entire class of bad ideas.

If a level series appears attractive because it often returns near a historical average, a failure to reject the unit-root null is a warning that the apparent average may be unstable. If a spread is intended for mean reversion, rejecting the null supports (though does not prove) the structural premise that deviations tend to decay. In this way, the test acts as a gatekeeper for strategies built on equilibrium restoration.

Notice what the test does not guarantee. It does not measure half-life directly. It does not tell you whether the speed of reversion is fast enough for your holding period. It does not account for transaction costs, slippage, borrow constraints, or execution risk. And it does not ensure out-of-sample stability. Statistical stationarity and economic tradability overlap, but they are not the same thing.
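A back-of-the-envelope half-life can still be derived from an estimated γ if you are willing to assume the series is a pure AR(1), so that p = 1 + γ and the expected deviation shrinks by a factor p each period. The sketch below solves (1 + γ)^h = 0.5 for h. The helper name is hypothetical, and the result is only as good as the AR(1) assumption.

```python
import math


def half_life(gamma):
    """Periods for an expected deviation to halve when Y_t = (1 + gamma) * Y_(t-1) + e_t.

    Solves (1 + gamma) ** h = 0.5, which requires -1 < gamma < 0.
    """
    if not -1.0 < gamma < 0.0:
        raise ValueError("half-life needs -1 < gamma < 0")
    return math.log(0.5) / math.log(1.0 + gamma)


for gamma in (-0.5, -0.05, -0.01):
    print(f"gamma = {gamma:+.2f} -> half-life of about {half_life(gamma):.1f} periods")
```

A statistically significant γ of -0.01 still implies a half-life of dozens of periods, which is one concrete way a series can pass the test and remain too slow for a given holding period.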

When can Dickey–Fuller tests give misleading results in financial data?

| Failure | Why | Consequence | Mitigation |
|---|---|---|---|
| Model misspecification | Wrong intercept/trend choice | Incorrect rejection decisions | Choose the deterministic terms deliberately; report the specification |
| Structural breaks | Breaks mimic permanence | Stationary series looks like unit root | Use break-aware tests (Perron, Zivot-Andrews) |
| Small samples | Low power; finite-sample bias | Borderline or misleading p-values | Bootstrap or enlarge window |
| Dependence / heteroskedasticity | Volatility clustering, serial correlation | Distorted size and power | ADF lags, PP test, robust bootstrap |

The most important failure mode is model misspecification. If you choose the wrong deterministic terms, the null distribution changes and inference can be wrong. This is not a small technicality; it is built into the theory. Dickey and Fuller explicitly showed that intercept and trend cases alter the limiting behavior of the statistics.

The next issue is dependence beyond the simple assumptions. The original derivations assumed independent, normally distributed errors with mean zero and constant variance, though the limit results extend to iid nonnormal errors. Financial data, of course, often show volatility clustering, heavy tails, and more complex serial dependence. ADF helps with serial correlation by augmenting the regression, but it is not a universal cure for every deviation from the textbook setup.

Small samples are another problem. The asymptotic theory explains why special critical values are needed, but finite-sample behavior can still be imperfect. Borderline results should be treated with caution, especially when the sample is short or the series is highly persistent but not exactly unit-root.

Then there is the issue of structural breaks. This is one of the deepest practical limitations. Perron showed that standard unit-root tests can fail to reject the unit-root null even when the true process is stationary around a trend with a one-time break in level or slope. In trading language, a regime shift can make a stationary-but-broken process look like a unit-root process if you insist on fitting a single unbroken trend structure. If your series contains major changes in market regime, policy, microstructure, or business model, plain Dickey-Fuller results can be misleading.

That is why break-aware tests such as Perron-type procedures or Zivot-Andrews are sometimes used when a single structural break is plausible. The need for these extensions is not an optional refinement. It follows directly from the mechanism: the test asks whether shocks are permanent, but a deterministic level shift can mimic permanence if the model does not allow it.
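The break effect is easy to reproduce on synthetic data. The sketch below, assuming NumPy, takes a clearly stationary AR(1) series, adds a one-time level shift halfway through, and compares the constant-only Dickey-Fuller t-statistic before and after. The shift size and persistence are arbitrary illustrative choices, and `df_tstat_with_constant` is a hypothetical helper.

```python
import numpy as np


def df_tstat_with_constant(y):
    """t-statistic on gamma in dY_t = c + gamma * Y_(t-1) + e_t, fitted by OLS."""
    y = np.asarray(y, dtype=float)
    dy = np.diff(y)
    X = np.column_stack([np.ones(len(dy)), y[:-1]])
    beta, *_ = np.linalg.lstsq(X, dy, rcond=None)
    resid = dy - X @ beta
    sigma2 = (resid @ resid) / (len(dy) - X.shape[1])
    cov = sigma2 * np.linalg.inv(X.T @ X)
    return beta[1] / np.sqrt(cov[1, 1])


rng = np.random.default_rng(5)
n = 400
e = rng.standard_normal(n)

# Stationary AR(1) with p = 0.5 and a single constant mean.
clean = np.zeros(n)
for t in range(1, n):
    clean[t] = 0.5 * clean[t - 1] + e[t]

# Same series with a one-time level shift halfway through.
broken = clean.copy()
broken[n // 2:] += 10.0

t_clean = df_tstat_with_constant(clean)
t_broken = df_tstat_with_constant(broken)
print(f"no break:    t = {t_clean:+.2f}")
print(f"level shift: t = {t_broken:+.2f}")
```

Within each regime the series reverts just as quickly as before, yet the statistic for the shifted series moves sharply toward zero because the regression attributes the level change to persistence.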

When should I run KPSS alongside ADF and why?

| Test | Null | Best use | Reject means | Software |
|---|---|---|---|---|
| Augmented Dickey-Fuller (ADF) | Unit root | Handling serial correlation via lags | Evidence against unit root (conditional) | statsmodels.adfuller, ur.df |
| KPSS | Stationarity | Checking stationarity as the null | Evidence for nonstationarity if rejected | statsmodels.kpss, ur.kpss |
| Phillips-Perron (PP) | Unit root | Robustness to heteroskedasticity | Evidence against unit root | pp.test implementations |

A useful complement is the KPSS test. The reason is conceptual symmetry. Dickey-Fuller and ADF take unit root as the null. KPSS takes stationarity as the null. Because the nulls are reversed, the two tests can be used together to separate clearer cases from ambiguous ones.

If ADF fails to reject a unit root and KPSS rejects stationarity, the message is aligned: nonstationarity is likely. If ADF rejects a unit root and KPSS fails to reject stationarity, that is also aligned: stationarity is plausible. When they disagree or both give weak evidence, the result is not “average them.” The result is “the sample or the specification is ambiguous, and the structure may need deeper modeling.”
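The four possible outcomes can be laid out as a small lookup. This is a sketch with a hypothetical function name; the two boolean inputs are whatever rejection decisions you reached from each test at your chosen level.

```python
def combine_adf_kpss(adf_rejects_unit_root, kpss_rejects_stationarity):
    """Summarize the joint reading of an ADF decision and a KPSS decision."""
    if adf_rejects_unit_root and not kpss_rejects_stationarity:
        return "aligned: stationarity plausible"
    if not adf_rejects_unit_root and kpss_rejects_stationarity:
        return "aligned: nonstationarity likely"
    return "ambiguous: revisit sample, specification, or model structure"


for adf in (True, False):
    for kpss in (True, False):
        print(f"ADF rejects={adf!s:5} KPSS rejects={kpss!s:5} -> {combine_adf_kpss(adf, kpss)}")
```

Both disagreement cases map to the same label on purpose: the helper refuses to average the two tests and simply flags that more work is needed.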

This pairing is popular precisely because unit-root testing is often low-power near the boundary. A process with p very close to 1 can be hard to distinguish from a true unit root in realistic samples. Complementary tests help you see when your conclusion is robust and when it rests on thin statistical evidence.

How should traders apply the Dickey–Fuller test in strategy research?

For a trader, the cleanest interpretation is this: the Dickey-Fuller test is a persistence filter. It asks whether deviations are likely to fade or whether the series simply relocates after shocks. That makes it especially relevant before building mean-reversion signals from spreads, valuation gaps, basis measures, residual series, or inventory-style imbalances.

But the right object to test is usually not the raw price. Raw asset prices often behave much more like unit-root processes than stationary ones. What traders care about is often a transformed series designed to strip out common trends or deterministic components. A residual from a hedge regression, a log spread, or a basis adjusted for carry is often more meaningful than the outright price level.

Even then, the test should be part of a broader diagnostic set rather than a lone gatekeeper. You would typically also inspect residual autocorrelation, look for structural breaks, test robustness across windows, and avoid turning one favorable p-value into a full conviction. If you try hundreds of spreads and celebrate the few that reject at 5%, you have created a multiple-testing problem. In systematic research, that can be as dangerous as ignoring stationarity altogether.
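The size of the multiple-testing problem is simple arithmetic. The sketch below assumes a hypothetical screen of 300 spreads in which every spread truly has a unit root, and shows how many rejections chance alone would produce at 5%. The Bonferroni threshold is included as one well-known, conservative correction; it is an illustration, not a recommendation drawn from the text above.

```python
n_spreads = 300   # hypothetical number of candidate spreads screened
alpha = 0.05      # per-test significance level

# If every spread truly has a unit root, this many rejections are expected by chance.
expected_false_rejections = n_spreads * alpha

# Bonferroni: per-test threshold that keeps the chance of any false rejection near alpha.
bonferroni_alpha = alpha / n_spreads

print(f"expected chance rejections: {expected_false_rejections:.0f}")
print(f"Bonferroni per-test threshold: {bonferroni_alpha:.5f}")
```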

Which parts of unit-root testing are fundamental versus implementation choices?

The fundamental idea is the unit-root question itself: does the process have a restoring force, or do shocks persist indefinitely? The transformation into differences, the regression on the lagged level, and the specialized critical values all exist to answer that question coherently.

What is more conventional are the implementation choices around lag length, deterministic terms, software defaults, and reporting format. Those choices matter a lot in practice, but they are not the essence of the method. The essence is the connection between a unit root and the absence of a corrective response in ΔY_t to the prior level Y_(t-1).

The nonstandard distribution under the null is also fundamental. Without that insight, you would misuse ordinary t-statistics and draw systematically wrong conclusions. That contribution of Dickey and Fuller is why the test exists as a distinct object rather than as a trivial regression exercise.

Conclusion

The Dickey-Fuller test exists to answer a precise question that trading models often smuggle in without checking: do shocks wash out, or do they stick? By testing the null of a unit root, it helps distinguish a genuinely mean-reverting series from one that only appears stable over a limited sample.

Used well, it is a disciplined first screen for mean-reversion research. Used carelessly, it can create false confidence, especially when lag choice, deterministic terms, small samples, or structural breaks are ignored. The idea worth remembering tomorrow is simple: before you trade a return to the mean, make sure the mean is statistically real.

Frequently Asked Questions

The Dickey–Fuller family of tests answers a narrow statistical question: whether shocks to a series are temporary or permanent - i.e., whether the series has a unit root (random-walk-like) or is stationary (roughly, mean-reverting) - which matters because trading strategies that assume a stable mean can fail if that mean is not statistically present.

The Augmented Dickey–Fuller (ADF) adds lagged differences to the regression (ΔY_t = γ Y_(t-1) + a_1 ΔY_(t-1) + ... + a_k ΔY_(t-k) + e_t) to soak up short-run serial correlation so the unit-root coefficient γ can be tested under more realistic disturbance dynamics.

You must choose deterministic terms that match the data-generating idea: use no constant only if the series plausibly reverts to zero, include a constant if it reverts to a nonzero mean, and include a trend if you believe in a deterministic trend - the choice changes the null/alternative and the appropriate critical values, so it should be driven by economic/statistical structure, not software defaults.

Lag length matters because too few lags leave residual autocorrelation (distorting size) while too many lags reduce power; common practice is to select lags by information criteria (AIC/BIC) or use automatic rules - many packages default maxlag = 12*(nobs/100)^(1/4) unless you override it.

Structural breaks, volatility clustering/heavy tails, and small samples commonly mislead DF/ADF results: a one-time level or slope break can make a stationary series look like a unit root, heteroskedastic or dependent errors violate simple assumptions, and small samples reduce the test's reliability.

Because their nulls are reversed, pairing ADF (null = unit root) with KPSS (null = stationarity) gives complementary evidence: if both agree the conclusion is stronger, while disagreement signals ambiguity that calls for deeper modeling or robustness checks.

No - rejecting a unit root only says the series is unlikely to be a pure random walk under the chosen specification; it does not measure half-life, convergence speed, robustness to costs, or out-of-sample tradability, all of which require separate analysis.

When a p-value is borderline you should examine the reported test statistic against the appropriate Dickey–Fuller critical values (not ordinary t-tables), report the deterministic specification and lag choice used, and check robustness across lag selections, windows, and complementary tests because ADF p-values are approximate.

The test has low power against alternatives very close to a unit root: processes with autoregressive roots near one can be hard to distinguish from true unit roots in realistic samples, so nonrejection does not prove a unit root and rejection near the boundary can be fragile.

No - you should not rely blindly on software defaults; common implementations expose options for deterministic terms (e.g., 'n','c','ct'), lag selection (AIC/BIC/autolag), and critical-value computation, and the right choices depend on the series and research question, so always report and justify them.
