What Is PCA for Portfolio Risk?

Learn what PCA for portfolio risk is, how it turns correlations into risk factors, what eigenvalues mean, and where PCA helps or breaks down.

Introduction

PCA for portfolio risk is a way to take a large set of correlated asset returns and rewrite their joint behavior in terms of a smaller number of dominant risk directions. That sounds abstract, but it solves a very practical problem: modern portfolios are exposed to many securities, sectors, maturities, and styles at once, while risk is driven by a much smaller set of shared movements. If you look only at individual positions, the picture is too detailed to be useful; if you ignore correlation, the picture is wrong. PCA sits in between: it compresses the covariance or correlation structure into a few orthogonal components that explain most of the variation.

The reason this is useful is not that markets are secretly simple. They are not. The usefulness comes from a structural fact: many assets co-move because they respond to common shocks. Equity indexes often rise and fall together because of a broad market factor. Bond yields across maturities often shift through a small number of curve movements. Credit instruments cluster around spread and liquidity shocks. PCA tries to discover those common movements directly from the data, without requiring you to name them in advance.

That is also why PCA is both powerful and easy to misuse. It can reveal the main modes of risk in a portfolio, but it does not know economics, regime change, or measurement error. It produces mathematically clean components, not necessarily stable or interpretable ones. To use PCA well, you need to understand both the mechanism that makes it work and the assumptions that make it fragile.

Why use PCA for high-dimensional portfolio risk?

Suppose you hold 500 stocks. In principle, portfolio risk depends on all 500 volatilities and all pairwise relationships among them. The covariance matrix is already the right object for this: it tells you not only how volatile each stock is, but how the stocks move together. The difficulty is that this object grows quickly. With N assets, the covariance matrix has N x N entries, of which N(N+1)/2 are distinct because the matrix is symmetric, and estimating it from historical returns becomes noisy when the number of observations is not much larger than N.

This is the central tension in portfolio risk. You want a model rich enough to reflect correlation, because diversification depends on correlation. But once you estimate a high-dimensional covariance matrix from finite data, you start fitting noise. In-sample, the matrix can look precise. Out of sample, it can be unstable enough to distort risk estimates, factor attribution, and optimization.
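To make the scale of the estimation problem concrete, here is a minimal sketch using NumPy. The sizes (500 stocks, 252 daily observations) and the pure-noise returns are illustrative assumptions, not real data.

```python
import numpy as np

N, T = 500, 252  # 500 stocks, roughly one year of daily returns (illustrative sizes)

# A symmetric N x N matrix has N(N+1)/2 distinct entries to estimate.
distinct_entries = N * (N + 1) // 2

# Pure-noise returns stand in for real data; the point is the shape, not the values.
rng = np.random.default_rng(0)
returns = rng.normal(0.0, 0.01, size=(T, N))
S = np.cov(returns, rowvar=False)

# With T observations, the centered sample covariance has rank at most T - 1.
# When T < N it is singular: some portfolios appear to carry zero risk in sample.
rank = np.linalg.matrix_rank(S)

print(f"{distinct_entries} distinct entries, rank {rank} of {N}")
```

With fewer observations than assets, the sample matrix cannot have full rank, which is one concrete way the in-sample picture can look precise while being unreliable.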

PCA addresses this by asking a more selective question. Instead of treating every direction in asset space as equally important, it asks which directions account for most of the observed variation. If many securities tend to move together, then the covariance matrix should have a few dominant directions. Keeping those directions and discarding the weak, noisy ones gives a lower-dimensional description of risk.

This is the key idea to remember: PCA is not mainly about reducing the number of assets; it is about reducing the number of independent risk directions. A portfolio can hold hundreds of names yet still be driven mostly by a handful of common shocks.

How does PCA identify preferred risk directions in return data?

Here is the intuition before the math. Imagine plotting daily returns of two highly correlated stocks as points on a plane. If the stocks moved independently with similar volatility, the cloud would look roughly circular. But if they usually rise and fall together, the cloud stretches along a diagonal line. That line is the direction of greatest variation. A second direction, perpendicular to the first, captures the leftover spread between the two stocks.
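The two-stock picture can be reproduced in a few lines. This is a sketch on simulated returns (the shock sizes and variable names are assumptions chosen for illustration): two stocks share one common shock, and the eigenvectors of their covariance matrix give the stretched diagonal direction and the perpendicular leftover direction.

```python
import numpy as np

rng = np.random.default_rng(0)

# Simulated daily returns for two stocks driven by one shared shock (not real data).
common = rng.normal(0.0, 0.01, size=1000)
stock_a = common + rng.normal(0.0, 0.003, size=1000)
stock_b = common + rng.normal(0.0, 0.003, size=1000)
returns = np.column_stack([stock_a, stock_b])

cov = np.cov(returns, rowvar=False)
eigenvalues, eigenvectors = np.linalg.eigh(cov)  # eigh returns ascending eigenvalues

first_direction = eigenvectors[:, -1]   # direction of greatest variation (the diagonal)
second_direction = eigenvectors[:, 0]   # perpendicular direction (the spread between the stocks)

print("first direction:", first_direction)
print("share of variance on first direction:", eigenvalues[-1] / eigenvalues.sum())
```

The sign of an eigenvector is arbitrary, so the first direction may come out with both weights negative; only the line it spans is meaningful.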

PCA generalizes this idea from two dimensions to many. In a space with N assets, it finds the direction along which the return cloud varies the most. That is the first principal component. Then it finds the next direction, constrained to be orthogonal to the first, that captures as much remaining variation as possible. It continues this process until there are N orthogonal components.

These components are not chosen for storytelling value. They are chosen because they maximize variance subject to orthogonality constraints. As a result, the principal components are uncorrelated with one another and are ordered by explained variance. The first few usually capture a large share of total variation when the original variables are strongly interrelated.

That is the entire reason PCA is useful in risk: if correlation structure is concentrated, risk can be summarized by a few dominant components. The portfolio may have 1,000 line items, but the market may only be charging you for a dozen broad risk directions.

How does PCA work mathematically on a covariance or correlation matrix?

Take a return matrix with T observations over time and N assets. After centering the returns, you estimate either a covariance matrix or a correlation matrix. Call this matrix S. It is symmetric and positive semidefinite, which is why PCA reduces to an eigenvalue-eigenvector problem.

An eigenvector of S is a direction in asset space that is only rescaled, not rotated, when S acts on it. Its eigenvalue tells you how much variance lies along that direction. If v_k is the kth eigenvector and lambda_k its eigenvalue, then v_k defines a principal direction and lambda_k is the variance explained by that component.

The ordering matters. If the eigenvalues are sorted from largest to smallest, then the first eigenvector corresponds to the direction of maximum variance, the second to the next-highest variance among directions orthogonal to the first, and so on. The total variance equals the sum of all eigenvalues. The explained-variance ratio of component k is lambda_k / sum(lambda_j).
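The eigenvalue problem and the explained-variance ratio can be sketched as follows. The helper name `pca_from_returns` and the simulated one-factor returns are assumptions made for illustration; only standard NumPy calls are used.

```python
import numpy as np

def pca_from_returns(returns, use_correlation=False):
    """Return S, eigenvalues (descending), eigenvectors (columns), explained-variance ratios."""
    centered = returns - returns.mean(axis=0)
    S = np.cov(centered, rowvar=False)
    if use_correlation:
        vol = np.sqrt(np.diag(S))
        S = S / np.outer(vol, vol)
    # eigh is intended for symmetric matrices and returns eigenvalues in ascending order.
    eigenvalues, eigenvectors = np.linalg.eigh(S)
    order = np.argsort(eigenvalues)[::-1]
    eigenvalues = eigenvalues[order]
    eigenvectors = eigenvectors[:, order]
    explained = eigenvalues / eigenvalues.sum()  # lambda_k / sum(lambda_j)
    return S, eigenvalues, eigenvectors, explained

# Simulated returns: one shared "market" shock plus asset-specific noise (not real data).
rng = np.random.default_rng(1)
T, N = 500, 6
market = rng.normal(0.0, 0.01, size=(T, 1))
returns = market + rng.normal(0.0, 0.005, size=(T, N))

S, eigenvalues, eigenvectors, explained = pca_from_returns(returns)
print("explained-variance ratios:", np.round(explained, 3))
```

The sum of the eigenvalues equals the trace of S, which is why the ratios add up to one.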

In practical portfolio work, this leads to a simple decomposition. The covariance matrix can be written as a sum of rank-one pieces built from eigenvectors and eigenvalues. Keeping only the largest K eigenvalues gives a low-rank approximation. Mechanically, this says: “Treat the portfolio as exposed mainly to K common factors, and treat the rest as residual variation.”

That approximation is the bridge from pure statistics to risk modeling.
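A minimal sketch of the low-rank approximation, on simulated two-factor returns. The function name `low_rank_covariance` is hypothetical, and treating the leftover variance as purely asset-specific (diagonal) is one common modeling assumption rather than the only option.

```python
import numpy as np

def low_rank_covariance(S, K):
    """Split S into a rank-K common part and a diagonal residual."""
    eigenvalues, eigenvectors = np.linalg.eigh(S)
    top = np.argsort(eigenvalues)[::-1][:K]
    V = eigenvectors[:, top]
    # Sum of rank-one pieces lambda_k * v_k v_k^T over the K largest eigenvalues.
    common = (V * eigenvalues[top]) @ V.T
    # Assumption: whatever variance is left over is treated as asset-specific.
    residual = np.diag(np.diag(S - common))
    return common, residual

# Simulated returns driven by two common factors (not real data).
rng = np.random.default_rng(2)
T, N = 600, 12
factors = rng.normal(0.0, 0.01, size=(T, 2))
loadings = rng.normal(1.0, 0.5, size=(2, N))
returns = factors @ loadings + rng.normal(0.0, 0.004, size=(T, N))
S = np.cov(returns, rowvar=False)

for K in (1, 2, 4):
    common, residual = low_rank_covariance(S, K)
    error = np.linalg.norm(S - common) / np.linalg.norm(S)
    print(f"K={K}: relative Frobenius error of common part = {error:.4f}")
```

Adding the diagonal residual back keeps each asset's total variance unchanged while dropping the weak off-diagonal structure.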

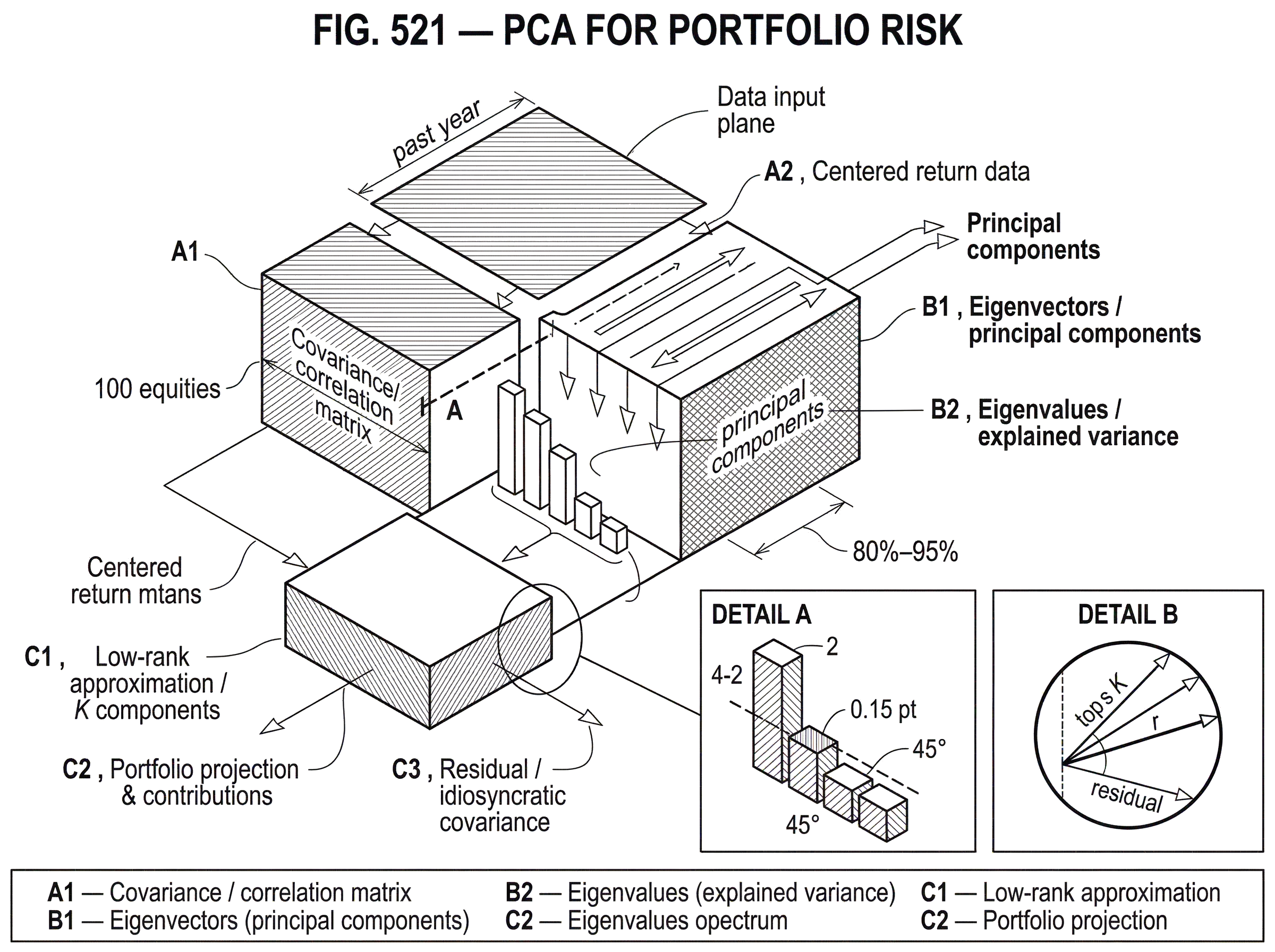

Example: applying PCA to a 100-equity portfolio

Imagine a universe of 100 equities. You compute their daily return correlation matrix over the past year. After diagonalizing it, you find that the largest eigenvalue is much bigger than the rest, the next few are meaningful but smaller, and then there is a long tail of tiny eigenvalues.

What does that mean economically? The first component is likely a broad market mode. Its eigenvector might place roughly similar positive weights on most stocks, which means the component rises when the whole market rises and falls when the whole market falls. A portfolio heavily tilted toward that direction is not diversified against market risk, even if it holds many names.

The second component might separate one cluster from another (for example, cyclicals versus defensives, or growth versus value) because that is the next strongest pattern of co-movement after the market mode. A third component might isolate a sector or regional effect. After that, the remaining components often become harder to interpret and more sensitive to sampling noise.

Now consider your actual portfolio weights. By projecting the portfolio onto these eigenvectors, you can see how much of its variance comes from each principal direction. Perhaps the portfolio looks diversified by issuer count, but 70% of its modeled variance comes from the first component and another 15% from the second. That tells you something more actionable than a list of holdings: the portfolio is effectively a concentrated bet on a small number of common drivers.
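The projection step can be sketched as below. Portfolio variance w'Sw decomposes exactly into a sum of eigenvalue times squared exposure over the components. The equal-weighted portfolio and the simulated one-factor returns are assumptions for illustration.

```python
import numpy as np

# Simulated returns: a market shock with varying betas plus idiosyncratic noise (not real data).
rng = np.random.default_rng(3)
T, N = 750, 20
market = rng.normal(0.0, 0.01, size=(T, 1))
betas = rng.uniform(0.8, 1.2, size=(1, N))
returns = market * betas + rng.normal(0.0, 0.008, size=(T, N))

S = np.cov(returns, rowvar=False)
eigenvalues, eigenvectors = np.linalg.eigh(S)
order = np.argsort(eigenvalues)[::-1]
eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]

weights = np.full(N, 1.0 / N)            # hypothetical equal-weighted portfolio
exposures = eigenvectors.T @ weights     # projection of the weights onto each eigenvector
component_variance = eigenvalues * exposures**2
total_variance = weights @ S @ weights
shares = component_variance / total_variance

print("share of variance from first three components:", np.round(shares[:3], 3))
```

In this simulated setup, a portfolio of 20 names draws most of its variance from the first component, which is the situation the paragraph above describes.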

The same logic appears very clearly in fixed income. For a government bond curve, PCA often finds that yield changes are dominated by a small number of curve movements commonly described as level, slope, and curvature. PCA does not require those labels, but it often rediscovers them because they are genuine dominant directions of variation. In that setting, PCA helps translate dozens of maturities into a few curve risk exposures.

PCA on covariance vs. correlation: when to use each

| Matrix | Preserves scale | Emphasizes co-movement | Common use | Main downside |
|---|---|---|---|---|
| Covariance | Yes (preserves volatility) | Less emphasis on relative patterns | Model portfolio variance in currency units | Dominated by high-volatility assets |
| Correlation | No (standardized scales) | Highlights relative co-movement | Reveal structural factors | Ignores absolute risk magnitudes |

This choice changes what PCA “sees.” If you run PCA on the covariance matrix, assets with higher volatility naturally carry more weight because variance is measured in original units. If you run PCA on the correlation matrix, each asset is standardized first, so the analysis focuses on co-movement patterns rather than raw volatility scale.

Neither choice is universally correct. The covariance matrix is often the more direct object for risk in currency units, because actual portfolio variance depends on covariance, not correlation alone. But if one asset is simply much more volatile than the others, covariance-based PCA may devote a component to scale rather than to structural co-movement. Correlation-based PCA removes that scale dominance and can reveal cleaner common factors.

This is why preprocessing matters. PCA implementations commonly center the data but do not automatically scale each feature. If you standardize returns, you are making a modeling decision: you are saying that relative co-movement matters more than absolute volatility differences. If you do not standardize, you are preserving the original volatility structure. That is not a cosmetic choice; it changes the components themselves.
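The effect of standardizing can be seen in a small simulated example. One asset is made far more volatile than the rest and unrelated to them (an assumption chosen to exaggerate the effect), and the leading eigenvector is compared between the covariance and correlation matrices.

```python
import numpy as np

rng = np.random.default_rng(4)
T, N = 1000, 5

# Assets 1..4 share a common shock; asset 0 is very volatile and unrelated (not real data).
common = rng.normal(0.0, 0.01, size=(T, 1))
returns = common + rng.normal(0.0, 0.005, size=(T, N))
returns[:, 0] = rng.normal(0.0, 0.08, size=T)

def leading_eigenvector(matrix):
    eigenvalues, eigenvectors = np.linalg.eigh(matrix)
    return eigenvectors[:, np.argmax(eigenvalues)]

cov_pc1 = leading_eigenvector(np.cov(returns, rowvar=False))
corr_pc1 = leading_eigenvector(np.corrcoef(returns, rowvar=False))

print("covariance PC1 weights: ", np.round(cov_pc1, 2))
print("correlation PC1 weights:", np.round(corr_pc1, 2))
```

On the covariance matrix the first component is essentially the single volatile asset; on the correlation matrix it is the shared movement of the other four.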

What do principal components tell you about portfolio risk?

A principal component is best understood as a risk direction, not as an asset and not necessarily as an economically named factor. The associated eigenvector gives the portfolio weights of an “eigenportfolio,” and the associated eigenvalue gives the variance of that portfolio direction. In that sense, PCA converts the covariance matrix into a basis of orthogonal portfolios.

This viewpoint makes the risk interpretation concrete. If a portfolio has a large projection onto the first eigenvector, it is exposed to the highest-variance direction in the market. If it has offsetting exposures to the first component but large exposure to the third and fourth, then its risk may be more about relative-value or sector structure than broad beta.

This is useful for three related tasks. First, it helps summarize risk: a few numbers can explain most of a portfolio’s modeled variance. Second, it helps attribute risk: you can ask how much each principal direction contributes to total variance. Third, it helps monitor changing structure: if the first component begins explaining much more variance than usual, correlations may be rising and diversification may be disappearing.

That last point matters in stress periods. When markets become more synchronized, the top component often grows. In equities, this is the familiar experience that “everything trades together” during crisis episodes. PCA gives a quantitative way to see that synchronization as a larger leading eigenvalue.
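One simple way to track this is the share of total variance carried by the leading eigenvalue over a rolling window. The sketch below uses simulated calm and stress regimes; the window length of 125 days and the shock sizes are arbitrary assumptions.

```python
import numpy as np

def leading_share(window_returns):
    """Fraction of total variance explained by the largest eigenvalue of the correlation matrix."""
    corr = np.corrcoef(window_returns, rowvar=False)
    eigenvalues = np.linalg.eigvalsh(corr)  # ascending order
    return eigenvalues[-1] / eigenvalues.sum()

# Simulated data: a calm regime followed by a stress regime with a much larger common shock.
rng = np.random.default_rng(5)
N = 10
calm = rng.normal(0.0, 0.005, size=(250, 1)) + rng.normal(0.0, 0.01, size=(250, N))
stress = rng.normal(0.0, 0.03, size=(250, 1)) + rng.normal(0.0, 0.01, size=(250, N))
returns = np.vstack([calm, stress])

window = 125
shares = np.array([
    leading_share(returns[end - window:end])
    for end in range(window, len(returns) + 1)
])

print("first window:", round(float(shares[0]), 3), "last window:", round(float(shares[-1]), 3))
```

A sustained rise in this series is the quantitative counterpart of "everything trades together".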

When does PCA reliably capture market risk structure?

It works well when the data really do have low effective dimensionality. Financial returns are noisy at the single-name level, but they are not arbitrary collections of independent series. They are shaped by shared macro shocks, common funding conditions, sector structures, and benchmark flows. Those mechanisms create correlation patterns that concentrate variance into a few directions.

PCA is attractive because it does not need a detailed structural theory to start extracting that concentration. You do not have to predefine “market,” “value,” or “curve steepening” factors. You estimate a covariance or correlation matrix and let the dominant directions emerge from the data.

That can be especially helpful when the factor structure is obvious in hindsight but hard to specify cleanly ex ante. In cross-asset portfolios, for example, a common risk direction might mix equities, credit, and rates in a way that no simple label captures. PCA can still find it if the covariance matrix contains it.

There is also a computational reason. Once the covariance matrix is estimated, PCA is a standard matrix decomposition problem. In modern software this is routine, and scalable implementations exist for large datasets, including approximate randomized solvers and incremental methods for out-of-core computation.

When and why does PCA fail for portfolio risk?

| Failure mode | Why it matters | Signals to watch | Practical fixes |
|---|---|---|---|
| Sample noise (small T) | Eigenvectors dominated by noise | Many tiny eigenvalues | Shrinkage and RMT cleaning |
| Nonstationarity, rotations | Components change over time | Leading eigenvalue spikes | Rolling windows and EWMA |
| Measurement error | Artifacts distort components | Inconsistent component weights | Clean data and long-run covariance |
| Interpretability limits | Orthogonality not economic | Unclear factor loadings | Sparse PCA or factor rotation |

The first problem is sample noise. PCA on a sample covariance matrix gives sample principal components, not true population components. If you have many assets and not much history, the smaller eigenvalues and their eigenvectors can be dominated by estimation error. In finance this is not a corner case; it is normal.

Random matrix theory is useful here because it explains why empirical eigenvalue spectra are distorted when N is not small relative to T, where N is the number of assets and T the sample length. In that regime, many apparent components are just noise. Practitioners often respond by cleaning the covariance matrix, clipping noisy eigenvalues, shrinking estimates, or keeping only the leading components.
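As one illustration of eigenvalue clipping, the sketch below uses the Marchenko-Pastur upper edge for a pure-noise correlation matrix, (1 + sqrt(N/T))^2, as the cutoff. The function name `clip_correlation`, the choice to replace the noise band with its average, and the simulated data are all assumptions; practitioners use several variants of this idea.

```python
import numpy as np

def clip_correlation(corr, T):
    """Flatten eigenvalues below the Marchenko-Pastur edge and rebuild a correlation matrix."""
    N = corr.shape[0]
    lambda_plus = (1.0 + np.sqrt(N / T)) ** 2   # upper edge for unit-variance pure noise
    eigenvalues, eigenvectors = np.linalg.eigh(corr)
    noisy = eigenvalues <= lambda_plus
    cleaned = eigenvalues.copy()
    if noisy.any():
        # Replace the noise band by its average, which preserves the trace.
        cleaned[noisy] = eigenvalues[noisy].mean()
    rebuilt = (eigenvectors * cleaned) @ eigenvectors.T
    # Rescale so the diagonal is exactly one again.
    scale = np.sqrt(np.diag(rebuilt))
    return rebuilt / np.outer(scale, scale), int((~noisy).sum())

# Simulated returns with one market factor and few observations per asset (not real data).
rng = np.random.default_rng(6)
T, N = 100, 50
market = rng.normal(0.0, 0.01, size=(T, 1))
returns = 0.7 * market + rng.normal(0.0, 0.01, size=(T, N))

corr = np.corrcoef(returns, rowvar=False)
cleaned, kept = clip_correlation(corr, T)
print("components kept above the noise edge:", kept)
```

The cleaned matrix keeps the directions that stand out from the noise band and stops pretending that the ordering within the band carries information.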

The second problem is instability over time. Even the leading eigenvectors move. Some of that movement is sampling error, and some reflects real market change. If the factor structure rotates, a component estimated on the last two years may not represent the next quarter well. This is especially important when people attach economic meaning to a component and forget that PCA directions are estimated objects, not fixed laws.
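A simple stability check compares the leading eigenvector across estimation windows using the absolute value of their dot product, which is 1 for identical directions and 0 for orthogonal ones. The sketch below uses simulated windows; the helper names and the rotated loading pattern are assumptions for illustration.

```python
import numpy as np

def leading_eigenvector(returns):
    S = np.cov(returns, rowvar=False)
    eigenvalues, eigenvectors = np.linalg.eigh(S)  # ascending order
    return eigenvectors[:, -1]

def overlap(v1, v2):
    # Absolute value because the sign of an eigenvector is arbitrary.
    return abs(float(v1 @ v2))

rng = np.random.default_rng(7)
T, N = 250, 8
broad = np.ones(N)                                           # all assets load the same way
split = np.array([1, 1, 1, 1, -1, -1, -1, -1], dtype=float)  # two opposing clusters

def simulate(loadings):
    factor = rng.normal(0.0, 0.02, size=(T, 1))
    return factor * loadings + rng.normal(0.0, 0.005, size=(T, N))

window_1 = simulate(broad)
window_2 = simulate(broad)
window_3 = simulate(split)  # regime in which the dominant direction has rotated

stable = overlap(leading_eigenvector(window_1), leading_eigenvector(window_2))
rotated = overlap(leading_eigenvector(window_2), leading_eigenvector(window_3))
print("overlap, same regime:", round(stable, 3), "| after rotation:", round(rotated, 3))
```

A low overlap between adjacent windows is a warning that a component estimated on history may not describe the next period.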

The third problem is measurement error and preprocessing sensitivity. If the underlying data are noisy, interpolated, stale, or asynchronous, PCA can learn artifacts. This matters in fixed income curves, where derived forward-rate data can contain observational errors, and in any dataset where missing values or microstructure effects are material. The decomposition will still produce clean-looking components, but clean-looking is not the same as correct.

The fourth problem is interpretability. Orthogonality is a mathematical convenience, not an economic principle. True market drivers need not be orthogonal, and PCA components can mix several economic effects into one statistical direction. The first few components are often interpretable, but beyond that the story can become fragile.

How many principal components should I retain for a PCA-based risk model?

| Rule | What it measures | Typical threshold | Pros | Cons |
|---|---|---|---|---|
| Explained variance | Share of total variance | 80%–95% | Simple quantitative rule | May retain noisy components |
| Stability across windows | Temporal factor consistency | No fixed threshold | Favors robust factors | Requires extra monitoring |
| Cross-validation | Out-of-sample generalization | Choose by validation score | Guards against overfitting | Needs probabilistic PCA |
| Economic interpretability | Meaningful factor mapping | No numeric threshold | Aligns with decisions | Subjective judgment required |

This is the main modeling judgment in applied PCA. Keeping too many components reintroduces noise. Keeping too few can throw away real risk.

An explained-variance threshold is a common starting point: keep enough components to explain, say, 80% to 95% of total variance. But this is only a statistical rule of thumb. In risk work, what matters is not just how much variance is explained in sample, but whether the retained components are stable and useful out of sample.
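The threshold rule itself is a short computation. In this sketch the function name `components_for_threshold` and the toy eigenvalues are hypothetical.

```python
import numpy as np

def components_for_threshold(eigenvalues, threshold=0.90):
    """Smallest K whose cumulative explained-variance ratio reaches the threshold."""
    ordered = np.sort(np.asarray(eigenvalues, dtype=float))[::-1]
    cumulative = np.cumsum(ordered) / ordered.sum()
    k = int(np.searchsorted(cumulative, threshold)) + 1
    return min(k, len(ordered))

# Toy spectrum: cumulative shares are 50%, 80%, 90%, 100%.
eigenvalues = np.array([5.0, 3.0, 1.0, 1.0])
for threshold in (0.75, 0.85, 0.95):
    print(threshold, "->", components_for_threshold(eigenvalues, threshold), "components")
```

The rule only counts in-sample variance, so it is best read as a first pass before the stability and interpretability checks.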

A more practical standard combines three ideas. Keep components that explain meaningful variance, remain reasonably stable across nearby estimation windows, and support a coherent risk interpretation. If a component appears only because of a small window choice and then vanishes, it should not anchor major portfolio decisions.

This is one reason probabilistic or cross-validated approaches can help. They force the question away from “how much variance can I fit?” and toward “how much structure generalizes?” In a production risk model, that is usually the more important question.

Why treat PCA as a statistical risk model rather than only a decomposition step?

It is tempting to think of PCA as a neutral preprocessing step. In portfolio risk, it is better understood as a statistical risk model. The moment you decide how to estimate returns, what window to use, whether to standardize, how many components to retain, and whether to clean the spectrum, you have made modeling choices with consequences for exposures and forecasts.

This is why governance and validation matter. Any material PCA-based risk model should be checked for conceptual soundness, monitored over time, and evaluated against realized outcomes. The issue is not bureaucracy; it is that models can fail in two distinct ways. They can be wrong because the estimate is poor, and they can be wrong because users interpret them too strongly. PCA is vulnerable to both.

Good monitoring often asks simple questions. How much variance does the first component explain today versus historically? How stable are the leading eigenvectors across rolling windows? Are portfolio risk contributions dominated by a component that recently rotated? Has the sample ratio of assets to observations become too high for the chosen estimation method? These questions do not eliminate model risk, but they make it visible.

How is PCA applied in real-world portfolio risk workflows?

In practice, PCA is used wherever the dimension of the risk problem is large and the correlation structure matters. A statistical equity risk model may use PCA to identify common latent factors from asset returns. A rates desk may use PCA on yield-curve changes to summarize duration risk beyond simple key-rate buckets. A margining system may filter returns through PCA-derived latent factors to capture time-varying correlation more efficiently than working instrument by instrument.

The same mechanism is operating in each case. A high-dimensional return space is being compressed into a smaller latent factor space, and portfolio exposures are measured in that space. The differences are in data quality, horizon, estimation method, and how much interpretability the user requires.

The danger is to assume that because the linear algebra is the same, the reliability is the same. Equity returns, government curves, option surfaces, and sparse cross-asset books do not present the same noise structure. The method is portable; the quality of the result is not automatically portable.

Quick rules to avoid common PCA pitfalls in risk analysis

PCA tells you where variance has been concentrated in your data. It does not by itself tell you why that happened, whether it will persist, or whether the weak components are real. If you remember that, most misuse becomes easier to avoid.

An analogy helps, with limits. PCA is like rotating a map so that the widest spread of points lies horizontally. That makes the structure easier to summarize with a few axes. What the analogy explains is the geometric idea of finding preferred directions of variation. Where it fails is economics: markets are not just clouds of points. The cloud itself changes shape over time, and the axes you find may partly reflect noise, sampling design, and regime shifts.

Conclusion

PCA for portfolio risk is a way to rewrite a complex covariance structure in terms of a few dominant, uncorrelated risk directions. Its value comes from a real feature of markets: many assets move together because common shocks dominate idiosyncratic ones. When that is true, PCA can reveal what a portfolio is actually exposed to, often more clearly than a raw list of holdings or a full noisy covariance matrix.

The idea to remember tomorrow is simple: PCA works when risk is lower-dimensional than the position list, but it breaks when you mistake a sample-dependent statistical summary for a stable economic truth. Used carefully, it is one of the clearest tools for seeing concentration, correlation, and hidden common exposure in a portfolio.

Frequently Asked Questions

Running PCA on the covariance matrix preserves each asset’s volatility scale so components reflect absolute risk in currency units, whereas running it on the correlation matrix standardizes assets first and makes PCA focus on pure co-movement patterns. Neither is universally correct: choose covariance if you care about portfolio variance in native units, and choose correlation when a few very high‑volatility names would otherwise dominate the decomposition. PCA implementations typically center but do not scale by default, so scaling is an explicit modeling choice that changes the components.

There is no single rule. A common starting rule of thumb is to retain enough components to explain a large share of variance (e.g., 80–95%), but that can keep noisy directions. A more robust practice is to retain components that (a) explain meaningful variance, (b) are stable across nearby estimation windows, and (c) admit a coherent risk interpretation or cross‑validated predictive value. Probabilistic or cross‑validation approaches and out‑of‑sample checks help move the decision from “how much fit in sample” to “what structure generalizes.”

PCA estimates are sample objects and can rotate or change magnitude as market regimes shift; some movement is sampling error and some is genuine structural change, so you should monitor stability across rolling windows and be cautious using historical components as fixed economic factors. There is no guaranteed temporal prescription: window length, weighting, and whether to use dynamic factor models are modeling choices that affect robustness.

A principal component is a statistical risk direction (an orthogonal eigenportfolio) and should not automatically be equated with a named economic factor; the first one or two components are often interpretable (market, major sector splits, or curve level/slope), but beyond that components can mix economic effects and be hard to name reliably. Interpreting components requires caution because orthogonality is a mathematical convenience, not an economic law.

When the number of assets N is large relative to the sample length T, many empirical eigenvalues and eigenvectors are dominated by estimation noise; random matrix theory explains these distortions and suggests remedies such as eigenvalue clipping, parametric spectrum fitting, or shrinkage (“cleaning”) to improve out‑of‑sample behavior. Which cleaning method works best depends on the dataset and test: RMT‑based and shrinkage schemes have both shown empirical success, but results are dataset‑dependent.

Standardizing (scaling) returns before PCA makes the model focus on co‑movement regardless of absolute volatility; leaving returns unscaled preserves the volatility ordering and can make PCA allocate components to high‑variance assets rather than structural correlations. Because PCA routines typically only center by default, scaling is an explicit, consequential preprocessing choice you must make based on whether relative co‑movement or absolute variance matters for your risk objective.

Applied to yield‑curve changes, PCA commonly rediscovers level, slope, and curvature because those are dominant, low‑dimensional curve movements, but interest‑rate data (forward rates) are often noisy or interpolated so classical PCA on raw forward rates can misidentify factors; estimating a long‑run covariance or otherwise addressing measurement error improves recovery of true curve factors.

PCA‑based risk models should be governed and validated: maintain model inventory, check conceptual soundness, monitor how much variance top components explain over time, test eigenvector stability across windows, and run outcomes analysis against realized risks; regulators and supervisory guidance expect organizations to document limitations and increase validation frequency for high‑impact models.

For very high‑dimensional problems, randomized SVD implementations give large speed and memory gains though the inverse transform may be approximate, and SparsePCA (L1‑penalized PCA) can improve interpretability and reduce overfitting when sample sizes are small; these algorithmic variants are practical tools but introduce additional approximation or tuning choices.

PCA is used operationally in systems such as margining and portfolio aggregation because it compresses correlation structure efficiently, but its outputs can mislead for concentrated portfolios or when components are unstable; empirical tests of PCA‑based margining report good performance in many settings but warn that concentrated books and sample choices require special care.
