What Is Rolling Window Analysis?

Learn what rolling window analysis is in trading, how it works, why it matters for regime shifts, and where rolling estimates and walk-forward tests fail.

Introduction

Rolling window analysis is a way to study market behavior by repeatedly recalculating the same statistic, signal, or model on a moving slice of data rather than on the entire history at once. That sounds like a small technical choice, but it changes the question completely. A full-sample estimate asks, “What was true on average across all these years?” A rolling estimate asks, “What looks true right now, given the recent past I could actually have seen at the time?”

That distinction matters because markets are not stationary in the simple textbook sense. Volatility clusters, correlations rise and fall, factor exposures drift, and a strategy that looked stable over ten years may have worked only in three specific regimes. If you compress all of that into a single average, you often get a number that is mathematically correct and economically unhelpful. Rolling window analysis exists to expose that time variation instead of hiding it.

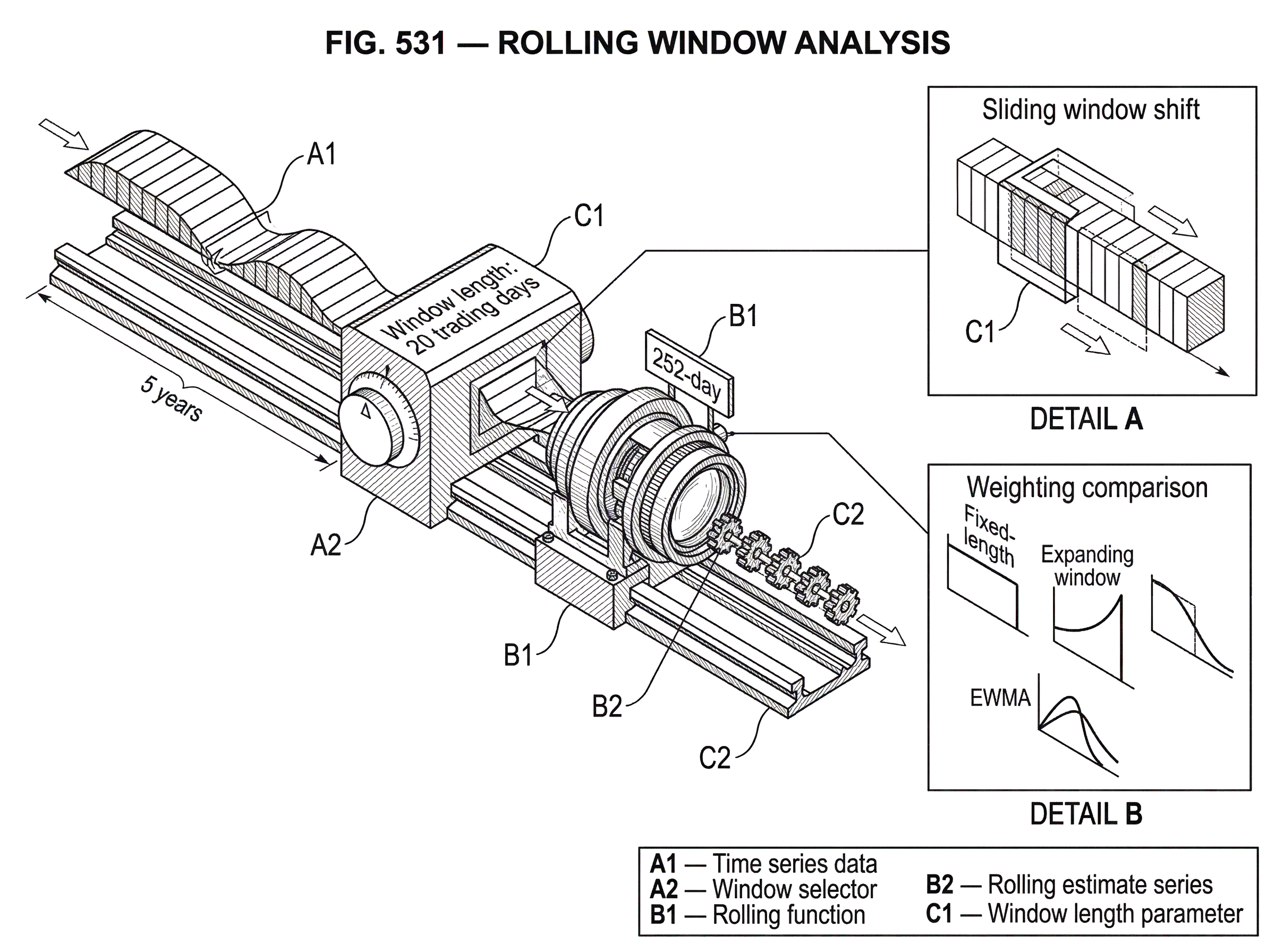

In trading, the idea shows up everywhere: moving averages, rolling volatility, rolling Sharpe estimates, rolling correlations, rolling regressions, and walk-forward optimization all rely on the same basic mechanism. You choose a window, compute something on that window, shift forward, compute again, and compare the sequence of results. The sequence is the point. It tells you not just how large a quantity is, but how stable it is, when it changes, and whether a model is reacting to current conditions or to stale history.

Why prefer rolling (local) estimates over a full-sample estimate?

The simplest way to understand rolling windows is to start with the problem they solve. Suppose you want to estimate the volatility of a daily return series. If you use all five years of data, your estimate is influenced equally by old calm periods and recent turbulent periods. That can be useful if your goal is a long-run descriptive summary. But it is a poor choice if you are sizing positions tomorrow. For tomorrow’s risk, the relevant question is not what volatility used to be on average; it is what volatility has looked like in the recent past.

A rolling window answers that by replacing one global sample with many local samples. If the window length is 60 trading days, the first estimate uses days 1 through 60, the next uses days 2 through 61, the next uses days 3 through 62, and so on. Each estimate is built from a fixed amount of recent information. That creates a time series of estimates, each tied to a particular point in time.

The important invariant is this: at each date, the estimate should use only the observations inside the chosen window. That sounds obvious, but it is what makes the analysis temporally honest. You are not letting future observations leak backward into past estimates, and you are not letting ancient data dominate a quantity meant to describe current conditions.

This is why rolling window analysis is more than a charting trick. It is a disciplined way to ask time-local questions of time-varying data. The window is a filter on relevance. It says: among all the history we have, these are the observations we are willing to treat as informative for this date.

How does a rolling window compute time‑local statistics?

Mechanically, a rolling window has three ingredients: the data being analyzed, the rule that defines which observations belong in each window, and the function computed inside each window. The data might be prices, returns, spreads, factor values, or features used in a predictive model. The window rule might be a fixed number of observations such as 20 bars, or a calendar rule such as 90 days. The function might be something simple like a mean or standard deviation, or something more structured like a regression coefficient.

If we let x_t denote the value observed at time t, and the window length is w, then the rolling mean at time t is the average of x_(t-w+1) through x_t. The same pattern applies to many other quantities. A rolling standard deviation uses the observations in that interval to estimate dispersion. A rolling correlation uses paired observations from two series over that interval. A rolling regression fits the model again on that interval and stores the estimated coefficients at the end of the window.
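The definition above can be checked directly. The following is a minimal sketch, assuming a made-up eight-point series and a window of w = 3; it compares the pandas rolling mean with a hand-written loop over x_(t-w+1) through x_t.

```python
import numpy as np
import pandas as pd

# Hypothetical toy series; the values are illustrative only.
x = pd.Series([2.0, 4.0, 6.0, 8.0, 10.0, 12.0, 14.0, 16.0])
w = 3  # window length

# pandas: right-aligned rolling mean, NaN until a full window exists.
rolling_mean = x.rolling(window=w).mean()

# Manual version of the same definition: average of x_(t-w+1) .. x_t.
manual = pd.Series(np.nan, index=x.index)
for t in range(w - 1, len(x)):
    manual.iloc[t] = x.iloc[t - w + 1 : t + 1].mean()

print(pd.DataFrame({"x": x, "pandas": rolling_mean, "manual": manual}))
```

The first w - 1 entries are undefined in both versions because no full window exists yet; after that, the two columns should agree.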

That last alignment detail matters. In rolling regression, statsmodels’ RollingOLS stores estimates at the end of the window: a model fit on observations up to time t is aligned with time t. This right-alignment is usually the economically correct convention for trading because it matches what would have been known at that date. If you center a window for visualization, you can make charts look smoother, but you also risk creating timing interpretations that do not match live decision-making.
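To make the alignment point concrete, here is a small sketch using pandas' `center=True` option on a hypothetical series with a single spike. The centered window reacts one bar before the spike actually occurs, which is information a live trader would not have had.

```python
import pandas as pd

# Flat series with one spike at position 5 (illustrative values).
x = pd.Series([1.0, 1.0, 1.0, 1.0, 1.0, 10.0, 1.0, 1.0, 1.0])

right = x.rolling(window=3).mean()                  # uses t-2 .. t
centered = x.rolling(window=3, center=True).mean()  # uses t-1 .. t+1

# First position at which each estimate moves away from 1.0.
first_right = (right > 1.0).idxmax()
first_centered = (centered > 1.0).idxmax()

print("right-aligned reacts at:", first_right)
print("centered reacts at:", first_centered)
```

A centered window is fine for a descriptive chart, but any signal built from it would embed look-ahead.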

Software libraries expose this structure directly. In pandas, .rolling() returns a rolling-window object on which you can compute statistics such as count, sum, mean, median, variance, standard deviation, min, max, correlation, covariance, quantiles, ranks, and custom functions through apply or aggregate. Pandas also supports expanding windows through .expanding() and exponentially weighted windows through .ewm(). Those are closely related ideas, but they answer different questions because they define relevance differently.

Example: how a 20‑day rolling volatility responds to shocks

Imagine a trader monitoring the 20-day rolling volatility of an asset’s daily returns. For the first 19 trading days, there is not yet a full window, so the series may be undefined unless the implementation allows shorter partial windows. On day 20, the trader computes the standard deviation of returns from days 1 through 20. On day 21, day 1 drops out and day 21 enters, so the estimate is now based on days 2 through 21. Nothing else changes. The formula is the same; only the sample moves.

Now suppose a large market shock enters the window. The estimate rises, not because the volatility formula changed, but because the composition of the sample changed. As the shock remains inside the 20-day window, it continues to influence the estimate. Once it ages out, the estimate may fall sharply even if no new calm regime has fully emerged. That behavior is not a bug. It is the direct consequence of defining relevance by a fixed lookback horizon.

This example shows why rolling-window outputs often have a stepped or episodic feel. The statistic is not tracking some hidden smooth truth directly. It is reacting to which observations are currently included. If one extreme return enters or leaves the window, the estimate can move materially. The shorter the window, the stronger this sensitivity tends to be.
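The shock behavior described in this example can be reproduced with simulated data. This is a sketch under stated assumptions: returns are drawn from a normal distribution with 1% daily volatility, and a single hypothetical -10% return is placed at position 60.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)
returns = pd.Series(rng.normal(0.0, 0.01, size=120))  # simulated calm returns
shock_day = 60
returns.iloc[shock_day] = -0.10                       # one hypothetical shock

vol20 = returns.rolling(window=20).std()

before = vol20.iloc[shock_day - 1]      # last window without the shock
during = vol20.iloc[shock_day]          # shock has just entered
last_with = vol20.iloc[shock_day + 19]  # final window that still contains it
after = vol20.iloc[shock_day + 20]      # shock has aged out

print(f"before={before:.4f} during={during:.4f} "
      f"last_with={last_with:.4f} after={after:.4f}")
```

The drop between `last_with` and `after` happens on a day when nothing unusual occurred in the market; the only change is that the shock left the sample.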

The same logic applies to rolling correlations. A stock and the market may show low 60-day correlation in one regime, then suddenly move together during stress. The rolling correlation rises because the recent joint behavior changed. A full-sample correlation would blur that regime shift into a single average and tell you much less about present co-movement risk.
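A rolling correlation can be sketched the same way. The example below assumes a simulated two-regime world: the stock is independent of the market for the first 200 days and then loads heavily on it. All series and parameters are made up for illustration.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(1)
n = 400
market = rng.normal(0.0, 0.01, n)
idio = rng.normal(0.0, 0.01, n)

# Regime 1: stock is pure idiosyncratic noise. Regime 2: it tracks the market.
stock = idio.copy()
stock[200:] = market[200:] + 0.3 * idio[200:]

market = pd.Series(market)
stock = pd.Series(stock)

roll_corr = stock.rolling(60).corr(market)  # 60-day rolling correlation
full_corr = stock.corr(market)              # one number for the whole sample

calm = roll_corr.iloc[100:200].mean()   # windows entirely inside regime 1
stress = roll_corr.iloc[300:].mean()    # windows entirely inside regime 2
print(f"calm={calm:.2f} full={full_corr:.2f} stress={stress:.2f}")
```

The full-sample figure lands between the two regimes and describes neither of them well.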

How should I choose the window length for rolling estimates?

Most misunderstandings of rolling window analysis come from treating the window length as a harmless implementation detail. It is not. The window length expresses a belief about how quickly the underlying process changes and how much data you need before an estimate becomes usable.

A short window is adaptive. It reacts quickly to new information because old observations are discarded soon. That is useful when regime shifts are frequent or when recency is especially valuable. But short windows are also noisy. With fewer observations, estimates have higher sampling error. A rolling 10-day volatility estimate can swing dramatically simply because 10 observations do not pin down variance very precisely.

A long window is more stable. It reduces estimation noise because more observations are included. But the price of stability is inertia. If the market changes quickly, a 252-day rolling estimate may still be dominated by conditions from many months ago. In trading terms, you gain statistical precision but lose responsiveness.

This is the central tradeoff: adaptivity versus estimation error. There is no universally correct window because the right answer depends on the process, the frequency of the data, the decision horizon, and what cost you assign to being slow versus being noisy. A high-frequency microstructure signal and a monthly macro strategy should not share the same lookback by default.
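Both sides of the tradeoff can be measured on simulated data. In this sketch, true volatility is assumed to be 1% for the first 1,000 days and 2% afterwards; the noise of each estimator is measured in the calm segment, and the lag is the number of days after the break before each estimate crosses a hypothetical 1.5% threshold.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(42)
n, break_at = 2000, 1000
sigma = np.where(np.arange(n) < break_at, 0.01, 0.02)  # assumed true volatility
returns = pd.Series(rng.normal(0.0, 1.0, n) * sigma)

vol_short = returns.rolling(10).std()
vol_long = returns.rolling(252).std()

# Estimation noise: dispersion of each estimate while true vol is constant.
noise_short = vol_short.iloc[:break_at].std()
noise_long = vol_long.iloc[:break_at].std()

# Responsiveness: days after the break until the estimate exceeds 1.5%.
threshold = 0.015
lag_short = int((vol_short.iloc[break_at:] > threshold).to_numpy().argmax())
lag_long = int((vol_long.iloc[break_at:] > threshold).to_numpy().argmax())

print(f"noise: short={noise_short:.4f} long={noise_long:.4f}")
print(f"lag:   short={lag_short} days, long={lag_long} days")
```

The short window should be noisier but faster, and the long window quieter but slower; which cost matters more depends on the decision being made.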

The practical consequence is that window selection is itself part of model design. If you choose it by searching over many possibilities and reporting only the best result, you have created a new source of overfitting. This is why rolling windows naturally lead into walk-forward testing, time-series cross-validation, and multiple-testing corrections. The flexibility that makes rolling analysis useful also makes it easy to fool yourself.

Fixed vs expanding vs exponentially weighted windows: which should I use?

| Window type | Memory | Responsiveness | Discontinuity | Best for |
|---|---|---|---|---|
| Fixed | hard cutoff | high | step change at boundary | interpretable recent horizon |
| Expanding | growing memory | low | none (cumulative) | cumulative trends, stability |
| Exponentially weighted | fading memory | medium–high | smooth decay | recency with retained history |

There are three common ways to define how past observations matter, and the choice changes the behavior of the analysis.

A fixed rolling window uses the most recent w observations and gives zero weight to anything older. This creates a hard cutoff. The benefit is clear interpretability: everything inside the horizon matters, everything outside does not. The drawback is discontinuity. An observation can have full influence one day and none the next simply because it crossed the boundary.

An expanding window starts with an initial sample and then keeps all past observations as time advances. In pandas, this is handled by .expanding(). Expanding windows are useful when you believe the underlying relationship is stable enough that old data remains informative, or when you want cumulative estimates such as running means. But they are much less adaptive. Because each successive sample is a superset of the previous one, every new observation carries less weight than the last, and the estimate becomes harder to move over time.

An exponentially weighted window fades older data gradually rather than dropping it abruptly. In pandas, .ewm() computes exponentially weighted moments such as mean, variance, standard deviation, correlation, and covariance. This is often a better match for financial intuition: recent observations should matter more, but old ones should not vanish overnight. An EWMA volatility estimate, for example, can react quickly to shocks while still retaining memory of prior conditions.

The analogy is a memory system. A fixed rolling window has a hard memory limit; an expanding window has near-perfect memory; an exponentially weighted window has forgetful memory, where old experiences still matter but with diminishing force. The analogy helps explain responsiveness, but it fails in one place: real statistical estimates are not just memory devices. Their bias, variance, and timing properties depend on the specific function being estimated and the actual data-generating process.
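The three memory schemes can be compared on the same series. This sketch assumes a deterministic series alternating between +1% and -1% with one hypothetical -10% shock, and uses a 20-observation fixed window, an expanding window, and an EWM with `span=20` (the span is an arbitrary choice for illustration).

```python
import numpy as np
import pandas as pd

n = 300
r = pd.Series(np.where(np.arange(n) % 2 == 0, 0.01, -0.01))
r.iloc[100] = -0.10  # single hypothetical shock

fixed = r.rolling(20).std()                    # hard cutoff after 20 bars
expanding = r.expanding(min_periods=20).std()  # never forgets
ewma = r.ewm(span=20, min_periods=20).std()    # fades gradually

# Largest one-day fall in each estimate after the shock.
drop_fixed = fixed.diff().min()
drop_ewma = ewma.diff().min()

print(f"largest one-day drop: fixed={drop_fixed:.4f} ewma={drop_ewma:.4f}")
print(f"final values: fixed={fixed.iloc[-1]:.4f} "
      f"expanding={expanding.iloc[-1]:.4f} ewma={ewma.iloc[-1]:.4f}")
```

The fixed window shows a cliff when the shock leaves the sample, the EWM estimate decays in small steps, and the expanding estimate still carries the shock at the end of the series.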

When to use rolling indicators, diagnostics, or rolling models in trading

In trading practice, rolling analysis appears in three closely related forms.

The first is the rolling indicator: moving averages, rolling highs and lows, rolling z-scores, rolling volatility, rolling drawdown, rolling volume statistics. These summarize recent market state. They are usually used directly in signals, risk filters, or execution logic.

The second is the rolling diagnostic: rolling Sharpe, rolling win rate, rolling turnover, rolling beta, rolling correlation, rolling autocorrelation. These are less about generating trades and more about checking whether a strategy or market relationship is behaving differently over time. If a strategy’s rolling Sharpe collapses in certain regimes, that tells you something important that a full-sample Sharpe conceals.

The third is the rolling model: rolling regressions, rolling classification models, and rolling forecast systems re-estimated as the sample advances. Statsmodels’ RollingOLS is a clean example. It repeatedly fits ordinary least squares on a fixed sliding window, producing time-varying coefficients. In factor investing, this is often used to study changing exposure to market, size, value, or sector factors. A stock or portfolio may appear to have a stable beta in a full-sample regression while actually shifting materially across subperiods.

Suppose you fit a 60-month rolling regression of a portfolio’s excess return on market excess return. If the estimated beta rises from 0.8 to 1.3 during a stress regime, that is telling you the portfolio became more market-sensitive in precisely the period where such sensitivity mattered most. A single regression over the full history may report a beta near 1.0 and miss the transition. Rolling regression turns a static parameter into a path, and the path is often more informative than the average.
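The beta example can be sketched without a regression library. For a single regressor with an intercept, the OLS slope equals cov(x, y) / var(x), so a rolling covariance divided by a rolling variance gives the rolling beta. The data below are simulated, with the true beta assumed to shift from 0.8 to 1.3 halfway through.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(7)
n = 240  # months of simulated data
mkt = rng.normal(0.005, 0.04, n)                     # market excess return
true_beta = np.where(np.arange(n) < 120, 0.8, 1.3)   # assumed regime shift
port = true_beta * mkt + rng.normal(0.0, 0.01, n)    # portfolio excess return

mkt = pd.Series(mkt)
port = pd.Series(port)

# 60-month rolling beta: slope of port on mkt inside each window.
rolling_beta = port.rolling(60).cov(mkt) / mkt.rolling(60).var()
full_beta = port.cov(mkt) / mkt.var()

early = rolling_beta.iloc[119]  # window lies entirely in the first regime
late = rolling_beta.iloc[239]   # window lies entirely in the second regime
print(f"early={early:.2f} full-sample={full_beta:.2f} late={late:.2f}")
```

The full-sample beta sits between the two regimes, which is exactly the averaging effect described above.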

How to apply rolling windows in backtesting and walk‑forward optimization

| Approach | How it works | Overfitting risk | Computation cost | Best for |
|---|---|---|---|---|
| Static in-sample fit | fit once on full history | high (historic overfit) | low | stable regimes, initial research |
| Periodic walk-forward | optimize on trailing window then test | moderate | medium | time-varying markets, robustness check |
| Frequent re-optimization | refit very often to new data | high (noise hugging) | high | highly nonstationary markets (use cautiously) |

Once you start using models or parameters that depend on recent data, evaluation must also respect time. This is where rolling window analysis meets backtesting.

Walk-forward optimization is the most direct example. QuantConnect describes it as periodically adjusting strategy logic or parameters to optimize an objective over a trailing window of time. The mechanism is straightforward: choose a trailing training window, evaluate candidate parameter sets on that window, select the best-performing set according to an objective, trade the next out-of-sample segment, then roll forward and repeat.

This exists for the same reason rolling volatility exists: markets change. Parameters that fit one regime may fit another poorly. But the process introduces a second layer of model risk. You are no longer just fitting a trading rule; you are also fitting a re-optimization schedule, a lookback length, and often a search space. Optimizing too frequently may hug recent noise and raise overfitting risk. Optimizing less frequently reduces computation and often improves robustness, but may leave the strategy slow to adapt.

A useful way to think about walk-forward schemes is that they create a sequence of local research problems. At each forecast origin, you ask: using only the data available up to now, which model or parameter setting would I have chosen? The test is then whether that choice works on the next unseen segment. The purpose is not to find the single best historical parameter, but to estimate how well your selection process survives contact with new data.
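The walk-forward loop can be sketched in a few lines. Everything here is an assumption made for illustration: the returns are simulated, the strategy is a toy rule (long when price is above its trailing mean), the objective is mean in-sample return, and the 500-bar training window and 100-bar test segment are arbitrary.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(3)
returns = pd.Series(rng.normal(0.0003, 0.01, size=1500))  # simulated returns
prices = (1 + returns).cumprod()

def strategy_returns(lookback: int) -> pd.Series:
    """Toy rule: long when price is above its trailing mean.
    The position is lagged one bar so each return uses only past data."""
    signal = (prices > prices.rolling(lookback).mean()).astype(float)
    return signal.shift(1).fillna(0.0) * returns

candidates = [10, 20, 50, 100]                      # hypothetical search space
strat = {lb: strategy_returns(lb) for lb in candidates}

train_len, test_len = 500, 100
oos_parts, chosen = [], []
start = 0
while start + train_len + test_len <= len(returns):
    train = slice(start, start + train_len)
    test = slice(start + train_len, start + train_len + test_len)
    # Select the lookback using the trailing training window only.
    best = max(candidates, key=lambda lb: strat[lb].iloc[train].mean())
    chosen.append(best)
    # Record performance on the next unseen segment.
    oos_parts.append(strat[best].iloc[test])
    start += test_len  # roll forward by one test segment

oos = pd.concat(oos_parts)
print("chosen lookbacks:", chosen)
print("out-of-sample mean daily return:", oos.mean())
```

The stitched `oos` series evaluates the selection process rather than any single parameter. On pure noise like this, no lookback has a real edge, so the chosen values will tend to wander from fold to fold.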

Why standard cross‑validation fails for time series and what to use instead

| Method | Temporal order | Leakage risk | Fold dependence | When to use |
|---|---|---|---|---|
| Standard k-fold | shuffles order | high | folds approximately independent | not for time series |
| TimeSeriesSplit | preserves order | low if gap used | high (expanding by default) | ordered CV for forecasts |
| Purging & embargo / CPCV | preserves order | very low | controls overlapping labels | financial ML with label overlap |

In standard machine learning, random train-test splits are common because observations are often treated as exchangeable. Time series data is different. Training on the future and testing on the past is a form of leakage, even if it happens accidentally through a shuffled split.

Scikit-learn’s TimeSeriesSplit exists to enforce temporal order. It provides train/test indices for time-ordered data so that training sets come before test sets. By default, successive training sets are supersets of earlier ones, which makes the procedure expanding-window by default. Parameters such as max_train_size can limit the size of the training set, approximating a fixed rolling window. test_size controls how large each test segment is, and gap excludes observations between train and test sets to reduce leakage from near-boundary dependence.
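As a sketch of how these parameters interact, the snippet below builds four folds on 100 dummy observations, with the training set capped at 30 samples, test segments of 10, and a gap of 2. The specific numbers are arbitrary choices for illustration.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

X = np.arange(100).reshape(-1, 1)  # 100 time-ordered dummy observations

tscv = TimeSeriesSplit(n_splits=4, max_train_size=30, test_size=10, gap=2)
folds = list(tscv.split(X))

for i, (train_idx, test_idx) in enumerate(folds):
    print(f"fold {i}: train {train_idx[0]}-{train_idx[-1]} "
          f"| test {test_idx[0]}-{test_idx[-1]}")
```

Because `max_train_size` caps every training set at the same length, the folds behave like a fixed rolling window rather than an expanding one, and the two observations just before each test block are left out of training.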

That gap parameter encodes an important idea. In finance, leakage does not only come from literal future prices. It can also come from overlapping labels, delayed information, execution horizons, and features whose construction reaches into periods that touch the test set. In more advanced financial ML workflows, this is why practitioners use purging and embargo. Mlfinlab documents purging as removing training samples whose information overlaps the test set, and embargo as excluding some observations around the test boundary to further reduce leakage.
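The idea can be illustrated with a simplified index filter. This is not mlfinlab's API; `purged_embargoed_train` is a hypothetical helper, and it assumes that the label for sample i depends on observations i through i + label_horizon, with the embargo applied after the test block.

```python
import numpy as np

def purged_embargoed_train(n_samples, test_start, test_end,
                           label_horizon, embargo):
    """Hypothetical helper: training indices for the test block
    [test_start, test_end), assuming label i spans i .. i + label_horizon."""
    idx = np.arange(n_samples)
    # Purge before the block: the label must end before the test block starts.
    before = idx + label_horizon < test_start
    # Purge after the block: skip samples that overlap the span of the last
    # test label, then skip `embargo` further samples.
    after = idx >= test_end + label_horizon + embargo
    return idx[before | after]

train_idx = purged_embargoed_train(
    n_samples=100, test_start=40, test_end=50, label_horizon=5, embargo=3
)
print("last training index before the test block:", train_idx[train_idx < 40].max())
print("first training index after the test block:", train_idx[train_idx >= 50].min())
```

With a label horizon of 5, samples 35 through 39 are dropped even though they precede the test block, because their labels would reach into it.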

The broader point is simple: a rolling analysis is only as honest as its time alignment. If features, labels, or model updates implicitly use information not available at the decision time, the apparent robustness of the rolling procedure can be illusory.

What are rolling windows used for in trading, risk, and model monitoring?

In trading, rolling windows are not used because they are fashionable; they are used because they answer operational questions that global estimates cannot.

If you are managing risk, you care about current volatility, current correlation, and current drawdown behavior. Rolling estimates make those visible. If you are monitoring a strategy, you care whether returns have degraded recently, whether turnover has spiked, whether market beta has drifted, and whether slippage sensitivity has changed. Rolling diagnostics show whether the strategy you thought you had is still the strategy you are running.

If you are building predictive models, rolling windows let you re-estimate relationships under the assumption that parameters may evolve. A predictor that worked over the full sample may have lost relevance, or its sign may have flipped. Rolling estimation makes these failures easier to see before they become expensive.

And if you are evaluating a research pipeline, rolling or walk-forward splits let you simulate the real act of model maintenance. Instead of pretending you picked one model once and never touched it, you can test the more realistic process where models are periodically re-fit or re-tuned as new data arrives.

What are the limitations and failure modes of rolling window analysis?

Rolling window analysis is useful, but it does not magically solve nonstationarity. It merely localizes your estimates. If the process changes faster than the window can react, you still lag. If the window is too short, your estimates become unstable. If structural breaks are large and rare, even a well-chosen rolling rule may spend long periods averaging incompatible regimes together.

Another common failure is false confidence from repeated observation. A chart of rolling statistics can look detailed and scientific while being driven mostly by overlapping windows. Successive rolling estimates share most of their observations, so they are highly dependent. A smooth-looking path does not imply many independent confirmations. It often reflects the fact that yesterday’s window and today’s window differ by only one observation.
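The dependence created by overlap is easy to demonstrate. The sketch below draws independent noise, which has no persistence by construction, and measures the lag-1 autocorrelation of its 60-observation rolling mean.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)
x = pd.Series(rng.normal(size=5000))  # independent draws, no true persistence
w = 60

roll = x.rolling(w).mean().dropna()

ac_raw = x.autocorr(lag=1)      # should be near zero
ac_roll = roll.autocorr(lag=1)  # adjacent windows share w - 1 observations

print(f"raw series autocorrelation:   {ac_raw:.3f}")
print(f"rolling mean autocorrelation: {ac_roll:.3f}")
```

For independent data, the lag-1 autocorrelation of a rolling mean is (w - 1) / w in theory, about 0.98 here. The smooth path comes from the window mechanics, not from the data.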

There is also a model-selection trap. Researchers often try many window lengths, weighting schemes, features, objectives, and rebalance schedules, then choose the combination that backtests best. This multiplies the number of effective trials. The Deflated Sharpe Ratio was developed precisely to adjust for this kind of selection bias and non-normal returns. Its core message is not that Sharpe ratios are useless, but that once you search hard enough, the best backtest is expected to look better than truth would justify.

So the key question is never just “Did the rolling strategy work?” It is also “How many ways did I try to make it work?” A rolling framework can reduce some forms of look-ahead bias while still leaving substantial overfitting risk from parameter search and repeated experimentation.
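A small simulation shows the scale of the problem. This is not the Deflated Sharpe Ratio itself, only an illustration of the selection effect it corrects for: 200 hypothetical strategies with zero true edge are simulated over one year, and the best annualized Sharpe is reported.

```python
import numpy as np

rng = np.random.default_rng(11)
n_days, n_trials = 252, 200

# Each column is one simulated strategy with zero expected return.
rets = rng.normal(0.0, 0.01, size=(n_days, n_trials))

# Annualized Sharpe ratio per strategy.
sharpe = rets.mean(axis=0) / rets.std(axis=0, ddof=1) * np.sqrt(252)

best = sharpe.max()
median = np.median(sharpe)
print(f"median Sharpe across trials: {median:.2f}")
print(f"best Sharpe across trials:   {best:.2f}")
```

The median trial has a Sharpe near zero, as it should, while the best of 200 looks like a respectable strategy. Every extra window length or rebalance schedule you try adds trials to this pool.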

Practical implementation checks for trustworthy rolling analyses

Several details that look minor often determine whether a rolling analysis is trustworthy.

Missing data is one. Statsmodels notes that RollingOLS drops missing values within the window by default, which means the effective sample size can vary across windows. That affects comparability. A coefficient estimated from 60 clean observations is not on quite the same footing as one estimated from 41 observations inside a nominal 60-period window.
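The same effect can be inspected in pandas, where `min_periods` controls how many valid observations a window needs. This sketch uses a made-up series with a 19-observation gap; it shows pandas behavior and is not a reproduction of RollingOLS.

```python
import numpy as np
import pandas as pd

x = pd.Series(np.arange(100, dtype=float))
x.iloc[30:49] = np.nan  # 19 hypothetical missing observations

nominal = 60
# Number of valid observations inside each nominal 60-period window.
n_obs = x.rolling(nominal, min_periods=1).count()

# Lenient: compute whenever at least one valid value is present.
mean_loose = x.rolling(nominal, min_periods=1).mean()
# Strict: for integer windows, min_periods defaults to the window size.
mean_strict = x.rolling(nominal).mean()

print("valid observations at position 59:", n_obs.iloc[59])
print("strict mean defined anywhere:", mean_strict.notna().any())
```

Tracking `n_obs` alongside the estimate is a cheap way to flag windows whose effective sample is much smaller than the nominal length.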

Spacing is another. Scikit-learn notes that comparable metrics across time-series folds require equally spaced samples. If your data is irregular and you treat “60 observations” as if it means “60 days,” you may be comparing windows that cover materially different calendar durations. In trading data, weekends, holidays, suspensions, and intraday gaps all complicate this.
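The difference between counting observations and counting calendar time shows up even on a clean business-day index. The sketch below assumes 60 consecutive business days with no holidays and compares a 20-observation window with a 20-calendar-day window.

```python
import pandas as pd

idx = pd.bdate_range("2024-01-01", periods=60)  # business days, no holidays
x = pd.Series(1.0, index=idx)

by_count = x.rolling(20).count()    # last 20 observations
by_days = x.rolling("20D").count()  # last 20 calendar days

print("observations in a 20-bar window:", by_count.iloc[-1])
print("observations in a 20-calendar-day window:", by_days.iloc[-1])
```

The offset-based window holds fewer observations because weekends fall inside it, so "20" means different things in the two cases.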

Window boundaries matter too. Pandas supports custom window indexers such as BaseIndexer, FixedForwardWindowIndexer, and VariableOffsetWindowIndexer. That matters because not all economically meaningful windows are fixed counts. Sometimes you want business-day offsets, event-based windows, or forward windows for specialized labeling or evaluation tasks.
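As one example of a custom indexer, `FixedForwardWindowIndexer` builds windows that look forward from each row. The sketch below uses made-up returns and a three-bar horizon to compute a forward sum, then shifts it to form a hypothetical label covering the three bars after each date.

```python
import pandas as pd
from pandas.api.indexers import FixedForwardWindowIndexer

r = pd.Series([0.01, -0.02, 0.03, 0.00, 0.01, -0.01])  # illustrative returns

indexer = FixedForwardWindowIndexer(window_size=3)
# Sum over rows t, t+1, t+2; NaN where fewer than 3 rows remain.
fwd_sum = r.rolling(indexer, min_periods=3).sum()

# Hypothetical label for date t: sum of returns over t+1 .. t+3.
label = fwd_sum.shift(-1)

print(pd.DataFrame({"r": r, "fwd_sum": fwd_sum, "label": label}))
```

Forward windows are useful for building labels or evaluation targets, but they contain future information by design and should never feed a feature used at decision time.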

Finally, computation matters. Rolling regressions can be expensive if each step recomputes everything from scratch. Statsmodels’ rolling estimators use incremental updating (adding the newest observation and removing the oldest) to avoid full matrix recomputation on every shift. That implementation detail is not just about speed. It is what makes large-scale rolling experiments practical enough to run systematically.

Conclusion

Rolling window analysis is the practice of recomputing a statistic or model on a moving slice of recent data so you can see how relationships evolve through time. Its value comes from a simple insight: in markets, the most relevant past is often not the entire past.

The hard part is not the syntax. It is choosing what “recent” should mean, preserving temporal honesty, and resisting the temptation to overfit that choice. Used carefully, rolling windows turn static summaries into time-aware evidence. Used carelessly, they turn noise into a convincing story. The difference is whether the moving window is helping you measure change or merely helping you chase it.

Frequently Asked Questions

There is no universally correct length. Pick a window that matches how fast you believe the process changes and the decision horizon: short windows increase responsiveness but also sampling noise, while long windows reduce noise but can hide regime shifts. Treat window selection as part of model design (not a free tuning knob): validate it with time-aware evaluation (walk‑forward or time-series CV) and avoid reporting only the best-performing lookback without multiplicity correction.

Fixed rolling windows give a hard cutoff (most interpretable but discontinuous), expanding windows retain all past data so estimates become harder to move over time, and exponentially weighted windows (EWMA/EWM) downweight old observations gradually, often offering a middle ground that reacts to shocks faster than expanding windows while keeping some memory.

No. Successive rolling estimates overlap and are therefore highly dependent; a smooth-looking path often reflects one-observation shifts rather than many independent confirmations, so treat apparent persistence cautiously and account for dependence when interpreting diagnostics.

Use time-ordered cross-validation tools (e.g., scikit-learn's TimeSeriesSplit) or walk-forward tests and add a gap/purging/embargo around train/test boundaries to avoid leakage from overlapping labels or delayed information; random K-fold splits are inappropriate because they can train on the future.

Rolling windows localize estimates but do not solve nonstationarity: if the data-generating process changes faster than your window can adapt you will lag, and if structural breaks are rare and large a rolling rule may still average incompatible regimes together. Treat rolling analysis as a way to measure change, not a cure for it.

Missing values change the effective sample inside each nominal window and can make window-by-window comparability misleading; for example, statsmodels’ RollingOLS drops missing observations in a window by default so the effective sample size can vary across endpoints and must be checked.

Walk-forward (rolling) optimization adapts parameters using a trailing training window and then tests them out-of-sample, which helps measure how a maintenance process would perform, but it also adds tuning choices (lookback length, reoptimization frequency, search space) that increase overfitting risk if chosen by exhaustive in-sample search.

If you align estimates to the end of each window (right-alignment), the stored value corresponds to what would have been known at that date; statsmodels’ RollingOLS follows this convention, and right-alignment is generally the economically honest choice for trading applications.

You can approximate a fixed rolling training set with TimeSeriesSplit by setting test_size and max_train_size and include a gap to reduce boundary leakage; TimeSeriesSplit enforces temporal order and offers parameters (test_size, gap, max_train_size) helpful for creating rolling or expanding splits.

Expect step-like behavior: when an extreme return enters a fixed lookback it can move a rolling statistic sharply, and the statistic will remain affected while that observation sits inside the window, then may fall abruptly once the observation ages out. This is an expected consequence of a hard cutoff window.
