What Is Machine Learning for Market Prediction?

Learn what machine learning for market prediction is, how it works in trading, what it predicts, and why validation, execution, and risk control matter.

Introduction

Machine learning for market prediction is the use of statistical learning systems to estimate something about future market behavior from historical and live data. That sounds straightforward, but the hard part is not making predictions in the abstract. The hard part is making predictions that are specific enough to trade on, robust enough to survive new market conditions, and honest enough to survive contact with execution costs and operational risk.

This topic matters because markets produce far more data than any human can process directly: prices, volumes, order-book updates, filings, news, and increasingly many alternative datasets. Machine learning offers a way to compress that flood into a forecast: perhaps the probability a stock will outperform over the next day, the chance a short-term move will continue, or the expected effect of order-flow imbalance over the next few seconds. But markets are adversarial, adaptive systems. If many people discover the same pattern, they trade on it, and the pattern weakens or disappears. So the central problem is not merely prediction. It is extracting a signal from data in an environment where the signal is faint, changing, and partially destroyed by its own use.

A useful way to think about the subject is this: machine learning is not a crystal ball for prices. It is a method for ranking uncertain opportunities under noisy feedback. In practice, many successful systems do not try to predict the exact future price. They estimate a probability, a relative return, a class label such as up versus down, or a decision such as trade versus do not trade. That framing matters because trading decisions are usually threshold decisions made under uncertainty, not attempts to know the future with precision.

Why use machine learning for market prediction?

Why use machine learning at all instead of a hand-built rule? Because market data combines three awkward properties at once. First, it is high-dimensional: there are many possible inputs, from lagged returns to earnings revisions to order-book events. Second, relationships are nonlinear: the effect of a signal may depend on volatility, liquidity, time of day, or macro regime. Third, the data-generating process changes. A linear rule designed for one period may fail in the next because market participants adapt or because the underlying regime shifts.

Machine learning is useful here because it can fit flexible relationships between inputs and outcomes without the researcher needing to specify every interaction in advance. Reputable finance-focused treatments explicitly present ML as a distinct subject because the workflow differs from standard prediction problems. It is not enough to minimize forecast error on a random train-test split. You need finance-specific labeling, leakage-aware validation, and backtests designed to avoid false discoveries. That is why practical workflows in algorithmic trading are usually presented as end-to-end processes: start with an investment hypothesis and data, engineer features, train and tune models, design a strategy around the predictions, and backtest the whole pipeline, then repeat as the environment changes.

The key point is this: a market-prediction model is only useful if its output changes an action, and that action still makes sense after accounting for execution and risk. A classifier with 55% accuracy can be worthless if it fires on tiny moves that trading costs erase. Conversely, a model with modest statistical skill can be valuable if it helps rank trades, filter bad entries, or size positions better. This is why finance practitioners often care less about generic accuracy than about whether a prediction improves expected return relative to cost and risk.
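To make that arithmetic concrete, here is a minimal Python sketch of expected profit per trade. The hit rates, move sizes, and the 3 bp cost figure are illustrative assumptions, not estimates from real data, and the sketch treats wins and losses as fixed average sizes.

```python
def expected_pnl_per_trade(hit_rate: float, avg_win: float,
                           avg_loss: float, cost: float) -> float:
    """Expected profit per trade, in the same units as the inputs (here basis points).

    hit_rate: probability that the predicted direction is correct
    avg_win:  average gain when right (positive number)
    avg_loss: average loss when wrong (positive number)
    cost:     round-trip cost per trade (spread, slippage, fees)
    """
    gross = hit_rate * avg_win - (1.0 - hit_rate) * avg_loss
    return gross - cost


# Illustrative numbers only: 55% accuracy on tiny 4 bp moves, 3 bp of costs.
tiny_moves = expected_pnl_per_trade(0.55, avg_win=4.0, avg_loss=4.0, cost=3.0)
# Modest 53% accuracy on larger 100 bp moves, same 3 bp of costs.
larger_moves = expected_pnl_per_trade(0.53, avg_win=100.0, avg_loss=100.0, cost=3.0)
print(round(tiny_moves, 2), round(larger_moves, 2))  # -2.6 3.0
```

The more accurate signal loses money because its gross edge (0.4 bp) is smaller than the cost of trading it; the less accurate one survives because its moves are large relative to cost.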

Which prediction targets should trading models use (returns, impact, fill probability)?

| Target | Horizon | Tied action | Labeling approach | Main risk |
|---|---|---|---|---|
| Directional move | Seconds–days | Go long/short | Fixed-horizon label | Noise dominates |
| Relative return | Days–months | Rank securities | Benchmark outperformance | Benchmark drift |
| Execution impact | Seconds–minutes | Order timing/sizing | Impact/fill labels | Self-impact erases edge |
| Fill probability | Milliseconds–seconds | Order placement | Event outcome label | Sparse signals |
| Regime label | Days–months | Switch strategy | Unsupervised/clustering | Boundary ambiguity |

A common misunderstanding is that market prediction means forecasting the next price tick or tomorrow’s closing price. Sometimes it does. More often, the target is chosen to match a trading decision.

If the goal is medium-horizon stock selection, the target may be future relative return over the next week or month. If the goal is intraday trading, the target may be a short-horizon move conditional on current order flow and depth. If the goal is execution rather than directional trading, the target may be short-term price impact or fill probability. In other words, the target variable is not a philosophical statement about the future. It is an engineering choice tied to the action the strategy wants to take.

This is why labeling matters so much in financial ML. One influential finance-specific approach is the triple-barrier method, which labels an observation by what happens first after entry: an upper profit barrier, a lower loss barrier, or a time limit. The point is not cleverness for its own sake. The point is that financial outcomes are path-dependent. A trade can be a success because price rises enough before it falls too far, and a fixed-horizon label can miss that structure. Closely related is meta-labeling, where a primary model suggests trade direction and a secondary model learns whether taking that suggested trade is worthwhile. Mechanically, meta-labeling turns a blunt predictor into a filter: not “what side should I be on?” but “when is my base signal worth acting on?”
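A minimal Python sketch of the triple-barrier rule for a long entry follows. The barrier widths, the holding limit, and the choice to return 0 at the time barrier are assumptions for illustration; some implementations instead label time-barrier exits by the sign of the return at expiry, and production versions usually scale barriers by recent volatility.

```python
from typing import Sequence


def triple_barrier_label(prices: Sequence[float], entry: int, upper: float,
                         lower: float, max_holding: int) -> int:
    """Label a long entry at prices[entry] by the first barrier touched.

    upper, lower: fractional distances from the entry price (0.02 = +2%, 0.01 = -1%).
    Returns +1 if the profit barrier is hit first, -1 if the loss barrier is hit
    first, and 0 if the time limit (vertical barrier) is reached first.
    """
    p0 = prices[entry]
    last = min(entry + max_holding, len(prices) - 1)
    for t in range(entry + 1, last + 1):
        ret = prices[t] / p0 - 1.0
        if ret >= upper:
            return 1
        if ret <= -lower:
            return -1
    return 0


path = [100.0, 101.0, 99.5, 102.5, 98.0]
label = triple_barrier_label(path, entry=0, upper=0.02, lower=0.01, max_holding=4)
fixed_horizon = 1 if path[4] > path[0] else -1
print(label, fixed_horizon)  # 1 -1
```

The same path gets opposite labels: the profit target was reached at step 3, but a fixed 4-step horizon only sees that the price ended below entry.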

That distinction becomes clearer in a simple example. Imagine a momentum signal that says a stock is likely to continue upward after a breakout. On many days, the signal is directionally right but too weak to overcome spread and slippage. A secondary model can learn that breakouts following strong volume and low short-term reversal have a better chance of paying for costs, while breakouts into thin liquidity or event-driven volatility do not. The primary label gives side; the meta-label gives selectivity. In live trading, selectivity often matters more than raw hit rate.
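Mechanically, the meta-label asks whether taking the primary side would have beaten costs. The sketch below builds those labels and then applies a confidence threshold to whatever probability a secondary classifier outputs. The cost level, the 0.6 threshold, and the `meta_probs` values are hypothetical, and the secondary classifier itself is left abstract.

```python
def meta_labels(sides, forward_returns, cost):
    """Meta-label = 1 if trading the primary model's side would have beaten costs.

    sides: +1 (long) or -1 (short) for each event where the primary model fired.
    forward_returns: realized return over the holding period after each event.
    cost: round-trip cost in the same units as the returns.
    """
    return [1 if side * fwd > cost else 0 for side, fwd in zip(sides, forward_returns)]


def filter_with_meta_model(sides, meta_probs, threshold=0.6):
    """Keep the primary side only where the secondary model's probability is high enough."""
    return [side if p >= threshold else 0 for side, p in zip(sides, meta_probs)]


sides = [1, 1, -1, 1]                      # primary model: direction
fwd = [0.004, 0.0005, -0.003, -0.002]      # realized returns after each signal
print(meta_labels(sides, fwd, cost=0.001))  # [1, 0, 1, 0] -> training targets
# meta_probs would come from a classifier trained on those targets (hypothetical values)
print(filter_with_meta_model(sides, [0.8, 0.3, 0.7, 0.55]))  # [1, 0, -1, 0]
```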

What data and features power market-prediction models?

| Data type | Timescale | Typical features | Strength | Primary risk |
|---|---|---|---|---|
| Low-frequency | Days–months | Returns, volatility, fundamentals | Macro and cross-section signals | Stale for intraday |
| High-frequency | Milliseconds–minutes | Order-book depth, imbalance, ticks | Execution-aware signals | Latency and noise |
| Alternative data | Minutes–weeks | News, web, satellite, filings | Unique informational edges | Licensing and legality |
| Text/unstructured | Seconds–days | Filings, news sentiment, transcripts | Event-driven insight | Heavy processing; ambiguity |

Market-prediction models live or die by data representation. In ordinary ML problems, one often assumes the labels and features are already given. In markets, deciding what the input should be is much of the work.

At low frequency, features often come from prices, returns, volatility, volume, valuation ratios, earnings information, analyst revisions, and broader macro or cross-sectional context. Finance practitioners often call engineered predictive inputs alpha factors. The idea is simple: instead of feeding raw prices into a model and hoping it discovers structure unaided, you transform data into candidate drivers of return. This may include momentum over several horizons, mean-reversion signals, volatility-adjusted trends, quality metrics, or cross-sectional relative strength.
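A few of the factor types named above can be sketched in plain Python. The lookback windows, the three hypothetical tickers, and their price paths are made up for illustration; ties in the ranking are broken arbitrarily, which a real pipeline would handle explicitly.

```python
import math
from statistics import pstdev


def momentum(prices, lookback):
    """Simple return over the last `lookback` periods."""
    return prices[-1] / prices[-1 - lookback] - 1.0


def vol_adjusted_trend(prices, lookback):
    """Momentum scaled by realized log-return volatility over the same window."""
    window = prices[-1 - lookback:]
    rets = [math.log(b / a) for a, b in zip(window[:-1], window[1:])]
    vol = pstdev(rets) * math.sqrt(lookback)
    return momentum(prices, lookback) / vol if vol > 0 else 0.0


def cross_sectional_rank(values):
    """Map {ticker: value} to ranks in [0, 1]: 0 = lowest, 1 = highest."""
    ordered = sorted(values, key=values.get)
    n = len(ordered)
    return {t: (i / (n - 1) if n > 1 else 0.5) for i, t in enumerate(ordered)}


universe = {  # hypothetical tickers and closing prices
    "AAA": [100, 101, 102, 103, 104, 105],
    "BBB": [50, 49, 48, 49, 48, 47],
    "CCC": [20, 20.2, 20.1, 20.3, 20.2, 20.4],
}
mom5 = {t: momentum(p, 5) for t, p in universe.items()}
print(cross_sectional_rank(mom5))  # {'BBB': 0.0, 'CCC': 0.5, 'AAA': 1.0}
```

Ranking across the universe, rather than using raw momentum levels, is one common way to make a factor comparable from one date to the next.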

At higher frequency, the mechanism changes. Here the relevant structure is often not broad valuation but market microstructure: who is demanding liquidity, how much depth is resting at the best quotes, how cancellations and market orders alter supply and demand, and how fast the order book is changing. Research on order-book events finds that over short intervals, price changes are mainly driven by **order flow imbalance** at the best bid and ask (roughly, the imbalance between supply and demand at the top of the book) and that the relation is approximately linear with a slope inversely related to market depth. The practical consequence is important: for short-horizon models, raw trade volume is often a blunt feature, while imbalance and depth can be more directly connected to the mechanism moving price.
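The order-flow-imbalance idea can be sketched from consecutive best-bid/best-ask snapshots. This follows the event-level definition from the order-flow-imbalance literature (Cont, Kukanov and Stoikov): new or larger bids and consumed asks add to OFI, while consumed bids and new or larger asks subtract from it. The snapshot values and the slope coefficient `c` are hypothetical; in practice the slope would be estimated by regression on historical data.

```python
def ofi_increment(prev, curr):
    """OFI contribution of one top-of-book update.

    prev, curr: (bid_price, bid_size, ask_price, ask_size) snapshots.
    """
    pb0, qb0, pa0, qa0 = prev
    pb1, qb1, pa1, qa1 = curr
    e = 0.0
    if pb1 >= pb0:
        e += qb1  # bid held or improved: current bid depth counts as buy pressure
    if pb1 <= pb0:
        e -= qb0  # bid held or dropped: previous bid depth is removed
    if pa1 <= pa0:
        e -= qa1  # ask held or improved: current ask depth counts as sell pressure
    if pa1 >= pa0:
        e += qa0  # ask held or lifted: previous ask depth is removed
    return e


def predicted_mid_change(ofi, avg_depth, c=1.0):
    """Linear impact sketch: price change proportional to OFI, slope ~ 1 / depth.
    `c` is a hypothetical coefficient that would be fitted on historical data."""
    return c * ofi / avg_depth


snaps = [  # hypothetical (bid_px, bid_sz, ask_px, ask_sz) updates
    (100.00, 200, 100.02, 300),
    (100.00, 260, 100.02, 300),  # bid size grows
    (100.00, 260, 100.02, 150),  # half the ask is consumed or cancelled
    (100.01, 80, 100.02, 150),   # new, better bid
]
ofi = sum(ofi_increment(a, b) for a, b in zip(snaps[:-1], snaps[1:]))
print(ofi)  # 290.0
```

With the same OFI, `predicted_mid_change` gives a smaller move when `avg_depth` is larger, which is the inverse-depth relationship described above.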

That point is easy to miss because “high volume” sounds informative. But market-structure evidence shows why it can mislead. During the May 6, 2010 flash crash, trading volume was enormous while liquidity evaporated; buy-side depth in the E-Mini fell to less than 1% of morning levels. So a model that treats high volume as a proxy for safety or tradability can fail precisely when the market is most dangerous. The deeper lesson is that the state variable that matters is often available liquidity at current prices, not executed volume in the last minute.

Alternative data extends this feature set beyond traditional market feeds. Practical ML-for-trading workflows increasingly include text from filings or news, satellite data, web activity, and other non-price signals. But such data only helps if it is timely, legally usable, and converted into features aligned with a trading horizon. A quarterly filing may matter for days or weeks; a level-2 order-book update matters for milliseconds. The model architecture and validation scheme need to respect that timescale.
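One concrete way to respect timeliness is a point-in-time ("as-of") lookup keyed on when data became available, not on the period it describes. A minimal sketch, assuming hypothetical release timestamps and earnings-surprise values:

```python
from bisect import bisect_right
from datetime import datetime


def as_of_value(release_times, values, decision_time):
    """Latest value whose release time is at or before decision_time.

    release_times must be sorted ascending; returns None if nothing was public yet.
    """
    i = bisect_right(release_times, decision_time)
    return values[i - 1] if i > 0 else None


# Hypothetical earnings surprises, keyed by publication time (after the close).
releases = [datetime(2024, 2, 1, 16, 5), datetime(2024, 5, 2, 16, 10)]
surprise = [0.12, -0.05]

print(as_of_value(releases, surprise, datetime(2024, 2, 1, 15, 59)))  # None
print(as_of_value(releases, surprise, datetime(2024, 2, 2, 9, 30)))   # 0.12
print(as_of_value(releases, surprise, datetime(2024, 5, 3, 9, 30)))   # -0.05
```

Keying on the fiscal-quarter end date instead would let the model see results weeks before they were published, a form of look-ahead leakage.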

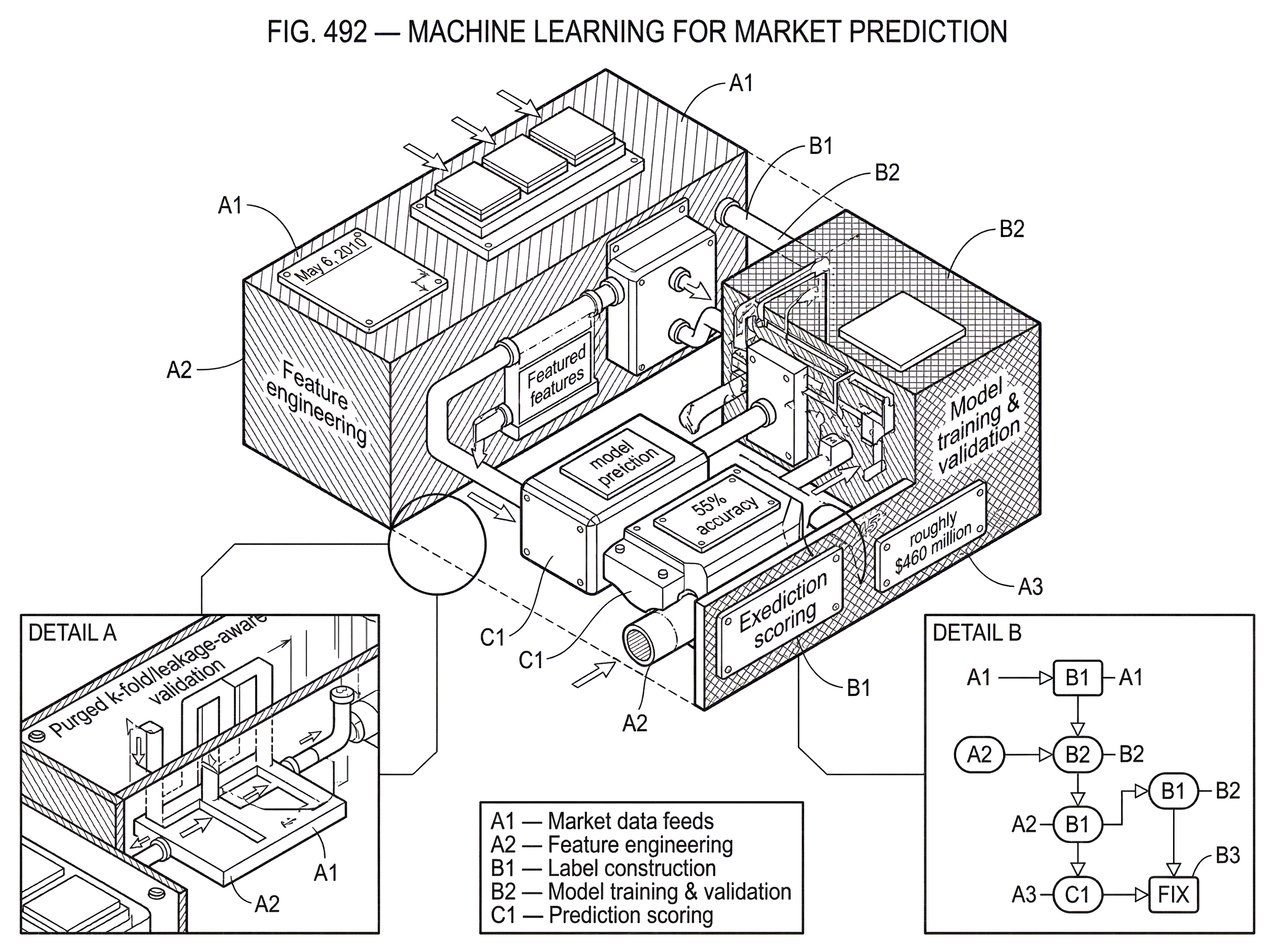

How does an end-to-end ML pipeline for trading work?

The public material around modern ML-for-trading workflows is strikingly consistent on one point: this is a process, not a model-selection contest. The workflow starts with a well-defined investment universe and a hypothesis about what inefficiency or behavior might be predictable. Data is collected and cleaned. Features are engineered. Labels are constructed. A model is trained and tuned. Then the predictions are embedded in a trading strategy and tested under realistic assumptions. If the strategy fails, the failure might come from weak signal, bad labeling, leakage, costs, or execution constraints, not just from choosing the wrong algorithm.

This process orientation explains why many very different models appear in the literature and codebases: linear regression, tree ensembles, gradient boosting, support vector machines, recurrent networks, convolutional networks, autoencoders, and reinforcement learning. They are tools for different statistical structures. Tree ensembles and boosting are often strong tabular baselines because they capture nonlinear interactions in engineered features. Recurrent models are designed for sequential dependence and are often reported in surveys as outperforming simpler feed-forward networks and SVMs on average, though the evidence is still heterogeneous and should not be treated as universal. Deep models become more attractive when the input is less handcrafted (text, images, rich event streams), but they also require more data and invite more overfitting.

A practical worked example makes this concrete. Suppose you want to predict next-day cross-sectional stock returns. You begin with a universe such as liquid U.S. equities and build features: short- and medium-term momentum, realized volatility, earnings surprise measures, turnover, and sector-relative ranks. You label each stock by whether it outperforms its sector or benchmark over the next day. A gradient-boosted tree model is trained on past periods to map feature patterns to that label. But the model output is not yet a strategy. You still need portfolio construction rules, turnover limits, capacity assumptions, and transaction-cost estimates. If the raw model wants to buy 300 names with tiny expected edges, execution costs may destroy the theoretical alpha. So you convert scores into a ranked list, trade only the top tail, neutralize unwanted exposures, and simulate costs. The prediction engine and the strategy become one system.
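The score-to-portfolio step in that example can be sketched as follows. This is a deliberately simple version under stated assumptions: equal weights, long-only, a fixed `top_n`, and per-name edge and cost estimates supplied from elsewhere. It omits the exposure neutralization, turnover limits, and capacity checks the paragraph also calls for. Tickers and numbers are hypothetical.

```python
def top_tail_book(scores, edge_bps, cost_bps, top_n):
    """Equal-weight long book from the top_n scores, dropping names whose
    expected edge does not cover estimated trading costs.

    scores: {ticker: model score}; edge_bps, cost_bps: {ticker: basis points}.
    """
    ranked = sorted(scores, key=scores.get, reverse=True)
    keep = [t for t in ranked[:top_n] if edge_bps[t] > cost_bps[t]]
    if not keep:
        return {}
    weight = 1.0 / len(keep)
    return {t: weight for t in keep}


scores = {f"T{i}": i / 10 for i in range(10)}   # T9 scores highest
edge = {t: 5.0 for t in scores}
edge["T8"] = 2.0                                  # high score, but edge below cost
cost = {t: 3.0 for t in scores}
print(top_tail_book(scores, edge, cost, top_n=3))  # {'T9': 0.5, 'T7': 0.5}
```

A high model score is necessary but not sufficient here: T8 ranks second yet is excluded because its expected edge does not pay for trading it.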

That is why backtesting is not an afterthought. In finance-specific ML pedagogy, backtesting is treated almost as an adversary: even a flawless-looking backtest may still be wrong if the research process generated false positives. Trying many features, horizons, and model settings on the same historical period can produce impressive results by chance. The more flexible the model, the easier it is to discover noise that looks like signal.
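A small simulation shows how searching over many candidates manufactures apparent skill. Every "strategy" below has pure-noise daily returns, so its true Sharpe ratio is zero; the number of trials, the one-year sample, and the 1% daily volatility are arbitrary assumptions.

```python
import random
import statistics


def annualized_sharpe(daily_returns):
    sd = statistics.stdev(daily_returns)
    return statistics.mean(daily_returns) / sd * 252 ** 0.5 if sd > 0 else 0.0


def best_of_noise(n_strategies=200, n_days=252, seed=7):
    """Return (best, median) annualized Sharpe across zero-skill strategies."""
    rng = random.Random(seed)
    sharpes = [
        annualized_sharpe([rng.gauss(0.0, 0.01) for _ in range(n_days)])
        for _ in range(n_strategies)
    ]
    return max(sharpes), statistics.median(sharpes)


best, median = best_of_noise()
print(f"best Sharpe {best:.2f}, median Sharpe {median:.2f}")
```

The best of 200 noise strategies typically shows an annualized Sharpe above 2 over one year, while the median sits near zero. Picking the winner and reporting only its backtest is exactly the false-discovery problem described above.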

Why do standard train/test splits give misleading results for financial data?

| Method | Leakage risk | Use case | Pros | Cons |
|---|---|---|---|---|
| Random split | High | Quick prototyping | Simple, fast | Overstates performance |
| Purged k-fold | Low | Overlapping labels | Reduces leakage | More complex setup |
| Walk-forward | Low | Time-series deployment | Reflects chronology | Computationally heavier |
| Blocked CV | Medium | Seasonal effects | Balances variance | May underutilize data |

In many ML problems, random train-test splits are acceptable because examples are independent enough. In markets, they are often not. Observations overlap in time, labels can depend on future paths, and adjacent samples may share information. If you randomly mix data from nearby dates across training and test sets, you risk information leakage: the model effectively sees the future through correlated neighbors.
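The chronological alternative can be sketched as a walk-forward split generator, in which every test block lies strictly after its training window. The rolling (fixed-length) window and the step size are assumptions; an expanding window is an equally common choice.

```python
def walk_forward_splits(n_samples, train_size, test_size, step=None):
    """Yield (train_indices, test_indices) with a rolling training window that
    always ends before the test block begins."""
    step = step or test_size
    start = 0
    while start + train_size + test_size <= n_samples:
        train = list(range(start, start + train_size))
        test = list(range(start + train_size, start + train_size + test_size))
        yield train, test
        start += step


for train, test in walk_forward_splits(10, train_size=4, test_size=2):
    print(train, test)
# [0, 1, 2, 3] [4, 5]
# [2, 3, 4, 5] [6, 7]
# [4, 5, 6, 7] [8, 9]
```

Chronology alone does not remove leakage when labels span several periods: the last training labels can still overlap the first test observations, which is what purging addresses.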

That is why finance practitioners emphasize special validation methods such as purged k-fold cross-validation. The intuition is simple. If a training observation and a test observation are temporally entangled (for example, because their labeling windows overlap), then the training sample should be removed, or “purged,” from around the test period. Without that step, cross-validation scores can look much better than genuine out-of-sample performance.
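A simplified purged k-fold can be sketched by giving each observation the index interval its label depends on, then dropping any training observation whose interval overlaps the test fold's. This is an illustrative version, not a reference implementation: time is measured in observation indices, folds are contiguous, and the optional `embargo` parameter (an assumption of this sketch) simply drops a fixed number of observations after each test fold.

```python
def purged_kfold(label_spans, n_splits=5, embargo=0):
    """Yield (train_idx, test_idx) over contiguous folds with purging and embargo.

    label_spans: (start, end) per observation, inclusive, sorted by start; the
    label of observation i depends on data from start to end.
    """
    n = len(label_spans)
    sizes = [n // n_splits + (1 if k < n % n_splits else 0) for k in range(n_splits)]
    lo = 0
    for size in sizes:
        hi = lo + size                                 # test fold is [lo, hi)
        t0 = label_spans[lo][0]
        t1 = max(end for _, end in label_spans[lo:hi])
        train = []
        for i, (s, e) in enumerate(label_spans):
            if lo <= i < hi:
                continue                               # test observation
            if s <= t1 and e >= t0:
                continue                               # purged: label window overlaps test
            if hi <= i < hi + embargo:
                continue                               # embargoed: right after the test fold
            train.append(i)
        yield train, list(range(lo, hi))
        lo = hi


# Ten observations whose labels each look two steps ahead: span (i, i + 2).
spans = [(i, i + 2) for i in range(10)]
folds = list(purged_kfold(spans, n_splits=5))
print(folds[0])  # ([4, 5, 6, 7, 8, 9], [0, 1])
print(folds[1])  # ([6, 7, 8, 9], [2, 3])
```

A plain k-fold would have trained on observations 0, 1, 4 and 5 for the second fold, even though their labels share data with the test labels.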

This is one of the major ways market prediction differs from generic tabular ML. The issue is not merely chronology in the broad sense. It is the structure of dependence induced by labels, horizons, and overlapping events. If that structure is ignored, the validation metric answers the wrong question.

How do model predictions become executable orders in the market?

A prediction only becomes economically meaningful when it reaches the market as an order. That handoff is where many elegant ML systems become fragile.

Execution lives inside market structure. Orders are sent through electronic messaging systems, often using standards such as the FIX Protocol, which defines trading-related messages across pre-trade, trade, post-trade, and infrastructure workflows. At the strategy level, the ML model may output a score; at the execution level, the system must decide venue, urgency, order type, size, and risk checks. Those are not implementation details in the trivial sense. They affect realized P&L, and sometimes they dominate it.
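To make the handoff concrete, here is a simplified sketch of how a limit buy order could be encoded as a FIX 4.4 NewOrderSingle (MsgType `35=D`) in tag=value form, including the BodyLength (9) and CheckSum (10) fields. The symbol, sender/target IDs, and order values are hypothetical, and required fields differ by FIX version and counterparty; a real system would use a FIX engine that also handles sessions, sequence numbers, and resends.

```python
SOH = "\x01"  # FIX field delimiter


def build_fix_message(fields, begin_string="FIX.4.4"):
    """Assemble a tag=value FIX message.

    fields: ordered (tag, value) pairs excluding 8, 9 and 10; MsgType (35) first.
    BodyLength counts bytes after the 9= field up to the delimiter before 10=;
    CheckSum is the byte sum of everything before 10=, modulo 256, as 3 digits.
    """
    body = "".join(f"{tag}={value}{SOH}" for tag, value in fields)
    head = f"8={begin_string}{SOH}9={len(body.encode('ascii'))}{SOH}"
    checksum = sum((head + body).encode("ascii")) % 256
    return f"{head}{body}10={checksum:03d}{SOH}"


order = build_fix_message([
    (35, "D"),                       # NewOrderSingle
    (49, "SENDER"), (56, "TARGET"),  # hypothetical session IDs
    (34, 1),                         # MsgSeqNum
    (52, "20240102-14:30:00.000"),   # SendingTime
    (11, "ORD-0001"),                # ClOrdID
    (55, "XYZ"),                     # Symbol (hypothetical)
    (54, 1),                         # Side: 1 = Buy
    (38, 100),                       # OrderQty
    (40, 2),                         # OrdType: 2 = Limit
    (44, "50.25"),                   # Price
    (59, 0),                         # TimeInForce: 0 = Day
    (60, "20240102-14:30:00.000"),   # TransactTime
])
print(order.replace(SOH, "|"))
```

Everything the model decided (side, size, limit price, urgency via order type and time in force) ends up as a handful of tagged fields, which is why execution logic deserves the same scrutiny as the model.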

The May 6 flash crash report is a useful warning here. An automated sell algorithm that targeted a fixed percentage of recent volume without regard to price or time amplified stress in the E-Mini futures market. High-frequency participants and arbitrage links transmitted pressure into ETFs and stocks. Liquidity providers’ automated pauses then withdrew liquidity further. The lesson for market prediction is not that automation is bad. It is that the market reacts to your actions, and your model exists inside that feedback loop. If a model’s signal causes orders to be placed when liquidity is thin, the act of trading can erase the modeled edge.
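The failure mode described above can be illustrated with a toy participation (percentage-of-volume) child-order sizer for a sell program. This is a hypothetical sketch, not a reconstruction of the algorithm in the report: the 10% participation rate, the volumes, and the optional limit price are illustrative. The point is structural: without a price or time constraint, more market volume means faster selling regardless of where prices are going.

```python
def pov_child_qty(recent_volume, participation, remaining,
                  last_price=None, limit_price=None):
    """Shares to sell in the next interval as a fraction of recent market volume.

    If limit_price is given, stop selling once the market trades below it.
    """
    if limit_price is not None and last_price is not None and last_price < limit_price:
        return 0
    return min(remaining, int(participation * recent_volume))


# Volume spikes as the market falls: the unconstrained seller speeds up.
print(pov_child_qty(10_000, 0.10, remaining=75_000))   # 1000
print(pov_child_qty(50_000, 0.10, remaining=75_000))   # 5000
# The same spike with a price floor: selling pauses.
print(pov_child_qty(50_000, 0.10, remaining=75_000, last_price=95.0, limit_price=97.0))  # 0
```

Because the algorithm's own sales add to the volume it reads, the unconstrained version can feed on itself, which is the feedback loop the paragraph describes.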

Short-horizon prediction and execution are therefore coupled problems. If your model predicts a 2-basis-point move but the spread is 1 basis point and your own market impact is another 2, the sign may be right while the trade is still unprofitable. This is why execution-aware features (depth, spread, queue dynamics, imbalance) often matter even in directional models. They tell you not just what may happen, but whether you can capture it.

How should production systems maintain feature consistency, freshness, and deployment controls?

Once a strategy is live, another invariant appears: the features used in training and the features used in inference must mean the same thing. If a volatility feature is computed one way in research and another in production, model performance can collapse even if the code seems nearly identical.

That is one reason feature stores have become important in modern ML systems. Systems such as Feast are built to serve historical features for training and online features for inference from a common definition. For market prediction, this matters because feature freshness and consistency are operational, not academic, concerns. A stale order-book imbalance feature or a delayed news-derived signal changes the model’s effective input distribution. Production observability (latency, freshness, materialization health) becomes part of model quality.
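The training/serving invariant can be sketched without any particular feature-store product: define the feature once, call the same function from both the batch (training) path and the online (inference) path, and refuse to serve stale inputs. This does not use Feast's API; the window length, price history, timestamps, and staleness threshold are hypothetical.

```python
import math
from statistics import pstdev


def realized_vol(prices):
    """Single feature definition: population std. dev. of log returns."""
    rets = [math.log(b / a) for a, b in zip(prices[:-1], prices[1:])]
    return pstdev(rets)


def training_values(history, window):
    """Batch path: the feature at every point with `window` returns of history."""
    return [realized_vol(history[i - window:i + 1]) for i in range(window, len(history))]


def serve_value(recent_prices, window, last_update_ts, now_ts, max_age_s):
    """Online path: same definition, plus a freshness guard (None if stale)."""
    if now_ts - last_update_ts > max_age_s:
        return None  # stale input: skip the prediction rather than act on old state
    return realized_vol(recent_prices[-(window + 1):])


history = [100.0, 101.0, 100.5, 102.0, 101.5, 103.0]
batch = training_values(history, window=3)
online = serve_value(history, 3, last_update_ts=100.0, now_ts=100.5, max_age_s=2.0)
print(online == batch[-1])                                          # True
print(serve_value(history, 3, 100.0, now_ts=110.0, max_age_s=2.0))  # None
```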

This may sound like software plumbing, but market incidents show why it belongs in the explanation. The Knight Capital failure in 2012 was not an ML failure, yet it is directly relevant. A deployment error left one server running legacy order-router logic, which sent millions of unintended child orders and contributed to roughly $460 million in losses. The mechanism was mundane in a dangerous way: incomplete deployment, callable legacy code, missing secondary review, and post-execution rather than pre-trade risk controls. The message for ML systems is clear. A predictive model can be statistically excellent and still produce catastrophic outcomes if deployment governance, monitoring, and kill-switches are weak.
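Pre-trade controls of the kind the paragraph contrasts with post-execution checks can be sketched as a gate every order must pass before it is sent. The specific limits (size, notional, a price collar around a reference price, and an order-rate cap that trips a kill switch) and their values are illustrative assumptions, not a regulatory checklist.

```python
from dataclasses import dataclass


@dataclass
class RiskLimits:
    max_order_qty: int
    max_notional: float
    price_collar: float           # max fractional distance from the reference price
    max_orders_per_window: int    # runaway-order guard


class PreTradeGate:
    def __init__(self, limits: RiskLimits):
        self.limits = limits
        self.killed = False
        self.orders_in_window = 0

    def new_window(self):
        """Call at the start of each rate-limit window (e.g. every second)."""
        self.orders_in_window = 0

    def check(self, qty, price, reference_price):
        """Return (accepted, reason); runs before the order leaves the system."""
        if self.killed:
            return False, "kill switch engaged"
        if qty <= 0 or qty > self.limits.max_order_qty:
            return False, "quantity limit"
        if qty * price > self.limits.max_notional:
            return False, "notional limit"
        if abs(price / reference_price - 1.0) > self.limits.price_collar:
            return False, "price collar"
        if self.orders_in_window >= self.limits.max_orders_per_window:
            self.killed = True  # stays tripped until someone deliberately resets it
            return False, "order-rate limit; kill switch engaged"
        self.orders_in_window += 1
        return True, "ok"


gate = PreTradeGate(RiskLimits(max_order_qty=1_000, max_notional=100_000.0,
                               price_collar=0.05, max_orders_per_window=3))
print(gate.check(100, 50.0, 50.0))   # (True, 'ok')
print(gate.check(100, 60.0, 50.0))   # (False, 'price collar')
```

The design choice that matters is placement: these checks reject an order before it reaches the market, whereas a post-execution check can only report damage that has already happened.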

What are the main risks when using ML for market prediction (overfitting, regime change, misuse)?

The first fundamental risk is overfitting. Markets are noisy enough that random variation can resemble structure, especially when many hypotheses are tried. A model with too much flexibility, or a research process with too much iteration on the same data, can learn accidental patterns that vanish in live trading.

The second is regime dependence. Even a real edge may weaken when volatility changes, market participants adapt, or policy and macro conditions shift. The problem is not just that parameters drift. Sometimes the mechanism itself changes. A signal based on retail-flow behavior in one period may stop working when dealers hedge differently or when similar strategies crowd the same trade.

The third is misuse. The Federal Reserve’s supervisory guidance on model risk management defines model risk as adverse consequences from incorrect or misused model outputs and emphasizes that risk comes both from model error and from misunderstanding a model’s limitations. That maps cleanly onto ML for market prediction. A model trained on large-cap U.S. equities may be misapplied to small caps or another region. A model that predicts relative returns may be used as if it predicts absolute market direction. A probability score may be treated as a certainty. These are not subtle issues. They are common failure modes.

The guidance’s idea of effective challenge is particularly relevant. Models should be reviewed by informed parties who can question assumptions, data quality, scope, and monitoring. Validation should be independent of development, ongoing after deployment, and should include conceptual review, monitoring, benchmarking, and outcomes analysis such as backtesting. In trading terms, that means asking not just “does the model fit?” but “what conditions does it require, what would break it, and how would we know in time?”
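Ongoing outcomes analysis can start as simply as comparing live performance with what validation promised. A minimal sketch, assuming a directional model whose validation hit rate is known; the window length and tolerance are hypothetical, and a rolling hit rate is a crude statistic, so real monitoring would add significance tests and other metrics.

```python
from collections import deque


class OutcomeMonitor:
    """Track a directional model's live hit rate over a rolling window and flag
    when it falls materially below the rate seen in validation."""

    def __init__(self, baseline_hit_rate, window=250, tolerance=0.05):
        self.baseline = baseline_hit_rate
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)

    def record(self, predicted_side, realized_return):
        self.outcomes.append(1 if predicted_side * realized_return > 0 else 0)

    def hit_rate(self):
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else None

    def breached(self):
        """True once the window is full and live hit rate < baseline - tolerance."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.hit_rate() < self.baseline - self.tolerance


monitor = OutcomeMonitor(baseline_hit_rate=0.55, window=4, tolerance=0.05)
for side, ret in [(1, 0.01), (-1, 0.02), (1, -0.01), (-1, 0.005)]:
    monitor.record(side, ret)
print(monitor.hit_rate(), monitor.breached())  # 0.25 True
```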

What legal and governance checks are required before using alternative datasets in models?

Not all predictive data is usable simply because it is informative. Alternative datasets may carry licensing, privacy, or material-nonpublic-information concerns. Some open data licenses are permissive about results (for example, the CDLA Permissive 2.0 states that results of computational analysis, including ML models, are not restricted by the agreement), but the underlying data may still need license text preserved on redistribution. Other contexts are less permissive. If a system processes personal data in the EEA, GDPR becomes relevant. If an investment adviser’s workflows touch possible MNPI, SEC examination guidance is relevant.

The key practical point is modest but important: data legality is part of model quality, because a model trained on unusable or poorly governed data is not truly deployable. Traders sometimes talk as if data governance sits outside prediction. In production, it does not. A feature you cannot lawfully or contractually use is not a feature you have.

How are ML models actually applied in trading workflows (alpha, execution, anomaly detection)?

In practice, machine learning for market prediction is used less as a magical return generator and more as a structured decision aid across several layers of trading. It can rank securities cross-sectionally, estimate short-term directional moves, filter entries and exits, forecast volatility, infer latent factors, detect anomalies, or support execution decisions such as urgency and venue choice. In some workflows it is the main alpha engine. In others it is a secondary component that improves a more traditional strategy.

This explains why the field includes supervised learning, unsupervised learning, and reinforcement learning. Supervised models learn from labeled outcomes. Unsupervised methods help compress data, detect regimes, or extract latent structure. Reinforcement learning is sometimes used for sequential decision problems such as execution, though it is harder to evaluate and deploy safely because the environment reacts to the policy. The unifying principle is not the algorithm family. It is that each method tries to map observed market state into a better trading decision under uncertainty.

Conclusion

Machine learning for market prediction is best understood as a disciplined way to turn messy market data into decisions that can be tested, traded, and challenged. The model matters, but the deeper structure is the pipeline: choose a target that matches a trading action, build features that reflect the mechanism you care about, validate without leakage, and carry the prediction through execution, monitoring, and risk control.

What readers should remember tomorrow is simple: in markets, prediction is only half the problem. The other half is whether the prediction survives the realities of trading: costs, liquidity, regime change, deployment errors, and human misuse. The firms that treat ML as a full decision system, not just a forecasting tool, are the ones most likely to get real value from it.

Frequently Asked Questions

Pick a target that maps directly to the trading decision you will make (for example, next-day relative return for stock selection, or short-horizon impact or fill probability for execution), and use labeling schemes such as the triple-barrier method for path-dependent outcomes or meta-labeling to learn when to act, so the model predicts what you can actually trade on.

Random train/test splits can be optimistic because nearby or overlapping samples leak information about future paths; finance practitioners therefore use leakage-aware methods such as purged k‑fold cross‑validation that remove training samples that are temporally or label-wise entangled with test periods.

A model’s apparent edge can be erased by bid‑ask spread, slippage, and your own market impact (the article gives the example that a predicted 2‑bp move can be unprofitable once spread and impact are included), and historical incidents such as the May 6, 2010 flash crash and the Knight Capital deployment failure show how execution dynamics and deployment errors can overwhelm statistical signals.

For low‑frequency problems, engineered “alpha” features (momentum, volatility, earnings surprises, valuation ratios) and cross‑sectional ranks are common; for high‑frequency problems, microstructure features like order-flow imbalance, best‑quote depth, cancellations and queue dynamics are more mechanistic and often more predictive than raw volume.

Meta‑labeling trains a secondary model to predict whether a base signal’s suggested trade is worth taking, turning a blunt directional predictor into a selective filter that improves real‑world profitability by trading only when the base signal is likely to overcome costs or adverse conditions.

Common operational failure modes include inconsistent feature computation between research and production, stale or delayed features, missing pre-trade risk checks or kill-switches, and poor deployment governance: issues illustrated by the need for feature stores such as Feast, the Knight Capital incident, and supervisory guidance on model risk management.

Tree ensembles and gradient boosting are strong, robust baselines on engineered tabular features; recurrent models and other deep architectures can outperform on sequential or unstructured inputs (text, images, dense event streams) but evidence is heterogeneous and deep models need more data and invite more overfitting.

You must confirm that the data is legally usable (licensing, privacy, and possible MNPI issues), preserve any required license text when redistributing data (for example, CDLA Permissive 2.0 requires the agreement text to accompany shared data, although results of computational analysis are not restricted), and comply with jurisdictional laws such as GDPR when personal data is involved; data legality is part of whether a model is deployable, not an afterthought.
