What is the Autocorrelation Function?

Learn what the autocorrelation function (ACF) is in trading, how it measures serial dependence across lags, and how to interpret market memory.

Introduction

The autocorrelation function, or ACF, is a way to measure how strongly a time series relates to its own past. In trading, that matters because market data arrive as sequences: returns, prices, spreads, volume, volatility, order flow. The central question is not only how variable a series is, but also whether it remembers. If a return today makes a return tomorrow slightly more likely to have the same sign, that is a very different market from one in which each move is independent. If a spread tends to snap back after widening, that is different again.

This is why the ACF sits near the foundation of quantitative trading. It helps distinguish persistence from reversal, genuine temporal structure from randomness, and economic signal from artifacts created by sampling and market microstructure. It is also one of the first diagnostics used when deciding whether a simple model is adequate or whether a richer time-series model is needed. But the idea is easy to misuse. ACF measures linear dependence across lags, not every kind of predictability, and in markets it can be distorted by trend, seasonality, bid-ask bounce, nonsynchronous trading, and non-stationarity.

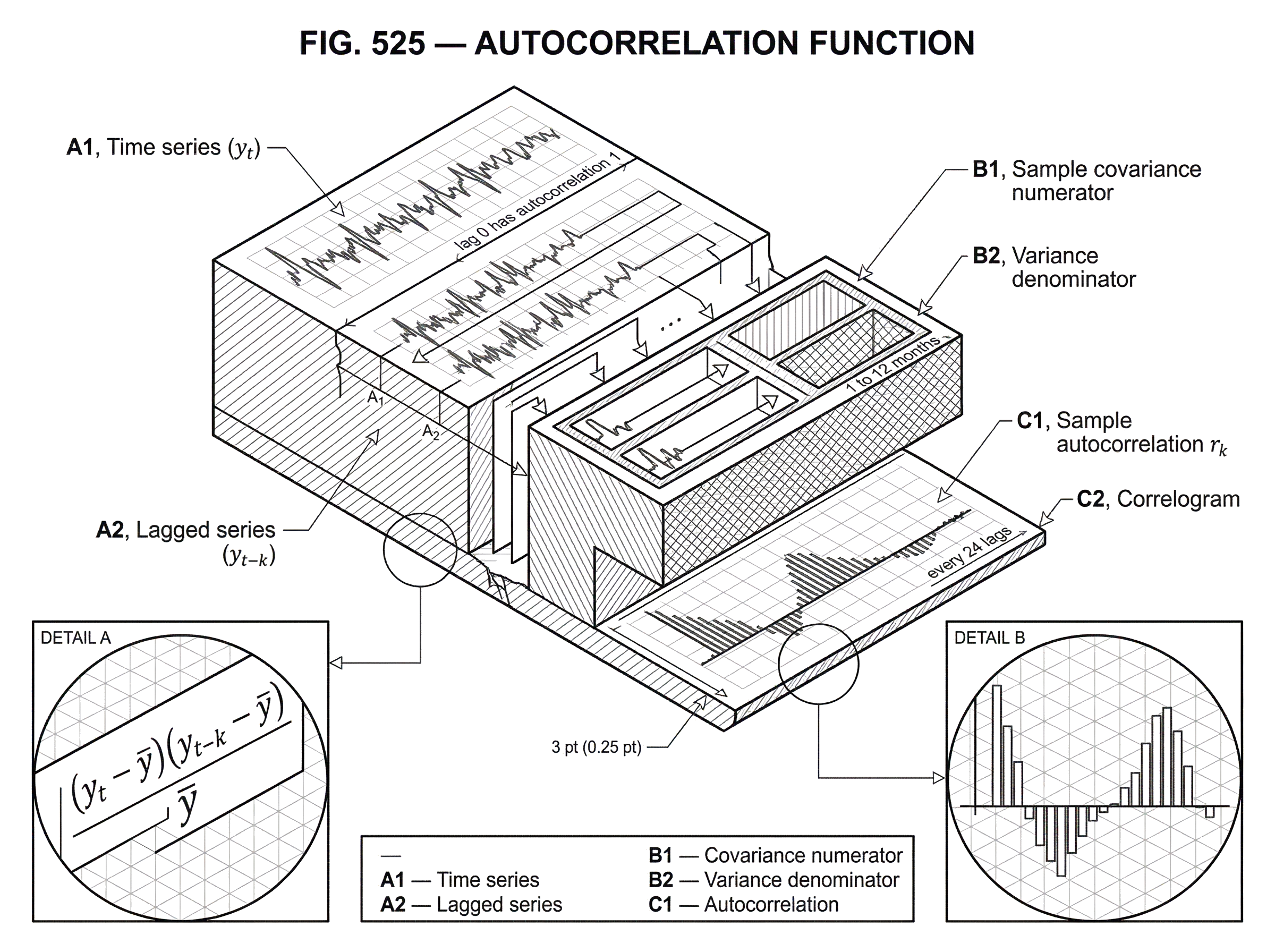

The core idea is simple: take a series, shift it by k time steps, and measure the correlation between the original and shifted versions. Do that for many values of k, and you get a picture of the series' memory as a function of lag. That picture is the autocorrelation function.

How does the autocorrelation function compare a series with its own past?

Ordinary correlation compares two different variables: for example, stock returns and bond returns. Autocorrelation compares a variable with itself at a different time. If y_t is a time series observed at equally spaced times, then the lag-k autocorrelation asks: how related is y_t to y_{t-k}?

That framing already explains why the ACF exists. Many statistical tools assume that observations are effectively unrelated except through a common distribution. But time series violate that assumption all the time. Nearby observations can be similar because of trend, inventory effects, delayed information incorporation, execution dynamics, seasonality, or mean reversion. The ACF is a compact way to quantify that dependence rather than guessing from a chart.

There is an important practical assumption hidden here: the observations should be equally spaced in time. The standard sample ACF formula uses ordering, not clock time directly. If your series is missing intervals, sampled irregularly, or stitched together from different trading sessions without care, the computed lags may not represent what you think they do.

In the simplest interpretation, a positive autocorrelation at lag 1 means consecutive observations tend to move together. A negative autocorrelation at lag 1 means they tend to alternate: an up move is more likely to be followed by a down move, or vice versa. Values near zero suggest little linear dependence at that lag.

How do you compute the sample autocorrelation (r_k) for market data?

Suppose you have observations y_1, y_2, ..., y_T, and let y_bar be their sample mean. The sample autocorrelation at lag k, usually written r_k, is

r_k = [sum from t = k+1 to T of (y_t - y_bar)(y_{t-k} - y_bar)] / [sum from t = 1 to T of (y_t - y_bar)^2]

The numerator is, up to a 1/T scaling, the sample autocovariance between the series and a copy of itself shifted by k periods. The denominator is the sum of squared deviations, which is the sample variance under the same scaling, so r_k behaves like a correlation coefficient.

That structure matters more than the notation. The numerator asks whether observations k periods apart tend to be above their mean together, below their mean together, or on opposite sides. The denominator normalizes that co-movement so the result is interpretable across lags and series. By convention, lag 0 has autocorrelation 1, because a series is perfectly correlated with itself without any shift.
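The formula can be sketched directly in NumPy. This is a minimal illustration rather than a library implementation; the function name `sample_acf` and the toy series are assumptions made for the example.

```python
import numpy as np

def sample_acf(y, max_lag):
    """Sample autocorrelations r_0, ..., r_max_lag (hypothetical helper)."""
    y = np.asarray(y, dtype=float)
    dev = y - y.mean()                      # deviations from the sample mean
    denom = np.sum(dev ** 2)                # sum of squared deviations
    # Numerator at lag k: products of deviations that are k steps apart.
    return np.array([np.sum(dev[k:] * dev[:len(y) - k]) / denom
                     for k in range(max_lag + 1)])

y = np.array([1.0, 2.0, 3.0, 4.0, 5.0])     # toy series, not market data
r = sample_acf(y, max_lag=2)
print(r)                                    # r_0 = 1.0, r_1 = 0.4, r_2 = -0.1
```

Note that the denominator always uses all T observations while the numerator uses only T - k products, so estimates at long lags are pulled toward zero in short samples.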

If you compute r_1, r_2, r_3, ..., the resulting sequence is the sample autocorrelation function. Plotting those values against lag gives the familiar ACF plot, also called a correlogram.

For a stochastic process rather than a finite sample, people often write the population autocorrelation at lag k as rho_k. Under covariance stationarity (meaning the mean and variance are stable over time and the covariance between two points depends only on their separation, not on calendar time), rho_k is well-defined as a function of lag alone. This stationarity condition is what makes the ACF concept clean. Without it, asking for “the autocorrelation at lag 5” can be ambiguous, because the answer may differ across time.

What does an ACF plot reveal about memory, persistence, and seasonality?

An ACF plot is not just a list of coefficients. It is a sketch of how a series forgets its past.

If the bars drop quickly toward zero, the series has short memory. If they stay large for many lags, the series has persistence. If they oscillate between positive and negative values, the series may have cyclical behavior or alternating reversal. If spikes recur at fixed intervals (say every 5 lags in daily business data, or every 24 lags in hourly data) that suggests seasonality.

This is why ACF is useful before any serious modeling. The plot gives a first read on the mechanism. A slowly decaying positive ACF often signals trend or non-stationarity rather than a tidy low-order autoregressive process. Seasonal peaks suggest the need for seasonal terms or seasonal differencing. A sharp negative lag-1 autocorrelation can point to short-horizon reversal, but in trading it can also be a warning sign of bid-ask bounce rather than exploitable economic mean reversion.

Some software also overlays significance bands. Those bands are useful, but only with restraint. They are usually based on approximations that assume white noise or other specific structures. In tools such as statsmodels, confidence intervals may be computed using Bartlett-style formulas, and their interpretation depends on whether you are looking at raw data or model residuals. A bar crossing a dashed line is not a proof of tradeable predictability. It is only evidence that the estimated linear dependence is larger than a simple null benchmark would suggest.
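To see why a single bar outside the band is weak evidence, the sketch below simulates pure white noise and counts how many of 40 bars fall outside the common large-sample band of plus or minus 1.96 / sqrt(T). That band is an assumed white-noise approximation chosen for illustration; a given library may compute its intervals differently. The helper `sample_acf` is hypothetical.

```python
import numpy as np

def sample_acf(y, max_lag):
    """Sample autocorrelations r_0, ..., r_max_lag (hypothetical helper)."""
    y = np.asarray(y, dtype=float)
    dev = y - y.mean()
    denom = np.sum(dev ** 2)
    return np.array([np.sum(dev[k:] * dev[:len(y) - k]) / denom
                     for k in range(max_lag + 1)])

rng = np.random.default_rng(0)
T = 2000
noise = rng.standard_normal(T)              # no true dependence at any lag

r = sample_acf(noise, max_lag=40)
band = 1.96 / np.sqrt(T)                    # approximate 95% white-noise band
n_outside = int(np.sum(np.abs(r[1:]) > band))

print(f"band = +/-{band:.4f}, bars outside: {n_outside} of 40")
```

With a 95% band, roughly one bar in twenty is expected to cross the line by chance even when the series has no memory at all.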

Why do price-level and return ACFs look different in markets?

| Series | Typical ACF | Reflects | Best for |
|---|---|---|---|
| Price level | Persistent, slowly decaying | Level / trend | Long-horizon trend |
| Returns | Near-zero at short lags | Incremental price change | Short-term signals |
| Squared / abs returns | Strong positive persistence | Volatility clustering | Volatility modelling |

The easiest place to misunderstand ACF in trading is the distinction between prices and returns.

Imagine you compute the ACF of a stock's closing price level. You may find large positive autocorrelations across many lags. At first glance, that can look like strong predictability. But the mechanism is usually simpler and less exciting: prices are highly persistent because today's price is close to yesterday's price plus a relatively small change. That persistence reflects the level process, not necessarily a useful directional edge.

Now imagine you instead compute the ACF of daily returns. Very often, the first few lags are much closer to zero. In many liquid markets, short-horizon return autocorrelation is weak once obvious frictions are accounted for. That tells you something important: the apparent “memory” in price levels often disappears when you look at the economically relevant variable for many strategies, which is the increment rather than the level.

This is the same underlying data viewed through two different transformations, and it shows why ACF is only as informative as the series you feed into it. For a trend-following strategy, you may examine returns over monthly horizons and see persistence. For a market-making or mean-reversion strategy, you may examine very short-horizon returns or spread changes and see negative lag-1 autocorrelation. For volatility modeling, you may find near-zero autocorrelation in returns but strong positive autocorrelation in squared or absolute returns. The question is never just “what is the ACF?” but “the ACF of what?”
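The contrast between levels and increments is easy to reproduce on simulated data. The sketch below assumes independent Gaussian daily returns (a deliberately memoryless toy model, not a claim about any real market) and compares the ACF of the resulting log price with the ACF of the returns themselves. The helper `sample_acf` is hypothetical.

```python
import numpy as np

def sample_acf(y, max_lag):
    """Sample autocorrelations r_0, ..., r_max_lag (hypothetical helper)."""
    y = np.asarray(y, dtype=float)
    dev = y - y.mean()
    denom = np.sum(dev ** 2)
    return np.array([np.sum(dev[k:] * dev[:len(y) - k]) / denom
                     for k in range(max_lag + 1)])

rng = np.random.default_rng(1)
T = 5000
returns = 0.01 * rng.standard_normal(T)          # independent by construction
log_price = np.log(100.0) + np.cumsum(returns)   # random-walk price level

acf_price = sample_acf(log_price, max_lag=5)
acf_ret = sample_acf(returns, max_lag=5)

print("price level:", np.round(acf_price[1:], 3))
print("returns:    ", np.round(acf_ret[1:], 3))
```

The level series shows autocorrelations close to 1 at every short lag even though, by construction, nothing about tomorrow's return is predictable from today's.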

How do traders use the ACF for signals, model selection, and diagnostics?

In trading, the ACF does three jobs at once.

The first is signal discovery. If returns exhibit positive autocorrelation over a horizon, that is the raw statistical footprint of momentum or trend-following. If they exhibit negative autocorrelation, that is the raw footprint of mean reversion. Research on time-series momentum in liquid futures has found persistence in excess returns over horizons such as 1 to 12 months, with partial reversal over longer horizons. In ACF language, that means positive dependence at some intermediate lags and weaker or opposite-signed dependence later. The trading rule comes later; the ACF is one way the underlying persistence reveals itself.

The second is model identification. Classical time-series modeling uses the ACF and related tools such as the partial autocorrelation function, or PACF, to choose plausible AR, MA, or ARIMA structures. The reason is mechanical. Different model families imply different decay patterns in autocorrelation. If you know what pattern a model should produce, you can compare it with the data before fitting a large search space.

The third is diagnostics. After fitting a model, you want the residuals to look as close to white noise as possible. If residual ACF still shows structure, your model has left predictable dependence unexplained. Portmanteau tests such as the Ljung-Box and Box-Pierce tests formalize this idea by testing whether a collection of autocorrelations up to some lag is jointly consistent with independence. Software in both Python and R exposes these tests directly, often with adjustments for model degrees of freedom when they are applied to ARMA-type residuals.

These are really versions of the same question: has the time dependence been explained, or is there structure left in the sequence?

What do common ACF shapes imply about underlying time-series mechanisms?

It helps to connect typical ACF shapes to data-generating mechanisms rather than memorizing patterns.

A rapidly decaying positive ACF means shocks leave an echo, but the echo fades. That is what you would expect from a stable autoregressive process. The canonical example is an AR(1) process, where X_t = rho * X_{t-1} + epsilon_t. In that model, the population autocorrelation at lag k is rho^k. So if rho is positive and less than 1 in magnitude, the ACF starts positive and shrinks geometrically. The parameter rho controls the memory: larger absolute values mean slower decay.
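The geometric decay can be checked numerically. The sketch below simulates an AR(1) series with an assumed coefficient of 0.6 and compares the sample ACF with rho^k; the coefficient, sample size, and helper name `sample_acf` are illustrative choices.

```python
import numpy as np

def sample_acf(y, max_lag):
    """Sample autocorrelations r_0, ..., r_max_lag (hypothetical helper)."""
    y = np.asarray(y, dtype=float)
    dev = y - y.mean()
    denom = np.sum(dev ** 2)
    return np.array([np.sum(dev[k:] * dev[:len(y) - k]) / denom
                     for k in range(max_lag + 1)])

rng = np.random.default_rng(2)
rho, T = 0.6, 20000
eps = rng.standard_normal(T)

x = np.zeros(T)
for t in range(1, T):
    x[t] = rho * x[t - 1] + eps[t]          # X_t = rho * X_{t-1} + epsilon_t

lags = np.arange(1, 6)
estimated = sample_acf(x, max_lag=5)[1:]
theoretical = rho ** lags                   # population ACF of an AR(1)

for k, est, theo in zip(lags, estimated, theoretical):
    print(f"lag {k}: sample {est:.3f}  vs  rho^k {theo:.3f}")
```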

A negative lag-1 autocorrelation means adjacent observations tend to offset each other. In markets, that can reflect genuine mean reversion, but it can also arise from microstructure. Bid-ask bounce is the classic example: if trades alternate between bid and ask while the underlying efficient price barely moves, transaction-price returns can mechanically alternate sign. That creates negative short-lag autocorrelation even without economic predictability.
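A stylized bounce model makes the mechanical effect concrete. In the sketch below the efficient price is held constant and each trade hits the bid or the ask at random; the mid price, half-spread, and random trade direction are all assumptions of the toy setup, not estimates from data.

```python
import numpy as np

def lag1_autocorr(x):
    """Lag-1 sample autocorrelation (hypothetical helper)."""
    dev = x - x.mean()
    return np.sum(dev[1:] * dev[:-1]) / np.sum(dev ** 2)

rng = np.random.default_rng(5)
T = 20000
mid = 100.0                                  # efficient price never moves
half_spread = 0.05
side = rng.choice([-1.0, 1.0], size=T)       # -1 = trade at bid, +1 = at ask

trade_price = mid + half_spread * side
changes = np.diff(trade_price)               # transaction-price changes

r1 = lag1_autocorr(changes)
print(f"lag-1 autocorrelation of trade-price changes: {r1:.3f}")
```

In this setup each price change is half_spread * (side_t - side_{t-1}), so consecutive changes share one term with opposite sign and the population lag-1 autocorrelation is -0.5, even though the efficient price carries no information at all.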

A slowly decaying ACF near 1 often means the series is not stationary in level. Trend and unit-root-like behavior can produce large positive autocorrelations at many lags because observations close in time are also close in value. In practice, this often means you should difference or detrend before interpreting the ACF as evidence about short-run dynamics.

A repeating pattern with peaks at regular intervals suggests seasonality. Forecasting texts emphasize that seasonal data produce elevated autocorrelations at the seasonal period and its multiples. In markets, this can show up in volume, volatility, or intraday activity patterns more clearly than in daily close-to-close returns.
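The seasonal signature is easy to reproduce. The sketch below builds a hypothetical activity series with a spike every fifth observation (think of a day-of-week effect in daily business data) plus noise, then inspects the ACF at the seasonal period and its first multiple. The pattern and noise level are assumptions chosen for illustration.

```python
import numpy as np

def sample_acf(y, max_lag):
    """Sample autocorrelations r_0, ..., r_max_lag (hypothetical helper)."""
    y = np.asarray(y, dtype=float)
    dev = y - y.mean()
    denom = np.sum(dev ** 2)
    return np.array([np.sum(dev[k:] * dev[:len(y) - k]) / denom
                     for k in range(max_lag + 1)])

rng = np.random.default_rng(6)
period, n_cycles = 5, 1000
pattern = np.array([2.0, 0.0, 0.0, 0.0, 0.0])    # one elevated slot per cycle
y = np.tile(pattern, n_cycles) + rng.standard_normal(period * n_cycles)

r = sample_acf(y, max_lag=12)
for k in range(1, 13):
    marker = "  <- seasonal lag" if k % period == 0 else ""
    print(f"lag {k:2d}: {r[k]: .3f}{marker}")
```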

An alternating sign pattern can signal oscillatory behavior, inventory adjustment, overreaction-and-correction, or a model with negative autoregressive feedback. But as always, the shape alone does not identify the mechanism uniquely; it only narrows the plausible stories.

What causes autocorrelation in financial markets?

In trading, asking whether autocorrelation exists is only half the problem. The harder question is why it exists.

Some autocorrelation is informational. News diffuses gradually, institutions trade over time, and trend-following or hedging pressure can create persistence. The evidence for time-series momentum across futures markets is one example: positive autocovariance in returns appears to drive part of the observed momentum effect across asset classes.

Some autocorrelation is structural. Market design, batch effects, opening and closing procedures, and seasonality in participation can all induce recurring temporal patterns. Intraday volume and volatility are obvious cases.

Some autocorrelation is microstructural and partly spurious from the perspective of economic forecasting. Bid-ask bounce can create negative first-order autocorrelation. Nonsynchronous trading can distort measured autocorrelation in portfolios and indices. Research on portfolio serial correlation has shown that daily first-order serial correlation in portfolios can exceed what individual-security coefficients would suggest, and that nonsynchronous trading is not the only cause.

This distinction matters because a strategy built on each type of autocorrelation has different durability. Informational persistence may survive costs if it reflects slow-moving capital or risk transfer. Microstructure-induced autocorrelation may disappear once you move from last-trade prices to quote-mid returns, or once you include realistic execution costs. ACF itself does not tell you which world you are in. It only tells you that temporal dependence is present.

Which estimation pitfalls should I watch for when computing ACF on market data?

| Issue | Effect on ACF | Quick mitigation |
|---|---|---|
| Finite sample noise | Spurious isolated spikes | Focus on overall pattern |
| Nonstationarity | Slow large positive decay | Detrend or difference |
| Lag choice | Missed horizon or noise | Pick lags by question |
| Missing data | Invalid autocorr sequence | Impute or drop gaps |
| Microstructure noise | Negative short‑lag bias | Use mid‑quote returns |

Sample ACF is an estimate, not the truth. That sounds obvious, but it has concrete consequences.

First, finite samples are noisy. Even if the true autocorrelation is zero, the sample ACF bars will not all sit exactly at zero. Some will look sizable by chance. This is why individual spikes need context, and why people often look at the overall pattern rather than a single bar.

Second, stationarity matters. The sample ACF is a consistent estimator of the population autocorrelation under covariance stationarity and mild regularity conditions. If the underlying process changes regime, shifts mean, changes variance, or trends strongly, the estimated ACF can mix several different behaviors into a misleading average. This is one reason rolling-window analysis is so common in trading: it recomputes statistics like ACF over shifting samples to see whether dependence is stable or regime-specific.
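A small simulation shows how a full-sample estimate can hide a regime change that a rolling window reveals. The series below is a hypothetical construction whose AR(1) coefficient flips from 0.5 to -0.5 halfway through; the window length and step are arbitrary illustrative choices.

```python
import numpy as np

def lag1_autocorr(x):
    """Lag-1 sample autocorrelation (hypothetical helper)."""
    dev = x - x.mean()
    return np.sum(dev[1:] * dev[:-1]) / np.sum(dev ** 2)

rng = np.random.default_rng(3)
T, half = 4000, 2000
eps = rng.standard_normal(T)

x = np.zeros(T)
for t in range(1, T):
    coef = 0.5 if t < half else -0.5         # persistence, then reversal
    x[t] = coef * x[t - 1] + eps[t]

window, step = 500, 100
starts = range(0, T - window + 1, step)
rolling_r1 = np.array([lag1_autocorr(x[s:s + window]) for s in starts])
full_sample_r1 = lag1_autocorr(x)

print(f"full sample r_1:  {full_sample_r1: .3f}")
print(f"first window r_1: {rolling_r1[0]: .3f}")
print(f"last window r_1:  {rolling_r1[-1]: .3f}")
```

The full-sample figure lands near zero because the two regimes cancel, which is exactly the misleading average described above.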

Third, the choice of maximum lag is not innocent. Developer documentation in both statsmodels and R uses heuristics based on sample size, such as around 10 * log10(N) or related rules. Those defaults are convenient, but they are not economically privileged. In trading, the right lag horizon depends on the question. A high-frequency mean-reversion strategy may care about the first few ticks or seconds. A medium-term futures trend strategy may care about monthly or quarterly lags. Looking too far out can fill the plot with noisy bars; looking too narrowly can hide the mechanism.

Fourth, missing data handling can break things quietly. R warns that passing missing values through may yield an invalid autocorrelation sequence. statsmodels offers options such as drop and conservative, and these choices implicitly define how gaps are treated. In market data, gaps are common because of halts, holidays, stale quotes, sparse trading, or merged data sources. If you ignore how the software handles missing values, you may estimate the ACF of your preprocessing decisions rather than of the market.

Fifth, implementation details matter at scale. In Python, statsmodels.tsa.stattools.acf can compute the ACF via FFT, which is recommended for very long series. That does not change the concept, but it matters operationally when you are working with large intraday datasets.

Why the ACF alone is not a trading strategy

This is the most important guardrail.

An autocorrelation function is a diagnostic object, not a strategy by itself. It tells you whether a linear dependence pattern exists at particular lags. It does not tell you whether the effect is stable, causal, exploitable after costs, or robust to the way you sampled the data.

Suppose you observe negative lag-1 autocorrelation in a stock's trade-by-trade returns. A naive conclusion would be “mean reversion strategy.” But that pattern may mostly be bid-ask bounce. If you trade against it, you may simply pay the spread repeatedly while chasing a statistical artifact.

Suppose instead you observe positive autocorrelation in monthly futures returns. That is closer to the statistical footprint associated with time-series momentum, and historical research suggests such persistence has been economically meaningful across asset classes. But even there, the ACF is only the beginning. You still need to choose the horizon, handle overlapping returns, manage leverage and turnover, account for roll yield in futures, and test whether the pattern survives out of sample.

The same caution applies in relative-value trading. Mean-reverting spreads or pair distances often show negative autocorrelation after shocks, but strategy profitability depends on transaction costs, execution delay, borrowing constraints, and whether the convergence is genuine rather than a byproduct of microstructure or factor exposure.

When should I use ACF, PACF, and Ljung–Box during model building and diagnostics?

| Tool | Question answered | Modeling role | Typical output |
|---|---|---|---|
| ACF | Correlation vs lag | Suggest MA / shape | Correlogram (lags) |
| PACF | Direct lag effects | Suggest AR order | Partial‑corr plot |
| Ljung‑Box | Joint whiteness test | Residual diagnostic | Q‑stat and p‑value |

The ACF becomes more useful when paired with nearby ideas.

The partial autocorrelation function, or PACF, asks a sharper question: after controlling for the intermediate lags, how much direct association remains between y_t and y_{t-k}? That makes PACF especially helpful for identifying autoregressive structure, because it tries to separate direct lag effects from indirect chains through shorter lags.
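One way to make "controlling for the intermediate lags" concrete is to estimate the lag-k partial autocorrelation as the last coefficient in a least-squares regression of y_t on its first k lags. This regression-based estimator is only one of several in use, and the helper `pacf_ols` is a hypothetical name. Applied to a simulated AR(1) series with an assumed coefficient of 0.6, it should show a clear value at lag 1 and values near zero afterwards.

```python
import numpy as np

def pacf_ols(y, max_lag):
    """Partial autocorrelations at lags 1..max_lag via OLS (hypothetical helper)."""
    y = np.asarray(y, dtype=float)
    out = []
    for k in range(1, max_lag + 1):
        target = y[k:]
        # Design matrix: intercept, then y_{t-1}, ..., y_{t-k}.
        cols = [np.ones(len(target))]
        cols += [y[k - j:len(y) - j] for j in range(1, k + 1)]
        X = np.column_stack(cols)
        beta, *_ = np.linalg.lstsq(X, target, rcond=None)
        out.append(beta[-1])                 # coefficient on y_{t-k}
    return np.array(out)

rng = np.random.default_rng(7)
rho, T = 0.6, 20000
eps = rng.standard_normal(T)
x = np.zeros(T)
for t in range(1, T):
    x[t] = rho * x[t - 1] + eps[t]

pacf = pacf_ols(x, max_lag=3)
print(np.round(pacf, 3))
```

The ACF of the same series decays geometrically over many lags, while the PACF cuts off after lag 1, which is why the two plots are read together when guessing an AR order.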

Portmanteau tests such as Ljung-Box then aggregate information across multiple autocorrelations. Instead of asking whether lag 3 is individually large, they ask whether the set of autocorrelations up to some chosen lag is jointly too large to be consistent with independence. This is especially useful for model residuals. If your fitted model leaves residual autocorrelation, the model has not captured the sequence structure adequately.
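The Ljung-Box statistic for the first h lags is Q = T (T + 2) * sum over k = 1..h of r_k^2 / (T - k), compared against a chi-square distribution. The sketch below computes it by hand on a raw series, so h degrees of freedom are assumed; for model residuals the degrees of freedom would need adjusting, which this sketch does not do. The helper name and the simulated series are illustrative, and 18.307 is the 95% chi-square critical value for 10 degrees of freedom.

```python
import numpy as np

def ljung_box_q(y, h):
    """Ljung-Box Q statistic over lags 1..h (hypothetical helper)."""
    y = np.asarray(y, dtype=float)
    T = len(y)
    dev = y - y.mean()
    denom = np.sum(dev ** 2)
    lags = np.arange(1, h + 1)
    r = np.array([np.sum(dev[k:] * dev[:T - k]) / denom for k in lags])
    return T * (T + 2) * np.sum(r ** 2 / (T - lags))

CHI2_95_DF10 = 18.307    # 95% critical value, chi-square with 10 d.o.f.

rng = np.random.default_rng(4)
T = 2000
white = rng.standard_normal(T)               # no dependence

eps = rng.standard_normal(T)
ar = np.zeros(T)
for t in range(1, T):
    ar[t] = 0.3 * ar[t - 1] + eps[t]         # mild AR(1) dependence

q_white = ljung_box_q(white, h=10)
q_ar = ljung_box_q(ar, h=10)
print(f"white noise Q = {q_white:.1f}, AR(1) Q = {q_ar:.1f}, "
      f"critical value = {CHI2_95_DF10}")
```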

These tools are complementary. ACF shows the shape. PACF helps interpret direct lag structure. Ljung-Box asks whether the remaining autocorrelation is jointly too large to ignore.

Which market realities invalidate textbook ACF assumptions?

The textbook ACF story assumes equally spaced observations, roughly stable dynamics, and a stationary relationship between lag and dependence. Markets often violate all three.

Regimes change. Volatility clusters. Trading hours interrupt the clock. Instruments stop trading asynchronously. Structural breaks alter dependence. Algorithmic market structure shifts the short-run behavior of quotes and trades. Research on the rise of algorithmic trading suggests that changes in quoting and liquidity provision can alter how information gets incorporated into prices, which can in turn change short-horizon serial dependence.

There is also the issue of linearity. ACF measures linear dependence. A series can have zero autocorrelation and still be highly predictable in variance or through nonlinear dynamics. Financial returns often look close to uncorrelated in mean while being strongly autocorrelated in squared or absolute returns. So “ACF near zero” should not be read as “nothing interesting is happening.” It means only that linear dependence in the level of the chosen series is weak.
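A simulated GARCH(1,1) series illustrates the point: returns that are uncorrelated in level but clearly autocorrelated once squared. The parameter values below are assumptions picked for illustration, not estimates from any market, and the helper `sample_acf` is hypothetical.

```python
import numpy as np

def sample_acf(y, max_lag):
    """Sample autocorrelations r_0, ..., r_max_lag (hypothetical helper)."""
    y = np.asarray(y, dtype=float)
    dev = y - y.mean()
    denom = np.sum(dev ** 2)
    return np.array([np.sum(dev[k:] * dev[:len(y) - k]) / denom
                     for k in range(max_lag + 1)])

rng = np.random.default_rng(8)
T = 20000
omega, alpha, beta = 0.05, 0.10, 0.85        # illustrative GARCH(1,1) parameters
z = rng.standard_normal(T)

ret = np.zeros(T)
sigma2 = omega / (1.0 - alpha - beta)        # start at the unconditional variance
for t in range(T):
    ret[t] = np.sqrt(sigma2) * z[t]
    sigma2 = omega + alpha * ret[t] ** 2 + beta * sigma2   # next-period variance

acf_ret = sample_acf(ret, max_lag=5)
acf_sq = sample_acf(ret ** 2, max_lag=5)

print("returns:        ", np.round(acf_ret[1:], 3))
print("squared returns:", np.round(acf_sq[1:], 3))
```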

Finally, significance is not economic value. A tiny autocorrelation can be statistically significant in a huge sample and still be useless after costs. Conversely, a modest pattern can matter economically if it aligns with a slow-moving, low-turnover strategy.

Conclusion

The autocorrelation function is the map of a time series' linear memory. It tells you, lag by lag, whether the series tends to echo its past, reverse it, or forget it quickly.

In trading, that makes ACF a first diagnostic for persistence, mean reversion, seasonality, and model adequacy. But its value comes from disciplined interpretation: use the right series, respect stationarity and sampling assumptions, separate economic structure from microstructure artifacts, and treat the ACF as evidence about mechanism rather than as a signal generator on its own.

A good short summary to keep in mind is this: ACF does not ask whether a market is predictable in general. It asks whether the present still looks linearly related to the past at a given lag. That is a narrower question than many people think, and that is exactly why it is so useful.

Frequently Asked Questions

ACF measures the linear correlation between a series and lagged versions of itself - i.e., how much the present is linearly related to past values at each lag - but it does not capture nonlinear dependence (for example, predictability in volatility) or causal mechanisms on its own.

Standard ACF formulas assume equally spaced observations because they use ordering rather than clock time; if your data have irregular timestamps or gaps (halts, holidays, stitched sessions) the computed lags may not represent the intended calendar separations and can be misleading.

Level prices typically show large positive autocorrelations because today's price is close to yesterday's plus a small change, whereas returns often have much smaller ACF values; thus high ACF in price levels can reflect persistence of the level, not exploitable directional predictability in returns.

Microstructure effects like bid-ask bounce can create negative short-lag autocorrelation (alternating signs) and nonsynchronous trading can distort measured serial correlation in portfolios, so observed short-horizon ACF can be spurious from an economic forecasting perspective.

Significance bands on ACF plots are useful but conditional: many implementations use Bartlett-style approximations or white-noise assumptions, so a bar outside the band signals deviation from that null only under those assumptions and is not proof of tradeable predictability.

A statistically significant but very small autocorrelation can be economically irrelevant once trading frictions, turnover, and sample stability are considered; conversely, a modest autocorrelation can matter for low-turnover, leveraged, or long-horizon strategies, so statistical significance does not equal economic value.

Use the ACF for model identification by inspecting decay patterns (e.g., geometric decay suggests AR(1)) and for diagnostics by checking residuals for remaining structure, while relying on PACF and portmanteau tests like Ljung–Box to sharpen model-selection and whiteness checks.

How you handle missing values and gaps matters: some software drops or uses complete cases (which can bias the ACF), others offer conservative options, and incorrect NA handling can produce invalid autocorrelation sequences or estimates that reflect preprocessing choices rather than the market.

Zero ACF only implies little linear dependence in the chosen series; a series can have zero mean-autocorrelation while still exhibiting strong nonlinear predictability (for example, volatility clustering in squared returns), so ACF near zero is a narrow negative result, not a blanket statement of unpredictability.

There is no universal optimal max-lag: defaults (e.g., statsmodels' heuristic ~10*log10(N)) are convenient, but you should choose the lag horizon to match the trading question - ticks/seconds for high-frequency mean reversion, months for trend - because looking too far adds noise and looking too short can miss mechanisms.
