What Is an Anti-Phishing Code?

Learn what an anti-phishing code is, how it helps verify exchange emails, where it works, and why it complements, rather than replaces, email security.

Introduction

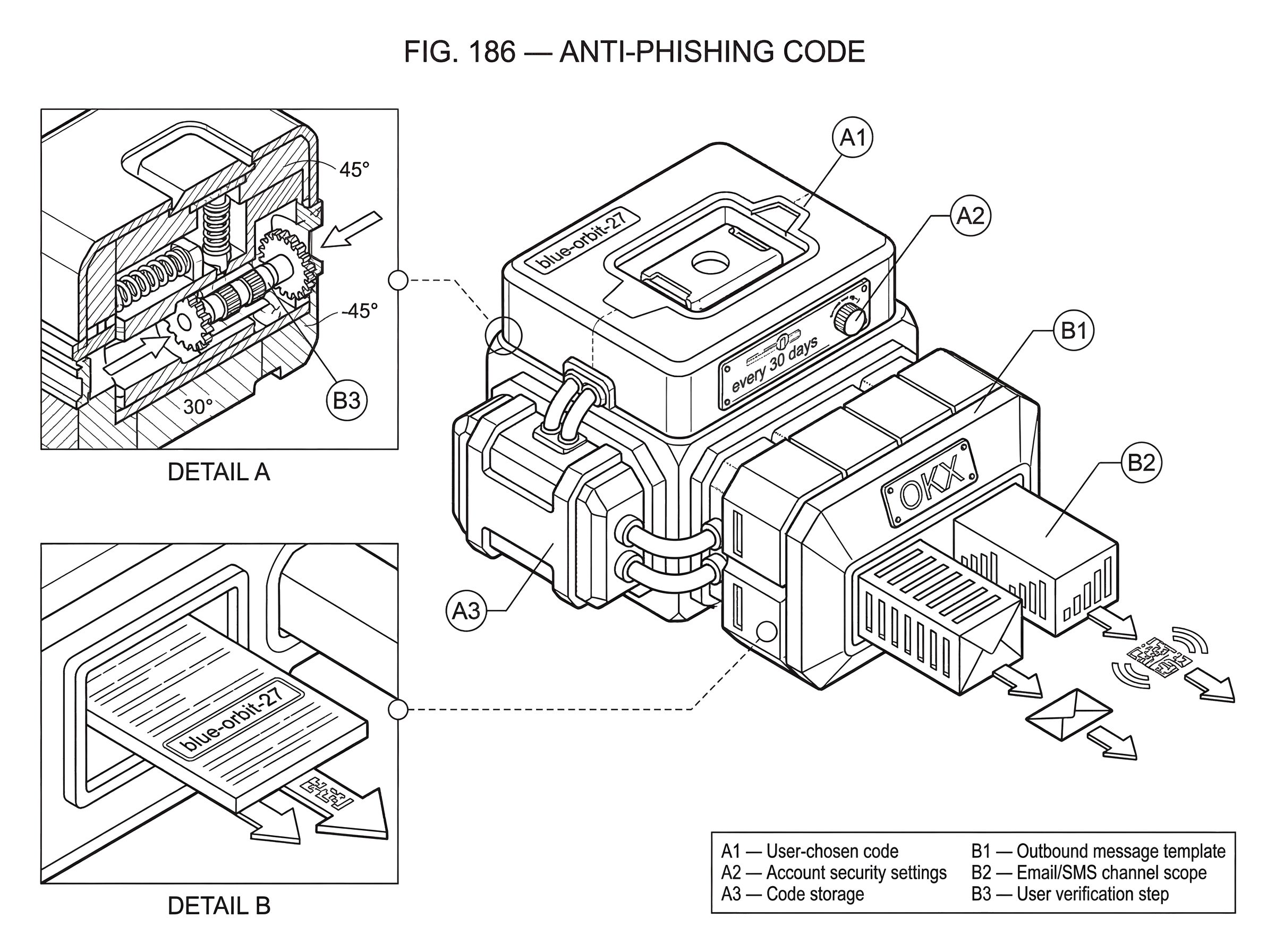

An anti-phishing code is a user-chosen marker that some exchanges and crypto platforms place into their legitimate messages so you can tell real communications from impersonations. The idea looks almost trivial: you pick a short word or phrase, and official emails later show it back to you. But the reason this feature exists is that phishing usually wins not by breaking cryptography, but by borrowing trust: copying branding, sender names, and familiar templates well enough that a user acts before thinking.

That is the puzzle. Modern platforms can use domain authentication, TLS, account security checks, and two-factor authentication, yet many still add a personalized code to outbound messages. If the provider already knows how to send authenticated email, why involve the user at all? The answer is that email security and user trust operate at two different layers. Domain-level controls help receiving mail systems judge whether a message likely came from the claimed domain. An anti-phishing code tries to solve a separate problem: can the human recipient quickly verify that this particular message fits a secret pattern known only to them and the platform?

Used well, this feature can reduce successful phishing attempts that pretend to be from an exchange or wallet provider. Used carelessly, it can create a false sense of safety. To understand when it helps, you need to see both the mechanism and its boundary: what the code actually proves, what assumptions it relies on, and what kinds of attacks it does not stop.

What phishing problem do anti‑phishing codes solve?

Most phishing is not about defeating a platform’s backend security directly. It is about getting a user to hand over something valuable: a password, a one-time code, a seed phrase, a wallet signature, or simply a click on a malicious link. The attacker’s main tool is imitation. They copy logos, button styles, subject lines, support language, and urgency cues until the message feels routine.

This works because a user usually does not have direct visibility into email authentication internals. Even if a provider has correctly configured SPF, DKIM, and DMARC, the recipient often sees only a display name, a sender address, and some polished HTML. Research on phishing usability has repeatedly shown that visual indicators are often ignored or misunderstood, and convincing imitations can fool even careful users. So the platform faces a practical design problem: how do you give the recipient a signal that is both easy for a human to check and hard for a random impersonator to guess?

An anti-phishing code is one answer. Instead of asking the user to inspect technical mail headers or subtle browser indicators, the platform asks them to remember a secret string they chose themselves. If a future message claims to be official but does not contain that string, the message should look suspicious. OKX describes the idea bluntly: after you set the anti-phishing code, emails sent by OKX will contain it, and if there is no anti-phishing code in the email, treat it as forged or fraudulent. Crypto.com and Bybit describe the same basic mechanism: a user-defined code appears in official emails, and its purpose is to help distinguish legitimate mail from phishing attempts.

The central point is simple: the code is a shared secret used as a visual authenticity check. It does not provide cryptographic authenticity in the formal sense; it is a lightweight personalized marker intended for human verification.

How do anti‑phishing codes work in practice?

The mechanism has three moving parts, and they matter because the feature fails if any one of them breaks.

First, the user creates a code inside an authenticated account session. The setup flow is usually protected by some additional confirmation step. Crypto.com’s App flow requires the user to go into security settings, enter a unique code, confirm it, and then enter their passcode. Its NFT flow requires a verification code sent to the inbox before submission. Bybit similarly requires account access and a security verification step before the code is confirmed or changed. This setup step matters because if an attacker could freely set or replace the code, the feature would become useless or even dangerous.

Second, the platform stores that code as account-specific security metadata and injects it into outbound messages for the supported channels. On Crypto.com NFT, the scope is explicitly email and explicitly limited to that product: once set up, the code appears in all emails sent from Crypto.com NFT, and only those emails include it. On the Crypto.com App, the code appears in all emails from the App and, when the Exchange account is connected, the Exchange as well. Bybit says its code appears in official emails and SMS messages. The scope is not a side detail. It is the whole trust boundary.
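As a rough illustration of this second part, the sketch below shows how a platform might attach the stored code only on covered channels. The `Account` record, the `COVERED_CHANNELS` set, and the `Anti-phishing code:` label are hypothetical names chosen for this example, not any exchange's actual schema or template.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Account:
    """Hypothetical account record; field names are illustrative only."""
    email: str
    anti_phishing_code: Optional[str]  # None means the user has not set a code


# Assumption: this imaginary platform includes the code in email only.
COVERED_CHANNELS = {"email"}


def render_message(account: Account, channel: str, body: str) -> str:
    """Prefix the body with the user's code when the channel is covered."""
    if channel in COVERED_CHANNELS and account.anti_phishing_code:
        return f"Anti-phishing code: {account.anti_phishing_code}\n\n{body}"
    # Outside the covered scope the message goes out without the marker.
    return body
```

The point of the sketch is the scope check: a message on an uncovered channel is sent without the marker, which is why its absence there carries no information.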

Third, the user is expected to compare incoming messages against that expected marker before acting. If the code is present and correct, the message is more likely to be genuine. If it is missing, wrong, or unfamiliar, the message should be treated as suspicious and independently verified.

That is the entire mechanism. The platform is not proving message authenticity to you with a new cryptographic protocol at the application layer. It is giving you a personalized challenge-response pattern spread over time: you chose the marker earlier, and the real platform can echo it later in messages because it knows your account state.

A concrete example makes this clearer. Imagine you use an exchange and set your anti-phishing code to blue-orbit-27. A week later you receive an email saying your withdrawal is on hold and you must log in immediately. The message uses the right logo and colors and even a plausible sender name. But before clicking anything, you look for your code. If the email includes blue-orbit-27 exactly where official emails normally show it, that is one positive signal. If it shows no code at all, or a different one, that breaks the pattern. The mechanism works not because the string is fancy, but because the attacker usually does not know it.
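The check in this example can be sketched in a few lines. This is only an illustration: it assumes official emails show the code on a line labeled `Anti-phishing code:`, which is a hypothetical label, and the `classify` function is invented for this sketch. Real templates differ by platform.

```python
import re

# Assumed label format; real platforms place and label the code differently.
LABEL = re.compile(r"anti-phishing code:\s*(\S+)", re.IGNORECASE)


def classify(message_text: str, expected_code: str) -> str:
    """Return 'missing', 'mismatch', or 'match' for the code shown in a message."""
    found = LABEL.search(message_text)
    if found is None:
        return "missing"
    # The comparison is exact and case-sensitive on purpose.
    return "match" if found.group(1) == expected_code else "mismatch"
```

For instance, `classify("Anti-phishing code: blue-orbit-72\nLog in now.", "blue-orbit-27")` returns `"mismatch"`, because the digits are swapped. A `"match"` is one positive signal, not a verdict on the rest of the message.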

The important qualifier is “usually.”

What does an anti‑phishing code actually prove, and what can it not protect against?

An anti-phishing code does not prove, in a formal security sense, that a message is authentic. It proves something weaker and more conditional: the sender included a secret marker that matches what your account expects. That can still be useful, but only if you keep the assumptions straight.

Here is the strongest interpretation you can reasonably make: if a message arrives through a channel covered by the feature, and it contains the correct code, then an attacker who does not know your code will have a harder time forging a convincing impersonation. That raises the cost of generic phishing campaigns. A mass attacker who sends the same fake “account suspended” template to thousands of users cannot personalize each message with the right code unless they have additional information.

Here is the weaker interpretation you should not overextend: the presence of the code does not mean the rest of the message is safe, the link is safe, your device is safe, or your account is uncompromised. If an attacker has already accessed your mailbox, compromised your platform account, or learned your code through some other breach, they may be able to craft phishing content that includes the correct code. If the legitimate sender’s account systems themselves are compromised, the code can appear in malicious-but-officially-sent messages. And if the code is only configured for email, then its absence from SMS or push notifications tells you nothing unless the platform explicitly says those channels are unsupported.

This is the crucial distinction: anti-phishing codes are a user-verification aid, not a replacement for email authentication, account security, or cautious behavior.

Why use an anti‑phishing code in addition to SPF, DKIM, and DMARC?

| Control | Primary purpose | Automatable | User-visible | Typical scope | Best for |
|---|---|---|---|---|---|
| Anti-phishing code | Personalized authenticity hint | No; requires human check | Displayed in message body | Account-specific channels | Stopping mass impersonation |
| SPF, DKIM, and DMARC | Domain delivery authentication | Yes; server-side policy | Usually hidden from users | Domain and MTA level | Preventing forged mail delivery |

At first glance, anti-phishing codes may seem redundant because the email ecosystem already has standardized anti-spoofing controls. SPF lets a domain publish which servers are authorized to send mail for it. DKIM lets the sender cryptographically sign messages so recipients can verify that a domain took responsibility for the content. DMARC builds on SPF and DKIM to define policy and alignment rules around the visible From domain, letting domain owners request monitoring, quarantine, or rejection of unauthorized mail.

These standards are foundational. NIST guidance treats SPF, DKIM, and DMARC as the primary recommended techniques for authenticating sending domains and reducing spoofing. BIMI adds a further layer that can help authenticated messages display a brand indicator in mail clients, but BIMI itself relies on those underlying authentication systems and is not an authentication protocol on its own.

So where does the anti-phishing code fit? It sits at a different layer.

SPF, DKIM, and DMARC are mostly server-to-server infrastructure signals. They help mail servers and mail clients decide whether a message is aligned with the sender’s domain policies. They are standardized, automatable, and far more robust than asking users to inspect message content manually. But they have limits. DMARC does not solve lookalike domains or display-name tricks. DKIM signature status is often invisible to ordinary users. Mail interfaces do not consistently expose authentication outcomes in ways users understand. And even when they do, people often ignore or misread them.
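To make the "invisible to ordinary users" point concrete, here is a minimal sketch that reads the `Authentication-Results` header a receiving server may add to a message. The sample message, the `exchange.example` domain, and the `auth_results` helper are invented for illustration. The header is only meaningful when it was added by your own mail provider, and its exact layout varies between providers.

```python
import re
from email import message_from_string

# Invented sample; a real header is added by the receiving mail server.
RAW = """\
Authentication-Results: mx.example.net; spf=pass smtp.mailfrom=exchange.example; dkim=pass header.d=exchange.example; dmarc=pass header.from=exchange.example
From: Exchange Support <support@exchange.example>
Subject: Withdrawal on hold

Please review your withdrawal.
"""


def auth_results(raw_message: str) -> dict:
    """Collect the first spf/dkim/dmarc verdict found in Authentication-Results."""
    msg = message_from_string(raw_message)
    results = {}
    for header in msg.get_all("Authentication-Results", []):
        for method, verdict in re.findall(r"\b(spf|dkim|dmarc)=(\w+)", header):
            results.setdefault(method, verdict)
    return results
```

Few recipients will ever look at this header, let alone parse it, which is the usability gap a visible code in the message body tries to cover.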

An anti-phishing code tries to bridge that usability gap by creating a signal visible in the body of the message itself. It does not replace domain authentication beneath it; in a well-designed system, it should sit on top of those controls. In fact, the feature is safest when the underlying email program is already strong. If a platform has weak email authentication but adds a personalized code, it is asking the user to compensate for infrastructure that should have been fixed at the protocol level first.

The right way to think about the stack is this: SPF, DKIM, and DMARC try to stop forged mail from arriving; an anti-phishing code helps a user notice impersonation if a suspicious message still gets through or if the user is comparing messages manually.

Which phishing attacks are anti‑phishing codes effective against?

The feature helps most against broad impersonation attacks where the attacker knows your email address but not much else. In that situation, the attacker can copy a platform’s logo and subject line, but they cannot easily know the secret code you chose inside your account. The code therefore acts as a personalized detail that is expensive to fake at scale.

This is especially useful in crypto, where attackers often impersonate exchanges, wallet providers, NFT platforms, and support teams using urgent transactional language. “Withdrawal requested.” “New device login.” “KYC expires today.” “NFT offer received.” These messages work because they create emotional pressure while looking operationally routine. A missing anti-phishing code interrupts that flow. It gives the user a reason to pause before clicking.

There is also a subtle psychological advantage. Because the user chooses the code, it is more salient than a generic badge or security icon. It feels like their marker, not just the platform’s branding. That personal familiarity is the feature’s main usability strength.

But this strength is also where misunderstanding begins.

When do anti‑phishing codes fail or give a false sense of security?

| Limitation | Why it fails | Impact | Mitigation |
|---|---|---|---|
| Channel scope | Code only in supported channels | Leaves other notifications unprotected | Check which channels are covered |
| Secrecy leak | Mailbox or account compromised | Attacker can replicate code | Rotate or revoke the code |
| User behavior | Users stop checking or misread | False sense of safety | Adopt a verification workflow |
| Targeted attackers | Reconnaissance yields personal details | Code becomes easier to spoof | Use layered defenses (MFA) |
| Misplaced trust | Code does not vet links | Malicious links still dangerous | Navigate manually to official site |

The first limitation is channel scope. The code only protects the channels in which the platform actually includes it. Crypto.com’s help articles are explicit that the code applies to emails sent from the relevant product, and the NFT code is independent from codes for the App or Exchange. Bybit says the code appears in official emails and SMS messages. If a message comes through a channel not covered by the feature, the code cannot help you. Many users overgeneralize here: they assume “my account has anti-phishing enabled” means all platform communications will contain the marker. That is often false.

The second limitation is secrecy. The mechanism assumes the attacker does not know the code. If your mailbox is compromised, if screenshots leak the code, if malware reads your messages, or if an attacker gains access to your account and views or changes security settings, the code may stop being a useful differentiator. At that point it becomes just another copied element, like a logo.

The third limitation is user behavior. A control that depends on being checked every time creates friction, and repeated friction tends to decay into habit. Some users enable the code and then stop looking for it. Others notice only its presence, not whether it is exactly right. A near miss (a similar word, a partial match, or a familiar-looking phrase) can still succeed if the user is rushed. That problem is not specific to anti-phishing codes; it is the broader weakness of any user-facing security signal.
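A small sketch shows why near misses are dangerous: a string can be very similar to your code without being your code. The `compare_codes` helper and its `near_threshold` value are assumptions made for this illustration; the similarity score comes from Python's standard `difflib` module.

```python
from difflib import SequenceMatcher


def compare_codes(shown: str, expected: str, near_threshold: float = 0.8) -> str:
    """Label a displayed code as 'exact', 'near-miss', or 'different'."""
    if shown == expected:
        return "exact"
    # ratio() is 1.0 for identical strings and falls toward 0.0 as they diverge.
    similarity = SequenceMatcher(None, shown, expected).ratio()
    return "near-miss" if similarity >= near_threshold else "different"
```

Only `"exact"` should count as a pass. A `"near-miss"` such as `blue-orbit-72` against `blue-orbit-27` is the case a hurried reader is most likely to wave through.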

The fourth limitation is attack evolution. Sophisticated phishing increasingly uses targeted reconnaissance, cloned templates, and account-specific lures. Research on phishing clones shows how easily visual imitation can bypass many detection systems. Once an attacker has enough context, a personalized code is no longer a high barrier. It remains a useful speed bump, but not a wall.

The fifth limitation is misplaced trust. A correct anti-phishing code does not make a link safe by itself. You still need to inspect where a link goes, or better, avoid clicking and navigate manually. OKX’s guidance complements its anti-phishing code feature with classic behavioral advice: do not click unknown links, avoid logging into unsafe websites, and manually enter the official site rather than trusting search results or embedded links. That pairing is important because the code only authenticates the message pattern, not every embedded action inside it.
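If you do inspect a link, the part that matters is the hostname, not the visible text. The sketch below is a minimal check under stated assumptions: `exchange.example` stands in for a platform's real domain, and `host_is_official` is a hypothetical helper. It does not detect lookalike characters or compromised official pages, so manual navigation remains the safer habit.

```python
from urllib.parse import urlsplit


def host_is_official(url: str, official_domain: str) -> bool:
    """True if the URL's host is the official domain or one of its subdomains."""
    host = (urlsplit(url).hostname or "").lower()
    official = official_domain.lower()
    return host == official or host.endswith("." + official)
```

With this check, `https://www.exchange.example/login` passes, while `https://exchange.example.evil.test/login` fails because the official name appears only as a prefix of someone else's domain.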

How should I verify exchange messages using an anti‑phishing code?

The feature works best when it becomes part of a stable verification habit rather than a standalone checkbox in settings.

Suppose you receive an email saying a withdrawal request needs confirmation. A careful workflow is not “I saw my code, so I clicked the button.” It is: the message claims to be from the platform; I check whether this channel is one where the platform normally includes my anti-phishing code; I verify that the code is exactly what I set; I treat a missing or wrong code as suspicious; and even if the code is correct, I avoid clicking embedded links and instead open the app or type the known domain manually.
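The workflow above can be written down as a small decision function. This is a sketch of the reasoning, not a tool any platform provides: `Verdict`, `assess_message`, and the `covered_channels` parameter are names invented here, and the covered channels must come from the platform's own documentation.

```python
from enum import Enum
from typing import Optional, Set


class Verdict(Enum):
    UNVERIFIABLE = "channel not covered; verify independently"
    SUSPICIOUS = "code missing or wrong; treat as suspicious"
    PLAUSIBLE = "code matches; still navigate manually"


def assess_message(channel: str, shown_code: Optional[str],
                   expected_code: str, covered_channels: Set[str]) -> Verdict:
    """Apply the checks in order: channel scope first, then exact code match."""
    if channel not in covered_channels:
        return Verdict.UNVERIFIABLE
    if shown_code is None or shown_code != expected_code:
        return Verdict.SUSPICIOUS
    return Verdict.PLAUSIBLE
```

Note that no branch returns "safe to click". Even the best outcome, `PLAUSIBLE`, ends with opening the app or typing the known domain yourself.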

That workflow combines message verification with navigation hygiene. The anti-phishing code screens for obvious impersonation. Manual navigation screens for malicious links and domain tricks. Account-level protections such as MFA reduce the damage if credentials leak anyway. No single layer is enough on its own.

There is also a maintenance dimension. Several platforms recommend rotating the code periodically; Crypto.com recommends updating it every 30 days, and allows update or disable actions from the same screen. This is best understood as exposure reduction, not a fundamental requirement. If the code has somehow leaked, rotation limits how long that leaked knowledge remains useful. But frequent rotation can also make the code less memorable, which weakens the user-checking habit. So the practical tradeoff is between freshness and recognizability.

A reasonable choice is to use a code that is memorable to you but not publicly associated with you, and to change it if you suspect mailbox compromise, account compromise, or repeated exposure. Rotation on a rigid schedule is less important than making sure you still actually recognize the code when you see it.

How do exchanges and platforms differ in anti‑phishing code implementation?

| Platform | Channels covered | Sync across products | Setup verification | Rotation advice |
|---|---|---|---|---|
| Crypto.com NFT | Email only | Independent from App/Exchange | Inbox verification on setup | 30‑day update recommended |
| Crypto.com App / Exchange | App and Exchange emails | Codes can sync when connected | Menu → Settings → Security | 30‑day update recommended |
| Bybit | Official emails and SMS | Account-level application | Security verification required | No cadence stated |
| OKX | Official emails | Account-bound | User Center → Security setup | No cadence stated |

There is no broadly adopted formal standard for anti-phishing codes in transactional messages comparable to the IETF standards for DKIM or DMARC. The evidence here is telling. Exchanges and crypto platforms document the feature in their help centers and product settings, but the formal standards and government guidance around trustworthy email focus on domain authentication, policy enforcement, reporting, transport security, and, in some cases, brand indicators like BIMI. They do not define a standard format, placement rule, or security model for personalized anti-phishing codes.

That lack of standardization has consequences. Different platforms cover different channels. Crypto.com NFT scopes the feature to emails from that product and keeps it separate from the App or Exchange. Crypto.com App can synchronize the code with a connected Exchange account. Bybit extends the marker to official emails and SMS. OKX frames the code as an email authenticity check and pairs it with a list of official sender addresses.

These are meaningful design differences, but they all implement the same underlying concept: a personalized marker inserted into official communications after account-bound setup. Because there is no shared protocol standard, users need to read each platform’s own rules carefully. You cannot assume portability of expectations from one exchange to another.
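One way to keep per-platform expectations straight is to record them explicitly instead of relying on memory. The mapping below only restates the channel coverage described in this article; it is a snapshot for illustration, the `code_expected` helper is hypothetical, and each platform's current help pages remain the authority.

```python
from typing import Optional

# Channel coverage as described in this article; verify against current help pages.
CHANNELS_BY_PLATFORM = {
    "Crypto.com NFT": {"email"},
    "Crypto.com App / Exchange": {"email"},
    "Bybit": {"email", "sms"},
    "OKX": {"email"},
}


def code_expected(platform: str, channel: str) -> Optional[bool]:
    """True if the channel is listed for the platform, False if it is not,
    and None when the platform is not in the table at all."""
    channels = CHANNELS_BY_PLATFORM.get(platform)
    if channels is None:
        return None
    return channel in channels
```

A `None` result is the important case: for a platform you have not looked up, you have no basis for expecting the code on any channel.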

Which aspects of anti‑phishing codes are security fundamentals and which are implementation choices?

The fundamental part of the concept is the shared secret marker between user and platform. If the user can choose or recognize a secret string, and the platform can reliably include it in legitimate messages, then fake messages without access to that secret become easier to detect.

Most other details are convention. Whether the code appears at the top or bottom of the email, whether it is called “anti-phishing code” or “security phrase,” whether it covers email alone or email plus SMS, whether updates require passcode entry or inbox verification: all of these are implementation choices. They affect usability and operational safety, but not the core idea.

Also fundamental is that the code is not self-authenticating. It gets much of its real-world value from being embedded in a broader security environment: authenticated email delivery, strong sender-domain controls, careful account-change verification, and user habits that do not rely on a single visual cue. Without that environment, the code is mostly decoration.

Conclusion

An anti-phishing code is a personalized marker that some platforms insert into official messages so users can better distinguish real communications from impersonation attempts. Its power comes from a simple mechanism: the attacker can copy branding more easily than they can guess a secret that only you and the platform know.

That simplicity is both its value and its limit. The code helps against generic phishing, especially in email-driven crypto scams, but it does not replace SPF, DKIM, DMARC, cautious navigation, or strong account security. The right way to remember it is short: an anti-phishing code is a useful authenticity hint, not a proof of safety.

How do you secure your crypto setup before trading?

Secure your account by combining access controls, message verification, and transfer hygiene before you trade. On Cube Exchange, use the account security settings to enable strong multi-factor authentication, verify communication markers (anti-phishing phrase where available), and follow the transfer checks below to reduce phishing and destination risk.

- Enable time-based MFA (TOTP) in your Cube account security settings and store the backup recovery codes offline.

- Set or confirm an anti-phishing phrase in your account (if available) and note which channels (email, SMS, or push) include it so you only trust messages that show the exact phrase.

- Never click links in suspicious messages: open Cube by typing the known domain or using a bookmarked URL and verify that any message includes your phrase before acting.

- Before withdrawing, paste the destination address into a local text editor and compare it against the intended address, checking at least the first and last 6 characters and ideally the whole string; send a small test transfer if you haven’t used the address before.
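For the address check in the last step, comparing only the ends of an address can miss a substituted middle, and a full comparison costs nothing. The sketch below contrasts the two. The helper names are invented, and the example strings in the usage note are made-up placeholders, not real addresses.

```python
def ends_match(pasted: str, intended: str, n: int = 6) -> bool:
    """Quick visual-style check: only the first and last n characters."""
    a, b = pasted.strip(), intended.strip()
    return a[:n] == b[:n] and a[-n:] == b[-n:]


def addresses_match(pasted: str, intended: str) -> bool:
    """Full check: every character must be identical (case-sensitive)."""
    return pasted.strip() == intended.strip()
```

For example, the placeholder strings `"0xAbC1" + "0" * 28 + "dEf456"` and `"0xAbC1" + "1" * 28 + "dEf456"` pass `ends_match` but fail `addresses_match`, which is why the full comparison is the one to rely on.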

Frequently Asked Questions

Anti‑phishing codes and SPF/DKIM/DMARC complement each other: SPF/DKIM/DMARC are protocol-level signals that help mail systems block or flag forged mail, while an anti‑phishing code is a human‑visible, account‑specific marker inserted into message bodies to help recipients spot impersonations. The code is useful when authentication fails to stop a forged message or when users need a simple visible cue.

A correct code stops being a useful signal once the secret is out: if an attacker already controls your inbox or your platform account, or has learned the code by other means, a correct code in a message no longer distinguishes attackers from the real sender.

Channel coverage depends on the vendor: some products limit the code to specific product emails (Crypto.com NFT is explicit about email-only, per‑product scope), Bybit documents use in official emails and SMS, and OKX frames it as an email authenticity check. There is no universal channel list, so you must check each platform’s help pages.

Treat a missing or incorrect code as suspicious: verify the message by independently opening the official app or typing the known domain rather than clicking embedded links, and contact support if unsure. Independent verification is safer than trusting the absence or presence of the code alone.

An attacker can include the correct code if they have discovered it, so anti‑phishing codes mainly raise the cost of large, untargeted phishing but are not a reliable barrier against targeted recon or account/messaging compromise.

When choosing a code, pick something memorable to you but not publicly linked to your identity, and change it if you suspect compromise. Some vendors, such as Crypto.com, advise periodic updates (e.g., every 30 days) as an exposure‑reduction practice, though rotation is advisory and may reduce memorability.

The code is not proof of safety: it is a lightweight, user‑visible authenticity hint that proves only that the message contains an account‑specific marker. It does not cryptographically authenticate links, guarantee the sender’s systems are uncompromised, or make embedded links safe.

There is no standardized format or universal policy for these codes; implementations vary in channel coverage, placement, and setup flows across platforms, so you cannot assume one provider’s behavior applies to another.
