What Is Post-Quantum Cryptography?

Learn what post-quantum cryptography is, why quantum computers threaten today’s public-key systems, and how new PQC standards work.

Introduction

Post-quantum cryptography is cryptography designed to remain secure even if attackers eventually gain access to large-scale quantum computers. That sounds like a distant concern, but the stakes are immediate: much of today’s digital security depends on public-key systems such as RSA and elliptic-curve cryptography, and those systems are exactly the ones a sufficiently capable quantum computer could undermine.

The puzzle is that cryptography already works remarkably well on ordinary computers. If that is true, why is anyone replacing it? The answer is not that all cryptography is suddenly obsolete. It is that modern cryptography rests on specific hard problems, and quantum computers change which problems look hard. Post-quantum cryptography exists because security is never “math in general”; it is always security relative to assumptions about what an attacker can compute.

That distinction is the whole topic. If the attacker’s machine changes, the safe assumptions change. Post-quantum cryptography keeps the familiar goals of encryption, key exchange, and digital signatures, but rebuilds them on mathematical problems that are currently believed to resist both classical and quantum attacks.

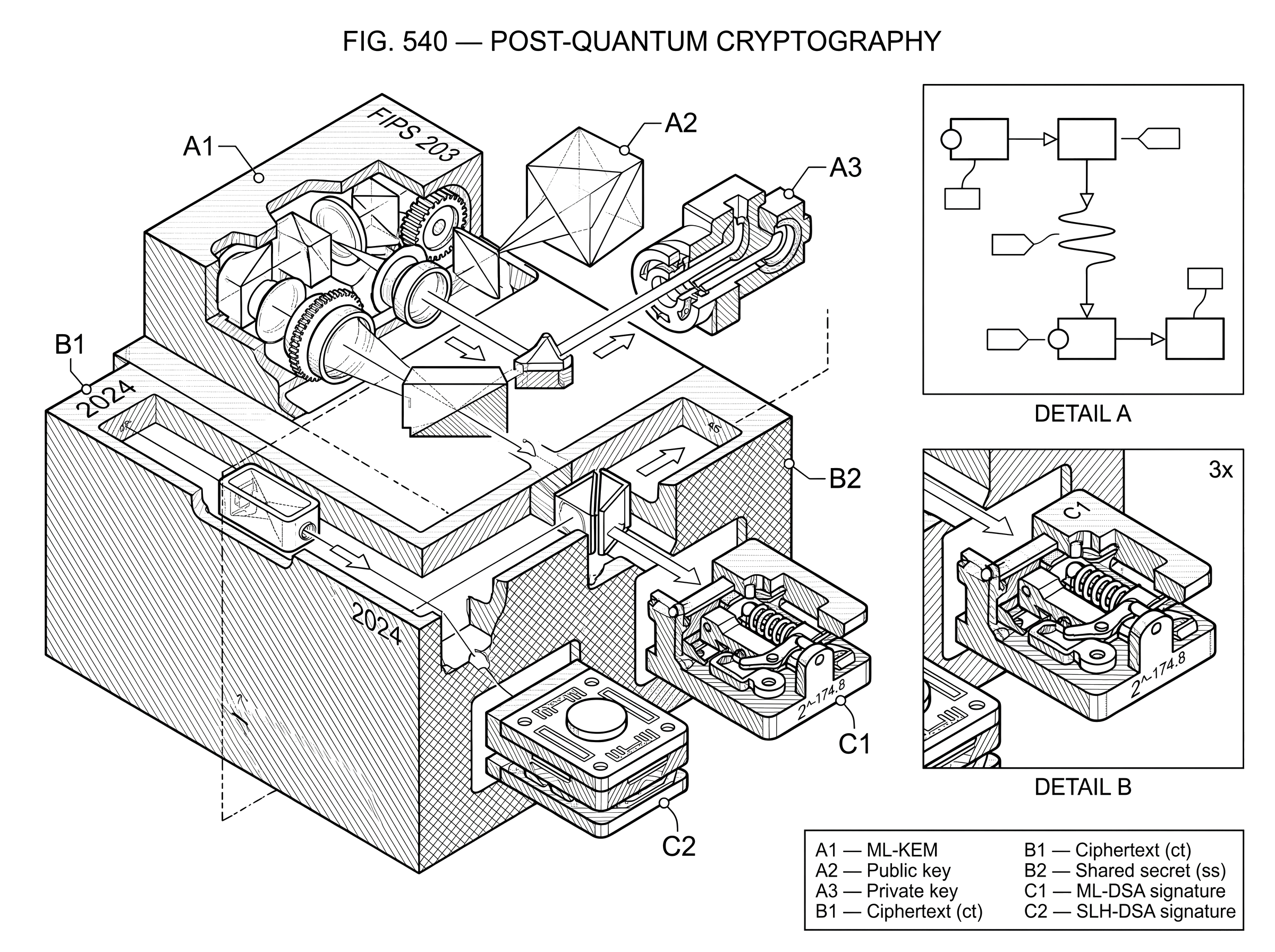

In 2024, NIST finalized its first three principal PQC standards: FIPS 203 for ML-KEM key establishment, FIPS 204 for ML-DSA signatures, and FIPS 205 for SLH-DSA signatures. NIST’s guidance is not to wait for some dramatic future moment; these standards can and should be used now, partly because migration takes years and partly because some attackers can already collect encrypted data today in hopes of decrypting it later.

How do quantum computers threaten public-key cryptography?

Most readers have heard a vague version of the claim that quantum computers will “break encryption.” That phrase is too broad to be useful. The more precise statement is narrower and more important: a cryptanalytically relevant quantum computer would threaten much of today’s public-key cryptography, especially schemes based on integer factorization and discrete logarithms, such as RSA, Diffie–Hellman, ECDH, ECDSA, and Schnorr-style systems over elliptic curves.

Here is the mechanism. Classical cryptosystems are built around one-way structure: it is easy to compute the public object from the private one, but believed hard to reverse. In RSA, the structure comes from the fact that multiplying two large primes is easy while factoring their product is believed hard. In elliptic-curve systems, it comes from the fact that repeated group operations are easy to compute while recovering the number of repetitions, the discrete logarithm, is believed hard. A large enough quantum computer changes this landscape because Shor’s algorithm solves both underlying problems in polynomial time, dramatically faster than any known classical method.

That does not mean every cryptographic primitive collapses. Symmetric ciphers such as AES, and hash functions, are affected much less directly. Quantum attacks like Grover’s algorithm can reduce effective brute-force security, but by roughly a square-root factor (about half the key length in bits) rather than by the kind of structural collapse seen with Shor’s algorithm against factoring and discrete logs. So the immediate migration pressure falls mostly on public-key cryptography.
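The square-root effect can be made concrete with a little arithmetic. The sketch below assumes an idealized Grover search with no overhead for error correction or circuit depth, so it overstates what a real quantum attacker could do; the function name is illustrative only.

```python
def grover_effective_bits(key_bits: int) -> int:
    """Approximate security in bits against an idealized Grover search."""
    # Searching 2**k keys takes on the order of 2**(k/2) quantum evaluations,
    # so the exponent is halved.
    return key_bits // 2

for name, bits in [("AES-128", 128), ("AES-192", 192), ("AES-256", 256)]:
    print(f"{name}: ~2^{bits} classical guesses, "
          f"~2^{grover_effective_bits(bits)} Grover iterations")
```

Even under this generous model, a 256-bit key keeps a 128-bit margin, which is why the usual response for symmetric cryptography is larger keys rather than new algorithms.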

This is why PQC is mostly about replacing the asymmetric pieces of real systems. When your browser negotiates a session key, when a server proves its identity, when a wallet signs a transaction, when a package manager verifies a software update, or when a blockchain validates account ownership, the vulnerable layer is often the public-key mechanism underneath.

What security goals does post-quantum cryptography preserve?

It helps to separate the job of a cryptosystem from the math used to do it. The jobs stay the same.

A system still needs a way for two parties to establish a shared secret over an open network. A system still needs signatures so that a verifier can check who authorized a message and whether the message was altered. A system still needs these mechanisms to be efficient enough for servers, browsers, phones, hardware devices, and long-lived infrastructure.

Post-quantum cryptography keeps these same jobs and changes the assumptions underneath them. Instead of relying on factoring or elliptic-curve discrete logs, it uses problems from other mathematical families that are currently believed to remain hard even for quantum attackers. NIST’s first standards reflect two such families: structured lattices and hash-based constructions.

That is why the phrase “quantum-resistant” is more useful than “future cryptography.” PQC is not a new communication model and not a quantum network. It is ordinary cryptography, implemented on ordinary computers, but with different hard problems.

This also clarifies a common confusion: post-quantum cryptography is not the same thing as quantum cryptography. PQC is classical software and hardware using different mathematics. Quantum cryptography, by contrast, relies on quantum-physical effects in the communication process itself. The names sound similar, but the mechanism is entirely different.

How do designers pick problems that resist quantum attacks?

The hardest part of cryptography is not inventing a complicated formula. It is finding a computational problem with the right asymmetry: easy for honest parties to use, but hard for attackers to invert or forge against.

For post-quantum cryptography, the design criterion becomes stricter. The underlying problem must appear hard not only for ordinary algorithms but also for known quantum ones. This is why NIST describes PQC algorithms as based on mathematical problems believed to be difficult for both conventional and quantum computers.

That phrase “believed to be” matters. Cryptography almost never gives unconditional guarantees. Security comes from reductions, models, and accumulated cryptanalysis. A standard does not prove an algorithm unbreakable; it says the algorithm has survived significant public scrutiny, fits known theory, and is currently the best available design for the intended use.

The NIST process made this visible. Starting in the mid-2010s, NIST ran an open, international, multi-round evaluation with dozens of submissions from many countries. Candidates were attacked, benchmarked, debated, and in some cases eliminated. That winnowing is not an embarrassing side story. It is the process by which cryptography becomes trustworthy.

It also explains why there are multiple standards rather than a single winner. In cryptography, diversity can be a form of risk management. If an entire family later weakens, having an alternative built on a different structure matters.

How does a post-quantum key-exchange (KEM) work in practice?

| Parameter set | Security strength | Performance | Decapsulation failure rate | Typical use |
|---|---|---|---|---|
| ML-KEM-512 | NIST category 1 (lowest of the three) | Fastest | 2^-138.8 | Latency-sensitive clients |
| ML-KEM-768 | NIST category 3 | Balanced | 2^-164.8 | General-purpose servers |
| ML-KEM-1024 | NIST category 5 (highest) | Slowest | 2^-174.8 | High-assurance systems |

The easiest way to make PQC concrete is to look at the problem of establishing a shared secret.

In older public-key systems, this job was often done with Diffie–Hellman or elliptic-curve Diffie–Hellman. In the post-quantum setting, NIST’s first standardized replacement for this role is ML-KEM, specified in FIPS 203. A KEM, or key-encapsulation mechanism, is a three-part primitive: KeyGen, Encaps, and Decaps.

The idea is simple. Bob runs KeyGen to create a public key and a private decapsulation key. He publishes the public key. Alice uses Encaps on Bob’s public key, which produces two outputs: a ciphertext ct and a shared secret ss. She sends ct to Bob. Bob applies Decaps with his private key to ct, recovering the same shared secret ss. They can then feed that shared secret into the rest of the protocol to derive symmetric session keys.

What matters is the asymmetry. Anyone can see Bob’s public key and Alice’s ciphertext, but without Bob’s private key an attacker should not be able to recover the shared secret. In ML-KEM, the security is tied to the presumed hardness of the Module Learning With Errors problem, usually abbreviated MLWE. NIST states that ML-KEM’s security is related to the difficulty of MLWE and is presently believed secure even against adversaries with a quantum computer.

A good intuition for lattice-style schemes is that they hide structure inside noisy high-dimensional linear relations. The legitimate user knows how the randomness and trapdoor fit together, so recovery is possible. The attacker sees equations polluted by carefully chosen noise, where the problem of separating signal from noise appears computationally hard. This analogy explains why “errors” are central; it fails if taken too literally, because the actual schemes depend on delicate algebraic structure, not just generic messy linear algebra.
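The KeyGen, Encaps, and Decaps flow and the "noisy linear relations" intuition can both be seen in a toy. The sketch below is a Regev-style learning-with-errors construction written only for illustration. It is not ML-KEM: it has no module structure, no chosen-ciphertext protection, and its dimensions are far too small to be secure. Every name and parameter other than the modulus 3329 is an assumption made for this example.

```python
import hashlib
import secrets

Q = 3329    # same modulus as ML-KEM; everything else here is a toy choice
N = 16      # secret dimension
M = 32      # number of noisy equations in the public key
BITS = 32   # length of the random message that gets encapsulated

def small():
    """Sample a small error term from {-1, 0, 1}."""
    return secrets.randbelow(3) - 1

def keygen():
    s = [secrets.randbelow(Q) for _ in range(N)]
    A = [[secrets.randbelow(Q) for _ in range(N)] for _ in range(M)]
    # b = A*s + e: exact linear algebra plus a little noise per equation.
    b = [(sum(a * x for a, x in zip(row, s)) + small()) % Q for row in A]
    return (A, b), s

def encrypt_bit(pk, bit):
    A, b = pk
    r = [secrets.randbelow(2) for _ in range(M)]   # random subset of rows
    u = [sum(r[i] * A[i][j] for i in range(M)) % Q for j in range(N)]
    v = (sum(r[i] * b[i] for i in range(M)) + bit * (Q // 2)) % Q
    return u, v

def decrypt_bit(s, ct):
    u, v = ct
    d = (v - sum(x * y for x, y in zip(u, s))) % Q  # accumulated noise + bit*(Q//2)
    return 1 if Q // 4 < d < 3 * Q // 4 else 0

def encaps(pk):
    m = [secrets.randbelow(2) for _ in range(BITS)]
    ct = [encrypt_bit(pk, bit) for bit in m]
    return ct, hashlib.sha256(bytes(m)).digest()

def decaps(s, ct):
    m = [decrypt_bit(s, c) for c in ct]
    return hashlib.sha256(bytes(m)).digest()

pk, sk = keygen()                 # Bob
ct, ss_alice = encaps(pk)         # Alice
ss_bob = decaps(sk, ct)           # Bob
print(ss_alice == ss_bob)
```

The holder of the secret can subtract the linear part and is left with at most 32 units of noise, far below the Q/4 decision threshold, so decryption always succeeds in this toy. An eavesdropper sees only the noisy equations.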

A worked example helps. Imagine a browser connecting to a server over TLS 1.3 using a PQC-capable handshake. In the ephemeral key-exchange pattern that TLS 1.3 uses, the browser generates a fresh ML-KEM key pair and sends the public key in its opening handshake message. The server encapsulates to that key, producing a ciphertext and a shared secret, then returns the ciphertext in its reply. The browser decapsulates and gets the same shared secret. From there, both sides derive symmetric traffic keys exactly as before. The visible change is mostly in the public-key step. The rest of the channel still relies on standard symmetric cryptography for bulk encryption because symmetric ciphers remain efficient and comparatively less disrupted by quantum attacks.

This is one reason migration is operationally plausible. You are not rebuilding the entire security stack from scratch. You are replacing the asymmetric component where the quantum threat is most acute.

How do post-quantum signature schemes differ from classical signatures?

| Scheme | Assumption | Signature size | Performance | Best for |
|---|---|---|---|---|
| ML-DSA | Lattice (MLWE/MSIS) | Moderate size | Fast verification | General-purpose signatures |
| SLH-DSA | Hash preimage resistance | Large signatures | Higher verification cost | Conservative backup |
| Classical EC | Elliptic-curve discrete log | Small signatures | Very fast verification | Existing deployments |

Digital signatures solve a different problem. They do not establish a shared secret; they prove authorization and integrity.

NIST standardized two signature families in 2024. ML-DSA in FIPS 204 is a module-lattice-based signature scheme derived from CRYSTALS-Dilithium. SLH-DSA in FIPS 205 is a stateless hash-based signature scheme based on SPHINCS+.

The reason to standardize both becomes clearer once you ask what could go wrong. Lattice-based signatures are attractive because they are practical and relatively efficient. Hash-based signatures are attractive because they rest on a more conservative assumption: the hardness of finding hash preimages and related hash-function security properties. In other words, ML-DSA is often the practical workhorse, while SLH-DSA is a structurally different backup.

ML-DSA is “Schnorr-like” in flavor, but that phrase should be handled carefully. It does not rely on the same hardness assumption as Schnorr signatures over elliptic curves. Rather, it uses a Fiat–Shamir-with-aborts style construction over lattice problems including MLWE-related assumptions. The rough mental model is that the signer produces a commitment, derives a challenge from the message and commitment using hashing, and then responds in a way that proves knowledge of the secret while controlling information leakage. The “with aborts” part exists because some responses would reveal too much structure and must be rejected and retried.
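The commit, challenge, respond, and abort loop can be sketched with plain integer vectors. This is a toy in the spirit of Fiat–Shamir with aborts, not ML-DSA: it uses a scalar challenge, unstructured matrices, no compression, and parameters chosen only so the arithmetic is easy to follow. All names and constants are assumptions for illustration.

```python
import hashlib
import secrets

Q = 2**61 - 1       # toy prime modulus, not an ML-DSA parameter
N, M = 4, 4         # secret length and number of public equations
BETA = 2**32        # bound on |c * s_i|: c < 2**32 and s_i is in {-1, 0, 1}
B = 4 * BETA        # range of the one-time masking vector y

def matvec(A, v):
    return [sum(a * x for a, x in zip(row, v)) % Q for row in A]

def challenge(w, msg):
    data = b"".join(x.to_bytes(8, "big") for x in w) + msg
    return int.from_bytes(hashlib.sha256(data).digest()[:4], "big")

def keygen():
    A = [[secrets.randbelow(Q) for _ in range(N)] for _ in range(M)]
    s = [secrets.randbelow(3) - 1 for _ in range(N)]   # small secret
    return (A, matvec(A, s)), s

def sign(pk, s, msg):
    A, _ = pk
    attempts = 0
    while True:
        attempts += 1
        y = [secrets.randbelow(2 * B + 1) - B for _ in range(N)]  # commitment mask
        c = challenge(matvec(A, y), msg)                          # hash-derived challenge
        z = [yi + c * si for yi, si in zip(y, s)]                 # response
        # Abort and retry if z falls where its value would depend on s.
        if max(abs(zi) for zi in z) <= B - BETA:
            return (c, z), attempts

def verify(pk, msg, sig):
    A, t = pk
    c, z = sig
    if max(abs(zi) for zi in z) > B - BETA:
        return False
    # A*z - c*t equals A*y, so the verifier can recompute the commitment.
    w = [(az - c * ti) % Q for az, ti in zip(matvec(A, z), t)]
    return challenge(w, msg) == c

pk, sk = keygen()
sig, attempts = sign(pk, sk, b"transfer 10 units")
print(verify(pk, b"transfer 10 units", sig), "after", attempts, "attempt(s)")
```

With these toy bounds each attempt is accepted roughly a third of the time, so a few retries are normal. Accepted responses are uniform over a range that does not depend on the secret, which is the leakage control the "with aborts" step provides.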

SLH-DSA works very differently. It builds signatures from hash-based components such as FORS, WOTS+, and Merkle-tree authentication inside a larger hypertree structure. The mechanism is less elegant from a size perspective but more conservative in its assumptions. If you trust cryptographic hash functions, you get a path to signatures that avoids the algebraic assumptions used by lattice systems.
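Of the components named above, Merkle-tree authentication is the easiest to show in isolation. The sketch below builds a small tree with SHA-256 and checks one leaf against the root using its sibling path. It illustrates the general technique only; it does not follow the hashing, addressing, or hypertree layout of FIPS 205, and it assumes the number of leaves is a power of two.

```python
import hashlib

def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def build_tree(leaves):
    """Return every level of the tree: leaf hashes first, root level last."""
    level = [h(b"\x00" + leaf) for leaf in leaves]
    levels = [level]
    while len(level) > 1:
        level = [h(b"\x01" + level[i] + level[i + 1])
                 for i in range(0, len(level), 2)]
        levels.append(level)
    return levels

def auth_path(levels, index):
    """Collect the sibling hash at each level on the way up to the root."""
    path = []
    for level in levels[:-1]:
        path.append(level[index ^ 1])
        index //= 2
    return path

def root_from_path(leaf, index, path):
    """Recompute the root from one leaf and its authentication path."""
    node = h(b"\x00" + leaf)
    for sibling in path:
        if index % 2 == 0:
            node = h(b"\x01" + node + sibling)
        else:
            node = h(b"\x01" + sibling + node)
        index //= 2
    return node

# In a hash-based signature scheme the leaves would be one-time public keys;
# here they are placeholder byte strings.
leaves = [f"one-time-key-{i}".encode() for i in range(8)]
levels = build_tree(leaves)
root = levels[-1][0]
path = auth_path(levels, 5)
print(root_from_path(leaves[5], 5, path) == root)
```

The verifier needs only the root, the leaf, and three sibling hashes for an eight-leaf tree, which is why one short public value can vouch for many underlying keys.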

This difference has practical consequences. In many settings, signature size, verification cost, implementation complexity, and confidence in the hardness assumption trade off against each other. PQC is not a single “better” replacement. It is an engineering choice under uncertainty.

Why should organizations begin migrating to post-quantum cryptography now?

The main reason is a simple timing mismatch.

If a quantum computer capable of breaking today’s public-key cryptography appeared overnight, most organizations could not replace every vulnerable protocol, certificate, firmware signing process, hardware module, embedded device, and software dependency quickly enough. Migration is slow because cryptography is buried deep inside systems, supply chains, standards, and procurement cycles.

The second reason is the harvest now, decrypt later threat. Some information remains sensitive for many years: government data, trade secrets, health information, industrial designs, identity archives, and long-lived credentials. An attacker does not need a quantum computer today to benefit later. They can collect encrypted traffic now, store it, and wait.

This is why NIST says the standards can and should be used now, and why CISA, NSA, and NIST have urged organizations to build quantum-readiness roadmaps, perform cryptographic inventories, identify data that must stay secret for a long time, and engage vendors. The uncertainty in quantum timelines does not remove the need to prepare; it increases the value of preparation because the transition itself is long.

NIST has also set a policy direction: quantum-vulnerable algorithms are expected to be deprecated and ultimately removed from NIST standards by 2035, with high-risk systems moving earlier. That does not mean every system flips on a single deadline. It means the migration window is already open.

What implementation risks matter for post-quantum deployments?

| Failure mode | What fails | Example | Mitigation |
|---|---|---|---|
| Weak randomness | Reduced claimed security | Insufficient RNG entropy | Use approved RBGs |
| Side channels | Secret leakage | KyberSlash timing leak | Constant-time implementations |
| Component misuse | Broken guarantees | Using K-PKE standalone | Follow specified transforms |
| Input validation | Interoperability or leakage | Seed/expandedKey mismatch | Validate and reject malformed inputs |

A common misunderstanding is that once an algorithm is standardized, the security problem is basically solved. In practice, implementation is where many real failures happen.

NIST’s standards are careful about this. They repeatedly note that conformance to the standard does not guarantee a secure implementation. Randomness must come from approved generators with sufficient security strength. Sensitive intermediate values must be protected and destroyed. Inputs must be validated correctly. Building blocks intended only as internal components must not be exposed or reused in unsafe ways.
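As one concrete instance of input validation, the sketch below checks that a received ML-KEM encapsulation key has the expected length and that every packed 12-bit coefficient is below the modulus. It is an illustration of the kind of check FIPS 203 describes, not a substitute for the standard's text; the function name is hypothetical, and the byte layout assumed here is two 12-bit little-endian values per three bytes.

```python
Q = 3329
# Encapsulation-key lengths in bytes: 384*k + 32 for k = 2, 3, 4.
EK_BYTES = {"ML-KEM-512": 800, "ML-KEM-768": 1184, "ML-KEM-1024": 1568}

def encapsulation_key_is_well_formed(param_set: str, ek: bytes) -> bool:
    expected = EK_BYTES.get(param_set)
    if expected is None or len(ek) != expected:
        return False                      # unknown parameter set or wrong length
    body = ek[:-32]                       # the last 32 bytes are a seed, not coefficients
    for i in range(0, len(body), 3):
        b0, b1, b2 = body[i], body[i + 1], body[i + 2]
        c0 = b0 | ((b1 & 0x0F) << 8)      # first 12-bit coefficient
        c1 = (b1 >> 4) | (b2 << 4)        # second 12-bit coefficient
        if c0 >= Q or c1 >= Q:
            return False                  # not a canonical encoding modulo Q
    return True

print(encapsulation_key_is_well_formed("ML-KEM-768", bytes(1184)))
print(encapsulation_key_is_well_formed("ML-KEM-768", b"\xff" * 1184))
```

Rejecting malformed keys before encapsulation keeps non-canonical encodings from reaching the arithmetic that assumes reduced coefficients.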

ML-KEM illustrates the point well. FIPS 203 explicitly warns that the internal K-PKE component is not meant to be used as a stand-alone public-key encryption scheme. That may sound like a technical footnote, but it expresses a deep rule of cryptographic engineering: a secure system is often more than the apparent core primitive, and stripping away the surrounding transform can remove exactly the security property you needed.

Side channels create another layer of risk. The KyberSlash episode showed how an implementation of Kyber-derived code could leak information through variable-time integer division. The underlying math was not broken. The danger came from low-level timing behavior in software. That is not unusual. Post-quantum schemes, like classical ones, live or die by constant-time coding, careful memory handling, and sound integration into protocols.
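The arithmetic behind this class of fix can be checked directly. The sketch below compares a message-bit rounding step written with integer division against a multiply-and-shift form that avoids the division instruction. The constants are assumptions derived from the Kyber modulus 3329 (80635 is floor(2^28 / 3329)). Python integers are not constant-time, so this demonstrates only that the two forms agree, not that either is safe to deploy.

```python
Q = 3329

def bit_with_division(t: int) -> int:
    # Uses a division by Q; on some platforms division latency depends on
    # the operands, which is what makes secret-dependent division risky.
    return ((2 * t + Q // 2) // Q) & 1

def bit_without_division(t: int) -> int:
    # Multiply by a precomputed reciprocal and shift instead of dividing.
    return (((2 * t + 1665) * 80635) >> 28) & 1

# Exhaustively confirm both forms agree for every coefficient value.
mismatches = [t for t in range(Q) if bit_with_division(t) != bit_without_division(t)]
print("mismatches:", len(mismatches))
```

Because the input range is only 3,329 values, the equivalence can be verified exhaustively rather than argued, which is a useful habit whenever a division is replaced by a reciprocal trick.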

So when people say PQC is ready, the careful version is this: standardized algorithms are available, but secure deployment still requires ordinary cryptographic discipline. Quantum resistance does not excuse weak engineering.

Why avoid relying on a single post-quantum algorithm family?

PQC is mature enough to standardize, but not so mature that uncertainty is gone.

The clearest evidence is that some seemingly promising families have fallen sharply under cryptanalysis. SIKE, an isogeny-based candidate that advanced deep into the NIST process, was practically broken in 2022 by a key-recovery attack on SIDH/SIKE. Rainbow, a multivariate signature finalist, was also broken far more efficiently than expected. These failures are not proof that PQC as a whole is unsound. They are proof that public cryptanalysis is doing its job.

The right lesson is not panic; it is humility. Security claims in cryptography are always conditional on assumptions and current knowledge. A post-quantum algorithm is not secure because it has a futuristic name. It is secure only insofar as the underlying hardness assumption, proof strategy, and implementation all hold up under attack.

This is also why NIST’s work did not stop with the first three standards. Falcon, a second lattice-based signature scheme selected for standardization, and HQC, a code-based key-encapsulation mechanism selected in 2025, are being standardized as backups or alternatives. Standardization is not just a race to pick winners. It is a process for building enough resilience into the ecosystem that one break does not collapse the whole migration effort.

How will post-quantum cryptography affect blockchains, wallets, and internet infrastructure?

The implications are broad because public-key cryptography sits almost everywhere.

In web security, TLS handshakes need post-quantum key establishment, and transitional hybrid designs combine a classical mechanism with a PQ one so the session remains secure if at least one component holds. The IETF’s TLS hybrid design uses this exact idea: concatenate the contributions from two key exchange mechanisms and feed the result into the normal TLS 1.3 key schedule. This is a migration strategy, not a permanent theorem of how all protocols must work, but it is attractive because it reduces the risk of switching all trust to a newer primitive at once.
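The concatenate-then-derive idea can be sketched with the standard library alone. The code below implements HKDF (RFC 5869) with HMAC-SHA-256 and feeds it the concatenation of two shared secrets. It is a simplification: the real TLS 1.3 key schedule has more stages and labels, and the salt, label, and function names here are assumptions for illustration.

```python
import hashlib
import hmac

def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    return hmac.new(salt, ikm, hashlib.sha256).digest()

def hkdf_expand(prk: bytes, info: bytes, length: int) -> bytes:
    out, block, counter = b"", b"", 1
    while len(out) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        out += block
        counter += 1
    return out[:length]

def derive_hybrid_key(classical_ss: bytes, pq_ss: bytes,
                      label: bytes = b"toy hybrid example") -> bytes:
    # Both inputs are fixed length in this sketch, so plain concatenation
    # is unambiguous; the combined value is the only keying material.
    combined = classical_ss + pq_ss
    prk = hkdf_extract(b"\x00" * 32, combined)
    return hkdf_expand(prk, label, 32)

# Placeholder secrets standing in for an ECDH output and a KEM output.
key = derive_hybrid_key(b"\x11" * 32, b"\x22" * 32)
print(key.hex())
```

An attacker who recovers only one of the two inputs still faces an unknown 32-byte value inside the extract step, which is the sense in which the session holds if at least one component holds.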

In PKI, certificates and software stacks need new identifiers, formats, and encoding rules for PQ keys. That is why standards work extends beyond raw algorithms into certificate formats, protocol negotiation, and validation tooling.

In blockchains and wallets, the problem is especially visible because ownership is often expressed directly through signatures. A chain that relies on ECDSA or Schnorr-style signatures over elliptic curves inherits their quantum vulnerability at the signature layer. The exact migration path depends on the architecture: some systems could add new signature types, some could use address formats that commit to upgraded keys, some could rely on script or account abstraction, and some may face awkward compatibility tradeoffs. But the core issue is the same as on the web: if authorization ultimately rests on discrete-log assumptions, a sufficiently capable quantum adversary changes the threat model.

Hash-based signatures are often discussed in blockchain contexts because blockchains already rely heavily on hash functions and Merkle structures. That does not automatically make them the best answer for every chain. Signature size and throughput costs matter. Still, the conceptual connection is real: post-quantum migration in decentralized systems often pushes attention back toward the trust one places in hash-function security.

What are the main tradeoffs when adopting post-quantum cryptography?

The simplest durable summary is this: post-quantum cryptography buys a different security assumption, not free security.

You gain resistance against the known quantum attacks that threaten RSA and elliptic-curve systems. In exchange, you usually accept larger keys, larger ciphertexts or signatures, different performance profiles, and younger assumptions with a shorter real-world track record than classical ECC. You also inherit the usual implementation hazards: bad randomness, side channels, misuse of APIs, and protocol integration mistakes.

For example, ML-KEM has standardized parameter sets with increasing security strength and decreasing performance. That is a normal engineering tradeoff. Likewise, signature schemes differ in size and speed. There is no universal best choice outside a use case.
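To put rough numbers on the size tradeoff, the sketch below tabulates byte sizes for a few parameter sets next to common elliptic-curve baselines and totals the key-exchange bytes on the wire. The figures are the sizes as I understand them from FIPS 203, FIPS 204, and FIPS 205 and should be checked against the standards before being relied on; the helper name is illustrative.

```python
# (public key or key share, ciphertext or reply share), in bytes
KEX_BYTES = {
    "X25519": (32, 32),
    "ML-KEM-512": (800, 768),
    "ML-KEM-768": (1184, 1088),
    "ML-KEM-1024": (1568, 1568),
}

# (public key, signature), in bytes
SIG_BYTES = {
    "Ed25519": (32, 64),
    "ML-DSA-44": (1312, 2420),
    "ML-DSA-65": (1952, 3309),
    "ML-DSA-87": (2592, 4627),
    "SLH-DSA-SHA2-128s": (32, 7856),
}

def handshake_bytes(name: str) -> int:
    """Bytes exchanged for key establishment: one share out, one reply back."""
    return sum(KEX_BYTES[name])

for name in KEX_BYTES:
    print(f"{name:12s} key exchange: {handshake_bytes(name):5d} bytes")
for name, (pk, sig) in SIG_BYTES.items():
    print(f"{name:18s} public key {pk:5d}  signature {sig:5d}")
```

Kilobyte-scale handshake and signature sizes are manageable for most servers but can matter for constrained devices, certificate chains, and protocols with tight message limits.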

This is why serious migration work starts with inventory and threat modeling rather than algorithm fandom. You first ask where the vulnerable public-key mechanisms are, how long the protected data must stay secret, what devices and protocols are involved, and what interoperability constraints exist. Only then do parameter choices make sense.
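A first-pass inventory triage can be as simple as filtering and sorting. The sketch below flags entries that use the quantum-vulnerable families named in this article and orders them by how long the protected data must stay secret. The inventory records, field names, and system names are entirely hypothetical.

```python
# Algorithm families the article identifies as quantum-vulnerable.
QUANTUM_VULNERABLE = {"RSA", "DH", "ECDH", "ECDSA", "Schnorr"}

def triage(inventory):
    """Return quantum-vulnerable entries, longest secrecy lifetime first."""
    exposed = [e for e in inventory if e["algorithm"] in QUANTUM_VULNERABLE]
    return sorted(exposed, key=lambda e: e["secrecy_years"], reverse=True)

# Hypothetical inventory records for illustration only.
inventory = [
    {"system": "vpn-gateway", "algorithm": "ECDH", "secrecy_years": 10},
    {"system": "firmware-signing", "algorithm": "RSA", "secrecy_years": 0},
    {"system": "disk-encryption", "algorithm": "AES-256", "secrecy_years": 25},
    {"system": "records-archive-tls", "algorithm": "RSA", "secrecy_years": 30},
]

for entry in triage(inventory):
    print(entry["system"], entry["algorithm"], entry["secrecy_years"])
```

Secrecy lifetime is a useful ordering for key establishment because of harvest-now-decrypt-later. Signing systems need a separate look, since their risk is future forgery rather than retroactive decryption.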

Conclusion

Post-quantum cryptography is the effort to keep modern digital security working when the attacker’s computer changes. The important idea is not “quantum is coming” in the abstract; it is that cryptographic security depends on concrete hardness assumptions, and some of today’s most widely used assumptions would fail against a sufficiently capable quantum machine.

PQC responds by keeping the familiar jobs of cryptography (key establishment and digital signatures) while rebuilding them on assumptions currently believed to survive both classical and quantum attack. The standards are now real, the migration has begun, and the enduring lesson is simple: cryptography is only as future-proof as the problems it asks attackers to solve.

Frequently Asked Questions

Start now. NIST says the new PQC standards "can and should be used now" because migration takes years and some attackers may harvest encrypted data today. NIST also expects to deprecate and ultimately remove quantum-vulnerable algorithms from its standards by about 2035, so organizations should inventory their cryptography and build a migration roadmap immediately.

No. Quantum computers do not collapse all cryptography. Symmetric ciphers and hash functions are affected much less directly: quantum search (Grover) gives roughly a square-root speedup against brute force, while Shor’s algorithm specifically threatens public-key systems based on factoring and discrete logarithms. The primary migration pressure is therefore on asymmetric cryptography.

Because different algorithm families rest on different mathematical assumptions and future cryptanalysis can invalidate entire families, NIST standardized multiple algorithms (ML-KEM, ML-DSA, SLH-DSA) to preserve diversity and risk management; lattice schemes are practical but rely on algebraic assumptions, while hash-based schemes are more conservative but typically larger.

No. PQC schemes are not proven unbreakable. The underlying problems are only "believed to be" hard for quantum attackers, a judgment that rests on reductions, public cryptanalysis, and accumulated scrutiny rather than unconditional proofs, so continued analysis and conservatism are necessary.

Standards are necessary but not sufficient: real-world risks include bad randomness, API misuse, exposed internal components, and side channels; the KyberSlash/crystals-go episode is an example where variable-time integer division leaked secrets despite the math remaining sound, and NIST documents explicitly warn that conformance does not ensure a secure implementation.

Hybrid key-exchange designs are the recommended transitional approach for TLS and similar protocols: combine a classical mechanism with a PQ KEM (e.g., concatenate contributions and feed into the TLS key schedule) so the session is secure if at least one primitive holds, though protocol updates and practical limits (very large public keys or ClientHello size) remain operational challenges.

Expect tradeoffs: post-quantum replacements typically change key sizes, ciphertext/signature sizes, and performance profiles and also rely on younger assumptions; for example, ML-DSA (lattice-based) is comparatively efficient while SLH-DSA (hash-based) is based on more conservative hash assumptions but tends to produce larger signatures, so choice depends on your threat model, device constraints, and interoperability needs.

Blockchains and wallets are exposed because account ownership is often asserted directly by signatures. Hash-based signatures are attractive in that context because blockchains already use hash and Merkle structures and hash assumptions are conservative, but larger signature sizes and throughput impacts mean they are not an automatic best fit for every chain. Migration choices depend on each protocol’s architecture and performance constraints.
