What Is Algorithmic Trading?

Learn what algorithmic trading is, how it works, why markets use it, and how automation improves execution while creating new risks.

Introduction

Algorithmic trading is the use of computer algorithms to determine order parameters, submit orders, and often manage those orders after submission. That sounds straightforward, but it hides the real puzzle: why did markets move so much of the trading process into software in the first place, and why does that same shift make markets both more efficient and more fragile?

The answer starts with a basic constraint. Trading is not just deciding what to buy or sell. It is also deciding how to express that decision in a market full of other participants, changing prices, limited liquidity, and strict infrastructure rules. A portfolio manager may know they want to sell a large position, but if they expose the whole order at once, they can move the market against themselves. A market maker may want to quote both sides continuously, but doing that across many symbols and venues is impossible by hand. An arbitrageur may see a temporary mispricing, but the opportunity may disappear in milliseconds.

So algorithmic trading exists because markets are not merely places for opinions; they are matching engines under constraints. Software is good at applying rules consistently under those constraints. It can slice a large order into smaller pieces, update quotes when the order book changes, enforce limits before an order is sent, and react to information faster than a human workflow can. In both U.S. and EU regulatory descriptions, the defining feature is that a computer algorithm automatically determines meaningful order parameters rather than merely routing or post-processing an already decided trade.

That definition is broad on purpose. It includes a broker’s execution algorithm that tries to minimize market impact, a market-making system that continuously updates bid and ask quotes, and higher-frequency strategies that react to very short-lived signals. What unifies them is not a particular speed or style. It is the transfer of decision logic from a person making each order manually to a system executing pre-specified rules.

How do you turn trading intent into executable algorithmic rules?

The simplest way to understand algorithmic trading is to separate investment intent from execution mechanism. A human or institution may decide, “I want exposure to this asset,” or “I need to reduce this position.” That is the economic goal. Algorithmic trading begins when that goal is translated into rules a machine can apply repeatedly: how large each order should be, when to send it, at what price, on which venue, and what to do if conditions change.

This distinction matters because many trading mistakes come from treating “buy 1 million shares” as if it were a single decision. In practice, it is a sequence problem. Sending everything immediately may complete the trade quickly, but it can push the price away from you. Waiting too long may reduce impact, but it exposes you to the risk that the market moves before you finish. The algorithm’s job is to manage that tradeoff.

That is why many of the most important algorithms in markets are not speculative at all. They are execution algorithms. Regulatory and market-structure documents often describe them as automated execution routines that target profiles defined by time, price, volume, or some combination. A time-based algorithm might spread an order evenly through the day. A volume-based algorithm might trade in proportion to observed market volume. A price-sensitive algorithm might slow down or speed up depending on where the market is trading.

A concrete example makes this clearer. Imagine a pension fund wants to sell a very large position in a stock index future. If a trader enters the whole order at once, everyone in the book sees unusual size and may lower their bids, making execution worse. An execution algorithm instead breaks the total order into smaller child orders, submits some now, waits, measures how much trading is happening, and continues according to its rules. Nothing magical has happened. The algorithm has simply converted a large, blunt instruction into a controlled stream of smaller decisions.
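The slicing step in that example can be sketched in a few lines. This is a minimal illustration rather than how any particular broker algorithm works: the function name `slice_order` and the order sizes are hypothetical, and a real system would recompute the remaining quantity after each fill instead of fixing the schedule up front.

```python
def slice_order(total_qty: int, num_slices: int) -> list[int]:
    """Split a parent order into child orders that are as even as possible."""
    if total_qty < 0 or num_slices <= 0:
        raise ValueError("total_qty must be >= 0 and num_slices must be > 0")
    base, remainder = divmod(total_qty, num_slices)
    # The first `remainder` slices carry one extra unit so the sizes sum exactly.
    return [base + 1 if i < remainder else base for i in range(num_slices)]


# Hypothetical parent order: 10,000 contracts worked in 8 equal time buckets.
child_orders = slice_order(total_qty=10_000, num_slices=8)
print(child_orders)       # eight child orders of 1250 each
print(sum(child_orders))  # 10000
```

The point of the sketch is only that the parent order never reaches the book as a single visible block; everything else (timing, pricing, reaction to fills) is layered on top of a schedule like this one.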

This is also where the phrase “algorithm” can mislead. The hard part is often not prediction; it is control. The system must keep track of inventory, partial fills, venue responses, risk limits, cancellations, and the state of the order book. It is less like a one-time calculation and more like a feedback system operating inside a market.

How do execution algorithms balance market impact and price risk?

| Pace | Primary cost | Short-term effect | Best when |
|---|---|---|---|
| Fast | High market impact | Quick completion, moves price | Urgent liquidity need |
| Slow | High price exposure | Lower immediate impact | Stable market, low urgency |
| Adaptive (volume/price) | Model and signal risk | Pace adjusts with conditions | Reliable liquidity signals |

The central problem of execution is easy to state and impossible to eliminate: trading itself changes price. If you need to trade a lot, you are not a passive observer of the market; you become part of what moves it.

This is why execution theory focuses on a basic tradeoff between market impact and price risk. If you trade quickly, you are more likely to move the market through your own demand or supply. If you trade slowly, you reduce immediate impact, but you spend more time exposed to whatever the market does next. The classic Almgren-Chriss framework formalized this as a tradeoff between transaction costs from temporary and permanent market impact and the volatility risk of waiting.

Here is the intuition in plain language. Suppose you must sell a large position by the end of the day. Selling aggressively now may push prices down, so your average execution gets worse. Selling gradually avoids some of that pressure, but if the market falls during the day for unrelated reasons, delay also hurts you. There is no strategy that makes both problems disappear. Algorithmic execution is the art of choosing which cost to bear, and in what amount.
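To make the tradeoff concrete, here is a small sketch based on the linear-impact version of the Almgren-Chriss model. In that setting the remaining position follows x(t) = X · sinh(κ(T − t)) / sinh(κT), where κ rises with risk aversion and volatility and falls as temporary impact gets more expensive. The function name `ac_holdings` and all parameter values are illustrative assumptions, and κ is passed in directly rather than calibrated from market data.

```python
import math


def ac_holdings(total_qty: float, horizon: float, steps: int, kappa: float) -> list[float]:
    """Remaining position at each step boundary, from total_qty down to zero."""
    if horizon <= 0 or steps <= 0 or kappa < 0:
        raise ValueError("horizon and steps must be positive, kappa non-negative")
    times = [horizon * j / steps for j in range(steps + 1)]
    if kappa == 0:
        # No urgency: the schedule reduces to a straight line (TWAP-like).
        return [total_qty * (1 - t / horizon) for t in times]
    denom = math.sinh(kappa * horizon)
    return [total_qty * math.sinh(kappa * (horizon - t)) / denom for t in times]


# Hypothetical order: 100,000 shares over one day, split into 4 intervals.
for kappa in (0.0, 1.0, 4.0):
    path = ac_holdings(100_000, horizon=1.0, steps=4, kappa=kappa)
    print(kappa, [round(x) for x in path])
```

A larger κ front-loads the selling: the trader accepts more market impact early in exchange for less time exposed to price moves. With κ = 0 the schedule is a straight line, which is the patient extreme of the same curve.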

That is why simple schedules modeled on TWAP (time-weighted average price) and VWAP (volume-weighted average price) became so common. They are not optimal in every situation, but they solve a real operational problem: they impose discipline on large trades. A time-weighted schedule prevents a trader from dumping too much too early. A volume-linked schedule tries to hide a large order inside the market’s normal flow. More adaptive versions modify the pace when spreads widen, depth falls, or fills become difficult.

But each design choice creates assumptions. A volume-linked algorithm assumes observed volume is a useful proxy for available liquidity. In quiet conditions that may be reasonable. In stressed conditions it can fail badly, because rising volume may reflect panic, position unwinds, or rapid intermediation rather than genuine capacity to absorb more order flow. The distinction between trading activity and true liquidity becomes crucial.

What types of algorithmic trading strategies exist and when are they used?

People often speak about algorithmic trading as if it were a single style. It is better understood as a family of systems that automate different parts of trading.

One important class is execution algorithms, which exist to carry out a pre-existing buy or sell decision with lower cost and less information leakage. Their success is judged less by whether they “predict the market” than by whether they obtain better execution relative to a benchmark.
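Judging execution against a benchmark is mostly arithmetic. The sketch below compares a buyer's average fill price with the market's volume-weighted average price over the same window and reports the difference in basis points. The helper names `vwap` and `slippage_bps` and all prices and sizes are made up for illustration; real transaction-cost analysis also has to handle fees, timestamps, and the choice of benchmark.

```python
def vwap(trades: list[tuple[float, float]]) -> float:
    """Volume-weighted average price of (price, quantity) pairs."""
    total_qty = sum(qty for _, qty in trades)
    if total_qty <= 0:
        raise ValueError("total quantity must be positive")
    return sum(price * qty for price, qty in trades) / total_qty


def slippage_bps(exec_price: float, benchmark: float, side: str) -> float:
    """Cost versus benchmark in basis points; positive means worse than benchmark."""
    if side not in ("buy", "sell"):
        raise ValueError("side must be 'buy' or 'sell'")
    sign = 1.0 if side == "buy" else -1.0
    return sign * (exec_price - benchmark) / benchmark * 10_000


# Hypothetical data: our own fills and all market trades in the same window.
my_fills = [(100.02, 300), (100.05, 200)]
market_trades = [(100.00, 1_000), (100.04, 1_000)]

benchmark = vwap(market_trades)
cost = slippage_bps(vwap(my_fills), benchmark, side="buy")
print(f"benchmark VWAP {benchmark:.2f}, slippage {cost:.2f} bps")  # about 1.2 bps
```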

A second class is market making. Here the goal is to continuously post buy and sell quotes, earn the spread, and manage inventory risk. The algorithm must update quotes as market prices move, other participants trade against it, and its own inventory accumulates. What matters most is not just speed but maintaining a stable quoting process without becoming the easy counterparty when others are better informed.
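One common way to express the inventory side of that problem is to skew quotes against the current position. The sketch below is a deliberately simple assumption, not a description of any firm's quoting logic: `make_quotes`, the linear skew, and every parameter value are hypothetical.

```python
def make_quotes(mid: float, half_spread: float, inventory: int,
                max_inventory: int, max_skew: float) -> tuple[float, float]:
    """Return (bid, ask) around mid, shifted to encourage inventory reduction."""
    if max_inventory <= 0:
        raise ValueError("max_inventory must be positive")
    # Fraction of the inventory limit in use, clamped to [-1, 1].
    used = max(-1.0, min(1.0, inventory / max_inventory))
    # Long inventory lowers both quotes: the ask becomes more attractive to
    # buyers and the bid less attractive to sellers. Short inventory does the reverse.
    skew = used * max_skew
    return mid - half_spread - skew, mid + half_spread - skew


flat_bid, flat_ask = make_quotes(100.00, 0.05, inventory=0, max_inventory=1_000, max_skew=0.03)
long_bid, long_ask = make_quotes(100.00, 0.05, inventory=500, max_inventory=1_000, max_skew=0.03)
print(round(flat_bid, 3), round(flat_ask, 3))
print(round(long_bid, 3), round(long_ask, 3))
```

The quoted spread stays the same width in both cases; only its position relative to the mid changes. That is the sense in which market making is a control problem over inventory rather than a price forecast.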

A third class is statistical or relative-value trading, where the algorithm looks for price relationships that historically revert or should move together. The software monitors many instruments, computes signals, and trades when the relationship deviates enough to justify costs and risk.
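A minimal version of such a signal is a z-score on the spread between two related instruments, with a threshold standing in for "deviates enough to justify costs and risk." The function names, the threshold of 2.0, and the spread history below are illustrative assumptions only.

```python
from statistics import mean, stdev


def zscore(history: list[float], latest: float) -> float:
    """How many sample standard deviations `latest` sits from the historical mean."""
    sigma = stdev(history)  # needs at least two observations
    if sigma == 0:
        raise ValueError("history has no variation")
    return (latest - mean(history)) / sigma


def signal(z: float, entry: float = 2.0) -> str:
    """Trade only when the deviation exceeds the entry threshold."""
    if z > entry:
        return "sell_spread"  # spread unusually wide: bet on it narrowing
    if z < -entry:
        return "buy_spread"   # spread unusually narrow: bet on it widening
    return "hold"


# Hypothetical spread history between two related instruments.
spread_history = [1.0, 1.2, 0.8, 1.1, 0.9]
for latest in (1.05, 1.5, 0.5):
    z = zscore(spread_history, latest)
    print(latest, round(z, 2), signal(z))
```

Nothing in this rule guarantees the relationship will revert. It only encodes the historical pattern, which is exactly why such strategies need risk limits around them.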

High-frequency trading sits across some of these categories rather than replacing them. It usually refers to very fast, highly automated strategies that depend on rapid order submission, cancellation, and response. Some high-frequency firms are effectively market makers. Others are short-horizon arbitrageurs or liquidity takers reacting to fleeting signals. Speed matters here, but it is still not the essence. The essence is that the decision and order-management loop has been compressed into software operating at machine timescales.

That broader framing helps avoid a common misunderstanding: algorithmic trading is not synonymous with high-frequency trading. Many algorithmic trades are slow by machine standards and exist simply to execute institutional orders more carefully. High-frequency trading is a subset, not the whole field.

How does market microstructure (order books and venue fragmentation) affect algorithmic trading?

Algorithmic trading makes more sense once you stop imagining the market as one number on a screen. A real electronic market is an evolving order book across one or many venues. At any moment there are bids, offers, hidden intentions, cancellations, queues, routing rules, and market data feeds updating asynchronously.

An algorithm does not trade against “the market” in the abstract. It interacts with this microstructure. It decides whether to cross the spread and demand immediate execution, or wait passively in the queue. It decides whether to expose size on one venue or split across several. It decides whether to cancel and repost when the book changes. In fragmented markets, it may also decide how to route orders across exchanges and alternative trading systems.
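The routing decision can be illustrated with a toy example: given displayed offers on several venues, a buyer that wants immediate execution takes the cheapest liquidity first, up to a limit price. The `Offer` type, the venue names, and the quotes are hypothetical, and the sketch ignores fees, latency, hidden liquidity, and any regulatory routing obligations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    venue: str
    price: float
    size: int


def route_buy(offers: list[Offer], qty: int, limit_price: float):
    """Plan child orders across venues, cheapest first; return (plan, unfilled)."""
    plan = []
    remaining = qty
    for offer in sorted(offers, key=lambda o: o.price):
        if remaining == 0 or offer.price > limit_price:
            break
        take = min(remaining, offer.size)
        plan.append((offer.venue, offer.price, take))
        remaining -= take
    return plan, remaining


# Hypothetical displayed offers on three venues.
book = [
    Offer("VENUE_B", 100.02, 500),
    Offer("VENUE_A", 100.01, 200),
    Offer("VENUE_C", 100.05, 1_000),
]
plan, unfilled = route_buy(book, qty=1_000, limit_price=100.03)
print(plan)      # VENUE_A first, then VENUE_B; VENUE_C is above the limit
print(unfilled)  # 300
```

The unfilled remainder is where the other choices in this section come in: the algorithm can rest it passively, raise its limit, or wait for the book to refill.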

This is why message traffic matters. A large share of algorithmic activity is not completed trades but updates to trading intentions: new orders, cancellations, replacements, and quote revisions. Research on the NYSE’s autoquote rollout found that increased algorithmic activity was associated, particularly for large stocks, with narrower spreads and more informative quotes. The mechanism is intuitive: when algorithms update quotes quickly in response to new information, part of price discovery happens in the quotes themselves rather than only after trades occur.

That improvement comes with a condition. The infrastructure has to keep up. Automated quoting, routing, and data dissemination only help if the underlying systems have enough capacity, resiliency, and integrity. That is one reason modern regulation does not focus only on trading firms. It also addresses exchanges, ATSs, and other market infrastructure whose automated systems support trading, routing, market data, and surveillance.

When does algorithmic trading improve liquidity and price discovery?

Used well, algorithmic trading improves markets through a simple mechanism: it reduces the gap between changing information and changing orders. Humans are slow, inconsistent, and capacity-limited. Software can revise quotes or execution schedules continuously, which tends to narrow spreads, reduce manual delay, and make prices incorporate information more quickly.

That is the best case for algorithmic trading. A broker handling a large institutional order can reduce signaling and market impact by trading gradually. A market maker can quote many instruments more consistently than a human specialist ever could. Arbitrage algorithms can reduce price gaps across related instruments and venues, helping prices stay aligned. In liquid products, this often shows up as tighter spreads and faster price discovery.

Even standardization contributes to this improvement. Industry infrastructure such as FIX messaging and FIXatdl exists because algorithmic trading is not just strategy logic; it is also interface design. A buy-side trader using an execution algorithm needs a standard way to specify parameters like urgency, benchmark, start and end times, and participation limits. Standardized electronic expression lowers friction between front-end trading tools and broker execution systems.

So the benefit is not merely “computers are faster.” It is that software can express, transmit, and revise trading intent with far more precision than voice trading or manual key entry. Precision lowers certain costs.

How can algorithmic trading destabilize markets during stress?

| Failure mode | Mechanism | Typical result | Mitigation |
|---|---|---|---|
| Volume-targeting algos | Speed increases with observed volume | Algorithm accelerates selling, deepens liquidity hole | Add price/time checks; limit participation |
| HFT liquidity withdrawal | Rapid inventory rebalancing and quote removal | Buy-side depth collapses; spreads widen | Circuit breakers; calibrated VCMs |
| Code deployment bug | Faulty release or misconfiguration | Runaway erroneous orders and losses | Release discipline; rollbacks; staging |
| Correlated risk limits | Many firms trigger similar controls | Simultaneous quote withdrawal, stub markets | Granular, localized controls; stress tests |

The same mechanism that improves markets can also destabilize them. If many firms react to the same signals, use similar controls, or withdraw simultaneously under stress, automation creates correlation where participants may have thought they were independent.

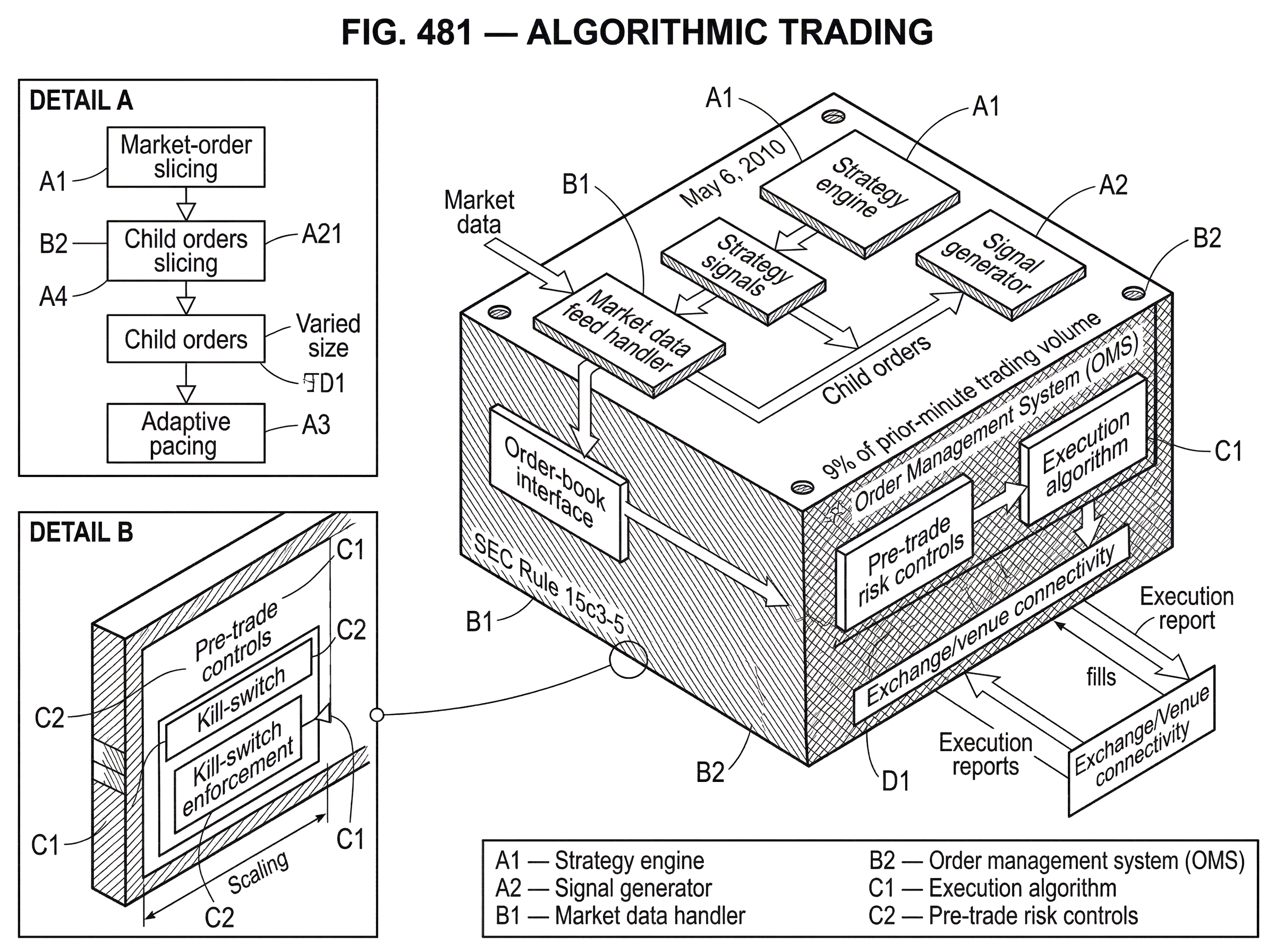

The clearest illustration is the May 6, 2010 flash crash. According to the joint CFTC-SEC staff report, a large institutional seller used an automated execution algorithm in the E-Mini S&P 500 futures market that targeted 9% of prior-minute trading volume and did not account for price or time. As volume rose during a stressed market, the algorithm accelerated its own selling. That design made sense only if volume reliably indicated available liquidity. In those conditions, it did not.
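The mechanics of that rule are easy to reproduce in miniature. The sketch below implements a percent-of-volume sell rule with an optional price guard. It is not the algorithm from the report, only an illustration of the same idea; the function name, the guard, and all volumes and prices are hypothetical.

```python
from typing import Optional


def pov_sell_qty(prev_minute_volume: int, participation_pct: int, remaining: int,
                 last_price: Optional[float] = None,
                 floor_price: Optional[float] = None) -> int:
    """Child sell quantity for the next minute under a percent-of-volume rule."""
    # Optional price guard: pause selling when the market trades below the floor.
    if floor_price is not None and last_price is not None and last_price < floor_price:
        return 0
    # Integer arithmetic avoids floating-point rounding in the target size.
    target = prev_minute_volume * participation_pct // 100
    return max(0, min(target, remaining))


# Hypothetical minutes: volume quadruples while the price falls.
calm = pov_sell_qty(20_000, 9, remaining=50_000)
stressed = pov_sell_qty(80_000, 9, remaining=50_000)
guarded = pov_sell_qty(80_000, 9, remaining=50_000,
                       last_price=1_120.0, floor_price=1_140.0)
print(calm, stressed, guarded)  # 1800 7200 0
```

Without the guard, the rule sells four times as much in the stressed minute simply because more volume printed. With even a crude price check, the same input produces a pause instead of an acceleration.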

The report describes a feedback loop. High-frequency traders initially absorbed some of the sell pressure, but then rapidly traded among themselves and reduced net liquidity provision. Buy-side depth in the E-Mini collapsed to a tiny fraction of its earlier level, the stress propagated into related products including SPY and individual equities, and many liquidity providers’ automated controls or manual risk reviews caused them to pull back. The important lesson is not that “algorithms caused everything.” It is more specific: a rule that ignores the wrong variable can become dangerous precisely because it is followed faithfully and quickly.

This is a useful place to separate fact from caricature. The issue was not that machines traded fast in some abstract sense. It was that market participants used automated systems whose behaviors interacted: volume-sensitive execution, inventory-sensitive high-frequency responses, integrity checks, quote withdrawals, routing frictions, and data delays. Market fragility emerged from the coupling of these systems.

A later and different example often discussed in this context is the Knight Capital incident in 2012, where a software deployment problem caused erroneous orders and large losses within minutes. That episode points to another failure mode: the market can be perfectly liquid by normal standards, and yet a bad release, misconfiguration, or stale code path can still produce runaway trading. In other words, some algorithmic trading risks are economic, while others are plainly software-engineering risks.

What automated risk controls should live inside algorithmic trading systems?

| Control | Where enforced | Primary purpose | Main trade-off |
|---|---|---|---|
| Pre-trade controls | Client/broker gate | Block erroneous/outsize orders | May reject legitimate flow |
| Message throttles | Exchange or broker | Limit messaging load | Can delay non-cancel messages |
| Kill switch | Firm/exchange layer | Immediate halt of trading activity | Stops both errors and valid trades |
| Cancel-on-disconnect | Exchange service | Remove orphaned resting orders | Best-effort may miss orders |
| Self-match prevention | Venue/broker rule | Prevent trades with self | May change execution behaviour |

Because algorithmic trading compresses decision-making into software, risk control must live in the same layer of automation. A human supervisor who notices a problem minutes later is often too late.

That is the logic behind pre-trade controls. In the U.S., SEC Rule 15c3-5 requires broker-dealers with market access to maintain automated risk management controls reasonably designed to prevent orders that exceed preset capital or credit thresholds or appear erroneous. The rule also effectively ended “naked” sponsored access, where customer orders could reach markets without adequate pre-trade filtering. The underlying idea is simple: if the order reaches the market before control is applied, the control is not really a control.

European supervision under MiFID II and RTS 6 expresses a similar principle in more explicit operational terms. Firms are expected to apply pre-trade controls such as price collars, maximum order values and volumes, message limits, and throttles on repeated automated executions. The emphasis is on all orders, not just suspicious ones. A functioning algorithmic environment assumes that mistakes will occur and therefore installs hard boundaries before the market has to absorb them.
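A pre-trade gate of this kind is conceptually a short list of checks that every order must pass before it leaves the firm. The sketch below shows three of the controls named above: a price collar, a maximum order volume, and a maximum order value. The types, field names, and thresholds are all hypothetical, and neither rule prescribes this particular structure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    symbol: str
    side: str
    qty: int
    price: float


@dataclass(frozen=True)
class Limits:
    max_qty: int          # maximum order volume
    max_notional: float   # maximum order value
    collar_pct: float     # allowed deviation from the reference price, as a fraction


def pre_trade_check(order: Order, reference_price: float, limits: Limits) -> list[str]:
    """Return the reasons to reject the order; an empty list means it may be sent."""
    reasons = []
    if order.qty <= 0:
        reasons.append("non-positive quantity")
    if order.qty > limits.max_qty:
        reasons.append("quantity above maximum order volume")
    if order.qty * order.price > limits.max_notional:
        reasons.append("value above maximum order value")
    if abs(order.price - reference_price) > limits.collar_pct * reference_price:
        reasons.append("price outside collar")
    return reasons


limits = Limits(max_qty=10_000, max_notional=1_000_000.0, collar_pct=0.05)
good = Order("XYZ", "buy", 100, 50.00)
fat_finger = Order("XYZ", "buy", 100, 5.00)  # price mistyped by a factor of ten
print(pre_trade_check(good, reference_price=50.20, limits=limits))        # []
print(pre_trade_check(fat_finger, reference_price=50.20, limits=limits))  # ['price outside collar']
```

The function runs on every order rather than only on flagged ones, which mirrors the emphasis on all orders: the check is cheap, so there is no reason to make it conditional.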

There is a deeper reason these controls matter. An algorithm is not just “what signal says buy or sell.” It is the whole chain from strategy decision to order generation to market access. If you can generate orders at machine speed, your protections must also operate at machine speed. That is why firms and exchanges use mechanisms such as kill switches, cancel-on-disconnect, self-match prevention, and message throttles. These are not decorations around trading logic. They are the last layers preserving order when the main logic misbehaves.
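Two of those mechanisms, a message throttle and a kill switch, fit naturally into one small gate that every outbound message passes through. The sketch below is an illustrative assumption about how such a gate might look. The class name `OrderGate`, the sliding-window rule, and the choice to always let cancels through are design assumptions, not a description of any venue's implementation.

```python
from collections import deque


class OrderGate:
    """Sliding-window message throttle with a kill switch."""

    def __init__(self, max_messages: int, window_seconds: float) -> None:
        self.max_messages = max_messages
        self.window_seconds = window_seconds
        self._sent: deque = deque()  # timestamps of recently allowed messages
        self.killed = False

    def kill(self) -> None:
        """Block all new order flow until someone deliberately resets the gate."""
        self.killed = True

    def allow(self, now: float, is_cancel: bool = False) -> bool:
        # Assumption: cancels always pass, because they only reduce exposure.
        if is_cancel:
            return True
        if self.killed:
            return False
        # Drop timestamps that have aged out of the window.
        while self._sent and now - self._sent[0] >= self.window_seconds:
            self._sent.popleft()
        if len(self._sent) >= self.max_messages:
            return False
        self._sent.append(now)
        return True


gate = OrderGate(max_messages=2, window_seconds=1.0)
print([gate.allow(t) for t in (0.0, 0.1, 0.2)])  # [True, True, False]
print(gate.allow(0.2, is_cancel=True))           # True
gate.kill()
print(gate.allow(5.0))                           # False
```

Passing timestamps in explicitly, rather than reading the clock inside the gate, keeps the control easy to test, which matters for the lifecycle discipline discussed in the next section.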

The design tradeoff is real, though. Controls that are too loose fail to stop bad orders. Controls that are too tight can interfere with legitimate price discovery or cause participants to withdraw unnecessarily during volatile conditions. Industry guidance increasingly treats this as a calibration problem rather than a binary one: controls should be localized, transparent where appropriate, and granular enough to stop errors without freezing normal activity.

Why is software engineering discipline critical for algorithmic trading systems?

A common beginner mistake is to imagine algorithmic trading as mostly about models. In practice, robust algorithmic trading is at least as much about software lifecycle discipline as about alpha, signals, or execution theory.

FINRA’s guidance to firms reflects this clearly. The recommended practices cover risk assessment, code development and implementation, testing and validation, trading-system controls, and compliance processes. That grouping is revealing. It says that firms should not think of “the strategy” and “the system” as separate worlds. A profitable strategy implemented without version control, release discipline, test segregation, or fast-disable capability is not operationally sound.

This is where many real-world failures live. Code changes interact with old configurations. Test environments differ from production. Market-data assumptions break under unusual loads. Monitoring looks fine until multiple subsystems fail together. A strategy may be logically correct in backtests and still be dangerous in production because it was not built to be testable, observable, and stoppable.

Regulation SCI extends that operational view from firms to market infrastructure. Its focus on written policies, capacity, integrity, resiliency, availability, security, incident handling, and disaster recovery reflects a structural fact: automated markets depend on automated venues and data systems. If exchange routing, market data, or matching systems fail, every algorithm connected to them is suddenly acting on partial or stale information. The algorithmic market is therefore only as strong as the infrastructure stack beneath it.

What are the main real-world use cases for algorithmic trading?

At this point the practical picture should be clearer. Firms use algorithmic trading because software is often the least bad way to handle one of three recurring problems.

The first is executing large or sensitive orders without paying unnecessary market impact. This is the institutional execution case: slicing orders, adapting pace, reducing signaling, and measuring performance against benchmarks.

The second is supplying liquidity continuously in markets where manual quoting would be too slow or too costly. This is the market-making case: maintain two-sided quotes, manage inventory, and adjust to information and fills in real time.

The third is exploiting structured, repeatable patterns faster and more consistently than a discretionary trader can. This includes statistical relationships across instruments, cross-venue price alignment, and short-horizon reactions to market microstructure signals.

These use cases differ economically, but they share one underlying feature: they all benefit from turning repeated decisions into programmable rules. That is why algorithmic trading spread so widely. Once markets became electronic, not automating those repeated decisions often meant accepting worse execution, slower reaction, and weaker control.

When does algorithmic trading fail or stop working well?

Algorithmic trading works best when the world is stable enough that yesterday’s rules still make sense today. It breaks down when the mapping from signal to market behavior changes abruptly, when displayed activity is not true liquidity, when infrastructure fails, or when too many participants respond to stress in the same way.

This is why no serious explanation should present algorithmic trading as either obviously good or obviously bad. In normal conditions, it often improves liquidity, consistency, and execution quality. In stressed conditions, its speed and rule-following can amplify errors, drain displayed depth, or spread disturbances across venues and products. The same properties that create discipline in calm markets can create rigidity in turbulent ones.

A second boundary is conceptual. Not every automated system is intelligent in the predictive sense. Many successful algorithms are simple, because their job is execution control rather than market forecasting. Conversely, replacing a deterministic rule with a machine-learning model does not remove the need for testing, governance, and hard risk limits. AI trading is a narrower category inside the broader world of algorithmic trading, not a substitute for its operational disciplines.

Conclusion

Algorithmic trading is best understood as the automation of trading decisions that matter at the order level: price, size, timing, venue, and order management after submission. It exists because modern markets are too fast, fragmented, and constrained for repeated manual decision-making to work well at scale.

Its promise is discipline, precision, and speed. Its danger is that bad rules, weak controls, or correlated reactions are also disciplined, precise, and fast.

The idea to remember tomorrow is simple: algorithmic trading is not just computers trading instead of humans. It is markets turning trading itself into a control problem, and then solving that problem with software.

Frequently Asked Questions

Execution algorithms explicitly trade off faster execution (which raises market impact) against slower trading (which increases exposure to price moves); models such as Almgren–Chriss formalize that tradeoff and practitioners use schedules like TWAP, VWAP or adaptive, volume‑sensitive pacing to choose where on that spectrum to operate.

Algorithmic trading is the broad automation of order‑level choices (price, size, timing, venue and order management); high‑frequency trading is a subset that compresses the decision and order‑management loop to very short, machine timescales but is not synonymous with all algorithmic trading.

Automation can destabilize markets when many systems follow similar rules or key on the wrong variables. For example, a volume-targeted seller accelerated its own selling during the May 6, 2010 flash crash and liquidity providers withdrew, producing a feedback loop that amplified the dislocation.

U.S. Rule 15c3-5 requires broker-dealers with market access to maintain automated pre-trade risk controls reasonably designed to prevent orders that exceed preset capital or credit thresholds or appear erroneous. MiFID II and RTS 6 impose comparable requirements in the EU, spelling out controls such as price collars, maximum order values and volumes, message limits and throttles. In both cases the goal is to stop erroneous or outsized orders before they reach the market.

Because algorithmic trading compresses decision‑making into software, operational failures often stem from poor development, testing, deployment and monitoring practices; FINRA guidance and Regulation SCI therefore emphasize lifecycle discipline, testing/validation, resiliency and incident response alongside strategy design.

Algorithms treat the market as an evolving order book and must choose between demanding immediate execution (crossing the spread) or waiting passively in queues, decide venue routing and when to cancel/repost, and generally act on asynchronous book updates rather than a single displayed price.

Volume-linked algorithms assume observed trading volume proxies available liquidity; that assumption can fail in stressed markets where rising volume reflects panic or unwinding, causing the algorithm to accelerate into thinner liquidity and worsen execution. This failure mode is documented in the joint CFTC-SEC staff report on the flash crash.

Regulators and venues introduced several mitigations after the flash crash, including a pilot circuit breaker pausing a security after an extreme move (e.g., a five‑minute pause for a 10% move), clarified trade‑break procedures for canceling clearly erroneous trades, and heightened focus on consolidated market‑data and venue resilience.

Controls must be calibrated: overly permissive limits fail to stop bad orders while overly strict limits can impede legitimate price discovery; industry guidance therefore recommends localized, granular thresholds, message throttles and kill‑switches combined with transparency and dynamic calibration by product and participant type.
