What Are GARCH Models?

Learn what GARCH models are, how they forecast time-varying market volatility, why GARCH(1,1) matters, and where these risk models work or fail.

Introduction

GARCH models are statistical models for volatility that try to answer a practical market question: if returns themselves are hard to predict, can the size of future moves be predicted better than a constant-risk model would suggest? That question matters because traders, portfolio managers, options desks, and risk systems do not just care about direction. They care about position sizing, margin, Value-at-Risk, stress sensitivity, and whether today’s calm is likely to persist or give way to turbulence.

The puzzle is easy to see in any return series. Prices often look close to a random walk in the short run, so the sign of tomorrow’s return is notoriously difficult to forecast. But the magnitude of returns is not so elusive. Large moves tend to be followed by large moves, and quiet periods tend to be followed by quiet periods. In other words, volatility clusters.

That single observation is the reason GARCH exists. A model with constant variance treats a calm day and a crisis day as draws from the same risk environment. Markets plainly do not behave that way. GARCH models let the variance evolve over time, using recent shocks and recent volatility to update the next volatility forecast.

At a high level, that is the idea: returns may be nearly unpredictable in mean, but their conditional variance is often persistent and forecastable. The word conditional is doing real work here. GARCH does not claim markets have a fixed true volatility. It claims that given what just happened, there is a better estimate of near-term risk than the long-run average.

Why does financial volatility need its own time‑varying model?

| Model | Memory | Parameters | Best for | Estimation ease |
|---|---|---|---|---|
| Constant variance | No memory | One fixed value | Stable, low-frequency risk | Very easy |
| ARCH(p) | Finite lag memory | Many lagged terms | Short-lived spikes | Moderate |
| GARCH(1,1) | Infinite effective memory | Few parameters | Persistent volatility | Easy, robust |

A standard time-series or regression model often starts from residuals: the part of returns not explained by the mean equation. In a simple model, those residuals are assumed to have constant variance, a property called homoskedasticity. For financial returns, that assumption is usually too strong. The residuals are small for a while, then suddenly large, then small again. The variance changes over time.

This changing variance is called heteroskedasticity. ARCH and GARCH were designed to model a special form of it: variance that depends on the recent past. Robert Engle’s 1982 ARCH framework introduced the core move: let current variance depend on past squared errors. Tim Bollerslev’s 1986 GARCH generalization added past conditional variances as well, making the model more flexible and much more parsimonious.

Why was that extension important? Because pure ARCH often needs many lags to capture the slow decay of volatility after a shock. Markets do not usually forget a large move after a day or two. A volatility spike can echo for weeks or months. GARCH captures that persistence with a compact recursive structure rather than a long list of lagged squared returns.

The key invariant is simple: volatility is treated as a latent state that updates through time. It is not directly observed in the same way a return is observed, but it leaves fingerprints in the size of returns. Large squared returns push the volatility estimate up. In the absence of fresh shocks, the estimate gradually drifts back toward its long-run level.

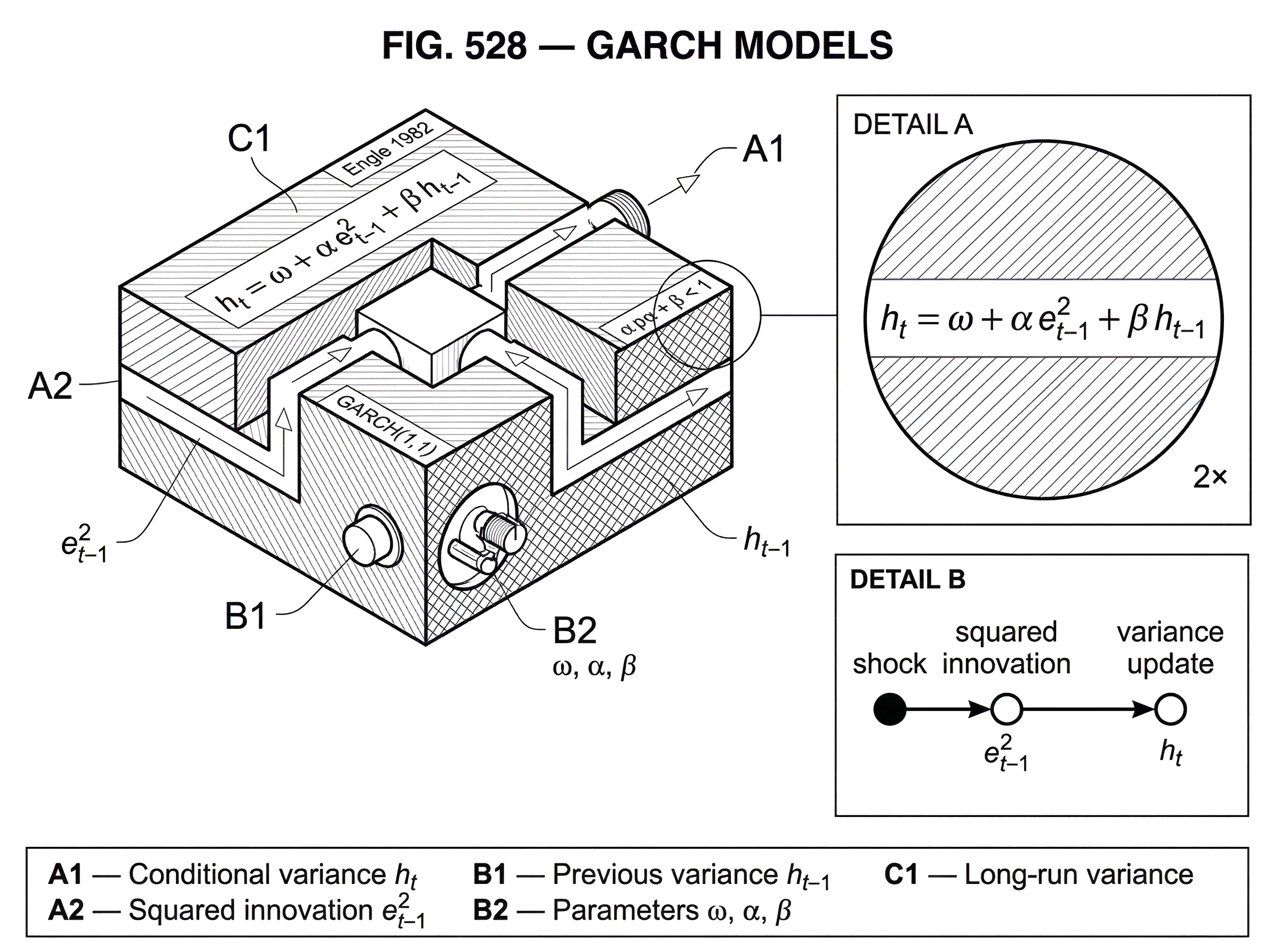

How does the GARCH(1,1) update rule determine tomorrow’s variance?

The workhorse specification is GARCH(1,1). It is called a workhorse for a reason: despite its simplicity, it often captures the main persistence in daily financial volatility surprisingly well.

Write the return innovation at time t as e_t. This is the unexpected part of return after removing any mean model. GARCH assumes that conditional on information available at time t - 1, the innovation has mean zero and variance h_t. The quantity h_t is the model’s forecast of volatility squared for period t.

The update rule is:

h_t = omega + alpha e_{t-1}^2 + beta h_{t-1}

Here omega is a positive baseline level, alpha measures how strongly new shocks affect next-period variance, and beta measures how persistent volatility itself is. The parameters are typically constrained to be nonnegative, and for a mean-reverting stationary model we usually require alpha + beta < 1.

This equation becomes intuitive once you read it as a weighted memory system. Tomorrow’s variance forecast is built from three ingredients: a long-run anchor, the most recent surprise squared, and yesterday’s variance forecast. If today brought a very large return shock, then e_{t-1}^2 is large and tomorrow’s forecast rises. If the market was already in a volatile regime, then h_{t-1} is large and that elevated state carries forward. If nothing unusual keeps happening, the recursion pulls variance back toward its long-run average.
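As a minimal sketch of the update rule, the function below applies one step of the recursion. The function name and the parameter values are hypothetical, chosen only for illustration and not estimated from any data.

```python
def garch11_next_variance(omega, alpha, beta, prev_shock, prev_variance):
    """One step of h_t = omega + alpha * e_{t-1}^2 + beta * h_{t-1}."""
    return omega + alpha * prev_shock ** 2 + beta * prev_variance


# Hypothetical daily parameters (assumptions, not fitted values).
omega, alpha, beta = 2e-6, 0.08, 0.90
h_prev = 1e-4  # yesterday's variance forecast, i.e. 1% daily volatility

# Same starting state, two different surprises: 0.5% versus 3%.
calm = garch11_next_variance(omega, alpha, beta, 0.005, h_prev)
shock = garch11_next_variance(omega, alpha, beta, 0.03, h_prev)

print("next-day vol after small surprise:", calm ** 0.5)
print("next-day vol after large surprise:", shock ** 0.5)
```

With these illustrative numbers, the small surprise leaves the forecast slightly below yesterday’s level, while the large surprise lifts it well above.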

That long-run average variance is omega / (1 - alpha - beta) when alpha + beta < 1. This is one of the most useful interpretations of the model. It tells you that GARCH is not just chasing recent noise. It balances short-run reaction against long-run mean reversion.

Engle’s 2001 survey describes GARCH(1,1) in exactly this spirit: as a weighted average of long-run variance, the previous forecast, and new information from the most recent squared residual. That description is why the model is so easy to connect to actual trading intuition. Traders already think this way: current risk depends on the background regime, the latest shock, and the fact that volatility tends to persist.
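The weighted-average reading can be checked numerically. The sketch below, using hypothetical parameter values, computes the long-run variance and confirms that rewriting omega as (1 - alpha - beta) times the long-run variance gives the same forecast as the original recursion.

```python
omega, alpha, beta = 2e-6, 0.08, 0.90  # hypothetical daily parameters

# Long-run (unconditional) variance; only defined when alpha + beta < 1.
long_run = omega / (1 - alpha - beta)


def recursion(prev_shock, prev_variance):
    """Standard form: omega + alpha * e^2 + beta * h."""
    return omega + alpha * prev_shock ** 2 + beta * prev_variance


def weighted_average(prev_shock, prev_variance):
    """Same forecast written as weights on three ingredients."""
    w_long_run = 1 - alpha - beta  # the three weights sum to one
    return (w_long_run * long_run
            + alpha * prev_shock ** 2
            + beta * prev_variance)


a = recursion(0.03, 1.5e-4)
b = weighted_average(0.03, 1.5e-4)
print(long_run, a, b)
```

The weight on the long-run anchor is whatever is left after alpha and beta, which is why a persistence sum near 1 means the anchor pulls only weakly.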

Example: how does a GARCH update respond after a large market shock?

Suppose an equity index has been in a relatively calm regime. Your model’s current variance forecast is low, reflecting several quiet weeks. Then a surprise macro release hits, the index moves far more than expected, and the residual e_t is large in absolute value. In a constant-variance model, that shock is just an unusually large error. In GARCH, it is also information about tomorrow’s environment.

Because the model squares the residual, it does not care whether the shock was strongly positive or strongly negative. What matters in basic GARCH is magnitude. The large e_t^2 feeds into the next update, so h_{t+1} rises. The next day, even if returns are not extreme again, the elevated h_{t+1} itself feeds forward through the beta * h_{t+1} term into h_{t+2}, so volatility stays elevated. If the following days also bring large shocks, the model keeps ratcheting up its estimate. If the market quiets down, the absence of new large squared shocks lets the recursion decay back toward the long-run variance.

This is what volatility clustering looks like in mechanism form. A shock does not just create one large return observation. It changes the state variable that governs the scale of near-term future returns.
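The mechanism can be traced with a short, deterministic sketch. The parameters and the shock sequence (one 5% surprise followed by ten quiet 0.5% days) are made up for illustration.

```python
omega, alpha, beta = 2e-6, 0.08, 0.90  # hypothetical daily parameters

# Start from the long-run variance, i.e. a calm regime.
h = omega / (1 - alpha - beta)

# One large surprise, then a run of quiet days (illustrative values).
shocks = [0.05] + [0.005] * 10

path = [h]
for e in shocks:
    h = omega + alpha * e ** 2 + beta * h
    path.append(h)

for day, variance in enumerate(path):
    print(day, round(variance ** 0.5, 5))  # forecast volatility by day
```

The forecast jumps the day after the shock and then declines step by step. Ten quiet days later it is still above its starting level, which is the persistence the beta term supplies.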

The analogy is a heated metal plate rather than a light switch. A shock adds heat quickly, but the plate cools gradually. That analogy explains persistence well. Where it fails is that GARCH is not a physical diffusion law; the rate of “cooling” is a statistical parameter estimated from data, and the next shock can arrive at any time.

Why do GARCH models use squared residuals to update variance?

A smart reader often asks: why does the model use squared residuals rather than absolute returns or some other transformation? The answer is partly statistical and partly practical. Variance is about second moments, so squared shocks connect directly to the object the model is trying to update. Squaring also makes positive and negative shocks contribute equally to scale in the basic specification.

That symmetry is useful, but it is also a limitation. In many equity markets, negative returns tend to be followed by larger increases in volatility than positive returns of the same size. This is the intuition behind leverage and asymmetry effects. Standard GARCH(1,1) does not capture that asymmetry because e_t^2 removes the sign.

This is one reason extensions such as EGARCH and threshold-type GARCH models were developed. Nelson’s 1991 critique pointed out that standard GARCH rules out certain negative return–future volatility relationships by construction and imposes parameter restrictions that can be too rigid. So when practitioners say “GARCH,” they often mean not just the basic form, but a whole family of models built around the same conditional-variance idea.
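One threshold-type variant is the GJR form, which adds an extra coefficient that applies only when the previous shock was negative. The sketch below illustrates that idea under hypothetical parameter values; the name gamma for the extra coefficient is a labeling choice here, and EGARCH, which works on the log of variance, is not shown.

```python
def gjr_next_variance(omega, alpha, gamma, beta, prev_shock, prev_variance):
    """Threshold (GJR-style) update: negative shocks get extra weight gamma."""
    extra = gamma if prev_shock < 0 else 0.0
    return omega + (alpha + extra) * prev_shock ** 2 + beta * prev_variance


# Hypothetical parameters; gamma > 0 makes bad news raise variance more.
omega, alpha, gamma, beta = 2e-6, 0.03, 0.10, 0.90
h_prev = 1e-4

after_drop = gjr_next_variance(omega, alpha, gamma, beta, -0.03, h_prev)
after_rally = gjr_next_variance(omega, alpha, gamma, beta, 0.03, h_prev)

print("vol after -3% shock:", after_drop ** 0.5)
print("vol after +3% shock:", after_rally ** 0.5)
```

Setting gamma to zero recovers the symmetric GARCH(1,1) update, so the basic model is a special case of this sketch.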

How does GARCH act like an ARCH(infinity) model to capture long volatility persistence?

One reason GARCH became so influential is that it explains long volatility persistence without requiring an unwieldy high-order ARCH model. Bollerslev showed that GARCH can be viewed as an ARCH(infinity) representation. That sounds technical, but the intuition is straightforward.

An ARCH model uses a finite number of lagged squared shocks. GARCH adds lagged conditional variance, and that recursion means the effect of an old shock keeps echoing into the future through many later variance updates. So a compact GARCH(1,1) behaves like a model with infinitely many lagged squared shocks whose influence decays over time.
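To make the ARCH(infinity) reading concrete, the sketch below unrolls the recursion over a finite history and checks that it matches the step-by-step update. The shock k days back receives weight alpha * beta^(k-1). The parameters, the starting variance, and the simulated shocks are all illustrative assumptions.

```python
import random

omega, alpha, beta = 2e-6, 0.08, 0.90  # hypothetical daily parameters
random.seed(0)
shocks = [random.gauss(0.0, 0.01) for _ in range(250)]  # e_0 ... e_{n-1}
h0 = 1e-4  # assumed starting variance

# Recursive form: apply the GARCH(1,1) update once per observed shock.
h = h0
for e in shocks:
    h = omega + alpha * e ** 2 + beta * h

# Unrolled form: a constant, geometrically weighted past squared shocks,
# and a vanishing contribution from the starting variance.
n = len(shocks)
constant = omega * sum(beta ** j for j in range(n))
lagged = alpha * sum(beta ** (k - 1) * shocks[n - k] ** 2
                     for k in range(1, n + 1))
unrolled = constant + lagged + beta ** n * h0

weights = [alpha * beta ** (k - 1) for k in range(1, 6)]
print(h, unrolled)
print("weights on the five most recent squared shocks:", weights)
```

The geometric weights never reach exactly zero, which is the sense in which three parameters stand in for an infinite list of ARCH lags.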

This is exactly the behavior we see in many market series. Volatility does not vanish immediately after a shock, but neither does it stay permanently high in ordinary regimes. The GARCH recursion creates a smooth decay profile rather than a short, abrupt memory.

Bollerslev also showed that GARCH can be represented in an ARMA-like form for squared innovations. That matters because it links GARCH to familiar time-series diagnostics. In practice, autocorrelation in squared residuals is one of the signs that conditional heteroskedasticity remains in the data and that a volatility model may be warranted.
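A rough version of that diagnostic is sketched below: compute the sample autocorrelation of squared residuals at a few lags. The simulated series, the parameter values, and the helper names are assumptions for illustration, and a formal test (for example a portmanteau test on squared residuals) would add a significance threshold that this sketch omits.

```python
import random


def autocorr(xs, lag):
    """Sample autocorrelation of xs at the given lag."""
    n = len(xs)
    mean = sum(xs) / n
    dev = [x - mean for x in xs]
    denom = sum(d * d for d in dev)
    num = sum(dev[t] * dev[t - lag] for t in range(lag, n))
    return num / denom


def simulate_garch(n, omega, alpha, beta, rng):
    """Simulate GARCH(1,1) innovations with Gaussian shocks."""
    h = omega / (1 - alpha - beta)  # start at the long-run variance
    out = []
    for _ in range(n):
        e = rng.gauss(0.0, 1.0) * h ** 0.5
        out.append(e)
        h = omega + alpha * e ** 2 + beta * h
    return out


rng = random.Random(42)
garch_resid = simulate_garch(5000, 2e-6, 0.10, 0.85, rng)
iid_resid = [rng.gauss(0.0, 0.01) for _ in range(5000)]

for name, series in [("garch", garch_resid), ("iid", iid_resid)]:
    squared = [e * e for e in series]
    print(name, [round(autocorr(squared, k), 3) for k in (1, 5, 10)])
```

One would expect the squared GARCH residuals to show positive autocorrelation at short lags and the independent series to stay near zero, though any single simulated sample is noisy.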

How are GARCH models estimated and what do the estimators actually fit?

In applied work, GARCH models are usually estimated by maximum likelihood or, more precisely in many financial settings, quasi-maximum likelihood. The distinction matters.

A common implementation assumes conditional normality: given past information, e_t is normally distributed with mean zero and variance h_t. This gives a convenient likelihood function. But financial returns frequently have fatter tails than the normal distribution allows. Bollerslev and Wooldridge showed that even when conditional normality is false, the normal quasi-maximum likelihood estimator can still be consistent for the conditional mean and variance parameters if the first two conditional moments are correctly specified.

That is the practical lesson: you do not have to believe returns are truly normal to use a normal-based GARCH fit, but you should be careful about inference. Standard errors and test statistics can be misleading under nonnormality unless you use robust methods.
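To show what the estimator actually fits, here is a minimal sketch of the Gaussian negative log-likelihood for GARCH(1,1). Starting the recursion at the sample variance is an assumed convention, the residuals are made up, and the numerical optimizer and robust standard errors that a real fit needs are not shown.

```python
import math


def garch11_gaussian_nll(params, residuals, initial_variance=None):
    """Negative Gaussian log-likelihood of residuals under GARCH(1,1)."""
    omega, alpha, beta = params
    if initial_variance is None:
        # Assumed convention: start the recursion at the sample variance.
        initial_variance = sum(e * e for e in residuals) / len(residuals)
    h = initial_variance
    nll = 0.0
    for e in residuals:
        # Each term is -log of a N(0, h) density evaluated at e.
        nll += 0.5 * (math.log(2 * math.pi) + math.log(h) + e * e / h)
        # Update the variance for the next observation.
        h = omega + alpha * e * e + beta * h
    return nll


residuals = [0.004, -0.012, 0.031, -0.025, 0.009, -0.003]  # made-up values
print(garch11_gaussian_nll((2e-6, 0.08, 0.90), residuals))
```

Estimation amounts to searching over omega, alpha, and beta for the values that minimize this quantity, subject to the positivity and stationarity constraints described earlier.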

This is also why practitioners often fit GARCH with Student-t or skewed distributions for the residuals. The volatility recursion may be the main object of interest, but distributional assumptions matter a lot when the model is used for tail-sensitive tasks such as Value-at-Risk.

How do traders and risk managers use GARCH forecasts in practice?

In trading, GARCH is usually not a crystal ball for direction. It is a tool for forecasting the scale of risk. That scale forecast feeds into several concrete decisions.

The most direct use is position sizing. If the model forecasts higher near-term volatility, a risk-targeting strategy reduces exposure to keep expected portfolio variance near a target. If forecast volatility falls, the same strategy can scale up. This is the basic mechanism behind volatility targeting and many managed-futures or overlay systems.
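A volatility-targeting rule of this kind can be sketched in a few lines. The 252-day annualization, the leverage cap, and the function name are assumptions made for the example, not features of any particular system.

```python
import math

TRADING_DAYS = 252  # assumed annualization convention


def target_weight(daily_variance_forecast, target_annual_vol, max_leverage=2.0):
    """Exposure that scales forecast volatility to the target, with a cap."""
    annual_vol = math.sqrt(daily_variance_forecast * TRADING_DAYS)
    return min(target_annual_vol / annual_vol, max_leverage)


# Hypothetical variance forecasts for a calm and a stressed day.
calm = target_weight(0.5e-4, 0.10)
stressed = target_weight(4e-4, 0.10)

print("weight in calm regime:", round(calm, 3))
print("weight in stressed regime:", round(stressed, 3))
```

The cap matters in practice: without it, a very low variance forecast would imply arbitrarily large leverage.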

Another use is Value-at-Risk and related risk metrics. Engle’s 2001 overview emphasizes how ARCH/GARCH models can be used to estimate next-day VaR. The logic is simple: forecast tomorrow’s conditional variance, pair it with an assumption about the residual distribution, and compute a tail quantile. But here the model’s weak points matter. If you assume normal residuals in a market with fat tails, your VaR can look more precise than it really is.
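The three steps can be sketched as follows, assuming normal residuals and a zero conditional mean. The variance forecast and position size are hypothetical; a fat-tailed alternative would swap in a different quantile and would typically produce a larger figure at high confidence levels.

```python
from statistics import NormalDist


def normal_var(variance_forecast, confidence, position_value, mean=0.0):
    """One-day VaR under a normal residual assumption, as a positive loss."""
    sigma = variance_forecast ** 0.5
    z = NormalDist().inv_cdf(1 - confidence)  # negative for confidence > 0.5
    worst_return = mean + z * sigma
    return -worst_return * position_value


# Hypothetical inputs: a GARCH variance forecast and a 1,000,000 position.
var_99 = normal_var(1.64e-4, 0.99, 1_000_000)
var_95 = normal_var(1.64e-4, 0.95, 1_000_000)

print("99% one-day VaR:", round(var_99))
print("95% one-day VaR:", round(var_95))
```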

GARCH also appears in derivatives and relative-value workflows. Option desks care about the evolution of realized volatility, even if implied volatility from option prices is often the market’s forward-looking benchmark. Statistical arbitrage and execution systems may use volatility forecasts to normalize signals, compare moves across assets, or allocate risk capital dynamically. In multi-asset settings, the same basic logic extends to time-varying covariance models, though those become much harder to estimate as dimension rises.

Why is GARCH(1,1) still the industry benchmark for volatility forecasting?

A natural question is why such a simple specification survived when many richer variants exist. Part of the answer is empirical discipline. Hansen and Lunde’s well-known comparison across hundreds of volatility models found that, in their samples, the best alternatives often did not significantly outperform GARCH(1,1) out of sample. That does not mean GARCH(1,1) is universally best. It means complexity often buys less than people expect.

This is an important lesson in trading and forecasting generally. A model can fit in-sample quirks while adding little forecasting value. GARCH(1,1) sits near a useful balance point: simple enough to estimate robustly, flexible enough to capture volatility clustering and persistence, and interpretable enough that parameter changes have clear meaning.

The parameter sum alpha + beta is especially informative. When it is close to 1, volatility is highly persistent: shocks decay slowly. In many asset return series, this sum is indeed estimated close to 1. But “close to 1” is not the same as “equal to 1.” If it reaches or exceeds the stationarity boundary of 1, the model no longer has the same mean-reverting interpretation.
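One way to read the persistence sum is as a decay rate for expected variance. Under GARCH(1,1) with alpha + beta < 1, the expected gap between the variance forecast and its long-run level shrinks by a factor of alpha + beta per day. The sketch below, with hypothetical parameters, turns that into a half-life and a multi-day forecast.

```python
import math

omega, alpha, beta = 2e-6, 0.08, 0.90  # hypothetical daily parameters
persistence = alpha + beta
long_run = omega / (1 - persistence)

# Days for the expected gap to the long-run variance to halve.
half_life = math.log(0.5) / math.log(persistence)


def expected_variance(h_next, k):
    """Expected variance k days ahead, given the one-day-ahead forecast."""
    return long_run + persistence ** (k - 1) * (h_next - long_run)


h_next = 3e-4  # assumed elevated one-day-ahead forecast
print("half-life in days:", round(half_life, 1))
for k in (1, 5, 20, 60, 250):
    print(k, expected_variance(h_next, k))
```

With a persistence of 0.98 the half-life is a little over a month of trading days. At 0.995 it would be several times longer, which is why small differences near 1 matter.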

When does basic GARCH(1,1) fail and which extensions fix those failures?

The cleanest way to understand GARCH is also the cleanest way to see its limits. It assumes that the conditional variance today can be summarized by a smooth recursive function of yesterday’s variance and yesterday’s squared shock. Real markets are often messier.

First, basic GARCH treats positive and negative shocks symmetrically. That is often too simple for equities and credit-sensitive assets, where negative returns may generate disproportionately larger volatility responses. Asymmetric extensions exist because this mismatch is common, not exotic.

Second, the model is built for gradual state evolution, but markets sometimes jump between regimes. A central-bank surprise, a market halt, a de-pegging event, or a structural break can make a single stable recursion a poor description across the full sample. In those settings, regime-switching models or models with explicit break handling may be more appropriate.

Third, GARCH is a low-frequency model in spirit, often fit to daily returns. High-frequency data introduced another way to think about volatility: instead of inferring it only from daily closes, one can estimate realized volatility by summing intraday squared returns. Research by Andersen, Bollerslev, Diebold, and Labys showed that realized measures can provide much cleaner ex post volatility estimates than daily squared returns alone. That changed how forecasters evaluate volatility models and led to extensions such as **Realized GARCH**, which jointly models returns and realized volatility measures through a measurement equation.
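The basic realized-variance calculation is simple enough to sketch directly. The intraday prices below are made up, and the sketch ignores practical issues such as microstructure noise, sampling frequency, and the overnight return.

```python
import math


def realized_variance(intraday_prices):
    """Sum of squared intraday log returns over one trading day."""
    log_returns = [math.log(b / a)
                   for a, b in zip(intraday_prices, intraday_prices[1:])]
    return sum(r * r for r in log_returns)


# Made-up intraday prices sampled at regular intervals within one day.
prices = [100.0, 100.4, 99.9, 100.6, 100.2, 101.0]

rv = realized_variance(prices)
open_to_close_sq = math.log(prices[-1] / prices[0]) ** 2

print("realized variance:", rv)
print("squared open-to-close return:", open_to_close_sq)
```

The single squared daily return uses only two prices, while the realized measure uses every interval. That is the intuition for why realized measures are less noisy proxies for the day’s variance.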

Fourth, multivariate GARCH quickly becomes cumbersome. In a single asset, a variance recursion is manageable. Across many assets, you need a time-varying covariance matrix that remains positive definite and is still estimable. The parameter count can explode. This is why practical large-scale risk systems often combine GARCH-style ideas with factor structures, shrinkage, or simpler covariance dynamics.

Finally, GARCH is only as good as the assumptions you keep fixed. The conditional mean is often treated as negligible at daily horizons, which is usually reasonable but not always. The residual distribution may be misspecified. The parameters themselves may drift over time. None of these issues makes the model useless; they just define the boundary between a helpful approximation and a false sense of precision.

How does GARCH relate to realized‑volatility methods and Realized GARCH?

| Approach | Data source | Measurement noise | Forecast strength | Best use |
|---|---|---|---|---|
| Classic GARCH | Daily returns | High noise | Good short-term | When only daily data |
| Realized measures | Intraday high-frequency | Low noise | Strong ex post | Backtests and diagnostics |
| Realized GARCH | Daily + realized | Reduced noise | Improved forecasts | If intraday data available |

A useful way to place GARCH in the larger volatility toolkit is this: classic GARCH is a parametric filter for latent variance using low-frequency returns, while realized-volatility methods are measurement-based estimates built from high-frequency data. They are solving related problems from different directions.

If you only have daily returns, GARCH is a natural way to model volatility dynamics. If you have rich intraday data, realized measures can tell you much more directly what volatility was over the day. Realized GARCH combines the two ideas by using a return equation and a measurement equation that links the latent conditional variance to observed realized measures. The attraction is obvious: daily returns alone are noisy indicators of latent variance, while realized measures add information.

This connection also clarifies what is fundamental in GARCH and what is conventional. The fundamental part is the idea that volatility is conditional, persistent, and forecastable from its own history and from recent shocks. The conventional part is the specific linear recursion in squared innovations. That recursion is useful, but it is not sacred.

What do omega, alpha and beta imply for responsiveness and persistence in volatility?

| Parameter pattern | Alpha (reaction) | Beta (persistence) | Omega (anchor) | Trading implication |
|---|---|---|---|---|
| High alpha, low beta | Fast reactive | Short persistence | Low anchor | Reduce positions quickly |
| Low alpha, high beta | Slow reactive | Long persistence | Higher anchor | Adjust allocation gradually |
| Alpha+beta close to 1 | Very reactive | Very persistent | Stable anchor | Expect long volatility regimes |

When practitioners look at a fitted GARCH(1,1), they often care less about the formal derivation than about what the parameters imply operationally.

A larger alpha means the model reacts strongly to fresh shocks. Volatility forecasts jump more after surprising returns. A larger beta means the model is more inertial. Once volatility is high, it tends to stay high. A larger omega raises the long-run variance level around which the process fluctuates.

If alpha is high and beta moderate, volatility reacts quickly and forgets relatively quickly. If alpha is modest but beta very high, volatility reacts less dramatically day to day but remains persistent once elevated. Two assets can have similar unconditional variance and very different volatility dynamics because these parameters distribute the adjustment differently.
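The contrast can be illustrated with two hypothetical parameter sets that share the same long-run variance. The sketch applies the same 3% shock to each and then follows the expected variance path, using the fact that the expected squared shock equals the current variance.

```python
long_run = 1e-4  # same unconditional daily variance for both (hypothetical)


def make_params(alpha, beta):
    """Pick omega so that omega / (1 - alpha - beta) equals long_run."""
    omega = long_run * (1 - alpha - beta)
    return omega, alpha, beta


def expected_path(params, shock, days):
    """Expected variance path after one shock, starting from long_run."""
    omega, alpha, beta = params
    h = omega + alpha * shock ** 2 + beta * long_run  # day after the shock
    path = [h]
    for _ in range(days - 1):
        h = omega + (alpha + beta) * h  # expected update, since E[e^2] = h
        path.append(h)
    return path


reactive = expected_path(make_params(0.20, 0.70), 0.03, 30)
inertial = expected_path(make_params(0.04, 0.95), 0.03, 30)

print("day 1 variance:", reactive[0], inertial[0])
print("day 30 variance:", reactive[-1], inertial[-1])
```

The high-alpha set jumps more on day 1 but is nearly back to the long-run level by day 30, while the high-beta set moves less at first and is still noticeably elevated a month later.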

This matters for trading horizons. A short-horizon risk system may care most about immediate responsiveness. A longer-horizon allocator may care more about persistence and mean reversion. The same model supports both, but the interpretation of the parameters changes with the decision horizon.

Conclusion

GARCH models exist because market risk is not constant. Volatility clusters, recent shocks change the near-term risk environment, and that changing environment can often be forecast better than a fixed-variance model would allow.

The core GARCH idea is simple enough to remember: tomorrow’s variance is updated from a long-run anchor, yesterday’s variance, and yesterday’s surprise squared. That recursion is why the model became a workhorse. It is not the final word on volatility, and it breaks when asymmetry, jumps, structural breaks, or high-dimensional covariance dynamics dominate. But as a first model of time-varying market risk, it remains one of the clearest and most useful ideas in quantitative trading.

Frequently Asked Questions

GARCH(1,1) updates the conditional variance using the recursion h_t = omega + alpha e_{t-1}^2 + beta h_{t-1}, so tomorrow’s variance is built from a long-run anchor (omega), yesterday’s squared surprise (alpha term), and yesterday’s variance forecast (beta term).

Squared residuals target the second moment (variance) directly and make positive and negative shocks contribute equally to scale; this is statistically natural for modeling variance but enforces sign-symmetry, which can be a limitation when negative returns have larger volatility effects.

Omega sets the long-run anchor, alpha controls how strongly new shocks push up next-period variance, and beta governs persistence; the sum alpha + beta indicates how slowly shocks decay, with alpha + beta < 1 required for the usual mean-reverting interpretation.

If alpha + beta reaches or exceeds 1 the variance recursion no longer has the standard mean-reverting stationary interpretation (the usual long-run variance formula breaks down), so the model behaves like a near-unit-root or nonstationary volatility process and requires different treatment or interpretation.

Basic GARCH is sign-symmetric and therefore cannot capture the common equity-market feature that negative returns raise future volatility more than positive returns of the same size; practitioners use asymmetric variants such as EGARCH or threshold/GJR-type GARCH to model that effect.

GARCH is typically estimated by (quasi-)maximum likelihood assuming conditional normality; QMLE can yield consistent parameter estimates for the conditional mean and variance even under nonnormal returns, but inference (standard errors and tests) can be misleading without robust methods, and practitioners often fit Student-t or skewed error laws when tails matter.

To produce VaR you forecast tomorrow’s conditional variance from the GARCH recursion, combine it with an assumed residual distribution to get a tail quantile, and be cautious because misspecified tail behavior (e.g., assuming normal when returns have fat tails) can materially understate tail risk.

Realized-volatility methods estimate daily variance from high-frequency intraday returns and typically give cleaner ex post volatility measures; Realized GARCH combines a GARCH-type latent-variance recursion with a measurement equation that links the latent variance to observed realized measures to improve inference and forecasting when intraday data are available.

GARCH(1,1) often survives as a practical benchmark because its parsimonious recursion captures volatility clustering and persistence robustly, and comparative studies have found many more complex alternatives do not reliably outperform it out of sample, so simplicity plus interpretability often wins in forecasting practice.

GARCH can be extended to multivariate settings, but the number of parameters and the need to keep the covariance matrix positive definite make large-scale multivariate GARCH hard to estimate in practice; therefore practitioners typically combine GARCH ideas with factor models, shrinkage, or simpler dynamic covariance structures for many assets.
