What Is a Statistical Risk Model?

Learn what statistical risk models are, how they estimate portfolio volatility and correlation, and why factor models, PCA, and VaR depend on them.

Introduction

Statistical risk models are tools for estimating how a portfolio might behave under uncertainty, especially how its holdings move together. That sounds straightforward until you face the real problem: a portfolio rarely fails because each position becomes risky in isolation. It fails because correlations change, hidden exposures stack up, and yesterday’s calm data are used to make decisions about tomorrow’s stress. The reason statistical risk models exist is to compress messy market history into a structure you can actually use for portfolio construction, monitoring, and control.

At the foundation sits a simple portfolio idea that goes back to Markowitz: investors form beliefs about future performance from observation and experience, then choose portfolios by balancing expected return against variance. That framing matters because risk models live exactly in that gap between raw observation and usable belief. A portfolio optimizer, a risk-parity allocator, or a VaR engine cannot work directly from intuition alone; each needs numerical estimates of volatility, covariance, and sometimes the full loss distribution. Statistical risk models are the machinery that produces those estimates.

The central difficulty is not measuring the risk of one asset. It is measuring the joint behavior of many assets at once. If you own N securities, the covariance matrix contains N variances and N(N-1)/2 distinct pairwise covariances, so the number of quantities to estimate grows roughly with N^2. Even for a moderate universe, that is a huge object to estimate from limited history. And market data are noisy: some co-movements are persistent, some are accidental, and some disappear exactly when you need them most. A useful risk model therefore does not merely summarize history. It imposes structure on history so that the estimates are stable enough to guide decisions.
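To see why limited history is a problem, the sketch below simulates independent returns for 500 hypothetical stocks and checks the rank of the sample covariance matrix. With about one year of daily data (T = 250 days), the demeaned sample covariance has rank at most T - 1, so it is singular and some portfolios appear to carry zero risk. The simulated returns and their 1% daily volatility are assumptions for illustration only.

```python
import numpy as np

def sample_covariance_rank(n_assets: int, n_days: int, seed: int = 0) -> int:
    """Rank of the sample covariance of simulated i.i.d. returns (illustration only)."""
    rng = np.random.default_rng(seed)
    # Hypothetical daily returns: rows are days, columns are assets, 1% daily volatility.
    returns = rng.normal(0.0, 0.01, size=(n_days, n_assets))
    sample_cov = np.cov(returns, rowvar=False)  # N x N matrix, demeaned, ddof=1
    return int(np.linalg.matrix_rank(sample_cov))

n_assets = 500
for n_days in (250, 1000):
    rank = sample_covariance_rank(n_assets, n_days)
    print(f"N={n_assets}, T={n_days}: rank {rank} of {n_assets}")
```

Only once the history is comfortably longer than the universe does the sample matrix become full rank, and even then many of its smaller eigenvalues are dominated by noise.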

What problem does a statistical risk model solve?

A portfolio’s risk is not the weighted average of its constituents’ standalone volatilities. What matters is how positions interact. Two volatile assets can offset each other if they tend to move in opposite directions; two seemingly safe assets can become dangerous if they are both exposed to the same hidden driver. The practical object you want is the portfolio variance, which depends on weights, volatilities, and correlations together. In words, portfolio risk comes from the entire covariance matrix, not from a list of asset-level risk scores.
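A small numerical sketch makes the point concrete. It computes portfolio volatility as the square root of w'Σw for two hypothetical assets with 30% volatility each and equal weights. The weighted average of standalone volatilities is 30% in every case, but the portfolio figure depends entirely on the assumed correlation.

```python
import math

def portfolio_volatility(weights, vols, corr):
    """sqrt(w' Sigma w), where Sigma[i][j] = corr[i][j] * vols[i] * vols[j]."""
    n = len(weights)
    variance = 0.0
    for i in range(n):
        for j in range(n):
            variance += weights[i] * weights[j] * corr[i][j] * vols[i] * vols[j]
    return math.sqrt(variance)

weights = [0.5, 0.5]
vols = [0.30, 0.30]  # hypothetical 30% volatility for each asset
for rho in (1.0, 0.0, -0.5):
    corr = [[1.0, rho], [rho, 1.0]]
    print(f"correlation {rho:+.1f}: portfolio vol {portfolio_volatility(weights, vols, corr):.4f}")
```

With perfect correlation the portfolio keeps the full 30%; at zero correlation it falls to about 21.2%, and at -0.5 to 15%, even though no individual position has changed.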

That creates a basic estimation problem. Historical returns give you one sample path of the world, not the true underlying covariance structure. If you estimate every covariance directly from raw sample data, the result is often unstable, especially when the asset universe is large relative to the length of history. Small estimation errors then become large portfolio errors because optimizers exploit them. A portfolio optimizer does not know which inputs are noisy; it treats all estimated risk differences as real opportunities. This is one reason risk models need regularization, shrinkage, factor structure, or other statistical discipline.
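One of the disciplines mentioned above, shrinkage, can be sketched in a few lines. This example pulls a sample covariance matrix toward its own diagonal (keeping variances, damping covariances) with a hand-picked intensity of 0.5. That fixed intensity is an assumption; estimators such as Ledoit-Wolf choose the intensity from the data, and other targets, such as a single-index or constant-correlation matrix, are also used. The simulated returns are hypothetical, and because they are truly independent the diagonal target is exactly right here, which flatters the method.

```python
import numpy as np

def shrink_to_diagonal(sample_cov: np.ndarray, intensity: float) -> np.ndarray:
    """Linear shrinkage: intensity * diag(S) + (1 - intensity) * S.

    The intensity is fixed by hand in this sketch rather than estimated from data.
    """
    target = np.diag(np.diag(sample_cov))  # keep variances, zero out covariances
    return intensity * target + (1.0 - intensity) * sample_cov

rng = np.random.default_rng(1)
n_assets, n_days = 50, 60  # history barely longer than the universe (hypothetical)
returns = rng.normal(0.0, 0.01, size=(n_days, n_assets))
sample = np.cov(returns, rowvar=False)
shrunk = shrink_to_diagonal(sample, intensity=0.5)

print(f"condition number, sample: {np.linalg.cond(sample):.0f}")
print(f"condition number, shrunk: {np.linalg.cond(shrunk):.1f}")
```

A lower condition number means the matrix is far less sensitive when an optimizer inverts it, which is exactly where raw sample estimates cause trouble.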

There is also a horizon problem. A risk model estimated from recent daily returns may be useful for short-horizon monitoring, but less useful for long-term strategic allocation. A model calibrated on long history may smooth over the very regime changes that matter for tactical control. This is why practical systems often distinguish between daily, short-horizon, and long-horizon forecasts. The underlying question is always the same: which part of the past should be allowed to influence the future estimate, and by how much?

How do factor models reduce complexity and explain asset co-movement?

The most important idea in a statistical risk model is that asset returns are not treated as independent facts. They are explained as the combination of a smaller set of common drivers plus asset-specific noise. This is the idea that makes the topic click.

Suppose you hold 500 equities. You could try to estimate every pairwise covariance directly, but that gives you an enormous and noisy matrix. Or you can say something more structured: many of those stocks co-move because they share exposure to broad market moves, industries, styles such as size or momentum, and a residual component unique to each company. Once you describe returns in terms of common factors, the covariance problem becomes much smaller. Instead of estimating every asset-against-asset covariance independently, you estimate factor volatilities, factor correlations, each asset’s factor exposures, and the residual risk left over.
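The reduction in the number of quantities to estimate is easy to count. The sketch below compares a full covariance matrix with a K-factor model for 500 stocks; K = 20 is a hypothetical choice, and the count ignores the fact that fundamental models typically build exposures from observable characteristics rather than estimating them from return covariances.

```python
def full_covariance_params(n_assets: int) -> int:
    # N variances plus N(N-1)/2 distinct covariances = N(N+1)/2
    return n_assets * (n_assets + 1) // 2

def factor_model_params(n_assets: int, n_factors: int) -> int:
    exposures = n_assets * n_factors               # one loading per asset per factor
    factor_cov = n_factors * (n_factors + 1) // 2  # symmetric K x K factor covariance
    specific = n_assets                            # one specific variance per asset
    return exposures + factor_cov + specific

print(full_covariance_params(500))   # 125250
print(factor_model_params(500, 20))  # 10000 + 210 + 500 = 10710
```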

Mechanically, the usual decomposition is this: an asset’s return is modeled as exposure to common factors plus an idiosyncratic term. If asset i has exposure β[i,k] to factor k, and factor k has return f[k], then the asset return is approximated by the sum of these factor contributions plus residual noise. Once you accept that structure, the covariance matrix of asset returns can be rebuilt from three parts: factor exposures, factor covariance, and specific risk. That is why factor models are so central to portfolio risk.
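In matrix form, the decomposition just described is usually written as below. This is a standard sketch under the simplifying assumption that residuals are uncorrelated with the factors and with each other; B stacks the exposures β[i,k], F is the factor covariance matrix, D holds the specific variances, and w is the vector of portfolio weights.

```latex
r_i = \sum_{k=1}^{K} \beta_{i,k}\, f_k + \varepsilon_i, \qquad i = 1, \dots, N

\Sigma = B F B^{\top} + D, \qquad B_{ik} = \beta_{i,k}, \quad F = \operatorname{Cov}(f), \quad D = \operatorname{diag}\big(\operatorname{Var}(\varepsilon_1), \dots, \operatorname{Var}(\varepsilon_N)\big)

\sigma_p^2 = w^{\top} \Sigma w = (B^{\top} w)^{\top} F \,(B^{\top} w) + w^{\top} D w
```

The last line is what makes factor models useful for portfolios: total variance splits into a factor part, driven by the portfolio's aggregate exposures B'w, and a specific part.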

This is not magic. It is a statement about shared causes. If two semiconductor firms move together, the model should attribute much of that co-movement to common industry and market exposures rather than treat it as a mysterious asset-pair fact. If two banks move together because of interest-rate sensitivity and credit conditions, again the explanation should run through common drivers. The payoff is not just computational efficiency. It is that the model becomes more interpretable, more stable, and often more useful out of sample.

Fundamental vs. statistical (PCA) factor models

| Approach | How factors are chosen | Interpretability | Best for | Key risk |
|---|---|---|---|---|
| Fundamental | Economic hypotheses | High | Communication and exposure control | Misspecified factors |
| Statistical (PCA) | Data-driven extraction | Low | Discovering latent drivers | Noise and instability |
| Hybrid | Combined rules + PCA | Medium | Stability with flexibility | Complex calibration |

There are two broad ways to decide what the common drivers are. In a fundamental factor model, the factors are chosen because they have economic or portfolio meaning: market, industry, size, value, momentum, liquidity, and similar dimensions. The Barra-style models used in institutional equity risk are the canonical example. MSCI’s USE4, for instance, describes risk through industry factors based on GICS and style factors such as beta, liquidity, residual volatility, momentum, size, book-to-price, growth, leverage, dividend yield, earnings yield, and newer terms like non-linear beta. Here the model is saying: these are the recurring structures by which stocks tend to move together.

In a statistical factor model, the factors are extracted from the return data themselves without requiring them to be economically named in advance. Principal component analysis, or PCA, is the standard tool. PCA looks for orthogonal directions in return space that explain as much variation as possible. The first principal component captures the direction of maximum variance; the next captures the largest remaining variance subject to being orthogonal to the first; and so on. In portfolio language, PCA tries to find the hidden axes along which assets collectively move.
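A minimal PCA sketch, assuming simulated returns driven by a single hidden common factor plus independent noise, shows the mechanics described above: eigen-decompose the sample covariance, sort by eigenvalue, and read each eigenvalue's share of total variance. The data-generating process is hypothetical; with real equity returns the leading component often resembles a broad market factor, but that is an empirical tendency rather than a guarantee.

```python
import numpy as np

def principal_components(returns: np.ndarray):
    """Eigen-decomposition of the sample covariance, sorted by explained variance."""
    cov = np.cov(returns, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(cov)  # eigh returns ascending eigenvalues for symmetric input
    order = np.argsort(eigvals)[::-1]       # largest variance first
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    explained = eigvals / eigvals.sum()
    return explained, eigvecs

# Hypothetical data: one hidden common driver with random loadings, plus independent noise.
rng = np.random.default_rng(7)
n_days, n_assets = 500, 30
market = rng.normal(0.0, 0.01, size=(n_days, 1))
loadings = rng.uniform(0.5, 1.5, size=(1, n_assets))
returns = market @ loadings + rng.normal(0.0, 0.01, size=(n_days, n_assets))

explained, components = principal_components(returns)
print(f"share of variance explained by PC1: {explained[0]:.2f}")
print(f"share of variance explained by PC2: {explained[1]:.2f}")
```

The first component recovers the hidden driver without being told it exists, while the remaining components mostly describe noise; in real data, deciding where signal ends and noise begins is the hard part.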

The distinction matters because the two approaches solve slightly different problems. Fundamental models are often better for interpretation, exposure control, and communication. A portfolio manager can understand an unintended overweight to momentum or a sector concentration in energy. Statistical models are often better at discovering co-movement that the predefined taxonomy missed. They can capture latent structure, especially in markets where economic classifications are incomplete or where the dominant correlation pattern is not well described by traditional factors.

But the choice is not fundamental-versus-statistical in a pure sense. In practice, many systems blend the two. They may use economically defined factors while estimating correlations statistically, or they may use PCA inside narrower blocks of the covariance estimation problem. The organizing principle is always the same: use enough structure to stabilize estimation, but not so much that the model becomes blind to the data.

Example: how factor structure makes portfolio risk estimates more stable

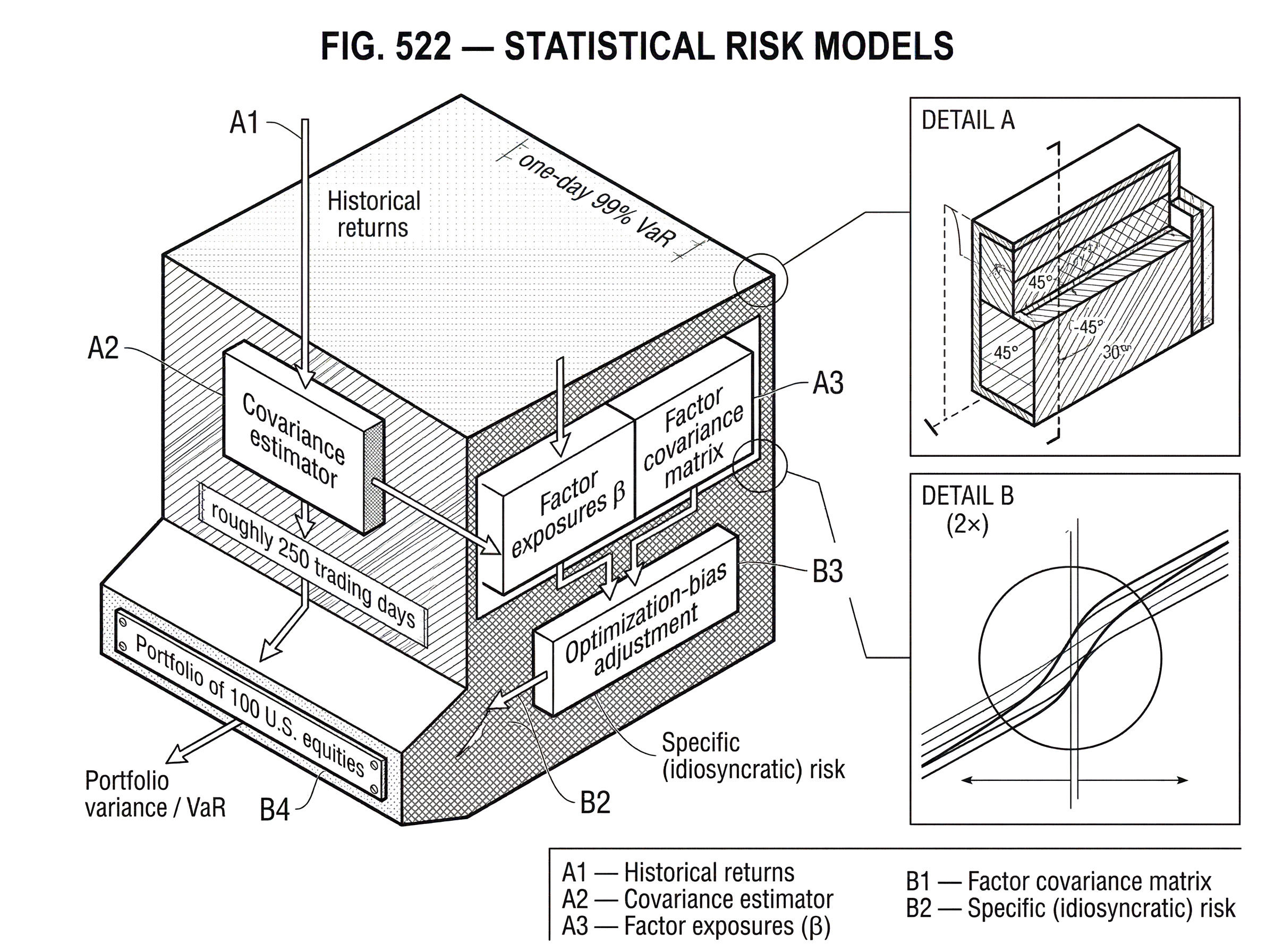

Imagine a portfolio of 100 U.S. equities spread across technology, banks, retailers, and industrials. You want to estimate next-day risk. If you simply compute the sample covariance matrix from the last year of daily returns, you get a large matrix shaped by every temporary episode in that year: earnings shocks, sector rotations, one-off news, and noise. It contains information, but it also contains many accidental relationships.

Now suppose you instead model each stock as exposed to a broad market factor, a set of industry factors, and style factors such as size, momentum, and liquidity, plus a stock-specific residual. A semiconductor stock and a software stock may both load on the market, but differ in industry exposure. A small-cap bank and a large money-center bank may share industry exposure yet differ on size and leverage-related characteristics. The model no longer asks whether Stock A and Stock B happened to have a covariance of exactly 0.00037 in the sample. It asks why they moved together and whether that cause is likely to persist.

When you aggregate up to the portfolio, the risk estimate becomes more intelligible. Perhaps 45% of forecast variance comes from market exposure, 25% from industry concentration, 15% from style tilts, and the rest from stock-specific risk. If the manager then reduces the momentum tilt or diversifies industry concentration, the model can tell a coherent story about why forecast risk changes. This is one reason statistical risk models are used not only to measure risk but to shape portfolios before trades are placed.
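The kind of decomposition described above can be sketched with a toy four-stock version of the example. Every number below (exposures, factor volatilities and correlations, specific volatilities, weights) is hypothetical, and the per-factor split uses the common convention x_k (F x)_k, where x = B'w, which sums exactly to the factor variance; vendor reports may group or allocate cross-terms differently.

```python
import numpy as np

factor_names = ["market", "tech", "banks", "momentum"]
# Hypothetical exposures: rows are stocks, columns are factors.
B = np.array([
    [1.1, 1.0, 0.0, 0.5],   # semiconductor
    [1.0, 1.0, 0.0, 0.2],   # software
    [1.2, 0.0, 1.0, -0.3],  # small-cap bank
    [0.9, 0.0, 1.0, -0.1],  # money-center bank
])
# Hypothetical daily factor volatilities and correlations.
factor_vols = np.array([0.010, 0.008, 0.007, 0.005])
factor_corr = np.array([
    [1.0, 0.2, 0.3, 0.0],
    [0.2, 1.0, -0.1, 0.1],
    [0.3, -0.1, 1.0, 0.0],
    [0.0, 0.1, 0.0, 1.0],
])
F = np.outer(factor_vols, factor_vols) * factor_corr
D = np.diag(np.array([0.015, 0.012, 0.014, 0.009]) ** 2)  # specific variances

weights = np.array([0.3, 0.2, 0.3, 0.2])
x = B.T @ weights                # portfolio factor exposures
factor_contrib = x * (F @ x)     # per-factor pieces of x'Fx
specific_var = weights @ D @ weights
total_var = factor_contrib.sum() + specific_var

Sigma = B @ F @ B.T + D          # full asset covariance rebuilt from the factor model
assert np.isclose(total_var, weights @ Sigma @ weights)

for name, c in zip(factor_names, factor_contrib):
    print(f"{name:>9}: {c / total_var:6.1%}")
print(f"{'specific':>9}: {specific_var / total_var:6.1%}")
```

Individual factor contributions can be negative when one exposure hedges another, so the percentages need not all be positive even though they sum to 100%.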

The example also shows where things can go wrong. If the factor set omits a real common driver, the model pushes that risk into the residual bucket and may understate how correlated “specific” positions really are. If the estimated factor covariance is stale, a calm-period forecast can understate current stress. And if the optimizer trusts every decimal place of the model, it can build positions that are fragile to estimation error rather than genuinely diversified.
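The first failure mode, an omitted common driver leaking into the residual, can be simulated directly. In this hypothetical setup two stocks share a market factor and an industry factor, but the model regresses each stock on the market only. The residuals it labels as "specific" inherit the shared industry driver and end up strongly correlated. All parameters are made-up illustration values.

```python
import numpy as np

rng = np.random.default_rng(3)
n_days = 1000
market = rng.normal(0.0, 0.010, n_days)
industry = rng.normal(0.0, 0.008, n_days)  # the common driver the model leaves out
stock_a = 1.0 * market + 1.0 * industry + rng.normal(0.0, 0.005, n_days)
stock_b = 0.9 * market + 1.0 * industry + rng.normal(0.0, 0.005, n_days)

def residual_after_market(r: np.ndarray, m: np.ndarray) -> np.ndarray:
    # OLS slope on the market only; what remains is what a one-factor model calls "specific".
    beta = np.cov(r, m)[0, 1] / np.var(m, ddof=1)
    return r - beta * m

res_a = residual_after_market(stock_a, market)
res_b = residual_after_market(stock_b, market)
print(f"correlation of 'specific' returns: {np.corrcoef(res_a, res_b)[0, 1]:.2f}")
```

A model that treats these residuals as independent would understate the risk of holding both stocks.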

Why covariance estimation is the core of a statistical risk model

| Estimator | Weighting | Responsiveness | Typical use | Main drawback |
|---|---|---|---|---|
| Sample covariance | Equal weights | Low | Baseline historical estimate | High estimation error |
| EWMA (RiskMetrics) | Exponential weighting | High | Short-horizon monitoring | Procyclical spikes |
| GARCH-family | Model-based dynamics | Medium | Asset-level volatility forecasting | Estimation complexity |
| Shrinkage / Factor | Structured shrinkage | Low–Medium | Large-N regularization | Risk of misspecified structure |

Although factor language gets most of the attention, covariance estimation is the engine of a statistical risk model. Whether you model assets directly or through factors, the key output is still an estimate of future variance and covariance.

A simple historical estimator gives equal weight to past observations. That is easy to compute but often too slow to react when volatility changes. RiskMetrics popularized a different idea: estimate volatility with an exponentially weighted moving average, or EWMA, so recent returns matter more than older ones. The underlying judgment is that volatility clusters and recent observations carry more information about near-term risk than distant history. In the RiskMetrics framework, two modeling assumptions are emphasized: risk-factor returns are treated as normally distributed, and volatilities are estimated with EWMA. Those assumptions made the system tractable and standardized, which helped it become an industry benchmark, even though both assumptions are simplifications.
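The EWMA recursion described above fits in a few lines. The sketch uses the decay factor λ = 0.94 that RiskMetrics published for daily data, assumes zero-mean returns, and seeds the recursion with the sample mean square, which is one of several reasonable initialization choices. The return series is hypothetical: 100 calm days followed by 10 volatile days.

```python
import math

def ewma_variance_path(returns, lam=0.94, initial_variance=None):
    """EWMA variance: sigma2_t = lam * sigma2_{t-1} + (1 - lam) * r_{t-1}**2.

    Assumes zero-mean returns; element t of the result is the forecast for day t + 1.
    """
    if not returns:
        raise ValueError("need at least one return")
    if initial_variance is None:
        # Seed with the equal-weighted mean square of the sample (an assumption).
        initial_variance = sum(r * r for r in returns) / len(returns)
    variance = initial_variance
    path = []
    for r in returns:
        variance = lam * variance + (1.0 - lam) * r * r
        path.append(variance)
    return path

# Hypothetical series: 100 calm days (+/-0.5%) followed by 10 stressed days (+/-3%).
calm = [0.005 if i % 2 == 0 else -0.005 for i in range(100)]
stressed = [0.03 if i % 2 == 0 else -0.03 for i in range(10)]
returns = calm + stressed

ewma_vol = math.sqrt(ewma_variance_path(returns)[-1])
equal_weight_vol = math.sqrt(sum(r * r for r in returns) / len(returns))
print(f"EWMA vol: {ewma_vol:.4f}  equal-weighted vol: {equal_weight_vol:.4f}")
```

After ten stressed days the EWMA forecast is roughly twice the equal-weighted figure, which still averages in 100 calm days.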

This is a good place to separate intuition from fact. The intuition is that markets have memory in volatility but not a perfectly stable one, so fading the influence of older data can improve responsiveness. The fact is that any weighting scheme is a modeling choice. If you respond too slowly, you miss regime shifts; if you respond too quickly, your estimates become noisy and procyclical. Practical models often try to balance this with regime adjustments, smoothing, and longer-run anchors.
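One way to make the responsiveness trade-off concrete is the half-life implied by an EWMA decay factor λ: the number of days after which an observation's weight has fallen to half, found by solving λ^h = 0.5. The λ values below are illustrative; 0.94 is the RiskMetrics daily value.

```python
import math

def half_life(lam: float) -> float:
    """Days until an observation's EWMA weight halves: solve lam**h = 0.5 for h."""
    return math.log(0.5) / math.log(lam)

for lam in (0.90, 0.94, 0.97, 0.99):
    print(f"lambda={lam:.2f}: half-life {half_life(lam):5.1f} days")
```

At λ = 0.94 an observation loses half its weight in about 11 trading days; at 0.99 it takes roughly 69 days, trading responsiveness for stability.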

MSCI’s USE4 brochure makes that tradeoff explicit. It describes a Volatility Regime Adjustment that calibrates factor volatilities to current market levels so the model reacts faster when volatility rises and does not remain too elevated after stress subsides. That tells you something important about real-world risk modeling: the raw covariance estimator is rarely accepted untouched. Vendors and practitioners add corrections because they know the statistical base model otherwise tends to lag the market.

What is optimization bias and how can it break risk models?

| Approach | What it does | When to use | Effect on portfolios | Trade-off |
|---|---|---|---|---|
| No adjustment | Uses raw estimates | Avoid for optimizers | Concentrated, fragile | Exploits estimation error |
| Shrinkage / regularization | Pulls toward target | When data are noisy | Reduces overfitting | Can damp true signals |
| Optimization-bias adjustment | Scales covariances by historical bias | For optimizer-driven books | Improves optimized forecasts | Needs historical calibration |
| Constraints / robust optimization | Limits extreme weights | When stability is required | More diversified portfolios | Possible return drag |

There is a particularly important failure mode in portfolio construction: optimization bias. If you optimize a portfolio using estimated expected returns and covariance, the optimizer naturally selects positions that look attractive under the model. But some of those apparent opportunities are artifacts of estimation error. As a result, the optimized portfolio often ends up concentrated in directions where the covariance matrix understated risk.
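A stylized simulation shows the mechanism. It assumes 50 truly independent assets with identical 1% daily volatility, estimates their covariance from 100 days of simulated returns, and builds the fully invested minimum-variance portfolio (short positions allowed) from the estimate. The optimizer's predicted risk comes out below the risk the portfolio actually carries under the true covariance. This illustrates the effect only; it is not any vendor's optimization-bias adjustment or calibration.

```python
import numpy as np

def min_variance_weights(cov: np.ndarray) -> np.ndarray:
    """Fully invested minimum-variance weights w = cov^{-1} 1 / (1' cov^{-1} 1); shorts allowed."""
    ones = np.ones(cov.shape[0])
    raw = np.linalg.solve(cov, ones)
    return raw / raw.sum()

rng = np.random.default_rng(42)
n_assets, n_days = 50, 100
true_cov = np.eye(n_assets) * 0.01 ** 2  # hypothetical: independent assets, 1% daily vol
returns = rng.normal(0.0, 0.01, size=(n_days, n_assets))
est_cov = np.cov(returns, rowvar=False)

w = min_variance_weights(est_cov)
predicted_vol = np.sqrt(w @ est_cov @ w)  # what the model believes
realized_vol = np.sqrt(w @ true_cov @ w)  # what the portfolio actually carries
print(f"predicted {predicted_vol:.5f}, true {realized_vol:.5f}, "
      f"ratio {realized_vol / predicted_vol:.2f}")
```

Because the true covariance here is a scaled identity, equal weights are the true minimum-variance portfolio; every deviation the optimizer makes is driven purely by estimation noise, and the optimizer systematically chooses deviations that make risk look smaller than it is.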

This is why vendors like MSCI include an Optimization Bias Adjustment that scales parts of the covariance matrix up or down depending on where risk has historically been under- or over-forecast for optimized portfolios. The underlying mechanism is simple. Optimization acts like a microscope for model error. A portfolio built by hand may not lean hard enough into mistaken estimates for the problem to become obvious, but an optimizer will. So the risk model must be designed not just to fit return data, but to survive contact with optimization.

A common misunderstanding is to treat a risk model as a passive descriptive object: estimate covariance, then hand it off. In practice, the downstream use matters. A covariance estimate that looks statistically reasonable in isolation may be dangerous once inserted into a mean-variance optimizer, because the optimizer hunts the weakest parts of the estimate. Good risk models are built with that adversarial interaction in mind.

How do statistical risk models feed into VaR, and what are the limits?

Statistical risk models often show up to end users in the form of Value at Risk, or VaR, but VaR is not the model itself. This distinction matters. RiskMetrics states it plainly: VaR is a risk measure, while RiskMetrics is a model that can be used to calculate VaR among other outputs.

A statistical risk model provides the ingredients for VaR: volatilities, correlations, factor dynamics, and assumptions about the distribution of returns. VaR then compresses that estimated loss distribution into a threshold such as, “with 99% confidence, one-day loss should not exceed X.” If the underlying model is delta-normal, the VaR depends heavily on covariance estimates and normality assumptions. If the model is historical simulation or Monte Carlo, the path from covariance to VaR is less direct, but the risk model still governs how scenarios are generated or interpreted.
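The delta-normal case and a simple historical-simulation variant can be sketched as follows. Both functions are hypothetical helpers: the delta-normal one assumes zero-mean, normally distributed, linear P&L, and the historical one uses a plain order-statistic rule, whereas production systems differ in how they interpolate between observations. The portfolio numbers are made up.

```python
import math
from statistics import NormalDist

def delta_normal_var(weights, cov, portfolio_value, confidence=0.99):
    """One-day VaR = z * sqrt(w' cov w) * value, assuming zero-mean normal, linear P&L."""
    n = len(weights)
    variance = sum(weights[i] * cov[i][j] * weights[j] for i in range(n) for j in range(n))
    z = NormalDist().inv_cdf(confidence)  # about 2.326 at 99%
    return z * math.sqrt(variance) * portfolio_value

def historical_var(pnl_history, confidence=0.99):
    """Loss level exceeded on roughly (1 - confidence) of past days (simple order-statistic rule)."""
    losses = sorted(-p for p in pnl_history)  # losses as positive numbers, smallest first
    index = max(math.ceil(confidence * len(losses)) - 1, 0)
    return losses[index]

# Hypothetical two-asset book: 1% and 2% daily vols, correlation 0.3, $1m invested.
vols, rho = [0.01, 0.02], 0.3
cov = [[vols[0] ** 2, rho * vols[0] * vols[1]],
       [rho * vols[0] * vols[1], vols[1] ** 2]]
print(f"delta-normal 99% VaR: {delta_normal_var([0.6, 0.4], cov, 1_000_000):,.0f}")

# Hypothetical P&L history: 100 days evenly spaced from -50,000 to +49,000.
pnl = [1_000.0 * k for k in range(-50, 50)]
print(f"historical 99% VaR: {historical_var(pnl):,.0f}")
```

In the delta-normal function the covariance matrix is the only risk input, which is why errors in the covariance engine pass straight through to the VaR number.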

This is also where limitations become sharp. A model that assumes normal returns can underestimate tail losses and nonlinear exposures. Jorion’s practitioner treatment of VaR emphasizes computing, backtesting, and forecasting correlations, but also warns of pitfalls in risk-management systems. Danielsson’s critique goes further: market data are endogenous to market behavior, so models estimated in stable periods may offer little guidance in crises. That is not a minor technical objection. It points to a deep structural problem: if market participants use similar models and react similarly, the data-generating process changes with behavior.

So VaR is best understood as a reporting surface above a deeper engine. If the covariance engine is unstable, the VaR number is unstable. If the factor structure misses nonlinear or stress correlations, the VaR hides that miss behind a single percentile. This is why serious risk practice treats VaR as one summary among several, not as a complete picture.

How should statistical risk models be backtested and validated?

Because statistical risk models are built from assumptions and imperfect data, they require disciplined validation. The Federal Reserve’s SR 11-7 guidance defines a model broadly as any quantitative method that transforms inputs into estimates, and defines model risk as the adverse consequences of incorrect or misused model outputs. That is a useful framing because it identifies two different failure modes. A model can be wrong in construction, or it can be used wrongly by people who misunderstand its assumptions and limitations.

Validation therefore has to test both mechanics and use. Conceptual soundness asks whether the structure makes sense for the intended problem. Ongoing monitoring asks whether inputs and outputs remain stable enough to trust. Outcomes analysis, including backtesting, asks whether realized results are at least broadly consistent with what the model forecast.

For market-risk models, Basel’s backtesting framework gives a very concrete version of this idea. A one-day 99% VaR model is compared against roughly 250 trading days of realized outcomes. Days when realized loss exceeds the VaR are called exceptions. Four or fewer exceptions keep the model in the green zone; five to nine move it into the yellow zone and ten or more into the red zone, triggering supervisory concern and potentially higher capital. The framework explicitly acknowledges that backtesting has limited statistical power: no single threshold perfectly separates good from bad models. That humility is important. Validation is not a proof of truth; it is an organized attempt to detect serious model failure.
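The counting logic can be sketched as follows, assuming a 99% one-day VaR, 250 observations, and the Basel zone boundaries. The binomial helper shows why statistical power is limited: even a perfectly calibrated model produces five or more exceptions about 11% of the time, landing in the yellow zone by chance. The function names are hypothetical.

```python
from math import comb

def basel_zone(exceptions: int) -> str:
    """Traffic-light zone for a 99% one-day VaR backtested on 250 observations."""
    if exceptions <= 4:
        return "green"
    if exceptions <= 9:
        return "yellow"
    return "red"

def count_exceptions(var_forecasts, realized_losses):
    # An exception is a day on which the realized loss exceeds that day's VaR forecast.
    return sum(1 for v, loss in zip(var_forecasts, realized_losses) if loss > v)

def prob_at_least(k: int, n: int = 250, p: float = 0.01) -> float:
    """Binomial chance that a correctly calibrated model still produces k or more exceptions."""
    return sum(comb(n, i) * p ** i * (1 - p) ** (n - i) for i in range(k, n + 1))

print(basel_zone(3), basel_zone(6), basel_zone(11))
print(f"P(>=5 exceptions | correct model) = {prob_at_least(5):.3f}")
```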

The strongest lesson here is that no statistical risk model is self-justifying. Even if the math is elegant, the model must be monitored against the world and against the decisions it drives. Quiet periods are especially deceptive because they can make almost any model look better than it is.

When do statistical risk models fail? Common assumption breakages

Statistical risk models are most useful when market structure is persistent enough that past co-movement tells you something about near-future co-movement. They become fragile when that persistence weakens.

One assumption that often breaks is correlation stability. In stress, assets that normally diversify each other can suddenly move together because liquidity evaporates, funding constraints bind, or macro shocks dominate micro differences. Another assumption that breaks is distributional shape. Normal approximations can miss fat tails, skewness, and jump risk. A third fragile assumption is linearity. Factor models work most cleanly when portfolio returns are approximately linear in underlying factors. Options, path-dependent instruments, and exposures with threshold effects can violate that approximation.

There is also a data-quality boundary. Basel’s distinction between modellable and non-modellable risk factors reflects a practical truth: if you do not have enough real price observations, the statistical estimate is too weak to support confident modeling. In large portfolios, this becomes a recurrent issue for illiquid assets, bespoke instruments, or very granular risk buckets. At that point, add-ons, conservative assumptions, or stress methods must supplement the statistical model.

And then there is the endogenous-data problem. If many institutions estimate risk from similar recent histories, falling volatility can invite leverage, which in turn makes the eventual regime shift more violent. In that sense, risk estimates can be procyclical: they look safest when leverage is easiest to add. This does not make statistical models useless. It means they should be treated as conditional maps of the recent regime, not as timeless laws of market behavior.

How are statistical risk models used in practice for portfolio construction and monitoring?

The practical value of a statistical risk model is that it turns a portfolio into a set of manageable exposures. A manager can ask not just “how risky is this portfolio?” but “how much of that risk comes from market direction, sector bets, style tilts, residual concentration, or changing volatility?” That decomposition supports position sizing, risk budgeting, hedge design, optimizer constraints, and performance attribution.

This is why neighboring portfolio ideas depend on statistical risk models. Risk parity needs volatility and correlation estimates to size allocations by risk rather than by dollars. Sharpe and Sortino ratios summarize reward relative to risk, but they depend on some underlying estimate of volatility or downside behavior. VaR and expected shortfall sit even closer: they are direct outputs of statistical loss models. PCA-based portfolio diagnostics are another nearby example, especially when managers want to know whether a supposedly diversified book is actually concentrated in a few latent correlation trades.
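Risk budgeting rests on splitting portfolio volatility into per-asset contributions. The sketch below uses the standard Euler decomposition RC_i = w_i (Σw)_i / σ_p, whose terms sum to σ_p, and checks it on inverse-volatility weights, a simple risk-parity heuristic. The three volatilities and the zero-correlation assumption are hypothetical; zero correlation is precisely the case in which inverse-volatility weights equalize risk contributions.

```python
import math

def risk_contributions(weights, cov):
    """Euler decomposition: RC_i = w_i * (cov @ w)_i / sigma_p; contributions sum to sigma_p."""
    n = len(weights)
    cov_w = [sum(cov[i][j] * weights[j] for j in range(n)) for i in range(n)]
    sigma_p = math.sqrt(sum(weights[i] * cov_w[i] for i in range(n)))
    return [weights[i] * cov_w[i] / sigma_p for i in range(n)], sigma_p

def inverse_vol_weights(vols):
    inv = [1.0 / v for v in vols]
    total = sum(inv)
    return [x / total for x in inv]

vols = [0.05, 0.10, 0.20]  # hypothetical annualized vols: bonds, credit, equities
corr = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]  # zero correlation (assumption)
cov = [[corr[i][j] * vols[i] * vols[j] for j in range(3)] for i in range(3)]

w = inverse_vol_weights(vols)
rc, sigma_p = risk_contributions(w, cov)
print([round(x, 4) for x in w])             # [0.5714, 0.2857, 0.1429]
print([round(x / sigma_p, 4) for x in rc])  # each asset carries one third of the risk
```

With non-zero correlations the contributions diverge, and a full equal-risk-contribution allocation has to be solved numerically, which is why risk parity is only as good as the correlation estimates behind it.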

Institutional workflows reflect this breadth. Vendor models like Barra are used for daily monitoring, rebalancing, scenario analysis, and backtesting. Simpler frameworks like RiskMetrics remain influential because they offer a standardized baseline. Regulatory systems impose further layers of testing, comparators, and conservatism. Across all of these settings, the point is the same: statistical risk models provide a disciplined estimate of the covariance and exposure structure that portfolio decisions implicitly rely on anyway.

Conclusion

A statistical risk model is, at heart, a structured guess about how portfolio returns co-move. Its job is to turn noisy historical data into stable estimates of volatility, correlation, and factor exposure so investors can build, monitor, and constrain portfolios.

The key idea to remember is simple: portfolio risk is mostly about shared movement, not isolated positions. Statistical risk models exist to explain that shared movement with enough structure to be useful and enough humility to be monitored, challenged, and supplemented when markets change.

Frequently Asked Questions

Factor models reduce an N-by-N covariance estimation problem to a much smaller set of factor volatilities, factor correlations, asset exposures, and specific risks, which makes estimates more stable and interpretable; the trade-off is that omitted or misspecified factors can hide real common drivers by pushing them into residuals and understate tail co-movement.

Fundamental factor models pre‑define economically meaningful drivers (market, sector, size, momentum) and are easier to interpret and control, while statistical factor models (e.g., PCA) extract latent directions directly from returns and can discover structure missed by predefined taxonomies; in practice many systems blend both approaches to balance interpretability and data-driven discovery.

RiskMetrics popularized using an exponentially weighted moving average (EWMA) so recent returns carry more weight when estimating volatility, on the judgment that volatility clusters; this makes short-horizon forecasts more responsive but can also increase noise and procyclicality if the decay is too fast.

Optimization bias occurs because optimizers exploit estimation error and concentrate positions where risk has been understated; vendors therefore apply adjustments (MSCI’s Optimization Bias Adjustment is an example) or inflate uncertain parts of the covariance matrix to avoid fragile, optimizer-driven concentrations.

A statistical risk model supplies volatilities, correlations, factor dynamics, and distributional assumptions that feed into VaR, but VaR itself is just a summary statistic; if the underlying model assumes normality, linearity, or stale covariances, the resulting VaR can understate tail losses and nonlinear exposures, so VaR should be treated alongside other diagnostics.

Validation must cover both model mechanics and use: conceptual soundness, ongoing monitoring, and outcomes analysis/backtesting; regulators and supervisors formalize this (e.g., Federal Reserve SR 11‑7 and Basel backtesting rules), so firms are expected to inventory models, backtest performance, and adjust or restrict models that fail predefined thresholds.

Models break down when correlation stability, distributional shape, or linearity assumptions fail: in crises normally uncorrelated assets can co-move, fat tails and jumps invalidate normal approximations, nonlinear instruments violate linear factor assumptions, and estimation can be undermined for illiquid or non‑modellable factors. These failure modes make models fragile unless they are supplemented by stress tests and conservative add‑ons.

Covariance estimation is the operational core of a risk model: whether you use factors or not, the final task is to deliver reliable variance and covariance forecasts, and vendor systems routinely augment raw estimators (EWMA, regime adjustments) because unadjusted historical estimates tend to lag regime changes or be too noisy.

When price history is thin or assets are illiquid (the Basel distinction between modellable and non-modellable risk factors), statistical estimates become unreliable, so practitioners either use conservative add‑ons, limit model reliance for those buckets, or supplement with scenario and stress methods rather than pure statistical inference.
