What Is Value at Risk (VaR)?

Learn what Value at Risk (VaR) is, how it is calculated, why firms use it, and where it breaks down in portfolio and market risk management.

Introduction

Value at Risk (VaR) is a way to summarize portfolio market risk in a single number: over a chosen time horizon and at a chosen confidence level, how large a loss should we expect not to exceed under normal model assumptions? That sounds simple, and that simplicity is exactly why VaR became so influential in portfolio management, bank risk control, and regulation. But the idea is only useful if you understand what the number is actually saying, what machinery produced it, and what it leaves out.

The puzzle behind VaR is easy to state. A portfolio can contain thousands of positions across equities, bonds, currencies, options, and credit instruments. Risk managers still need a compact answer to questions like: Is this portfolio riskier today than yesterday? How much capital should we hold? Are traders staying within limits? VaR exists because decision-makers often need a threshold, not a full probability distribution. It compresses a complicated loss distribution into a single cutoff.

That compression is both its strength and its weakness. A VaR number can be operationally powerful because it is comparable across desks and time. But it can also create false comfort, because the number tells you a boundary at a confidence level, not what happens beyond that boundary. Much of modern market-risk practice can be understood as an attempt to keep the usefulness of that compression while correcting for the places where it breaks.

What does Value at Risk (VaR) actually measure?

At first principles, VaR starts with a random future change in portfolio value. Imagine freezing today’s portfolio weights and asking what tomorrow, or the next 10 days, might do to its value if market prices move. That future profit-and-loss distribution has many possible outcomes: gains, small losses, moderate losses, and rare severe losses. VaR picks a percentile of that distribution and reports the corresponding loss threshold.

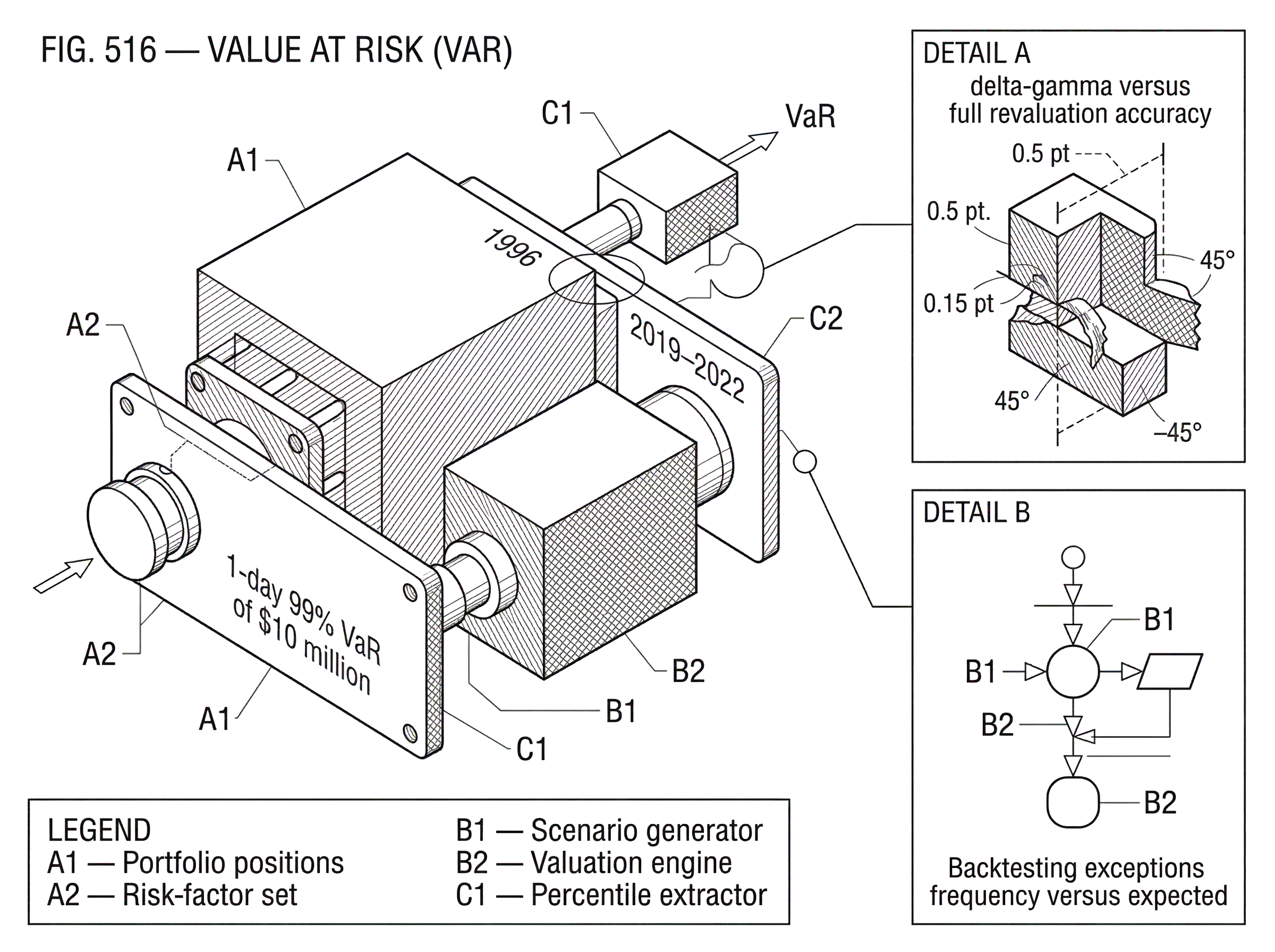

Suppose a portfolio has a 1-day 99% VaR of $10 million. The intended reading is not “the most we can lose is $10 million.” It means something narrower: *under the model and assumptions used, there is a 99% chance the 1-day loss will be no worse than $10 million, and about a 1% chance it will be worse.* Basel’s market-risk standards describe VaR as a measure of the worst expected loss over a given horizon and confidence level. In practice, that means a quantile of the loss distribution.
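One common way to write the quantile reading down is sketched here. The notation is an assumption introduced for illustration, not something the standards prescribe: $L$ is the portfolio loss over the horizon (positive values are losses) and $\alpha$ is the confidence level.

```latex
\mathrm{VaR}_{\alpha}(L) \;=\; \inf\{\ell \in \mathbb{R} : \Pr(L \le \ell) \ge \alpha\}
```

In the example, $\alpha = 0.99$ and $\mathrm{VaR}_{0.99}(L) = \$10$ million, so $\Pr(L > \$10\text{ million}) \le 1\%$ under the model.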

The key ingredients are therefore not mysterious but easy to overlook. You must specify a portfolio, a holding period, and a confidence level. Change any of those and you change the VaR. A 95% VaR is a shallower tail cutoff than a 99% VaR. A 1-day VaR asks a different question from a 10-day VaR. And a portfolio with options or illiquid positions may require a very different model from a simple cash equity book, even if both are assigned the same confidence level.

A useful way to think about VaR is as a statement about frequency, not severity beyond the threshold. It answers: How bad can losses get before we enter the exceptional tail? It does not answer: If we do enter the tail, how much worse can things become? That missing information is the main reason VaR later came under criticism and why expected shortfall became more important in regulation.

Why did the finance industry adopt Value at Risk (VaR)?

VaR became important because financial institutions needed a common language for market risk. Traders, desk heads, chief risk officers, and regulators were all looking at heterogeneous portfolios. A single metric that could translate positions into a comparable loss number was extremely attractive. The 1996 Basel market-risk amendment made this need concrete by allowing banks, with supervisory approval, to use internal models for market-risk capital. In that framework, VaR became not just a management tool but part of the regulatory machinery.

That regulatory adoption tells you what problem VaR was solving. Banks already had credit-risk capital rules, but trading books needed a way to measure losses arising from market-price moves. Basel defined market risk as the risk of losses in on- and off-balance-sheet positions arising from movements in market prices. The challenge was to convert that diffuse idea into a daily operational measure. VaR fit because it translated market movements into a number that could feed limits, monitoring, and capital formulas.

The attraction was not that VaR was perfect. It was that it was standardizable. Supervisors could require backtesting, stress testing, independent risk control, and board oversight around a common quantitative core. The number was simple enough for governance, even if the underlying model was not. That tradeoff explains much of VaR’s persistence: institutions need imperfect but operational metrics more often than they need theoretically complete ones.

How is VaR calculated from portfolio positions?

To compute VaR, you need a model of how market risk factors move and how the portfolio responds to those moves. The risk factors might include equity prices, interest rates at different maturities, foreign-exchange rates, credit spreads, implied volatilities, and commodity prices. The portfolio is then repriced under many possible changes in those factors, either approximately or exactly, to generate a distribution of potential gains and losses.

Here is the mechanism in ordinary language. First, identify what drives the portfolio’s value. A bond portfolio cares about the yield curve; an equity portfolio cares about stock prices and perhaps factor exposures; an options book also cares about volatility and nonlinearity. Second, estimate how those risk factors can move over the chosen horizon. Third, map those moves through the portfolio to get hypothetical profit-and-loss outcomes. Finally, sort those outcomes from best to worst and read off the chosen percentile.

A simple example makes this concrete. Imagine a portfolio that holds a large basket of U.S. equities, some Treasury futures, and a few equity index options. On a calm day, the risk system begins by expressing the portfolio in terms of sensitivities. The equities are mostly exposed to stock-index movements; the Treasury futures load on interest-rate changes; the options depend not only on index moves but also on changes in implied volatility and on the curvature of the payoff. The model then generates many possible next-day market moves. In each scenario, equities may fall a bit, rates may rally, volatility may rise, and the options are revalued accordingly. After the system has translated each scenario into a portfolio profit or loss, it ranks the outcomes. If the 1st percentile loss is $12 million, that becomes the 99% 1-day VaR.
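The ranking step in this example can be sketched in a few lines of Python. Everything numeric here is a hypothetical assumption chosen for illustration: the exposures in `portfolio_pnl`, the factor volatilities, and the normal scenario generator are not taken from any real book or risk system.

```python
import math
import random

def value_at_risk(pnl, confidence=0.99):
    """Loss threshold at the given confidence: sort losses, read off the percentile."""
    losses = sorted(-x for x in pnl)                    # positive numbers are losses
    k = math.ceil(round(confidence * len(losses), 9))   # round() guards against float noise
    return losses[k - 1]                                # k-th smallest loss

def portfolio_pnl(index_ret, rate_bp, vol_pt):
    """Hypothetical mapping from one scenario's factor moves to P&L in USD."""
    equities = 500e6 * index_ret                # cash equity basket
    futures = -40_000 * rate_bp                 # long Treasury futures lose as yields rise
    options = (80e6 * index_ret                 # delta term
               - 0.5 * 2e9 * index_ret ** 2     # short gamma: curvature costs in either direction
               - 300_000 * vol_pt)              # short vega: loses when implied vol rises
    return equities + futures + options

rng = random.Random(42)                         # seeded so the sketch is repeatable
pnl = []
for _ in range(10_000):
    index_ret = rng.gauss(0.0, 0.01)            # assumed 1% daily index volatility
    rate_bp = rng.gauss(0.0, 5.0)               # assumed 5bp daily yield volatility
    vol_pt = -40.0 * index_ret + rng.gauss(0.0, 0.5)  # implied vol tends to rise in sell-offs
    pnl.append(portfolio_pnl(index_ret, rate_bp, vol_pt))

var_99 = value_at_risk(pnl, 0.99)
print(f"1-day 99% VaR: ${var_99:,.0f}")
```

The percentile extraction is the last and smallest step. The mapping function and the scenario generator carry most of the modeling decisions.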

Notice what makes this work. VaR is never just a formula pasted on top of a portfolio. It is the endpoint of a pipeline: position mapping, risk-factor selection, distributional assumptions, valuation methodology, and percentile extraction. Two firms can report the same confidence level and horizon but produce different VaR numbers because they chose different data windows, covariance estimators, option approximations, or stress calibrations.

Parametric, historical, and Monte Carlo VaR; how they differ and when to use each

| Method | Speed | Tail fidelity | Nonlinearity handling | Data needs | Best for |
|---|---|---|---|---|---|
| Parametric | Very fast | Low | Poor | Covariance matrix | Linear portfolios |
| Historical simulation | Moderate | Medium | Limited | Past returns window | Observed joint moves |
| Monte Carlo | Slow | High if model good | Good | Model and compute | Nonlinear or path-dependent portfolios |

There are three broad modeling choices because there are three broad ways to generate the loss distribution: assume a functional form, reuse observed history, or simulate possible futures. The organizing principle is simple: the harder it is to describe the portfolio and the market with a compact approximation, the more computation you need.

The parametric, or variance-covariance, approach starts by assuming something tractable about returns, often that they are approximately jointly normal over the horizon or at least sufficiently summarized by volatilities and correlations. If the portfolio is approximately linear in the underlying factors, then its profit and loss can be approximated from those sensitivities and the covariance matrix of factor returns. This is the world popularized by RiskMetrics, which used exponentially weighted moving averages to estimate volatilities and correlations. The reason this method became widespread is speed: once you have the exposures and covariance matrix, VaR falls out quickly. The cost is that linearity and near-normality are modeling choices, not laws of nature.
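A minimal sketch of the variance-covariance calculation follows, assuming zero-mean, jointly normal factor returns and a portfolio that is linear in its dollar exposures. The exposures, volatilities, and correlation are hypothetical. The decay factor 0.94 is the value commonly associated with RiskMetrics daily estimates, and it is used here only as a default.

```python
import math
from statistics import NormalDist

def ewma_variance(returns, lam=0.94):
    """Exponentially weighted variance of zero-mean returns (EWMA recursion)."""
    var = returns[0] ** 2
    for r in returns[1:]:
        var = lam * var + (1.0 - lam) * r ** 2
    return var

def parametric_var(exposures, cov, confidence=0.99):
    """z * sqrt(x' C x) for dollar exposures x and factor-return covariance C."""
    n = len(exposures)
    variance = sum(exposures[i] * cov[i][j] * exposures[j]
                   for i in range(n) for j in range(n))
    z = NormalDist().inv_cdf(confidence)        # about 2.33 at 99%
    return z * math.sqrt(variance)

# Hypothetical two-factor book: daily vols of 1% and 0.5%, correlation 0.3.
vol_a, vol_b, rho = 0.01, 0.005, 0.3
cov = [[vol_a ** 2, rho * vol_a * vol_b],
       [rho * vol_a * vol_b, vol_b ** 2]]
exposures = [100e6, 50e6]

var_99 = parametric_var(exposures, cov)
print(f"Parametric 1-day 99% VaR: ${var_99:,.0f}")
```

Once the covariance matrix is estimated, the whole calculation is one quadratic form and one quantile lookup. That is the speed advantage, and it is also where the normality assumption enters.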

The historical simulation approach avoids imposing a full parametric distribution. Instead, it takes a window of past market moves, applies each observed move to today’s portfolio, and treats the resulting revaluations as the empirical loss distribution. If you have 500 historical days, you get 500 hypothetical profit-and-loss observations for today’s positions. The VaR is then just the relevant empirical percentile. The appeal here is intuitive realism: you are using actual combinations of market moves that really happened. The weakness is equally important: history may not contain the stresses you most need to imagine, and the tail of the empirical distribution can be unstable when data are sparse.
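A sketch of the historical approach for a linear book is shown below. The 500-day window is synthetic, generated from a seeded random number generator purely as a stand-in for real market history, and the two exposures are hypothetical.

```python
import math
import random

def historical_var(exposures, history, confidence=0.99):
    """Apply each past day's factor returns to today's exposures; take the loss percentile."""
    pnl = [sum(x * r for x, r in zip(exposures, day)) for day in history]
    losses = sorted(-p for p in pnl)
    k = math.ceil(round(confidence * len(losses), 9))
    return losses[k - 1], pnl

# Synthetic stand-in for a 500-day window of (equity, bond) daily returns.
rng = random.Random(1)
history = [(rng.gauss(0, 0.012), rng.gauss(0, 0.004)) for _ in range(500)]
exposures = [60e6, 40e6]                      # today's positions in USD (assumed)

var_99, pnl = historical_var(exposures, history)
tail_count = sum(1 for p in pnl if -p > var_99)
print(f"Historical 1-day 99% VaR: ${var_99:,.0f} ({tail_count} days beyond it)")
```

With 500 observations, at most five days lie beyond the 99% cutoff. The estimate therefore rests on a handful of data points, which is the tail instability described above.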

The Monte Carlo simulation approach explicitly models future risk-factor dynamics and then samples many scenarios from that model. This is especially useful when portfolios are nonlinear, path-dependent, or exposed to many interacting factors. In an options-heavy book, for example, full revaluation under simulated scenarios is often more faithful than delta-based approximations. The tradeoff is computational burden and model risk. A fast wrong model can be dangerous, but so can a sophisticated wrong one.
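The sketch below illustrates Monte Carlo VaR with full revaluation for a single short call position. The dynamics are assumptions made for illustration: a lognormal one-day spot move, a normally distributed implied-volatility shock correlated with spot, and Black-Scholes as the pricing model. The position size and all parameters are hypothetical.

```python
import math
import random
from statistics import NormalDist

N = NormalDist().cdf

def bs_call(spot, strike, t, rate, vol):
    """Black-Scholes price of a European call."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * math.sqrt(t))
    d2 = d1 - vol * math.sqrt(t)
    return spot * N(d1) - strike * math.exp(-rate * t) * N(d2)

def simulate_pnl(n_paths, seed=7):
    rng = random.Random(seed)
    spot0, vol0, strike, t, rate = 100.0, 0.20, 100.0, 0.25, 0.02
    contracts = -10_000                        # short 10,000 calls (hypothetical)
    rho, dt = -0.7, 1.0 / 252                  # assumed spot/vol correlation; one trading day
    value0 = contracts * bs_call(spot0, strike, t, rate, vol0)
    pnl = []
    for _ in range(n_paths):
        z1, z2 = rng.gauss(0, 1), rng.gauss(0, 1)
        z_vol = rho * z1 + math.sqrt(1 - rho ** 2) * z2    # correlated vol shock
        spot1 = spot0 * math.exp(-0.5 * vol0 ** 2 * dt + vol0 * math.sqrt(dt) * z1)
        vol1 = max(0.01, vol0 + 0.01 * z_vol)  # assumed one vol point of daily std dev
        value1 = contracts * bs_call(spot1, strike, t - dt, rate, vol1)  # full revaluation
        pnl.append(value1 - value0)
    return pnl

def value_at_risk(pnl, confidence=0.99):
    losses = sorted(-x for x in pnl)
    k = math.ceil(round(confidence * len(losses), 9))
    return losses[k - 1]

pnl = simulate_pnl(20_000)
var_99 = value_at_risk(pnl)
print(f"Monte Carlo 1-day 99% VaR: ${var_99:,.0f}")
```

Every line of `simulate_pnl` before the revaluation call is a modeling choice. Changing the correlation or the volatility dynamics changes the answer, which is the model risk the paragraph above refers to.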

These are not merely technical variants. They reflect different beliefs about what matters. Parametric VaR prioritizes estimation efficiency and comparability. Historical simulation prioritizes observed joint moves. Monte Carlo prioritizes flexibility in describing future states and nonlinear pricing. In practice, firms often use several in parallel, not because one is universally correct, but because model disagreement itself contains information.

Why are option-heavy and nonlinear portfolios harder to model with VaR?

| Approach | Accuracy | Speed | Best use | Main drawback |
|---|---|---|---|---|
| Delta only | Low | Fast | Small linear exposures | Ignores curvature |
| Delta-gamma approximation | Medium | Fast | Mild nonlinearity | Misses large moves |
| Full revaluation | High | Slow | Large gamma or exotic options | Computationally intensive |

A common misunderstanding is that VaR is only about volatility. That is true only for simple linear portfolios. Once options enter the picture, the portfolio value changes nonlinearly with the underlying risk factors. Small market moves might produce modest losses, while larger moves can produce disproportionately bigger ones because option payoffs curve.

This is why regulatory frameworks historically paid special attention to options. The 1996 Basel amendment noted that significant options traders would be expected to move to comprehensive VaR models, and when firms used simpler methods, they still had to account explicitly for sensitivities such as delta, gamma, and vega. The reason is mechanical. Delta captures first-order exposure, but gamma measures how that exposure itself changes as the market moves, and vega captures sensitivity to implied volatility. A linear approximation that ignores these terms can seriously understate risk in volatile markets.

In practice, that means a nonlinear portfolio often requires either a delta-gamma approximation or full revaluation across scenarios. The approximation can be useful for speed, but full revaluation is conceptually cleaner because it asks the pricing model directly what the portfolio is worth in each scenario. Here the boundary between VaR and pricing-model risk becomes visible: your risk number is only as credible as the valuation engine used to generate it.
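To make the difference concrete, the sketch below compares a delta-only estimate, a delta-gamma estimate, and full revaluation for one long call under instantaneous spot shocks. Black-Scholes with the stated parameters is an assumption made for illustration. Delta and gamma are taken by finite differences, and time decay and volatility changes are ignored.

```python
import math
from statistics import NormalDist

N = NormalDist().cdf

def bs_call(spot, strike=100.0, t=0.25, rate=0.0, vol=0.20):
    """Black-Scholes price of a European call (hypothetical parameters)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * math.sqrt(t))
    d2 = d1 - vol * math.sqrt(t)
    return spot * N(d1) - strike * math.exp(-rate * t) * N(d2)

def compare(shock, spot0=100.0, h=0.01):
    """Return (full revaluation, delta-only, delta-gamma) P&L for a relative spot shock."""
    base = bs_call(spot0)
    up, down = bs_call(spot0 + h), bs_call(spot0 - h)
    delta = (up - down) / (2 * h)              # central first difference
    gamma = (up - 2 * base + down) / h ** 2    # central second difference
    ds = spot0 * shock
    full = bs_call(spot0 + ds) - base
    delta_only = delta * ds
    delta_gamma = delta * ds + 0.5 * gamma * ds ** 2
    return full, delta_only, delta_gamma

for shock in (0.02, 0.10, 0.30):
    full, d_only, d_gamma = compare(shock)
    print(f"shock {shock:>4.0%}: full {full:7.3f}  delta {d_only:7.3f}  delta-gamma {d_gamma:7.3f}")
```

For a short position the signs flip, so the gap between the delta-only figure and the full-revaluation figure becomes understated loss.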

How do firms backtest VaR and what are the limits of backtesting?

A risk model that cannot be checked is not a risk model in the operational sense; it is just an opinion. That is why backtesting became central both in regulation and in internal governance. The basic idea is to compare realized profit and loss with the model’s VaR forecasts. If you say the 99% daily VaR should only be exceeded about 1% of the time, then over many days the frequency of exceptions should be broadly consistent with that claim.

This sounds straightforward, but the mechanism is subtler than it appears. A model can fail because it gets volatility wrong, because correlations shift, because positions were mis-specified, because valuations were stale, or because the desk took risks outside the modeled universe. A backtest does not isolate these causes by itself; it only tells you whether the full forecasting pipeline is producing too many or too few exceptions. That is why supervisory guidance and Basel standards pair backtesting with broader model validation, independent risk control, and stress testing.

There is another subtlety. Passing a backtest does not prove the model is good. Statistical tests can have low power, especially for rare-tail events, meaning a bad model may not fail decisively in small samples. This is one reason model governance matters so much. The Federal Reserve’s model risk guidance emphasizes conceptual soundness, ongoing monitoring, benchmarking, and outcomes analysis, rather than treating backtesting as a magic certification stamp.
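The exception-counting logic and the low-power problem can both be illustrated with a binomial calculation. This sketch assumes exceptions are independent across days. The 250-day sample and the rule "reject at five or more exceptions" are illustrative choices, not requirements stated in the text.

```python
from math import comb

def count_exceptions(pnl, var_forecasts):
    """Days on which the realized loss exceeded that day's VaR forecast."""
    return sum(1 for p, v in zip(pnl, var_forecasts) if -p > v)

def prob_at_most(k, n, p):
    """P(X <= k) for X ~ Binomial(n, p), i.e. independent daily exceptions."""
    return sum(comb(n, i) * p ** i * (1 - p) ** (n - i) for i in range(k + 1))

n_days = 250
# Correct 99% model: chance of wrongly rejecting it (five or more exceptions).
false_alarm = 1 - prob_at_most(4, n_days, 0.01)
# Bad model whose true exception rate is 2%: chance it still shows four or fewer.
missed = prob_at_most(4, n_days, 0.02)

print(f"P(reject | model correct)       = {false_alarm:.1%}")
print(f"P(pass   | true exception rate 2%) = {missed:.1%}")
```

Under these assumptions, a model that understates the exception rate by a factor of two still passes a one-year test a large fraction of the time. That is the sense in which a clean backtest is weak evidence.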

When does VaR fail to capture portfolio risk?

The deepest critique of VaR is not that it is “wrong.” It is that it discards precisely the information you most care about in severe stress: the size of losses after the cutoff is breached. If a portfolio has a 99% VaR of $10 million, the 1% tail could contain losses of $11 million or $500 million; VaR alone does not tell you which world you are in.

That tail blindness is not just a philosophical problem. It becomes concrete in crises, forced liquidations, and portfolios with hidden concentration. The 2007–09 crisis exposed weaknesses in VaR-based market-risk frameworks, leading Basel first to add stressed VaR and other charges, and later under the Fundamental Review of the Trading Book to replace VaR with expected shortfall in the internal-models approach. Expected shortfall measures the average loss beyond the VaR threshold. The shift makes sense from first principles: if the question is how damaging tail events are, you need a tail average, not just a tail boundary.
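The difference between a tail boundary and a tail average can be shown with two constructed loss samples (in millions of dollars) that share the same 99% VaR. The samples are artificial and exist only to isolate the effect. The estimator used here, the mean of observations beyond the cutoff, is one simple empirical convention among several.

```python
import math

def var_and_es(losses, confidence=0.99):
    """Empirical VaR and expected shortfall from a sample of losses."""
    ordered = sorted(losses)
    k = math.ceil(round(confidence * len(ordered), 9))
    var = ordered[k - 1]                        # loss at the cutoff
    tail = ordered[k:]                          # observations beyond the cutoff
    es = sum(tail) / len(tail) if tail else var
    return var, es

body = [1.0] * 989 + [10.0]                     # 990 ordinary days, worst of them 10
mild = body + [11.0] * 10                       # tail losses just past the cutoff
severe = body + [500.0] * 10                    # tail losses far past the cutoff

var_mild, es_mild = var_and_es(mild)
var_severe, es_severe = var_and_es(severe)
print(f"mild:   VaR {var_mild:.0f}, ES {es_mild:.0f}")
print(f"severe: VaR {var_severe:.0f}, ES {es_severe:.0f}")
```

Both samples report the same VaR, and only the expected shortfall distinguishes them.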

A second problem is that VaR depends heavily on assumptions about distributions and dependence. Correlations that look stable in ordinary periods can jump in stress. Liquidity can disappear. Historical windows can omit the regime you are about to enter. Danielsson and de Vries argued that extreme-loss estimation is dominated by tail behavior and that common methods can either underpredict or overpredict low-probability losses, depending on the approach. In other words, the tail is exactly where your data are thinnest and your model choices matter most.

A third problem is aggregation. Artzner and coauthors famously showed that VaR need not be subadditive: the VaR of a combined portfolio can, in some cases, exceed the sum of the VaRs of its parts, so the measure can appear to penalize diversification rather than reward it. The exact pathology depends on the distributional setting, and for many well-behaved elliptical distributions it is less problematic. But the theoretical point matters: VaR is not guaranteed to reward diversification in a mathematically clean way. That weakness helped motivate coherent alternatives such as tail conditional expectation and expected shortfall.
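A standard textbook-style counterexample makes the failure of subadditivity concrete. The two bonds below are hypothetical: each independently loses 100 with probability 4% and nothing otherwise, and VaR is computed exactly from the discrete distribution at 95% confidence.

```python
from itertools import product

def var_discrete(dist, confidence):
    """Smallest loss l with P(L <= l) >= confidence, for a list of (loss, prob) pairs."""
    cumulative = 0.0
    for loss, prob in sorted(dist):
        cumulative += prob
        if cumulative >= confidence - 1e-12:    # small tolerance for float rounding
            return loss
    return max(loss for loss, _ in dist)

bond = [(0.0, 0.96), (100.0, 0.04)]             # one bond: 4% chance of losing 100

# Distribution of the summed loss on two independent copies of the bond.
combined = {}
for (l1, p1), (l2, p2) in product(bond, bond):
    combined[l1 + l2] = combined.get(l1 + l2, 0.0) + p1 * p2

var_single = var_discrete(bond, 0.95)
var_pair = var_discrete(list(combined.items()), 0.95)
print(f"VaR of each bond: {var_single}, sum of parts: {2 * var_single}, VaR of pair: {var_pair}")
```

Each bond alone defaults too rarely to register at 95%. The chance that at least one of the two defaults is about 7.8%, which does register, so the combined VaR exceeds the sum of the stand-alone VaRs.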

A fourth problem is that VaR often assumes away the act of liquidation. The LTCM post-mortem and the August 2007 quant unwind both illustrate this. In stressed conditions, losses do not come only from exogenous price moves. They are amplified by deleveraging, crowded positioning, market-maker withdrawal, and feedback between market, liquidity, and credit risk. A VaR model based on normal trading conditions may estimate the distribution of marks reasonably well while badly missing the distribution of realized liquidation losses.

How did regulation change after VaR’s failures in crisis?

| Regime | When | Key change | Purpose | Practical effect |
|---|---|---|---|---|
| Basel 1996 | 1996 | Permitted internal VaR models | Standardize market-risk measurement | Operational VaR for capital |
| Basel 2.5 | Post-2007 crisis | Added stressed VaR and incremental risk charge | Address tail and trading-book credit risk | Higher capital in stress |
| FRTB (expected shortfall) | 2019–2022 | Replaced VaR with stress-calibrated expected shortfall | Capture tail-average and liquidity effects | Stricter desk approval and PLA/backtesting |

Regulatory evolution is useful here because it shows, in institutional form, which deficiencies mattered most. The 1996 Basel amendment allowed internal models, including VaR-based approaches, but only with supervisory approval, independent risk control, regular backtesting, and routine stress testing. That already reveals an important truth: regulators never treated VaR as self-sufficient.

After the financial crisis, Basel 2.5 added stressed VaR, requiring a 10-day, 99% VaR calibrated to a 12-month period of significant financial stress, along with an incremental risk charge for default and migration risk in trading-book credit exposures. The logic was clear. Ordinary VaR estimated risk from recent history and could become too benign in calm periods, precisely when leverage tends to build. Stress calibration was meant to reduce that procyclicality.
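The procyclicality argument can be illustrated with a rough sketch that compares VaR from the most recent 250 days against the largest VaR found over any 250-day window in a longer history. This is not the regulatory calculation. It uses a 1-day horizon, a single linear exposure, and a synthetic return series with an artificial stress episode, all of which are assumptions made for illustration.

```python
import math
import random

def hist_var(returns, exposure, confidence=0.99):
    """Empirical loss percentile for a single linear exposure."""
    losses = sorted(-exposure * r for r in returns)
    k = math.ceil(round(confidence * len(losses), 9))
    return losses[k - 1]

def stressed_var(returns, exposure, window=250):
    """Largest VaR over every contiguous window: a crude search for the stress period."""
    return max(hist_var(returns[i:i + window], exposure)
               for i in range(len(returns) - window + 1))

rng = random.Random(3)
returns = ([rng.gauss(0, 0.005) for _ in range(750)]      # calm regime
           + [rng.gauss(0, 0.025) for _ in range(250)]    # stress regime
           + [rng.gauss(0, 0.005) for _ in range(500)])   # calm again, up to today

exposure = 100e6
recent = hist_var(returns[-250:], exposure)
stressed = stressed_var(returns, exposure)
print(f"VaR on recent window: ${recent:,.0f}")
print(f"VaR on worst window:  ${stressed:,.0f}")
```

The recent-window figure reflects only the calm period that followed the stress, which is how an ordinary VaR can look benign just as leverage is building.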

Then FRTB went further. It replaced VaR and stressed VaR in the internal-models capital framework with expected shortfall, calibrated to stress and adjusted for differing liquidity horizons. It also tightened model approval at the trading-desk level and required desks to pass backtesting and profit-and-loss attribution tests. Even banks using internal models must also calculate the standardized approach, providing a benchmark and fallback. The lesson is not that VaR became useless. It is that, for regulatory capital, supervisors concluded that a threshold-only metric was too narrow for modern trading books.

What is VaR useful for in modern risk management?

Despite its shortcomings, VaR remains useful because not every decision requires a full theory of tail catastrophe. If a chief risk officer wants to compare the broad market-risk footprint of ten trading desks each morning, a single standardized loss threshold is practical. If a portfolio manager wants to monitor whether yesterday’s rebalance materially increased short-horizon market risk, VaR can give a fast signal. If a risk committee wants a limit framework that is stable enough for governance, VaR remains attractive.

The right way to view VaR is therefore as a control metric, not a complete description of danger. It is especially informative when the portfolio is liquid, the horizon is short, the exposures are well mapped, and the model is supplemented by stress testing and qualitative judgment. It is less reliable when the portfolio is highly nonlinear, illiquid, crowded, or exposed to structural breaks in correlation and volatility.

In modern practice, the best use of VaR is usually plural rather than solitary. Firms compare parametric, historical, and simulation-based versions; they examine stress scenarios alongside VaR; they monitor expected shortfall or other tail metrics; and they embed all of this in model governance. The point is not to worship any single number. It is to use each metric for the part of the risk landscape it can genuinely illuminate.

Conclusion

Value at Risk exists because portfolios are too complex to manage without compression. It takes a full loss distribution and reduces it to a threshold: over a chosen horizon, at a chosen confidence level, how large a loss should we not exceed most of the time? That is a powerful question, and VaR remains a useful answer to it.

But VaR’s usefulness depends on remembering what it does not say. It does not tell you how bad losses become beyond the threshold, how liquidation changes outcomes, or whether your historical relationships survive stress. The durable lesson is simple: VaR is a valuable summary of market risk, but never the whole story of risk.

Frequently Asked Questions

It means that, under the portfolio, model, time horizon, and assumptions used, there is a 99% chance the 1‑day loss will not exceed $10 million and about a 1% chance it will be worse; it is not a statement of the maximum possible loss and depends on the chosen portfolio, holding period, and confidence level.

Parametric (variance‑covariance) VaR assumes a compact distributional form and uses exposures plus a covariance matrix for speed but can misstate risk when returns are non‑normal or exposures nonlinear; historical simulation revalues the portfolio under past observed moves so it uses real joint patterns but may miss unseen stresses; Monte Carlo simulates futures from an explicit model and can handle nonlinearity at the cost of computation and model risk.

No. VaR reports a percentile (a cutoff) and does not describe the size of losses beyond that threshold; expected shortfall (or tail conditional expectation) measures the average loss in the tail and was adopted in regulation because it answers that ‘how bad beyond the cutoff’ question.

VaR need not be subadditive, so in some distributions the VaR of a combined portfolio can exceed the sum of the parts and thus may misrepresent diversification benefits; this theoretical shortcoming motivated coherent alternatives like expected shortfall and related tail measures.

Backtesting compares realized P&L exceptions to forecasted VaR and is a core governance tool, but statistical tests can have low power for rare‑event tails and a passing backtest does not prove the model is sound; backtesting must therefore be combined with validation, stress testing, and model governance.

Regulators kept VaR-like modeling but added layers: Basel introduced internal‑model permissions with supervisory validation (1996), later required stressed VaR and incremental charges after 2007–09, and the FRTB replaced internal‑model VaR capital with expected shortfall and stricter desk‑level approvals and liquidity adjustments.

Treat VaR as a fast, standardized control metric useful for short horizons and liquid, well‑mapped books, but always supplement it with stress tests, alternative VaR implementations (parametric/historical/MC), tail metrics like expected shortfall, and qualitative judgment where portfolios are illiquid, nonlinear, or crowded.

VaR that ignores liquidation dynamics can understate realized losses because deleveraging, crowded exits, market‑maker withdrawal, and feedback effects amplify losses in stress; historical episodes such as LTCM and the August 2007 quant unwind illustrate this amplification.

Because VaR is the endpoint of a pipeline (position mapping, choice of risk factors, data window, covariance estimator, and valuation method), two firms using the same confidence level and horizon can produce different VaRs simply from different data windows, estimation choices, or pricing approximations.
