What Are Latency Arbitrage Models?

Learn what latency arbitrage models are, how they explain stale-quote races, why speed creates rents, and how market design can reduce them.

Introduction

Latency arbitrage models explain how market design turns tiny delays into tradable profit opportunities. That may sound like a niche engineering issue, but it goes to a basic question in market structure: when new public information arrives, should anyone earn money merely by reaching the exchange a few microseconds before the liquidity provider who is trying to update a stale quote?

The surprising part is that these models do not begin with secret information. In the canonical setup, the information is public and symmetrically observable. The opportunity appears because markets process messages in a particular way: continuously, serially, and with nonzero communication and matching delays. If a price should move from 100 to 100.01, there is a short interval in which some traders know the old quote is stale, the liquidity provider is trying to cancel it, and a faster trader can buy it first. The model is designed to make that interval explicit.

This is why latency arbitrage models matter. They are not mainly about clever traders discovering mispricing in the deep economic sense. They are about whether the rules and plumbing of a trading venue create a temporary mechanical mismatch between the current economic value and the currently executable quote. Once you see that distinction, much of the debate over high-frequency trading, speed races, speed bumps, and batch auctions becomes easier to understand.

How can public information outrun quote updates and create stale prices?

At a first-principles level, a modern electronic market does two jobs at once. It lets liquidity providers post executable prices in advance, and it lets other traders hit those prices when they choose. That arrangement is efficient because it creates immediacy: you do not need to negotiate every trade from scratch.

But immediacy creates a vulnerability. A displayed quote is a standing commitment made under uncertainty. If public information changes the asset’s value, the quote may no longer be correct. The liquidity provider then wants to update or cancel it. In a frictionless textbook market, that update would happen instantly, so the stale quote would never be exposed. In an actual exchange, however, messages travel, queues form, and the matching engine processes requests one by one. The gap between "should have updated" and "has updated" is the opening that latency arbitrage models study.

The most important invariant in these models is this: the profit does not come from superior valuation of the asset; it comes from superior speed in the race between quote removal and quote taking. If the signal is public, then in principle everyone agrees that the stale quote is stale. The dispute is only about who reaches the exchange first.

That is why the phenomenon is often described as “sniping” stale quotes. A liquidity provider posts a bid or offer at the old price. Public information implies the price should move. The provider sends a cancel. A fast trader sends a marketable order. Because the exchange is continuous and serial, one message arrives first and wins. The winner captures an arbitrage-like rent; the loser gets a failed cancel or failed trade attempt.

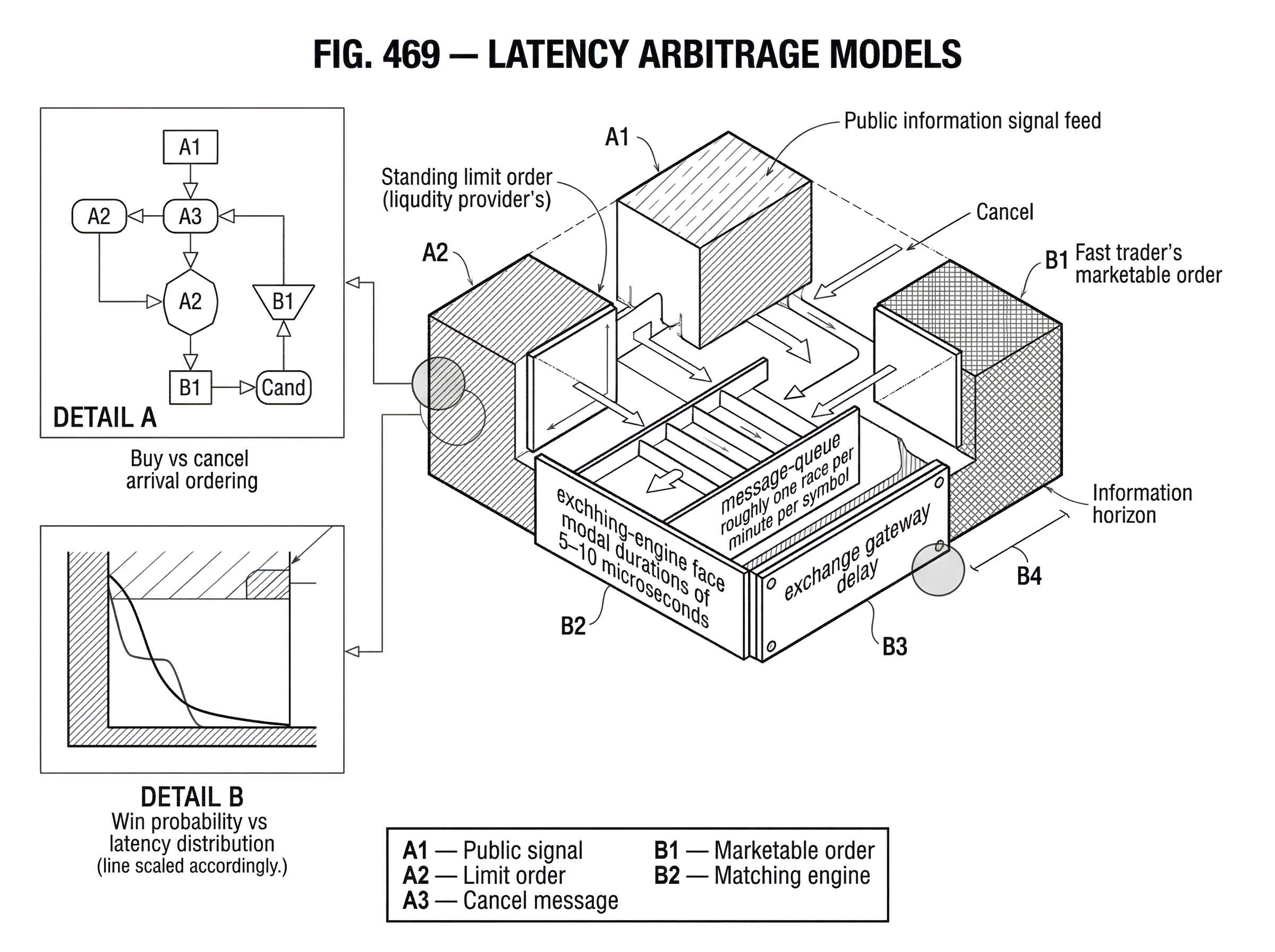

Empirical work using full exchange message data makes this mechanism visible in a way standard order-book data often cannot. The key methodological point in the FCA and BIS work by Aquilina, Budish, and O’Neill is that message data include failed attempts to trade or cancel, which reveal both winners and losers in these races. In their London Stock Exchange sample, races are frequent, extremely fast, and economically meaningful in aggregate. For FTSE 100 symbols, they report roughly one race per minute per symbol, modal durations of 5–10 microseconds, and about 22% of trading volume occurring in races. That evidence matters because it shows the models are not describing a hypothetical edge case.

What is a minimal latency-arbitrage model (one LP, one fast trader)?

| Scenario | Message order | Winner | Profit per race | LP outcome |
|---|---|---|---|---|
| Buy first | Buy arrives before cancel | Fast trader | Mispricing gap | Executed at stale price |
| Cancel first | Cancel arrives before buy | Liquidity provider | No trader profit | Quote removed |

A useful way to understand a latency arbitrage model is to strip it down to the smallest nontrivial case.

Imagine there is one asset, one public signal, one liquidity provider, and one fast trader. The asset’s fundamental value is initially V0 = 100.00. The liquidity provider has a standing ask at 100.01. Then a public signal arrives implying the value is now V1 = 100.03. The ask at 100.01 is stale: it is too cheap by 0.02.

If the liquidity provider could cancel immediately, nothing interesting would happen. The stale quote would vanish. If the fast trader could trade only after the cancellation was processed, again nothing interesting would happen. The model becomes nontrivial only when both messages are in flight at nearly the same time.

Now suppose the liquidity provider sends a cancel after observing the public signal. The fast trader also observes the same signal and sends a buy order to lift the stale ask. The exchange receives and processes these messages in order of arrival. If the buy order arrives first, the fast trader buys at 100.01 an asset now worth 100.03, earning 0.02. If the cancel arrives first, the quote disappears and the opportunity vanishes.

That is the whole mechanism. The model does not need secret information, irrational traders, or a mistaken valuation model. It needs only three ingredients: public information, stale quotes, and continuous-time priority.
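The race can be written down in a few lines. The sketch below is illustrative and not taken from any cited paper. The `Message` class, the `run_race` helper and the sender labels are all hypothetical. It assumes a single stale ask and a matching engine that acts only on whichever message arrives first. It reuses the numbers from the example: a stale ask of 100.01 and a new value of 100.03.

```python
from dataclasses import dataclass

@dataclass
class Message:
    sender: str        # hypothetical label, e.g. "LP" or "fast_trader"
    kind: str          # "cancel" (remove the stale ask) or "buy" (lift it)
    arrival_us: float  # arrival time at the matching engine, in microseconds

def run_race(messages, stale_ask, new_value):
    """Serial processing: only the first message to arrive can act on the stale ask.

    Returns (winner, fast_trader_profit). On an exact tie, min() keeps the
    message listed first, which stands in for whatever tie rule a venue uses.
    """
    first = min(messages, key=lambda m: m.arrival_us)
    if first.kind == "buy":
        # The buyer pays the stale ask for an asset now worth new_value.
        return first.sender, round(new_value - stale_ask, 10)
    # The cancel landed first: the quote is gone and the later buy fails.
    return first.sender, 0.0

buy = Message("fast_trader", "buy", arrival_us=9.0)
cancel = Message("LP", "cancel", arrival_us=12.0)
print(run_race([cancel, buy], stale_ask=100.01, new_value=100.03))  # ('fast_trader', 0.02)

cancel_early = Message("LP", "cancel", arrival_us=8.0)
print(run_race([cancel_early, buy], stale_ask=100.01, new_value=100.03))  # ('LP', 0.0)
```

Nothing in the function looks at valuations beyond the fixed gap. Arrival order alone decides who captures the 0.02.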

This basic story also clarifies what often gets misunderstood. Latency arbitrage is not just “arbitrage that happens quickly.” It is a specific type of rent generated by the interaction between public-information shocks and market-processing latency. If you removed the latency but kept the information shock, the rent would disappear. If you removed the continuous serial processing and processed all updates in synchronized batches, the rent would also disappear.

Why do continuous limit order books create winner-take-all speed races?

The foundational theoretical argument associated with Budish, Cramton, and Shim is that the continuous limit order book makes tiny speed differences disproportionately valuable. The reason is not mystical. In a serial queue, being first is discontinuously better than being second.

Suppose ten traders all know that a displayed quote is stale by one tick. The first one to hit it gets the entire profit opportunity. The second gets nothing. The payoff function is therefore close to winner-take-all. When the prize is winner-take-all, firms rationally spend resources to shave latency because even a tiny improvement can flip the outcome from zero to positive profit.

This is where the “arms race” comes from. The private value of speed can be high even when the social value is low. From society’s perspective, it does not help much whether the stale quote disappears in 50 microseconds or 5 microseconds; either way the quote gets corrected. But from the trader’s perspective, those microseconds determine who captures the rent. The model therefore predicts overinvestment in speed technology relative to the underlying social problem being solved.
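The discontinuity is easy to see numerically. Below is a minimal sketch assuming a pure winner-take-all rule in which the earliest arrival takes the whole one-tick prize. The firm names and arrival times are hypothetical.

```python
def race_payoffs(arrivals_us, prize):
    """Winner-take-all: the earliest arrival gets the whole prize, everyone else gets 0."""
    winner = min(arrivals_us, key=arrivals_us.get)
    return {firm: (prize if firm == winner else 0.0) for firm in arrivals_us}

# Hypothetical arrival times (microseconds) after a public signal; the prize is one tick.
arrivals = {"A": 10.000, "B": 10.001, "C": 10.500}
print(race_payoffs(arrivals, prize=0.01))  # {'A': 0.01, 'B': 0.0, 'C': 0.0}

# B shaves 2 nanoseconds (0.002 us) and the whole prize flips to B.
arrivals["B"] -= 0.002
print(race_payoffs(arrivals, prize=0.01))  # {'A': 0.0, 'B': 0.01, 'C': 0.0}
```

A 2-nanosecond improvement moves B's payoff from zero to the full prize. The stale quote is corrected either way, so the change matters privately but hardly at all socially.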

The NBER work on stock exchange competition extends this logic from trading firms to exchanges. If speed-sensitive rents exist, exchanges can monetize them by selling exchange-specific speed technology such as co-location, proprietary feeds, and connectivity advantages. That creates a second layer of incentive: not only traders but also venues may benefit from preserving an environment in which speed matters.

This point is subtle and important. The exchange’s matching rule is not neutral infrastructure. It shapes the payoff to speed, and therefore shapes both participant behavior and exchange business models. A model of latency arbitrage is therefore also a model of market-design incentives.

How are latency-arbitrage races formalized (mispricing, win probability, costs)?

Once the intuition is in place, the formal structure is straightforward.

A latency arbitrage model usually includes a fundamental value V(t) that changes when public information arrives, a displayed quote Q(t) set by liquidity providers, and a nonzero adjustment lag between a change in V(t) and the removal or repricing of Q(t). The key state is the stale-quote region where Q(t) still reflects the old value while traders already know V(t) has changed.

The expected arbitrage profit over such an event is roughly the stale-price gap times the probability of winning the race, minus the costs of participating. In plain language:

expected profit = mispricing size × win probability - speed and execution costs
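The same relation can be written more formally as a stylized sketch. It uses the article's V and Q plus some notation introduced here for illustration:

- τ_i is trader i's order arrival time at the matching engine.
- τ_LP is the arrival time of the liquidity provider's cancel.
- c_i is trader i's speed and execution cost per race.

For a stale ask Q after the value jumps to V_1 > Q:

$$
\mathbb{E}[\pi_i] = (V_1 - Q)\cdot \Pr\!\left(\tau_i < \min\Big\{\tau_{\mathrm{LP}},\ \min_{j \neq i} \tau_j\Big\}\right) - c_i
$$

In the minimal example, V_1 − Q = 100.03 − 100.01 = 0.02. A trader who wins half of such races at a per-race cost of 0.004 therefore expects 0.02 × 0.5 − 0.004 = 0.006 per event. For a stale bid, the gap is Q − V_1 instead.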

The model’s strategic heart lies in the win probability. That probability depends on latency distributions: communication delays, processing delays, feed latency, and reaction time. If all firms had identical speed and costs, competition would compress expected profits. But because "first" gets the prize and "second" gets nearly nothing, firms keep investing as long as a marginal speed improvement is still privately worthwhile.
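Win probabilities like these can be estimated by simulation once a latency distribution is assumed. The sketch below assumes each arrival time is a mean latency plus independent Gaussian jitter. That distribution, the function names, and the means and costs used are all hypothetical choices for illustration, not calibrated values.

```python
import random

def win_probability(my_mean_us, rival_means_us, lp_cancel_mean_us,
                    jitter_us=1.0, trials=20_000, seed=0):
    """Monte Carlo estimate of P(my buy beats every rival buy and the LP's cancel).

    Illustrative assumption: each arrival time is its mean plus independent
    Gaussian jitter with standard deviation jitter_us (microseconds).
    """
    rng = random.Random(seed)  # a fixed seed keeps the estimate reproducible
    wins = 0
    for _ in range(trials):
        mine = rng.gauss(my_mean_us, jitter_us)
        competitors = [rng.gauss(m, jitter_us) for m in rival_means_us]
        competitors.append(rng.gauss(lp_cancel_mean_us, jitter_us))
        if all(mine < t for t in competitors):
            wins += 1
    return wins / trials

def expected_profit(mispricing, p_win, cost_per_race):
    """Expected profit = mispricing size x win probability - speed and execution costs."""
    return mispricing * p_win - cost_per_race

p_fast = win_probability(8.0, [10.0], lp_cancel_mean_us=12.0)
p_slow = win_probability(10.0, [8.0], lp_cancel_mean_us=12.0)
print(round(p_fast, 2), round(p_slow, 2))
print(expected_profit(0.02, p_fast, 0.004), expected_profit(0.02, p_slow, 0.004))
```

With these made-up numbers, the firm that is 2 µs faster on average wins most races. The slower firm, facing the same per-race cost, ends up with a negative expected profit. That is the private pressure to invest in speed that the paragraph describes.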

Empirical implementations then need a rule for deciding when multiple messages belong to the same race. Aquilina, Budish, and O’Neill introduce the idea of an information horizon: a timing threshold that determines whether two messages could plausibly be responses to the same public information rather than reactions to one another. Their implementation uses observed exchange latencies and minimum reaction times, with a cap in the baseline specification. The exact threshold is a modeling choice, not a law of nature, which is why sensitivity analysis matters.

That caveat is worth stating clearly. The existence of latency-sensitive races does not depend on one exact empirical cutoff. But any attempt to count races, measure their duration, or estimate aggregate rents must choose parameters. The model gives the mechanism; the measurement requires an operational definition.
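The sensitivity point can be illustrated with a deliberately simplified grouping rule. This is not the full race-detection methodology of Aquilina, Budish, and O’Neill. It is a hypothetical rule with these assumptions:

- A candidate race starts at a message and includes every later message within a fixed horizon.
- The group counts only if it involves at least two participants and at least one failed message.

The message records, participant names and horizons are invented.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Msg:
    time_us: float
    participant: str
    action: str       # "take" or "cancel"
    succeeded: bool   # False for a failed cancel or a failed take

def find_races(msgs, horizon_us):
    """Group messages into candidate races using a fixed information horizon.

    Simplified illustration: a race begins at a message, includes every later
    message within horizon_us of it, and counts only if it has at least two
    distinct participants and at least one failed message.
    """
    msgs = sorted(msgs, key=lambda m: m.time_us)
    races, i = [], 0
    while i < len(msgs):
        start = msgs[i].time_us
        j = i
        while j < len(msgs) and msgs[j].time_us - start <= horizon_us:
            j += 1
        group = msgs[i:j]
        if len({m.participant for m in group}) >= 2 and any(not m.succeeded for m in group):
            races.append(group)
            i = j  # messages assigned to a race are not reused
        else:
            i += 1
    return races

msgs = [
    Msg(0.0, "HFT_A", "take", True),      # wins the race
    Msg(6.0, "HFT_B", "take", False),     # failed take
    Msg(7.5, "LP_1", "cancel", False),    # failed cancel
    Msg(900.0, "HFT_A", "take", True),    # unrelated later trade
]
for horizon in (50.0, 5.0):
    races = find_races(msgs, horizon)
    print(horizon, [[m.participant for m in r] for r in races])
# 50.0 [['HFT_A', 'HFT_B', 'LP_1']]
# 5.0 [['HFT_B', 'LP_1']]
```

With the 5 µs horizon, the rule still finds a race but drops its actual winner. This is why counts and rent estimates are reported with sensitivity checks rather than as a single number.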

What questions do latency-arbitrage models answer for regulators and market designers?

The practical use of latency arbitrage models is not merely to say “speed matters.” Market participants and regulators already knew that. Their value is that they let us distinguish several different questions that are otherwise easy to conflate.

The first question is whether a given profit opportunity comes from private information or from public information plus processing delay. That distinction matters because classic adverse selection is part of the ordinary economics of market making: if someone knows more than you, quotes widen. Latency arbitrage is different. It says the signal can be public, and the rent can still arise because the trading mechanism lets someone pick off stale quotes before updates are processed.

The second question is how much of observed trading cost comes from those races. The FCA and BIS evidence suggests the answer is not trivial. Their estimates imply latency arbitrage contributes materially to effective spreads and price impact, and that eliminating it could reduce investors’ cost of liquidity by about 17% in their setting. Any exact percentage is context-dependent, but the core implication is robust: the design of time priority can affect execution costs even when each individual race is worth only a small amount.

The third question is what kinds of market design can change the equilibrium. Here the models become policy-relevant because they let designers ask: if the rent comes from stale quotes plus serial processing, what happens if we change either of those ingredients?

How do batch auctions and speed bumps change latency-arbitrage outcomes?

| Design | Mechanism | Effect on time priority | Stale-quote protection | Best for |
|---|---|---|---|---|
| Continuous LOB | Serial continuous processing | Strong first-mover advantage | Minimal | High immediacy |
| Frequent batch auctions | Discrete-time clearing | Removes microsecond priority | High | Reduce speed races |
| Speed bumps (e.g., IEX) | Fixed short delay on orders | Blunts micro-latency wins | Moderate, design-dependent | Coexist with continuous trading |

There are two broad ways to reduce latency-arbitrage rents, and the organizing principle is simple: you either reduce the stale-quote window or make “being infinitesimally first” less decisive.

Frequent batch auctions do the second. Instead of processing orders one by one in continuous time, the exchange groups messages arriving within a short interval and clears them together at a uniform price. In the Budish-Cramton-Shim framework, this removes the discontinuous advantage of arriving a few microseconds earlier inside the batch. If many traders react to the same public signal, they compete on price and quantity within the batch rather than on microscopic arrival priority. The stale quote is no longer something one trader can uniquely “snipe” just because its message was first by a hair.
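The timing point can be sketched by comparing serial processing with bucketed processing. This toy model assumes that a cancel received within a batch takes effect before that batch clears. It ignores uniform-price clearing and within-batch price competition entirely. The function names, message tuples and the 1,000 µs interval are hypothetical.

```python
from collections import defaultdict

def continuous_outcome(messages):
    """Continuous book: messages are handled one at a time in arrival order,
    so whichever of the buy or the cancel lands first decides the stale ask."""
    _, first_kind = min(messages, key=lambda m: m[0])
    return "sniped" if first_kind == "buy" else "cancelled"

def batch_outcome(messages, interval_us):
    """Frequent-batch-auction sketch: messages are bucketed into fixed intervals
    and arrival order inside a bucket is ignored. Simplifying assumption:
    a cancel received in a bucket takes effect before that bucket clears."""
    buckets = defaultdict(set)
    for t_us, kind in messages:
        buckets[int(t_us // interval_us)].add(kind)
    first_bucket = buckets[min(buckets)]  # earliest batch containing any message
    return "cancelled" if "cancel" in first_bucket else "sniped"

# (arrival time in microseconds, message kind); in race_a the buy is 10 us ahead.
race_a = [(101.0, "buy"), (111.0, "cancel")]
race_b = [(111.0, "buy"), (101.0, "cancel")]
print(continuous_outcome(race_a), continuous_outcome(race_b))        # sniped cancelled
print(batch_outcome(race_a, 1000.0), batch_outcome(race_b, 1000.0))  # cancelled cancelled
```

Under continuous processing, swapping the arrival order flips the outcome. Inside a single batch, it does not. If the two messages straddle a batch boundary, the earlier one still decides the outcome in this sketch, so the batch length relative to latencies matters.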

Speed bumps do a more targeted version of the first idea. They do not eliminate continuous trading, but they alter timing enough to reduce the chance that stale quotes are executed before the venue can observe and incorporate related market information. IEX’s design is the best-known case: a physical 350-microsecond delay on incoming messages, combined with logic such as the Crumbling Quote Indicator, is intended to protect against executions at prices judged likely to be stale. The mechanism is not “make everything slow” in the abstract. It is “create enough time for quote updating and cross-venue information to catch up before execution becomes final.”
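The timing condition behind a speed bump can be reduced to one inequality. The sketch below is not a model of IEX's actual order types or of the Crumbling Quote Indicator logic. It assumes that the taker's order is held for a fixed delay, while the venue sees related market data without delay and immediately reprices the resting quote. The function name and the example times are hypothetical; the 350 µs default is the delay mentioned above.

```python
def stale_quote_protected(taker_arrival_us, venue_data_arrival_us, bump_us=350.0):
    """Speed-bump sketch with hypothetical parameters.

    All times are microseconds after the public signal. The taker's order
    reaches the venue at taker_arrival_us and is then held for bump_us.
    The venue sees related market data at venue_data_arrival_us, without
    delay, and is assumed to reprice the resting quote immediately.
    Returns True if the reprice happens before the delayed order is matched.
    """
    return venue_data_arrival_us < taker_arrival_us + bump_us

print(stale_quote_protected(5.0, 200.0))               # True: 200 < 5 + 350
print(stale_quote_protected(5.0, 200.0, bump_us=0.0))  # False: the order is matched at 5
```

The inequality makes the calibration issue explicit. The delay helps only if it is longer than the time the venue needs to learn about the price move.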

A worked example makes the distinction concrete. Imagine a stock whose best offer on one venue is 100.01, while a public signal implies the fair price has just moved to 100.03. In a standard continuous book, a fast trader can immediately send a buy order to lift 100.01 while the seller’s cancel is still in flight. Under a short batch auction, both the cancel and the buy order may fall into the same batch, so the old offer cannot be picked off individually just by arriving 10 microseconds earlier. Under a speed-bump venue, the incoming buy order is delayed. This gives the venue time to ingest related market data and, depending on the design, reprice or protect the stale quote before the buy reaches the matching engine. The mechanisms differ, but both are trying to break the same causal chain.

These interventions are not perfect substitutes. Batch auctions more directly attack the logic of time-priority races. Speed bumps are narrower and can coexist with continuous books, but their effectiveness depends on calibration, routing behavior, and which message types are delayed versus exempted.

What complications arise when applying latency-arbitrage models to fragmented, multi-venue markets?

The cleanest latency arbitrage models are deliberately spare. Real markets are not.

For one thing, fragmented markets create cross-venue complications. In U.S. equities, the relevant quote can depend on the national best bid and offer, direct feeds, and venue-specific delays. A stale quote on one venue may reflect a price move observed first on another. That means the race is not just trader versus liquidity provider on one exchange; it can be a network problem involving feed speeds, routing logic, and protection rules across venues.
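In a fragmented market, each side's arrival time decomposes into "see the move" plus "reach the venue". The sketch below assumes the move prints first on venue A, while the stale quote rests on venue B. The function name and all latency values are hypothetical; for instance, the liquidity provider is assumed to watch a slower feed than the sniper.

```python
def exposure_window_us(lp_feed_us, lp_cancel_route_us, sniper_feed_us, sniper_order_route_us):
    """Cross-venue sketch: a price move prints on venue A at t = 0.

    Each participant observes it after its feed latency from venue A, then
    needs its route latency to reach venue B, where the LP's quote rests.
    A positive result is how many microseconds earlier the sniper's order
    lands than the LP's cancel, i.e. how long the stale quote is exposed.
    """
    lp_cancel_lands = lp_feed_us + lp_cancel_route_us
    sniper_order_lands = sniper_feed_us + sniper_order_route_us
    return lp_cancel_lands - sniper_order_lands

print(exposure_window_us(lp_feed_us=50.0, lp_cancel_route_us=20.0,
                         sniper_feed_us=5.0, sniper_order_route_us=10.0))  # 55.0
```

Both legs matter: a faster feed or a faster route can open or close the window. This is why feed design and routing logic, not just matching speed, enter the analysis.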

This is one reason empirical and regulatory attention has focused on specific exchange designs. The SEC’s approval materials for IEX and its proceedings around other delayed venues such as EDGA’s proposed LP2 mechanism show that the details matter: whether delays are symmetric or asymmetric, whether market data are delayed, whether certain pegged or hidden orders update without delay, and whether quotes remain protected all affect the resulting incentives. A model that says “add delay” without specifying where in the message path the delay sits is incomplete.

Another complication is that the line between latency arbitrage and informed trading is not always sharp in data. A trader reacting very quickly to a public signal may look observationally similar to a trader whose superior technology lets it infer information slightly sooner. The FCA paper explicitly notes this empirical challenge. Models help by drawing a conceptual line (symmetric public information versus asymmetric private information), but the real world often contains mixtures of both.

Finally, equilibrium adaptation matters. If you eliminate one stale-quote mechanism, participants may migrate to another venue, change order types, or alter quoting behavior. The NBER model of exchange competition emphasizes that even when a socially better design exists, incumbents may not voluntarily converge to it because the current system supports exchange revenues from speed-sensitive services. So the question is not only whether a market design works technically. It is whether the surrounding competitive environment gives anyone a reason to adopt it.

Does latency arbitrage appear in crypto and other non-equity venues (MEV, transaction ordering)?

| Market type | Priority mechanism | Latency source | Arbitrage channel | Typical mitigation |
|---|---|---|---|---|
| Centralized LOB | Matching-engine timestamps | Exchange feed and processing delay | Stale-quote sniping | Batch auctions or speed bumps |
| Blockchain DEX | Block / transaction ordering | Mempool propagation and miner ordering | Frontrunning via gas bids (PGAs) | Ordering rules, sealed bids |
| AMM / RFQ | Request sequencing or peg rules | Dealer response or router latency | Quote hit before update | RFQ batching, dealer incentives |

Although the canonical models were developed in electronic equity markets, the underlying logic is broader: whenever ordering priority and update latency jointly determine who can trade against stale state, latency-arbitrage-like behavior can emerge.

Blockchain markets offer a useful comparison. In decentralized exchanges, bots compete to exploit public, visible opportunities created by pending transactions and price discrepancies. The details differ (instead of exchange matching-engine priority, the contest may run through block ordering and transaction fees), but the structural resemblance is real. The Flash Boys 2.0 paper describes priority gas auctions in which bots bid for ordering priority to capture arbitrage and frontrunning opportunities. That is not the same mechanism as a continuous limit order book, and the analogy fails if pushed too far: miners or validators, mempools, and block inclusion create a different control structure than a centralized exchange. But the analogy does explain one thing well: if a public opportunity exists briefly and ordering is scarce, participants will spend resources to win priority.
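The rent-dissipation logic of a priority auction can be sketched as an ascending fee contest. This is a stylized toy, not how any particular chain, block builder or the Flash Boys 2.0 measurements work. It makes three assumptions:

- Every bot values the opportunity equally.
- Bots take turns outbidding the current top fee by a fixed increment while they can still profit.
- The highest fee is ordered first.

Values are integer fee units. The function name and all numbers are hypothetical.

```python
def priority_gas_auction(value, bots, increment):
    """Stylized PGA: bots take turns raising the top fee by `increment` while
    the new fee still leaves them a non-negative profit. The highest fee is
    ordered first and captures the opportunity.

    Returns (winner, fee_paid, winner_net_profit), all in integer fee units.
    """
    fee, leader = 0, None
    raised = True
    while raised:
        raised = False
        for bot in bots:
            if bot != leader and fee + increment <= value:
                fee += increment   # outbid the current leader
                leader = bot
                raised = True
    return leader, fee, value - fee

print(priority_gas_auction(100, ["bot_a", "bot_b"], 7))  # ('bot_b', 98, 2)
```

With two equally motivated bots, almost the entire 100-unit opportunity is paid away in fees. The race for ordering priority transfers the rent to whoever controls ordering, much as speed spending absorbs it in the exchange setting.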

That broader perspective helps isolate what is fundamental and what is conventional. The fundamental part is the race for priority over a short-lived public opportunity. The conventional part is how a specific market encodes priority: timestamps in a limit order book, queue position in a venue router, or fee-based transaction ordering in a blockchain.

When do latency-arbitrage models fail or provide limited insight?

Latency arbitrage models are powerful because they isolate one mechanism cleanly. They are also limited for the same reason.

They become less informative if public-information shocks are not the main driver of short-term price moves. In markets where private information, inventory pressure, or search frictions dominate, stale-quote sniping may be only a small part of trading costs. Similarly, if quote updates are already fast relative to the information process, the stale-quote window may be too small to matter much.

They also depend on the exact processing architecture. A model built for a continuous central limit order book should not be transferred uncritically to dealer RFQ markets, periodic auctions, or on-chain AMMs. The underlying question (who gets priority over stale state) still matters, but the exact equilibrium logic changes with the mechanism.

And there is a normative limit. Not every speed investment is socially wasteful. Some technology improves price discovery, routing efficiency, and resilience. The models are most convincing when they isolate a rent that survives even under symmetric public information. That is the part easiest to call wasteful, because the speed contest reallocates surplus without obviously improving the informational content of prices. But once speed also improves other margins, the welfare analysis becomes more conditional.

Conclusion

Latency arbitrage models explain a simple but consequential idea: if public information updates the economic value of an asset before the market can update the executable quote, then speed creates a race for stale prices. The profit comes not from knowing more, but from arriving first.

That insight turns market microstructure into a chain of cause and effect. Continuous serial processing creates winner-take-all races. Those races justify heavy investment in speed, and that investment can be privately rational even when it is socially wasteful. Change the timing rule (with batch auctions, speed bumps, or other mechanisms) and you change the rent.

The memorable version is this: latency arbitrage is what happens when the market’s clock matters more than anyone’s valuation model.

Frequently Asked Questions

Why can a trader profit from latency arbitrage without having better information?

Because the rent comes from who reaches the exchange first, not from owning superior information. Public information makes a posted quote stale, and a faster trader can execute against that stale quote before the liquidity provider’s cancel or repricing arrives. The faster trader captures the gap between the stale quote and the new fundamental value.

Why do continuous limit order books create speed races?

Continuous limit order books give a discontinuous, winner-take-all payoff to arriving first. The first taker captures the whole stale-quote profit while later entrants get little or nothing, so even tiny latency advantages can have large private value and drive an arms race. Exchanges can amplify this by selling speed services such as co-location and proprietary feeds, which creates a second layer of incentives.

How do researchers detect and count latency-arbitrage races?

Researchers use full exchange message data, including failed cancels and failed trade attempts. They apply an "information horizon" timing rule to group messages that plausibly respond to the same public signal. The choice of timing threshold and inclusion rules matters, so empirical counts report sensitivity analyses rather than a single definitive number.

How do batch auctions and speed bumps differ?

They attack the problem differently. Batch auctions remove microsecond priority by grouping messages in short time buckets and clearing them together, which eliminates the tiny first-mover discontinuity. Speed bumps impose short delays on incoming orders (IEX’s 350-microsecond delay is the best-known example). The delay gives venues time to observe and act on related information without abandoning continuous trading.

Do batch auctions or speed bumps eliminate latency arbitrage?

Not necessarily. Elimination depends on market architecture, calibration, and participant responses. Batch auctions or well-calibrated speed bumps can substantially reduce time-priority rents. However, fragmentation, order-type exemptions, routing changes, and migration of strategies can blunt or shift the effects, so interventions typically reduce rather than fully eliminate speed-sensitive rents.

How large are the costs of latency arbitrage?

Estimates vary by setting. The FCA/BIS study of London Stock Exchange message data finds that races are frequent and economically meaningful, and that latency arbitrage contributes materially to effective spreads and price impact. It estimates that eliminating latency arbitrage could reduce investors’ cost of liquidity by about 17% in that sample. Any exact number is context-dependent and sensitive to measurement choices.

Does latency arbitrage occur outside equity markets, for example in crypto?

Yes. Any market where ordering priority and update latency determine who can act on brief public opportunities can produce analogous behavior. Decentralized exchanges exhibit related dynamics (MEV and priority gas auctions), in which bots pay for transaction-ordering priority to extract fleeting arbitrage. The technical implementation and the mitigation options differ from those of centralized order books.

When are latency-arbitrage models least informative?

The models are least informative when short-term moves are driven mainly by private information, inventory pressure, or search frictions. They are also less useful when the market mechanism (RFQ, periodic auctions, AMMs) does not create time-priority races, or when quote-update latency is negligible relative to the information process. Applicability must be checked case by case.
