What is Latency Arbitrage?

Learn what latency arbitrage is, how stale quotes create speed races, why it matters for market structure, and how exchanges try to reduce it.

Introduction

Latency arbitrage is the practice of profiting from tiny delays in how market information travels and how orders are updated. The puzzle is simple: if markets are electronic and prices update in fractions of a second, why do stale prices exist at all? The answer is that a market is not a single brain with one instant view of the world. It is a network of exchanges, data feeds, routers, and trading firms, each seeing and acting on information at slightly different times.

Those slight timing differences create moments when one participant knows a displayed price is already obsolete, while another participant is still forced to honor it. In that moment, speed itself becomes the edge. The trade is often described as “sniping” or “picking off” a stale quote, and the core mechanism is mechanical rather than deeply informational: the winner is often just the party that reaches the exchange first.

This is why latency arbitrage matters far beyond a niche dispute about high-frequency trading. It changes the economics of liquidity provision, because anyone posting a quote must worry that the quote can become stale before it can be canceled. It changes the economics of market access, because firms then spend heavily on co-location, faster networks, proprietary data feeds, and optimized hardware. And it changes market design debates, because the question stops being merely whether some traders are fast and becomes whether the rules themselves are rewarding speed in a way that produces useful price discovery or mostly a transfer from slower traders to faster ones.

How does latency arbitrage create a race to hit stale quotes?

Here is the simplest way to think about latency arbitrage. A market maker posts an offer to sell at 100.00. Somewhere else in the market, new information arrives: perhaps a futures price jumps, perhaps another venue's best offer moves to 100.01, perhaps a correlated asset changes enough to imply that 100.00 is too cheap. The market maker wants to cancel or reprice the stale offer. A latency arbitrageur wants to buy at 100.00 before that cancellation arrives.

That creates a race with two messages aimed at the same exchange. One message says, in effect, “remove my stale quote.” The other says, “trade against that quote before it disappears.” If the aggressor arrives first, the stale quote is executed and the arbitrageur can often unwind almost immediately at the new, better price elsewhere or later in the same market. If the cancel arrives first, the opportunity vanishes.
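The race can be sketched as an exchange that processes messages strictly in arrival order against a single resting offer. The `Message` type, the `resolve_race` function, and the microsecond timings below are hypothetical illustrations, not any exchange's actual matching logic.

```python
from dataclasses import dataclass

@dataclass
class Message:
    sender: str
    kind: str          # "cancel" (quote owner) or "take" (aggressor)
    arrival_us: float  # arrival time at the matching engine, in microseconds

def resolve_race(messages):
    """Process messages in arrival order against one resting offer.

    The first message to arrive decides the quote's fate; every later
    message finds the quote already gone. Ties keep input order because
    Python's sort is stable.
    """
    for msg in sorted(messages, key=lambda m: m.arrival_us):
        if msg.kind == "take":
            return msg.sender, "executed"
        if msg.kind == "cancel":
            return msg.sender, "canceled"
    return None, "resting"

# Hypothetical race: the aggressor's order lands 2.5 microseconds before the cancel.
race = [
    Message("market_maker", "cancel", arrival_us=12.0),
    Message("arbitrageur", "take", arrival_us=9.5),
]
print(resolve_race(race))  # ('arbitrageur', 'executed')
```

Nothing about the price or the traders' views enters the outcome; only the arrival timestamps do, which is the sense in which the contest is mechanical.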

The key point is this: latency arbitrage is not fundamentally about superior valuation; it is about being first to a price that is already known to be wrong. That is why debates around it are so intense. In ordinary arbitrage, a trader helps align prices by discovering a discrepancy and taking risk against it. In latency arbitrage, the discrepancy is often obvious and fleeting, and the main scarce resource is transmission time.

This also explains why the strategy is tightly linked to fragmented markets. If all trading happened in one place, and if quotes updated there instantly and atomically, there would be fewer stale prices to pick off. But modern markets are distributed across venues and data channels. Even on a single venue, participants do not all learn the same thing at the same instant, and cancellation requests do not arrive simultaneously. The market is synchronized only approximately.

Why do stale quotes persist in electronic markets?

At first glance, stale quotes seem like a design bug. In practice, they are a consequence of combining continuous trading with real-world communication limits. Information must be observed, processed, routed, and acted on. Each step takes time, and those times differ across firms and venues.

Suppose a change in one market implies that a quote resting in another market should move. The liquidity provider must first detect the change. Detection may come through a consolidated feed, a proprietary direct feed, a futures market signal, or an internal model using several inputs. Then the provider must decide to cancel or update the quote, transmit that message, and wait for the exchange to process it. A faster rival can sometimes detect the same change sooner, route an aggressive order faster, or simply be physically closer to the matching engine.

That sequence matters because a displayed quote is a commitment until the exchange processes a cancellation. From the liquidity provider's perspective, the dangerous interval is not just “I have stale information.” It is “I know I am stale, but the exchange has not yet removed my quote.” Latency arbitrage lives inside that interval.
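The detect, decide, transmit, and process steps above can be turned into a small latency budget. This is a minimal sketch: the stage names and every microsecond value are hypothetical, chosen only to show how the dangerous interval opens when the provider detects the change and closes when the exchange processes its cancel.

```python
# Hypothetical per-stage latencies in microseconds, measured from the moment
# the price-relevant event occurs. Real values vary widely by firm and venue.
PROVIDER = {"detect": 4.0, "decide": 2.0, "transmit": 6.0, "exchange_process": 1.0}
SNIPER = {"detect": 2.5, "decide": 0.5, "transmit": 5.0, "exchange_process": 1.0}

def processed_at(event_us, stages):
    """Time at which a firm's message takes effect at the exchange."""
    return event_us + sum(stages.values())

def dangerous_interval(event_us, provider, sniper):
    """Return (knows_stale_at, quote_removed_at, picked_off).

    Between the first two values the provider knows its quote is stale,
    but the exchange still treats it as a live commitment.
    """
    knows_stale_at = event_us + provider["detect"]
    quote_removed_at = processed_at(event_us, provider)
    picked_off = processed_at(event_us, sniper) < quote_removed_at
    return knows_stale_at, quote_removed_at, picked_off

print(dangerous_interval(0.0, PROVIDER, SNIPER))  # (4.0, 13.0, True)
```

Here the provider knows its quote is wrong from 4 µs onward but cannot remove it until 13 µs, while the faster rival's order takes effect at 9 µs.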

The same structure appears across products. In equities, a futures move may imply that quotes in an ETF or individual stocks are stale. In foreign exchange, a move on one venue can leave another venue's quote briefly behind. In crypto, where exchanges are even more fragmented and no single national best quote exists, cross-venue delays can create similar races, though the precise institutional details differ. The underlying mechanism is the same: price-relevant information updates asynchronously, and someone fast enough can trade before the slower side finishes adjusting.

What is a concrete example of latency arbitrage in action?

Imagine an index future jumps because new macro information hits. A market-making firm quoting a large ETF has models that use the future as an input, so it now wants to raise its ETF offer by one cent. At nearly the same time, a fast trading firm sees the same futures move and infers that the ETF offer resting on one exchange is stale.

The market maker sends a cancel message for its old ETF offer. The fast trader sends a buy order to hit that offer. These messages travel through networks with different routes and delays, arrive at the exchange in some order, and are processed by the exchange's systems. If the buy order reaches the exchange first, the trader purchases at the stale offer, then may immediately sell the ETF at a slightly higher price, hedge with the future, or unwind on another venue where prices have already adjusted. The profit on that single event may be tiny, perhaps a fraction of a tick or a few dollars. But if this happens hundreds of times per day across many symbols, the aggregate can be large.
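The arithmetic of the example is simple enough to write down. The share count, the one-cent price move, and the daily frequencies below are hypothetical, and the sketch ignores fees and hedging slippage unless they are passed in explicitly.

```python
def race_profit_cents(shares, stale_price_cents, exit_price_cents, costs_cents=0):
    """Profit from buying at a stale offer and exiting at the updated price.

    Prices are in integer cents to avoid floating-point rounding.
    costs_cents lumps together fees, hedging slippage, etc. (0 by default).
    """
    return shares * (exit_price_cents - stale_price_cents) - costs_cents

# Hypothetical: buy 200 shares at the stale 100.00 offer, exit at 100.01.
per_event = race_profit_cents(200, 10_000, 10_001)
# Hypothetical scale: 300 wins per symbol per day across 50 symbols.
daily = per_event * 300 * 50
print(per_event / 100, daily / 100)  # 2.0 30000.0
```

A two-dollar win is trivial on its own; repeated thousands of times a day it becomes a business worth investing in.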

Notice what the fast trader did not need. It did not need a long-term view on the ETF. It did not need deep fundamental research. It needed a reliable signal that the old offer was stale and enough speed to outrun the cancel. That is why latency arbitrage sits at the boundary between price efficiency and rent extraction. The trade helps remove stale prices from the book, but it does so by imposing losses on whoever posted those quotes.

How do researchers measure and detect latency arbitrage?

| Data type | What it shows | Required precision | Main limitation | Best use |
|---|---|---|---|---|
| Order-book snapshots | displayed quotes and trades | millisecond timestamps | misses failed cancels | broad liquidity trends |
| Exchange message data | full inbound/outbound messages | microsecond timestamps | restricted access | identify winners and losers |
| Consolidated SIP feeds | aggregated top-of-book | millisecond-level | latency and ordering variance | cross-venue monitoring |
| Proprietary direct feeds | faster venue updates | sub-millisecond | partial market view | low-latency signals |

Latency arbitrage is easy to describe but harder to measure than many readers expect. Ordinary trade-and-quote datasets show what traded and what was displayed, but they often do not show the failed attempts that lost the race. That missing piece matters. If you can only see the winning trade and the final state of the book, you may infer that a stale quote was hit, but you cannot directly see the loser who tried to cancel too late.

The strongest empirical evidence therefore comes from exchange message data rather than ordinary order-book snapshots alone. Message data include the full back-and-forth between participants and the exchange, including unsuccessful trade attempts and unsuccessful cancel requests. That makes it possible to identify a race as a cluster of messages aimed at the same resting liquidity in a very short time window, and to observe both the winner and the loser.
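The idea of a race as a cluster of messages aimed at the same resting liquidity can be sketched as a grouping pass over message data. The `Msg` fields, the 500-microsecond default window, and the rule "two or more firms, at least one success and at least one failure" are hypothetical simplifications for illustration; they are not the cited study's exact methodology, and real take orders can sweep several price levels.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Msg:
    time_us: float   # exchange timestamp, microseconds
    firm: str
    symbol: str
    side: str        # side of the resting quote being targeted: "bid" or "ask"
    price: int       # targeted price level, in ticks
    kind: str        # "take" or "cancel"
    success: bool    # whether the exchange executed or accepted the message

def find_races(messages, window_us=500.0):
    """Return (level, cluster) pairs where 2+ firms targeted the same price level
    within window_us and at least one message succeeded while another failed."""
    by_level = defaultdict(list)
    for m in messages:
        by_level[(m.symbol, m.side, m.price)].append(m)

    races = []
    for level, level_msgs in by_level.items():
        level_msgs.sort(key=lambda m: m.time_us)
        i = 0
        while i < len(level_msgs):
            # Collect every message within window_us of the cluster's first message.
            j = i
            while j < len(level_msgs) and level_msgs[j].time_us - level_msgs[i].time_us <= window_us:
                j += 1
            cluster = level_msgs[i:j]
            outcomes = {m.success for m in cluster}
            if len({m.firm for m in cluster}) >= 2 and outcomes == {True, False}:
                races.append((level, cluster))
                i = j  # messages in a detected race are not reused
            else:
                i += 1
    return races

msgs = [
    Msg(1000.0, "HFT_A", "XYZ", "ask", 10000, "take", True),
    Msg(1004.0, "MM_B", "XYZ", "ask", 10000, "cancel", False),  # cancel arrived too late
    Msg(1007.0, "HFT_C", "XYZ", "ask", 10000, "take", False),   # lost the race
    Msg(9000.0, "MM_B", "XYZ", "bid", 9999, "cancel", True),    # uncontested cancel
]
for level, cluster in find_races(msgs):
    winner = next(m.firm for m in cluster if m.success)
    print(level, winner, [m.firm for m in cluster])
# ('XYZ', 'ask', 10000) HFT_A ['HFT_A', 'MM_B', 'HFT_C']
```

The failed cancel and the failed take are exactly the records that trade-and-quote snapshots omit, which is why this kind of detection needs message data.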

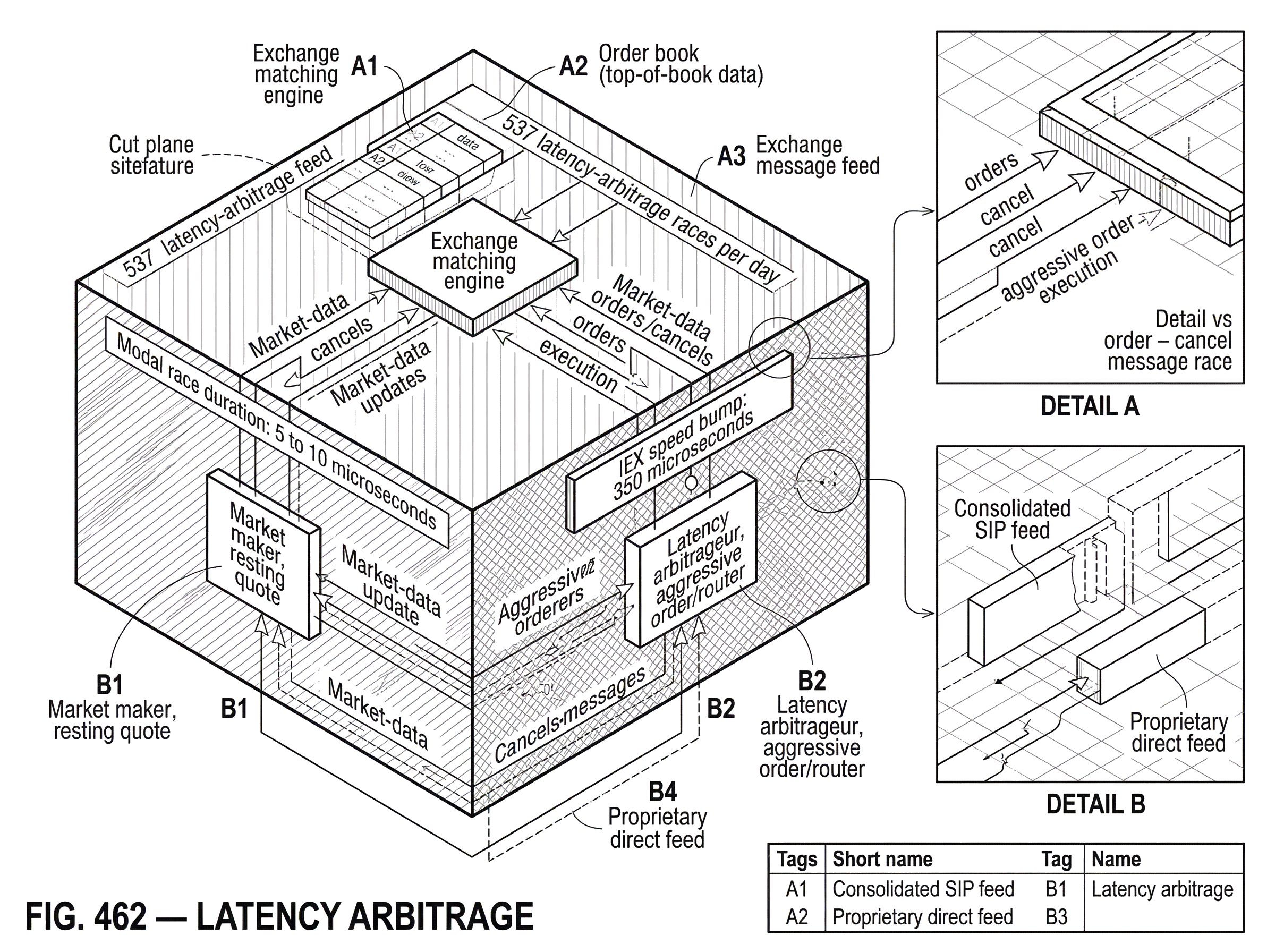

A widely cited study using London Stock Exchange message-level data for FTSE 350 stocks during a nine-week period in 2015 finds that these races were frequent, fast, and concentrated. For FTSE 100 stocks, the average symbol experienced about 537 latency-arbitrage races per day, roughly one per minute. The modal race duration was 5 to 10 microseconds, and the average duration was about 79 microseconds. About 22% of FTSE 100 trading volume and 21% of trades occurred in such races.
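A quick back-of-the-envelope check shows what these averages imply. The sketch assumes a continuous session of about 8.5 hours (08:00 to 16:30 London time) and treats races as evenly spread through the day, which real races are not; the two race statistics are taken from the figures above.

```python
RACES_PER_DAY = 537          # average per FTSE 100 symbol, from the figures above
AVG_RACE_US = 79             # average race duration in microseconds
TRADING_MINUTES = 8.5 * 60   # assumption: 08:00-16:30 continuous session

races_per_minute = RACES_PER_DAY / TRADING_MINUTES
mean_gap_seconds = TRADING_MINUTES * 60 / RACES_PER_DAY
time_in_races_ms = RACES_PER_DAY * AVG_RACE_US / 1000

print(f"{races_per_minute:.2f} races/min, one every {mean_gap_seconds:.0f} s")
print(f"~{time_in_races_ms:.1f} ms per symbol-day spent inside races")
# 1.05 races/min, one every 57 s
# ~42.4 ms per symbol-day spent inside races
```

The contrast is striking: on these averages, races occupy only about 42 milliseconds of a symbol's trading day, yet roughly a fifth of trading volume occurs in them.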

Those numbers are useful because they change the argument from anecdote to mechanism. If races were rare edge cases, the market-design implications would be limited. But if a substantial share of volume is tied to microsecond contests over stale quotes, then the possibility of being picked off becomes part of the normal cost of supplying liquidity.

The same study also finds that participation is highly concentrated: the top six firms account for over 80% of race wins and losses. That concentration is exactly what one would expect in a technology-intensive contest. Once speed matters at the level of microseconds, only a small set of firms can justify the infrastructure needed to compete effectively.

How does latency arbitrage act as a ‘tax’ on market makers and displayed liquidity?

| Provider strategy | Direct cost | Displayed liquidity effect | Market impact |
|---|---|---|---|
| Widen spreads | reduced trade volume | less attractive quotes | higher trading costs |
| Reduce displayed size | fewer shown shares | shallower book | worse visible depth |
| Cancel aggressively | more message traffic | less firm quotes | more execution uncertainty |
| Invest in speed | large fixed expenditure | faster access for some | arms race and redistribution |

A useful way to see the economics is to ask what a posted quote really means under the threat of latency arbitrage. In a frictionless model, a market maker posts a bid and ask to earn the spread while managing inventory and adverse selection risk. But if the market maker is regularly picked off when prices move, then the quoted spread has to cover an additional expected loss: the loss from stale quotes that cannot be canceled in time.
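One way to make the extra expected loss concrete is a stylized break-even condition. Assume, hypothetically, that benign orders arrive at rate λ_invest and each pays the half-spread h, while public price jumps of size J arrive at rate λ_jump and the provider loses the race with probability p, losing J − h each time. Setting λ_invest · h = λ_jump · p · (J − h) and solving for h gives the half-spread the provider needs just to break even. The model and its parameters are illustrative assumptions; it ignores inventory risk, fees, and ordinary informed trading.

```python
def breakeven_half_spread(lam_invest, lam_jump, jump, p_picked_off):
    """Half-spread h solving  lam_invest * h = lam_jump * p_picked_off * (jump - h).

    lam_invest:   rate of benign orders that pay the half-spread (per second)
    lam_jump:     rate of public price jumps of size `jump` (per second)
    p_picked_off: probability the provider loses the race when a jump occurs
    Assumes jump > h, so each pick-off is a loss of (jump - h).
    """
    loss_rate = lam_jump * p_picked_off
    return loss_rate * jump / (lam_invest + loss_rate)

# Hypothetical parameters, with jump sizes measured in ticks.
for p in (0.0, 0.5, 0.9):
    print(p, round(breakeven_half_spread(1.0, 0.2, 2.0, p), 3))
# 0.0 0.0
# 0.5 0.182
# 0.9 0.305
```

With no pick-off risk the break-even half-spread is zero in this toy setting; as the chance of losing races rises, the quote must widen to pay for the expected stale-quote losses.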

This is why some researchers describe latency arbitrage as a tax on trading or, more precisely, a tax on displayed liquidity. The same LSE-based evidence estimates that average race stakes are small per event, around half a tick or roughly £2 on average, but that these add up to a latency-arbitrage tax of roughly 0.4 to 0.5 basis points of trading volume. The paper's main estimates suggest that races account for roughly one-third of effective spread and price impact, and that eliminating latency arbitrage through market-design changes could reduce liquidity costs by around 17% in that setting.
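Expressed as a formula, the tax is the total profit earned in races divided by total traded value, quoted in basis points. The sketch below assumes that definition; the race count and traded value are hypothetical round numbers, not the study's data, and only the roughly £2 average stake comes from the figures above.

```python
def latency_arb_tax_bps(race_profits, total_traded_value):
    """Race profits as a share of all traded value, in basis points (1 bp = 0.01%)."""
    return race_profits / total_traded_value * 10_000

# Hypothetical: 1,000,000 races at an average stake of GBP 2,
# against GBP 50bn of total traded value.
profits = 1_000_000 * 2.0
tax = latency_arb_tax_bps(profits, 50e9)
print(f"{tax:.2f} bp")                      # 0.40 bp
# What that rate would mean on a hypothetical GBP 1m trade.
print(round(1_000_000 * tax / 10_000, 2))   # 40.0
```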

The exact numbers depend on sample, venue, and methodology, so they should not be treated as universal constants. But the mechanism is robust. If liquidity providers expect to be adversely selected by faster traders whenever prices move, they can respond in only a few ways: widen spreads, reduce displayed size, cancel more aggressively, or invest in speed themselves. None of those responses is free.

This is the deeper reason the speed race can persist even if per-trade profits look tiny. What matters is not just the arbitrageur's gain on each pickoff. It is the market-wide equilibrium response: everyone adapts around the possibility of being picked off. The cost then shows up not only in direct transfers, but also in less stable displayed liquidity and more spending on technology whose social value is contested.

Why do firms spend on co‑location, feeds, and routing in the latency arms race?

Once speed determines who captures stale quotes, firms have a clear incentive to shave microseconds wherever possible. That incentive drives the familiar arms race in co-location, optimized hardware, microwave and specialized fiber routes, exchange gateway engineering, and direct market-data feeds.

From first principles, the spending is easy to explain. If two firms are competing for the same transient opportunities, then reducing your end-to-end latency raises your probability of winning. If a profitable opportunity appears often enough, even tiny latency improvements can justify large fixed costs. The private incentive is strong because race outcomes are often winner-take-all at the message level: arriving first captures the trade; arriving second usually gets nothing.
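The private calculation can be sketched as an expected-value comparison. All inputs are hypothetical: a firm contests some number of races a year, each worth a small average amount, and a speed upgrade raises its win probability at a fixed annual cost.

```python
def net_value_of_speedup(races_per_year, avg_win, p_before, p_after, annual_cost):
    """Expected annual gain from raising the race-win probability, net of cost."""
    return races_per_year * avg_win * (p_after - p_before) - annual_cost

# Hypothetical: 10m contested races a year worth $2 each on average, and an
# upgrade costing $600k a year that lifts the win rate from 30% to 35%.
alone = net_value_of_speedup(10_000_000, 2.0, 0.30, 0.35, 600_000)
# If every rival makes the same upgrade, relative speed is unchanged and the
# win rate stays at 30%; the spending only prevents falling behind.
matched = net_value_of_speedup(10_000_000, 2.0, 0.30, 0.30, 600_000)
print(round(alone), round(matched))  # 400000 -600000
```

The same upgrade is worth buying for any single firm, yet across the industry it can net out to pure cost, which is the positional logic discussed next.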

The social value is less obvious. Some speed investments genuinely improve market function by narrowing the time it takes for new information to be reflected in prices. But beyond some point, the contest begins to look positional. If one firm spends to be faster, rivals must spend merely not to fall behind. Aggregate industry spending can then rise sharply even if the main effect is redistribution of the same stale-quote opportunities among a handful of firms.

That is why latency arbitrage is often discussed together with the phrase high-frequency trading arms race. The issue is not that all fast trading is suspect. Many algorithmic traders provide useful liquidity, manage inventory efficiently, and enforce cross-market consistency. The narrower claim is that a market design that makes some profits depend mainly on outrunning cancels may over-reward raw speed relative to informational or risk-bearing contributions.

What are common misconceptions about latency arbitrage?

A common misunderstanding is that latency arbitrage is simply ordinary arbitrage and therefore unambiguously beneficial. The truth is more nuanced. It does help align prices by removing stale quotes. But it also weakens incentives to display quotes in the first place, because displayed liquidity becomes more dangerous when others can hit it before it is updated.

Another misunderstanding is that the problem disappears if everyone just buys faster technology. That cannot be right in equilibrium. If all participants become faster together, relative speed differences still matter, and the system may simply move the contest to a smaller time scale. What matters is not absolute speed alone but whether the market's matching rules convert tiny speed differences into meaningful economic rents.

A third misunderstanding is that latency arbitrage is the same as informed trading. Informed trading is about having a better estimate of value. Latency arbitrage can use information, of course, but the information is often extremely short-horizon and mechanical: a signal that one venue or instrument has moved and another quote has not yet caught up. The edge lies less in knowing what the right price should be over the next hour than in knowing which currently displayed price is stale over the next few microseconds or milliseconds.

How do exchanges and regulators try to reduce latency arbitrage?

| Mitigation | How it works | Example | Main tradeoff | Best when |
|---|---|---|---|---|
| Speed bump | deliberate access delay | IEX 350 microsecond delay | added routing complexity | protecting pegged orders |
| Frequent batch auctions | discrete-time clearing | small periodic auctions | changes liquidity supply | reducing microsecond rents |
| Order-type protections | conditional execution rules | CQI and D-Limit | prediction errors possible | targeted protection needed |
| Quote timing rules | minimum quote lifetime | 50ms expiration proposal | limits rapid repricing | curbing quote-stuffing |

If the core problem is that continuous trading turns tiny timing differences into race outcomes, then mitigation usually works by changing either the timing or the firmness of quotes.

One approach is to insert a small, intentional delay so the exchange has time to update its view of the market or so incoming messages are treated more evenly. The best-known example is IEX's speed bump, implemented as roughly 350 microseconds of physical-path delay using coiled fiber. IEX describes this delay as giving the exchange time to ingest market data from other venues and update prices before executing trades, helping protect pegged orders from trading at stale prices. IEX also uses its Crumbling Quote Indicator, or CQI, a signal designed to detect when the best bid or offer is likely about to move. When the signal is on, certain IEX order types such as D-Peg and D-Limit become less aggressive, reducing the chance that resting liquidity is picked off.
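The logic of a symmetric speed bump can be reduced to one comparison. This is a simplified sketch, not IEX's architecture: it assumes the inbound aggressive order is delayed by about 350 microseconds while the exchange's own ingestion of outside prices, used to reprice pegged orders, is not. The function name and the timings are hypothetical.

```python
SPEED_BUMP_US = 350.0  # approximate delay described above

def pegged_order_picked_off(order_arrival_us, exchange_reprice_us, delay_us=SPEED_BUMP_US):
    """True if an aggressive order reaches matching before the exchange
    has repriced its pegged order to the new market price.

    Assumption: the inbound order is delayed by delay_us, while the
    exchange's own ingestion of outside prices is not.
    """
    return order_arrival_us + delay_us < exchange_reprice_us

# Hypothetical timings, in microseconds after the price change elsewhere.
print(pegged_order_picked_off(20.0, 300.0, delay_us=0.0))  # True: no bump, sniper wins
print(pegged_order_picked_off(20.0, 300.0))                # False: 370 > 300
```

The delay does not need to make the exchange fast in absolute terms; it only needs to exceed the gap between a fast trader's arrival and the exchange's own repricing.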

The SEC approved IEX as a national securities exchange and, in doing so, clarified that very small intentional access delays can still be compatible with protected automated quotations under U.S. market rules. Staff guidance at the time treated delays of less than one millisecond as de minimis. That does not settle the larger policy debate, but it shows that market design can be adjusted within existing exchange structures rather than only through broad prohibitions on fast trading.

Another approach is to move away from continuous-time priority and toward discrete-time clearing, often discussed as frequent batch auctions. The idea is not to stop electronic trading, but to replace constant micro-races with short intervals in which orders arriving within the same batch are treated as simultaneous. If time priority is coarsened enough, shaving a few microseconds no longer determines the outcome, and the rent from being infinitesimally faster can collapse.
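The contrast between continuous priority and discrete-time clearing can be sketched for a single resting quote. This is a toy model under stated assumptions: messages are grouped into fixed intervals of `batch_us`, and within an interval a cancel withdraws the quote before that batch clears. It ignores uniform-price and pro-rata allocation across many orders; the names and timings are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Msg:
    sender: str
    kind: str         # "cancel" (by the quote owner) or "take" (aggressive order)
    arrival_us: float

def continuous_outcome(msgs):
    """Strict time priority: the first message to arrive decides the quote's fate."""
    first = min(msgs, key=lambda m: m.arrival_us)
    return "sniped" if first.kind == "take" else "canceled"

def batch_outcome(msgs, batch_us):
    """Discrete-time clearing: arrival order inside an interval is ignored, and a
    cancel received during the interval removes the quote before it clears."""
    batches = {}
    for m in msgs:
        batches.setdefault(int(m.arrival_us // batch_us), []).append(m)
    for k in sorted(batches):
        kinds = {m.kind for m in batches[k]}
        if "cancel" in kinds:
            return "canceled"   # quote withdrawn before this batch clears
        if "take" in kinds:
            return "sniped"     # quote still live when this batch clears
    return "resting"

race = [Msg("arbitrageur", "take", 500.0), Msg("market_maker", "cancel", 505.0)]
print(continuous_outcome(race), batch_outcome(race, batch_us=1000.0))  # sniped canceled

# Races can still occur when a batch boundary separates the two messages.
boundary = [Msg("arbitrageur", "take", 995.0), Msg("market_maker", "cancel", 1002.0)]
print(batch_outcome(boundary, batch_us=1000.0))  # sniped
```

A five-microsecond lead decides the outcome under continuous priority but is irrelevant inside a one-millisecond batch; speed matters only when the messages straddle a batch boundary.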

A related theoretical line argues that existing order types do not let traders express enough about when they want execution to occur. If traders could specify execution timing more directly, exchanges might better synchronize order interaction and reduce opportunities to exploit asynchronous updates across venues. That framing is useful because it treats latency arbitrage not merely as a speed problem but as a market-design problem.

When do speed bumps or batch auctions reduce latency arbitrage, and when do they create new problems?

No mitigation is free. A speed bump can reduce sniping, but it can also complicate routing and interaction with other venues. If some participants or order types are exempt from delay while others are not, the design can create new asymmetries. Critics of asymmetric speed bumps have argued that they can create something like “last look” liquidity: quotes appear available, but privileged participants can cancel before incoming orders are allowed to execute. That is a different failure mode from the one the speed bump was supposed to solve.

Even symmetric protections rely on models. IEX, for example, warns that it cannot guarantee the timeliness or completeness of the data and calculations behind its crumbling-quote logic. That is unavoidable to some extent. Any system that tries to predict when a quote is unstable will sometimes trigger too often or not often enough. The practical question is whether the errors are smaller than the problem being mitigated.
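The tradeoff between triggering too often and not often enough can be illustrated with any scored signal and a threshold. The scores and outcomes below are hypothetical, and the sketch is not IEX's crumbling-quote model; it only shows how moving one threshold trades false alarms against misses.

```python
def signal_errors(scores, moved, threshold):
    """Count false alarms (signal on, quote did not move) and misses
    (signal off, quote did move) for a given threshold."""
    false_alarms = sum(1 for s, m in zip(scores, moved) if s >= threshold and not m)
    misses = sum(1 for s, m in zip(scores, moved) if s < threshold and m)
    return false_alarms, misses

# Hypothetical model scores and what the quote actually did next.
scores = [0.9, 0.8, 0.6, 0.4, 0.3, 0.1]
moved = [True, False, True, False, True, False]
for t in (0.2, 0.5, 0.7):
    print(t, signal_errors(scores, moved, t))
# 0.2 (2, 0)
# 0.5 (1, 1)
# 0.7 (1, 2)
```

A low threshold protects resting orders more often but makes them less aggressive when nothing was about to happen; a high threshold does the reverse.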

Frequent batch auctions also involve tradeoffs. They can weaken the incentive to spend on ever-faster speed, but they may change how liquidity is supplied, how large orders are sliced, and how prices evolve between auctions. A design that works well for highly liquid equities may work differently in thinner markets. The point is not that there is one universally correct architecture. The point is that once you understand the mechanism of latency arbitrage, you can see which design levers matter: how precisely time priority is enforced, how quotes are protected, and how much room there is to cancel stale interest before someone else can seize it.

How does latency arbitrage fit into broader market structure, fragmentation, and data issues?

Latency arbitrage sits next to several neighboring ideas, but it is not identical to them. It is a form of arbitrage because it exploits transient price inconsistency. It overlaps with market data because stale quotes often arise from differences between feeds, venues, and processing times. It overlaps with market fragmentation because the opportunity is larger when related prices live in different places. And it overlaps with algorithmic trading risk because the same ultra-fast infrastructure that enables efficient repricing can also amplify failures, as market history shows during episodes of data disruption, liquidity withdrawal, or automated-routing errors.

This broader context matters because it shows why the debate does not reduce to a moral judgment about fast traders. Modern electronic markets need automation. They benefit from quick incorporation of information, rapid hedging, and tight cross-market linkage. The harder question is whether the marginal microsecond of speed is improving those functions or mainly deciding who gets to trade against a quote that everyone already knows should be gone.

That is also why empirical measurement has been so important. Without message-level evidence, the argument can sound philosophical. With it, the picture becomes more concrete: races are common, durations are microscopic, participation is concentrated, and the economic effects, while tiny per event, can be meaningful in aggregate.

Conclusion

Latency arbitrage is best understood as a race to trade against stale quotes before they can be canceled. Its root cause is not greed or bad software in the abstract, but a structural fact about modern markets: information, orders, and cancellations do not arrive everywhere at the same instant.

Once a market rewards being first to an already-obsolete price, firms will invest heavily in speed, liquidity providers will protect themselves against being picked off, and market designers will look for ways to make tiny timing differences matter less. That is the lasting takeaway: latency arbitrage is not just a trading tactic. It is a window into how the rules of time, priority, and information shape the market itself.

Frequently Asked Questions

Latency arbitrage exploits tiny timing mismatches to trade against a quote that the arbitrageur already knows is stale; informed trading rests on having a superior estimate of fundamental value and taking risk on that view. Latency arbitrage’s edge is mechanical and short‑horizon (microseconds to milliseconds), whereas informed trading reflects private or analyzed information about longer‑run value.

Researchers need exchange message‑level data with microsecond‑accurate timestamps (showing orders, cancels, and failed attempts) because ordinary trade-and-quote snapshots typically hide the losing cancel attempts that define a race. That point is emphasized in the article and in studies using London Stock Exchange message data, which rely on optical TAP/message logs to identify both winners and losers.

In the London Stock Exchange message‑level study cited in the article, FTSE 100 symbols experienced on average about 537 latency‑arbitrage races per day, with a modal race duration of 5–10 microseconds and an average of about 79 microseconds; the same work reports that about 22% of trading volume and 21% of trades occurred in such races. These estimates come from the LSE message‑level analysis described in the article and underlying working papers, but the authors caution that results are sample‑ and venue‑specific.

Stale quotes persist because information, detection, decision and transmission all take non‑zero and heterogeneous time: a liquidity provider must detect a related price move, decide to cancel or update, transmit the cancel, and wait for the exchange to process it, while faster rivals can route aggressive orders to hit the resting quote before the cancel is processed. This sequence, not a software ‘bug’, is the structural cause described in the article.

Common exchange‑level fixes include small intentional delays (IEX’s roughly 350‑microsecond ‘speed bump’ and its Crumbling Quote Indicator) and coarsening time priority via frequent batch auctions; both aim to make microsecond speed less determinative. Each approach has trade‑offs: speed bumps can complicate routing or create new asymmetries if implemented asymmetrically, and batch auctions change how liquidity is supplied and sliced, so empirical and design details matter.

Liquidity providers typically respond by widening quoted spreads, reducing displayed sizes, canceling more aggressively, or investing in faster infrastructure (co‑location, direct feeds, microwave/fiber, gateway engineering); the article frames these responses as the reason latency arbitrage acts like a ‘tax’ on displayed liquidity.

Whether the arms race is socially beneficial depends on scale: some speed investments improve how quickly information is reflected in prices, but beyond a point spending becomes positional because rivals must match investments just to avoid falling behind, producing large aggregate private costs with debatable public benefit. The article makes this nuanced point and notes that only a few firms typically dominate race outcomes once microsecond advantages matter.

No mitigation is foolproof: IEX itself warns it cannot guarantee perfect timeliness of its crumbling‑quote logic, regulators have highlighted limits on allowed delays (SEC staff treated sub‑1ms delays as de minimis), and asymmetric or poorly designed delays can create new forms of privilege or ‘last look’ problems, so empirical evaluation and careful rules are required.

Related reading