What Is a Kalman Filter?

Learn what a Kalman filter is, how its predict-update mechanism works, and why traders use it for latent state estimation and time-series forecasting.

Introduction

A Kalman filter is a method for estimating an unobserved, changing state from noisy measurements, and that is exactly why it keeps showing up in quantitative trading. Prices are visible, but many of the things a trader actually wants are not: a latent trend, a fair value, a time-varying hedge ratio, a changing volatility regime, or the "true" spread between two assets after temporary noise is stripped away. The puzzle is that these hidden quantities matter most when the observations are least trustworthy. If you react directly to every price move, you mostly trade noise. If you smooth too aggressively, you miss the regime change that matters.

The Kalman filter sits in the middle of that tension. It assumes that there is some hidden state evolving through time and that each new observation is an imperfect glimpse of that state. From there it does something very practical: it updates the estimate recursively. That word, recursively, is the key operational advantage. At time t, the filter does not need to refit the entire history. It takes yesterday's estimate, today's observation, and the uncertainty around both, then produces a new estimate and a new uncertainty measure.

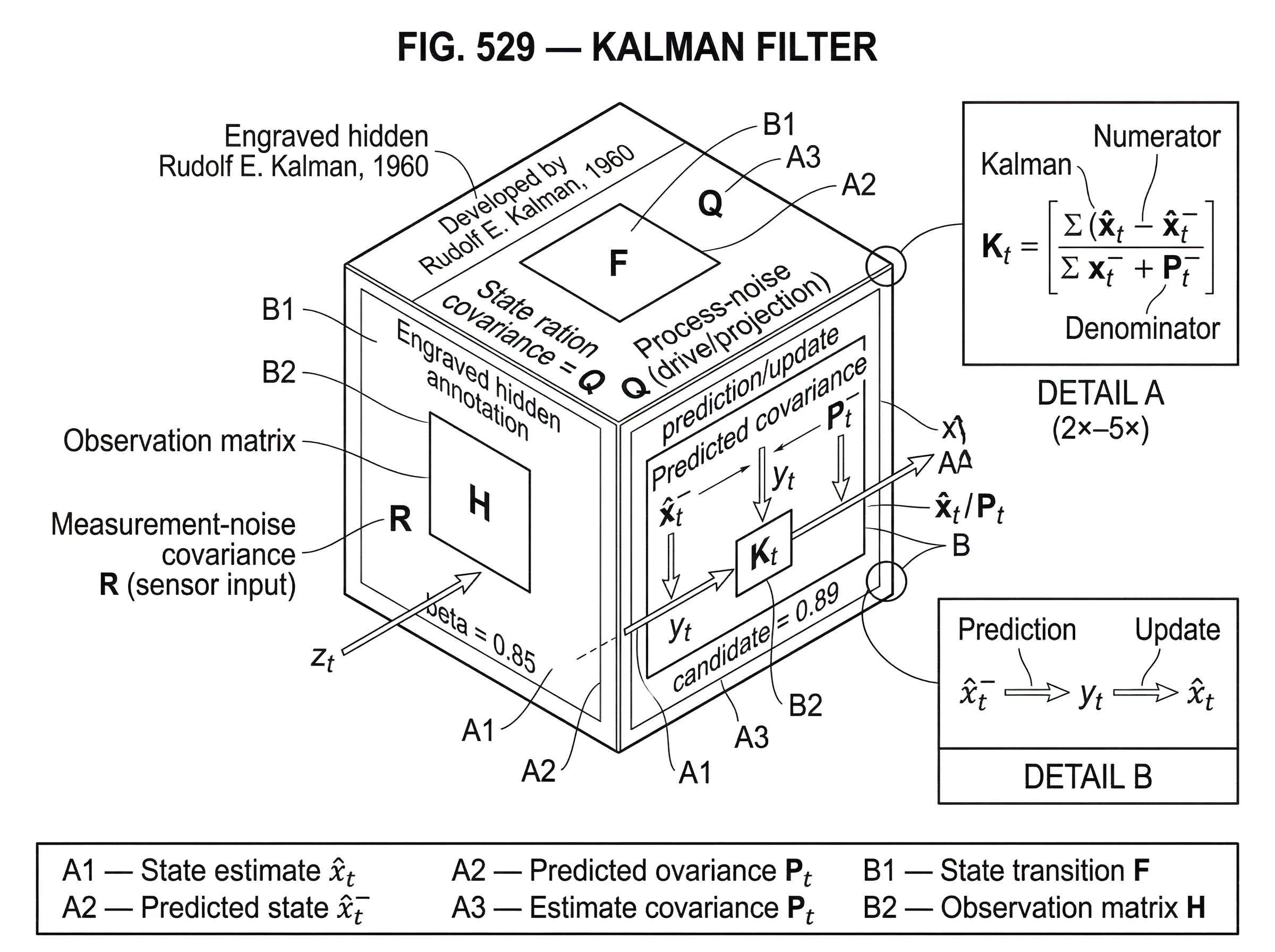

This is why the method has lasted. In its classical form, developed by Rudolf E. Kalman in 1960, it gives an optimal linear estimator in the minimum-mean-squared-error sense for linear state-space systems under the standard Gaussian noise assumptions. But even when markets violate those assumptions, the framework remains useful because it forces the right question: what is the hidden process, how does it evolve, and how noisy are my observations of it?

Why estimate a latent state instead of modeling observed prices?

The central distinction in a Kalman filter is between the state and the observation. The state is the hidden quantity that actually matters for prediction. The observation is what you can measure. In trading, observed prices are not always the best object to model directly because they mix signal and microstructure noise, temporary dislocations, and regime-dependent dynamics. A state-space model says: there is an underlying process driving the data, and we should estimate that process rather than treating raw observations as truth.

That sounds abstract until you make it concrete. Suppose you are trying to estimate a stock's short-term latent trend. You observe the last traded price, but that price can jump because of bid-ask bounce, a block trade, or a transient liquidity shock. If you treat each new print as the signal itself, your estimate whips around. If instead you posit a hidden trend state that moves gradually and a measurement process that adds noise, then each new price becomes evidence rather than command. The filter asks how much the new observation should change your belief, given both the estimated uncertainty of the state and the estimated noisiness of the measurement.

That mechanism generalizes. In pairs trading, the hidden state may be the time-varying regression coefficients linking two assets. In term-structure models, it may be latent factors. In market making, it may be a short-lived efficient price behind noisy quotes and trades. The recurring structure is the same: hidden state evolves, noisy observation arrives, estimate updates.

Kalman's original formulation emphasized the state as the minimal information needed about the past to predict the future behavior of the system. That is the right way to think about trading applications too. The point is not to store all history. The point is to summarize the relevant history in a compact, updateable object.

How does a Kalman filter's state-space model work?

| Relative size | Adaptivity | Trusts | Typical effect |
|---|---|---|---|
| R >> Q | Low adaptivity | Prior / model more | Smooth, slow updates |
| Q >> R | High adaptivity | Observations more | Rapid updates, may chase noise |
| Q ≈ R | Balanced adaptivity | Blend prior and data | Moderate responsiveness |

A standard discrete-time Kalman filter uses two equations. The first describes how the hidden state evolves. The second describes how observations are generated from that state.

Write the hidden state as x_t and the observed data as z_t. Then the model is:

x_t = F x_(t-1) + w_t

z_t = H x_t + v_t

Here F is the state transition matrix: it says how the hidden state moves from one step to the next. H is the observation matrix: it maps the hidden state into what you can measure. The term w_t is process noise, with covariance Q, and v_t is measurement noise, with covariance R.

These symbols matter because each one corresponds to a modeling choice. F encodes your belief about persistence or dynamics. H encodes what the market data actually reveal. Q says how much the hidden state itself can change unpredictably. R says how noisy the observations are. In practice, most of the art lives in Q and R. The filter equations are straightforward; deciding what uncertainty should mean in a market context is harder.

The most important intuition is that Q and R control adaptivity versus smoothness. If R is large relative to Q, the filter treats observations as noisy and changes slowly. If Q is large relative to R, it assumes the underlying state itself can move quickly, so it adapts more aggressively. This is not merely parameter tuning in the casual sense. It is the formal expression of a belief about whether surprising data mean "the world changed" or "the measurement was noisy."
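To make the Q-versus-R intuition concrete, here is a minimal sketch for the simplest possible case: a scalar model with F = H = 1. That model is an assumption chosen for illustration. The sketch iterates the covariance recursion until the gain settles. The function name `steady_state_gain` and the specific Q and R values are hypothetical.

```python
def steady_state_gain(q: float, r: float, n_iter: int = 1000) -> float:
    """Iterate the scalar covariance recursion (F = H = 1) until the gain settles."""
    p = 1.0  # arbitrary initial error variance; the limit does not depend on it
    k = 0.0
    for _ in range(n_iter):
        p_prior = p + q               # predict: uncertainty grows by Q
        k = p_prior / (p_prior + r)   # gain: prior variance relative to total variance
        p = (1.0 - k) * p_prior       # update: uncertainty shrinks
    return k


for q, r in [(0.01, 1.0), (1.0, 1.0), (100.0, 1.0)]:
    gain = steady_state_gain(q, r)
    print(f"Q/R = {q / r:>6.2f}  ->  steady-state gain = {gain:.3f}")
```

In this scalar setting the long-run gain depends only on the ratio Q/R. Scaling both by the same factor leaves the filter's responsiveness unchanged, and the gain climbs toward 1 as Q grows relative to R.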

That distinction is exactly what traders struggle with every day.

How does the Kalman filter prediction–update cycle work in practice?

The Kalman filter works in a repeating two-part cycle: prediction and update. This is the mechanism that makes the method recursive and computationally efficient.

In the prediction step, the filter pushes the previous state estimate forward through the dynamics model. If yesterday's estimate was x̂_(t-1) and the system evolves according to F, then today's prior estimate is x̂_t^- = F x̂_(t-1). The superscript - means before seeing today's measurement. At the same time, the filter updates the uncertainty of that estimate. If P_(t-1) is the covariance of the estimation error yesterday, then the predicted covariance is P_t^- = F P_(t-1) F' + Q, where F' is the transpose of F.

This covariance update matters as much as the state update. The filter does not just produce a point estimate. It keeps track of how uncertain that estimate is. That uncertainty expands during prediction because time passes and unobserved shocks may have moved the state.

Then comes the update step. A new observation z_t arrives. The filter compares it with what the current model predicted it should see, namely H x̂_t^-. Their difference, y_t = z_t - H x̂_t^-, is the innovation or residual. It is new information: the part of the observation that was not already implied by the prior estimate.

The filter then computes the Kalman gain K_t, which determines how strongly the innovation should affect the estimate. In standard form, K_t = P_t^- H' (H P_t^- H' + R)^(-1).

You do not need to memorize the formula to understand what it does. The numerator contains prior uncertainty projected into observation space. The denominator adds measurement noise. So the gain is high when the prior is uncertain and the measurement is reliable, and low when the prior is confident and the measurement is noisy.

With that gain in hand, the updated estimate becomes x̂_t = x̂_t^- + K_t y_t. The covariance is updated as well, P_t = (I - K_t H) P_t^-, shrinking to reflect the information gained from the observation.
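The whole cycle fits in a few lines when the state is a single number. The sketch below assumes a scalar state and scalar observation, so every matrix becomes a plain float. It uses the standard covariance update P_t = (1 - K_t H) P_t^-. The class name `ScalarKalman`, the parameter values, and the price series are illustrative assumptions, not a recommended configuration.

```python
from dataclasses import dataclass


@dataclass
class ScalarKalman:
    f: float  # state transition F
    h: float  # observation mapping H
    q: float  # process noise variance Q
    r: float  # measurement noise variance R
    x: float  # current state estimate
    p: float  # current error variance P

    def step(self, z: float) -> tuple[float, float]:
        """Run one predict-update cycle and return (innovation, gain)."""
        # Prediction: push the estimate and its variance through the dynamics.
        x_prior = self.f * self.x
        p_prior = self.f * self.p * self.f + self.q
        # Update: measure the surprise and weight it by the gain.
        y = z - self.h * x_prior
        s = self.h * p_prior * self.h + self.r   # innovation variance
        k = p_prior * self.h / s
        self.x = x_prior + k * y
        self.p = (1.0 - k * self.h) * p_prior
        return y, k


kf = ScalarKalman(f=1.0, h=1.0, q=0.01, r=1.0, x=100.0, p=1.0)
for price in [100.4, 99.8, 100.9, 101.5]:
    y, k = kf.step(price)
    print(f"z={price:6.1f}  innovation={y:+.3f}  gain={k:.3f}  x={kf.x:.3f}  P={kf.p:.3f}")
```

Running it shows the gain falling over the first few steps as the error variance shrinks from its initial value, so each successive observation moves the estimate a little less.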

This is the click point for many readers: the Kalman filter is not a moving average with fancier algebra. It is an uncertainty-weighted correction rule. It predicts, measures surprise, and then decides how much that surprise should matter.

How to use a Kalman filter to estimate a time-varying hedge ratio (pairs trading example)

| Method | Memory | Reaction to regime change | Produces uncertainty | Best for |
|---|---|---|---|---|
| Rolling OLS | Fixed window | Abrupt change after window | No per-update variance | Stable relationships |
| Kalman filter | Recursive weighting | Soft, model-driven adaptation | Per-step covariance estimate | Time-varying relationships |

The most natural market example is pairs trading, because it shows why a hidden state can be more useful than a fixed parameter. Imagine two related assets, say two Treasury ETFs or two highly linked futures contracts. You believe their prices move together, but not with a constant slope forever. A static regression estimates a single hedge ratio, but markets are rarely that stable. The relationship drifts as duration exposure, liquidity, macro conditions, or market composition change.

A Kalman filter lets you treat the regression coefficients themselves as hidden states. Suppose asset Y_t is modeled as alpha_t + beta_t X_t + noise. Instead of assuming fixed alpha and beta, you let the state be theta_t = [alpha_t, beta_t]. The state transition might say that today's coefficients are close to yesterday's coefficients plus some process noise. The observation equation says today's observed Y_t is generated from today's X_t and the hidden coefficients.

Now consider the narrative of a single update. Yesterday, the filter believed the hedge ratio beta_t was about 0.85, with moderate uncertainty. Today, X_t and Y_t move in a way that would have been more consistent with a ratio of 0.89. The innovation is the gap between the observed Y_t and the value predicted using the old state estimate. If your measurement noise setting R is low and your process noise setting Q allows coefficients to drift, the Kalman gain will be larger, and the hedge ratio estimate will move noticeably toward 0.89. If instead you believe daily deviations are mostly noisy and the structural relationship changes slowly, the gain will be smaller and the estimate will barely move.
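A single update of this kind can be sketched without any libraries, because the observation is scalar and the innovation variance needs no matrix inversion. The sketch assumes random-walk coefficients (F is the identity), an observation row H_t = [1, X_t], and a diagonal Q with the same variance `q` for both coefficients. The function name `hedge_ratio_step`, the values of `q` and `r`, and the synthetic noise-free data are all hypothetical choices for illustration.

```python
def hedge_ratio_step(theta, P, x, y, q=1e-5, r=1e-3):
    """One Kalman update for y = alpha + beta * x + noise with random-walk coefficients.

    theta is [alpha, beta]; P is the 2x2 error covariance as nested lists.
    Returns (new_theta, new_P, innovation, innovation_variance).
    """
    # Predict: F = I, so the mean is unchanged and the covariance grows by Q = q * I.
    P = [[P[0][0] + q, P[0][1]],
         [P[1][0], P[1][1] + q]]
    h = [1.0, x]                                   # observation row H_t
    innov = y - (theta[0] * h[0] + theta[1] * h[1])
    ph = [P[0][0] * h[0] + P[0][1] * h[1],         # P H'
          P[1][0] * h[0] + P[1][1] * h[1]]
    s = h[0] * ph[0] + h[1] * ph[1] + r            # S = H P H' + R (a scalar)
    k = [ph[0] / s, ph[1] / s]                     # K = P H' / S
    new_theta = [theta[0] + k[0] * innov, theta[1] + k[1] * innov]
    # (I - K H) P, using the symmetry of P so that H P equals (P H')'.
    new_P = [[P[i][j] - k[i] * ph[j] for j in range(2)] for i in range(2)]
    return new_theta, new_P, innov, s


# Start from a belief of beta = 0.85 and feed data generated with beta = 0.89.
theta, P = [0.0, 0.85], [[1e-2, 0.0], [0.0, 1e-2]]
for x in [10.0, 10.2, 9.9, 10.4, 10.1, 9.8, 10.3, 10.0]:
    y = 0.89 * x   # noise-free synthetic observation
    theta, P, innov, s = hedge_ratio_step(theta, P, x, y)
    print(f"beta={theta[1]:.4f}  innovation={innov:+.4f}")
```

With these settings the prior variance is large relative to `r`, so the estimate moves most of the way from 0.85 toward 0.89 on the first update. Raising `r` or shrinking the initial covariance makes the same innovation move the estimate far less.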

This is why Kalman-filter pairs trading feels more natural than rolling-window regression. A rolling regression uses a hard window boundary: observations inside the window count fully, observations just outside count not at all. The Kalman filter uses a soft, model-driven weighting scheme. There is no arbitrary cliff at day 60 or day 120. The effective memory emerges from the assumed dynamics and the noise covariances.

In practice, many trading rules are then built from the estimated spread and its forecast error. Some implementations enter trades when the residual is large relative to its predicted variance, for example when the innovation exceeds a threshold based on the forecast standard deviation. The crucial point is not the threshold itself. It is that the filter supplies both a residual and an uncertainty estimate for that residual, which lets the strategy distinguish an ordinary move from an unusually informative one.
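One way such a rule might look is sketched below. The innovation is scaled by its forecast standard deviation, the square root of the innovation variance S = H P^- H' + R, and compared with a threshold. The function names, the threshold of 2, and the sign convention (fade large residuals) are assumptions made for illustration, not a tested strategy.

```python
import math


def innovation_zscore(innovation: float, innovation_var: float) -> float:
    """Scale the innovation by its forecast standard deviation sqrt(S)."""
    return innovation / math.sqrt(innovation_var)


def entry_signal(innovation: float, innovation_var: float, threshold: float = 2.0) -> int:
    """Return -1 (short the spread), +1 (long the spread), or 0 (no trade)."""
    z = innovation_zscore(innovation, innovation_var)
    if z > threshold:
        return -1   # observed Y is high relative to the model's forecast
    if z < -threshold:
        return +1   # observed Y is low relative to the model's forecast
    return 0


print(entry_signal(innovation=0.5, innovation_var=0.04))  # z-score of 2.5
print(entry_signal(innovation=0.5, innovation_var=1.00))  # z-score of 0.5
```

The same residual of 0.5 triggers a signal in the first call and not in the second. The only difference is the forecast variance the filter attaches to it.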

What does the Kalman gain tell you about trading decisions?

If there is one object in the filter that deserves intuition, it is the Kalman gain. Traders often describe the method as "adaptive smoothing," but that phrase hides the mechanism. The gain is the mechanism.

A high gain means the filter is saying: trust the new data. A low gain means: trust the model and prior estimate more than this observation. This is why the filter can look trend-following in some regimes and mean-reverting in others. The algorithm itself is not choosing a trading style. It is expressing a belief about signal persistence and observation quality.

This also clarifies a common misunderstanding. People sometimes think the Kalman filter is mainly about forecasting. Strictly speaking, its first job is state estimation. Forecasting comes from estimated state plus transition dynamics. If you estimate the current hidden state badly, your forecast will be bad even with a perfect transition equation. So the filter is most valuable when there is a latent structure worth estimating now: fair value, beta, drift, latent factor, hidden spread, local trend.

In Kalman's original paper, the innovation process in the optimal linear filter is orthogonal, or in the common shorthand, "white." Intuitively, once the filter has used the available information efficiently, the remaining surprise should not have predictable structure left in it. In trading terms, that means persistent structure in residuals can be a clue that the model is missing something: perhaps the state dimension is too small, the dynamics are wrong, or the noise assumptions are unrealistic.
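A crude diagnostic along these lines is to compute the lag-1 autocorrelation of the innovations collected from a filter run. The helper below is a minimal sketch with a hypothetical name (`lag1_autocorr`). The two input series are deliberately extreme synthetic cases, not filter output.

```python
def lag1_autocorr(residuals: list[float]) -> float:
    """Sample lag-1 autocorrelation of a residual series."""
    n = len(residuals)
    mean = sum(residuals) / n
    dev = [r - mean for r in residuals]
    denom = sum(d * d for d in dev)
    num = sum(dev[i] * dev[i - 1] for i in range(1, n))
    return num / denom


alternating = [1.0, -1.0] * 50               # strongly negative autocorrelation
trending = [float(i) for i in range(100)]    # strongly positive autocorrelation
print(f"alternating: {lag1_autocorr(alternating):+.2f}")
print(f"trending:    {lag1_autocorr(trending):+.2f}")
```

As a rough rule of thumb, values well outside about ±2/sqrt(n) for a series of length n suggest leftover structure. A single-lag check is only a first look and does not replace a fuller residual analysis.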

When is a Kalman filter better than rolling-window or exponential smoothing?

The practical problem the Kalman filter solves is not just denoising. It solves online estimation under changing conditions. That is different from fitting a model repeatedly on a sliding window.

Rolling methods throw away old data abruptly. Expanding-window methods keep too much of it forever. Exponential smoothing uses gradual discounting, which is closer in spirit, but it usually lacks an explicit hidden-state model and uncertainty propagation. The Kalman filter keeps a compressed summary of the past in the current state estimate and its covariance. That is why it can adapt while remaining coherent.

This matters in markets because many parameters of interest are not static. Betas drift. Cointegration relationships weaken and strengthen. Short-term trend persistence comes and goes. Efficient price is obscured by noise that itself changes with liquidity. A filter is useful precisely when the thing being estimated should move, but not too erratically, and when observations are informative, but not fully trustworthy.

Another advantage is that the same framework can be used for filtering, prediction, and smoothing. Filtering estimates the current state using information up to now. Prediction estimates a future state. Smoothing, when done offline, uses later data to improve estimates of past states. In research, smoothing can help you understand what the model thinks the latent state really was. In live trading, only filtering and prediction are available in real time.
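Prediction is the easiest of the three to sketch: it is the predict step applied repeatedly with no update in between. The example assumes a scalar state with a mean-reverting transition (f = 0.9). The function name `predict_ahead` and all numbers are illustrative. Smoothing needs a backward pass and is not shown.

```python
def predict_ahead(x: float, p: float, f: float, q: float,
                  steps: int) -> list[tuple[float, float]]:
    """Propagate a scalar estimate forward with no new observations.

    Returns (predicted_state, predicted_variance) for horizons 1..steps.
    """
    out = []
    for _ in range(steps):
        x = f * x              # mean follows the transition dynamics
        p = f * p * f + q      # variance accumulates process noise
        out.append((x, p))
    return out


forecast = predict_ahead(x=1.0, p=0.1, f=0.9, q=0.05, steps=5)
for horizon, (x_pred, p_pred) in enumerate(forecast, start=1):
    print(f"h={horizon}  x={x_pred:.4f}  var={p_pred:.4f}")
```

The forecast mean decays toward zero while the variance rises with horizon. For |f| < 1 it levels off near q / (1 - f^2), which is the model's way of saying that distant forecasts carry little information beyond the long-run distribution.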

What assumptions does the Kalman filter require and when do they break?

The classical Kalman filter is exact for a linear state-space model with known noise covariances under the standard Gaussian assumptions. Those conditions are not cosmetic. They are what make the method optimal in the usual minimum-mean-squared-error linear sense.

Markets, of course, are not clean linear-Gaussian systems. Returns can be heavy-tailed. Volatility clusters. Microstructure effects are state-dependent. Regime shifts can be abrupt rather than gradual. Covariances are not known and often not stable. So when a trader applies a Kalman filter, the right question is not "is this perfectly true?" but "is this a useful approximation to the hidden process I care about?"

Some failures are especially common. If Q is set too low, the filter becomes overconfident and slow; it interprets genuine structural changes as temporary noise. If Q is too high, the estimate chases noise. If R is underestimated, every observation gets too much authority. If R is overestimated, the model becomes stale. In the worst case, severe model mismatch can cause filter divergence, where the estimated uncertainty and the actual estimation error part ways badly.
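The "Q too low" failure is easy to reproduce on synthetic data. The sketch below runs a scalar local-level filter (F = H = 1, an assumption for simplicity) over a noise-free series that jumps from 0 to 1 and compares two process-noise settings. The function name `run_filter` and the parameter values are hypothetical.

```python
def run_filter(zs, q, r, x0=0.0, p0=1.0):
    """Scalar local-level filter (F = H = 1); returns the filtered estimates."""
    x, p, out = x0, p0, []
    for z in zs:
        p_prior = p + q
        k = p_prior / (p_prior + r)
        x = x + k * (z - x)
        p = (1.0 - k) * p_prior
        out.append(x)
    return out


# Noise-free synthetic series with a level shift from 0 to 1 at step 50.
zs = [0.0] * 50 + [1.0] * 50
slow = run_filter(zs, q=1e-5, r=1.0)   # Q far too small for a series that can jump
fast = run_filter(zs, q=1e-1, r=1.0)

for name, est in [("Q=1e-5", slow), ("Q=1e-1", fast)]:
    print(f"{name}: estimate 10 steps after the shift = {est[59]:.3f}")
```

Ten steps after the shift, the low-Q filter has covered well under half the distance to the new level, while the higher-Q filter is most of the way there. On noisy data the trade-off runs the other way: the higher-Q filter would also pass more of the noise through to the estimate.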

This is why identifying or tuning Q and R is often the hardest part. Practical implementations sometimes estimate them offline, sometimes tune them by backtest performance, and sometimes use adaptive schemes or expectation-maximization procedures. But there is no escape from the modeling choice. The filter does not discover the right worldview automatically just because the recursion is elegant.

Which Kalman filter variants handle nonlinear or non-Gaussian market dynamics?

| Variant | Nonlinearity handling | Approximation quality | Compute cost | Best when |
|---|---|---|---|---|
| Linear Kalman | Linear models only | Exact under Gaussian | Low | Linear dynamics |
| Extended KF (EKF) | First-order linearization | Moderate, local | Moderate | Mild nonlinearities |
| Unscented KF (UKF) | Sigma-point transform | Better than EKF | Moderate-high | Moderate nonlinearities |
| Particle filter | Sampling / nonparametric | Can be accurate | High | Strong nonlinearities or heavy tails |

Once the observation or transition relationship is nonlinear, the standard linear Kalman filter no longer applies exactly. The most common extension is the Extended Kalman Filter or EKF, which linearizes the nonlinear functions around the current estimate. This can work, but it is an approximation. The reason is fundamental: a nonlinear transformation of a Gaussian variable is generally not Gaussian. So the tidy exactness of the linear-Gaussian case disappears.
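For a scalar state, the EKF measurement update differs from the linear one in just two places. The innovation uses the nonlinear observation function itself, and the role of H is played by that function's derivative evaluated at the prior estimate. The sketch below assumes a quadratic observation function purely for illustration. The function name `ekf_update` and all numbers are hypothetical.

```python
def ekf_update(x_prior, p_prior, z, h, h_jac, r):
    """EKF measurement update for a scalar state and a scalar observation.

    h is the nonlinear observation function and h_jac is its derivative.
    Returns (updated_state, updated_variance).
    """
    H = h_jac(x_prior)           # linearize around the prior estimate
    y = z - h(x_prior)           # innovation uses the nonlinear function itself
    s = H * p_prior * H + r      # innovation variance under the linearization
    k = p_prior * H / s
    return x_prior + k * y, (1.0 - k * H) * p_prior


# The observation is the square of the state; 4.84 is what a state of 2.2 would produce.
x_post, p_post = ekf_update(
    x_prior=2.0, p_prior=0.5, z=4.84,
    h=lambda x: x * x, h_jac=lambda x: 2.0 * x, r=0.1,
)
print(f"updated state = {x_post:.4f}, updated variance = {p_post:.4f}")
```

The updated state lands close to 2.2 but not exactly on it, and the reported variance is computed as if the linearization were exact. Both effects are mild here and grow as the curvature of the observation function increases over the range of the prior uncertainty.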

For trading, this matters when the latent process or the measurement equation is materially nonlinear. In such settings, practitioners may use EKF, Unscented Kalman Filter, particle filters, or more modern state-space approaches. The point is not that the classical Kalman filter becomes useless, but that its assumptions define the regime where its guarantees hold.

There are also robust and numerically stabilized variants. Some are designed for better numerical conditioning, such as square-root filters and covariance-factorization approaches. Others address uncertainty in the covariance matrices themselves. That line of work exists for a reason: in real systems, including markets, misspecified noise is often the central weakness.

How do you implement a Kalman filter in a quant trading workflow?

In production, a Kalman filter is usually less mysterious than it sounds. You specify the state dimension, the transition dynamics, the observation mapping, an initial state estimate, an initial covariance, and the noise covariances. Then you run the recursion as new data arrive.

Python libraries such as statsmodels and pykalman expose this state-space setup directly. They also make clear what practitioners eventually learn: beyond the clean textbook equations, implementation involves choices about initialization, numerical stability, missing data, and whether you need online filtering or offline smoothing. Those are not secondary details. For long time series, high-dimensional states, or ill-conditioned covariance matrices, numerical method choices can materially change behavior.
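Missing data is the simplest of these choices to illustrate. A common treatment is to run the predict step on every tick and skip the update whenever no observation is available. The sketch below assumes a scalar local-level model and marks gaps with `None`. It is a hand-rolled illustration with a hypothetical function name, not the API of either library.

```python
from typing import Optional


def filter_with_gaps(zs: list[Optional[float]], q: float, r: float,
                     x0: float = 0.0, p0: float = 1.0) -> list[tuple[float, float]]:
    """Scalar local-level filter (F = H = 1) that tolerates missing observations."""
    x, p, out = x0, p0, []
    for z in zs:
        p = p + q                    # the predict step always runs
        if z is not None:            # update only when a measurement exists
            k = p / (p + r)
            x = x + k * (z - x)
            p = (1.0 - k) * p
        out.append((x, p))
    return out


for x_est, p_est in filter_with_gaps([1.0, 1.1, None, None, 1.2], q=0.05, r=0.5):
    print(f"x={x_est:.4f}  P={p_est:.4f}")
```

Across the gap the estimate is held constant while its variance keeps growing, so the first observation after the gap receives a larger gain than it otherwise would.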

In trading research, it is common to use Kalman filtering for dynamic linear regression, trend extraction, spread estimation, or latent factor tracking. But the danger is to mistake a flexible estimation tool for an alpha source by itself. The filter does not create predictability. It estimates a hidden variable under a model. If the model captures a real economic structure, the filter is helpful. If the model is just a clever way to smooth noise in-sample, the backtest will look better than live trading.

This is especially visible in pairs trading examples. A Kalman-estimated hedge ratio can be more adaptive than rolling OLS, but the strategy still depends on a real mean-reverting relationship, realistic costs, and robust thresholding. Gross backtest results without slippage or transaction costs often look much stronger than reality. The filter helps estimate the spread; it does not guarantee the spread is tradable.

How is the Kalman filter an instance of Bayesian sequential updating?

A useful way to frame the Kalman filter for quant readers is as a concrete instance of Bayesian updating in a linear-Gaussian world. Before seeing the new observation, you have a prior belief about the current state. The new measurement arrives. You combine prior and evidence according to their uncertainties. The posterior becomes the new state estimate. Then the process repeats.

That framing is not just philosophical. It explains why uncertainty is carried forward as carefully as the mean. A state estimate without uncertainty is incomplete because the next update rule depends on how uncertain the current estimate is. In that sense, the filter is not merely predicting a path. It is updating a distribution over the state, though in the linear-Gaussian case that distribution is completely summarized by mean and covariance.
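The equivalence can be checked numerically in the scalar case. The sketch below assumes a directly observed state (H = 1) and compares the gain-based Kalman update with the textbook Gaussian conjugate update, which combines prior and measurement by precision (inverse variance). The function names and numbers are illustrative.

```python
def kalman_update(prior_mean, prior_var, z, r):
    """Gain-form update for a directly observed scalar state (H = 1)."""
    k = prior_var / (prior_var + r)
    return prior_mean + k * (z - prior_mean), (1.0 - k) * prior_var


def bayes_update(prior_mean, prior_var, z, r):
    """Gaussian conjugate update: weight prior and measurement by precision."""
    post_precision = 1.0 / prior_var + 1.0 / r
    post_mean = (prior_mean / prior_var + z / r) / post_precision
    return post_mean, 1.0 / post_precision


print(kalman_update(0.85, 0.04, 0.89, 0.01))
print(bayes_update(0.85, 0.04, 0.89, 0.01))
```

Both calls give the same posterior mean and variance up to floating-point rounding. The Kalman gain is the weight that precision-weighted averaging assigns to the new measurement.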

This is also why the filter sits naturally beside broader Bayesian methods in trading. If you already think in terms of latent states, priors, posterior beliefs, and model uncertainty, the Kalman filter is the simplest rigorous engine for sequential inference when the structure is approximately linear and Gaussian.

Conclusion

The Kalman filter is best understood as a disciplined answer to a simple problem: how do you estimate something important that you cannot observe directly, using measurements you do not fully trust? Its answer is to model a hidden state, propagate that state forward, measure surprise, and weight that surprise by uncertainty.

In trading, that makes it valuable wherever the quantity of interest is latent and time-varying: trends, fair values, hedge ratios, spreads, and factors. Its strength is not magic prediction. It is coherent online estimation. And its weakness is equally clear: the result is only as good as the state-space story you tell about the market.

If you remember one thing tomorrow, remember this: a Kalman filter is an uncertainty-aware update rule for hidden state estimation. Everything else (the matrices, gains, regressions, and trading signals) is an implementation of that idea.

Frequently Asked Questions

Q and R encode your belief about how much the hidden state can move (Q) versus how noisy each observation is (R); a larger Q relative to R makes the filter more adaptive (it chases recent data), while a larger R relative to Q makes it smoother and slower to react. The article emphasizes this as the formal expression of the trade-off between "the world changed" and "the measurement was noisy."

The Kalman gain is the computation that weights the prediction versus the new observation: high gain means trust the data, low gain means trust the model/prior; the article frames it as the real decision rule determining whether the filter behaves more like trend-following or mean-reverting in a given step. The gain is computed from prior covariance and R, so it directly reflects uncertainty in both the state and the measurement.

Unlike rolling-window regression which abruptly includes or excludes observations, the Kalman filter maintains a compact prior (mean and covariance) and applies a soft, model-driven weighting to new data through the gain; exponential smoothing is similar in spirit but typically lacks the explicit latent-state model and uncertainty propagation the filter provides. The article uses pairs trading to show how the filter's soft weighting avoids an arbitrary window cliff and supplies a residual plus its forecast variance.

Its classical optimality holds only for linear state-space models with known Gaussian noise: in that case the filter is the minimum-mean-squared-error linear estimator. The article and cited foundational sources stress markets often violate these assumptions, so the Kalman filter is best seen as a useful approximation rather than a guaranteed optimal tool in real market data.

Choosing Q and R is often the hardest practical step: practitioners estimate them offline, tune them against backtests, or use adaptive schemes like expectation–maximization, but there is no universally principled procedure that works for all market settings and model mismatch can materially affect responsiveness and stability. The article warns about mis-specified Q/R causing sluggishness or noise-chasing, and the evidence notes this remains an unresolved practical challenge.

When either the transition or observation relationship is materially nonlinear, the linear Kalman filter is only approximate; common alternatives are the Extended Kalman Filter (EKF), which linearizes the model, the Unscented Kalman Filter that better handles nonlinear transforms, or particle filters for strongly non-Gaussian or highly nonlinear problems. The article and source notes caution that EKF is an approximation and can fail when nonlinearities are strong, motivating these other variants.

Common failure modes are model mismatch and mis-specified noise: if Q is set too low the filter becomes overconfident and slow to adapt; if Q is too high it chases noise; underestimating R gives too much authority to observations and overreacts. The article also warns that severe mismatch can lead to filter divergence and that market features like heavy tails, clustered volatility, and abrupt regime shifts violate core assumptions.

The Kalman filter is an online state-estimation engine, not a source of alpha by itself: it produces a filtered latent variable and an uncertainty estimate, but profitable trading still requires that the modeled latent structure (e.g., a genuinely mean-reverting spread) exists, realistic transaction-cost assumptions, and robust decision rules. The article and practical backtest evidence caution that nice in-sample results can reflect overfitting or ignored costs rather than a tradable edge.
