What Is Exponential Smoothing?

Learn what exponential smoothing is, how it works, how ETS and EMA relate, and why traders use it for trend, forecasting, and volatility updates.

Introduction

Exponential smoothing is a forecasting and signal-extraction method that updates an estimate by giving more weight to recent observations and progressively less weight to older ones. That sounds simple enough to be uninteresting, yet the idea sits underneath a surprising amount of practical trading work: price filters, trend estimates, volatility updates, and many real-time models that must react quickly without becoming pure noise. The reason it matters is not that it is fashionable or mathematically ornate. It matters because markets force a hard compromise between memory and adaptation, and exponential smoothing is one of the cleanest ways to manage that compromise.

The puzzle is this: if markets change, why average the past at all? But if markets are noisy, why trust the latest tick or bar? A useful estimator must do both. It must remember enough history to suppress noise, while forgetting enough history to notice change. Exponential smoothing solves that by making the influence of the past decay geometrically. Yesterday matters more than last month, and last month matters more than last year, but nothing is discarded abruptly.

In trading, that simple property has two distinct lives. The first is the familiar indicator view: the exponential moving average, or EMA, used as a smoothed price series or as a building block in crossover rules. The second is the forecasting view: a family of models that estimate latent components such as current level, trend, and seasonality, often written in the ETS form for Error, Trend, Seasonal. The first use is common in charting and execution logic. The second is more statistical and matters when you want not just a point estimate, but also a forecast distribution and a disciplined way to choose among variants.

The central idea is easy to state: do not re-estimate everything from scratch each time new data arrives. Instead, carry forward a state (an estimate of what matters now) and update it with the latest forecast error. Once that clicks, the many versions of exponential smoothing stop looking like a bag of formulas and start looking like one repeated mechanism.

How does exponential smoothing update an estimate using forecast errors?

Suppose you are watching daily closes and want an estimate of the market's current level. If you use a simple average of the last n observations, you face a blunt choice. Inside the window, all observations get equal weight. Outside the window, they get zero. That creates an artificial cliff. A data point just inside the window counts fully; the next one just outside counts not at all. Markets do not change in that discontinuous way, so the estimator often should not either.

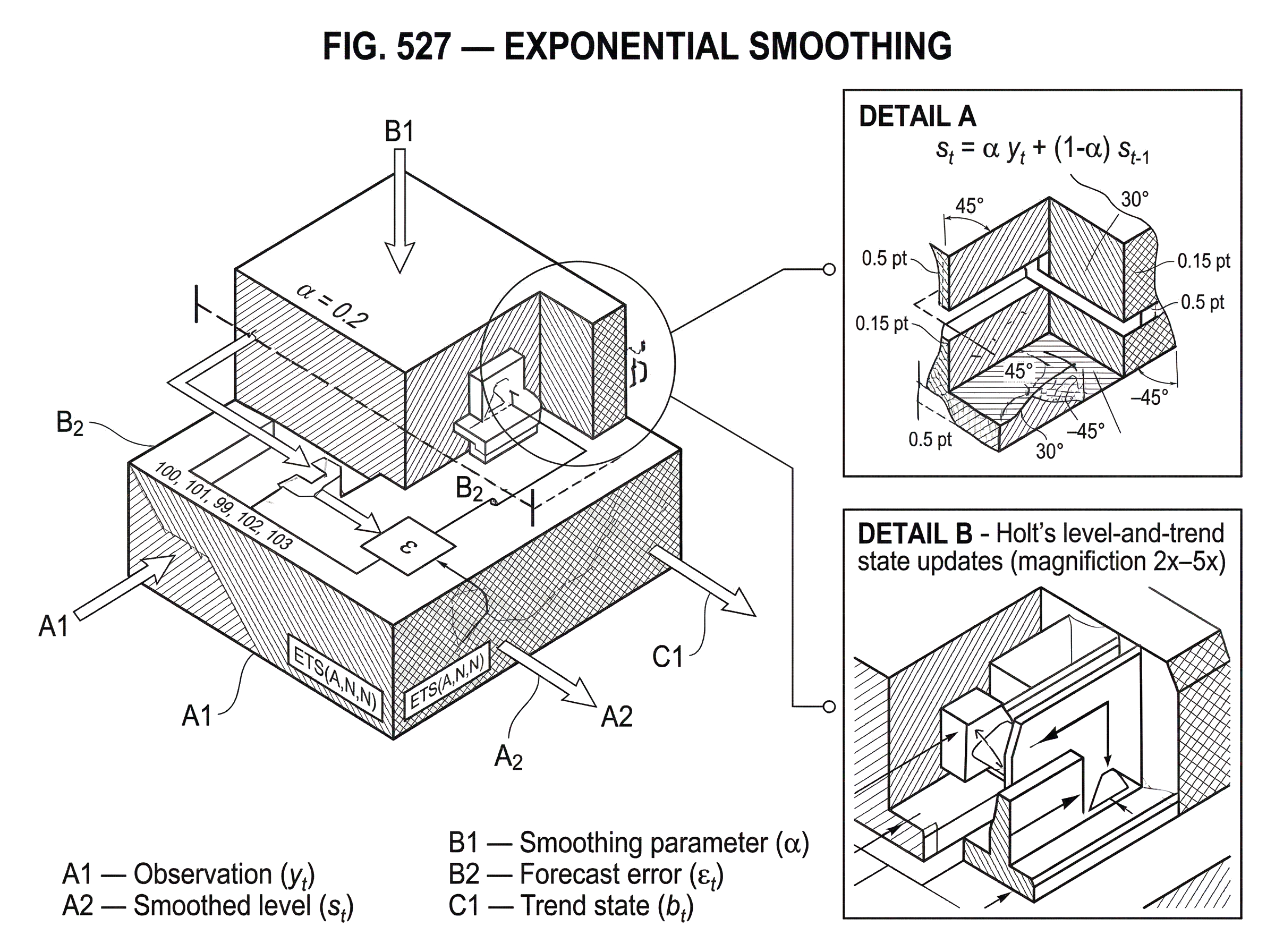

Exponential smoothing replaces the fixed window with continuous forgetting. In its simplest form, the smoothed value at time t, call it s_t, is updated as s_t = α y_t + (1-α) s_{t-1}, where y_t is the latest observation and α is the smoothing parameter with 0 <= α <= 1. If α is large, the estimate moves strongly toward the newest observation. If α is small, the estimate changes slowly and carries more memory from the past.

That equation is often presented as a recipe. More useful is to read it as a mechanism. The old estimate s_{t-1} is your prior belief about the current level. The new observation y_t tells you how wrong that belief was. The update takes a fraction α of that surprise and folds it into the estimate. Rearranged, the same formula is s_t = s_{t-1} + α (y_t - s_{t-1}). The term y_t - s_{t-1} is the forecast error. So exponential smoothing says: new estimate = old estimate + learning rate × forecast error.

That is why the method is so widely reused. It is not merely an average. It is a recursive error-correction rule. The same logic appears in signal processing, control systems, online estimation, and state-space forecasting. In trading, it is attractive because it is causal, cheap, and stable: you can update it one bar at a time without storing long histories or refitting a model from scratch.

If you expand the recursion backward, you can see where the name comes from. s_t is a weighted sum of current and past observations, with weights that decay exponentially as you go further back in time. So the method remembers the entire past, but it does so in a compressed way. You do not keep every old observation as a separate influence. You keep one state variable whose content already summarizes the past with the chosen decay.
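
Unrolling the recursion makes the geometric weights explicit. The following is a short derivation from the update s_t = α y_t + (1-α) s_{t-1}; the symbol s_0 for the initial state is introduced here for illustration only.

```latex
\begin{aligned}
s_t &= \alpha y_t + (1-\alpha)\, s_{t-1} \\
    &= \alpha y_t + \alpha(1-\alpha)\, y_{t-1} + (1-\alpha)^2 s_{t-2} \\
    &= \alpha \sum_{k=0}^{t-1} (1-\alpha)^k\, y_{t-k} + (1-\alpha)^t s_0 .
\end{aligned}
```

The observation k steps back carries weight α(1-α)^k, and the initial state carries weight (1-α)^t. Together the weights sum to exactly 1, and the last term shows how the influence of the starting value fades geometrically.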

How should I choose the smoothing parameter (α) and what does it control?

| α choice | Memory | Responsiveness | Noise passed | Best horizon |
|---|---|---|---|---|
| High α | Short memory | Fast | More noise | Intraday / minutes |
| Medium α | Moderate memory | Balanced | Moderate noise | Short–medium term |
| Low α | Long memory | Slow | Less noise | Weeks–months |

Almost everything about exponential smoothing comes down to the meaning of α. This is the parameter that controls the trade-off between responsiveness and stability. A higher α means shorter memory: the estimator follows new moves more closely, but it also lets more noise through. A lower α means longer memory: the estimator is smoother, but slower to react when the underlying process shifts.

It helps to think in terms of half-life or time constant rather than raw α. The question a trader usually cares about is not “Should α be 0.07 or 0.11?” but “How quickly should information fade?” If you are estimating a medium-term trend on daily data, you may want shocks to matter for weeks. If you are tracking intraday microstructure noise, you may want memory measured in minutes or even seconds. The parameter is a choice about how quickly the world you are modeling is allowed to change.
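
One way to make the half-life framing concrete is to convert between α and the number of bars it takes for an observation's weight to halve. The sketch below assumes the weight on an observation k bars back is proportional to (1-α)^k; the function names are hypothetical, and the span rule α = 2/(N+1) is the common charting convention for an "N-period EMA" rather than something this article prescribes.

```python
import math


def alpha_from_half_life(half_life: float) -> float:
    """Alpha such that an observation's weight halves every `half_life` bars."""
    if half_life <= 0:
        raise ValueError("half_life must be positive")
    return 1.0 - 0.5 ** (1.0 / half_life)


def half_life_from_alpha(alpha: float) -> float:
    """Number of bars for the weight (1 - alpha)**k to fall to one half."""
    if not 0.0 < alpha < 1.0:
        raise ValueError("alpha must be strictly between 0 and 1")
    return math.log(0.5) / math.log(1.0 - alpha)


def alpha_from_span(span: float) -> float:
    """Charting convention for an N-period EMA: alpha = 2 / (N + 1)."""
    if span < 1:
        raise ValueError("span must be at least 1")
    return 2.0 / (span + 1.0)


# Example: a 10-bar half-life on daily data.
alpha_10 = alpha_from_half_life(10)
```

Choosing the half-life first and deriving α from it keeps the decision in the units of the data (bars, days, minutes) instead of an abstract number between 0 and 1.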

This is also where many misunderstandings begin. People often speak as if exponential smoothing “predicts the future” by itself. In the simplest case, it does something more modest and more useful: it estimates the present underlying level more robustly than the raw series does. Forecasts then come from projecting that estimated level forward. For simple exponential smoothing with no trend or seasonality, the multi-step forecast is flat because the model says the best description of the future is the current estimated level.

That sounds naive until you notice what problem the method is solving. If the underlying process is approximately level but noisy, a flat forecast from a well-estimated level is often exactly the right baseline. Trouble begins when users force the same method onto data with persistent trend, structural breaks, or volatility clustering and assume the parameter alone will fix the mismatch. It will not. The right question is always: what latent structure am I trying to estimate?

Example: applying exponential smoothing to daily closes in a trading context

Imagine a market whose daily closes over several sessions are 100, 101, 99, 102, 103. The raw series is moving, but not every move carries equal information. Suppose your current smoothed level after the first close is initialized at 100, and you choose α = 0.2. When the next close arrives at 101, your estimate does not jump all the way to 101; it moves partway, to 100.2. When the following close is 99, the estimate moves down, but only partially, because one down day is not enough evidence that the true level has fully shifted. With each new bar, the estimate absorbs the latest surprise in proportion to α.
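
The arithmetic for this example can be reproduced with a few lines. This is a minimal sketch using the error-correction form of the update; the function name is hypothetical, and seeding the level with the first close follows the setup described above.

```python
def exponential_smoothing(observations, alpha, initial_level):
    """Return the smoothed level after each observation.

    Uses the error-correction form: level += alpha * (y - level).
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    level = initial_level
    levels = []
    for y in observations:
        error = y - level              # one-step forecast error
        level = level + alpha * error  # absorb a fraction of the surprise
        levels.append(level)
    return levels


closes = [100, 101, 99, 102, 103]
# The first close initializes the level; the remaining closes update it.
levels = exponential_smoothing(closes[1:], alpha=0.2, initial_level=closes[0])
rounded = [round(v, 4) for v in levels]
# rounded is approximately [100.2, 99.96, 100.368, 100.8944]
```

After four updates the level sits near 100.89, well below the last close of 103, which is the lag the next paragraphs discuss.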

What is happening mechanically is more important than the arithmetic. Each close is treated as a noisy observation of an unobserved current level. The filter assumes the latest error contains information, but not perfect information. So it updates cautiously. If you feed this process a choppy sideways market, the smoothed series will be more stable than price. If you feed it the early stages of a trend, it will lag, not because the method is broken but because every smoother pays for noise reduction with delay.

That lag is not an implementation detail. It is the price of smoothing. In trading, this matters because many strategies implicitly confuse a smoother with a timing oracle. A crossover system, for example, often compares a fast and slow exponentially smoothed estimate. The resulting signal is not discovering a hidden law of markets. It is measuring whether short-memory price information has moved far enough away from long-memory price information to justify a trend interpretation. That can work in persistent trends, and fail badly in mean-reverting chop, because the underlying signal structure has changed.
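
The contrast between persistent trends and chop can be illustrated with a toy crossover. The sketch below is an assumption-laden illustration, not a trading rule: the function names, the α values of 0.5 and 0.1, and the two synthetic price paths are all made up for the example, and each EMA is seeded with the first price.

```python
def ema_series(prices, alpha):
    """EMA seeded with the first price (one of several possible warm-up choices)."""
    level = prices[0]
    out = [level]
    for p in prices[1:]:
        level += alpha * (p - level)
        out.append(level)
    return out


def crossover_signal(prices, fast_alpha, slow_alpha):
    """+1 when the fast EMA is above the slow EMA, -1 when below, 0 when equal."""
    if not fast_alpha > slow_alpha:
        raise ValueError("fast_alpha should exceed slow_alpha")
    fast = ema_series(prices, fast_alpha)
    slow = ema_series(prices, slow_alpha)
    return [(f > s) - (f < s) for f, s in zip(fast, slow)]


def count_flips(signal):
    """Number of bars on which the signal differs from the previous bar."""
    return sum(1 for prev, cur in zip(signal, signal[1:]) if prev != cur)


trend = [100 + i for i in range(20)]                          # steady uptrend
chop = [100] + [101 if i % 2 else 99 for i in range(1, 20)]   # alternating chop

trend_flips = count_flips(crossover_signal(trend, 0.5, 0.1))
chop_flips = count_flips(crossover_signal(chop, 0.5, 0.1))
```

On the trending path the signal settles on one side and stays there; on the alternating path the same rule changes sign on nearly every bar, which is the whipsaw behavior described above.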

When should I extend simple smoothing to Holt's level-and-trend method?

Simple exponential smoothing assumes that the only latent component worth tracking is the current level. But many financial and economic series drift. If the process has a genuine trend, a flat forecast from a level-only model will systematically lag. This is why Holt's method extends exponential smoothing by maintaining not just a level estimate but also a trend estimate.

In the additive-trend form, you can think of the forecast as level + trend. The current observation is compared with what the previous level and trend would have predicted. The resulting forecast error is then used to update both states. The level is adjusted, and the trend is adjusted too, each with its own smoothing parameter. In the ETS innovations state-space formulation, Holt's linear method with additive errors can be written using a measurement equation y_t = l_{t-1} + b_{t-1} + ε_t and state equations l_t = l_{t-1} + b_{t-1} + α ε_t and b_t = b_{t-1} + β ε_t, where l_t is level, b_t is trend, and ε_t is the innovation or one-step-ahead error.

The idea is the same as before, but now there are two states being corrected by surprise instead of one. This is the right way to read the formulas. The model predicts the next observation from its current internal state. Whatever it gets wrong becomes the signal for updating that internal state. If the series is rising faster than expected, the error pushes both the level and the trend upward. If it slows down, the error pushes them down.
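
The two-state update can be written directly from the measurement and state equations given earlier. This is a minimal sketch with hypothetical function names; the initial level and trend are supplied by the caller, which sidesteps the initialization question rather than solving it.

```python
def holt_additive(observations, alpha, beta, initial_level, initial_trend):
    """Holt's linear method in the additive-error, error-correction form.

    Measurement: y_t = l_{t-1} + b_{t-1} + e_t
    States:      l_t = l_{t-1} + b_{t-1} + alpha * e_t
                 b_t = b_{t-1} + beta * e_t
    Returns the final level, final trend, and the list of one-step errors.
    """
    level, trend = initial_level, initial_trend
    errors = []
    for y in observations:
        forecast = level + trend          # one-step-ahead prediction
        error = y - forecast              # innovation
        level = forecast + alpha * error  # correct the level
        trend = trend + beta * error      # correct the slope
        errors.append(error)
    return level, trend, errors


def holt_forecast(level, trend, horizon):
    """Undamped h-step-ahead point forecast: level + h * trend."""
    return level + horizon * trend


# A series rising by 2 per bar, started with no trend estimate at all.
rising = [102, 104, 106, 108, 110]
final_level, final_trend, one_step_errors = holt_additive(
    rising, alpha=0.5, beta=0.1, initial_level=100, initial_trend=0.0
)
```

Because the model starts with zero slope, the first errors are positive and push both states upward. If the initial trend already matches the data, every error is zero and the states simply roll forward.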

In trading, this starts to resemble familiar “fast versus slow” thinking, but with a cleaner interpretation. You are not just smoothing price twice. You are estimating the current level and the current slope. That can be useful when you want a forecast rather than merely a filtered chart line. It also helps explain why trend models can become unstable if used carelessly: a model that extrapolates slope indefinitely can produce unrealistically aggressive long-horizon forecasts.

That is why damped trends matter. A damped trend says, in effect, that current slope information is useful, but should fade as the forecast horizon extends. In software implementations such as ets() in R or Holt-Winters classes in statsmodels, damped trends are standard options because they often produce more reasonable forecasts than undamped linear extrapolation.
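
A small sketch shows what damping does to the forecast path. It assumes the standard damped additive-trend forecast, level + (φ + φ² + … + φ^h) × trend, where the damping parameter φ (written `phi` below) is a symbol introduced here and not defined in the text above; the function name is hypothetical.

```python
def damped_trend_forecast(level, trend, phi, horizon):
    """Point forecast: level + (phi + phi**2 + ... + phi**h) * trend."""
    if not 0.0 < phi <= 1.0:
        raise ValueError("phi must be in (0, 1]")
    if horizon < 1:
        raise ValueError("horizon must be at least 1")
    damp_sum = sum(phi ** k for k in range(1, horizon + 1))
    return level + damp_sum * trend


# With phi = 1 the forecast is the usual straight line.
linear_10 = damped_trend_forecast(100.0, 1.0, phi=1.0, horizon=10)

# With phi < 1 the forecast flattens toward level + phi / (1 - phi) * trend.
damped_10 = damped_trend_forecast(100.0, 1.0, phi=0.9, horizon=10)
damped_far = damped_trend_forecast(100.0, 1.0, phi=0.9, horizon=1000)
```

With a level of 100, a slope of 1 per bar, and φ = 0.9, the long-horizon forecast approaches 109 instead of growing without bound.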

What is the ETS formulation of exponential smoothing and why use it?

Many traders know exponential smoothing only through indicator formulas. The statistical view is more powerful. In the ETS framework, exponential smoothing methods are written as innovations state-space models. Each model has a measurement equation, which links the observation to the latent states, and state equations, which describe how those latent states evolve.

This reframing solves an important limitation of the algorithm-only view. A plain smoothing recursion gives you point forecasts, but not a full probabilistic model of uncertainty. The state-space version generates the same point forecasts for a given specification and smoothing parameters, while also supporting likelihood-based estimation, prediction intervals, and model comparison. That matters whenever you want to ask not just “What is my next estimate?” but also “How uncertain is it?” and “Which structural variant best fits the data?”

The ETS label summarizes the model components as ETS(Error, Trend, Seasonal). Error can be additive A or multiplicative M. Trend can be none N, additive A, or damped additive A_d in the standard exposition. Seasonal components, where relevant, can also be additive or multiplicative. For a non-seasonal trading series, the most relevant forms are often simple level-only models like ETS(A,N,N) and level-plus-trend models like ETS(A,A,N).

For example, simple exponential smoothing with additive errors, ETS(A,N,N), can be written as y_t = l_{t-1} + ε_t and l_t = l_{t-1} + α ε_t, with ε_t assumed to be normally and independently distributed with mean 0 and variance σ^2. Notice how directly this recovers the earlier recursion. The forecast is the previous level estimate; the new level is the old level plus a fraction of the forecast error.
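
To see what the state-space wrapper adds, the sketch below runs ETS(A,N,N) through a short series and builds an approximate prediction interval. It assumes Gaussian innovations and uses the standard h-step forecast variance for this model, σ²(1 + α²(h − 1)). The function names are hypothetical, and estimating σ as the root mean square of the residuals is a simplification chosen for brevity.

```python
import math


def ets_ann_fit_path(observations, alpha, initial_level):
    """Run ETS(A,N,N) through the data; return the final level and residuals."""
    level = initial_level
    residuals = []
    for y in observations:
        eps = y - level              # y_t = l_{t-1} + eps_t
        level = level + alpha * eps  # l_t = l_{t-1} + alpha * eps_t
        residuals.append(eps)
    return level, residuals


def ets_ann_interval(level, alpha, sigma, horizon, z=1.96):
    """Approximate 95% interval for the h-step forecast under Gaussian errors.

    Forecast variance: sigma**2 * (1 + alpha**2 * (horizon - 1)).
    """
    if horizon < 1:
        raise ValueError("horizon must be at least 1")
    sd = sigma * math.sqrt(1.0 + alpha ** 2 * (horizon - 1))
    return level - z * sd, level + z * sd


closes = [100, 101, 99, 102, 103]
level, residuals = ets_ann_fit_path(closes[1:], alpha=0.2, initial_level=closes[0])
sigma = math.sqrt(sum(e * e for e in residuals) / len(residuals))  # crude estimate
one_step = ets_ann_interval(level, 0.2, sigma, horizon=1)
five_step = ets_ann_interval(level, 0.2, sigma, horizon=5)
```

The point forecast is the same flat level at every horizon, but the interval widens as the horizon grows because future level shifts accumulate. A bare recursion cannot give you that second piece.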

That is the unifying insight: exponential smoothing is not a collection of unrelated heuristic formulas. It is a family of state-space models updated by innovations. Once you see that, extensions become easier to reason about. Add trend if the latent process drifts. Add seasonality if there is recurring structure. Change the error form if uncertainty scales with the level.

Additive vs multiplicative errors: which error form should I use?

| Error type | Error scale | State update | Forecast intervals | Best when |
|---|---|---|---|---|
| Additive | Absolute units | Additive correction | Symmetric intervals | Variance stable across level |
| Multiplicative | Relative (percent) | Proportional correction | Scale-dependent intervals | Variance grows with level |

The distinction between additive and multiplicative errors sounds technical, but it reflects a concrete modeling choice. With additive errors, mistakes are measured in absolute units: a forecast miss of 2 means the same thing whether the series is around 20 or 200. With multiplicative errors, mistakes are measured relatively: being off by 2% has the same interpretation across scales.

In the ETS framework, each exponential smoothing method has additive-error and multiplicative-error forms. If they use the same smoothing parameters, they can produce identical point forecasts, but they imply different forecast distributions and therefore different prediction intervals. That difference is not cosmetic. If variability grows with the level (common in many economic and financial series, and especially relevant when the data have not been transformed, for example by taking logs), multiplicative errors can better reflect how uncertainty behaves.

For multiplicative-error simple smoothing, the innovation is defined as a relative error rather than an absolute one. The model then takes a form like y_t = l_{t-1}(1 + ε_t) and l_t = l_{t-1}(1 + α ε_t). The mechanism is still forecast, compare, correct. What changes is the scale on which the correction operates.
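
A side-by-side run makes the "same point forecasts, different error scale" claim tangible. This is a minimal sketch with hypothetical function names; it assumes a strictly positive level so the relative error is well defined.

```python
def level_additive(observations, alpha, initial_level):
    """ETS(A,N,N): errors in absolute units."""
    level = initial_level
    errors = []
    for y in observations:
        eps = y - level                      # absolute error
        level = level + alpha * eps
        errors.append(eps)
    return level, errors


def level_multiplicative(observations, alpha, initial_level):
    """ETS(M,N,N): errors relative to the previous level (level must be > 0)."""
    level = initial_level
    errors = []
    for y in observations:
        eps = (y - level) / level            # relative error
        level = level * (1.0 + alpha * eps)  # same as level + alpha * (y - level)
        errors.append(eps)
    return level, errors


closes = [100, 101, 99, 102, 103]
add_level, add_errors = level_additive(closes[1:], 0.2, closes[0])
mul_level, mul_errors = level_multiplicative(closes[1:], 0.2, closes[0])
```

Both runs end at the same level up to floating-point rounding, so the point forecasts agree. The recorded errors differ: the first additive error is 1 price unit, while the first multiplicative error is 0.01, that is, 1% of the level. That difference in scale is what drives the different prediction intervals.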

There is a practical caveat here. The convenient distributional results for ETS models usually rely on assumptions such as Gaussian, independently distributed innovations in the additive case. Real market data often violate these assumptions through heavy tails, volatility clustering, and regime changes. So the point forecasts may remain useful while the textbook intervals become less trustworthy. This is not a reason to discard the framework. It is a reason to separate the update mechanism, which is often robust and useful, from the probabilistic assumptions, which may need stronger scrutiny.

How do traders use exponential smoothing for signals, volatility, and execution metrics?

In market practice, exponential smoothing is used less often as a grand forecasting system than as a component inside larger decision rules. That is partly because the method is lightweight and interpretable, and partly because many trading problems are online problems. You need an estimate now, and you need to update it cheaply as data arrives.

For price series, the most common use is the EMA-style filter. A trader may smooth closing prices to estimate local direction, compare fast and slow smoothers for crossover signals, or feed smoothed inputs into a broader signal stack. In this role, exponential smoothing is acting as a trend filter. It is reducing noise enough to expose persistence, if persistence is there.

For risk, the close cousin is exponentially weighted moving average volatility estimation. Instead of smoothing the level of a series, you smooth a volatility-relevant quantity such as squared returns. The same logic applies: recent shocks should matter more than distant shocks because market volatility clusters and conditions evolve. This is one reason exponentially weighted methods became so important in risk systems: they are computationally simple, update in real time, and can adapt faster than long equal-weighted rolling windows.
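
The volatility version is the same recursion applied to squared returns. The sketch below is illustrative only: the function name, the sample returns, and the starting variance are made up, and the decay of 0.94 is the value commonly associated with RiskMetrics for daily data, used here as an assumption rather than a recommendation.

```python
import math


def ewma_variance(returns, decay, initial_variance):
    """Recursive EWMA variance: var_t = decay * var_{t-1} + (1 - decay) * r_t**2.

    Equivalent to simple exponential smoothing of squared returns
    with alpha = 1 - decay.
    """
    if not 0.0 < decay < 1.0:
        raise ValueError("decay must be strictly between 0 and 1")
    variance = initial_variance
    path = []
    for r in returns:
        variance = decay * variance + (1.0 - decay) * r * r
        path.append(variance)
    return path


daily_returns = [0.01, -0.02, 0.005, 0.03, -0.01]   # hypothetical returns
variance_path = ewma_variance(daily_returns, decay=0.94, initial_variance=0.0001)
volatility_path = [math.sqrt(v) for v in variance_path]
```

The 3% move on the fourth day lifts the variance estimate immediately, and the estimate then decays gradually instead of dropping off a window edge some fixed number of days later.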

There is also a more subtle use in portfolio and execution workflows. Exponential smoothing can estimate spread, slippage, fill-rate tendencies, or other microstructure quantities that are noisy but time-varying. The method is attractive whenever the target is not perfectly constant, yet not so unstable that only the most recent observation matters.

Still, it is important not to overstate what the method is doing. Exponential smoothing is not a market theory. It does not explain why trends exist or why volatility clusters. It provides a disciplined way to estimate a changing latent quantity under a recency-weighted memory scheme. Whether that estimate becomes a profitable trading signal depends on market structure, transaction costs, regime stability, and how many alternative parameterizations you tested before choosing the one that looked best.

How should I initialize and warm up an EMA to avoid startup discrepancies?

| Method | Ease | Early bias | Reproducibility | When to use |
|---|---|---|---|---|
| Warm trailing history | High effort | Low early bias | High reproducibility | Backtest → production |
| Estimated initial values | Automated | Moderate bias | Consistent if fixed | Short samples / model fitting |
| Heuristic / legacy | Easy | Higher bias | Matches legacy outputs | Legacy compatibility |
| Known initial values | Manual | Depends on choice | Highest reproducibility | Controlled experiments |

A recurring practical annoyance is that two systems can compute “the same” EMA or exponential smoothing model and still disagree at the start of the sample. The reason is initialization. The recursion needs an initial state: the starting level, and if relevant the starting trend and seasonal components. Early values can depend materially on those choices.

This is not a small implementation detail. QuantConnect's EMA documentation, for example, explicitly notes that values can differ depending on how many samples you feed into the indicator, and recommends warming it up with trailing history for consistency. That is exactly what the mechanism implies. Since the estimator carries forward compressed memory, what you feed it at the beginning affects the state it carries later. The effect decays over time, but it does not vanish instantly.

Statistical implementations treat initialization more formally. Python's statsmodels, for example, exposes options for estimated, heuristic, legacy-heuristic, or known initial values, and R's forecast::ets() estimates the initial states as part of model fitting and can choose among models automatically. This is useful because the “best” initialization is not purely a coding issue; it is part of the estimation problem. If your sample is short, initialization can have a visible impact on fitted parameters and forecasts.

For trading systems, the practical consequence is straightforward: if you backtest a smoothed signal, define the warm-up policy and keep it consistent with live deployment. Otherwise you may discover that the backtest and production code generate slightly different indicator states, which then cascade into different trades. In recursive methods, small startup differences can persist longer than intuition suggests.
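
How long a startup difference persists can be checked directly. In the sketch below, two EMAs share the same α and the same prices but start from different seeds; because the recursion is linear, the gap between them shrinks by a factor of (1 − α) on every bar. The function names, the price path, and the 0.01 tolerance are hypothetical.

```python
import math


def ema_final(prices, alpha, seed):
    """Final EMA value after running through `prices` from a given seed."""
    level = seed
    for p in prices:
        level += alpha * (p - level)
    return level


def bars_until_gap_below(initial_gap, tolerance, alpha):
    """Smallest n with initial_gap * (1 - alpha)**n <= tolerance."""
    if initial_gap <= tolerance:
        return 0
    return math.ceil(math.log(tolerance / initial_gap) / math.log(1.0 - alpha))


alpha = 0.1
prices = [100 + 0.5 * i for i in range(60)]
gap_start = 5.0

# Two systems that agree on alpha but disagree on the starting state.
system_a = ema_final(prices, alpha, seed=100.0)
system_b = ema_final(prices, alpha, seed=100.0 + gap_start)

gap_after = system_b - system_a
expected_gap = gap_start * (1 - alpha) ** len(prices)
warmup_bars = bars_until_gap_below(gap_start, tolerance=0.01, alpha=alpha)
```

A calculation like `bars_until_gap_below` gives a rough warm-up length for a chosen tolerance. With a small α the required number of bars can be much larger than the nominal "period" of the indicator, which is why backtest and live code need the same warm-up policy.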

What are the common failure modes and risks of exponential smoothing in markets?

The main failure mode is not mathematical. It is conceptual mismatch. Exponential smoothing works best when the quantity you care about evolves gradually enough that recent history contains useful information, but noisily enough that the latest observation alone is unreliable. If the process jumps across regimes, has long periods of mean reversion with no persistence, or contains structure not represented by the model, the smoother can give very clean-looking but strategically weak estimates.

In trading, a common mistake is to tune α until a backtest looks good and then attribute the result to “finding the right speed.” Often what happened is simpler: you searched across enough decay parameters to fit the sample's accidents. This is especially dangerous because exponential smoothing has so few parameters that it feels immune to overfitting. But low-dimensional models can still be overfit if you try enough variants, combinations, asset universes, filters, or signal thresholds. The risk is not only parameter complexity. It is selection complexity.

Another breakdown comes from nonstationary noise. If the observation variance changes abruptly, a fixed-parameter smoother can become badly calibrated. In volatility estimation, this is why practitioners sometimes prefer adaptive decay or richer heteroskedastic models. In forecasting, it is why multiplicative-error or transformed models may be more appropriate than naive additive forms when scale changes materially.

And there is a domain-specific limitation. Many market series do not have stable seasonality in the classical sense that retail or utility demand data do. So the full Holt-Winters seasonal machinery, although important in time-series forecasting generally, is often less central for asset prices than level, trend, and volatility smoothing are. That is not because seasonality never appears in markets. It is because market seasonal structure is often weaker, more regime-dependent, or better modeled at specific frequencies such as intraday effects rather than with a generic seasonal template.

How is exponential smoothing related to EMA indicators and ARIMA models?

It helps to place exponential smoothing next to its neighbors. The EMA is not a different concept so much as the indicator-language version of simple exponential smoothing. In trading conversations, “EMA” usually means the recursively updated smoothed price series. In forecasting language, “exponential smoothing” is the broader family that includes level-only, trend, damped-trend, and seasonal variants, often with state-space formulations.

There is also a close theoretical relationship to ARIMA models. Simple exponential smoothing corresponds to an ARIMA(0,1,1) model without a constant term under standard conditions. That connection matters because it shows exponential smoothing is not outside mainstream time-series theory. It is another way of expressing a particular dynamic structure. In practice, though, the choice between ETS-style and ARIMA-style modeling often comes down to what structure is most naturally described in the data: evolving components such as level and trend, or autoregressive and moving-average dependence in differenced series.
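
The correspondence can be sketched in a few lines starting from the ETS(A,N,N) equations y_t = l_{t-1} + ε_t and l_t = l_{t-1} + α ε_t. Taking first differences of the observations:

```latex
\begin{aligned}
y_t - y_{t-1} &= (l_{t-1} + \varepsilon_t) - (l_{t-2} + \varepsilon_{t-1}) \\
              &= (l_{t-1} - l_{t-2}) + \varepsilon_t - \varepsilon_{t-1} \\
              &= \alpha\,\varepsilon_{t-1} + \varepsilon_t - \varepsilon_{t-1} \\
              &= \varepsilon_t - (1-\alpha)\,\varepsilon_{t-1}.
\end{aligned}
```

The differenced series is a first-order moving average with no constant, which is the ARIMA(0,1,1) form. Under the sign convention where the MA term is written as ε_t + θ ε_{t-1}, the coefficient is θ = α − 1.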

For trading, the useful takeaway is not that one class dominates the other universally. It is that exponential smoothing is especially compelling when you want a transparent online update rule with interpretable memory and possibly a state-space probabilistic wrapper. ARIMA is often more natural when serial dependence in residual dynamics is the main object. The two families overlap, but they are not just stylistic aliases.

Conclusion

Exponential smoothing is a way to estimate a changing quantity by carrying forward a state and updating it with a fraction of the latest forecast error. Its power comes from that simple rule. By letting the past fade exponentially rather than disappear at a fixed window boundary, it creates a practical compromise between stability and responsiveness.

In trading, that makes it useful for filtered prices, trend estimates, volatility updates, and real-time forecasting components. But its usefulness depends on matching the model to the structure of the data, choosing memory sensibly, and not mistaking a smooth line for a durable edge. The part worth remembering tomorrow is this: exponential smoothing works by turning surprise into a controlled update of belief. Everything else (EMA indicators, Holt trends, ETS models, and EWMA risk estimates) is a variation on that mechanism.

Frequently Asked Questions

Choose α by deciding how quickly information should fade in the units of your data (minutes, hours, days, weeks). A higher α means faster adaptation and more noise; a lower α means more stability and more lag. There is no single "best" value: pick the memory (half-life/time constant) you need for the trading horizon and validate it out of sample. This guidance follows the article's framing that α primarily controls the trade‑off between responsiveness and stability.

Initialization matters: the recursive state must be warmed up with trailing history and different startup choices can produce visibly different early values that decay only slowly. The article and implementation notes recommend defining and replicating the same warm‑up policy in backtest and live code to avoid startup discrepancies. Quantitative warm‑up lengths are implementation dependent and the article does not prescribe a single number.

Use additive errors when forecast mistakes are naturally measured in absolute units and multiplicative errors when relative (percentage) mistakes are more appropriate; they can give identical point forecasts under the same smoothing parameters but imply different uncertainty and prediction intervals. The ETS literature and the article stress that multiplicative errors better reflect scale‑dependent variability, while additive errors pair with the usual Gaussian white‑noise assumptions - choose based on how variability changes with level. Note that the choice affects intervals even if point forecasts match.

Add a trend state (Holt) when the latent process shows persistent drift so that a level‑only, flat multi‑step forecast systematically lags; use a damped trend when you want slope to influence short horizons but to fade for longer horizons, avoiding aggressive long‑run extrapolation. The article describes Holt's level+trend updates and explains why damped trends are commonly offered to produce more reasonable long‑horizon forecasts.

Simple exponential smoothing is best understood as estimating the current level rather than magically predicting future turning points; its multi‑step forecast (without trend) is flat and will lag when the true process has trend or regime shifts. The article emphasizes that the method turns surprise into controlled updates of belief and that failure modes arise when the assumed latent structure (level vs trend vs seasonality) is mismatched to the data.

Simple exponential smoothing has a theoretical equivalence to an ARIMA(0,1,1) representation under standard conditions, so it sits inside mainstream time‑series theory rather than being an unrelated heuristic. The article notes this connection and uses it to place ETS‑style methods alongside ARIMA modeling, with the practical choice driven by which structure (evolving components versus ARMA dependence) the data suggest.

To limit overfitting, avoid blindly tuning α (or other variants) until a backtest looks best; instead use objective model‑selection tools, holdout or rolling out‑of‑sample tests, and keep warm‑up/initialization consistent between backtest and production. The article warns about selection complexity and the ETS literature (and software like forecast::ets) provides likelihood/BIC‑based procedures and automated model selection to reduce ad‑hoc searches.

Exponentially weighted methods are widely used for volatility estimation (EWMA) because they update in real time, adapt faster than long equal‑weight windows, and are computationally cheap; however the RiskMetrics tradition and later studies also show the decay parameter matters and may be optimized for horizon or asset class. The article frames EWMA volatility as a close cousin of EMA filters and notes practitioners sometimes prefer adaptive decay or richer heteroskedastic models when variance is nonstationary.

The probabilistic results for ETS state‑space models typically assume independently distributed (often Gaussian) innovations, so when market data exhibit heavy tails, volatility clustering, or heteroskedasticity those interval estimates and distributional claims become questionable. The article explicitly advises separating the robust online updating mechanism from the stronger distributional assumptions and cautions that prediction intervals may be unreliable when the assumption of normally and independently distributed (NID) innovations fails.
