What Is Bayesian Inference in Quant Trading?

Learn what Bayesian inference in quant trading is, how posterior updating works, and why it matters for forecasts, risk, model uncertainty, and signals.

Introduction

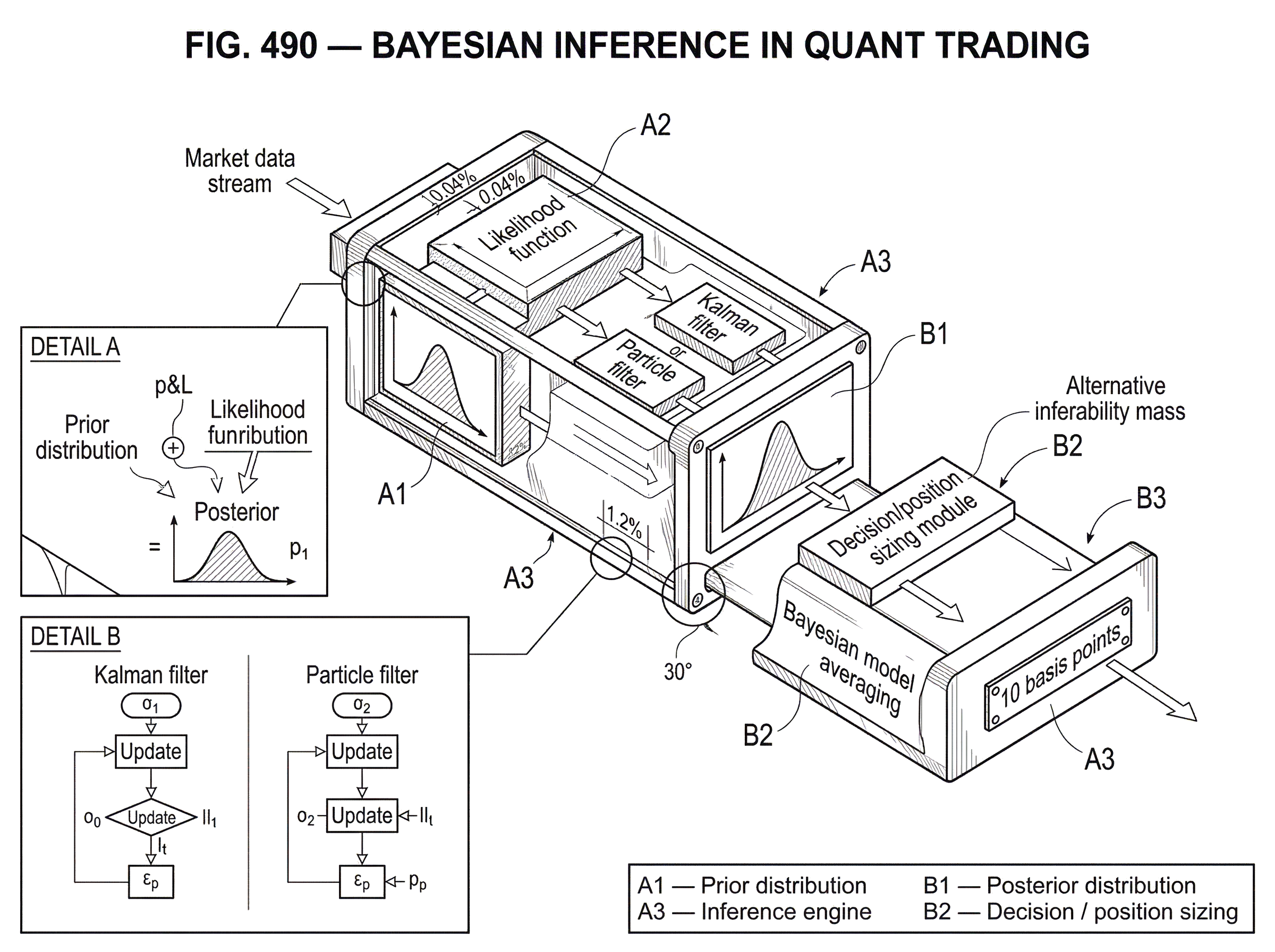

Bayesian inference in quant trading is the practice of treating unknown quantities in a trading model as probability distributions and then updating those distributions as new market data arrives. That sounds abstract, but it solves a very concrete problem: markets change faster than we can become certain about them. A mean-reversion signal may look strong for a month and disappear the next. Volatility may seem quiet until it suddenly is not. A factor that worked in one regime can become noise in another.

The central question is not just, “What is the forecast?” It is, “How sure are we, and how should that uncertainty change the trade?” Bayesian methods are built for that question. They combine prior beliefs, observed data, and an explicit model of how the data is generated to produce a posterior distribution: an updated view of what is plausible now.

In trading, that matters because decisions are made under incomplete information and under time pressure. You never observe the "true" drift, the "true" volatility, or the "true" regime directly. You observe prints, quotes, returns, order flow, and macro releases, all filtered through noise. Bayesian inference gives a disciplined way to separate the signal from uncertainty about the signal, and that separation is often the difference between a robust strategy and an overfit one.

How does trading change when parameters are treated as distributions instead of point estimates?

Most statistical trading workflows start by estimating something: expected return, factor loading, spread mean, volatility, transition probability, execution cost. A common simplification is to collapse each unknown into a single number. That is useful, but it hides an important fact: the number itself is uncertain because it was inferred from finite, noisy data.

Bayesian inference keeps that uncertainty alive. Instead of saying “the daily mean return is 0.04%,” it says something closer to “here is the distribution of plausible daily mean returns given what we believed before and what we have seen so far.” Instead of saying “volatility is 1.2%,” it says “volatility is likely in this range, with this shape of uncertainty, and these tail risks.”

That change in representation has mechanical consequences. If your posterior distribution for expected return is wide and centered near zero, a sensible system should trade smaller or not at all. If the posterior for a model parameter is unstable across windows, that is not a minor implementation detail; it is evidence that the strategy depends on more uncertainty than its backtest admitted. In this sense, Bayesian inference is not only about forecasting. It is about decision-making under parameter uncertainty.

The simplest intuition is to imagine that every model parameter comes with a confidence cloud around it. New data does not magically reveal the truth. It reshapes the cloud. Strong, consistent data narrows it. Contradictory or sparse data leaves it wide. A Bayesian trading system reacts not just to the center of that cloud, but also to its width.

What are priors, likelihoods, and posteriors in Bayesian trading models?

The Bayesian update is often summarized as:

posterior ∝ prior × likelihood

This is compact, but the words matter more than the formula at first.

The prior is what the model believed before seeing the current data. In trading, that might encode beliefs such as “daily drift is usually small,” “volatility changes over time but not infinitely fast,” or “most factors have weak effects unless data strongly supports otherwise.” A prior is not necessarily subjective guesswork. It can be built from domain knowledge, previous samples, cross-sectional structure, or hierarchical pooling across assets.

The likelihood says how probable the observed data would be under a candidate set of parameter values. If you assume returns are conditionally Gaussian, or Student-t, or generated by a state-space model, the likelihood is the part that maps parameters to observed prices or returns.

The posterior is the updated distribution after combining those two pieces. It tells you which parameter values are plausible after seeing the data.

The deepest point here is that the prior and the likelihood play different roles. The likelihood brings in the new evidence. The prior supplies structure where the data is weak, noisy, or too short to stand alone. In finance, that happens constantly. Daily returns often contain very little information about expected return, but much more about volatility. Without some structure, estimates of drift, covariance, or regime persistence can become unstable very quickly.
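To make the point about drift concrete, here is a minimal sketch of the conjugate Normal-Normal update for an unknown daily mean return. It assumes i.i.d. Gaussian returns with known volatility, which real returns violate. The prior scale and the sample figures are hypothetical and serve only to show the mechanics.

```python
import math
from statistics import NormalDist


def update_mean_return(prior_mean, prior_sd, sample_mean, n_obs, return_sd):
    """Conjugate Normal-Normal update for an unknown mean return.

    Assumes n_obs i.i.d. Gaussian returns with known standard deviation
    return_sd. Precisions (inverse variances) add, and the posterior mean is
    a precision-weighted average of the prior mean and the sample mean.
    """
    prior_precision = 1.0 / prior_sd**2
    data_precision = n_obs / return_sd**2
    post_precision = prior_precision + data_precision
    post_mean = (prior_precision * prior_mean
                 + data_precision * sample_mean) / post_precision
    return post_mean, math.sqrt(1.0 / post_precision)


# Hypothetical inputs: a 5 bp prior scale on daily drift, one year (250 days)
# of data at 1% daily volatility, and a striking sample mean of 20 bp/day.
post_mean, post_sd = update_mean_return(
    prior_mean=0.0, prior_sd=0.0005, sample_mean=0.002, n_obs=250, return_sd=0.01
)
p_positive = 1.0 - NormalDist(post_mean, post_sd).cdf(0.0)
print(f"posterior mean {post_mean:.5f}, sd {post_sd:.5f}, P(mean > 0) {p_positive:.3f}")
```

With these inputs, the 20 bp sample mean is pulled back to roughly 8 bp. The reason is that a year of daily data at 1% volatility carries less precision about drift (250 / 0.01² = 2.5 × 10⁶) than this fairly tight prior does (1 / 0.0005² = 4 × 10⁶).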

A worked example makes this concrete. Suppose you run a simple mean-reversion strategy on a spread between two related assets. You model the spread as fluctuating around a latent equilibrium level, but you do not know how quickly deviations revert. A non-Bayesian workflow might fit a half-life from a rolling window and plug that point estimate into the signal. If the latest window is noisy, the estimate jumps, and the strategy jumps with it. In a Bayesian version, you start with a prior that says reversion speed is positive and usually moderate, not extreme. As new spread observations arrive, you update the posterior for that speed. If the evidence for fast reversion is weak, the posterior does not overreact. If the evidence accumulates consistently, the posterior shifts and narrows. The trade changes because the belief changed, not because one unstable window happened to produce a dramatic estimate.

That is the mechanism in plain terms: priors prevent the model from treating every wobble as revelation, while the likelihood prevents the prior from ignoring data indefinitely.
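The spread example can be sketched as a sequential conjugate update. The sketch assumes the spread follows an AR(1) process around a known equilibrium of zero, with known noise variance. Reversion speed is expressed through the AR coefficient phi, where faster reversion means a smaller phi. All names and numbers are hypothetical. A Normal prior does not strictly enforce 0 < phi < 1; a truncated prior would, at the cost of losing the closed-form update.

```python
import math
import random


def update_ar1_coefficient(prior_mean, prior_var, x_prev, x_curr, noise_var):
    """One conjugate update for phi in x_t = phi * x_{t-1} + e_t, e_t ~ N(0, noise_var).

    With a Normal prior on phi and known noise variance, each observation
    adds x_prev**2 / noise_var to the posterior precision.
    """
    post_precision = 1.0 / prior_var + x_prev**2 / noise_var
    post_var = 1.0 / post_precision
    post_mean = post_var * (prior_mean / prior_var + x_prev * x_curr / noise_var)
    return post_mean, post_var


def half_life(phi):
    """Periods for a deviation to halve; valid for 0 < phi < 1."""
    return math.log(0.5) / math.log(phi)


# Simulate a hypothetical spread with true phi = 0.9 (half-life of about 6.6 periods).
rng = random.Random(42)
true_phi, noise_sd = 0.9, 1.0
spread = [0.0]
for _ in range(500):
    spread.append(true_phi * spread[-1] + rng.gauss(0.0, noise_sd))

# Prior: moderate reversion (phi around 0.8) with a standard deviation of 0.1.
phi_mean, phi_var = 0.8, 0.1**2
for x_prev, x_curr in zip(spread[:-1], spread[1:]):
    phi_mean, phi_var = update_ar1_coefficient(phi_mean, phi_var, x_prev, x_curr,
                                               noise_sd**2)

print(f"phi {phi_mean:.3f} +/- {math.sqrt(phi_var):.3f}, "
      f"plug-in half-life {half_life(phi_mean):.1f}")
```

Each step can only add to the posterior precision, so a single noisy window cannot swing the estimate the way a rolling-window refit can. The printed half-life is a plug-in value at the posterior mean. A posterior distribution for the half-life itself would require transforming posterior draws of phi.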

Why is Bayesian inference well-suited for financial markets?

| Method | Assumptions | Compute | Online? | Best for |
|---|---|---|---|---|
| Kalman filter | Linear Gaussian | Low | Yes | Linear‑Gaussian latent states |
| Particle filter | Nonlinear, non‑Gaussian | High | Yes | Strongly nonlinear state estimation |
| Extended / Unscented KF | Local linearization (EKF) or sigma-point approximation (UKF) | Medium | Yes | Mildly nonlinear systems |

Markets are a natural setting for Bayesian thinking because they are both dynamic and partially observed. Many of the quantities traders care about are latent states rather than directly visible facts: current volatility, regime, trend strength, signal decay, liquidity conditions, and market impact are all inferred indirectly.

This is why Bayesian dynamic models became important in forecasting and time-series analysis. In dynamic environments, the task is not a one-time estimate but repeated updating as the environment evolves. A model has to learn sequentially. That is exactly the setting studied in Bayesian forecasting and dynamic models, including dynamic linear models, where latent states evolve over time and observations arrive sequentially.

In the special case where the system is linear and Gaussian, the Kalman filter gives an exact Bayesian update for the latent state. That is why it appears so often in trading: it is not just a smoothing trick, but a concrete implementation of Bayesian state estimation. If you model a latent trend, fair value, hedge ratio, or temporary dislocation as a hidden state that evolves through time and generates noisy observations, the Kalman filter updates your belief about that state each time new data arrives.
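As an illustration, here is a minimal Kalman filter for a local-level model. In this model, a random-walk latent fair value is observed with noise, which makes it about the simplest linear Gaussian state-space model. The variances and quotes are hypothetical; a real desk model would estimate them or put priors on them.

```python
def kalman_local_level(observations, process_var, obs_var, m0=0.0, p0=1e6):
    """Kalman filter for a random-walk latent level observed with noise.

    State:       x_t = x_{t-1} + w_t,  w_t ~ N(0, process_var)
    Observation: y_t = x_t + v_t,      v_t ~ N(0, obs_var)
    Returns the filtered posterior mean and variance of x_t after each y_t.
    """
    mean, var = m0, p0  # diffuse prior on the initial level
    means, variances = [], []
    for y in observations:
        # Predict: the level may have drifted since the last observation.
        var_pred = var + process_var
        # Update: the gain weighs the new observation against the prediction.
        gain = var_pred / (var_pred + obs_var)
        mean = mean + gain * (y - mean)
        var = (1.0 - gain) * var_pred
        means.append(mean)
        variances.append(var)
    return means, variances


# Hypothetical noisy quotes around a fair value that shifts from about 100 to 101.
quotes = [100.2, 99.9, 100.1, 99.8, 100.0, 101.1, 100.9, 101.2, 100.8, 101.0]
means, variances = kalman_local_level(quotes, process_var=0.05, obs_var=0.1)
for y, m, v in zip(quotes, means, variances):
    print(f"quote {y:7.2f}  fair value {m:7.3f}  sd {v ** 0.5:.3f}")
```

With these variances the filter settles at a gain of 0.5. After the shift, the fair-value estimate therefore moves halfway toward each new quote instead of jumping all the way, and its posterior standard deviation stays roughly constant.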

When the model is nonlinear or non-Gaussian, exact updating is usually intractable. Then you move to approximations such as particle filters, also called sequential Monte Carlo methods. These maintain a set of weighted samples (particles) representing the current posterior over hidden states. As each new observation arrives, every particle's weight is updated by the likelihood of that observation, and the particles are then resampled in proportion to their weights. The key advantage is flexibility: unlike the extended Kalman filter, which linearizes the model locally, particle methods do not rely on linear or Gaussian approximations. The cost is computational. They can be expensive, and over long horizons their path representations can degenerate if not handled carefully.
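A bootstrap particle filter, the simplest sequential Monte Carlo variant, can be sketched in plain Python for a Gaussian stochastic volatility model. The model form, the parameter values, and the function name are assumptions for illustration. In practice the parameters would be estimated rather than fixed.

```python
import math
import random


def bootstrap_particle_filter(returns, n_particles=1000, mu=-9.0, phi=0.95,
                              sigma_h=0.2, seed=0):
    """Bootstrap particle filter for a Gaussian stochastic volatility model.

    Latent log-variance: h_t = mu + phi * (h_{t-1} - mu) + sigma_h * eta_t
    Observation:         r_t | h_t ~ N(0, exp(h_t))
    Returns the filtered posterior mean of volatility exp(h_t / 2) at each step.
    """
    rng = random.Random(seed)
    # Initialize from the stationary distribution of the AR(1) log-variance.
    stationary_sd = sigma_h / math.sqrt(1.0 - phi**2)
    particles = [rng.gauss(mu, stationary_sd) for _ in range(n_particles)]
    vol_estimates = []
    for r in returns:
        # Propagate each particle through the state equation.
        particles = [mu + phi * (h - mu) + sigma_h * rng.gauss(0.0, 1.0)
                     for h in particles]
        # Weight by the Gaussian log-likelihood of r (constants dropped),
        # normalizing in log space to avoid underflow.
        log_w = [-0.5 * (h + r * r * math.exp(-h)) for h in particles]
        max_log_w = max(log_w)
        weights = [math.exp(lw - max_log_w) for lw in log_w]
        total = sum(weights)
        weights = [w / total for w in weights]
        vol_estimates.append(sum(w * math.exp(0.5 * h)
                                 for w, h in zip(weights, particles)))
        # Multinomial resampling: duplicate high-weight particles, drop low ones.
        particles = rng.choices(particles, weights=weights, k=n_particles)
    return vol_estimates


# Hypothetical return streams: a quiet market and a stressed one.
quiet = bootstrap_particle_filter([0.002, -0.001] * 25, n_particles=500)
stressed = bootstrap_particle_filter([0.04, -0.05] * 25, n_particles=500)
print(f"filtered vol: quiet {quiet[-1]:.4f}, stressed {stressed[-1]:.4f}")
```

Resampling at every step, as here, is the simplest scheme. A common refinement is to resample only when the effective sample size of the weights falls below a threshold, which slows the loss of particle diversity.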

So Bayesian inference in trading is not a niche mathematical preference. It is a response to the actual structure of the problem: hidden states, streaming data, noisy observations, and changing regimes.

How do you use Bayesian stochastic volatility models for trading?

Volatility is one of the cleanest examples of Bayesian inference in trading because everyone agrees on the puzzle. Returns are observed. Volatility is not. Yet volatility drives position sizing, risk limits, option pricing, and many signal filters.

A stochastic volatility model treats volatility as a latent process that changes over time. The observed return at time t depends on the hidden volatility state at time t, and that hidden state itself evolves stochastically. The Bayesian task is to infer the posterior distribution of the latent volatility path and the model’s parameters from observed returns.
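Written out, one common form of such a model treats log-volatility s_t as a Gaussian random walk with step size σ_s. Returns r_t are then Student-t with ν degrees of freedom and scale e^{s_t}. This is a sketch: the PyMC example discussed next may differ in details such as the priors and the initial-state distribution.

$$
\begin{aligned}
s_t &= s_{t-1} + \sigma_s\,\eta_t, \qquad \eta_t \sim \mathcal{N}(0, 1),\\
r_t \mid s_t, \nu &\sim t_{\nu}\!\left(0,\ e^{s_t}\right).
\end{aligned}
$$

The Bayesian target is the joint posterior over the latent path and the parameters. It is again prior times likelihood:

$$
p(s_{1:T}, \sigma_s, \nu \mid r_{1:T}) \propto p(\sigma_s)\,p(\nu)\,p(s_1)\prod_{t=2}^{T} p(s_t \mid s_{t-1}, \sigma_s)\prod_{t=1}^{T} p(r_t \mid s_t, \nu).
$$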

A PyMC example on S&P 500 daily returns makes the mechanism visible. The model uses a latent volatility process, a prior on the volatility step size, and a Student-t likelihood for returns. The Student-t choice matters because financial returns often have heavier tails than a Gaussian can represent well. After fitting, the output is not just a single volatility curve but a posterior distribution over volatility through time, along with posterior predictive draws for returns.

What is especially useful here is not merely that the model produces a time-varying volatility estimate. It is that the workflow exposes when the model's assumptions are unreasonable. In the same example, prior predictive checks show that some prior choices imply return magnitudes many orders of magnitude larger than what was actually observed. That is a powerful reminder: Bayesian modeling does not make assumptions disappear; it makes them inspectable. Before trusting the posterior, you can ask what the priors imply about plausible data. If those implications are absurd, the problem is often in the model design, not in the sampler.
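A prior predictive check can be run before any data is used. The sketch below does not reproduce the PyMC example. It uses hypothetical priors on a Student-t random-walk volatility model and counts how often simulated paths contain a daily move larger than 50%.

```python
import math
import random


def prior_predictive_extreme_fraction(init_mean, init_sd, step_rate, nu=5.0,
                                      n_days=20, n_draws=2000,
                                      threshold=0.5, seed=1):
    """Fraction of prior-predictive paths with any |daily return| > threshold.

    Hypothetical priors: initial log-scale s_1 ~ N(init_mean, init_sd),
    random-walk step size ~ Exponential(step_rate), and Student-t returns
    with nu degrees of freedom and scale exp(s_t).
    """
    rng = random.Random(seed)
    extreme_paths = 0
    for _ in range(n_draws):
        step = rng.expovariate(step_rate)   # draw the step size from its prior
        s = rng.gauss(init_mean, init_sd)   # draw the initial log-scale from its prior
        for _ in range(n_days):
            # Student-t draw: standard normal / sqrt(chi-square_nu / nu),
            # with chi-square_nu sampled as Gamma(shape=nu/2, scale=2).
            t = rng.gauss(0.0, 1.0) / math.sqrt(rng.gammavariate(nu / 2, 2.0) / nu)
            if abs(math.exp(s) * t) > threshold:
                extreme_paths += 1
                break
            s += step * rng.gauss(0.0, 1.0)
    return extreme_paths / n_draws


# A very wide prior on the initial log-scale versus one centered near 1% daily vol.
wide = prior_predictive_extreme_fraction(init_mean=0.0, init_sd=100.0, step_rate=10.0)
tight = prior_predictive_extreme_fraction(init_mean=math.log(0.01), init_sd=0.5,
                                          step_rate=10.0)
print(f"share of prior paths with a >50% daily move: wide {wide:.2f}, tight {tight:.3f}")
```

Under the wide initial prior, roughly half of the simulated paths contain a daily move of more than 50%. That is an immediate sign the prior does not encode what we know about equity returns. The tighter prior rarely produces such moves.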

This is one of the places where Bayesian quant workflows differ from simplistic “fit and trade” pipelines. The question is not only whether the posterior converged. It is whether the prior, likelihood, and predictive implications make sense for the market you are modeling.

How do you convert Bayesian forecasts into trading and position‑sizing rules?

A posterior distribution is not itself a strategy. It becomes useful when mapped into a decision rule.

Suppose your model produces a posterior distribution for next-period return, call it p(r_next | data). A naive strategy might trade on the posterior mean alone. But a Bayesian strategy has more information available. It can ask whether the probability that r_next > 0 exceeds a threshold, whether the expected return remains positive after transaction costs, whether downside tail mass is too large, or whether parameter uncertainty is so wide that any apparent edge is economically meaningless.

This matters because the same expected return can imply very different trades depending on uncertainty. A posterior mean of 10 basis points with a tight distribution is a different object from a posterior mean of 10 basis points with a huge distribution crossing zero. Bayesian inference gives a direct language for that distinction.
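To see the distinction numerically, assume the posterior for next-period expected return is Normal. Then ask how likely the edge is to exceed transaction costs. The 5 bp cost and the 0.8 probability threshold are hypothetical choices.

```python
from statistics import NormalDist


def prob_edge_exceeds_cost(post_mean, post_sd, cost):
    """P(expected return > cost) under a Normal posterior for expected return."""
    return 1.0 - NormalDist(post_mean, post_sd).cdf(cost)


cost = 0.0005           # hypothetical 5 bp cost per trade
trade_threshold = 0.8   # hypothetical rule: trade only if P(edge > cost) > 0.8

tight = prob_edge_exceeds_cost(0.0010, 0.0005, cost)  # 10 bp mean, tight posterior: ≈ 0.84
wide = prob_edge_exceeds_cost(0.0010, 0.0050, cost)   # 10 bp mean, wide posterior: ≈ 0.54

for label, p in [("tight", tight), ("wide", wide)]:
    print(f"{label}: P(edge > cost) = {p:.2f}, trade = {p > trade_threshold}")
```

Both posteriors have the same 10 bp mean, but only the tight one clears the threshold. The wide one is close to a coin flip on whether the edge even covers costs.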

This naturally connects to position sizing. If uncertainty widens, leverage should usually fall. If the posterior predictive distribution becomes more skewed or heavy-tailed, stop logic and risk budgeting may need adjustment. If the posterior probability of regime change rises, the model may switch to a more defensive policy or allocate weight to alternative models.
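One simple way to let uncertainty shrink leverage is a mean-variance rule applied to the posterior predictive distribution rather than to a point estimate. This sketch rests on strong assumptions: a Normal posterior for the mean, independent Normal return noise, and a hypothetical risk-aversion setting. It is not a recommended sizing policy.

```python
import math


def bayesian_mean_variance_size(post_mean, post_sd, return_sd, cost=0.0,
                                risk_aversion=10.0):
    """Position (fraction of capital) from a mean-variance rule under parameter uncertainty.

    If r = mu + eps with mu ~ N(post_mean, post_sd**2) and eps ~ N(0, return_sd**2)
    independent, the predictive variance of r is return_sd**2 + post_sd**2, so
    wider parameter uncertainty mechanically shrinks the position.
    """
    edge = abs(post_mean) - cost
    if edge <= 0.0:
        return 0.0  # no edge after costs: stay flat
    predictive_var = return_sd**2 + post_sd**2
    return math.copysign(edge / (risk_aversion * predictive_var), post_mean)


confident = bayesian_mean_variance_size(0.001, 0.0005, return_sd=0.01, cost=0.0002)
uncertain = bayesian_mean_variance_size(0.001, 0.005, return_sd=0.01, cost=0.0002)
print(f"size with tight posterior: {confident:.2f}, with wide posterior: {uncertain:.2f}")
```

Here the wider posterior cuts the position from about 0.80 to 0.64 of capital. With daily data the return-noise term usually dominates, so this adjustment alone is modest. Combining it with a probability threshold on the edge is one way to make parameter uncertainty matter more.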

In that sense, Bayesian inference does not replace trading logic. It supplies better inputs to it: distributions instead of fragile point estimates.

How should traders handle model uncertainty and use Bayesian model averaging?

| Approach | Model weights | Adaptivity | Compute | Best when |
|---|---|---|---|---|
| Single model | Single winner | Static | Low | Stable regime, simple concept |
| Bayesian model averaging | Posterior weights | Slowly adaptive | Medium | Persistent model uncertainty |
| Dynamic model averaging | Time‑varying weights | Highly adaptive | High | Regime shifts, streaming data |

A large share of trading failure does not come from wrong parameter values inside the right model. It comes from using the wrong model class altogether. A factor model may work in one environment, a trend model in another, and a volatility-break model in a third. Pretending one specification is “the” model creates false confidence.

Bayesian model averaging addresses this by assigning probability across multiple candidate models rather than selecting a single winner and acting as if selection uncertainty does not exist. The idea is straightforward: each model gets posterior weight based on how well it explains the data and on the prior over model space, and forecasts are averaged using those weights.
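The weighting step can be sketched directly from that definition. The sketch assumes each model's log marginal likelihood has already been computed, which is usually the hard part in practice. The scenario and numbers are hypothetical.

```python
import math


def bma_weights(log_evidence, prior_probs):
    """Posterior model probabilities: p(M_k | D) proportional to p(D | M_k) p(M_k).

    log_evidence holds each model's log marginal likelihood log p(D | M_k);
    the log-sum-exp shift keeps the exponentials from under- or overflowing.
    """
    log_post = [le + math.log(p) for le, p in zip(log_evidence, prior_probs)]
    shift = max(log_post)
    unnorm = [math.exp(lp - shift) for lp in log_post]
    total = sum(unnorm)
    return [u / total for u in unnorm]


def bma_forecast(weights, means, variances):
    """Mean and variance of the model-averaged (mixture) predictive distribution."""
    mean = sum(w * m for w, m in zip(weights, means))
    second_moment = sum(w * (v + m * m) for w, m, v in zip(weights, means, variances))
    return mean, second_moment - mean * mean


# Hypothetical: a momentum model with 3x the marginal likelihood of a reversal model.
weights = bma_weights([math.log(3.0), 0.0], prior_probs=[0.5, 0.5])
mean, var = bma_forecast(weights, means=[0.001, -0.001], variances=[1e-4, 1e-4])
print("weights:", [round(w, 3) for w in weights], "mean:", mean, "variance:", var)
```

The averaged variance (1.0075e-4) exceeds either model's own 1e-4 because between-model disagreement is added to it. That extra term is how averaging counters the overconfidence of committing to one specification.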

This is valuable in quant trading because model uncertainty is often large. You may not know whether returns are better forecasted by momentum, carry, value, volatility state, macro variables, or some combination. Model averaging keeps uncertainty about that choice explicit. Dynamic model averaging extends the idea to evolving environments, allowing model weights themselves to change over time. That makes sense in markets, where the “best” forecasting relationship is often regime-dependent.
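Dynamic model averaging is often implemented with a forgetting factor. Before each update, model weights are raised to a power slightly below one, which discounts old evidence. A minimal sketch follows, assuming each model's one-step predictive log-likelihood is already available; the two-regime scenario is hypothetical.

```python
import math


def dma_step(weights, predictive_loglik, forgetting=0.99):
    """One forgetting-factor dynamic model averaging step.

    Prediction: raise each weight to the power `forgetting` and renormalize;
    a value below 1 flattens the weights, so older evidence decays.
    Update: multiply by each model's predictive likelihood for the newest
    observation and renormalize (in log space for numerical stability).
    """
    flattened = [w ** forgetting for w in weights]
    total = sum(flattened)
    # Floor at a tiny value so log() never sees an exact zero weight.
    log_post = [math.log(max(f / total, 1e-300)) + ll
                for f, ll in zip(flattened, predictive_loglik)]
    shift = max(log_post)
    unnorm = [math.exp(lp - shift) for lp in log_post]
    total = sum(unnorm)
    return [u / total for u in unnorm]


def simulate_regime_switch(forgetting):
    """Hypothetical scenario: model A predicts better for 50 steps, then model B does."""
    weights = [0.5, 0.5]
    for _ in range(50):
        weights = dma_step(weights, [0.0, -1.0], forgetting)
    for _ in range(50):
        weights = dma_step(weights, [-1.0, 0.0], forgetting)
    return weights


static = simulate_regime_switch(forgetting=1.0)    # no forgetting: sequential BMA
dynamic = simulate_regime_switch(forgetting=0.99)
print("static weights: ", [round(w, 3) for w in static])
print("dynamic weights:", [round(w, 3) for w in dynamic])
```

Without forgetting, the 50 steps of evidence for each model cancel, and the weights end near 0.5 each even though model B has been better throughout the recent regime. With a forgetting factor of 0.99, older evidence has decayed and model B carries almost all the weight. The forgetting factor is itself a modeling choice that trades adaptivity against noise.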

The benefit is not mystical ensemble power. The mechanism is more specific: averaging over plausible models tends to reduce the overconfidence that comes from conditioning on a single selected specification. The tradeoff is computational and conceptual. Marginal likelihoods, Bayes factors, and model-space priors matter, and results can be sensitive to those choices.

What computational methods power Bayesian inference in trading and when should you use them?

| Method | Accuracy | Latency | Scale | Best for |
|---|---|---|---|---|
| MCMC (NUTS) | High | High | Moderate–high | Offline research, detailed posteriors |
| Variational inference | Approximate | Low | High | Fast large‑model inference |
| Particle / SMC | Good sequential fit | Medium–high | Moderate | Nonlinear online filtering |
| Analytic (Kalman) | Exact if linear Gaussian | Very low | Low | Real‑time linear state estimation |

For simple conjugate models, posterior updates can be computed analytically. But most useful trading models are not that simple. They may have latent states, heavy tails, hierarchical structure, time variation, nonlinear observation equations, or multivariate dependence. Then the posterior is not available in closed form.

That is why modern Bayesian practice relies on numerical inference. Tools such as Stan and PyMC let researchers specify a probabilistic model and then approximate the posterior using algorithms such as Hamiltonian Monte Carlo and its adaptive variant, the No-U-Turn Sampler (NUTS). These methods can be remarkably effective, but they are not magic. Their quality depends on parameterization, priors, geometry of the posterior, and diagnostics.

The implementation details matter more than many newcomers expect. The Stan user guide emphasizes that vectorized probability statements are much faster than explicit loops, that QR reparameterization can substantially improve sampling in regression-type models, and that Cholesky and non-centered parameterizations improve numerical stability and efficiency in hierarchical and multivariate settings. These are not cosmetic optimizations. In quant research, where you may refit many models across assets and windows, computational geometry becomes part of model design.

There is also a division between offline and online inference. Markov chain Monte Carlo is often well-suited for offline research, where you can spend time estimating a rich posterior carefully. For live systems receiving new observations continuously, sequential methods such as Kalman filtering, particle filtering, or approximate online updating are often more practical. The right inference method depends less on philosophical purity than on latency, dimensionality, and how often the model must refresh.

How should priors be chosen and validated for financial trading models?

A common misunderstanding is that Bayesian methods are mainly about subjective priors. In practice, priors are unavoidable because every model regularizes somehow. If you choose a rolling window length, a shrinkage penalty, a stationarity constraint, or a factor sparsity threshold, you are already imposing structure. Bayesian methods make that structure explicit.

The real question is not whether to have priors, but whether the priors are sensible and testable. In finance, this usually means encoding facts like these: drifts are small relative to volatility, correlations are noisy in short samples, coefficients across related assets should often partially pool rather than float independently, and volatility parameters should live in realistic ranges.

This is where hierarchical modeling becomes useful. Suppose you estimate a mean-reversion speed separately for hundreds of spreads. Some spreads have rich histories; others are sparse. A hierarchical prior lets asset-specific parameters borrow strength from the cross-section while still differing where data supports it. That often stabilizes estimates and reduces the temptation to overfit each series in isolation.
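Partial pooling can be sketched with a Normal-Normal hierarchy. The sketch assumes each spread's reversion-speed estimate comes with a standard error and, for simplicity, that the cross-sectional standard deviation tau is known. A full hierarchical model would put a prior on tau and infer it as well. The numbers below are hypothetical.

```python
def partial_pool(estimates, std_errors, tau):
    """Normal-Normal partial pooling with a known between-asset sd tau.

    Each asset's parameter is modeled as theta_i ~ N(mu, tau**2) and observed as
    estimate_i ~ N(theta_i, se_i**2). Returns the estimated mu and the posterior
    mean of each theta_i given mu and tau.
    """
    # Precision-weighted grand mean: each estimate has marginal variance se^2 + tau^2.
    weights = [1.0 / (se**2 + tau**2) for se in std_errors]
    mu = sum(w * e for w, e in zip(weights, estimates)) / sum(weights)
    pooled = []
    for est, se in zip(estimates, std_errors):
        shrink = se**2 / (se**2 + tau**2)  # noisier estimates move further toward mu
        pooled.append(shrink * mu + (1.0 - shrink) * est)
    return mu, pooled


# Hypothetical reversion-speed estimates: two long histories and one sparse series.
estimates = [0.10, 0.12, 0.30]
std_errors = [0.01, 0.015, 0.20]
mu, pooled = partial_pool(estimates, std_errors, tau=0.05)
print(f"cross-sectional mean {mu:.3f}; pooled estimates",
      [round(p, 3) for p in pooled])
```

The two well-measured spreads barely move. The sparse series' 0.30 estimate is pulled to roughly 0.13, close to the cross-sectional mean, because its standard error is large relative to tau.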

Still, priors can mislead if chosen badly. The PyMC stochastic volatility example is instructive precisely because prior predictive checks reveal implausible implied returns. The lesson is broad: before fitting a Bayesian trading model, ask what data the model would generate before seeing the actual sample. If the answer looks nothing like market reality, the posterior may be mathematically valid but economically useless.

What are the main failure modes and risks of Bayesian trading systems?

Bayesian inference is powerful, but it does not rescue a bad trading idea.

The first failure mode is model misspecification. If your likelihood does not represent the data-generating mechanism well enough (for example, if it ignores heavy tails, jumps, changing microstructure noise, or regime shifts), the posterior can be sharply wrong. Bayesian updating is conditional on the model. It is not a guarantee of truth.

The second failure mode is regime change faster than learning. Bayesian methods update sequentially, but they still need evidence. If market structure changes abruptly, a posterior informed by yesterday’s regime may be too slow unless the model explicitly allows rapid transitions, forgetting, or dynamic model switching.

The third is computational fragility. Complex posteriors can mix poorly. Samplers can look stable while missing important regions. Particle filters can suffer weight degeneracy. High-dimensional covariance structures can become expensive or unstable. In production, these are not merely academic concerns; they affect latency, reliability, and whether a signal is usable.
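Weight degeneracy is usually monitored with the effective sample size (ESS) of the normalized particle weights, 1 / Σ w_i². Below is a small sketch. The resampling threshold mentioned after it is a common heuristic, not a fixed rule.

```python
def effective_sample_size(weights):
    """ESS = 1 / sum(w_i**2) for normalized weights: n if uniform, near 1 if degenerate."""
    total = sum(weights)
    normalized = [w / total for w in weights]
    return 1.0 / sum(w * w for w in normalized)


healthy = effective_sample_size([1.0] * 100)              # 100 equally weighted particles
degenerate = effective_sample_size([1.0] + [1e-6] * 99)   # one particle dominates
print(f"ESS healthy {healthy:.1f}, degenerate {degenerate:.3f}")
```

ESS equals the particle count when weights are uniform and approaches 1 when a single particle dominates. Many implementations resample when ESS drops below about half the particle count.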

The fourth is false comfort from quantified uncertainty. A posterior interval can look rigorous while still being conditional on arbitrary modeling choices. If priors, likelihood class, or model universe are poor, the uncertainty estimate may itself be miscalibrated.

And the fifth is operational. Any model that feeds real capital creates model risk. Supervisory guidance in finance emphasizes conceptual soundness, ongoing monitoring, benchmarking, and outcomes analysis such as backtesting. That logic applies especially strongly to Bayesian systems because their outputs are probabilistic and can appear more authoritative than they are. A good Bayesian workflow includes sensitivity analysis, posterior predictive checks, benchmark comparisons, and explicit rules for when the model should be distrusted.

Historical failures in quant finance make this vivid. The LTCM episode showed how models trained in calmer periods can underestimate extreme co-movements and liquidity stress. The Knight Capital incident showed that even a reasonable algorithmic concept can fail catastrophically when deployment controls and pre-trade safeguards are weak. These were not “Bayesian failures” specifically, but they underline the same lesson: uncertainty that is not modeled, governed, or operationally controlled does not stay theoretical for long.

Which trading problems benefit most from Bayesian methods?

Bayesian methods tend to be most valuable where uncertainty is structurally important rather than incidental. That includes latent-state estimation, such as trends, fair values, and volatility; sparse-signal problems, where data is weak relative to noise; hierarchical problems across many assets; online updating under streaming data; and model-combination problems where no single specification deserves full trust.

This is why you see Bayesian ideas underneath several familiar quant tools. The Kalman filter is Bayesian state updating in linear Gaussian form. Stochastic volatility models are Bayesian latent-variable models for risk. Particle filters are Bayesian sequential methods for nonlinear state-space systems. Bayesian model averaging is a principled response to model uncertainty. Even when a desk does not describe itself as “Bayesian,” if it is tracking latent states, shrinking noisy estimates, and updating beliefs sequentially, it is often using Bayesian logic in practice.

The crucial distinction is whether uncertainty is treated as a nuisance afterthought or as part of the object being modeled. Bayesian inference forces the latter.

Conclusion

Bayesian inference in quant trading is best understood as a way to trade on distributions, not on illusions of certainty. It starts from a simple observation: market-relevant quantities are unknown, noisy, and often changing, so a single fitted number is usually not the whole truth.

By combining prior structure with observed data, Bayesian methods produce posterior beliefs that can be updated sequentially, propagated into forecasts, and translated into position sizing, risk control, and model weighting. Their strength is not that they remove uncertainty, but that they represent it explicitly.

That is the idea worth remembering tomorrow: in trading, the edge is rarely just the forecast; it is the forecast together with a realistic measure of how fragile that forecast is.

Frequently Asked Questions

How should priors be chosen for a Bayesian trading model?

Choose priors that encode realistic market structure and regularize weak data. Examples include priors that shrink drifts toward small values, partially pool coefficients across related assets, or restrict volatility parameters to plausible ranges. Then validate them with prior predictive checks to make sure they do not imply absurd return magnitudes.

When should you use a Kalman filter instead of a particle filter?

Use the Kalman filter when the latent-state model is linear and Gaussian, because it provides exact Bayesian updates. Use particle (SMC) methods when the model is nonlinear or non-Gaussian, but be prepared for higher computational cost and issues such as particle weight degeneracy.

How should posterior uncertainty change trading decisions?

Translate posterior uncertainty into decisions in three ways. Reduce position size or leverage when the posterior for expected return is wide. Condition trades on posterior probabilities (e.g., P(r_next > 0)). Weigh tail mass and transaction costs when judging whether an apparent edge is economically actionable.

Do Bayesian methods eliminate regime-change risk?

Bayesian methods help but do not eliminate regime‑change risk. They update sequentially and can incorporate dynamic model switching or forgetting. Yet if regime change is faster than the model's learning, or is not represented in the model class, posteriors will lag and can be misleading.

What are common pitfalls when running Bayesian models in production?

Common production pitfalls include:
- poor sampler geometry and slow mixing for complex posteriors;
- overflow/NaN issues in generated quantities;
- high cost and path degeneracy in particle filters;
- sensitivity to reparameterization choices.

The Stan documentation and the particle-filtering literature explicitly warn about all of these.

How should Bayesian trading models be validated?

Validate Bayesian trading models with:
- prior and posterior predictive checks;
- sensitivity analysis to priors and model-space choices;
- benchmarking against alternatives;
- ongoing monitoring.

Supervisory model-risk guidance also expects effective independent challenge and periodic review and validation.

Does Bayesian model averaging guarantee better performance?

Bayesian model averaging or dynamic model averaging can reduce overconfidence by spreading weight across plausible specifications. However, it adds computational cost, and its results depend on model-space priors and marginal likelihood calculations, so it is not a free performance guarantee.

How do you turn a Bayesian volatility posterior into trading rules?

A Bayesian volatility posterior is immediately useful for risk and sizing decisions. However, the PyMC example notebook demonstrates inference without prescribing a conversion to P&L rules. Practitioners must therefore design and backtest their own mappings from posterior volatility (and its uncertainty) into position sizes, stop logic, and transaction-cost adjustments.