What Are Informed Trading Models?

Learn what informed trading models are, how they explain spreads and price impact, and how measures like PIN and VPIN infer information from order flow.

Introduction

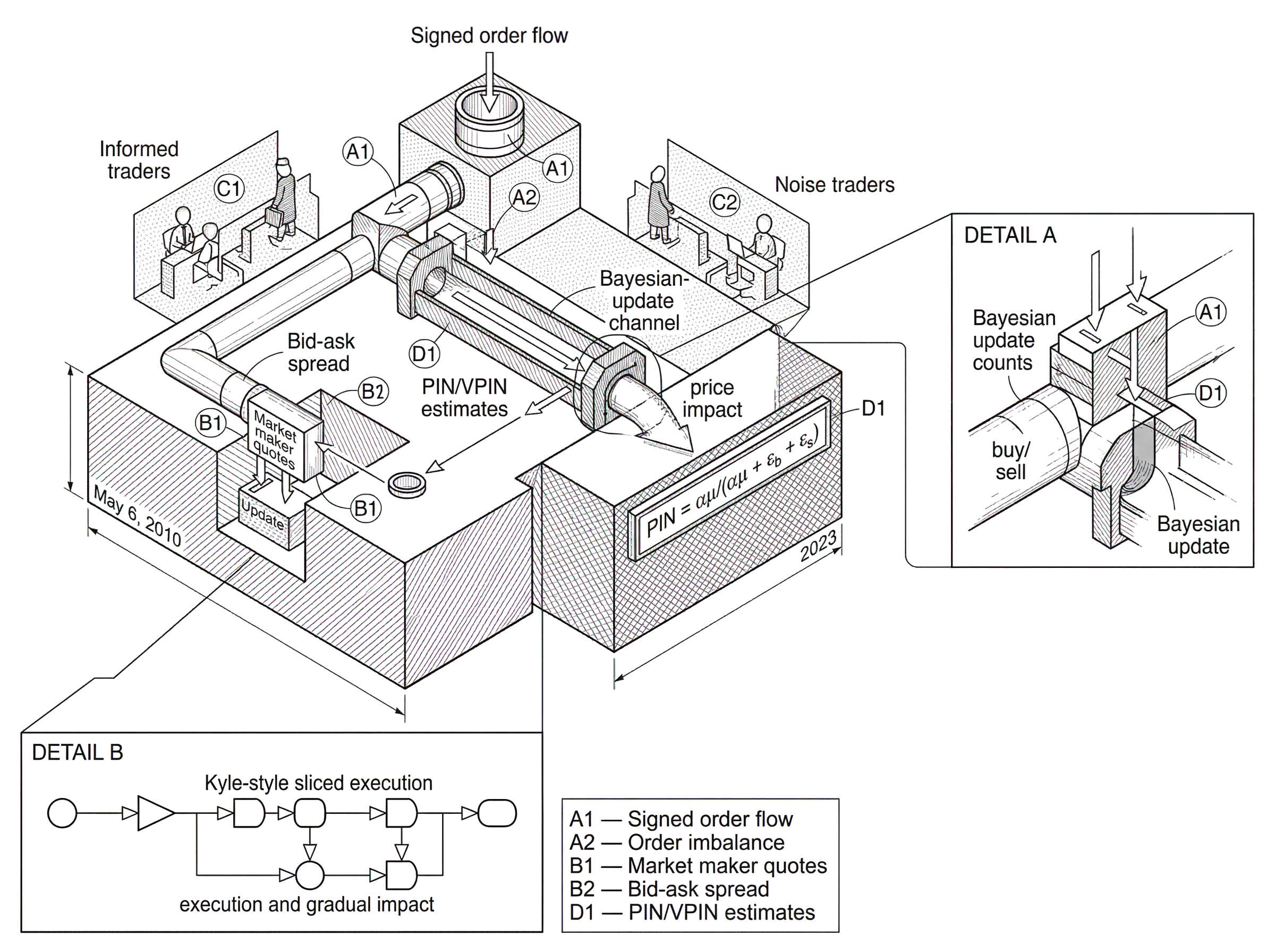

Informed trading models are models of how markets behave when some traders know more than others. That sounds abstract, but the stakes are concrete: every market must decide, trade by trade, whether incoming orders are likely to contain information about value. If they do, prices should move. If they do not, prices should mostly absorb the trade without much revaluation. The central problem is that the market does not observe a trader’s motive directly. It only sees orders, quotes, and executions.

That simple asymmetry creates a puzzle. A buy order can mean several very different things. It may come from an index fund rebalancing at the close, from a retail investor chasing momentum, or from a trader who already knows the firm’s earnings are better than the market expects. The market sees the same mechanical event (someone buys) but the economic meaning is not the same. Informed trading models exist to connect that visible order flow to the hidden information behind it.

Once you see the problem that way, several familiar market-structure facts fall into place. Bid-ask spreads are not just payment for matching buyers and sellers; part of the spread compensates liquidity suppliers for the risk of trading against better-informed counterparties. Price impact is not just a friction; it is the market’s defensive response to the possibility that order flow is informative. Measures like PIN and VPIN are attempts to infer, from trading data, how much of the flow is likely to be information-driven rather than merely noisy.

The core idea is not specific to one venue or asset class. A dealer in corporate bonds, a limit-order-book market maker in equities, a futures market under stress, and a crypto liquidity provider all face the same structural question: is this order flow telling me something about value that I do not yet know? Informed trading models provide formal answers to that question, each by making different assumptions about how information arrives, how traders act on it, and how prices adjust.

How do markets decide whether incoming orders contain private information?

A market does two jobs at once. It transfers risk from those who want to shed it to those willing to bear it, and it aggregates information into prices. Those jobs sit in tension. If markets made every trade free and absorbed all flow without moving price, informed traders would exploit that passivity and liquidity suppliers would lose money. If markets treated every order as highly informative and moved prices aggressively, ordinary trading would become expensive and liquidity would disappear. Market structure is, in large part, the design of a compromise.

The key invariant is this: someone on the other side of a trade bears the risk that the trader initiating the trade knows more than they do. In dealer markets, that someone is the dealer. In an electronic order book, it is whoever posted the resting quote. In either case, the problem is the same. If informed traders systematically buy before good news and sell before bad news, then passive liquidity providers lose on average unless quotes already reflect that possibility.

This is the mechanism behind adverse selection. Suppose the true fundamental value of an asset is higher than the market currently believes. An informed trader buys. The seller who fills that order has sold too cheaply. If this happens often enough, rational liquidity suppliers protect themselves by widening spreads or by updating prices after trades. The market is not being irrational or punitive; it is extracting information from the fact that someone was eager to trade.

This is also why not all liquidity is the same. A market can show high volume and yet be fragile if much of that activity comes from traders rapidly recycling inventory rather than posting durable depth. The CFTC-SEC report on the May 6, 2010 Flash Crash makes this distinction explicit: high trading volume was not a reliable indicator of available liquidity under stress, and buy-side depth in the E-Mini collapsed dramatically even while trading activity remained intense. That is exactly the kind of setting where order flow becomes hard to interpret and where informed-trading-style measures get operational attention.

Noise vs. informed trades: how market makers price defensively

The cleanest way to think about informed trading models is to split order flow into two broad sources. Some trades are uninformed in the narrow microstructure sense: they happen for liquidity, hedging, indexing, cash-flow, or behavioral reasons unrelated to superior private information about fundamental value. Other trades are informed: they are initiated because the trader has an informational advantage, or at least believes they do, and expects the price to move in their favor.

This split is a modeling choice, not an ontological truth. Real markets contain many motives at once. A hedge fund may have both a valuation view and a financing need. A dealer may infer information from client flow while also managing inventory. But the informed-versus-noise distinction is useful because it isolates the part of order flow that should rationally move prices.

Here is the mechanism. If the market believes a buy order is more likely to come from an informed trader than from noise, then that buy order should increase the market’s estimate of asset value. Prices rise, and the ask quote at which the trade executes is set higher than it otherwise would be. If the market believes order flow is mostly noise, then the same buy order causes less price adjustment. Price impact, in this view, is Bayesian learning under strategic uncertainty.

The analogy to a smoke alarm helps, up to a point. Order flow is like smoke: sometimes it signals a real fire, sometimes it is just burnt toast. A market maker must decide how sensitive the alarm should be. Too insensitive, and informed traders exploit stale quotes. Too sensitive, and harmless activity triggers unnecessary repricing and wide spreads. The analogy explains why markets react to flow probabilistically rather than mechanically. Where it fails is that traders respond strategically to the alarm itself: they can split orders, hide size, route across venues, or choose times of day when detection is harder.

How sequential trading models explain spreads and Bayesian learning from orders

One important family of informed trading models assumes trading happens sequentially and prices update as orders arrive. The market maker does not see the trader’s information directly. Instead, they infer from whether the next order is a buy or a sell, and from how surprising that order is under different possible states of the world.

This is the basic intuition behind the classic Glosten-Milgrom style setup. Imagine a single asset whose true value is either high or low. Some traders are informed and know which state holds. Others are liquidity traders who buy or sell for unrelated reasons. A competitive market maker posts bid and ask quotes before knowing which type of trader is arriving. If a buy order arrives, the market maker reasons that buys are more likely when value is high, because informed traders with good news tend to buy. So the posterior probability of the high-value state rises, and the transaction price must reflect that.

The spread emerges endogenously from this logic. The ask quote is set above the unconditional expected value because a trader willing to buy may be informed. The bid is set below expected value because a trader willing to sell may also be informed, but on the downside. The difference between the two is the compensation required to break even against adverse selection. This is the key takeaway for many readers: the bid-ask spread is not only a payment for immediacy; it is the price of not knowing whether your counterparty knows more than you do.
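The break-even logic can be checked with a few lines of arithmetic. The sketch below is a minimal one-trade version of a Glosten-Milgrom style setup, under assumptions that are not in the text above: the value takes one of two levels, informed traders always buy in the high state and sell in the low state, and uninformed traders buy or sell with equal probability. The function and parameter names are hypothetical.

```python
def glosten_milgrom_quotes(v_low, v_high, prior_high, informed_share):
    """Zero-expected-profit bid and ask for one trade in a two-state toy model.

    prior_high      -- market maker's prior probability of the high value (0 < p < 1)
    informed_share  -- probability that the arriving trader is informed
    """
    noise_side = (1.0 - informed_share) / 2.0    # chance of an uninformed buy (or sell)
    p_buy_high = informed_share + noise_side     # informed traders buy when value is high
    p_buy_low = noise_side                       # only noise traders buy when value is low
    p_sell_high = noise_side
    p_sell_low = informed_share + noise_side

    # Bayes' rule: probability of the high state after seeing a buy or a sell
    post_high_buy = prior_high * p_buy_high / (
        prior_high * p_buy_high + (1.0 - prior_high) * p_buy_low)
    post_high_sell = prior_high * p_sell_high / (
        prior_high * p_sell_high + (1.0 - prior_high) * p_sell_low)

    # Each quote equals the expected value conditional on the order that hits it
    ask = v_low + (v_high - v_low) * post_high_buy
    bid = v_low + (v_high - v_low) * post_high_sell
    return bid, ask
```

With values of 90 and 110, a prior of 0.5, and an informed share of 0.2, this gives a bid of 98 and an ask of 102. Setting the informed share to zero collapses both quotes to 100, which is the sense in which the spread here is purely an adverse-selection charge.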

That idea explains a great deal of observed market behavior. When the probability of informed trading rises (around earnings announcements, macro releases, or firm-specific news) spreads tend to widen. When public disclosure improves and private information asymmetries fall, spreads often narrow. Even if the institutional details differ between dealer markets and order books, the same cause-and-effect logic survives.

Why do informed traders split orders? Kyle’s model on execution and price impact

| Execution type | Information leakage | Price impact | Execution cost | Best for |
|---|---|---|---|---|
| Immediate block | High | Very high | High market impact | Urgent trades |
| Sliced execution | Moderate | Gradual | Moderate total cost | Large informed trades |
| Passive posting | Low | Low immediate | Low cost, slow fill | Minimizing leakage |

A second canonical approach, associated with Kyle, shifts attention from quoted spreads to the depth of the market and the strategic trading path of an informed trader. Instead of modeling a single buy or sell as an isolated signal, Kyle treats informed trading as a dynamic optimization problem. The informed trader knows the asset’s value before others do but cannot simply trade all at once, because doing so would reveal too much information and move the price sharply.

The important idea here is concealment through gradual execution. In Kyle’s framework, noise traders generate random order flow, and the informed trader hides inside that flow by trading incrementally. A market maker observes only the aggregate signed order flow, not who generated it, and sets price based on that total imbalance. This creates a linear price impact relation: more net buying implies a higher price because it is more likely that informed demand is present.

Kyle’s price-impact coefficient, usually written lambda, measures the sensitivity of price to order flow; market depth is its inverse. When prices are very sensitive (low depth), informed traders reveal information quickly but pay more impact. When prices are less sensitive (high depth), the informed trader can trade more cheaply, but information enters price more slowly. That tradeoff explains why market efficiency and trading costs are linked rather than separate. Markets become informative by making informative trading costly.
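For the single-period version of Kyle’s model, the linear pricing rule has a well-known closed form. The sketch below assumes that textbook setting (normally distributed value and noise flow, a risk-neutral informed trader, a competitive market maker); the function names are hypothetical, and the multi-period model discussed in this section does not reduce to these two numbers.

```python
def kyle_one_period(value_std, noise_std):
    """Equilibrium coefficients of the one-period Kyle model.

    value_std -- prior standard deviation of the asset value
    noise_std -- standard deviation of noise-trader order flow
    Returns (impact, intensity): price impact per unit of net flow (lambda)
    and informed demand per unit of mispricing (beta).
    """
    if value_std <= 0 or noise_std <= 0:
        raise ValueError("standard deviations must be positive")
    impact = value_std / (2.0 * noise_std)   # lambda: higher when noise cover is thin
    intensity = noise_std / value_std        # beta: trade more when noise cover is thick
    return impact, intensity


def clearing_price(prior_mean, impact, net_order_flow):
    """Linear pricing rule: prior mean plus impact times aggregate signed flow."""
    return prior_mean + impact * net_order_flow
```

As an illustration, with a prior mean of 100, a value standard deviation of 10, and a noise standard deviation of 1000, the impact coefficient is 0.005 and the trading intensity is 100. An informed trader who knows the value is 110 submits 1000 units; if noise flow happens to be zero, the price clears at 105, so only half of the private information is revealed by that one round of trading.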

A worked example makes this concrete. Suppose a trader knows that an asset worth 110 is currently trading near 100. If they buy the entire desired position immediately, the order imbalance itself reveals too much, and market makers raise the price quickly toward 110. Most of the informational profit disappears into impact. So the trader buys in pieces, mixing their demand with background noise flow. Market makers, seeing persistent net buying over time, infer that someone likely knows something, and they revise price upward gradually. The path of prices is therefore the path by which private information is converted into public information.

This mechanism remains highly relevant in modern electronic markets. Large informed or information-sensitive traders still manage execution carefully to trade off urgency against information leakage. Algorithms that slice orders, use dark pools, randomize size, or lean on passive posting can all be understood as practical responses to the same basic force Kyle formalized.

What does PIN measure and how does it estimate the probability of informed trading?

| Measure | What it estimates | Time aggregation | Strength | Weakness |
|---|---|---|---|---|
| PIN | Model-implied fraction of informed flow | Calendar-day bars | Structural identification | Sensitive to order splitting |
| VPIN | Order-flow toxicity over volume | Volume-synchronized buckets | Aligns with trading activity | Highly parameter sensitive |
| Bid-ask spread | Compensation for adverse selection | Real-time quotes | Direct market cost signal | Mixes inventory and info risk |
| Order imbalance | Short-term directional pressure | High-frequency bars | Simple operational signal | Noise can dominate |

The theoretical models explain why spreads and price impact arise. Empirical informed trading models try to go a step further and estimate how much informed trading is present in observed data. The most widely known measure is PIN, the probability of informed trading.

PIN starts from a stylized story about daily trading. On some days, no private-information event occurs. On other days, a private-information event occurs, and it is either good news or bad news. Uninformed buy and sell orders arrive at baseline rates on all days. When an information event occurs, additional informed trading arrives on the appropriate side: more buys on good-news days, more sells on bad-news days. The model then asks: given observed counts of buys and sells over many days, what parameter values make those observations most likely?
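The daily story is easy to simulate, which helps make clear what the model does and does not assume. The sketch below assumes Poisson order arrivals, as in the standard formulation, and uses the conventional parameter names (alpha, delta, mu, eps_b, eps_s) as function arguments; the function names and the simple Poisson sampler are illustrative choices only.

```python
import math
import random


def poisson_draw(rate, rng):
    """Draw a Poisson count with Knuth's method (adequate for modest rates)."""
    threshold = math.exp(-rate)
    count, product = 0, rng.random()
    while product > threshold:
        count += 1
        product *= rng.random()
    return count


def simulate_pin_days(n_days, alpha, delta, mu, eps_b, eps_s, seed=0):
    """Return a list of (buys, sells) daily counts under the PIN story."""
    rng = random.Random(seed)
    days = []
    for _ in range(n_days):
        buy_rate, sell_rate = eps_b, eps_s      # baseline uninformed flow, every day
        if rng.random() < alpha:                # an information event occurs today
            if rng.random() < delta:            # bad news: informed traders sell
                sell_rate += mu
            else:                               # good news: informed traders buy
                buy_rate += mu
        days.append((poisson_draw(buy_rate, rng), poisson_draw(sell_rate, rng)))
    return days
```

Estimation runs this logic in reverse: it takes the observed daily counts as given and searches for the parameter values under which such counts would be most likely.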

In the standard formulation, the model estimates parameters often denoted by alpha, delta, mu, epsilon_b, and epsilon_s. Here alpha is the probability that an information event occurs on a day, delta is the probability that the event is bad news conditional on an event, mu is the arrival rate of informed orders when news exists, and epsilon_b and epsilon_s are the baseline arrival rates of uninformed buys and sells. Once these are estimated, PIN is computed as PIN = (alpha × mu) / (alpha × mu + epsilon_b + epsilon_s).

The intuition is simple even if the estimation is not. The numerator represents expected informed order arrivals. The denominator represents total expected order arrivals. So PIN is the model-implied fraction of order flow attributable to informed traders. It is not the fraction of traders who are informed, nor a direct reading of private information in the market. It is a structural estimate generated by strong assumptions about how news arrives and how orders are generated.
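Once parameter estimates are in hand, the final step is a one-line ratio. The helper below is a minimal sketch with a hypothetical function name; it takes the estimates as given and says nothing about how they were obtained.

```python
def pin(alpha, mu, eps_b, eps_s):
    """Model-implied share of expected order arrivals that are informed."""
    informed = alpha * mu                 # expected informed arrivals per day
    total = informed + eps_b + eps_s      # expected total arrivals per day
    if total <= 0:
        raise ValueError("expected order arrivals must be positive")
    return informed / total
```

For example, alpha of 0.4, mu of 50, and baseline rates of 40 each give a PIN of 0.2. Note that delta does not appear: whether events tend to be good or bad news affects which side the informed flow arrives on, not how much of it there is.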

That distinction matters. A reader might easily overinterpret PIN as a literal measure of hidden informed activity. It is better understood as a compact proxy for information asymmetry under a specific data-generating story. If the story fits poorly (because of order splitting, strategic execution, fragmented venues, asymmetric retail flow, or changing market regimes) the estimate can be misleading even when the computation is performed perfectly.

Why is PIN estimation numerically unstable and what fixes are used?

The formal definition of PIN is tidy. The practical estimation problem is not. The likelihood function can be numerically unstable, especially for heavily traded assets. The R Journal article on the InfoTrad package emphasizes two specific issues that became well known in the literature: floating-point exceptions for large-volume stocks and boundary solutions where estimated parameters stick at 0 or 1. These are not cosmetic software problems. They can materially bias results and create sample-selection issues if active stocks become harder to estimate.

The mechanism behind floating-point problems is straightforward. PIN likelihoods are built from trading-count probabilities, and for large buy and sell counts the raw arithmetic can become numerically ill-behaved. Algebraically equivalent factorizations, such as those associated with Easley et al. and Lin-Ke, were introduced to stabilize computation. The InfoTrad paper describes these as remedies for the original likelihood’s numerical fragility.
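To see why the raw arithmetic misbehaves and how working in logs helps, the sketch below evaluates one day's likelihood as a three-component Poisson mixture using a generic log-sum-exp trick. This is not the specific Easley et al. or Lin-Ke factorization, only an illustration of the same goal; it assumes alpha and delta lie strictly between 0 and 1 and that all rates are positive, and the function names are hypothetical.

```python
import math


def log_poisson(count, rate):
    """Log of the Poisson probability of `count` arrivals at the given rate."""
    return count * math.log(rate) - rate - math.lgamma(count + 1)


def pin_day_loglik(buys, sells, alpha, delta, mu, eps_b, eps_s):
    """Log-likelihood of one day's (buys, sells) under the PIN mixture."""
    terms = [
        # no information event: baseline rates on both sides
        math.log(1.0 - alpha) + log_poisson(buys, eps_b) + log_poisson(sells, eps_s),
        # bad-news day: extra informed selling
        math.log(alpha * delta) + log_poisson(buys, eps_b) + log_poisson(sells, eps_s + mu),
        # good-news day: extra informed buying
        math.log(alpha * (1.0 - delta)) + log_poisson(buys, eps_b + mu) + log_poisson(sells, eps_s),
    ]
    peak = max(terms)
    # log-sum-exp: factor out the largest term so the exponentials cannot underflow together
    return peak + math.log(sum(math.exp(t - peak) for t in terms))
```

A direct evaluation would need quantities like exp(-50000) and 50000 raised to the power 50000, which underflow and overflow in double precision. In log space the same day evaluates to an ordinary finite number, and the sample log-likelihood is just the sum over days.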

Boundary solutions create a different problem. Maximum-likelihood procedures may settle on parameter values at the edge of the feasible space, not because the economic structure truly points there, but because the likelihood surface is awkward, multi-modal, or sensitive to starting values. The Yan-Zhang approach addresses this by searching across many initial vectors and selecting the best non-boundary solution. Again, the lesson is conceptual as much as technical: what looks like a clean market-structure measure often depends on delicate estimation choices.

Recent software work reflects this practical reality. The 2023 PINstimation paper presents tooling for estimating original PIN, adjusted PIN, multilayer PIN, and VPIN, along with simulation, aggregation, and classification utilities. The existence of this tooling ecosystem tells you something important about the field: the intellectual problem did not end with the original models. Much of the real work lies in making estimation stable, classifying trades correctly, and understanding how preprocessing choices shape the final metric.

Why trade-classification accuracy matters for informed-trading estimates

PIN-style models usually need buy and sell counts. But many datasets do not directly tell you whether a trade was buyer-initiated or seller-initiated. That information has to be inferred. This is where trade-classification rules, such as Lee-Ready-style methods and related heuristics, become foundational.

This may sound secondary, but it is not. If you misclassify trade direction, you distort the very buy-sell imbalance the model is trying to interpret as information. A market with balanced flow can look one-sided. A news-driven burst can look like routine noise. The downstream estimate of informed trading then reflects not only market behavior but also the classifier’s mistakes.
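A simplified classifier shows how much judgment is baked into a signed trade count. The sketch below follows the general Lee-Ready-style idea (compare the trade price to the quote midpoint, and fall back to the direction of the last price change for trades at the midpoint). It assumes each trade already comes paired with its prevailing bid and ask, and it ignores quote-timing adjustments and other refinements; the function name and input layout are hypothetical.

```python
def classify_trades(trades):
    """Sign trades as +1 (buyer-initiated), -1 (seller-initiated), or 0 (unknown).

    trades -- list of (price, bid, ask) tuples in time order
    """
    signs = []
    last_price = None
    last_tick_sign = 0                      # direction of the most recent price change
    for price, bid, ask in trades:
        if last_price is not None:
            if price > last_price:
                last_tick_sign = 1          # uptick
            elif price < last_price:
                last_tick_sign = -1         # downtick
            # unchanged price: keep the previous direction
        midpoint = (bid + ask) / 2.0
        if price > midpoint:
            sign = 1                        # quote rule: above mid means a buy
        elif price < midpoint:
            sign = -1                       # quote rule: below mid means a sell
        else:
            sign = last_tick_sign           # tick test for midpoint trades
        signs.append(sign)
        last_price = price
    return signs
```

Every branch here is a place where errors can enter: stale quotes shift the midpoint, and midpoint trades inherit whatever direction the previous price change happened to have. Those errors flow straight into the buy and sell counts used downstream.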

This dependence is one reason later work and software packages devote serious attention to classification and data handling. The PINstimation article explicitly includes classification tools, and the broader literature repeatedly revisits how classification errors affect inference. Informed trading models are therefore best seen not as isolated formulas but as full pipelines: market data comes in, trades are signed, time or volume is aggregated, a structural or reduced-form model is fitted, and only then is a number like PIN or VPIN produced.

How VPIN uses volume-synchronized buckets to detect order-flow toxicity

| Aggregation | Aligns with activity | Sensitivity to spikes | Implementation needs | Best use |
|---|---|---|---|---|
| Calendar time bars | Poor alignment | Distorted by volume bursts | Accurate timestamps required | Low-frequency analysis |
| Volume bars (VPIN) | Good alignment | Robust to time-of-day spikes | Volume-per-bucket data required | Event-sensitive monitoring |
| Bulk Volume Classification | Moderate alignment | Lower timestamp sensitivity | Trade and volume records needed | Scalable multi-venue use |

VPIN, the volume-synchronized probability of informed trading, was proposed to handle a weakness of time-based aggregation. Trading activity is not evenly spaced through clock time. A five-minute interval at the open is not economically comparable to a five-minute interval in a quiet midday market. If one cares about information contained in order flow, volume time can be more natural than calendar time because it indexes the market by how much trading has actually occurred.

That is the basic mechanism behind VPIN. Instead of forming bars by equal time intervals, the method forms volume buckets of equal traded volume. It then estimates order imbalance within those buckets, commonly using classification methods such as bulk volume classification. By averaging recent imbalances over a rolling window of buckets, VPIN produces a measure intended to capture order-flow toxicity: the degree to which current flow looks one-sided in a way that could threaten liquidity provision.
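The bucketing and averaging steps can be sketched in a few lines. To keep the example short, the version below assumes trades have already been signed as buys or sells and that volumes are integers (shares or contracts); bulk volume classification would instead assign a buy fraction to each bar. The function names are hypothetical, and the bucket size and window length are exactly the kind of tuning choices that need calibration in practice.

```python
def volume_buckets(signed_trades, bucket_volume):
    """Split trades into equal-volume buckets.

    signed_trades -- list of (sign, volume); sign is +1 for buys, -1 for sells
    Returns a list of (buy_volume, sell_volume), one per completed bucket.
    """
    buckets = []
    buy = sell = 0
    for sign, volume in signed_trades:
        remaining = volume
        while remaining > 0:
            space = bucket_volume - (buy + sell)
            fill = min(space, remaining)      # a large trade can span several buckets
            if sign > 0:
                buy += fill
            else:
                sell += fill
            remaining -= fill
            if buy + sell == bucket_volume:   # bucket is full: store it and start a new one
                buckets.append((buy, sell))
                buy = sell = 0
    return buckets                            # any trailing partial bucket is dropped


def vpin(buckets, window):
    """Rolling average of absolute volume imbalance over the last `window` buckets."""
    values = []
    for end in range(window, len(buckets) + 1):
        recent = buckets[end - window:end]
        imbalance = sum(abs(b - s) for b, s in recent)
        total = sum(b + s for b, s in recent)
        values.append(imbalance / total)
    return values
```

For instance, the trades (+1, 60), (-1, 40), (+1, 150), (-1, 50) with a bucket size of 100 produce the buckets (60, 40), (100, 0), and (50, 50), and a two-bucket window gives VPIN readings of 0.6 and then 0.5. The large buy is split across two buckets, which is what lets the measure advance in volume time rather than clock time.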

The appeal is intuitive. If informed traders trade strategically when volume is high, or if activity clusters unevenly across the day, volume synchronization should align the metric more closely with the market’s information-processing rhythm. It also makes cross-period comparisons less distorted by quiet versus busy intervals. This is why VPIN became associated not only with academic measurement but also with operational monitoring of stressed markets.

But VPIN’s usefulness depends heavily on implementation choices. The Berkeley sensitivity-analysis report on VPIN stresses that false-positive rates are highly sensitive to parameters, especially the number of buckets per day and the threshold used for signaling. In their study, systematic optimization reduced false positives substantially. That is an important practical result, but it also carries a warning: a metric that looks conceptually robust may behave very differently under slightly different bucketing and threshold choices.

This is also where the connection to order-flow toxicity becomes explicit. Toxic order flow is flow that is dangerous for passive liquidity providers because it is likely to be information-laden or otherwise predictive of adverse price movement. PIN and VPIN are model-based ways of estimating that danger. They are therefore implementations of the broader idea of order-flow toxicity, not separate from it.

What informed-trading models reliably explain (and what they do not) in real markets

Informed trading models are most useful when treated as explanations of mechanism rather than as single-number verdicts on a market. They explain why spreads widen before information-rich events, why large hidden orders are executed gradually, why liquidity sometimes vanishes just when volume surges, and why some trading activity moves prices far more than other activity of equal size.

Consider a futures market under stress. A wave of aggressive selling hits the book. At first, high-frequency traders and other short-horizon participants may absorb some of it. But if they cannot tell whether the selling is inventory-based or information-based, they shorten their holding horizons, reduce displayed depth, and recycle inventory faster. The 2010 Flash Crash report describes a version of this dynamic: HFTs initially absorbed flow, then engaged in rapid turnover while buy-side depth collapsed and cross-market arbitrage propagated pressure into related instruments. That is not a literal PIN model in action. It is the market-structure environment that informed-trading models are trying to formalize.

The same logic appears in less dramatic settings. Before an earnings release, market makers often protect themselves because order flow arriving in that window is more plausibly informative. In less transparent markets such as some credit products, dealers quote conservatively because customer flow itself can reveal private information about issuer conditions or investor needs. In crypto markets, fragmented venues and uneven transparency make the identification problem even harder: a sudden sweep on one venue may be informational, manipulative, or merely inventory transfer. The structure of the problem remains the same even when the institutional plumbing changes.

Which strong assumptions do informed-trading models make and why they matter

The most important limitation of informed trading models is not that they are wrong, but that they simplify aggressively. They often assume a clear separation between informed and uninformed flow, simple arrival processes, stable parameters over estimation windows, and a single mechanism of private-information events. Real markets violate all of these.

Strategic behavior is especially disruptive to simple models. Informed traders split orders, use algorithms, trade across venues, hide in dark pools, or choose moments when liquidity is deep. Uninformed traders can also generate persistent one-sided flow for mechanical reasons, such as index rebalancing or risk deleveraging, making them look informed in the data. Conversely, public information can cause nearly everyone to trade in one direction, which is informative for price discovery but not “private information” in the narrow structural sense assumed by PIN.

Modern market fragmentation adds another complication. A model estimated from one venue’s visible trades may miss off-exchange activity or latency differences across books. Bulk classification methods help operationally, and volume synchronization can reduce some distortions, but neither removes the underlying identification problem. The model sees a shadow of the trading process, not the full process.

This is why careful users treat these models as inference tools, not direct sensors. A high PIN or VPIN value does not prove insider trading, nor does a low value prove a market is informationally benign. The measures are useful because they summarize patterns in order flow that, under a structured interpretation, are consistent with elevated information asymmetry or toxicity. They become less reliable when the structural interpretation is a poor fit.

Conclusion

Informed trading models exist because markets must infer information from orders without seeing traders’ motives directly. That problem produces spreads, price impact, and defensive liquidity provision; the models formalize those responses and, in cases like PIN and VPIN, try to measure how much order flow is likely to be information-driven.

The idea worth remembering is simple: prices do not react to trades just because trades happen; they react because trades may reveal value. Everything else (adverse selection, bid-ask spreads, Kyle impact, PIN estimation, VPIN bucketing, and order-flow-toxicity monitoring) is a more detailed way of working out that single fact.

Frequently Asked Questions

Models do not observe motives directly; they impose a data-generating story that splits trades into “uninformed” baseline flow and “informed” arrivals and then infer parameters from buy/sell counts (PIN’s alpha, delta, mu, epsilon_b, epsilon_s) or from volume-bucket imbalances (VPIN). In practice this requires signing trades with a classifier and aggregating counts or volumes before fitting the structural model, so the inferred split reflects the model assumptions as much as market behavior.

PIN estimation is numerically fragile because likelihoods built from large trade counts can trigger floating‑point exceptions and because optimization can hit boundary solutions; authors recommend algebraically equivalent likelihood factorizations (e.g., Lin–Ke/Easley variants) and extensive multi-start searches to avoid spurious boundary optima. Software packages (InfoTrad, PINstimation) implement these remedies and show that careful numerical methods materially change estimates and stability.

Kyle’s model explains order splitting as a strategic concealment problem: an informed trader trades incrementally to hide inside random noise flow because large immediate trades would reveal information and move prices; market makers set prices linearly in aggregate order imbalance, creating a tradeoff where faster execution increases price impact and reduces informational profit.

High raw trading volume does not guarantee liquidity; it can coexist with fragile or evaporating liquidity. The May 6, 2010 Flash Crash showed intense activity while displayed buy‑side depth collapsed, so volume alone is a poor proxy for available liquidity under stress. VPIN and other flow-toxicity measures attempt to capture the one‑sidedness or informativeness of flow rather than volume per se.

PIN and VPIN rest on strong simplifications (clear informed/noise split, simple arrival processes, stable parameters) and are sensitive to preprocessing (trade‑signing, aggregation) and implementation choices (likelihood factorization, optimization, bucket size, threshold). Because of those dependencies they are useful as structured inference tools but not definitive proofs of insider trading or market toxicity.

Misclassifying trade direction directly distorts the buy–sell imbalance the models use: a market with balanced true flow can look one‑sided if many trades are signed incorrectly, and news-driven bursts can be misread as private‑information flow if classifiers or aggregation choices are poor. That is why modern toolkits embed classification routines (Lee‑Ready style, bulk volume classification) and why researchers treat classification as part of the estimation pipeline rather than a side task.

VPIN is intentionally volume‑synchronized to avoid calendar‑time distortions (it forms equal‑volume buckets and tracks imbalance across them), but its false‑positive rate depends strongly on parameter choices such as buckets per day and signaling thresholds; sensitivity analyses (e.g., the Berkeley report) show systematic tuning can reduce false positives, so operational use requires calibration for the instrument and regime in question.

The core structural question, whether an incoming order conveys private information, is the same across dealers, limit‑order books, futures, and crypto, but institutional details matter: dealer inventory risk, order‑book matching, venue fragmentation, off‑exchange trades, and venue transparency all change how much of the true process is visible to the estimator and therefore affect identification and interpretation. Practitioners must adjust data sources, classification, and model scope to the market’s plumbing rather than transplanting a model unchanged.
