What Is a Market Data Feed Handler?

Learn what market data feed handlers are, why firms that consume exchange data need them, and how they decode, sequence, recover, normalize, and publish usable market state.

Introduction

Market data feed handlers are the software systems that receive raw exchange market data, decode it, restore it to the correct sequence, and turn it into something downstream systems can actually use. That sounds mundane until you notice the constraint they live under: the market is changing continuously, the exchange protocol is often binary and venue-specific, and the useful output must be both fast and correct. If the handler is wrong, every pricing model, chart, strategy, and risk check built on top of it is wrong in the same direction.

This is why feed handlers exist as a distinct layer rather than as a small parser embedded inside every application. Exchanges publish data in their own transport protocols, message formats, channel layouts, recovery mechanisms, and lifecycle rules. NASDAQ TotalView-ITCH, for example, is an outbound-only binary feed made of sequenced, variable-length messages with nanosecond timestamps and intraday stock-locate codes. NYSE’s Integrated Feed publishes matching-engine events in sequence with tightly specified binary message layouts and separate refresh behavior. CME’s Market Data Platform uses a dual-feed UDP multicast architecture and supports event-based binary formats including SBE. A trading system that consumed each venue directly would have to solve the same hard problems again and again.

The central idea is simple: a feed handler preserves the exchange’s meaning while changing its form. It should not invent market state. It should take the exchange’s stream of adds, modifies, deletes, executions, auctions, and status changes, and produce a trustworthy local representation of the book and tape. Everything else in the design follows from that requirement.

What problem does a market data feed handler solve?

An exchange does not usually send you “the current order book” as a continuously refreshed table. It sends you a stream of events. A new visible order arrives. An existing order is partially executed. A price level is updated. An auction imbalance changes. A security status flips. The receiver’s job is to apply those events in the right order so that a local machine can reconstruct the same market state the exchange intended to publish.

That reconstruction step is the core problem. The exchange is optimized for matching orders and disseminating high-rate events, not for maintaining a separate bespoke view for every subscriber. So the feed is incremental. Incremental delivery is efficient because it sends only changes, but it pushes state-management complexity onto the consumer. A feed handler exists to absorb that complexity once, carefully, and expose a cleaner interface to everything downstream.

This is also why feed handlers are usually stateful, not just stateless decoders. If a venue publishes an order add with an OrderID, then later sends an execution or delete for that same order, the handler must remember the prior state in order to apply the new message correctly. NYSE’s Integrated Feed makes this explicit through Add, Modify, Replace, Delete, and Execution messages. NASDAQ TotalView-ITCH similarly uses a series of order messages to track the life of a customer order. Without maintained state, the messages are not very meaningful.
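The dependence on prior state can be sketched in a few lines. This is a minimal illustration, not any venue's actual message model: the `OrderStore` class, its method names, and the integer-tick price convention are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Order:
    side: str    # "B" or "S"
    price: int   # price in integer ticks (assumed convention)
    shares: int  # remaining visible quantity


class OrderStore:
    """Tracks live orders by venue order ID (hypothetical, simplified)."""

    def __init__(self) -> None:
        self.orders: dict[int, Order] = {}

    def on_add(self, order_id: int, side: str, price: int, shares: int) -> None:
        self.orders[order_id] = Order(side, price, shares)

    def on_execute(self, order_id: int, executed: int) -> None:
        # Raises KeyError if the add was never seen: without prior state,
        # the execution cannot be interpreted.
        order = self.orders[order_id]
        order.shares -= executed
        if order.shares <= 0:
            del self.orders[order_id]

    def on_delete(self, order_id: int) -> None:
        del self.orders[order_id]


store = OrderStore()
store.on_add(1001, "B", 10_050, 300)
store.on_execute(1001, 100)
remaining = store.orders[1001].shares  # 200
```

An execution or delete for an unknown order ID fails loudly here. A production handler would more likely route that case into its gap and recovery logic rather than raise.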

There is a second problem as well: raw exchange protocols are not designed for broad internal consumption. They are designed for efficient dissemination under the exchange’s own operational constraints. They may use venue-specific identifiers, day-scoped symbol indexes, compact binary layouts, separate refresh channels, or recovery services that are sensible for line-rate delivery but awkward for research systems, dashboards, or multi-venue trading engines. A feed handler transforms those conventions into a representation that is easier to consume while preserving semantics.

How does a feed handler turn packets into a usable order book?

The easiest way to understand a feed handler is to picture the path from packet to usable market state.

At the outer edge is the network interface. Some feeds arrive as TCP streams, such as SoupBinTCP variants. Others arrive over UDP multicast, such as NASDAQ’s MoldUDP64 variants or CME’s dual-feed UDP multicast architecture. Transport choice matters because it changes what the handler must do. TCP gives ordered byte-stream delivery but can suffer head-of-line blocking. UDP multicast gives low-overhead, efficient one-to-many dissemination, but the application must tolerate packet loss, duplication, and reordering at the transport edge.

The handler first has to identify message boundaries and parse the transport envelope. Then it decodes the payload format. In some venues that means reading fixed binary fields at exact offsets; NYSE’s specifications, for instance, define message sizes and field positions precisely. In other venues it may mean interpreting variable-length messages. In SBE-based feeds, decoding also depends on having the message schema out of band, because SBE messages are not self-describing. That design saves bandwidth and parsing work, but it means the handler must manage schema versions carefully. If the decoder and the live schema disagree, the bytes may still parse, but the meaning can be wrong.
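Fixed-offset decoding can be sketched with Python's standard `struct` module. The layout below is hypothetical and does not match any venue's real message; the field order, the big-endian byte order, and the four implied decimal places on price are assumptions chosen for illustration.

```python
import struct

# Hypothetical layout for illustration only; not any venue's real message.
# Big-endian: type (1 byte), order id (u64), side (1 byte), shares (u32), price (u32)
ADD_ORDER = struct.Struct(">cQcII")


def decode_add_order(payload: bytes) -> dict:
    """Decode one fixed-size add-order message at known field offsets."""
    if len(payload) != ADD_ORDER.size:
        raise ValueError(f"expected {ADD_ORDER.size} bytes, got {len(payload)}")
    msg_type, order_id, side, shares, price = ADD_ORDER.unpack(payload)
    if msg_type != b"A":
        raise ValueError(f"unexpected message type {msg_type!r}")
    return {
        "order_id": order_id,
        "side": side.decode("ascii"),
        "shares": shares,
        "price": price,  # integer with implied decimals (assumed: 4 places)
    }


raw = ADD_ORDER.pack(b"A", 1001, b"B", 300, 1_005_000)
decoded = decode_add_order(raw)

# Decoding the same bytes under a mismatched assumption (little-endian)
# still succeeds, but the values are silently wrong.
misread = struct.unpack("<cQcII", raw)
```

The last line shows the schema-mismatch hazard in miniature: nothing raises, yet the decoded share count no longer equals the 300 that was encoded.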

Once the payload is decoded, the next task is sequencing. Most direct feeds are published as ordered event streams, and the order matters because state transitions are not commutative. An add followed by a delete is not equivalent to a delete followed by an add. NASDAQ TotalView-ITCH is explicitly a series of sequenced messages. NYSE Integrated Feed similarly promises events in sequence as they appear on matching engines. The feed handler therefore tracks sequence numbers, detects gaps, and decides whether to wait, request recovery, switch to a backup path, or continue with known incompleteness.
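Gap detection itself is simple; the hard part is deciding what to do next. A minimal sketch, assuming one monotonically increasing sequence number per channel (the `SequenceTracker` and `SeqResult` names are hypothetical):

```python
from enum import Enum


class SeqResult(Enum):
    IN_ORDER = "in_order"
    DUPLICATE = "duplicate"
    GAP = "gap"


class SequenceTracker:
    """Classifies each incoming sequence number against the next expected one."""

    def __init__(self, next_expected: int = 1) -> None:
        self.next_expected = next_expected

    def check(self, seq: int) -> tuple[SeqResult, range]:
        """Return the classification and, on a gap, the missing range."""
        if seq < self.next_expected:
            return SeqResult.DUPLICATE, range(0)
        if seq > self.next_expected:
            # Do not advance: the caller decides whether to wait, recover,
            # or switch feeds before anything later is applied.
            return SeqResult.GAP, range(self.next_expected, seq)
        self.next_expected = seq + 1
        return SeqResult.IN_ORDER, range(0)


tracker = SequenceTracker(next_expected=5)
first = tracker.check(5)            # in order, tracker now expects 6
result, missing = tracker.check(8)  # gap: 6 and 7 are missing
```

The tracker deliberately leaves `next_expected` unchanged on a gap, so the policy choice stays with the caller rather than being hidden inside the sequencing code.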

After sequencing comes state application. This is the part that turns wire messages into a local order book, trade record, auction state, or security status map. If the feed is market-by-order (MBO), the handler maintains individual order objects keyed by venue identifiers. If it is market-by-price (MBP), it may maintain aggregate levels instead. Materials in the CME ecosystem describe both MBP and MBO styles, and that distinction matters because MBO preserves queue detail while MBP preserves only aggregate price-level depth. The handler must mirror whichever model the venue disseminates; it cannot infer missing queue information from MBP alone.
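The one-way relationship between order-level and price-level views can be shown with a small sketch. The tuples and the `to_price_levels` helper are hypothetical, with prices in integer ticks.

```python
from collections import defaultdict

# Hypothetical resting bids in arrival order: (order_id, price_ticks, shares)
mbo_bids = [(1, 10_050, 300), (2, 10_050, 100), (3, 10_049, 500)]


def to_price_levels(orders):
    """Collapse order-level detail into aggregate size per price."""
    levels = defaultdict(int)
    for _order_id, price, shares in orders:
        levels[price] += shares
    return dict(sorted(levels.items(), reverse=True))  # best bid first


mbp_bids = to_price_levels(mbo_bids)  # {10050: 400, 10049: 500}

# A different queue produces the same levels, so the mapping is not invertible.
other_queue = [(7, 10_050, 400), (8, 10_049, 500)]
same_levels = to_price_levels(other_queue) == mbp_bids  # True
```

Two different queues collapse to identical price levels, which is why queue position cannot be recovered from a price-level feed.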

Finally, the handler emits output. That output might be a normalized event stream, an in-memory order book API, a callback interface, a shared-memory bus, persisted replay logs, or some combination. Refinitiv’s Ultra Direct product describes this explicitly: the feed handler ingests source-specific protocols, normalizes the messages into a consistent format, and dispatches them through programmatic callbacks. That is not the only architecture, but it captures the usual purpose. The downstream user does not want to know the byte offset of FirmID in one venue and the daily symbol index convention in another. The downstream user wants a trustworthy event saying that a bid was added, modified, traded, or removed.

Why do sequencing and recovery drive feed handler design?

| Action | Correctness | Latency impact | When to use | Notes |
|---|---|---|---|---|
| Wait for retransmit | Max correctness | High latency | Critical instruments | Depends on retransmit SLA |
| Request snapshot / refresh | Max correctness | High latency | Large or persistent gaps | Requires venue refresh service |
| Use redundant feeds | High correctness | Low latency penalty | Dual-feed deployments | Arbitrate by sequence |
| Publish degraded state | Lower correctness | Low latency | Latency-sensitive consumers | Mark gaps explicitly |

A smart reader might assume parsing is the hard part. It is important, but the deeper difficulty is preserving continuity when the world is imperfect. Feeds are high-rate streams carried over real networks. Packets can be lost. Multicast copies can arrive out of order. A consumer can fall behind. Exchanges know this, which is why production feeds often come with duplicate channels, refresh channels, snapshot services, or retransmission facilities.

CME’s MDP is described as a dual-feed UDP multicast architecture. That means the receiver often has two logically equivalent streams and can arbitrate between them. Refinitiv’s feed-handler documentation names feed arbitration as a major function for exactly this reason. The system compares the two feeds, prefers whichever message arrives first while maintaining sequence integrity, and uses redundancy to reduce effective loss. The point is not merely speed. The point is to keep a single coherent event history despite the network’s imperfections.
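Arbitration by sequence number can be sketched as follows. This is a simplified model, not a description of any vendor's implementation: the `Arbitrator` class is hypothetical, it assumes both feeds carry identical payloads under the same sequence numbers, and it omits the buffer bound and timeout that would push a real handler into recovery.

```python
class Arbitrator:
    """Merges two redundant feeds into one in-order stream (simplified)."""

    def __init__(self, next_expected: int = 1) -> None:
        self.next_expected = next_expected
        self.pending: dict[int, bytes] = {}  # early arrivals awaiting gap fill

    def on_message(self, seq: int, payload: bytes) -> list[tuple[int, bytes]]:
        """Accept a message from either feed; return messages now deliverable."""
        if seq < self.next_expected or seq in self.pending:
            return []  # already delivered, or already buffered from the other feed
        self.pending[seq] = payload
        out = []
        while self.next_expected in self.pending:
            out.append((self.next_expected, self.pending.pop(self.next_expected)))
            self.next_expected += 1
        return out


arb = Arbitrator()
step1 = arb.on_message(1, b"a")  # feed A: delivered immediately
step2 = arb.on_message(1, b"a")  # feed B: duplicate, dropped
step3 = arb.on_message(3, b"c")  # feed A lost seq 2: buffered
step4 = arb.on_message(2, b"b")  # feed B fills the hole: 2 and 3 released
```

Whichever copy arrives first wins, and the loss on one feed never becomes visible downstream as long as the other feed delivers the missing message.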

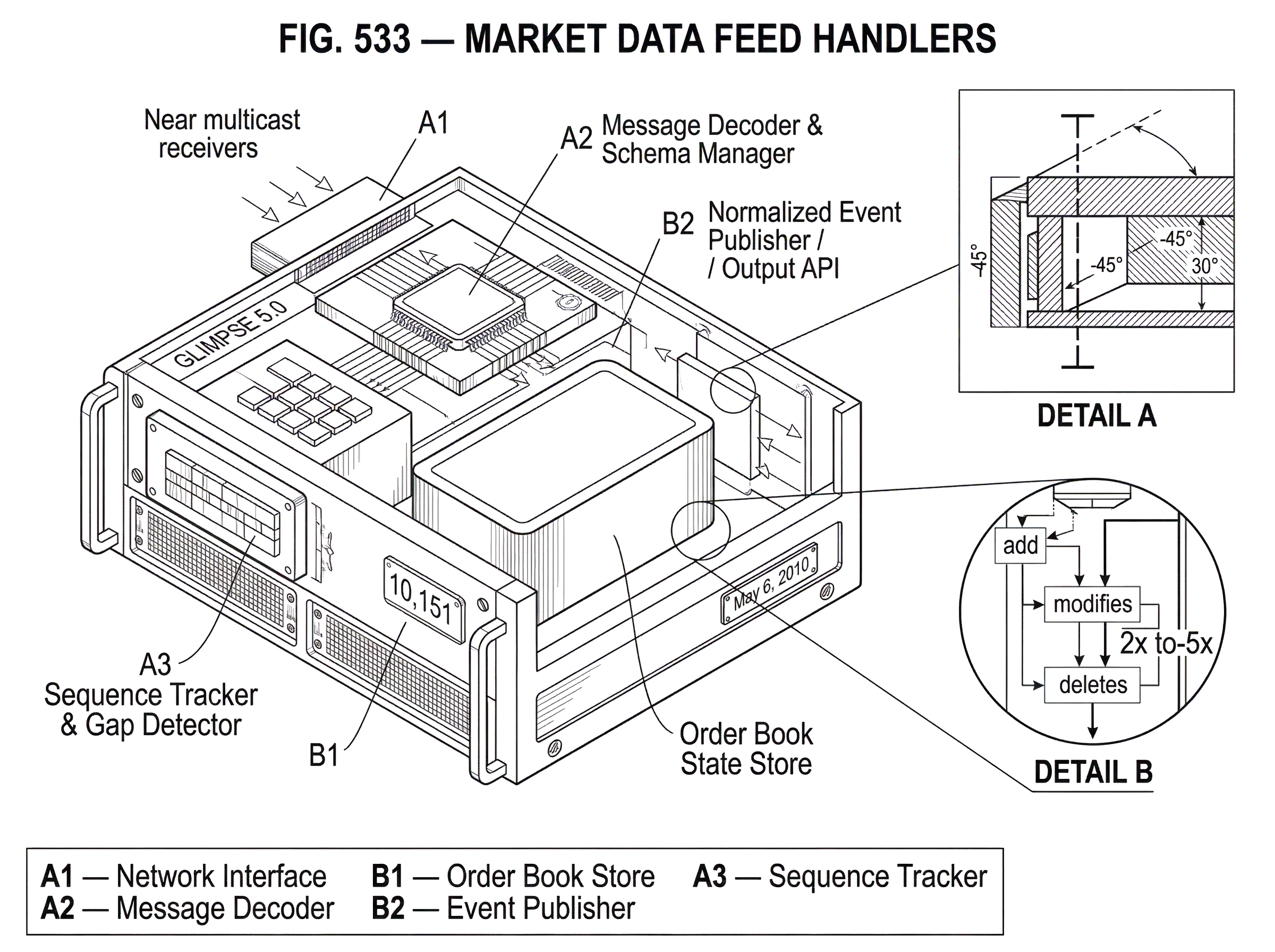

NASDAQ’s ecosystem shows a related pattern in a different form. TotalView-ITCH can be disseminated in SoupBinTCP or MoldUDP64 variants, and the FPGA version is guaranteed to deliver payload messages in exactly the same order as the software feed. Firms can use GLIMPSE 5.0 retransmissions or the software feed for fault tolerance and disaster recovery. That tells you something fundamental about feed-handler design: reliability is usually achieved not by pretending the main stream is flawless, but by composing a live stream with one or more recovery paths.

A worked example makes this concrete. Imagine a handler receiving a multicast depth feed for a symbol. It has processed sequence numbers 10,001 through 10,150 and built a local book. The next packet it sees starts at 10,152. That means 10,151 is missing. At this point, the handler has a choice, but not a free one. If it keeps applying later messages, it may produce a book built on an unknown missing transition. If it stops everything globally, latency explodes. A well-designed handler usually marks the affected channel or instrument as having a gap, attempts retransmission or refresh through the venue’s recovery mechanism, and only resumes normal publication once state can again be trusted. The exact operational behavior depends on venue rules and system requirements, but the invariant is stable: never silently convert uncertainty into false certainty.

That invariant is why feed handlers often separate “decoded” from “validated” and “published” stages. A message can be syntactically valid and still unsafe to expose if a prior sequence gap makes its state implications ambiguous.
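The worked example above can be expressed as a small gate between decoding and publication. This is a sketch under simplifying assumptions: the `ChannelGate` class is hypothetical, recovery is reduced to a single `on_recovered` call, and messages seen during the gap are suppressed rather than buffered.

```python
class ChannelGate:
    """Publishes decoded messages only while sequence continuity is intact."""

    def __init__(self, next_expected: int) -> None:
        self.next_expected = next_expected
        self.gapped = False
        self.published: list[int] = []

    def on_decoded(self, seq: int) -> str:
        if self.gapped:
            return "suppressed"   # decoded, but not safe to expose
        if seq < self.next_expected:
            return "duplicate"
        if seq > self.next_expected:
            self.gapped = True    # e.g. expected 10151, saw 10152
            return "gap_detected"
        self.published.append(seq)
        self.next_expected = seq + 1
        return "published"

    def on_recovered(self, resume_seq: int) -> None:
        """Call after a retransmit or snapshot restores trusted state."""
        self.next_expected = resume_seq
        self.gapped = False


gate = ChannelGate(next_expected=10_151)
results = [gate.on_decoded(s) for s in (10_152, 10_153)]
gate.on_recovered(resume_seq=10_154)
results.append(gate.on_decoded(10_154))
```

Here `results` ends up as `["gap_detected", "suppressed", "published"]`: the syntactically valid message 10,153 is decoded but never exposed, because the state it would update is not yet trusted.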

When should you normalize multi-venue market data, and what are the risks?

Once a firm consumes more than one venue, normalization becomes tempting. It promises a single event model for all exchanges: one add-order type, one trade type, one imbalance type, one order-book API. This is often valuable. It reduces integration effort, makes cross-venue analytics possible, and allows strategies to operate on a common representation.

But normalization has a trap: different venues do not mean exactly the same thing by similar-looking events. Some provide full order-level depth with attribution. NASDAQ TotalView-ITCH, for example, carries order-level data and can include participant attribution. Some provide aggregate price levels. Some use day-scoped identifiers. Some publish auctions and imbalances on dedicated channels or with specific timing rules; NYSE imbalance messages are published during auctions, typically once per second when values change, while NASDAQ NOII messages are disseminated at frequent intervals before opening and closing crosses and include non-displayable as well as displayable interest. A “generic imbalance event” can hide those differences so aggressively that downstream users lose information they actually needed.

So good normalization preserves the venue’s semantics even while simplifying access. The right question is not “how do we force all feeds into one schema?” but “what common structure can we expose without erasing important distinctions?” In practice this often means a layered model: a common event envelope plus venue-specific fields or flags where meaning diverges.
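One way to picture the layered model is a common envelope with an open slot for venue-specific detail. The `NormalizedEvent` type and every field name below are hypothetical, chosen only to illustrate the shape. They are not taken from any venue specification or vendor API.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass(frozen=True)
class NormalizedEvent:
    # Common envelope shared by every venue
    venue: str
    symbol: str
    event_type: str  # e.g. "ADD", "TRADE", "IMBALANCE"
    exchange_ts_ns: int
    # Venue-specific details that must not be flattened away
    venue_fields: dict[str, Any] = field(default_factory=dict)


# Two imbalance events share an envelope but keep their own semantics.
# The venue_fields keys are illustrative placeholders.
nasdaq_event = NormalizedEvent(
    venue="NASDAQ", symbol="XYZ", event_type="IMBALANCE",
    exchange_ts_ns=34_199_000_000_000,
    venue_fields={"includes_non_displayed_interest": True},
)
nyse_event = NormalizedEvent(
    venue="NYSE", symbol="XYZ", event_type="IMBALANCE",
    exchange_ts_ns=34_199_000_000_000,
    venue_fields={"published_only_on_change": True},
)
```

Consumers that only need "an imbalance happened" read the envelope; consumers that care what the number actually includes can still reach the venue-specific fields.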

This is also where symbol mapping and identifier hygiene matter. NASDAQ’s stock-locate codes are designed as low-valued integers for fast lookup and are dynamically assigned each day. They are useful precisely because they are not heavyweight symbolic identifiers on the wire. But that means a feed handler must map them to symbol metadata and must not assume yesterday’s locate code has today’s meaning. Similar caution applies to day-only order identifiers on venues that scope identifiers intraday.
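A minimal sketch of day-scoped mapping, assuming the handler learns each day's locate-to-symbol assignments from the feed's own directory messages at startup. The `LocateDirectory` class and its methods are hypothetical.

```python
from datetime import date


class LocateDirectory:
    """Maps day-scoped locate codes to symbols; never reuses a prior day's map."""

    def __init__(self) -> None:
        self._by_day: dict[date, dict[int, str]] = {}

    def register(self, trading_day: date, locate: int, symbol: str) -> None:
        self._by_day.setdefault(trading_day, {})[locate] = symbol

    def resolve(self, trading_day: date, locate: int) -> str:
        try:
            return self._by_day[trading_day][locate]
        except KeyError:
            raise LookupError(
                f"no mapping for locate {locate} on {trading_day}"
            ) from None


directory = LocateDirectory()
monday, tuesday = date(2024, 1, 8), date(2024, 1, 9)
directory.register(monday, 17, "AAA")
directory.register(tuesday, 17, "BBB")  # same code, different meaning

monday_symbol = directory.resolve(monday, 17)    # "AAA"
tuesday_symbol = directory.resolve(tuesday, 17)  # "BBB"
```

Keying every lookup by trading day makes the failure mode explicit: a code with no mapping for today raises instead of silently resolving to yesterday's symbol.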

How should feed handlers balance latency against correctness?

Market-data infrastructure is full of low-latency language, and for good reason. Binary encoding choices such as SBE exist because parsing cost matters. SBE prefers native binary datatypes and fixed positions or lengths so a decoder can access fields directly rather than parse tags or walk variable heaps. CME’s event-based formats and vendor implementations emphasize lower latency, lower CPU use, and fine timestamp granularity. FPGA distribution options exist because some consumers care about shaving still more processing delay.

But feed handlers are a useful place to resist a common confusion: low latency is not the same thing as quality. A handler that is ten microseconds faster but occasionally emits a corrupted book is not better. It is worse. In most real systems, the first duty of the handler is to maintain the exchange’s intended event history. Performance engineering serves that goal.

This is why timestamping gets special attention. Refinitiv describes high-precision timestamping, optionally GPS-based, as a major feed-handler function. Timestamping tells downstream systems when a message was observed locally, which is different from when the matching engine created the event. Some exchange messages also carry source timestamps, such as nanoseconds since midnight in TotalView-ITCH or source-time fields in NYSE message layouts. Both are useful, but they answer different questions. The exchange timestamp helps reconstruct market event time. The local receipt timestamp helps measure transport and handling latency. Conflating them is a classic mistake.
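Keeping the two timestamps as separate fields makes the distinction hard to lose. In this sketch the `StampedMessage` type and helper are hypothetical, and both timestamps are assumed to be already expressed on a common, synchronized clock. In practice the receipt time might come from `time.time_ns()` or from hardware timestamping.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StampedMessage:
    seq: int
    exchange_ts_ns: int  # when the venue says the event happened
    receipt_ts_ns: int   # when this host observed the packet

    @property
    def one_way_latency_ns(self) -> int:
        # Only meaningful if both clocks are synchronized to a common reference.
        return self.receipt_ts_ns - self.exchange_ts_ns


def split_ns_since_midnight(ns: int) -> tuple[int, int, int, int]:
    """Break a nanoseconds-since-midnight value into (h, m, s, ns)."""
    seconds, nanos = divmod(ns, 1_000_000_000)
    minutes, secs = divmod(seconds, 60)
    hours, mins = divmod(minutes, 60)
    return hours, mins, secs, nanos


msg = StampedMessage(seq=1, exchange_ts_ns=34_200_000_000_123,
                     receipt_ts_ns=34_200_000_045_123)
latency = msg.one_way_latency_ns                       # 45_000 ns
clock = split_ns_since_midnight(msg.exchange_ts_ns)    # (9, 30, 0, 123)
```

Event reconstruction reads `exchange_ts_ns`; latency monitoring reads the difference. Neither field is ever overwritten with the other.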

Monitoring should reflect this distinction. If you instrument feed handlers, distributions matter more than simple averages. Histograms are usually more useful than summaries when you need to aggregate latency across many handler instances, because histogram buckets can be aggregated and server-side quantiles can be computed afterward. That is operationally important in fleets where no single process tells the whole story.
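The aggregation property can be shown with plain bucket counters. This is an illustrative sketch rather than any monitoring library's API: the bucket bounds and function names are assumptions, and the quantile is reported only as a bucket upper bound.

```python
from bisect import bisect_left

# Hypothetical bucket upper bounds in microseconds; the last bucket is overflow.
BOUNDS_US = [10, 50, 100, 500, 1000]


def new_histogram() -> list[int]:
    return [0] * (len(BOUNDS_US) + 1)


def observe(hist: list[int], latency_us: float) -> None:
    """Count the observation in the first bucket whose bound is >= the value."""
    hist[bisect_left(BOUNDS_US, latency_us)] += 1


def merge(*hists: list[int]) -> list[int]:
    """Bucket counts from different handler instances simply add."""
    return [sum(counts) for counts in zip(*hists)]


def quantile_upper_bound(hist: list[int], q: float) -> float:
    """Smallest bucket bound covering fraction q of observations."""
    target = q * sum(hist)
    running = 0
    for bound, count in zip(BOUNDS_US + [float("inf")], hist):
        running += count
        if running >= target:
            return bound
    return float("inf")


handler_a, handler_b = new_histogram(), new_histogram()
for value in (5, 8, 40):
    observe(handler_a, value)
for value in (60, 700, 5000):
    observe(handler_b, value)

fleet = merge(handler_a, handler_b)        # [2, 1, 1, 0, 1, 1]
median = quantile_upper_bound(fleet, 0.5)  # 50
```

Per-instance quantiles cannot be combined this way, which is the practical reason to ship bucket counts and compute quantiles after aggregation.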

How do network architecture and security choices affect direct market-data feeds?

| Transport / Option | Delivery semantics | Security | Operational cost | Best use |
|---|---|---|---|---|
| UDP multicast (SSM) | Source-specific datagrams | Network controls; IPsec caveats | Low to medium | High fan-out distribution |
| TCP streams (SoupBinTCP) | Ordered byte-stream | TLS or session auth | Medium | Reliable single-subscriber feeds |
| DTLS over UDP | Datagram with crypto | DTLS (auth + encryption) | Medium to high | Secure datagram delivery |
| Private colocation network | Physical isolation | Access controls, segmentation | High | Lowest-latency secure links |

Direct market-data feeds often rely as much on network design as on application logic. In multicast environments, the receiver usually subscribes to specific channels rather than opening a one-to-one session for every stream. Source-Specific Multicast is a good conceptual model here: a channel is identified by a source-and-group pair, and receivers request traffic from that specific source to that specific multicast destination. That reduces ambiguity and unnecessary traffic compared with any-source multicast models.

Security in these systems is subtle because the transport goals differ from ordinary web applications. A UDP-based dissemination fabric wants to preserve datagram semantics and low overhead. If datagram confidentiality or integrity is required, something like DTLS can provide TLS-like security while preserving datagram behavior, but it does not create reliable or in-order delivery. Applications still have to handle loss and reordering. In practice, many direct-feed deployments rely heavily on private connectivity, controlled colocation, access controls, and network segmentation rather than wrapping every multicast packet in heavyweight session semantics. The right choice depends on operational context, but the principle remains: security controls cannot break the feed’s delivery assumptions without changing the handler’s design.

What failure modes should feed handlers survive during market stress?

Feed handlers are tested hardest when markets are least orderly. Heavy message rates expose parser inefficiencies, lock contention, and memory churn. Gaps become more likely to matter because the book is changing rapidly. Recovery paths can themselves become congested. And downstream systems may treat conflicting or delayed data as a signal that something is wrong with the market, not just the network.

The public analysis of the May 6, 2010 event is useful here not because it blames feed delays as the primary cause (it does not) but because it shows that data discrepancies and uncertainty can trigger firm-level integrity pauses and widen a liquidity crisis. Some firms used multiple data sources for integrity checks, and disagreement between those sources could trigger pauses. That is a reminder that a feed handler is not just a passive pipe. It is often part of the trust boundary by which a trading system decides whether the observed market is coherent enough to act on.

This is also why “best effort” is not enough as a design philosophy. Exchanges may disclaim warranties and continued availability in product documentation, but a subscriber still needs internal operational discipline: schema rollout procedures, gap alarms, replay testing, sequence-health metrics, deterministic startup logic, and clear rules for when an output should be suppressed because state is not trustworthy.

Build vs. buy: how to choose a market-data feed handler

| Option | Time to deploy | Control | Operational burden | Best for |
|---|---|---|---|---|
| Build in-house | Long | Full control | High | Custom requirements |
| Buy vendor product | Short | Limited control | Medium | Fast time-to-market |
| Hybrid (vendor + custom) | Medium | Moderate control | Medium | Balance speed and control |

Many firms do not write every feed handler from scratch. Vendor libraries such as Refinitiv RTUD or OnixS products exist because implementing venue-specific protocols, recovery behavior, and normalization is expensive. Vendor products often add replay support, prebuilt decoders, callback APIs, and exchange certifications. That can sharply reduce time to production.

Still, buying a handler does not remove the conceptual burden. Someone must understand whether the product exposes raw venue semantics or a normalized abstraction, how it handles gaps, how it versions schemas, how it timestamps packets, and how it replays history. Historical replay is especially important. If a handler can consume packet captures or persisted replay logs, teams can reproduce incidents and validate book reconstruction against known inputs. That matters because many feed-handler failures are not obvious parser crashes. They are subtle state divergences that only show up later as impossible books, mismatched fills, or unexplained strategy behavior.
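Replay validation can be as simple as rebuilding state from a recorded log and comparing it with a known-good result. The event tuples and the `rebuild_book` function below are hypothetical; a real replay would feed packet captures through the production decode path.

```python
def rebuild_book(events):
    """Apply recorded (action, order_id, price, shares) events to an empty book."""
    orders = {}
    for action, order_id, price, shares in events:
        if action == "ADD":
            orders[order_id] = (price, shares)
        elif action == "EXEC":
            resting_price, remaining = orders[order_id]
            remaining -= shares
            if remaining > 0:
                orders[order_id] = (resting_price, remaining)
            else:
                del orders[order_id]
        elif action == "DEL":
            del orders[order_id]
        else:
            raise ValueError(f"unknown action {action!r}")
    return orders


recorded = [
    ("ADD", 1, 10_050, 300),
    ("ADD", 2, 10_051, 200),
    ("EXEC", 1, 0, 100),
    ("DEL", 2, 0, 0),
]
expected = {1: (10_050, 200)}

replay_matches = rebuild_book(recorded) == expected

# Dropping a single event yields a plausible but wrong book, with no crash.
with_loss = [e for e in recorded if e[0] != "EXEC"]
diverged = rebuild_book(with_loss) != expected
```

The second run is the kind of failure the paragraph describes: the replay completes cleanly, and only the comparison against known output reveals the divergence.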

Conclusion

A market data feed handler is best understood as a state-preserving translator between an exchange’s raw event stream and a firm’s usable view of the market. Its job is not just to decode bytes. It must maintain sequence, recover from loss, apply order-lifecycle rules, map venue-specific identifiers, timestamp observation, and expose a trustworthy local market state.

If you remember one thing, remember this: the feed handler is where raw market events become belief. Done well, it gives every downstream system the same timely, coherent picture of the market. Done poorly, it gives them the same mistake at machine speed.

Frequently Asked Questions

Why does a feed handler need to maintain state?

Feed messages represent incremental state transitions (adds, modifies, executions, deletes) that only make sense relative to previously seen messages, so a handler must remember prior orders or price levels to apply later updates correctly; without maintained state, execution or delete messages cannot be interpreted reliably.

How do feed handlers deal with sequence gaps?

Handlers track sequence numbers, detect gaps, and then either wait, request retransmission/refresh, switch to a backup/redundant stream, or mark the instrument/channel as uncertain until recovery completes, because applying later messages over a missing transition can produce an incorrect local book.

Is normalizing market data across venues a good idea?

Normalization is useful but dangerous because superficially similar events can carry different semantics across venues (e.g., order-level vs price-level feeds, day-scoped identifiers, or venue-specific auction timing), so good designs expose a common envelope while preserving venue-specific fields rather than forcing everything into one flattened schema.

Which matters more in a feed handler, latency or correctness?

Low-latency encodings and delivery are important, but correctness is the primary product: a handler that is faster but occasionally emits an incorrect book is worse than a slightly slower, correct handler; timestamping should keep exchange event time distinct from local receipt time so latency measurements and event reconstruction remain meaningful.

Does buying a vendor feed handler remove the need to understand it?

Buying vendor handlers speeds deployment and often supplies prebuilt decoders, replay and recovery support, and exchange certifications, but you still must verify how the product handles sequencing, schema/versioning, timestamping, normalization, and replay because those operational semantics determine whether the product meets your correctness and audit needs.

What makes SBE-encoded feeds harder to operate?

SBE-encoded messages are not self-describing, so handlers must obtain out-of-band message schemas and version them carefully; mismatched schema or byte-order expectations can cause silently incorrect decoding, making schema distribution and lifecycle control a production concern.

How are multicast market-data feeds secured?

Security for multicast feeds usually relies on controlled network architecture (colocation, private links, access controls). A datagram security layer such as DTLS preserves datagram semantics but does not add reliability or ordering guarantees; any security layer that alters delivery assumptions requires corresponding changes to handler logic.

What should a feed handler do during stressed markets?

In stressed markets, message rates, parser CPU, and recovery channels are all strained, and divergent data sources can cause integrity pauses; handlers must expose clear gap/health signals, support replayable recording for incident reconstruction, and avoid silently publishing state when sequence continuity is uncertain.

Why do day-scoped identifiers need special handling?

Day-scoped identifiers such as NASDAQ stock-locate codes and many exchange OrderIDs are reassigned or reset each day, so handlers must map those transient IDs to persistent symbol metadata at runtime and never assume yesterday’s codes apply today.