What Is a Hidden Markov Model in Order Flow Prediction?

Learn how Hidden Markov Models predict order flow by inferring latent trading regimes from trades, volume, timing, and market microstructure signals.

Introduction

Hidden Markov Models in order flow prediction are a way to treat the market not as a random stream of buys and sells, but as a system that moves through a small number of unobserved trading states. That framing matters because short-horizon price pressure, fill probability, and liquidity risk often depend less on the last trade by itself than on the hidden process generating the sequence. If a stock is in the middle of a large buy metaorder being split over time, the next few trades are not independent. If liquidity providers are facing increasingly toxic flow, quoting behavior changes before that stress is obvious from price alone. The practical question is whether we can infer those hidden conditions from the tape quickly enough to make better decisions.

That is the problem HMMs were built to solve. They separate what we observe from what we think is causing those observations. In order flow, the observations might be trade signs, volumes, inter-arrival times, spread changes, or whether an aggressive order moved price. The hidden state is the latent regime: perhaps balanced flow, persistent buy pressure, persistent sell pressure, or a stressed high-toxicity state. The model then asks two linked questions at every step: given the recent sequence, what hidden state are we probably in now? And if we are in that state, what is likely to happen next?

The core idea sounds simple, but the reason it works is deeper. Financial order flow is not merely noisy; it is noisy around persistent structure. Empirical work on equity markets finds that signed order flow is highly persistent, with positive autocorrelation extending to very long lags, and that at intraday horizons this persistence is driven overwhelmingly by order splitting rather than broad herding. That matters for modeling. If persistence mostly comes from large parent orders being executed in pieces, then the market has memory that is not fully visible in the last tick. An HMM gives that hidden memory a concrete form.

Why use a hidden-state model to predict order flow?

At first glance, predicting order flow seems like a classification problem: look at recent buys and sells, then forecast the next sign. But that approach misses the mechanism that creates predictability. A sequence of buyer-initiated trades can arise for very different reasons. It might reflect a single institution gradually buying over an hour. It might reflect many traders reacting to public news. It might reflect a thin book in which small market orders repeatedly move price and induce short-lived follow-on behavior. These situations can produce similar recent observations while implying very different next-step probabilities.

An HMM is useful precisely when observations are an incomplete view of a more stable hidden process. The model assumes there is an underlying state process that evolves over time with some persistence. That hidden state is not directly seen, but it changes the distribution of what we do observe. In markets, this is natural. Traders do not observe the true inventory objectives of other participants, the urgency of a metaorder, or the internal risk limits that cause market makers to widen or step back. Yet those hidden drivers shape trade direction, size, intensity, and impact.

This is also why HMMs sit between two simpler extremes. A near-i.i.d. model treats each event as almost independent of the past, linked to it only through a few observed features; that is too weak when flow has regime persistence. A fully structural agent model tries to represent every strategic motive explicitly; that is often too ambitious for live prediction. HMMs impose a middle structure: a few latent regimes, Markov persistence between them, and observable emissions from each regime. That compromise is often powerful because it captures persistence without pretending to identify every economic cause.

There is an important subtlety here. The “state” in an HMM is usually best treated as a statistical summary, not a literal named class of traders. A hidden state may correlate with informed buying, or with a large execution algorithm, or with fragile liquidity, but the model itself only guarantees that the state is a regime with distinct emission behavior and transition dynamics. This matters because financial data can support regime inference even when economic interpretation remains approximate.

How do hidden regimes create the observable patterns in trades?

A useful way to think about an HMM is as a two-layer machine. The top layer is hidden and moves from one regime to another. The bottom layer is visible and generates the data we see, conditional on the hidden regime. The visible sequence is therefore not Markov by itself in any simple way, because its apparent dependence comes from the unobserved layer carrying memory forward.

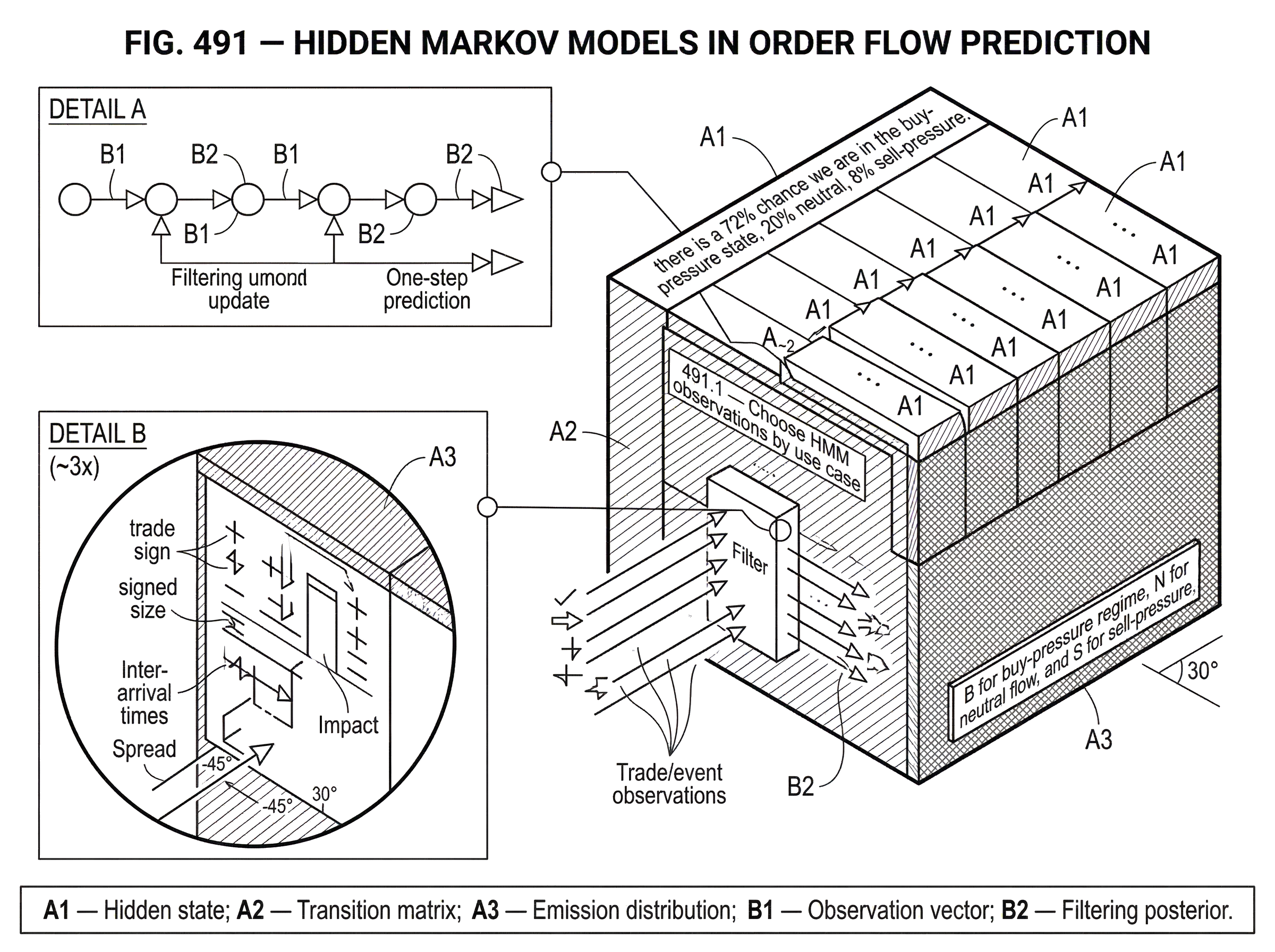

Suppose we define three hidden states for a liquid futures contract: B for buy-pressure regime, N for neutral flow, and S for sell-pressure regime. At each event time, the hidden state can stay where it is or switch according to a transition matrix. If the model is in B, buyer-initiated trades are more likely, trades may arrive faster, and aggressive orders may be more likely to consume ask-side depth. If the model is in S, the same logic applies in the other direction. If the model is in N, flow is more balanced and impact is milder.

The key is that we do not observe B, N, or S directly. We observe a stream like this: buy trade, buy trade, small sell, buy trade, price-moving buy, short inter-arrival time, widened spread. Each new observation updates our belief over hidden states. After several buys in quick succession, the posterior probability of B rises. If a price-changing trade triggers opposite-side responses from other traders, the state probabilities may shift again. Prediction is then based not on the raw last observation but on the evolving belief distribution across hidden states.

This belief distribution is often more useful than a hard classification. In live trading, you usually care less about declaring “the regime is definitely buy-pressure” than about saying “there is a 72% chance we are in the buy-pressure state, 20% neutral, 8% sell-pressure.” Those probabilities can feed execution logic, inventory control, or risk throttles.

The analogy to medical diagnosis is helpful up to a point: symptoms are visible, disease state is hidden, and diagnosis updates as new symptoms arrive. The analogy explains why a sequence of observations can reveal a latent cause only probabilistically. Where it fails is that markets are reflexive. In medicine, the disease usually does not respond to the diagnosis itself. In markets, your own quote changes, order submissions, and withdrawals can affect the future observations. That feedback is why some trading applications use extensions such as input-output HMMs rather than a plain HMM.

How does a Hidden Markov Model work for order-flow data?

An HMM has three moving parts. First is the hidden state process. Let Z_t denote the hidden regime at event time t. The Markov assumption says that the distribution of Z_t depends on the past only through Z_(t-1). In words, once you know the current regime, older hidden history adds no extra information about the next regime transition. This is a modeling choice, not a law of nature, but it makes inference tractable.

Second is the transition mechanism. This is encoded in probabilities such as P(Z_t = B | Z_(t-1) = B) or P(Z_t = N | Z_(t-1) = B). High self-transition probabilities create persistent regimes; low ones create fast-switching regimes. In order flow, persistence is often economically meaningful because order splitting and liquidity conditions do not usually disappear after a single trade.
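
To make persistence concrete, here is a minimal sketch in Python with NumPy. The matrix values are invented for illustration and are not estimated from any data set. The code computes how many consecutive events each regime is expected to last and how the chain divides its time among regimes in the long run.

```python
import numpy as np

# Hypothetical transition matrix over B (buy pressure), N (neutral), S (sell pressure).
# Row i holds P(Z_t = j | Z_(t-1) = i); the numbers are illustrative only.
STATES = ["B", "N", "S"]
A = np.array([
    [0.95, 0.04, 0.01],
    [0.03, 0.94, 0.03],
    [0.01, 0.04, 0.95],
])

# Expected number of consecutive events spent in a state: 1 / (1 - self-transition).
expected_duration = 1.0 / (1.0 - np.diag(A))

# Long-run share of time in each state: the left eigenvector of A for eigenvalue 1,
# rescaled so its entries sum to one.
eigvals, eigvecs = np.linalg.eig(A.T)
stationary = np.real(eigvecs[:, np.argmax(np.real(eigvals))])
stationary = stationary / stationary.sum()

for name, dur, share in zip(STATES, expected_duration, stationary):
    print(f"{name}: expected duration {dur:.1f} events, long-run share {share:.2f}")
```

With these illustrative numbers, the buy-pressure and sell-pressure regimes last 20 events on average, the neutral regime about 17, and the chain spends roughly 30%, 40%, and 30% of its time in B, N, and S.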

Third is the emission model. Let X_t denote the observed market event or feature vector at time t. Conditional on the current hidden state Z_t, the model specifies a distribution for X_t. This is where market microstructure enters. X_t might include trade sign, signed volume, elapsed time since the last event, spread, queue imbalance, short-term return, and an indicator for whether the event changed the midprice. In a simple model, each state has its own distribution over trade sign and volume. In a richer model, each state has its own joint distribution over several microstructure variables.
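
As a sketch of what a simple emission model can look like, the example below scores an observation made of a trade sign and an inter-arrival time under each of three states. The parameter values, the exponential model for inter-arrival times, and the assumption that sign and timing are independent given the state are all simplifications chosen for illustration.

```python
import numpy as np

# Hypothetical per-state emission parameters for states B, N, S.
p_buy = np.array([0.75, 0.50, 0.25])       # P(trade is buyer-initiated | state)
arrival_rate = np.array([8.0, 3.0, 8.0])   # trades per second | state (exponential gaps)

def emission_likelihood(sign, gap_seconds):
    """Likelihood of X_t = (trade sign, inter-arrival time) under each state.

    Assumes sign and gap are independent given the state (a simplification).
    Returns an array with one entry per state.
    """
    sign_lik = p_buy if sign > 0 else 1.0 - p_buy
    gap_density = arrival_rate * np.exp(-arrival_rate * gap_seconds)
    return sign_lik * gap_density

fast_buy = emission_likelihood(+1, 0.05)    # quick buy: best explained by B
slow_sell = emission_likelihood(-1, 0.60)   # slow sell: best explained by N here

print("fast buy :", fast_buy.round(3))
print("slow sell:", slow_sell.round(3))
```

The slow sell scores highest under the neutral state rather than the sell-pressure state because, under these assumed parameters, pressure regimes are also fast regimes. That is the sense in which tempo can separate states that sign alone would confuse.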

The inference problem is then straightforward in principle. When a new observation arrives, combine the previous state probabilities, the transition probabilities, and the likelihood of the new observation under each state. This is the filtering step. The result is an updated posterior distribution P(Z_t | X_1, ..., X_t). Prediction of the next event uses that posterior combined with one-step-ahead transitions and state-conditional emission distributions.

Here is the mechanism in narrative form. Imagine the model begins the day unsure whether the market is balanced or under buy pressure. Early trades are mixed, so the posterior remains diffuse. Then a cluster of buyer-initiated trades arrives with short inter-arrival times and repeated ask-side price impact. Under the buy-pressure state's emission model, that sequence is relatively likely; under the neutral or sell-pressure states, less so. The filter shifts weight toward buy pressure. Because the transition matrix says buy pressure is persistent once established, that state probability does not collapse after a single contrary small sell. As a result, the model still predicts elevated odds that the next few events will be buys or that upward pressure on the book will continue.
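
The filtering step and the narrative above can be sketched in a few lines. This is a minimal illustration, assuming three states, trade sign as the only observation, and made-up transition and emission values; the function and variable names are hypothetical.

```python
import numpy as np

def filter_step(prior, A, likelihood):
    """One forward-filter update.

    prior:      P(Z_(t-1) | X_1..X_(t-1)), shape (K,)
    A:          transition matrix, A[i, j] = P(Z_t = j | Z_(t-1) = i)
    likelihood: P(X_t | Z_t = k) for the new observation, shape (K,)
    Returns P(Z_t | X_1..X_t).
    """
    predicted = prior @ A                    # push the belief through the transitions
    unnormalized = predicted * likelihood    # weight by how well each state explains X_t
    return unnormalized / unnormalized.sum()

# Illustrative (not estimated) parameters for states B, N, S.
A = np.array([[0.95, 0.04, 0.01],
              [0.03, 0.94, 0.03],
              [0.01, 0.04, 0.95]])
p_buy = np.array([0.75, 0.50, 0.25])         # P(trade is a buy | state)

belief = np.array([1 / 3, 1 / 3, 1 / 3])     # start the session undecided
for sign in [+1, +1, +1, +1, -1]:            # four buys, then one contrary sell
    likelihood = p_buy if sign > 0 else 1.0 - p_buy
    belief = filter_step(belief, A, likelihood)

# One-step-ahead forecast: propagate the belief, then average the state emissions.
prob_next_buy = (belief @ A) @ p_buy
print("belief over (B, N, S):", belief.round(3))
print("P(next trade is a buy):", round(float(prob_next_buy), 3))
```

In this toy run the buy-pressure state is still the most probable one after the contrary sell, and the forecast probability that the next trade is a buy stays above one half.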

That is the practical attraction of the HMM: it distinguishes noise inside a regime from evidence of a regime change. A naive model often overreacts to each tick. An HMM smooths inference through the hidden state.

What observation features should I use for an HMM on order flow?

| Prediction target | Top features | Why they help | Typical horizon |
|---|---|---|---|
| Directional pressure | Trade sign, signed size, inter-arrival time | Captures order-splitting persistence | Next few trades |
| Liquidity stress | Spread, depth proxies, queue imbalance | Shows changes in available liquidity | Seconds to minutes |
| Toxicity / adverse selection | Price impact, imbalance, large returns | Links flow to informed trades | Short intraday |
| Fill probability | Quote-relative size, arrival intensity, recent fills | Predicts execution likelihood | Milliseconds to seconds |

The most common mistake is to think the observation must be only the sign of the next trade. Trade sign is useful, but by itself it throws away much of the structure that helps distinguish states. Different latent regimes can generate similar sign persistence but different size, tempo, and impact patterns.

A better starting point is to ask what aspects of order flow change when the hidden regime changes. If the latent regime reflects a metaorder being worked, you might expect persistent sign, characteristic child-order sizes, and relatively regular arrival patterns. If the regime reflects toxic informed flow, you may also expect stronger imbalance, elevated intensity, and larger price response per unit traded. If the regime reflects fragile liquidity, spread and depth measures may move with the trade sequence.

This is where the HMM connects naturally to neighboring ideas in market microstructure. Research on order-flow persistence shows that intraday sign autocorrelation is largely driven by order splitting. That suggests state duration matters: hidden states should usually be persistent enough to mimic long execution programs rather than flicker every few observations. Research on flow toxicity, such as VPIN-style imbalance measures, suggests that some states may be better understood not merely as buy or sell pressure but as adverse-selection intensity. In that case, the emissions should include features linked to toxicity, not just direction.

The design principle is simple: choose observations that are consequences of the hidden mechanism you want the state to represent. If you care about latent directional pressure, signed trade flow and impact features are central. If you care about latent liquidity stress, spread, depth proxies, and price-response features matter. If you care about execution against your own quotes, your observations may need to include quote-relative information.

When should I prefer an IOHMM over a plain HMM for market applications?

| Model | Transition dependence | Input conditioning | Models feedback | Best when | Complexity |
|---|---|---|---|---|---|
| Plain HMM | Previous state only | No | No | Passive forecasting | Lower |
| Input-Output HMM | Previous state plus inputs | Yes | Yes | Active quoting or interactive markets | Higher |

A plain HMM assumes the hidden state drives the observations, but the state transitions and emissions are not directly conditioned on external inputs. In trading, that is often too restrictive. Order flow does not evolve in isolation from the state of the book, public price changes, or your own quotes.

This is why some market applications use Input-Output Hidden Markov Models, or IOHMMs. In an IOHMM, observed inputs help govern both state transitions and output distributions. A technical paper on stock order-flow modeling used IOHMMs fit to historical trade, order, and quote data, with the explicit goal that simulated order flow should react to market conditions and the market maker’s quoted prices. That extension matters because market participants respond to spread, depth, and relative price attractiveness. If your quote improves, you may alter the probability of receiving the next marketable order. A plain HMM would struggle to represent this feedback cleanly.

Mechanically, the difference is not mysterious. Instead of saying only “state B tends to emit buy orders,” the model says something more like “in state B, the probability of a buy depends on current inputs such as spread, recent price move, or quote placement.” Likewise, the probability of staying in or leaving a state may depend on those inputs. That makes the model less purely regime-based and more behaviorally conditional.
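
A minimal sketch of that conditioning follows, assuming a logistic emission for trade sign and a row-wise softmax for transitions. The two inputs (spread in ticks and the number of ticks by which our own ask has been improved), every parameter value, and all function names are hypothetical; this is not the parameterization of any particular paper.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def softmax_rows(logits):
    shifted = logits - logits.max(axis=1, keepdims=True)
    expd = np.exp(shifted)
    return expd / expd.sum(axis=1, keepdims=True)

# States B, N, S. Input u = (spread in ticks, our ask improvement in ticks).
BASE_A = np.array([[0.95, 0.04, 0.01],
                   [0.03, 0.94, 0.03],
                   [0.01, 0.04, 0.95]])
trans_bias = np.log(BASE_A)                # with u = 0 the transitions reduce to BASE_A
trans_input = np.zeros((3, 3, 2))
trans_input[:, 1, 0] = 0.3                 # assumed: wider spread tilts every row toward N
emit_bias = np.array([1.1, 0.0, -1.1])     # baseline log-odds of a buy in B, N, S
emit_input = np.tile([0.0, 0.4], (3, 1))   # assumed: a better ask attracts more buys

def transition_matrix(u):
    """A(u)[i, j] = P(Z_t = j | Z_(t-1) = i, u_t)."""
    return softmax_rows(trans_bias + trans_input @ u)

def buy_probability(u):
    """P(trade is a buy | Z_t = k, u_t), one entry per state."""
    return sigmoid(emit_bias + emit_input @ u)

def iohmm_filter_step(prior, u, sign):
    """Filtering update in which transitions and emissions both depend on u."""
    p_buy = buy_probability(u)
    likelihood = p_buy if sign > 0 else 1.0 - p_buy
    posterior = (prior @ transition_matrix(u)) * likelihood
    return posterior / posterior.sum()

belief = np.array([0.2, 0.6, 0.2])
belief = iohmm_filter_step(belief, np.array([1.0, 1.0]), sign=+1)
print(belief.round(3))
```

The design choice worth noticing is that the filter itself is unchanged: the only difference from a plain HMM is that the transition matrix and the emission probabilities are recomputed from the current inputs at every step.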

This is often the right move when the trader is an active participant rather than a passive forecaster. If you are predicting order flow around your own market-making quotes, the future flow depends partly on what you choose to quote. The hidden state still matters, but the market is now interactive.

How do you estimate and update HMM parameters on streaming market data?

Once the model structure is fixed, the next problem is parameter estimation: transition probabilities, state-specific emission parameters, and possibly input effects. In classical HMM work, this is often done with the EM algorithm, especially the Baum–Welch procedure. The logic is elegant. Because the state sequence is hidden, treat it as missing data. Alternate between inferring expected state occupancies and transitions under current parameters, then updating the parameters to maximize expected complete-data likelihood.
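
The alternation described above can be sketched for the simplest case: a discrete-emission HMM whose only observation is trade sign. This is an illustrative implementation with scaled forward and backward passes, run on simulated data with assumed parameters; a production system would more likely rely on a tested library.

```python
import numpy as np

def baum_welch_step(obs, pi, A, B):
    """One EM iteration for a discrete-emission HMM.

    obs: int array of symbols (0 = sell, 1 = buy), shape (T,)
    pi:  initial state distribution, shape (K,)
    A:   transition matrix, shape (K, K)
    B:   emission matrix, B[k, m] = P(X_t = m | Z_t = k), shape (K, M)
    Returns updated (pi, A, B) and the log-likelihood under the input parameters.
    """
    T, K = len(obs), len(pi)
    alpha = np.zeros((T, K))
    beta = np.ones((T, K))
    scale = np.zeros(T)

    # E-step, forward pass (each alpha[t] is rescaled to sum to one).
    alpha[0] = pi * B[:, obs[0]]
    scale[0] = alpha[0].sum()
    alpha[0] /= scale[0]
    for t in range(1, T):
        alpha[t] = (alpha[t - 1] @ A) * B[:, obs[t]]
        scale[t] = alpha[t].sum()
        alpha[t] /= scale[t]

    # E-step, backward pass with the same scaling constants.
    for t in range(T - 2, -1, -1):
        beta[t] = (A @ (B[:, obs[t + 1]] * beta[t + 1])) / scale[t + 1]

    gamma = alpha * beta   # gamma[t, k] = P(Z_t = k | all data)
    # xi[t, i, j] = P(Z_t = i, Z_(t+1) = j | all data)
    xi = (alpha[:-1, :, None] * A[None]
          * (B[:, obs[1:]].T * beta[1:])[:, None, :]) / scale[1:, None, None]

    # M-step: re-estimate parameters from expected counts.
    new_pi = gamma[0]
    new_A = xi.sum(axis=0) / gamma[:-1].sum(axis=0)[:, None]
    new_B = np.stack([gamma[obs == m].sum(axis=0) for m in range(B.shape[1])], axis=1)
    new_B /= gamma.sum(axis=0)[:, None]
    return new_pi, new_A, new_B, np.log(scale).sum()

# Simulated trade signs from an assumed two-state chain.
rng = np.random.default_rng(0)
true_A = np.array([[0.95, 0.05], [0.05, 0.95]])
true_B = np.array([[0.2, 0.8], [0.8, 0.2]])   # state 0 mostly buys, state 1 mostly sells
z, obs = 0, []
for _ in range(2000):
    z = rng.choice(2, p=true_A[z])
    obs.append(rng.choice(2, p=true_B[z]))
obs = np.array(obs)

pi = np.array([0.5, 0.5])
A = np.array([[0.8, 0.2], [0.2, 0.8]])
B = np.array([[0.4, 0.6], [0.6, 0.4]])
log_liks = []
for _ in range(30):
    pi, A, B, ll = baum_welch_step(obs, pi, A, B)
    log_liks.append(ll)
```

A useful sanity check on any EM implementation is that the recorded log-likelihood never decreases from one iteration to the next. It can still converge to a local optimum, so in practice several random initializations are usually compared.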

The difficulty in trading is not the idea but the setting. Order-flow data is large, fast, and nonstationary. A batch EM procedure that repeatedly scans an entire historical sample is often too slow or too stale for live adaptation. This is why online and block-wise EM variants matter. Research on online EM for HMMs proposes recursive or block-update procedures that use sufficient-statistics-style updates and smoothing recursions so parameters can adapt as new data arrives. That is appealing for streaming order flow because it matches how the data is generated: continuously, not in one fixed retrospective batch.

But there is a tradeoff. If parameters adapt too quickly, the model chases noise and regime labels become unstable. If they adapt too slowly, the model becomes blind to structural change. Block-wise online EM makes that tradeoff explicit: larger blocks mean more stable but slower updates; smaller blocks mean faster but noisier adaptation. Some work establishes convergence properties for block-wise online EM in general HMMs, while other online EM approaches have weaker theory and stronger reliance on empirical behavior.
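
The speed-versus-stability tradeoff can be illustrated with the update that sits at the core of block-wise schemes: blending each block's statistics into a running total with a step size. The sketch below is deliberately simplified. It replaces the E-step with transition counts taken from a simulated, fully observed state path, so it shows only the effect of the step size, not a complete online EM algorithm. All names and values are assumptions.

```python
import numpy as np

def simulate_two_state_path(p_stay, T, rng):
    """Symmetric two-state chain: keep the state with probability p_stay, else flip."""
    flips = rng.random(T) >= p_stay
    return np.cumsum(flips) % 2

def transition_counts(path, K=2):
    """Count i -> j transitions along a state path."""
    counts = np.zeros((K, K))
    np.add.at(counts, (path[:-1], path[1:]), 1.0)
    return counts

def blend(running, block_stat, step):
    """Stochastic-approximation update: a larger step adapts faster but is noisier."""
    return (1.0 - step) * running + step * block_stat

def self_transition(stat):
    """Average self-transition probability implied by a count matrix."""
    rows = stat / stat.sum(axis=1, keepdims=True)
    return np.diag(rows).mean()

rng = np.random.default_rng(1)
block_size = 500
# Ten blocks with persistent regimes (p_stay = 0.95), then five with faster switching (0.80).
blocks = [simulate_two_state_path(0.95, block_size, rng) for _ in range(10)]
blocks += [simulate_two_state_path(0.80, block_size, rng) for _ in range(5)]

fast = slow = transition_counts(blocks[0])
for path in blocks[1:]:
    stat = transition_counts(path)
    fast = blend(fast, stat, step=0.5)
    slow = blend(slow, stat, step=0.05)

fast_estimate = self_transition(fast)   # has largely moved to the new value
slow_estimate = self_transition(slow)   # still pulled toward the old value
print(round(fast_estimate, 3), round(slow_estimate, 3))
```

Five blocks after the change, the fast learner has mostly forgotten the old regime while the slow learner still sits closer to it. The price of the fast setting is that it would also react more strongly to a single unrepresentative block.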

In practice, model estimation is where many elegant HMM ideas become ordinary engineering questions. How many hidden states are enough? How should emissions be parameterized: Gaussian, multinomial, Poisson, mixture, or something custom? Should the model be fit in event time, clock time, or volume time? How often should it be retrained or adapted? These choices matter as much as the abstract model class.

How can HMMs help predict fills and detect short‑horizon buy/sell pressure?

Consider a simple market-making setting. You quote both bid and ask in a liquid instrument and want to decide whether to skew your quotes, widen spreads, or reduce size. The immediate risk is adverse selection: getting filled on the wrong side just before price moves against you. The tape alone gives clues, but those clues are noisy. An HMM turns them into a state estimate.

Suppose your observation vector includes trade sign, signed size, inter-arrival time, short-horizon midprice response, and a flag for whether recent aggressive orders changed price. Over the last few seconds, buy trades have become more frequent, arrivals have accelerated, and ask-side trades increasingly move the midprice. The filter begins assigning high probability to a hidden state that you interpret as persistent toxic buy pressure. You do not need to know whether the cause is informed trading, a large execution algorithm, or temporary liquidity withdrawal. What matters is that this state historically implies a higher probability of further buy-side pressure and a higher expected cost of being hit on the offer.

The consequence is operational. You may widen the ask, reduce offered size, skew inventory defensively, or demand more edge before providing liquidity. A related partial-information market-making model makes this logic explicit by letting order arrival intensities depend on an unobservable Markov chain and then solving the resulting control problem through filtering. Its central insight is intuitive: if you ignore uncertainty about the latent regime and act as if you knew the true state, your spreads are biased. Uncertainty itself should widen or otherwise alter optimal quotes.

This is an important point. In trading, the output of an HMM is rarely “buy now” by itself. More often it is an improved estimate of the hidden short-horizon environment, which then feeds execution, quoting, or risk rules.

HMMs vs changepoint detection and particle filters: which fits order‑flow prediction?

| Method | When best | Main strength | Main weakness | Computational cost | Typical horizon |
|---|---|---|---|---|---|
| Changepoint model | Abrupt structural breaks | Fast break detection | Assumes independent segments | Low to moderate | Sudden events |
| Particle filter | Continuous or hybrid latents | Flexible posterior tracking | High compute and tuning | High | Variable horizons |

Order flow does not always drift gradually between persistent states. Sometimes it breaks sharply. A volume-targeting execution algorithm hits the market, liquidity collapses, or a cross-market arbitrage wave propagates stress from futures into ETFs and stocks. In such cases, a model built around smooth Markov persistence can lag.

That is why changepoint methods are often a natural neighbor to HMMs. Bayesian online changepoint detection focuses on inferring the distribution of the current run length, meaning time since the last structural break. Conceptually, an HMM says “the system moves among persistent regimes with known transition tendencies.” A changepoint model says “the key event is a reset in the data-generating parameters.” For order flow, the distinction matters. If you believe markets revisit familiar latent regimes, HMMs are attractive. If you care most about abrupt onset of a new episode, changepoint methods may respond faster.
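
To show what inferring the run length means in practice, here is a compact sketch of Bayesian online changepoint detection for a stream of trade signs. It assumes a constant hazard rate and Bernoulli segments with a Beta prior; the hazard value, the prior, and the synthetic data are illustrative choices.

```python
import numpy as np

def bocpd_bernoulli(signs, hazard=0.02, a0=1.0, b0=1.0):
    """Run-length posterior for a 0/1 stream with Beta-Bernoulli segments.

    Returns R where R[t, r] = P(run length = r after t observations | data so far).
    """
    T = len(signs)
    R = np.zeros((T + 1, T + 1))
    R[0, 0] = 1.0
    a = np.array([a0])   # Beta parameters, one pair per candidate run length
    b = np.array([b0])
    for t, x in enumerate(signs):
        p_one = a / (a + b)                               # predictive P(x = 1 | run length)
        pred = p_one if x == 1 else 1.0 - p_one
        growth = R[t, :t + 1] * pred * (1.0 - hazard)     # the current run continues
        reset = (R[t, :t + 1] * pred * hazard).sum()      # a changepoint starts a new run
        R[t + 1, 0] = reset
        R[t + 1, 1:t + 2] = growth
        R[t + 1] /= R[t + 1].sum()
        # Update sufficient statistics; a fresh run starts again from the prior.
        a = np.concatenate(([a0], a + x))
        b = np.concatenate(([b0], b + (1 - x)))
    return R

# Synthetic stream: 100 trades that are 80% buys, then 100 trades that are 20% buys.
buys_heavy = np.tile([1, 1, 1, 1, 0], 20)
sells_heavy = np.tile([0, 0, 0, 0, 1], 20)
R = bocpd_bernoulli(np.concatenate([buys_heavy, sells_heavy]))
map_run_length = int(R[-1].argmax())   # most probable time since the last break
print(map_run_length)
```

Unlike an HMM, this detector has no notion of returning to a previously visited regime: after a break it starts learning the new segment from the prior.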

Particle filters become relevant when the hidden state is not well represented by a small discrete set, or when the latent dynamics are hybrid discrete-continuous. A plain HMM is fundamentally a discrete-state model. That is a strength when discrete regimes are a good abstraction, but a weakness when important hidden variables are continuous or high-dimensional. Particle methods can track richer posterior shapes, though at a greater computational and design cost.
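
For contrast with the discrete-state filter, the sketch below tracks a continuous latent buy propensity with a bootstrap particle filter. The latent dynamics (a Gaussian random walk on the log-odds of a buy), the particle count, and the resampling scheme are assumptions picked to keep the example short.

```python
import numpy as np

def bootstrap_filter(signs, n_particles=2000, drift_sd=0.1, seed=0):
    """Track a continuous latent buy propensity with a bootstrap particle filter.

    The latent x_t follows a Gaussian random walk and P(buy at t) = sigmoid(x_t).
    Returns the filtered mean of P(buy) after each trade.
    """
    rng = np.random.default_rng(seed)
    particles = rng.normal(0.0, 1.0, n_particles)       # initial spread of beliefs
    means = []
    for sign in signs:
        # Propagate every particle through the assumed latent dynamics.
        particles = particles + rng.normal(0.0, drift_sd, n_particles)
        p_buy = 1.0 / (1.0 + np.exp(-particles))
        # Weight each particle by the likelihood of the observed trade sign.
        weights = p_buy if sign > 0 else 1.0 - p_buy
        weights = weights / weights.sum()
        means.append(float(weights @ p_buy))
        # Multinomial resampling concentrates particles on plausible values.
        idx = rng.choice(n_particles, size=n_particles, p=weights)
        particles = particles[idx]
    return np.array(means)

signs = [+1] * 40 + [-1] * 40          # a run of buys followed by a run of sells
filtered_p_buy = bootstrap_filter(signs)
print(filtered_p_buy[39].round(2), filtered_p_buy[-1].round(2))
```

The extra design cost mentioned above is visible even here: the number of particles, the drift scale, and the resampling rule all need tuning, whereas the discrete HMM filter has no such knobs once its parameters are fixed.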

So HMMs are not the universal answer. They are the right tool when the main hidden structure is well approximated by a modest number of persistent regimes and when the computational benefit of that simplification outweighs the misspecification it introduces.

What are common failure modes of HMMs on order‑flow data?

The most important failure mode is misspecified states. If the true market dynamics are not well approximated by a few recurring regimes, the HMM will still fit something, but the states may become unstable mixtures rather than meaningful structures. This often shows up when state interpretations drift across samples or when predictive gains disappear out of sample.

A second problem is state-duration bias. Standard HMMs imply geometric state durations: at each step there is a constant chance of leaving the state. Real order-splitting episodes may have richer duration patterns. If a metaorder tends to persist for a while and then decay in a non-geometric way, a standard HMM can misrepresent persistence. Hidden semi-Markov models sometimes address this, but the plain HMM assumption is often used anyway because it simplifies inference.

A third problem is nonstationarity in the parameters themselves. The transition matrix and emission distributions that fit one month may degrade in the next. Market structure changes, participant mix changes, tick size changes, volatility regimes change. Online EM helps, but adaptation itself can be unstable or theoretically incomplete depending on the algorithm.

A fourth problem is aggregation and observability. We often observe broker-level or trade-level data, not the investor’s true parent order. Empirical work shows that brokerage aggregation can distort inference about whether persistence comes from one agent or many. An HMM trained on aggregated observations may confuse order splitting with herding if the observation design does not respect the underlying market structure.

Finally, there is reflexivity. If many strategies use similar signals, the hidden state is not simply there to be discovered; it is partly shaped by participants reacting to it. This does not make HMMs useless, but it means their predictive success can decay as strategies crowd the same structure.

How should I implement and validate an HMM for order‑flow prediction?

A practical implementation tends to be modest rather than grand. The most robust systems usually start with a small number of states, carefully chosen event-time features, and a narrow prediction target such as next-trade sign probability, short-horizon imbalance, or fill-conditioned adverse selection. The goal is not to produce a complete theory of the market. The goal is to compress recent order-flow information into a latent state belief that improves a concrete decision.

Validation should focus on mechanism, not just fit. If the model claims a state represents persistent buy pressure, that state should actually show higher subsequent buy probability, stronger ask-side impact, or worse expected outcomes for passive selling. If it claims a toxic-flow state, that state should align with realized adverse selection or spread-widening behavior. Good HMM work in markets is less about achieving a beautiful in-sample likelihood and more about showing that inferred states line up with economically coherent consequences.
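
One simple mechanism check is to ask whether the state labeled as buy pressure is actually followed by more buys. The sketch below computes, for each state, the posterior-weighted frequency with which the next trade is a buy. The function name and the tiny hand-made inputs are illustrative; in real use the posteriors should come from filtering on data the model was not trained on.

```python
import numpy as np

def next_buy_rate_by_state(posteriors, is_buy):
    """Posterior-weighted frequency with which the NEXT trade is a buy, per state.

    posteriors: filtered state probabilities, shape (T, K); row t uses data up to t only
    is_buy:     boolean array, shape (T,), True where trade t was buyer-initiated
    """
    weights = np.asarray(posteriors, dtype=float)[:-1]   # belief held at time t
    outcome = np.asarray(is_buy, dtype=float)[1:]        # what happened at t + 1
    return (weights * outcome[:, None]).sum(axis=0) / weights.sum(axis=0)

# Tiny hand-made example with two states and hard (0/1) beliefs.
posteriors = np.array([[1, 0], [1, 0], [0, 1], [0, 1], [1, 0]])
is_buy = np.array([True, True, True, False, False])
rates = next_buy_rate_by_state(posteriors, is_buy)
print(rates)   # state 0 is always followed by a buy here, state 1 never
```

If the rates for the supposed buy-pressure and neutral states are indistinguishable out of sample, the label is not earning its name, whatever the in-sample likelihood says.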

It is also wise to compare against simpler baselines. Often a rolling imbalance measure, a Hawkes-style intensity model, or a changepoint detector captures much of the same edge with less estimation risk. HMMs earn their keep when the hidden-state representation adds something those simpler tools do not: cleaner persistence handling, better regime-conditioned predictions, or a more useful posterior state estimate for control.
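
As an example of how little code such a baseline needs, here is a rolling signed-volume imbalance. The window length and the normalization by gross volume are assumptions; other definitions of imbalance are equally reasonable.

```python
import numpy as np

def rolling_imbalance(signed_volume, window):
    """Net signed volume over the last `window` trades divided by gross volume.

    Returns one value per full window, each in [-1, 1]; positive means buy-heavy.
    """
    v = np.asarray(signed_volume, dtype=float)
    kernel = np.ones(window)
    net = np.convolve(v, kernel, mode="valid")
    gross = np.convolve(np.abs(v), kernel, mode="valid")
    # Leave the imbalance at zero where a window contains no volume at all.
    return np.divide(net, gross, out=np.zeros_like(net), where=gross > 0)

# Positive entries are buyer-initiated volume, negative entries seller-initiated.
imbalance = rolling_imbalance([1, 1, -1, 2], window=2)
print(imbalance)
```

Scoring the sign of this measure against the next trade sign on the same out-of-sample data gives a floor that the HMM forecast has to beat.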

Conclusion

A Hidden Markov Model for order flow prediction works by assuming that the market’s visible stream of trades is generated by a smaller hidden stream of persistent regimes. That idea matters because short-horizon predictability in order flow often comes from latent structure (order splitting, changing liquidity conditions, or toxic-flow episodes) rather than from the last observation alone.

The model’s real value is not that it magically reveals the true motive behind every trade. It is that it provides a disciplined way to infer hidden persistence from noisy microstructure data and turn that inference into better forecasts and trading decisions. Used carefully, an HMM is a practical bridge between raw tape reading and fully structural modeling: simple enough to run, rich enough to capture the fact that markets remember more than they show.

Frequently Asked Questions

How does an HMM differ from a simple classifier on the last few ticks?

An HMM assumes observed trades are generated by a small number of persistent, unobserved regimes and uses a filter over those latent states to predict future events, whereas a simple last‑n‑ticks classifier forecasts the next sign from recent observations only and cannot capture the memory carried by an unobserved process such as an executing metaorder or changing liquidity.

What observation features should I use for an HMM on order flow?

Include variables that change when the latent regime changes: trade sign and signed size, inter‑arrival times or intensity, short‑horizon midprice response or whether a trade moved the price, spread/depth or queue‑imbalance proxies, and any quote‑relative flags you care about, because direction alone often discards helpful tempo, impact, and liquidity signals.

How do you estimate and update HMM parameters on streaming market data?

Train offline with EM/Baum–Welch for batch analyses, but for live streaming prefer online or block‑wise EM variants to update parameters incrementally; this speeds adaptation to nonstationarity but introduces a bias–variance tradeoff where small blocks react faster and large blocks give more stable estimates, and some online EM algorithms lack complete convergence guarantees.

When should I prefer an IOHMM over a plain HMM?

Use an IOHMM when external inputs (your quotes, spread, recent price moves, or book state) materially affect either the transition probabilities between regimes or the emission distributions, because IOHMMs let transitions and outputs condition on those inputs and so better represent interactive market settings.

What are common failure modes of HMMs on order‑flow data?

Common failure modes are misspecified states (the market isn’t well described by a few recurring regimes), state‑duration bias from the geometric duration implied by plain HMMs, parameter nonstationarity that makes fitted transitions/emissions stale, aggregation/observability issues (brokerage vs investor-level data), and reflexivity when many participants act on the same signal.

How should I implement and validate an HMM for order‑flow prediction?

Start modest: a small number of states, event‑time features tied to a narrow decision target (next‑trade sign, short‑horizon imbalance, or fill‑conditioned adverse selection), and validation that each inferred state has the claimed economic consequences rather than just better in‑sample likelihood; compare against simple baselines like rolling imbalance or Hawkes models to check robustness.

Does an HMM state identify the economic cause of the flow?

No. An HMM’s state is best read as a statistical summary of differing emission behaviour, not a guaranteed identification of economic causes; empirical work cited in the article shows short‑horizon sign persistence is largely driven by order splitting, so an HMM state correlated with persistent buys may reflect splitting, but the model itself does not prove the underlying motive.

When should I use changepoint detection instead of an HMM?

HMMs assume Markovian regime switching and so can lag when flow changes abruptly; Bayesian online changepoint detectors (e.g., BOCPD) respond faster to sudden structural breaks because they infer run length since the last changepoint, so use changepoint methods when you expect abrupt, previously unseen episodes and HMMs when familiar discrete regimes with persistence are a better prior.

What if regime durations are not geometric?

Plain HMMs imply geometrically distributed state durations, which can misrepresent the lifetime of execution programs; if duration patterns matter, consider hidden semi‑Markov models or explicit duration modeling to allow non‑geometric sojourn times.

Related reading