What Is Monte Carlo Derivatives Pricing?

Learn what Monte Carlo derivatives pricing is, how risk-neutral simulation values complex payoffs, and why variance reduction and validation matter.

Introduction

Monte Carlo derivatives pricing is a way to value derivatives by simulating many possible future paths for the underlying risk factors and then averaging the discounted payoffs. The idea sounds almost too simple: if a derivative is worth its expected future payoff under the right pricing measure, why not create many hypothetical futures, see what the contract pays in each one, and take the average? That basic move turns out to be powerful precisely where cleaner methods start to fail.

The puzzle is this: for a plain European call on a stock, finance already has closed-form formulas in familiar models. So why would anyone reach for simulation, which is slower and introduces sampling noise? The answer is that most interesting derivatives are not plain vanilla. Their value may depend on an entire path rather than just the ending price, on several assets moving together, on barriers being crossed, on stochastic volatility, on jumps, on early exercise features, or on exposure profiles needed for risk management rather than a single trade price. In those settings, the hard part is no longer writing down the payoff; it is taking the expectation.

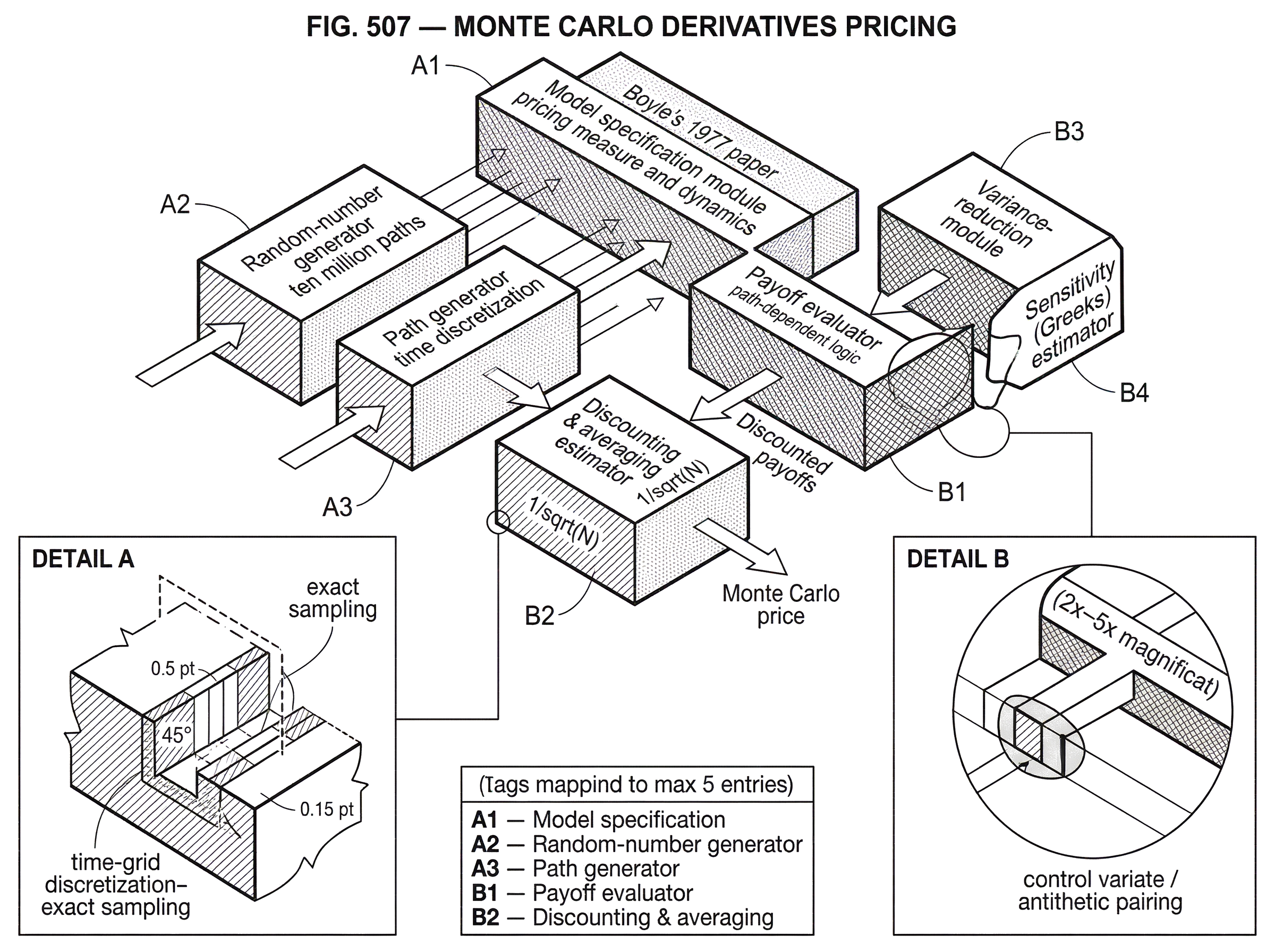

Monte Carlo exists to approximate that expectation when exact integration is impractical. It is widely used in derivatives pricing and risk management, and the core logic is straightforward: specify a model for how risk factors evolve, simulate many sample paths under risk-neutral assumptions, compute the payoff on each path, discount back to today, and average. What makes the subject interesting is not that basic recipe, but the engineering around it: how to simulate paths accurately, how to reduce variance so results converge faster, how to estimate sensitivities, and how to avoid trusting a numerically precise answer that rests on a poor model.

Pricing as an expectation: how Monte Carlo turns discounted payoffs into prices

A derivative is a contract whose payoff depends on future states of the world. If the derivative pays a random amount, call it Payoff, at maturity T, then under standard no-arbitrage assumptions its time-0 value is the expected value of that payoff under a risk-neutral measure, discounted back to today. In plain language, once you move to the pricing measure, expected returns on tradable assets are adjusted so that discounting at the risk-free rate becomes the right way to turn future contingent cash flows into current prices.

That is the conceptual center of Monte Carlo pricing. It does not change the economics of derivative pricing; it changes the numerical method used to compute the expectation. If you already know that the price is “discounted expected payoff,” then Monte Carlo says: instead of solving the expectation analytically, estimate it by random sampling.

Suppose a stock price today is S0, and you want to value a European call with strike K and maturity T. In a Black-Scholes world, there is a closed-form answer, so Monte Carlo is not necessary. But it gives a clean first example. You simulate many terminal stock prices ST under the risk-neutral dynamics, compute the payoff max(ST - K, 0) on each simulated path, discount each payoff by exp(-rT) where r is the risk-free rate, and average across simulations. As the number of simulations N grows, that average converges to the model price.
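The European call recipe above can be sketched in a few lines. This is a minimal illustration, not a production pricer: it assumes geometric Brownian motion with constant rate and volatility, and the parameter values (spot 100, strike 100, 5% rate, 20% volatility, one year) and the function names `mc_european_call` and `bs_call` are chosen purely for the example. The Black-Scholes formula is included only as a benchmark for the simulated price.

```python
import math
import random
from statistics import NormalDist


def mc_european_call(s0, k, r, sigma, t, n_paths, seed=0):
    """Crude Monte Carlo price of a European call under geometric Brownian motion."""
    rng = random.Random(seed)
    drift = (r - 0.5 * sigma**2) * t
    vol = sigma * math.sqrt(t)
    discount = math.exp(-r * t)
    total = 0.0
    for _ in range(n_paths):
        z = rng.gauss(0.0, 1.0)
        s_t = s0 * math.exp(drift + vol * z)   # exact draw of the terminal price
        total += discount * max(s_t - k, 0.0)  # discounted payoff on this path
    return total / n_paths


def bs_call(s0, k, r, sigma, t):
    """Black-Scholes closed form, used here only as a benchmark."""
    n = NormalDist()
    d1 = (math.log(s0 / k) + (r + 0.5 * sigma**2) * t) / (sigma * math.sqrt(t))
    d2 = d1 - sigma * math.sqrt(t)
    return s0 * n.cdf(d1) - k * math.exp(-r * t) * n.cdf(d2)


# Illustrative parameters (assumptions for this sketch).
mc_price = mc_european_call(100.0, 100.0, 0.05, 0.2, 1.0, 100_000)
exact_price = bs_call(100.0, 100.0, 0.05, 0.2, 1.0)
print(f"Monte Carlo: {mc_price:.4f}  closed form: {exact_price:.4f}")
```

With enough paths the two numbers should agree to within sampling noise, which is the sense in which the average converges to the model price.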

The method is therefore not model-free. That is a common misunderstanding. Monte Carlo does not replace assumptions with data-free randomness. It is only a numerical wrapper around a model. The model tells you how to generate paths. The payoff definition tells you what cash flow each path produces. The risk-neutral framework tells you why averaging discounted payoffs is the right pricing rule.

When is simulation necessary for derivative pricing?

The most useful mental contrast is between low-dimensional integration and high-dimensional path dependence. When a payoff depends only on one terminal variable, and the model gives a tractable terminal distribution, direct formulas or numerical integration may be efficient. But each extra source of uncertainty and each extra observation date expands the state space. A payoff on a monthly monitored basket barrier option, for example, may depend on several correlated assets across dozens of monitoring times. What looked like a one-dimensional integral is now a very high-dimensional expectation.

This is where Monte Carlo becomes attractive. Its convergence rate is not especially fast, but the 1/sqrt(N) rate does not depend on the number of dimensions, whereas grid methods need a number of nodes that grows exponentially with the number of state variables. Finite-difference methods and tree methods therefore often become cumbersome as the number of state variables grows. Closed forms may disappear entirely once you add jumps, stochastic volatility, empirical return distributions, or complex path-dependent rules. Simulation remains mechanically usable as long as you can generate sample paths from the model.

That flexibility was already recognized early in the option-pricing literature. Boyle’s 1977 paper framed the method clearly: simulate the process generating returns on the underlying asset, apply risk-neutral valuation, and estimate the option price from the discounted average payoff. He also emphasized something practitioners still care about: Monte Carlo is especially useful when the return distribution need not have a closed-form analytic expression. That matters if you want mixtures of processes, jump components, or empirically motivated distributions that are awkward for analytic methods.

The cost of that flexibility is statistical noise. A closed-form formula gives one deterministic number. A Monte Carlo estimator gives a random estimate with sampling error. That tradeoff is fundamental. You gain generality but lose exactness at finite sample size.

How does a Monte Carlo pricing algorithm work, step by step?

At a mechanical level, Monte Carlo pricing has four moving parts: the model, the path generator, the payoff evaluator, and the averaging step. Each part contributes error in a different way.

Start with the model. You need a specification for the evolution of the underlying risk factors under the pricing measure: equity prices, rates, volatilities, default intensities, FX rates, or whatever drives the payoff. In a simple geometric Brownian motion model, the stock follows lognormal dynamics. In a Heston-type model, variance itself evolves randomly. In a jump-diffusion model, occasional discontinuous moves are added to a continuous diffusion. The Monte Carlo engine does not decide among these; it only implements whichever one you choose.

Then comes path generation. If the model admits exact sampling at the horizon you need, you can generate terminal values or path segments directly. If not, you discretize time into steps and approximate the continuous-time process. This introduces discretization error, which is different from sampling error. Sampling error shrinks as you increase the number of paths. Discretization error shrinks as you refine the time grid or use better numerical schemes. It is important not to confuse the two. A simulation with ten million paths can still be wrong if the path dynamics were discretized poorly.

Next, for each path, you evaluate the derivative’s payoff. For a vanilla option this is easy. For a path-dependent product, the payoff logic may need to track the maximum price reached, whether a barrier was crossed, the running average of an index, or the timing of coupon triggers. This is often where the implementation complexity sits in practice. The stochastic model may be elegant, but the contract language can be the messy part.

Finally, you discount and average. If Xi is the discounted payoff on simulated path i, the Monte Carlo price estimate is the sample mean (1/N) * sum Xi. This estimator is unbiased in many basic settings when paths are generated correctly under the pricing measure. Its standard error typically falls like 1/sqrt(N). That square-root law is the reason naive Monte Carlo can become expensive. To cut standard error by a factor of 10, you usually need 100 times as many paths.
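The square-root law is easy to see numerically. The sketch below is an illustration under assumed inputs: `mc_with_stderr` and `call_sampler` are hypothetical helper names, and the payoff is a European call under geometric Brownian motion with made-up parameters. It returns the sample mean together with its estimated standard error, then compares 1,000 paths with 100,000 paths.

```python
import math
import random


def mc_with_stderr(discounted_payoff, n_paths, seed=0):
    """Return (mean, standard error) for a per-path discounted payoff sampler."""
    rng = random.Random(seed)
    total = 0.0
    total_sq = 0.0
    for _ in range(n_paths):
        x = discounted_payoff(rng)
        total += x
        total_sq += x * x
    mean = total / n_paths
    variance = (total_sq - n_paths * mean * mean) / (n_paths - 1)  # sample variance
    return mean, math.sqrt(variance / n_paths)


def call_sampler(rng, s0=100.0, k=100.0, r=0.05, sigma=0.2, t=1.0):
    """One discounted call payoff under GBM (illustrative parameters)."""
    z = rng.gauss(0.0, 1.0)
    s_t = s0 * math.exp((r - 0.5 * sigma**2) * t + sigma * math.sqrt(t) * z)
    return math.exp(-r * t) * max(s_t - k, 0.0)


for n in (1_000, 100_000):
    price, se = mc_with_stderr(call_sampler, n)
    print(f"N={n:>7}: price={price:.4f}, 95% CI half-width={1.96 * se:.4f}")
```

Going from 1,000 to 100,000 paths multiplies the work by 100 and should shrink the interval by roughly a factor of 10, up to noise in the variance estimate itself.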

How do you price an Asian option with Monte Carlo (worked example)?

Imagine a one-year Asian call option on a stock. The payoff is based not on the stock price at maturity, but on the average monthly stock price over the year. This small change destroys the convenience of the standard European call formula, because the payoff depends on the whole path.

A Monte Carlo pricer begins by simulating a possible monthly path for the stock under the risk-neutral model: perhaps the stock rises early, dips midyear, and finishes slightly above where it started. The option does not care mainly about the final level; it cares about the average of the twelve monthly observations. So on this path, the engine computes that average and compares it with the strike. If the average exceeds the strike, the difference is the payoff; otherwise the payoff is zero. That payoff is then discounted back one year.

Now the engine repeats the exercise over and over. On another path the stock may finish high but spend most of the year below the strike, producing a small payoff. On a third path the stock may briefly spike but mostly trade sideways, again changing the average in a way a terminal-price formula would miss. After many such paths, the pricer averages the discounted payoffs. The option price emerges not from any one path being “likely enough,” but from the law of large numbers across all of them.

The mechanism is worth noticing. Monte Carlo handles the Asian feature naturally because the simulation already stores the full path. Once you have the path, any payoff that can be computed from it is, in principle, priceable. That is why simulation is so useful for path-dependent structures.
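The Asian example can be written down almost literally. The following sketch assumes geometric Brownian motion, twelve equally spaced monthly observations ending at maturity, and an arithmetic average; the function name `mc_asian_call` and all parameter values are assumptions made for illustration.

```python
import math
import random


def mc_asian_call(s0, k, r, sigma, t, n_obs, n_paths, seed=0):
    """Arithmetic-average Asian call with n_obs equally spaced observations."""
    rng = random.Random(seed)
    dt = t / n_obs
    drift = (r - 0.5 * sigma**2) * dt
    vol = sigma * math.sqrt(dt)
    discount = math.exp(-r * t)
    total = 0.0
    for _ in range(n_paths):
        s = s0
        running_sum = 0.0
        for _ in range(n_obs):
            s *= math.exp(drift + vol * rng.gauss(0.0, 1.0))  # exact GBM step
            running_sum += s                                   # track the path average
        average = running_sum / n_obs
        total += discount * max(average - k, 0.0)              # payoff uses the average
    return total / n_paths


asian_price = mc_asian_call(100.0, 100.0, 0.05, 0.2, 1.0, n_obs=12, n_paths=50_000)
print(f"Asian call estimate: {asian_price:.4f}")
```

The only change relative to a vanilla pricer is the inner loop that accumulates the running sum, which is why path-dependent payoffs fit simulation so naturally.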

What are the main sources of error in Monte Carlo pricing (sampling, discretization, model)?

There are three distinct layers of uncertainty in a Monte Carlo price, and they are often blurred together.

The first is sampling error. Even if the model is perfect and the path simulation is exact, a finite number of paths produces only an estimate. This is the cleanest uncertainty because it can be quantified statistically. Confidence intervals come from this layer. If you double the number of paths, the standard error shrinks only by a factor of sqrt(2), roughly 29 percent, because of the square-root rule.

The second is discretization error. Many continuous-time models cannot be sampled exactly at all required times, so you approximate them on a grid. If the payoff is sensitive to what happens between grid points, as barrier options are, a coarse grid can bias the result. This is not fixed by adding more paths. You need a finer time grid, a bridge correction, or a more suitable scheme.
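A small experiment makes the barrier point concrete. The sketch below is illustrative only: it assumes an up-and-out call under geometric Brownian motion whose barrier is meant to be watched continuously, but is checked only at the simulation grid dates. The function name `up_and_out_call` and the parameters are assumptions. Each step is sampled exactly, so the only discretization effect is the monitoring grid.

```python
import math
import random


def up_and_out_call(s0, k, barrier, r, sigma, t, n_steps, n_paths, seed=0):
    """Up-and-out call where the barrier is only checked at n_steps grid dates."""
    rng = random.Random(seed)
    dt = t / n_steps
    drift = (r - 0.5 * sigma**2) * dt
    vol = sigma * math.sqrt(dt)
    discount = math.exp(-r * t)
    total = 0.0
    for _ in range(n_paths):
        s = s0
        alive = True
        for _ in range(n_steps):
            s *= math.exp(drift + vol * rng.gauss(0.0, 1.0))
            if s >= barrier:          # knock-out is detected only on grid dates
                alive = False
                break
        if alive:
            total += discount * max(s - k, 0.0)
    return total / n_paths


coarse = up_and_out_call(100.0, 100.0, 120.0, 0.05, 0.2, 1.0, n_steps=4, n_paths=20_000)
fine = up_and_out_call(100.0, 100.0, 120.0, 0.05, 0.2, 1.0, n_steps=64, n_paths=20_000)
print(f"4 grid dates: {coarse:.4f}   64 grid dates: {fine:.4f}")
```

The coarse grid misses crossings between dates and so overstates the knock-out option's value. Raising `n_paths` tightens the confidence interval around the coarse-grid number but does not move it toward the fine-grid one.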

The third is model error. The underlying dynamics may simply be wrong for the market or product. Maybe volatility is not captured properly, jumps matter, correlation is unstable, or calibration is poor. Monte Carlo often gives a very precise estimate of the wrong quantity if the model underneath is misspecified. This is why regulatory guidance on model risk management matters in practice: all models are imperfect, and complexity increases the chance of misuse or hidden error.

A useful discipline is to ask, after seeing a simulation output: is the uncertainty mostly from too few paths, too coarse a discretization, or too much faith in a fragile model? Each problem requires a different remedy.

Why use variance‑reduction techniques and which ones provide the biggest gains?

| Technique | When to use | Typical gain | Implementation cost |
|---|---|---|---|
| Antithetic variates | Symmetric payoffs | Modest | Low |
| Control variates | Has analytic control | Large | Medium |
| Importance sampling | Rare‑event or tails | Large for tails | High |
| Stratified sampling | Reduce sampling noise | Medium | Medium |

The central practical problem in Monte Carlo is not writing the estimator down. It is making it efficient enough to be useful. Because crude Monte Carlo converges slowly, variance reduction is not an optional refinement; it is often the difference between a usable engine and an impractical one.

The logic is simple. The simulation estimator is a random variable. If you can keep its expected value the same but reduce its variance, then you get tighter confidence intervals from the same number of paths. This is equivalent to getting the same accuracy with fewer paths.

Boyle’s early paper made this point vividly. He showed both the slow convergence of crude Monte Carlo and the impact of variance-reduction techniques such as antithetic variates and control variates. Antithetic variates work by pairing random draws with mirrored draws such as z and -z when z is standard normal. If the corresponding payoff estimates move in opposite directions, averaging the pair reduces variance. This often helps, but the gain can be modest.
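As a minimal sketch of the pairing idea, the code below prices a European call under geometric Brownian motion twice with the same budget of payoff evaluations: once with independent draws and once with antithetic pairs (z, -z). The function names and parameter values are assumptions for illustration. Note that the standard error of the antithetic estimator must be computed from the pair averages, since the two legs of a pair are not independent.

```python
import math
import random
import statistics


def call_payoff(z, s0=100.0, k=100.0, r=0.05, sigma=0.2, t=1.0):
    """Discounted call payoff as a function of one standard normal draw."""
    s_t = s0 * math.exp((r - 0.5 * sigma**2) * t + sigma * math.sqrt(t) * z)
    return math.exp(-r * t) * max(s_t - k, 0.0)


def crude_estimates(n_draws, seed=0):
    rng = random.Random(seed)
    return [call_payoff(rng.gauss(0.0, 1.0)) for _ in range(n_draws)]


def antithetic_estimates(n_pairs, seed=0):
    rng = random.Random(seed)
    out = []
    for _ in range(n_pairs):
        z = rng.gauss(0.0, 1.0)
        out.append(0.5 * (call_payoff(z) + call_payoff(-z)))  # average the mirrored pair
    return out


crude = crude_estimates(100_000)        # 100,000 payoff evaluations
paired = antithetic_estimates(50_000)   # also 100,000 payoff evaluations
crude_se = statistics.stdev(crude) / math.sqrt(len(crude))
anti_se = statistics.stdev(paired) / math.sqrt(len(paired))
print(f"crude:      {statistics.fmean(crude):.4f} +/- {crude_se:.4f}")
print(f"antithetic: {statistics.fmean(paired):.4f} +/- {anti_se:.4f}")
```

Because a call payoff is monotone in z, the two legs of each pair are negatively correlated and the standard error drops, though typically by a modest factor rather than an order of magnitude.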

Control variates are usually more powerful because they exploit structure. You identify a related quantity whose expected value is known analytically and whose simulation error is strongly correlated with the error in your target payoff. Then you correct the noisy estimator using that known benchmark. The magic is not magic at all: if the control tracks the target closely, the unpredictable part of the target shrinks after adjustment. In derivative pricing, a common control variate is a simpler option on the same underlying with known price.
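One illustration of a control variate, chosen here as an example rather than taken from the text, is to price an arithmetic-average Asian call using the geometric-average Asian call as the control. Under geometric Brownian motion the geometric average is lognormal, so its price has a closed form. The sketch assumes equally spaced observations ending at maturity; the names `geometric_asian_call` and `asian_with_control` are hypothetical. The coefficient beta is estimated from the same sample, which introduces a small bias that vanishes as the number of paths grows.

```python
import math
import random
from statistics import NormalDist


def geometric_asian_call(s0, k, r, sigma, t, n_obs):
    """Closed-form price of a discretely monitored geometric-average call under GBM."""
    dt = t / n_obs
    mean = math.log(s0) + (r - 0.5 * sigma**2) * dt * (n_obs + 1) / 2
    var = sigma**2 * dt * (n_obs + 1) * (2 * n_obs + 1) / (6 * n_obs)
    d2 = (mean - math.log(k)) / math.sqrt(var)
    d1 = d2 + math.sqrt(var)
    n = NormalDist()
    return math.exp(-r * t) * (math.exp(mean + 0.5 * var) * n.cdf(d1) - k * n.cdf(d2))


def asian_with_control(s0, k, r, sigma, t, n_obs, n_paths, seed=0):
    """Return (crude price, crude s.e., control-variate price, control-variate s.e.)."""
    rng = random.Random(seed)
    dt = t / n_obs
    drift, vol = (r - 0.5 * sigma**2) * dt, sigma * math.sqrt(dt)
    disc = math.exp(-r * t)
    xs, ys = [], []  # target (arithmetic) and control (geometric) payoffs per path
    for _ in range(n_paths):
        log_s, sum_s, sum_log = math.log(s0), 0.0, 0.0
        for _ in range(n_obs):
            log_s += drift + vol * rng.gauss(0.0, 1.0)
            sum_s += math.exp(log_s)
            sum_log += log_s
        xs.append(disc * max(sum_s / n_obs - k, 0.0))
        ys.append(disc * max(math.exp(sum_log / n_obs) - k, 0.0))
    mx, my = sum(xs) / n_paths, sum(ys) / n_paths
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / (n_paths - 1)
    var_x = sum((x - mx) ** 2 for x in xs) / (n_paths - 1)
    var_y = sum((y - my) ** 2 for y in ys) / (n_paths - 1)
    beta = cov / var_y
    # Correct the noisy mean by the control's known simulation error.
    adjusted = mx - beta * (my - geometric_asian_call(s0, k, r, sigma, t, n_obs))
    resid_var = max(var_x - beta * cov, 0.0)  # variance left after the adjustment
    return mx, math.sqrt(var_x / n_paths), adjusted, math.sqrt(resid_var / n_paths)


crude, crude_se, cv, cv_se = asian_with_control(100.0, 100.0, 0.05, 0.2, 1.0, 12, 20_000)
print(f"crude: {crude:.4f} +/- {crude_se:.4f}   control variate: {cv:.4f} +/- {cv_se:.4f}")
```

The two payoffs are very highly correlated, so the adjusted estimator's standard error should be a small fraction of the crude one for the same paths.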

The deeper lesson is that variance reduction works best when it uses problem-specific information. Generic tricks help, but the biggest gains usually come from understanding what drives noise in the particular payoff you are pricing.

How do special Monte Carlo techniques address path‑dependent payoffs (for example, barrier options)?

Some derivatives create forms of inefficiency that plain sampling handles badly. Barrier options are a good example. A knock-out barrier option becomes worthless if the underlying crosses a specified barrier during its life. In a standard simulation, many paths may knock out early and contribute zero payoff. If knock-out is likely, much of the computational effort is spent generating paths that tell you little beyond “still zero.”

This is where tailored variance-reduction methods become valuable. Glasserman and Staum analyzed a method based on conditioning on one-step survival. Instead of simulating the next step under the original law and often knocking out, you simulate conditional on surviving the next monitoring step. Because this changes the sampling distribution, you apply a likelihood-ratio weight to keep the estimator unbiased. The result is that every simulated path survives each step by construction and therefore remains informative about potentially positive payoff.

The mechanism is instructive. Variance here comes partly from a binary event: many paths die and pay zero. Conditioning removes much of that source of variance, though not the conditional payoff variance among surviving paths. So the method does not “solve barrier options” in a universal sense; it attacks a specific part of the randomness. That is a good example of how advanced Monte Carlo methods are designed: find the source of wasted randomness, then reorganize the simulation so fewer samples are spent on uninformative paths.
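The one-step survival idea can be sketched for a simple case. The code below is an illustration in the spirit of that method, not a reproduction of the published algorithm: it assumes a discretely monitored up-and-out call under geometric Brownian motion, where the one-step survival probability is available from the normal CDF and the conditional draw can be made by inverting the CDF. Function names and parameters are assumptions. A crude estimator is included for comparison.

```python
import math
import random
from statistics import NormalDist

NORMAL = NormalDist()


def up_and_out_crude(s0, k, barrier, r, sigma, t, n_steps, n_paths, seed=0):
    """Plain simulation: a path that touches the barrier on a date pays zero."""
    rng = random.Random(seed)
    dt = t / n_steps
    drift, vol = (r - 0.5 * sigma**2) * dt, sigma * math.sqrt(dt)
    disc, log_b = math.exp(-r * t), math.log(barrier)
    total = total_sq = 0.0
    for _ in range(n_paths):
        log_s, alive = math.log(s0), True
        for _ in range(n_steps):
            log_s += drift + vol * rng.gauss(0.0, 1.0)
            if log_s >= log_b:
                alive = False
                break
        x = disc * max(math.exp(log_s) - k, 0.0) if alive else 0.0
        total += x
        total_sq += x * x
    mean = total / n_paths
    return mean, math.sqrt((total_sq - n_paths * mean * mean) / (n_paths - 1) / n_paths)


def up_and_out_oss(s0, k, barrier, r, sigma, t, n_steps, n_paths, seed=0):
    """Each step is drawn conditional on survival and weighted by its probability."""
    rng = random.Random(seed)
    dt = t / n_steps
    drift, vol = (r - 0.5 * sigma**2) * dt, sigma * math.sqrt(dt)
    disc, log_b = math.exp(-r * t), math.log(barrier)
    total = total_sq = 0.0
    for _ in range(n_paths):
        log_s, weight = math.log(s0), 1.0
        for _ in range(n_steps):
            z_max = (log_b - log_s - drift) / vol      # largest shock that survives
            p_survive = NORMAL.cdf(z_max)
            weight *= p_survive                        # likelihood-ratio weight
            u = p_survive * (1.0 - rng.random())       # uniform on (0, p_survive]
            u = min(max(u, 1e-15), 1.0 - 1e-12)        # keep inv_cdf inside (0, 1)
            log_s += drift + vol * NORMAL.inv_cdf(u)   # truncated-normal draw
        x = weight * disc * max(math.exp(log_s) - k, 0.0)
        total += x
        total_sq += x * x
    mean = total / n_paths
    return mean, math.sqrt((total_sq - n_paths * mean * mean) / (n_paths - 1) / n_paths)


args = (100.0, 100.0, 120.0, 0.05, 0.2, 1.0, 12, 20_000)
crude_price, crude_se = up_and_out_crude(*args)
oss_price, oss_se = up_and_out_oss(*args)
print(f"crude: {crude_price:.4f} +/- {crude_se:.4f}   survival: {oss_price:.4f} +/- {oss_se:.4f}")
```

Both estimators target the same price. The conditioned version replaces the all-or-nothing knock-out indicator with a smooth weight, which is where the variance saving comes from.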

How does Monte Carlo handle American options and the early‑exercise decision?

Monte Carlo handles European-style payoffs naturally because the payoff is determined once the path is complete. American-style options are harder because the holder may exercise before maturity, and optimal exercise depends on comparing immediate exercise value with continuation value at each exercise date.

That comparison is not directly visible from a single simulated path. The continuation value is itself a conditional expectation of future payoffs given the current state. So the problem becomes recursive: Monte Carlo is trying to estimate a quantity that depends on other conditional expectations inside the path. This is why American options are a special topic in the Monte Carlo literature rather than a trivial extension.

In practice, simulation-based methods approximate continuation values, often using regression or dual formulations. The exact details can become technical, but the key idea is intuitive: at each exercise opportunity, estimate what it is worth to continue rather than exercise, based on the state variables available then. Early exercise is optimal when immediate exercise beats that estimated continuation value. Monte Carlo can do this, but it loses some of the clean simplicity it has for purely terminal payoffs.
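A regression-based approach can be sketched compactly. The code below is a simplified least-squares Monte Carlo illustration for a put exercisable on a fixed set of dates under geometric Brownian motion. The quadratic basis in S/K, the restriction of the regression to in-the-money paths, the parameter values, and the names `solve3` and `lsm_american_put` are all choices made for this example. Exercise at time 0 is not considered, and the same paths are used to fit and to value the policy, which introduces some bias.

```python
import math
import random


def solve3(a, b):
    """Solve a 3x3 linear system by Gaussian elimination with partial pivoting."""
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda i: abs(m[i][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for row in range(col + 1, 3):
            factor = m[row][col] / m[col][col]
            for j in range(col, 4):
                m[row][j] -= factor * m[col][j]
    x = [0.0, 0.0, 0.0]
    for row in (2, 1, 0):
        s = sum(m[row][j] * x[j] for j in range(row + 1, 3))
        x[row] = (m[row][3] - s) / m[row][row]
    return x


def lsm_american_put(s0, k, r, sigma, t, n_steps, n_paths, seed=0):
    rng = random.Random(seed)
    dt = t / n_steps
    drift, vol = (r - 0.5 * sigma**2) * dt, sigma * math.sqrt(dt)
    disc = math.exp(-r * dt)
    paths = []  # paths[i][j] is the price on path i at exercise date j + 1
    for _ in range(n_paths):
        s, path = s0, []
        for _ in range(n_steps):
            s *= math.exp(drift + vol * rng.gauss(0.0, 1.0))
            path.append(s)
        paths.append(path)
    # cash[i]: value of following the estimated policy, as of the current date.
    cash = [max(k - p[-1], 0.0) for p in paths]
    for j in range(n_steps - 2, -1, -1):
        cash = [disc * c for c in cash]           # roll values back one date
        itm = [i for i in range(n_paths) if paths[i][j] < k]
        if len(itm) < 3:
            continue
        # Least-squares fit of continuation value on (1, x, x^2), x = S / K.
        ata = [[0.0] * 3 for _ in range(3)]
        aty = [0.0] * 3
        for i in itm:
            x = paths[i][j] / k
            basis = (1.0, x, x * x)
            for p in range(3):
                aty[p] += basis[p] * cash[i]
                for q in range(3):
                    ata[p][q] += basis[p] * basis[q]
        c0, c1, c2 = solve3(ata, aty)
        for i in itm:
            x = paths[i][j] / k
            exercise = k - paths[i][j]
            if exercise > c0 + c1 * x + c2 * x * x:   # exercise beats continuing
                cash[i] = exercise
    return disc * sum(cash) / n_paths


american_put = lsm_american_put(36.0, 40.0, 0.06, 0.2, 1.0, n_steps=10, n_paths=20_000)
print(f"Put with 10 exercise dates: {american_put:.4f}")
```

The regression stands in for the conditional expectation that a single path cannot reveal. The fitted value is used only to make the exercise decision, while the cash flow recorded on each path is the realized one.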

How are Greeks and exposure profiles estimated with Monte Carlo?

| Method | Mechanism | Bias/variance | Best for |
|---|---|---|---|
| Bump-and-revalue | Finite-difference reprice | High variance, can bias | Simple payoffs |
| Pathwise derivative | Differentiate payoff along path | Low variance if smooth | Smooth payoffs |
| Likelihood ratio | Differentiate path density | Can be high variance | Discontinuous payoffs |
| Adjoint (AAD) | Reverse-mode differentiation | Low variance, efficient | Many Greeks simultaneously |

Pricing is usually only the start. Traders and risk managers need sensitivities: delta, gamma, vega, rho, and more exotic risk measures. Monte Carlo is also used to estimate these, though again the numerical challenge is more subtle than the basic idea suggests.

The naive approach is bump-and-revalue: slightly change an input, rerun the simulation, and use finite differences. This is easy to understand but can be noisy and expensive, especially when many risk measures are required. The literature therefore studies more efficient estimators, including pathwise derivative methods and likelihood-ratio methods, though the exact choice depends on the payoff regularity and model structure.
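To make the contrast concrete, the sketch below estimates the delta of a European call under geometric Brownian motion in two ways: a central finite difference that reuses the same random draws for both repricings, and a pathwise estimator that differentiates the discounted payoff along each path. The function names, bump size, and parameters are assumptions for illustration. The Black-Scholes delta serves as a benchmark.

```python
import math
import random
from statistics import NormalDist


def call_price_mc(s0, k, r, sigma, t, normals):
    """Average discounted call payoff over a fixed list of standard normal draws."""
    drift, vol, disc = (r - 0.5 * sigma**2) * t, sigma * math.sqrt(t), math.exp(-r * t)
    payoffs = (disc * max(s0 * math.exp(drift + vol * z) - k, 0.0) for z in normals)
    return sum(payoffs) / len(normals)


def bump_delta(s0, k, r, sigma, t, normals, bump=0.5):
    """Central finite difference, reusing the same draws for both repricings."""
    up = call_price_mc(s0 + bump, k, r, sigma, t, normals)
    down = call_price_mc(s0 - bump, k, r, sigma, t, normals)
    return (up - down) / (2.0 * bump)


def pathwise_delta(s0, k, r, sigma, t, normals):
    """Pathwise estimator: d(payoff)/d(s0) = 1{S_T > K} * S_T / s0 on each path."""
    drift, vol, disc = (r - 0.5 * sigma**2) * t, sigma * math.sqrt(t), math.exp(-r * t)
    total = 0.0
    for z in normals:
        s_t = s0 * math.exp(drift + vol * z)
        if s_t > k:
            total += disc * s_t / s0
    return total / len(normals)


rng = random.Random(0)
draws = [rng.gauss(0.0, 1.0) for _ in range(100_000)]
s0, k, r, sigma, t = 100.0, 100.0, 0.05, 0.2, 1.0   # illustrative parameters
d1 = (math.log(s0 / k) + (r + 0.5 * sigma**2) * t) / (sigma * math.sqrt(t))
exact_delta = NormalDist().cdf(d1)
fd_delta = bump_delta(s0, k, r, sigma, t, draws)
pw_delta = pathwise_delta(s0, k, r, sigma, t, draws)
print(f"bump: {fd_delta:.4f}  pathwise: {pw_delta:.4f}  closed form: {exact_delta:.4f}")
```

The bump approach needs two full repricings per sensitivity, while the pathwise estimator gets delta from a single pass. The pathwise trick relies on the payoff being continuous in the underlying, which is why it does not carry over directly to digitals or barriers.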

This matters because Monte Carlo is deeply tied to risk management, not just front-office pricing. The same ability to simulate future paths under complex dynamics makes it useful for portfolio exposures, market risk, and credit risk calculations. In modern practice, the line between “pricing engine” and “risk engine” is often thinner than it sounds. A system that can price a path-dependent derivative by simulation can often be extended to produce scenario-based sensitivities and exposure profiles across a portfolio.

Why do hardware and architecture matter for production Monte Carlo workloads?

Monte Carlo is embarrassingly parallel in a very specific sense: many paths can be simulated independently once the model and random-number streams are set up. That makes the method well suited to vectorized CPUs, clusters, and GPUs. The economic reason is obvious: if error falls slowly with N, practitioners compensate by throwing hardware at the problem.

Benchmarking reflects this reality. STAC’s derivatives-risk benchmark, for example, evaluates a compute-intensive Monte Carlo Greeks workload involving a Heston-based, path-dependent, multi-asset option with early exercise. That is a good proxy for why production systems are performance-sensitive: the pricing problem is not one payoff on one path, but a large nested computational workload where latency, throughput, and energy efficiency all matter.

The implementation lesson is that algorithm design and hardware design interact. A poor variance-reduction strategy can waste even excellent hardware. Conversely, a theoretically elegant method that does not map well to parallel execution may disappoint in practice. Modern GPU-oriented architectures exploit the fact that many paths can be batched together, reducing overhead and increasing throughput for large simulation jobs.

What are Monte Carlo’s limits and what problems does it not solve?

There is a recurring temptation to treat Monte Carlo as a universal answer because it can price almost anything in principle. But several limits matter.

First, simulation does not remove dependence on the risk-neutral model. If the model is badly calibrated or structurally inappropriate, Monte Carlo will estimate the wrong price with great discipline. Second, slow convergence remains a real cost, especially for payoffs with rare but important events. Third, path discretization can interact badly with discontinuous payoffs such as barriers and digitals. Fourth, early-exercise features, nested credit adjustments, and large portfolios can make the computational burden severe.

There is also an institutional limit. Complex simulation engines create model risk. Supervisory guidance from the Federal Reserve and OCC emphasizes that model risk grows with complexity, uncertainty in inputs and assumptions, extent of use, and potential impact. That is directly relevant here. A Monte Carlo engine has many failure points: the stochastic model, calibration, random-number generation, discretization scheme, payoff code, variance-reduction logic, parallel implementation, and reporting layer. Validation therefore has to test conceptual soundness, ongoing performance, benchmarking, and outcomes where observable.

That governance point is not peripheral. In derivatives pricing, a model can be mathematically sophisticated and operationally dangerous at the same time.

When should you choose Monte Carlo instead of closed‑form, PDE or tree methods?

| Scenario | Preferred method | Why | Use Monte Carlo? |
|---|---|---|---|
| Vanilla European | Closed-form (Black–Scholes) | Exact and fast | Only for nonstandard features |
| Low-dim early exercise | PDE or lattice | Handles early exercise | Prefer PDE unless high-dim |
| Path-dependent high-dim | Monte Carlo | Handles path functionals | MC recommended |
| Jumps or stochastic vol | Monte Carlo or numeric | Flexible dynamics | Use MC if no formula |

The practical question is not whether Monte Carlo is good in the abstract. It is whether it is the right numerical tool for the derivative at hand.

If the payoff is simple and a reliable closed form exists, Monte Carlo is usually not the first choice for a standalone price. A formula will be faster and exact within the model. If the state space is low-dimensional and early exercise is central, lattice or PDE methods may be more efficient. But once the payoff depends on many risk factors, many dates, complicated path functionals, or nonstandard dynamics, Monte Carlo often becomes the natural method because its complexity grows more gently with dimensionality.

That is the key tradeoff to remember tomorrow: Monte Carlo gives up exactness at finite sample size in exchange for flexibility in high-dimensional, path-dependent problems. It is not preferred because it is elegant. It is preferred because many important derivatives are easier to simulate than to solve.

Conclusion

Monte Carlo derivatives pricing works by turning “price equals discounted expected payoff” into a computational experiment. You choose a risk-neutral model, simulate many future paths, compute the payoff on each one, discount, and average. The idea is simple; the craft lies in making the estimate accurate, efficient, and trustworthy.

What matters most is not the randomness itself but the structure underneath it. Monte Carlo is valuable when the derivative is too complex for closed-form or grid-based methods, especially with path dependence, multiple risk factors, or rich dynamics. But the output is only as good as the model, the path generation, the variance control, and the validation around it. In practice, that is why Monte Carlo is both essential and never quite automatic.

Frequently Asked Questions

Monte Carlo is not model-free. It is a numerical wrapper around a chosen risk‑neutral model: you still have to specify dynamics, generate paths from that model, compute payoffs, and average discounted results. It does not eliminate modeling assumptions or calibration choices.

There are three distinct error layers: sampling error (statistical noise that falls like 1/sqrt(N)), discretization error from time‑stepping or approximate path generation, and model error from misspecified dynamics or poor calibration - each requires a different fix (more paths, finer/better schemes, or model validation respectively).

Variance‑reduction can often cut effective required trials by orders of magnitude, but gains are problem‑specific; simple antithetic variates give modest improvements while control variates are usually much more powerful when you can simulate a correlated quantity with known exact expectation (Boyle 1977 and follow‑ups show large practical gains).

For barrier/knock‑out products, conditioning on survival for each monitoring step and applying a likelihood‑ratio weight (the one‑step survival estimator of Glasserman & Staum) reduces wasted paths that immediately knock out, though its efficiency depends on monitoring frequency and knowledge or estimation of one‑step transition probabilities.

Monte Carlo can price American options, but they are harder because optimal exercise requires estimating conditional continuation values at each exercise date; simulation methods typically approximate those continuation values with regression (e.g., least‑squares Monte Carlo) or use dual formulations, making implementation and error analysis more complex than for European payoffs.

Bump‑and‑revalue is simple but inefficient and noisy; more efficient and often unbiased methods include the pathwise (infinitesimal perturbation) estimator and the likelihood‑ratio (score) method, and the right choice depends on payoff differentiability and the model’s structure.

Prefer Monte Carlo when payoffs are path‑dependent, multi‑factor, or the model includes jumps/stochastic volatility so closed forms or grids become impractical; for low‑dimensional, vanilla claims with known formulas or PDE/tree efficiency, those methods typically win on speed and determinism.

Model risk governance is essential: firms should inventory models, document assumptions, perform independent validation and benchmarking, and apply conservative adjustments where appropriate - supervisory guidance (e.g., SR 11‑7 and OCC bulletins) emphasizes board/senior‑management oversight and periodic revalidation for complex valuation models.

Monte Carlo is highly parallelizable so GPUs, multi‑core CPUs and clusters can greatly reduce wall‑clock time, but hardware gains matter only if algorithms and variance‑reduction map well to the architecture; recent STAC benchmarks and GPU reference stacks show significant practical speedups for production‑sized Heston/early‑exercise workloads when software and hardware are co‑designed.
