What Is Tick Data?

Learn what tick data is, how market feeds encode trades, quotes, and order-book events, and why tick-level sequencing matters for analysis and trading.

Introduction

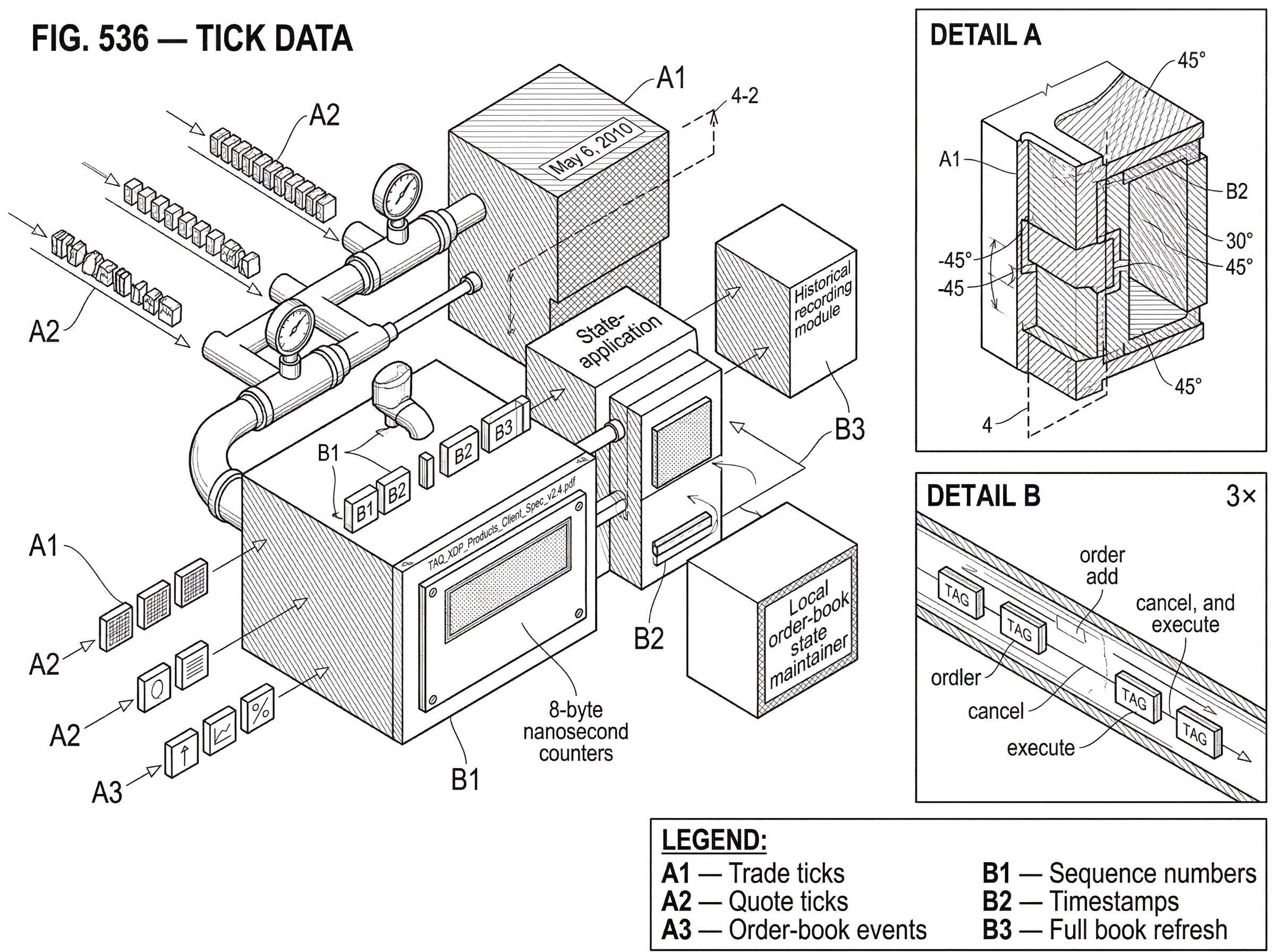

Tick data is the most granular form of market data in common use: a record of individual market updates such as trades, quote changes, and sometimes order-book events. If you want to know not just where a market was, but how it moved through time, tick data is the raw material.

That distinction matters more than it first appears. A one-minute bar can tell you that a stock traded between 100.00 and 100.20 and closed at 100.15. It cannot tell you whether the market moved smoothly upward, gapped around on thin liquidity, or briefly traded through multiple levels before snapping back. Tick data exists because market behavior is path-dependent. The sequence of events (which quote changed first, which trades printed, how the spread widened or narrowed, when liquidity disappeared) often matters as much as the final price.

In practice, “tick” does not mean only “one trade.” Different feeds define ticks differently. A tick may be a trade report, a best-bid-or-offer update, an auction imbalance message, or an order-book event such as add, cancel, replace, or execute. The unifying idea is simpler: a tick is an atomic market event as represented by a feed. That last phrase matters, because tick data is never the market in some pure abstract sense. It is always the market as observed, encoded, sequenced, and distributed by a particular venue, consolidator, or data vendor.

Why do trading systems and analytics need tick data?

| Use case | Sequencing needed? | Best data | Why it matters |
|---|---|---|---|
| Execution analysis | Yes | Trade+quote ticks | Determine fill plausibility |
| Spread analysis | Yes | Quote-level ticks | Time-resolved spread changes |
| Surveillance / post-mortem | Yes | Order-level / depth ticks | Reconstruct event chains |
| Intraday backtesting | Yes | Timestamped ticks | Signal and fill coexistence |
| Dashboarding / long-term | No | Minute bars | Aggregated market summary |

Markets are matching systems, not just price lists. Orders arrive, rest, cancel, execute, and interact under timing rules. The visible state at any moment is the result of that event stream. If you compress the stream too early into bars or snapshots, you keep the outcomes but lose much of the mechanism.

This is why tick data is indispensable for any question where timing and sequencing matter. Execution analysis depends on whether your order would have traded before or after a queue moved. Spread analysis depends on when quotes changed, not just what the average spread was over a minute. Market surveillance depends on reconstructing exact sequences around suspicious prints or sudden dislocations. Backtesting of intraday strategies depends on whether a signal and a fill could plausibly have coexisted at the same time. Even simple-looking metrics, such as slippage or realized spread, rest on event-level observations.

There is a deeper reason too. Most familiar market summaries are derived objects. OHLC candles are aggregations of lower-level trade or quote events. A national best bid and offer is a cross-venue consolidation of venue-level quotes. A depth chart is a transformed view of order-book state reconstructed from updates. Tick data exists because downstream views are useful only if someone preserves the event stream they were built from.
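The point that a candle is a lossy aggregation can be made concrete with a small sketch. The two trade paths below are invented for illustration (prices in cents, sizes in shares), and `to_bar` is a hypothetical helper, not any vendor's bar-building rule.

```python
from typing import List, NamedTuple, Tuple


class Bar(NamedTuple):
    open: int
    high: int
    low: int
    close: int
    volume: int


def to_bar(trades: List[Tuple[int, int]]) -> Bar:
    """Collapse time-ordered (price_in_cents, size) trades into one OHLC bar."""
    prices = [price for price, _ in trades]
    volume = sum(size for _, size in trades)
    return Bar(prices[0], max(prices), min(prices), prices[-1], volume)


# Two made-up paths through the same minute: a steady climb and a whipsaw.
smooth = [(10000, 100), (10005, 100), (10010, 100), (10020, 100), (10015, 100)]
choppy = [(10000, 100), (10020, 100), (10000, 100), (10020, 100), (10015, 100)]

same_bar = to_bar(smooth) == to_bar(choppy)  # the bar cannot tell them apart
same_path = smooth == choppy                 # the tick sequences differ
```

Both paths produce the same open, high, low, close, and volume, so anything that depends on the order of prints has to be computed before aggregation.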

What events count as a "tick" in market data?

The cleanest way to understand tick data is to separate economic events from feed events. An economic event is something like a trade occurring or a quote becoming the new best bid. A feed event is the message that tells you about it. In real systems, you consume the second in order to infer the first.

That is why different feeds produce different kinds of tick data. A top-of-book feed gives you updates whenever the best bid or best offer changes. IEX TOPS, for example, provides real-time top-of-book quotations, last-sale information, trading-status messages, and auction information, but not the full depth of the order book. It reports aggregated best sizes rather than the sizes of individual resting orders, and it excludes routed executions. That is still tick data, just at the top-of-book level.

A depth-of-book feed goes further. NYSE OpenBook Ultra publishes price-level depth updates with aggregated volume and order counts at each price point, and it distinguishes complete book images from incremental changes. Nasdaq TotalView-ITCH goes further still by publishing order-level events that let a receiver track the life of displayed orders through add, cancel, replace, and execution messages. Here the tick is not merely “the quote changed”; it is “this specific order was added” or “that reference number partially executed.”

Historical tick products preserve those feed events after the fact. NYSE’s TAQ XDP products are described as end-of-day historical records of what was published on the real-time XDP feeds for a given day. Each TAQ record corresponds to a single real-time data event, and the records are kept in the same order as the real-time feed. That design tells you what historical tick data is trying to preserve: not merely values, but the event sequence itself.

So the answer to “what counts as a tick?” is not a universal market-law definition. It depends on feed scope. Trade-only feeds produce trade ticks. Quote feeds produce quote ticks. Full-depth feeds produce book-state ticks. Auction feeds produce imbalance and indicative-price ticks. What makes them all tick data is their event-level granularity.

How does an ordered event stream make tick data replayable?

The central idea that makes tick data click is this: tick data is a replayable log of state changes.

Suppose you want to know the current best bid and offer for a symbol. One way is to ask for a snapshot every time you care. The better way, for speed and scale, is to receive a starting state and then a stream of updates. If the feed says “best bid becomes 100.01 for 500 shares,” then later “best offer becomes 100.03 for 200 shares,” then later “trade at 100.03 for 100 shares,” you can maintain the evolving state locally. The feed does not need to resend the entire market after every change. It sends the deltas.
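A minimal sketch of that idea follows, assuming a simplified event shape of `(kind, price, size)` with prices as integers in units of 1/10000 of a dollar. The `TopOfBook` class and the event kinds are illustrative names, not any venue's message schema.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Quote = Tuple[int, int]  # (price in 1/10000 dollars, shares)


@dataclass
class TopOfBook:
    bid: Optional[Quote] = None
    ask: Optional[Quote] = None
    last_trade: Optional[Quote] = None

    def apply(self, kind: str, price: int, size: int) -> None:
        """Apply one delta; only the field named by `kind` changes."""
        if kind == "bid":
            self.bid = (price, size)
        elif kind == "ask":
            self.ask = (price, size)
        elif kind == "trade":
            self.last_trade = (price, size)
        else:
            raise ValueError(f"unknown event kind: {kind}")

    def spread(self) -> Optional[int]:
        """Quoted spread in price units, or None until both sides are known."""
        if self.bid is None or self.ask is None:
            return None
        return self.ask[0] - self.bid[0]


book = TopOfBook()
events = [("bid", 1_000_100, 500), ("ask", 1_000_300, 200), ("trade", 1_000_300, 100)]
for event in events:
    book.apply(*event)
```

In this sketch a trade does not shrink the displayed offer by itself; the assumption is that the feed would send a separate quote update if the best offer changed.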

That is why sequencing is fundamental. If you apply updates in the wrong order, you reconstruct the wrong state. Exchange specifications reflect this very explicitly. NYSE TAQ states that records are in the same order as the real-time feed. OpenBook Ultra packets include packet sequence numbers, and clients use them to detect missing data. Cboe One uses a sequenced unit header for both multicast and TCP delivery. Sequence numbers are not decoration; they are the mechanism that turns many independent messages into one coherent timeline.
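Gap detection on a sequenced stream can be sketched in a few lines. This version assumes one sequence number per unit that increases by exactly one; real feeds differ (some number messages rather than packets), so `GapDetector` is a hypothetical illustration rather than any exchange's prescribed logic.

```python
from typing import Optional, Tuple


class GapDetector:
    """Track the next expected sequence number and classify each arrival."""

    def __init__(self, first_expected: int = 1) -> None:
        self.expected = first_expected

    def check(self, seq: int) -> Tuple[str, Optional[Tuple[int, int]]]:
        """Return (status, missing_range) where status is ok, duplicate, or gap."""
        if seq == self.expected:
            self.expected += 1
            return "ok", None
        if seq < self.expected:
            # Already seen, for example a retransmission overlapping live data.
            return "duplicate", None
        missing = (self.expected, seq - 1)  # inclusive range to request
        self.expected = seq + 1
        return "gap", missing


detector = GapDetector(first_expected=1)
results = [detector.check(seq) for seq in (1, 2, 5, 3, 6)]
```

The missing range returned on a gap is what a client would pass to whatever retransmission or refresh mechanism the feed offers.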

This also explains why recovery procedures are built into feed design. Multicast is fast and scalable, but packets can be missed. So exchanges pair it with retransmission or refresh paths. NYSE OpenBook Ultra allows clients to request retransmissions or refreshes over TCP. IEX TOPS offers gap fills over TCP or UDP unicast. Cboe One supports gap recovery through a gap-request service and advises that larger gaps be recovered via TCP replay. These recovery systems exist because a tick stream is only useful if the receiver can restore continuity after loss.

A worked example makes the mechanism concrete. Imagine a client starting up on a depth feed. First it receives a full update: a complete image of the visible order book for a symbol. That is the base state. Then it begins receiving incremental delta updates: one price level size increases, another is removed because its quantity falls to zero, a new best offer appears, and then a trade reduces displayed liquidity at that price. If the client processes every delta in sequence, it always has a current local copy of the book. If it misses one packet in the middle, the local state becomes suspect. At that point the client cannot safely “guess” the missing change; it must request retransmission or refresh the symbol. Tick-data handling is therefore not just parsing messages. It is maintaining state under uncertainty while preserving ordering guarantees.
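The worked example can be sketched as a small price-level book that refuses to apply deltas once continuity is lost. The class name, the `"B"`/`"S"` side codes, and the rule that a size of zero removes a level are assumptions made for illustration, not a specific feed's semantics.

```python
from typing import Dict, Optional


class PriceLevelBook:
    """Local copy of a price-level book built from a full image plus deltas."""

    def __init__(self) -> None:
        self.bids: Dict[int, int] = {}  # price -> aggregated size
        self.asks: Dict[int, int] = {}
        self.next_seq: Optional[int] = None
        self.stale = True               # untrusted until a full image arrives

    def load_snapshot(self, seq: int, bids: Dict[int, int], asks: Dict[int, int]) -> None:
        """Replace all state with a full image and resume sequencing after it."""
        self.bids, self.asks = dict(bids), dict(asks)
        self.next_seq = seq + 1
        self.stale = False

    def apply_delta(self, seq: int, side: str, price: int, size: int) -> bool:
        """Apply one delta; return False (and mark stale) if continuity is broken."""
        if self.stale:
            return False
        if seq != self.next_seq:
            self.stale = True           # never guess the missing change
            return False
        levels = self.bids if side == "B" else self.asks
        if size == 0:
            levels.pop(price, None)     # level removed
        else:
            levels[price] = size        # level added or resized
        self.next_seq += 1
        return True

    def best_bid(self) -> Optional[int]:
        return max(self.bids) if self.bids else None

    def best_ask(self) -> Optional[int]:
        return min(self.asks) if self.asks else None


book = PriceLevelBook()
book.load_snapshot(10, bids={1_000_000: 500, 999_900: 300}, asks={1_000_200: 200})
applied = [
    book.apply_delta(11, "B", 1_000_000, 700),  # level size increases
    book.apply_delta(12, "S", 1_000_200, 0),    # level removed
    book.apply_delta(13, "S", 1_000_100, 400),  # new best offer
    book.apply_delta(15, "B", 999_900, 0),      # sequence 14 was missed
]
```

After the missed packet the book keeps its last known contents but is flagged stale, which is the signal to request a retransmission or load a fresh image.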

What do tick timestamps mean, and what are their limits?

Tick data is often associated with very high timestamp precision: nanoseconds in many equity-feed specifications. NYSE TAQ timestamps are formatted down to nanoseconds. IEX TOPS timestamps are 8-byte nanosecond counters since the POSIX epoch and are documented as establishing a happened-before ordering inside the IEX trading system. Nasdaq ITCH splits time into a seconds message plus per-message nanoseconds for bandwidth efficiency. FIX even defines an addendum extending timestamp precision to picoseconds.
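Two of those conventions can be sketched in Python. The helper names are hypothetical, and treating the 8-byte counter as a signed little-endian integer is an assumption to verify against the feed specification. Python's `datetime` only holds microseconds, so the sketch keeps the raw integer for ordering and uses the `datetime` for display.

```python
import struct
from datetime import datetime, timezone
from typing import Tuple

NANOS_PER_SECOND = 1_000_000_000


def decode_epoch_nanos(raw: bytes) -> Tuple[int, datetime]:
    """Decode 8 little-endian bytes of nanoseconds since the POSIX epoch.

    Returns the exact integer plus a UTC datetime truncated to microseconds.
    """
    (nanos,) = struct.unpack("<q", raw)  # signedness is an assumption
    seconds, remainder = divmod(nanos, NANOS_PER_SECOND)
    display = datetime.fromtimestamp(seconds, tz=timezone.utc).replace(
        microsecond=remainder // 1000
    )
    return nanos, display


def combine_seconds_and_nanos(seconds: int, nanos: int) -> int:
    """Merge a seconds value and a per-message nanosecond offset into one counter."""
    if not 0 <= nanos < NANOS_PER_SECOND:
        raise ValueError("nanosecond offset out of range")
    return seconds * NANOS_PER_SECOND + nanos


raw_field = struct.pack("<q", 1_700_000_000_123_456_789)
exact_nanos, shown = decode_epoch_nanos(raw_field)
```

Sorting or comparing on `exact_nanos` preserves the last three digits that the `datetime` drops.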

But precision is not the same thing as truth. A timestamp with nine decimal places tells you the field is represented at nanosecond granularity. It does not automatically mean the whole end-to-end system knows event time to nanosecond accuracy. There are several layers here: the clock source, the synchronization method, the timestamping point in the system, and the semantics of the field.

The semantics matter most. IEX’s documentation is unusually clear: timestamps define happened-before ordering within the IEX trading system. That is a strong and useful claim, but it is not the same as saying you can compare any two venues’ timestamps and recover a single perfect cross-market causal order. Across venues, different matching engines, network paths, and clock disciplines intervene.

Time synchronization exists to make those comparisons meaningful enough to be useful. NTPv4 is a widely used protocol for synchronizing clocks and, under good conditions on fast local networks, can reach accuracy in the tens of microseconds. In more demanding environments, operators often use GPS-disciplined clocks as reference sources because GPS time is widely available and traceable to UTC. The broad point is that high-quality tick data depends on high-quality time infrastructure. Without disciplined clocks, sub-millisecond sequencing across systems becomes partly guesswork.

A tempting misunderstanding to avoid is this: “If a feed says nanoseconds, I can totally order the market.” Usually you can totally order events within that feed’s own sequencing model. You often cannot infer a perfect global order across all venues from timestamps alone. For that, you need to understand feed semantics, capture locations, and synchronization limits.
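One way to make that limit explicit is to carry a clock-error bound alongside each timestamp and only claim an order when the uncertainty intervals do not overlap. This is a simplified sketch: the error bounds are numbers you would have to estimate for your own capture setup, and `order_relation` is a hypothetical helper.

```python
def order_relation(ts_a: int, err_a: int, ts_b: int, err_b: int) -> str:
    """Compare two timestamps (nanoseconds) given symmetric error bounds.

    Each event is treated as lying somewhere in [ts - err, ts + err].
    """
    if ts_a + err_a < ts_b - err_b:
        return "a_before_b"
    if ts_b + err_b < ts_a - err_a:
        return "b_before_a"
    return "indeterminate"


# Events 40 microseconds apart, with two assumed levels of clock error.
tight = order_relation(1_000_000, 5_000, 1_040_000, 5_000)    # 5 us error each
loose = order_relation(1_000_000, 50_000, 1_040_000, 50_000)  # 50 us error each
```

With tight bounds the order is recoverable; with looser bounds the same two timestamps no longer support any ordering claim.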

How are market events encoded in tick messages?

Tick data feels abstract until you see that it is really a language for describing state changes compactly.

Some feeds use binary fixed-field encodings for speed. IEX TOPS uses little-endian binary messages and encodes prices as signed 8-byte fixed-point integers with an implied four decimal places. Nasdaq ITCH uses integer price fields in fixed-point form with four decimal digits. NYSE OpenBook Ultra encodes prices as integer numerators interpreted using a shared PriceScaleCode, which defines the denominator. These are different conventions, but they solve the same problem: represent prices exactly and cheaply without floating-point ambiguity.
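A short sketch shows why integer encodings avoid floating-point ambiguity. The function names are hypothetical, and reading the scale code as a power-of-ten exponent for the denominator is an assumption for illustration; the feed specification defines the real rule.

```python
import struct
from decimal import Decimal


def decode_fixed4(raw: bytes) -> Decimal:
    """Decode a signed 8-byte little-endian price with four implied decimals."""
    (units,) = struct.unpack("<q", raw)
    return Decimal(units).scaleb(-4)


def decode_scaled(numerator: int, scale_code: int) -> Decimal:
    """Decode an integer numerator, assuming the denominator is 10 ** scale_code."""
    return Decimal(numerator).scaleb(-scale_code)


wire_price = struct.pack("<q", 1_000_300)   # 100.0300 on the wire
price = decode_fixed4(wire_price)
same_price = decode_scaled(10_003, 2)       # 100.03 with two decimals

# Binary floating point cannot represent most decimal prices exactly.
float_is_exact = (0.1 + 0.2) == 0.3
decimal_is_exact = (Decimal(1).scaleb(-1) + Decimal(2).scaleb(-1)) == Decimal(3).scaleb(-1)
```

Keeping prices as integers (or `Decimal`) until display means equality checks and price-level keys behave as expected.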

The same pattern appears in message design. A feed does not say “the book now looks like this” unless it is sending a refresh. Instead it says “add this order,” “replace that order,” “remove this level,” or “this trade occurred.” The receiver is expected to know the message schema and update local state. Message types therefore matter as much as values. NYSE TAQ historical products enumerate message types for adds, modifies, deletes, executions, quotes, trades, imbalances, and more. Nasdaq ITCH has dedicated messages for orders, trades, and net order imbalance indicators. IEX TOPS distinguishes quote updates, trade reports, trade breaks, auction information, official prices, and retail liquidity indicators.

This is why parsing tick data is less like reading a spreadsheet and more like interpreting an event protocol. Two rows with the same price and size can mean very different things if one is a new quote and the other is a delete. Context is carried by message type, symbol, side, timestamp, sequence number, and sometimes reference identifiers linking an event to a specific order or price level.
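The sketch below illustrates how message type and a reference identifier carry the meaning. The tuple layouts and type names (`add`, `execute`, `cancel`, `delete`, `replace`) are invented for this example and are not the wire format of any feed.

```python
from collections import defaultdict
from typing import Dict, List


class OrderLevelBook:
    """Track displayed orders by reference number from order-level events."""

    def __init__(self) -> None:
        self.orders: Dict[int, List] = {}  # ref -> [side, price, remaining shares]

    def apply(self, msg: tuple) -> None:
        kind, ref = msg[0], msg[1]
        if kind == "add":
            _, _, side, price, shares = msg
            self.orders[ref] = [side, price, shares]
        elif kind in ("execute", "cancel"):
            order = self.orders[ref]
            order[2] -= msg[2]             # reduce remaining shares
            if order[2] <= 0:
                del self.orders[ref]
        elif kind == "delete":
            del self.orders[ref]
        elif kind == "replace":
            _, _, new_ref, price, shares = msg
            side = self.orders.pop(ref)[0]  # side carries over to the new order
            self.orders[new_ref] = [side, price, shares]
        else:
            raise ValueError(f"unknown message type: {kind}")

    def depth(self, side: str) -> Dict[int, int]:
        """Aggregate remaining shares per price level for one side."""
        levels: Dict[int, int] = defaultdict(int)
        for order_side, price, shares in self.orders.values():
            if order_side == side:
                levels[price] += shares
        return dict(levels)


book = OrderLevelBook()
for msg in [
    ("add", 1, "B", 1_000_000, 300),
    ("add", 2, "B", 1_000_000, 200),
    ("execute", 1, 100),                # same ref, shares now 200
]:
    book.apply(msg)
depth_mid = book.depth("B")

for msg in [("replace", 2, 3, 999_900, 200), ("delete", 1)]:
    book.apply(msg)
depth_end = book.depth("B")
```

The `execute` and `cancel` tuples have the same shape and the same arithmetic effect here, yet only one of them represents a trade; that difference lives entirely in the message type.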

How does historical tick data differ from live feeds, and why does it matter?

| Product type | Preserves sequence? | Normalized? | Delivery | Best for |
|---|---|---|---|---|
| Exchange TAQ / raw EOD | Yes | No | Compressed CSV (S3 / MFT) | Exact feed-order replay |
| Vendor-normalized dataset | Partial | Yes | Vendor files / cloud | Cross-venue analysis |
| Cloud query service | Partial | Yes | In-place BigQuery / SQL | Fast ad-hoc research |
| Custom raw archive | Yes | No | Local/object storage | Full-fidelity proprietary needs |

People often think historical tick data is simply “the live feed, saved to disk.” That is close, but incomplete.

A historical product has to preserve enough information to support replay and analysis while being practical to distribute and parse offline. NYSE TAQ, for example, delivers end-of-day files as ASCII CSV records compressed with GNU Zip and made available through S3 or managed file transfer. Each record corresponds to one real-time feed event, in feed order. That structure makes the data analyzable in ordinary batch pipelines while preserving the event granularity needed for reconstruction.

The offline format may also normalize or simplify some real-time conventions. NYSE documents that default or unused real-time values may appear as blank fields in TAQ CSV output, so parsers must interpret blanks as documented defaults rather than as unknowns. That detail illustrates a general rule: historical tick products are shaped by downstream usability, not just by wire-level fidelity.
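The blank-means-default rule can be sketched with the standard `csv` module. Everything specific here is hypothetical: the column names, the sample rows, and the `DEFAULTS` mapping are placeholders, and the real defaults must come from the product's own documentation.

```python
import csv
import io
from typing import Dict, List

# Hypothetical documented defaults for fields that may arrive blank.
DEFAULTS: Dict[str, str] = {"volume": "0", "flag": "N"}

SAMPLE = "seq,symbol,price,volume,flag\n1,ABC,100.03,100,\n2,ABC,100.04,,Y\n"


def parse_rows(text: str, defaults: Dict[str, str]) -> List[Dict[str, str]]:
    """Read CSV rows, replacing blank fields with their documented default.

    Blank fields with no documented default are left as empty strings so the
    caller can decide how to treat them.
    """
    rows = []
    for row in csv.DictReader(io.StringIO(text)):
        rows.append(
            {key: (defaults.get(key, "") if value == "" else value)
             for key, value in row.items()}
        )
    return rows


rows = parse_rows(SAMPLE, DEFAULTS)
```

Applying defaults at parse time keeps later analysis from confusing "field was the default" with "field was missing".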

Vendors then add another layer. Services such as LSEG Tick History normalize data across many venues, map instruments into a common symbology, and expose the result in cloud query environments. That is valuable because raw venue feeds differ in message schema, identifiers, and semantics. But normalization is also a modeling choice. It can make cross-venue analysis far easier while potentially hiding some venue-specific texture. The fundamental trade-off is between local fidelity and cross-market comparability.

What is tick data used for in trading, surveillance, and research?

The most immediate use is reconstruction. If you have order-level or depth-level ticks, you can rebuild the visible order book through time and ask what liquidity existed at each instant. With trade and quote ticks, you can reconstruct the top of book, spreads, trade prints, and quote responses. This is the foundation for intraday analytics.

From there, the uses branch naturally from the mechanism. Because tick data preserves path and timing, it is used for implementation shortfall and other post-trade cost analysis: did an execution occur when the spread was wide, when the quote was fading, or after the market moved? Because it preserves microstructure, it is used to test execution schedules against plausible historical state rather than against coarse bars. Because it preserves exact event sequences, it is used in surveillance and post-mortem analysis.
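As a sketch of one such post-trade measure, the code below matches each trade to the quote prevailing just before it and computes the effective spread, taken here as twice the distance between the trade price and the quote midpoint:

$$\text{effective spread} = 2\,\lvert P - \tfrac{1}{2}(\text{bid} + \text{ask}) \rvert = \lvert 2P - (\text{bid} + \text{ask}) \rvert$$

The data is invented, events are keyed by a shared feed sequence number (an assumption that holds only within one feed), and prices are integers in units of 1/10000 of a dollar.

```python
from bisect import bisect_right
from typing import List, Optional, Tuple

QuoteTick = Tuple[int, int, int]  # (seq, bid, ask)


def prevailing_quote(quotes: List[QuoteTick], trade_seq: int) -> Optional[QuoteTick]:
    """Return the last quote whose sequence number precedes the trade."""
    seqs = [q[0] for q in quotes]       # quotes must be sorted by seq
    index = bisect_right(seqs, trade_seq)
    return quotes[index - 1] if index else None


def effective_spread(trade_price: int, bid: int, ask: int) -> int:
    """Twice the distance from the midpoint, kept in integer price units."""
    return abs(2 * trade_price - (bid + ask))


quotes = [(1, 1_000_000, 1_000_200), (4, 1_000_100, 1_000_300), (9, 1_000_100, 1_000_900)]
trades = [(5, 1_000_300), (10, 1_000_900)]  # (seq, price)

costs = []
for seq, price in trades:
    quote = prevailing_quote(quotes, seq)
    if quote is not None:
        costs.append(effective_spread(price, quote[1], quote[2]))
```

The second trade looks expensive only because the offer had already moved away at sequence 9, a detail that an average spread over the interval would blur.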

A vivid example of why this matters appears in the joint SEC/CFTC analysis of the May 6, 2010 market event. The report reconstructs the sequence by looking at order-level and trade-level behavior across futures and equities, showing how a large automated sell program interacted with thinning liquidity and how cross-market arbitrage propagated stress from E-Mini futures into SPY and other equities. That kind of explanation is impossible from daily summaries and weak even with minute bars. It requires event-level data aligned closely enough in time to study mechanism rather than just outcome.

Tick data also matters in auctions, where the market is not continuously matching in the ordinary way. Imbalance messages and indicative prices tell participants how opening and closing auctions are forming. NYSE publishes imbalance messages every second when relevant values change during the auction process, and Nasdaq disseminates NOII messages at scheduled intervals leading into opening and closing crosses. These are tick data too, because the auction is itself an evolving state machine, and participants need event-level visibility into that state.

What are the limitations and failure modes of tick data?

Tick data is powerful, but it is not omniscient.

First, feed scope limits what you can infer. A top-of-book feed cannot tell you full depth. IEX TOPS explicitly provides only aggregated best sizes, not individual orders, and points users to a separate depth feed for complete book data. If you only have trades, you cannot know whether a move happened through a thin book or a deep one. If you only have quotes, you may miss hidden-liquidity executions or trade reporting nuances.

Second, not every feed is a one-for-one record of every internal market event. Cboe One is explicitly an aggregated feed whose updates may be combined as capacity allows, meaning multiple underlying updates can be coalesced into a single delivered message. That makes the delivered image current, but it can compress the path. For some analytics that is fine; for strict microstructure replay, it matters a lot.
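The effect of coalescing can be shown with a toy conflation step. This is only an illustration of the general idea, not the algorithm any feed actually uses; the fixed `window` of input updates and the `(symbol, bid, ask)` shape are assumptions.

```python
from typing import Dict, List, Tuple

Update = Tuple[str, int, int]  # (symbol, bid, ask)


def conflate(updates: List[Update], window: int) -> List[Update]:
    """Emit at most one update per symbol per window, keeping the latest."""
    out: List[Update] = []
    for start in range(0, len(updates), window):
        latest: Dict[str, Update] = {}
        for update in updates[start:start + window]:
            latest[update[0]] = update  # a later update overwrites an earlier one
        out.extend(latest.values())
    return out


def final_state(updates: List[Update]) -> Dict[str, Update]:
    """Last known quote per symbol after applying every update in order."""
    return {update[0]: update for update in updates}


raw = [("ABC", 100, 102), ("ABC", 100, 105), ("ABC", 100, 102), ("XYZ", 50, 51)]
delivered = conflate(raw, window=4)

widest_raw = max(ask - bid for _, bid, ask in raw)
widest_delivered = max(ask - bid for _, bid, ask in delivered)
```

The delivered stream ends in the same state as the raw one, but the brief widening of the ABC spread never appears in it.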

Third, even rich direct feeds are still representations. There may be hidden orders, reserve size, venue-specific auction logic, routing activity, or internal state transitions not exposed in the public data. A direct feed can be extremely detailed while still omitting economically important information.

Fourth, packet loss and recovery choices can affect what a consumer actually saw in real time. A historical archive may preserve the canonical feed sequence, while a live consumer experienced temporary gaps and backfills. That distinction matters if you are analyzing what the market did versus what a specific trading system could have known at the time.

Finally, timestamps can mislead when readers overinterpret them. Precision without clear semantics invites false certainty. The right question is not just “how many digits?” but “timestamped where, synchronized how, and ordered under what assumptions?”

Where does tick data sit in the market‑data stack and how is it used?

| Layer | Examples | Main value | Tradeoff |
|---|---|---|---|
| Direct venue feeds | ITCH, OpenBook, TOPS | Lowest latency, full detail | High integration cost |
| Consolidated feeds | Cboe One, NBBO feeds | Cross-venue view | Sequencing complexity |
| Normalized archives | LSEG Tick History | Easy cross-date queries | Hides venue-specific texture |
| Derived products | Bars, indicators, dashboards | Ready-to-use insight | Lose event sequence |

The easiest way to place tick data in the broader market-data landscape is to see it as the layer from which most other views are derived.

At one end are raw venue feeds: direct feeds from exchanges such as Nasdaq ITCH, NYSE OpenBook Ultra, IEX TOPS, or CME’s Market Data Platform. These define the venue’s own event stream and usually offer the lowest-latency, richest venue-specific view.

Above or beside them are consolidated feeds, which combine data across venues into common views such as best bid and offer or last sale. U.S. market structure has long treated consolidated market information as a core part of price transparency. Consolidation makes the market easier to consume but introduces its own engineering problems: sequencing across sources, validation tolerances, capacity limits, and governance choices about who consolidates and how.

Then come historical archives and normalized vendor datasets. These make the same underlying events easier to store, query, and compare over long periods and across many venues. In return, the consumer typically accepts another layer of formatting, normalization, and product design.

And above all of those sit the familiar derived products: bars, indicators, dashboards, execution reports, and research datasets. They are useful because tick data already did the hard work of preserving the market’s event stream.

Conclusion

Tick data is event-level market data: the ordered stream of trades, quotes, book updates, and related messages through which a market reveals its state changes. Its value comes from preserving sequence. Once you understand that, many design choices make sense: sequence numbers, retransmissions, full-refresh versus delta updates, exact price encodings, and high-resolution timestamps.

The short version to remember is this: bars tell you where the market was; tick data tells you how it got there.

Frequently Asked Questions

A "tick" is any atomic market event as represented by a feed - it can be a trade report, a best-bid-or-offer update, an auction imbalance message, or an order-book add/cancel/replace/execute; what counts as a tick depends on the feed's scope and schema.

Sequence numbers and strict ordering are what make a tick stream usable for historical replay: applying deltas out of order produces an incorrect local book or quote state, so feeds and archives preserve sequence numbers and design recovery paths to restore continuity after loss.

Nanosecond timestamps show field precision and can establish happened‑before ordering inside a single venue or feed, but they do not guarantee a perfect global cross‑venue causal order unless you also control capture points, clock discipline, and the semantics of the timestamp fields.

Top‑of‑book feeds deliver updates only when the best bid/offer changes (often with aggregated sizes) and are insufficient to reconstruct full depth, whereas depth or order‑level feeds publish price‑level or order‑level adds/cancels/executions that let you rebuild visible liquidity and the life of individual orders.

Historical tick products preserve feed events and ordering for offline replay but are shaped for distribution and parsing (for example, NYSE TAQ delivers end‑of‑day CSVs in feed order with some real‑time defaults blanked), so they are close to live feeds but may normalize or encode some real‑time conventions differently.

On multicast feeds you must detect gaps and use the feed's retransmit/refresh mechanisms to restore missing packets because a missed delta corrupts local state; exchanges provide TCP or request servers for retransmission, but they also enforce quotas and limits on gap/retransmit requests that should be accounted for in recovery logic.

Tick data need not expose hidden or reserve orders: many public feeds show only displayed liquidity or aggregated sizes at best prices, and internal routing, reserve portions, or non-displayed interest can be omitted from the feed.

Vendor normalization makes cross‑venue analysis easier by mapping identifiers and harmonizing fields, but it is a modeling choice that can hide venue‑specific message semantics or timing nuances important for strict microstructure replay.

Exchanges and feeds typically encode prices as fixed‑point integers (or integer numerators with a shared PriceScaleCode) rather than floating point to represent prices exactly and compactly, and message types (add, delete, execute, quote) carry the contextual meaning needed to update local state.

Some consolidated or aggregated feeds intentionally coalesce multiple underlying updates into single delivered messages to manage capacity; that preserves the current image but can compress the original event path and thus reduce fidelity for microstructure replay.
