What Is Market Regime Detection with Hidden Markov Models?

Learn how Hidden Markov Models detect market regimes, infer hidden states from returns and volatility, and help traders adapt risk and strategy.

Introduction

Market regime detection with Hidden Markov Models is a way to infer the market condition you cannot observe directly from the price and volatility data you can observe. That matters because many trading rules fail not because their logic is always wrong, but because the market environment changes underneath them. A trend-following strategy can work well in persistent moves and then bleed in a choppy range; a mean-reversion strategy can do the opposite. The practical question is not just “what is the signal?” but “what kind of market is this signal operating in right now?”

The key idea is almost embarrassingly simple once it clicks. Markets do not announce, “I am now in a high-volatility risk-off regime.” But they leave traces: returns become more erratic, cross-asset correlations tighten, drawdowns deepen, rebounds shorten, or persistence changes. A Hidden Markov Model, or HMM, treats those latent conditions as hidden states and treats the observed market data as noisy emissions generated by those states. The model’s job is to infer which hidden state most likely produced what you just saw.

That framing is why HMMs are appealing in trading. They are not trying to predict each next tick from first principles. They are trying to detect a slower-moving structure behind the noise. In that sense, regime detection sits between raw forecasting and pure descriptive statistics: it asks whether recent observations are more consistent with a calm market, a stressed market, a trending market, or some other recurring condition that matters for trading and risk.

Why do traders detect market regimes and how does that help trading?

A market regime is a period in which the behavior of prices and related variables is meaningfully different from other periods in a way that tends to persist for a while. The persistence matters. If every bar were generated independently from the same distribution, there would be no real notion of regime; there would only be random fluctuation. Regime language becomes useful when the market’s distribution seems to change in clusters: low-volatility periods bunch together, crisis behavior bunches together, and the transition between them is not purely random noise.

Here is the mechanism behind the demand for regime models. Most trading strategies are conditional bets on structure. Momentum assumes persistence. Mean reversion assumes overshooting. Carry assumes compensation for bearing certain risks. Option selling often assumes volatility and correlation do not explode too quickly. When the background process shifts, the same strategy can face a different payoff distribution. So the trader wants a model that answers a narrower question than full price prediction: given recent evidence, which environment am I probably in, and how likely is a change?

This is also why regime detection is often more useful for risk management and strategy selection than for direct point forecasting. A regime signal can reduce leverage in turbulent states, switch between strategy sleeves, widen stops, cut position size, or suspend a model known to fail in unstable conditions. In applied work, this often produces a tradeoff: a regime-aware system may give up some upside during benign periods but avoid some of the worst losses during shifts. That tradeoff is exactly why practitioners care.

What assumptions does a Hidden Markov Model make for market-regime detection?

An HMM starts with two layers. The first layer is a hidden state process: at each time t, the market is assumed to be in one state S_t, such as regime 0, 1, or 2. Those labels are not known in advance and are not observed directly. The second layer is the observation process: you do observe something at time t, such as return, realized volatility, volume imbalance, spread, or a feature vector made from several of these.

The hidden states evolve according to a Markov chain. “Markov” means that the next state depends only on the current state, not on the entire distant past. In symbols, the model works with transition probabilities like P(S_t = j | S_{t-1} = i). Collected together, these form a transition matrix, which tells you how sticky each state is and how likely switches are.

Then each hidden state has its own observation distribution. If you use a Gaussian HMM, state 0 might generate low-volatility, near-zero-mean returns, while state 1 might generate larger-variance returns with a negative mean. The crucial point is that the observations are not themselves the regimes. They are evidence about the regimes.
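
The two layers can be made concrete by simulating them. The sketch below is a toy generative model under assumed parameters: the transition matrix, means, and standard deviations are illustrative values chosen for this example, not estimates from any data set, and `simulate_hmm` is a hypothetical helper name.

```python
import random

def simulate_hmm(n_steps, trans, means, stds, start_state=0, seed=0):
    """Sample hidden states and Gaussian observations from a toy HMM."""
    rng = random.Random(seed)
    states, obs = [], []
    state = start_state
    for _ in range(n_steps):
        states.append(state)
        # Emission: the observation depends only on the current hidden state.
        obs.append(rng.gauss(means[state], stds[state]))
        # Transition: the next state depends only on the current state.
        state = rng.choices(range(len(trans)), weights=trans[state])[0]
    return states, obs

# Illustrative (assumed) parameters: state 0 = calm, state 1 = stressed.
TRANS = [[0.98, 0.02],   # P(next state | currently calm)
         [0.10, 0.90]]   # P(next state | currently stressed)
MEANS = [0.0005, -0.0010]
STDS = [0.007, 0.025]

states, returns = simulate_hmm(1000, TRANS, MEANS, STDS)
```

In a real application only `returns` would be visible; `states` is exactly the quantity the model has to infer.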

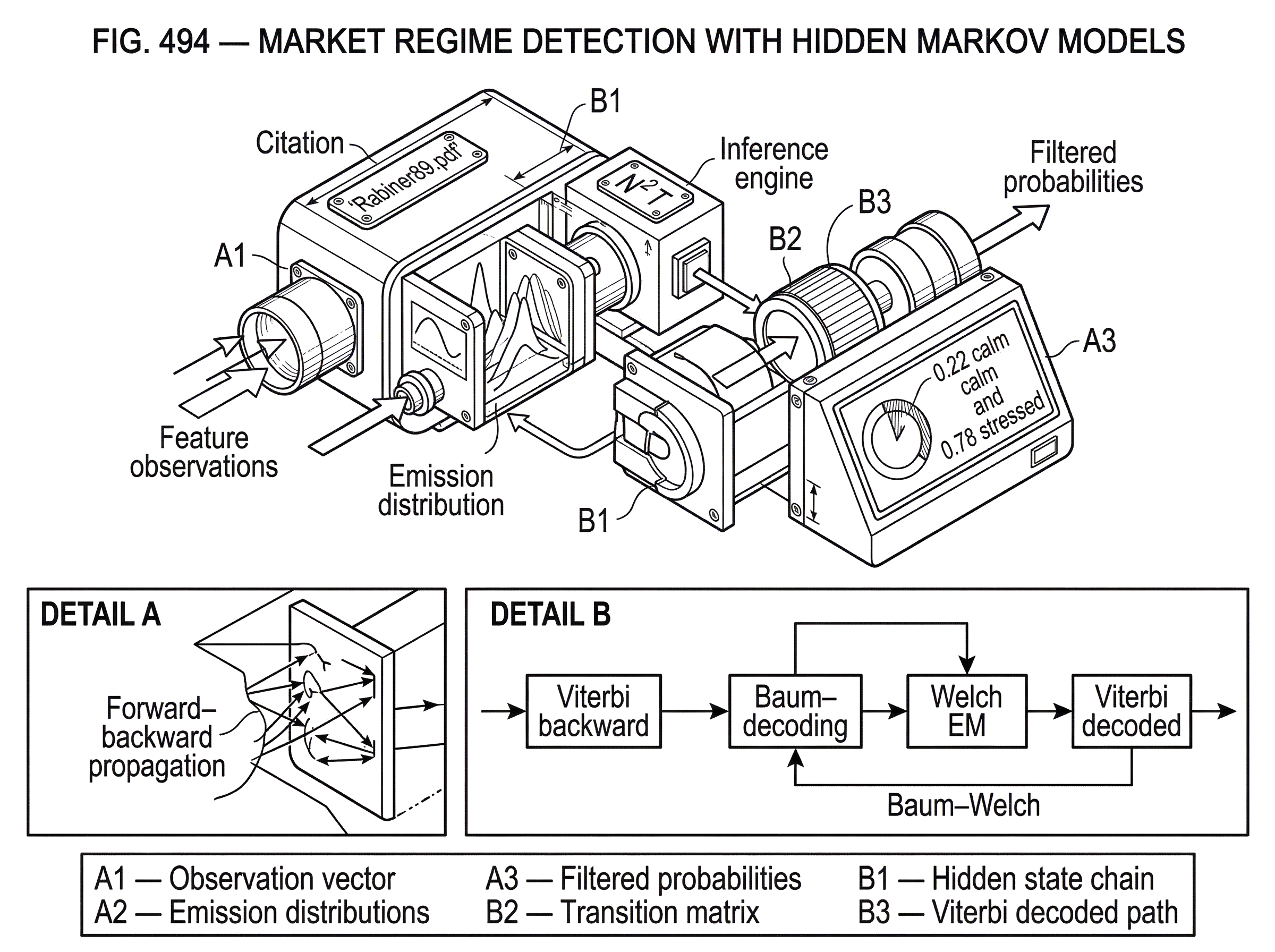

Rabiner’s classic formulation describes an HMM as a doubly embedded stochastic process: an unobserved state process, visible only through another stochastic process that emits observations. That definition is abstract, but for markets the intuition is concrete. The state is something like “stress level” or “market mode.” The data are the footprints that state leaves in returns and other features.

The compression point: persistence plus noisy emissions

The single idea that makes HMM-based regime detection work is the combination of state persistence and state-specific observation patterns. If states did not persist, then there would be little value in talking about regimes at all. If observations did not differ by state, then no amount of inference could tell states apart. HMMs become useful precisely when both are true at once.

Think about a simple two-state world. In one state, daily returns are usually small and volatility is subdued. In the other, returns are more erratic and downside moves are larger. If volatility spikes for a day, that alone may not prove a regime change; noise exists. But if you also know that high-volatility states tend to persist once entered, then a cluster of volatile observations becomes much stronger evidence that the hidden state has changed. The HMM formalizes that update.
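
That update can be written as a one-step recursion. The notation here is an assumption for illustration, loosely following Rabiner's: a_{ij} is the transition probability P(S_t = j | S_{t-1} = i), b_j(o_t) is the density of observation o_t under state j, and \hat{\alpha}_t(j) is the filtered probability of state j.

```latex
\hat{\alpha}_t(j)
  = \frac{b_j(o_t)\,\sum_{i} \hat{\alpha}_{t-1}(i)\, a_{ij}}
         {\sum_{k} b_k(o_t)\,\sum_{i} \hat{\alpha}_{t-1}(i)\, a_{ik}},
\qquad
\hat{\alpha}_t(j) = P(S_t = j \mid o_1, \dots, o_t)
```

The inner sum carries yesterday's belief forward through the transition matrix, which is where persistence enters. The factor b_j(o_t) then reweights that belief by how well each state explains today's observation.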

This also explains why HMMs often feel more realistic than hard threshold rules. A threshold rule says, in effect, “if volatility exceeds x, we are in regime B.” An HMM says something softer and often more plausible: “given the whole sequence of observations and the estimated persistence of each state, the probability that we are in regime B has risen to 78%.” The difference is not cosmetic. It is the difference between deterministic classification from one measurement and probabilistic inference from a process.

What are the core HMM tasks: evaluation, decoding, and training?

In the canonical HMM treatment, there are three core problems: evaluation, decoding, and training. Those are not academic bookkeeping categories. They map directly to what a trader actually needs.

Evaluation asks: given a model and an observed sequence, how likely is that sequence under the model? This matters because training and model comparison both rely on sequence likelihood. If one parameterization makes the observed return history much more plausible than another, it may be a better fit.

Decoding asks: given the observed sequence and the fitted model, what hidden state sequence is most plausible? In trading language, this is the regime-inference problem. You want either the most likely full path of regimes or, at each date, the probability of being in each regime.

Training asks: how should the model parameters be adjusted to make the observed data more likely? This is the estimation problem. You do not know the transition matrix or the state-specific means and variances in advance, so you estimate them from historical data.

Rabiner’s tutorial is foundational partly because it showed how these problems can be solved efficiently. Direct enumeration of all possible hidden-state paths becomes computationally impossible quickly. The forward-backward procedure and Viterbi algorithm reduce that burden to something practical, with complexity on the order of N^2 T, where N is the number of states and T is sequence length.
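
The saving can be checked on a tiny example. The sketch below computes the sequence likelihood twice for a two-state Gaussian HMM: once with the forward recursion and once by summing over every hidden path. All parameter values and function names are assumptions made for illustration.

```python
import itertools
import math

def gauss_pdf(x, mean, std):
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))

def likelihood_forward(obs, start, trans, means, stds):
    """P(obs | model) via the forward recursion, O(N^2 * T) work."""
    n = len(start)
    alpha = [start[j] * gauss_pdf(obs[0], means[j], stds[j]) for j in range(n)]
    for x in obs[1:]:
        alpha = [
            gauss_pdf(x, means[j], stds[j])
            * sum(alpha[i] * trans[i][j] for i in range(n))
            for j in range(n)
        ]
    return sum(alpha)

def likelihood_brute_force(obs, start, trans, means, stds):
    """Same quantity by enumerating all N^T hidden paths (tiny T only)."""
    n = len(start)
    total = 0.0
    for path in itertools.product(range(n), repeat=len(obs)):
        p = start[path[0]] * gauss_pdf(obs[0], means[path[0]], stds[path[0]])
        for t in range(1, len(obs)):
            s_prev, s = path[t - 1], path[t]
            p *= trans[s_prev][s] * gauss_pdf(obs[t], means[s], stds[s])
        total += p
    return total

# Illustrative (assumed) parameters and observations.
START = [0.9, 0.1]
TRANS = [[0.98, 0.02], [0.10, 0.90]]
MEANS = [0.0005, -0.0010]
STDS = [0.007, 0.025]
OBS = [0.001, -0.02, 0.018, -0.025, 0.002, 0.0]

fast = likelihood_forward(OBS, START, TRANS, MEANS, STDS)
slow = likelihood_brute_force(OBS, START, TRANS, MEANS, STDS)
```

Both routes give the same number, but the brute-force loop visits 2^6 = 64 paths here and would visit 2^T paths in general, which is why it is useful only as a check.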

How does an HMM infer market regimes from observed price and volatility data?

| Output | Data used | Real-time? | Best use |
|---|---|---|---|
| Filtered | data ≤ t | Yes | Online signals |
| Smoothed | all data | No | Historical diagnostics |
| Viterbi | all data | No | Single coherent path |

The basic inference logic is easier to see through a worked example than through notation alone. Imagine you fit a two-state Gaussian HMM to daily equity index returns. After training, the model has learned that one state tends to have low variance and mildly positive average returns, while the other has much higher variance and more negative skew in the observed sample. It has also learned that both states are persistent, but the calm state is more persistent than the stressed state.

Now a new stretch of data arrives. At first the returns are small and uneventful, so the filtered probability of the calm state remains high. Then several large down days arrive close together, followed by sharp rebounds and wider realized intraday ranges. A threshold model would flip when one chosen statistic crosses a line. The HMM does something richer. Each new observation updates the probability of each state, but that update is moderated by the transition probabilities. A single bad day may not be enough to overturn a highly persistent calm-state belief. A cluster of bad and volatile days is.

This is why HMM outputs are usually probabilistic rather than binary. You do not get just “regime 1.” You get posterior probabilities such as 0.22 calm and 0.78 stressed. Those probabilities can then drive trading decisions smoothly. A portfolio might cut risk linearly as stress probability rises, rather than switching all at once.
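
The worked example can be reproduced with a few lines of filtering code. This is a minimal sketch under assumed parameters: the two states, their means and standard deviations, the transition matrix, and the return sequences are all illustrative, and `filter_probs` is a hypothetical helper.

```python
import math

def gauss_pdf(x, mean, std):
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))

def filter_probs(obs, start, trans, means, stds):
    """Return P(state at t | observations up to t) for every t."""
    n = len(start)
    prior = list(start)          # belief before seeing the first observation
    history = []
    for x in obs:
        # Weight the prior belief by how well each state explains x.
        joint = [prior[j] * gauss_pdf(x, means[j], stds[j]) for j in range(n)]
        total = sum(joint)
        post = [v / total for v in joint]
        history.append(post)
        # Carry the belief forward one step through the transition matrix.
        prior = [sum(post[i] * trans[i][j] for i in range(n)) for j in range(n)]
    return history

# Illustrative (assumed) parameters: state 0 = calm, state 1 = stressed.
START = [0.9, 0.1]
TRANS = [[0.98, 0.02], [0.10, 0.90]]
MEANS = [0.0005, -0.0010]
STDS = [0.007, 0.025]

calm_days = [0.001, -0.002, 0.003, 0.0, 0.002]
single_shock = calm_days + [-0.02]
cluster = calm_days + [-0.02, 0.018, -0.025]

p_after_single = filter_probs(single_shock, START, TRANS, MEANS, STDS)[-1][1]
p_after_cluster = filter_probs(cluster, START, TRANS, MEANS, STDS)[-1][1]
```

With these parameters, one bad day leaves the stressed-state probability below one half, while three large moves in a row push it well above 0.9. Different parameters would move those numbers, but the persistence-moderated pattern is the point.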

There is also an important distinction between filtered and smoothed probabilities. Filtered probabilities estimate the state at time t using data only up to time t. That is what matters for live trading. Smoothed probabilities estimate the state at time t using the entire sample, including future data. Those are useful for historical interpretation and model diagnostics, but they leak future information and cannot be used honestly for real-time signals. Statsmodels makes this distinction explicit in its Markov-switching examples, and it is one of the most important practical details to keep straight.
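
The difference is easy to see side by side. The sketch below runs a scaled forward-backward pass on a toy two-state Gaussian HMM and returns both sets of probabilities. Parameters and data are assumed for illustration, and this is not the Statsmodels implementation.

```python
import math

def gauss_pdf(x, mean, std):
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))

def forward_backward(obs, start, trans, means, stds):
    """Return (filtered, smoothed) state probabilities for each time step."""
    n, T = len(start), len(obs)
    b = [[gauss_pdf(x, means[j], stds[j]) for j in range(n)] for x in obs]

    # Forward pass: uses data up to t only, so it is usable in real time.
    filtered, scales = [], []
    prior = list(start)
    for t in range(T):
        joint = [prior[j] * b[t][j] for j in range(n)]
        c = sum(joint)
        post = [v / c for v in joint]
        filtered.append(post)
        scales.append(c)
        prior = [sum(post[i] * trans[i][j] for i in range(n)) for j in range(n)]

    # Backward pass: folds in observations after t, so it looks ahead.
    smoothed = [None] * T
    smoothed[T - 1] = list(filtered[T - 1])
    beta = [1.0] * n
    for t in range(T - 2, -1, -1):
        beta = [
            sum(trans[i][j] * b[t + 1][j] * beta[j] for j in range(n)) / scales[t + 1]
            for i in range(n)
        ]
        gamma = [filtered[t][i] * beta[i] for i in range(n)]
        total = sum(gamma)
        smoothed[t] = [g / total for g in gamma]
    return filtered, smoothed

# Illustrative (assumed) parameters: state 0 = calm, state 1 = stressed.
START = [0.9, 0.1]
TRANS = [[0.98, 0.02], [0.10, 0.90]]
MEANS = [0.0005, -0.0010]
STDS = [0.007, 0.025]

# Five quiet days, one shock (index 5), then three quiet days.
OBS = [0.001, -0.002, 0.003, 0.0, 0.002, -0.02, 0.001, 0.002, -0.001]
filtered, smoothed = forward_backward(OBS, START, TRANS, MEANS, STDS)
SHOCK = 5
```

On the shock day the smoothed stressed probability is lower than the filtered one, because smoothing already knows that calm days followed. A backtest that trades on the smoothed value is using that knowledge early.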

Which observable features should I use as HMM inputs for regime detection?

The observation choice is where much of the craft lives. In the abstract HMM, the observation is just a symbol or vector. In market regime detection, it is your operational definition of what the regime leaves behind.

The simplest choice is raw returns. That can work when the regimes differ mainly in mean and variance. But returns alone often throw away structure that traders actually care about. Many practitioners therefore use feature vectors that include realized volatility, rolling standard deviation, drawdown measures, volume, spreads, correlation proxies, or trend indicators. If the latent state is better thought of as a volatility and liquidity condition than as a return-mean condition, these extra features may distinguish states more clearly.
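
As a minimal sketch of such a feature vector, the code below pairs each log return with a trailing rolling standard deviation. The window length, the price series, and the function names are assumptions for illustration; a real pipeline would likely add more features and scale them.

```python
import math

def log_returns(prices):
    return [math.log(b / a) for a, b in zip(prices, prices[1:])]

def rolling_std(values, window):
    """Sample standard deviation over each trailing window of `values`."""
    out = []
    for end in range(window, len(values) + 1):
        chunk = values[end - window:end]
        mean = sum(chunk) / window
        var = sum((v - mean) ** 2 for v in chunk) / (window - 1)
        out.append(math.sqrt(var))
    return out

def build_features(prices, window=5):
    """Rows of [return_t, trailing volatility ending at t]."""
    rets = log_returns(prices)
    vol = rolling_std(rets, window)
    # The first volatility value is aligned with return index window - 1,
    # so every row uses only information available at its own date.
    return [[r, v] for r, v in zip(rets[window - 1:], vol)]

# Made-up prices, purely to exercise the functions.
prices = [100.0, 101.0, 100.5, 102.0, 101.0, 103.0, 102.5, 104.0, 103.0, 105.0]
features = build_features(prices, window=5)
```

Because the volatility column is trailing rather than centered, the feature matrix does not introduce lookahead of its own.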

This choice is not a minor implementation detail. It changes what “regime” means in your model. If you train on returns only, the states may separate mostly by return distribution. If you train on returns and volatility, the states may align more with calm versus turbulent environments. If you include macro variables or cross-asset features, the states may become broader market-condition clusters. The model is only discovering hidden structure relative to the evidence you choose to show it.

That is why market-regime HMMs are often less objective than they first appear. The hidden states are latent, but their interpretation is shaped by feature design, sampling frequency, and model form. Calling a state “risk-off” is an interpretation layered on top of a statistical partition.

How should I train an HMM for regimes and mitigate EM (Baum–Welch) pitfalls?

In most practical libraries, HMM parameters are estimated by Expectation-Maximization, often via the Baum–Welch procedure. The logic is iterative. Starting from an initial guess, the model computes expected state occupancies and transitions given the data. Then it updates the parameters to improve the data likelihood under those expected state assignments. Repeating this tends to increase likelihood until convergence.

The important caveat is that this is not magic. Rabiner explicitly notes that Baum–Welch reaches local maxima, not guaranteed global optima. In plain terms, the likelihood surface is rough. If you start from poor initial values, you may end up with a poor solution that still looks mathematically “converged.” This matters a great deal in finance because different local optima can imply very different regime interpretations.

That is why practical toolchains often combine EM with multiple starting values or randomized searches. The Statsmodels Markov-switching examples call this out directly: because these likelihoods often have many local maxima, it can help to perturb starting values repeatedly and choose the best result. In production, this is less an optional enhancement than basic hygiene.
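
The restart pattern itself is independent of the model. The sketch below shows it on a deliberately simple stand-in: `toy_objective` is a made-up one-dimensional function with one local and one higher maximum, and `fit_once` is a hypothetical local search that plays the role of a single EM run. In practice `fit_once` would fit the HMM from a fresh random initialization and return its log-likelihood.

```python
import math
import random

def toy_objective(x):
    """Stand-in for a likelihood surface: local peak near -2, higher peak near 3."""
    return math.exp(-(x + 2.0) ** 2) + 2.0 * math.exp(-(x - 3.0) ** 2)

def fit_once(rng, step=0.01):
    """One local search from a random start (stand-in for one EM run)."""
    x = rng.uniform(-5.0, 5.0)
    while True:
        here = toy_objective(x)
        if toy_objective(x + step) > here:
            x += step
        elif toy_objective(x - step) > here:
            x -= step
        else:
            return here, x   # converged, possibly only to a local maximum

def best_of_restarts(fit_fn, n_restarts, seed=0):
    """Run fit_fn from several random starts and keep the best score."""
    rng = random.Random(seed)
    results = [fit_fn(rng) for _ in range(n_restarts)]
    return max(results, key=lambda r: r[0]), results

best, all_runs = best_of_restarts(fit_once, n_restarts=20)
```

Each individual run reports convergence, yet runs that start in the wrong basin stop at the lower peak. Keeping every result in `all_runs` also makes it possible to check how often the best solution is actually found, which is a rough indicator of how rugged the surface is.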

The same realism applies to convergence settings. In hmmlearn, EM stops when either the maximum number of iterations is reached or the likelihood improvement falls below a tolerance. If those settings are careless, the fit may stop too early or wander into overfitting. Covariance floors such as min_covar exist for a reason: without regularization, a Gaussian state can collapse onto a tiny variance region and fit noise too eagerly.
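
The idea behind a covariance floor can be shown with a one-dimensional variance update. This is a sketch of the general technique with a hard floor, under an assumed floor value. It is not a reproduction of how hmmlearn applies `min_covar` internally, and `floored_variance` is a hypothetical name.

```python
def floored_variance(values, weights, floor=1e-3):
    """Weighted mean and variance for one state, with the variance floored.

    `weights` play the role of the state's responsibilities for each point.
    """
    total = sum(weights)
    mean = sum(w * v for w, v in zip(weights, values)) / total
    var = sum(w * (v - mean) ** 2 for w, v in zip(weights, values)) / total
    return mean, max(var, floor)

# A state that has latched onto identical points would get zero variance
# without the floor, and an unbounded likelihood for those points.
mean_a, var_a = floored_variance([0.01, 0.01, 0.01], [1.0, 1.0, 1.0])

# A state with genuinely spread-out data is unaffected by the floor.
mean_b, var_b = floored_variance([-1.0, 1.0], [1.0, 1.0])
```

The floor has to be chosen relative to the scale of the features: a value that is harmless for standardized inputs can dominate the variance of raw daily returns.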

How can HMM regime signals be used to switch risk and position sizing?

A common and sensible use of HMM regime detection is not “buy when state 1, sell when state 2” in isolation. It is to use the inferred state as a controller on top of another strategy.

Imagine a moving-average trend strategy on an equity future. In quiet directional periods it works reasonably well, but during violent transitions it gets chopped up and gives back gains. You fit an HMM on returns and realized volatility and infer two broad states: calm and turbulent. In live trading, when the filtered probability of the turbulent state rises, you do not necessarily reverse the trend signal. Instead, you reduce gross exposure, widen execution limits, tighten risk caps, or require stronger confirmation before entering. When the turbulent-state probability falls, you restore normal sizing.
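
One simple way to express that controller is a linear scaling rule. The sketch below is an assumption-laden illustration: the function names, the 0.25 minimum scale, and the linear shape are all choices made for this example, not a recommended calibration.

```python
def exposure_scale(p_turbulent, min_scale=0.25):
    """Map the filtered turbulent-state probability to a sizing multiplier.

    Returns 1.0 when the model is sure the market is calm and `min_scale`
    when it is sure the market is turbulent, interpolating linearly between.
    """
    p = min(max(p_turbulent, 0.0), 1.0)   # guard against out-of-range inputs
    return 1.0 - (1.0 - min_scale) * p

def target_position(trend_signal, p_turbulent, base_size=1.0):
    """Keep the trend signal's direction; let the regime only resize it."""
    return trend_signal * base_size * exposure_scale(p_turbulent)

full = target_position(trend_signal=+1, p_turbulent=0.0)
reduced = target_position(trend_signal=+1, p_turbulent=0.78)
```

The regime probability never flips the sign of the position here. It only changes how much of the underlying strategy is allowed through.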

This is where HMMs often earn their keep. They can improve the distribution of outcomes even if they do not maximize raw return. Secondary applied examples using hmmlearn and Gaussian HMMs often report exactly this pattern: some profit may be sacrificed, but major losses around regime shifts can be reduced. That result should not be romanticized (small samples and ad hoc features can overstate it), but the mechanism is economically plausible.

The same logic extends beyond a single strategy. A multi-strategy book might allocate more weight to trend in one inferred regime, more to mean reversion in another, and more to cash or options hedges in a third. Here the HMM is functioning as a latent-state router.

How do I choose the number of hidden states for a regime HMM?

| Method | What it favors | Pros | Cons |
|---|---|---|---|
| AIC / BIC | Numerical fit | Quantitative | Tends to overfit |
| Stability across refits | Repeatable states | Robust | Computationally intensive |
| Downstream performance | Trading impact | Decision‑focused | Requires realistic backtests |
| Parsimony / interpretability | Simple states | Actionable | May reduce fit |

A beginner often assumes the hardest part is fitting the HMM. In practice, the more treacherous part is deciding how many states there should be and whether the fitted states are meaningful. There is no universal answer.

AIC and BIC can help compare models, and libraries such as hmmlearn expose both. But there is a serious catch. Methodological work on HMM order selection shows that standard information criteria often favor too many states when the practitioner wants states to be interpretable entities rather than tiny statistical refinements. In real market data, extra states can appear because the model is absorbing heavy tails, outliers, unmodeled autocorrelation, seasonal effects, or structural breaks that are not truly separate trading regimes.
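
To see how the criteria trade fit against complexity, it helps to count parameters explicitly. The sketch below does this for a Gaussian HMM with diagonal covariances (an assumption) and applies the standard AIC and BIC formulas. The two log-likelihood values are invented purely for illustration.

```python
import math

def gaussian_hmm_param_count(n_states, n_features):
    """Free parameters of a Gaussian HMM with diagonal covariances."""
    transitions = n_states * (n_states - 1)   # each row sums to one
    initial = n_states - 1                    # start probabilities sum to one
    means = n_states * n_features
    variances = n_states * n_features
    return transitions + initial + means + variances

def aic(log_likelihood, n_params):
    return -2.0 * log_likelihood + 2.0 * n_params

def bic(log_likelihood, n_params, n_obs):
    return -2.0 * log_likelihood + n_params * math.log(n_obs)

# Hypothetical fits on 1000 one-dimensional observations.
N_OBS = 1000
LOGLIK = {2: 3200.0, 3: 3210.0}   # made-up numbers, not real results

scores = {}
for n_states, ll in LOGLIK.items():
    k = gaussian_hmm_param_count(n_states, n_features=1)
    scores[n_states] = {"k": k, "aic": aic(ll, k), "bic": bic(ll, k, N_OBS)}
```

In this invented case the third state buys ten log-likelihood points for seven extra parameters. AIC prefers the three-state model while BIC prefers the two-state one, which illustrates that the criteria are inputs to a judgment rather than a verdict.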

This is the central misunderstanding to avoid: more states do not necessarily mean better regime detection. They may just mean the model is using hidden-state labels to patch over misspecification elsewhere. A five-state model can fit better numerically and still be less useful than a two-state model for trading.

So state count should be chosen with a combination of criteria: likelihood and information metrics, yes, but also stability across refits, plausibility of state interpretation, robustness across samples, and whether the regime signal actually improves a downstream decision without lookahead leakage. If a state exists only in one training window and vanishes in the next, it may not be a useful regime for action.

When do standard HMM assumptions fail for financial regime detection?

The Markov assumption is both the strength and limitation of the standard HMM. It gives a compact, tractable description of state persistence. But it also implies that state durations follow a geometric distribution. In simple terms, the probability of leaving a state is constant at each step, regardless of how long you have already been in it.
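
In symbols, with a_{ii} denoting the probability of staying in state i (notation assumed here, following the transition probabilities introduced earlier), the implied duration D_i of a visit to state i is:

```latex
P(D_i = d) = a_{ii}^{\,d-1}\,(1 - a_{ii}), \qquad
\mathbb{E}[D_i] = \frac{1}{1 - a_{ii}}
% Example: a_{ii} = 0.95 gives an expected duration of 1 / 0.05 = 20 steps.
```

The most likely duration under this distribution is always a single step, however sticky the state is, which is one reason it can sit awkwardly with regimes that typically last weeks.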

That may be a poor description of markets. Some regimes feel short and shock-like. Others feel sticky in a way that depends on age: once stress has persisted for a while, the odds of remaining stressed may change. Rabiner notes that standard HMMs imply exponential or geometric state-duration behavior, and that explicit-duration variants can model dwell times more realistically, but at much higher computational and parameter cost.

This matters in finance because regime duration is often economically meaningful. If your inferred calm state is really a mixture of many long stretches and a few very short ones, or if crisis states are more clustered than a geometric model allows, the standard HMM may distort transition timing. Sometimes this is tolerable. Sometimes it is the whole point of the problem.

Another friction is the emission distribution. Gaussian HMMs are convenient and often the default, but financial returns are not neatly Gaussian. They can be skewed, fat-tailed, and contaminated by jumps. More flexible emissions, such as Gaussian mixtures or generalized hyperbolic families in more advanced research, can better capture these stylized facts. The tradeoff is that extra flexibility increases estimation difficulty and can make states harder to interpret.

HMM vs. Markov‑switching autoregression and time‑varying transition models

| Model | Captures | Best when | Complexity |
|---|---|---|---|
| Basic HMM | State persistence and emissions | Distinct emission patterns | Low–medium |
| Markov‑switching AR | Regime-dependent dynamics | Autocorrelated series | Medium |
| Time‑varying transitions | Covariate-driven switches | Observable drivers of switching | High |
| Regime graphical HMM | State-specific networks | High-dimensional connectivity | High |

In finance, people often say “HMM” and “Markov-switching model” almost interchangeably, but the distinction is worth keeping straight. A basic HMM is a general framework with hidden states and state-dependent emissions. A Markov-switching autoregression, in the Hamilton tradition, is a time-series model in which parameters such as the mean, autoregressive dynamics, or variance switch according to a latent Markov state.

That difference matters when market dynamics have serial dependence beyond state persistence. If returns or macro series have regime-dependent autoregressive structure, a switching autoregression may fit better than an HMM with independent emissions conditional on state. Statsmodels exposes this through MarkovAutoregression and MarkovRegression, including options for switching variance and even time-varying transition probabilities.

Time-varying transition probabilities are especially interesting for trading. Instead of assuming fixed chances of moving from calm to stress, you let those transition probabilities depend on observable covariates through a logistic form. That means the regime process is still hidden, but the chance of a switch can rise when, say, spreads widen or macro stress indicators deteriorate. Mechanically, this makes the latent-state model more responsive; conceptually, it moves from a purely self-contained Markov chain toward a conditional one.
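
A minimal sketch of the logistic form follows. The covariate (a standardized spread measure), the intercept, and the slope are all assumed values for illustration, and the function names are hypothetical. Libraries may parameterize the same idea differently.

```python
import math

def stress_entry_prob(spread_z, intercept=-4.0, slope=1.5):
    """P(calm -> stressed) as a logistic function of an observable covariate."""
    return 1.0 / (1.0 + math.exp(-(intercept + slope * spread_z)))

def calm_row(spread_z):
    """Transition probabilities out of the calm state for today's covariate."""
    p = stress_entry_prob(spread_z)
    return [1.0 - p, p]   # [stay calm, switch to stressed]

row_normal = calm_row(0.0)   # spreads at their average level
row_wide = calm_row(3.0)     # spreads three standard deviations wide
```

The hidden state is still inferred from the data, but the transition row is recomputed every period, so the prior odds of a switch rise before the emissions themselves turn volatile.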

What common failure modes break HMM-based regime detectors?

Regime models are tempting because they tell a compelling story: hidden states, probabilistic transitions, smooth inference. But markets punish elegant stories that rest on fragile assumptions.

The first failure mode is lookahead contamination. If you evaluate a strategy using smoothed probabilities or labels inferred with future data, your backtest is overstated. This is one of the easiest mistakes to make because smoothed state plots look cleaner and more convincing.

The second is overfitting by state proliferation. If the model keeps adding states to absorb every anomaly in historical data, you can mistake noise-fitting for regime discovery. Better in-sample likelihood is not enough.

The third is structural instability. A regime definition learned in one period may not survive a new market microstructure, policy environment, or volatility regime. In a deep sense, the model assumes recurrent patterns. Markets do exhibit recurring behavior, but not perfectly reusable hidden templates.

The fourth is distributional misspecification. If your emissions are too simple, the model may create artificial states to represent fat tails or outliers. If they are too flexible, estimation becomes fragile and interpretation degrades.

The fifth is the broader lesson from episodes like LTCM: extreme shifts often involve not just volatility changes but simultaneous changes in liquidity, correlation, funding conditions, and market impact. A regime model calibrated on calm historical windows can understate how violent joint changes can be. HMMs can help detect shifts, but they do not repeal model risk.

Which implementation choices most affect HMM regime-detection results?

A few implementation details seem technical but materially affect outcomes. Sampling frequency is one. Intraday data can reveal regime shifts earlier, but it also introduces more noise, microstructure effects, and nonstationarity. Daily data are cleaner but slower. Neither is universally better; the right choice depends on whether the regime you care about is a fast execution environment or a slower portfolio-risk condition.

Decoder choice is another. In hmmlearn, viterbi returns the most likely overall state path, while map returns per-timepoint most-likely states from smoothing. Those are not identical objects. If you want a coherent single path, Viterbi is appropriate. If you want marginal state probabilities, posterior-based methods matter more.
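
For reference, here is a compact log-space Viterbi decoder. It is a generic textbook sketch rather than hmmlearn's code, and the parameters and observations are assumed for illustration.

```python
import math

def gauss_logpdf(x, mean, std):
    z = (x - mean) / std
    return -0.5 * z * z - math.log(std * math.sqrt(2.0 * math.pi))

def viterbi(log_b, log_start, log_trans):
    """Most likely joint state path given per-step emission log-densities."""
    n = len(log_start)
    delta = [log_start[j] + log_b[0][j] for j in range(n)]
    backpointers = []
    for row in log_b[1:]:
        pointers, new_delta = [], []
        for j in range(n):
            # Best predecessor for landing in state j at this step.
            best_i = max(range(n), key=lambda i: delta[i] + log_trans[i][j])
            pointers.append(best_i)
            new_delta.append(delta[best_i] + log_trans[best_i][j] + row[j])
        backpointers.append(pointers)
        delta = new_delta
    # Trace the best path backwards from the best final state.
    state = max(range(n), key=lambda j: delta[j])
    path = [state]
    for pointers in reversed(backpointers):
        state = pointers[state]
        path.append(state)
    return path[::-1]

# Illustrative (assumed) parameters: state 0 = calm, state 1 = stressed.
START = [0.9, 0.1]
TRANS = [[0.98, 0.02], [0.10, 0.90]]
MEANS = [0.0005, -0.0010]
STDS = [0.007, 0.025]
OBS = [0.001, -0.02, 0.018, -0.025, 0.002]

log_b = [[gauss_logpdf(x, MEANS[j], STDS[j]) for j in range(2)] for x in OBS]
log_start = [math.log(p) for p in START]
log_trans = [[math.log(p) for p in row] for row in TRANS]
path = viterbi(log_b, log_start, log_trans)
```

Because the path is optimized as a whole, it can never contain a transition the model assigns zero probability. A sequence of per-timepoint most-likely states carries no such guarantee.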

Numerical implementation also matters. hmmlearn supports forward-backward in log space or with scaling; scaling is generally faster, while log formulations are familiar and stable. These choices sound low-level, but long sequences and many refits can make them operationally important.
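
The reason either device is needed is that the unscaled forward recursion multiplies many small numbers. The sketch below compares three generic ways to compute the log-likelihood from a table of emission likelihoods. The inputs are made up and the code is not hmmlearn's.

```python
import math

def loglik_naive(likes, start, trans):
    """Unscaled forward recursion; the product underflows on long inputs."""
    n = len(start)
    alpha = [start[j] * likes[0][j] for j in range(n)]
    for row in likes[1:]:
        alpha = [row[j] * sum(alpha[i] * trans[i][j] for i in range(n))
                 for j in range(n)]
    total = sum(alpha)
    return math.log(total) if total > 0.0 else float("-inf")

def loglik_scaled(likes, start, trans):
    """Normalise at every step and accumulate the log of the scale factors."""
    n = len(start)
    prior, loglik = list(start), 0.0
    for row in likes:
        joint = [prior[j] * row[j] for j in range(n)]
        c = sum(joint)
        loglik += math.log(c)
        post = [v / c for v in joint]
        prior = [sum(post[i] * trans[i][j] for i in range(n)) for j in range(n)]
    return loglik

def logsumexp(xs):
    m = max(xs)
    return m + math.log(sum(math.exp(x - m) for x in xs))

def loglik_logspace(likes, start, trans):
    """Work with log-probabilities throughout, combining them via logsumexp."""
    n = len(start)
    log_alpha = [math.log(start[j]) + math.log(likes[0][j]) for j in range(n)]
    for row in likes[1:]:
        log_alpha = [
            math.log(row[j])
            + logsumexp([log_alpha[i] + math.log(trans[i][j]) for i in range(n)])
            for j in range(n)
        ]
    return logsumexp(log_alpha)

# Made-up inputs: 400 steps with small emission likelihoods in both states.
START = [0.5, 0.5]
TRANS = [[0.9, 0.1], [0.2, 0.8]]
LIKES = [[1e-3, 2e-3]] * 400

naive = loglik_naive(LIKES, START, TRANS)
scaled = loglik_scaled(LIKES, START, TRANS)
logspace = loglik_logspace(LIKES, START, TRANS)
```

The naive version collapses to negative infinity here because the running product falls below the smallest representable float, while the other two agree. The scaled version does fewer transcendental function calls per step, which is consistent with it usually being the faster option.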

Finally, validation must match the use case. If the HMM is just an unsupervised descriptive model, likelihood and state interpretability matter. If it drives trading, what matters is whether the filtered probabilities improve out-of-sample decisions after transaction costs and realistic latency. That sounds obvious, but many regime-detection projects stop at making persuasive historical state plots.

Conclusion

Market regime detection with Hidden Markov Models is an attempt to infer a persistent but unobserved market state from noisy data. The reason it works, when it works, is simple: markets often cluster into conditions that both persist and leave statistical fingerprints in returns, volatility, and related features.

The reason it is hard is equally simple: those hidden states are not physical objects waiting to be discovered. They are model-based summaries of changing market behavior, and what you find depends on your assumptions, features, state count, and validation discipline. Used well, HMMs are less a crystal ball than a structured way to ask, “what kind of market am I probably trading in right now, and how should that change my risk?”

Frequently Asked Questions

There is no single correct number of hidden states; practitioners combine information criteria (AIC/BIC) with stability across refits, interpretability of states, and whether the regime signal improves downstream decisions out-of-sample, because information criteria can favor extra states that only fit noise or heavy tails, not economically meaningful regimes.

EM (Baum–Welch) increases likelihood but only to a local maximum, so results depend strongly on initial values; common mitigations are multiple randomized restarts, careful convergence tolerances, and regularization (e.g., covariance floors) to avoid degenerate solutions.

Filtered probabilities use data only up to time t and are appropriate for real-time trading; smoothed probabilities use the entire sample including future observations and are useful only for historical interpretation or diagnostics because they leak lookahead information.

Standard first-order HMMs imply geometric/exponential state-duration behavior, which can misrepresent regimes whose exit probability depends on age; explicit-duration HMMs can model realistic dwell times but at a steep computational and parameter-cost increase (Rabiner documents order-of-magnitude increases in storage and computation for explicit-duration variants).

Choose observations to match what you mean by a regime: raw returns separate states by mean/variance, while adding realized volatility, rolling std, drawdowns, volume, spreads or correlation proxies will push the model to find regimes defined by volatility, liquidity or cross-asset structure. The feature set effectively defines the regimes the HMM can discover.

HMMs are usually more useful as risk controllers or strategy routers than as direct point predictors: they infer latent environments so you can reduce leverage, switch strategy sleeves, or change stops when stress probability rises, often improving outcome distributions at the cost of some upside in benign periods.

If returns or other observables have regime-dependent serial dynamics, a Markov‑switching autoregression (Hamilton-style) will typically fit better than independent-emission HMMs; if you believe observable covariates should influence switch odds, time-varying transition probabilities let transition rates depend on exogenous signals and make the latent process more responsive.

Watch for lookahead contamination (using smoothed states in backtests), overfitting via too many states, structural instability of learned regimes, and distributional misspecification; these failure modes commonly inflate historical performance and undermine live robustness unless addressed by proper validation and conservative design.

Gaussian emissions are convenient but can miss skew and fat tails; Gaussian‑mixture emissions or heavier‑tailed families capture financial return features better but increase estimation complexity and can make state interpretation harder, so there is a tradeoff between realism and robustness.
