What Are Alternative Data Sources in Trading?

Learn what alternative data sources are in trading, how they become signals, why they can add edge, and where bias, drift, and compliance risk arise.

Introduction

Alternative data sources are nontraditional datasets that traders and investors use to understand companies, sectors, and economies before that information appears clearly in financial statements, filings, or management commentary. The attraction is simple: markets price expectations, not accounting history. If you can observe pieces of real-world activity earlier or more precisely than everyone else, you may estimate revenue, demand, user growth, inventory stress, or competitive change before the consensus fully catches up.

That promise is easy to state and much harder to realize. A credit-card panel is not the economy. A scraped website is not a company’s revenue ledger. A satellite image is not earnings. The central fact about alternative data is that it is indirect observation. You are usually not measuring the thing you want. You are measuring a trace left by the thing you want, then asking whether that trace is stable enough, timely enough, and clean enough to support a trading decision.

That is why alternative data is best understood not as a list of exotic datasets, but as a process of inference. The trader’s problem is not “How do I get unusual data?” It is “How do I convert a noisy, partial, sometimes biased external footprint into a point-in-time estimate of an economically meaningful variable?” Once that clicks, the rest follows: why these datasets can be valuable, why they decay as more firms adopt them, why engineering matters so much, and why provenance and compliance are not side issues but part of whether the signal is real.

What counts as alternative data in trading?

In investment practice, alternative data usually means data used for investment decisions that sits outside traditional investor sources such as financial statements, company filings, presentations, and press releases. Industry explainers and research briefs converge on that basic definition. The important part is not merely that the data is “new” or “different.” It is that the data arrives from a different observation channel.

Traditional fundamental data is largely company-authored or company-audited. Alternative data is often generated as a byproduct of some other activity: consumer purchases, website visits, mobile app usage, shipping flows, location pings, online text, or sensor output. That difference changes the logic of analysis. With a company filing, the question is often interpretation. With alternative data, the first question is whether the measurement process itself is valid.

This is why investors care so much about speed, granularity, and coverage. A filing may tell you what happened last quarter at the company level. A card dataset may tell you what seems to be happening this week in a specific merchant category among a defined consumer panel. A web-traffic series may show changing interest patterns daily. An app-intelligence feed may show downloads or engagement trends much earlier than management commentary. The gain is not magical foresight. The gain is a potentially earlier, narrower, and more operational view of reality.

That narrower view can still be powerful because many market-moving questions are not abstract. They are operational. Is store traffic accelerating? Are consumers trading down? Is a subscription app losing momentum? Is a marketplace gaining share? If you can estimate those underlying motions before the earnings release, you may form a better prior about the release, or about how the market is mispricing it.

How do traders convert observable traces into economic estimates?

The core mechanism of alternative data is straightforward: observe a proxy, calibrate it against a target, then use the relationship to forecast or nowcast the target. Here the word proxy matters. A proxy is not the same thing as the underlying variable. It is a measurable footprint that tends to move with it.

Suppose you want to estimate a retailer’s quarterly revenue before earnings. You do not have the company’s internal sales ledger. What you might have instead is a panel of debit and credit card transactions associated with that merchant. Those transactions are only a slice of reality. They may underrepresent cash users, certain regions, older demographics, or specific issuers. Returns may be handled inconsistently. Merchant mapping may be messy. But if the panel is large enough, sufficiently stable through time, and reasonably representative, then changes in panel spend may help you infer changes in company revenue.

The same structure appears across many alternative datasets. Web data measures attention or product availability rather than sales directly. Geolocation measures physical presence rather than purchases. App usage measures digital engagement rather than monetization. Satellite imagery measures visible activity rather than output or profit. In every case, the investor is building a bridge from observable trace to hidden business variable.

That bridge has three parts. First, the raw data must be made usable. Public and sensor-derived data are often difficult to access, noisy, and unstructured; their value frequently comes from collecting, cleaning, standardizing, and aggregating them. Second, the processed data must be mapped to an economic target such as same-store sales, active users, churn, inventory, or pricing power. Third, the relationship must be validated in a point-in-time way so that the model only uses information that would actually have been available at the moment of the hypothetical trade.

If any of those three parts fails, the signal is usually illusory. The raw feed may look impressive, but without stable preprocessing, economic interpretation, and proper validation, it is just an interesting dataset.

Which alternative datasets are most useful for investment signals?

| Dataset | Closeness to target | Update speed | Cost/complexity | Typical stability | Best for |
|---|---|---|---|---|---|
| Card transactions | Very close to revenue | Daily–weekly | Medium–High | High if panel stable | Retail revenue tracking |
| Web / app data | Close to demand/attention | Daily | Low–Medium | Medium | Traffic and share estimates |
| Geolocation | Proxy for visits | Near real‑time | Medium | Low–Medium | Foot‑traffic trends |
| Satellite imagery | Proxy for visible activity | Weekly–monthly | High | Low | Supply‑chain & inventory |
| Social / sentiment | Proxy for attention | Real‑time | Low | Low | Short‑term attention spikes |

Not all alternative data is equally useful, and the reason is mechanical rather than aesthetic. A good dataset sits close to the economic variable of interest, updates fast enough to matter, and remains stable enough that yesterday’s relationship still means something tomorrow.

That is one reason transaction data and web data are so widely used. Industry surveys summarized by AlternativeData.org describe credit/debit card and web data as among the most utilized and most insightful categories, with card data also the highest grossing. This pattern makes sense. Consumer transaction data can sit relatively close to revenue for certain businesses. Web data can sit relatively close to demand, share of attention, assortment changes, or pricing behavior for internet and consumer businesses. These are still proxies, but often useful ones.

By contrast, some sensor-based categories can be more fragile than their imagery suggests. Geolocation may suffer from limited granularity, coverage gaps, and collection-method differences. Satellite data can be expensive, variable in quality, and heavily dependent on image processing before it becomes analytically useful. The issue is not that these sources never work. It is that the path from raw measurement to reliable economic estimate is longer and more model-dependent.

A useful way to think about dataset quality is with three distances. There is the distance from the data to the business variable, the distance from collection to clean analysis, and the distance from signal discovery to crowding. When all three distances are short, the dataset is often more valuable. When they are long, the work multiplies and the edge can evaporate before you finish building it.

How can card-transaction data be used to estimate company earnings?

Imagine a fund tracking a large restaurant chain ahead of quarterly results. The team licenses an aggregated card-spend dataset from a vendor. At first glance, the dataset seems simple: each day brings spend totals associated with the merchant. But the numbers are not yet an estimate of reported revenue. They are just panel activity.

The first job is to understand what is actually being measured. Does the panel cover debit, credit, or both? Is it based on a stable panel of consumers, a changing panel, or a bank-specific population? Are refunds netted? How are franchise locations mapped? Are delivery apps counted as merchant spend or lost into intermediaries? Without answers, apparent trends may be artifacts of the vendor’s collection method rather than changes in the business.

Once the collection process is understood, the team normalizes the series. Calendar effects matter because spending around holidays, weekends, and payday cycles can move sharply even when underlying demand is unchanged. Weather shocks can distort specific regions. New store openings can make total spend rise even if same-store demand weakens. The team therefore creates features tied to comparable periods, regional mixes, and merchant-consistency rules so that the time series better reflects the question it is supposed to answer.
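The new-store effect described above can be sketched in a few lines. This is a minimal illustration, assuming a hypothetical row layout of `(store_id, period, spend)` and made-up numbers; real vendor feeds will differ. The function name `same_store_growth` and the period labels are assumptions introduced for the example.

```python
from collections import defaultdict


def same_store_growth(rows, current_period, prior_period):
    """Spend growth using only stores observed in both periods.

    rows: iterable of (store_id, period, spend) tuples (hypothetical layout).
    """
    spend = defaultdict(lambda: defaultdict(float))
    for store_id, period, amount in rows:
        spend[store_id][period] += amount

    # Keep only stores that have data in both the current and prior period.
    comparable = [
        store for store, by_period in spend.items()
        if current_period in by_period and prior_period in by_period
    ]
    prior = sum(spend[s][prior_period] for s in comparable)
    if prior <= 0:
        raise ValueError("no comparable prior-period spend")
    current = sum(spend[s][current_period] for s in comparable)
    return current / prior - 1.0


# Illustrative numbers only.
rows = [
    ("A", "2023-W10", 100.0), ("A", "2024-W10", 95.0),
    ("B", "2023-W10", 200.0), ("B", "2024-W10", 190.0),
    ("C", "2024-W10", 80.0),  # new store with no prior-year comparison
]
total_growth = (95.0 + 190.0 + 80.0) / (100.0 + 200.0) - 1.0
same_store = same_store_growth(rows, "2024-W10", "2023-W10")
```

In this toy data, total spend rises by roughly 22% while comparable-store spend falls by 5%, which is the kind of divergence the normalization step is meant to expose.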

Only then does modeling begin. The team compares historical panel spend changes with subsequently reported company revenue and same-store sales. If the relationship has been stable, they may build a mapping from panel growth to expected reported growth. But that mapping is still conditional: it depends on the panel staying representative, on the vendor not changing methodology, and on the company not shifting channel mix in a way that breaks the old relationship.
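One simple way to express such a mapping is an ordinary least-squares line from panel growth to reported growth. The sketch below is not a description of any particular fund's method; the numbers are invented and were constructed to lie exactly on a line so the arithmetic is easy to follow. Real calibration data would be noisy and would need out-of-sample checks.

```python
def fit_ols(x, y):
    """Fit y = intercept + slope * x by ordinary least squares."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x has no variation; slope is undefined")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical history: quarterly panel spend growth vs. reported revenue growth.
panel_growth = [0.02, 0.05, -0.01, 0.08, 0.04]
reported_growth = [0.015, 0.03, 0.0, 0.045, 0.025]

slope, intercept = fit_ols(panel_growth, reported_growth)

# Nowcast for the current quarter, given 6% panel growth observed so far.
nowcast = intercept + slope * 0.06
```

A slope below one, as in this made-up history, would mean the panel overstates swings in reported growth. The fitted line is only as good as the conditions listed above: a representative panel, unchanged vendor methodology, and a stable channel mix.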

Now the signal becomes tradable. Two weeks before earnings, the processed panel suggests materially stronger same-store sales than consensus expects. That does not guarantee a winning trade, because the market may already know something similar, margins may disappoint, and management guidance may dominate the quarter. But it is at least a causal chain: consumer spend panel -> calibrated estimate of business performance -> view on likely earnings surprise -> trade expression. That is what a real alternative-data workflow looks like.
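The step from calibrated estimate to "view on likely earnings surprise" can be made concrete by scaling the gap between the estimate and consensus by how wrong the estimate has been historically. This is one possible convention, not a standard the article prescribes; the function name, the use of root-mean-square error, and all numbers are assumptions for illustration.

```python
import math


def surprise_zscore(nowcast, consensus, past_errors):
    """Gap between nowcast and consensus, in units of historical nowcast error.

    past_errors: prior (nowcast - reported) differences for the same model.
    """
    if not past_errors:
        raise ValueError("need at least one historical error")
    rmse = math.sqrt(sum(e * e for e in past_errors) / len(past_errors))
    if rmse == 0:
        raise ValueError("historical errors are all zero; cannot scale")
    return (nowcast - consensus) / rmse


# Illustrative values: nowcast 5% growth, consensus 3%, four past model errors.
z = surprise_zscore(0.05, 0.03, [0.01, -0.01, 0.02, -0.02])
```

A gap that is small relative to the model's own track record is weak evidence of a surprise, however confident the point estimate looks.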

Why does data engineering determine whether an alternative-data edge survives?

People often speak about alternative data as if the edge comes from discovering a clever source. In practice, much of the edge comes from infrastructure. Raw datasets are messy, late, revised, inconsistent across entities, and easy to misuse. If you cannot timestamp them correctly, join them point-in-time, and monitor quality drift, you do not really have a signal pipeline.

This is where machine-learning and data-platform practices become central. Practitioner tools emphasize turning large, heterogeneous data into features, labels, and backtests rather than stopping at collection. Financial ML workflows are built around structuring data so it is consumable by models, engineering features, generating labels, and backtesting while avoiding false positives. The point is not that every alternative-data strategy must use advanced ML. The point is that these datasets behave like a data-engineering problem before they become an investment problem.

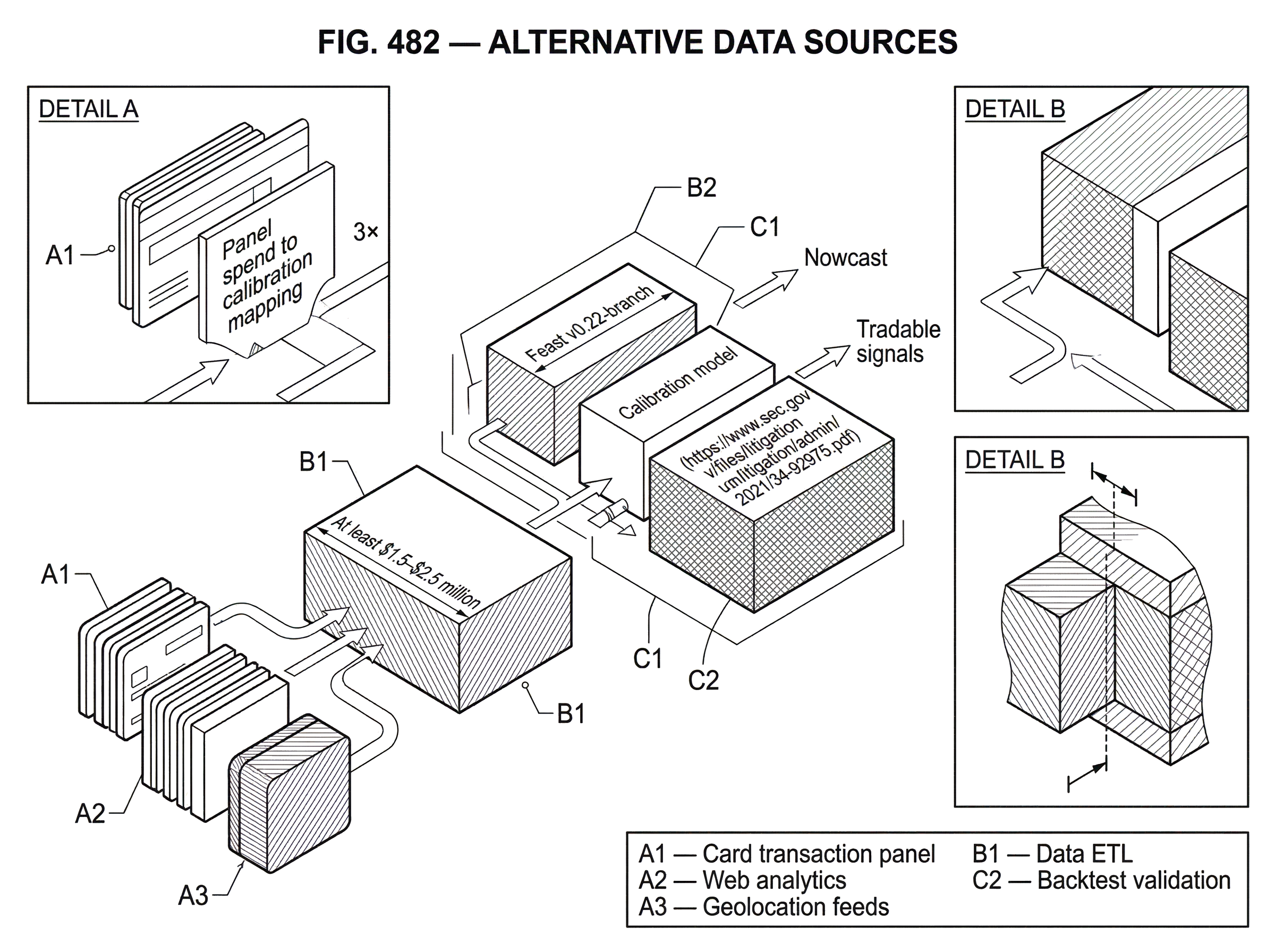

Feature stores and validation systems illustrate the same principle. Systems such as Feast are designed to manage historical and online features and, crucially, to create point-in-time correct training sets so models do not accidentally use future information. That matters enormously in trading. If a feature table joins a revised dataset, or aligns a vendor field using publication time instead of actual availability time, the backtest can look excellent while being impossible in live trading. The problem is not subtle. It is one of the most common ways researchers fool themselves.
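The idea behind a point-in-time join can be shown without any feature-store library. The sketch below does not use Feast's API; it is a plain-Python illustration that assumes each vendor record carries a hypothetical `available_at` date, meaning the date the firm could actually have used the value, and that revisions arrive as new records for the same period.

```python
from datetime import date

# (period, available_at, value): hypothetical vendor records, including a revision.
records = [
    ("2024-W01", date(2024, 1, 10), 100.0),
    ("2024-W02", date(2024, 1, 17), 104.0),
    ("2024-W01", date(2024, 1, 24), 97.0),   # later revision of W01
    ("2024-W03", date(2024, 1, 24), 110.0),
]


def as_of_snapshot(records, decision_date):
    """Return {period: value} as it was knowable on decision_date."""
    snapshot = {}
    for period, available_at, value in sorted(records, key=lambda r: r[1]):
        if available_at > decision_date:
            break  # everything after this point was not yet available
        snapshot[period] = value  # later-available records overwrite earlier ones
    return snapshot


known_on_jan_20 = as_of_snapshot(records, date(2024, 1, 20))
known_on_jan_24 = as_of_snapshot(records, date(2024, 1, 24))
```

A backtest dated January 20 should see the unrevised W01 value of 100.0 and no W03 value at all. Joining against the latest table instead would silently hand the model the revised 97.0.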

Data validation tools serve a different but equally necessary role. Alternative data vendors change schemas, collection methods, panels, and field definitions. A missing region, a shifted timestamp convention, or a reclassified merchant can quietly destroy signal quality. Monitoring systems such as Great Expectations exist because data failure in production rarely announces itself as an exception; it often appears as a plausible-looking but structurally wrong input. In alternative data, that is especially dangerous because there is often no clean ground truth available in real time.
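The kinds of checks involved can be sketched in plain Python. This is not the Great Expectations API; it is a hand-rolled illustration, and the field names, region names, and the 20% row-drop threshold are all assumptions chosen for the example.

```python
def validate_batch(rows, required_fields, expected_regions,
                   previous_row_count, max_drop=0.2):
    """Return a list of human-readable issues found in a vendor batch."""
    issues = []

    # Schema check: every row should carry the fields downstream code relies on.
    for i, row in enumerate(rows):
        missing = set(required_fields) - set(row)
        if missing:
            issues.append(f"row {i}: missing fields {sorted(missing)}")

    # Coverage check: a silently dropped region looks like a demand change.
    seen = {row.get("region") for row in rows}
    absent = set(expected_regions) - seen
    if absent:
        issues.append(f"regions absent from batch: {sorted(absent)}")

    # Volume check: a sharp fall in row count often signals a panel change.
    if previous_row_count and len(rows) < (1 - max_drop) * previous_row_count:
        issues.append(
            f"row count fell from {previous_row_count} to {len(rows)}"
        )
    return issues


bad_batch = [
    {"merchant_id": "m1", "region": "north", "spend": 10.0},
    {"merchant_id": "m2", "region": "north"},  # spend field missing
]
bad_issues = validate_batch(
    bad_batch, {"merchant_id", "region", "spend"}, {"north", "south"},
    previous_row_count=10,
)
```

None of these conditions would raise an exception in a naive pipeline, which is why they need to be asserted explicitly before the data reaches a model.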

What limits the reliability of alternative-data signals (bias, drift, decay)?

| Limit | What it is | Common symptom | How to detect | Mitigation |
|---|---|---|---|---|
| Panel bias | Unrepresentative sample | Estimates diverge from filings | Benchmark vs known series | Reweight or augment panels |
| Drift | Changing proxy-target relationship | Backtest performance falls | Rolling correlation checks | Retrain and revalidate models |
| Crowding / decay | Alpha erodes with adoption | Signal priced earlier | Monitor crowding indicators | Seek orthogonal sources |

The biggest misunderstanding about alternative data is that more data automatically means more truth. It does not. It means more observations generated by a particular mechanism, with that mechanism’s own blind spots.

Panel bias is the clearest example. AlternativeData.org notes that panel-based consumer datasets such as email receipt data can be biased and often smaller than card panels, limiting representativeness. Even a large panel can become misleading if the composition shifts. If younger users adopt a payment method faster than older users, your apparent consumer trend may partly reflect changing panel mix rather than changing brand strength. If a web panel underweights mobile traffic, a desktop-heavy competitor may appear stronger than it is.
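A small worked example shows how a mix shift can manufacture a trend, and how reweighting to fixed population shares removes it. This is a simplified post-stratification sketch with invented numbers; the two age groups and the population shares are assumptions, and real reweighting would use many more cells and externally sourced benchmarks.

```python
def raw_mean_spend(panel):
    """Average spend per panelist, ignoring panel composition."""
    total_spend = sum(spend for _, spend in panel.values())
    total_people = sum(n for n, _ in panel.values())
    return total_spend / total_people


def reweighted_mean_spend(panel, population_share):
    """Average spend per person, weighting each group by its population share."""
    return sum(
        population_share[group] * spend / n
        for group, (n, spend) in panel.items()
    )


# {group: (number of panelists, total spend)}; per-person spend is unchanged
# in both groups, but the panel adds many more younger users in the second period.
prior = {"younger": (100, 5000.0), "older": (100, 3000.0)}
current = {"younger": (300, 15000.0), "older": (100, 3000.0)}
population_share = {"younger": 0.4, "older": 0.6}  # assumed fixed benchmark

raw_growth = raw_mean_spend(current) / raw_mean_spend(prior) - 1.0
adjusted_growth = (
    reweighted_mean_spend(current, population_share)
    / reweighted_mean_spend(prior, population_share)
    - 1.0
)
```

Here the raw panel shows 12.5% growth in spend per panelist even though nobody's behavior changed; the reweighted series shows zero growth.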

Then there is drift. The world changes, companies change, and vendors change. A proxy that once tracked revenue may stop doing so after a business launches a new channel, bundles products differently, or routes transactions through a marketplace. Geolocation that once tracked store visits may degrade if operating systems tighten location permissions. A scrape-based dataset may weaken when website layout changes or anti-bot defenses improve. Because alternative data depends on indirect observation, the mapping from trace to target is never permanent.
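A rolling correlation between proxy and target is one basic way to watch for this kind of breakdown. The sketch below uses invented series in which the relationship holds at first and then reverses; the window length of 4 and the alert threshold of 0.5 are arbitrary assumptions, not recommended settings.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation undefined for a constant series")
    return cov / math.sqrt(var_x * var_y)


def rolling_correlation(x, y, window):
    """Correlation over each consecutive window of the given length."""
    return [
        pearson(x[i - window:i], y[i - window:i])
        for i in range(window, len(x) + 1)
    ]


# Illustrative series: the target tracks the proxy, then decouples.
proxy = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
target = [1.1, 2.0, 2.9, 4.2, 5.0, 4.0, 3.1, 2.0]

correlations = rolling_correlation(proxy, target, window=4)
flagged = [i for i, r in enumerate(correlations) if r < 0.5]
```

A flag like this does not say why the mapping broke, only that the calibration should not be trusted until someone has checked for a channel shift, a vendor change, or a collection problem.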

Finally, successful signals attract capital. Deloitte notes that some early alternative datasets became less robust as they proliferated. This is exactly what market competition would predict. If many funds extract the same predictive pattern from the same source, the market will begin to price that pattern earlier. The source does not become useless, but the alpha shrinks. Over time, what was once a differentiated trading edge can become just another input to consensus expectations.

How do compliance and provenance affect the usability of alternative data?

| Risk | Why it matters | Example | Typical mitigation |
|---|---|---|---|
| MNPI exposure | Creates insider‑trading liability | App Annie post‑model edits | Vendor diligence; trade walls |
| Poor provenance | Unknown collection or licensing | Undisclosed scraping methods | Demand lineage; contractual reps |
| Privacy risk | Regulatory and reputational harm | Re‑identification of users | Pseudonymisation; legal review |
| Vendor change | Sudden method/schema shifts | Panel or schema updates | Monitoring; contractual change notices |

A dataset is not truly useful if you cannot rely on how it was collected, licensed, and transformed. This is not merely a legal footnote. It is a question of whether the numbers mean what you think they mean, and whether you can safely continue using them.

The SEC’s Division of Examinations has warned that advisers using alternative data sometimes lacked policies and procedures reasonably designed to address the risk of receiving or using material nonpublic information, or MNPI. The staff also observed ad hoc and inconsistent diligence of alternative-data providers. That tells you something important: alternative data is heterogeneous, and not all risk lies in the model. Some of it lies upstream in sourcing, contractual terms, and collection practices.

The App Annie enforcement action makes the point sharper. App Annie sold app-performance estimates that more than 100 trading firms used in investment decisions. According to the SEC’s order, the firm represented that estimates came from a statistical model using aggregated and anonymized data with consent, but in fact used non-aggregated, non-anonymized confidential data to alter estimates through manual and automated processes. The lesson is not just “misconduct exists.” It is that if you do not understand the vendor’s data lineage and controls, you may think you bought a model output when in substance you bought something tainted by confidential source data.

Data provenance matters for more ordinary datasets too. Deloitte frames data provenance risk as the risk tied to the origin and gathering of the data: whether it was procured in accordance with applicable terms and conditions from the originator. Web scraping is a good example of why this is delicate. Official privacy guidance makes clear that using web-scraped personal data can raise serious transparency and lawful-basis problems, and regulators encourage firms to seek lower-risk alternatives where possible. Even where a dataset is commercially available, availability is not the same as clean provenance.

For personal-data-heavy sources, pseudonymisation helps but does not magically remove obligations. European guidance emphasizes that pseudonymised data can still remain personal data if attribution remains possible with additional information. In plain terms, stripping obvious identifiers may reduce risk, but it does not convert a questionable collection practice into a harmless dataset. For a trading firm, that means privacy controls and vendor diligence are not separate from research quality; they are part of the precondition for using the data at all.
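The point about attribution can be illustrated with a common pseudonymisation technique, a keyed hash of the identifier. This is a sketch under stated assumptions: the key, the identifiers, and the function name are hypothetical, and nothing here is legal guidance. It only shows why whoever holds the "additional information" (here, the key plus a list of candidate IDs) can re-link tokens to people.

```python
import hashlib
import hmac


def pseudonymise(user_id: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 token (HMAC)."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


key = b"vendor-held-secret"          # hypothetical key held by the data vendor
token = pseudonymise("user-123", key)

# Anyone with the key and a list of candidate IDs can rebuild the mapping.
candidates = ["user-001", "user-123", "user-999"]
relink_table = {pseudonymise(c, key): c for c in candidates}
reidentified = relink_table.get(token)
```

The token itself reveals nothing obvious, yet the mapping is fully recoverable by the key holder, which is why the data can still count as personal data.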

What does it take (and cost) to operationalize alternative data at scale?

The outside view of alternative data often imagines a trader subscribing to a special feed and immediately gaining an edge. Institutional reality is much costlier. AlternativeData.org reports rapid growth in buy-side alternative-data teams and suggests a data team typically costs at least $1.5–$2.5 million to stand up. That magnitude is plausible because the job is multidisciplinary.

A serious program needs research talent that can ask economically sensible questions, data engineers who can ingest and normalize unusual feeds, ML or statistical practitioners who can test fragile relationships, and compliance and procurement staff who can diligence vendors and usage rights. It also needs storage, lineage, metadata, quality monitoring, and reproducible research workflows. Once you see the actual pipeline, the staffing cost no longer looks surprising.

Third-party risk management follows naturally from that complexity. Banking guidance on third-party relationships emphasizes a lifecycle approach and proportional oversight based on criticality and risk. The same logic applies to alternative-data vendors. Some feeds are replaceable convenience inputs. Others sit directly in the investment process. The more a vendor’s data influences trading decisions, the more deeply a firm needs to understand collection practices, controls, revisions, outages, and failure modes.

How do investors use alternative data for nowcasting and near‑term state estimation?

At a deeper level, alternative data exists because traditional financial information is sparse relative to the pace of the economy. Companies report periodically; businesses operate continuously. Investors therefore look for ways to fill the gap between reporting dates with observable traces of real activity.

That is why alternative data is used for nowcasting as much as for prediction. Often the aim is not to foresee a distant future. It is to estimate the present more accurately than public consensus does. What are sales likely to be this quarter? Is user growth inflecting now? Has pricing changed this week? Has foot traffic softened since a competitor launched a promotion? These are near-term state-estimation problems.

This also explains the connection to machine learning for market prediction. ML is often the tool that helps turn raw traces into stable features and combine multiple weak proxies into a stronger composite estimate. But ML does not solve the core problem by itself. If the data is biased, mis-timestamped, or improperly sourced, a more sophisticated model mostly learns those defects more efficiently.
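Combining weak proxies does not require heavy machinery to illustrate. The sketch below uses inverse-variance weighting, a classical statistical technique rather than machine learning, as the simplest stand-in for the idea. It assumes each proxy gives an unbiased estimate with independent errors and a known error variance; those assumptions rarely hold exactly, and the numbers are invented.

```python
def inverse_variance_combine(estimates):
    """Combine (value, error_variance) pairs, weighting precise ones more.

    Returns the combined value and its variance under the independence assumption.
    """
    if any(var <= 0 for _, var in estimates):
        raise ValueError("error variances must be positive")
    weights = [1.0 / var for _, var in estimates]
    total_weight = sum(weights)
    combined = sum(w * value for w, (value, _) in zip(weights, estimates)) / total_weight
    return combined, 1.0 / total_weight


# Hypothetical growth estimates from three proxies: (estimate, error variance).
proxy_estimates = [
    (0.04, 0.0004),   # card panel
    (0.06, 0.0004),   # web traffic
    (0.02, 0.0016),   # geolocation, noisier
]
combined_estimate, combined_variance = inverse_variance_combine(proxy_estimates)
```

The combined variance is lower than that of any single input only because the errors are assumed independent. If all three proxies share the same bias, for example the same skewed consumer panel, the composite inherits it.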

And it explains the connection to market-data pipelines. Alternative data only becomes usable in trading when it is ingested, normalized, timestamped, versioned, and delivered in a way research and production systems can consume consistently. Without that operational layer, there is no repeatable edge, only ad hoc analysis.

Conclusion

Alternative data sources are best understood as indirect, faster observations of economic reality. Their value comes from seeing traces of business activity before those traces are summarized in traditional disclosures. Their difficulty comes from the same place: a trace is never the thing itself.

The enduring question is not whether a dataset looks novel. It is whether the chain from collection to proxy to forecast to trade is sound. If the data is representative enough, the mapping is stable enough, the timestamps are honest enough, and the provenance is clean enough, alternative data can improve how investors estimate the present and price the near future. If those conditions fail, the dataset is usually just noise wearing the costume of insight.

Frequently Asked Questions

Investors validate a proxy by calibrating it against the target using historical comparisons, normalizing for calendar/region/channel effects, and performing point‑in‑time validation so the model only uses information that would have been available when the trade decision was made.

Because raw alternative feeds are noisy, frequently revised, and heterogeneous, most of the edge comes from infrastructure: correct timestamping, point‑in‑time joins, feature engineering, automated validation and monitoring, and production feature stores rather than merely finding a novel source.

Common failure modes include panel bias (unrepresentative samples), vendor methodology changes, mis‑timestamping or improper joins that introduce look‑ahead, channel shifts by the company that break historical mappings, and quiet schema or coverage changes that destroy signal quality.

Signals decay both because the underlying proxy relationship can drift (e.g., companies change distribution, or OS privacy settings change what can be collected) and because widespread adoption crowds the edge. Deloitte notes that some early alternative datasets became less robust as they proliferated.

Key compliance risks include receiving or using material nonpublic information without adequate policies (SEC EXAMS found firms lacking adequate procedures), poor vendor diligence or opaque provenance (App Annie showed how hidden origins can taint estimates), and privacy/licensing issues tied to personal or scraped data.

Pseudonymisation reduces but does not eliminate GDPR risk: according to EDPB guidance, pseudonymised data can still be personal data if attribution is possible with additional information, so further technical and organisational measures are required.

Operationalizing institutional‑grade alternative data is costly because it requires multidisciplinary teams (research, engineering, compliance), storage and lineage systems, monitoring and reproducible workflows; the article cites industry reports that a data team typically costs at least $1.5–$2.5 million to stand up.

Web scraping raises practical legal and privacy issues: UK ICO guidance warns that scraped content may be personal data in context and can create copyright/IP and transparency problems, so firms are encouraged to assess identifiability and consider licensed or lower‑risk alternatives.

Avoid look‑ahead bias by constructing point‑in‑time correct training sets, using feature stores and tooling that preserve historical feature availability, and validating models only on information that would have been known at each backtest date.
