What Is Finite Difference Option Pricing?

Learn how finite difference option pricing solves option PDEs on grids to value American, barrier, Asian, and stochastic-volatility derivatives.

Introduction

Finite difference option pricing is a way to value derivatives by solving the pricing equation numerically on a grid instead of looking for a closed-form formula. That sounds like a technical substitution, but it exists for a simple reason: most options traders care about are not plain European calls and puts under the simplest assumptions. As soon as you add early exercise, barriers, averaging features, jumps, local volatility, or stochastic volatility, the neat analytic formulas often disappear. At that point, you either simulate paths, transform the problem into another numerical form, or solve the pricing partial differential equation approximately.

The core idea is easier than the name suggests. In continuous-time option pricing, the model often tells you that the option value must satisfy a partial differential equation, or in jump models a partial integro-differential equation. A finite difference method replaces the continuous derivatives in that equation with differences between nearby grid points. Instead of asking for an exact function everywhere, you ask for approximate values at many discrete locations in asset price, time, and sometimes additional state variables such as variance. That translation turns a continuous pricing problem into linear algebra and time-stepping.

This is why finite difference methods remain central in practice. They are especially useful when the contract has structure that matters locally in state space: an American exercise boundary, a barrier near spot, a sharp payoff kink at the strike, or a stochastic-volatility state variable that changes how curvature evolves. Libraries such as QuantLib expose this directly through engines like FdBlackScholesVanillaEngine, FdBlackScholesBarrierEngine, FdHestonVanillaEngine, and FdBlackScholesAsianEngine, each with explicit controls for time grids, state grids, damping steps, and scheme choice. Those parameters are not decoration. They are the practical knobs that determine whether the computed price is accurate, unstable, or simply misleading.

Why does option pricing lead to a partial differential equation (PDE)?

The starting point is not numerics but replication. In the Black–Scholes world, if the underlying follows a geometric Brownian motion and markets satisfy the usual frictionless assumptions, you can build a hedged portfolio whose uncertain term cancels. The no-arbitrage condition then implies that the derivative value V(S,t) must satisfy a PDE, where S is the underlying price and t is time. For a one-factor Black–Scholes setting, the unknown is the option value as a function of price and time.
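For reference, here is the one-factor version of that PDE. It is written with a continuous dividend yield q, which is an assumption added for generality:

$$
\frac{\partial V}{\partial t} + \frac{1}{2}\sigma^2 S^2 \frac{\partial^2 V}{\partial S^2} + (r - q)\, S \frac{\partial V}{\partial S} - r V = 0,
\qquad V(S, T) = \text{payoff}(S)
$$

Here σ is the volatility, r the risk-free rate and T the expiry date. The terminal condition is the contract payoff, which is why the numerical solution is marched backward from expiry.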

For a plain European call, that PDE can be solved analytically, which is why the Black–Scholes formula is so famous. But the formula is famous partly because it is special. The PDE framework survives when the closed form does not. If you switch from a European option to an American option, the problem gains an early exercise feature and becomes a free-boundary problem: you must solve not only for the value but also for the boundary separating the continuation region from the exercise region. If you introduce jumps, the PDE becomes a PIDE because future value depends not just on local derivatives but on an integral over jump sizes. If you add stochastic volatility, you typically gain another state variable, so the value depends on both S and variance v, producing a two-dimensional problem.

That is the main reason finite difference pricing exists. It is not a different theory of option value. It is a numerical way to solve the same no-arbitrage pricing equations when exact formulas stop being available.

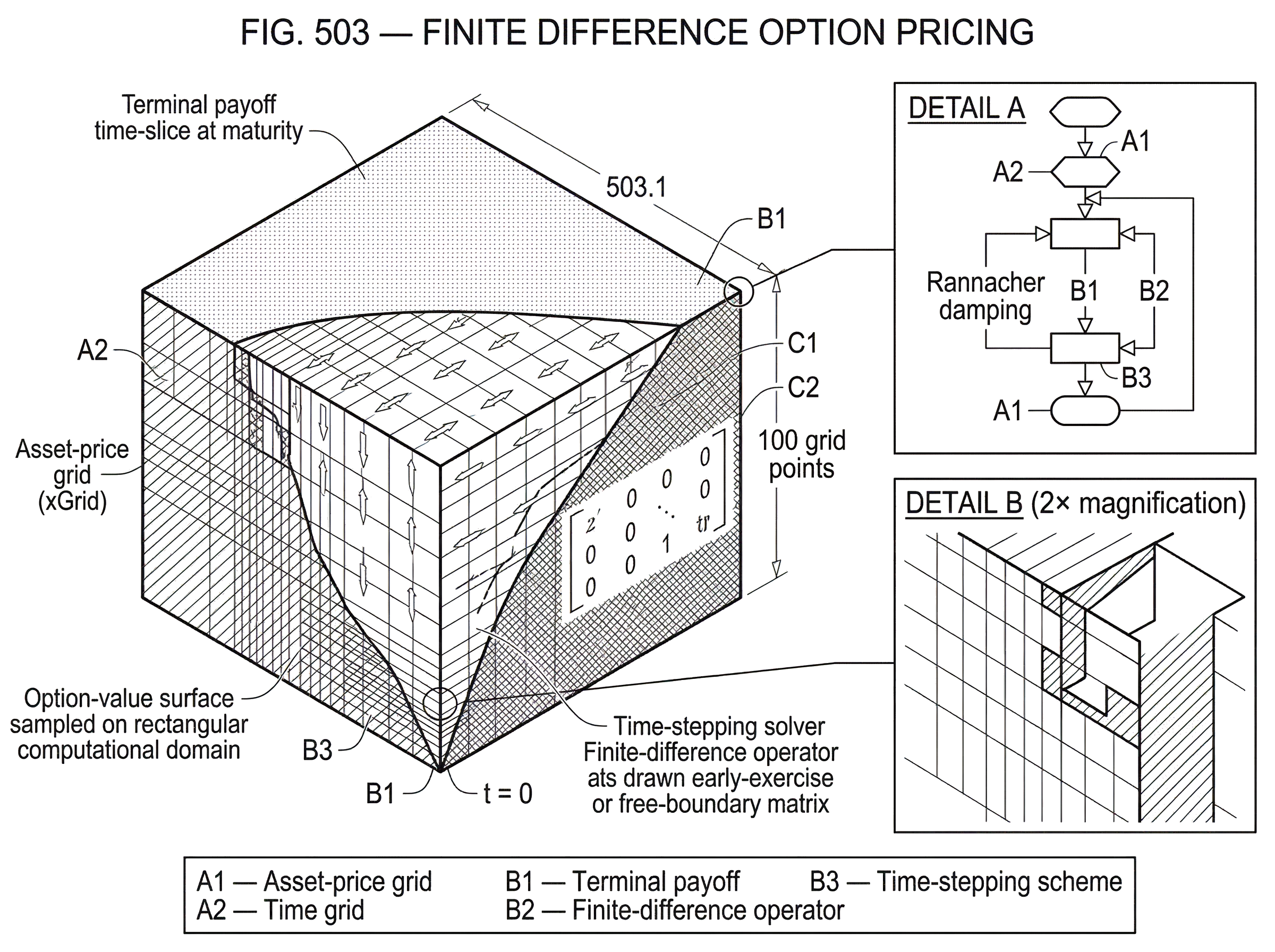

How do finite-difference methods turn the option value surface into a computational grid?

It helps to picture the option value as a surface stretched over state space. In the simplest case, the surface is drawn over asset price on one axis and time on another. Near expiry, the surface must match the payoff. At high or low asset prices, it must satisfy boundary behavior implied by the contract and model. Inside the domain, the PDE tells you how curvature in price and decay in time must balance with drift, discounting, and other model effects.

A finite difference method samples that surface on a mesh. Suppose you place xGrid points along the asset-price direction and tGrid points along time. The PDE contains derivatives like ∂V/∂t, ∂V/∂S, and ∂²V/∂S². On the grid, these become ratios of neighboring values. A first derivative becomes a slope between nearby nodes; a second derivative becomes a curvature estimate from three nearby nodes. Once those local derivatives are replaced by discrete differences, the continuous PDE becomes a system of algebraic equations linking the value at one time layer to the next.
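The sketch below shows the two stencils described above. It assumes a uniform grid with spacing h, and the function names are illustrative. Central differences are exact for a quadratic, so V = S² makes a convenient sanity check.

```python
import numpy as np

def first_derivative(V, h):
    """Central-difference slope dV/dS at the interior nodes of a uniform grid."""
    return (V[2:] - V[:-2]) / (2.0 * h)

def second_derivative(V, h):
    """Three-point curvature estimate d2V/dS2 at the interior nodes."""
    return (V[2:] - 2.0 * V[1:-1] + V[:-2]) / h**2

# Sample a smooth test function on a uniform price grid with spacing h = 1.
S = np.linspace(50.0, 150.0, 101)
h = S[1] - S[0]
V = S**2                      # exact values: dV/dS = 2S, d2V/dS2 = 2

dV = first_derivative(V, h)   # defined only at S[1:-1]
d2V = second_derivative(V, h)
```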

This gives a useful intuition for what the method is doing mechanically. It is not simulating possible paths one by one, as Monte Carlo does. It is evolving the entire value surface across the grid, usually backward from maturity to today. That backward direction matters because the terminal payoff is known. At maturity, a call is worth max(S-K,0). The PDE then tells you how to propagate that known terminal condition backward through time to obtain the present value.

The analogy to a heat equation is often used here, and it explains something real: diffusion in the PDE behaves like smoothing across the price dimension. But the analogy fails if taken too literally, because option pricing also includes discounting, drift terms, early exercise constraints, and sometimes jumps or additional factors. So the right lesson from the analogy is narrow: nearby grid points influence one another through local derivative terms, and that coupling is what the solver exploits.

How does a finite-difference pricer compute a European put and then an American put?

Consider a vanilla put under Black–Scholes assumptions. At expiry, the value is easy: if the strike is K, the payoff is max(K-S,0). On the grid, that means the last time slice is known exactly at every asset-price node. Now move one time step earlier. The PDE says that the value at each interior node must fit a relation involving nearby nodes in price and the value change over the small time interval. If you choose an implicit or semi-implicit scheme, solving one time step means solving a linear system whose coefficients come from volatility, rates, dividends, and the spacing of the grid.

As you repeat this process backward, the put value surface emerges. Far above the strike, the put approaches zero, so the upper boundary is straightforward. Far below the strike, a European put approaches the discounted strike minus the dividend-discounted stock price, which fixes the lower boundary. When you finally step back to t = 0, the node nearest the current spot gives the approximate option price. If you need delta or gamma, you can approximate them from neighboring grid values with additional differences.
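Here is a minimal sketch of this procedure. It makes several assumptions:

- a uniform grid in x = ln S, so the PDE has constant coefficients;
- Dirichlet boundaries at both edges;
- fully implicit (backward Euler) steps;
- dense NumPy linear algebra for brevity, where a real pricer would use a tridiagonal solver.

The helper names `fd_european_put` and `bs_put` are hypothetical. The closed form serves only as a benchmark.

```python
import math
import numpy as np

def bs_put(S, K, r, q, sigma, T):
    """Closed-form Black-Scholes European put, used only as a benchmark."""
    N = lambda z: 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    d1 = (math.log(S / K) + (r - q + 0.5 * sigma**2) * T) / (sigma * math.sqrt(T))
    d2 = d1 - sigma * math.sqrt(T)
    return K * math.exp(-r * T) * N(-d2) - S * math.exp(-q * T) * N(-d1)

def fd_european_put(S0, K, r, q, sigma, T, m=150, nt=200, width=5.0):
    """Fully implicit finite-difference price, delta and gamma of a European put."""
    # Uniform grid in x = ln S, centred so that node m sits exactly at spot.
    dx = width * sigma * math.sqrt(T) / m
    x = math.log(S0) + dx * np.arange(-m, m + 1)
    S = np.exp(x)
    n = len(x)
    # Interior rows of L V = 0.5 sigma^2 V_xx + (r - q - 0.5 sigma^2) V_x - r V.
    mu = r - q - 0.5 * sigma**2
    L = np.zeros((n, n))
    i = np.arange(1, n - 1)
    L[i, i - 1] = 0.5 * sigma**2 / dx**2 - mu / (2 * dx)
    L[i, i] = -sigma**2 / dx**2 - r
    L[i, i + 1] = 0.5 * sigma**2 / dx**2 + mu / (2 * dx)
    dt = T / nt
    # Boundary rows of L are zero, so rows 0 and n-1 of A are identity rows.
    A = np.eye(n) - dt * L
    V = np.maximum(K - S, 0.0)                 # known payoff at expiry
    for k in range(1, nt + 1):
        tau = k * dt                           # time to expiry after this step
        rhs = V.copy()
        rhs[0] = K * math.exp(-r * tau) - S[0] * math.exp(-q * tau)  # deep in the money
        rhs[-1] = 0.0                                                # far out of the money
        V = np.linalg.solve(A, rhs)
    # Greeks from neighbouring nodes, converting x-derivatives into S-derivatives.
    Vx = (V[m + 1] - V[m - 1]) / (2 * dx)
    Vxx = (V[m + 1] - 2 * V[m] + V[m - 1]) / dx**2
    return V[m], Vx / S0, (Vxx - Vx) / S0**2
```

For example, compare `fd_european_put(100.0, 100.0, 0.05, 0.0, 0.2, 1.0)` with `bs_put` on the same inputs. The gap should shrink as `m` and `nt` grow.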

Now change only one contract feature: make the put American. The terminal payoff is the same, but the mechanism changes before expiry. At each backward time step, the continuation value from the PDE must be compared with the immediate exercise value K-S. The American option value is the larger of the two. That simple statement creates the free-boundary problem. Somewhere on the grid, there is a boundary where continuation and exercise meet. You do not know that boundary in advance; the numerical method must uncover it as part of the solution.
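A simple way to impose the early exercise constraint is to take the larger of the continuation value and the exercise value after each implicit step. The sketch below does this. It is a first-order approximation to the complementarity problem, not an exact solve of it. The setup is a log-price grid with no dividends, and the function name and inputs are illustrative.

```python
import math
import numpy as np

def fd_american_put(S0, K, r, sigma, T, m=150, nt=200, width=5.0):
    """Implicit steps, each followed by the projection V = max(V, K - S)."""
    dx = width * sigma * math.sqrt(T) / m
    x = math.log(S0) + dx * np.arange(-m, m + 1)
    S = np.exp(x)
    n = len(x)
    mu = r - 0.5 * sigma**2                    # no dividends in this sketch
    L = np.zeros((n, n))
    i = np.arange(1, n - 1)
    L[i, i - 1] = 0.5 * sigma**2 / dx**2 - mu / (2 * dx)
    L[i, i] = -sigma**2 / dx**2 - r
    L[i, i + 1] = 0.5 * sigma**2 / dx**2 + mu / (2 * dx)
    A = np.eye(n) - (T / nt) * L
    exercise = np.maximum(K - S, 0.0)          # immediate exercise value at every node
    V = exercise.copy()
    for _ in range(nt):
        rhs = V.copy()
        rhs[0], rhs[-1] = K - S[0], 0.0        # exercise is optimal at the lower edge
        continuation = np.linalg.solve(A, rhs)
        V = np.maximum(continuation, exercise) # keep the larger of the two
    # Largest grid price at which exercising is (numerically) optimal today.
    boundary = S[(V <= exercise + 1e-12) & (S < K)].max()
    return V[m], boundary
```

The returned `boundary` is a grid-resolution estimate of the free boundary. It is uncovered as a by-product of the backward sweep rather than specified in advance.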

This is exactly where finite difference methods are attractive. QuantLib's finite-difference Black–Scholes vanilla engine is documented as pricing both European and American payoffs. There is no exact closed-form Black–Scholes formula for the American put, but the grid method can price it, because early exercise becomes a local constraint applied at each step of the backward propagation.

How do terminal and boundary conditions affect finite-difference option prices and Greeks?

A finite difference solver is only as good as the problem you tell it to solve. The PDE is not enough by itself. You also need terminal conditions and boundary conditions.

The terminal condition comes from the payoff at expiry. This sounds trivial until you meet payoffs with discontinuities or kinks. A vanilla option has a kink at the strike. A digital option has a jump in payoff. A barrier option may reintroduce discontinuities at monitoring dates. These non-smooth features are not cosmetic. They generate high-frequency numerical error, which can pollute prices and especially Greeks.

Boundary conditions matter because the computational grid is finite while the theoretical asset-price domain is often unbounded. You must cut the domain off somewhere and specify what happens there. For a call, the large-S boundary reflects the fact that the option behaves increasingly like the stock minus discounted strike. For a put, the value decays at large S. For barrier products, the barrier itself may act like an interior condition where the option is knocked out or transformed. A poor boundary treatment can contaminate the whole solution, especially if the interesting region sits too close to the edge of the chosen grid.
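Take European vanilla options on a truncated domain [0, S_max], assuming a continuous dividend yield q. The usual Dirichlet conditions are:

$$
\begin{aligned}
\text{call:}\quad & V(0,t) = 0, && V(S_{\max},t) \approx S_{\max}\,e^{-q(T-t)} - K\,e^{-r(T-t)},\\
\text{put:}\quad & V(0,t) = K\,e^{-r(T-t)}, && V(S_{\max},t) \approx 0.
\end{aligned}
$$

An American put would instead use V(0, t) = K at the lower edge, because immediate exercise is optimal there.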

This is why practical engines expose grid-size controls like tGrid, xGrid, and in higher dimensions vGrid or aGrid. Those parameters define not just resolution but the whole numerical domain in which the contract is being approximated.

Explicit vs implicit vs Crank–Nicolson: which time-stepping scheme should I use for option pricing?

| Scheme | Stability | Temporal accuracy | Spatial sharpness | Typical cost | Best for |
|---|---|---|---|---|---|
| Explicit | Conditional (CFL-type time-step limit) | First-order | Can oscillate near kinks | Low per step | Small grids, short maturities |
| Implicit (Backward Euler) | Unconditionally stable | First-order | Numerical diffusion dampens features | Linear system solve per step | Stiff problems, large time steps |
| Crank–Nicolson | Unconditionally stable, but prone to oscillation | Second-order for smooth data | Sharp for smooth problems; oscillates at discontinuities | Linear system solve per step | Smooth payoffs, moderate stiffness |

Once you discretize space, you still must decide how to step through time. This is where scheme choice enters. In finance, common finite difference time-stepping choices include explicit methods, implicit methods, and Crank–Nicolson, along with more advanced splitting and Runge–Kutta-type schemes.

An explicit method computes the next time layer directly from the current one. It is conceptually simple, but its stability restriction can be severe. If the time step is too large relative to the square of the spatial step (scaled by volatility), errors grow without bound and the method blows up. Halving the spatial step therefore forces a roughly four-times-smaller time step. That makes explicit schemes less attractive for many production pricing tasks.
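To see the stability restriction concretely, here is a sketch of an explicit scheme in x = ln S for a European put. It assumes no dividends, and the grid spacing, width and parameter values are illustrative. For the diffusion term alone the limit is roughly Δt ≤ Δx²/σ²; drift and discounting shift it slightly. The function name is hypothetical.

```python
import math
import numpy as np

def explicit_put(nt, m=100, dx=0.01, S0=100.0, K=100.0, r=0.05, sigma=0.2, T=1.0):
    """Explicit (forward Euler) European put in x = ln S, no dividends.

    Returns the price at spot, the time step used, and the approximate
    diffusion stability limit dx^2 / sigma^2 (drift and discounting ignored).
    """
    x = math.log(S0) + dx * np.arange(-m, m + 1)
    S = np.exp(x)
    mu = r - 0.5 * sigma**2
    lo = 0.5 * sigma**2 / dx**2 - mu / (2 * dx)     # weight on V[i-1]
    di = -sigma**2 / dx**2 - r                      # weight on V[i]
    up = 0.5 * sigma**2 / dx**2 + mu / (2 * dx)     # weight on V[i+1]
    dt = T / nt
    V = np.maximum(K - S, 0.0)
    for k in range(1, nt + 1):
        new = np.empty_like(V)
        # Each interior value comes directly from the previous layer: no solve needed.
        new[1:-1] = V[1:-1] + dt * (lo * V[:-2] + di * V[1:-1] + up * V[2:])
        new[0] = K * math.exp(-r * k * dt) - S[0]   # deep in the money
        new[-1] = 0.0                               # far out of the money
        V = new
    return V[m], dt, dx**2 / sigma**2
```

With dx = 0.01 and σ = 20% the limit is about 0.0025. So `explicit_put(500)` (Δt = 0.002) stays within it, while `explicit_put(300)` (Δt ≈ 0.0033) exceeds it and the highest-frequency grid mode grows at every step.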

An implicit method evaluates the spatial differences at the unknown new time layer, so each step requires solving a linear system (tridiagonal in one dimension). It is usually more stable and better behaved for stiff problems, but numerical diffusion can make the solution overly damped. In pricing terms, it can be robust but less sharp.

Crank–Nicolson sits between these. It averages the explicit and implicit viewpoints and is popular because it is second-order in time under smooth conditions while remaining much more stable than a purely explicit method. That popularity is visible in textbooks and practical libraries alike. But “under smooth conditions” is doing a lot of work. Real option payoffs are often not smooth.
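All three schemes fit one template, the θ-scheme: solve (I − θΔt L) V_new = (I + (1 − θ)Δt L) V_old, where L is the discretised spatial operator. Setting θ = 0 gives the explicit scheme, θ = 1 the fully implicit one, and θ = 1/2 Crank–Nicolson. The sketch below prices a European put in x = ln S. The grid, boundary values, inputs and function name are illustrative assumptions.

```python
import math
import numpy as np

def theta_put(theta, nt, m=100, dx=0.01, S0=100.0, K=100.0, r=0.05, sigma=0.2, T=1.0):
    """European put (no dividends) stepped with the theta-scheme in x = ln S."""
    x = math.log(S0) + dx * np.arange(-m, m + 1)
    S = np.exp(x)
    n = len(x)
    mu = r - 0.5 * sigma**2
    L = np.zeros((n, n))                       # boundary rows stay zero
    i = np.arange(1, n - 1)
    L[i, i - 1] = 0.5 * sigma**2 / dx**2 - mu / (2 * dx)
    L[i, i] = -sigma**2 / dx**2 - r
    L[i, i + 1] = 0.5 * sigma**2 / dx**2 + mu / (2 * dx)
    dt = T / nt
    I = np.eye(n)
    lhs = I - theta * dt * L                   # operator on the unknown layer
    rhs_op = I + (1 - theta) * dt * L          # operator on the known layer
    V = np.maximum(K - S, 0.0)
    for k in range(1, nt + 1):
        rhs = rhs_op @ V
        rhs[0] = K * math.exp(-r * k * dt) - S[0]   # Dirichlet values at the edges
        rhs[-1] = 0.0
        V = np.linalg.solve(lhs, rhs)
    return V[m]
```

One quick way to compare the schemes' time errors is to run `theta_put(0.5, 100)` and `theta_put(1.0, 100)` against the closed-form value. That value is about 5.5735 for S = K = 100, r = 5%, σ = 20%, T = 1.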

The evidence on this point is important. Research and practical case studies show that Crank–Nicolson can behave badly around discontinuities, barriers, and early exercise features. It may generate spurious oscillations in prices and even larger distortions in Greeks. For touch and barrier-type problems, these artifacts can be large enough to matter economically, not just aesthetically.

When and how should you use smoothing and Rannacher damping in finite-difference pricers?

| Treatment | Effect on high-frequency error | Convergence | Cost | Implementation difficulty |
|---|---|---|---|---|
| No smoothing | High-frequency error persists | Reduced or erratic | Lowest | Trivial |
| Smoothing only | Reduces initial noise | Improves spatial convergence | Low | Low |
| Rannacher only | Damps high frequencies | Improves temporal behaviour | Moderate | Moderate |
| Smoothing + Rannacher | Damps and stabilizes | Restores second-order convergence | Higher | Moderate |
| Projection (L2) | Best high-frequency control | Best theoretical convergence | High | High |

The numerical failure mode around non-smooth payoffs has a clear mechanism. A discontinuity or sharp kink injects high-frequency error into the grid representation. Crank–Nicolson does not damp those high-frequency components strongly enough. The result can be oscillatory convergence: as you refine the grid, the price and Greeks do not settle smoothly.

A widely used remedy is to combine payoff smoothing with Rannacher time-stepping. The idea behind Rannacher timestepping is simple: before using Crank–Nicolson, take a small number of fully implicit steps. Those implicit steps damp the troublesome high-frequency components. After that, Crank–Nicolson can proceed on a smoother problem. QuantLib exposes this through the dampingSteps parameter in its finite-difference engines.
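Here is a sketch of Rannacher start-up on a cash-or-nothing call, which pays 1 if S_T > K. It assumes:

- a uniform grid in x = ln S with the strike exactly on the centre node (K = S0);
- dense NumPy solves;
- deliberately coarse time steps relative to the spatial step, which is the regime where Crank–Nicolson's weak damping matters most.

The first two Crank–Nicolson steps are replaced by four fully implicit half-steps. The strike node starts at 1/2, its cell-averaged payoff. The function name and all inputs are illustrative.

```python
import math
import numpy as np

def digital_call(rannacher, nt=25, m=200, dx=0.0075, S0=100.0, K=100.0,
                 r=0.05, sigma=0.2, T=1.0):
    """Cash-or-nothing call stepped with Crank-Nicolson; assumes K == S0.

    With rannacher=True the first two CN steps are replaced by four
    fully implicit half-steps that damp the error injected by the jump.
    """
    x = math.log(S0) + dx * np.arange(-m, m + 1)    # uniform grid in x = ln S
    S = np.exp(x)
    n = len(x)
    mu = r - 0.5 * sigma**2
    L = np.zeros((n, n))
    i = np.arange(1, n - 1)
    L[i, i - 1] = 0.5 * sigma**2 / dx**2 - mu / (2 * dx)
    L[i, i] = -sigma**2 / dx**2 - r
    L[i, i + 1] = 0.5 * sigma**2 / dx**2 + mu / (2 * dx)
    I = np.eye(n)

    def step(V, h, theta, tau):
        rhs = (I + (1 - theta) * h * L) @ V
        rhs[0], rhs[-1] = 0.0, math.exp(-r * tau)   # worthless far below K, discounted cash far above
        return np.linalg.solve(I - theta * h * L, rhs)

    V = (S > K).astype(float)
    V[m] = 0.5                 # strike node: payoff averaged over its cell
    dt, tau = T / nt, 0.0
    n_cn = nt
    if rannacher:
        for _ in range(4):     # four implicit half-steps stand in for two CN steps
            tau += 0.5 * dt
            V = step(V, 0.5 * dt, 1.0, tau)
        n_cn = nt - 2
    for _ in range(n_cn):
        tau += dt
        V = step(V, dt, 0.5, tau)
    return S, V
```

Printing the two value arrays over a few nodes either side of the strike (index 200 by default) is a simple way to look for non-monotone wiggles. The exact strike-node value, e^(−rT)·N(d2), is available as a benchmark.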

The deeper point is that damping alone is often not enough, and smoothing alone is often not enough. Work by Pooley, Vetzal, and Forsyth showed that for discontinuous payoffs, both a smoothing treatment and modified time-stepping are needed to recover clean second-order convergence. They discuss several smoothing approaches, including averaging, mesh shifting, and projection methods. The common purpose is to represent the non-smooth initial data in a way the grid can handle without injecting excessive numerical noise.
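Here is a minimal sketch of the averaging approach on a uniform grid in S with spacing h. Each node's initial value is replaced by the mean of the payoff over its cell [S − h/2, S + h/2]. The helper names are illustrative, and this is a simple instance of averaging rather than a reproduction of any paper's exact formulation. For a vanilla call, the node on the strike gets h/8 instead of 0. For a digital, it gets 1/2.

```python
import numpy as np

def cell_average_call(S, K, h):
    """Mean of max(s - K, 0) over [S - h/2, S + h/2] for each node S."""
    lo = np.maximum(S - 0.5 * h, K)   # only the part of the cell above K contributes
    hi = np.maximum(S + 0.5 * h, K)
    return 0.5 * ((hi - K) ** 2 - (lo - K) ** 2) / h

def cell_average_digital(S, K, h):
    """Fraction of the cell [S - h/2, S + h/2] that lies above the strike."""
    return np.clip((S + 0.5 * h - K) / h, 0.0, 1.0)

S = np.arange(95.0, 106.0)            # nodes 95, 96, ..., 105 with h = 1
smoothed_call = cell_average_call(S, 100.0, 1.0)
smoothed_digital = cell_average_digital(S, 100.0, 1.0)
```

Away from the strike the averaged call equals the payoff itself, because the payoff is linear within those cells. Only the cells that straddle the kink or jump are modified.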

A concrete example makes this vivid. In a discretely monitored barrier option, every monitoring date can reintroduce a discontinuity into the value function. Practical demonstrations using Crank–Nicolson show oscillations appearing after those dates unless Rannacher-type damping is applied repeatedly. With damping, smoothing, and a carefully chosen non-uniform grid, the solution becomes much cleaner, including for gamma. But there is a cost: every damping episode adds extra work and can erode the ideal time-convergence advantage of Crank–Nicolson.
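Here is a sketch of repeated damping for a discretely monitored down-and-out call. It assumes equally spaced monitoring dates, Crank–Nicolson in x = ln S, and dense solves. The barrier need not fall on a grid node, a gap a non-uniform grid would normally close. After every monitoring date the knock-out re-creates a jump. The next `damping` steps are therefore again taken as pairs of implicit half-steps. All names and inputs are hypothetical.

```python
import math
import numpy as np

def discrete_down_out_call(B, n_monitor, nt=60, m=200, dx=0.0075, S0=100.0,
                           K=100.0, r=0.05, sigma=0.2, T=1.0, damping=2):
    """Down-and-out call monitored on n_monitor equally spaced dates (the last is expiry).

    Assumes nt is a multiple of n_monitor and no dividends.
    """
    x = math.log(S0) + dx * np.arange(-m, m + 1)
    S = np.exp(x)
    n = len(x)
    mu = r - 0.5 * sigma**2
    L = np.zeros((n, n))
    i = np.arange(1, n - 1)
    L[i, i - 1] = 0.5 * sigma**2 / dx**2 - mu / (2 * dx)
    L[i, i] = -sigma**2 / dx**2 - r
    L[i, i + 1] = 0.5 * sigma**2 / dx**2 + mu / (2 * dx)
    I = np.eye(n)

    def step(V, h, theta, tau):
        rhs = (I + (1 - theta) * h * L) @ V
        rhs[0], rhs[-1] = 0.0, S[-1] - K * math.exp(-r * tau)
        return np.linalg.solve(I - theta * h * L, rhs)

    dt, tau = T / nt, 0.0
    per = nt // n_monitor                  # time steps between monitoring dates
    V = np.maximum(S - K, 0.0)
    V[S <= B] = 0.0                        # knock-out check at expiry
    since_reset = 0
    for k in range(1, nt + 1):
        if since_reset < damping:          # Rannacher restart after a discontinuity
            for _ in range(2):
                tau += 0.5 * dt
                V = step(V, 0.5 * dt, 1.0, tau)
        else:
            tau += dt
            V = step(V, dt, 0.5, tau)
        since_reset += 1
        if k % per == 0 and k < nt:        # monitoring date strictly before today
            V[S <= B] = 0.0                # the knock-out re-creates a jump at B
            since_reset = 0
    return V[m]
```

A barrier placed below the whole grid (for example `B=1.0`) never knocks out any node. That gives a built-in check against the vanilla Black–Scholes call price.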

Even then, the story is not “problem solved forever.” Practical examples with touch options show that Crank–Nicolson with Rannacher damping can still leave noticeable spikes in delta near barriers. More robust second-order schemes such as TR-BDF2 or Lawson–Morris can behave better in these cases. So the real lesson is not that one famous scheme wins universally. It is that the numerical method must match the structure of the contract.
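The sketch below makes the damping argument concrete. It implements one TR-BDF2 step: a trapezoidal stage followed by a BDF2 stage, with the common choice γ = 2 − √2. It then compares how TR-BDF2 and Crank–Nicolson treat a very stiff mode, a scalar stand-in for a high-frequency grid component. Crank–Nicolson's amplification factor tends to −1 for such modes, so they flip sign without decaying. TR-BDF2 drives them toward zero. Lawson–Morris is not shown, and the function names are illustrative.

```python
import numpy as np

GAMMA = 2.0 - np.sqrt(2.0)

def tr_bdf2_step(A, u, dt):
    """One TR-BDF2 step for du/dt = A u, with A a square ndarray."""
    I = np.eye(A.shape[0])
    g = GAMMA
    # Stage 1: trapezoidal rule over the fraction g of the step.
    u_mid = np.linalg.solve(I - 0.5 * g * dt * A, (I + 0.5 * g * dt * A) @ u)
    # Stage 2: BDF2 using u (start of step) and u_mid.
    c_mid = 1.0 / (g * (2.0 - g))
    c_old = (1.0 - g) ** 2 / (g * (2.0 - g))
    return np.linalg.solve(I - (1.0 - g) / (2.0 - g) * dt * A, c_mid * u_mid - c_old * u)

def cn_step(A, u, dt):
    """One Crank-Nicolson step for du/dt = A u."""
    I = np.eye(A.shape[0])
    return np.linalg.solve(I - 0.5 * dt * A, (I + 0.5 * dt * A) @ u)

stiff = np.array([[-1.0e6]])      # a very stiff mode
one = np.array([1.0])
amp_trbdf2 = tr_bdf2_step(stiff, one, 1.0)[0]
amp_cn = cn_step(stiff, one, 1.0)[0]
```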

How should I place non-uniform grid points to resolve strikes, barriers, and exercise boundaries?

A uniform grid spreads points evenly across the state domain. That is easy to implement but often wasteful. Options usually have localized features: a strike, a barrier, a spot region where Greeks are needed, or an early exercise boundary. Resolution is most valuable where the solution bends sharply.

A non-uniform grid clusters points where the action is. Around a barrier, tighter spacing can capture the steep gradient and prevent large local error. Around the strike, it can better resolve the payoff kink and improve delta and gamma estimates. Practical barrier-option studies show that non-uniform grids can reduce spatial error substantially, especially when combined with damping and smoothing.
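One common way to build such a grid is a sinh-type transformation of a uniform computational coordinate, which concentrates points around a chosen centre. This sketch is for illustration only. The function and parameter names are hypothetical, it is not any specific library's mesher, and the domain [0, 400] and centre 100 are arbitrary.

```python
import numpy as np

def sinh_grid(s_min, s_max, centre, alpha, n):
    """n + 1 points on [s_min, s_max], clustered around `centre`.

    alpha (same units as S) controls concentration: smaller means tighter.
    """
    c1 = np.arcsinh((s_min - centre) / alpha)
    c2 = np.arcsinh((s_max - centre) / alpha)
    xi = np.linspace(0.0, 1.0, n + 1)          # uniform computational coordinate
    return centre + alpha * np.sinh(c1 + (c2 - c1) * xi)

S = sinh_grid(0.0, 400.0, centre=100.0, alpha=10.0, n=200)
dS = np.diff(S)                                # smallest spacing near the centre
```

Smaller α gives stronger clustering. To put a strike or barrier exactly on a node, the grid would additionally need adjusting so that point falls on a computational node.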

This is another place where finite difference pricing shows its character relative to Monte Carlo. Monte Carlo handles dimension well but does not naturally give you a localized spatial picture. Finite differences struggle as dimension increases, but in low to moderate dimension they let you place computational effort exactly where the contract needs it.

How do finite-difference methods handle multi-factor models and the curse of dimensionality?

| Method | Scalability | Typical speed | Accuracy | Best use case |
|---|---|---|---|---|
| Full-grid finite difference | Poor beyond 2–3 dimensions | Slow; cost grows exponentially with dimension | High in low dimensions | Low-dimensional PDEs with local features |
| Operator splitting / ADI | Better, up to about 3 dimensions | Faster than full-grid FD | Good for mild coupling | Two-factor volatility models |
| Monte Carlo | Excellent high-dimensional scaling | Relatively slow, but parallelizes well | Converges slowly, especially for Greeks | High-dimensional path-dependent payoffs |
| Deep-learning / BSDE solvers | Scales to many dimensions | GPU-accelerated | Promising but variable accuracy | Very high-dimensional nonlinear PDEs |

The simple one-factor picture breaks once the option depends on additional state variables. In the Heston model, value depends on asset price and variance. In some interest-rate or multi-asset products, there may be two or more state dimensions beyond time. The grid then becomes multidimensional.

Mechanically, nothing fundamental changes. You still write the PDE and replace derivatives with finite differences. But the computational burden grows very fast. If one dimension uses 100 grid points, then two dimensions need about 10,000 spatial nodes, and three dimensions need about 1,000,000, before accounting for time. This is the curse of dimensionality in grid form.
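Here is the arithmetic above in code, assuming 100 points per state dimension and 8-byte floating-point values (both illustrative). The function name is hypothetical.

```python
def grid_cost(points_per_dim, dims, bytes_per_value=8):
    """Spatial nodes, and bytes to store one time layer, for a full tensor grid."""
    nodes = points_per_dim ** dims
    return nodes, nodes * bytes_per_value

for dims in (1, 2, 3, 4):
    nodes, size = grid_cost(100, dims)
    print(f"{dims} state dimension(s): {nodes:,} nodes, {size / 1e6:,.2f} MB per time layer")
```

This counts only one layer of values. The operators and solver workspace add to it.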

That is why practical multi-factor finite difference methods rely on more structure. Textbook and library implementations use operator-splitting methods, including ADI-style schemes such as Douglas or Hundsdorfer variants, to handle multidimensional coupling more efficiently. QuantLib’s finite-difference engines reflect this by allowing a schemeDesc choice, with defaults like Douglas() for Black–Scholes engines and Hundsdorfer() for Heston engines. These schemes are not just implementation details. They are attempts to preserve stability and efficiency when the full multidimensional solve would otherwise be too expensive.
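Here is a minimal sketch of the Douglas splitting idea. It uses a model two-dimensional diffusion problem (u_t = u_xx + u_yy, which has a known exact solution) rather than Heston itself. There is no mixed-derivative term, θ = 1/2 is an illustrative choice, and none of this reproduces QuantLib's implementation. The scheme takes one explicit predictor with the full operator, then one implicit correction per direction. Each implicit solve therefore only couples nodes along one grid direction.

```python
import numpy as np

def second_difference(n, h):
    """1D second-difference matrix on n interior nodes, zero Dirichlet boundaries."""
    main = np.diag(np.full(n, -2.0))
    off = np.diag(np.ones(n - 1), 1) + np.diag(np.ones(n - 1), -1)
    return (main + off) / h**2

def douglas_step(U, A1, A2, dt, theta, inv1, inv2):
    """One Douglas step for dU/dt = (A1 + A2) U (no mixed-derivative term)."""
    Y0 = U + dt * (A1 @ U + A2 @ U)             # explicit predictor, full operator
    Y1 = inv1 @ (Y0 - theta * dt * (A1 @ U))    # implicit correction along x
    return inv2 @ (Y1 - theta * dt * (A2 @ U))  # implicit correction along y

# Model problem u_t = u_xx + u_yy on the unit square, u = 0 on the boundary.
n = 20
h = 1.0 / (n + 1)
D = second_difference(n, h)
A1 = np.kron(D, np.eye(n))                      # acts along the first (x) index
A2 = np.kron(np.eye(n), D)                      # acts along the second (y) index
theta, dt, steps = 0.5, 1e-3, 100
# Each implicit factor is really a set of independent tridiagonal solves along
# grid lines; dense inverses are used here only to keep the sketch short.
inv1 = np.linalg.inv(np.eye(n * n) - theta * dt * A1)
inv2 = np.linalg.inv(np.eye(n * n) - theta * dt * A2)

g = np.linspace(h, 1.0 - h, n)                  # interior node coordinates
X, Y = np.meshgrid(g, g, indexing="ij")
U = (np.sin(np.pi * X) * np.sin(np.pi * Y)).ravel()
for _ in range(steps):
    U = douglas_step(U, A1, A2, dt, theta, inv1, inv2)
exact = np.exp(-2.0 * np.pi**2 * dt * steps) * np.sin(np.pi * X) * np.sin(np.pi * Y)
max_error = np.max(np.abs(U - exact.ravel()))
```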

QuantLib’s FdHestonVanillaEngine also exposes xGrid for price, vGrid for variance, dampingSteps, and a scheme descriptor, and it can operate in a stochastic local volatility mode if supplied with a leverage function and mixing factor. That tells you something about where finite differences are used in real systems: not only for textbook Black–Scholes, but for richer models calibrated to market volatility structure.

Still, dimension is where finite differences eventually run into hard limits. For very high-dimensional PDEs, alternative methods become more natural. Monte Carlo is one route. Newer deep-learning PDE and BSDE solvers are another. These methods exist largely because grid methods become impractical once the state space gets too large.

How are barriers, Asian payoffs, jumps, and free boundaries handled with finite differences?

Finite difference pricing becomes especially valuable when contract mechanics map naturally into PDE constraints.

Barrier options are a clear example. A continuously monitored knock-out barrier imposes a value condition at the barrier. A discretely monitored barrier imposes state resets at monitoring dates. Both effects are awkward for closed forms except in specific models, but they fit naturally into a backward grid algorithm. QuantLib includes a dedicated FdBlackScholesBarrierEngine, with configurable grids, damping steps, scheme choice, dividend schedules, and optional local-volatility handling.

Asian options show a different kind of complexity. If the payoff depends on an average, the state space expands because the running average matters. QuantLib’s FdBlackScholesAsianEngine handles discrete arithmetic Asians, which tells you both the strength and the specificity of finite-difference design. The method can handle path dependence if you promote the right summary statistic into an extra state dimension, but each product definition still matters. That same engine explicitly does not support geometric averaging.
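With discrete averaging, the running average changes only on fixing dates. Between dates the extra state variable is simply carried along. At each fixing date the value must satisfy a continuity (jump) condition. Below is a sketch of that condition on an (S, A) grid with linear interpolation in A. The function name and grid layout are illustrative assumptions, not QuantLib's internals.

```python
import numpy as np

def asian_jump_condition(V_after, S_grid, A_grid, k):
    """Carry values backward across the (k+1)-th fixing date.

    V_after[i, j] is the value just after the fixing at (S_i, A_j), where A is
    the average of k+1 fixings. Just before the fixing, A_j averages k fixings,
    and the new fixing S_i moves it to A_j + (S_i - A_j) / (k + 1).
    """
    V_before = np.empty_like(V_after)
    for i, s in enumerate(S_grid):
        a_new = A_grid + (s - A_grid) / (k + 1)
        # Linear interpolation along the average direction (np.interp clamps at the ends).
        V_before[i, :] = np.interp(a_new, A_grid, V_after[i, :])
    return V_before
```

If the S and A grids cover the same range, the updated average always stays inside the A grid, because it is a weighted mean of A_j and S_i.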

Jump models push the PDE into a PIDE because jumps create nonlocal dependence. Instead of only neighboring grid points mattering, the integral term couples a node to a wider range of states. Finite difference methods can still be used, but now the mechanism includes numerical integration as well as differencing. This is why texts on the subject treat jump processes as a separate implementation challenge rather than a small add-on.
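To illustrate the nonlocal term, here is a sketch of the jump integral for normally distributed jumps in log price. The Merton-style normal jumps are an assumption chosen for concreteness, and the function name is illustrative. The sketch computes only E[V(x + Y)] at each node. A full PIDE step would combine this with the jump intensity, a drift compensator and the diffusion operator.

```python
import numpy as np

def jump_expectation(V, x, mu_j, sig_j, n_quad=201, width=8.0):
    """Approximate E[V(x_i + Y)], Y ~ Normal(mu_j, sig_j^2), at every grid node x_i.

    Trapezoidal quadrature over mu_j +/- width * sig_j. Off-grid values are taken
    from the nearest edge (np.interp clamps), a crude boundary assumption.
    """
    y = np.linspace(mu_j - width * sig_j, mu_j + width * sig_j, n_quad)
    dy = y[1] - y[0]
    w = np.full(n_quad, dy)
    w[0] = w[-1] = 0.5 * dy                       # trapezoidal weights
    dens = np.exp(-0.5 * ((y - mu_j) / sig_j) ** 2) / (sig_j * np.sqrt(2.0 * np.pi))
    # Every node needs V at x_i + y_k for all k: a dense, nonlocal coupling.
    pts = (x[:, None] + y[None, :]).ravel()
    shifted = np.interp(pts, x, V).reshape(len(x), n_quad)
    return shifted @ (w * dens)
```

Unlike a three-point stencil, each node here draws on values across a wide band of the grid. That is why the resulting linear systems are no longer tridiagonal.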

American-style options bring back the free-boundary problem. The mathematically precise formulation is often a complementarity problem: the value must be at least as large as exercise value, the PDE holds in the continuation region, and the two conditions interact at the exercise boundary. In practice, front-fixing, penalty methods, variational formulations, or exact linear complementarity solvers are used. The important idea is that the early exercise feature is not appended after pricing. It changes the PDE problem itself.
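Projected successive over-relaxation (PSOR) is one common iterative way to solve the linear complementarity problem produced by an implicit American step. It is a swapped-in illustration, not necessarily what any particular library uses. The sketch finds x with A x ≥ b, x ≥ g and (A x − b)·(x − g) = 0, where g plays the role of the exercise value. The relaxation factor ω = 1.2 is an arbitrary choice, and the function name is illustrative.

```python
import numpy as np

def psor(A, b, g, omega=1.2, tol=1e-12, max_iter=10_000):
    """Projected SOR for the LCP: A x >= b, x >= g, (A x - b) . (x - g) = 0."""
    x = np.array(g, dtype=float)
    n = len(b)
    for _ in range(max_iter):
        change = 0.0
        for i in range(n):
            residual = b[i] - A[i] @ x                     # uses already-updated entries
            new = max(g[i], x[i] + omega * residual / A[i, i])  # relax, then project
            change = max(change, abs(new - x[i]))
            x[i] = new
        if change < tol:
            break
    return x

# A stand-in for an implicit-step matrix (symmetric, diagonally dominant).
n = 50
A = 2.5 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)
b = np.full(n, 0.05)
g = np.maximum(1.0 - np.linspace(0.0, 2.0, n), 0.0)      # put-like obstacle
x = psor(A, b, g)
```

A production solver would exploit the tridiagonal structure rather than forming full rows.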

Which numerical parameters do practitioners tune in production finite-difference pricers?

In production or research, nobody asks only, “Which equation am I solving?” They ask, “How fine should the grid be, where should the points go, which scheme should I use, how many damping steps are enough, and are the Greeks trustworthy?”

Those questions matter because finite difference prices are approximations with controllable error, not exact truths. Increasing tGrid and xGrid usually improves resolution but increases runtime. A better scheme may reduce oscillations but require more complex implementation. Non-uniform grids can improve local accuracy but make error behavior harder to analyze and predict. Damping steps improve robustness near discontinuities but add cost.

This is why practical implementations are often benchmarked against known cases. Developers compare to Black–Scholes closed forms when available, reproduce published values from the literature, and test grid convergence. QuantLib’s barrier engine notes that its correctness is checked against literature and Black pricing. That kind of benchmarking is essential because numerical methods can fail quietly: a price may look plausible while delta or gamma is unusably noisy.
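Grid-convergence testing can be automated with a small helper. Given prices from three grids, each refined by the same factor, the observed order of convergence follows from successive differences. If that order is stable, Richardson extrapolation can be applied. The function names are illustrative, and the usage below runs on synthetic prices with a known second-order error rather than real pricer output.

```python
import math

def observed_order(p_coarse, p_mid, p_fine, refinement=2.0):
    """Estimate the convergence order from three successively refined prices."""
    return math.log(abs(p_coarse - p_mid) / abs(p_mid - p_fine)) / math.log(refinement)

def richardson(p_mid, p_fine, order, refinement=2.0):
    """Extrapolate toward zero grid spacing, assuming error ~ C * h**order."""
    factor = refinement ** order
    return (factor * p_fine - p_mid) / (factor - 1.0)

# Synthetic prices P(N) = 5 + 3 / N^2 on N = 50, 100, 200 grid points.
prices = [5.0 + 3.0 / N**2 for N in (50, 100, 200)]
order = observed_order(*prices)
limit = richardson(prices[1], prices[2], order)
```

On real prices, an observed order that jumps around as the grid is refined is itself a warning sign, typical of the oscillatory convergence described for Crank–Nicolson near non-smooth payoffs.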

When are finite-difference methods appropriate, and when should you use alternatives like Monte Carlo?

The strongest use case for finite difference option pricing is low- to moderate-dimensional derivatives with important local features and a PDE representation that is well understood. American puts, many barrier options, one- and two-factor volatility models, some fixed-income derivatives, and certain Asian structures fit this pattern well. In these settings, finite differences often provide a good balance of speed, interpretability, and direct access to Greeks.

Their weaknesses are just as structural. High dimensionality makes the grid explode. Non-smooth payoffs and repeated discontinuities can break naive schemes. Poor boundary placement or poor mesh design can contaminate results. Popular methods such as Crank–Nicolson work well in many smooth problems but can misbehave badly for barriers, binaries, and early-exercise Greeks unless modified carefully.

So the right way to think about finite difference pricing is not “grid methods are approximate.” Everything numerical is approximate. The real question is whether the approximation respects the structure of the pricing problem. When it does, finite differences are powerful. When the model dimension is too large or the chosen discretization ignores the contract’s singular features, the method can become slow, unstable, or misleading.

Conclusion

Finite difference option pricing is the practice of turning an option-pricing PDE into a grid problem and solving it backward through time. Its importance comes from a simple fact: real derivatives usually outgrow closed-form formulas before they outgrow no-arbitrage PDE logic.

What makes the method work is local structure. The grid captures how nearby states determine value, exercise decisions, barrier effects, and curvature. What makes it hard is also local structure: kinks, discontinuities, free boundaries, and extra state variables. If you remember one thing, remember this: finite differences are not just about replacing derivatives with differences. They are about choosing a discretization that respects the economics and geometry of the option you are trying to price.

Frequently Asked Questions

Finite differences are the right choice when the derivative is low-to-moderate dimensional and has localized PDE-driven structure (early exercise, barriers, strikes, local or stochastic volatility) that closed forms cannot capture and Monte Carlo handles less efficiently. Monte Carlo is usually preferable once the dimension becomes large, because the grid explodes.

American early exercise is handled by treating the problem as a free-boundary or linear-complementarity problem. At each backward time step, the PDE continuation value is compared with the immediate exercise payoff and the larger value is enforced, using techniques such as front-fixing, penalty methods or exact LCP solvers. The exercise boundary is therefore recovered numerically as part of the grid solution rather than specified in advance.

Crank–Nicolson under-damps the high-frequency error injected by kinks or payoff jumps and can produce oscillatory prices and noisy Greeks. The standard fixes are Rannacher (fully implicit) start-up steps plus payoff smoothing (averaging, projection or mesh shifting). In some cases, L-stable alternatives such as TR-BDF2 or Lawson–Morris give better-behaved Greeks.

Choose a non-uniform spatial grid that clusters nodes where the solution bends (around strikes, barriers, spot or exercise boundaries). Place the outer boundaries far enough away that their imposed conditions do not contaminate the region of interest. Grid controls such as xGrid and tGrid materially affect both accuracy and runtime.

Both jumps and path dependence can be handled. Jumps convert the PDE into a partial integro-differential equation (PIDE) whose integral term couples distant nodes and requires numerical integration in addition to differencing. Arithmetic Asians and other path-dependent payoffs are handled by promoting a running average or other path statistic to an extra state variable, which increases dimensionality and grid cost. QuantLib's dedicated Asian engine, for example, targets discrete arithmetic averaging.

The number of grid nodes grows exponentially with the number of state variables (the curse of dimensionality). Multi-factor problems are therefore typically attacked with operator-splitting/ADI schemes (Douglas, Hundsdorfer, etc.) to reduce cost. Beyond moderate dimension, practitioners switch to Monte Carlo or newer deep-learning/BSDE solvers.

Greeks are especially sensitive to payoff discontinuities, poor mesh design and boundary placement. Apply smoothing, Rannacher damping and targeted non-uniform grids, and validate against closed-form or high-resolution reference solutions. Benchmarking both prices and Greeks, together with grid-convergence tests, is how practitioners catch results that look plausible but are quietly wrong.

Production pricers expose knobs like tGrid, xGrid (and vGrid for variance), dampingSteps, and scheme descriptors (e.g., Douglas, Hundsdorfer). QuantLib's FD engines document these parameters and use modest defaults (often tGrid = xGrid = 100 and dampingSteps = 0). Those defaults are starting points, not guarantees of sufficient accuracy, and should be increased and validated for production use.
